ChatGPT now has more than 800 million weekly users. Across 60,000+ websites with daily analytics tracking, it sends 8–9x the referral traffic of Perplexity, the next largest AI-first platform.

That shift creates a straightforward question for any brand investing in content: what kind of content gets cited in AI answers?

A recent study by Ahrefs analyzed ChatGPT responses across 750 top-of-the-funnel prompts in three categories — software recommendations, product recommendations, and agency recommendations. The findings were striking: recently updated “best X” blog lists were the single most prominent page type in ChatGPT’s source links, representing 43.8% of all cited pages.

Even more interesting: brands that ranked themselves first on their own lists still showed up in AI answers. And there was a clear correlation between a brand’s position on third-party comparison lists and the likelihood of ChatGPT recommending them.

In this article, you’ll learn why “best X” comparison lists have become the single most cited content type across ChatGPT, Perplexity, and Google’s AI Overviews. You’ll see what recent research into 26,283 source URLs reveals about list positioning, content freshness, and domain authority. And you’ll get a step-by-step playbook for creating, optimizing, and tracking your own “best” lists so they actually show up in AI answers — not just in Google.

Why “best” lists dominate AI citations

When someone asks ChatGPT, Perplexity, or Google’s AI Mode for a recommendation — “best CRM for startups,” “best treadmill under $1,000,” “best SEO agency in London” — the AI needs structured, comparative information to build its answer.

Blog-style “best X” lists are designed exactly for this. They compare multiple options in a single page, usually with descriptions, pros, cons, and a clear ranking. That format makes them easy for AI models to parse, extract from, and cite.

The Ahrefs study found that across all three categories, “best” blog lists appeared in sources at nearly double the rate of the next most common page type. Non-blog comparison platforms like G2 and Clutch came second, followed by landing pages and social media references.

This pattern held across AI platforms too. In Google’s AI Overviews, comparison lists were actually slightly more prominent than in ChatGPT. And comparison listicles appeared among the top 1,000 most-cited pages on every major AI platform.

The takeaway is clear: if you’re not publishing comparison content, you’re leaving one of the strongest citation signals on the table.

But this isn’t just about AI — it works for traditional search too

Across 250 “best X software”-style Google SERPs studied in the same research, 67.6% featured a list in which the publishing company ranked itself number one. Self-promotional best lists are not a new tactic. What’s new is that AI assistants are amplifying their reach.

SEO and AI search are not separate strategies. The brands winning in both channels right now are the ones treating comparison content as a dual-purpose asset: a page that ranks in Google and feeds AI models the structured information they need to recommend your brand.

What the data says about list position and AI recommendations

The Ahrefs study manually reviewed 750 “best” lists cited by ChatGPT to determine where a recommended brand appeared in the associated list. The results showed a strong trend: brands positioned higher on comparison lists were more likely to be recommended.

When researchers divided lists into thirds — top, middle, and bottom — brands in the top third of a list were cited significantly more than those in the middle or bottom. This held true even when the analysis was limited to lists with 10 or more items, which helps control for shorter lists that naturally skew toward higher positions.
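To make the thirds bucketing concrete, here is a minimal Python sketch. The study's exact bucketing rule is not given here, so the integer-arithmetic rule below is an assumption, and the sample observations are invented purely for illustration.

```python
from collections import Counter

def position_third(rank: int, list_length: int) -> str:
    """Map a 1-based rank to the 'top', 'middle', or 'bottom' third of a list."""
    if not 1 <= rank <= list_length:
        raise ValueError("rank must be between 1 and list_length")
    # Scale the zero-based rank to 0, 1, or 2 using integer arithmetic.
    bucket = (rank - 1) * 3 // list_length
    return ("top", "middle", "bottom")[bucket]

def tally_by_third(observations, min_length=1):
    """Count recommended brands per third, skipping lists shorter than min_length."""
    counts = Counter()
    for rank, list_length in observations:
        if list_length >= min_length:
            counts[position_third(rank, list_length)] += 1
    return counts

# Hypothetical (rank, list length) pairs for brands that an AI answer recommended.
sample = [(1, 10), (5, 10), (2, 5), (10, 12)]
print(tally_by_third(sample, min_length=10))
```

Setting `min_length=10` mirrors the control described above: short lists are dropped so they cannot inflate the top-third count.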

Does this mean AI models are reading the first few items and ignoring the rest? Not necessarily. Research from DEJAN SEO suggests that AI models extract varying amounts of content depending on the source domain and page structure. But regardless of the mechanism, the directional signal is consistent: higher list positions correlate with more AI recommendations.

The practical implication? If you publish a “best” list and your product genuinely deserves a high ranking, position it accordingly. And if your competitors are publishing lists that exclude you or rank you low, you need to know about it.

How to track where your brand appears on competitor lists using AI search data

This is where most “publish a best list” advice stops. But knowing which lists are being cited — and where your brand appears on them — matters more than knowing the tactic exists.

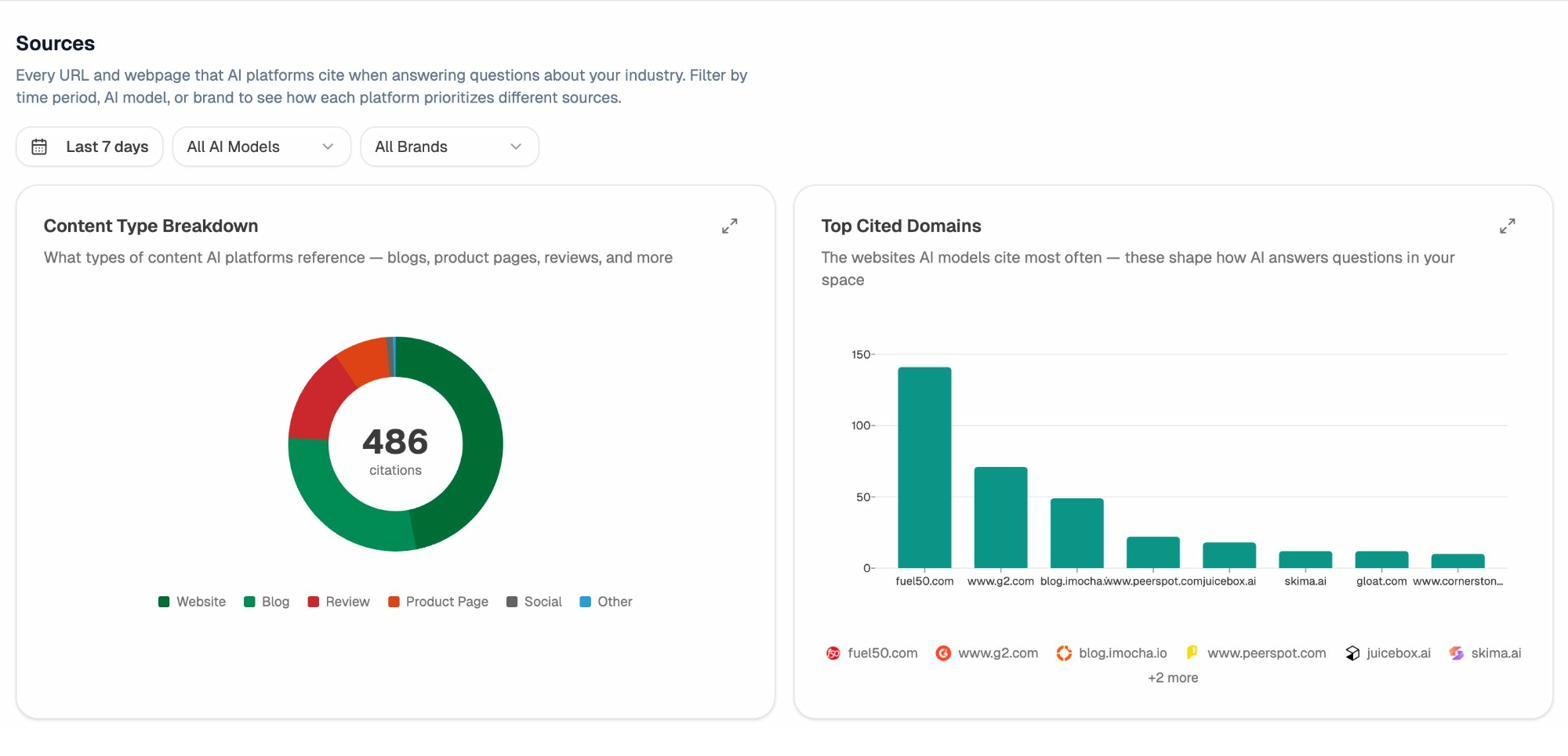

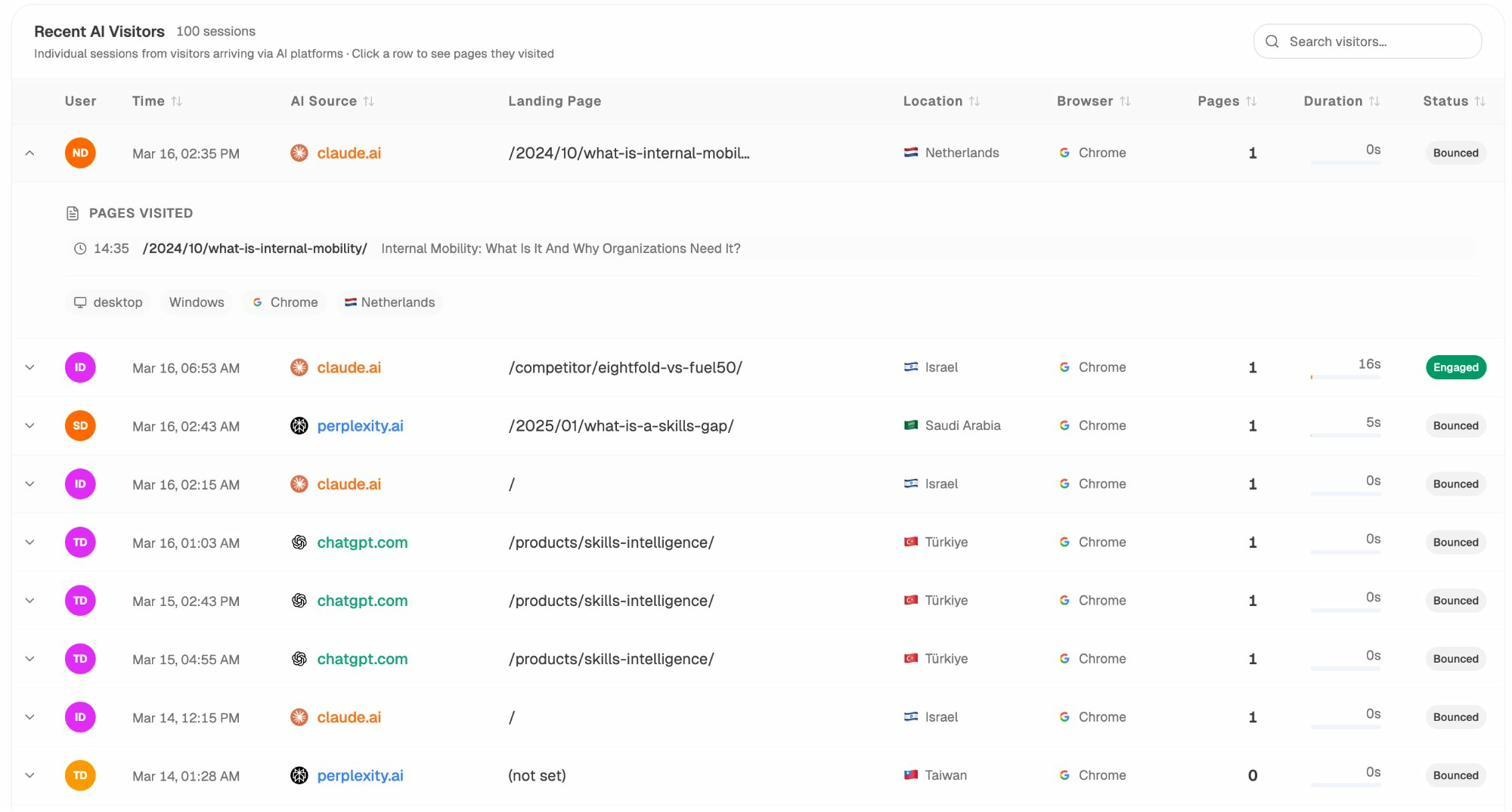

In Analyze AI, the Sources dashboard shows every URL and webpage that AI platforms cite when answering questions about your industry. You can filter by time period, AI model, or brand to see how each platform prioritizes different sources.

The Sources view breaks citations down by content type — websites, blogs, reviews, product pages, and social — so you can see at a glance whether comparison lists or other formats are driving mentions in your space.

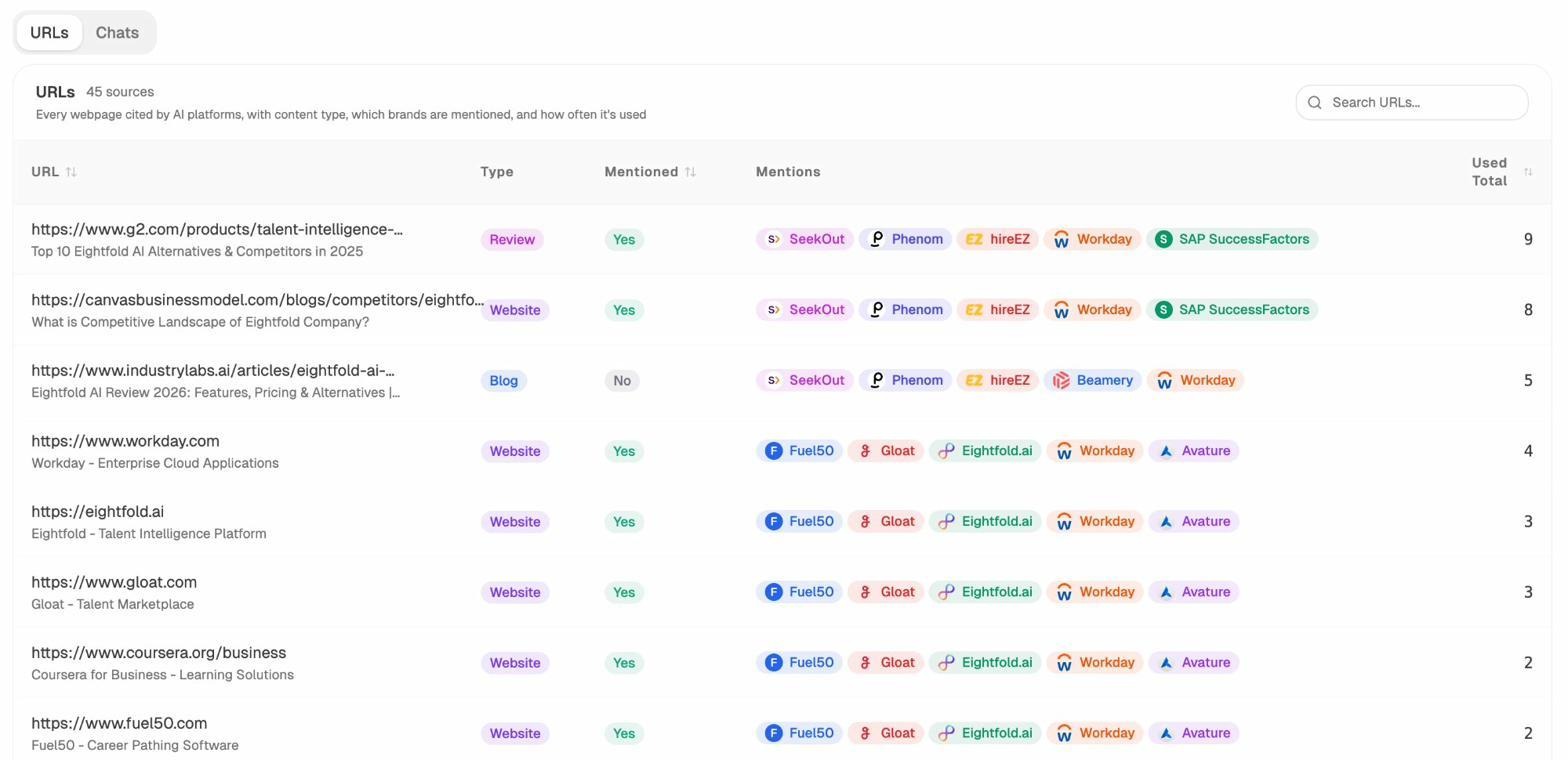

But the real value is in the URL-level detail. The citation list shows every specific page cited by AI platforms, which brands are mentioned alongside it, and how frequently each source is used.

Say you’re tracking your brand and notice that a G2 review page cites five of your competitors but not you. Or that a blog post on a niche industry site keeps appearing as a source. Those are specific, actionable opportunities: update your G2 profile, reach out to the blog author, or create a more comprehensive list of your own.

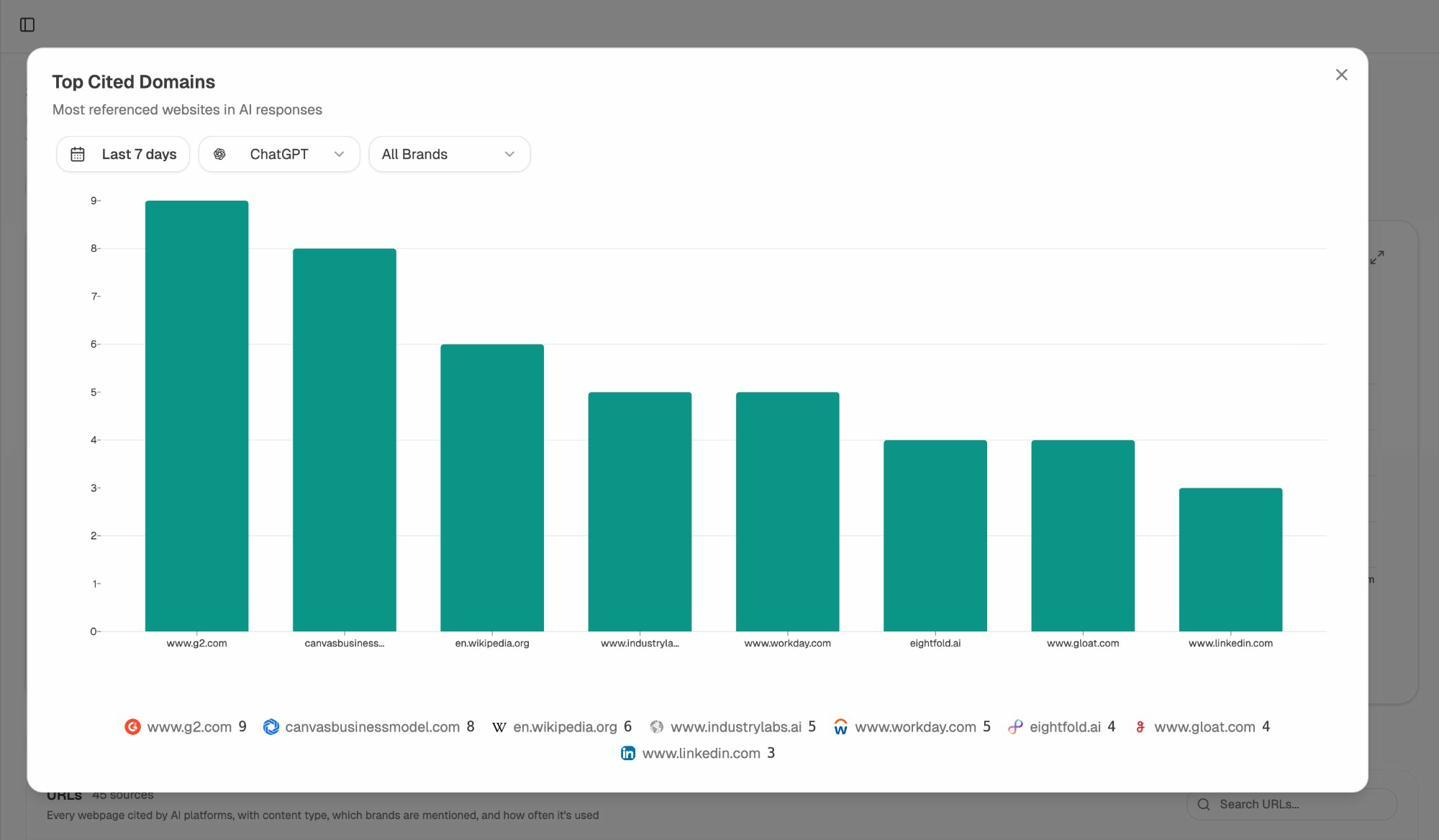

The Top Cited Domains view takes this further. Filtered by AI model — say, ChatGPT only — you can see which domains carry the most weight in your space. If G2 and Wikipedia dominate the citations in your category, you know exactly where to focus your off-site efforts.

This kind of analysis is difficult to do manually. You’d need to run dozens of prompts across multiple AI platforms, collect every source link, categorize them by type, and repeat weekly. AI search monitoring tools automate this entire workflow.
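For a sense of what the manual version involves, here is a rough Python sketch of the categorization step alone. It assumes you have already collected cited URLs by hand. The domain sets and the slug heuristic are illustrative assumptions, not the methodology of any study or tool mentioned here.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed domain lists; extend these for your own category.
REVIEW_PLATFORMS = {"g2.com", "clutch.co"}
SOCIAL_DOMAINS = {"reddit.com", "linkedin.com"}

def classify_source(url: str) -> str:
    """Assign a cited URL to a coarse page type using simple heuristics."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host in REVIEW_PLATFORMS:
        return "review platform"
    if host in SOCIAL_DOMAINS:
        return "social"
    segments = [s for s in parsed.path.lower().split("/") if s]
    if not segments:
        return "homepage"
    # Crude slug heuristic: "best-..." or "top-..." suggests a comparison list.
    if any(s.startswith(("best-", "top-")) for s in segments):
        return "best list"
    return "other"

def summarize(urls):
    """Count cited URLs per page type."""
    return Counter(classify_source(u) for u in urls)

# Hypothetical source links copied from AI answers.
cited = [
    "https://www.g2.com/categories/crm",
    "https://example.com/blog/best-crm-for-startups",
    "https://example.com/",
    "https://www.reddit.com/r/sales/",
    "https://example.org/pricing",
]
for page_type, count in summarize(cited).most_common():
    print(f"{page_type}: {count}")
```

Even this small piece needs rules that you would have to maintain per category, which is part of why repeating the process weekly by hand does not scale.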

Content freshness is a major factor: 79% of cited lists were updated in 2025

One of the most actionable findings from the Ahrefs study: among 1,100 blog lists analyzed, 79.1% had been updated in 2025. More than a quarter (26%) had been updated in just the previous two months.

This recency bias in AI citations is well-documented. A separate study across 17 million citations confirmed that AI assistants consistently prefer fresher content. Researcher Metehan Yeşilyurt even identified a URL freshness score in ChatGPT that favors newer content, with evidence that refreshing publication dates can improve AI ranking positions significantly.

For “best” lists, this means two things. First, publishing a list once and forgetting about it won’t work. The 57.1% of lists that had been updated since their original publication outperformed those that were published once and left static. Second, the updates need to be substantive. Changing a date and re-publishing doesn’t count — AI models (and readers) can tell the difference.

A practical content refresh cadence for best lists

If you maintain “best X” comparison lists, a quarterly review cycle works well for most industries. Here’s what to check each cycle:

Step 1: Check whether the competitive landscape has changed. Have new competitors entered your market? Have existing ones launched major features, raised funding, or shut down? Any of these changes warrant an update.

![Google Trends or industry news showing a new competitor entering the market](https://www.datocms-assets.com/164164/1775839431-blobid4.png)

Step 2: Review your own product’s positioning. If you’ve shipped new features or integrations since the last update, your comparison list should reflect them. Your “best” list doubles as a living product marketing asset.

Step 3: Update pricing and feature comparisons. Nothing kills credibility faster than outdated pricing. If a competitor changed their pricing model six months ago and your list still shows the old numbers, readers (and AI models parsing your page) will favor a more current source.

Step 4: Add or remove items. Lists that grow over time tend to accumulate more long-tail keyword coverage. But don’t pad the list with irrelevant options just to increase length. Every entry should earn its spot.

Step 5: Re-publish with a clear “last updated” date. Make the freshness signal visible both to readers and to AI crawlers.
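The quarterly cycle above can be sketched as a small Python check that flags lists overdue for review and formats a visible date label. The 90-day threshold, the page titles, and the dates are all assumptions for illustration; adjust the interval to your own industry.

```python
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=90)  # roughly one quarter; an assumed threshold

def last_updated_label(updated: date) -> str:
    """Format the visible freshness label shown on the page."""
    return f"Last updated: {updated.isoformat()}"

def overdue_lists(pages: dict, today: date) -> list:
    """Return (title, days since update) for lists past the interval, oldest first."""
    overdue = [
        (title, (today - updated).days)
        for title, updated in pages.items()
        if today - updated > REVIEW_INTERVAL
    ]
    return sorted(overdue, key=lambda item: item[1], reverse=True)

# Hypothetical inventory of published lists and their last substantive update.
pages = {
    "Best CRM for Startups": date(2026, 1, 15),
    "Best SEO Agencies in London": date(2025, 9, 1),
}
today = date(2026, 3, 1)

for title, age in overdue_lists(pages, today):
    print(f"{title}: {age} days since last update")
print(last_updated_label(today))
```

The date stored for each page should be the last substantive update, not the last re-publish, so the label stays honest.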

For a deeper dive on content refreshing within an SEO content strategy, we wrote a full guide that covers prioritization frameworks for deciding which pages to update first.

Domain authority matters — but not in the way you’d expect

The Ahrefs study found that 35% of cited “best” lists were published on domains with low authority scores. Many had minimal or zero organic search visibility. Some looked like they were built primarily for link-building purposes rather than serving genuine readers.

This finding aligns with broader research on ChatGPT’s citation patterns. A separate Ahrefs study found that 28% of ChatGPT’s most-cited pages have zero organic visibility. And an analysis of AI citation overlap showed that only 12% of URLs cited by AI assistants also rank in Google’s top 10 for the original query.

In other words, AI models don’t exclusively rely on Google’s quality signals when choosing sources. A niche site with deep expertise in a specific category can get cited even if it has a low domain rating.

That said, the trend is moving toward quality. Google’s own AI Overviews already show fewer issues with low-quality sources because they can leverage Google’s existing search quality systems. Other AI platforms will likely develop stronger trust scoring over time. Glenn Gabe’s analysis of AI search quality systems suggests this convergence is already underway.

The practical takeaway: you don’t need to be a massive brand to get your best list cited by AI. But you do need genuine expertise and regular updates. Thin, AI-generated lists built on low-quality domains are a temporary arbitrage, not a long-term strategy.

How to check your domain’s authority and citation potential

Before investing in a “best” list, it helps to understand your domain’s current standing. Analyze AI’s free Website Authority Checker gives you a quick read on your domain authority metrics. Pair that with the Website Traffic Checker to see your estimated organic visibility.

If your domain authority is on the lower end, don’t let that discourage you. The data shows that niche expertise matters more than raw authority for AI citations. Focus on depth, accuracy, and freshness rather than trying to compete on backlink counts alone.

Best lists are prominent across all major AI platforms — not just ChatGPT

One of the study’s most important findings is that “best” lists aren’t a ChatGPT-specific phenomenon. They were actually slightly more prominent in Google’s AI Overviews than in ChatGPT.

This matters because different AI platforms serve different audiences and have different citation behaviors. Research by Semrush across 230,000+ prompts over 13 weeks found significant differences in citation patterns between ChatGPT, Google’s AI Mode, and Perplexity. Reddit and Wikipedia dominance varied sharply by platform. Citation volatility was highest on ChatGPT.

For brands, this means you can’t optimize for a single AI platform and call it a day. A list that gets cited by ChatGPT might not get cited by Perplexity, and vice versa. Multi-platform monitoring is essential.

Tracking your visibility across all AI engines in one place

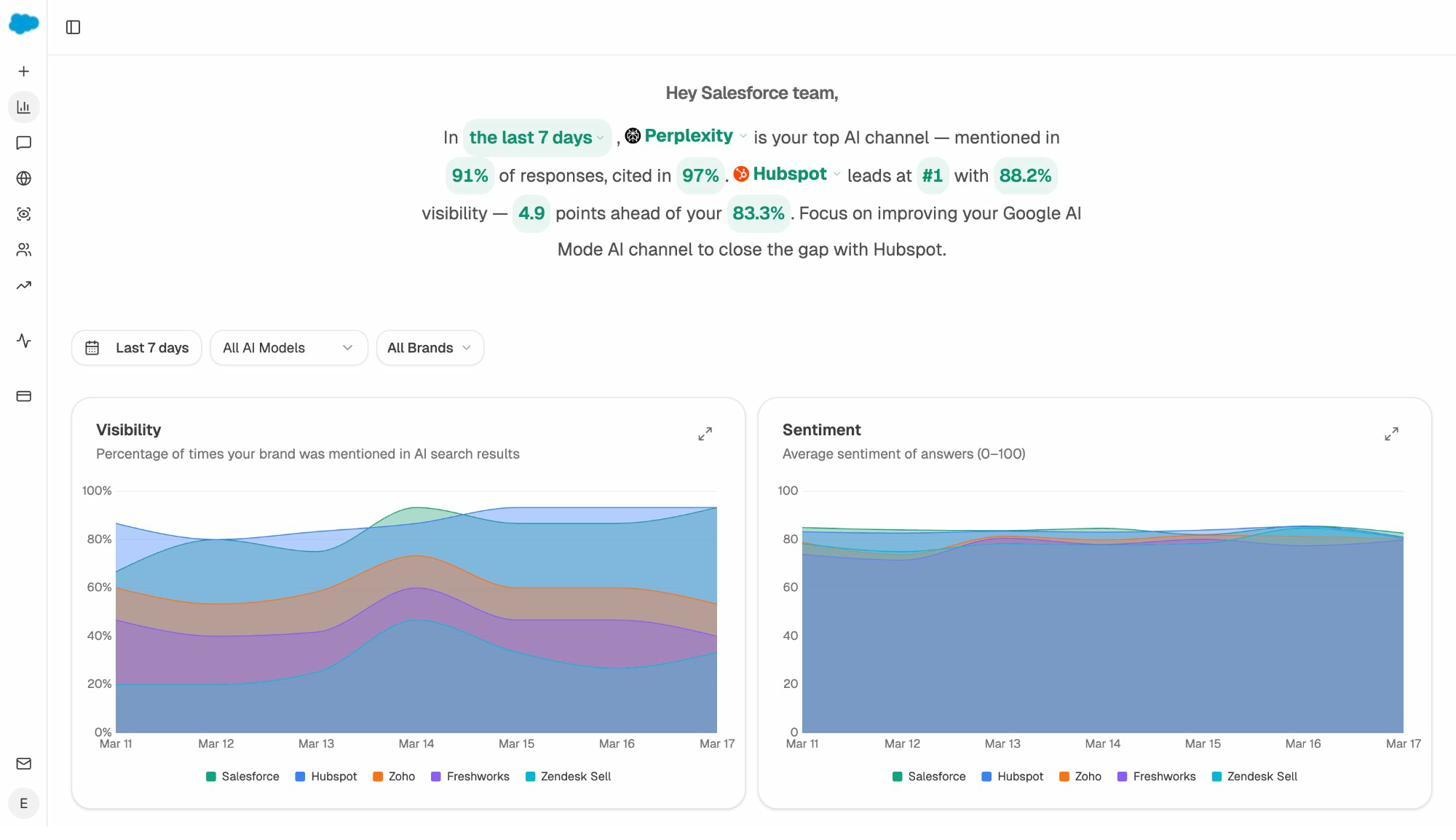

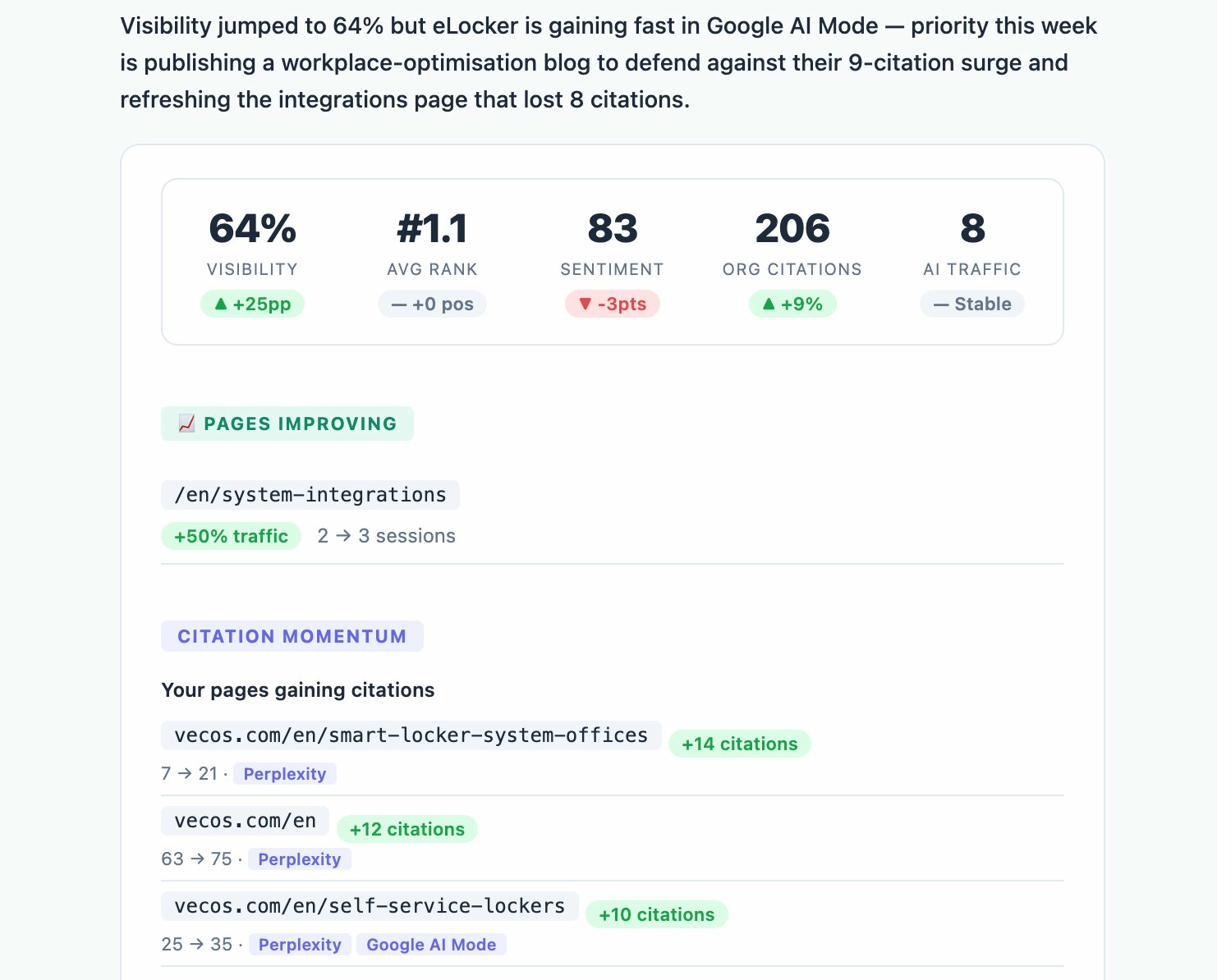

Analyze AI tracks brand mentions and citations across ChatGPT, Perplexity, Google AI, Claude, and Copilot from a single dashboard.

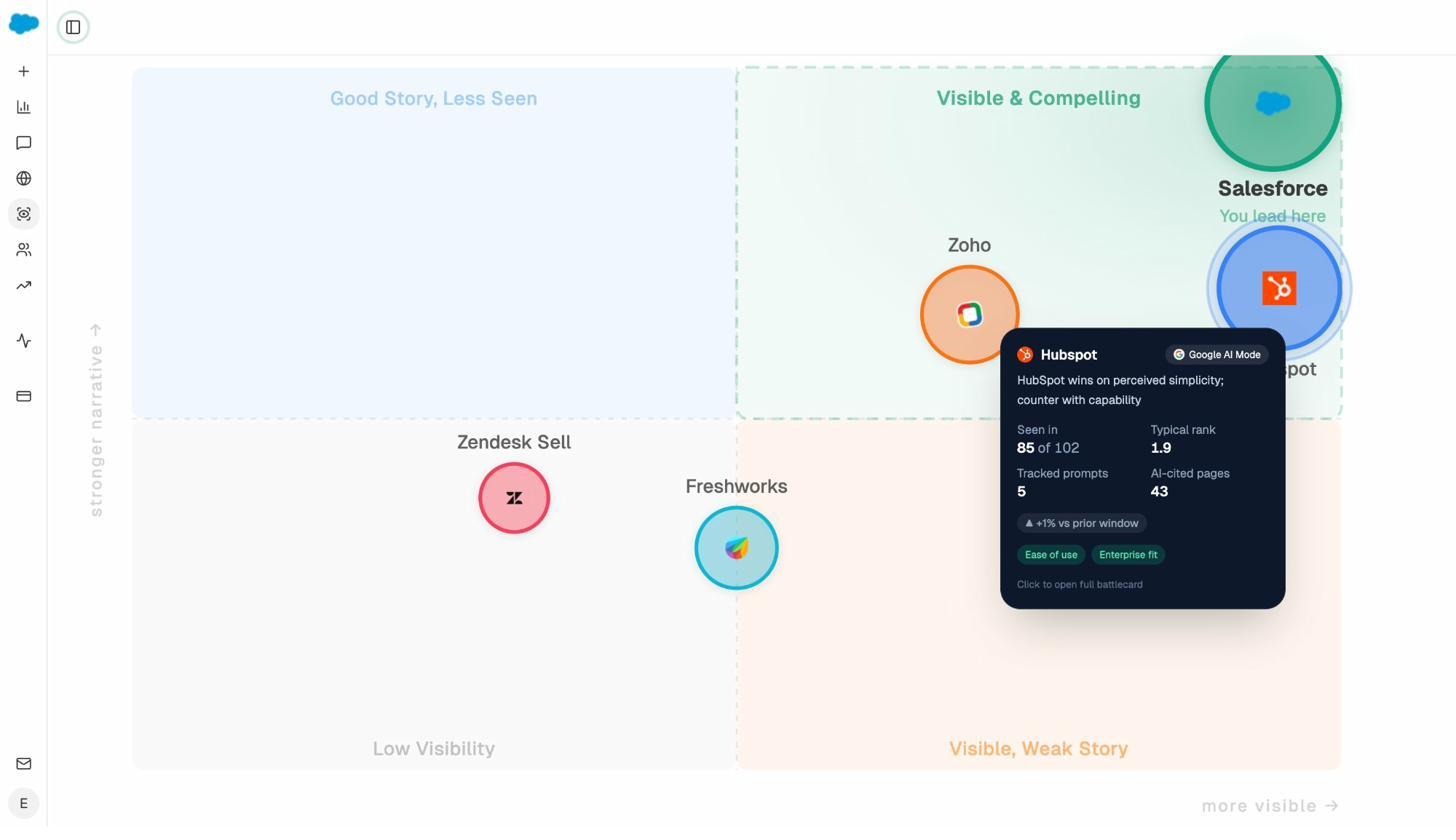

The Overview dashboard gives you a bird’s-eye view of your visibility, sentiment, and competitive positioning across all AI models — or filtered to any single one.

This cross-platform view is critical because AI citation patterns shift frequently. A study of citation volatility showed that ChatGPT’s top citation sources experienced dramatic shifts in mid-September 2025, with Reddit and Wikipedia citations dropping from roughly 55–60% of responses to under 20%. Meanwhile, Forbes and LinkedIn gained share. Perplexity and Google’s AI Mode showed completely different patterns during the same period.

Without cross-platform tracking, you’d have no way to see these shifts happening — let alone respond to them.

When ChatGPT recommends your brand, which of your pages get cited?

Here’s one of the study’s most interesting findings: when ChatGPT recommends a brand, it doesn’t always cite the brand’s “best” list. In many cases, landing pages, documentation, and blog posts from the brand’s own site appeared as sources instead.

In the software category, general landing pages (37.2%) and blog lists (34%) were the most common first-party page types cited. Homepages came third at 15.6%. In the products category, product pages dominated at 87.2%, which makes sense — when someone asks for the “best running shoes,” an AI model wants to link to the actual shoe, not a blog post about it.

This tells us something important: your “best” list is one piece of a larger puzzle. The pages AI models cite when recommending your brand are often the pages that describe what you actually sell — your features pages, product pages, service descriptions, and use cases.

This is why keeping those pages updated, well-structured, and rich with the kind of information AI models need to generate accurate recommendations matters just as much as publishing comparison content.

Understanding which of your pages receive AI traffic

Analyze AI’s AI Traffic Analytics shows you exactly which pages on your site receive visitors from AI platforms. The Landing Pages report breaks down sessions by AI source, engagement metrics, bounce rate, and — critically — which AI prompts drove traffic to each page.

The Landing Pages detail view goes deeper. For each page receiving AI traffic, you can see the traffic source breakdown (ChatGPT vs. Claude vs. Perplexity vs. Copilot), device split, new vs. returning visitors, and which specific prompts cited that page.

This data lets you answer questions like: “Is our pricing page getting cited in AI answers? Which prompts are driving traffic to our product pages? Are AI visitors engaging or bouncing?” Those answers should directly inform which pages you invest in updating.

If your “best CRM software” list is getting cited but your actual CRM product page is not, that’s a signal to improve the product page — not to create more lists.

How to find the right “best” list topics to target

Not every “best” list is worth creating. The goal isn’t to spam comparison posts across every conceivable sub-niche. The Ahrefs study found examples of brands that went overboard, and the takeaway from industry experts was consistent: a few well-researched lists outperform dozens of thin ones.

Here’s how to identify which comparison lists will actually move the needle for your brand.

Step 1: Identify the prompts where your competitors appear and you don’t

The fastest way to find comparison list opportunities is to look at where competitors are winning recommendations that you’re not getting.

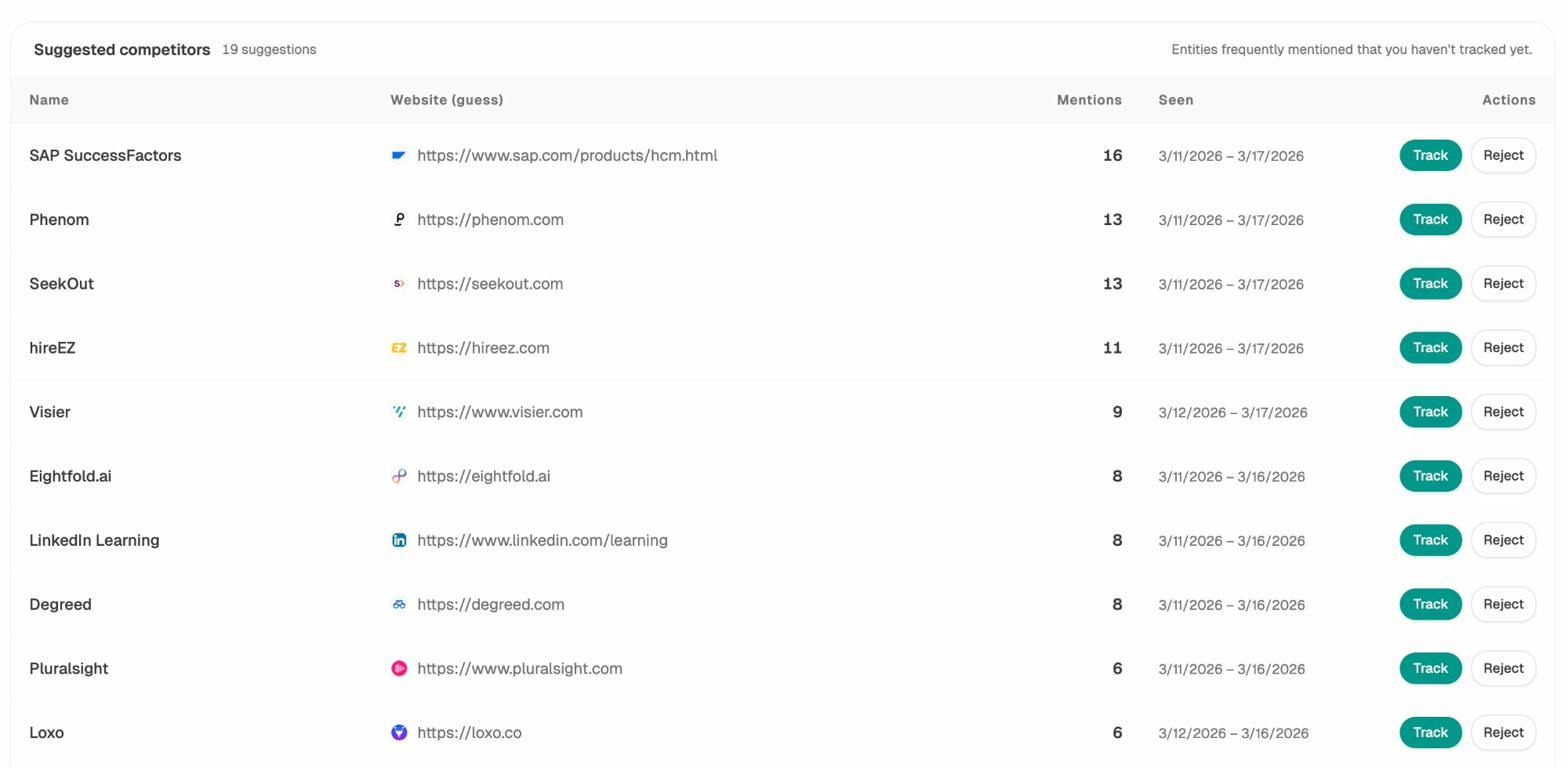

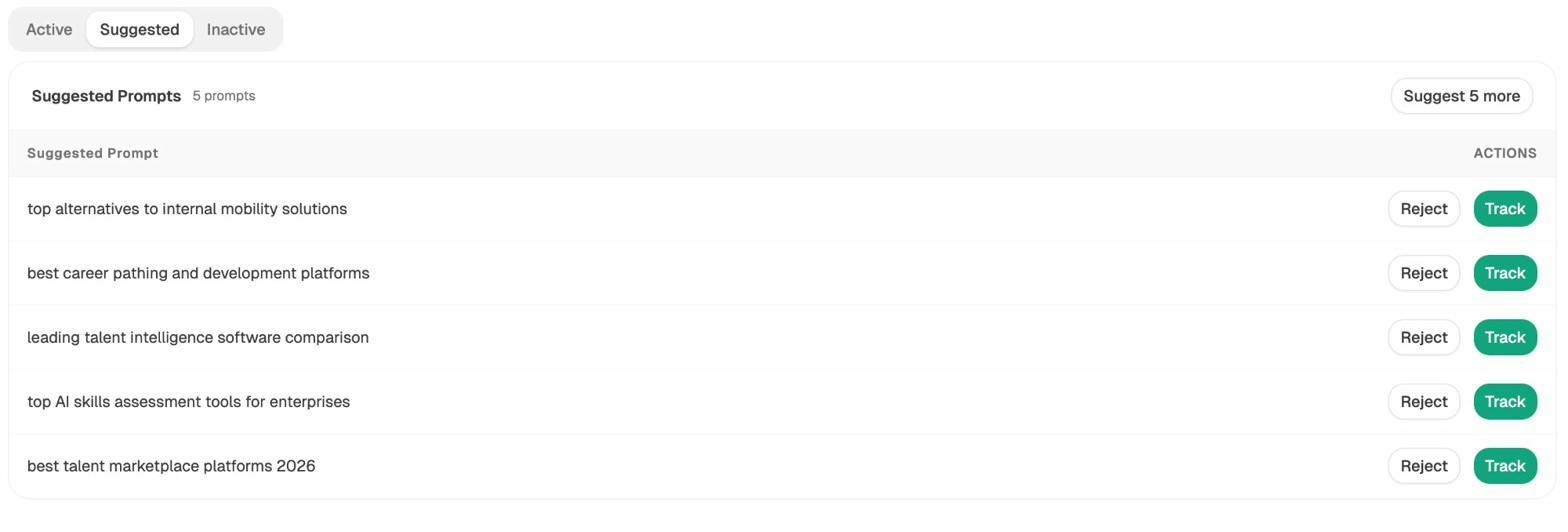

In Analyze AI, the Competitors dashboard shows brands that appear alongside yours in AI answers. The Suggested Competitors tab surfaces entities that are frequently mentioned in your industry but that you haven’t started tracking yet.

Once you’re tracking competitors, the Prompts dashboard shows specific prompts where each competitor is mentioned. If a competitor shows up in “best project management tools for remote teams” and you don’t, that’s a prompt you can target with a comparison post.
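The underlying logic is a simple set comparison. The Python sketch below assumes you have a mapping from each prompt to the brands mentioned in the answer. The data structure, brand names, and function name are hypothetical and do not represent Analyze AI's export format or API.

```python
def gap_prompts(mentions, brand, competitors):
    """Return prompts where at least one competitor is mentioned and brand is not."""
    gaps = {}
    for prompt, brands in mentions.items():
        if brand in brands:
            continue  # already visible for this prompt
        present = sorted(set(brands) & set(competitors))
        if present:
            gaps[prompt] = present
    return gaps

# Hypothetical prompt-to-brands data gathered from AI answers.
mentions = {
    "best project management tools for remote teams": {"CompetitorA", "CompetitorB"},
    "best CRM for startups": {"YourBrand", "CompetitorA"},
    "best treadmill under $1,000": {"UnrelatedBrand"},
}

gaps = gap_prompts(mentions, "YourBrand", {"CompetitorA", "CompetitorB"})
for prompt, rivals in gaps.items():
    print(f"{prompt}: {', '.join(rivals)}")
```

Prompts where no tracked competitor appears are skipped, since they are unlikely to be comparison opportunities in your category.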

Step 2: Use keyword research to validate search demand

A comparison list that gets cited by AI but has zero search volume in Google is still useful — AI traffic is real traffic. But the best lists work for both channels.

Start with the exact prompt format people use in AI search, then check whether an equivalent keyword has search volume in Google. For example, “best CRM for startups” might be a common AI prompt and a keyword with 2,400 monthly searches.

Analyze AI’s free Keyword Generator can help you expand a seed keyword into related variations. And the Keyword Difficulty Checker tells you how hard each variation is to rank for in traditional search.

![Analyze AI Keyword Generator tool showing keyword suggestions for “best CRM software”](https://www.datocms-assets.com/164164/1775839448-blobid10.png)

Step 3: Analyze the existing SERP and AI landscape

Before writing, check what already ranks — both in Google and in AI answers.

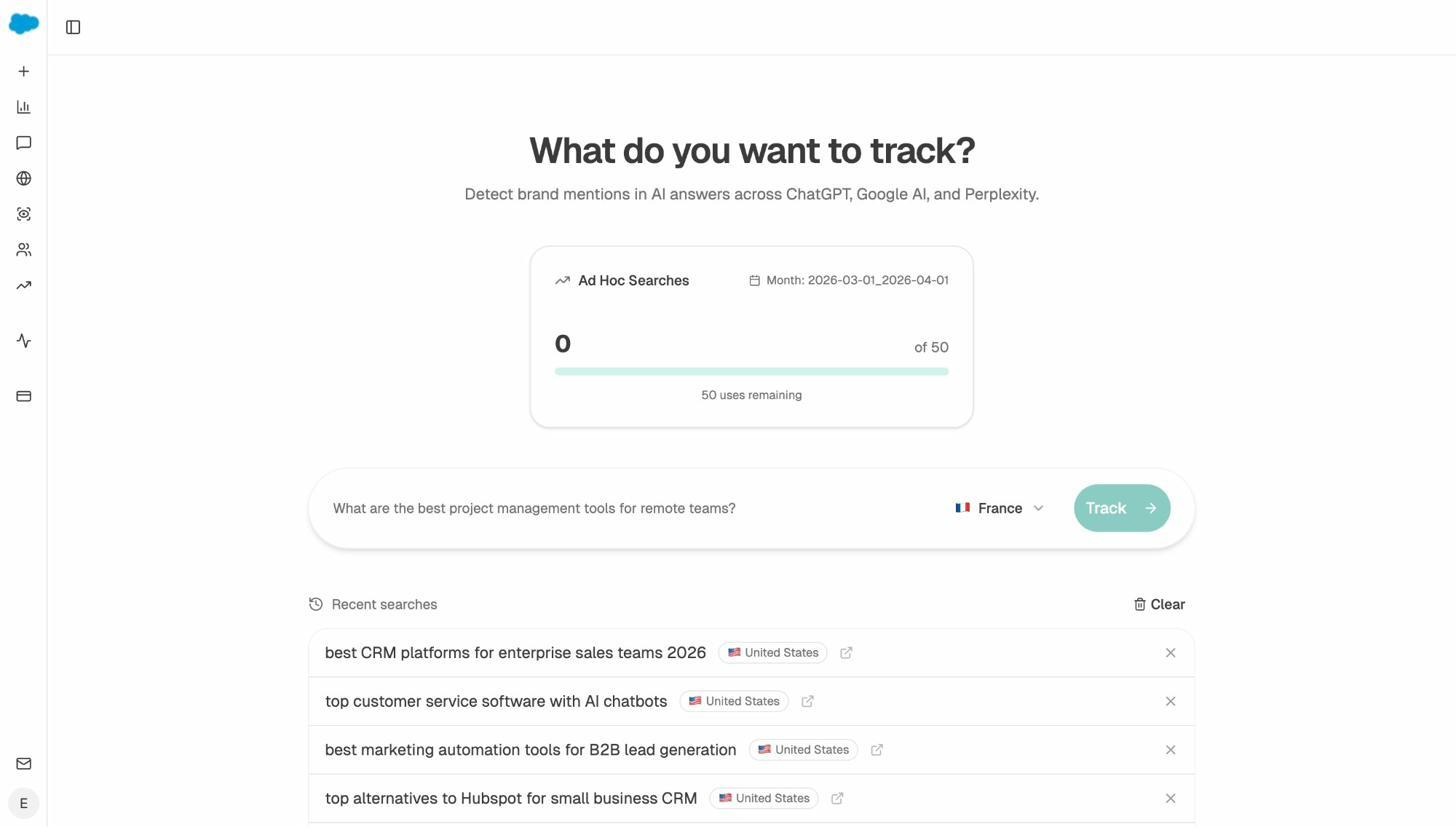

Use the SERP Checker to see who currently ranks for your target keyword in Google. Then run the same query as a prompt in Analyze AI’s Ad Hoc Search feature to see what AI models recommend.

If the existing top-ranking lists are thin, outdated, or missing key competitors, you have a clear opening. If they’re comprehensive and recently updated, you’ll need a meaningfully better angle to compete — deeper analysis, original data, expert quotes, or more nuanced product comparisons.

Step 4: Check for gaps in existing best lists using the Perception Map

Analyze AI’s Perception Map shows how AI models position different brands relative to each other across your tracked prompts. If AI models consistently describe your brand and your competitor as serving different segments or use cases, that’s a signal to create a comparison list that highlights those differences directly.

A comparison list that addresses the specific distinctions AI models already make between brands is more likely to get cited than a generic “these are the 10 best tools” roundup.

How to structure a “best” list that AI models actually cite

Creating the list is the easy part. Creating one that performs in both Google and AI search requires specific structural decisions.

Start with a clear, keyword-targeted title

Use the exact format people search for: “Best [category] for [use case/audience] in [year].” Examples: “Best Project Management Software for Remote Teams in 2026,” “Best SEO Agencies in New York.” This format maps directly to how people phrase both Google searches and AI prompts.

Include your brand — but be transparent about it

The study data shows that self-promotional lists are not penalized. Brands like Shopify, Slack, Salesforce, and HubSpot all publish them. Many rank themselves first.

But there’s a meaningful difference between a self-promotional list that’s genuinely useful and one that’s transparently biased. The approach that builds lasting trust: be upfront about your inclusion, link to competitor websites so readers can do their own research, and explain your evaluation criteria clearly.

As Siege Media founder Ross Hudgens has argued, you can position your own brand first and still provide value. But you have to back it up with substance. Empty self-promotion with no real comparison detail won’t hold up — in search or in AI.

Structure for parseability

AI models extract information most reliably from well-structured content. For each item in your list, include:

A clear heading with the product or service name. H2 or H3 tags help AI models identify individual items in the list.

A concise summary of what it does and who it’s for. One to two sentences. This is the text most likely to appear in AI-generated recommendations.

Specific strengths and weaknesses. AI models look for comparative language. “Better for enterprise teams” or “limited free plan” gives them the detail they need to make nuanced recommendations.

Pricing information. One of the most common reasons AI answers cite comparison lists is to find pricing data. Keep yours current.

A link to the product or service. This is both a user experience best practice and a signal that your list is a legitimate comparison, not a keyword-stuffed placeholder.
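One way to keep every entry consistent is to treat the checklist above as a small data structure. The following Python sketch is a hypothetical helper, not a required format: the field names, the example product, and the markdown layout are all assumptions.

```python
from dataclasses import dataclass, field, fields

@dataclass
class ListEntry:
    name: str = ""
    summary: str = ""
    strengths: list = field(default_factory=list)
    weaknesses: list = field(default_factory=list)
    pricing: str = ""
    url: str = ""

def missing_fields(entry: ListEntry) -> list:
    """Names of checklist fields that are still empty."""
    return [f.name for f in fields(entry) if not getattr(entry, f.name)]

def render_markdown(entry: ListEntry) -> str:
    """Render one entry with a heading, summary, pros and cons, pricing, and link."""
    lines = [f"### {entry.name}", "", entry.summary, ""]
    lines += [f"- Strength: {s}" for s in entry.strengths]
    lines += [f"- Weakness: {w}" for w in entry.weaknesses]
    lines += ["", f"Pricing: {entry.pricing}", "", f"[Visit {entry.name}]({entry.url})"]
    return "\n".join(lines)

# Hypothetical entry with pricing deliberately left out.
entry = ListEntry(
    name="ExampleCRM",
    summary="A lightweight CRM for early-stage startups.",
    strengths=["Better for small teams"],
    weaknesses=["Limited free plan"],
    url="https://example.com",
)
print(missing_fields(entry))
print(render_markdown(entry))
```

Running the completeness check before each refresh catches entries whose pricing or link was never filled in.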

Add original insight — not just feature tables

The lists that get cited most are the ones that add genuine information gain. Feature comparison tables are useful, but every competitor has one. What separates a good list from a great one is original commentary: why you’d choose one option over another, real examples of when each tool excels, and honest assessments of limitations.

This is the principle that content marketing leaders like Grow and Convert call “pain point SEO” — targeting content around the specific problems your audience is trying to solve, rather than just chasing keyword volume. A “best” list structured around real buyer pain points outperforms a generic feature roundup every time.

How to track whether your “best” lists are getting cited

Publishing a “best” list and hoping for the best isn’t a strategy. You need to track whether AI models are actually citing your content — and adjust if they’re not.

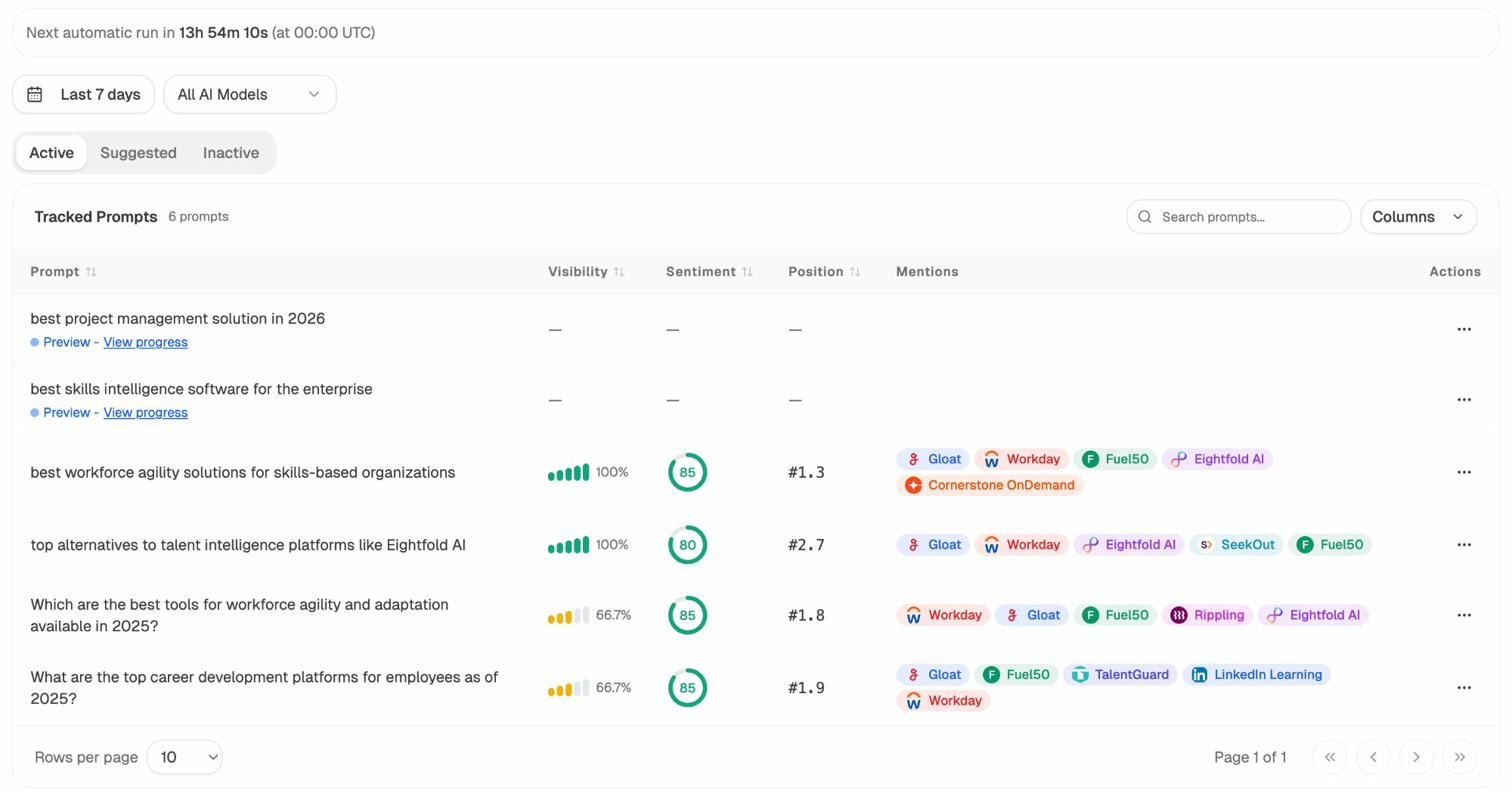

Set up prompt tracking for your target queries

In Analyze AI, add the exact prompts your “best” list targets as tracked prompts. For example, if you published “Best CRM Software for Small Businesses in 2026,” track the prompts “best CRM for small businesses,” “top CRM software small business,” and variations.

Analyze AI runs these prompts across all major AI models automatically, so you’ll see whether your brand appears in the response, which position you’re mentioned in, what sentiment the AI model expresses about your brand, and which competitors are mentioned alongside you.

The Suggested Prompts tab also surfaces prompts that Analyze AI detects in your industry — ones you might not have thought to track. These suggestions often reveal comparison queries you hadn’t considered targeting.

Monitor your citation sources weekly

The Sources dashboard in Analyze AI updates as new citation data comes in. Set a weekly check to see whether your “best” list has started appearing in cited URLs, and which AI platforms are using it.

If your list isn’t getting cited after several weeks, check whether other lists in your space are. If they are, compare: are they more comprehensive, more recently updated, better structured, or published on higher-authority domains?

Use weekly email digests to stay on top of changes

For teams that don’t have time to check dashboards daily, Analyze AI’s Weekly Email Digests deliver a summary of your AI visibility, sentiment shifts, and competitor movements directly to your inbox.

This is especially useful for tracking the impact of content updates. After you refresh a “best” list, set a calendar reminder to check your digest for the next 2–4 weeks to see if citation frequency increases.
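If you log results yourself, the before-and-after comparison can be sketched in a few lines of Python. The record layout (`week_start`, `cited_runs`, `total_runs`) and all numbers below are hypothetical; they are not an Analyze AI export.

```python
from datetime import date

def citation_rate(rows) -> float:
    """Share of prompt runs in which the list was cited."""
    cited = sum(r["cited_runs"] for r in rows)
    total = sum(r["total_runs"] for r in rows)
    return cited / total if total else 0.0

def before_after(weekly, refresh_date):
    """Citation rate for weeks before the refresh vs. weeks starting on or after it."""
    before = [r for r in weekly if r["week_start"] < refresh_date]
    after = [r for r in weekly if r["week_start"] >= refresh_date]
    return citation_rate(before), citation_rate(after)

# Hypothetical weekly tracking data around a refresh on 2026-01-19.
weekly = [
    {"week_start": date(2026, 1, 5), "cited_runs": 2, "total_runs": 10},
    {"week_start": date(2026, 1, 12), "cited_runs": 3, "total_runs": 10},
    {"week_start": date(2026, 1, 19), "cited_runs": 4, "total_runs": 10},
    {"week_start": date(2026, 1, 26), "cited_runs": 6, "total_runs": 10},
]
before, after = before_after(weekly, refresh_date=date(2026, 1, 19))
print(f"before: {before:.0%}, after: {after:.0%}")
```

A few weeks of data is a directional signal at best, since citation patterns also shift for reasons unrelated to your update.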

Should you actually create “best” lists? Our take

All the data points in the same direction: agencies, SaaS companies, and product brands should publish self-promotional “best” lists, provided they do them well.

The research is clear that these lists are the single most cited content type in AI search. They’re also highly effective in traditional Google search. And the barrier to entry is low — you don’t need massive domain authority or thousands of backlinks to get cited.

But “do them well” is doing a lot of work in that sentence. Here’s what separates the lists that build lasting AI visibility from the ones that get ignored:

| What works | What doesn’t |
|---|---|
| Genuinely useful comparisons with real evaluation criteria | Thin lists that exist only to rank your product first |
| Regular updates with substantive changes | Date-only refreshes with no real content updates |
| Transparent about your own inclusion | Written in third person pretending you’re not promoting yourself |
| Links to competitor websites for reader research | No outbound links, making the page feel like a dead end |
| Original insight and experience-based commentary | Feature tables copy-pasted from competitor websites |
| Structured for AI parseability (clear headings, summaries) | Walls of text with no structural markup |
| Tracked for citation performance and iterated based on data | Published once and never revisited |

At Analyze AI, we believe that AI search is an additional organic channel — not a replacement for SEO. The brands that succeed in both are the ones building content that serves real readers and gives AI models the structured, up-to-date, expert information they need to make recommendations.

A well-made “best” list does exactly that.

Key takeaways

“Best X” blog lists represent 43.8% of all pages cited by ChatGPT in top-of-the-funnel recommendation queries. This makes them the single most prominent content type in AI search citations.

Higher list positions correlate with more AI recommendations. Brands featured in the top third of comparison lists are cited more frequently than those in the middle or bottom.

79.1% of cited lists had been updated in 2025. Content freshness is a significant factor in AI citations. A quarterly review cadence is a good starting point for most industries.

35% of cited lists come from low-authority domains. You don’t need massive domain authority to get cited. Niche expertise and recency matter more — but this gap is likely to close as AI platforms improve their quality scoring.

Best lists are prominent across all AI platforms, not just ChatGPT. Google AI Overviews, Perplexity, Claude, and Copilot all surface comparison lists frequently. Multi-platform AI search monitoring is essential.

When AI recommends your brand, it often cites your product and landing pages — not just your best list. Keep all your key pages updated, well-structured, and rich with the information AI models need to recommend you accurately.

Tracking citation performance is the missing piece. Publishing a list without monitoring whether it gets cited is like publishing a blog post without checking whether it ranks. Use Analyze AI to track prompts, monitor sources, and iterate based on real data.

Want to see how your brand currently shows up in AI search? Start tracking your AI visibility with Analyze AI — and find out which comparison lists are driving recommendations in your industry.

Ernest

Ibrahim