In this article, you’ll get an honest read on Writesonic in 2026 after putting the Professional plan through real content and AI visibility work for three months. You’ll see what each tier actually unlocks, which modules hold up under workload, where the upsell traps are, and how Writesonic compares against Analyze AI, the agentic SEO and content platform that handles the same job with fewer dashboards and a programmable layer underneath.

What Writesonic Actually Is in 2026

Writesonic started in 2020 as an AI writing tool. It pivoted to SEO content in 2023, then bolted on a GEO module in 2024 to track how brands appear inside ChatGPT, Perplexity, Gemini, and Google AI Overviews.

The product you sign up for today is three things sharing a single login.

- An AI article writer with templates, the long-form Sonic Editor, and a Chrome extension.
- An SEO audit and optimization tool with a “Sonic” AI agent that scans your site and suggests fixes.
- A GEO module that tracks brand mentions, citations, and sentiment across AI engines, plus an Action Center that ranks remediation tasks.

That genealogy explains the experience. The writing surface is the most polished. The SEO layer sits on top. The GEO layer sits on top of both, behind a more expensive plan. None of these layers is wrong on its own, but you will notice the seams the first time you try to move data between them.

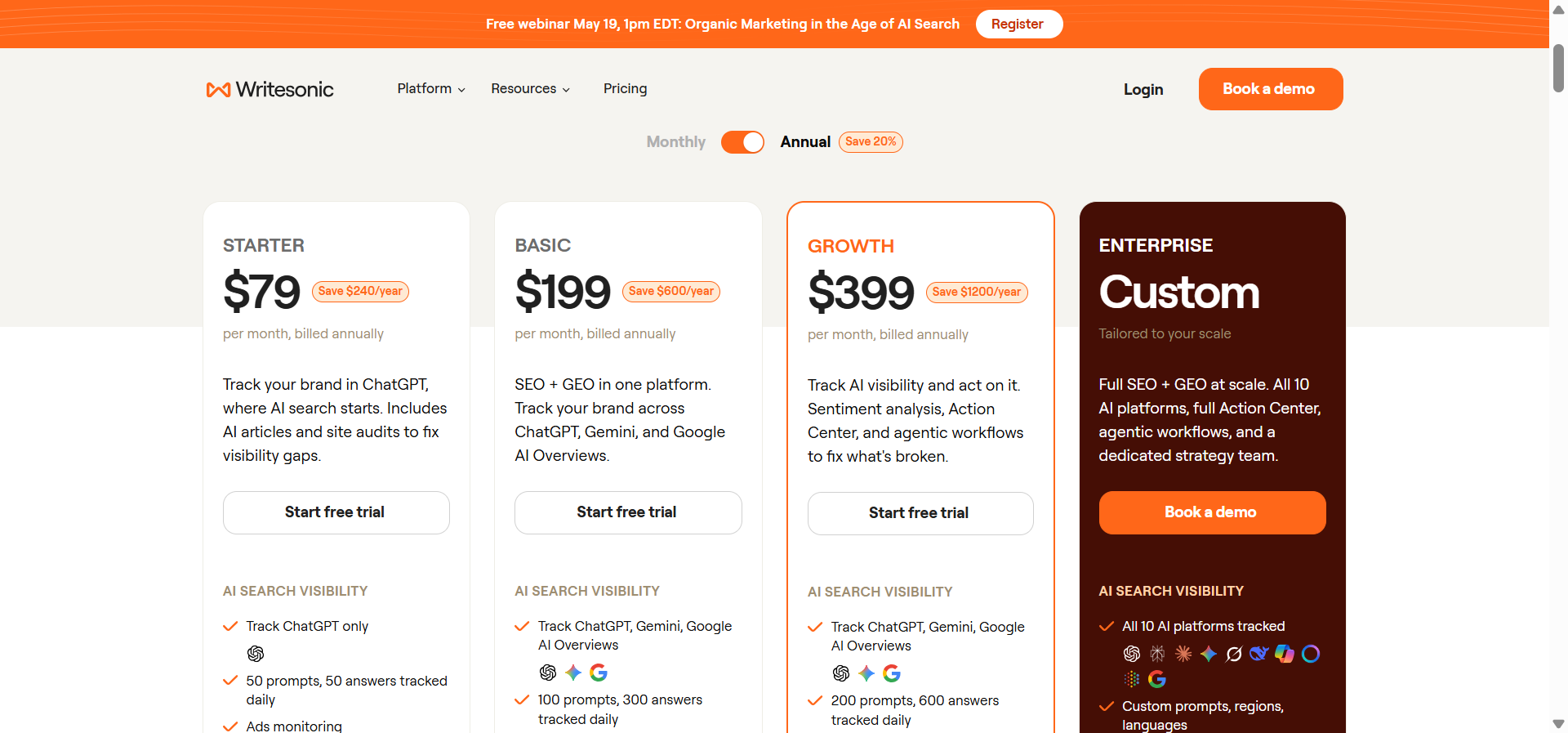

Writesonic Pricing in 2026, Decoded

Writesonic uses a tiered plan structure with annual billing roughly 20% cheaper than monthly. The headline numbers look reasonable. The trap is what each tier actually includes, because almost everything you would buy this product for sits at the Professional tier or higher.

| Plan | Price (annual billing) | Articles / month | GEO tracking | Best fit |
|---|---|---|---|---|
| Free | $0 | Limited templates | No | A test drive only |
| Lite | $39/mo | 15 articles | No | Solo writers, no AI search |
| Standard | $79/mo | 30 articles | No | Small teams on traditional SEO |
| Professional | $199/mo | 100 articles | 100 prompts | First plan with real GEO |
| Advanced | $399/mo | 200 articles | 200 prompts + sentiment | Agencies, multi-brand |
| Enterprise | $1,499+/mo | Custom | Unlimited | Regulated, multi-domain orgs |

Two things are worth flagging. First, the Lite and Standard plans do not include any AI search visibility tracking. If your reason for buying Writesonic is GEO, you are starting at $199 a month on annual billing or $249 a month on monthly billing. That is the entry point for AI features, and most reviewers note that the cheaper plans are not useful in a 2026 SEO stack.

Second, credit consumption rises with article length and quality settings, so the sticker price often understates real spend. Heavy users testing multiple drafts per brief routinely hit overages on Professional and end up upgrading to Advanced.
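To make the billing gap concrete, here is a small worked calculation using only the two Professional prices quoted above. The function names are illustrative, and overage charges are left out because this review does not have exact figures for them.

```python
# Professional plan prices quoted in this review (USD per month).
MONTHLY_BILLING = 249  # rate when billed month to month
ANNUAL_BILLING = 199   # per-month rate when billed annually


def annual_discount(monthly: float, annual: float) -> float:
    """Fractional saving of the annual rate relative to the monthly rate."""
    return (monthly - annual) / monthly


def yearly_cost(rate_per_month: float) -> float:
    """Total spend over twelve months at a given per-month rate."""
    return rate_per_month * 12


discount = annual_discount(MONTHLY_BILLING, ANNUAL_BILLING)
saving = yearly_cost(MONTHLY_BILLING) - yearly_cost(ANNUAL_BILLING)

print(f"Annual discount: {discount:.1%}")   # Annual discount: 20.1%
print(f"Saved per year: ${saving:,.0f}")    # Saved per year: $600
```

The result lines up with the "roughly 20%" figure, and it shows that the commitment to annual billing is worth about $600 a year at this tier before any overages.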

What Writesonic Does Well

There are real things to like here. Three modules earned their place in production work.

The AI article writer is fast at first drafts. You can go from topic to a 1,500-word draft in roughly 90 seconds. The output is generic, but it is structurally complete. For teams with strong editors, that is a real time saver.

The SEO audit catches the basic stuff. Title length, meta descriptions, H1 collisions, broken images, slow LCP. None of this is novel, but it is well surfaced inside the same UI as the writer. (For free versions of similar checks, our website traffic checker and broken link checker cover the basics without a paywall.)

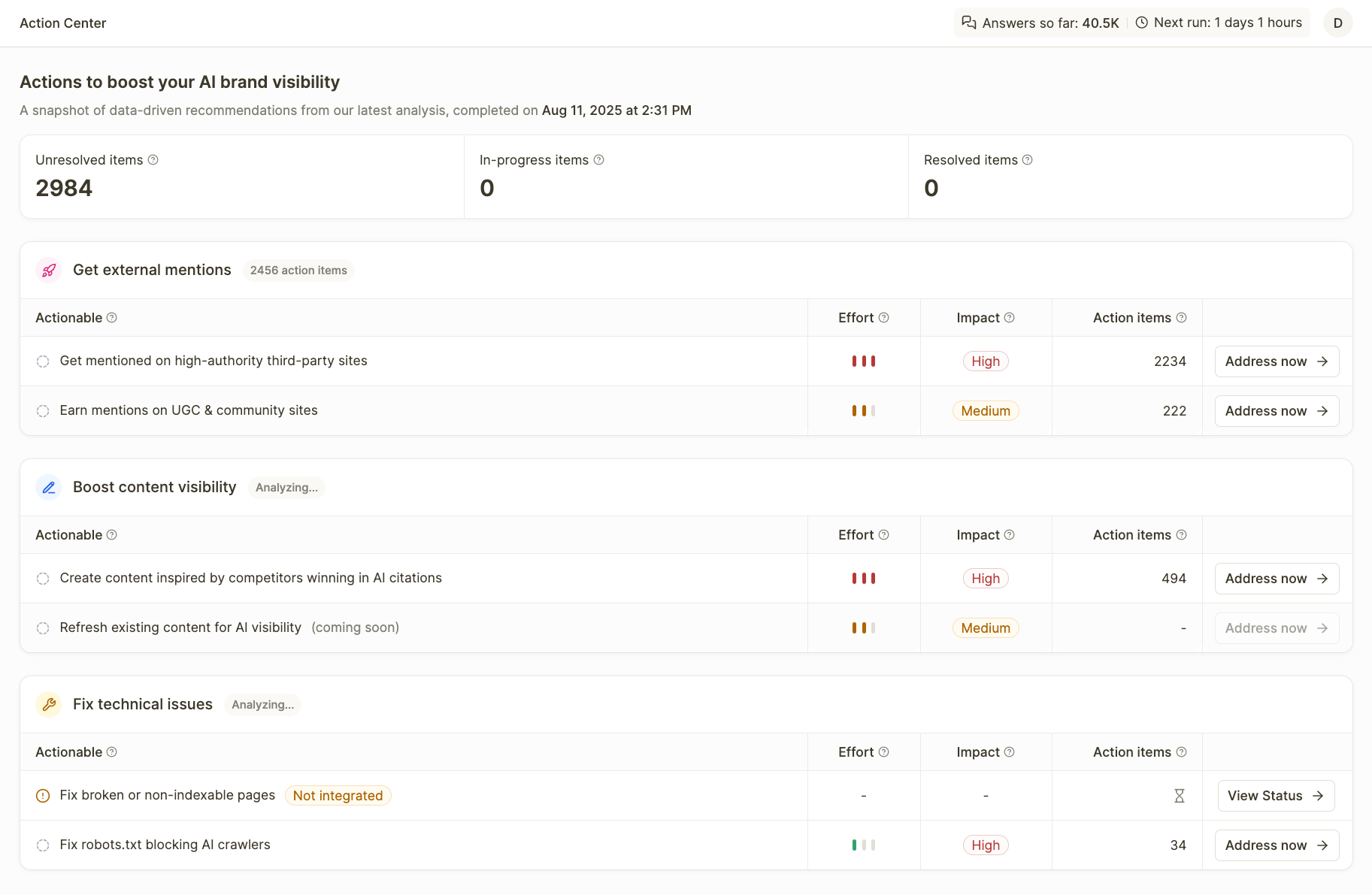

The GEO module gives you a starting baseline. It monitors your tracked prompts and reports brand mentions, citations, and sentiment across the major engines. The Action Center turns those into a ranked list of remediation tasks. For teams who have never measured AI visibility before, this gets you to “we have a number” within a week.

Where Writesonic Falls Short Under Real Workload

The cracks show up the moment you try to operate the platform past surface use cases.

Output quality is uneven and almost always needs heavy editing. This is the most consistent complaint in G2 reviews of Writesonic and Reddit threads. One mid-market marketer described it as a more expensive ChatGPT. For real publication, you are still doing the structural rewrite.

GEO depth is shallow compared to dedicated tools. Writesonic shows you that you appeared in a Perplexity answer. It does not always show you why, which page powered the citation, or what the cited passage said.

There is no GA4-attributed AI traffic data that ties visibility to revenue. Writesonic shipped an AI traffic analytics view, but it is read-only and does not pipe sessions or conversions back into a unified dashboard. You can see that ChatGPT mentioned you. You cannot see whether that mention drove a sign-up.

The UI gets noisy fast. Three modules built at three different times means three navigation patterns, and GEO’s dense data panels stack uncomfortably on top of the writing surface.

Support is email only, even on the $249 plan. Professional and Advanced subscribers do not get live chat. When the GEO module breaks, you wait.

The Structural Limit Most Reviews Miss

Here is the bigger problem, and the one that does not show up in most reviews because most reviews stop at the feature list.

Writesonic gives you tools. Writesonic does not give you a programmable layer.

Every workflow inside Writesonic is a click. You click into the writer, you click into the SEO audit, you click into the GEO dashboard, you click into the Action Center. The platform assumes a human is sitting in front of it driving every step. That works for one writer producing one article. It collapses the moment you want to run the same workflow across 50 pages a week, fire content updates when a competitor changes their pricing page, brief a writer the moment a new prompt cluster appears in your AI visibility data, or turn a closed deal in HubSpot into a case study draft on the same day.

For any of that, you need an automation layer. Writesonic does not have one. This is the single biggest design difference between Writesonic and Analyze AI, and it is the reason the same monthly spend buys very different leverage.
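To show what "an automation layer" means in practice, here is a minimal sketch of event-driven routing in Python. It is not Analyze AI's API or anyone else's. The class, the event names (`deal.closed`, `page.citations_dropped`), and the handlers are all hypothetical, chosen only to mirror the examples in the paragraph above.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# A handler receives the event payload and returns a short status message.
Handler = Callable[[dict], str]


@dataclass
class TriggerRouter:
    """Maps event names to the workflows that should run when they fire."""

    handlers: Dict[str, List[Handler]] = field(default_factory=dict)

    def on(self, event: str, handler: Handler) -> None:
        """Register a workflow for an event name."""
        self.handlers.setdefault(event, []).append(handler)

    def fire(self, event: str, payload: dict) -> List[str]:
        """Run every workflow registered for the event, in order."""
        return [handler(payload) for handler in self.handlers.get(event, [])]


# Hypothetical workflows; a real one would call a writer or a CMS.
def draft_case_study(payload: dict) -> str:
    return f"case study draft queued for {payload['company']}"


def refresh_page(payload: dict) -> str:
    return f"refresh queued for {payload['url']}"


router = TriggerRouter()
router.on("deal.closed", draft_case_study)
router.on("page.citations_dropped", refresh_page)

# A CRM webhook or a scheduler would call fire(); here it is called by hand.
results = router.fire("deal.closed", {"company": "Acme"})
print(results)
```

The design point is that the work starts from an event rather than from a person opening a dashboard. A click-driven tool has no equivalent of `fire()`.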

How To Do This Work With Analyze AI

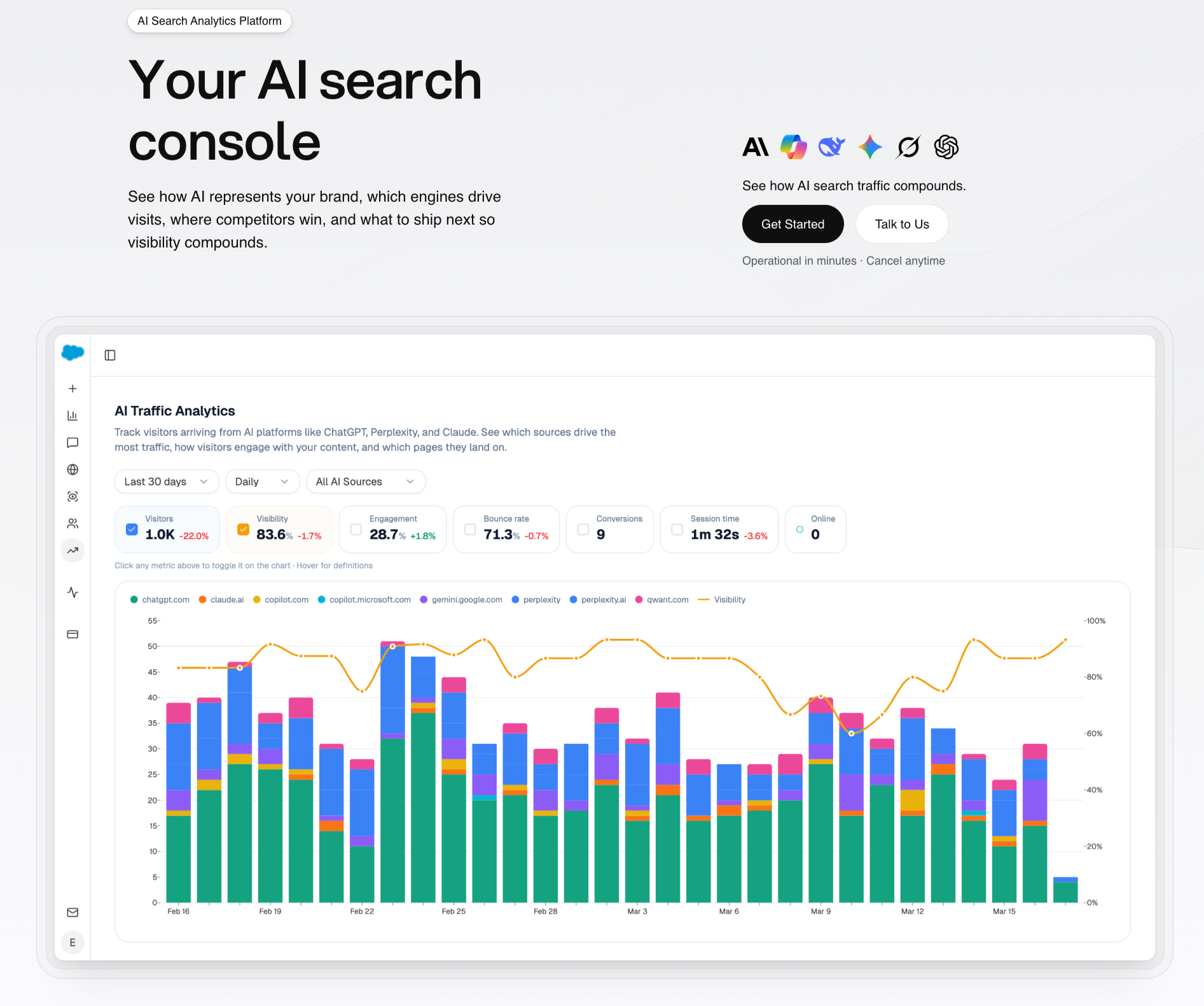

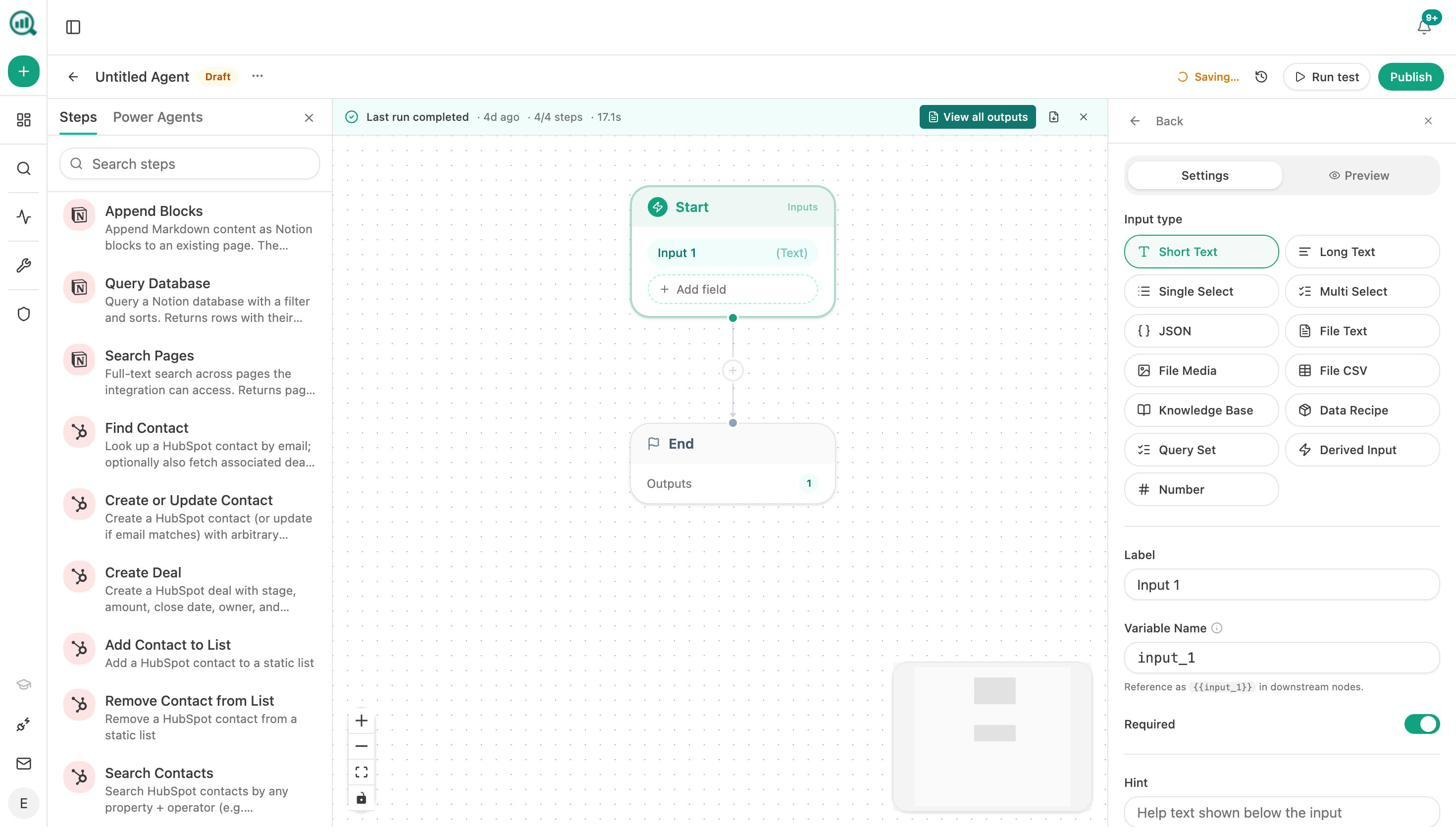

Analyze AI is the agentic SEO and content platform. It runs the same job Writesonic targets, with one architectural choice that changes everything. Every dashboard, every visibility metric, every content surface is also exposed as a node you can drop into an agent. Anything you can do by clicking, you can also schedule, trigger from a webhook, or chain into a pipeline.

The rest of this section is a tutorial on how each piece of work Writesonic charges for actually gets done in Analyze AI.

Step 1. Find the prompts and keywords worth tracking

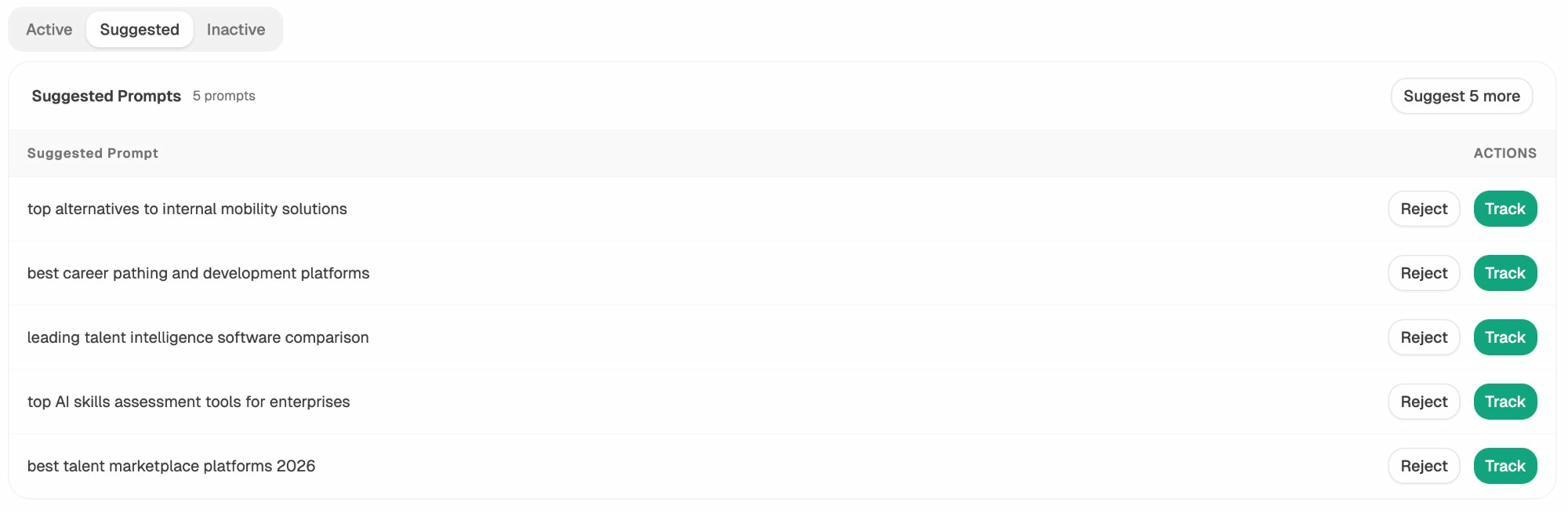

The first job inside any AI search and SEO program is figuring out what to monitor. Writesonic asks you to add prompts manually or pulls a generic list. Analyze AI does it the way you would if you had time, by suggesting prompts grounded in your domain, your competitors, and the prompt patterns AI engines actually receive.

Open Prompt Tracking, switch to the Suggested tab, and you get a live queue of prompt candidates with one-click tracking.

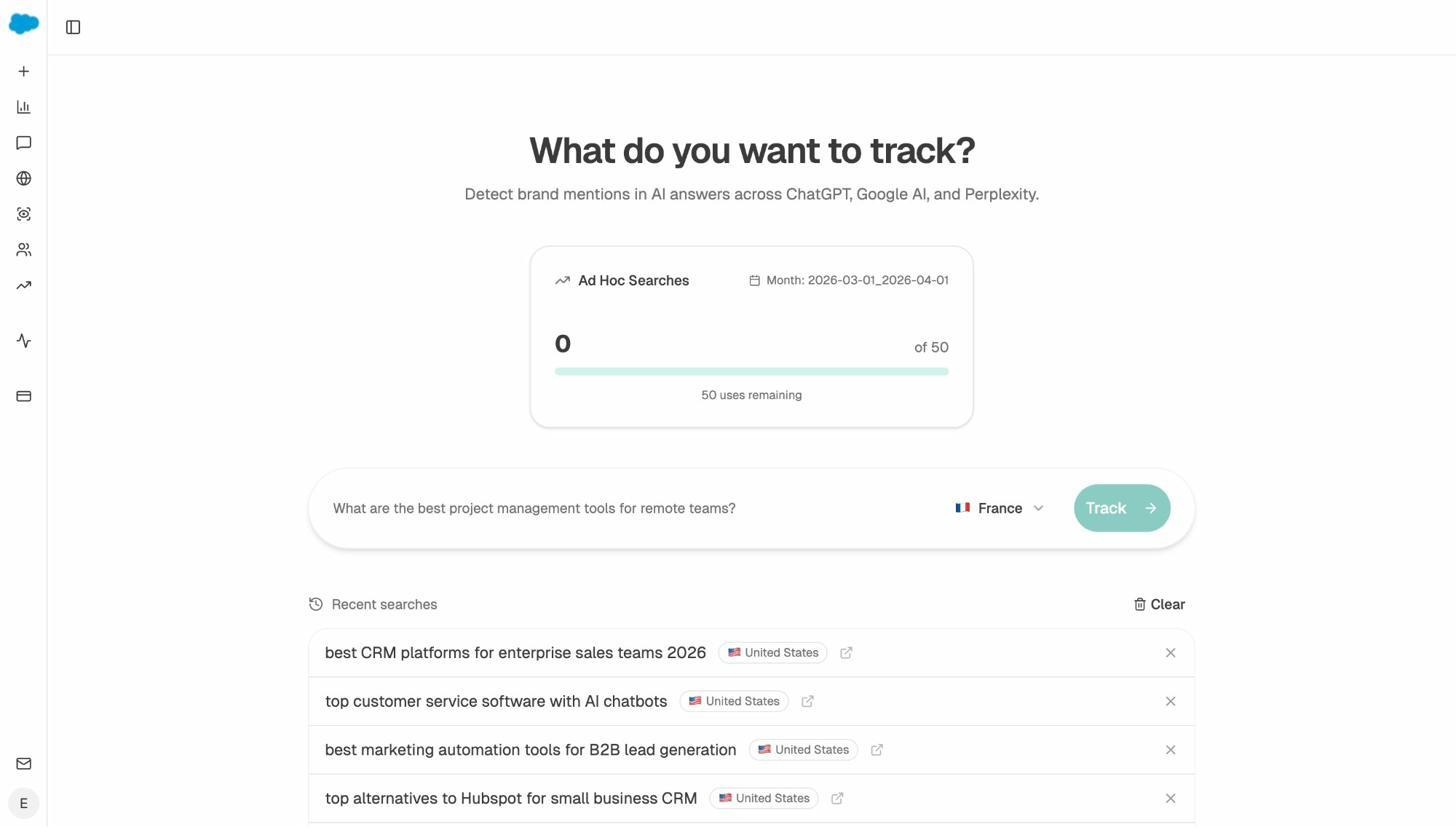

For ad hoc research, the Ad Hoc Prompt Searches surface lets you test a single prompt in any region and see exactly which brands the AI engines name and which sources they cite, without burning your tracked-prompt allocation.

For the traditional SEO half of the work, the keyword generator tool, keyword difficulty checker, and SERP checker cover what most teams use Writesonic’s basic SEO module for, without a paywall. SEO is not being replaced; it is getting a second organic channel layered on top of it. Treating prompt research and keyword research as one combined job is how the work compounds.

Step 2. Track AI visibility with attribution that ties back to revenue

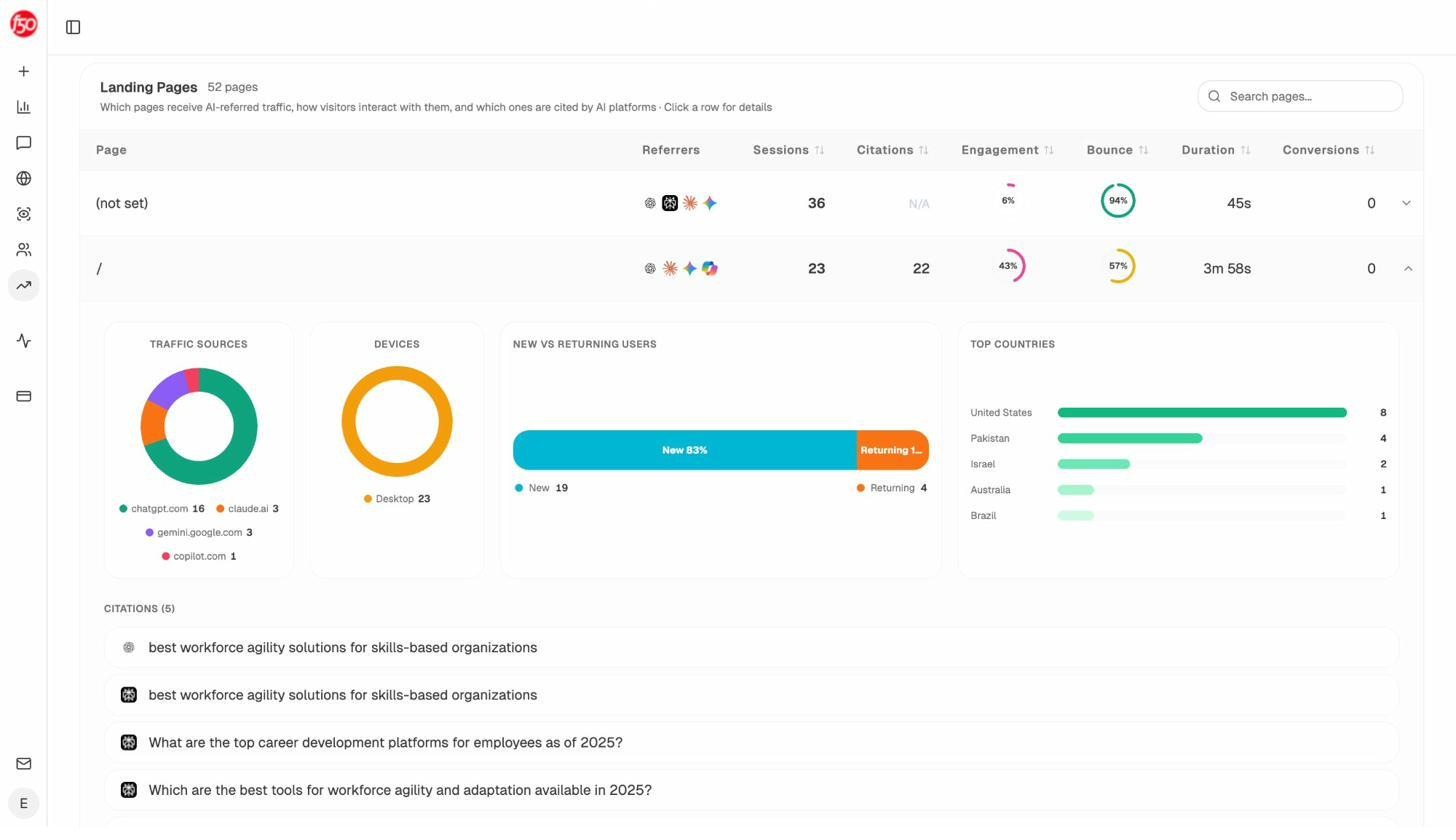

Writesonic shows you visibility and sentiment. That is one half of the job. The other half, the half most GEO tools quietly skip, is connecting that visibility to verified GA4 sessions, conversions, and revenue. Analyze AI’s AI Traffic Analytics is where AI mentions stop being a vanity number and start being a channel.

You see exactly which pages received AI traffic, which engines sent it, which prompts cited those pages, and what visitors did when they landed. Writesonic does not have this loop.
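The attribution loop described here can be sketched in a few lines of Python. This is not how Analyze AI or GA4 implements it. The referrer hostnames in `AI_ENGINE_HOSTS` are assumptions you should check against your own analytics, and the session records are invented sample data.

```python
from collections import defaultdict
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames for each engine; verify against your own data.
AI_ENGINE_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def engine_for(referrer: str) -> Optional[str]:
    """Return the AI engine name for a referrer URL, or None if not an AI engine."""
    host = urlparse(referrer).hostname or ""
    return AI_ENGINE_HOSTS.get(host.lower())


def summarize(sessions: list) -> dict:
    """Count sessions and conversions per AI engine, skipping other traffic."""
    totals = defaultdict(lambda: {"sessions": 0, "conversions": 0})
    for session in sessions:
        engine = engine_for(session["referrer"])
        if engine is None:
            continue
        totals[engine]["sessions"] += 1
        totals[engine]["conversions"] += int(session["converted"])
    return dict(totals)


# Invented sample sessions, shaped like an analytics export.
sample = [
    {"referrer": "https://chatgpt.com/", "landing": "/pricing", "converted": True},
    {"referrer": "https://www.perplexity.ai/search?q=x", "landing": "/blog/a", "converted": False},
    {"referrer": "https://www.google.com/", "landing": "/", "converted": True},
    {"referrer": "https://chatgpt.com/", "landing": "/blog/a", "converted": False},
]

report = summarize(sample)
print(report)
```

Grouping by `landing` instead of by engine gives the per-page view. The point is that a mention only becomes a channel once it is joined to sessions and conversions.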

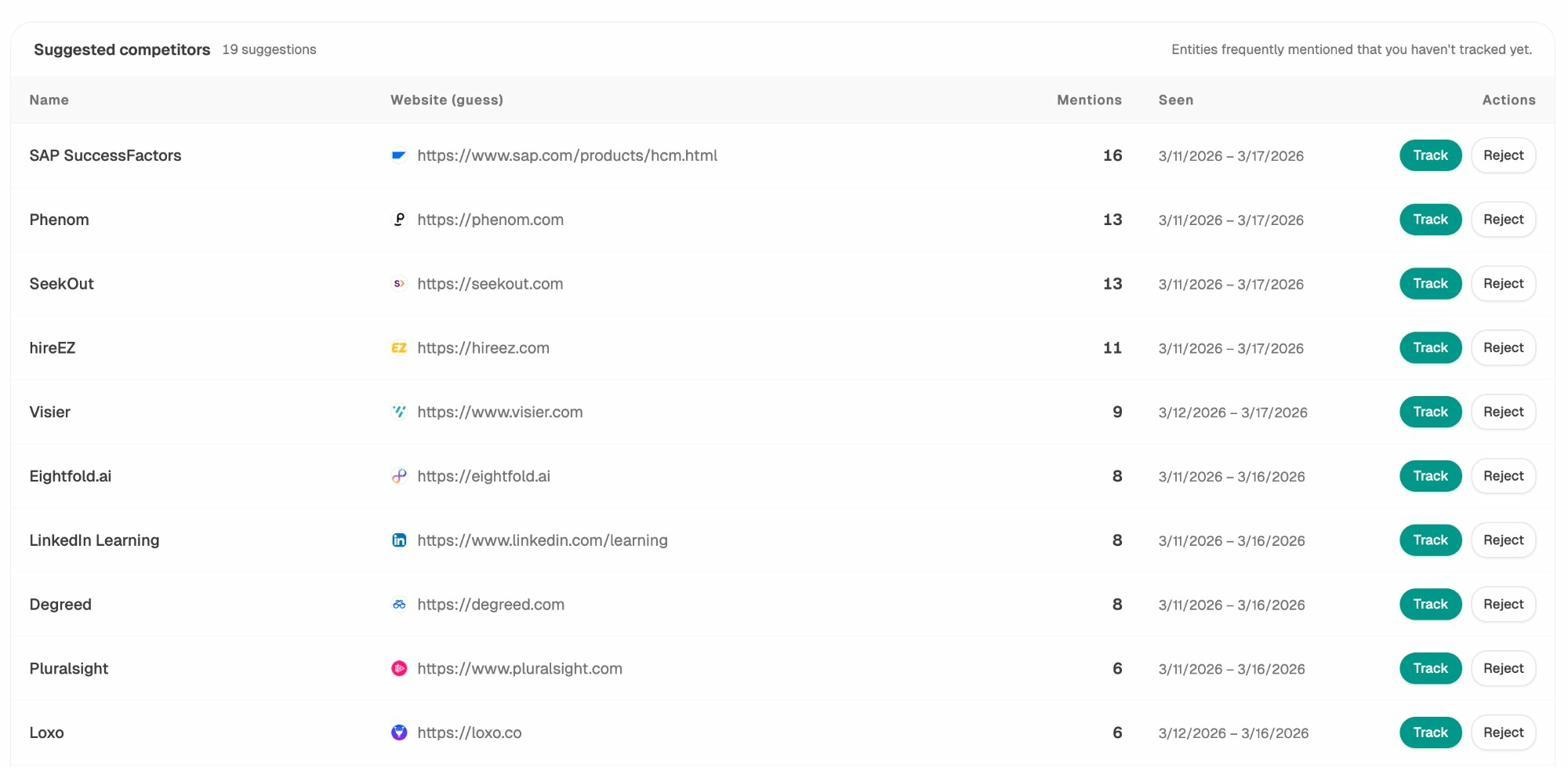

Step 3. Find where competitors win and you do not

Inside Writesonic’s GEO module, competitor data is a chart. You see who appears more often. You do not get a shippable to-do list out of it.

In Analyze AI, the competitor intelligence view runs a different play. The Suggested Competitors queue surfaces brands that AI engines are mentioning alongside you that you have not even tracked yet. One click adds them.

The Opportunities view goes further. It shows the prompts where competitors are winning and you are absent, ranked by traffic potential. That is a content brief queue, not a chart. Our guide to comparing your AI visibility against your competitors covers the full workflow.
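The logic behind such a gap list is simple enough to sketch in Python. This is an illustration of the idea, not the product's implementation. The `PromptResult` shape, the `est_monthly_traffic` field, and the brand names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class PromptResult:
    prompt: str
    brands_mentioned: Set[str]
    est_monthly_traffic: int  # hypothetical traffic-potential estimate


def opportunities(
    results: List[PromptResult], own_brand: str, competitors: Set[str]
) -> List[PromptResult]:
    """Prompts where a competitor is named and we are not, biggest first."""
    gaps = [
        r
        for r in results
        if own_brand not in r.brands_mentioned and r.brands_mentioned & competitors
    ]
    return sorted(gaps, key=lambda r: r.est_monthly_traffic, reverse=True)


# Invented sample data.
results = [
    PromptResult("best ai seo tool", {"RivalOne", "RivalTwo"}, 900),
    PromptResult("ai visibility tracker", {"OurBrand", "RivalOne"}, 1500),
    PromptResult("geo platform for agencies", {"RivalTwo"}, 2400),
    PromptResult("how to bake bread", set(), 5000),
]

queue = opportunities(results, "OurBrand", {"RivalOne", "RivalTwo"})
for item in queue:
    print(item.est_monthly_traffic, item.prompt)
```

Each row in `queue` is a prompt that a competitor already wins, which is why the output reads as a brief queue rather than a chart.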

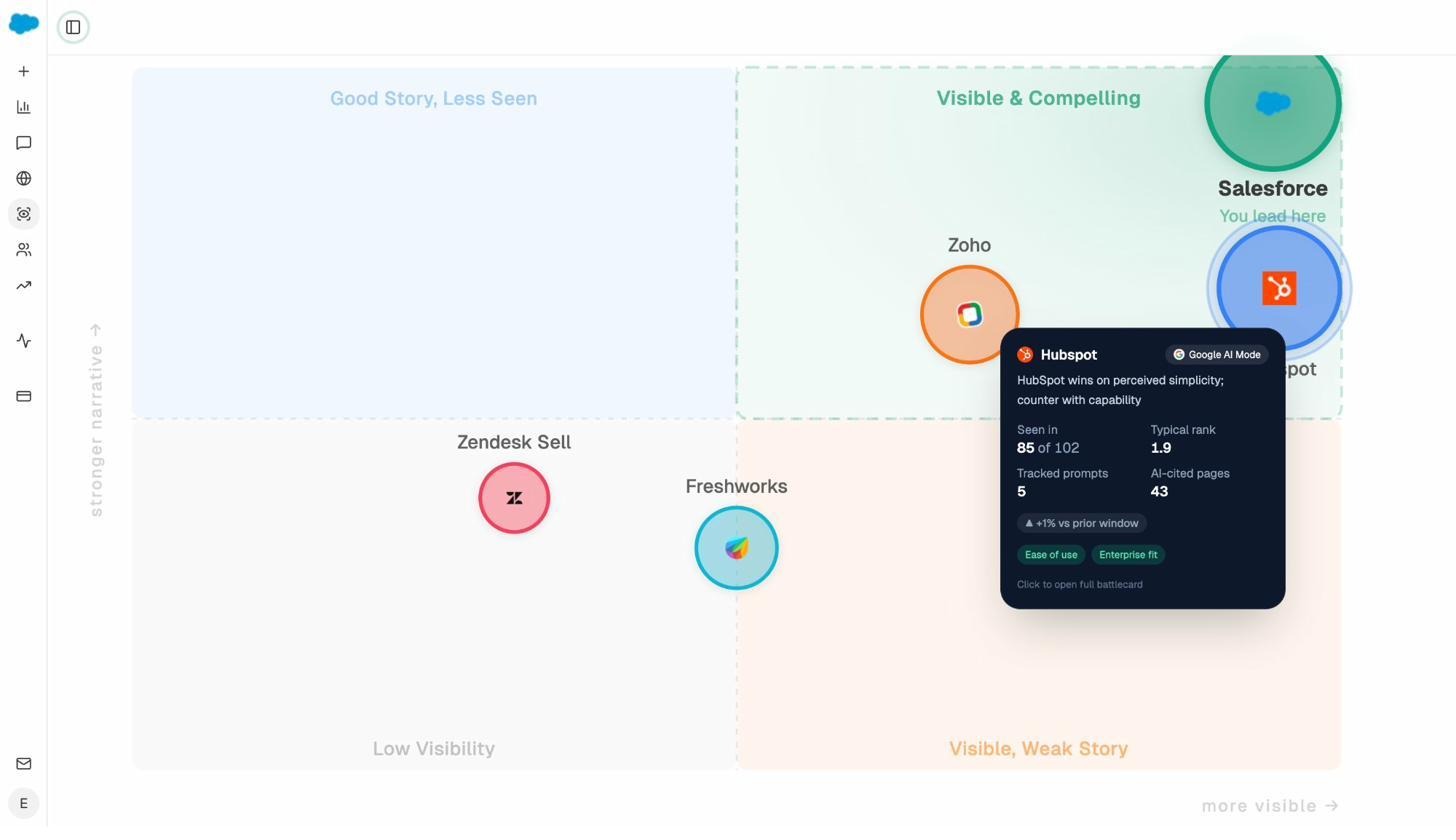

Step 4. Map perception, not just presence

The Perception Map is one thing you cannot do anywhere else. It positions every tracked brand on a quadrant of presence and narrative strength, computed from visibility, rank, sentiment, and proof signals.

If you are a CMO, this is the slide. If you are an agency presenting a quarterly report, this is the slide. Writesonic gives you a sentiment score. Analyze AI gives you the strategic narrative.
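For readers who want a mental model of a two-axis quadrant, here is a rough Python sketch. The real scoring behind the Perception Map is not published in this article, so the equal-weight averages, the 0.5 threshold, and the quadrant labels below are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class BrandSignals:
    name: str
    visibility: float  # share of tracked prompts mentioning the brand, 0..1
    rank: float        # normalised average position, 1.0 = always first
    sentiment: float   # 0..1, higher is more positive
    proof: float       # 0..1, e.g. share of mentions backed by a citation


def presence(brand: BrandSignals) -> float:
    """Assumed presence axis: equal-weight mean of visibility and rank."""
    return (brand.visibility + brand.rank) / 2


def narrative(brand: BrandSignals) -> float:
    """Assumed narrative axis: equal-weight mean of sentiment and proof."""
    return (brand.sentiment + brand.proof) / 2


def quadrant(brand: BrandSignals, threshold: float = 0.5) -> str:
    """Place a brand in one of four quadrants using a single threshold."""
    p = "high presence" if presence(brand) >= threshold else "low presence"
    n = "strong narrative" if narrative(brand) >= threshold else "weak narrative"
    return f"{p}, {n}"


# Invented sample brands.
loud = BrandSignals("LoudCo", visibility=0.8, rank=0.7, sentiment=0.3, proof=0.2)
niche = BrandSignals("NicheCo", visibility=0.2, rank=0.3, sentiment=0.9, proof=0.8)

print(loud.name, "->", quadrant(loud))
print(niche.name, "->", quadrant(niche))
```

The useful part is the separation of the two axes. A brand can be mentioned often and still carry a weak story, and a single sentiment score hides that.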

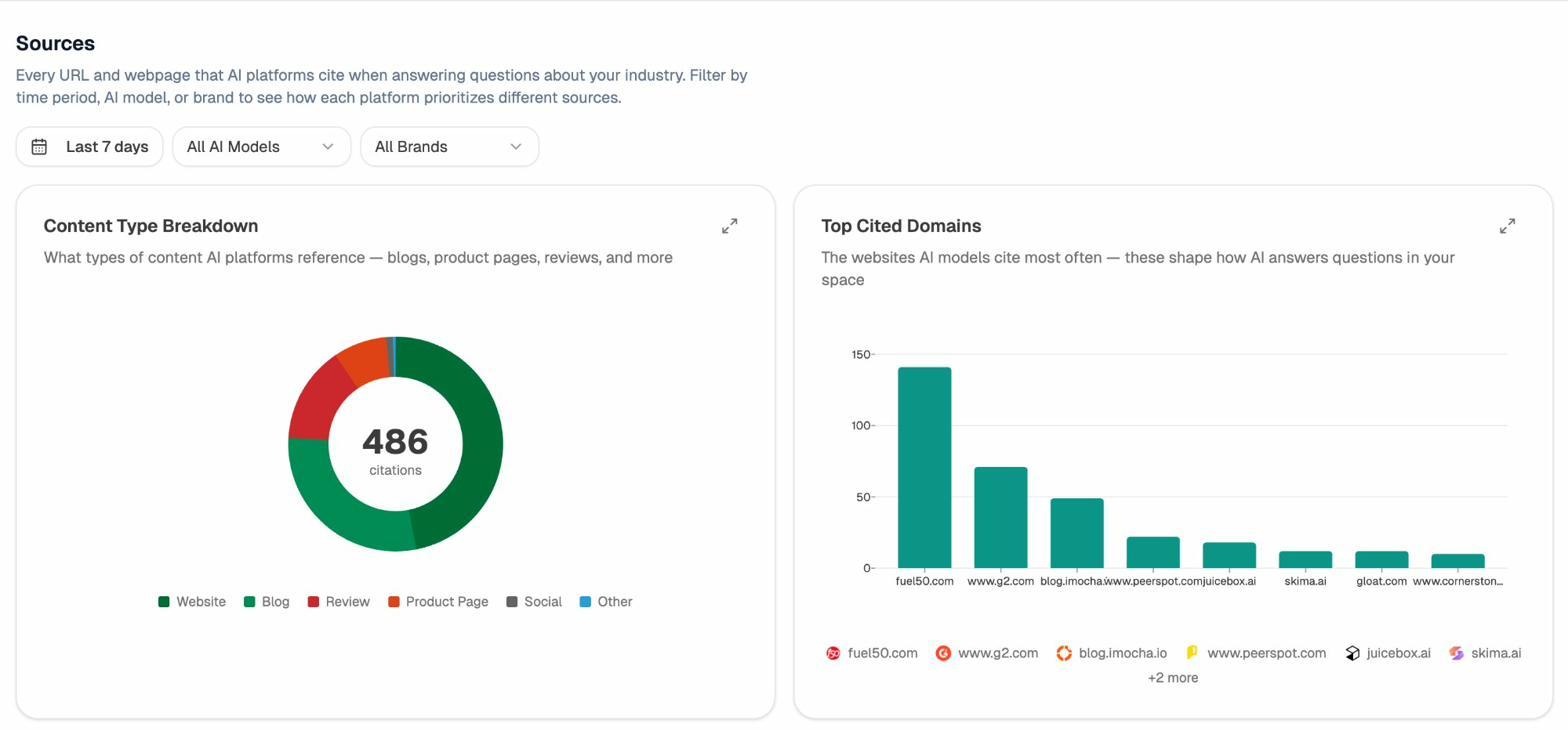

Step 5. See what gets cited and why

Writesonic’s citation view shows that you got cited. Analyze AI’s citation analytics shows what type of content AI engines actually trust in your category, broken down by source domain and content type.

That breakdown changes what you publish. If reviews dominate your category citations, you build review pages. If product pages do, you double down on product page depth.
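A breakdown like this is a simple aggregation, sketched below in Python. The citation records and the `content_type` labels are invented sample data, and the function is illustrative rather than part of either product.

```python
from collections import Counter
from typing import Dict, List


def share_by_type(citations: List[dict]) -> Dict[str, float]:
    """Share of citations per content type, most common first."""
    counts = Counter(c["content_type"] for c in citations)
    total = sum(counts.values())
    return {kind: n / total for kind, n in counts.most_common()}


# Invented sample: which kinds of pages AI engines cited in a category.
citations = [
    {"domain": "reviews.example", "content_type": "review"},
    {"domain": "vendor.example", "content_type": "product"},
    {"domain": "reviews.example", "content_type": "review"},
    {"domain": "blog.example", "content_type": "blog"},
]

shares = share_by_type(citations)
dominant = next(iter(shares))

for kind, share in shares.items():
    print(f"{kind}: {share:.0%}")
print("Publish more of:", dominant)
```

Swapping `content_type` for `domain` in the counter gives the per-source view of the same data.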

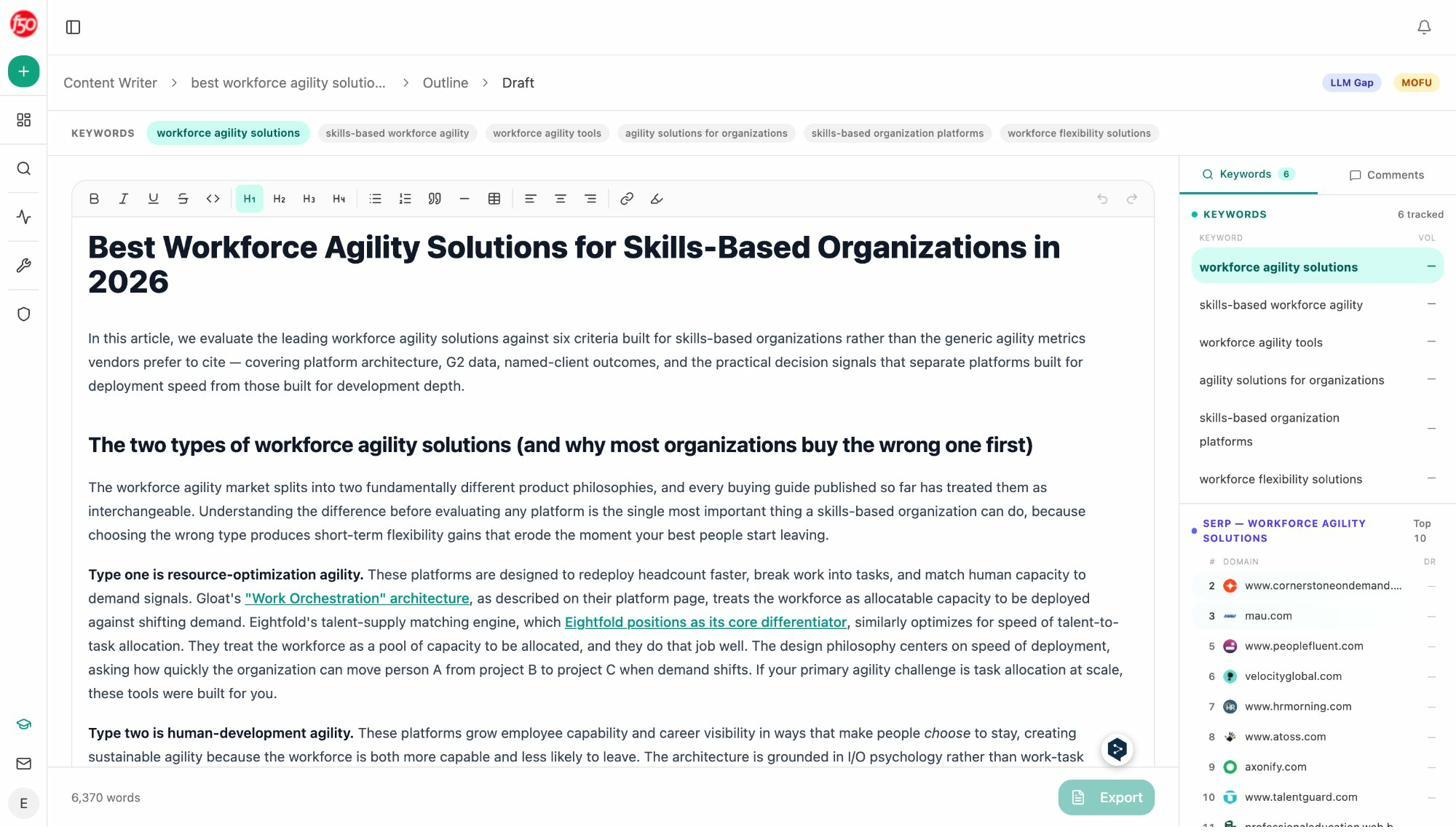

Step 6. Write content that ranks in both Google and AI engines

Writesonic’s article writer optimizes for word count and keyword density. The Analyze AI Content Writer optimizes for the work product. It runs a four-step pipeline of research, outline, draft, and inline keyword tracking, with editorial-grade comments on every section.

The Writer is wired into the brand vault so tone, claims, and required phrases are injected automatically, and the QA layer flags AI-speak patterns before publish. In the head-to-head, draft quality was the largest single difference between the two tools. The content briefs guide walks through the brief stage in detail.

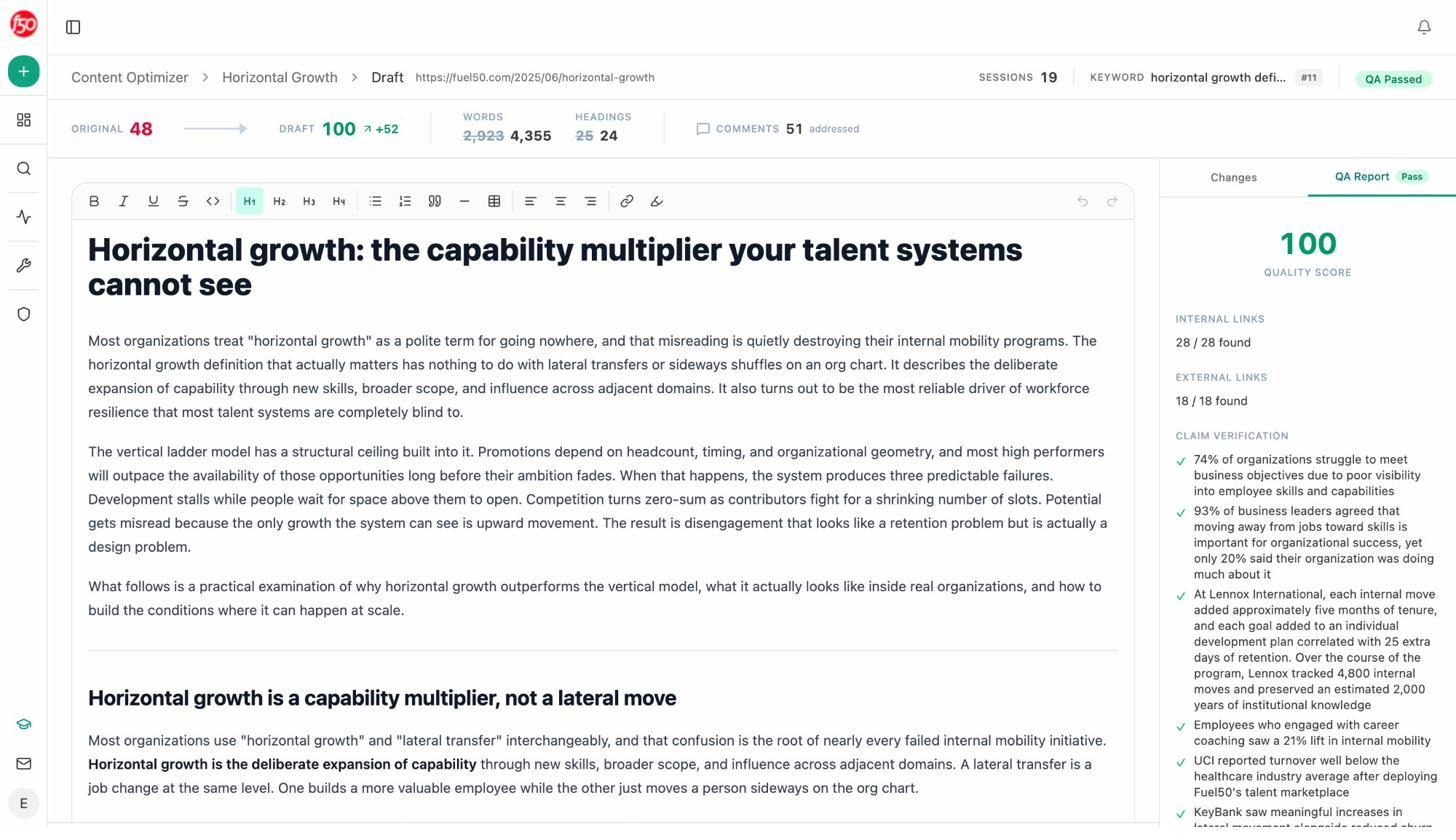

Step 7. Optimize existing content at scale

Most teams have hundreds of pages losing rankings or AI citations and no clean way to triage. The Analyze AI Content Optimizer fetches your live URL, scores it against argument flow, clarity, and AEO readiness, generates a rewrite with a QA gate, and ships back a draft with claim verification and link audit reports built in.

Every claim gets verified. Every internal and external link gets resolved. If the rewrite drops a citation source, you see it before publish. The content optimization guide walks through the full loop.
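One of those checks, catching a dropped citation source, can be approximated with a short Python sketch. This is a simplified stand-in, not the Content Optimizer's actual QA gate. It compares only the domains of plain `http(s)` links, and the function names are hypothetical.

```python
import re
from typing import Set
from urllib.parse import urlparse

# Matches bare and markdown-style links; stops at whitespace or closing brackets.
URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")


def cited_domains(text: str) -> Set[str]:
    """Collect the hostnames of every http(s) link found in the text."""
    domains = set()
    for url in URL_PATTERN.findall(text):
        host = urlparse(url.rstrip(".,;")).hostname
        if host:
            domains.add(host.lower())
    return domains


def dropped_sources(original: str, rewrite: str) -> Set[str]:
    """Domains cited in the original that no longer appear in the rewrite."""
    return cited_domains(original) - cited_domains(rewrite)


def passes_gate(original: str, rewrite: str) -> bool:
    """The rewrite passes only if no cited source was lost."""
    return not dropped_sources(original, rewrite)


original = "See [the study](https://example.org/report) and https://example.com/data."
rewrite = "See https://example.com/data for details."

print(dropped_sources(original, rewrite))
print(passes_gate(original, rewrite))
```

A gate like this runs before publish, so a rewrite that silently loses a source is held for review instead of shipping.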

Step 8. Run the rest of your content and SEO ops on autopilot

This is the part Writesonic cannot match.

The Agent Builder gives you 180+ production-ready nodes spanning AI models, web research, GA4, GSC, Semrush, DataForSEO, HubSpot, Notion, WordPress, and Mailchimp, plus 34 pre-built data recipes wired to your visibility data, GA4, GSC, and brand vault.

The point is not that you can build agents. The point is what you can build them for.

| Agent | Trigger | What it does |
|---|---|---|
| Monday board prep | Schedule | Pulls AI visibility delta, GA4 traffic, new HubSpot deals, drafts an executive summary, exports a DOCX, emails leadership |
| Content refresh fleet | Schedule, weekly | Finds declining pages, rewrites for freshness and AEO in brand voice, opens a WordPress draft for review |
| Crisis playbook | Webhook from media monitoring | Flags negative coverage, identifies the journalist, drafts response options in brand voice, Slacks the comms team |
| Brief-to-publish pipeline | Webhook from Notion | Generates research, outline, full draft with brand vault, scores against AEO, only publishes if quality gate passes |
| Inbound lead enrichment | Webhook from Typeform | Verifies email, runs domain overview, Lighthouse audit, recent news, upserts to HubSpot, Slacks the AE |
| Closed deal to case study | Webhook from HubSpot | Pulls deal notes, drafts a case study in your format, opens it in Notion for legal review |

Writesonic produces an article on demand. Analyze AI produces an article when a competitor publishes one against you, when a deal closes, when a page starts losing citations, or when leadership asks at 9am Monday for a visibility update. The difference is the trigger. The SEO automation tools breakdown lays out specific workflows.

Writesonic vs Analyze AI Side by Side

| Capability | Writesonic | Analyze AI |
|---|---|---|
| Entry price for AI search tracking | $199-$249/mo | Lower entry tier with full feature parity |
| GA4-attributed AI traffic | No, separate dashboard only | Native, sessions, conversions, revenue per engine |
| Prompt-level citation source mapping | Limited | Full, per page, per engine |
| Perception quadrant view | No | Yes, with battlecards |
| Content writer with QA gate | No formal gate | Claim verification, link audit, AEO scoring |
| Programmable agents and triggers | No | 180+ nodes, scheduled and webhook agents |
| Live chat support on paid plans | No | Yes |
| Pricing simplicity | Tiered with feature lockouts | Cleaner unlock at the entry plan |

For a feature-by-feature breakdown, our Analyze AI vs Writesonic GEO comparison page goes into the full set.

When Writesonic Genuinely Fits

There are real teams Writesonic is the right tool for. If your team is one or two writers producing fast first drafts on traditional SEO topics, the Lite or Standard plan is a credible choice. If your only AI visibility need is a baseline number for a quarterly slide, Professional gets you there. If you live inside Writesonic’s writer already and the team has not asked for automation, the inertia cost of switching may outweigh the cost of staying.

When You Should Pick Analyze AI Instead

Analyze AI is the better choice when any of the following are true.

- You need AI traffic that ties back to GA4 sessions and conversions.
- You manage more than five tracked competitors and need a perception map, not just a sentiment chart.
- You operate more than 50 pages and need a content optimizer that can score, rewrite, and QA at scale.
- You want recurring SEO and content work to run on a schedule, not on a human’s calendar.
- You are an agency producing client reports and your reporting day is currently a person job.

In all of those cases, the architecture is the deciding factor. Writesonic is a tool you click. Analyze AI is a substrate you program.

Final Verdict

Writesonic in 2026 is a competent multi-purpose tool that works best for small teams who need fast first drafts and a baseline AI visibility view. Above $249 a month, the value gets harder to defend. The GEO module is shallower than dedicated AI search platforms, the writer still requires heavy editing, and there is no programmable layer to absorb recurring work.

If you are a solo founder or a one-writer team, Writesonic Lite or Standard is reasonable. If you are a content team, an agency, or a marketing org running real AI search programs, Analyze AI is the better fit. You will spend less time clicking, more time shipping, and you will end up with attribution data Writesonic does not produce.

The fastest way to decide is to run the same question through both. Track 10 prompts in each. Write the same article with each writer. Audit the same page with each optimizer. Then see which dashboard your CMO actually opens on Monday morning.

Ernest

Ibrahim
