In this article, you’ll get a side-by-side breakdown of seven LLMrefs alternatives, including what each one actually does well, where each falls short, and which one fits your team’s goals. You’ll also see exactly how Analyze AI goes beyond mention tracking to connect AI visibility to real traffic, revenue, and action across your entire marketing operation.

Quick Comparison Table

| Tool | Starting Price | AI Engines | Best For | Biggest Limitation |
|---|---|---|---|---|
| Analyze AI | Contact for pricing | ChatGPT, Perplexity, Claude, Copilot, Gemini | Teams that need AI traffic attribution, content creation, and workflow automation in one platform | Learning curve for teams using only monitoring features |
| AthenaHQ | $295/mo (credit-based) | 8 LLMs | Enterprise teams wanting guided optimization tasks | Credits deplete fast with multi-engine tracking |
| Otterly AI | $29/mo | 4 core + 2 add-ons | PR and comms teams needing fast alerts | No traffic attribution, no content tools |
| Peec AI | ~$89/mo | 5-6 LLMs + AI Overviews | Agencies wanting clean dashboards without complexity | No prescriptive recommendations |
| Rankability | Part of SEO suite | ChatGPT, Gemini, Claude, Perplexity | Teams already in the Rankability ecosystem | Locked into their SEO suite |
| Scrunch AI | ~$250/mo | 7+ LLMs | Enterprises focused on AI readability audits | Expensive, steep learning curve |
| SE Ranking | Low-cost add-on | Google AI Overviews only | Solo marketers testing GEO cheaply | Google-only, no ChatGPT or Perplexity tracking |

1. Analyze AI: The Agentic Platform for SEO, AEO, Content, and GTM Ops

Most GEO tools stop at visibility scores. You get a chart showing your brand appeared in 18% of responses. But that number tells you nothing about whether those mentions sent anyone to your site, whether those visitors converted, or which pages to improve next.

Analyze AI closes that gap. The platform connects AI visibility data to GA4 traffic, landing pages, and conversions so you can see exactly which engines drive pipeline and which prompts are worth investing in. And it goes further than any tool on this list by giving you the infrastructure to actually act on what you find.

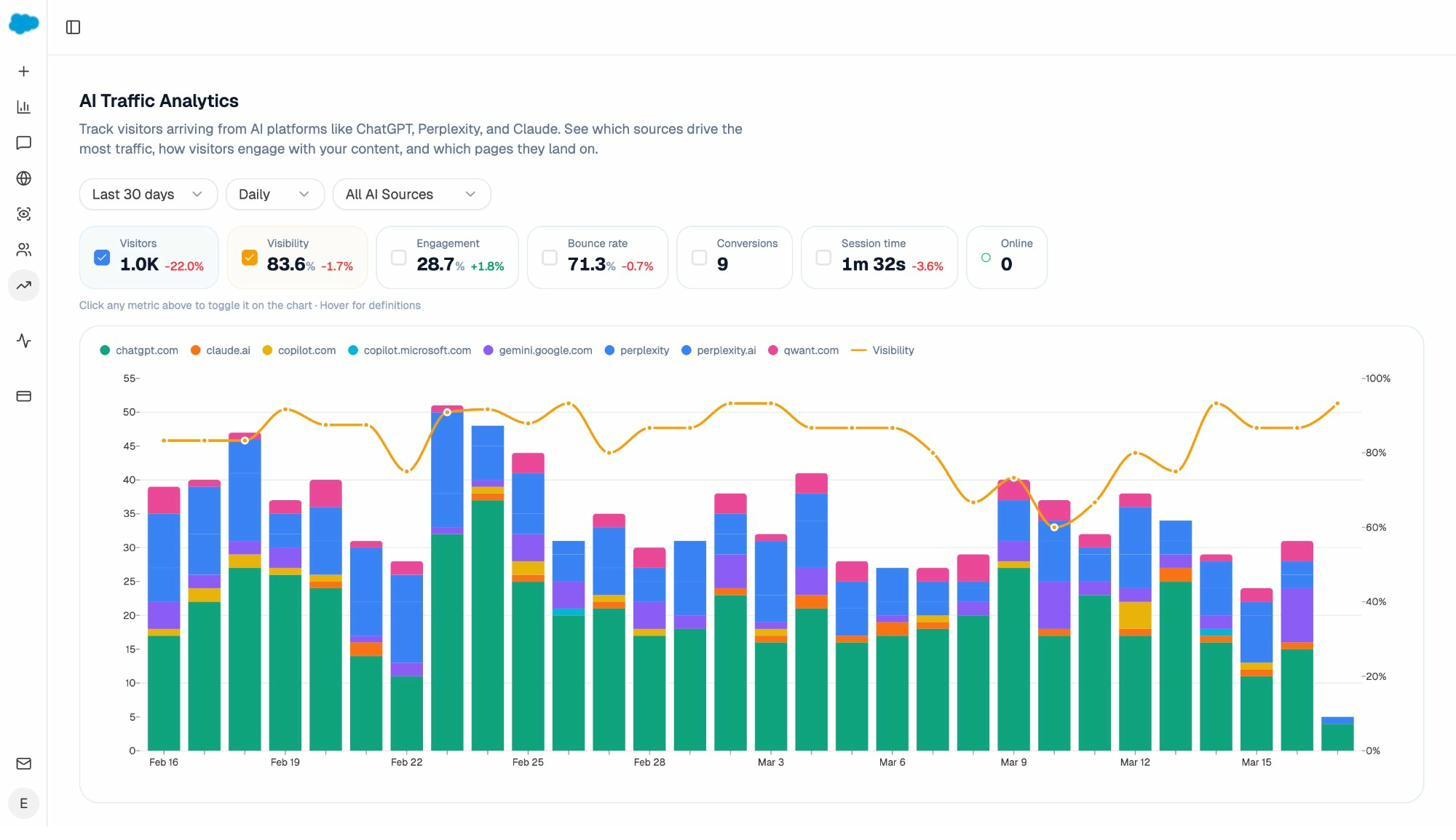

Track AI Traffic Down to the Landing Page

The AI Traffic Analytics dashboard breaks down every session from ChatGPT, Perplexity, Claude, Copilot, and Gemini. You see session volume per engine, month-over-month trends, and the exact landing pages those visitors hit. When your product comparison page gets 50 sessions from Perplexity with a 12% conversion rate while an old blog post gets 40 sessions from ChatGPT with zero conversions, you know exactly where to focus.
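The comparison in that example comes down to a conversion rate per landing page and engine. Here is a minimal Python sketch, assuming you have exported session and conversion counts yourself. The `PageStats` record, its field names, and the page paths are hypothetical and are not part of Analyze AI's product or API:

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    """Hypothetical record for one landing page and one AI engine."""
    page: str
    engine: str
    sessions: int
    conversions: int

    @property
    def conversion_rate(self) -> float:
        # Guard against pages that received no sessions.
        return self.conversions / self.sessions if self.sessions else 0.0


def rank_by_conversion(rows: list[PageStats]) -> list[PageStats]:
    """Sort pages so the best-converting AI traffic comes first."""
    return sorted(rows, key=lambda r: r.conversion_rate, reverse=True)


# Figures from the example above: 50 Perplexity sessions at 12% (6 conversions)
# versus 40 ChatGPT sessions with none. The page paths are made up.
rows = [
    PageStats("/blog/old-post", "ChatGPT", 40, 0),
    PageStats("/compare/product", "Perplexity", 50, 6),
]

for row in rank_by_conversion(rows):
    print(f"{row.page} ({row.engine}): {row.conversion_rate:.0%}")
```

Ranking by rate rather than raw session count is what surfaces the comparison page ahead of the blog post, even though the two get similar traffic.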

No other tool on this list gives you this level of attribution. LLMrefs shows that you were mentioned. Analyze AI shows that 248 sessions came from ChatGPT last month, landed on three specific pages, and generated $14,000 in pipeline.

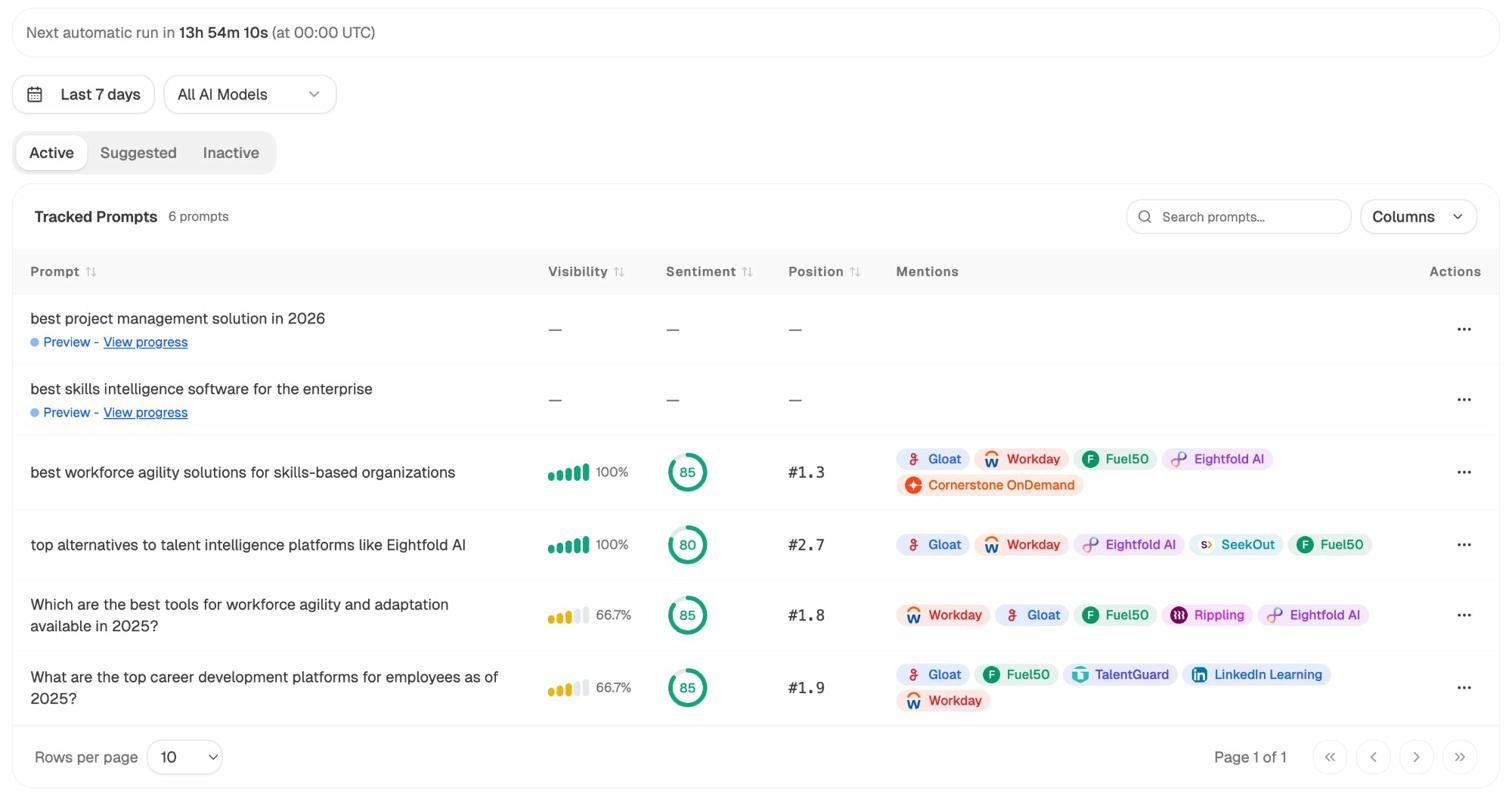

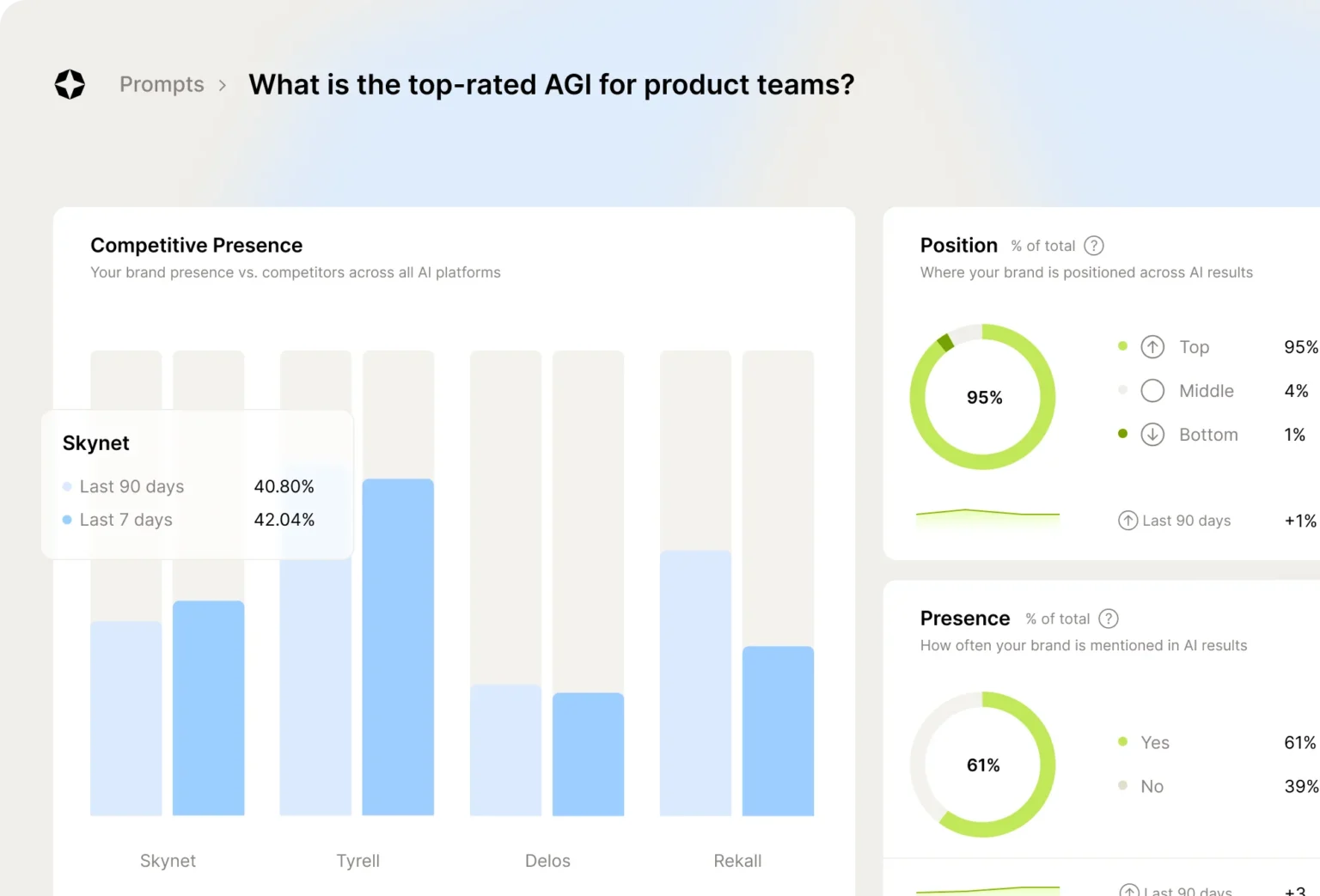

Monitor Prompts, Sentiment, and Competitive Gaps

The Prompt Tracking module monitors specific queries across all major LLMs. For each prompt, you see your brand’s visibility percentage, position relative to competitors, and sentiment score.

Not sure which prompts to track? The Prompt Discovery feature suggests bottom-of-funnel queries your buyers are actually asking in AI engines. This solves a problem every monitoring tool has: you can only track the prompts you think to add. Analyze AI fills the blind spots by surfacing the prompts you should have been watching.

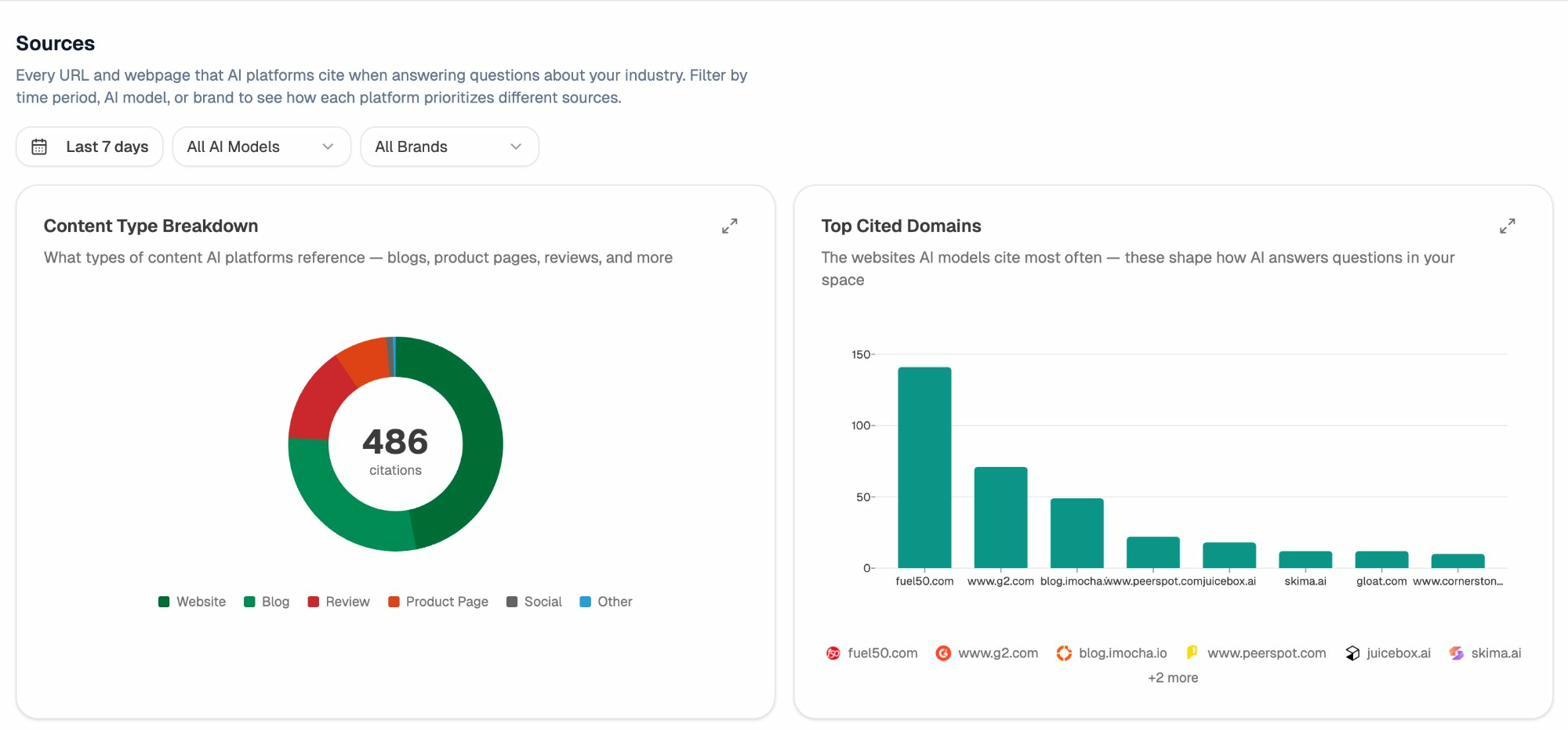

Citation Analytics shows which domains and URLs models cite in your category. You can see exactly which sources shape AI answers and where your own coverage needs to improve. Instead of generic link building, you target the specific domains that models trust.

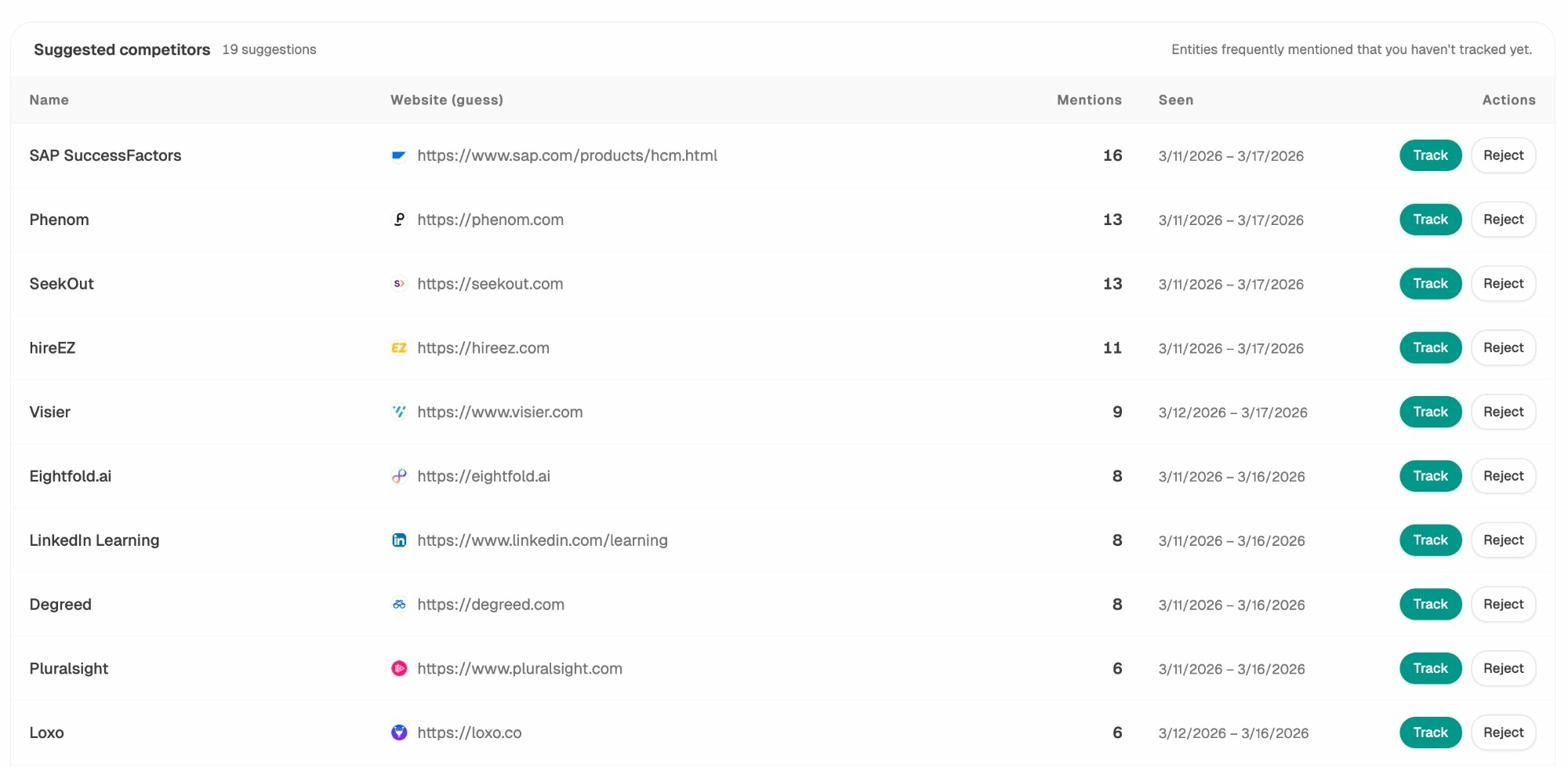

The Competitor Intelligence dashboard puts it all in context. You see where competitors outrank you in AI search, which prompts they win, and which sources give them an advantage.

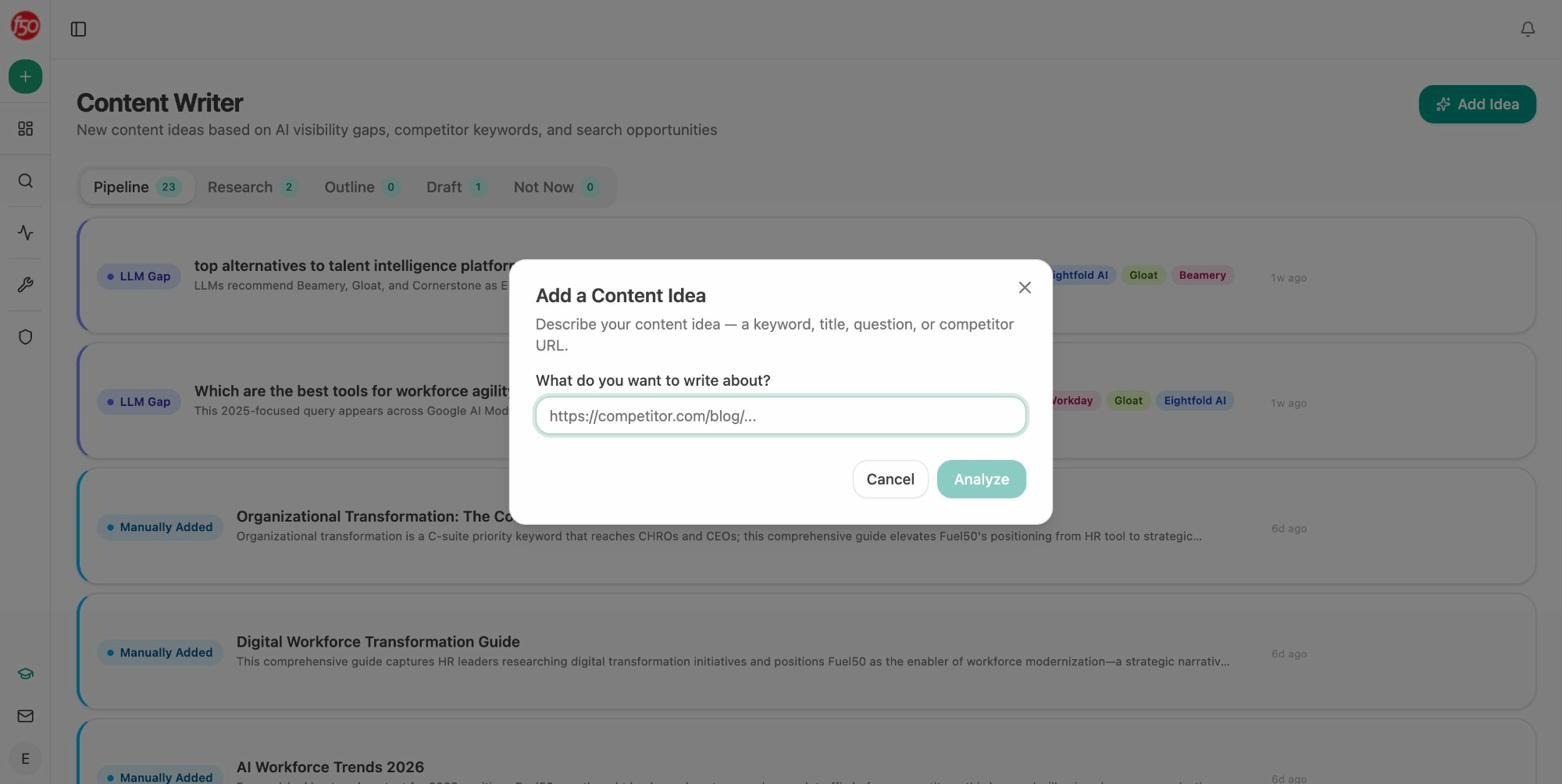

Create and Optimize Content That Gets Cited

This is where Analyze AI separates itself from every other tool on this list. LLMrefs, AthenaHQ, Otterly, Peec, and the rest tell you where you are visible. None of them helps you create the content that earns visibility.

Analyze AI’s Content Writer takes you from idea to research to outline to full draft. Each step pulls in AI visibility gaps, competitor analysis, and editorial comments from the platform’s strategist so the output is built for citability from the start.

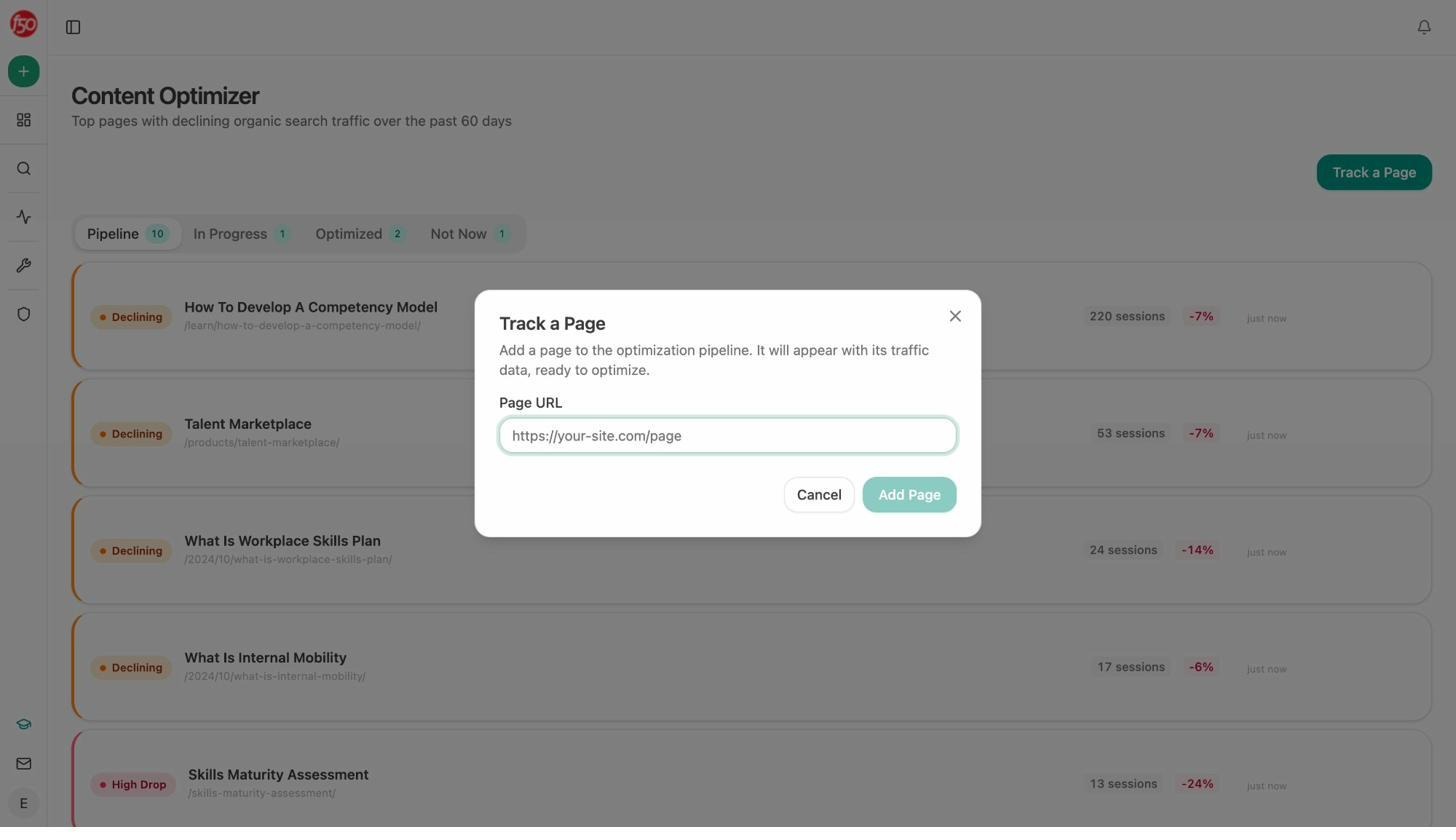

The Content Optimizer audits existing pages. Paste a URL. Get a content score, argument gaps, and line-by-line suggestions that make the page visible to both AI engines and traditional search.

Both tools pull from your Knowledge Base, which stores brand voice, messaging rules, proof points, and differentiators. Every draft or optimization stays on-brand without you copying and pasting style guides.

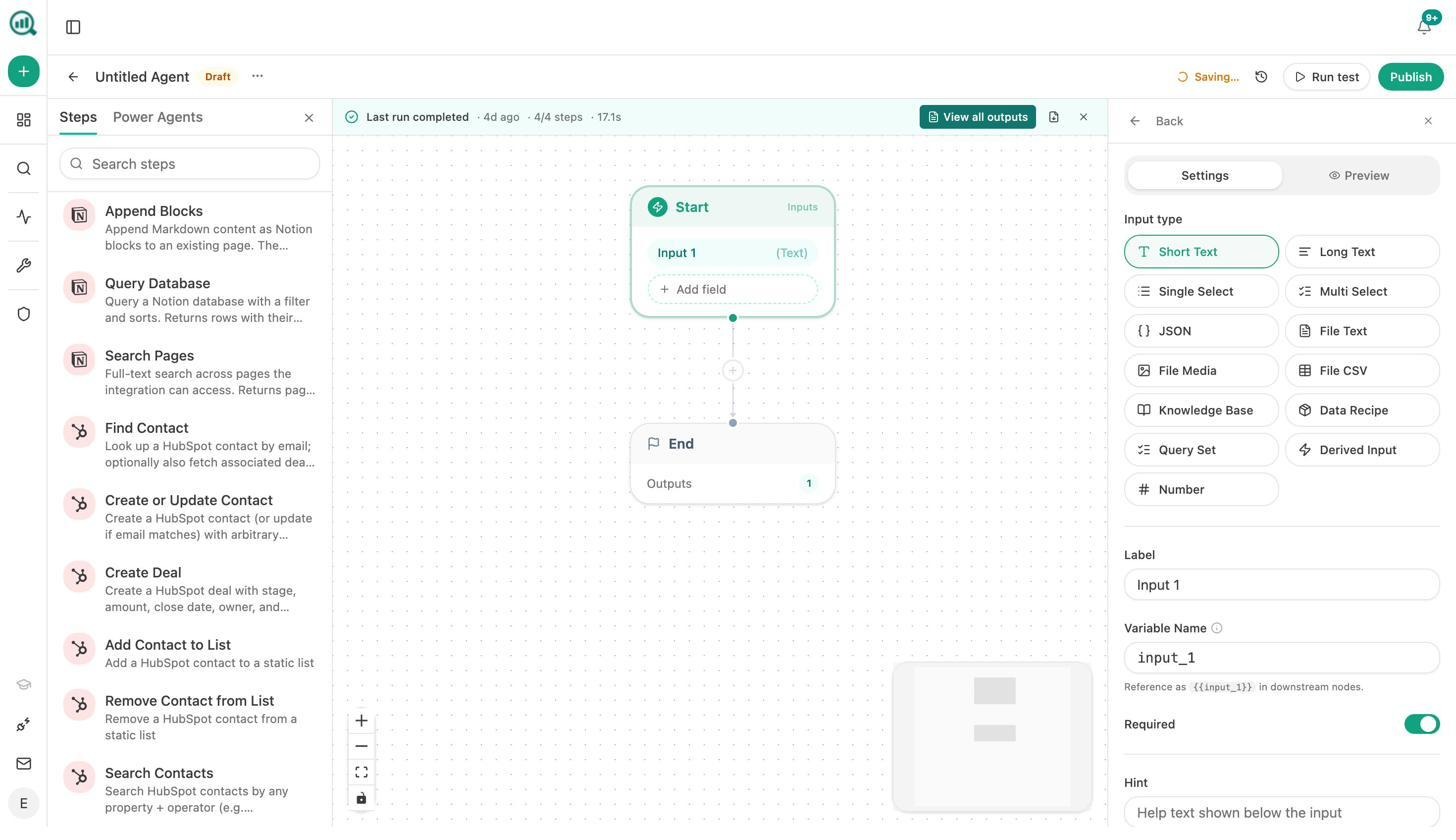

Automate Your Entire Marketing Operation with the Agent Builder

The Agent Builder is the reason Analyze AI is not just another monitoring tool. It is a programmable platform with 180+ nodes, 34 pre-built data recipes, 13 input primitives, and three trigger modes (manual, scheduled, webhook).

It connects directly to GA4, Google Search Console, DataForSEO, Semrush, HubSpot, Notion, WordPress, and every major LLM. That means you can build workflows that would normally require stitching together Zapier, Make, a spreadsheet, and three different dashboards.

Here is what teams actually build with it:

For content teams: A brief-to-publish pipeline that generates research, builds an outline, writes a full draft with brand voice injected, runs an AEO content scorecard, and publishes to WordPress if the score passes. If it fails, it sends the writer the gaps in Slack. One workflow replaces six manual steps.

For agencies: A Monday client briefing that runs at 7am every week. It pulls AI visibility data, GSC rankings, new backlinks, and competitor movement for every client, assembles each report in parallel, and emails each account manager. Reporting day stops existing.

For CMOs: A scheduled executive summary that runs on the first of every month. It pulls share of voice, sentiment trends, top sources, and competitive shifts, shapes them into a one-pager, and sends it to the leadership distribution list. No chasing analysts for numbers.

For PR teams: A crisis early-warning system that checks brand mentions every 15 minutes. If sentiment drops below a threshold and reach is above a threshold, it sends an alert to Slack and drafts three response options instantly.
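The early-warning rule in the PR example is a two-condition check. A rough Python sketch of that logic follows. The function name, the sentiment scale (from -1 to 1), and the threshold defaults are illustrative assumptions, not values or APIs documented by Analyze AI:

```python
from dataclasses import dataclass


@dataclass
class MentionSnapshot:
    """Hypothetical summary of brand mentions from one 15-minute check."""
    sentiment: float  # assumed scale: -1.0 (negative) to 1.0 (positive)
    reach: int        # assumed: estimated audience size of the mentions


def should_alert(
    snapshot: MentionSnapshot,
    sentiment_floor: float = -0.3,  # placeholder threshold
    reach_floor: int = 10_000,      # placeholder threshold
) -> bool:
    """Alert only when sentiment is below its floor AND reach is above its floor."""
    return snapshot.sentiment < sentiment_floor and snapshot.reach > reach_floor


# Negative and widely seen: alert. Negative but barely seen: no alert.
print(should_alert(MentionSnapshot(sentiment=-0.6, reach=50_000)))
print(should_alert(MentionSnapshot(sentiment=-0.6, reach=500)))
```

Requiring both conditions keeps a single negative mention with little reach from paging the team.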

The difference between Analyze AI’s Agent Builder and other tools’ “workflow” features is scope. Other tools let you set up alerts. Analyze AI lets you build the entire system that detects, researches, creates, reviews, publishes, and distributes without a human touching it until it needs judgment.

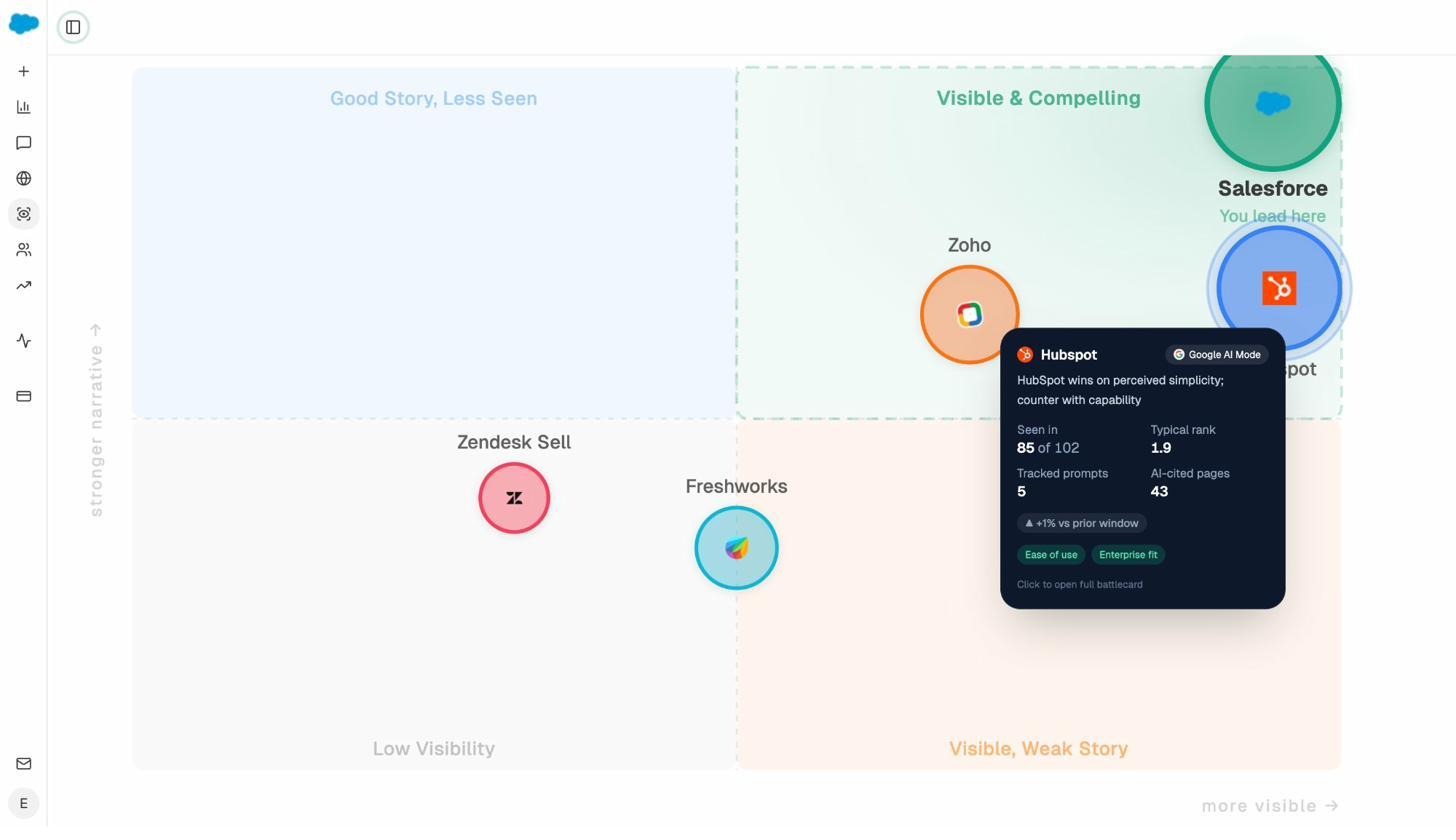

Govern Brand Perception Across AI

The Perception Map positions all tracked brands on a quadrant (presence vs. narrative strength) so you can see where you stand relative to competitors at a glance. The AI Battlecards give your team the competitive context and counter-strategies they need for sales calls and content planning. And Weekly Email Digests surface priority actions every Monday without anyone logging in.

Why pick Analyze AI over LLMrefs: LLMrefs tracks whether you got mentioned. Analyze AI tracks it, attributes it to traffic and revenue, helps you create the content that earns more mentions, and automates the workflows around all of it. If your team needs to prove AI search ROI to leadership and actually improve your numbers, not just report them, Analyze AI is the strongest option on this list.

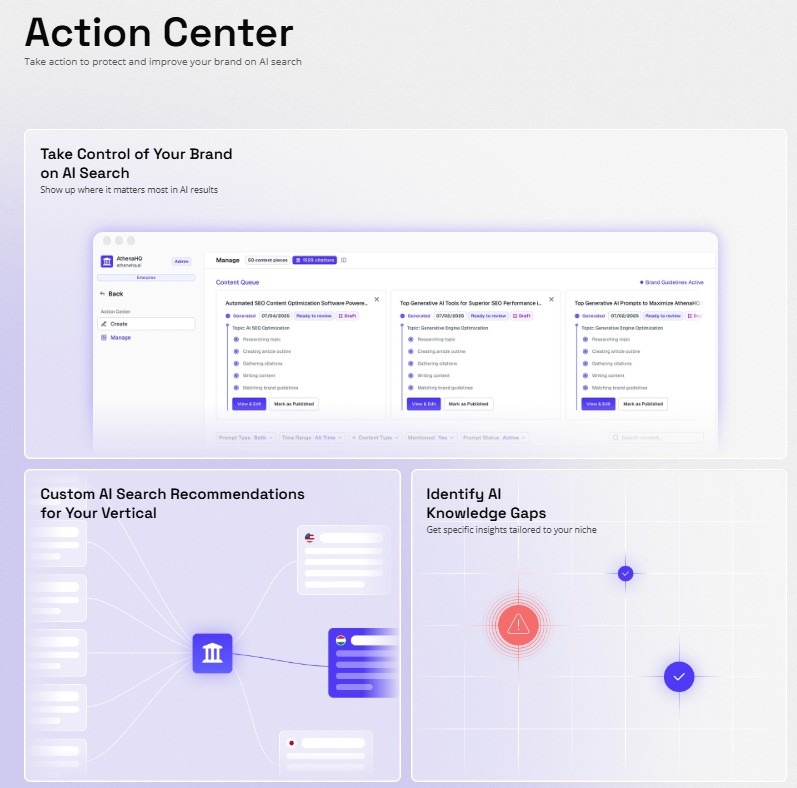

2. AthenaHQ: Best for Enterprise Teams Wanting Guided Optimization

AthenaHQ stands out from LLMrefs because it does not just show you the problem. Its Action Center turns audit findings into clear, prioritized tasks with owners and deadlines.

The platform tracks visibility across 8 LLMs, maps competitive share of voice by topic and market, and monitors sentiment and citation context. For enterprise teams managing multiple products or regions, the portfolio-level views help leaders see progress without digging through raw data.

The main trade-off is cost and complexity. AthenaHQ starts at $295/month on a credit-based model. Every tracked query, every analytics action draws from the same credit pool. Teams that want to increase monitoring cadence or track more competitors can blow through their allocation fast. The best features (Citation Engine, API access, BI integrations) are locked behind the Enterprise tier, which starts above $2,000/month.
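To see why a shared credit pool drains quickly, it helps to run the arithmetic. The sketch below assumes, purely for illustration, that each prompt checked on each engine costs one credit per run. AthenaHQ's actual credit costs are not stated here, so every number is a placeholder:

```python
def monthly_credits(prompts: int, engines: int, runs_per_month: int,
                    credits_per_check: int = 1) -> int:
    """Estimate monthly credit use under a one-credit-per-check assumption."""
    return prompts * engines * runs_per_month * credits_per_check


# Placeholder scenario: 100 prompts tracked across 8 engines.
weekly = monthly_credits(prompts=100, engines=8, runs_per_month=4)
daily = monthly_credits(prompts=100, engines=8, runs_per_month=30)
print(weekly, daily)  # 3200 24000
```

In this scenario, moving from weekly to daily checks multiplies usage by 7.5 before any extra competitors or analytics actions are added.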

Why pick AthenaHQ over LLMrefs: You need structured “find and fix” workflows for a large team. You have the budget and want prescriptive next steps, not just charts.

Why LLMrefs may still work better: You need simple tracking at a predictable cost without credit math.

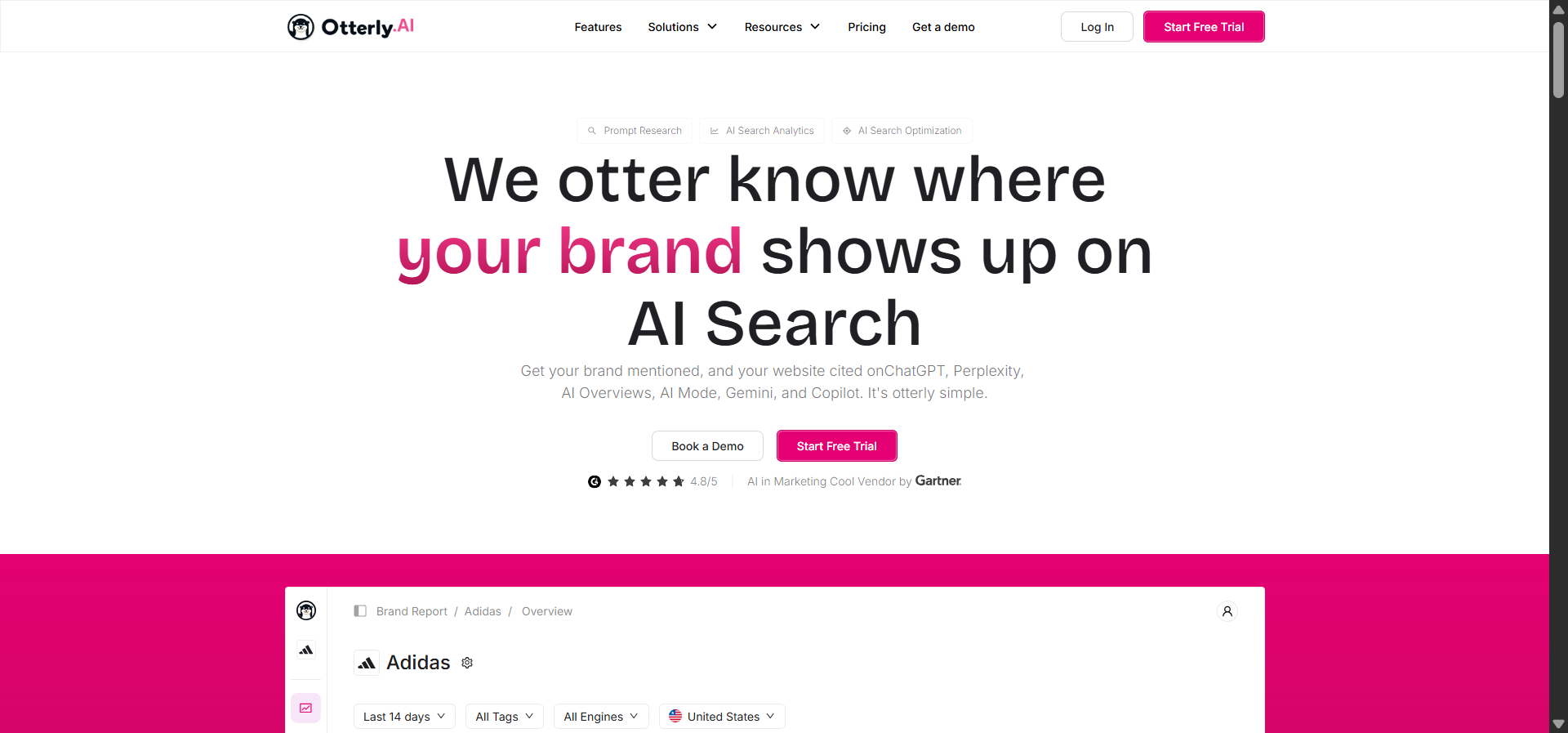

3. Otterly AI: Best for PR Teams That Need Fast Alerts

Otterly AI is the simplest tool on this list. It scans AI responses across ChatGPT, Perplexity, Google AI Overviews, and Bing AI, flags when your brand appears, and sends automated weekly reports. Setup takes minutes.

The platform’s strength is sentiment analysis. It evaluates whether AI responses frame your brand positively, negatively, or neutrally. This makes it useful for comms and PR teams who care about narrative quality, not just mention frequency.

At $29/month for the Lite plan, Otterly is the cheapest entry point to AI visibility monitoring. The limitation is depth. It does not connect mentions to traffic or conversions. It does not help you create content. It does not tell you why you are not being cited or what to change. It watches and reports.

Why pick Otterly over LLMrefs: You want the lowest-cost path to automated AI brand monitoring with sentiment context.

Why LLMrefs may still work better: You need broader engine coverage (LLMrefs tracks 11+ platforms) and more detailed competitor data.

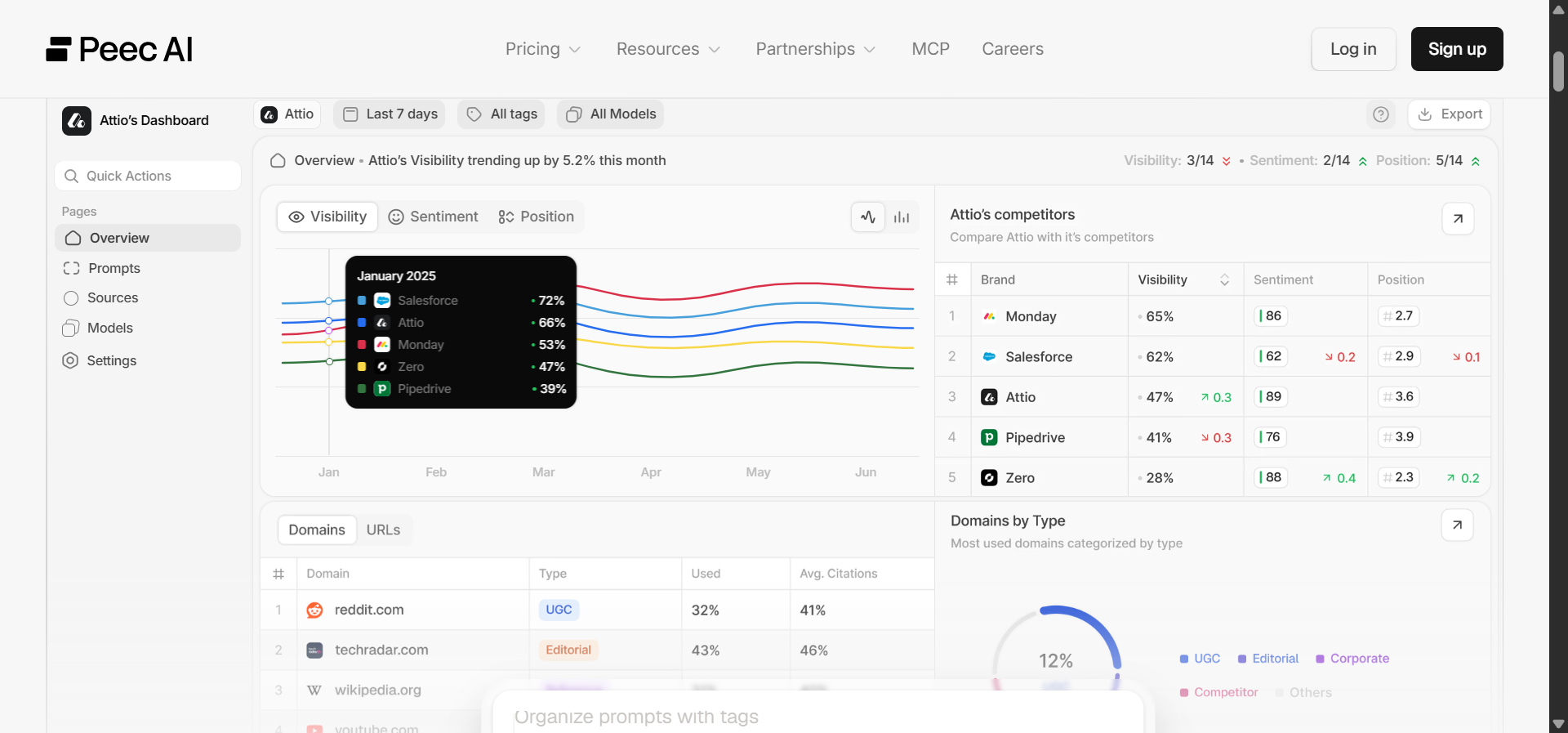

4. Peec AI: Best for Agencies Wanting Clean Dashboards

Peec AI sits between lightweight monitors like Otterly and enterprise platforms like AthenaHQ. It tracks visibility across 5-6 LLMs plus AI Overviews, shows prompt-level insights, and maps citation sources. The interface is clean enough that you can show dashboards to clients without explanation.

Starting at about $89/month with unlimited seats, Peec makes economic sense for agencies managing multiple brands. The data is clear. The dashboards are presentation-ready. The pricing scales without per-user charges.

The gap is on the action side. Peec shows you where you are visible and why. It does not prescribe what to do about it. If your team is confident interpreting data and building its own optimization playbook, that is fine. If you want the platform to hand you next steps, you will hit the ceiling fast.

Why pick Peec over LLMrefs: You want better UI clarity, prompt-level context, and agency-friendly pricing.

Why LLMrefs may still work better: You want broader engine coverage and more detailed export options.

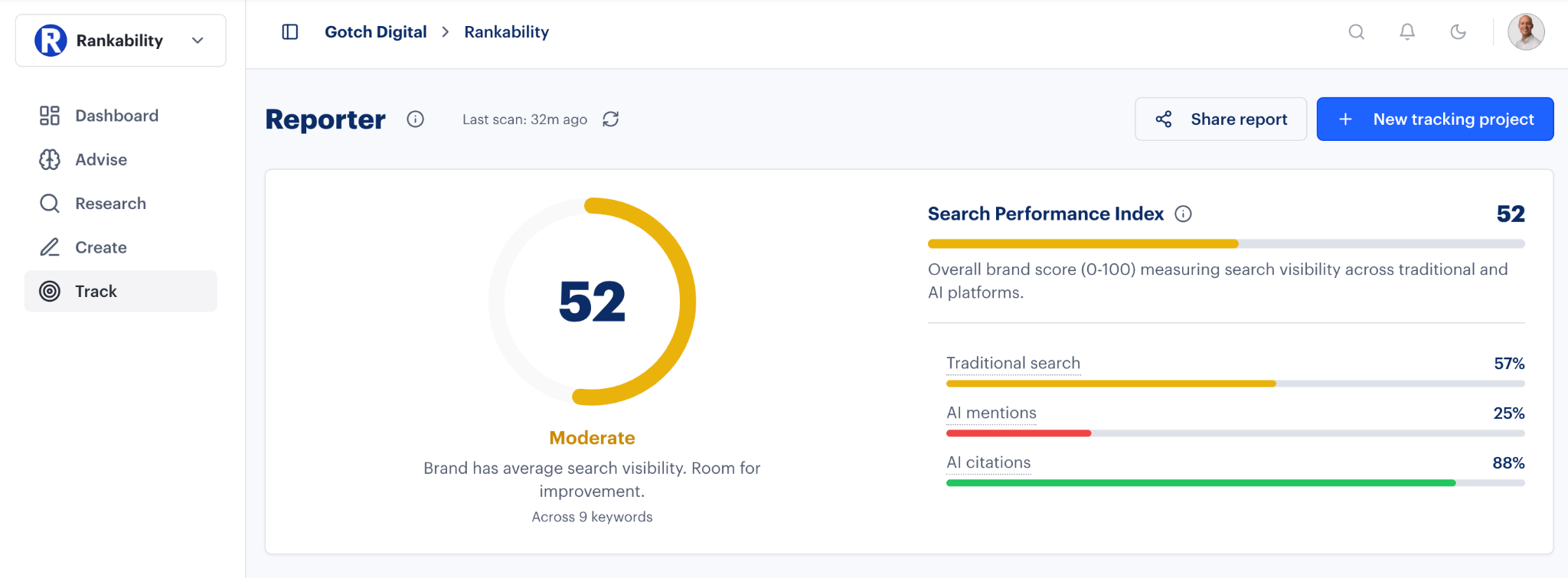

5. Rankability AI Analyzer: Best for Teams Already in the Rankability Ecosystem

Rankability’s AI Analyzer bundles GEO tracking into its existing SEO suite. If your team already uses Rankability for keyword research, site audits, and content briefs, you get AI visibility data inside the same dashboard without adding another vendor.

The integration is the value proposition. You see which keywords trigger AI Overviews, whether your domain appears, and how competitors rank in AI responses. All of it sits alongside your traditional SEO metrics so there is no context switching.

The limitation is lock-in and maturity. The AI Analyzer module is still evolving. Several features that dedicated GEO platforms offer (deeper model behavior mapping, multi-language coverage, advanced attribution) are not available yet. And because the tool is bundled with the broader suite, leaving Rankability later means losing your AI visibility data too.

Why pick Rankability over LLMrefs: You already use Rankability for SEO and want GEO added without a new vendor.

Why LLMrefs may still work better: You need a standalone tool with established tracking depth and no ecosystem dependency.

6. Scrunch AI: Best for Enterprises That Need AI Readability Audits

Scrunch AI goes deeper than most tools into how AI engines interpret your content. It audits your pages for AI readability, detects hallucinations where models misrepresent your brand, and identifies structural gaps that prevent citation.

The platform tracks visibility across 7+ LLMs and layers in persona-based segmentation so you can see how different buyer types encounter your brand in AI conversations. For brands in technical or regulated industries where factual accuracy matters, the hallucination detection is a genuine differentiator.

Starting at about $250/month, Scrunch is expensive for what is still primarily a monitoring and auditing tool. The platform is powerful but heavy. Multiple reviewers describe it as something you “grow into,” which means smaller teams may struggle to extract value quickly. The product also only recently left beta, so expect some rough edges in niche verticals.

Why pick Scrunch over LLMrefs: You need to understand how AI reads your content, not just whether it mentions you.

Why LLMrefs may still work better: You want simpler tracking at one-third the cost without the enterprise learning curve.

7. SE Ranking: Best Budget On-Ramp for Google AI Visibility

SE Ranking’s AI Visibility Tracker adds Google AI Overview monitoring to its existing SEO suite. You see which of your tracked keywords trigger AI-generated results, whether your domain appears, and which competitors show up instead.

The daily refresh cadence is faster than many dedicated GEO tools. And because the feature lives inside an established SEO platform, there is no new login, no onboarding, and no separate cost.

The limitation is coverage. SE Ranking only tracks Google AI Overviews and AI Mode. It does not monitor ChatGPT, Perplexity, Claude, or Gemini. For teams whose buyers rely heavily on those engines, SE Ranking covers only a fraction of the picture. There is also no optimization guidance. It reports what it sees but does not tell you how to improve.

Why pick SE Ranking over LLMrefs: You want the cheapest way to add AI visibility tracking to an existing SEO workflow.

Why LLMrefs may still work better: You need multi-engine tracking beyond Google. That is the entire point of LLMrefs.

What Most Teams Get Wrong When Choosing an LLMrefs Alternative

The biggest mistake is buying a tool that matches what LLMrefs does and expecting different results. If your problem is “I know my brand gets mentioned but I cannot improve the number,” switching to another monitoring tool will not fix that.

Here is a simple way to decide:

If you only need cheaper monitoring: Otterly ($29/month) or SE Ranking (bundled with SEO suite) will track mentions at a lower price point.

If you need better dashboards for clients: Peec gives you presentation-ready visibility data with unlimited seats for agencies.

If you need guided enterprise workflows: AthenaHQ or Scrunch will give you deeper audits and prescriptive recommendations, though at a higher cost.

If you need to connect AI visibility to traffic, revenue, and action: Analyze AI is the only platform on this list that attributes AI mentions to GA4 sessions and conversions, gives you a content writer and optimizer built for AI citability, and provides a programmable Agent Builder with 180+ nodes that can automate your content, reporting, and competitive intelligence workflows end to end.

The tool you need depends on whether your problem is seeing the data or acting on it. If tracking is enough, several options here are solid. If you need the complete system to discover, monitor, improve, and govern your AI presence, start with Analyze AI.

Ernest

Ibrahim

![7 LLMrefs Alternatives That Do More Than Track Mentions [2026]](/_next/image?url=https%3A%2F%2Fwww.datocms-assets.com%2F164164%2F1779313840-blobid0.png&w=3840&q=75)