In this article, you’ll get a clear breakdown of eight Hall AI alternatives, what each one does well, where each one falls short, and which type of team each tool fits. You’ll also see exactly how the tools compare on the features that matter most for turning AI visibility data into revenue.

Why Teams Look for Hall AI Alternatives

Hall does one thing well. It shows you whether your brand appears in AI-generated answers across ChatGPT, Perplexity, Google AI Overviews, and other engines. It tracks generative answer insights, website citation data, and AI agent crawling behavior.

That’s a solid foundation. But once you see the data, a question follows immediately. What do you actually do with it?

Hall tells you that you showed up (or didn’t) in an AI answer. It does not tell you why you lost a specific prompt to a competitor. It does not connect that appearance to traffic hitting your site. And it does not help you fix the page that should be getting cited but isn’t.

Teams shopping for alternatives tend to want one or more of these things that Hall doesn’t fully deliver. They want attribution that ties AI mentions to sessions and conversions. They want competitive intelligence that shows why a rival wins a specific prompt. They want content optimization workflows built into the same platform. Or they want automation that turns weekly reporting from a four-hour task into something that runs itself.

Here are eight alternatives worth evaluating, starting with the one that covers the most ground.

1. Analyze AI

Who it’s for: Marketing and growth teams that want to treat AI search as a measurable revenue channel, not just a visibility dashboard.

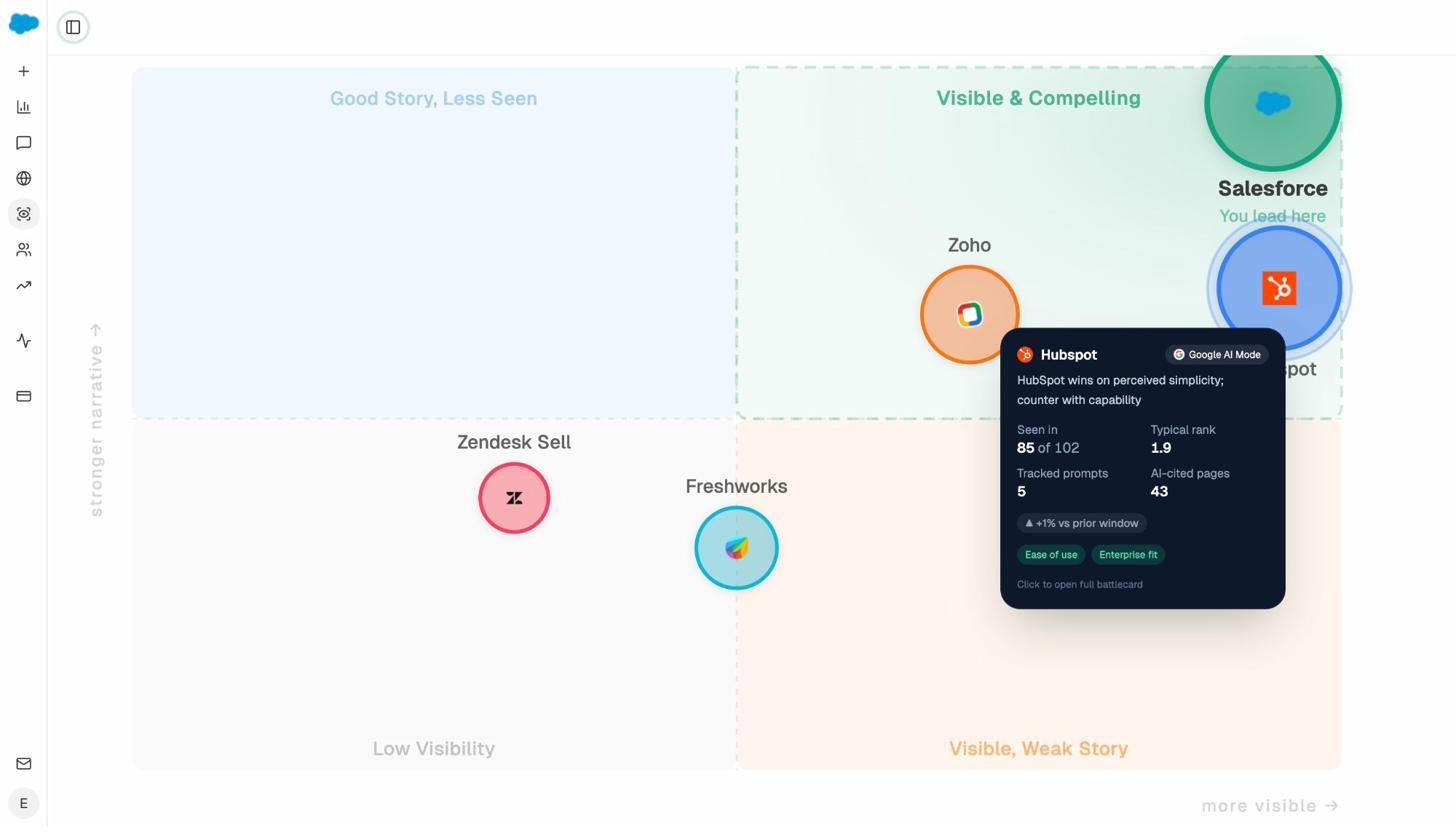

Hall answers “are we showing up?” Analyze AI answers that question and then keeps going. It answers “which competitor is winning this buying conversation, why are they winning, and what is that win costing us in pipeline?”

The platform is organized around four capabilities: Discover, Monitor, Improve, and Govern. Each one solves a different failure point that teams hit as soon as they start treating AI visibility as part of their acquisition strategy.

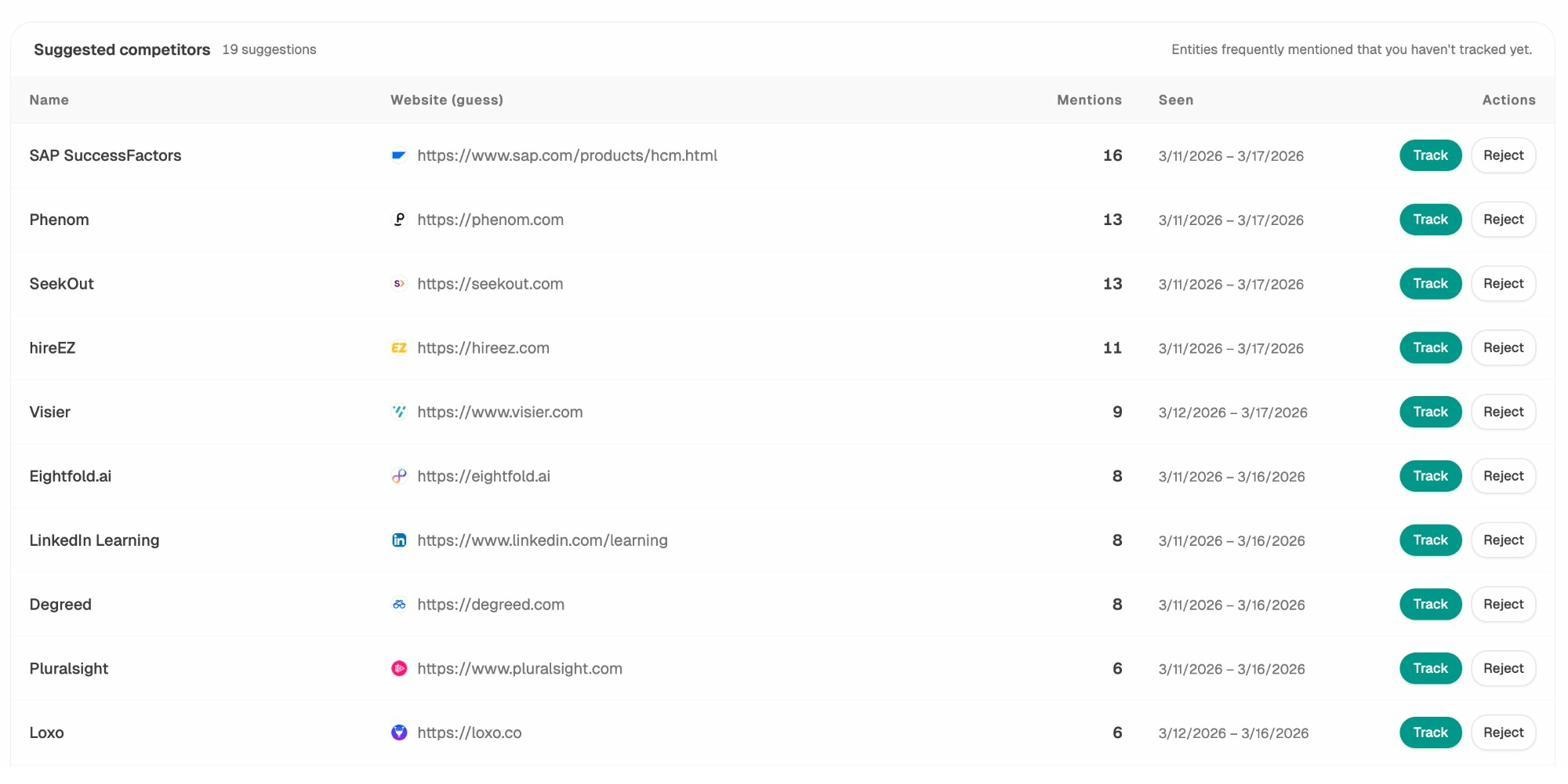

Discover surfaces the buyer-intent prompts your audience is actually asking AI engines. It doesn’t wait for you to manually input queries. It finds the prompts that matter, maps how each engine responds, and shows which competitors get cited in those answers. It also suggests competitors you haven’t tracked yet, so you never miss a rival gaining ground in your category.

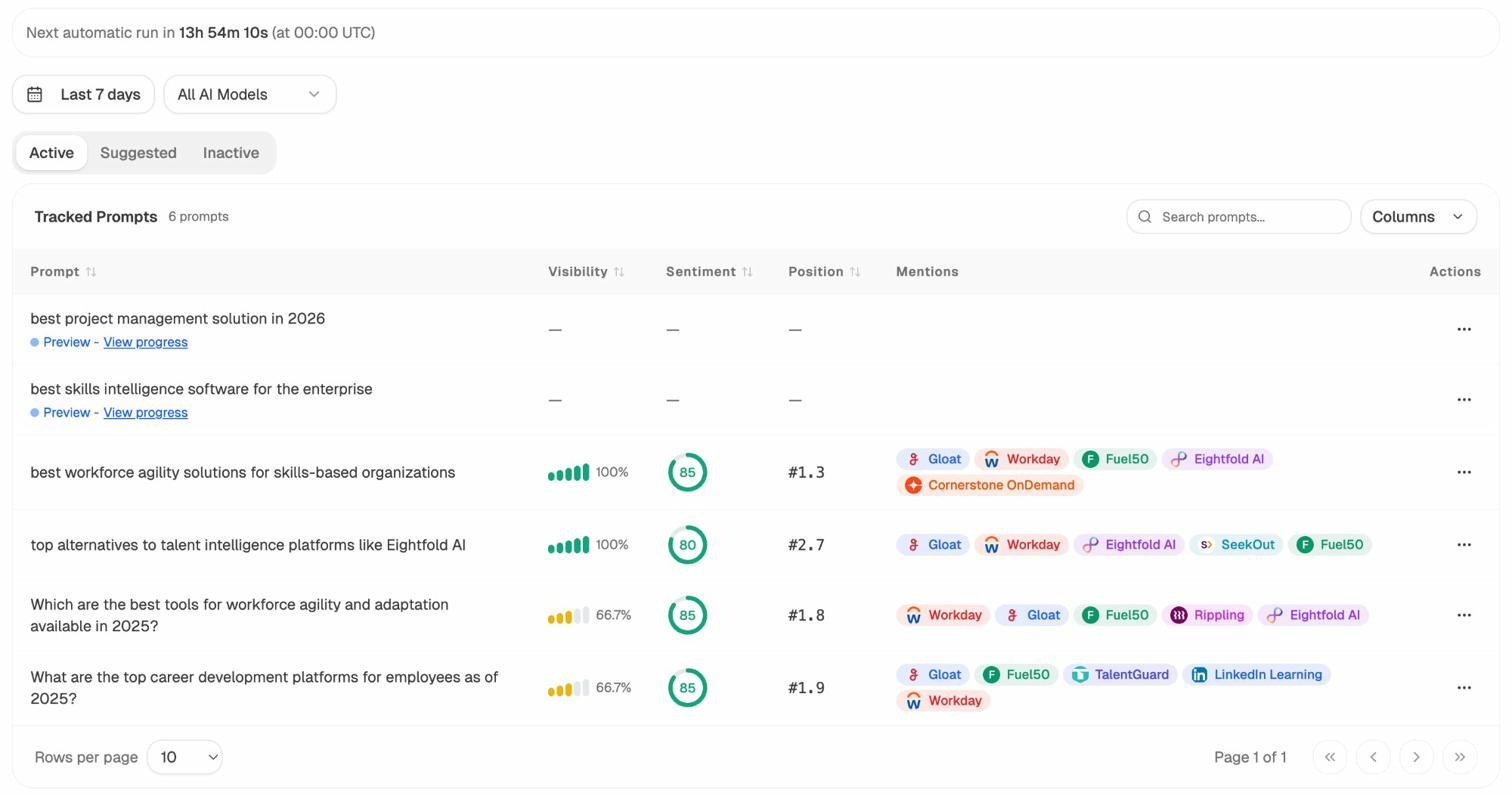

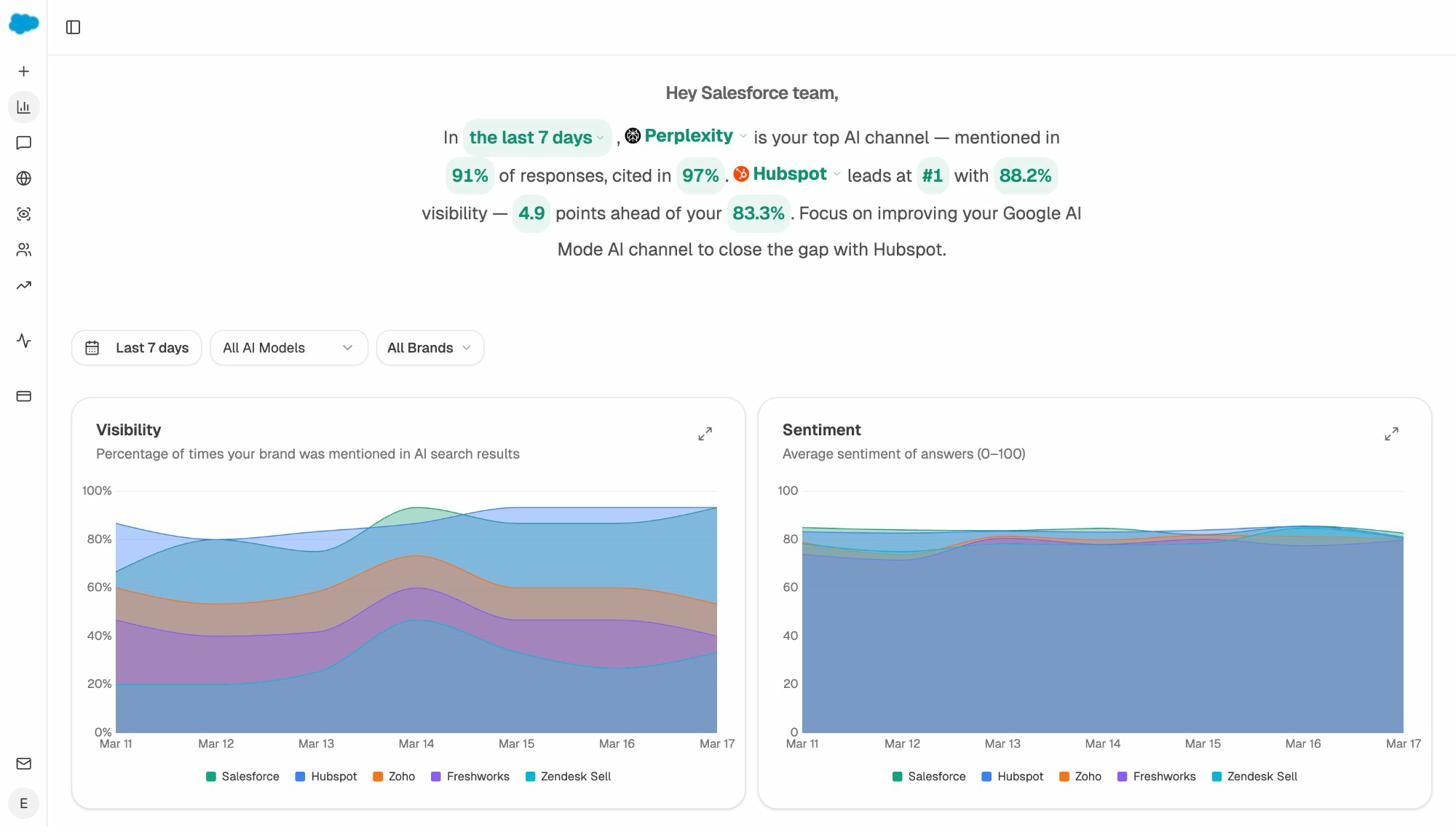

Monitor tracks your visibility daily across every major AI engine and ties those appearances to real traffic. You can see which model is sending visitors to which specific landing pages on your site, and whether those visitors convert. The overview dashboard gives you a daily briefing that reads like a strategist wrote it, telling you which engine is your strongest channel, where your closest competitor leads, and what to focus on next.

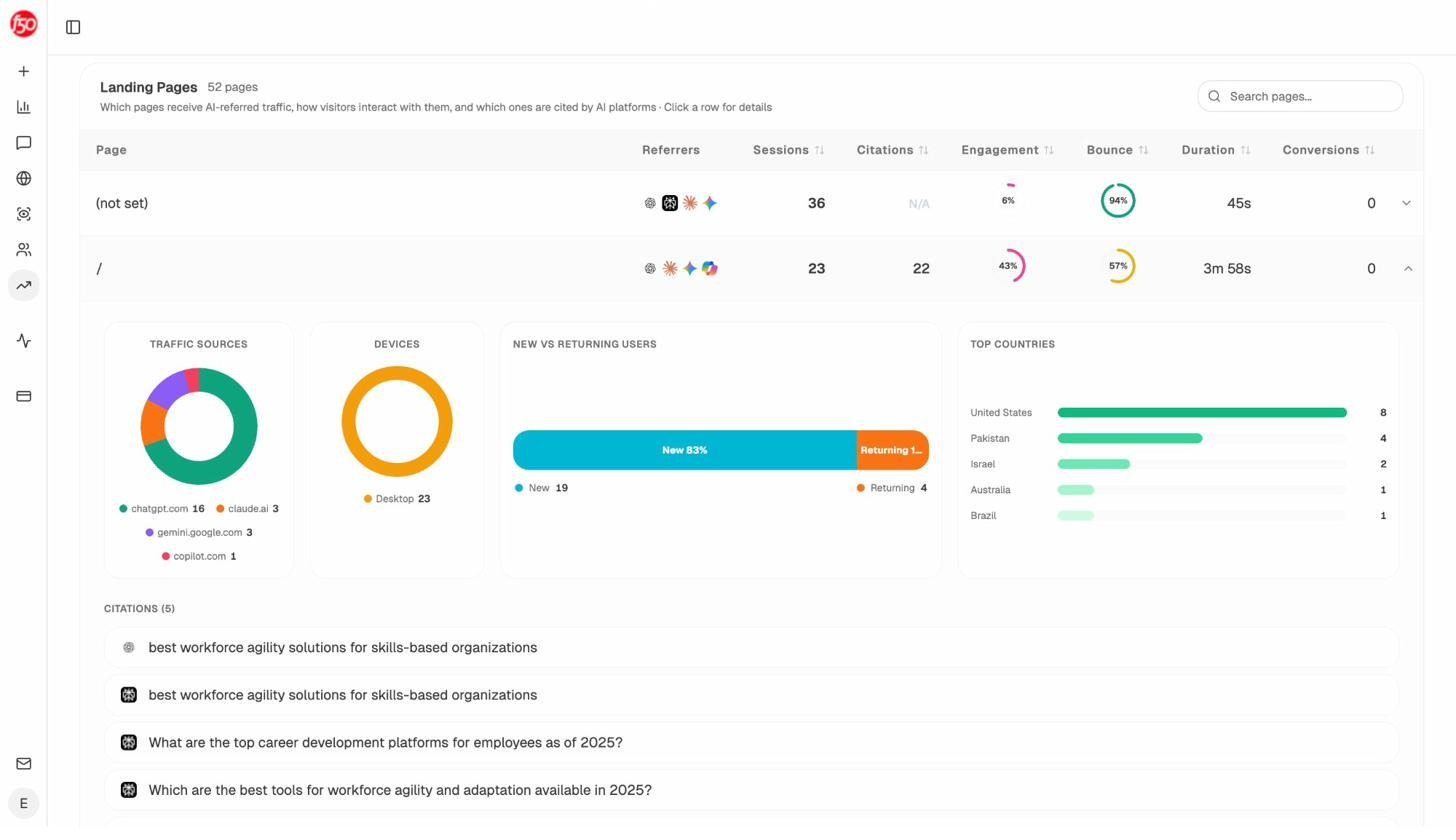

This is the layer that Hall doesn’t have. You’re not just seeing “we got mentioned.” You’re seeing “Perplexity sent 36 sessions to our product page this week, 83% were new users, and they came from the US.”
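To make the idea of tying AI mentions to sessions concrete, here is a minimal sketch of referrer-based attribution. It is not how Analyze AI or any other tool on this list works internally. The referrer hostnames in `AI_REFERRERS`, the session fields (`referrer`, `landing_page`), and the sample data are all assumptions made for illustration.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed mapping from referrer hostname to AI engine (illustrative, not exhaustive).
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer_url):
    """Return the AI engine for a referrer URL, or None if it is not a known AI source."""
    host = (urlparse(referrer_url).hostname or "").lower()
    return AI_REFERRERS.get(host)


def sessions_by_engine_and_page(sessions):
    """Count sessions per (engine, landing_page) pair, skipping non-AI referrers."""
    counts = Counter()
    for session in sessions:
        engine = classify_referrer(session["referrer"])
        if engine is not None:
            counts[(engine, session["landing_page"])] += 1
    return counts


# Hypothetical session export; real data would come from your analytics tool.
sample_sessions = [
    {"referrer": "https://www.perplexity.ai/search?q=x", "landing_page": "/product"},
    {"referrer": "https://chatgpt.com/", "landing_page": "/pricing"},
    {"referrer": "https://www.google.com/", "landing_page": "/product"},
    {"referrer": "https://perplexity.ai/", "landing_page": "/product"},
]

result = sessions_by_engine_and_page(sample_sessions)
for (engine, page), count in sorted(result.items()):
    print(f"{engine} -> {page}: {count} sessions")
```

Referrer matching only catches visits where the AI engine passes a referrer, so treat counts like these as a lower bound.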

Improve shows you the prompts where you’re losing to competitors and tells you why. It surfaces the exact URL the AI model is citing for your competitor, the claims that page makes, and what you need to change on your content to take that prompt back.

Govern monitors how AI engines describe your brand. If ChatGPT starts framing you as “the budget option” or Gemini uses outdated pricing in its answer, Govern catches it. You get the exact prompt, the exact answer, and a timestamp so your team can respond with receipts.

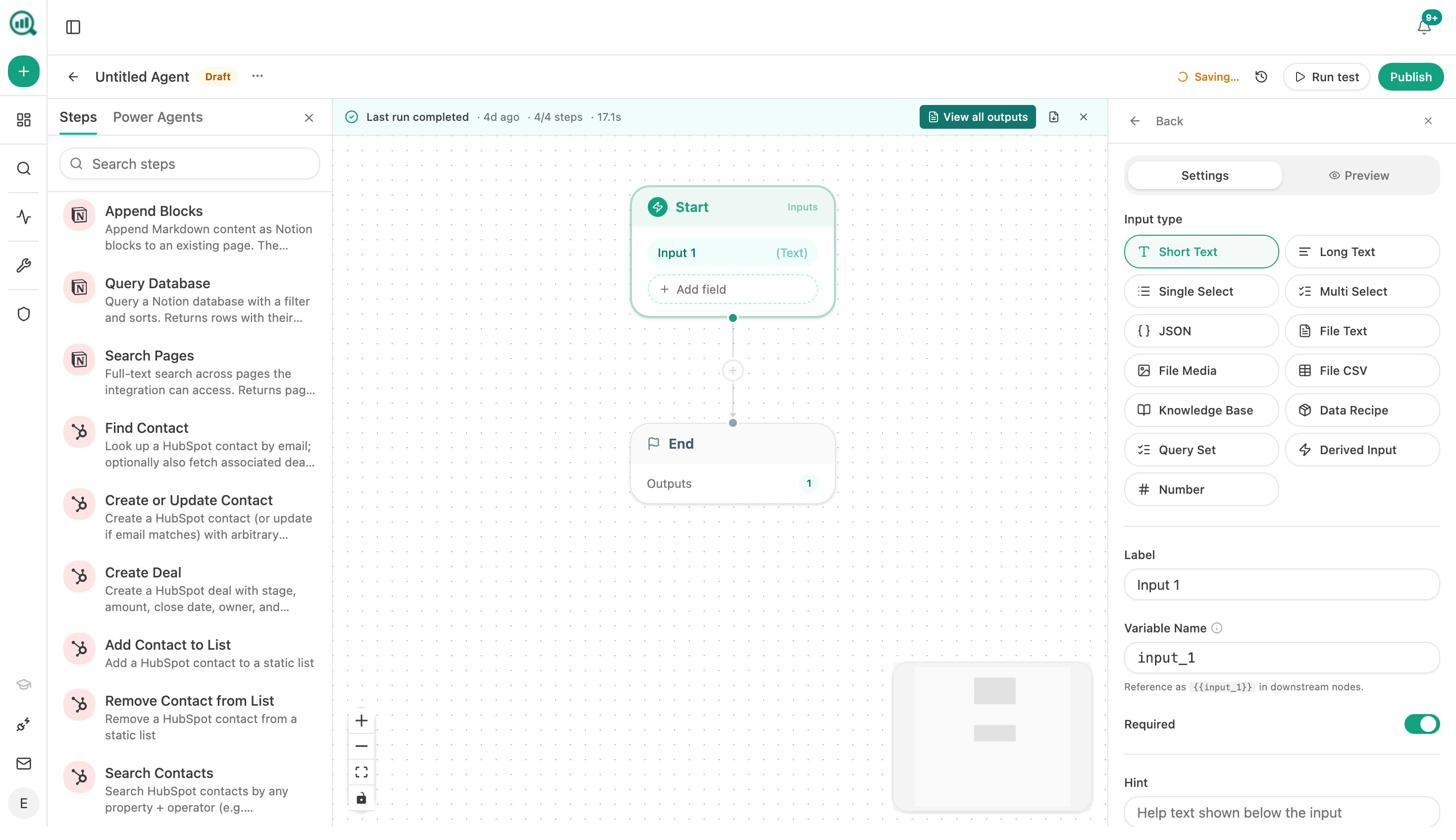

The Agent Builder is where Analyze AI pulls ahead of every tool on this list. Most AI visibility platforms stop at dashboards. Analyze AI includes a programmable automation layer with 180+ nodes that connect to GA4, Google Search Console, DataForSEO, Semrush, HubSpot, WordPress, Notion, Mailchimp, and more.

This isn’t a simple “set up an alert” feature. You can build entire operational workflows. A scheduled agent that generates a client briefing pack for every account in your agency every Monday at 7am. A webhook agent that fires the moment a HubSpot deal closes and drafts a case study. A content pipeline that researches, outlines, writes, quality-gates, and publishes to WordPress automatically, with your brand voice injected from the built-in Brand Vault.

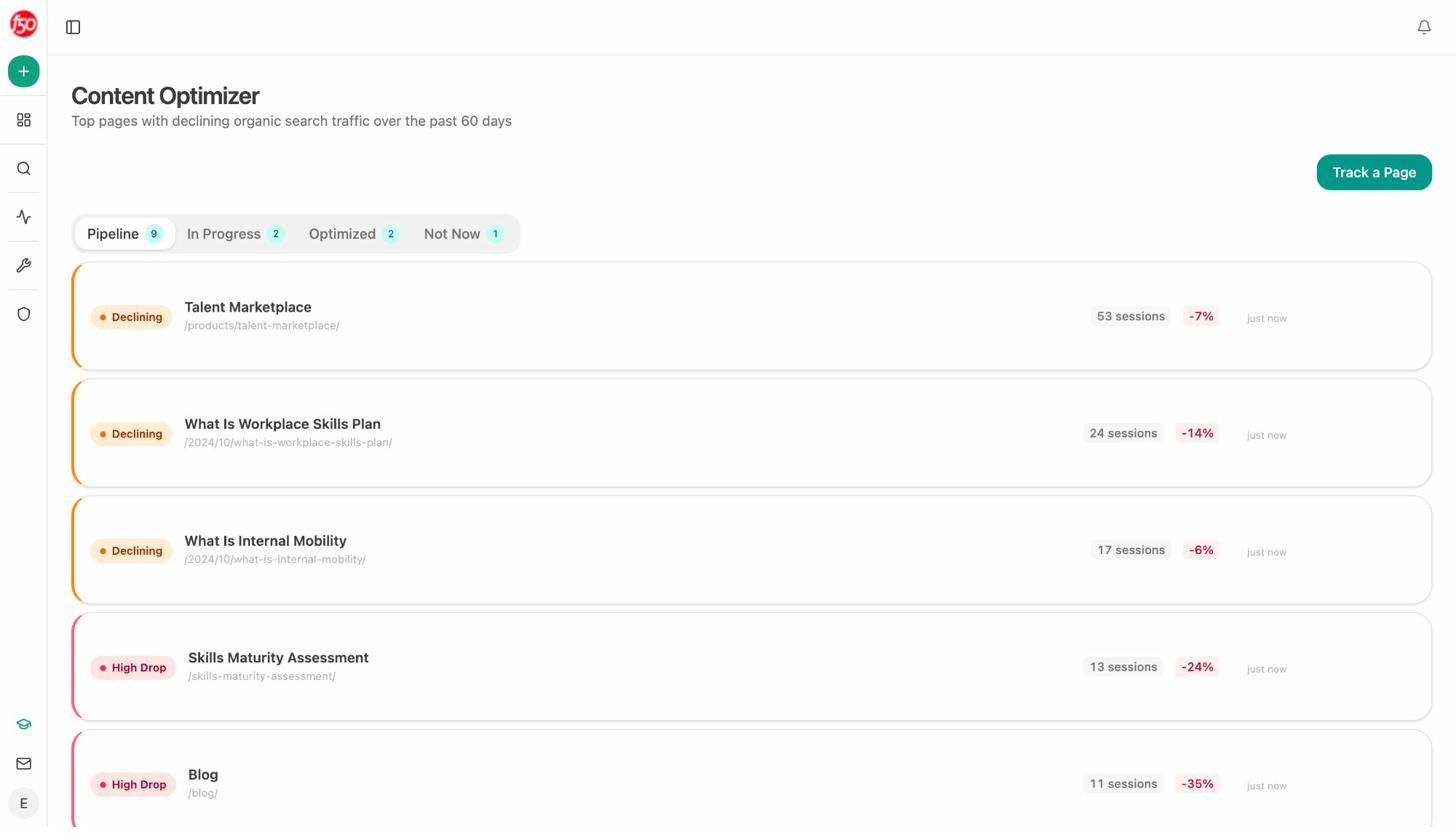

For agencies, the Agent Builder eliminates reporting day entirely. One workflow runs across every client in parallel. For content teams, it runs a weekly content refresh fleet that finds declining pages, rewrites them for freshness, and publishes updates automatically. For PR teams, it monitors brand mentions every 15 minutes and drafts three response options before your CEO even hears about a crisis.

Where Analyze AI may feel like too much: Smaller teams with simple tracking needs may find the depth overwhelming at first. If all you need is a quick visibility snapshot, lighter tools on this list will get you started faster.

2. Peec AI

Who it’s for: Teams that want clean cross-engine visibility tracking and competitor benchmarking without heavy setup.

Peec AI tracks your brand’s presence across ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews. It starts from the answer (not from keywords), which means it captures exactly what users see when they ask AI a question about your category.

The dashboards are clean. You can see share of voice by engine, track prompt-level visibility over time, and identify which source URLs AI engines cite in their responses. Peec also offers a Looker Studio connector, which makes it straightforward to pull data into client reports.
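For readers unfamiliar with share of voice by engine, the sketch below shows one common way to compute it: the fraction of sampled answers from each engine that mention a given brand. Peec AI’s actual formula is not documented here, so the definition, the field names (`engine`, `brands_mentioned`), and the sample brands are assumptions.

```python
from collections import defaultdict


def share_of_voice(answers):
    """Return {engine: {brand: fraction of that engine's answers mentioning the brand}}.

    `answers` is a list of dicts with an 'engine' name and a 'brands_mentioned' list.
    A brand is counted at most once per answer.
    """
    totals = defaultdict(int)
    mentions = defaultdict(lambda: defaultdict(int))
    for answer in answers:
        engine = answer["engine"]
        totals[engine] += 1
        for brand in set(answer["brands_mentioned"]):
            mentions[engine][brand] += 1
    return {
        engine: {brand: count / totals[engine] for brand, count in mentions[engine].items()}
        for engine in totals
    }


# Hypothetical sampled answers for a handful of prompts.
sample_answers = [
    {"engine": "ChatGPT", "brands_mentioned": ["Acme", "Globex"]},
    {"engine": "ChatGPT", "brands_mentioned": ["Acme"]},
    {"engine": "Perplexity", "brands_mentioned": ["Globex", "Globex"]},
    {"engine": "Perplexity", "brands_mentioned": []},
]

sov = share_of_voice(sample_answers)
```

Other definitions divide a brand’s mentions by all brand mentions instead of by answers, so check which one a tool uses before comparing numbers across platforms.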

Where Peec falls short is in the “now what?” department. It tracks visibility well but doesn’t offer deep optimization guidance. There’s no content auditing, no page-level recommendations, and no AI traffic attribution. You know you got mentioned, but not whether that mention drove any traffic. Costs also scale as you add more prompts, engines, and regions.

Use Peec if you need fast, affordable visibility tracking across multiple AI engines and your team already has the SEO expertise to act on the data independently.

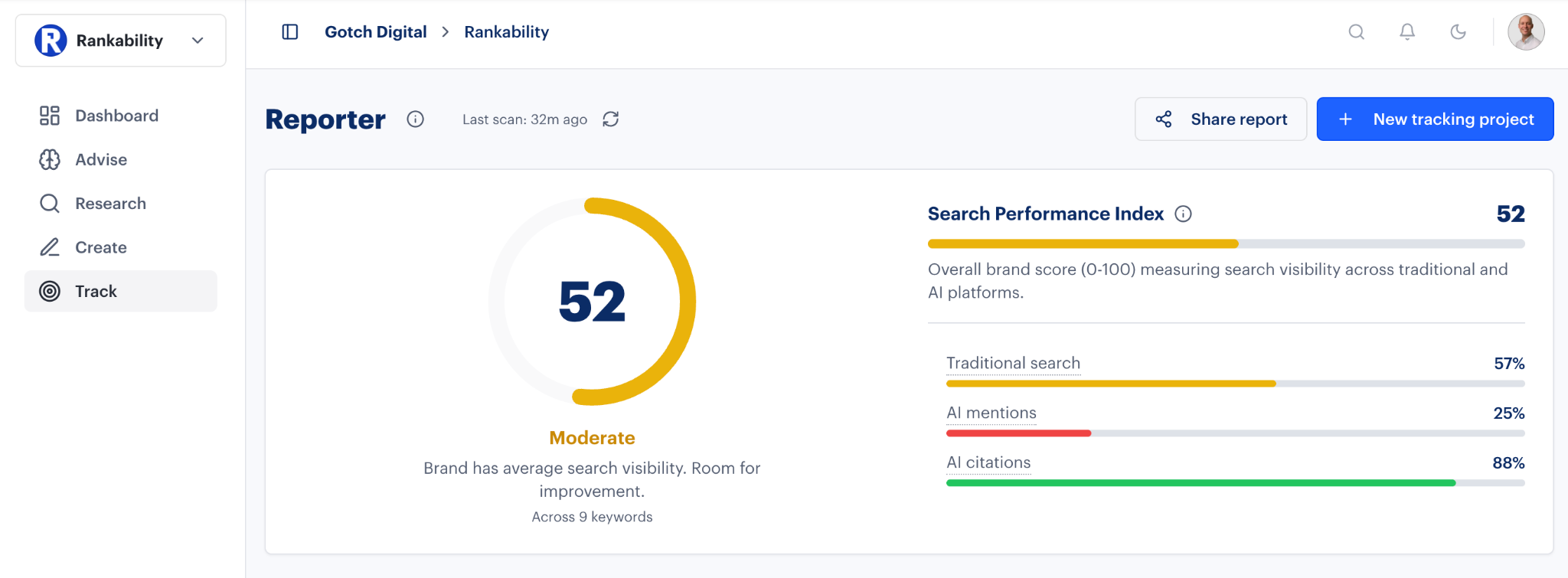

3. Rankability AI Analyzer

Who it’s for: SEO teams that want AI visibility tracking integrated directly into their content optimization workflow.

Rankability built its AI Analyzer as a module within its existing SEO suite. That means you can track how your brand performs across ChatGPT, Gemini, and Claude, then immediately pivot into Rankability’s Content Optimizer or keyword tools to fix what you found.

The biggest strength here is the tight feedback loop. Rankability doesn’t just show you visibility gaps. It audits missing citations and generates actionable recommendations within the same platform. For agencies managing multiple clients, this reduces tool switching.

The limitation is maturity. The AI Analyzer module is still relatively new, and some engines and features are marked as “coming soon.” Data refresh cadence may not yet match dedicated AI visibility tools. Access is also tied to higher Rankability plans, which may price out smaller teams.

Use Rankability if you already use their SEO tools (or want a single platform for both SEO and AI visibility) and your priority is connecting data to action inside one workflow.

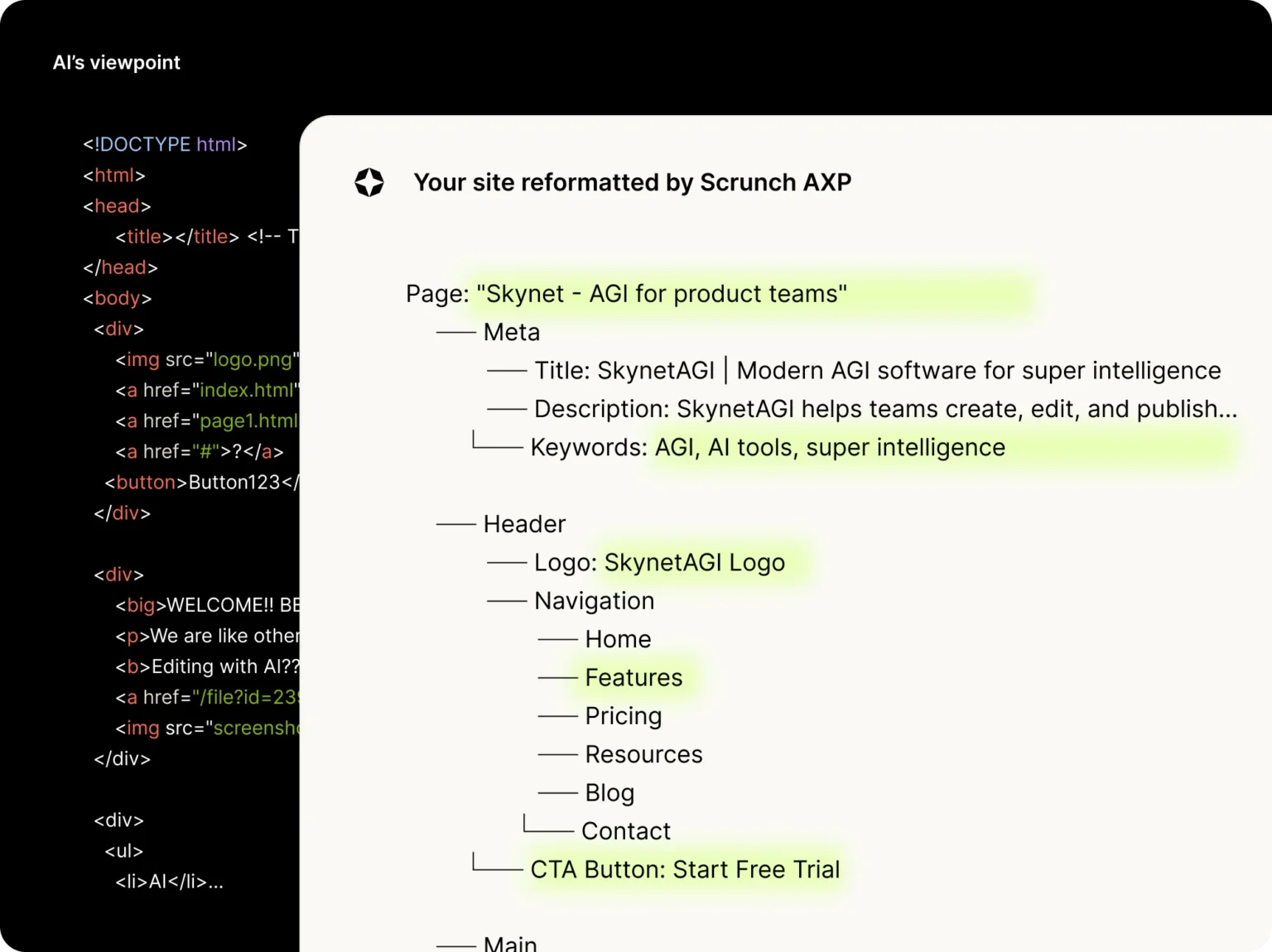

4. Scrunch AI

Who it’s for: Large enterprises managing complex, multi-domain web properties that need infrastructure-level control over how AI agents read their sites.

Scrunch AI takes a fundamentally different approach. On top of standard visibility monitoring, it offers AXP (Agent Experience Platform), which creates a parallel, AI-optimized layer of your website. This layer is invisible to human visitors but fully readable by AI crawlers, giving models cleaner structured data without altering your public-facing content.

For enterprises with thousands of pages, multiple languages, and strict governance requirements, this makes sense. Scrunch is SOC 2 Type II compliant, supports SSO and RBAC, and offers API access for integration with existing data infrastructure.

The trade-off is cost and complexity. Scrunch is built for scale, not for lean teams. The AXP concept is still early, and some SEO professionals raise questions about whether serving different content to AI agents could overlap with practices search engines discourage. Early results are mixed, with some users reporting strong visibility gains and others seeing little change.

Use Scrunch if you’re a global enterprise with the engineering resources to deploy a dual-layer content architecture and the compliance requirements that demand SOC 2-grade tooling.

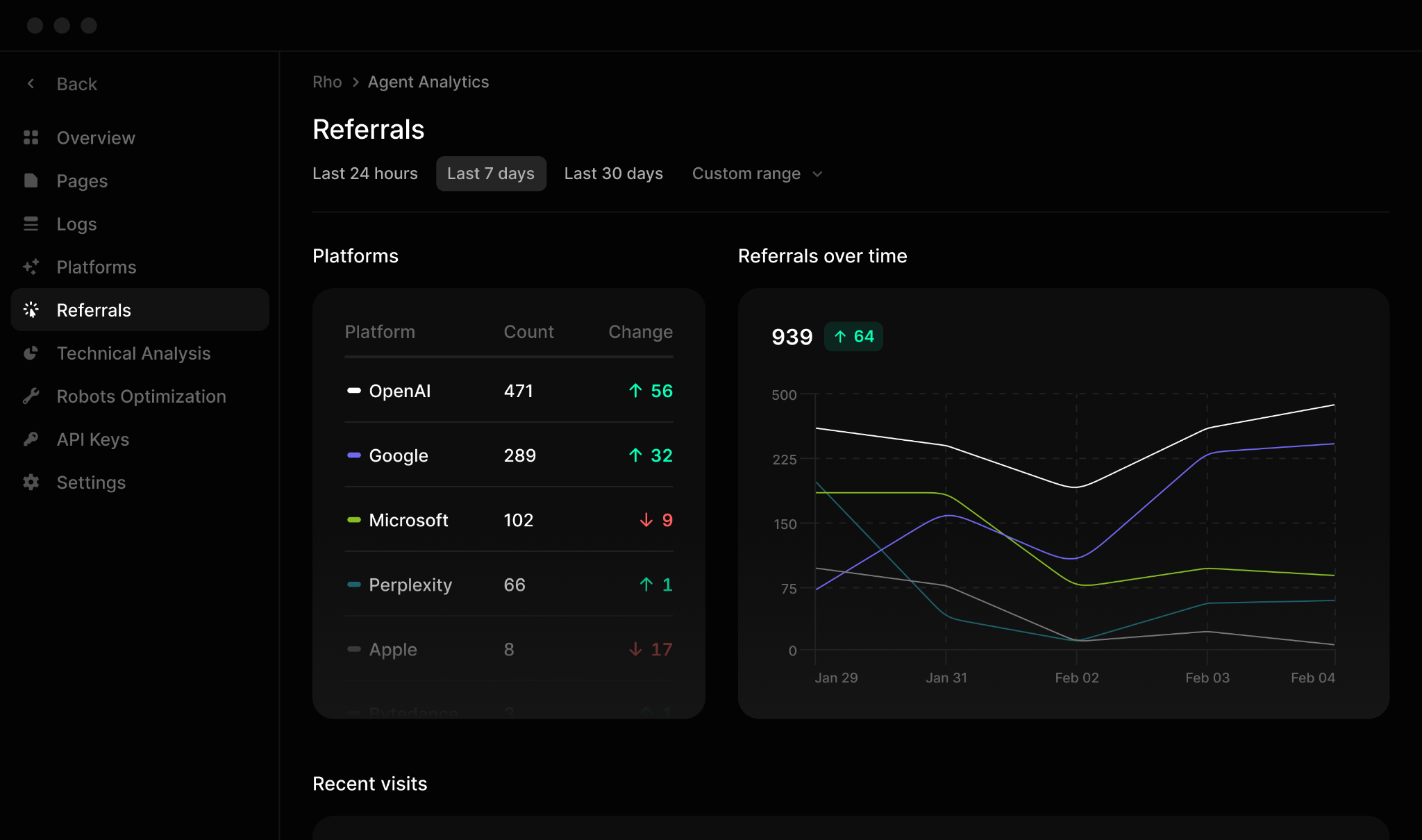

5. Profound

Who it’s for: Compliance-heavy brands that need audit-grade AI visibility data tied to business outcomes.

Profound takes a forensic approach. Its Agent Analytics module tracks how AI crawlers discover, parse, and cite your content. It uses server-log-based bot detection (not just prompt sampling), which gives more accurate data about actual AI agent behavior on your site.
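As a rough illustration of what server-log-based bot detection involves, the sketch below counts requests from AI crawlers in access logs written in the combined log format. It is not Profound’s implementation. The token list in `AI_BOT_TOKENS` covers a few publicly documented crawler names and is not exhaustive, and the sample log lines (including their user-agent strings) are made up for the example.

```python
import re
from collections import Counter

# A few publicly documented AI crawler tokens (assumed list; extend as needed).
AI_BOT_TOKENS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot"]

# Combined log format: ... "METHOD path PROTOCOL" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|POST|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def ai_bot_hits(log_lines):
    """Count requests per (bot token, path) for lines whose user agent names an AI crawler."""
    counts = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match is None:
            continue  # skip lines that are not in the expected format
        user_agent = match.group("ua")
        for token in AI_BOT_TOKENS:
            if token in user_agent:
                counts[(token, match.group("path"))] += 1
                break
    return counts


# Made-up log lines using documentation IP ranges.
sample_log = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"',
    '198.51.100.7 - - [10/Jan/2026:10:01:00 +0000] "GET /blog/post HTTP/1.1" 200 8000 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.9 - - [10/Jan/2026:10:02:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "https://www.google.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '203.0.113.5 - - [10/Jan/2026:10:03:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"',
]

hits = ai_bot_hits(sample_log)
```

User-agent strings can be spoofed, so a production setup would typically also verify requests against the IP ranges each crawler operator publishes.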

The platform connects AI visibility to traffic and conversions, which is a layer most competitors skip. Its Prompt Volumes view shows what users are asking AI engines across your category, helping you map AI query trends to your content strategy.

Profound is expensive. The entry pricing is steep, and the full enterprise feature set requires custom contracts. It’s also primarily a diagnostic platform, not an optimization suite. You’ll still need other tools (or internal resources) for keyword work, page rewrites, and content creation.

Use Profound if you operate in a regulated industry (finance, healthcare, government) where audit-grade accuracy and compliance controls are non-negotiable, and you have the budget to match.

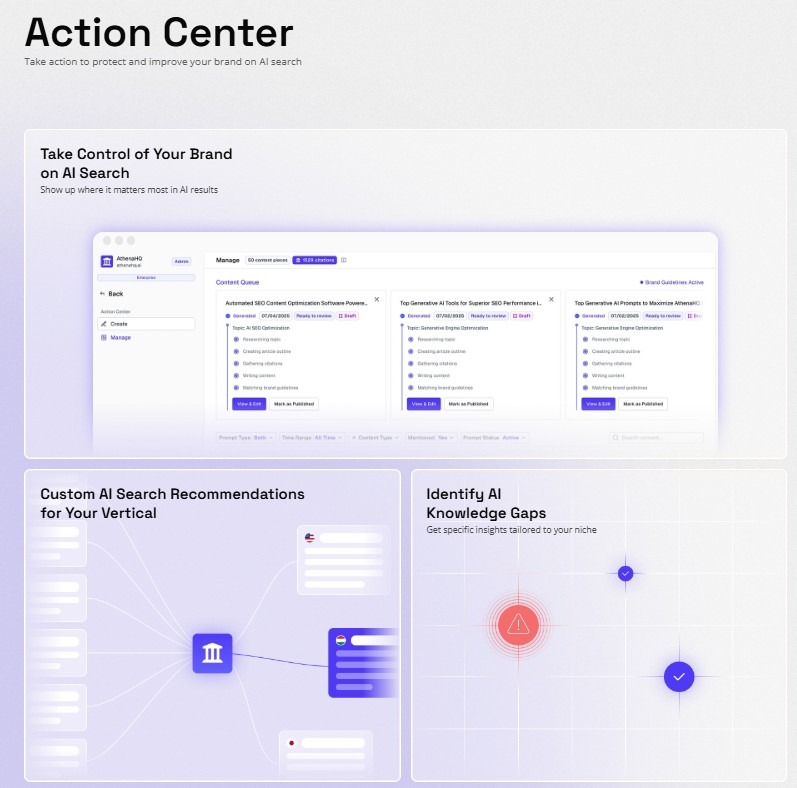

6. AthenaHQ

Who it’s for: Mid-market teams that want actionable AI visibility insights without enterprise-level complexity.

AthenaHQ positions itself as a GEO platform that balances monitoring with guided actions. Its Action Center is the standout feature. It distills monitoring data into specific tasks, telling you which topics lack coverage, where AI agents misunderstand your site, and what to fix first.

The interface is clean and accessible. Reviewers consistently mention fast onboarding and filters that let you segment visibility by engine, date, and geography within seconds. AthenaHQ also includes LLMs.txt configuration guidance and sentiment analysis, which adds useful context beyond raw visibility numbers.

The limitation is depth. Prompt volume data uses broad ranges instead of exact counts, which limits precision for data-heavy teams. Pricing starts above $250 per month, which can stretch budgets for startups. Some advanced modules are still evolving as the platform matures.

Use AthenaHQ if you want guided, actionable recommendations without the overhead of enterprise analytics, and your team prefers clear next steps over raw data.

7. LLMrefs

Who it’s for: Small teams and startups that want fast, affordable AI visibility tracking with minimal setup.

LLMrefs is built for speed. You upload your keywords, it auto-generates prompts, and within minutes you can see where your brand shows up across 11 AI engines. The LLMrefs Score (LS) condenses multi-engine performance into a single number, making it easy to report internally without parsing multiple dashboards.

At $79 per month with unlimited seats, it’s one of the most accessible entry points for AI search tracking. The built-in LLMs.txt generator is a nice touch for teams learning how to manage AI crawler behavior.

Where LLMrefs falls short is depth. There’s no log-level analysis, no technical SEO integration, and limited optimization guidance. The LLMrefs Score is convenient but hides nuance. Coverage in niche or regional engines may lag. And costs rise once you need to track hundreds of keywords.

Use LLMrefs if you’re exploring AI visibility for the first time and want a low-risk, low-cost way to see where you stand before committing to a heavier platform.

8. ZipTie

Who it’s for: Teams that need quick, visual snapshots of AI visibility for reporting and stakeholder communication.

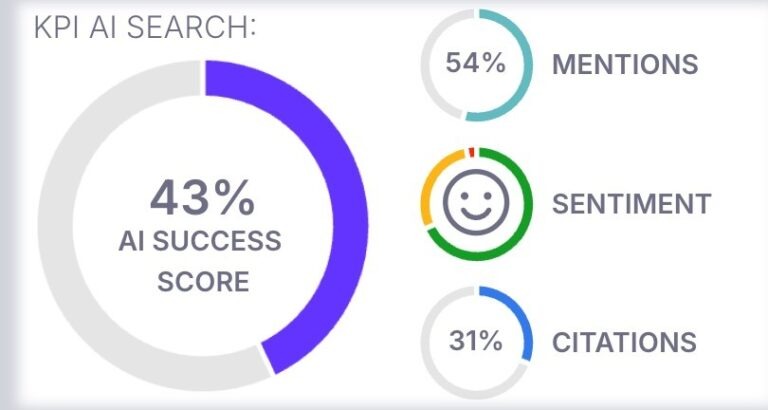

ZipTie keeps things simple. You enter queries or let it generate them automatically. The platform tracks mentions, citations, and sentiment across AI Overviews, ChatGPT, and Perplexity, then condenses everything into an AI Success Score.

The standout feature is visual accountability. ZipTie stores screenshots and metadata of each AI Overview it captures, so you can show clients or stakeholders exactly how AI presented your brand. Geographic coverage extends to smaller European markets that other tools often ignore.

The trade-off is what you’d expect from a simplicity-first tool. No server-log integrations, no advanced attribution, and limited optimization guidance. The scope covers only major engines. Scaling beyond basic tiers adds cost. And the AI Success Score, while useful for reporting, can mask which engines or prompts are driving your visibility.

Use ZipTie if you’re an agency providing quick AI visibility reports for multiple clients, or a small team that values clarity and speed over analytical depth.

Picking the Right Tool

Every platform on this list does something genuinely useful. The question is which failure point you need to solve first.

If you need a dashboard that confirms your brand appears in AI answers, Hall does that. If you want cleaner cross-engine comparisons, Peec AI or LLMrefs will get you there quickly. If you’re an agency that needs to send visual proof to clients fast, ZipTie is worth testing.

But if your team has moved past “are we visible?” and now needs to answer “which competitor is winning this buying conversation, what is that costing us, and how do we take it back,” the lighter tools won’t close that loop. That’s the gap Analyze AI fills. It connects visibility to traffic, traffic to conversions, and gives you the Agent Builder to automate the entire workflow, from competitive monitoring to content creation to client reporting, on a schedule that runs whether you remember to check or not.

The AI visibility space is moving fast. New tools launch every quarter. But the problem they solve stays the same. You need to know where you stand in AI answers, why you’re winning or losing, and what to do about it. Start with the problem you’re solving today. The right tool is the one that closes the gap between seeing data and doing something with it.

If you want to see where your brand stands right now, Analyze AI offers a free AI website audit that shows you how AI engines currently perceive your site. It takes minutes and gives you a baseline to measure everything else against.

Ernest Ibrahim