In this article, you’ll get a hands-on breakdown of seven Nightwatch alternatives worth your time and budget. You’ll learn what each tool does well, where it falls short, and which one fits your team depending on whether you need attribution, automation, competitive intelligence, or all three.

Why Teams Outgrow Nightwatch

Nightwatch is a good rank tracker. Nobody disputes that. The problem is that rank tracking is table stakes for AI visibility, and the AI-specific features feel secondary to the SEO core.

Here’s what you’ll run into. Nightwatch’s AI visibility module gives you basic presence data across search engines, but it doesn’t show you referral traffic by AI engine. You can’t see which landing pages receive AI-sourced sessions or whether those sessions convert. The competitive intelligence focuses on SERP positions, not prompt-level share of voice across ChatGPT, Perplexity, or Claude. And there’s no content creation, optimization, or automation layer.

At $32/month for 250 keywords, Nightwatch looks affordable. But the pricing scales by keyword count, and by the time you’re tracking 2,500 keywords, you’re spending over $200/month for what remains primarily a rank tracker. For that budget, you can get a platform that tracks AI visibility, attributes revenue, produces content, and automates your reporting.

The tools below each solve different pieces of this puzzle. Some focus on monitoring. Some focus on analytics. One gives you the full stack.

1. Analyze AI: The Nightwatch Alternative That Connects AI Visibility to Revenue

Most tools in this space answer the question “do I show up?” Analyze AI answers the question that comes after: “does showing up actually matter?”

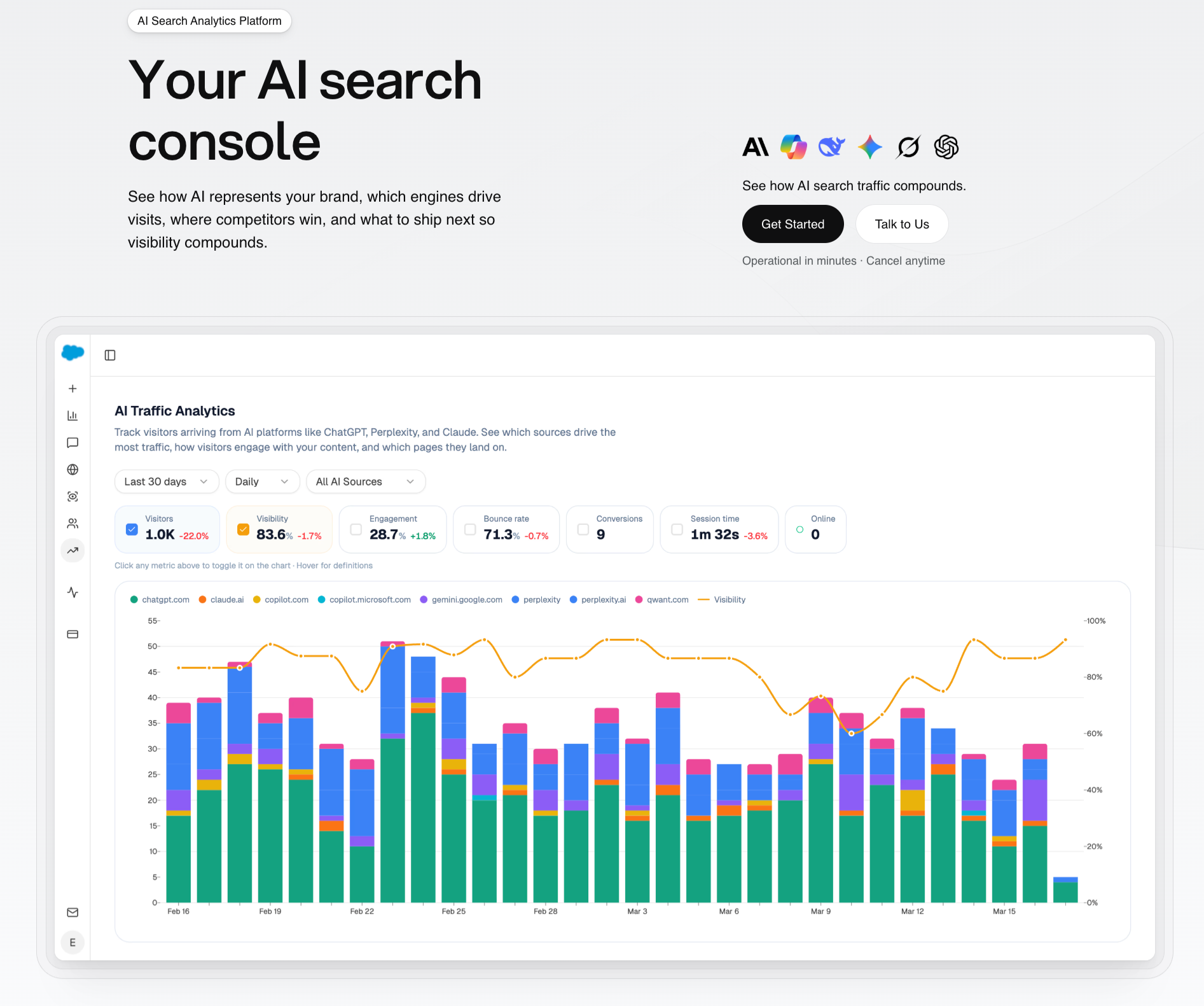

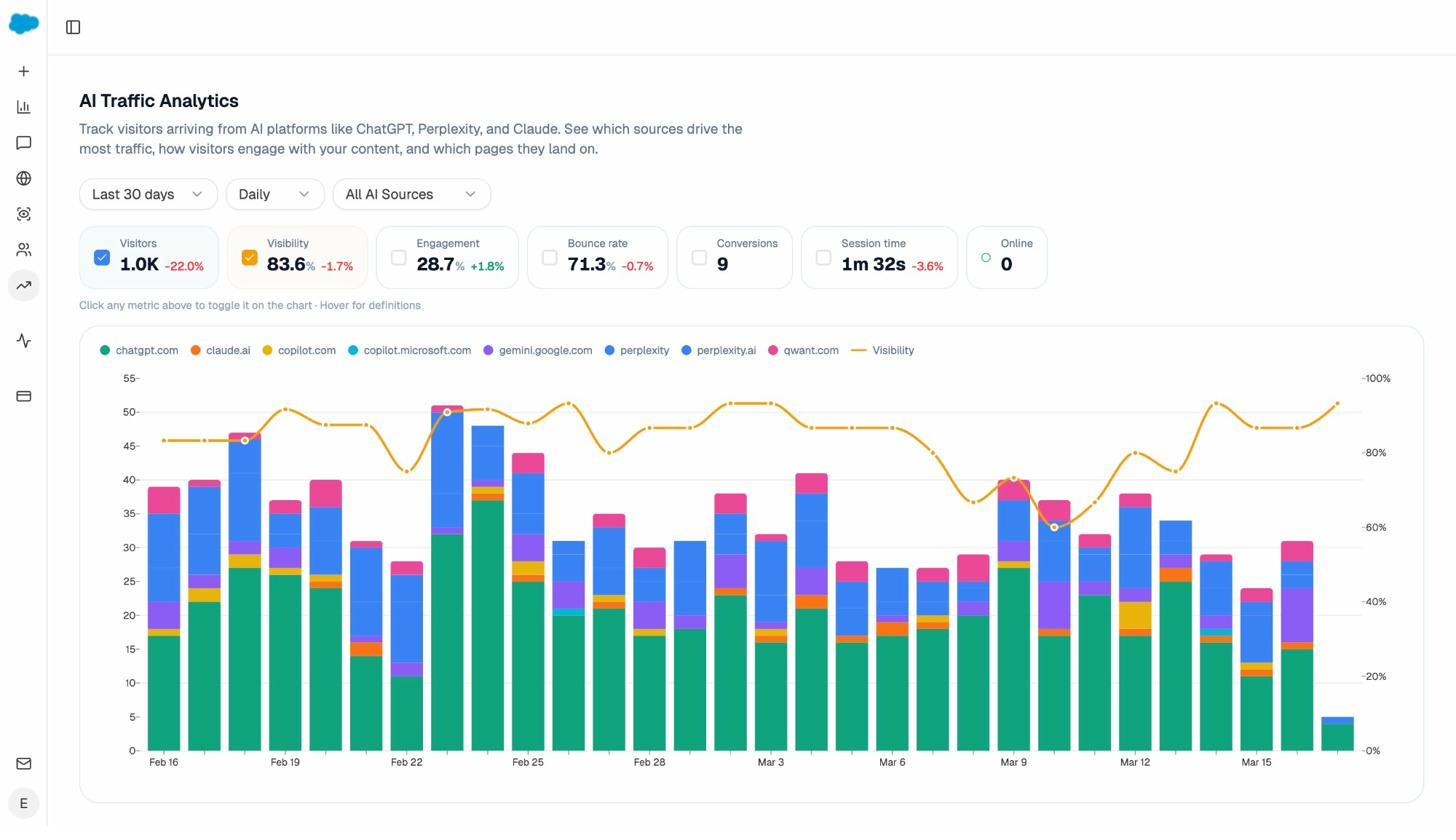

It does this by connecting your AI visibility data to real GA4 sessions, conversions, and revenue. You don’t need to stitch data across tools or build custom dashboards. The platform pulls referral traffic from ChatGPT, Perplexity, Claude, Copilot, and Gemini, then maps that traffic to specific landing pages and conversion events. That means you can tell your CMO exactly how many demo requests came from AI search last month, and which pages drove them.

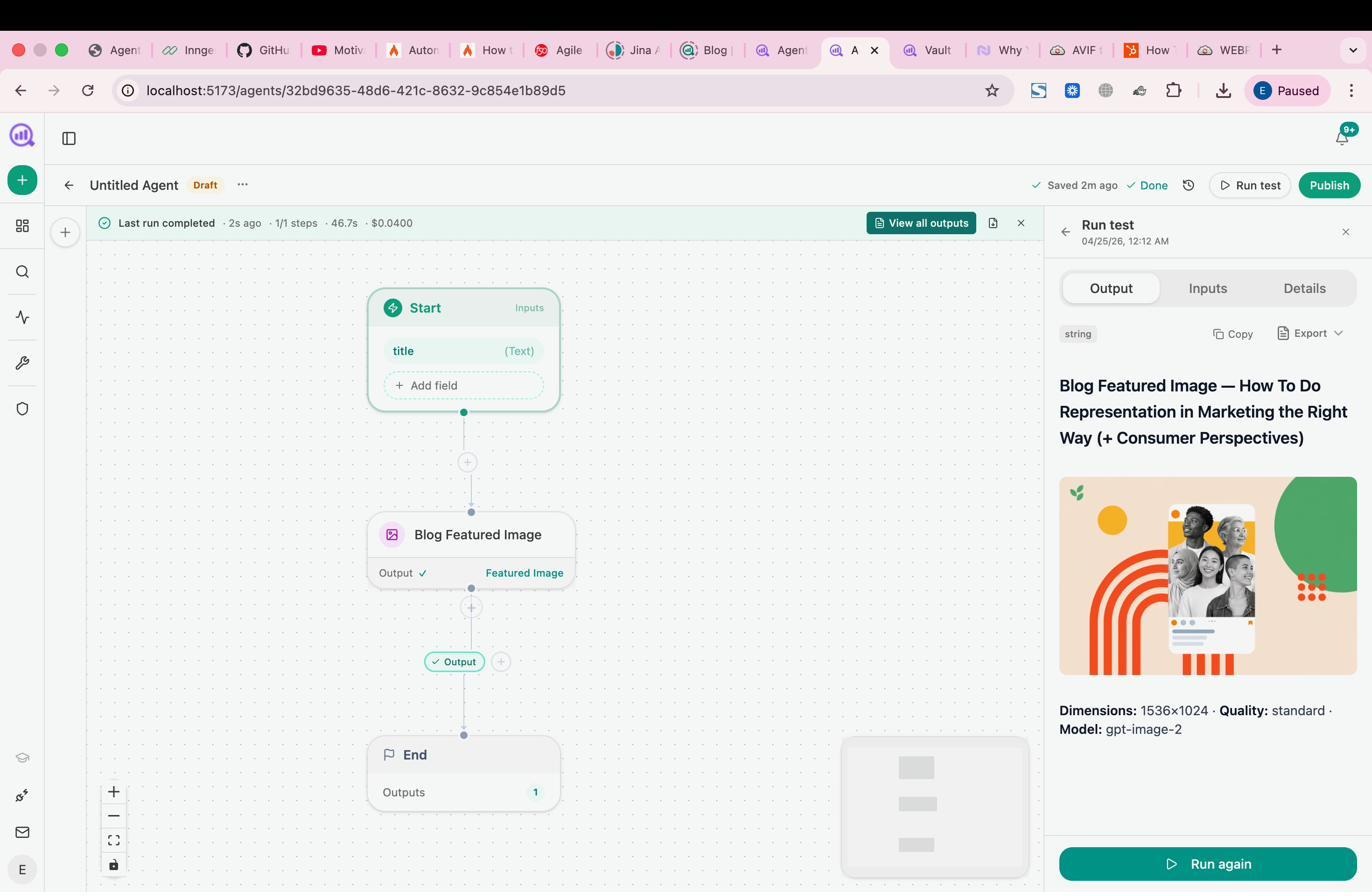

But Analyze AI is not just a monitoring tool. It’s an agentic platform for SEO, AEO, content, and GTM operations with 180+ nodes, 34 pre-built data recipes, and direct integrations with GA4, GSC, Semrush, DataForSEO, HubSpot, Notion, WordPress, Slack, and every major LLM. You can build agents that run competitor reports every Monday morning, auto-publish content that passes an AEO quality gate, enrich inbound leads from Typeform, or trigger crisis response briefs the moment negative coverage appears. The Agent Builder is not an automation layer on top of the product. It is the product.

Here is what you get that Nightwatch does not offer:

AI Traffic Analytics with full attribution. You can see which AI engines send visitors, how many sessions each engine generates, and what those visitors do after they land. Conversions, bounce rate, engagement, session time. All from GA4, not proxy estimates.

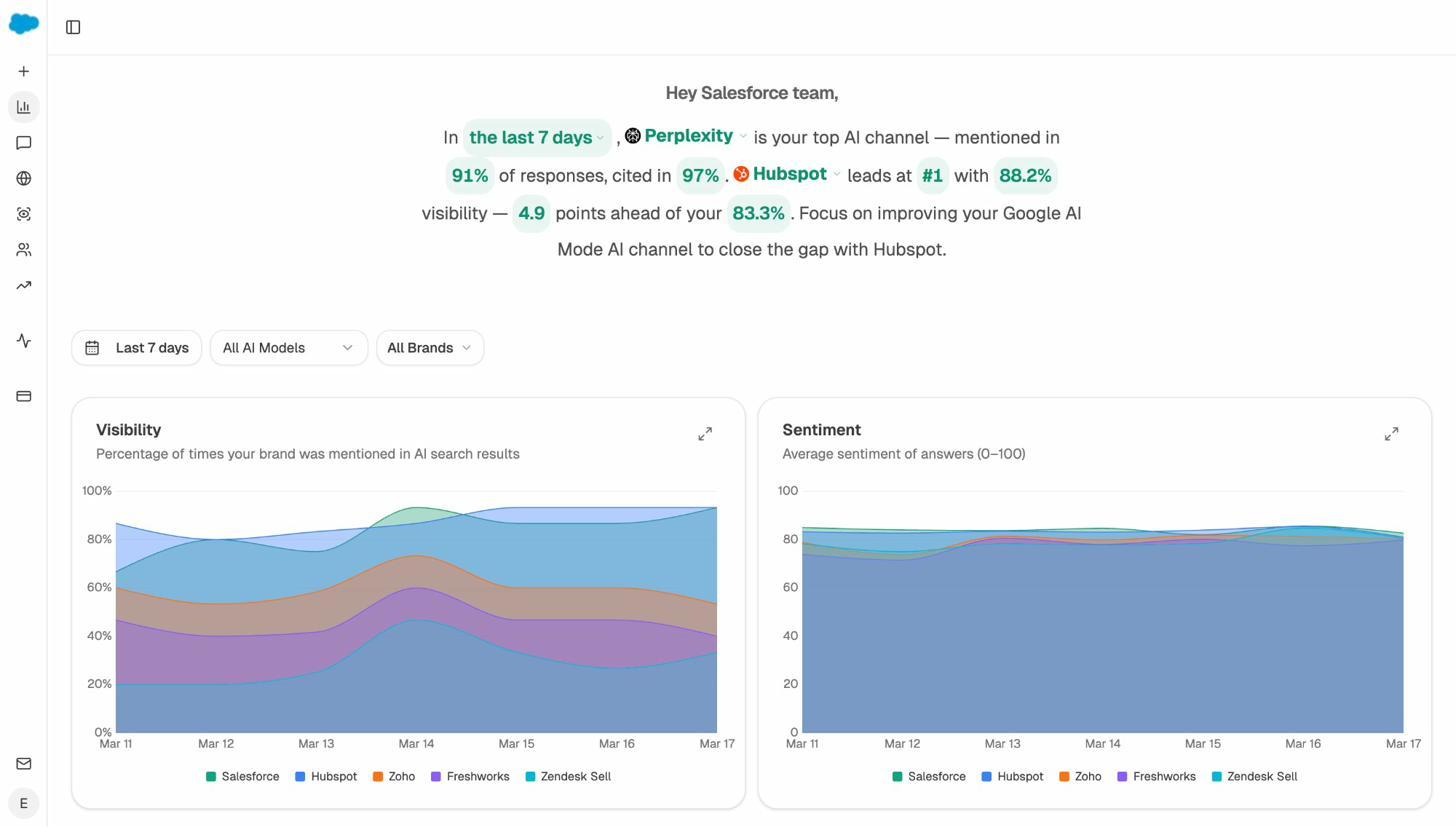

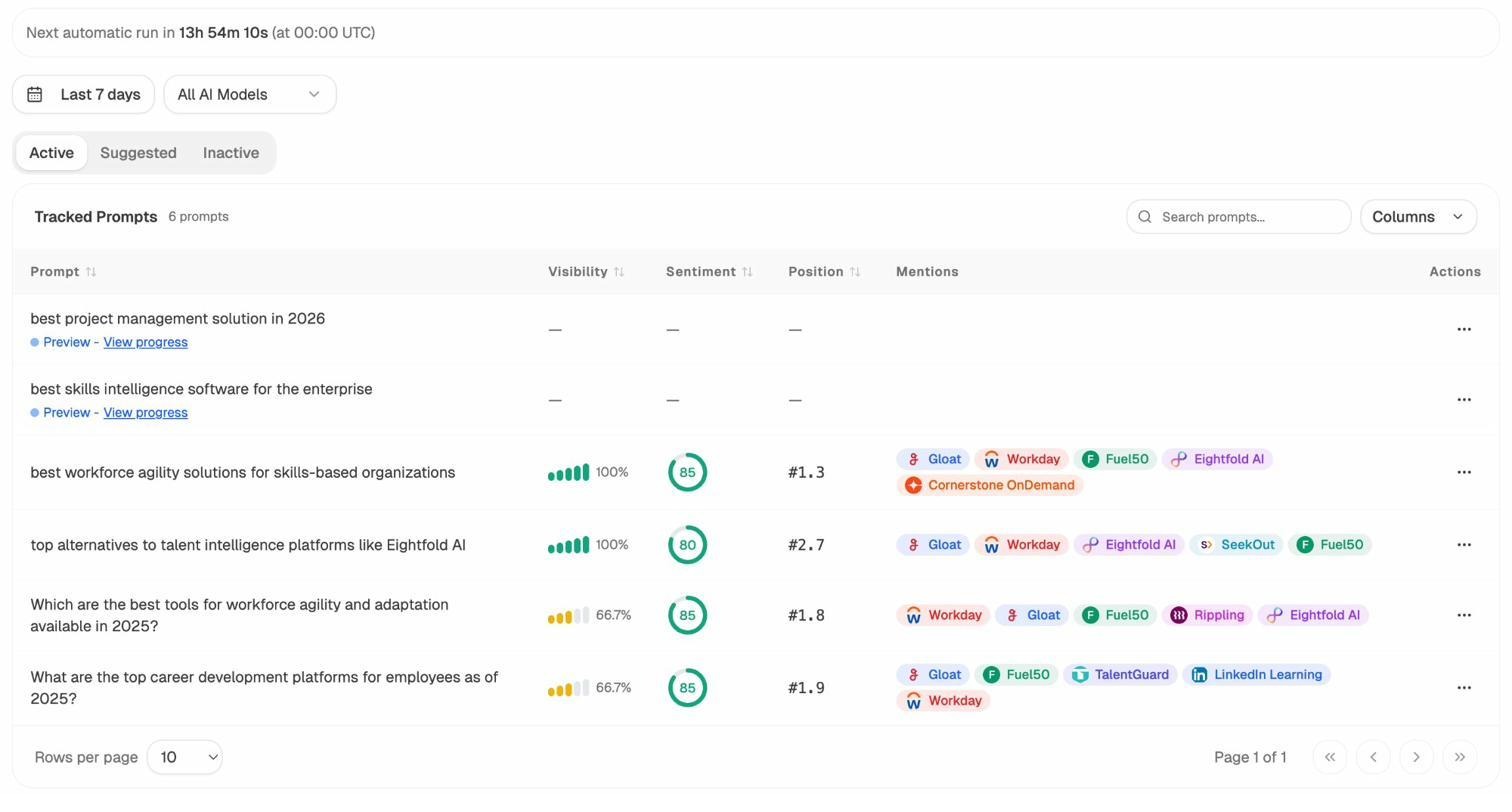
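To make the attribution idea concrete, here is a minimal sketch of how AI-sourced sessions could be grouped by engine and landing page. It is an illustration only, not Analyze AI's implementation: the referrer hostnames, the `classify_referrer` and `summarize_ai_sessions` helpers, and the sample rows are all assumptions, and real GA4 exports will need their own field mapping.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames per AI engine; verify these against your own GA4 data.
AI_ENGINE_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer_url):
    """Return the AI engine name for a referrer URL, or None if it is not one."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for known_host, engine in AI_ENGINE_HOSTS.items():
        # Match the host itself or any subdomain of it (e.g. www.perplexity.ai).
        if host == known_host or host.endswith("." + known_host):
            return engine
    return None


def summarize_ai_sessions(sessions):
    """Count sessions and conversions per (engine, landing page) pair."""
    totals = Counter()
    conversions = Counter()
    for session in sessions:
        engine = classify_referrer(session["referrer"])
        if engine is None:
            continue  # not AI-sourced traffic
        key = (engine, session["landing_page"])
        totals[key] += 1
        if session["converted"]:
            conversions[key] += 1
    return {
        key: {"sessions": totals[key], "conversions": conversions[key]}
        for key in totals
    }


# Hypothetical sample rows standing in for exported session data.
sample_sessions = [
    {"referrer": "https://chatgpt.com/", "landing_page": "/pricing", "converted": True},
    {"referrer": "https://chatgpt.com/", "landing_page": "/pricing", "converted": False},
    {"referrer": "https://www.perplexity.ai/search", "landing_page": "/blog/guide", "converted": False},
    {"referrer": "https://www.google.com/", "landing_page": "/pricing", "converted": True},
]

for key, stats in summarize_ai_sessions(sample_sessions).items():
    print(key, stats)
```

The Google session is dropped because it is ordinary search traffic, which is the point: only sessions whose referrer maps to an AI engine count toward the channel.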

Prompt-level tracking with sentiment. Analyze AI tracks the exact prompts buyers use across engines. For each prompt, you see your brand’s position, presence status, and whether the answer frames you positively or negatively. That tells you which angles models reward and where your messaging needs work.

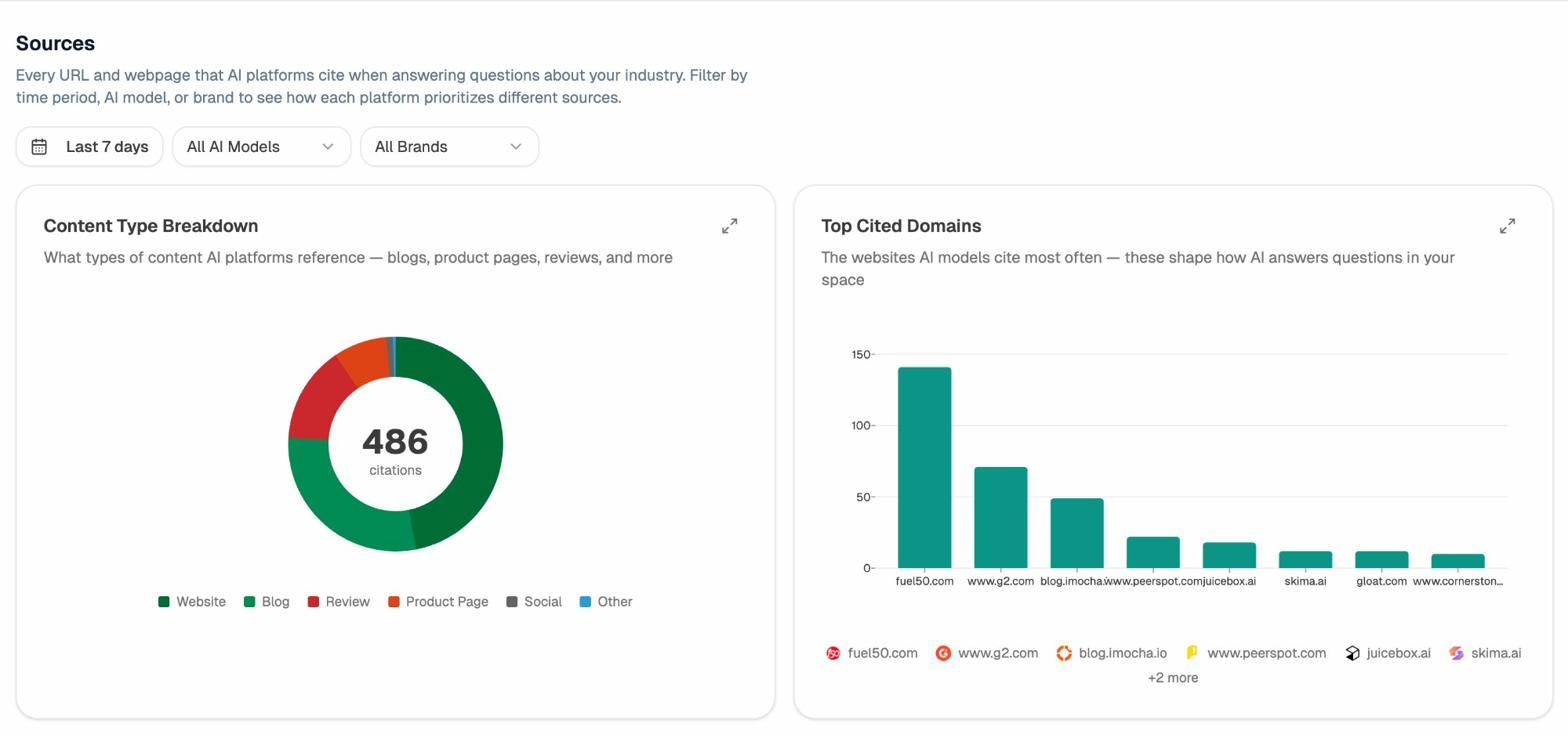

Citation forensics. The Citation Analytics feature shows you which domains and URLs AI models rely on when assembling answers. You can see which sources models trust in your category, spot where competitors earn citations you don’t, and build a targeted plan to earn inclusion on the pages that shape the answer layer.

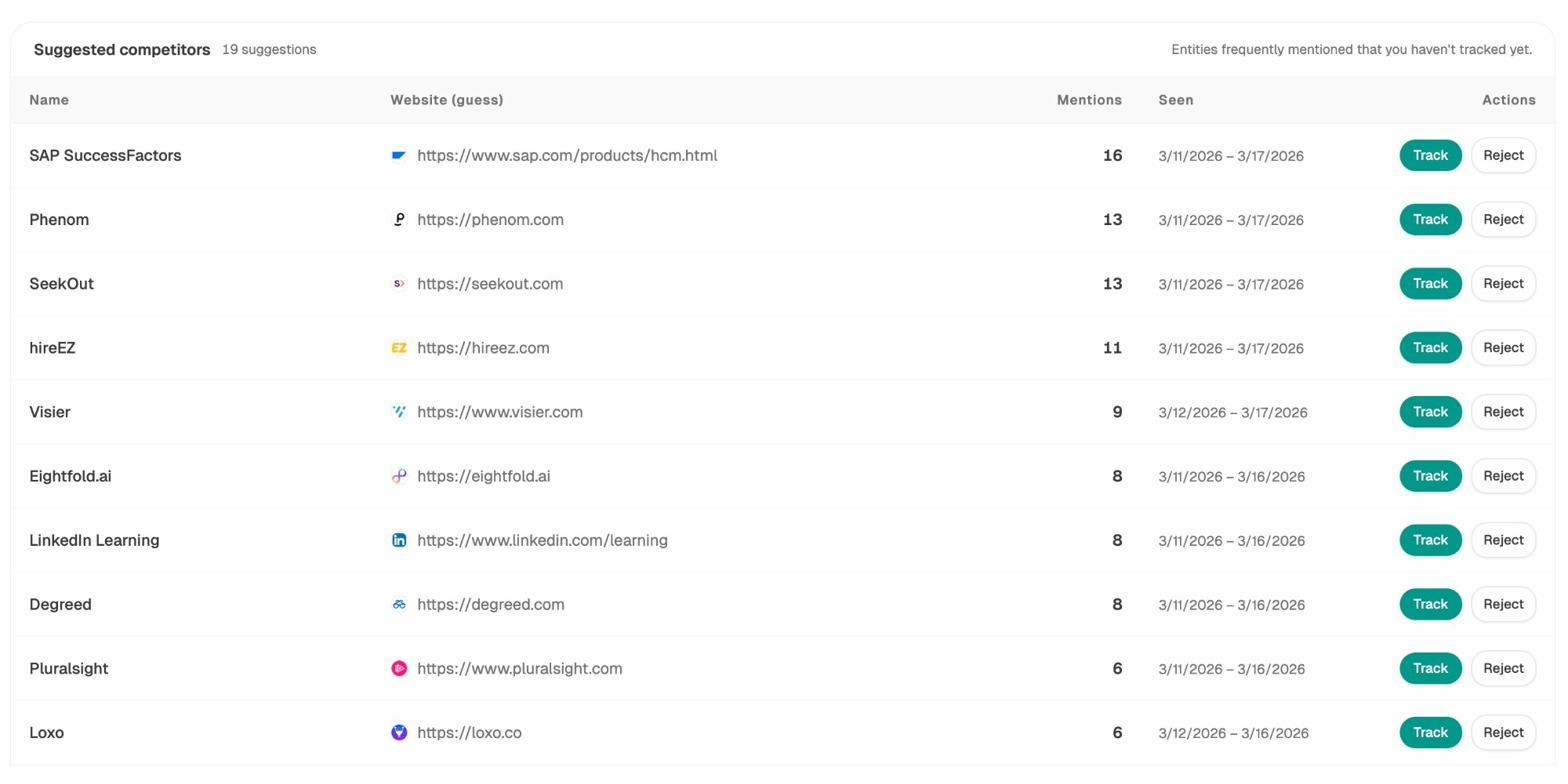

Competitor intelligence with prompt-level gaps. The Competitor Intelligence module shows you exactly where competitors win prompts you lose. It surfaces share-of-voice by engine, tracks rising threats, and highlights under-mentioned prompts where you have commercial opportunities.

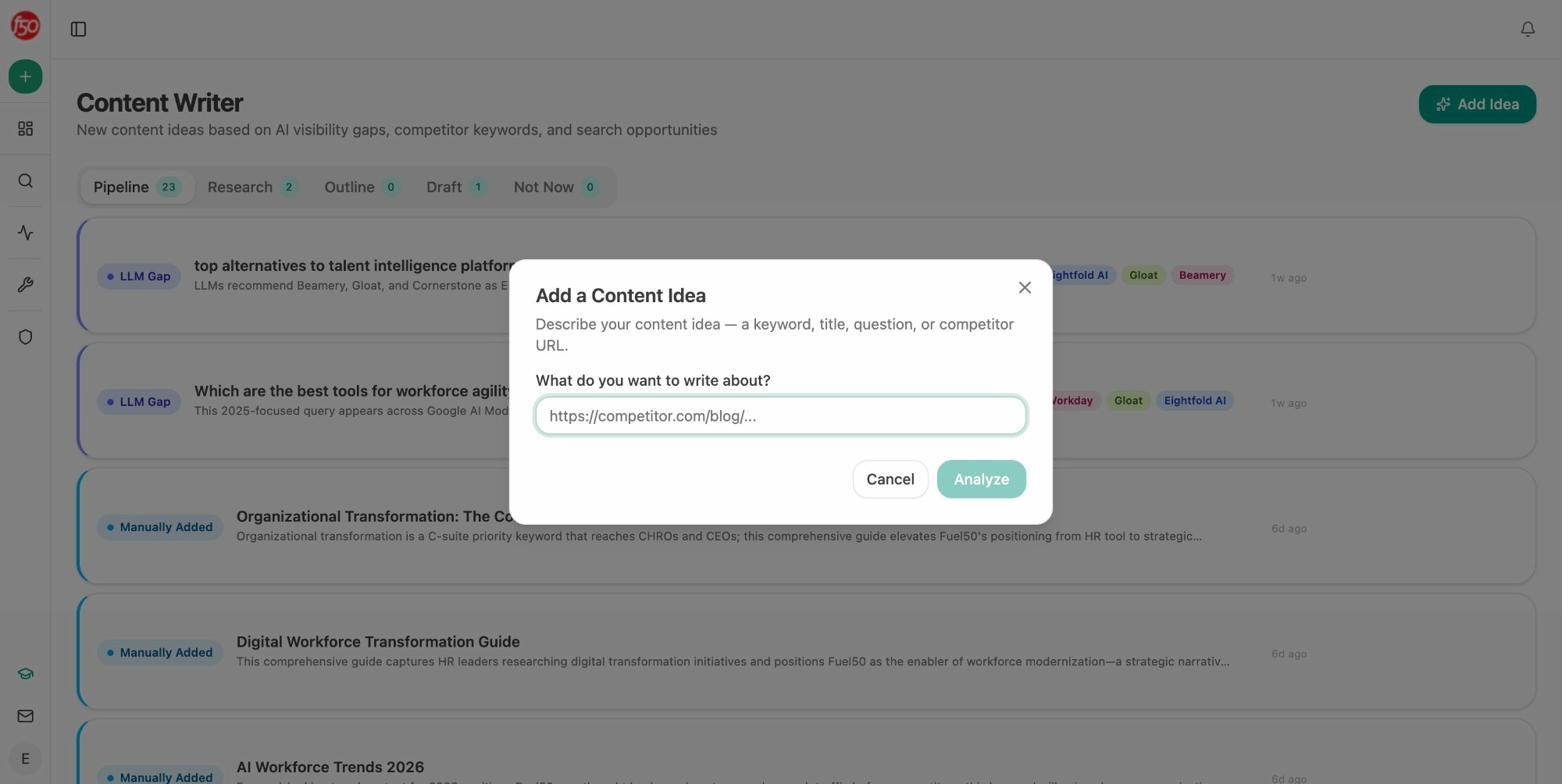
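Prompt-level share of voice can be sketched in a few lines. The version below uses one common definition (a brand's mentions divided by all brand mentions on that engine), which is an assumption rather than the formula any tool in this list documents. The `share_of_voice` and `prompt_gaps` helpers, the brand names, and the sample answers are hypothetical.

```python
from collections import defaultdict


def share_of_voice(answers):
    """Return {engine: {brand: share}}, where share = brand mentions / all brand mentions."""
    counts = defaultdict(lambda: defaultdict(int))
    for answer in answers:
        # Count each brand at most once per answer.
        for brand in set(answer["brands_mentioned"]):
            counts[answer["engine"]][brand] += 1
    result = {}
    for engine, brand_counts in counts.items():
        total = sum(brand_counts.values())
        result[engine] = {brand: n / total for brand, n in brand_counts.items()}
    return result


def prompt_gaps(answers, our_brand):
    """Prompts where some brand is mentioned but ours is not."""
    return sorted(
        {
            a["prompt"]
            for a in answers
            if a["brands_mentioned"] and our_brand not in a["brands_mentioned"]
        }
    )


# Hypothetical tracked answers.
answers = [
    {"engine": "ChatGPT", "prompt": "best rank tracker", "brands_mentioned": ["BrandA", "BrandB"]},
    {"engine": "ChatGPT", "prompt": "ai visibility tools", "brands_mentioned": ["BrandB"]},
    {"engine": "Perplexity", "prompt": "best rank tracker", "brands_mentioned": ["BrandA"]},
]

print(share_of_voice(answers))
print(prompt_gaps(answers, "BrandA"))
```

In this sample, BrandB holds two of the three ChatGPT mentions, and "ai visibility tools" surfaces as a gap for BrandA because a competitor appears there and BrandA does not.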

AI Content Writer and Optimizer built for AEO. The AI Content Writer does not just generate a draft. It starts with searcher intent analysis, builds a research brief with AI strategist comments, creates an outline, and then produces a full draft grounded in your brand voice from the Knowledge Base. The AI Content Optimizer fetches your existing content, identifies gaps against what AI models cite, and rewrites sections to close those gaps. Both tools are built for AEO, not just SEO.

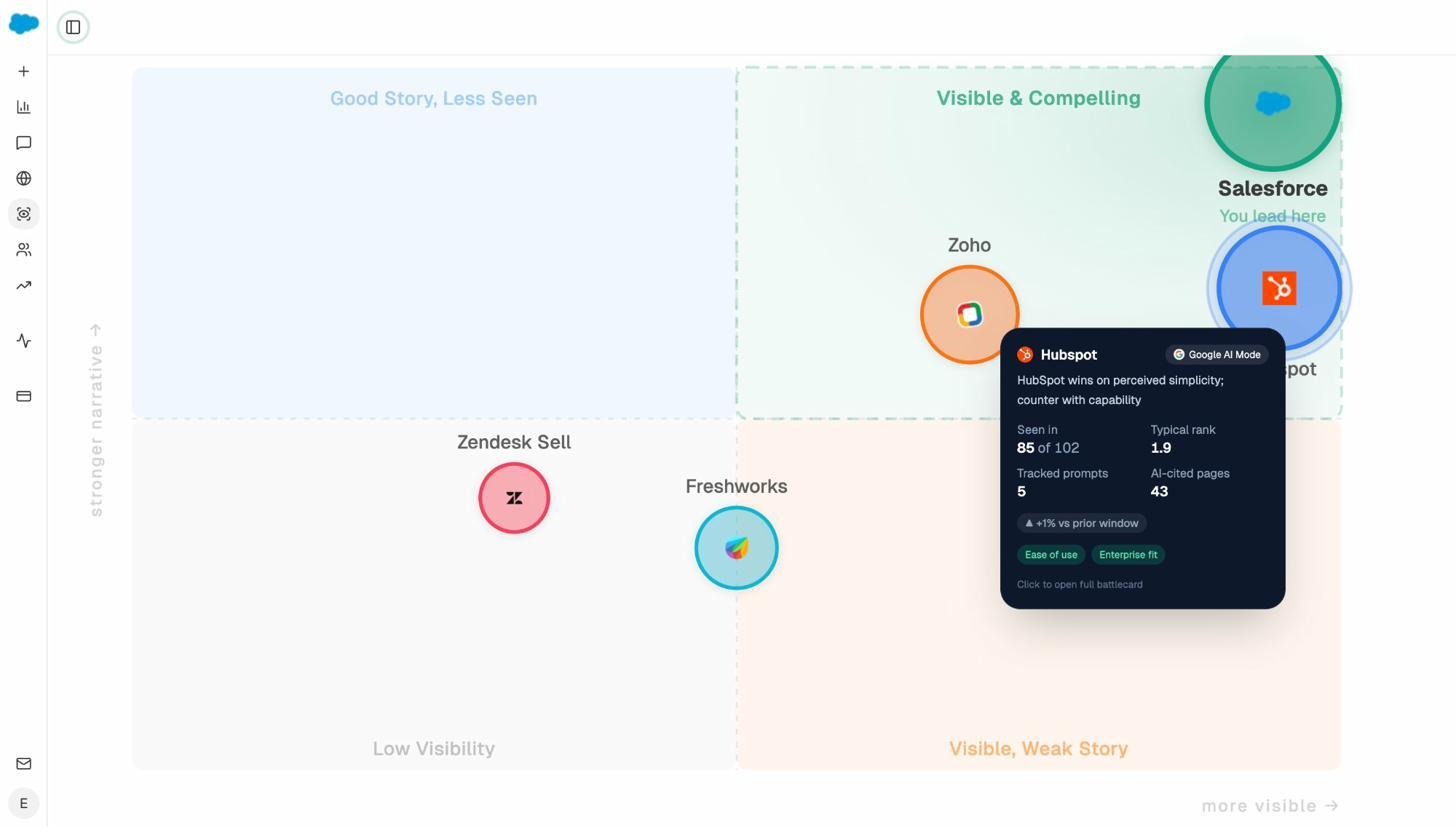

Perception Map and Governance. The Perception Map visualizes how AI models frame your brand versus competitors on key attributes. AI Sentiment Monitoring and AI Battlecards help you catch negative narrative drift before it compounds.

The Agent Builder is the real differentiator. Most tools give you dashboards. Analyze AI gives you a programmable substrate where you can build anything your marketing org needs. An agency can build a single agent that generates a client briefing pack for every account, every Monday, without a human touching it. A content team can build a brief-to-publish pipeline that auto-gates on AEO quality and publishes to WordPress. A PR team can trigger crisis response briefs the moment negative coverage hits. A sales team can auto-enrich every inbound lead with company research, competitor analysis, and a personalized pitch. The Agent Builder supports manual, scheduled, and webhook triggers, which means your agents can run on demand, on a cron, or in response to real-world events like a HubSpot deal closing or a Typeform submission.

Where it falls short. Analyze AI is not a full end-to-end traditional SEO suite. If you need backlink crawling or technical site audits at the depth of a dedicated SEO platform, you’ll pair it with other tools. And if your site gets very little AI traffic today, early results may look modest until the channel scales. But for teams that want to treat AI search as a real revenue channel and not just a visibility metric, Analyze AI is the clear upgrade from Nightwatch.

On pricing, Analyze AI keeps it simple. You’re not paying per keyword or per prompt credit, unlike Nightwatch’s tiered model, which runs from $32/month for 250 keywords to $699/month for 10,000. You get the full platform without surprise scaling costs.
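The keyword-based scaling is easy to check with quick arithmetic. This sketch uses only the two Nightwatch price points quoted in this article; the `cost_per_keyword` helper is hypothetical, and intermediate tiers are not modeled.

```python
def cost_per_keyword(monthly_price, keywords):
    """Monthly price divided by the number of tracked keywords."""
    return monthly_price / keywords


# (monthly price in USD, tracked keywords), as quoted in this article.
tiers = [(32, 250), (699, 10_000)]

for price, keywords in tiers:
    unit = cost_per_keyword(price, keywords)
    print(f"${price}/month for {keywords:,} keywords = ${unit:.4f} per keyword")
```

The per-keyword rate drops from roughly 13 cents to roughly 7 cents, but the monthly bill still grows about 22-fold between those two tiers.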

Analyze AI also offers a suite of free SEO tools including a keyword generator, keyword difficulty checker, SERP checker, website authority checker, website traffic checker, and broken link checker.

2. Peec AI: Best for Agencies That Need Cross-Engine Reports

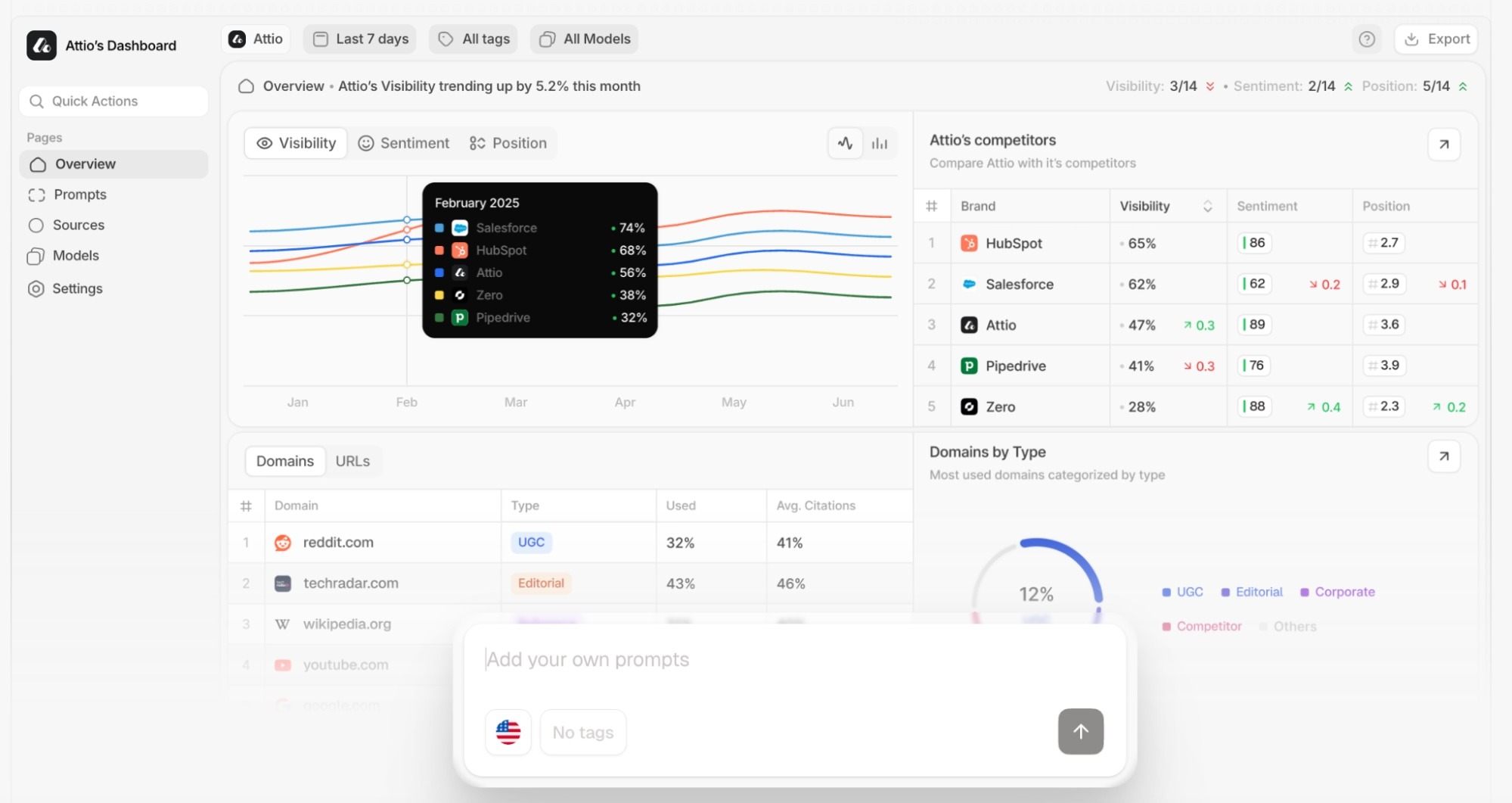

Peec AI starts with AI answers, not keyword positions. That distinction matters because it tracks prompts and responses directly, showing you branded visibility as it actually appears to people across ChatGPT, Perplexity, and AI Overviews.

For agencies, the standout feature is pitch workspaces. You can load client prompts, track cross-engine share of voice, and export findings into presentation-ready reports without switching tools. Citation and source analysis shows which pages power each AI answer, which helps you build targeted content strategies per client.

The tradeoffs are worth noting. Coverage for additional engines like Gemini or Claude can require higher-tier plans. The dashboards favor descriptive analytics, so if you want prescriptive “do this next” guidance, you’ll still need to build your own workflow. And pricing can climb quickly with prompt volume, so scope your usage carefully before committing.

When Peec shines: Multi-brand agencies that need to prove AI visibility in client reports. The exports, API access, and Looker Studio connector make the path from insight to slide deck fast.

When to look elsewhere: If you want to go beyond reporting into automated action, or if you need to tie visibility to actual revenue, Peec’s descriptive layer may not be enough.

3. Profound: Best for Enterprises With Compliance Requirements

Profound treats AI visibility as a workflow, not a metric. It maps relationships between prompts, AI crawlers, and citations to help you understand why your visibility changes, not just that it changed.

The enterprise infrastructure is the real draw. SOC 2 compliance, SSO, data controls, and GA4 attribution make it suitable for large organizations that need to connect visibility metrics with real traffic data. The Agent Analytics feature tracks AI crawler behavior on your site, which shows you how models discover and interact with your content.

The downside is the price and complexity. Profound is built for large teams with dedicated resources. Smaller brands or agencies may find the cost steep, and the dashboards can feel complex for newcomers. Interpreting prompt clusters, crawl timelines, and citation maps requires someone comfortable with data analysis. And while Profound delivers rich analytics, it expects you to handle execution elsewhere.

When Profound shines: Large enterprises managing visibility across multiple markets that need secure, compliant infrastructure.

When to look elsewhere: If you need the platform to help you act on what it finds, not just report it. Profound’s diagnostic depth is impressive, but execution still lives outside the tool.

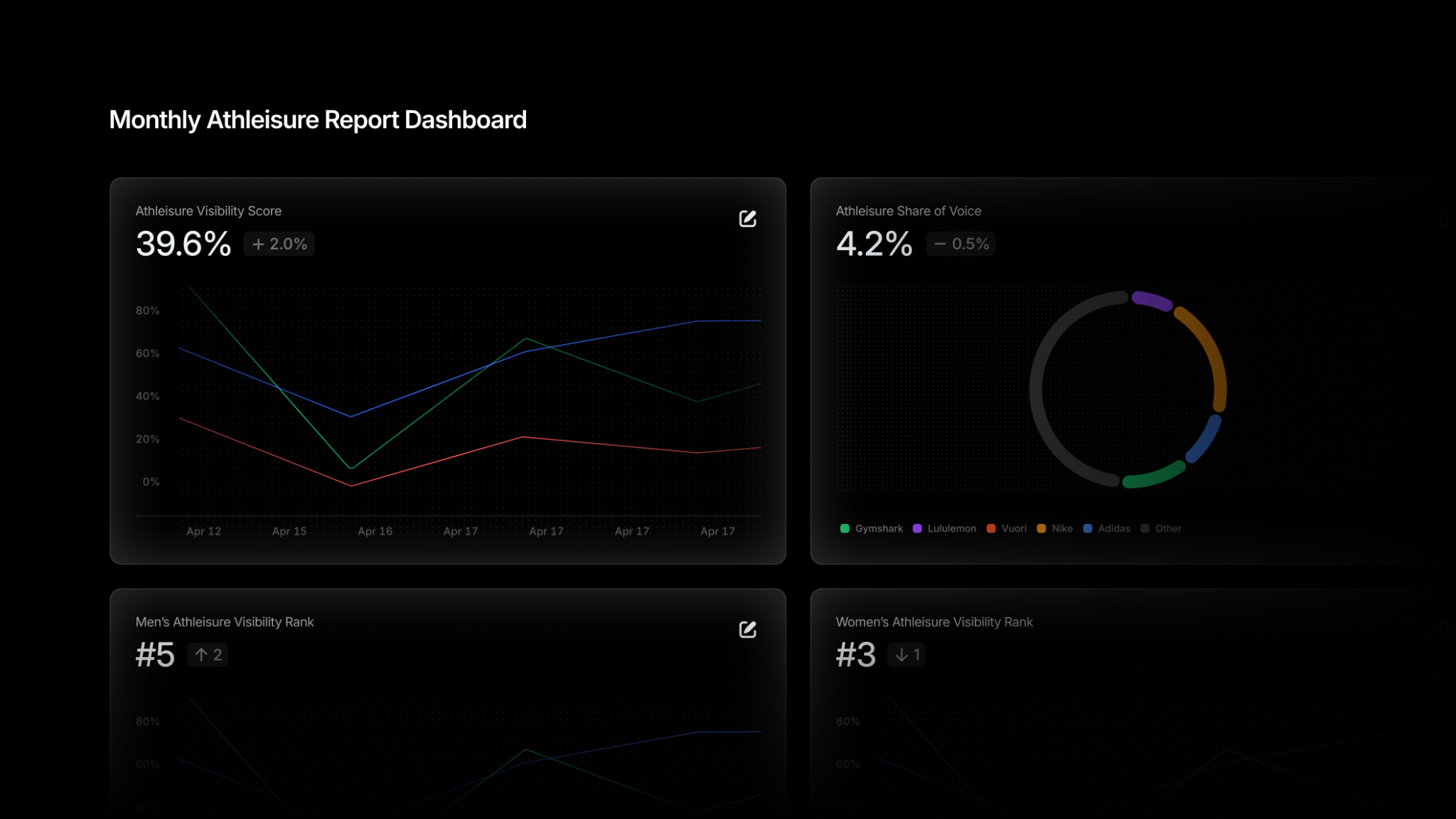

4. Rankscale AI: Best for Budget-Conscious GEO Testing

Rankscale connects output visibility data to prompt-level insights. It tests prompts across models, analyzes which citations drive mentions, and ranks your standing among the top results surfaced by AI. Starting at $20 per month, it’s the most affordable entry point in this list.

The optimization guidance stands out. Rankscale’s recommendations are built around how AI systems process entities, context, and authority rather than keyword density. That makes it a practical bridge between traditional SEO and generative engine optimization for data-driven marketers who want to experiment without enterprise overhead.

The limitations match the price. There’s no AI crawler tracking, so you see what the AI says but not how it got there. The interface leans analytical and can feel dense. Some AI models and advanced prompt types are locked behind higher tiers. And Rankscale doesn’t execute changes automatically, so optimization still depends on your existing content workflow.

When Rankscale shines: Small teams and solo marketers who want to start testing AI visibility without a large budget commitment.

When to look elsewhere: If you need full-funnel attribution, content production, or automation alongside your analytics. Rankscale is a focused measurement tool, not a platform.

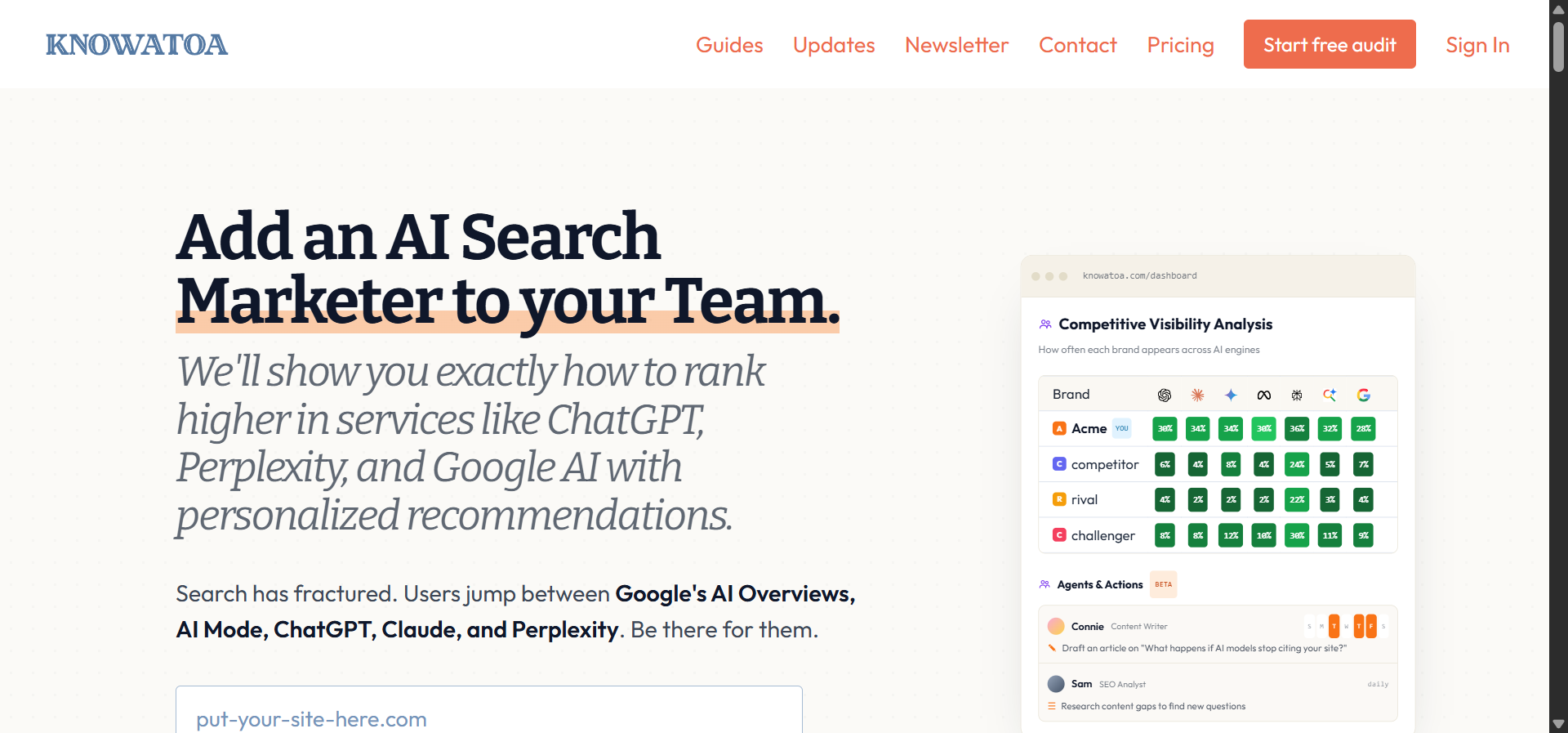

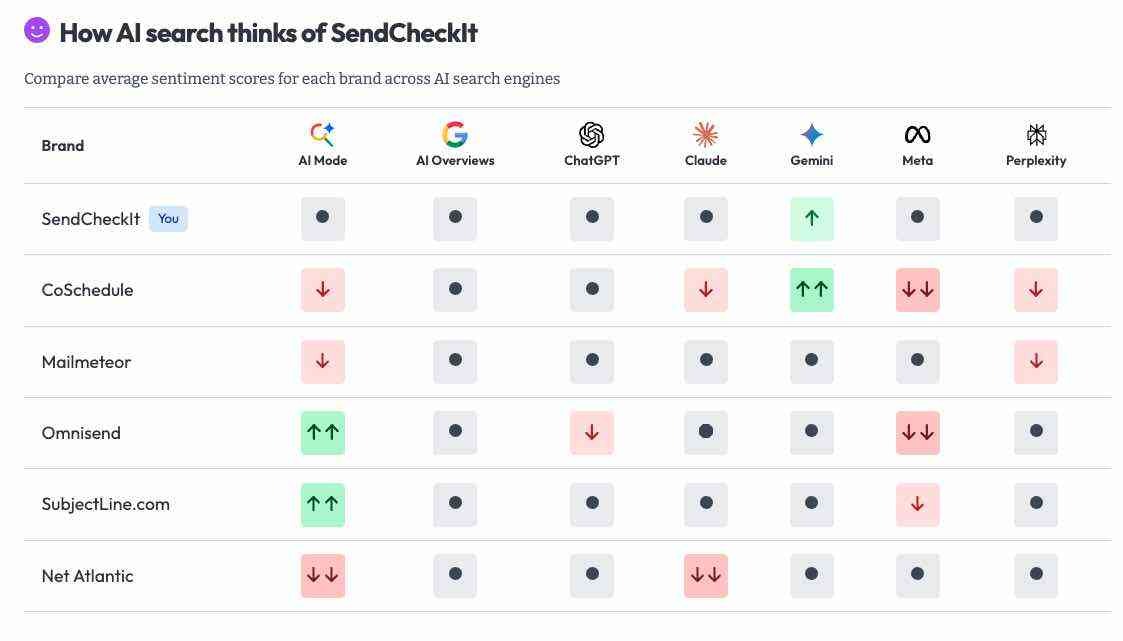

5. Knowatoa AI: Best for Brand Accuracy and Reputation Monitoring

Knowatoa targets a specific blind spot. It checks whether AI tools portray your brand correctly. It doesn’t just count mentions. It evaluates whether those mentions are accurate, detects when AI outputs misstate or distort brand facts, and flags errors before they compound.

For PR and communications teams, that focus on accuracy over volume is the value. Competitor benchmarking shows which rivals dominate generative answers and how they’re framed, which helps comms teams turn vague AI visibility concerns into concrete messaging strategy.

The tradeoffs are clear. Knowatoa lacks crawler analytics, so you won’t see how AI bots interact with your site. It doesn’t automate corrections. And as a newer entrant, engine coverage may be uneven across some models. It’s a reporting and alerting tool, not an optimization platform.

When Knowatoa shines: PR, brand, and communication teams that need to protect accuracy and detect misinformation in AI outputs.

When to look elsewhere: If you need to go beyond detecting problems to actually fixing them. You’ll need to pair Knowatoa with content and SEO tools for execution.

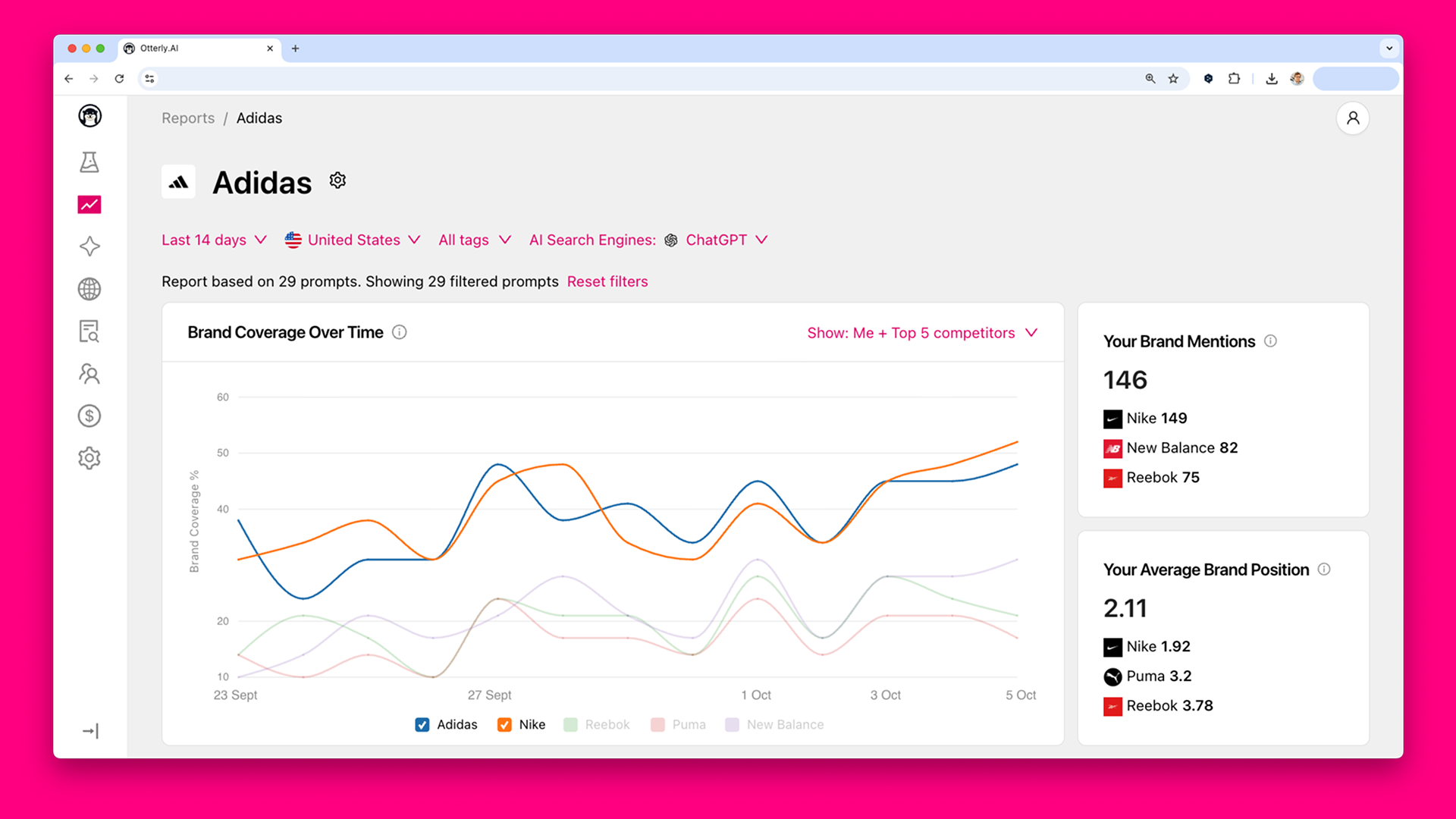

6. Otterly.ai: Best for Simple, Visual AI Visibility Dashboards

Otterly makes AI visibility tracking visual and accessible. Dashboards organize mentions, prompts, and citations into clear charts and share-of-voice metrics that anyone can understand at a glance. Multi-engine coverage includes ChatGPT, Gemini, Perplexity, AI Overviews, and Copilot.

The combination of citation tracking and GEO auditing adds tactical value. It shows which sources drive your mentions and where your site structure might be holding you back. The Semrush integration helps unify AI visibility with existing SEO reporting.

The platform doesn’t automate actions or updates. If you want workflow automation or AI-driven optimization guidance, you’ll need other tools. Aside from Semrush, there are few third-party integrations. And as you monitor more prompts, engines, or markets, both complexity and cost rise. Otterly tracks what AI shows, not how AI crawls, so it’s output-side visibility only.

When Otterly shines: Startups or marketing teams that want fast visibility insights without deep technical setup.

When to look elsewhere: If you need automation, deep integrations, or the ability to act on findings inside the same platform.

7. Scrunch AI: Best for Diagnosing Why AI Ignores Your Content

Scrunch AI gives SEO strategists a forensic view of how AI systems see their websites. It maps where your content surfaces and where it doesn’t, then points to the prompts, sources, or on-page factors responsible for the gap. Persona-based visibility tracking adds another dimension by simulating how different audiences experience AI responses.

The brand safety focus is another strength. Scrunch identifies when AI models cite outdated, incomplete, or misleading data about your brand, which is critical for reputation management when users often rely on AI summaries without checking the underlying sources.

The limitations are predictable. Basic prompt analytics, no crawler data, and enterprise pricing that may not fit smaller teams. While Scrunch flags problems, the execution work of rewriting content, updating schema, or restructuring pages still depends on your existing workflow.

When Scrunch shines: SEO strategists and technical content teams conducting GEO audits or investigating why models cite competitors but skip their content.

When to look elsewhere: If you need prompt simulations, automated optimization, or an integrated content production pipeline.

How to Choose the Right Nightwatch Alternative

The right choice depends on what you’re actually trying to accomplish.

If you need to prove ROI from AI search, Analyze AI is the tool here built around tying visibility to GA4 sessions, conversions, and revenue. Its Agent Builder also lets you automate content production, competitor intelligence, and weekly email digests for your team.

If you need agency reporting, Peec AI’s pitch workspaces and export options get you from data to slide deck fast.

If you need enterprise compliance, Profound’s SOC 2 and SSO infrastructure will satisfy your security team.

If you need affordable GEO testing, Rankscale lets you start at $20 per month.

If you need brand accuracy monitoring, Knowatoa focuses specifically on whether AI gets your facts right.

If you need simple dashboards, Otterly makes visibility data accessible to anyone on the team.

If you need diagnostic audits, Scrunch shows you exactly why AI models skip your content.

One thing worth remembering: SEO is not dead. AI search is an additional organic channel alongside traditional search, not a replacement. The brands that show up in AI answers are the same ones producing clear, original, and useful content. The difference now is that your content has to work for AI models too. The tool you pick should help you compound what’s already working, not panic you into a pivot.

Ernest

Ibrahim
