In this article, you’ll learn what Googlebot is, how it discovers and indexes your pages, how to control its behavior, and how to verify it in your server logs. You’ll also learn about the new generation of AI crawlers — GPTBot, ClaudeBot, PerplexityBot, and others — that now visit your site alongside Googlebot, and how to manage both for maximum visibility across traditional search and AI search.

What is Googlebot?

Googlebot is Google’s web crawler. It visits pages across the internet, reads their content, follows their links, and sends everything back to Google’s servers so it can be processed, rendered, and stored in Google’s search index.

Without Googlebot, your pages would not appear in Google Search. It is the entry point to organic visibility.

Googlebot comes in several versions. The two main ones are a mobile crawler and a desktop crawler. There are also specialized versions for images, video, and news. Each one identifies itself with a different user-agent string — a line of text in the HTTP request header that tells your server who is making the request.

Here is the full list of Googlebot crawlers and their user-agent strings:

| Crawler | User-agent string |
|---|---|
| Googlebot Smartphone | Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/W.X.Y.Z Mobile Safari/537.36 (compatible; Googlebot/2.1; +http://www.google.com/bot.html) |
| Googlebot Desktop | Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; Googlebot/2.1; +http://www.google.com/bot.html) Chrome/W.X.Y.Z Safari/537.36 |
| Googlebot Image | Googlebot-Image/1.0 |
| Googlebot Video | Googlebot-Video/1.0 |
| Googlebot News | Uses standard Googlebot Smartphone/Desktop strings |
| Google StoreBot Mobile | Mozilla/5.0 (Linux; Android 8.0; Pixel 2 Build/OPD3.170816.012; Storebot-Google/1.0) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/W.X.Y.Z Mobile Safari/537.36 |
| Google StoreBot Desktop | Mozilla/5.0 (X11; Linux x86_64; Storebot-Google/1.0) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/W.X.Y.Z Safari/537.36 |
| Google-InspectionTool Mobile | Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/W.X.Y.Z Mobile Safari/537.36 (compatible; Google-InspectionTool/1.0;) |
| Google-InspectionTool Desktop | Mozilla/5.0 (compatible; Google-InspectionTool/1.0;) |
| GoogleOther | Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/W.X.Y.Z Mobile Safari/537.36 (compatible; GoogleOther) |
| GoogleOther-Image | GoogleOther-Image/1.0 |
| GoogleOther-Video | GoogleOther-Video/1.0 |
| Google-CloudVertexBot | Google-CloudVertexBot |
| Google-Extended | Uses standard Googlebot strings |

The Chrome/W.X.Y.Z placeholder in these strings gets replaced with whatever Chrome version Googlebot is currently running. Googlebot is “evergreen,” which means it always uses the latest stable version of Chrome to render pages. This matters because it means Googlebot sees your site the same way a real user with a modern browser does.

One important distinction: Google-Extended is not a separate crawler. It uses the same Googlebot infrastructure but exists as a separate user-agent token in robots.txt so you can control whether Google uses your content for AI training (Gemini) without affecting your regular search indexing. Blocking Google-Extended does not remove you from Google Search results.
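To make the user-agent table more concrete, here is a minimal Python sketch that maps a raw user-agent string to a crawler name by looking for the product tokens listed in the table. The function name `classify_google_crawler` and the token ordering are illustrative assumptions, not part of any Google API. A match only tells you what a request claims to be, not who actually sent it.

```python
# Tokens come from the user-agent table. More specific tokens are listed first
# so that "Googlebot-Image" is not reported as plain "Googlebot".
GOOGLE_TOKENS = [
    ("Googlebot-Image", "Googlebot Image"),
    ("Googlebot-Video", "Googlebot Video"),
    ("GoogleOther-Image", "GoogleOther-Image"),
    ("GoogleOther-Video", "GoogleOther-Video"),
    ("GoogleOther", "GoogleOther"),
    ("Google-InspectionTool", "Google-InspectionTool"),
    ("Storebot-Google", "Google StoreBot"),
    ("Google-CloudVertexBot", "Google-CloudVertexBot"),
    ("Googlebot", "Googlebot"),
]


def classify_google_crawler(user_agent):
    """Return (crawler_name, is_mobile), or None if no known token is present."""
    ua = user_agent.lower()
    for token, name in GOOGLE_TOKENS:
        if token.lower() in ua:
            # The mobile variants in the table carry "Mobile Safari" in the string.
            return name, "mobile safari" in ua
    return None
```

Because anyone can send these strings, treat the result as a label for log analysis and pair it with IP or DNS verification before making access decisions.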

How fast does Googlebot crawl?

Googlebot runs on thousands of machines distributed around the world. It determines how fast and how much to crawl on each website, and it will slow down automatically if it detects that crawling is putting too much load on your server.

According to Cloudflare Radar, Googlebot is the most active verified crawler on the internet, responsible for roughly 23.7% of all verified bot HTTP requests globally.

![[Screenshot: Cloudflare Radar chart showing top verified bots by percentage of HTTP requests, with Googlebot at the top]](https://www.datocms-assets.com/164164/1776443875-blobid1.png)

To put that in perspective, Bingbot accounts for about 4.57% of verified bot requests. The sheer volume of Googlebot traffic underscores why understanding and managing its access to your site is a core part of technical SEO.

How Googlebot crawls and indexes the web

Google has published several versions of its crawling and indexing pipeline over the years. Here is how the current process works, step by step.

Step 1: URL discovery

Google starts with a pool of known URLs. These come from multiple sources: links found on pages it has already crawled, URLs listed in your XML sitemap, RSS feeds, and URLs you manually submit through Google Search Console or the Indexing API.

This is why internal linking matters. Every internal link on your site is a potential discovery path for Googlebot. If a page has no internal links pointing to it and is not in your sitemap, Googlebot may never find it.
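As a rough illustration of that last point, the sketch below walks an internal link graph from the homepage and lists pages that are neither reachable by links nor present in the sitemap. The `links` dictionary, the example URLs, and the function name `find_orphans` are all hypothetical. In practice you would build the graph from a crawl of your own site.

```python
from collections import deque


def find_orphans(links, sitemap_urls, start="/"):
    """Return known pages that are neither link-reachable from `start` nor in the sitemap."""
    reachable = {start}
    queue = deque([start])
    while queue:  # breadth-first walk over internal links
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in reachable:
                reachable.add(target)
                queue.append(target)
    # Every page we know about, whether as a link source or a link target.
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return sorted(all_pages - reachable - set(sitemap_urls))


# Hypothetical site: each key links to the pages in its list.
links = {
    "/": ["/blog/", "/pricing/"],
    "/blog/": ["/blog/post-1/"],
    "/old-landing/": ["/"],
    "/hidden-guide/": [],
}
print(find_orphans(links, sitemap_urls=["/old-landing/"]))  # ['/hidden-guide/']
```

Here `/old-landing/` has no inbound links but is still discoverable through the sitemap, while `/hidden-guide/` has neither path.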

Step 2: Crawl prioritization

Google does not crawl every URL it knows about at the same time. It prioritizes based on factors like how frequently a page changes, how important Google considers the page, and whether it has been explicitly submitted for crawling.

This is also where crawl budget comes in. For most small-to-medium sites, crawl budget is not a concern. But for large sites with millions of pages, understanding how Google allocates its crawling resources becomes critical. Pages buried deep in your site architecture with few links pointing to them are less likely to get crawled frequently.

Step 3: Fetching

Once Googlebot decides to crawl a URL, it sends an HTTP request to your server and downloads the raw HTML response. It stores a copy of this response for processing.

At this stage, Googlebot also records the HTTP status code (200, 301, 404, 500, etc.), response headers, and any server-side redirects. These all affect whether and how the page gets indexed.

Step 4: Rendering

Modern websites rely heavily on JavaScript to build page content. A static HTML fetch is not enough — Googlebot needs to execute JavaScript to see the full page.

Google uses a rendering service (based on headless Chrome) to execute JavaScript, load CSS, fire API requests, and render the page the same way a browser would. This happens separately from the initial fetch, which means there can be a delay between when Google first sees your raw HTML and when it processes the fully rendered version.

This has practical implications. If your critical content is loaded via JavaScript, Google will eventually see it — but not instantly. For time-sensitive content or very large sites, server-side rendering (SSR) or static site generation gives Google immediate access to your content without waiting for the rendering queue.

![[Screenshot: Google Search Console URL Inspection tool showing a page’s crawl and rendering status]](https://www.datocms-assets.com/164164/1776443881-blobid2.png)

Step 5: Indexing

After rendering, Google processes the final page content. It extracts text, identifies entities, evaluates quality signals, and stores the result in its search index. Google uses the mobile-rendered version of the page as the primary version for indexing — this is known as mobile-first indexing.

Any new links discovered during this process go back into the URL pool, and the cycle starts again.

![[Screenshot: Diagram showing Google’s crawl-render-index pipeline, as published in Google’s developer documentation]](https://www.datocms-assets.com/164164/1776443881-blobid3.png)

For a deeper explanation of how this entire pipeline works, see our guide on how search engines work.

How to control Googlebot

Google gives you tools to control both what gets crawled and what gets indexed. These are separate decisions with different mechanisms.

Ways to control crawling

Robots.txt is a text file you place at the root of your domain (e.g., yoursite.com/robots.txt). It tells crawlers which parts of your site they can and cannot access. Googlebot checks this file before crawling any URL on your domain.

Here is a basic example:

    User-agent: Googlebot
    Disallow: /admin/
    Disallow: /staging/
    Allow: /

This allows Googlebot to crawl everything except your /admin/ and /staging/ directories.
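If you want to sanity-check rules like these locally, Python's standard-library `urllib.robotparser` can evaluate them. This is only a sketch: the helper name `is_allowed` and the example URLs are assumptions, and Python's parser applies the first matching rule in file order, whereas Google uses the most specific matching rule, so results can differ on more complex files.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /admin/
Disallow: /staging/
Allow: /
"""


def is_allowed(robots_txt, user_agent, url):
    """Parse robots.txt text and report whether `user_agent` may fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print(is_allowed(ROBOTS_TXT, "Googlebot", "https://yoursite.com/admin/login"))  # False
print(is_allowed(ROBOTS_TXT, "Googlebot", "https://yoursite.com/blog/post"))    # True
```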

A few things to keep in mind about robots.txt:

- Blocking a URL in robots.txt prevents crawling but does not prevent indexing. If other pages link to that URL, Google may still index it, just without the page’s content.
- Robots.txt rules are case-sensitive. /Admin/ and /admin/ are treated as different paths.
- Use Google’s robots.txt Tester in Google Search Console to validate your file before deploying it.

![[Screenshot: Google Search Console robots.txt Tester showing a validated robots.txt file]](https://www.datocms-assets.com/164164/1776443886-blobid4.png)

Nofollow is either a link attribute (rel="nofollow") that asks Google not to follow one specific link, or a meta robots directive that applies to every link on the page. In both cases it is only a hint, and Google may choose to ignore it.

Crawl rate settings were previously available in Google Search Console to let you slow down Googlebot’s crawling speed, but Google deprecated this feature. Googlebot now manages its own crawl rate automatically.

Ways to control indexing

Controlling indexing is about deciding which pages appear in search results. Here are your options:

Noindex is the most common method. Adding <meta name="robots" content="noindex"> to your page’s <head> section, or using the X-Robots-Tag: noindex HTTP header, tells Google not to index the page. The page will still be crawled (unless blocked by robots.txt), but it will not appear in search results.

Delete the content. If you remove a page entirely (returning a 404 or 410 status code), Google will eventually drop it from its index. This is permanent — no one can access it, including your users.

Restrict access. Google does not log in to websites. Password protection or any form of authentication will prevent Googlebot from seeing the content.

URL Removal Tool. Google Search Console offers a temporary removal tool that hides a page from search results for approximately six months. Google will still crawl the content — it just will not show it. This is useful for urgent removals while you implement a permanent solution like noindex.

Robots.txt (images only). Blocking Googlebot-Image via robots.txt prevents your images from appearing in Google Image search, but does not affect the rest of the page’s indexing.

If you are not sure which method to use, the decision comes down to intent. If you want the page to exist but not show up in search, use noindex. If you want the page gone entirely, delete it or restrict access. And if you need an emergency removal while you figure out the permanent fix, use the URL Removal Tool.
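For the noindex option, a quick way to confirm what a page is actually sending is to check both the response header and the meta tag. The sketch below does that for a response you have already fetched. The names `is_noindex` and `_RobotsMetaParser` are made up for this example, and it deliberately ignores finer points such as bot-specific prefixes in the X-Robots-Tag header and the `none` directive.

```python
from html.parser import HTMLParser


class _RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for part in attr.get("content", "").split(","):
                self.directives.add(part.strip().lower())


def is_noindex(headers, html):
    """Return True if the X-Robots-Tag header or a robots meta tag says noindex."""
    header_directives = set()
    for name, value in headers.items():
        if name.lower() == "x-robots-tag":
            header_directives |= {p.strip().lower() for p in value.split(",")}
    parser = _RobotsMetaParser()
    parser.feed(html)
    return "noindex" in header_directives or "noindex" in parser.directives
```

For example, `is_noindex({"X-Robots-Tag": "noindex"}, "<html></html>")` returns `True`, as does a page whose head contains `<meta name="robots" content="noindex">` with no special header.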

You can use Analyze AI’s Broken Link Checker to audit your site for 404 errors, redirect chains, and other issues that waste Googlebot’s crawl budget on dead pages.

Technical details of Googlebot

These details are useful when you are troubleshooting crawling issues or configuring your server to work well with Googlebot.

IP addresses and location

Googlebot primarily crawls from IP addresses based in the United States (Mountain View, California). However, Google also has locale-specific crawling options that it may use in cases where websites block traffic from US-based IP addresses.

Google publishes a JSON file of all Googlebot IP addresses that you can use to verify whether a request actually came from Googlebot.

Maximum file size

Googlebot will download the first 15 MB of an HTML file’s content. Anything beyond that cutoff is ignored. For most web pages, this is more than enough. But if your pages contain extremely large inline data (like massive SVG files or embedded base64 images), keep this limit in mind.

For robots.txt files, the limit is 500 kibibytes (KiB). If your robots.txt exceeds this, Google ignores everything beyond the limit, so any rules past that point have no effect.

Supported protocols

Googlebot supports HTTP/1.1 and HTTP/2, and it chooses whichever protocol gives better crawling performance for your site. It can also crawl over FTP and FTPS, though this is rare.

If your server supports HTTP/2, Googlebot will typically use it — which reduces the overhead of multiple connections and speeds up the crawling of resources like CSS, JavaScript, and images.

Content encoding (compression)

Googlebot supports three compression methods: gzip, deflate, and Brotli (br). Enabling compression on your server reduces the size of responses sent to Googlebot, which means faster page fetching and less bandwidth consumption.

Most modern web servers and CDNs support all three formats. If you are not compressing your responses, you are making Googlebot (and your users) download more data than necessary.

HTTP caching

Google supports standard HTTP caching. It reads the ETag and Last-Modified response headers and sends the matching If-None-Match and If-Modified-Since request headers on later visits. When Googlebot re-crawls a page that has not changed, your server can respond with a 304 Not Modified status instead of sending the full page content again. This saves bandwidth and server resources on both sides.
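To show the mechanics, here is a minimal, framework-free sketch of the server-side decision for ETag-based revalidation. The function names `make_etag` and `respond` are hypothetical, the header dictionary is assumed to use the exact key `If-None-Match`, and weak validators (`W/"..."`) are not handled. Most web servers and CDNs already do this for you.

```python
import hashlib


def make_etag(body):
    """Derive a quoted ETag from the response body (one simple scheme among many)."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def respond(body, request_headers):
    """Return (status, headers, body) for a GET, honoring If-None-Match."""
    etag = make_etag(body)
    raw = request_headers.get("If-None-Match", "")
    client_etags = [tag.strip() for tag in raw.split(",")]
    if etag in client_etags or "*" in client_etags:
        # The crawler's cached copy is still current: send no body.
        return 304, {"ETag": etag}, b""
    return 200, {"ETag": etag}, body


status, headers, _ = respond(b"<html>hello</html>", {})
print(status)  # 200
status, _, payload = respond(b"<html>hello</html>", {"If-None-Match": headers["ETag"]})
print(status, payload)  # 304 b''
```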

Is it really Googlebot?

Many bots — including SEO tools, scrapers, and malicious crawlers — impersonate Googlebot by using its user-agent string. If your server makes access decisions based solely on user-agent strings, you could be letting in traffic that is not actually Google.

There are two reliable ways to verify Googlebot:

Method 1: Reverse DNS lookup

This is the traditional verification method. You take the IP address from the request, do a reverse DNS lookup, and check whether the hostname ends in googlebot.com or google.com. Then you do a forward DNS lookup on that hostname to confirm it resolves back to the original IP.

On a Linux server, this looks like:

    # Reverse DNS lookup
    host 66.249.66.1
    # Expected result: crawl-66-249-66-1.googlebot.com
    # Forward DNS lookup to verify
    host crawl-66-249-66-1.googlebot.com
    # Should resolve back to 66.249.66.1

If both lookups match, the request is from a legitimate Googlebot.
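The same two-step check can be automated. The sketch below uses Python's standard `socket` module. The function name `is_verified_googlebot` and the injectable `reverse_lookup` / `forward_lookup` parameters are assumptions added so the logic can be exercised without network access. Note that `socket.gethostbyname_ex` only returns IPv4 addresses, so IPv6 requests would need a different forward lookup.

```python
import socket


def _reverse(ip):
    # gethostbyaddr returns (hostname, aliases, addresses)
    return socket.gethostbyaddr(ip)[0]


def _forward(hostname):
    # gethostbyname_ex returns (hostname, aliases, ipv4_addresses)
    return socket.gethostbyname_ex(hostname)[2]


def is_verified_googlebot(ip, reverse_lookup=_reverse, forward_lookup=_forward):
    """Reverse-resolve the IP, check the domain, then forward-resolve to confirm."""
    try:
        hostname = reverse_lookup(ip).rstrip(".").lower()
    except OSError:  # covers socket.herror and socket.gaierror
        return False
    # Require the leading dot so "evilgooglebot.com" does not pass.
    if not hostname.endswith((".googlebot.com", ".google.com")):
        return False
    try:
        return ip in forward_lookup(hostname)
    except OSError:
        return False
```

Calling `is_verified_googlebot("66.249.66.1")` performs live DNS lookups, so in a high-traffic setup you would probably cache results per IP rather than resolve on every request.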

Method 2: IP range verification

Google now publishes a public list of Googlebot IP ranges in JSON format. You can download this file and compare incoming request IPs against it. This is faster than DNS lookups and easier to automate.

Here is a quick shell command to check if an IP is in Google’s published ranges:

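    # Prints True if the IP falls inside any published Googlebot range
    IP="66.249.66.1"
    curl -s https://developers.google.com/search/apis/ipranges/googlebot.json | \
    python3 -c "import sys,json,ipaddress; ip=ipaddress.ip_address(sys.argv[1]); \
    nets=[p.get('ipv4Prefix') or p.get('ipv6Prefix') for p in json.load(sys.stdin)['prefixes']]; \
    print(any(ip in ipaddress.ip_network(n) for n in nets))" "$IP"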

![[Screenshot: Server log file showing Googlebot requests with IP addresses, user-agent strings, and HTTP status codes]](https://www.datocms-assets.com/164164/1776443886-blobid5.jpg)

Using Google Search Console’s Crawl Stats

You do not need to dig through server logs manually. Google Search Console provides a Crawl Stats report (found under Settings > Crawl Stats) that shows you exactly how Googlebot is crawling your site. You can see which Googlebot variant is making requests, which URLs it is accessing, response codes, file types, and crawl timing.

![[Screenshot: Google Search Console Crawl Stats report showing crawl requests over time, broken down by response type]](https://www.datocms-assets.com/164164/1776443891-blobid6.png)

This report is especially useful for diagnosing issues like excessive 404 responses, slow server response times, or pages that are being crawled but not indexed.

AI crawlers: The new bots visiting your site

Here is what most articles about Googlebot miss: Googlebot is no longer the only important crawler hitting your site.

Since 2023, a new category of web crawlers has emerged. These are bots operated by AI companies — OpenAI, Anthropic, Perplexity, and others — that crawl your pages to train large language models, power AI search engines, and fetch content in real time when users ask questions.

These AI crawlers matter because they directly affect whether your brand appears in AI-generated answers. If GPTBot cannot access your site, your content will not feed into the training data behind ChatGPT’s future models. If OAI-SearchBot or PerplexityBot is blocked, you will not be cited in ChatGPT search or Perplexity results.

This is a different kind of organic visibility — one that runs alongside traditional Google search, not instead of it. Understanding how both sets of crawlers interact with your site is now a core part of any comprehensive SEO strategy.

Major AI crawlers and their user agents

Here is a reference table of the AI crawlers most likely to appear in your server logs:

| Company | Crawler | Purpose | User-agent token | Respects robots.txt? |
|---|---|---|---|---|
| OpenAI | GPTBot | Model training data collection | GPTBot | Yes |
| OpenAI | OAI-SearchBot | Search indexing for ChatGPT | OAI-SearchBot | Yes |
| OpenAI | ChatGPT-User | Real-time retrieval when users ask questions | ChatGPT-User | Limited |
| Anthropic | ClaudeBot | Training and citation retrieval | ClaudeBot | Yes |
| Anthropic | Claude-SearchBot | Search indexing | Claude-SearchBot | Yes |
| Anthropic | Claude-User | User-initiated real-time fetch | Claude-User | Limited |
| Perplexity | PerplexityBot | Search indexing | PerplexityBot | Yes |
| Perplexity | Perplexity-User | Real-time user-triggered retrieval | Perplexity-User | Limited |
| Google | Google-Extended | Gemini AI training (separate from search indexing) | Google-Extended | Yes |
| Microsoft | Bingbot | Bing search + Copilot | Bingbot | Yes |
| Apple | Applebot-Extended | Apple Intelligence / Siri | Applebot-Extended | Yes |
| Meta | Meta-ExternalAgent | Meta AI training | meta-externalagent | Yes |
| Amazon | Amazonbot | Alexa and Amazon AI | Amazonbot | Yes |
| ByteDance | Bytespider | TikTok and ByteDance model training | Bytespider | Inconsistent |

Notice the pattern: most major AI companies now operate multiple crawlers with distinct purposes. OpenAI runs GPTBot (training), OAI-SearchBot (search indexing), and ChatGPT-User (real-time fetch). Anthropic runs ClaudeBot, Claude-SearchBot, and Claude-User. This multi-bot structure gives you granular control — you can block training crawlers while allowing search and citation crawlers.

The practical difference between training and search crawlers matters for your visibility strategy. Blocking a training crawler means your content will not be baked into future model knowledge. Blocking a search crawler means your site will not appear in that platform’s AI-generated search results — no citations, no referral traffic.
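To see which of these crawlers already visit your site, and in what role, you can tally their tokens in your access logs. The following is a minimal sketch: the purpose labels follow the reference table, the function name `count_ai_hits` is hypothetical, and the sample log lines are made up and use simplified user-agent strings rather than the exact ones each vendor sends.

```python
from collections import Counter

# Token -> purpose, following the reference table (ClaudeBot is grouped with
# training crawlers here for simplicity).
AI_CRAWLER_PURPOSE = {
    "GPTBot": "training",
    "OAI-SearchBot": "search",
    "ChatGPT-User": "user-fetch",
    "ClaudeBot": "training",
    "Claude-SearchBot": "search",
    "Claude-User": "user-fetch",
    "PerplexityBot": "search",
    "Perplexity-User": "user-fetch",
}


def count_ai_hits(log_lines):
    """Count log lines per (token, purpose) using case-insensitive token matching."""
    counts = Counter()
    for line in log_lines:
        lowered = line.lower()
        for token, purpose in AI_CRAWLER_PURPOSE.items():
            if token.lower() in lowered:
                counts[(token, purpose)] += 1
                break  # count each request once
    return counts


# Made-up log lines for illustration only.
SAMPLE_LINES = [
    '203.0.113.7 - - [01/Jan/2026:00:00:01 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.7 - - [01/Jan/2026:00:00:02 +0000] "GET /blog HTTP/1.1" 200 901 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.4 - - [01/Jan/2026:00:00:03 +0000] "GET / HTTP/1.1" 200 300 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '192.0.2.9 - - [01/Jan/2026:00:00:04 +0000] "GET / HTTP/1.1" 200 300 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/W.X.Y.Z"',
]
for (token, purpose), hits in sorted(count_ai_hits(SAMPLE_LINES).items()):
    print(token, purpose, hits)
```

Since user-agent strings can be spoofed, these counts show claimed identities only. Confirm high-volume sources against each company's published IP ranges before acting on them.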

How AI crawlers differ from Googlebot

There are a few important differences between traditional search crawlers like Googlebot and AI crawlers:

Purpose. Googlebot crawls to build a search index of web pages ranked by relevance. AI crawlers crawl for two reasons: to collect training data for language models, and to fetch content in real time for generating answers with citations.

Volume. Googlebot crawls significantly more than any single AI crawler. Cloudflare data shows Googlebot makes roughly 200 times more requests than PerplexityBot. But the aggregate volume of all AI crawlers combined is growing rapidly — some publishers report AI crawler traffic increasing over 2,800% year-over-year.

Rendering. Googlebot fully renders JavaScript using headless Chrome. Most AI crawlers do a simple HTML fetch without full JavaScript execution. This means content that requires JavaScript to load may be invisible to AI crawlers even though Googlebot can see it perfectly.

Compliance. Googlebot has decades of established behavior around robots.txt. AI crawlers are newer, and compliance is less consistent. Some user-triggered fetch bots (like ChatGPT-User and Perplexity-User) may not fully respect robots.txt when a user provides a specific URL.

Spoofing. AI crawler user-agent strings are being impersonated at a high rate. According to recent data, ChatGPT-User saw 7.9 million spoofed requests in early 2026, and PerplexityBot had a spoofing rate near 2.4%. Verifying AI crawlers requires checking IPs against the published IP ranges from each company — the same approach you use to verify Googlebot.

How to configure robots.txt for both Google and AI crawlers

Now that you understand both Googlebot and AI crawlers, here is how to configure your robots.txt to handle both sets of bots strategically.

If you want maximum visibility (recommended for most brands)

Allow Googlebot for search indexing. Allow AI search crawlers so your content appears in AI-generated answers. Optionally allow training crawlers so your brand knowledge gets embedded in future model versions.

    # Google (search indexing)
    User-agent: Googlebot
    Allow: /

    # Google (AI training for Gemini, separate from search)
    User-agent: Google-Extended
    Allow: /

    # OpenAI
    User-agent: GPTBot
    Allow: /

    User-agent: OAI-SearchBot
    Allow: /

    User-agent: ChatGPT-User
    Allow: /

    # Anthropic (Claude)
    User-agent: ClaudeBot
    Allow: /

    User-agent: Claude-SearchBot
    Allow: /

    # Perplexity
    User-agent: PerplexityBot
    Allow: /

    # Microsoft (Bing + Copilot)
    User-agent: Bingbot
    Allow: /

    # Apple Intelligence
    User-agent: Applebot-Extended
    Allow: /

    # Block sensitive areas for any bot not named above.
    # A bot with its own group follows only that group, so repeat
    # these Disallow lines there if they should apply to it too.
    User-agent: *
    Disallow: /admin/
    Disallow: /staging/
    Disallow: /api/

    Sitemap: https://yoursite.com/sitemap.xml
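One subtlety in a file like this: under the Robots Exclusion Protocol, a crawler obeys only the most specific group that names it, and does not also inherit the `User-agent: *` rules. The short sketch below illustrates this with Python's `urllib.robotparser` on a trimmed-down version of the file. `SomeOtherBot` is a made-up crawler name.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: Googlebot
Allow: /

User-agent: *
Disallow: /admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Googlebot has its own group, so the wildcard Disallow does not apply to it.
print(parser.can_fetch("Googlebot", "https://yoursite.com/admin/"))     # True
# A bot with no group of its own falls back to the wildcard rules.
print(parser.can_fetch("SomeOtherBot", "https://yoursite.com/admin/"))  # False
```

If the sensitive paths should be off-limits to the named crawlers as well, one option is to repeat the Disallow lines inside each named group.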

If you want search visibility without AI training

Allow all search and citation crawlers but block training-specific bots:

    # Allow Google search, block Gemini training
    User-agent: Googlebot
    Allow: /

    User-agent: Google-Extended
    Disallow: /

    # Allow ChatGPT search, block training
    User-agent: OAI-SearchBot
    Allow: /

    User-agent: ChatGPT-User
    Allow: /

    User-agent: GPTBot
    Disallow: /

    # Allow Claude search, block training
    User-agent: Claude-SearchBot
    Allow: /

    User-agent: ClaudeBot
    Disallow: /

    # Allow Perplexity search
    User-agent: PerplexityBot
    Allow: /

This is a middle-ground approach. Your content appears in AI search results (which sends referral traffic to your site), but it will not be incorporated into future model training.

Common robots.txt mistakes to avoid

Blocking Googlebot when you meant to block Google-Extended. These are separate user-agent tokens. Blocking Googlebot removes you from Google Search entirely. Blocking Google-Extended only prevents AI training.

Forgetting to include a sitemap directive. Adding Sitemap: https://yoursite.com/sitemap.xml at the end of your robots.txt helps both Googlebot and AI crawlers discover all your important URLs.

Assuming robots.txt is enforceable. Robots.txt is a voluntary protocol. Legitimate crawlers from Google, OpenAI, and Anthropic generally respect it, but malicious bots or scrapers will not. For stronger protection, combine robots.txt with server-level access controls.

Not testing after changes. Always validate your robots.txt with Google’s tester in Search Console after making changes. A misplaced Disallow: / can accidentally block your entire site.

How to monitor your visibility across Google and AI search

Verifying that Googlebot is crawling your site correctly is just part of the picture. You also need to understand what happens after the crawling — specifically, whether your content is actually appearing in both Google’s search results and in AI-generated answers.

Monitoring Google Search visibility

Google Search Console is the primary tool for tracking your traditional search visibility. It shows you which queries your pages rank for, how many impressions and clicks you receive, and any indexing issues that might be preventing pages from appearing. You can use Analyze AI’s SERP Checker to see who currently ranks for your target keywords, and the Keyword Rank Checker to track your positions over time.

Monitoring AI search visibility

This is where things get more complex. Unlike Google Search Console, there is no single free tool from OpenAI or Perplexity that shows you exactly when and where your brand appears in AI-generated answers.

This is the gap that Analyze AI fills. The platform tracks your brand’s visibility, sentiment, and citation patterns across all major AI answer engines — ChatGPT, Perplexity, Claude, Gemini, Copilot, and more.

Here is how that works in practice.

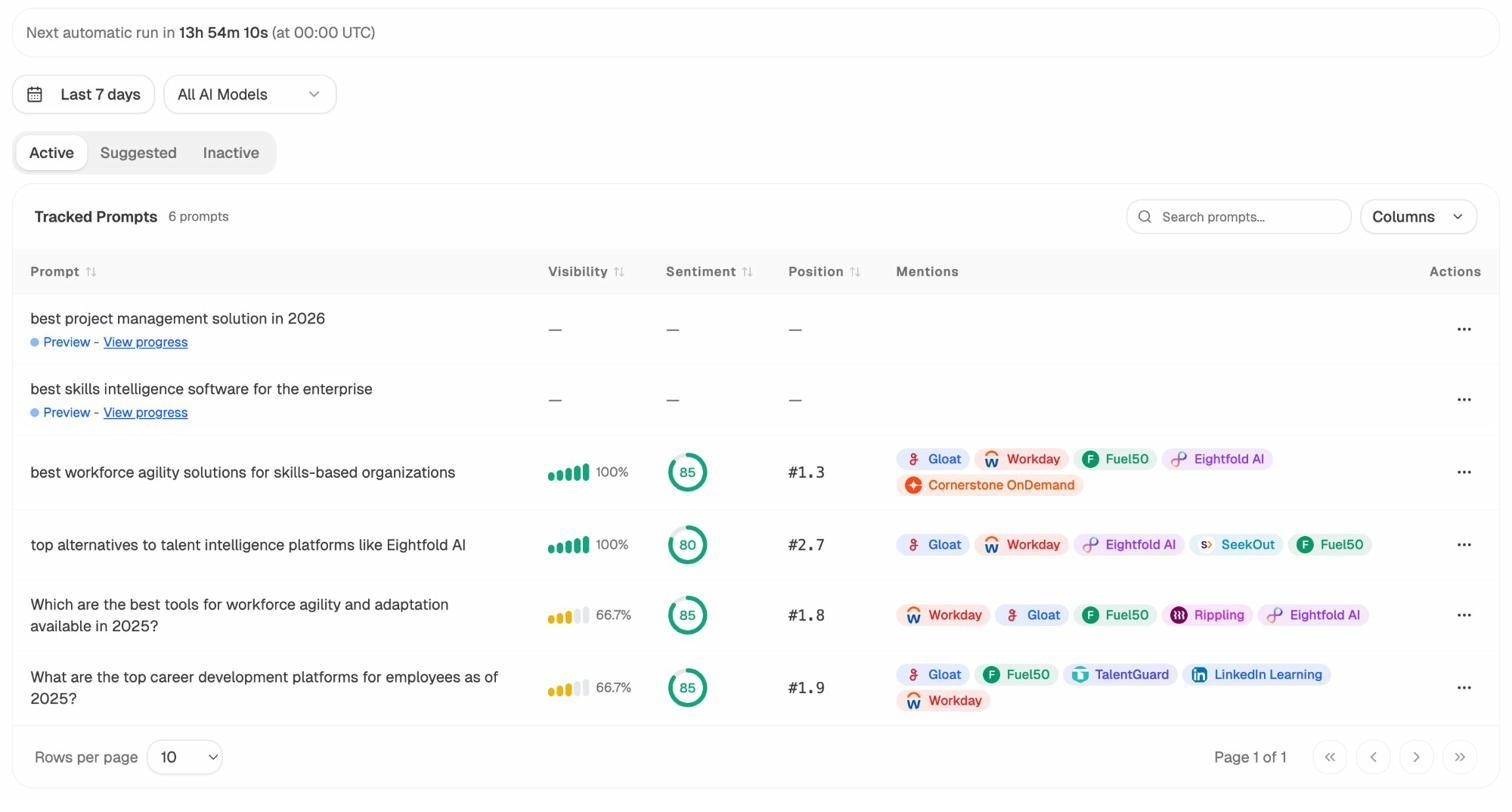

Track the prompts that mention your brand. Analyze AI’s Prompts dashboard lets you set up the specific queries (prompts) that matter to your business and monitors whether your brand appears in the AI-generated responses, your position in those responses, and the sentiment of how you are mentioned.

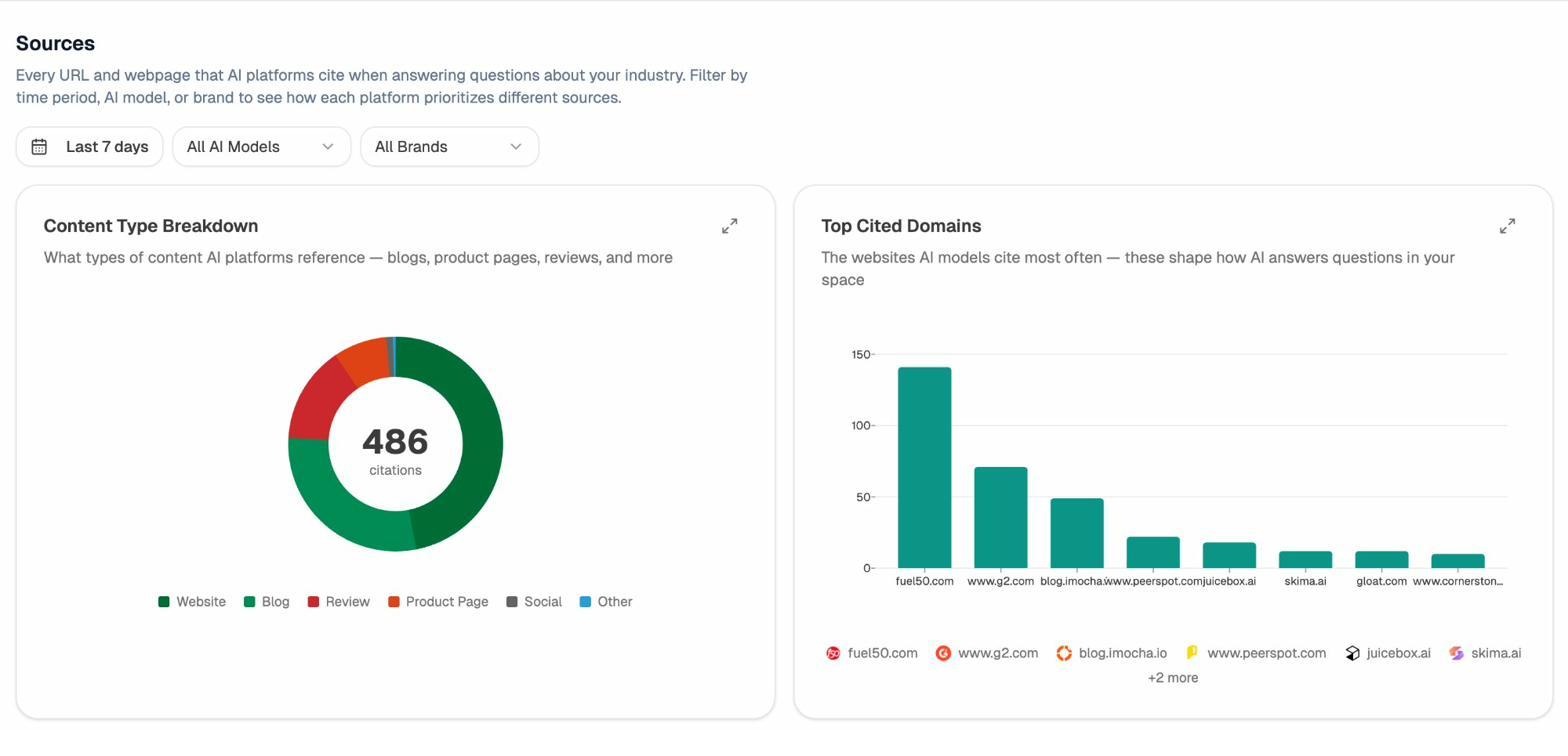

See which sources AI models cite. The Sources dashboard shows every URL and domain that AI platforms cite when answering questions in your industry. You can see which of your pages are being referenced, which competitor pages are getting cited instead, and which content types (blog posts, product pages, reviews) models prefer.

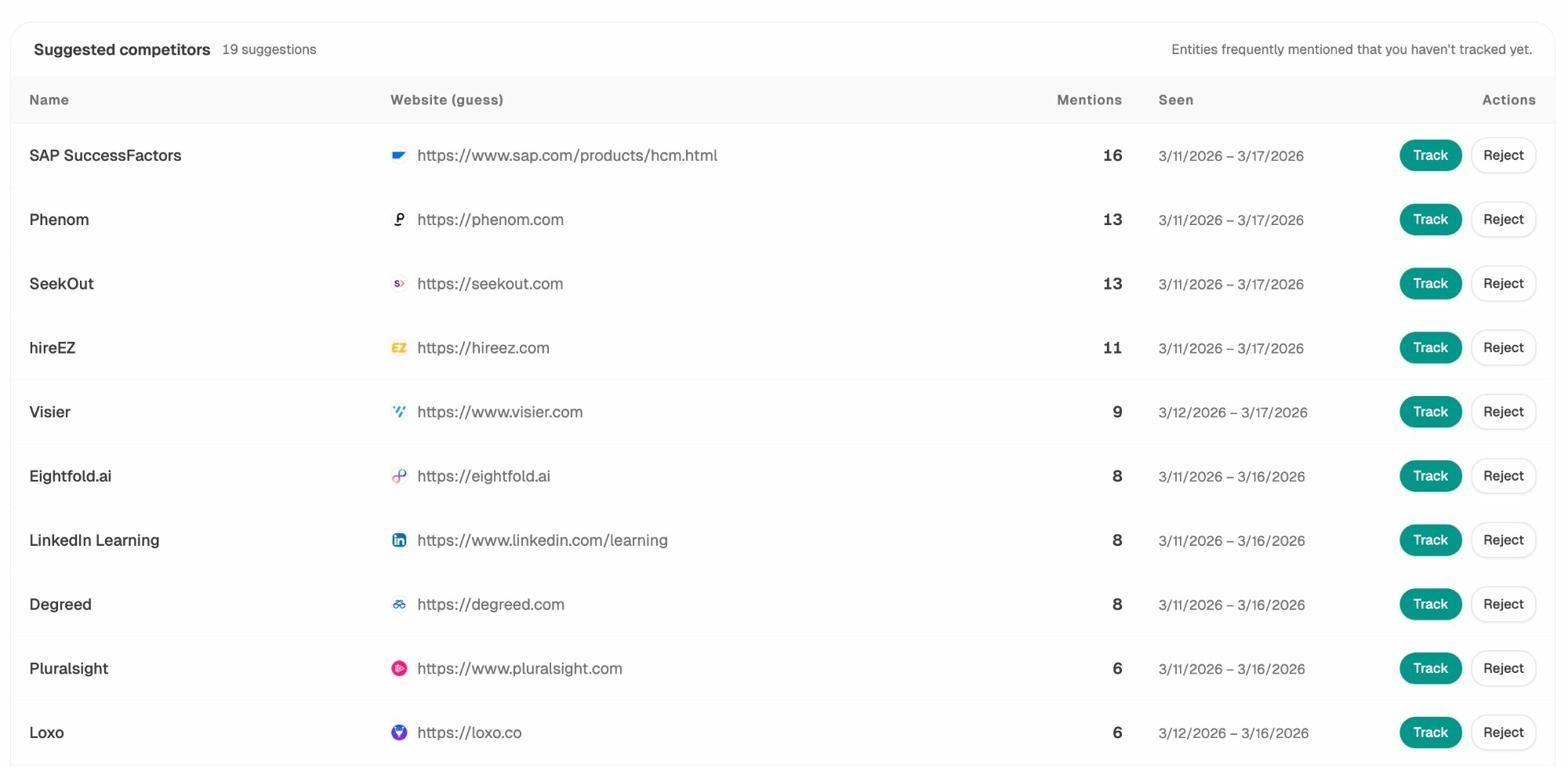

Identify competitive gaps. The Competitors view surfaces brands that appear in AI responses where you do not. This is the AI search equivalent of finding keyword gaps in traditional SEO — except here, the “keywords” are the prompts users type into ChatGPT, Perplexity, and other AI platforms.

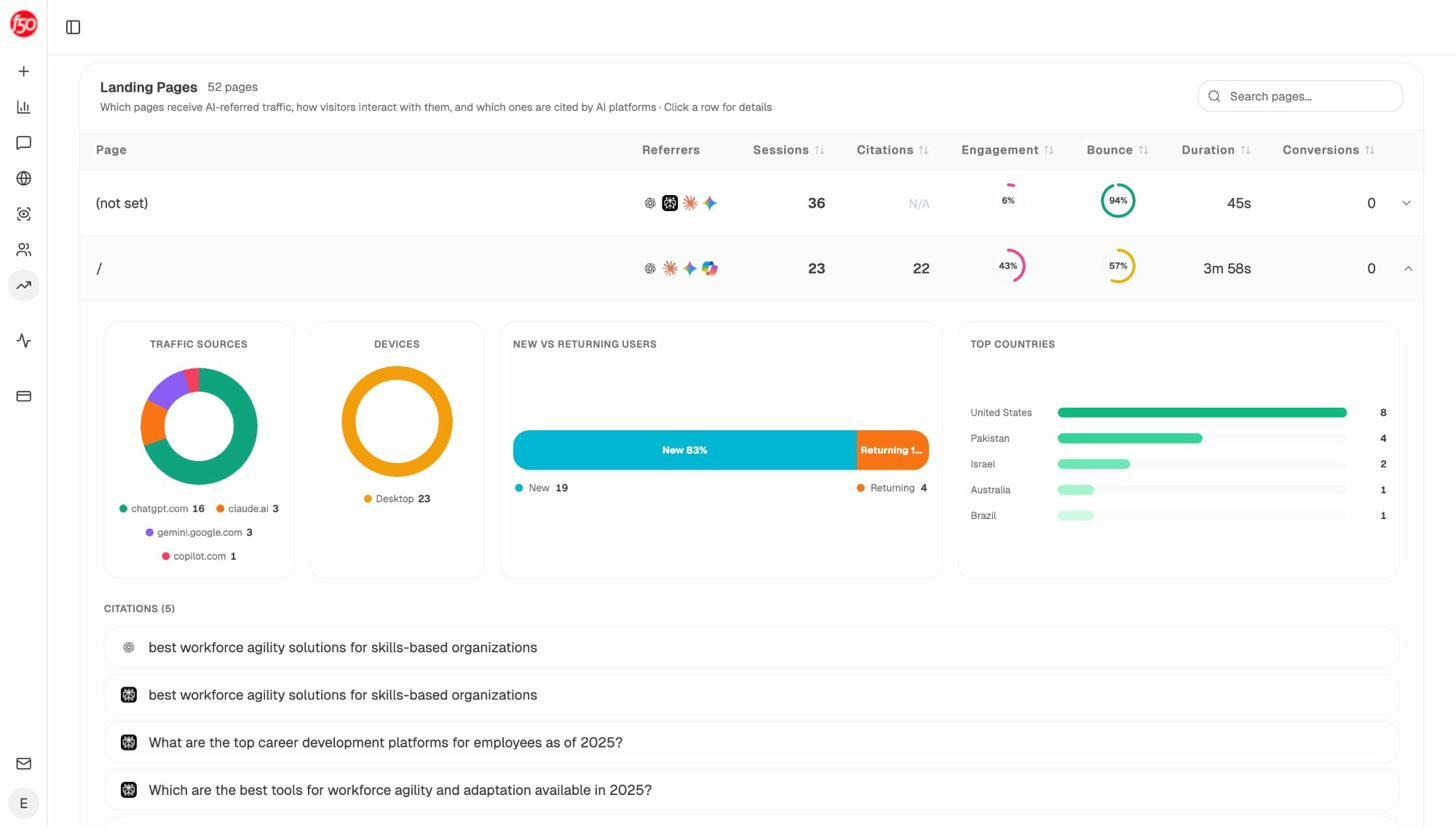

Measure actual AI traffic. Analyze AI connects to your GA4 data to show real AI referral traffic — how many sessions come from each AI engine, which landing pages receive that traffic, and how those visitors behave compared to your organic search visitors.

This last point matters most. You can track visibility in AI responses all day, but what ultimately drives business impact is whether that visibility converts into sessions, engagement, and revenue. The AI Traffic Analytics report in Analyze AI lets you drill down into landing pages that receive AI traffic and see exactly which pages are performing and which need improvement.

Understanding crawl access is step one. Monitoring visibility is step two. And tying visibility to traffic and conversions — that is where the strategy gets actionable.

Common Googlebot issues and how to fix them

Even well-built sites run into Googlebot problems. Here are the issues that come up most often, what causes them, and how to fix them.

Pages are crawled but not indexed

This is one of the most common frustrations in SEO. Google Search Console shows your page as “Crawled — currently not indexed.”

Possible causes:

- Thin content. The page does not offer enough unique value for Google to justify including it in the index. Add depth, original data, or a unique perspective.
- Duplicate content. Google found substantially similar content on another URL (on your site or elsewhere) and chose to index that version instead. Implement canonical tags to signal your preferred version.
- Quality issues. The page may be auto-generated, low-quality, or part of a pattern Google considers unhelpful. Review it against Google’s helpful content guidelines.

Googlebot cannot access resources

If your CSS, JavaScript, or image files are blocked by robots.txt, Googlebot cannot render your page correctly. The URL Inspection tool in Google Search Console shows you the rendered version of your page as Googlebot sees it — compare it to what a real user sees.

Fix: Make sure your robots.txt does not block any resources required for rendering. Check for rules like Disallow: /wp-content/ or Disallow: /assets/ that might unintentionally block critical files.

Slow server response times

If your server takes too long to respond, Googlebot may reduce its crawl rate or abandon requests entirely. Check the Crawl Stats report in Google Search Console for the average response time. Google recommends keeping it under 200ms.

Fix: Upgrade your hosting, enable caching, use a CDN, and optimize database queries. Use Analyze AI’s Website Traffic Checker to benchmark your site’s overall performance and visibility metrics.

Redirect chains and loops

If Googlebot follows a redirect that leads to another redirect that leads to another redirect, it may stop following the chain before reaching the final destination. Google generally follows up to 10 redirects but recommends keeping chains as short as possible.

Fix: Audit your redirects and point each one directly to the final destination URL. Use Analyze AI’s Broken Link Checker to find redirect chains and broken links across your site.
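As an illustration of what such an audit looks for, the sketch below follows a redirect map and reports the full chain, flagging loops and chains longer than a hop limit. The `redirects` dictionary and the function name `trace_redirects` are hypothetical, and the default of 10 hops simply mirrors the figure mentioned above.

```python
def trace_redirects(start, redirects, max_hops=10):
    """Follow `redirects` (source URL -> target URL) from `start`.

    Returns (chain, status) where status is "ok", "loop", or "too_long".
    """
    chain = [start]
    seen = {start}
    while chain[-1] in redirects:
        target = redirects[chain[-1]]
        if target in seen:
            return chain + [target], "loop"
        if len(chain) - 1 >= max_hops:  # hops taken so far
            return chain, "too_long"
        chain.append(target)
        seen.add(target)
    return chain, "ok"


# Hypothetical redirect map exported from a server config.
redirects = {"/a": "/b", "/b": "/c", "/c": "/final"}
print(trace_redirects("/a", redirects))  # (['/a', '/b', '/c', '/final'], 'ok')
```

The last element of an "ok" chain is the final destination, which is the URL every earlier hop should point to directly.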

Soft 404 errors

A soft 404 happens when your server returns a 200 OK status code for a page that should be a 404. This wastes crawl budget because Googlebot keeps trying to crawl and index pages that have no real content.

Fix: Configure your server to return actual 404 or 410 status codes for pages that no longer exist. Check the Page indexing report in Google Search Console (formerly the Coverage report) for soft 404 flags.

How AI crawlers are shaping the future of organic visibility

Googlebot has been the backbone of organic discovery for over two decades. That is not changing. Google still processes billions of queries per day, and ranking in Google Search remains one of the highest-leverage marketing channels available.

But the landscape is expanding. AI search engines like ChatGPT, Perplexity, Claude, and Gemini are adding a new layer of organic discovery where your content can surface without the user ever visiting a traditional search results page. When someone asks ChatGPT a question about your industry and your brand appears in the response — with a citation link back to your site — that is a new kind of organic traffic that did not exist three years ago.

The brands that will benefit most from this shift are the ones treating AI search as a complement to SEO, not a replacement. The same qualities that make content rank well in Google — depth, originality, structure, authority, and usefulness — are also the qualities that make AI models more likely to cite your content in their responses. Generative engine optimization is not a separate discipline. It is SEO applied to a new set of surfaces.

Here is what this means for how you think about crawlers:

- Make sure both Googlebot and AI crawlers can access your important content.
- Use robots.txt strategically to control which bots access which parts of your site.
- Monitor your visibility in both Google Search and AI search results.
- Measure AI referral traffic alongside your organic search traffic.
- Double down on content that performs well in both channels.

Tools like Analyze AI’s Overview dashboard give you a single view of your brand’s visibility across all major AI platforms, including sentiment, competitive positioning, and citation trends — alongside the AI traffic data you need to tie visibility to real business outcomes.

The fundamentals have not changed. What has changed is that the number of discovery surfaces has grown — and with that growth comes a new set of crawlers, a new set of rules, and a new opportunity for brands that move early.

Final thoughts

Googlebot is the foundation of organic search visibility. Understanding how it discovers, crawls, renders, and indexes your pages is not optional — it is a prerequisite for ranking in Google Search.

But Googlebot is now one crawler among many. GPTBot, ClaudeBot, PerplexityBot, and their variants are visiting your site right now, and what they find (or cannot find) directly shapes whether your brand appears in the fastest-growing discovery channel on the web.

Here is what to do next:

- Audit your robots.txt. Check whether you are accidentally blocking AI crawlers. Use the reference tables in this article to verify your configuration.
- Check your server logs. Look for GPTBot, ClaudeBot, and PerplexityBot requests to see which AI crawlers are already visiting your site.
- Set up Google Search Console if you have not already. Use the Crawl Stats report to monitor Googlebot activity and diagnose issues.
- Start tracking your AI visibility. Use Analyze AI to see where your brand appears (and does not appear) in AI-generated answers, who your competitors are in that space, and how much traffic AI search is actually sending to your site.

Crawl access is the prerequisite. Visibility tracking is the strategy. And tying both to traffic and conversions is how you turn this knowledge into growth.

Ernest

Ibrahim