
In this article, you’ll see exactly what the Semrush AI Toolkit gives you, where the data starts to crack under real use, what $99 a month actually covers, and the gap that opens up the moment you need more than a visibility score. By the end you’ll know whether the toolkit fits your team, and what an agentic SEO and content platform looks like when AI search is one job in a much bigger workflow.


What the Semrush AI Toolkit actually is

The Semrush AI Toolkit (officially the AI Visibility Toolkit) tracks how your brand shows up inside answers from ChatGPT, Perplexity, Gemini, Google AI Mode, and Copilot. It runs a library of prompts on a fixed cadence, records when your brand appears, scores sentiment, and compares your share of voice against competitors. You can read the full Semrush review for context on the broader platform.

It sells for $99 per month per domain standalone, or comes bundled inside Semrush One starting at $199 per month. Inside the dashboard you get four main reports: Visibility Overview, Brand Performance, Prompt Tracking, and an AI-readiness Site Audit.

We ran it across multiple domains over several months. Below are the three features users genuinely like, the three places it falls apart, and what happens once you need to go beyond it.

Three things the Semrush AI Toolkit gets right

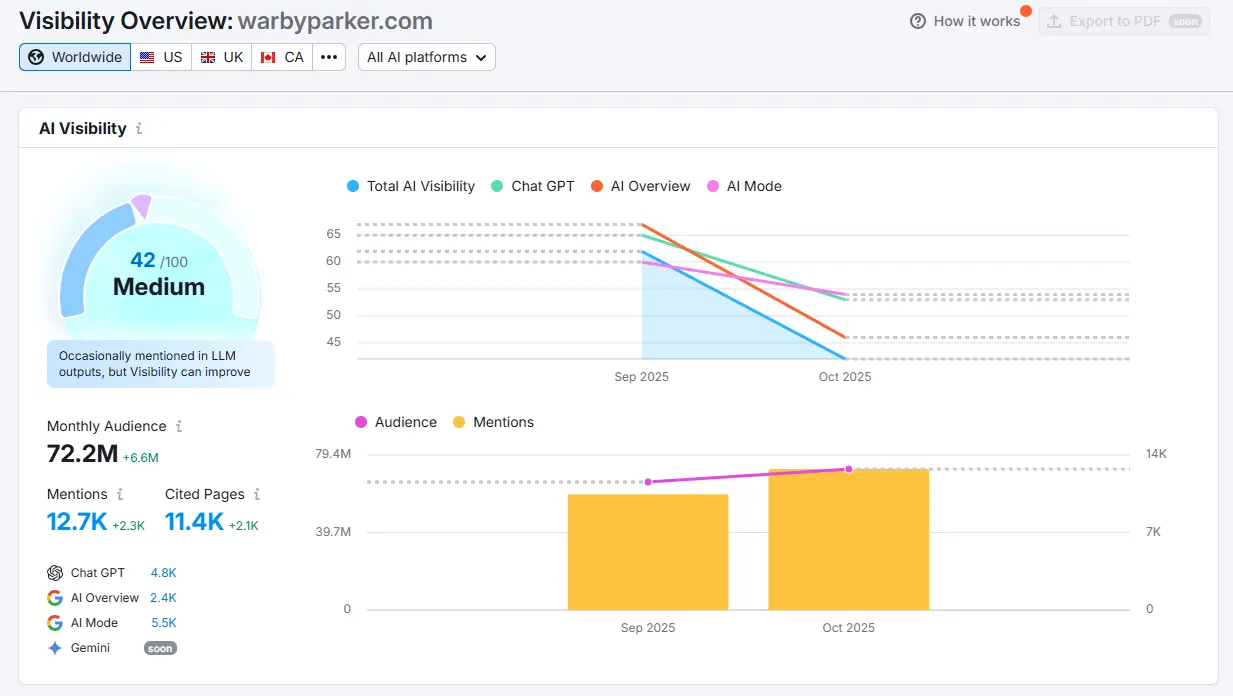

1. Visibility Overview gives you a clear baseline

The Visibility Overview is the strongest feature in the kit. It compiles thousands of AI-generated responses, calculates how often your brand surfaces, and produces a daily AI Visibility Score you can chart over time.

You can filter by branded versus generic prompts, by AI engine, or by geography. Each row drills into the exact phrasing that triggered the mention and the sources the model cited. That prompt-level resolution is what turns “we appear sometimes” into “we appear in 41% of pricing-related questions across ChatGPT but 8% across Gemini.” It replaces the noise of manual ChatGPT spot-checks with a daily number you can share, and the Visibility Overview is the cleanest “see them” view Semrush has shipped if you want to outrank competitors in AI search.
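To make the per-engine percentages concrete, here is a minimal sketch of how a mention rate like "41% on ChatGPT, 8% on Gemini" could be computed from prompt-level results. The record layout, the sample data, and the `mention_rate` helper are all hypothetical and only illustrate the arithmetic; this is not Semrush's export format or scoring method.

```python
from collections import defaultdict

# Hypothetical prompt-run records: (engine, prompt_category, brand_mentioned).
# The layout and values are illustrative assumptions, not real toolkit output.
runs = [
    ("ChatGPT", "pricing", True),
    ("ChatGPT", "pricing", False),
    ("ChatGPT", "pricing", True),
    ("ChatGPT", "onboarding", False),
    ("Gemini", "pricing", False),
    ("Gemini", "pricing", False),
    ("Gemini", "pricing", True),
    ("Gemini", "pricing", False),
]


def mention_rate(records, category):
    """Return {engine: share of runs in `category` that mention the brand}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for engine, cat, mentioned in records:
        if cat != category:
            continue  # only count prompts in the requested category
        totals[engine] += 1
        if mentioned:
            hits[engine] += 1
    return {engine: hits[engine] / totals[engine] for engine in totals}


rates = mention_rate(runs, "pricing")
for engine, rate in sorted(rates.items()):
    print(f"{engine}: {rate:.0%} of pricing prompts mention the brand")
```

Splitting the rate by engine and prompt category is what makes a gap actionable: a single blended score would hide the difference between the two engines.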

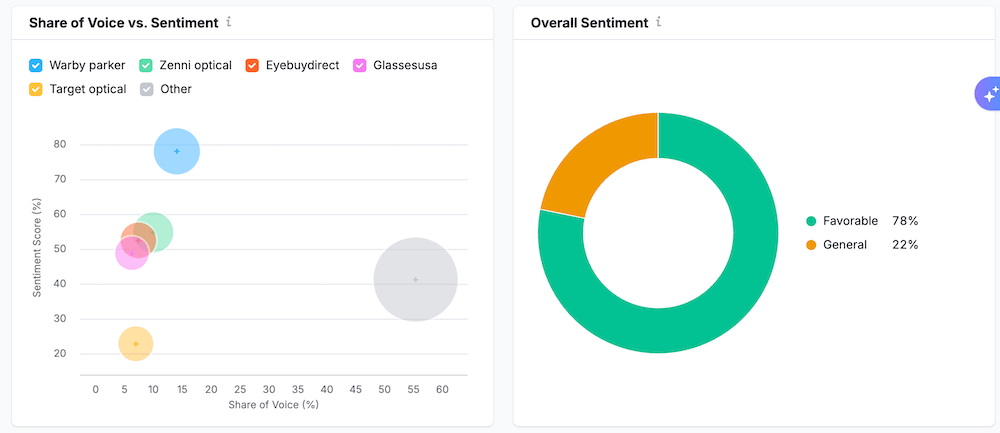

2. Brand Performance reports turn AI mentions into messaging tasks

Once you know where you appear, Brand Performance tells you how AI systems describe you. It buckets every detected mention by sentiment, ties recurring topics back to your brand, and surfaces the language models use most often when explaining who you are.

The view that makes this useful is Narrative Drivers. It exposes the attributes most often anchored to your brand inside AI answers (“simple onboarding,” “expensive enterprise tier,” “weak mobile app”). Layered against share of voice, this gives marketing leadership real input for messaging tests, comparison page rewrites, and PR work. The report doesn’t tell you what to write. It tells you what’s already being said about you.

3. Prompt Tracking and the AI-readiness audit close the loop

Once priority prompts are identified, the Prompt Tracker locks them in for daily monitoring. The AI-readiness Site Audit then crawls the same pages AI systems should be citing and flags structural issues blocking inclusion: missing schema, thin content, broken internal links, and restricted crawl paths.

Each audit finding is mapped back to the prompts it likely affects, which gives you a rough sense of which fixes deserve priority. Re-running tracked prompts after changes is how you confirm whether the fix moved the needle. It works reasonably well for technical SEOs who already understand AI crawlability concepts. For teams new to the space, the findings can look much like the output of any standard SEO crawl.

Three places the Semrush AI Toolkit falls short

1. The methodology is a black box

The first frustration most users hit is not knowing how the numbers are produced. Visibility percentages, sentiment scores, and share-of-voice trends all look precise on screen, but the dashboard tells you almost nothing about which prompts ran, which models answered, or how mentions were weighted.

When a score moves five points, you can’t explain why. When a client asks “what changed this week,” you don’t have an answer. Several reviewers have noted that Semrush relies on simulated rather than real user queries, leading some users to describe the output as “probabilistic guesswork.” That doesn’t make the data wrong. It does make it harder to defend in a board meeting.

2. Metric volatility is hard to separate from real change

Even when you accept the black box, the numbers themselves swing in ways that are tough to act on. Visibility scores can move from dominant to invisible week-over-week without any change to your site.

Did your share of voice drop because of a Google AI Mode model refresh, a Perplexity index change, or because your content slipped? Without a visible change log or per-engine trend separation, it’s easy to misread noise as signal. For agencies, this is especially painful. Reporting day arrives, the chart shows a 40-point drop, and you spend an hour explaining a number you can’t fully verify yourself.

3. Recommendations stop at “do better”

The third gap is the recommendations layer. When the toolkit identifies a problem, the suggested next step is usually generic. “Improve onboarding.” “Increase brand awareness.” “Strengthen visibility on top queries.”

These read like reminders, not strategies. Because the platform doesn’t connect each recommendation back to the specific prompts, pages, and citation sources involved, even motivated teams know what’s wrong but not what to fix first. A negative trend on a high-value prompt should spell out whether the cause is technical, content, or authority. It doesn’t.

How Semrush AI Toolkit pricing actually works

Semrush positions the AI Toolkit as a premium add-on. The standalone price is $99 per month per domain. For multi-brand teams, the cost compounds quickly because each domain you want to monitor needs its own subscription.

| Plan | Cost (monthly) | Domains | Tracked prompts | Notable limits |
|---|---|---|---|---|
| AI Visibility Toolkit (standalone) | $99 | 1 | 50 daily checks | +50 prompts: $60. +Domain: $99. +User with toolkit access: $99. |
| Semrush One Starter | $199 | 5 | 50 daily checks | Bundles SEO Toolkit + AI Visibility |
| Semrush One Pro+ | $299 | 15 | 100 daily checks | Adds historical data, content optimization, multi-location tracking |
| Semrush One Advanced | $549 | 40 | 200 daily checks | API access, share of voice |

A few things stand out once you read the fine print. Adding a teammate to a Semrush One plan starts at $45 per user, and giving that teammate access to the AI Toolkit features costs an additional $99 per user. That detail rarely shows up on the public pricing page.

For agencies tracking five or six brands at $99 per domain, the bill lands between $495 and $594 a month before any prompt or seat upgrades. For solo SEOs, the standalone $99 is hard to justify when sparse data tends to make the toolkit produce thin reports for smaller domains. The Semrush AI Toolkit makes financial sense in one specific case: you already live in Semrush, you have one or two flagship domains, and you want a prompt-level visibility view next to your existing keyword data. Outside of that profile, the math gets harder. We’ve covered the best Semrush AI Toolkit alternatives for SMBs separately if pricing is the dealbreaker.
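The add-on prices in the table above can be turned into a quick estimate. The sketch below assumes the add-ons stack linearly on top of the $99 standalone plan; the function name and the stacking rule are assumptions for illustration, and the article does not say whether prompt packs apply per domain, so treat the result as a rough figure rather than a quote.

```python
# Standalone add-on prices as listed in the pricing table (USD per month).
BASE_PRICE = 99         # standalone toolkit: 1 domain, 50 tracked prompts
EXTRA_DOMAIN = 99       # each additional domain
EXTRA_PROMPT_PACK = 60  # each pack adds 50 prompts
EXTRA_USER = 99         # each additional user with toolkit access


def standalone_monthly_cost(domains=1, prompt_packs=0, extra_users=0):
    """Estimate the standalone monthly bill, assuming add-ons stack linearly."""
    if domains < 1:
        raise ValueError("at least one domain is required")
    return (
        BASE_PRICE
        + (domains - 1) * EXTRA_DOMAIN
        + prompt_packs * EXTRA_PROMPT_PACK
        + extra_users * EXTRA_USER
    )


for n in (1, 5, 6):
    print(f"{n} domain(s): ${standalone_monthly_cost(domains=n)}/month")

# Six domains plus one prompt pack and one extra toolkit user.
print(f"With upgrades: ${standalone_monthly_cost(6, prompt_packs=1, extra_users=1)}/month")
```

Under these assumptions, five domains come to $495 and six to $594, which is why per-domain pricing adds up quickly for agencies.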

The bigger gap: visibility is one job, your team has ten

The Semrush AI Toolkit answers one question well: does my brand appear in AI answers? That’s useful. But marketing teams in 2026 are doing more than tracking visibility. They’re researching prompts, writing content, optimizing pages for both Google and LLMs, alerting on competitor moves, and proving the whole stack drove revenue. Semrush ships a separate Content Toolkit for some of that and a separate AI PR Toolkit for some of the rest. By the time you’ve stitched together visibility, content optimization, traffic attribution, and automation, you’re paying for four products that don’t share a workflow.

The Analyze AI position is the opposite. SEO is not dead, AI search is an additional organic channel alongside Google, and the same platform should run both. You’ll find the full manifesto on the home page.

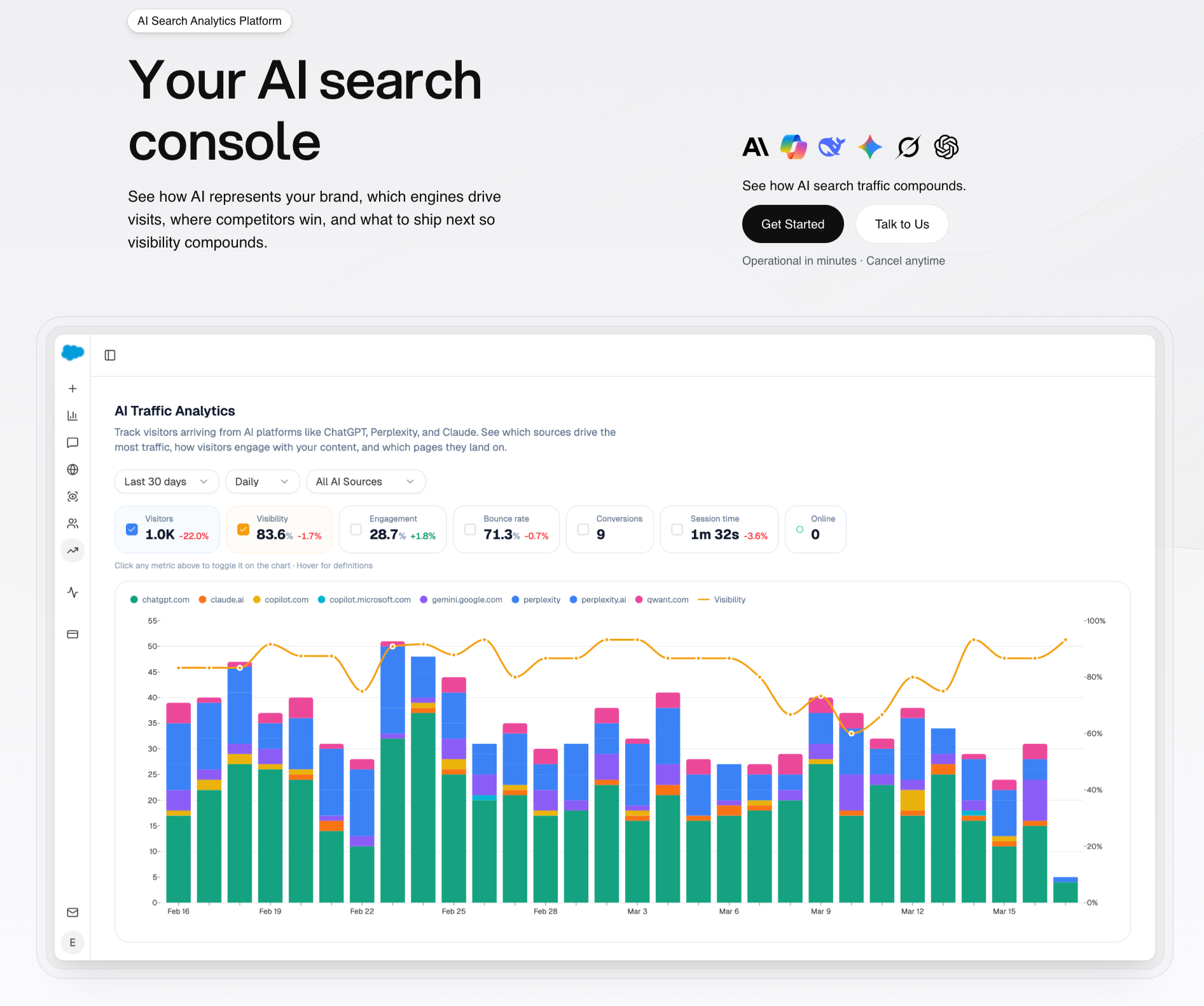

Analyze AI: the agentic SEO and content platform that runs the full loop

Analyze AI is built for the work that happens after you see your visibility number. It tracks AI visibility across every major engine, ties that visibility to actual sessions, conversions, and revenue, and ships a writer and optimizer for the content side. Underneath all of it sits an agent builder with 180+ nodes that pulls data from GA4, GSC, DataForSEO, Semrush, HubSpot, Notion, WordPress, and more. Most things you’d otherwise stitch together with Zapier, n8n, and Retool live inside the same surface as your visibility data.

That changes what the platform replaces. The Semrush AI Toolkit replaces a manual ChatGPT spot-check. Analyze AI replaces a chunk of your weekly operations.

1. See real AI traffic, not just brand mentions

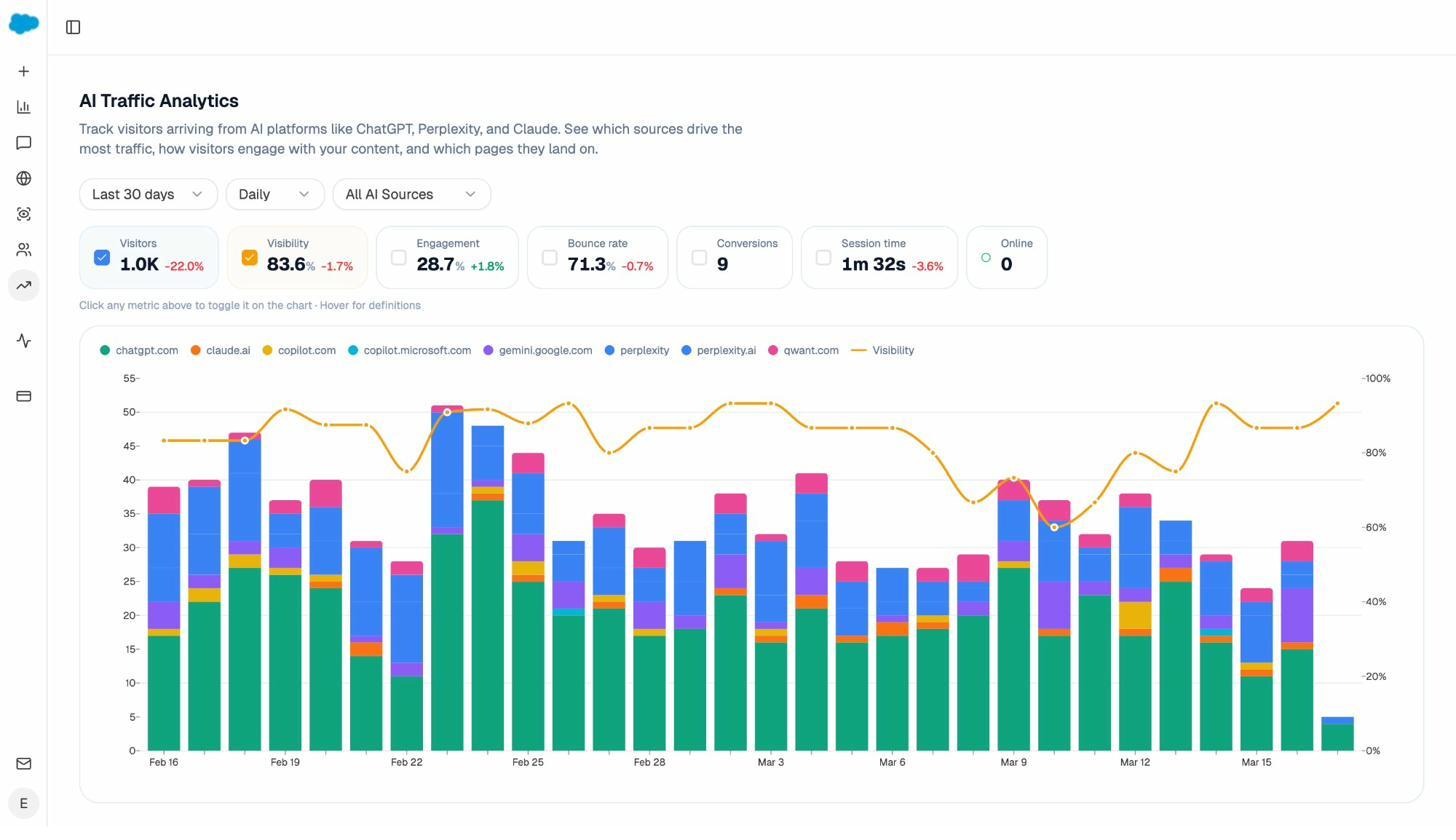

Most visibility tools tell you the percentage of prompts that mention your brand. They stop there. Analyze AI’s AI Traffic Analytics attributes every session from an answer engine to its specific source: ChatGPT, Perplexity, Claude, Gemini, Copilot, or Google AI Mode.

You see session volume by engine, six-month trends, and what percentage of total traffic comes from AI referrers. When ChatGPT sends 248 sessions in a week and Perplexity sends 42, you stop guessing where to focus. The Semrush AI Toolkit doesn’t have a comparable view because it lives outside your GA4 data.
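As a rough illustration of what engine-level attribution involves, the sketch below groups session referrers by answer engine and computes the AI share of total traffic. The hostname map, the sample referrers, and both helper functions are assumptions for illustration; this is not Analyze AI's implementation, and Google AI Mode is left out because no distinct referrer hostname is assumed for it here.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames per engine; verify against your own analytics data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def engine_for(referrer_url):
    """Map a referrer URL to an engine name, or None if it is not an AI referrer."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)


def ai_traffic_summary(referrers):
    """Return (sessions per engine, AI share of all sessions)."""
    counts = Counter()
    for url in referrers:
        engine = engine_for(url)
        if engine:
            counts[engine] += 1
    total = len(referrers)
    share = sum(counts.values()) / total if total else 0.0
    return counts, share


# Hypothetical session referrers, one entry per session ("" means direct traffic).
sample = [
    "https://chatgpt.com/",
    "https://chatgpt.com/c/abc",
    "https://www.perplexity.ai/search?q=x",
    "https://www.google.com/",
    "",
]
counts, share = ai_traffic_summary(sample)
print(dict(counts), f"AI share of sessions: {share:.0%}")
```

In practice the referrer data would come from an analytics export such as GA4 rather than a hard-coded list.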

2. See which pages convert AI traffic

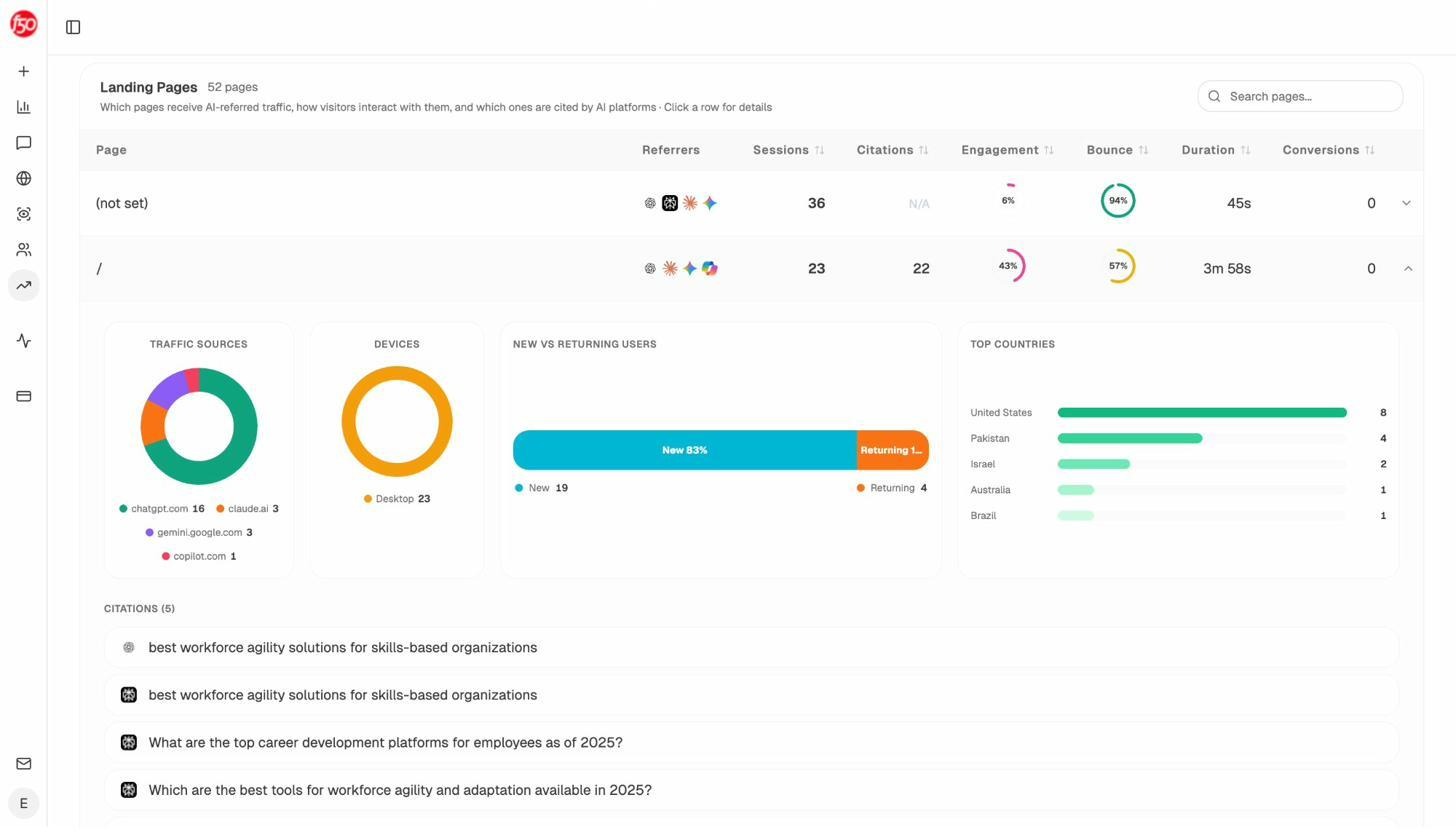

A brand mention is not a session. A session is not a conversion. Analyze AI shows the full path from AI answer to landing page to conversion event.

The Landing Pages report lists every URL that received AI referrals, the engines that sent each session, and the conversion events those visits triggered. When a product comparison page gets 50 sessions from Perplexity and converts 12% to trials, while an old blog post gets 40 sessions from ChatGPT with zero conversions, you know which page deserves the next investment.

This is the layer that turns AI visibility from a vanity metric into something a CFO can read.

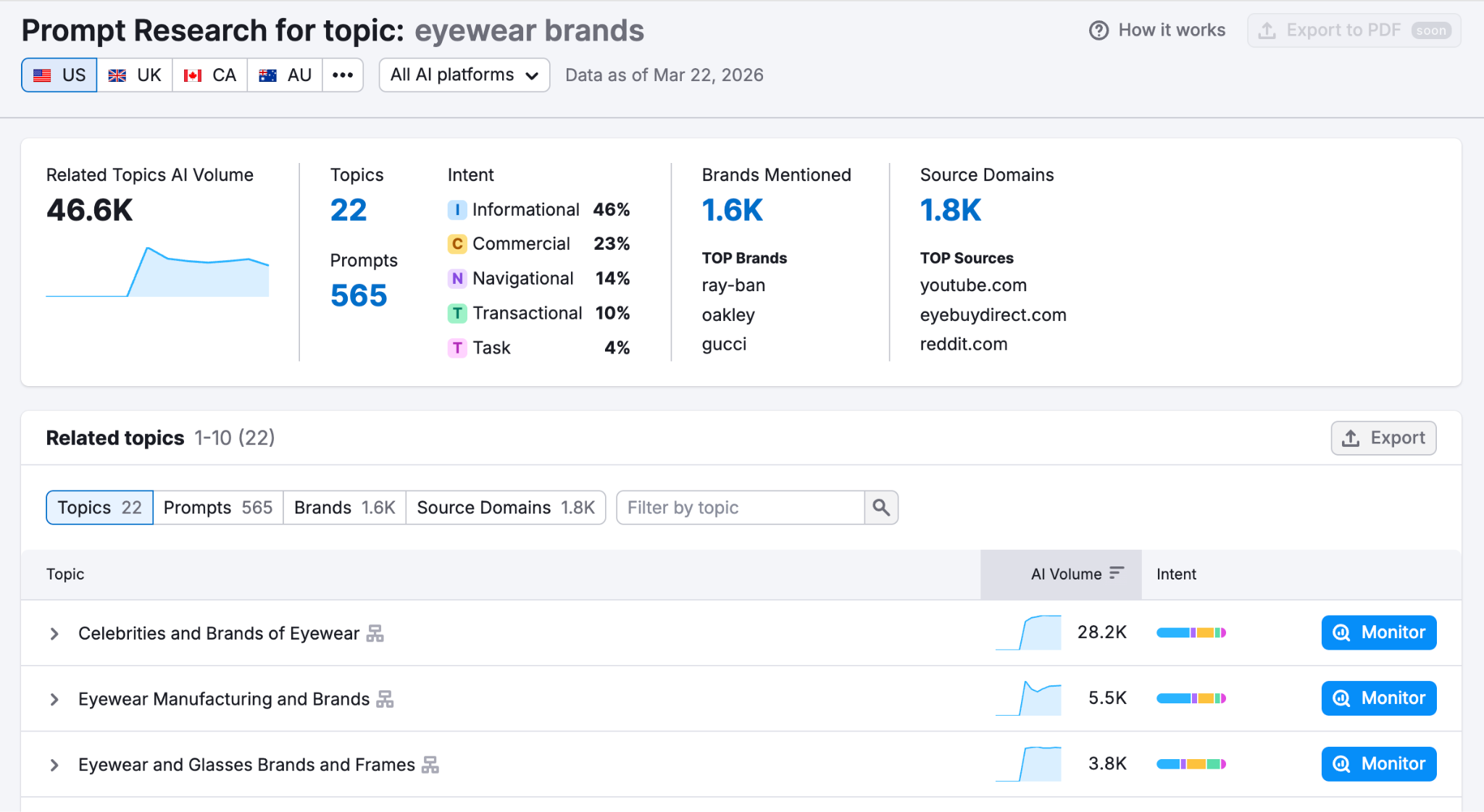

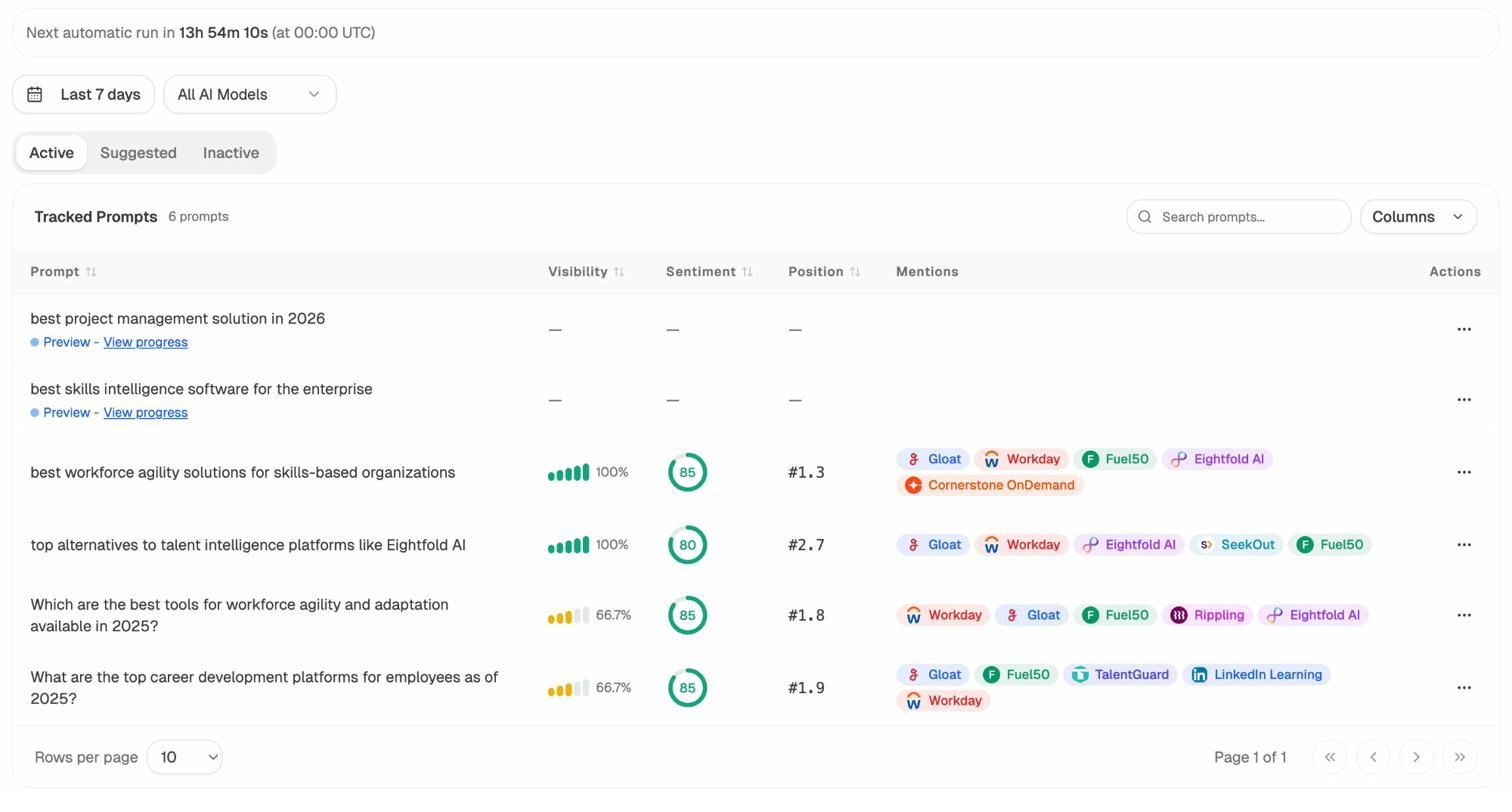

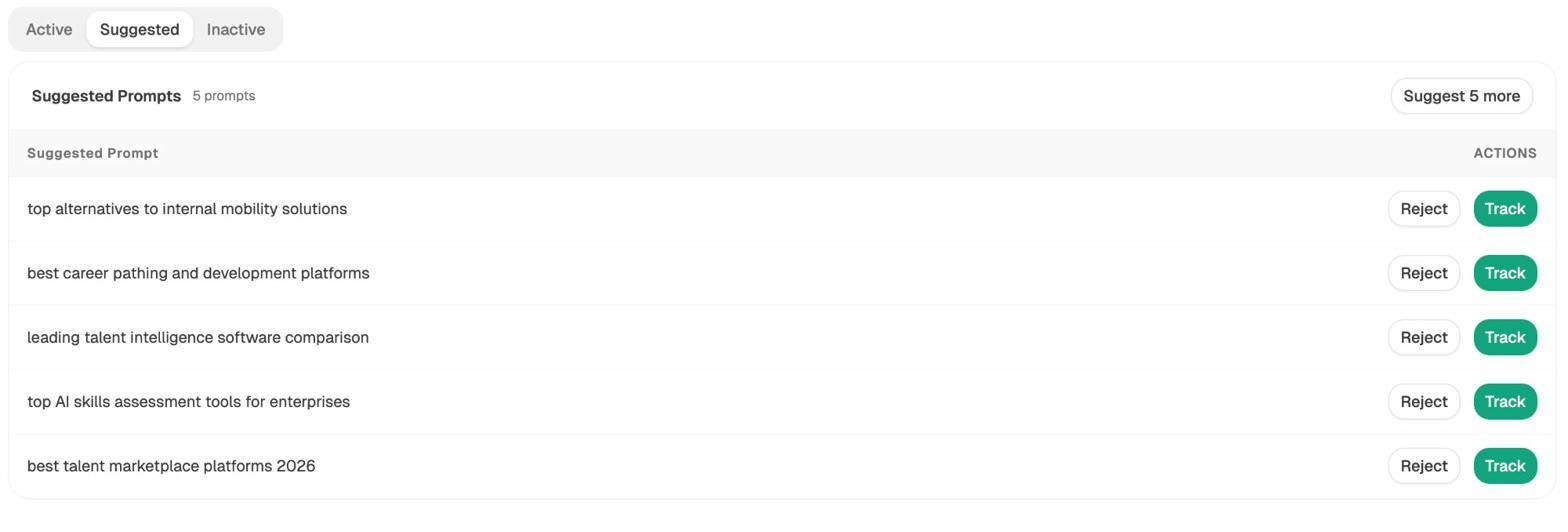

3. Track the prompts buyers actually use, and let the platform suggest them

Most teams struggle to pick which prompts to track in the first place. Analyze AI’s Prompt Tracking monitors the queries you care about across every major LLM, and the Suggested Prompts engine surfaces bottom-of-funnel queries you haven’t considered yet.

For each tracked prompt you see your visibility, your average position, your sentiment score, and which competitors appear alongside you. That competitor list per prompt is the input you need to write the comparison page that displaces them.

Analyze AI mines your category and proposes prompts already pulling AI answers, so you can track or reject each in one click. This is the input the Semrush “broad recommendation” gap leaves you scrambling for.

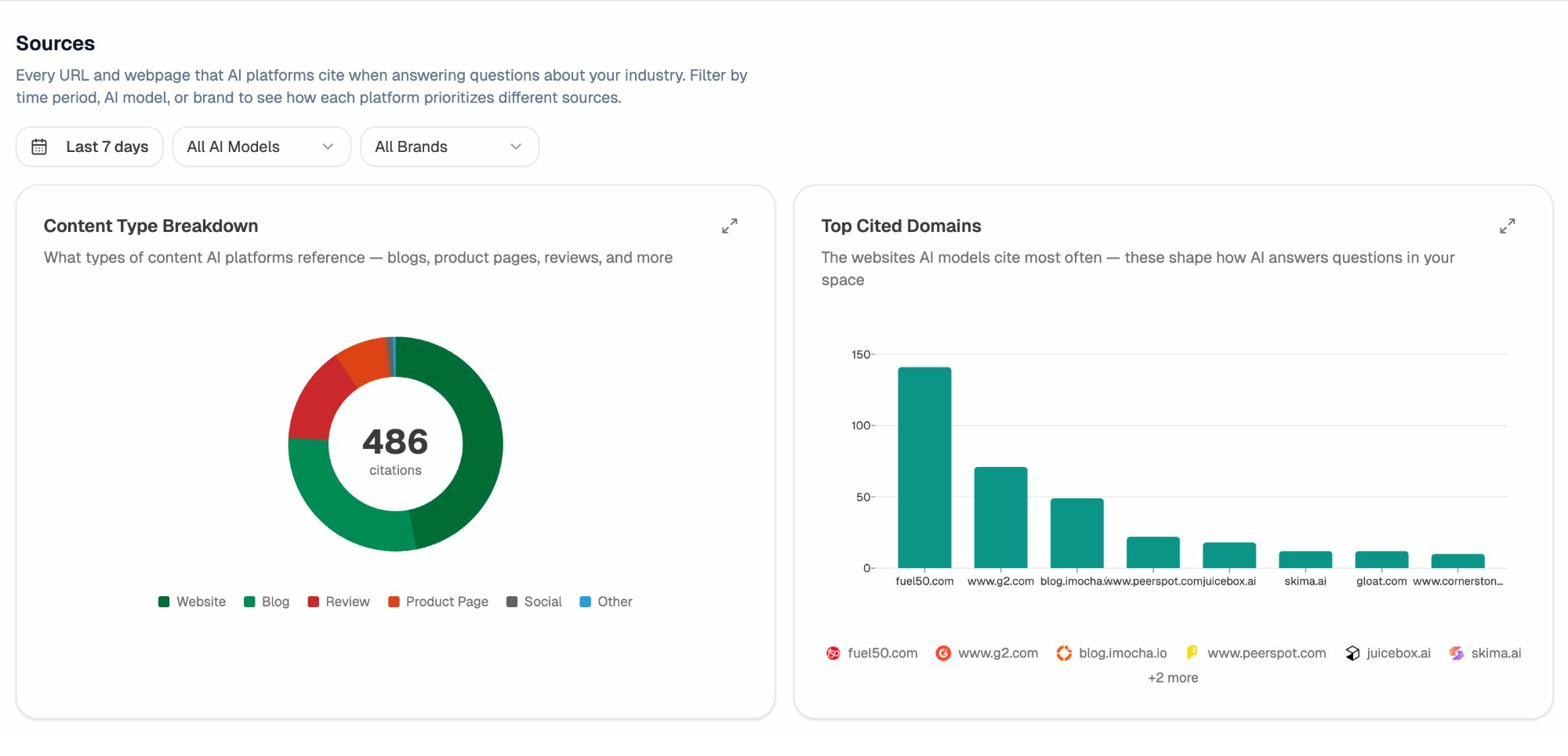

4. Audit which sources AI models cite

The other half of AI visibility is the citation graph. Which domains do models trust when they answer questions in your category? Where does your own coverage drop off?

Analyze AI’s Citation Analytics shows usage count per source, which models reference each domain, and when a citation first appeared. Instead of generic link building, you target the specific sources that shape AI answers in your space and track whether your citation frequency moves after each initiative.

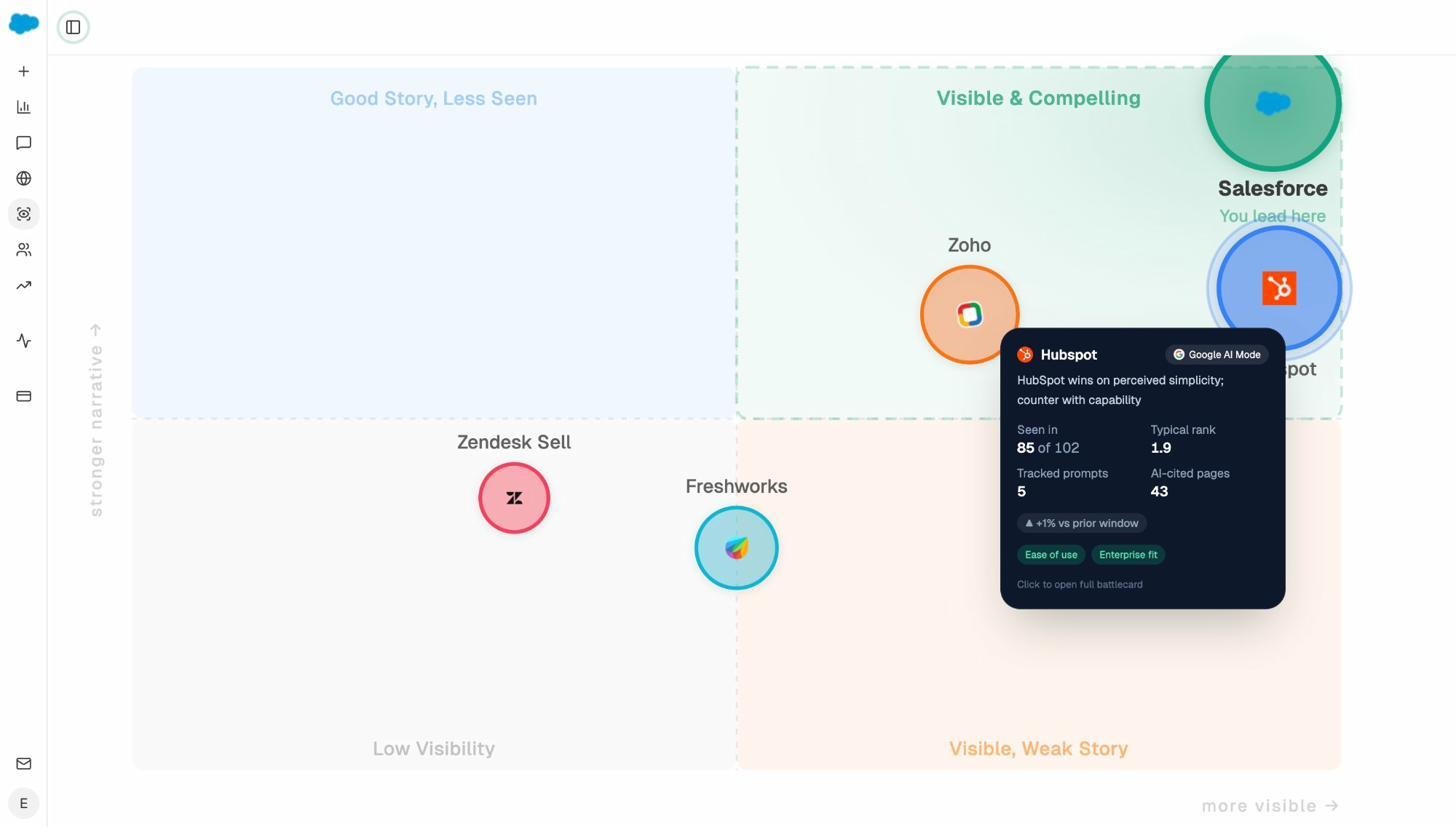

5. Get the perception map executives actually want

Visibility plus sentiment plus narrative strength is a 2D position, not a number. The Perception Map plots every tracked brand on a quadrant of presence and narrative quality.

You see who lives in “Visible & Compelling,” who’s stuck in “Visible, Weak Story,” who has a good story but no reach, and where you sit. Each brand cell opens an AI battlecard with their typical rank, citation count, and the messaging themes pulling them up or down. The Semrush AI Toolkit doesn’t ship anything like it.

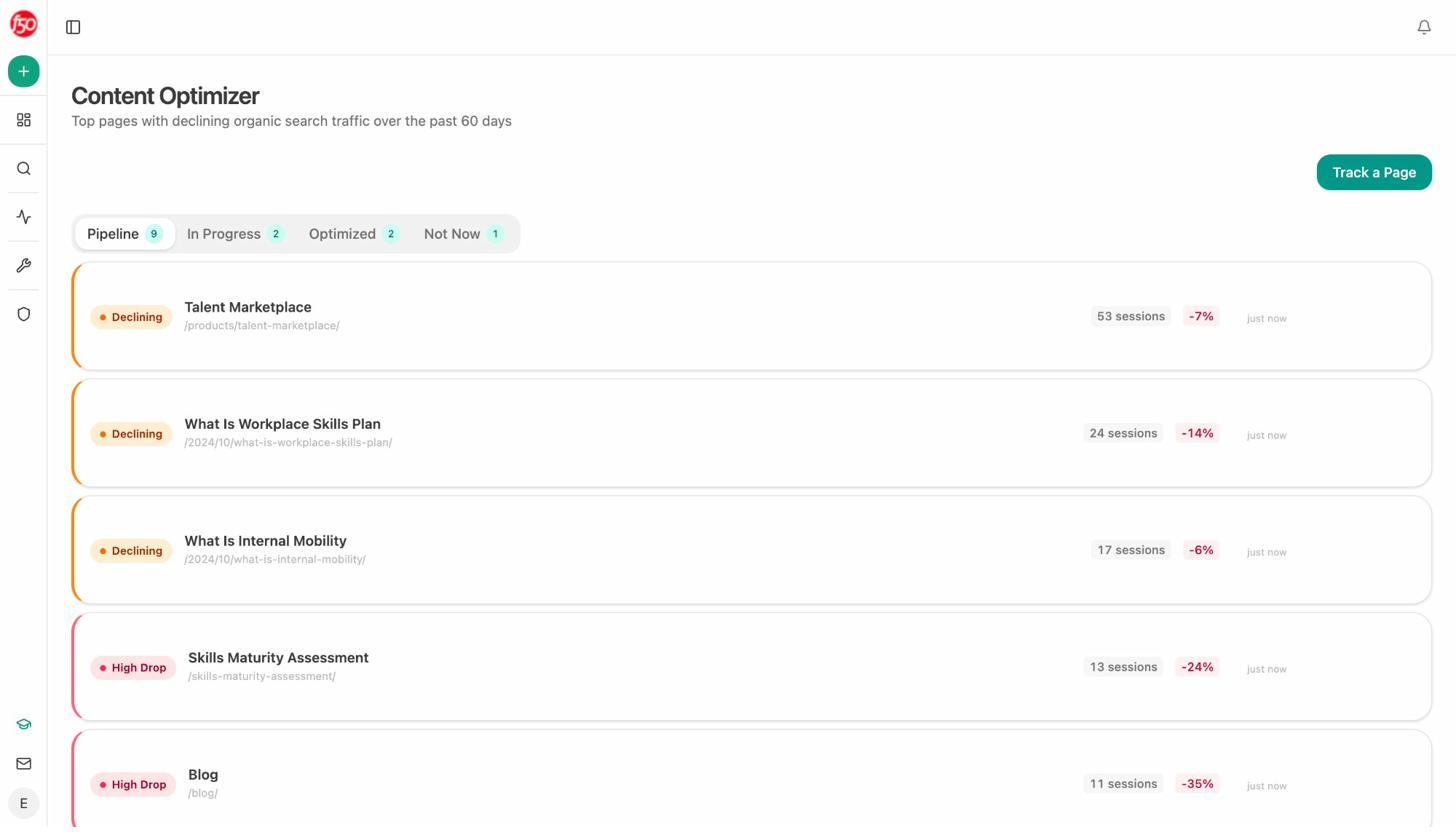

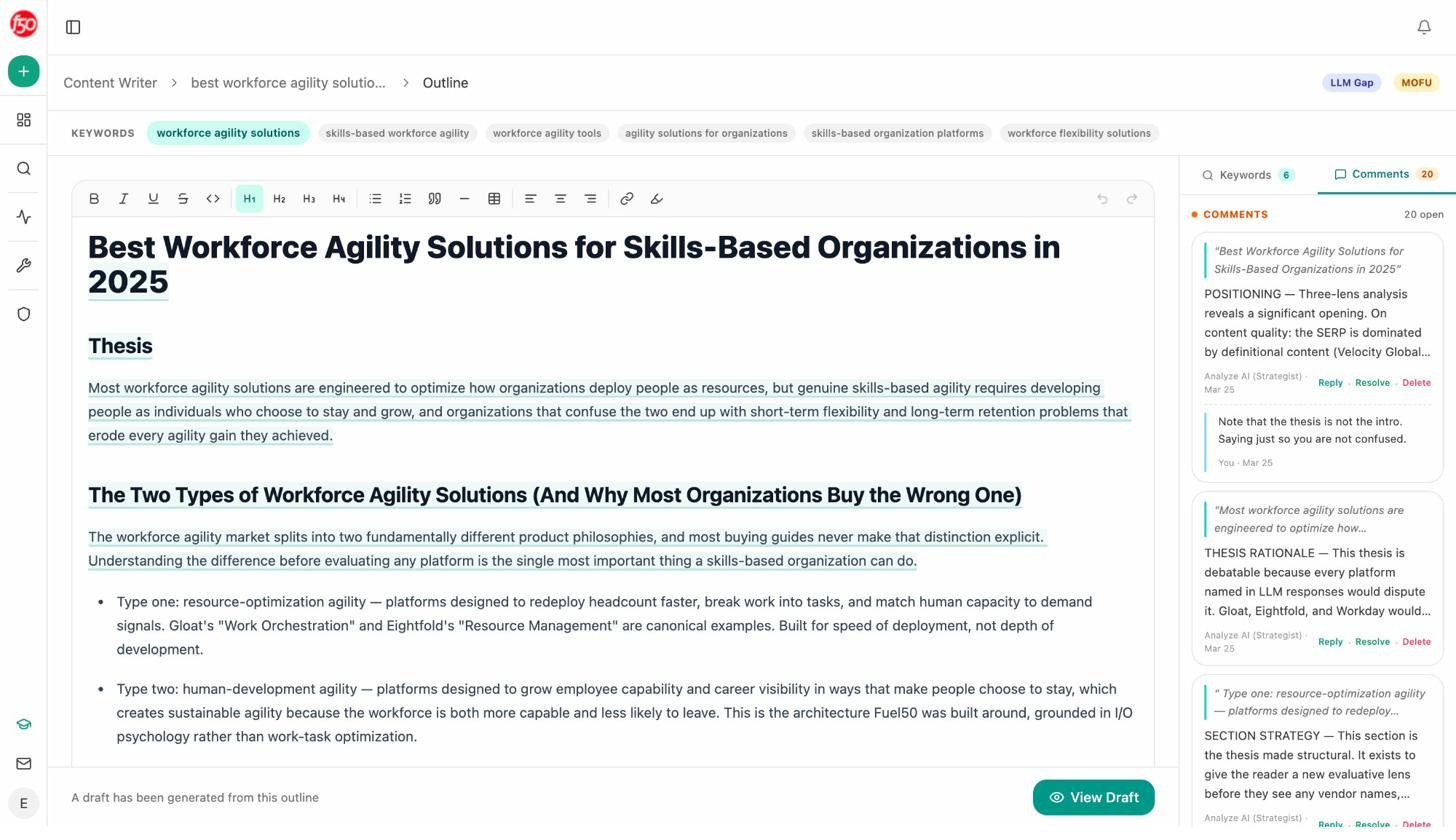

6. Optimize content for SEO and AI search at the same time

Analyze AI’s Content Optimizer audits existing pages for both classical on-page SEO and AI search readiness. It surfaces declining pages, scores each one, and queues up specific edits.

The Content Writer does the other side. It takes a topic, runs research, builds an outline, and produces a full draft that respects your brand voice through the Knowledge Base.

Our free tools cover the classical SEO basics if you want to layer them in. The keyword difficulty checker, keyword rank checker, SERP checker, and website authority checker all live at tryanalyze.ai/free-tools. You don’t bolt on a separate Content Toolkit subscription to write the page that closes a visibility gap. Discover the gap, write the page, optimize the page. Same platform.

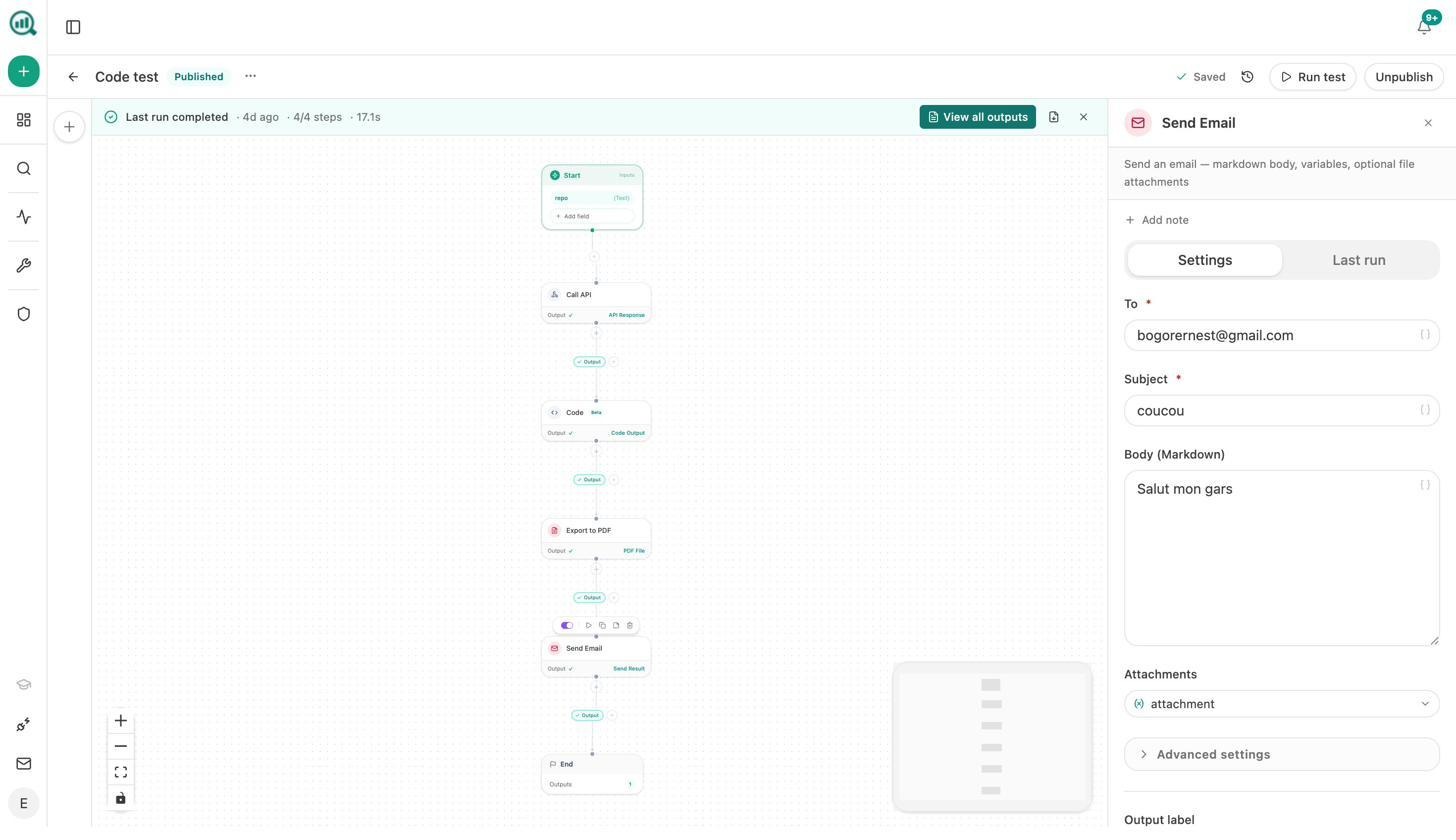

7. Build agents that run the operation while you sleep

This is where Analyze AI stops looking like another visibility tracker and starts looking like a different category. The agent builder ships with 180+ nodes, 34 pre-built data recipes, 13 input primitives, and three trigger modes (manual, scheduled, webhook). Each node maps to a real data source: GA4, Google Search Console, DataForSEO, Semrush, HubSpot, Notion, WordPress, Sanity, Contentful, Mailchimp, Hunter, Tomba, Slack, Claude, GPT, Gemini, and Perplexity Sonar.

The agent builder isn’t an automation layer bolted onto the side of a visibility tool. It’s the substrate the rest of the platform sits on. AI search is one of the things you can do with it. A small slice of what teams actually build:

- A Monday board prep agent at 7am that pulls visibility, GA4, GSC, and HubSpot deals into a one-page exec brief and emails leadership.
- A competitor-message-shift webhook that fires when a rival publishes new content, scores the narrative threat, and drafts a counter-brief in Notion.
- A content refresh fleet that runs weekly, finds declining pages, rewrites them in your brand voice, scores them for AI engine optimization, and pushes the update to WordPress.
- A crisis-response webhook for PR teams that drafts three response options 60 seconds after a media mention crosses a sentiment threshold.
- An agency client briefing pack that loops over a client list every Monday and produces a per-client DOCX with the agency’s letterhead.

Stack the three triggers and you’ve got an operations layer that runs continuously while humans focus on judgment. The Semrush AI Toolkit gives you a dashboard. Analyze AI gives you the dashboard, the writer, the optimizer, and a programmable substrate that replaces the Zapier and Retool stitching most teams currently rely on. We’ve gone deeper on this in our pieces on content automation and SEO automation tools.

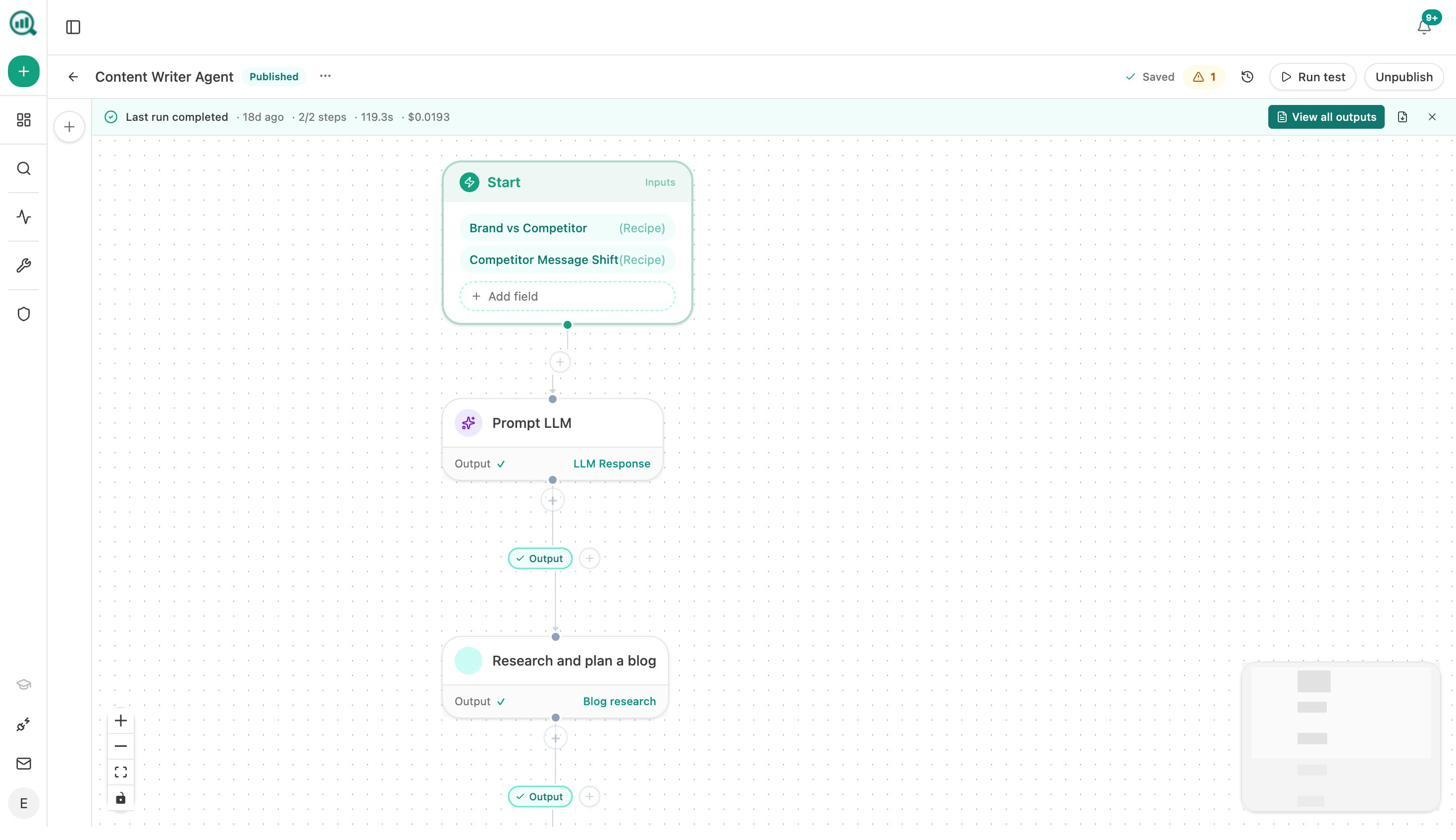

And the weekly digest tells you what to do

Every Monday, the Weekly Email Digest lands in your inbox with the visibility delta, citation momentum, pages improving, and the priority for the week.

Instead of telling you what happened, it tells you what to do this week to compound what’s working.

Side-by-side comparison

| Capability | Semrush AI Toolkit | Analyze AI |
|---|---|---|
| AI visibility tracking | Yes | Yes |
| Sentiment & share of voice | Yes | Yes |
| Prompt tracking with engine breakdown | Yes (50 to 200 daily) | Yes |
| Suggested prompts to track | Limited | Yes (auto-mined from your category) |
| AI-readiness site audit | Yes | Yes |
| Real AI referral traffic by engine | No | Yes |
| Landing page conversions from AI sessions | No | Yes |
| Citation graph by domain and model | Limited | Yes |
| Perception map and AI battlecards | No | Yes |
| AI content writer | Separate Content Toolkit | Included |
| AI content optimizer | Separate Content Toolkit | Included |
| Agent builder with 180+ nodes | No | Yes |
| GA4 / GSC / HubSpot / Notion / WordPress nodes | No | Yes |
| Per-domain pricing penalty | Yes ($99 per domain) | Simpler tiers |

Who should buy what

If you’re already deeply embedded in Semrush for keyword research and site audits, you have one or two flagship domains, and your only goal is to add a prompt-level visibility number to your existing dashboard, the Semrush AI Toolkit is a reasonable add-on at $99 per month.

If you’re an agency tracking five or more clients, a content team that needs to write and optimize the pages closing visibility gaps, or a CMO who wants visibility tied to revenue and a single platform that automates the operation around it, you’ll outgrow the Semrush AI Toolkit fast. That’s the case Analyze AI is built for.

You can browse other LLM monitoring tools and AI search monitoring tools we’ve reviewed if you want a wider view of the category, or read how to compare your AI visibility against your competitors once you’ve picked a tool.

Final word

The Semrush AI Toolkit is a respectable starter dashboard for AI search visibility. Three real strengths, three honest limitations, $99 per domain, and it stops where most marketing teams’ actual work begins.

Analyze AI starts where Semrush stops. The visibility tracking is a single layer. The writer, optimizer, perception map, citation analytics, traffic attribution, and 180+ node agent builder are the rest of the platform. All of it is built around the same belief the manifesto opens with: SEO is not dead, AI search is an additional organic channel, and quality content still wins both.

Ernest

Ibrahim
