
In this article, you’ll see what large-scale studies actually say about AI content and Google rankings, where the data converges, where it gets nuanced, what AI search engines like ChatGPT, Perplexity, and Google AI Overviews do with AI-written pages, and a step-by-step approach to using AI without losing visibility in either channel.


The short answer: Google does not penalize AI content for being AI

Google has been clear about this for a while. In a February 2023 post on Search Central, Google said directly that “appropriate use of AI or automation is not against our guidelines.” The position is simple. Google rewards helpful content, regardless of whether a person, a model, or a person assisted by a model wrote it.

![Screenshot of the Google Search Central blog post titled “Google Search’s guidance about AI-generated content” with the relevant guideline highlighted](https://www.datocms-assets.com/164164/1778509707-blobid1.png)

There is an even clearer signal at the top of every search result page. Google now rolls out AI Overviews and AI Mode, both of which are AI-generated answers shown above organic listings. If Google were trying to suppress AI content, the company would not be putting AI content first.

Yet a lot of marketers still believe Google is hunting AI pages and demoting them on sight.

The actual evidence tells a different story. Across studies that together cover well over a million pages, the finding is consistent. AI assistance does not hurt rankings on its own. Pure AI dumps do worse, and the reason has nothing to do with how the page was made.

Here’s what the data shows.

What 1.5M+ pages tell us about AI content and rankings

Three independent studies have examined this question with different methodologies and different AI detectors. They reach the same broad conclusion.

Ahrefs analyzed 600,000 pages and found a near-zero correlation

Ahrefs took 100,000 random keywords, pulled the top 20 ranking URLs for each, and ran 600,000 of the resulting pages through their AI content detector.

| AI content level | Share of top 20 ranking pages |
|---|---|
| Pure human | 13.5% |
| Pure AI | 4.6% |
| Mix of human and AI | 81.9% |

So 86.5% of top-ranking pages contain at least some AI-generated content. That alone tells you Google is not filtering AI content out of the top results.

When Ahrefs ran the correlation between AI content percentage and ranking position, the result was 0.011. That’s not a small effect. It’s effectively no effect. AI usage doesn’t predict where a page ranks. You can read the 600K-page analysis for the full methodology.
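The arithmetic behind both figures is quick to check. The sketch below recomputes the 86.5% share from the Ahrefs figures and, assuming the 0.011 result is read as a linear correlation coefficient (the summary here does not say which coefficient was used), shows how little of the variation in ranking it would account for.

```python
# Shares of top-20 ranking pages by AI content level (Ahrefs figures quoted above).
shares = {"pure_human": 13.5, "pure_ai": 4.6, "mixed": 81.9}

# Any page that is not purely human contains at least some AI content.
any_ai_share = round(shares["pure_ai"] + shares["mixed"], 1)

# Assumption: treat 0.011 as a linear correlation coefficient r.
# r squared is then the fraction of ranking variance AI usage would explain.
r = 0.011
variance_explained_pct = round(r ** 2 * 100, 4)

print(f"Pages with some AI content: {any_ai_share}%")
print(f"Ranking variance explained: {variance_explained_pct}%")
```

Under that assumption, AI usage would account for roughly a hundredth of a percent of the variation in position.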

Originality.ai analyzed Google AI Overviews and found AI sources are common

Originality.ai studied one million AI Overviews and looked at the top three citations from each, focusing on YMYL queries (health, finance, law, politics) where Google supposedly applies extra scrutiny. About 1 in 10 AI Overview citations was fully AI-generated, and most cited pages were a mix of human and AI work. If Google’s own AI summaries cite AI-assisted pages on the most sensitive queries, the rest of the SERP is not being penalized either.

Graphite analyzed 31,493 keywords and reached the same broad conclusion

Graphite took a stricter view. They classified a page as “AI-generated” only when more than half the text was flagged as machine-written. By that stricter definition, 86% of articles ranking on Google were human-written and 14% were AI-generated. Pure AI dominance is rare in the top results, but it isn’t absent.

The Graphite study introduces a useful nuance. When AI-dominant articles do show up, they tend to rank a little lower. Graphite’s data shows fewer of them in positions 1 to 3 and slightly more as you move down the SERP.

What looks like a contradiction is actually one consistent finding

If you read these studies side by side, they look like they disagree. Ahrefs says AI doesn’t matter. Graphite says AI ranks lower. Both are true, because they measure different things.

| Study | Definition of “AI content” | What they found |
|---|---|---|
| Ahrefs (600K pages) | Any AI assistance counted on a sliding scale | Correlation with ranking is 0.011, basically zero |
| Originality.ai (1M AI Overviews) | Page-level classification | ~10% of cited sources are pure AI |
| Graphite (31K keywords) | More than 50% of text flagged as AI | 14% of ranking pages are AI-dominant, slightly lower-ranked |

The honest read is this. AI assistance does nothing to your rankings. AI taking over the page makes a small dent at the top of the SERP. Google is not running an AI filter. It’s doing what it has always done, rewarding useful pages and demoting thin ones. AI-dominant pages fall into the second bucket more often because rushed prompting produces thin content.
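To see how one set of pages can yield two different headlines, here is a toy sketch. The detector scores are made up for illustration, and the two functions are simplified stand-ins for each study’s definition, not their actual methods.

```python
# Hypothetical detector output: fraction of each page's text flagged as AI.
scores = {"page_a": 0.0, "page_b": 0.2, "page_c": 0.45, "page_d": 0.6, "page_e": 1.0}


def has_any_ai(score: float) -> bool:
    """Sliding-scale view: any flagged text counts as AI involvement."""
    return score > 0.0


def is_ai_dominant(score: float, threshold: float = 0.5) -> bool:
    """Stricter view: a page is AI-generated only above the threshold."""
    return score > threshold


any_ai_share = sum(has_any_ai(s) for s in scores.values()) / len(scores)
ai_dominant_share = sum(is_ai_dominant(s) for s in scores.values()) / len(scores)

print(f"Pages with some AI: {any_ai_share:.0%}")
print(f"AI-dominant pages: {ai_dominant_share:.0%}")
```

The same five pages read as mostly AI under one definition and mostly human under the other, which is why the studies can look contradictory without actually disagreeing.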

So why are most marketers using AI anyway?

Because AI does much more than write whole articles. In Ahrefs’ State of AI in Content Marketing report, 87% of surveyed marketers use AI somewhere in their workflow. Most use it to brainstorm, outline, fix grammar, refine titles, or pressure-test arguments. Almost no one is hitting “generate” and pasting straight into a CMS.

That matches the data. The vast majority of top-ranking content is AI-assisted, not AI-generated end to end. The line between the two matters more than the label suggests.

What about AI search? Does AI content hurt visibility in ChatGPT, Perplexity, and AI Overviews?

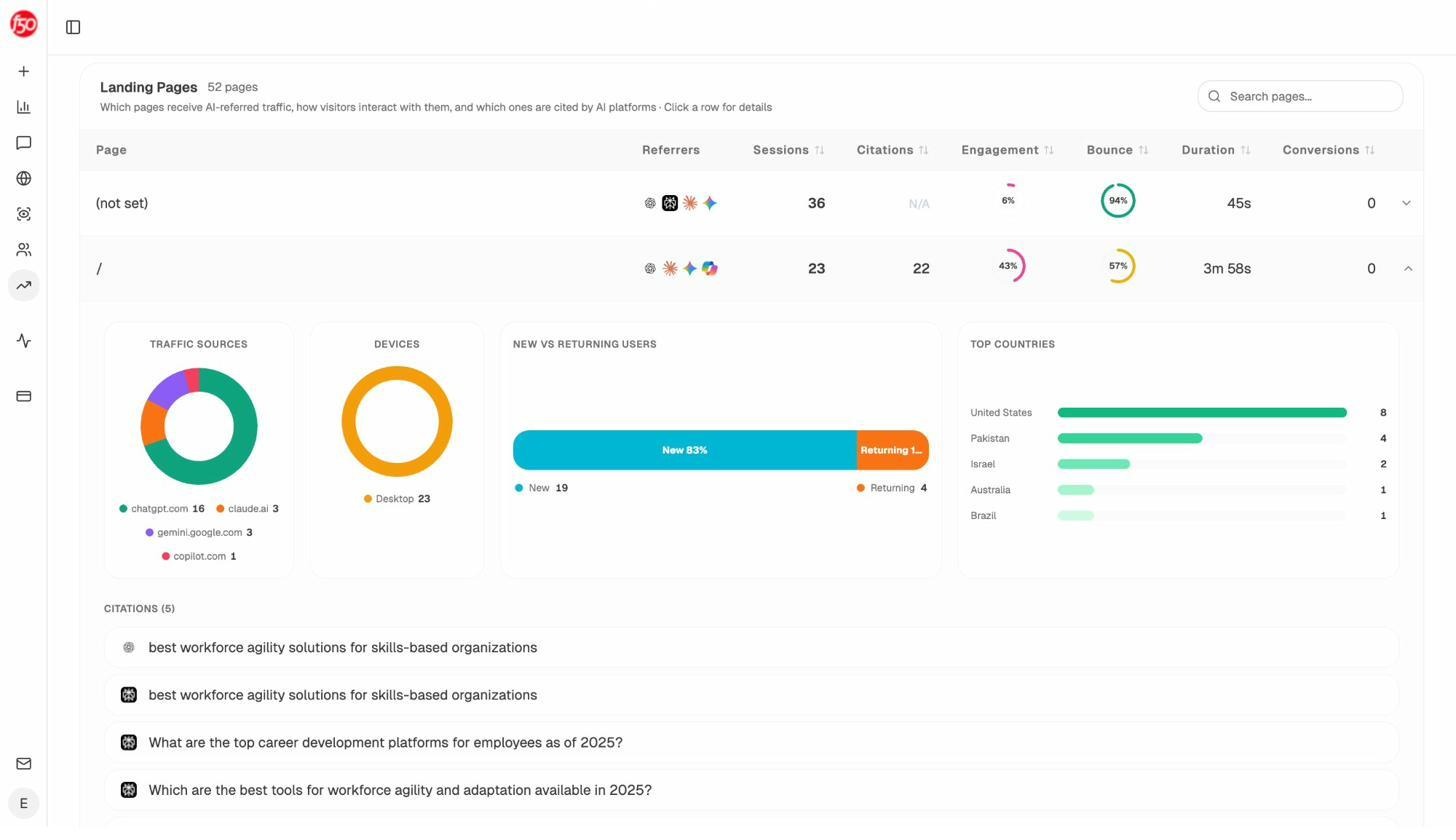

This is the question Ahrefs’ original article didn’t address, and it’s the one that matters in 2026. Most marketers now care about visibility in two channels at once, traditional search and AI search.

The good news is that AI search engines behave a lot like Google. They reward useful content and demote everything else. The bad news is that “useful” looks slightly different in AI search, and you need to track it on its own.

ChatGPT and Perplexity favor human-written sources

Graphite’s same-methodology check on AI search engines found that 82% of articles cited by ChatGPT and Perplexity were human-written, and only 18% were AI-generated under their stricter threshold. That’s broadly similar to Google’s 86% to 14% split. AI engines are not actively boosting AI content. They follow whatever already ranks well, and the web’s most useful pages are still mostly written by humans.

Google’s AI Overviews cite AI-assisted content at high rates

When Ahrefs studied AI Overview citations, they found that about 87.8% of cited pages had some level of AI assistance. That isn’t because Google’s AI prefers other AI. It’s because most modern web content is AI-assisted. AI Overviews pull from the same pool as the rest of Google search.

What AI engines really want is original data

Across Surfer’s analysis of LLM citations, 67% of ChatGPT’s top-cited pages came from original research, first-hand data, or academic sources. Other studies found that adding original survey data, proprietary benchmarks, or first-party analysis can push a page from the 6 to 15% citation range to the 38 to 65% range. The signal is consistent across ChatGPT, Perplexity, and AI Overviews.

The practical implication. AI search engines do not punish you for using AI. They reward originality. If your AI workflow produces pages that say what every other page already says, you’ll be invisible in AI search even if Google ranks you. If it lets you publish more original analysis faster, you’ll do well in both.

| AI engine | Behavior toward AI-generated content | What earns citations |
|---|---|---|
| Google AI Overviews | Cites AI-assisted pages at the same rate as the broader web | Match search intent, structured data, original facts |
| ChatGPT | 82% human-written sources cited under strict definition | Original research, named methodology, first-party data |
| Perplexity | Real-time web retrieval, cites ~22 sources per response | Specific stats, named experts, structured H2/H3 |
| Claude | Favors legacy journalism and explanatory content | In-depth context, fewer thin posts |

If you want a deeper dive into what each engine cites, our citation study breaks down 83,670 citations across these engines.

What actually causes content to fail (whether AI or human)

The failure modes haven’t changed. They look the same whether a person wrote the post or generated it with an LLM.

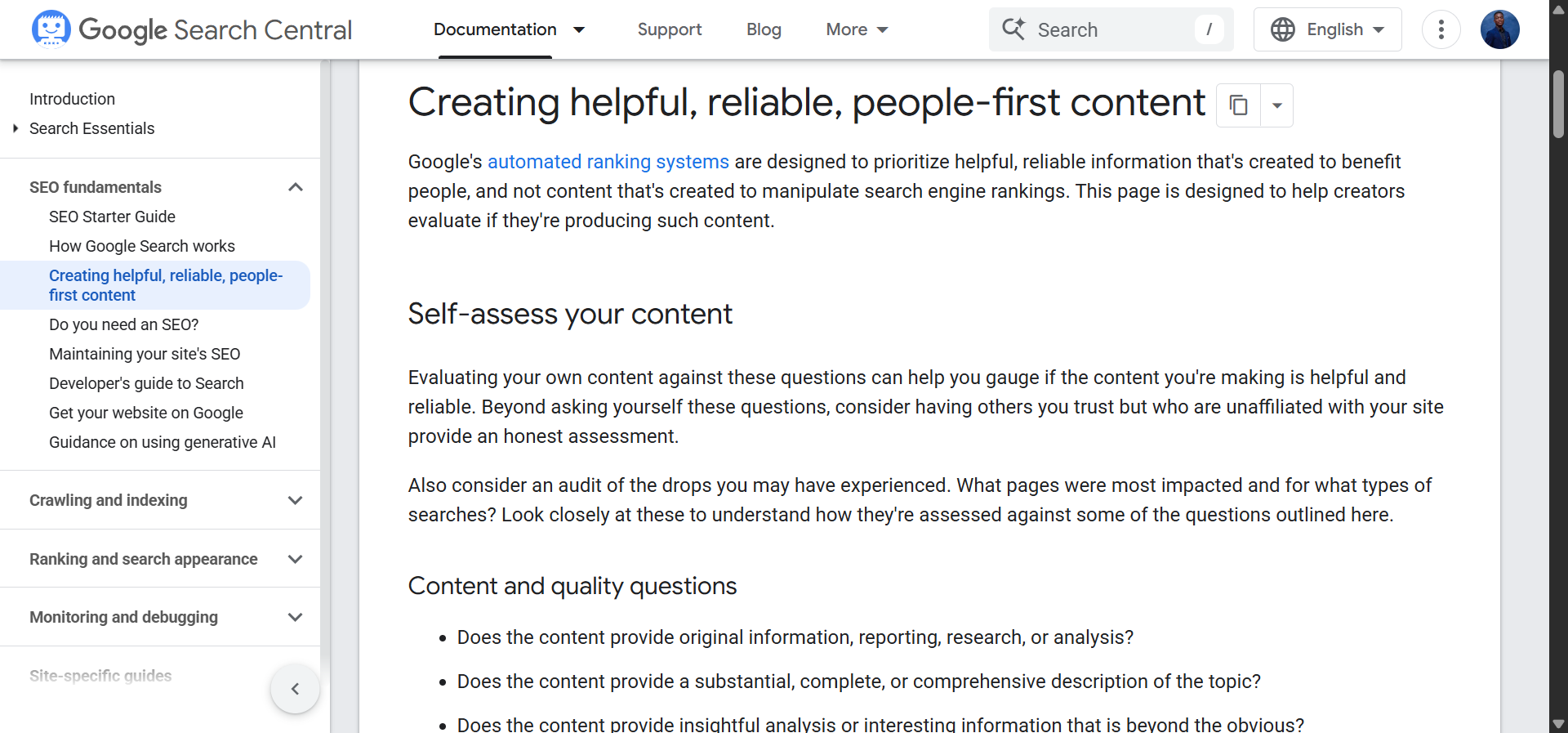

No information gain. If your page says the same thing as the 30 other pages on the SERP, it offers no reason to rank above them. Google’s helpful content guidelines explicitly call this out. AI is good at restating consensus and bad at generating new insight, which is why pure AI dumps tend to do worse.

Hallucinations and inaccuracies. Models still invent statistics, citations, and product details. A page that confidently states something untrue erodes trust the moment a reader checks. AI search engines, which surface citations next to claims, expose these errors directly.

No experience or expertise. Google’s E-E-A-T framework rewards content with first-hand experience and demonstrable expertise. AI alone has neither. A piece written by a model and never touched by anyone with real knowledge of the topic reads like every other piece written that way, and quality raters can tell.

The pattern is the same across all three. The problem isn’t that AI was used. The problem is that nothing was added.

How to use AI without hurting your Google rankings (a practical 5-step process)

This is the part most articles skip. Here’s the workflow that holds up across both Google and AI search.

Step 1: Pick topics where you have real knowledge

Ryan Law from Ahrefs makes this point in his AI content process. AI works well on simple informational topics where you already know enough to spot mistakes. It works badly on opinion pieces, original research, and rapidly changing news. If you can’t tell whether the model got it right, don’t use it.

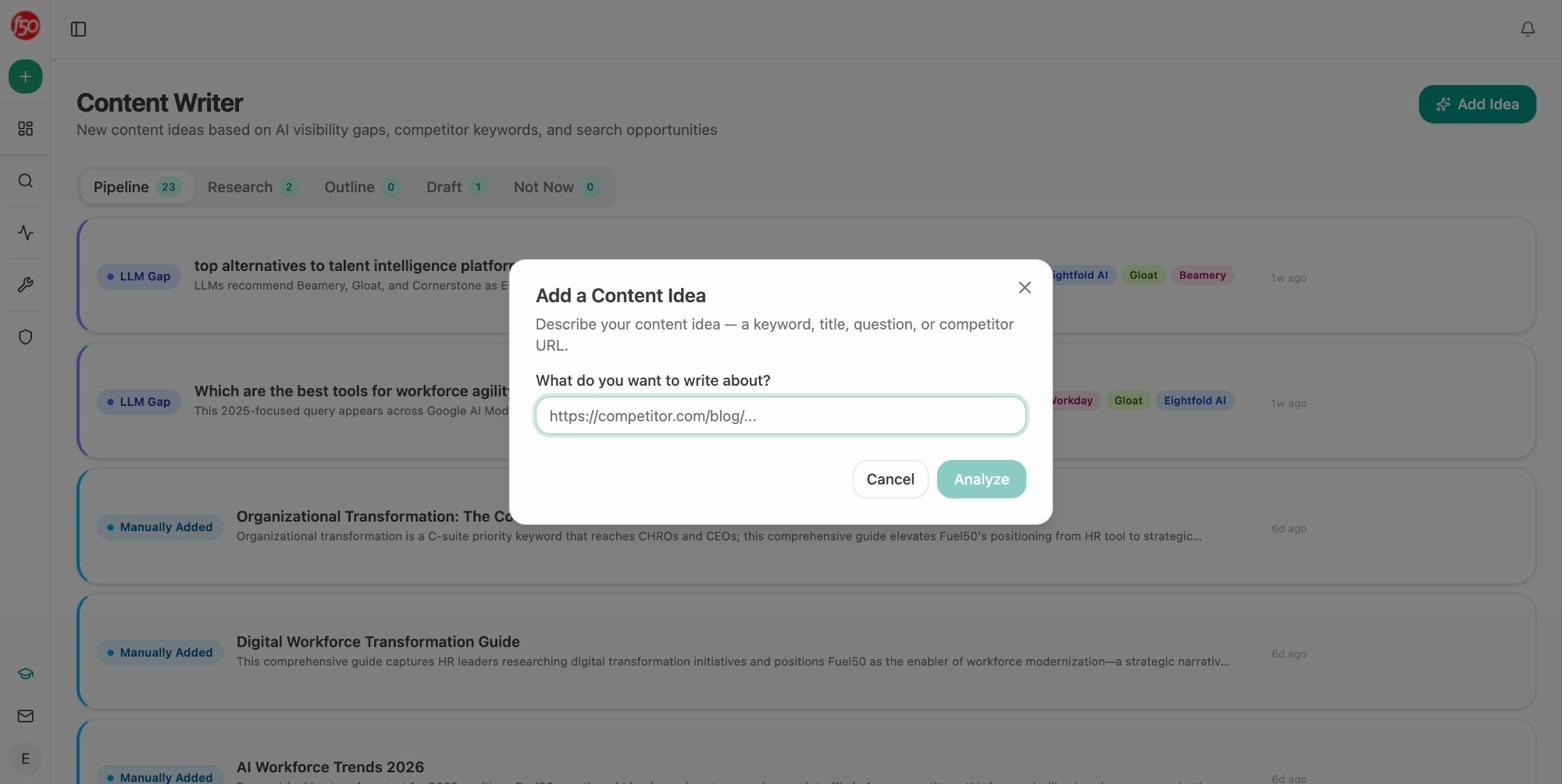

A useful filter is to sort your pipeline into three buckets. Topics you know cold can be drafted with AI and edited fast. Topics you sort of know need expert review. Topics where you have no expertise should not be AI-generated at all.

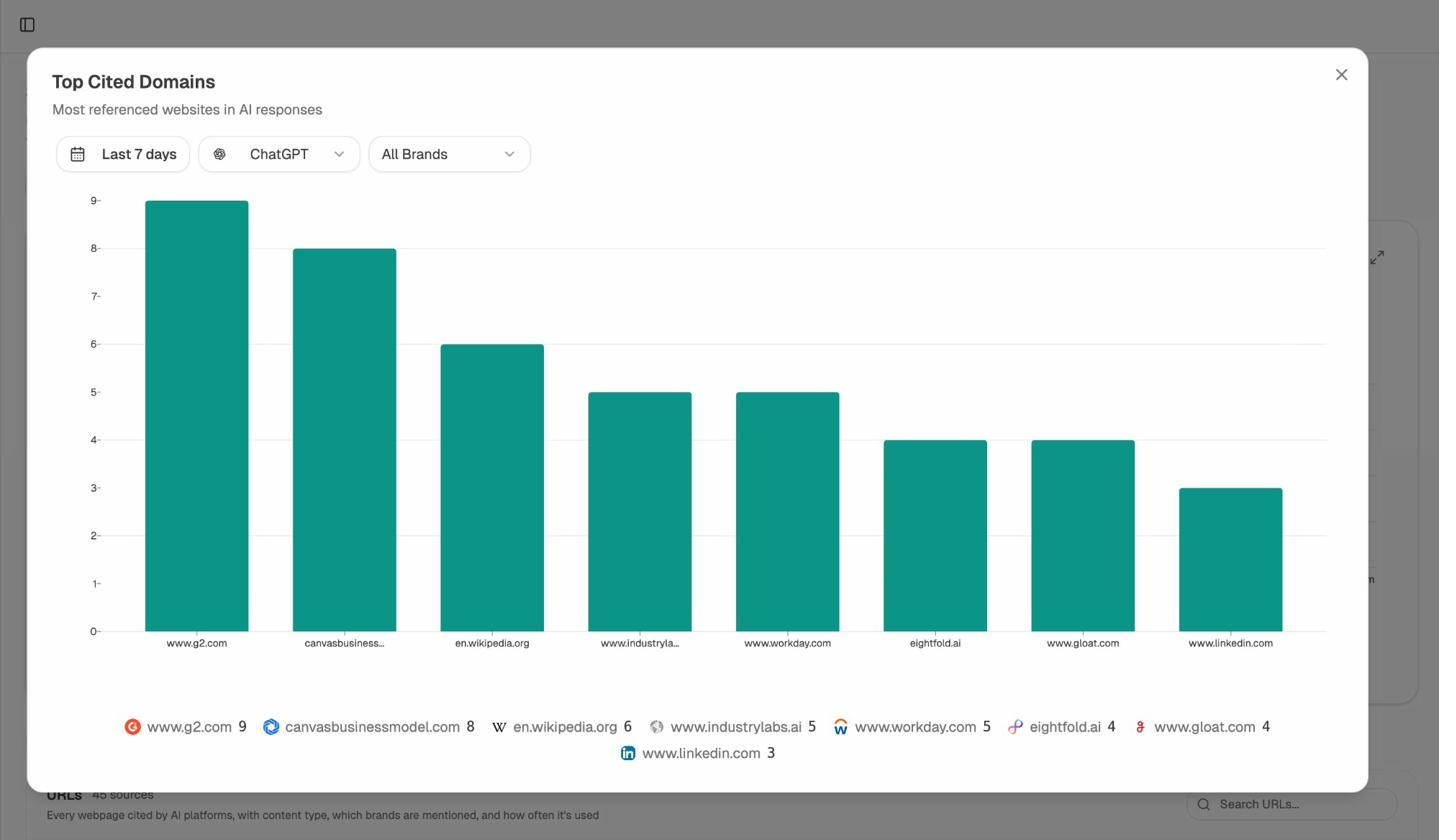
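If your pipeline lives in a spreadsheet or script, the three buckets can be expressed as a small lookup. This is a minimal sketch; the `Expertise` levels, the `PLAN` wording, and the example topics are illustrative names, not part of any tool.

```python
from enum import Enum


class Expertise(Enum):
    KNOW_COLD = "know cold"
    SORT_OF_KNOW = "sort of know"
    NO_EXPERTISE = "no expertise"


# One workflow per bucket, following the three-bucket filter described above.
PLAN = {
    Expertise.KNOW_COLD: "draft with AI, edit fast",
    Expertise.SORT_OF_KNOW: "draft with AI, send to expert review",
    Expertise.NO_EXPERTISE: "do not AI-generate",
}


def triage(pipeline: dict[str, Expertise]) -> dict[str, str]:
    """Map each topic in the content pipeline to its workflow."""
    return {topic: PLAN[level] for topic, level in pipeline.items()}


# Hypothetical topics, purely for illustration.
example = triage({
    "what is a canonical tag": Expertise.KNOW_COLD,
    "server log analysis": Expertise.SORT_OF_KNOW,
    "tax law changes": Expertise.NO_EXPERTISE,
})
```

The value of writing it down is less the code than the forced decision: every topic has to land in exactly one bucket before anyone opens a prompt.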

Step 2: Research the SERP and the AI engines together

Before drafting, run two checks. The first is the SERP. What’s already ranking? What questions are unanswered? What angles have been done to death? The second is what the AI engines say. Ask ChatGPT, Perplexity, and Gemini your target query and see what they cite, what they miss, and which competitors they recommend.

The second check is where most workflows leave gaps. With Analyze AI’s prompt research, you can see the actual prompts your buyers run and the gaps in current AI answers. That tells you what to write and what to leave out.

If you’re using third-party tools for the SERP side of the research, the two views your editor should capture are the keyword research tool’s SERP overview for the target keyword and the Google Autocomplete suggestions for the same keyword.

Step 3: Front-load the brief with original inputs

A blank prompt produces blank content. The more your brief contains things AI can’t generate by itself, the more original your output. Give the model:

- A specific point of view, not just a topic
- Internal data, customer interviews, or product usage stats
- Your own anecdotes and observations
- Quotes from named experts
- A clear thesis the article is meant to support

Animalz calls this the thesis-antithesis-synthesis structure, and it works whether a person or a model writes the first draft. Your thesis is the unique take. The antithesis is the prevailing view you’re pushing back on. The synthesis is the resolution. AI can draft the scaffolding once you’ve done the thinking.

Step 4: Edit for voice, accuracy, and information gain

This is where most AI workflows quietly break. The draft comes back coherent enough, the editor reads it once, and it goes live. Six weeks later the page isn’t ranking and no one knows why.

Three checks before you publish. Fact-check every number, citation, and claim, because models hallucinate confidently. Add at least one piece of information that doesn’t appear anywhere else on the SERP, whether that’s original data, a contrarian take, a specific case, or a useful framework. Then rewrite the introduction in your own voice. AI defaults are easy to spot, and the intro is the one paragraph every reader sees.

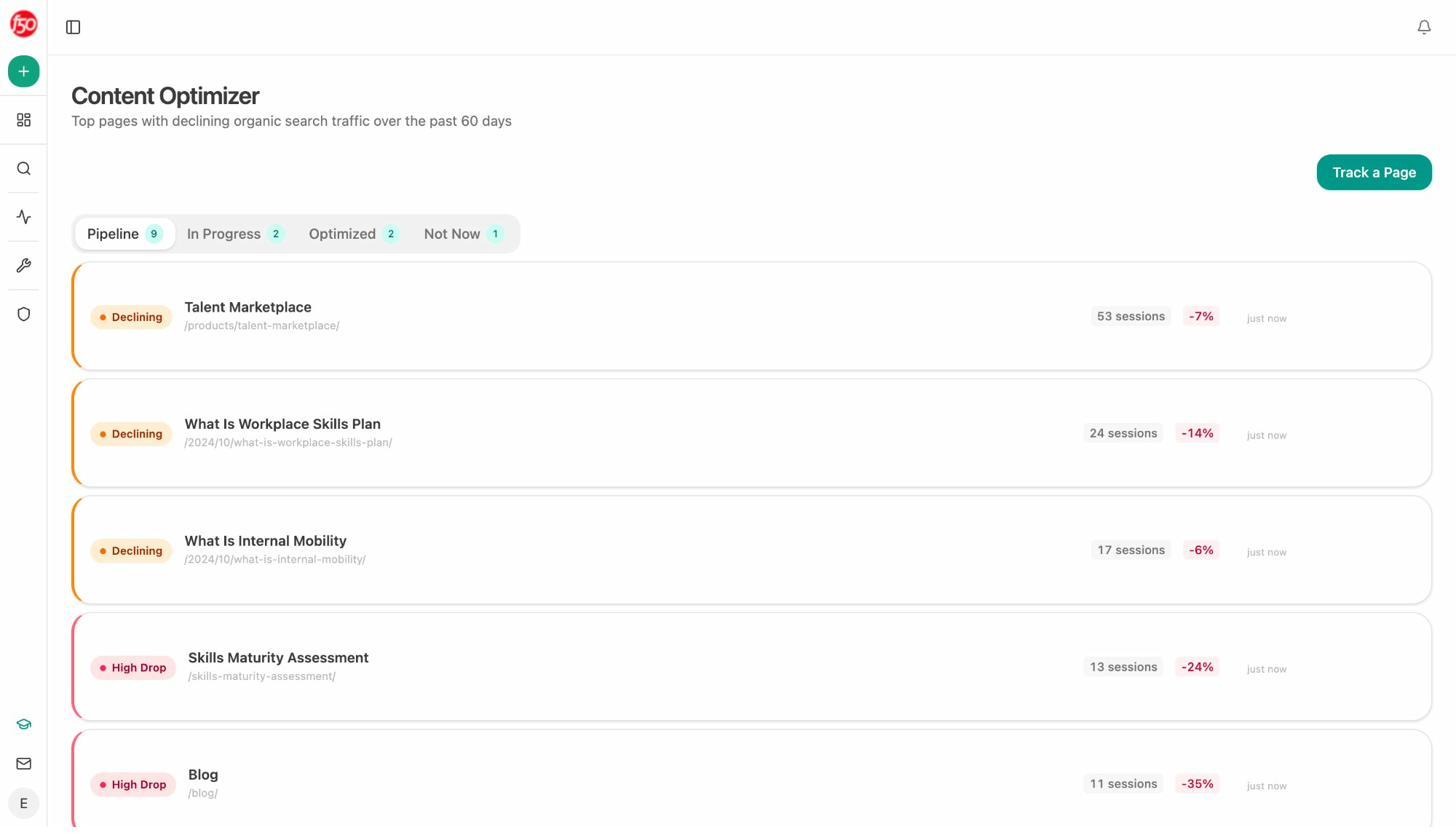
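For the fact-checking pass, one low-tech aid is to pull every sentence that contains a number into a checklist so nothing gets skimmed past. The sketch below is a rough heuristic, not a fact-checker: the function name is made up, the sentence splitting is naive, and it will miss claims that contain no digits.

```python
import re


def claims_to_verify(draft: str) -> list[str]:
    """Return the sentences in a draft that contain at least one digit."""
    # Naive split: break after ., ! or ? when followed by whitespace.
    # Decimals such as 0.011 stay intact because no space follows the dot.
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    return [s for s in sentences if re.search(r"\d", s)]


draft = (
    "AI helps with outlines. The correlation was 0.011 in the study. "
    "Most pages are a mix of human and AI work."
)
checklist = claims_to_verify(draft)
```

Each item on the checklist still needs a human to trace it back to a source.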

Step 5: Track what actually happens after publishing

This is the step most teams skip. You don’t know if your AI workflow is working until you measure it against both channels.

For Google, watch impressions, clicks, and position over the first 60 to 90 days. For AI search, you need a separate tracking layer because Google Search Console doesn’t show citations in ChatGPT, Perplexity, or Claude.
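A rough way to read the two channels side by side is a four-way check. This is a sketch only: the function, its parameters, and the thresholds are placeholders you would replace with your own baselines, and the inputs are whatever numbers your tracking tools export.

```python
def diagnose(
    google_clicks: int,
    ai_citations: int,
    min_clicks: int = 50,      # placeholder threshold, tune to your site
    min_citations: int = 1,    # placeholder threshold, tune to your site
) -> str:
    """Classify a page by how it performs in Google and in AI search."""
    google_ok = google_clicks >= min_clicks
    ai_ok = ai_citations >= min_citations
    if google_ok and ai_ok:
        return "working in both channels"
    if google_ok:
        return "ranks on Google but too generic for AI engines to cite"
    if ai_ok:
        return "quotable for AI engines but too thin or off-keyword for Google"
    return "not performing in either channel"


print(diagnose(google_clicks=400, ai_citations=0))
```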

If you see strong Google performance and zero AI search visibility, the page is too generic for AI engines. If AI search is strong but Google is flat, you’ve written something AI engines find quotable but search engines find too thin or off-keyword. The fix is different in each case, and you can’t see either without measuring.

## How to use AI without hurting your AI search visibility

The Google playbook above gets you most of the way there. AI search adds three habits on top.

Find the prompts your buyers are running

Keyword research and prompt research are not the same thing. People type “best CRM for small business” into Google. They ask ChatGPT something more specific, such as “I’m a 12-person agency, we use HubSpot for marketing, what CRM would integrate well?” Prompt research surfaces those conversational queries and tells you which competitors AI mentions when your brand is missing. You can do this manually by running likely queries through ChatGPT, Perplexity, Gemini, and Claude and recording which sources get cited, or use our prompt discovery feature to surface real prompts at scale.

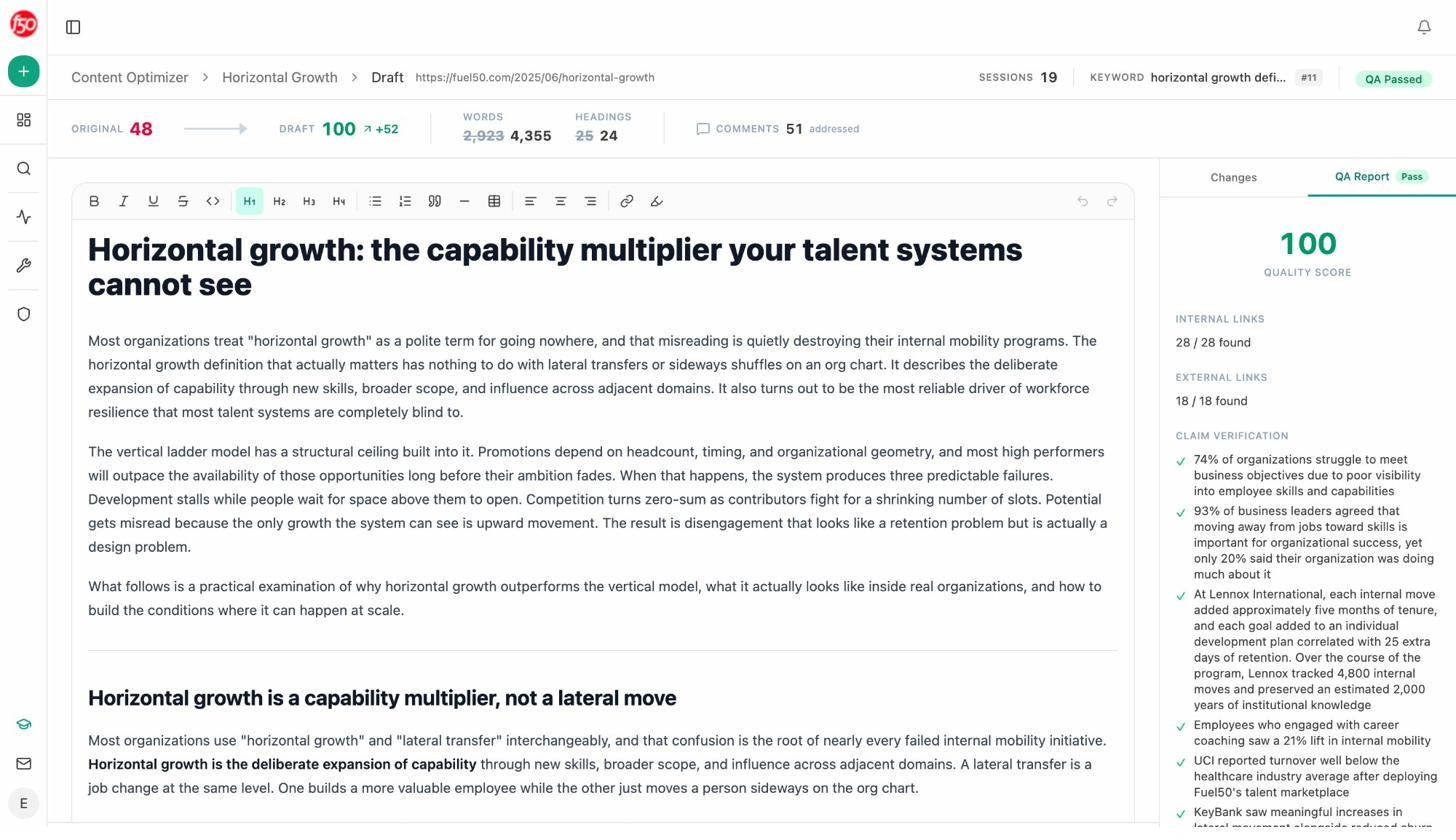
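If you go the manual route, a simple tally makes the recorded citations usable. The sketch below assumes you have noted, by hand, the URLs each engine cited for each prompt; the data shape, the function name, and the example URLs are all illustrative.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domains(observations: list[tuple[str, str, list[str]]]) -> Counter:
    """Count how often each domain is cited across (engine, prompt, urls) records."""
    counts: Counter = Counter()
    for _engine, _prompt, urls in observations:
        for url in urls:
            host = urlparse(url).netloc.lower().removeprefix("www.")
            counts[host] += 1
    return counts


# Hand-recorded notes with placeholder URLs.
notes = [
    ("ChatGPT", "best CRM for a 12-person agency",
     ["https://www.example.com/crm-guide", "https://example.org/reviews"]),
    ("Perplexity", "best CRM for a 12-person agency",
     ["https://example.com/crm-guide"]),
]
tally = cited_domains(notes)
print(tally.most_common(3))
```

Domains that keep showing up where yours does not are the competitors worth reading closely.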

Optimize existing pages for AI search, not just SEO

Pages that already rank on Google often need small structural changes to perform well in AI search. AI engines extract content from clean H2 questions, paragraph-first answers, and pages with named statistics or methodology. If your existing posts open with a 400-word backstory before the answer, they’re easy for Google to rank and hard for AI to quote.

The quick wins are usually the same. Add a clear definition near the top, break long sections into question-style H2s, and surface data points. You can do this manually or use our content optimizer to flag the specific edits.
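For the manual route, a few of these checks can be roughed out in a script. This sketch assumes your posts are stored as Markdown with `## ` marking H2s; the function name and the heuristics are simple proxies, not how any AI engine actually evaluates a page.

```python
import re


def audit(markdown: str) -> dict:
    """Report a few structural signals for one Markdown post."""
    lines = markdown.splitlines()
    h2s = [ln[3:].strip() for ln in lines if ln.startswith("## ")]

    # Words before the first H2: a proxy for how long the reader waits for an answer.
    intro_words = 0
    for ln in lines:
        if ln.startswith("## "):
            break
        if not ln.startswith("#"):
            intro_words += len(ln.split())

    return {
        "intro_words": intro_words,
        "h2_count": len(h2s),
        "question_h2_count": sum(h.endswith("?") for h in h2s),
        "has_percent_stat": bool(re.search(r"\d+(?:\.\d+)?%", markdown)),
    }


sample = "# Title\n\nShort intro here.\n\n## What is X?\n\nX is 42% of Y.\n\n## Background\n\nMore text."
report = audit(sample)
```

A post with a long intro, no question-style H2s, and no surfaced figures is a reasonable first candidate for a rewrite.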

Double down on what’s already working

Patterns matter more than individual wins. If three of your blog posts get consistent ChatGPT citations and the other twenty don’t, the question is what those three have in common. Original data? Specific frameworks? Clean structure? Whatever the pattern is, write more of those.

The Analyze AI manifesto puts this directly. We’d rather compound what’s working than chase what’s new. The teams winning in AI search right now are not the ones publishing the most. They are the ones with the tightest feedback loop between what gets cited and what they write next.
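One way to look for the pattern is to tag each post with a few traits and compare citation rates per trait. The sketch below uses invented posts and trait names, and with a handful of posts the rates are only a prompt for a closer look, not a statistical result.

```python
from collections import Counter


def trait_citation_rates(posts: list[dict]) -> dict[str, float]:
    """For each trait, return the share of posts with that trait that got cited."""
    totals: Counter = Counter()
    cited: Counter = Counter()
    for post in posts:
        for trait in post["traits"]:
            totals[trait] += 1
            if post["cited"]:
                cited[trait] += 1
    return {trait: cited[trait] / totals[trait] for trait in totals}


# Hypothetical posts, tagged by hand.
posts = [
    {"title": "Post A", "cited": True, "traits": {"original_data", "question_h2s"}},
    {"title": "Post B", "cited": True, "traits": {"original_data"}},
    {"title": "Post C", "cited": False, "traits": {"question_h2s"}},
    {"title": "Post D", "cited": False, "traits": set()},
]
rates = trait_citation_rates(posts)
```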

A note on AI detectors before you go too far

Some readers will want to run their content through an AI detector to make sure it “passes.” Don’t.

AI detectors are statistical models that produce probabilities, not verdicts. False positives are common, especially on technical writing. Ahrefs’ guide to AI detectors is honest about this. Detectors flag plenty of human writing as AI and pass plenty of AI writing as human.

Google has its own internal signals and isn’t running the public detectors against your pages. The relevant question isn’t whether your page passes a detector. It’s whether your page is useful and accurate.

The simple rule that holds across both channels

AI-generated content does not hurt your Google rankings. Three independent studies covering more than 1.5 million pages converge on the same answer. Google does not penalize content for being AI-assisted. Pure AI dumps rank slightly lower at the top of the SERP, but only because they tend to be thin and unoriginal.

The same holds for AI search. ChatGPT, Perplexity, and Google AI Overviews all cite AI-assisted content at high rates. What they reward is what every helpful content guideline has rewarded for a decade: original information, accurate facts, demonstrable expertise, and clear writing.

The Analyze AI position is straightforward. SEO is not dead. AI search is not a replacement for it. They are two organic channels that respond to the same signal, which is whether your content is genuinely useful. Build a workflow that produces useful content and AI helps you produce more of it, faster. Skip that and no amount of AI will save you.

Pick a topic you know well. Write the brief yourself. Use AI to draft. Edit for accuracy and voice. Publish. Track both channels. Repeat with what works.

That’s the playbook.

Ernest

Ibrahim