Manual penalties are rare, but the damage they cause is not. A site that drops from page one to page seven loses most of its traffic in a week. Income, leads, and brand searches all follow. The recovery process is slow, manual, and unforgiving of shortcuts.

In this article, you’ll see what really happens when a site gets a manual penalty from Google, how three different teams recovered from three different types of penalties, and the exact steps you can copy when it happens to you. You’ll also see how to monitor the same kind of quality signals in AI search, which is now a second organic channel sitting next to Google.

What a Manual Penalty Actually Is

A manual penalty (often called a “manual action”) is a sanction applied by a human reviewer at Google, not by an algorithm. It happens when a reviewer decides your site is breaking Google’s spam policies. The penalty shows up in Google Search Console under Security & Manual Actions. It can affect a single page, a section of the site, or the whole domain.

This is different from an algorithmic drop. Algorithmic changes such as core updates, the Helpful Content system, and link spam updates lower your rankings without any notification. Manual actions are explicit. You will see the message. You will see the affected pattern. And the affected pages will rank lower, or drop out of the results entirely, until the penalty is lifted.

![Google Search Console “Manual Actions” report showing the green “No issues detected” check, contrasted with a sample live action message](https://www.datocms-assets.com/164164/1778277262-blobid1.png)

Google publishes a full list of manual action types. The most common ones are:

| Manual action | What triggers it |
|---|---|
| Unnatural links to your site | Paid, exchanged, or otherwise manipulated inbound links |
| Unnatural links from your site | Outbound links that look paid, undisclosed, or manipulative |
| Thin content with little or no added value | Doorway pages, scraped content, low-effort affiliate pages |
| Pure spam | Cloaking, auto-generated content, scraped content at scale |
| User-generated spam | Spam posted by users in forums, comments, or profiles |
| Cloaking and sneaky redirects | Showing different content to Googlebot than to users |
| Hidden text or keyword stuffing | Hiding keywords in CSS, white-on-white text, or stuffed pages |
| Structured data issues | Marked-up content that doesn’t match the visible page |
| Site reputation abuse | Hosting low-quality third-party content under your domain |
| AMP content mismatch | AMP version differs significantly from canonical |
| News and Discover policy violations | Editorial issues affecting placement in News or Discover |

Each entry in the report links to Google’s specific guidance for that action. Read it before doing anything else. Most failed reconsideration requests fail because the team only fixed the example URLs Google listed and missed the wider pattern.

Now to the case studies.

Case Study One: A 10-Day Recovery From Unnatural Inbound Links

The first case is a blog with around 150,000 monthly users that picked up an “Unnatural links to your site” penalty in mid-June. The penalty hit on a Sunday. The team went to work on Tuesday.

Because the action was manual rather than algorithmic, the diagnosis was fast. The next steps:

-

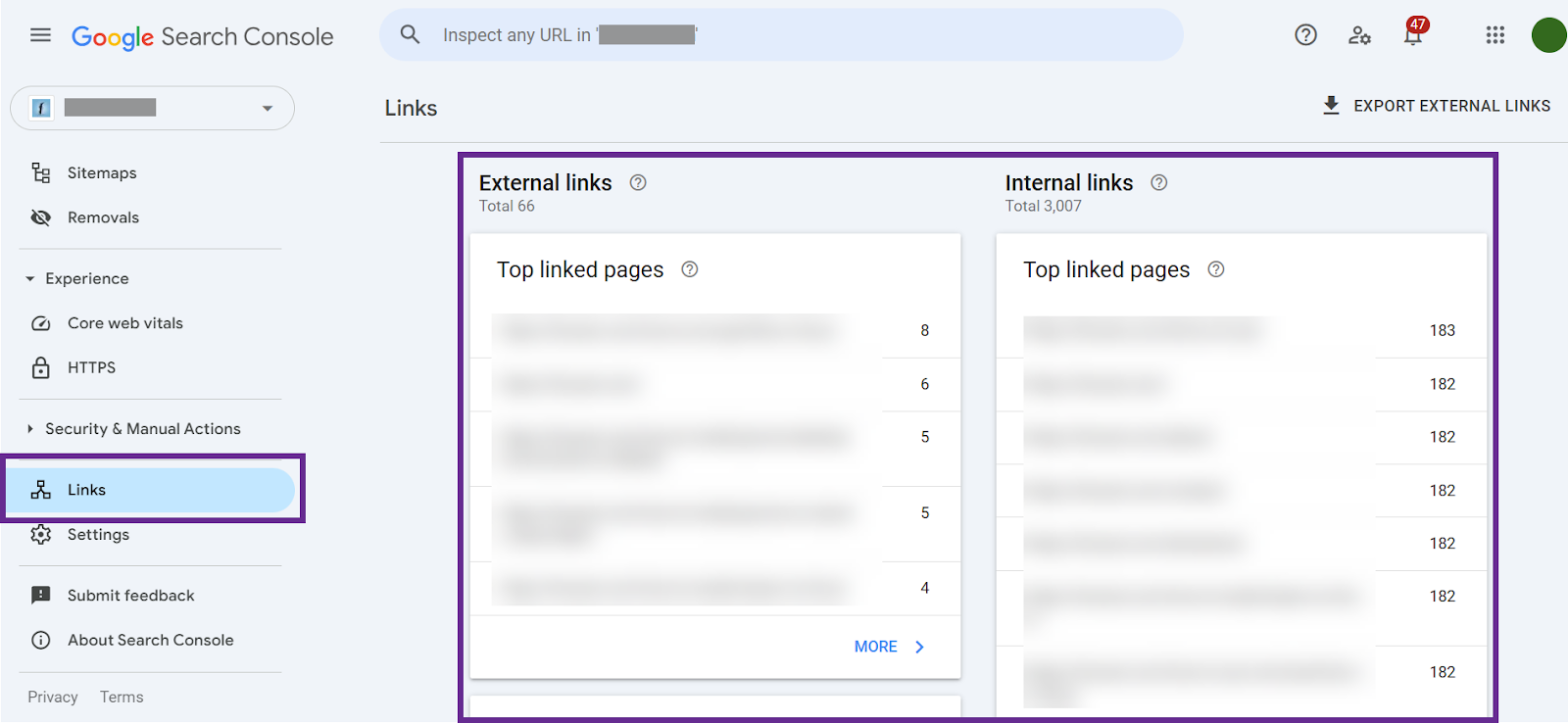

Pull the full backlink profile from Google Search Console.

-

Cross-reference with a third-party backlink tool to catch links GSC missed.

-

Review every linking domain and flag the ones that looked paid, exact-match, or part of a guest-post-for-money network.

The audit surfaced a handful of “write for us” pages charging a small fee for a follow link. Those links were the trigger. The site owner had paid for the placements and didn’t want to see them removed. As he put it, “they were fairly expensive.”

That instinct is the most common reason recoveries stall. If you paid for a link that passes ranking credit, Google treats it as manipulative. Keeping it costs you more than the link is worth.

The team removed every flagged link they could reach through outreach, then disavowed the rest. The reconsideration request followed three principles.

- Name the issue honestly.
- List exactly what was removed and disavowed.
- Commit to following the link guidelines going forward.

Google’s reply came 10 days later. Manual action lifted. Traffic was back inside 24 hours.

The lesson from this case is brutal honesty. A vague “we cleaned up some links” message gets rejected. A request that names the link-buying practice, lists the exact pages, and shows the disavow file gets through faster.

Case Study Two: An 80-Day Recovery for Unnatural Outbound Links

The second case ran far longer. A music industry publisher received a manual action for “Unnatural links from your site” in late August.

This penalty is sneakier. It does not mean the site bought links. It means the site was selling them, or letting paid placements sit in editorial content without nofollow or sponsored attributes. The team did not realize the issue at first because the offending links were buried in old advertorials and partner content from previous years.

Their process:

-

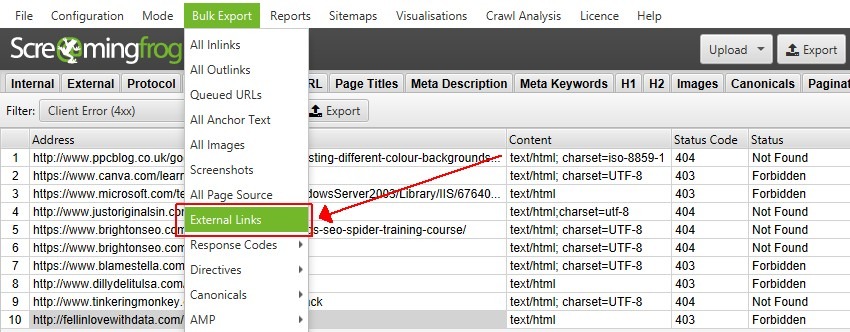

Crawl the affected sections with a tool like Screaming Frog to extract every outbound link.

-

Filter for links inside any post that mentioned a partner, a giveaway, or an ad.

-

Review each one for sponsored attribution.

The first reconsideration request failed. The team had only fixed the example URLs Google provided in the message. They had missed dozens more across the rest of the archive.

This is the most common mistake teams make. Google’s example URLs are illustrative, not exhaustive. You have to fix the pattern across the whole site.

The team spent two more weeks reviewing every advertorial since 2014, deleted some entirely, added rel="nofollow" or rel="sponsored" to the rest, then resubmitted. The second request was accepted 80 days after the original notification. Blog subfolder traffic rebounded soon after.
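The attribute check the team ran by hand can be roughed out in a few lines. This is a minimal sketch using only Python's standard library, not the team's actual tooling. The host names and the sample HTML are made up, and the comparison is an exact host match, so `www.` variants and subdomains would need extra handling on a real site.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class OutboundLinkAuditor(HTMLParser):
    """Collect external links that carry neither nofollow nor sponsored."""

    def __init__(self, site_host):
        super().__init__()
        self.site_host = site_host
        self.unqualified = []  # external hrefs with no qualifying rel value

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        href = attr_map.get("href") or ""
        host = urlparse(href).netloc.lower()
        # Skip relative links and links that point back to our own host.
        if not host or host == self.site_host:
            return
        rel_values = set((attr_map.get("rel") or "").lower().split())
        if not rel_values & {"nofollow", "sponsored"}:
            self.unqualified.append(href)


def find_unqualified_links(html, site_host):
    auditor = OutboundLinkAuditor(site_host)
    auditor.feed(html)
    return auditor.unqualified


# Hypothetical advertorial snippet for illustration only.
sample_html = """
<p>Thanks to <a href="https://partner.example/offer">our partner</a> and
<a href="https://sponsor.example/deal" rel="sponsored noopener">our sponsor</a>.
See also <a href="/about">about us</a>.</p>
"""
print(find_unqualified_links(sample_html, "publisher.example"))
```

Run against every page in the archive, a list like this shows the pattern across the site rather than just the example URLs. Each flagged link still needs a human decision, because many external links are ordinary editorial citations.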

The lesson from this case is patience. A second reconsideration request can take weeks to review. It is faster to spend an extra week being thorough than to submit a half-cleaned site and wait for the rejection.

Case Study Three: Pure Spam and Three Reconsideration Requests

The third case is a site flagged for “Pure spam” alongside several link-related issues. Pure spam is the harshest manual action. Google can deindex affected pages entirely.

The recovery, documented by the team that handled it, took three reconsideration requests. They spent weeks emailing each linking webmaster up to five times. The emails went out from the client’s company domain rather than a generic agency address, which lends the outreach more credibility. They tracked every email in a spreadsheet, recording mailbox status, response, and number of attempts.

The reconsideration request linked to that spreadsheet. The team gave Google evidence of effort, not just claims of effort. Google’s responses came in 10 to 21 days each.

The lesson from this case is documentation. The reviewer is not running a script. They are reading what you wrote. The more concrete proof you provide, the easier it is to approve your request.

Here are the three cases at a glance.

| Case | Penalty type | Recovery time | Number of reconsideration requests | Key lesson |
|---|---|---|---|---|
| Blog (150k users) | Unnatural links to your site | 10 days | 1 | Honesty about paid links |
| Music publisher | Unnatural links from your site | 80 days | 2 | Fix the pattern, not just the examples |
| Pure spam site | Pure spam + unnatural links | 4+ months | 3 | Document outreach in a spreadsheet |

The Repeatable Framework You Can Copy

Across all three cases, the recovery process follows the same shape. Here is the framework, step by step.

Step 1: Confirm the Manual Action

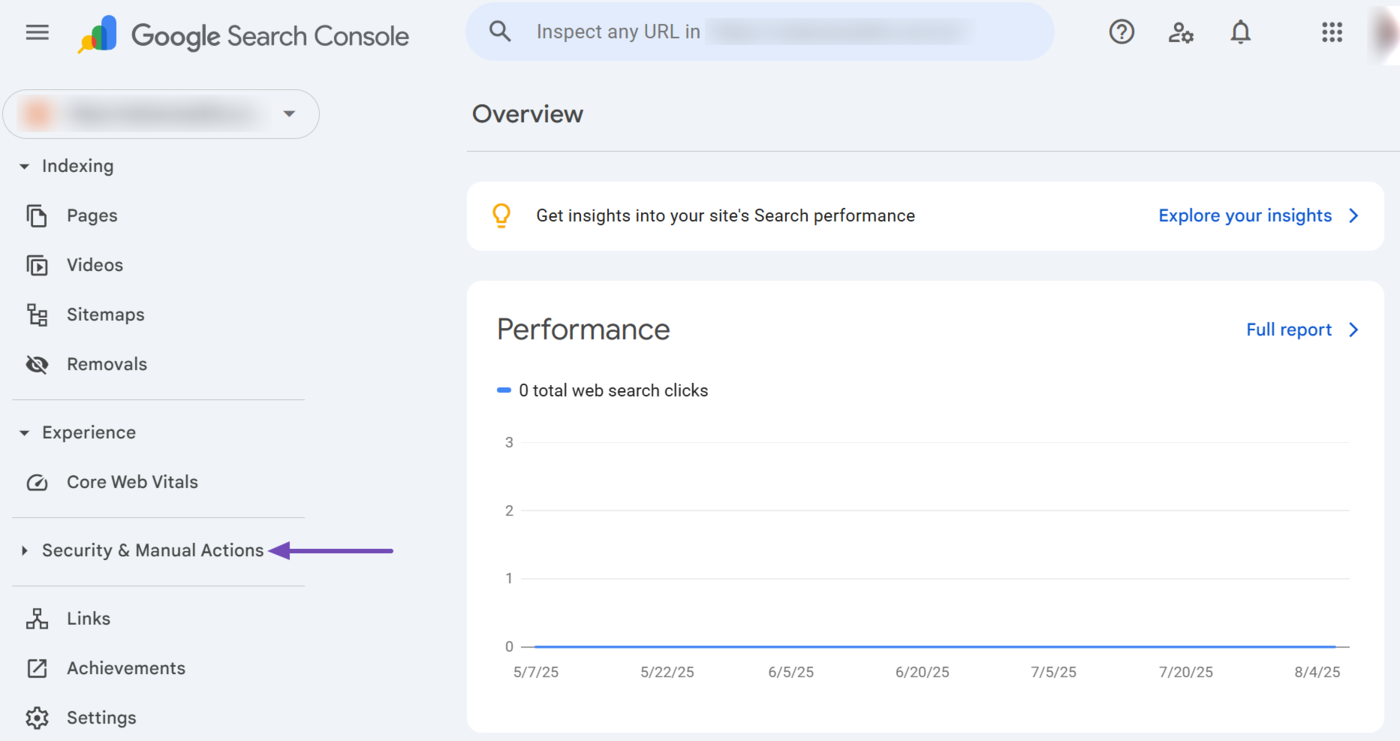

Open Google Search Console. Go to Security & Manual Actions, then Manual Actions. Read the message. Note the action type, the affected pattern, and any example URLs.

If the report says “No issues detected” but your traffic dropped, you are dealing with an algorithmic shift, not a manual penalty. Recovery for algorithmic drops works differently and usually takes 6 months to 2 years rather than weeks.

Step 2: Audit the Full Pattern, Not Just the Examples

The example URLs in the manual action are samples. Google expects you to find every other instance of the same problem.

For link-related actions, export every backlink from Search Console, then cross-reference with at least one third-party crawler. You can run a quick check on outbound links with the free broken link checker, and verify your overall site authority with the website authority checker to baseline before and after.
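One way to do the cross-reference is to reduce both exports to a set of linking hostnames and compare them. The sketch below assumes two CSV exports. The column names `Linking site` and `source_url` are placeholders, so check the headers in your own files, and the sample rows are invented.

```python
import csv
import io
from urllib.parse import urlparse


def linking_domains(csv_text, url_column):
    """Return the set of linking hostnames found in one export."""
    domains = set()
    for row in csv.DictReader(io.StringIO(csv_text)):
        value = row[url_column].strip()
        # Accept bare hostnames as well as full URLs.
        host = urlparse(value if "://" in value else "//" + value).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.add(host)
    return domains


# Invented sample exports; real files would be read from disk.
gsc_export = "Linking site\nwww.blog-one.example\npaid-posts.example\n"
tool_export = (
    "source_url\n"
    "https://paid-posts.example/write-for-us\n"
    "https://forum.example/thread/9\n"
)

gsc = linking_domains(gsc_export, "Linking site")
tool = linking_domains(tool_export, "source_url")

all_domains = gsc | tool      # everything that needs review
missed_by_gsc = tool - gsc    # links only the third-party tool found

print(sorted(all_domains))
print(sorted(missed_by_gsc))
```

The union is the review list. The difference shows how much of the profile the Search Console export alone would have missed.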

For content-related actions (thin content, pure spam, doorway pages), inventory every URL on the site and flag any page that:

- Has fewer than 300 words of unique content.
- Targets a keyword without offering a substantively different angle.
- Was auto-generated from a template or feed.
- Exists only to capture an affiliate click.
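The first check in that list is the easiest to automate. The sketch below counts visible words per page and flags anything under a threshold. It counts all words rather than unique content, so treat it as a first-pass filter. The `pages` mapping is a stand-in for whatever your crawler exports.

```python
import re
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect visible text, skipping script and style blocks."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def word_count(html):
    extractor = TextExtractor()
    extractor.feed(html)
    return len(re.findall(r"\w+", " ".join(extractor.parts)))


def flag_thin_pages(pages, min_words=300):
    """pages maps URL -> HTML; returns the URLs below the word threshold."""
    return sorted(url for url, html in pages.items() if word_count(html) < min_words)


# Invented sample inventory.
pages = {
    "/guide": "<article>" + "useful words " * 200 + "</article>",
    "/doorway-city-1": "<p>Best plumber in City One.</p><script>var x = 1;</script>",
}
print(flag_thin_pages(pages))
```

The other three checks in the list are judgment calls, so the output here is a shortlist for manual review, not a delete list.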

For structured data issues, run the URLs through Google’s Rich Results Test and fix any markup that does not match the visible page.

Step 3: Fix or Remove at the Source

For link penalties, contact the site owners and ask for removal first. Do this even when you expect no reply. Google looks for evidence of good-faith outreach.

Send up to five emails per domain over two to four weeks. Track every attempt in a spreadsheet with columns for domain, date contacted, email used, response, and outcome. You will need this spreadsheet for the reconsideration request.
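If a spreadsheet app feels heavy, the same log works as a CSV file. The sketch below uses the five columns named above and adds one assumed rule: a domain becomes a disavow candidate once it has five logged attempts and no recorded removal. The dates, domains, and email address are invented.

```python
import csv
import io
from collections import Counter

FIELDS = ["domain", "date_contacted", "email_used", "response", "outcome"]
MAX_ATTEMPTS = 5  # matches the "up to five emails per domain" guideline


def attempts_per_domain(rows):
    return Counter(row["domain"] for row in rows)


def domains_to_disavow(rows):
    """Domains contacted MAX_ATTEMPTS times with no removal recorded."""
    removed = {row["domain"] for row in rows if row["outcome"] == "removed"}
    counts = attempts_per_domain(rows)
    return sorted(d for d, n in counts.items() if n >= MAX_ATTEMPTS and d not in removed)


def to_csv(rows):
    """Serialize the log so it can be shared in the reconsideration request."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Invented sample log: five unanswered emails to one domain, one success.
log = [
    {"domain": "paid-posts.example", "date_contacted": f"2024-06-{day:02d}",
     "email_used": "owner@client.example", "response": "none", "outcome": "pending"}
    for day in (3, 8, 13, 18, 23)
]
log.append({"domain": "blog-one.example", "date_contacted": "2024-06-03",
            "email_used": "owner@client.example", "response": "agreed",
            "outcome": "removed"})

print(domains_to_disavow(log))
print(to_csv(log).splitlines()[0])
```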

For content penalties, the choice is improve or remove. If a page can be rewritten to genuinely add value, rewrite it. If it cannot, delete it and serve a 410 status code.
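How you return a 410 depends on your server or CMS. As a rough illustration only, here is the idea expressed with Python's built-in `http.server`. The `REMOVED_PATHS` set is hypothetical, and a production site would normally configure this in the web server or CMS instead.

```python
from http.server import BaseHTTPRequestHandler

# Hypothetical list of pages that were deliberately deleted.
REMOVED_PATHS = {"/doorway-city-1", "/doorway-city-2"}


def status_for(path):
    """Return 410 for deliberately removed pages, 200 otherwise."""
    clean = path.split("?", 1)[0].rstrip("/") or "/"
    return 410 if clean in REMOVED_PATHS else 200


class GoneAwareHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        status = status_for(self.path)
        self.send_response(status)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.end_headers()
        body = "Gone" if status == 410 else "OK"
        self.wfile.write(body.encode("utf-8"))


print(status_for("/doorway-city-1/"), status_for("/guide"))
```

To try it locally, pass `GoneAwareHandler` to `http.server.HTTPServer(("", 8000), GoneAwareHandler)` and call `serve_forever()`. A 410 tells crawlers the page was removed on purpose, whereas a 404 only says it was not found.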

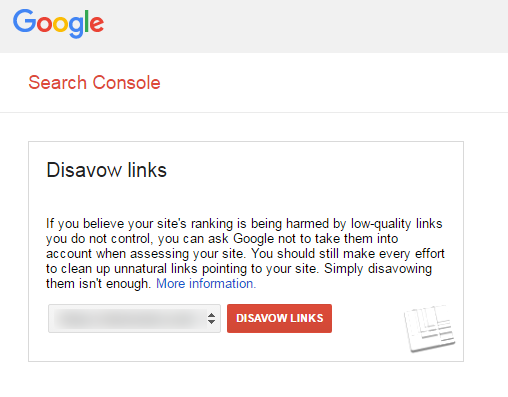

Step 4: Disavow What You Cannot Remove

For inbound link penalties, anything you could not get removed goes in a disavow file. Keep it conservative. Disavow only the links that are genuinely manipulative. A disavow file padded with legitimate links will weaken your reconsideration request.

The format is plain text, one line per entry.

domain:example.com

https://example.com/specific-page

Upload the file through the Google Disavow Tool. Do not disavow links you simply do not like.
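Generating the file from your audit list keeps it consistent with the outreach log. The sketch below writes `domain:` entries first, then individual URLs, with an optional comment line (lines beginning with `#` are treated as comments in a disavow file). The function name and the sample entries are illustrative.

```python
def build_disavow_file(domains, urls, note=""):
    """Return disavow file text: optional comment, domain entries, then URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # Normalize and de-duplicate so the same domain is not listed twice.
    lines += [f"domain:{d}" for d in sorted({d.strip().lower() for d in domains})]
    lines += sorted({u.strip() for u in urls})
    return "\n".join(lines) + "\n"


# Illustrative entries only.
text = build_disavow_file(
    ["Paid-Posts.example", "paid-posts.example"],
    ["https://example.com/specific-page"],
    note="Owners contacted five times, no reply",
)
print(text)
```

Save the result as a plain UTF-8 `.txt` file before uploading.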

Step 5: Write the Reconsideration Request

A good reconsideration request does three things, and only three things.

- Names the issue honestly.
- Documents what you fixed and how.
- Provides evidence such as links to spreadsheets, before/after counts, or removed URLs.

Avoid corporate language. Avoid excuses. The reviewer is human and reads hundreds of these. A clean, specific, honest message rises above the noise.

Sample structure:

“We received a manual action for [exact action name] on [date]. After reviewing our [link profile / content inventory / structured data], we identified [specific patterns] across [N] URLs. We removed [N] links through outreach (documented in this spreadsheet), disavowed [N] more, and will not engage in [practice] going forward. Please review and reconsider.”
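If you keep the audit numbers in a script or spreadsheet export, you can fill the sample structure from them so the counts in the request always match the evidence. This is a small sketch with `string.Template`. The field names and the `sheet_url` placeholder are inventions for illustration, and all values are dummy data.

```python
from string import Template

REQUEST = Template(
    "We received a manual action for $action on $date. After reviewing our "
    "$scope, we identified $patterns across $url_count URLs. We removed "
    "$removed links through outreach (documented in this spreadsheet: "
    "$sheet_url), disavowed $disavowed more, and will not engage in "
    "$practice going forward. Please review and reconsider."
)


def draft_request(**fields):
    # substitute() raises KeyError when a placeholder is left unfilled,
    # which stops a half-completed request from being sent.
    return REQUEST.substitute(**fields)


# Dummy values for illustration.
draft = draft_request(
    action="Unnatural links to your site",
    date="[date]",
    scope="link profile",
    patterns="paid guest-post placements",
    url_count=12,
    removed=9,
    sheet_url="[spreadsheet link]",
    disavowed=3,
    practice="paying for followed links",
)
print(draft)
```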

Step 6: Submit and Wait

Reconsideration requests typically take 10 to 30 days for manual link penalties. Pure spam, thin content, and partial actions can take longer. Do not submit a second request before you hear back on the first. It will not speed up the process.

When the action is lifted, traffic usually returns within 24 to 72 hours, though full ranking recovery can take longer if you lost links during cleanup.

Why None of This Exists in AI Search (And What Does Instead)

There is no manual action report in ChatGPT. Perplexity does not email you when your brand stops appearing. Gemini will not tell you it stopped citing your site.

AI search does not issue penalties. But it does drop you. A site that AI engines previously cited can disappear from answers within days because of a content update, a model refresh, a competitor’s better page, or a reputational shift in the data the model retrieves.

This is the second organic channel sitting next to Google, and it needs its own monitoring.

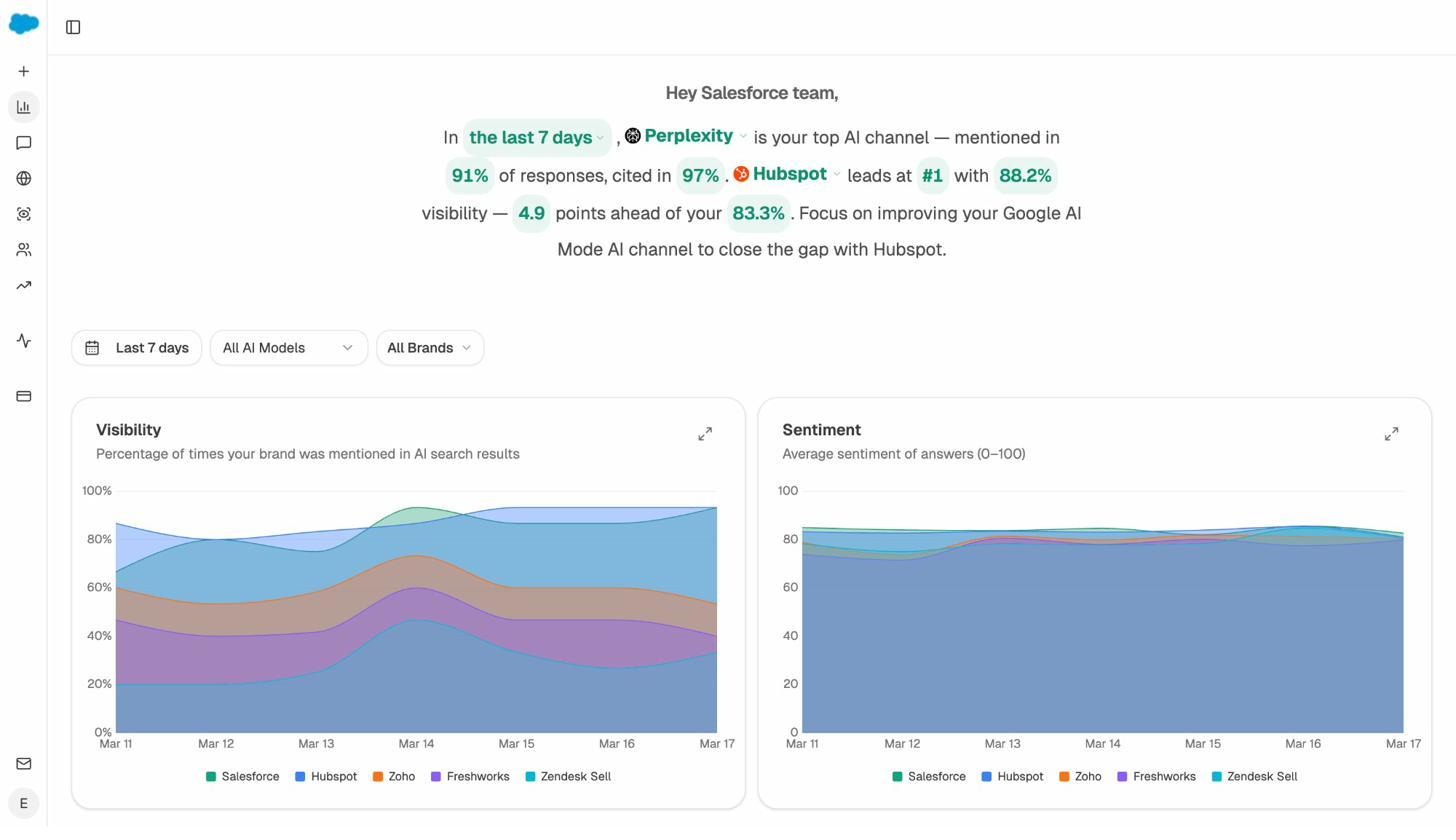

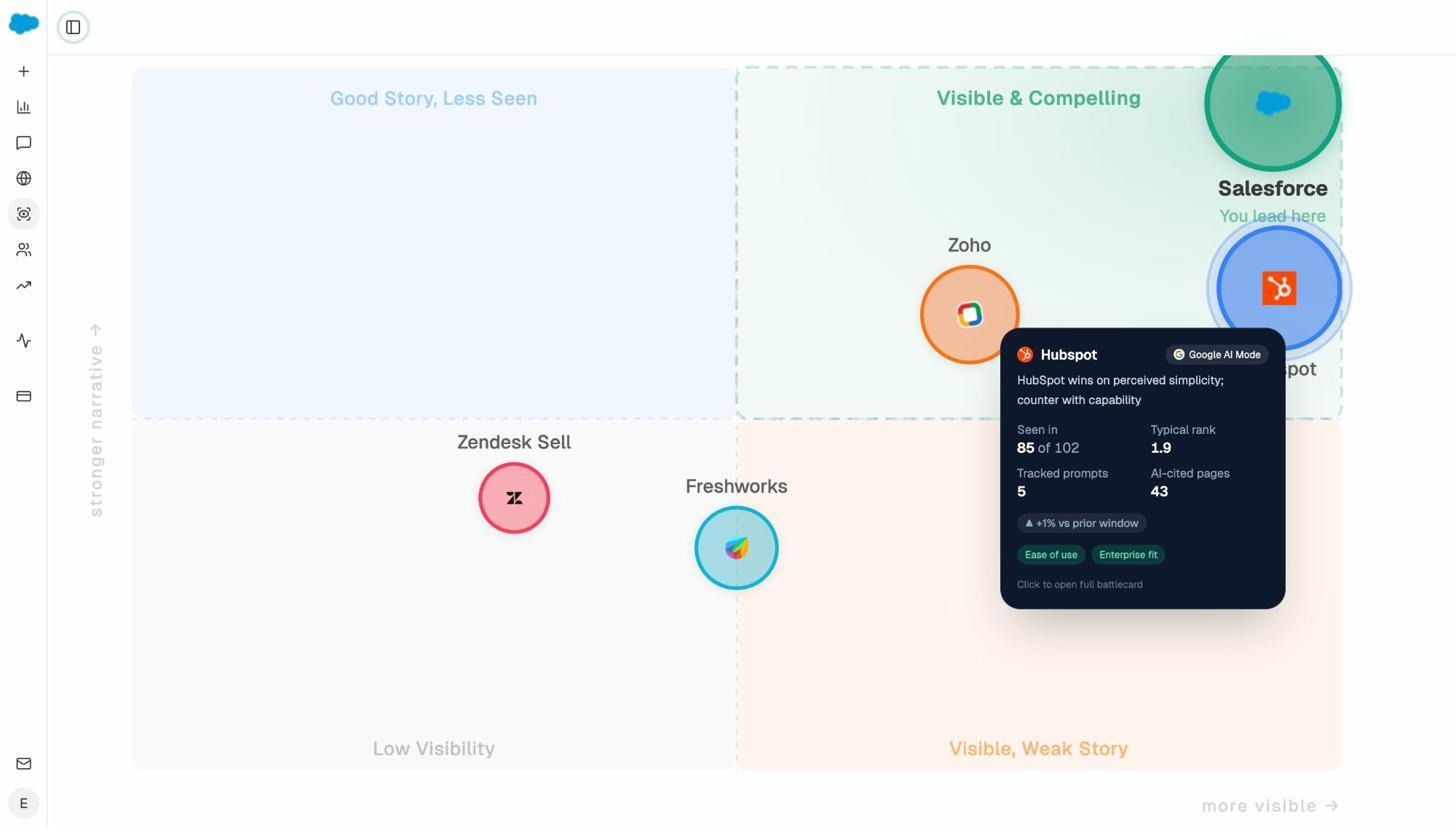

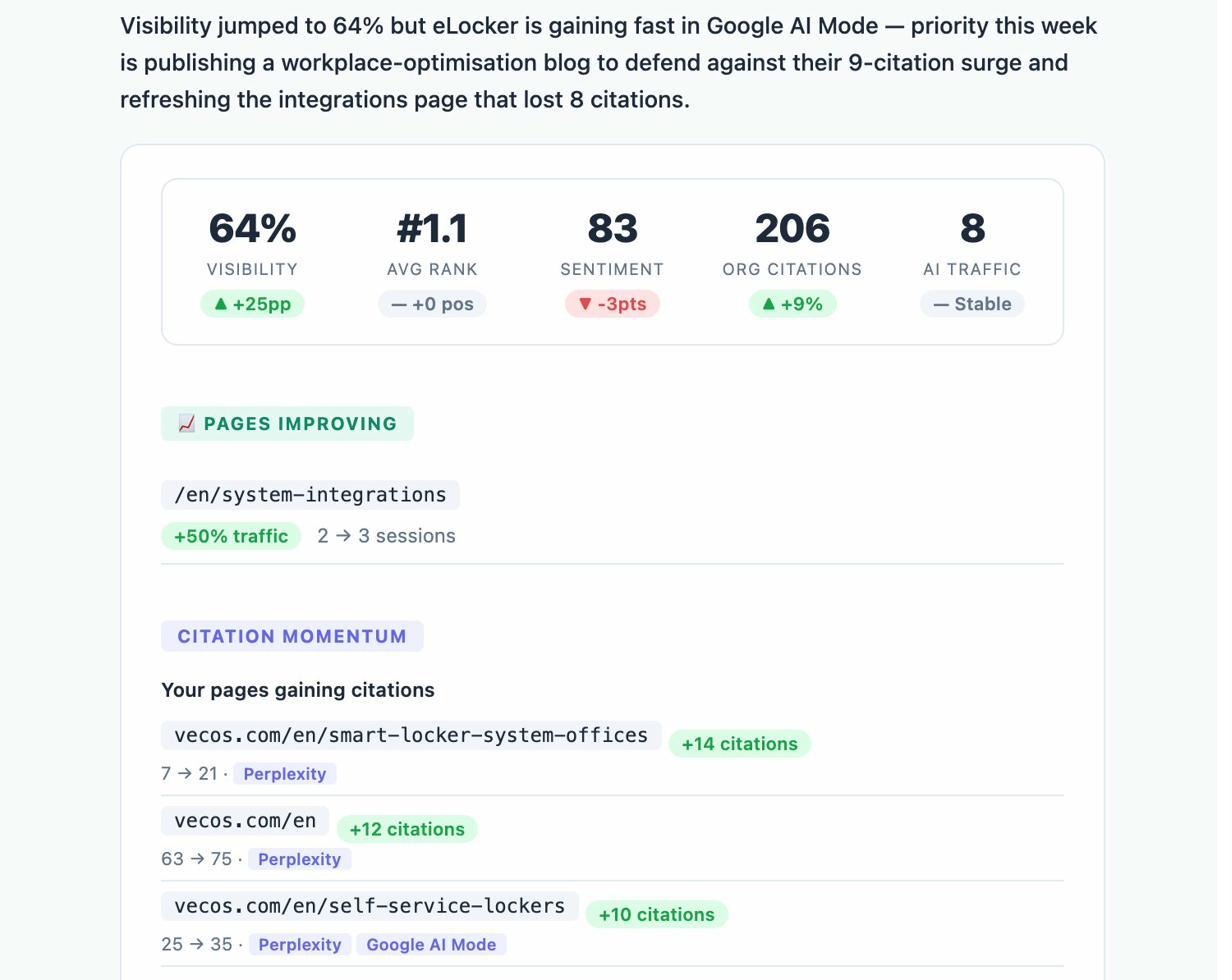

The closest equivalent to a “manual action report” for AI search is a visibility dashboard that tracks where you appear (and don’t) across every major engine, prompt by prompt.

The Analyze AI overview gives you a baseline visibility score across ChatGPT, Perplexity, Gemini, Claude, and Copilot. A sudden drop here is your first signal that something has changed.

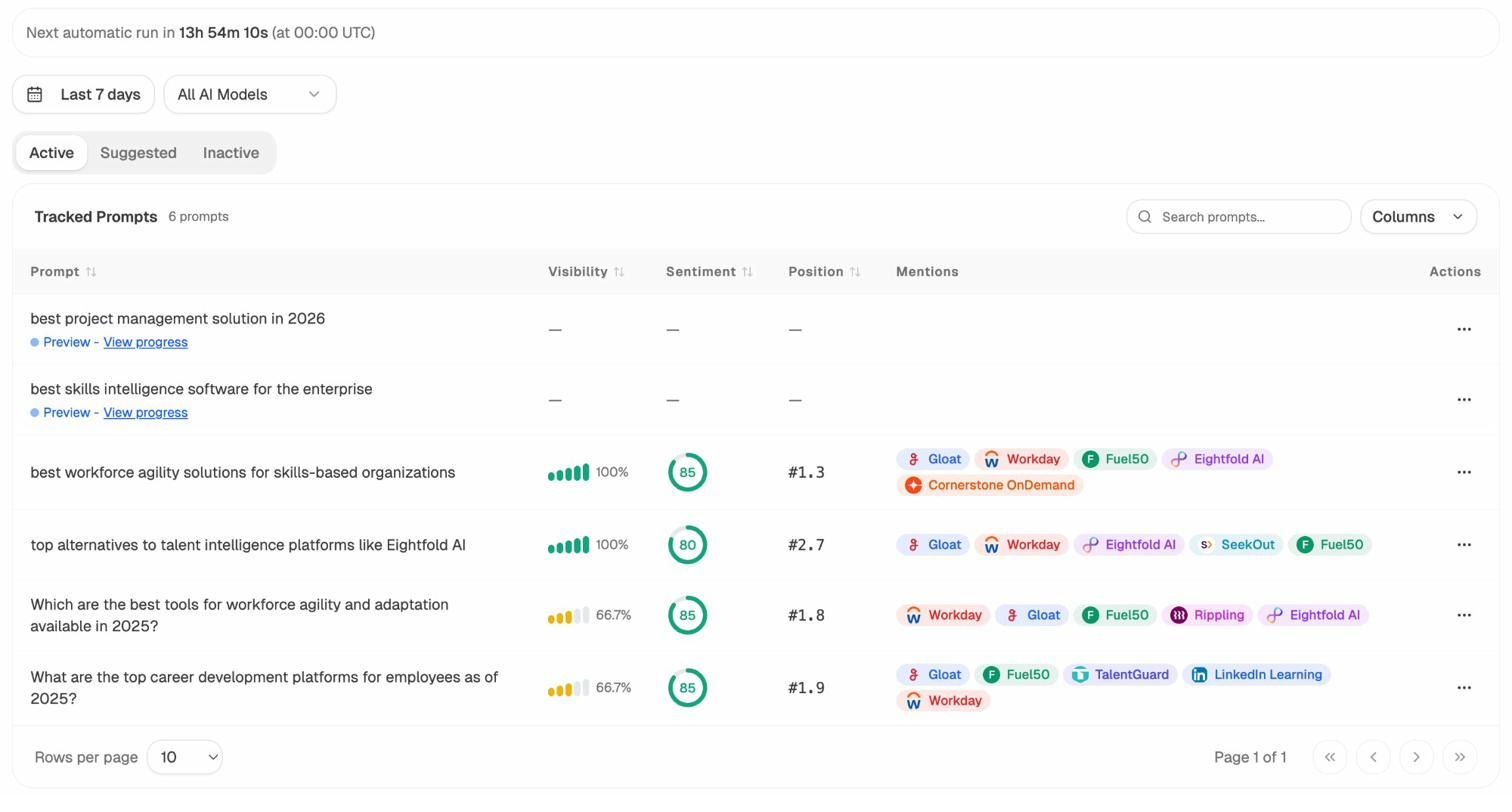

For deeper diagnosis, the prompt-level view shows exactly which queries you have lost ground on.

If your brand was being cited for “best [category] tool” last month and is missing this month, this is where you see it first. From there, you can drill into which competitor took the slot.
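The underlying comparison is simple enough to run by hand on any prompt log. The sketch below assumes you have recorded, per prompt, which brands were cited last month and this month. The data structure is an assumption made for illustration and is not any tool's export format. The brands and prompts are invented.

```python
def lost_prompts(previous, current, brand):
    """Map each prompt where `brand` lost its citation to the newly cited brands."""
    report = {}
    for prompt, cited_before in previous.items():
        cited_now = current.get(prompt, set())
        if brand in cited_before and brand not in cited_now:
            # Brands cited now that were not cited before are the likely replacements.
            report[prompt] = sorted(cited_now - cited_before)
    return report


# Invented month-over-month records: prompt -> set of cited brands.
previous = {
    "best crm tool": {"acme", "rival-a"},
    "crm pricing": {"acme"},
}
current = {
    "best crm tool": {"rival-a", "rival-b"},
    "crm pricing": {"acme"},
}
print(lost_prompts(previous, current, "acme"))
```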

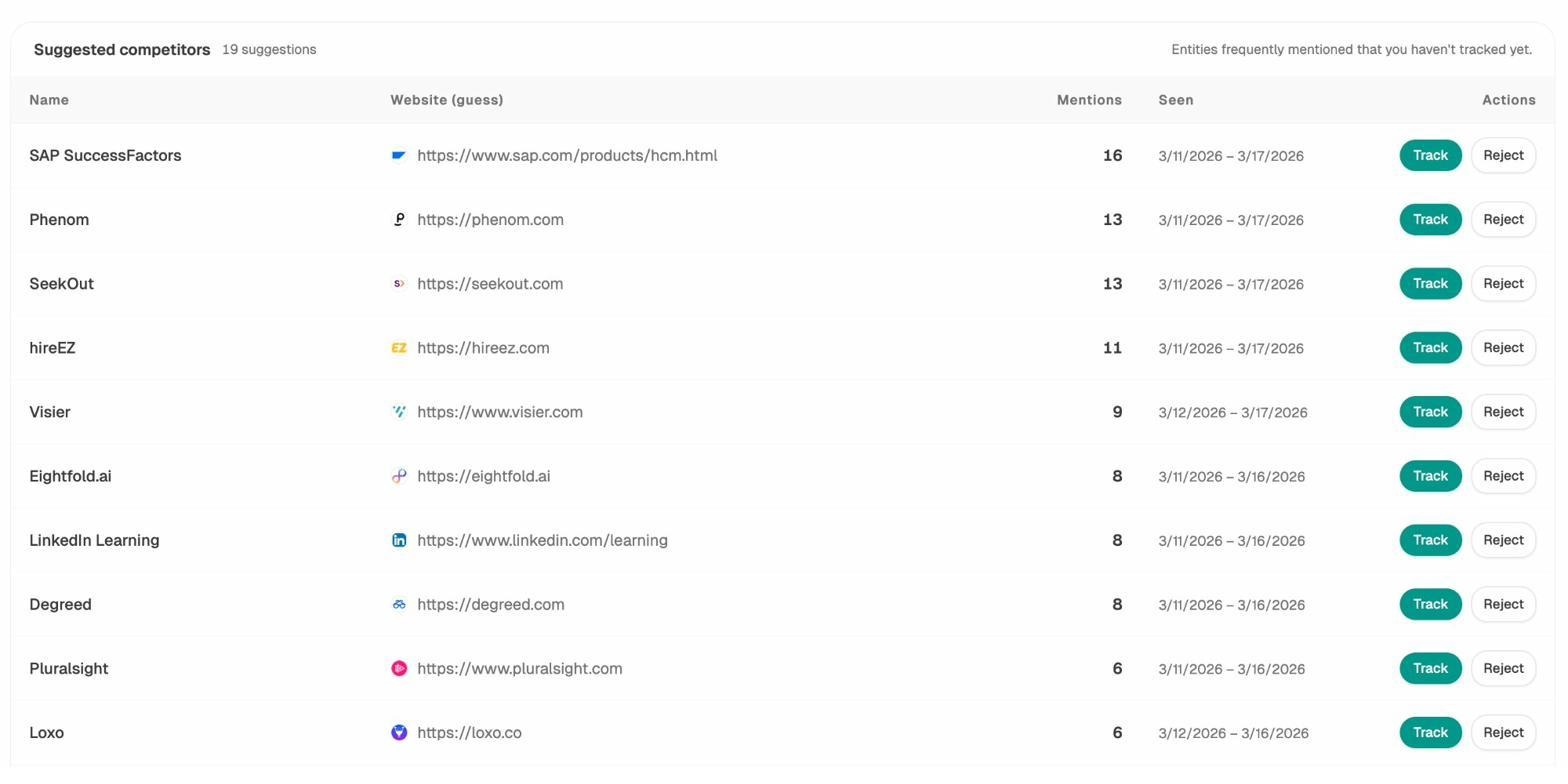

Just like a Google penalty has a root cause (paid links, thin content), AI visibility drops have a root cause too. Usually it is one of three things.

- A competitor published a stronger page on the topic.
- A source the model trusts (G2, Reddit, an industry blog) updated and removed your mention.
- Your content went stale and the model now favors more current sources.

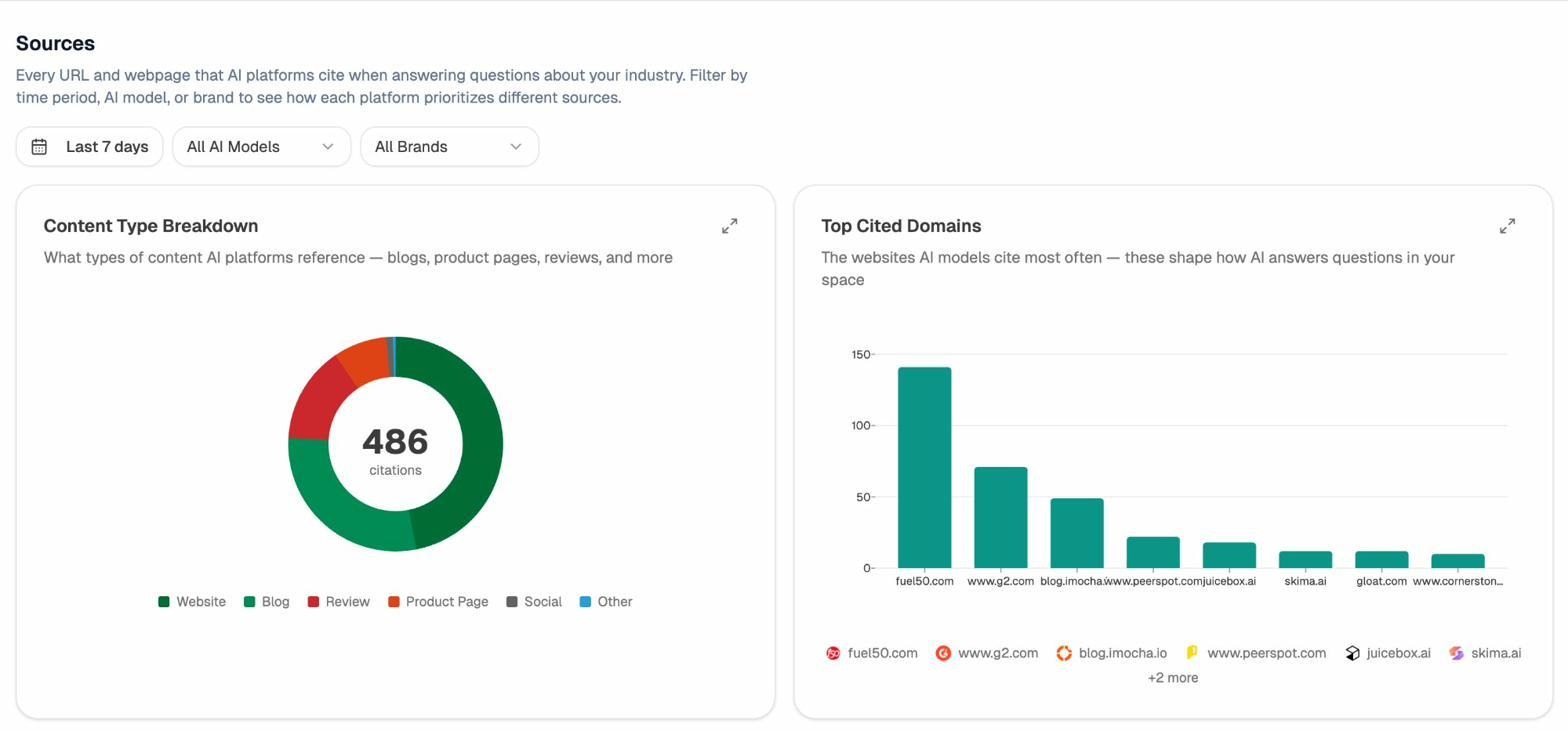

The Sources view tells you which sites are influencing the answers in your space.

If your domain is missing from the cited sources for prompts you should win, that is your equivalent of a manual action. The fix is the same in spirit as a content penalty recovery. You earn the citation back by improving the page or building presence on the source the model trusts.

The third pillar is reputation. AI engines are sensitive to sentiment in the underlying training data and retrieval sources. A wave of negative reviews, a press incident, or a forum thread can shift how the model frames your brand.

The Perception Map surfaces this drift before it becomes the dominant narrative. The pattern matches a Google penalty. Catch the issue early, fix the source, and the recovery is fast. Wait until the narrative is locked in, and recovery takes months.

For ongoing monitoring, the weekly digest does for AI visibility what GSC notifications do for manual actions. It pushes the changes that matter to your inbox so you do not have to check daily.

If you want to dig into how this fits with your broader strategy, the SEO competitor analysis breakdown and the off-page SEO playbook cover the practices that keep both Google and AI engines confident in your site.

How to Prevent the Next Manual Penalty

Most sites that get hit with a manual action saw the warning signs months earlier. Rapid increases in low-quality referring domains. Anchor text profiles dominated by exact-match terms. Outbound link patterns that started looking like paid placements. Thin pages added in bulk to chase keywords.

Three habits keep you out of trouble.

- Audit backlinks monthly. Look for unusual spikes in referring domains and anchor text shifts. Monthly cleanup is far less painful than annual cleanup. The 9 best backlink building tools roundup covers the options most teams use for this.
- Hold content to a real bar. If a page would not earn its placement on a brand you respect, do not publish it. Use the SEO audit tools roundup for a starting checklist.
- Monitor AI visibility alongside Google. A drop in AI citations often shows up before a Google ranking shift, because AI engines refresh more frequently than Google’s index. Treating both as one organic channel keeps you ahead of either.
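Two of the warning signs from the monthly backlink audit can be turned into quick numeric checks. The sketch below computes the share of exact-match anchors and flags a month whose referring-domain count jumps well above the prior average. The 2x spike factor is an arbitrary assumption, not a Google threshold, and the sample data is invented.

```python
def exact_match_share(anchors, money_terms):
    """Fraction of anchors whose text equals one of the target keywords."""
    if not anchors:
        return 0.0
    targets = {t.lower() for t in money_terms}
    hits = sum(1 for a in anchors if a.strip().lower() in targets)
    return hits / len(anchors)


def referring_domain_spike(monthly_counts, factor=2.0):
    """True when the latest month exceeds `factor` times the prior average."""
    *history, latest = monthly_counts
    if not history:
        return False
    baseline = sum(history) / len(history)
    return latest > factor * baseline


# Invented sample data.
anchors = ["best crm software", "Acme", "acme.example", "best crm software", "this review"]
print(exact_match_share(anchors, ["best crm software"]))
print(referring_domain_spike([40, 42, 45, 130]))
```

Neither number proves a problem on its own. Both are prompts to open the backlink report and look at what changed.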

This is the through-line across the case studies and the AI search work. SEO is not dead. AI search is not replacing it. They are two surfaces, both reading the same quality signals, both punishing the same shortcuts. The teams that recover fastest treat them that way.

A manual penalty is recoverable. So is an AI visibility drop. Both reward the same things, which are honest cleanup, careful documentation, and patience with the timeline.

Ernest

Ibrahim