In this article, you’ll learn why teams outgrow Am I On AI, what to look for in an alternative, and which seven tools fill the gaps that a two-engine visibility checker leaves wide open. Each recommendation is based on testing across real prompts, real engines, and real workflows, so you can pick the right fit without guessing.

What to look for in an Am I On AI alternative

Before you compare features, get clear on what problem you are solving. A CMO proving AI search ROI to the board needs different tooling than an SEO specialist tracking prompt rankings. Here is what separates useful AI visibility platforms from expensive dashboards:

Engine coverage. Am I On AI tracks ChatGPT and Google AI Overviews. But buyers use Perplexity, Claude, Gemini, and Copilot too. Any serious alternative should cover at least four engines.

Traffic attribution. Knowing your brand was mentioned means nothing if you cannot tie that mention to a session, a landing page, and a conversion. Tools that stop at “mentioned” or “not mentioned” leave you guessing about ROI.

Actionability. The tool should tell you what to do next, not just what happened. That means content recommendations, competitive gap analysis, and optimization workflows.

Pricing simplicity. Some tools charge per engine, per region, or per prompt. Others charge per credit. Look for plans that scale without surprise bills.

1. Analyze AI: the best overall Am I On AI alternative

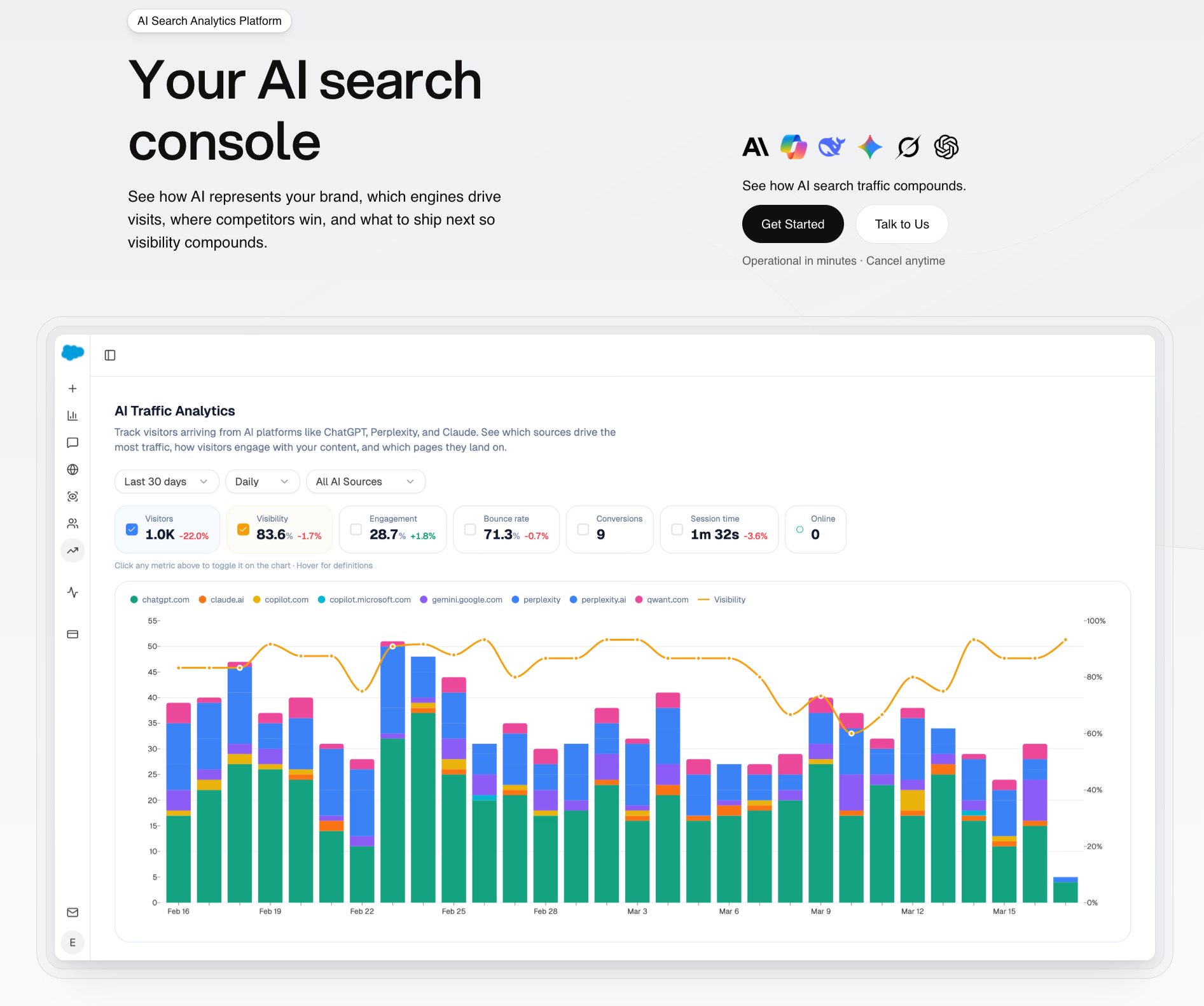

Analyze AI is the agentic platform for SEO, AEO, content, and GTM ops. It tracks your brand across ChatGPT, Perplexity, Claude, Copilot, Gemini, and more. But tracking is where most tools stop and where Analyze AI starts. The platform connects AI visibility to GA4 traffic, landing pages, and conversions, then gives you the tools to improve what the data reveals.

Here is the core difference. Am I On AI tells you “yes, ChatGPT mentioned you.” Analyze AI tells you which engine sent 248 sessions last month, which landing page converted 12% of that traffic to trials, and which competitor overtook you on five bottom-of-funnel prompts this week. Then it helps you fix the gaps.

See actual traffic from AI engines, not just mentions

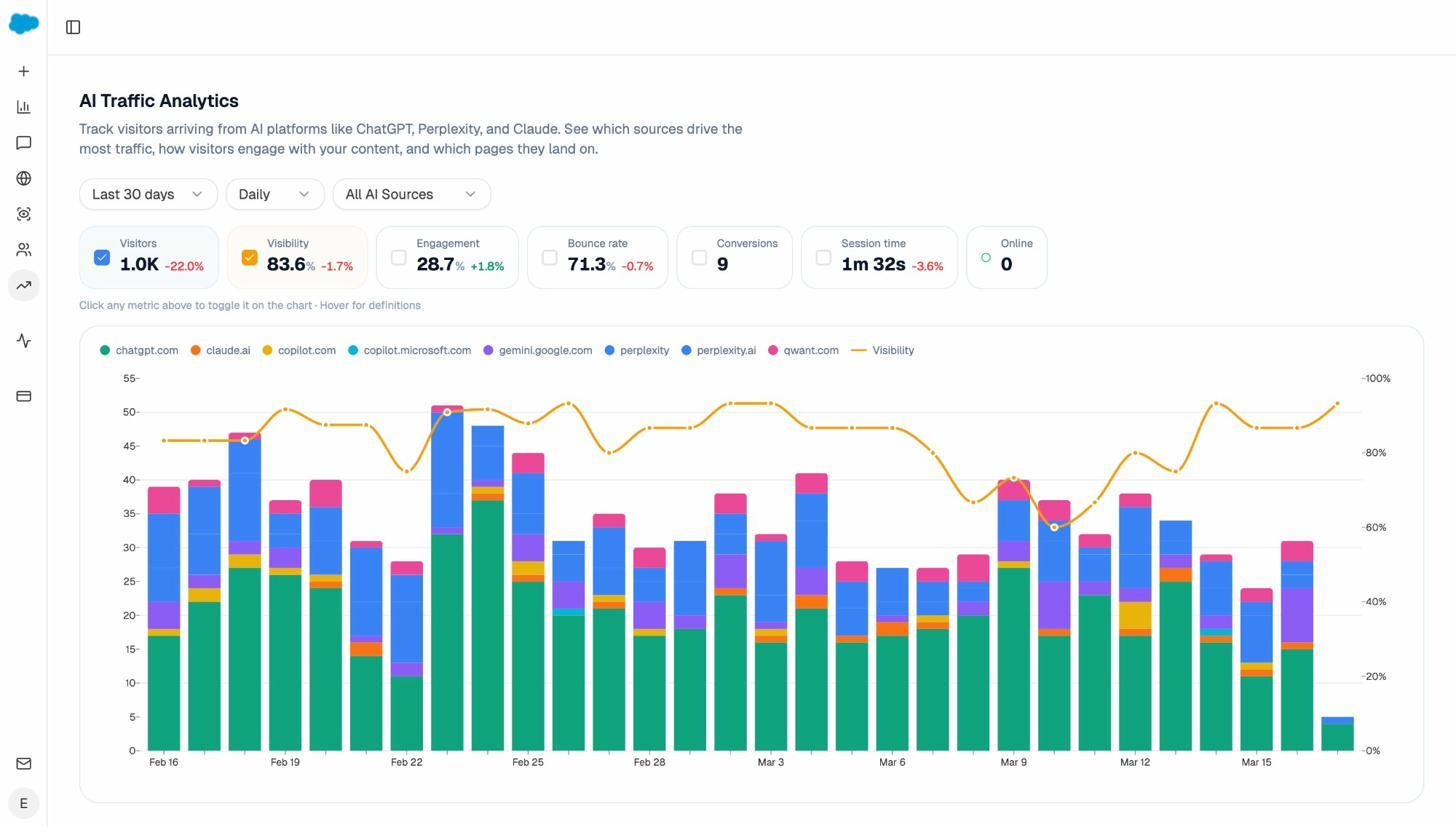

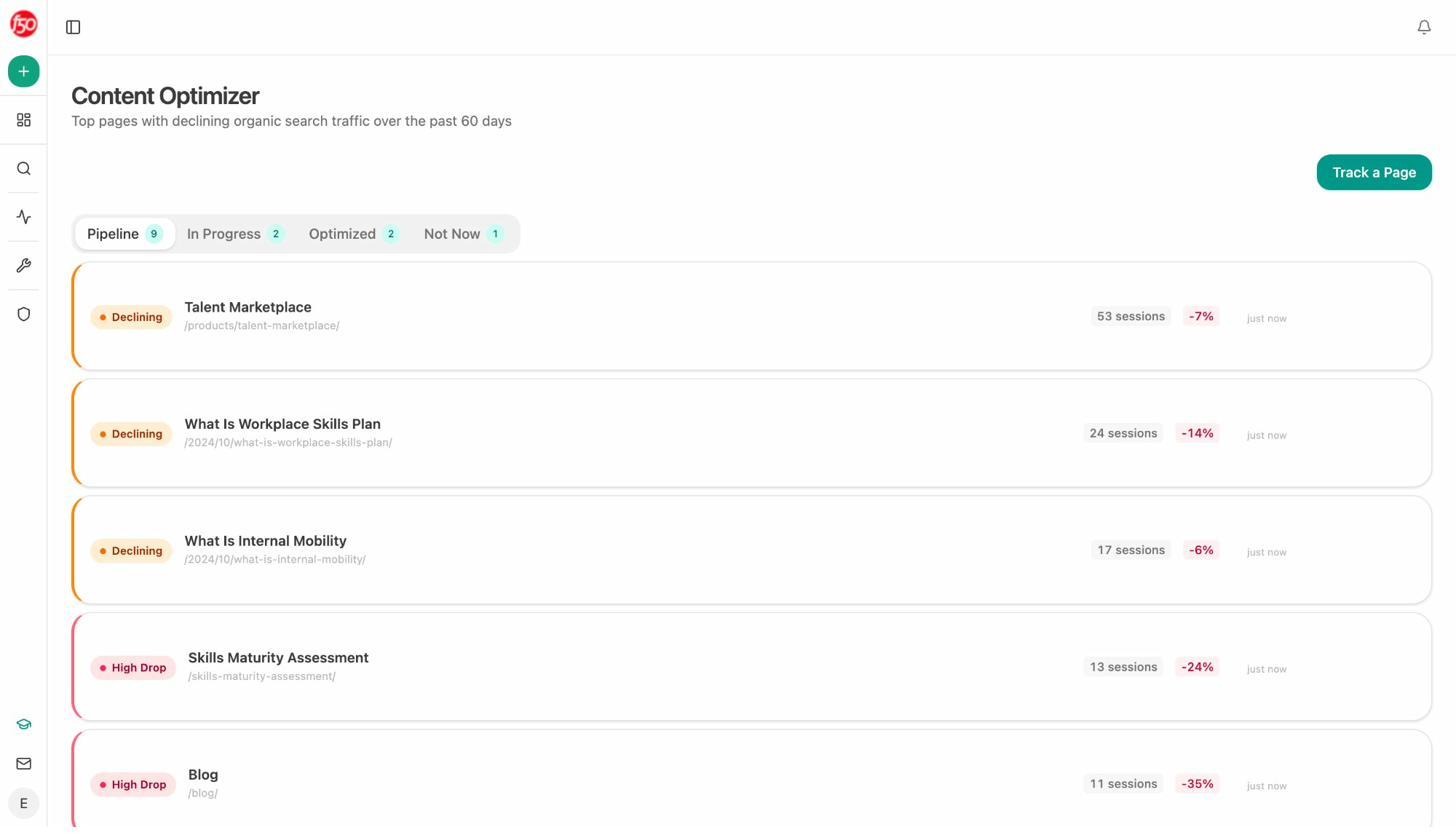

The AI Traffic Analytics dashboard attributes every AI-referred session to its source engine. You see volume by engine, trends over months, and the percentage of your total traffic that comes from AI referrers.
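Referrer-based attribution of this kind can be sketched in a few lines of Python. This is a minimal illustration, not Analyze AI's implementation: it assumes each session carries a referrer URL and that the hostnames in the hypothetical `AI_REFERRERS` mapping identify the engine. Real referrer strings vary by engine and change over time, and some AI-driven visits may arrive with no referrer at all, so treat the mapping as a starting point to check against your own data.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Hypothetical hostname-to-engine mapping; verify against your own referrer data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer: str) -> Optional[str]:
    """Return the AI engine behind a referrer URL, or None for non-AI traffic."""
    host = urlparse(referrer).hostname or ""  # urlparse lowercases the hostname
    return AI_REFERRERS.get(host)


def ai_traffic_summary(referrers: list[str]) -> tuple[Counter, float]:
    """Count sessions per AI engine and the share of all sessions they represent."""
    by_engine = Counter()
    for ref in referrers:
        engine = classify_referrer(ref)
        if engine is not None:
            by_engine[engine] += 1
    total = len(referrers)
    share = sum(by_engine.values()) / total if total else 0.0
    return by_engine, share


if __name__ == "__main__":
    sessions = [
        "https://chatgpt.com/",
        "https://www.perplexity.ai/search?q=crm",
        "https://www.google.com/",
        "https://claude.ai/chat/abc",
    ]
    counts, share = ai_traffic_summary(sessions)
    print(dict(counts))  # {'ChatGPT': 1, 'Perplexity': 1, 'Claude': 1}
    print(f"{share:.0%} of sessions came from AI engines")  # 75% of sessions ...
```

Keeping the mapping as plain data means a new engine or a changed referrer hostname is a one-line update rather than a code change.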

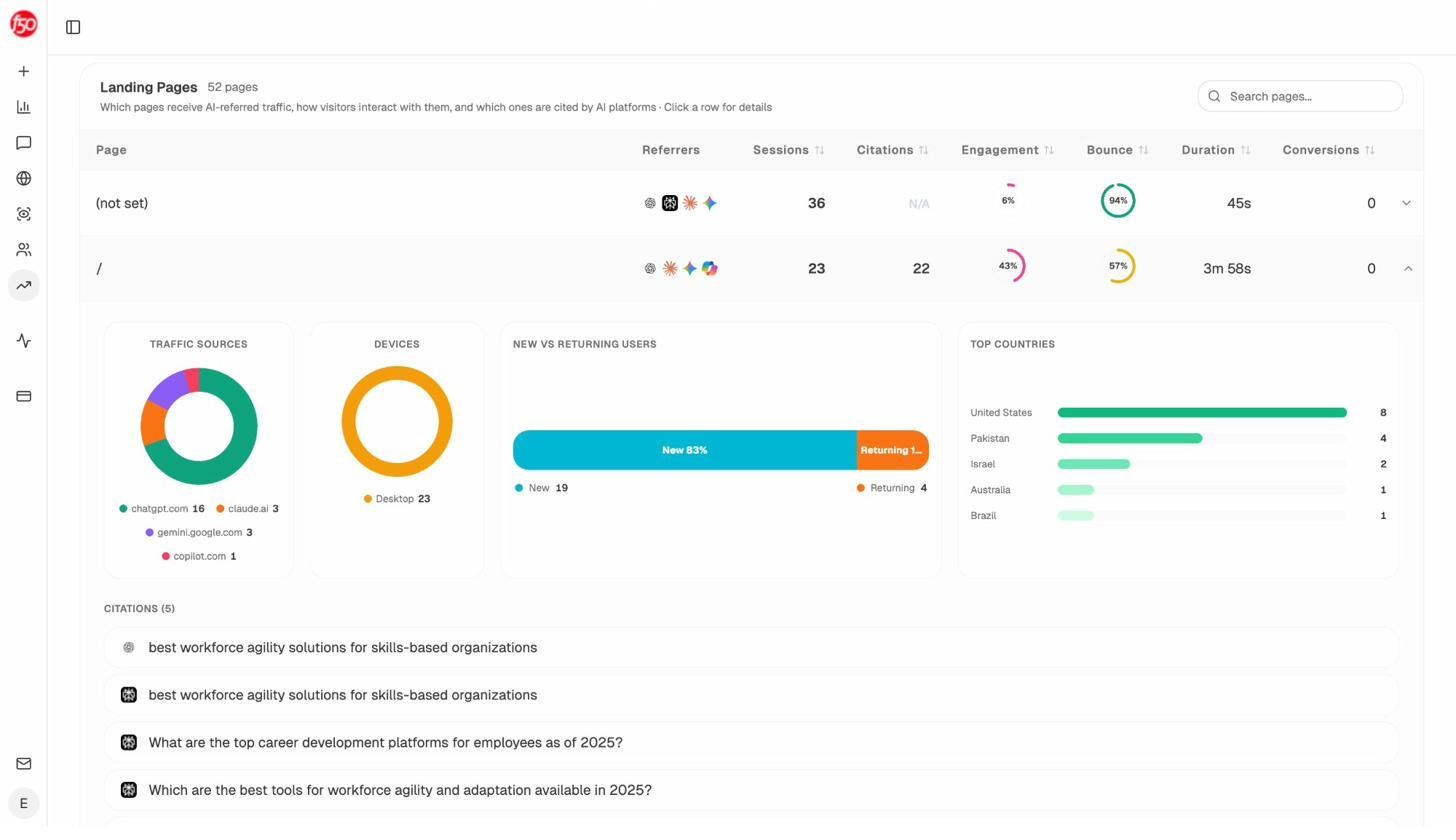

Below the totals, the Landing Pages report shows which pages receive those sessions, which engine sent each visit, and what conversion events those visits trigger. When your product comparison page gets 50 sessions from Perplexity and converts 12% to trials while an old blog post gets 40 sessions from ChatGPT with zero conversions, you know exactly what to strengthen.
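The arithmetic behind that comparison is simple: 12% of 50 sessions is 6 trials, while 40 sessions with no conversions is 0%. A minimal Python sketch of ranking AI-referred landing pages by conversion rate, using hypothetical page paths and the figures from the example above, might look like this:

```python
from dataclasses import dataclass


@dataclass
class LandingPageStats:
    page: str         # hypothetical landing page path
    engine: str       # AI engine that referred the sessions
    sessions: int
    conversions: int  # e.g. trial sign-ups

    @property
    def conversion_rate(self) -> float:
        """Conversions divided by sessions; 0.0 when there are no sessions."""
        return self.conversions / self.sessions if self.sessions else 0.0


rows = [
    LandingPageStats("/compare", "Perplexity", sessions=50, conversions=6),
    LandingPageStats("/blog/old-post", "ChatGPT", sessions=40, conversions=0),
]

# Rank pages by conversion rate so the strongest AI-referred pages surface first.
for row in sorted(rows, key=lambda r: r.conversion_rate, reverse=True):
    print(f"{row.page:<16} {row.engine:<11} {row.sessions:>3} sessions  {row.conversion_rate:.0%}")
```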

This is the part Am I On AI cannot do. It has no GA4 connection, no landing page reporting, and no conversion tracking. You get a visibility score with no way to know if visibility translates to revenue.

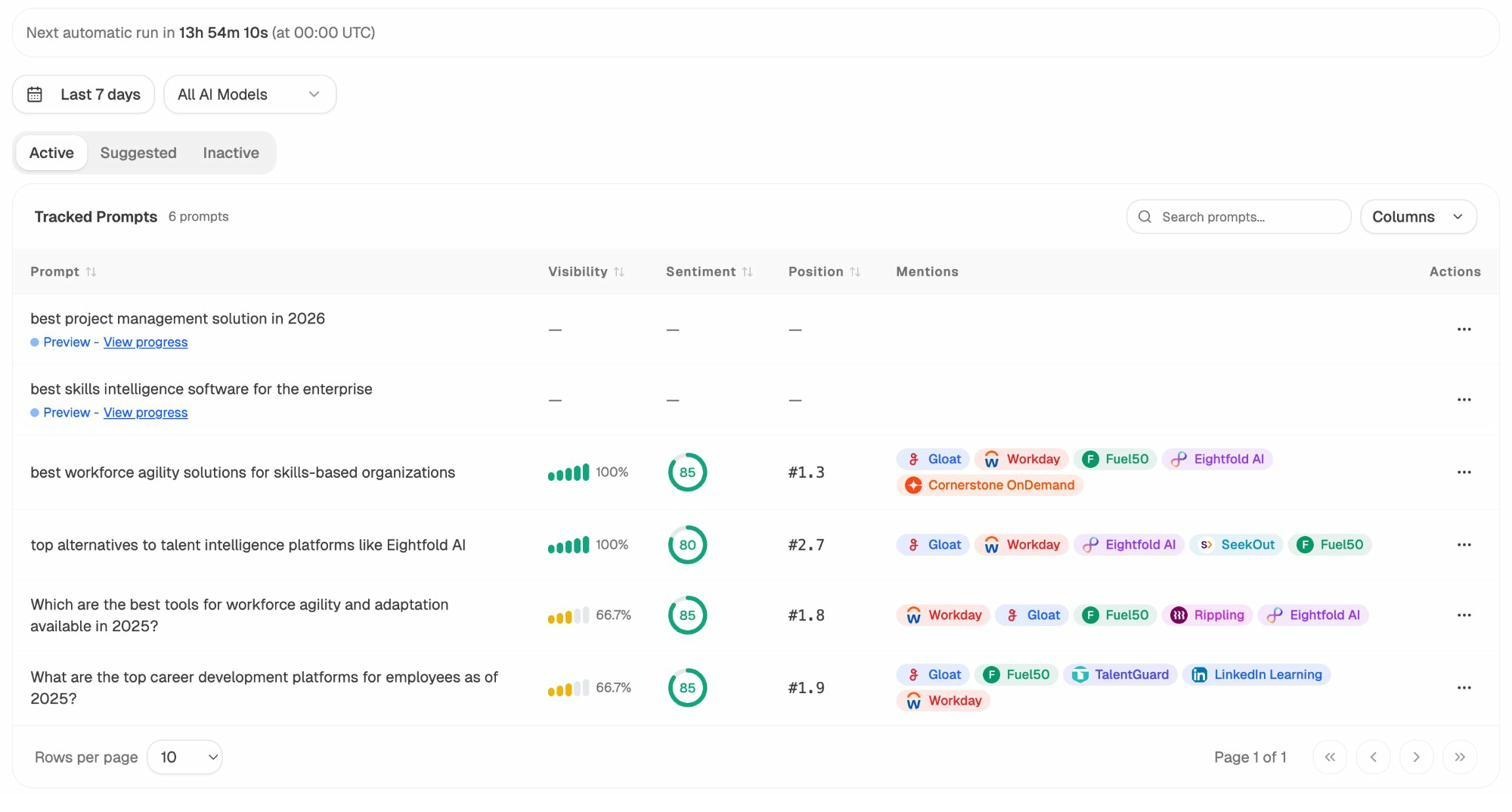

Track prompts across every major engine

Prompt Tracking monitors the exact prompts buyers use across all major LLMs. For each prompt, you see your brand’s visibility percentage, ranking position, sentiment score, and which competitors appear alongside you.
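To make these metrics concrete, here is one hypothetical way to compute a visibility percentage, an average ranking position, and competitor co-mentions for a single prompt from a batch of sampled answers. It assumes visibility means the share of answers that mention the brand and position means the order of first mention among tracked brands. The brand names are placeholders, plain regex matching is a simplification of real entity detection, and sentiment scoring is left out.

```python
import re
from collections import Counter
from typing import Optional


def _find(answer: str, brand: str) -> Optional[re.Match]:
    """Case-insensitive whole-word search; a simplification of real entity matching."""
    return re.search(rf"\b{re.escape(brand)}\b", answer, re.IGNORECASE)


def first_mention_position(answer: str, brands: list[str]) -> dict[str, int]:
    """Rank brands by where they first appear in the answer (1 = mentioned first)."""
    offsets = {}
    for brand in brands:
        match = _find(answer, brand)
        if match:
            offsets[brand] = match.start()
    ordered = sorted(offsets, key=offsets.get)
    return {brand: i + 1 for i, brand in enumerate(ordered)}


def prompt_visibility(answers: list[str], brand: str, competitors: list[str]) -> dict:
    """Visibility % = share of sampled answers that mention the brand."""
    hits = [a for a in answers if _find(a, brand)]
    # Which competitors appear in the same answers as the brand.
    co_mentions = Counter(c for a in hits for c in competitors if _find(a, c))
    positions = [first_mention_position(a, [brand, *competitors])[brand] for a in hits]
    return {
        "visibility_pct": 100 * len(hits) / len(answers) if answers else 0.0,
        "avg_position": sum(positions) / len(positions) if positions else None,
        "co_mentioned": dict(co_mentions),
    }


if __name__ == "__main__":
    sampled = [
        "Acme and Globex are popular choices.",
        "Try Globex first, then Acme.",
        "Initech is a good pick.",
    ]
    print(prompt_visibility(sampled, "Acme", ["Globex", "Initech"]))
```

Sampling the same prompt several times per engine matters here, because a single answer is a noisy estimate of how often a model mentions a brand.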

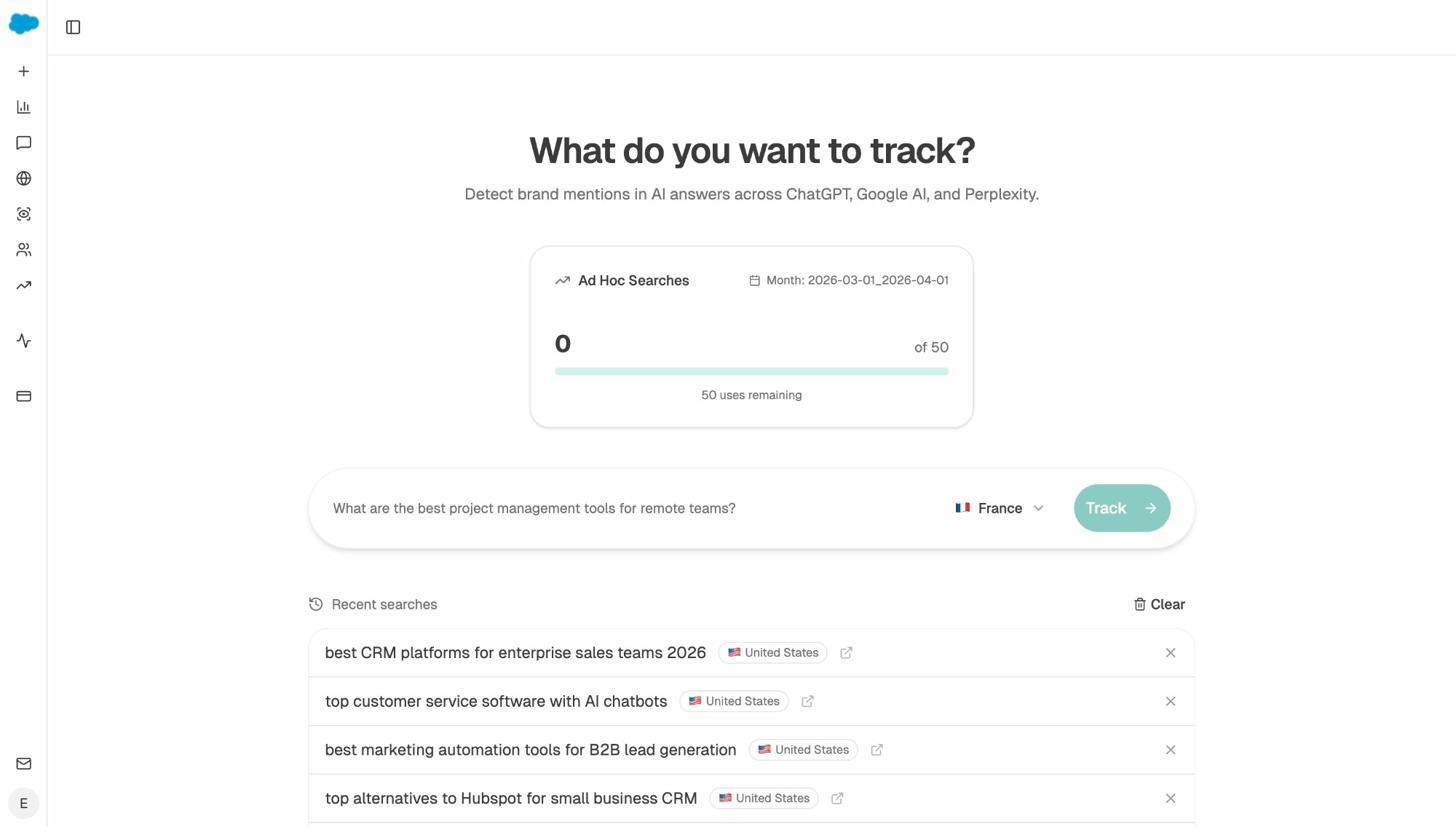

Not sure which prompts to track? The Prompt Discovery feature suggests bottom-of-funnel prompts based on your category, competitors, and existing data. You can also run one-off prompt searches with the Ad Hoc Prompt Search tool to test a query across engines before adding it to your tracking set.

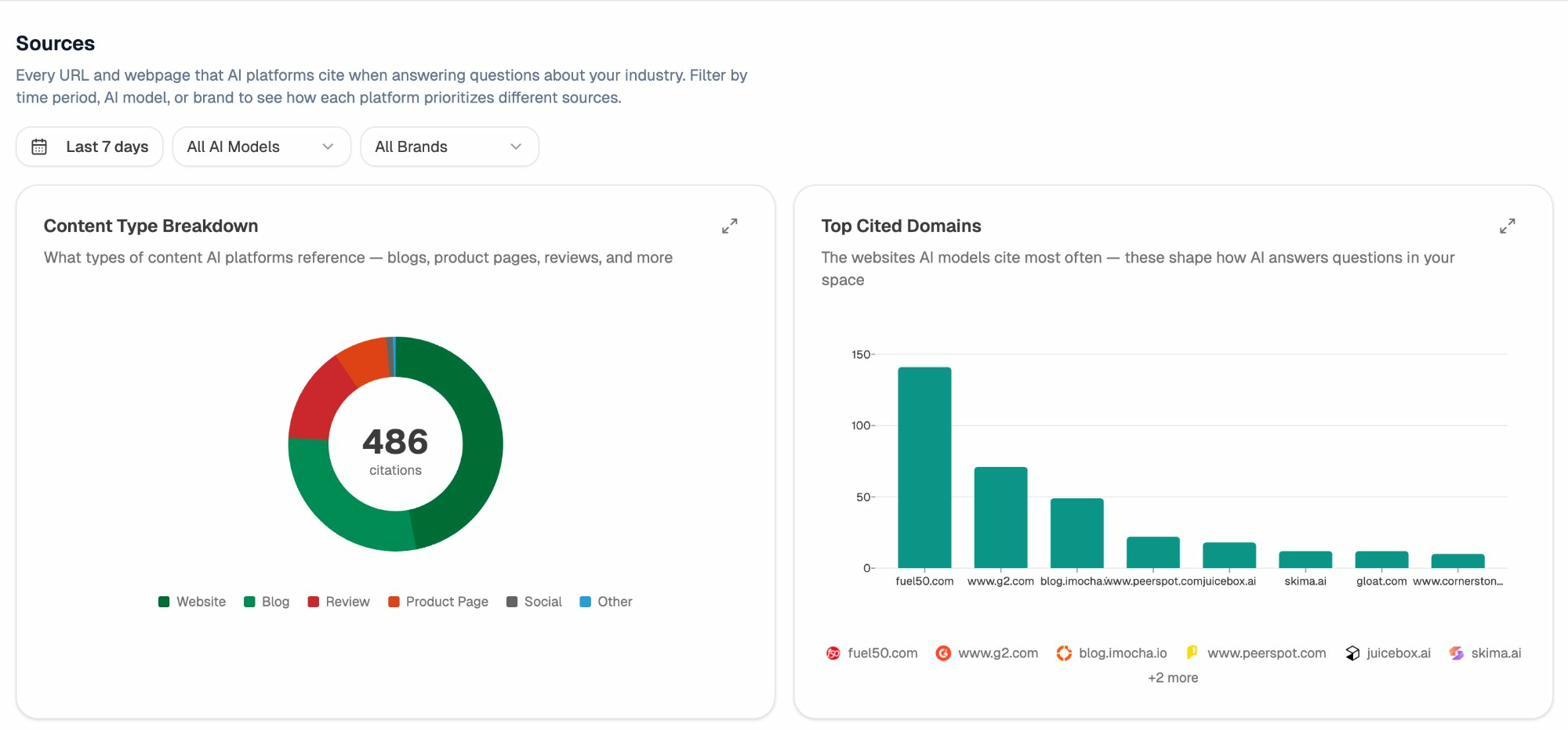

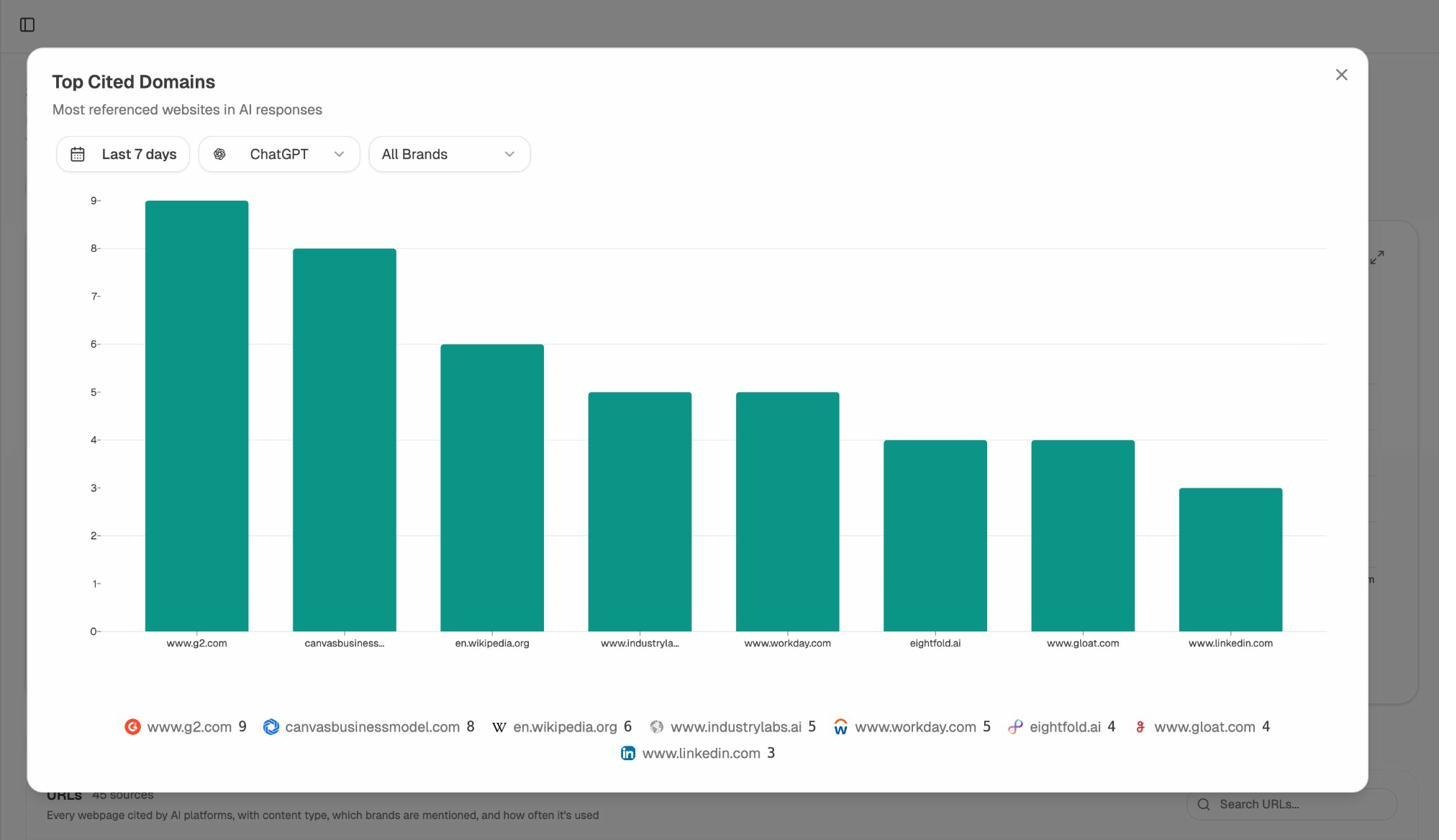

See which sources AI engines trust in your category

Citation Analytics shows every URL and domain that AI engines cite when answering questions in your space. You see usage count per source, which models reference each domain, and when citations first appeared.
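The per-source view described here amounts to a group-by over citation records. This sketch assumes each record holds the engine, the cited URL, and the date it was observed (a hypothetical `Citation` shape, not any vendor's schema) and rolls the records up into usage count, citing models, and first-seen date per domain.

```python
from dataclasses import dataclass
from datetime import date
from urllib.parse import urlparse


@dataclass(frozen=True)
class Citation:
    engine: str    # e.g. "ChatGPT"
    url: str       # the URL the engine cited
    seen_on: date  # date the citation was observed


def domain_report(citations: list[Citation]) -> dict[str, dict]:
    """Roll citations up to usage count, citing models, and first-seen date per domain."""
    report: dict[str, dict] = {}
    for c in citations:
        # Treat www.example.com and example.com as the same domain.
        domain = (urlparse(c.url).hostname or "").removeprefix("www.")
        entry = report.setdefault(
            domain, {"count": 0, "models": set(), "first_seen": c.seen_on}
        )
        entry["count"] += 1
        entry["models"].add(c.engine)
        entry["first_seen"] = min(entry["first_seen"], c.seen_on)
    return report


if __name__ == "__main__":
    sample = [
        Citation("ChatGPT", "https://www.example.com/guide", date(2024, 5, 2)),
        Citation("Perplexity", "https://example.com/pricing", date(2024, 4, 10)),
        Citation("Claude", "https://docs.example.org/faq", date(2024, 5, 20)),
    ]
    # Most-cited domains first.
    for domain, info in sorted(domain_report(sample).items(), key=lambda kv: -kv[1]["count"]):
        print(domain, info["count"], sorted(info["models"]), info["first_seen"])
```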

This data replaces guesswork with precision. Instead of generic link building, you target the specific sources that shape AI answers. You strengthen relationships with domains that models already trust and create content that fills gaps in their coverage.

Find and close competitive gaps

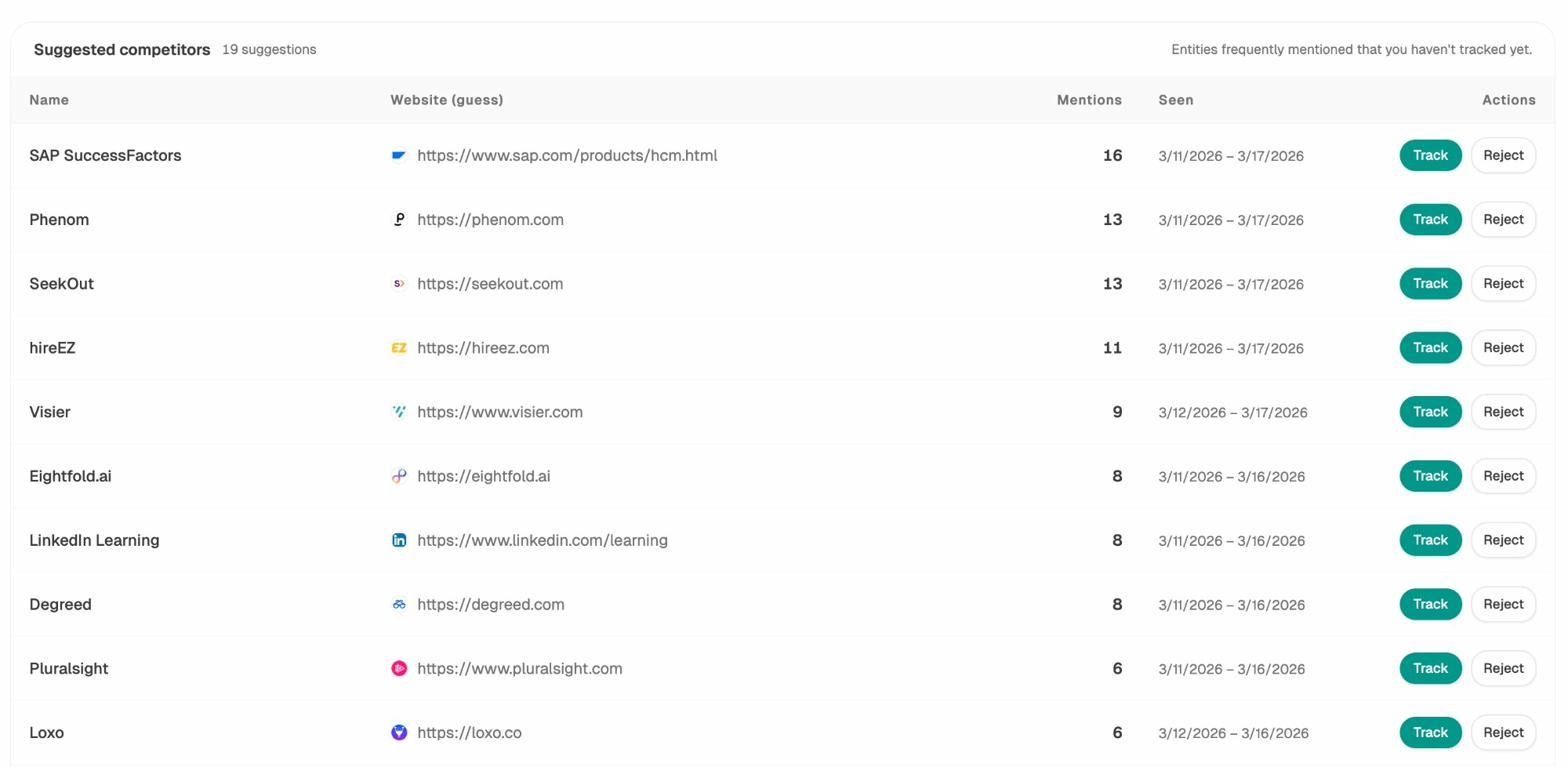

Competitor Intelligence runs a rolling scoreboard that shows where competitors outrank you and on which prompts. You see share of answers, sentiment differences, and citation share across engines.

The platform surfaces opportunities based on omissions, weak coverage, rising prompts, and unfavorable sentiment. Each opportunity pairs with recommended actions ranked by potential impact and required effort.

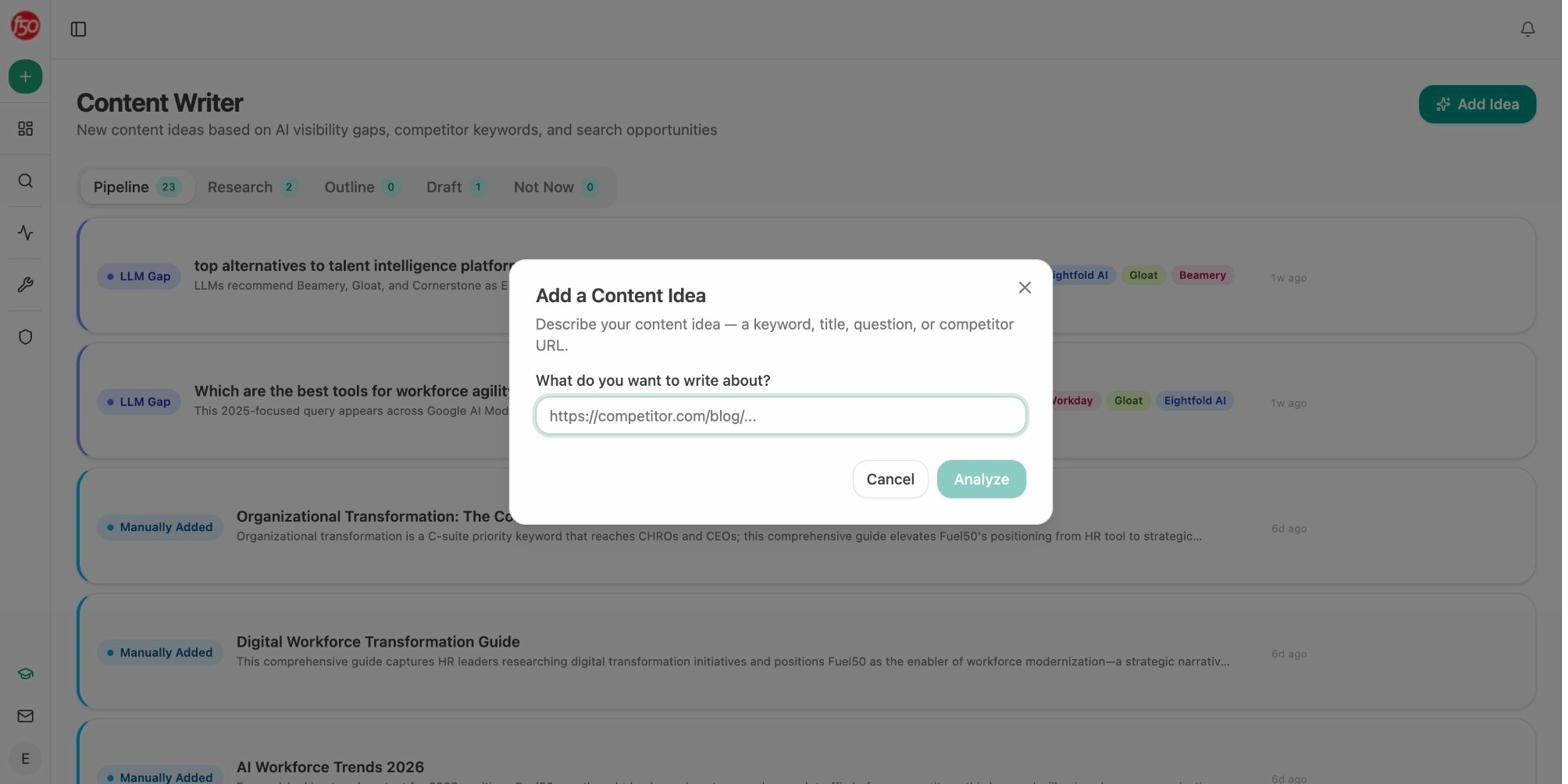

Write and optimize content built to get cited

This is where Analyze AI sets itself apart from every other tool on this list. Most AI visibility tools only monitor. Analyze AI includes an AI Content Writer that goes from idea to research to outline to draft, with AI visibility gaps and competitor analysis baked into every step.

The AI Content Optimizer takes existing pages, audits them for AI and search performance, and provides line-by-line suggestions. It scores structure, freshness, claim density, and proof integration to give you a clear path from invisible to cited.

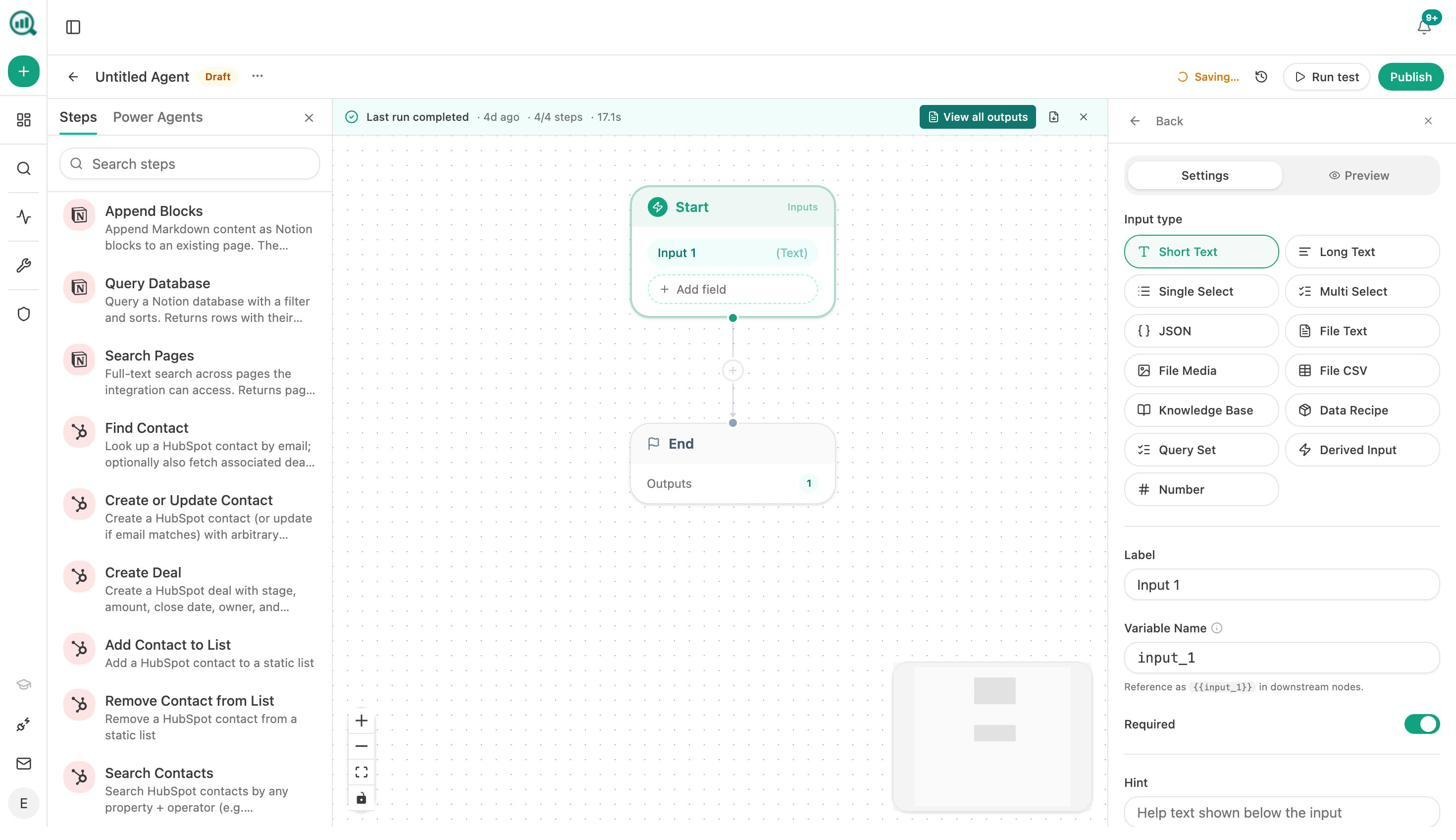

Automate entire workflows with the Agent Builder

The Agent Builder is the reason Analyze AI is not just another visibility tracker. It is a programmable platform with 180+ nodes, 34 pre-built data recipes, 13 input primitives, and three trigger modes (manual, scheduled, webhook). It connects directly to GA4, Google Search Console, DataForSEO, Semrush, HubSpot, Notion, WordPress, Slack, and every major LLM.

This means you can build agents that run your Monday board prep, pulling visibility data, GA4 traffic, competitor shifts, and HubSpot deals into a single executive summary delivered to Slack at 7am.

The Agent Builder is not an “automation layer” bolted on top. It is the core product. AI search visibility is one of the things you can do with it. Agencies use it to generate client reports for every account on the first of the month without touching a spreadsheet. Content teams use it to run a brief-to-publish pipeline where nothing goes live until it passes an AEO quality gate. PR teams use it to trigger a crisis response draft the moment negative coverage appears. Sales teams use it to enrich every inbound lead with company research, AI visibility data, and competitor analysis before the AE even sees the notification.

You can also run keyword research, competitor analysis, content refresh cycles, and full editorial calendars, all from composable workflows that run on a schedule without human intervention.

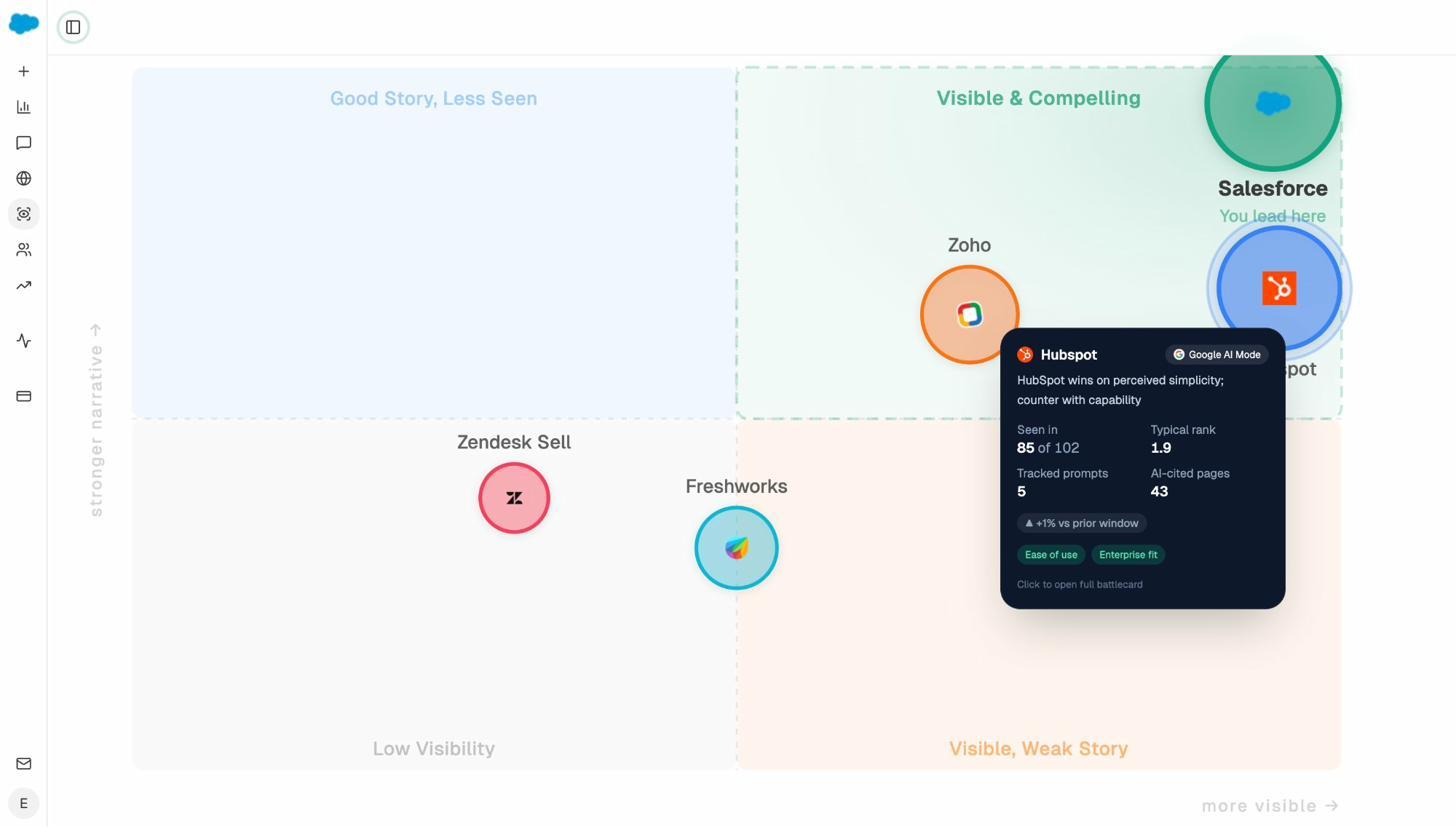

Govern your brand narrative

The Perception Map positions your brand and competitors on a quadrant that plots presence against narrative strength. AI Sentiment Monitoring catches negative shifts before they spread. AI Battlecards give your sales team the counter-narratives they need. And Weekly Email Digests deliver prioritized actions every Monday without requiring you to log in.

Analyze AI Perception Map showing brand positioning across AI engines

Am I On AI vs Analyze AI: quick comparison

| Capability | Am I On AI | Analyze AI |
|---|---|---|
| Engine coverage | ChatGPT + Google AI Overviews | ChatGPT, Perplexity, Claude, Copilot, Gemini, and more |
| Traffic attribution | None | Full GA4 integration with landing pages, sessions, conversions |
| Content tools | None | AI Content Writer + AI Content Optimizer |
| Automation | None | Agent Builder with 180+ nodes and direct integrations |
| Competitive intelligence | Basic | Rolling scoreboard with share of answers, sentiment, citations |
| Pricing | $100/mo (150 prompts, 2 engines) | |

Best for: marketing teams, agencies, and content operations that need to track, act, and automate across AI and traditional search from a single platform.

2. Peec AI: best for multi-engine share-of-voice tracking

Peec AI focuses on AI answers rather than classic search links. It tracks daily changes and regional differences in answers across ChatGPT, Perplexity, and AI Overviews, recording the exact phrasing that triggered your brand’s appearance.

Because Peec treats each prompt as a research unit, teams can test phrasing and improve win rates. The Looker Studio connector and API make reporting clean for agencies that need automated pipelines. CSV exports let analysts build custom views.

Where it beats Am I On AI: multi-engine benchmarking, client-ready reporting, and trend lines you can defend in a meeting.

Watch out for: pricing climbs when you add engines, regions, and large prompt sets. Heavy exports add overhead for teams without strong BI habits.

3. AthenaHQ: best for GEO strategy and competitive analytics

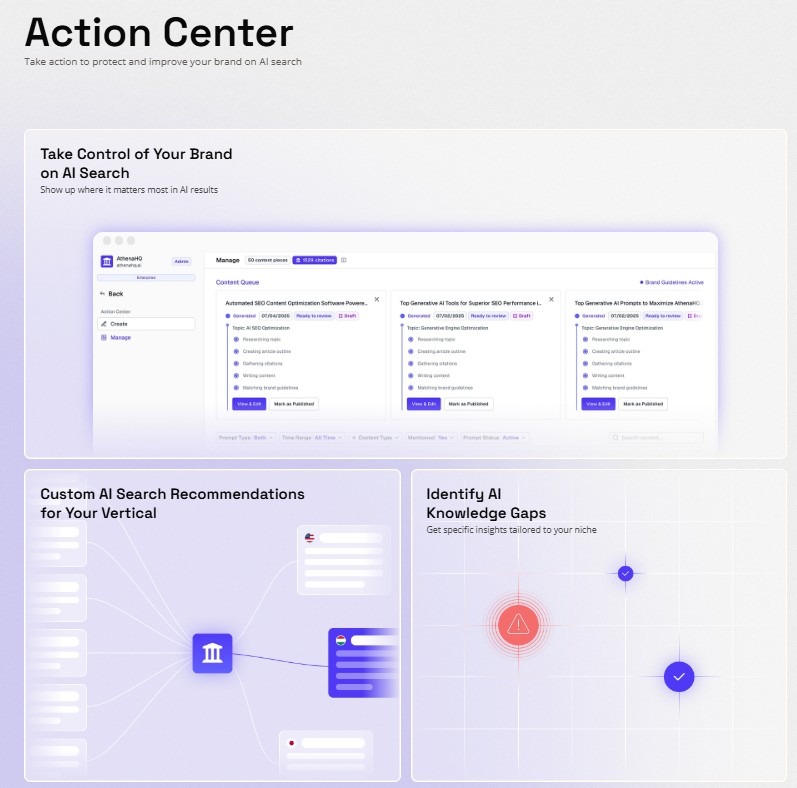

AthenaHQ quantifies AI visibility through a unified GEO Score that blends citation count, share-of-voice, and query coverage across ChatGPT, Gemini, Claude, Perplexity, Copilot, and AI Overviews.

Its content gap detection engine identifies topics where competitors earn citations but your brand does not, then ranks those gaps by potential impact. Advanced tiers include multi-region and multi-language coverage. Pricing starts around $295/month and climbs for enterprise tiers with localization.

Where it beats Am I On AI: diagnostic depth, content gap detection, and a clear path from visibility insights to tactical action.

Watch out for: steeper onboarding, higher cost at scale, and daily prompt data whose volatility requires context to interpret. It does not include content creation or automation tools.

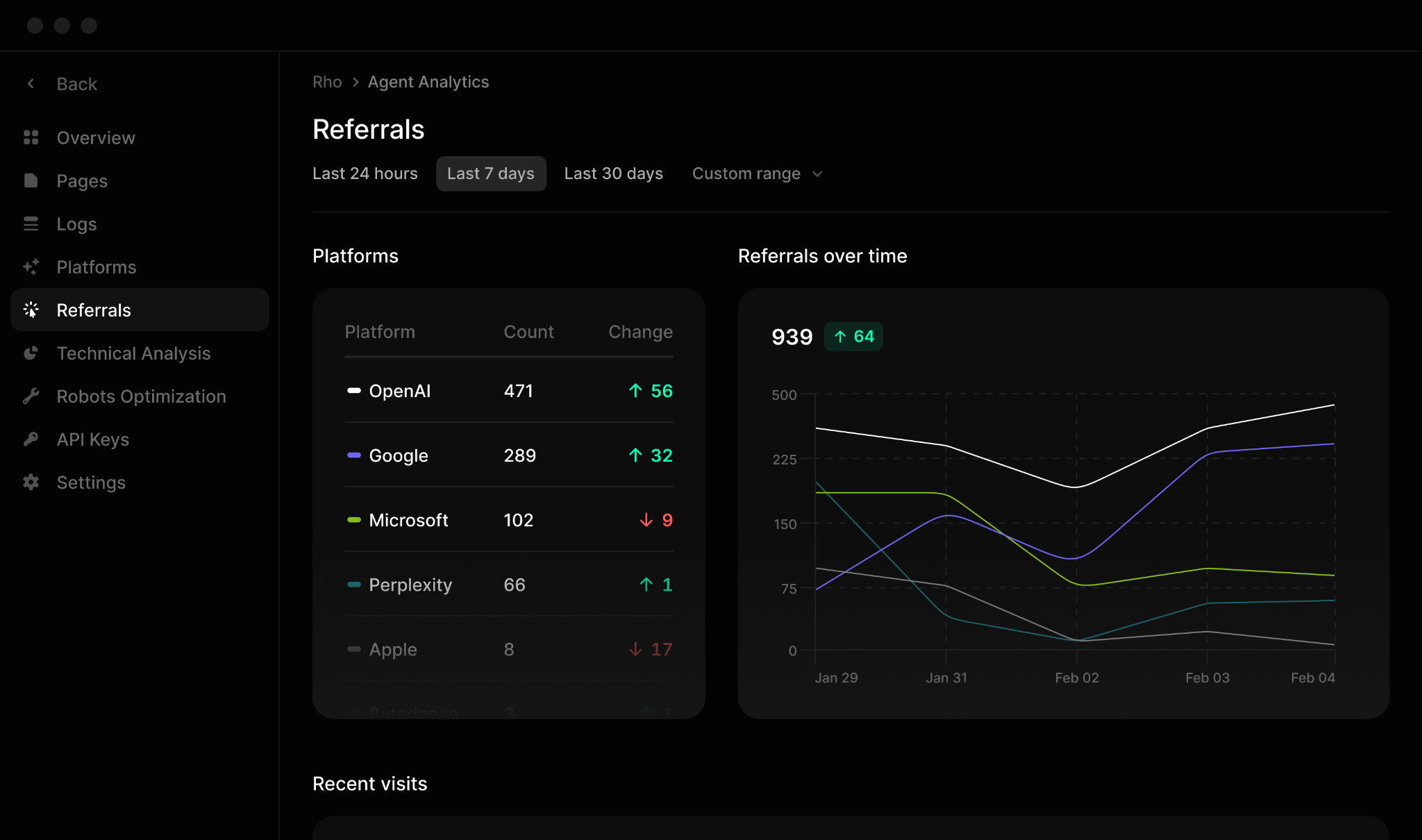

4. Profound: best for enterprise-scale visibility and sentiment

Profound targets enterprise teams that need precision across AI visibility, sentiment, and technical readiness. It tracks brand mentions and citations across major engines and maps them back to the content and sources that drive visibility.

The Agent Analytics feature audits how AI systems crawl your site. The Conversation Explorer highlights trending prompts. The Actions system connects citation data, prompt logs, and AI traffic to pinpoint high-impact optimization opportunities.

Where it beats Am I On AI: enterprise-grade analytics, crawler intelligence, and cross-model sentiment tracking.

Watch out for: premium custom pricing that requires contacting sales, a steep learning curve, and a reliance on external analytics pipelines to connect visibility gains to revenue.

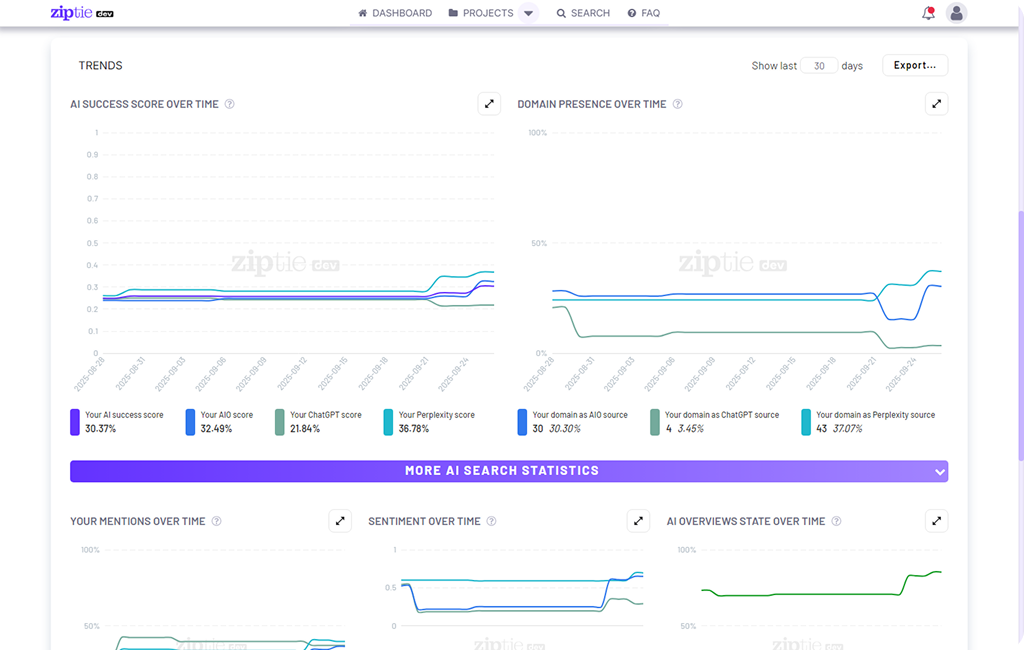

5. ZipTie: best for lightweight, fast setup

ZipTie is the lightest option. Connect your domain, input queries, and start tracking across ChatGPT, Perplexity, and Google AI Overviews. It aggregates appearances into an AI Success Score that blends visibility, citation quality, and sentiment.

Multi-country support (Poland, Spain, the Netherlands, and others) is uncommon at this tier. You can import queries from Search Console or let ZipTie generate them.

Where it beats Am I On AI: geographic coverage, faster setup, and a composite score that prioritizes action.

Watch out for: only three engines (no Claude, Gemini, or Copilot), limited historical data, and CSV-only exports.

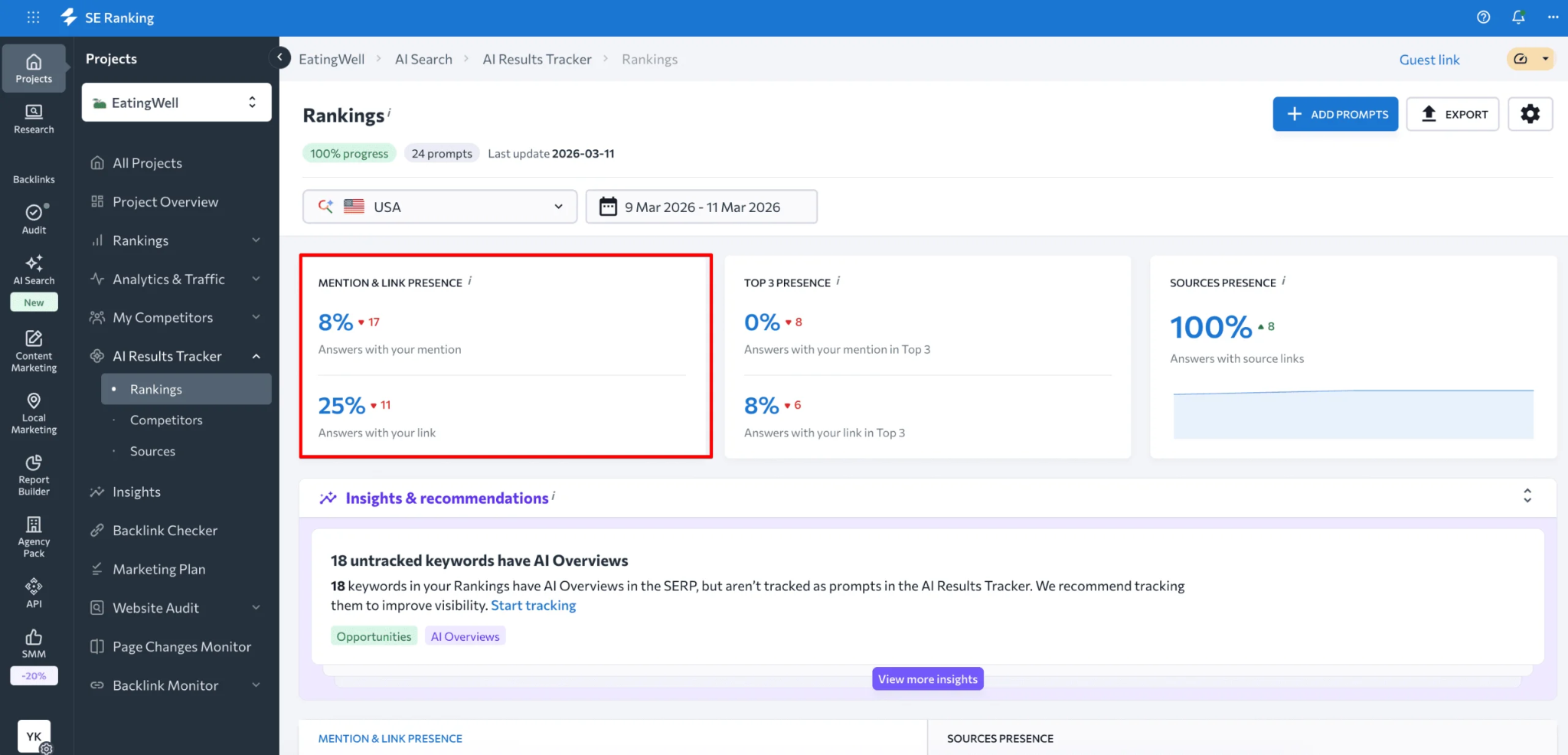

6. SE Ranking: best for SEO teams adding AI tracking

SE Ranking’s AI Visibility Tracker plugs AI tracking into the same dashboard where you already monitor rankings, backlinks, and keyword performance. It tells you when your brand or competitors appear in AI answers and whether those mentions include citations.

Dedicated modules for ChatGPT, Gemini, and AI Overviews extend tracking beyond classic Google rankings. Because the add-on runs on top of your existing subscription, testing AI visibility costs a fraction of what standalone GEO tools charge.

Where it beats Am I On AI: seamless integration with existing SEO data and lower incremental cost for SE Ranking users.

Watch out for: limited prompt-level diagnostics, partial engine coverage (Perplexity and Claude are planned but not fully supported), and tracking is limited to your existing keyword set.

7. Rankscale AI: best for data-first teams

Rankscale emphasizes accuracy, depth, and data accessibility. Users can export raw datasets, filter by prompt category or region, and build custom dashboards. Daily tracking combines citation counts, sentiment, and ranking positions into quantifiable metrics.

AI readiness audits evaluate whether your site structure makes it easy for AI models to reference your content.

Where it beats Am I On AI: raw data access, customizable dashboards, and daily tracking cadence.

Watch out for: steeper learning curve, UX built for analysts rather than marketers, and longer setup times.

How to choose the right Am I On AI alternative

The right tool depends on what you are trying to accomplish, not which one has the longest feature list.

If you need full-funnel coverage from tracking to content to automation, Analyze AI is the clear choice. It is the only tool on this list that connects AI visibility to GA4 revenue attribution, includes a content writer and optimizer, and gives you a programmable Agent Builder that can run your entire marketing operations layer.

If you need agency reporting with Looker Studio connectors, Peec AI fits that workflow well.

If you are an SE Ranking user and want minimal disruption, the AI Visibility add-on is the lowest-friction entry point.

If you need enterprise sentiment and crawler analytics, Profound has the deepest diagnostic layer.

If you want the fastest possible setup on a small budget, ZipTie gets you running in minutes.

Every option on this list offers more than Am I On AI’s two-engine, mention-only approach. The question is how much action you want the tool to take for you. A visibility tracker that only tells you “you were mentioned” is a starting point. A platform that tells you which mention drove pipeline, what content to create next, and which agent to schedule for Monday morning is how you compound results.

For teams that want to track, act, optimize, and automate from a single platform, start with Analyze AI. You can also explore the free AI visibility tools (including a keyword generator, SERP checker, and website authority checker) to get a sense of the data before committing to a plan.

Ernest Ibrahim