
In this article, you’ll get an honest breakdown of what ZipTie AI does well, where it falls short after extended use, how its pricing actually works at scale, and why Analyze AI became my primary platform for tracking and improving brand visibility across AI search engines.


What ZipTie AI Gets Right

ZipTie earned its reputation for a reason. The team behind it (the founders of SEO agency Onely) built the tool to solve a real problem, and it does three things particularly well.

Accurate AI Overview Detection

ZipTie uses real browser-level monitoring instead of API approximations. That matters because API-based tools miss a significant number of AI Overviews that only render in live browser sessions. In head-to-head benchmarks published on Onely’s blog, ZipTie detected AI Overviews on 28% of tracked keywords, while some competing tools detected them on as few as 1.6% of the same keyword set.

For SEO teams focused specifically on Google AI Overviews, this accuracy is the core reason to use ZipTie. You see the full AI Overview text, the citation set, and a downloadable screenshot of what users actually see.

Content Optimization Based on Citation Patterns

ZipTie does not stop at monitoring. It inspects the pages that get cited for your tracked prompts and compares their patterns against your own pages. The optimization module highlights missing entities, weak evidence sections, and thin explanations that could be preventing your page from earning citations.

Each recommendation maps to a specific URL and section, which means your writers get a focused brief rather than a generic checklist. After you publish updates, ZipTie continues monitoring those same prompts so you can see whether the changes actually moved the needle.
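ZipTie does not publish how its optimization module works internally, so the following is only a minimal sketch of the general idea of citation-pattern gap analysis. It assumes you have already extracted a set of entities from each cited page and from your own page. The function name `missing_entities`, the 50% share threshold, and the sample entities are all hypothetical.

```python
from collections import Counter


def missing_entities(cited_pages: list[set[str]], your_page: set[str],
                     min_share: float = 0.5) -> list[str]:
    """Entities found in at least `min_share` of cited pages but absent from your page."""
    if not cited_pages:
        return []
    # Each page is a set, so this counts how many cited pages mention each entity.
    counts = Counter(entity for page in cited_pages for entity in page)
    threshold = min_share * len(cited_pages)
    gaps = [e for e, n in counts.items() if n >= threshold and e not in your_page]
    # Most widely shared gaps first; ties broken alphabetically for stable output.
    return sorted(gaps, key=lambda e: (-counts[e], e))


# Hypothetical entities extracted from three cited competitor pages and your page.
cited = [
    {"SOC 2", "SSO", "pricing tiers"},
    {"SOC 2", "SSO", "API limits"},
    {"SOC 2", "pricing tiers"},
]
mine = {"SSO", "pricing tiers"}
print(missing_entities(cited, mine))
```

Here "SOC 2" appears on every cited page but not on yours, so it is the gap a writer's brief would lead with; "API limits" shows up on only one cited page and falls below the threshold.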

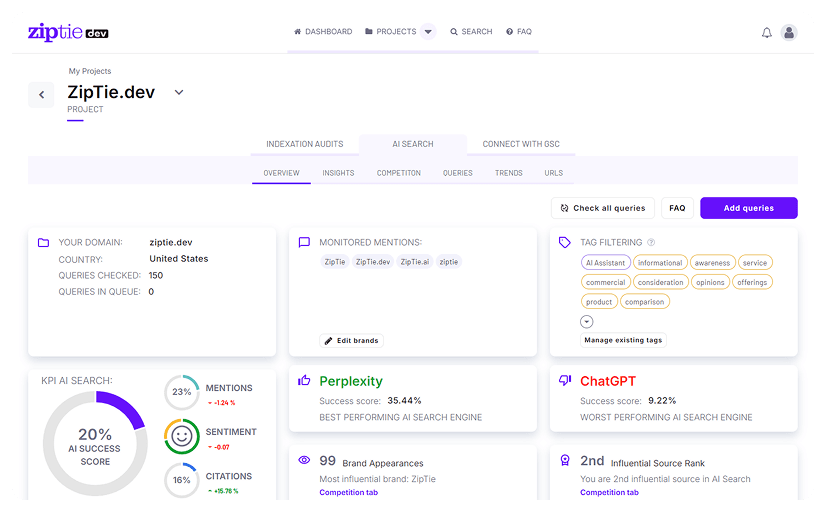

AI Success Score for Prioritization

When you track hundreds of prompts, you need a way to decide where to spend your time first. ZipTie’s AI Success Score blends mention frequency, citation presence, answer placement, and sentiment into a single ranking. Prompts where you get mentioned but never cited surface as authority gaps. Prompts where you get cited but buried low in the answer surface as structure problems.

This scoring helps content teams run focused weekly sprints instead of spreading effort across every prompt equally.
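ZipTie does not publish the formula behind its AI Success Score, so this is not its method. It is a sketch of how blending the four signals and flagging the two failure patterns could work, assuming per-prompt metrics are already normalized. The weights, field names, and the `buried_after` cutoff are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical weights for illustration only; ZipTie's actual formula is not public.
WEIGHTS = {"mention": 0.35, "citation": 0.35, "placement": 0.2, "sentiment": 0.1}


@dataclass
class PromptResult:
    prompt: str
    mention_rate: float   # share of runs that mention the brand, 0..1
    citation_rate: float  # share of runs that cite one of your URLs, 0..1
    avg_position: float   # 1 = first in the answer, higher = further down, 0 = absent
    sentiment: float      # -1 (negative) .. 1 (positive)


def success_score(r: PromptResult) -> float:
    """Blend the four signals into a single 0..100 score."""
    placement = 1.0 / r.avg_position if r.avg_position >= 1 else 0.0
    sentiment = (r.sentiment + 1) / 2  # rescale -1..1 to 0..1
    score = (WEIGHTS["mention"] * r.mention_rate
             + WEIGHTS["citation"] * r.citation_rate
             + WEIGHTS["placement"] * placement
             + WEIGHTS["sentiment"] * sentiment)
    return round(100 * score, 1)


def diagnose(r: PromptResult, buried_after: float = 3) -> str:
    """Label the two failure patterns: authority gaps and structure problems."""
    if r.mention_rate > 0 and r.citation_rate == 0:
        return "authority gap"      # mentioned but never cited
    if r.citation_rate > 0 and r.avg_position > buried_after:
        return "structure problem"  # cited but buried low in the answer
    return "ok"


results = [
    PromptResult("best crm for startups", 0.8, 0.0, 2.0, 0.4),
    PromptResult("crm with hubspot sync", 0.6, 0.5, 5.0, 0.2),
]
# Lowest scores first, so the weekly sprint starts with the weakest prompts.
for r in sorted(results, key=success_score):
    print(f"{r.prompt}: {success_score(r)} ({diagnose(r)})")
```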

Where ZipTie AI Falls Short

After running ZipTie for 90 days on a real brand monitoring project, three limitations became hard to ignore.

Only Three AI Engines

This is the dealbreaker for most teams evaluating ZipTie today. The platform tracks Google AI Overviews, ChatGPT, and Perplexity. That is it.

It does not track Claude, Gemini, Google AI Mode, Copilot, Grok, or DeepSeek. In 2026, Google AI Mode is rapidly becoming the default Google AI experience. Gemini is integrated across Google Workspace and Android. Claude is growing among professional and technical users. Copilot is embedded across Microsoft's products, from Windows and Edge to Microsoft 365.

If you are only watching three engines, you are seeing a fraction of where your brand actually appears in AI answers. Worse, you cannot compare performance across engines, which means you cannot identify which engine deserves more investment or which one is framing your brand negatively.
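Cross-engine comparison only works when the same prompts run on every engine you care about. A minimal sketch, assuming you have exported per-run results as `(engine, prompt, brand_mentioned)` tuples; the data layout, function name, and sample values are hypothetical.

```python
from collections import defaultdict


def mention_rate_by_engine(runs):
    """runs: iterable of (engine, prompt, brand_mentioned) tuples."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for engine, _prompt, mentioned in runs:
        totals[engine] += 1
        hits[engine] += int(mentioned)
    return {engine: hits[engine] / totals[engine] for engine in totals}


# Hypothetical results for the same two prompts on three engines.
runs = [
    ("ChatGPT", "best crm", True), ("ChatGPT", "crm pricing", False),
    ("Perplexity", "best crm", True), ("Perplexity", "crm pricing", True),
    ("Gemini", "best crm", False), ("Gemini", "crm pricing", False),
]
rates = mention_rate_by_engine(runs)
weakest = min(rates, key=rates.get)
print(rates, "weakest:", weakest)
```

The engine with the lowest rate is the first candidate for investment, but the comparison can only surface engines that appear in your export. An engine the tool does not track never shows up as weak; it simply does not show up.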

Credit-Based Pricing That Scales Poorly

ZipTie’s pricing structure sounds simple on the surface. The Basic plan costs $69/month for 500 AI Search checks. Standard is $99/month for 1,000 checks. Pro is $159/month for 2,000 checks.

Each check covers one query across all three platforms, so 1,000 checks means 1,000 queries monitored. That works for solo consultants tracking a handful of high-value prompts. But agencies managing multiple clients burn through checks fast. Tracking 200 prompts per client across five clients is 1,000 prompts, which consumes the entire Standard allowance in a single monthly pass. Refresh those prompts twice a month and you need all 2,000 checks on the Pro plan just to cover basic monitoring, with no room left for exploration or ad hoc research.
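You can run this check math for your own setup. A minimal sketch, assuming every run of one query consumes one check (as the plan description above suggests) and using the plan limits quoted in this article; the function names and refresh frequencies are hypothetical.

```python
# ZipTie plans as listed in this article: (name, monthly price in USD, checks per month).
PLANS = [("Basic", 69, 500), ("Standard", 99, 1_000), ("Pro", 159, 2_000)]


def checks_needed(prompts_per_client: int, clients: int, refreshes_per_month: int) -> int:
    # Assumption: one check = one run of one query across all three engines.
    return prompts_per_client * clients * refreshes_per_month


def cheapest_plan(needed: int):
    """Return (plan, price, spare checks) for the smallest plan that fits, else None."""
    for name, price, checks in PLANS:
        if checks >= needed:
            return name, price, checks - needed  # spare checks for ad hoc research
    return None  # beyond Pro: Enterprise (custom pricing)


for refreshes in (1, 2, 4):
    need = checks_needed(200, 5, refreshes)
    print(refreshes, need, cheapest_plan(need))
```

With 200 prompts across five clients, one monthly pass needs 1,000 checks (exactly the Standard allowance), two passes need 2,000 (exactly Pro), and weekly refreshes push you past Pro into Enterprise pricing.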

The single-seat restriction on all standard plans adds another layer of cost for teams. Agency plans exist but are generally considered expensive for the coverage you get.

GSC Dependency Creates Discovery Gaps

ZipTie relies on Google Search Console to auto-discover relevant queries. On paper this makes setup easy. In practice, it ties your AI visibility monitoring to whatever GSC happens to record. GSC often misses conversational queries and long-tail prompts, which are exactly the types of queries that trigger AI Overviews and AI answers.

For new domains or microsites without GSC history, ZipTie cannot automatically surface meaningful prompts. You end up doing manual entry anyway, which breaks the seamless onboarding experience and creates uneven data coverage across your properties.

ZipTie Pricing Breakdown

| Plan | Monthly Price | AI Search Checks | Content Optimizations | Seats |
|---|---|---|---|---|
| Basic | $69 | 500 | 10 | 1 |
| Standard | $99 | 1,000 | 100 | 1 |
| Pro | $159 | 2,000 | 200 | 1 |
| Enterprise | Custom | Custom | Custom | Custom |

ZipTie offers a 14-day free trial and roughly 15% off with annual billing. The free trial includes most features except GSC integration.

For the value you get (three-engine coverage, single seat, credit-limited optimizations), this pricing sits in the middle of the market. It is cheaper than enterprise tools like Profound but more expensive per engine than platforms that cover five or more AI models at similar price points.
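To make the "per engine" comparison concrete, here is a small sketch that divides each listed price by its check allowance and by the three engines ZipTie covers. The 15% annual discount is the approximate figure quoted above, so treat the annual numbers as rough. Competitor prices are not included because this article does not list them; the function and constant names are hypothetical.

```python
# Prices and allowances as listed in the pricing table above.
PLANS = {"Basic": (69, 500), "Standard": (99, 1_000), "Pro": (159, 2_000)}
ENGINES = 3               # AI Overviews, ChatGPT, Perplexity
ANNUAL_DISCOUNT = 0.15    # "roughly 15%", so results are approximate


def unit_costs(price: float, checks: int,
               engines: int = ENGINES, discount: float = ANNUAL_DISCOUNT) -> dict:
    return {
        "per_check": price / checks,              # USD per check
        "per_engine": price / engines,            # USD per engine per month
        "annual_monthly": price * (1 - discount), # effective monthly price billed annually
    }


for name, (price, checks) in PLANS.items():
    c = unit_costs(price, checks)
    print(f"{name}: ${c['per_check']:.4f}/check, ${c['per_engine']:.2f}/engine, "
          f"~${c['annual_monthly']:.2f}/mo billed annually")
```

For example, Standard works out to $0.099 per check and $33 per engine per month. A platform covering five or more engines at a similar monthly price divides that cost across more engines.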

Why I Switched to Analyze AI (And What Changed)

After running both tools side by side, I moved my primary AI visibility monitoring to Analyze AI. The reasons came down to coverage, attribution, and the ability to actually act on what the data tells you.

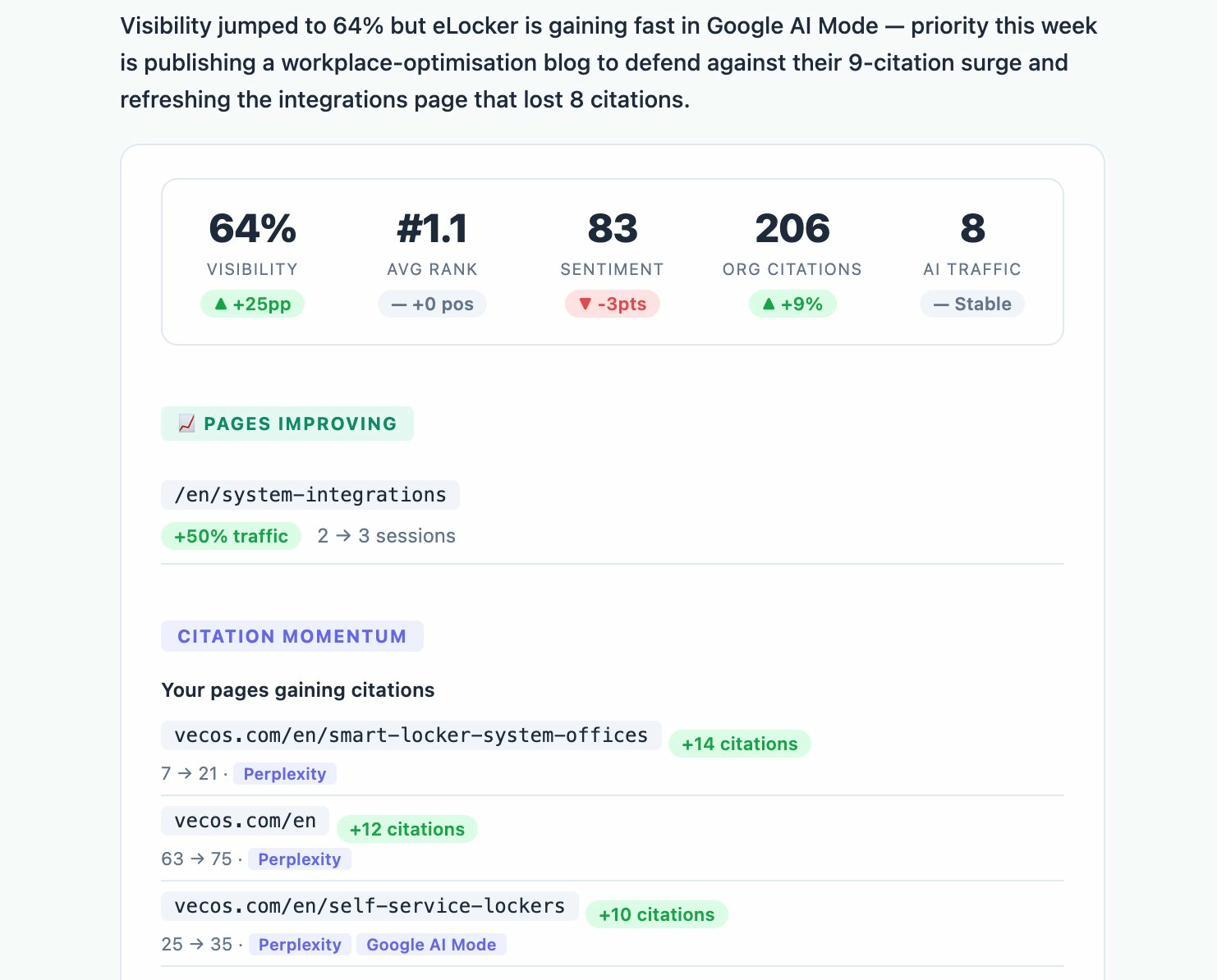

Analyze AI is not just a visibility tracker. It is an agentic platform for SEO, AEO, content, and GTM ops that connects AI search data to real business outcomes. Here is what that looks like in practice.

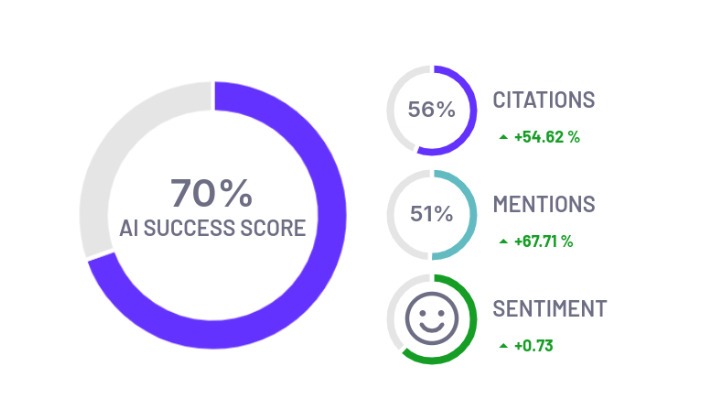

Track Real AI Traffic, Not Just Mentions

Most GEO tools stop at telling you that your brand was mentioned. Analyze AI connects to GA4 and shows you the actual sessions arriving from each AI engine. You see visitor counts, engagement rates, bounce rates, conversions, and session duration broken down by ChatGPT, Claude, Perplexity, Copilot, Gemini, and more.

This is the difference between knowing you appeared in an answer and knowing whether that appearance generated pipeline. When your product comparison page gets 50 sessions from Perplexity and converts 12% to trials while an old blog post gets 40 sessions from ChatGPT with zero conversions, you know exactly where to focus.
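The article does not describe how Analyze AI classifies GA4 sessions, so here is only a generic sketch of the common approach: map each session's referrer hostname to an AI engine, then aggregate sessions and conversions per landing page. The hostname list is an assumption to verify against your own GA4 data, and many AI visits arrive with no referrer at all, so real counts are a floor. The function names and sample data are hypothetical; the sample mirrors the example above (12% of 50 sessions is 6 trials).

```python
from collections import defaultdict
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames per engine; check them against your own GA4 data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def engine_for(referrer: str) -> Optional[str]:
    host = (urlparse(referrer).hostname or "").lower().removeprefix("www.")
    return AI_REFERRERS.get(host)


def conversion_by_page(sessions):
    """sessions: iterable of (landing_page, referrer, converted) tuples."""
    stats = defaultdict(lambda: [0, 0])  # (page, engine) -> [sessions, conversions]
    for page, referrer, converted in sessions:
        engine = engine_for(referrer)
        if engine is None:
            continue  # not an AI referral (or the referrer was stripped)
        stats[(page, engine)][0] += 1
        stats[(page, engine)][1] += int(converted)
    return {key: (n, conv, conv / n) for key, (n, conv) in stats.items()}


sessions = (
    [("/compare", "https://www.perplexity.ai/search", i < 6) for i in range(50)]
    + [("/old-post", "https://chatgpt.com/", False) for _ in range(40)]
)
for (page, engine), (n, conv, rate) in conversion_by_page(sessions).items():
    print(f"{page} via {engine}: {n} sessions, {conv} conversions ({rate:.0%})")
```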

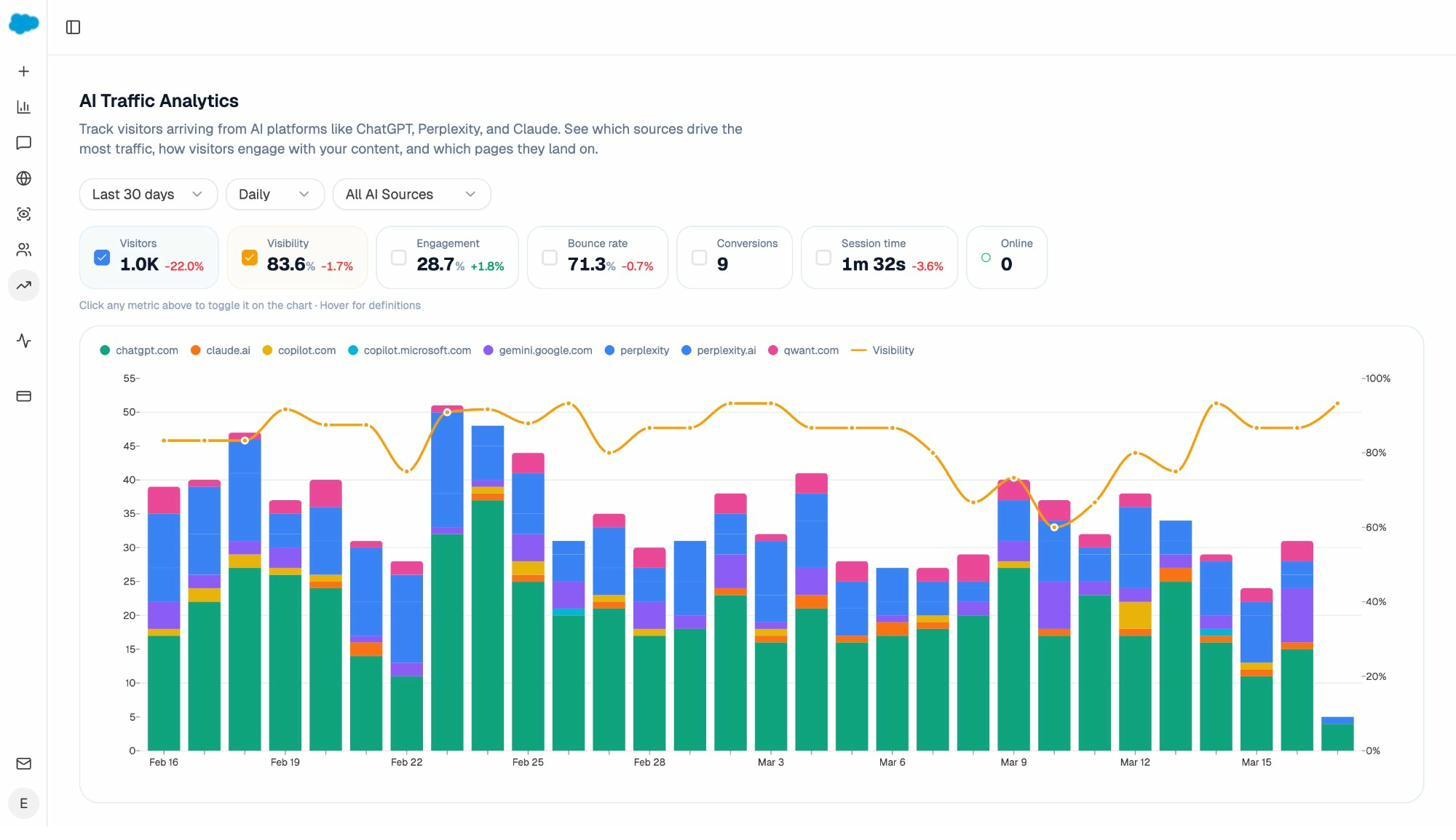

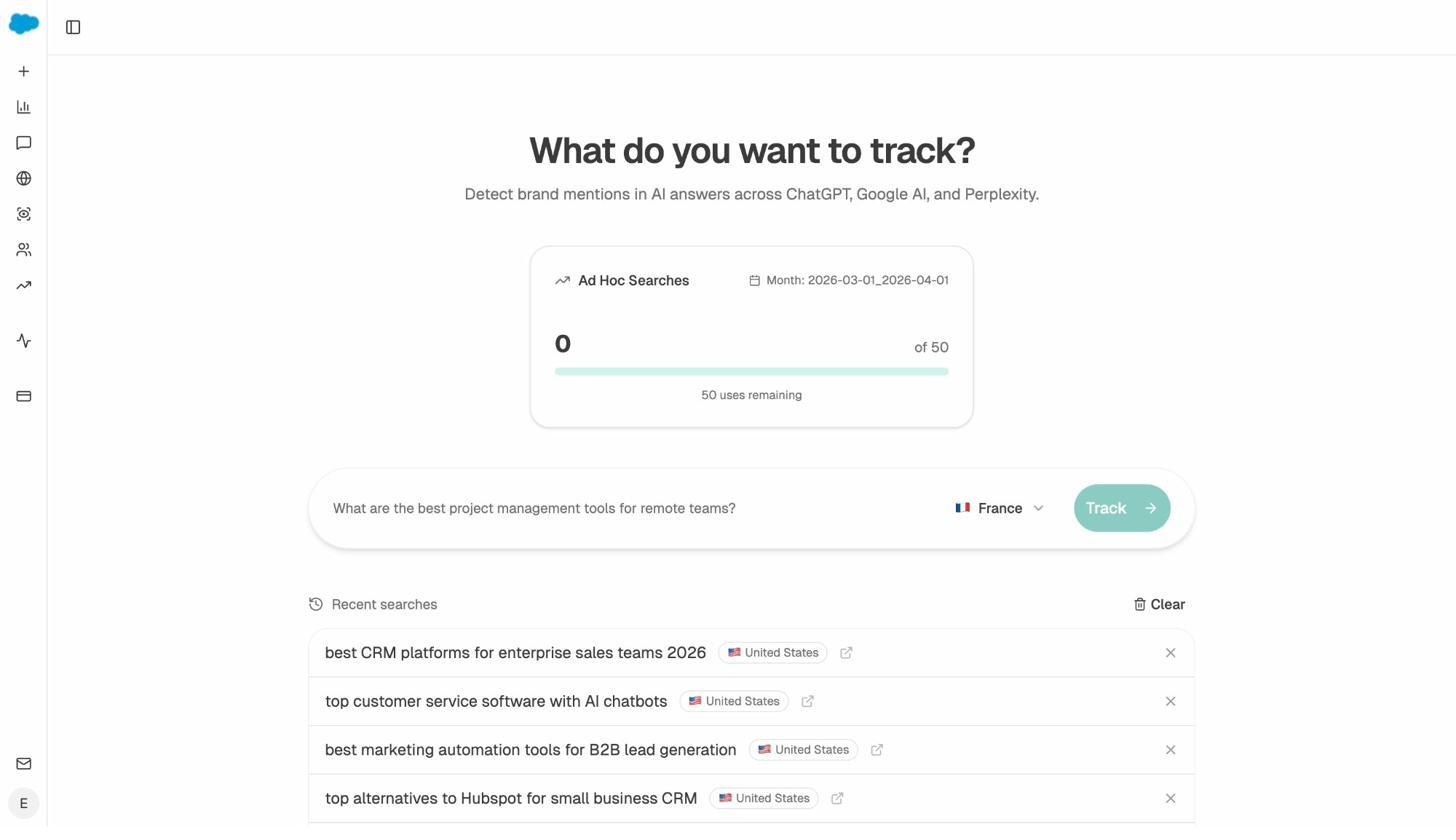

Monitor Prompts Across 8+ AI Engines

Analyze AI tracks your brand visibility, sentiment, and position across ChatGPT, Claude, Perplexity, Copilot, Gemini, Google AI Mode, Grok, DeepSeek, and more. Every prompt shows you the exact engines where you appear, what position you hold, and which competitors appear alongside you.

You can filter by engine, date range, and competitor to isolate the prompts that matter most to your team. The Prompt Discovery feature also suggests bottom-of-funnel prompts you should be tracking but are not. This eliminates the GSC dependency problem because discovery comes from AI answer patterns, not just traditional search data.

If you want to test a prompt before committing to tracking it, the Ad Hoc Prompt Search feature lets you run a one-off query across all engines and see results immediately.

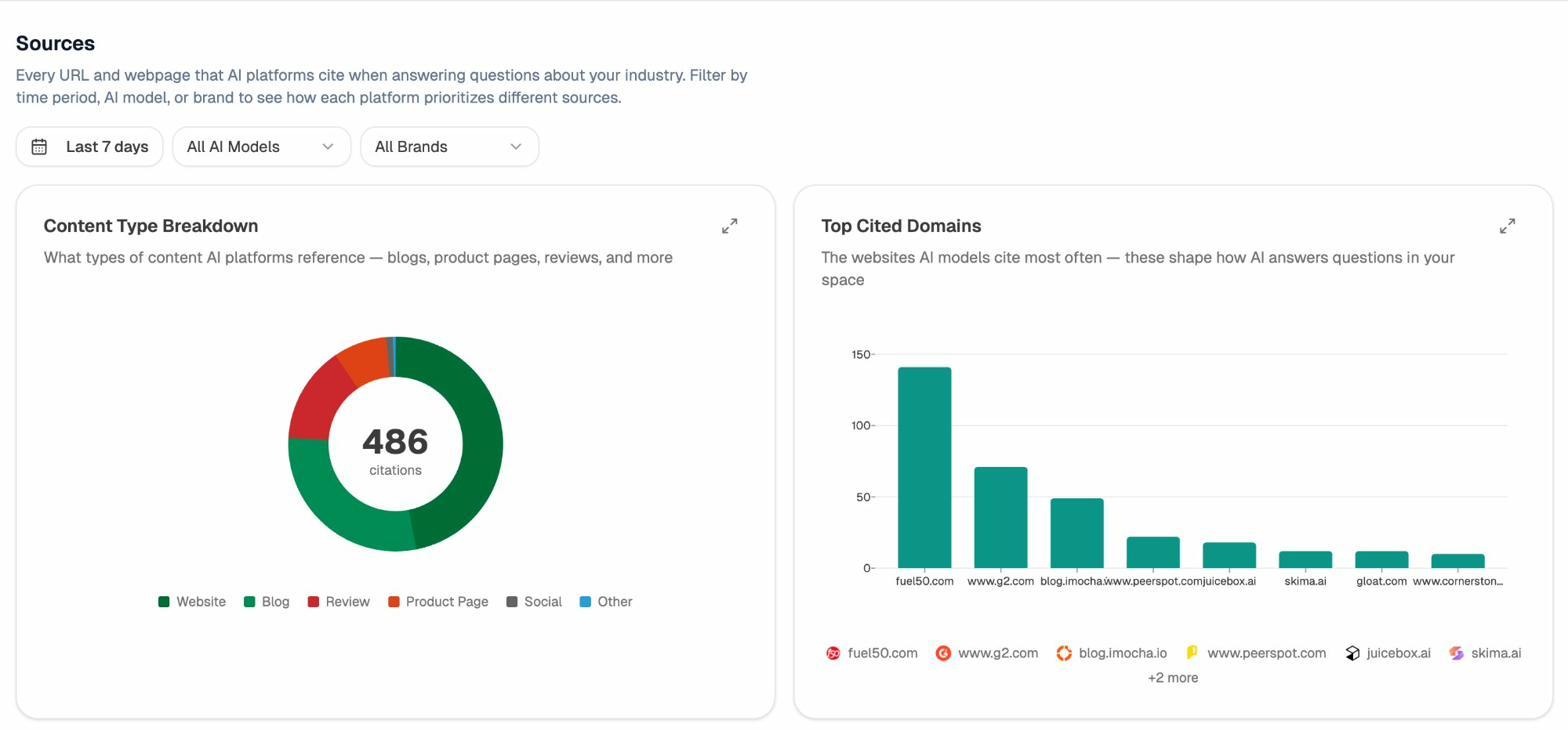

See Which Sources AI Models Actually Trust

The Sources dashboard in Analyze AI shows every URL and domain that AI models cite when answering questions in your category. You see content type breakdown (blogs, product pages, reviews, social), top cited domains, and citation frequency per source.

This tells you exactly where to invest. Instead of generic link building, you target the specific sources that shape AI answers. You strengthen relationships with domains that models already trust and create content that fills gaps in their coverage.
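The core of a sources view is simple aggregation over cited URLs. A minimal sketch of counting citation frequency per domain, assuming you already have the list of URLs that AI answers cited for your tracked prompts; the function name and sample URLs are hypothetical, and this is not Analyze AI's implementation.

```python
from collections import Counter
from urllib.parse import urlparse


def top_cited_domains(cited_urls, n=3):
    """Count citations per domain (ignoring a leading 'www.') and return the top n."""
    domains = Counter()
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower().removeprefix("www.")
        if host:
            domains[host] += 1
    return domains.most_common(n)


# Hypothetical URLs cited across several AI answers in one category.
citations = [
    "https://www.g2.com/products/a/reviews",
    "https://www.g2.com/compare/a-vs-b",
    "https://www.reddit.com/r/saas/comments/x",
    "https://example.com/blog/guide",
    "https://g2.com/categories/crm",
]
print(top_cited_domains(citations))
```

A domain that dominates the list, like the review site in this sample, is where relationship-building or fresh content is most likely to shape future answers.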

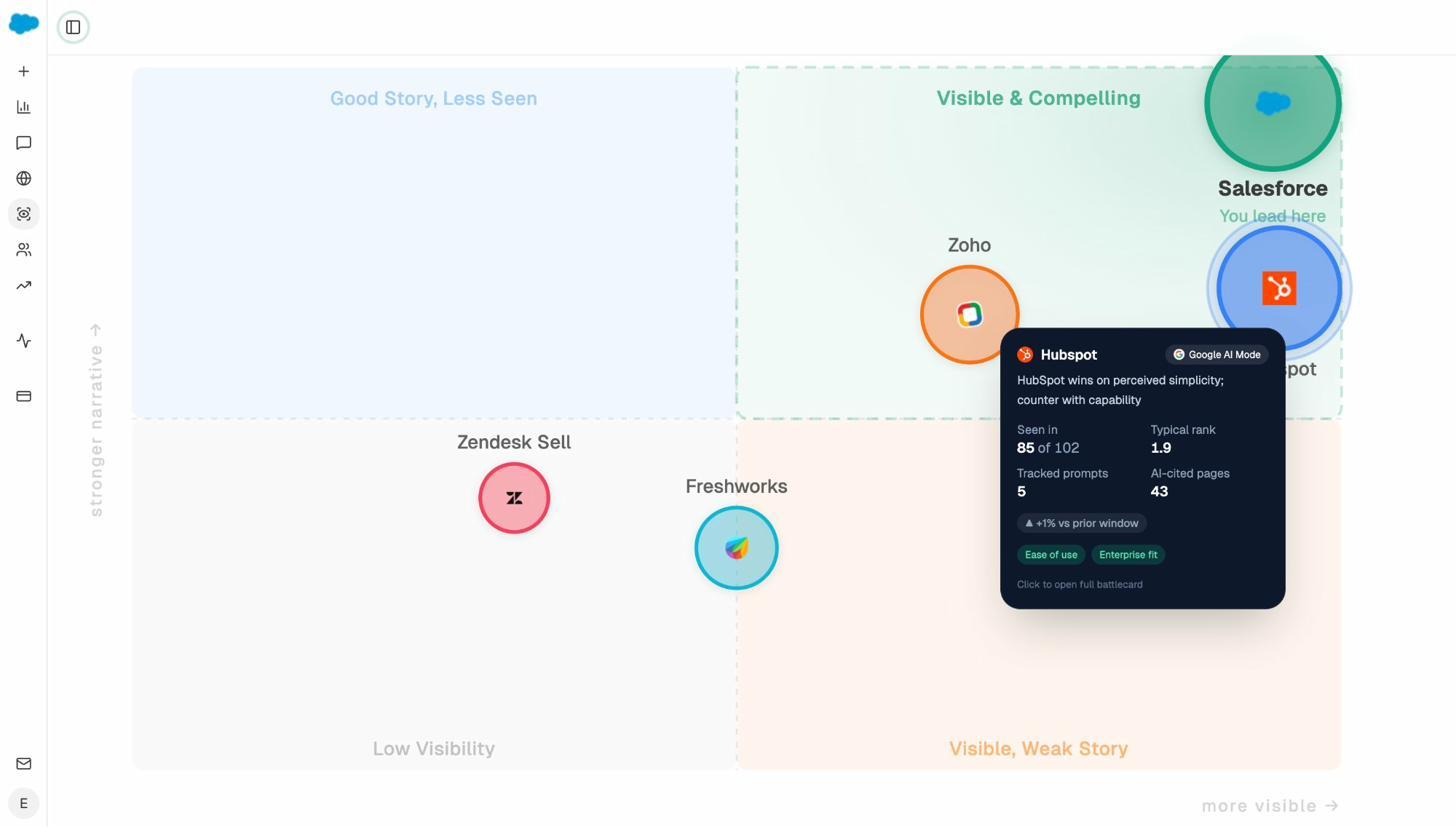

Map Your Competitive Position in AI Search

The Perception Map plots every tracked brand on a two-dimensional quadrant based on visibility and narrative strength. You can see at a glance whether your brand sits in the “Visible and Compelling” quadrant or whether competitors have stronger stories despite lower visibility.
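The article does not say how Analyze AI computes visibility or narrative strength, so this sketch only shows the quadrant logic, assuming both scores are normalized to 0..1 and split at 0.5. Only "Visible and Compelling" comes from the product; the other three labels, the cutoffs, and the sample brands are hypothetical.

```python
def perception_quadrant(visibility: float, narrative: float,
                        vis_cut: float = 0.5, story_cut: float = 0.5) -> str:
    """Place a brand on a 2x2 grid from two scores normalized to 0..1."""
    visible = visibility >= vis_cut
    compelling = narrative >= story_cut
    if visible and compelling:
        return "Visible and Compelling"
    if visible:
        return "Visible, weak narrative"     # hypothetical label
    if compelling:
        return "Compelling, low visibility"  # hypothetical label
    return "Low visibility, weak narrative"  # hypothetical label


# Hypothetical (visibility, narrative strength) scores.
brands = {"YourBrand": (0.7, 0.4), "CompetitorA": (0.3, 0.8), "CompetitorB": (0.8, 0.9)}
for name, (vis, story) in brands.items():
    print(name, perception_quadrant(vis, story))
```

In this sample, CompetitorA is the case the map is built to catch: lower visibility than YourBrand, but a stronger story.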

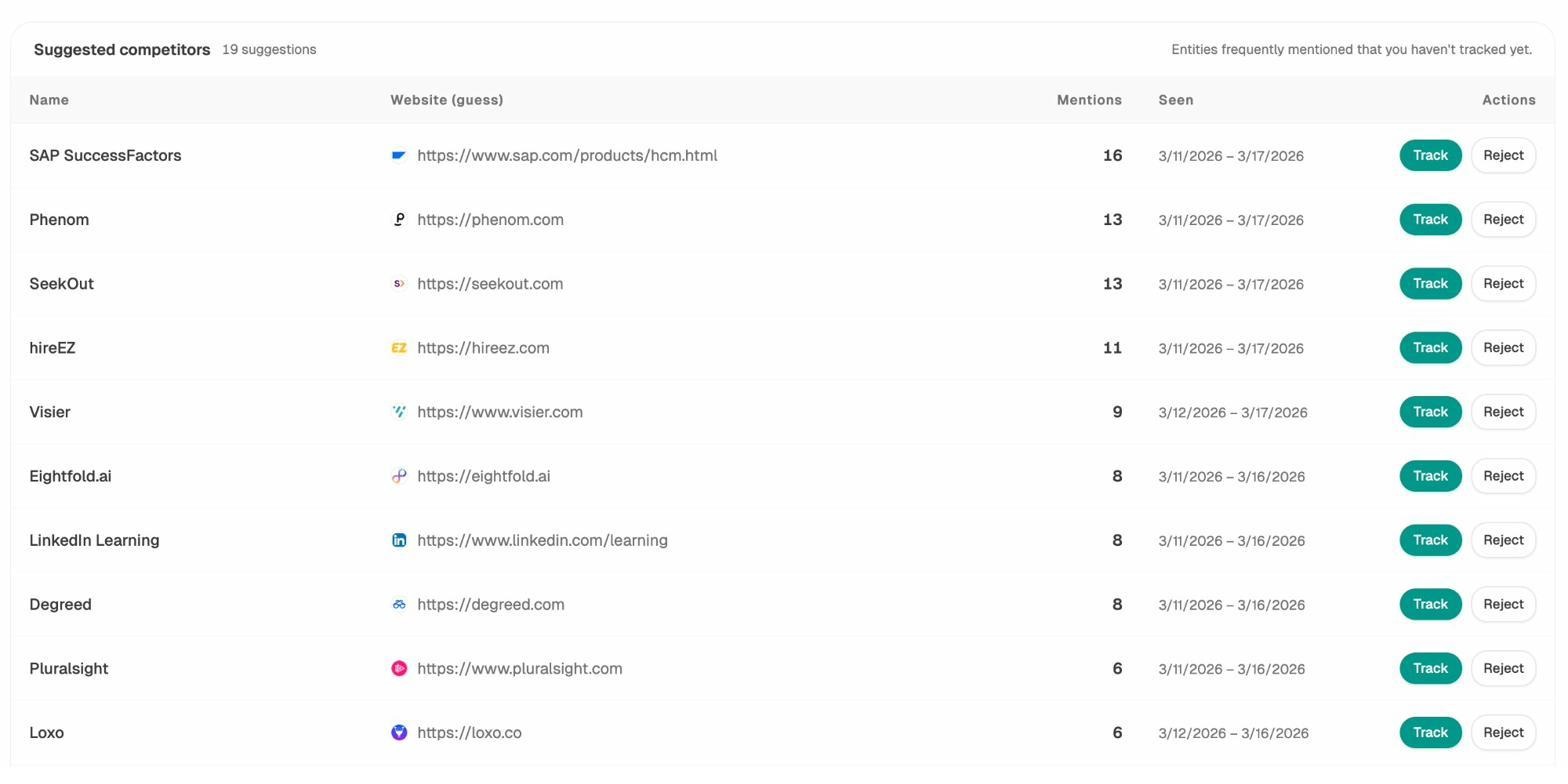

Click any competitor and you get a detailed battlecard showing how many prompts they appear in, their typical rank, AI-cited pages, and the narrative themes AI associates with them. The Competitor Intelligence feature also surfaces competitors you might not have considered by showing entities that frequently appear alongside your brand in AI answers.
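Surfacing unexpected competitors is essentially co-occurrence counting. A minimal sketch, assuming you have extracted a set of entity names from each AI answer; the function name, `min_count` cutoff, and sample brands are hypothetical and not Analyze AI's implementation.

```python
from collections import Counter


def co_occurring_entities(answers, brand, known, min_count=2):
    """Return (entity, count) pairs that appear alongside `brand` in at least
    `min_count` answers and are not already on your `known` competitor list."""
    counts = Counter()
    for entities in answers:  # each answer is a set of extracted entity names
        if brand in entities:
            counts.update(e for e in entities if e != brand)
    return [(e, c) for e, c in counts.most_common()
            if c >= min_count and e not in known]


answers = [
    {"Acme", "Globex", "Initech"},
    {"Acme", "Initech"},
    {"Acme", "Globex", "Umbrella"},
    {"Globex", "Umbrella"},  # no Acme mention, so ignored
]
print(co_occurring_entities(answers, brand="Acme", known={"Globex"}))
```

Globex is filtered out because you already track it, so the sketch surfaces Initech: a brand that keeps showing up next to yours but was not on your list.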

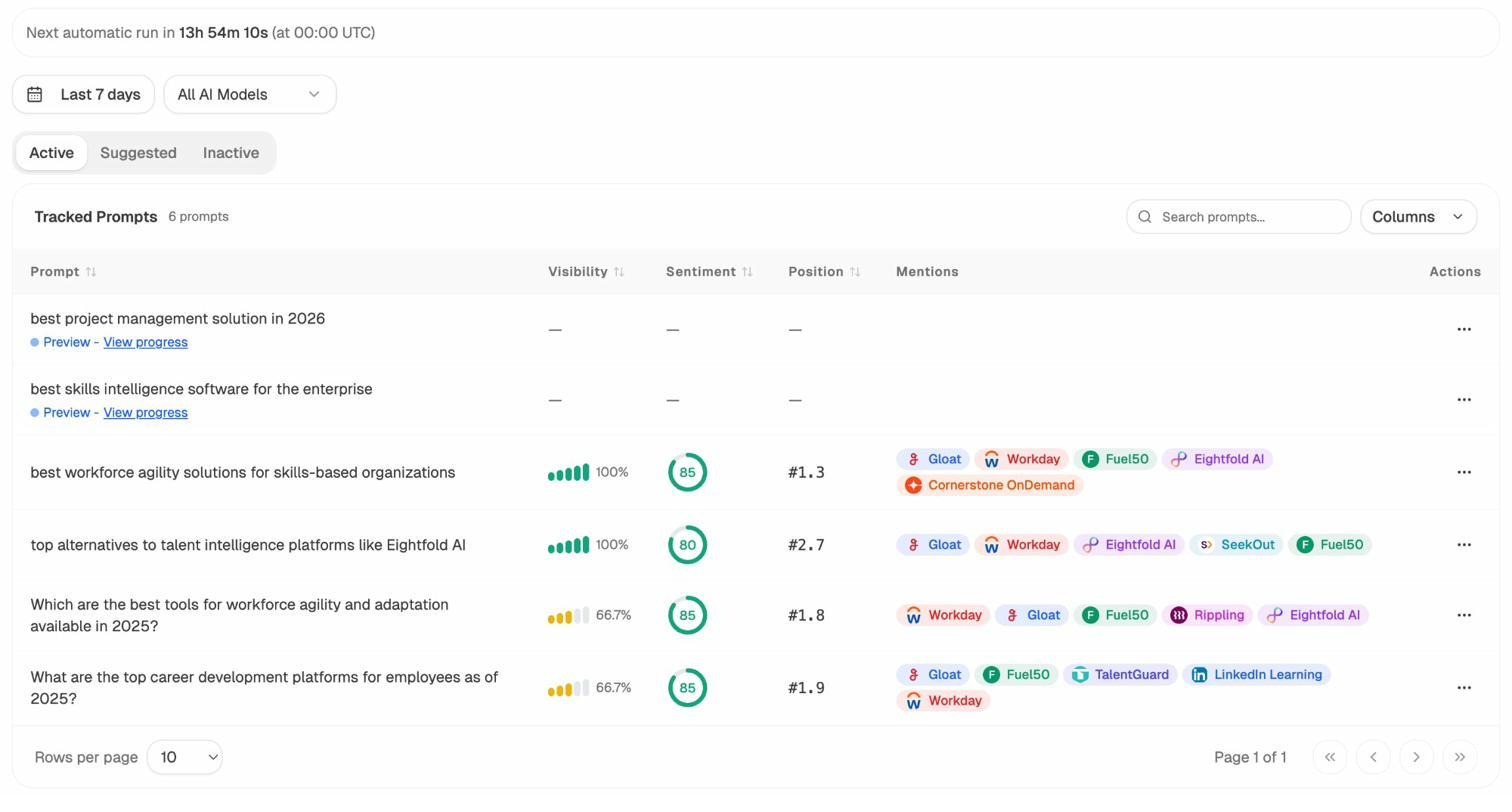

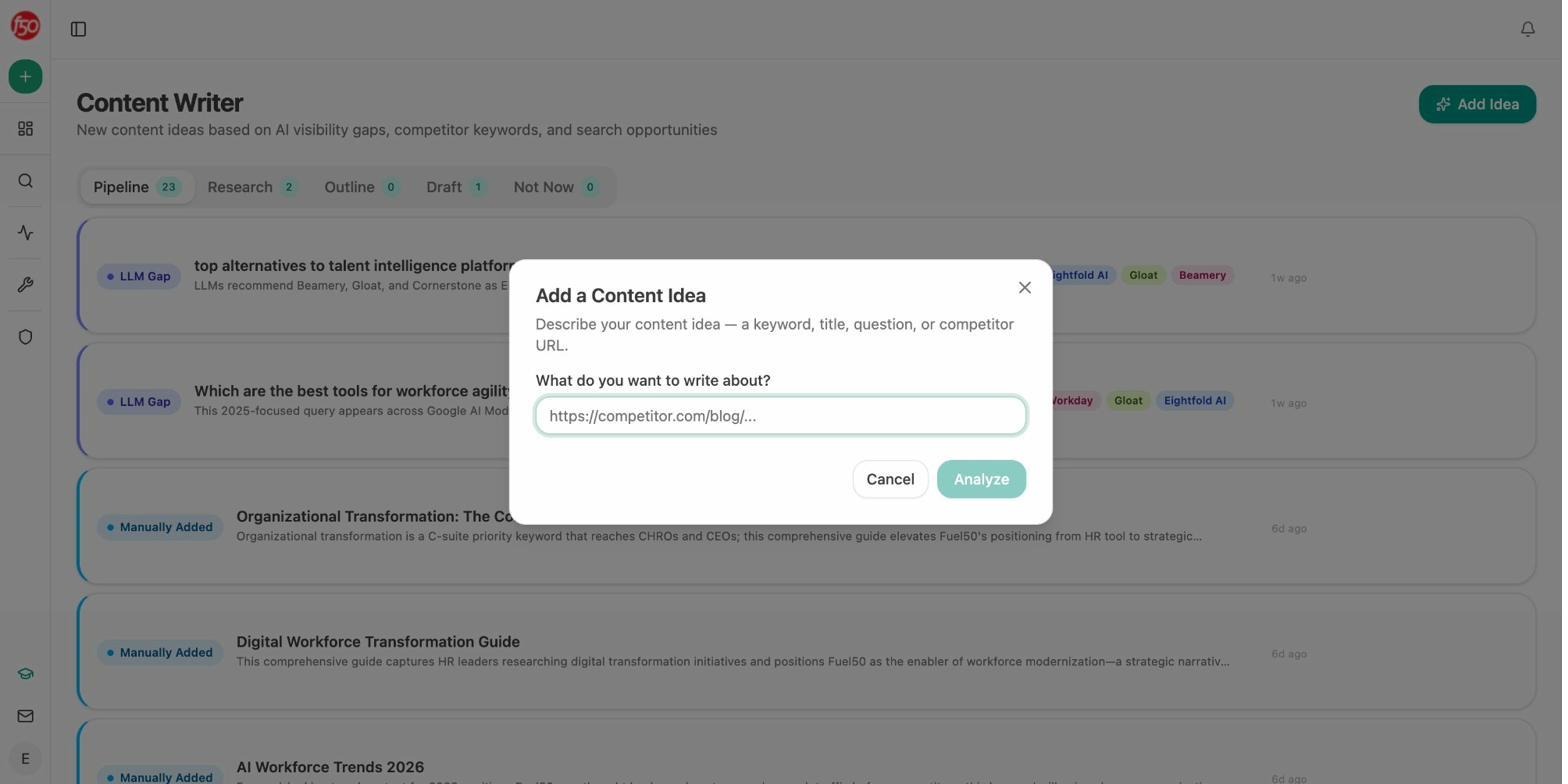

Write and Optimize Content That Gets Cited

Analyze AI includes a full AI Content Writer and AI Content Optimizer built into the platform. These are not bolt-on features. They are deeply integrated with the visibility and citation data you are already collecting.

The Content Writer takes you from idea to research to outline to draft. At every step, it incorporates AI visibility gaps, competitor analysis, and editorial comments based on what is actually working in your space. It does not just generate text. It generates text grounded in real citation patterns and competitive intelligence.

The Content Optimizer audits any existing URL for AI search readiness. It scores structure, entity coverage, claim density, and proof integration, then gives you specific, line-by-line suggestions for improvement. After you publish updates, the platform tracks whether those changes improved your citation rate and visibility.

Both tools produce better outputs than standalone AI writing tools because they are pulling from your actual visibility data, your brand voice rules stored in the Knowledge Base, and competitive context from your tracked prompts. The writing is not generic. It is tuned to what AI engines are already looking for in your category.

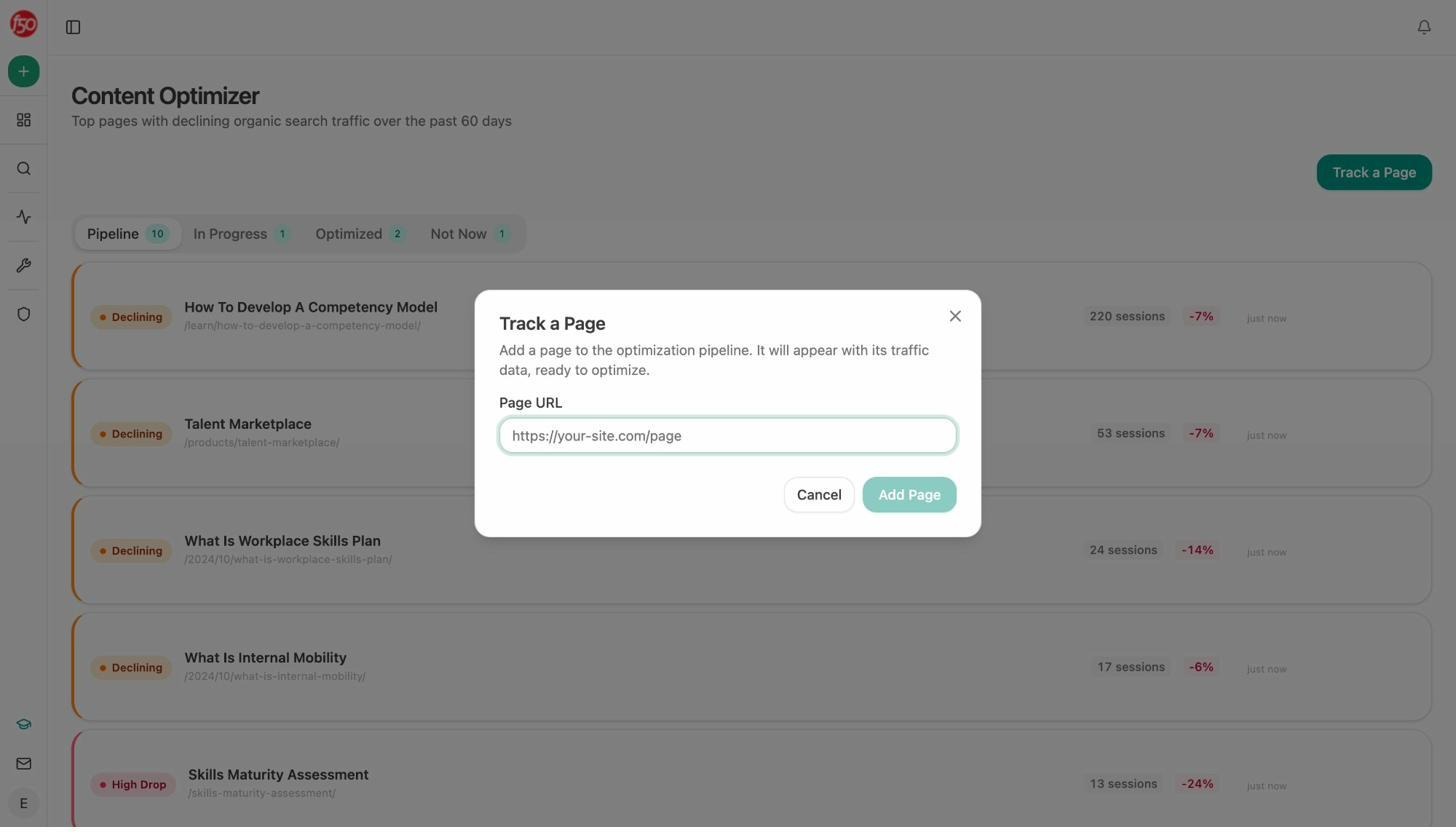

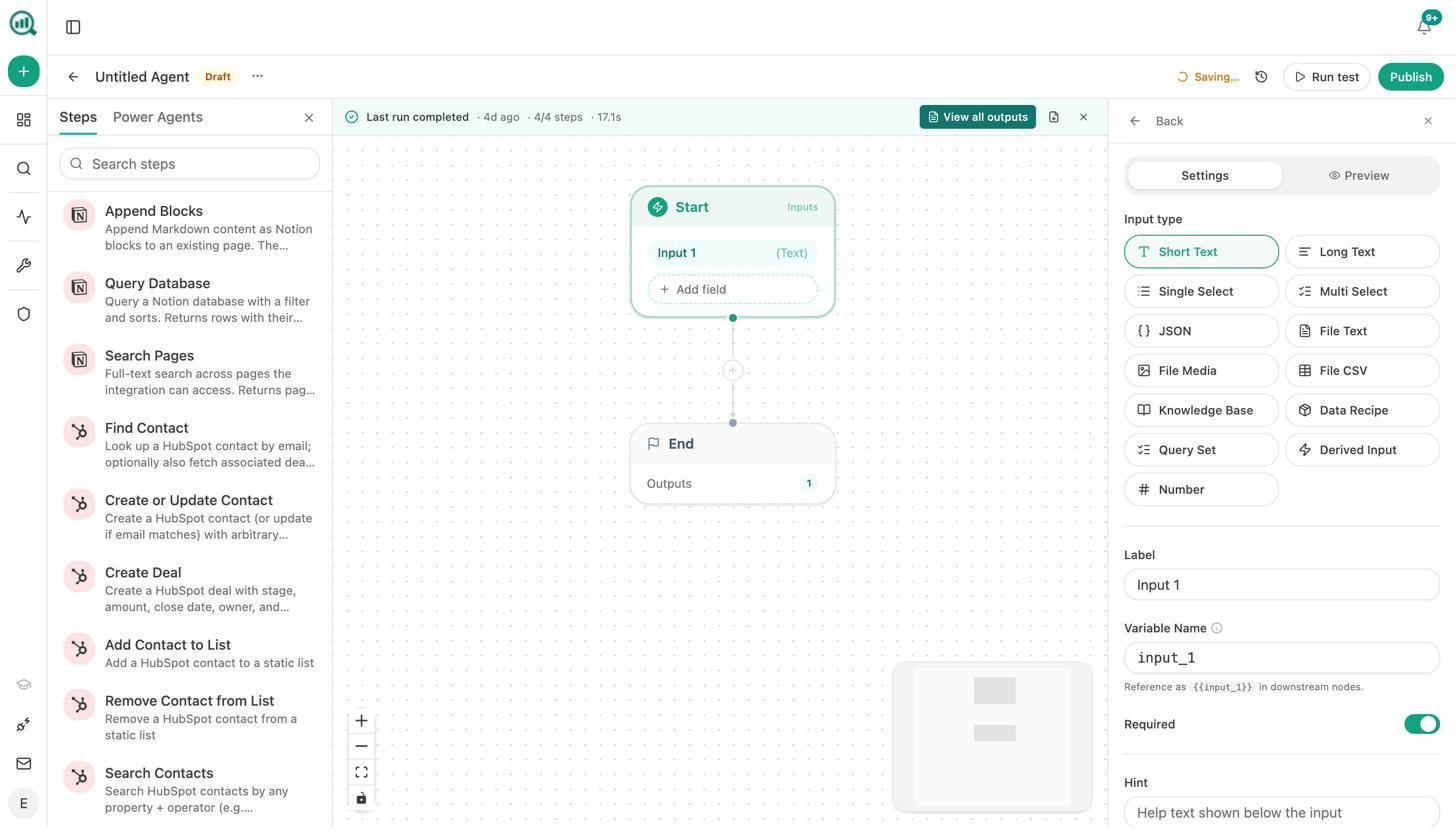

Automate Everything With the Agent Builder

This is where Analyze AI leaves every other tool in the category behind. The Agent Builder gives you 180+ nodes, 34 pre-built data recipes, 13 input primitives, and three trigger modes (manual, scheduled, webhook) to build workflows that do the work for you.

This is not a simple automation layer. It is a programmable substrate with direct integrations to GA4, Google Search Console, DataForSEO, Semrush, HubSpot, Notion, WordPress, Slack, Mailchimp, Contentful, Sanity, and every major LLM. You compose workflows from primitives, not templates.

Here is what teams actually build with it:

For CMOs: A scheduled agent that runs every Monday at 7am, pulls your share-of-voice data, AI traffic trends, new HubSpot deals, and competitor shifts, then composes a board-ready executive brief and emails it to leadership. The 4-hour analyst chase disappears.

For agencies: A single workflow that loops over every client, assembles each weekly report in parallel, and emails each account manager their client’s briefing pack. Reporting day stops existing.

For content teams: A brief-to-publish pipeline triggered by a Notion status change. The agent generates research, outline, and full draft (with brand voice injected from the vault), runs an AEO content scorecard, and only publishes to WordPress if the score exceeds your threshold. Pieces that score below get sent back to the writer with specific gaps flagged.

For PR teams: A crisis early-warning agent that runs every 15 minutes, monitors brand mentions across news sources, and triggers a Slack alert with drafted response options the moment negative coverage crosses your reach threshold. You hear about it before your CEO does.

For sales teams: An inbound form submission triggers lead enrichment. The agent verifies the email, pulls a domain overview and Lighthouse audit of the prospect’s site, creates a research brief, pushes an enriched contact to HubSpot, and notifies the AE in Slack. The lead is fully qualified before anyone touches it.
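Agent Builder workflows are assembled from nodes inside Analyze AI, and the article does not describe a code-level API for them. The plain-Python sketch below only illustrates the two decision rules described above: publish a draft only when its score exceeds a threshold, and alert when negative coverage crosses a reach threshold. Every name, score, and threshold here is hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Draft:
    title: str
    aeo_score: float                                # 0..100 from a content scorecard
    gaps: list[str] = field(default_factory=list)   # issues the scorecard flagged


def route_draft(draft: Draft, threshold: float = 75.0) -> str:
    """Publish only if the score exceeds the threshold; otherwise return it with gaps."""
    if draft.aeo_score > threshold:
        return "publish"
    return "return to writer: " + ", ".join(draft.gaps)


def should_alert(sentiment: float, reach: int, reach_threshold: int = 50_000) -> bool:
    """Alert on negative coverage once its reach crosses the threshold."""
    return sentiment < 0 and reach >= reach_threshold


print(route_draft(Draft("AEO guide", 82.0)))
print(route_draft(Draft("Pricing page", 61.5, ["no comparison table", "thin evidence section"])))
print(should_alert(sentiment=-0.6, reach=120_000))
```

Keeping the thresholds as parameters mirrors the "your threshold" framing: each team sets its own bar rather than relying on a fixed default.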

The Agent Builder transforms Analyze AI from a monitoring tool into the operations layer for your entire marketing org. Competitors offer dashboards. Analyze AI offers a platform that does the work.

Get a Weekly Plan Without Logging In

Every Monday, Analyze AI delivers a prioritized digest to your inbox. It includes competitor shifts, citation changes, visibility trends, and recommended actions ranked by likely impact. You know what to work on before you open the dashboard.

ZipTie AI vs. Analyze AI: Quick Comparison

| Feature | ZipTie AI | Analyze AI |
|---|---|---|
| AI engines tracked | 3 (AI Overviews, ChatGPT, Perplexity) | 8+ (ChatGPT, Claude, Gemini, Perplexity, Copilot, Google AI Mode, Grok, DeepSeek) |
| AI traffic attribution (GA4) | No | Yes |
| Landing page conversion tracking | No | Yes |
| Content Writer | No | Yes (idea → research → outline → draft) |
| Content Optimizer | Basic recommendations | Full audit with line-by-line suggestions |
| Agent Builder / workflow automation | No | Yes (180+ nodes, 34 data recipes, HubSpot/GA4/GSC/Semrush/DataForSEO integrations) |
| Perception Map | No | Yes |
| AI Battlecards | No | Yes |
| Weekly Email Digests | No | Yes |
| Prompt Discovery (AI-powered) | GSC-dependent | AI-native suggestions |
| Brand Vault / Knowledge Base | No | Yes |
| Pricing model | Credit-based (500-2,000 checks) | Flat-rate plans |
| Free tools | No | Yes (Keyword Generator, SERP Checker, Keyword Difficulty Checker, Website Traffic Checker, and more) |

The Bottom Line

ZipTie AI is a capable tool for one specific job. If all you need is accurate AI Overview detection for Google SERPs alongside basic ChatGPT and Perplexity monitoring, it delivers. The browser-level methodology is genuinely better than most API-based competitors, and the content optimization module gives actionable recommendations.

But the question you should be asking is not “does ZipTie track AI Overviews well?” The question is “does it give me everything I need to win in AI search?”

In 2026, winning in AI search means tracking visibility across every engine buyers actually use. It means connecting that visibility to real traffic and conversions. It means having a content pipeline that produces pages built to get cited. And it means automating the monitoring, reporting, and optimization work that would otherwise eat your team’s weeks.

ZipTie gives you one piece of that puzzle. Analyze AI gives you the whole board.

SEO is not dead. It is evolving. AI search is another organic channel that compounds alongside traditional search when you treat it with the same rigor. The teams that build their visibility program on a platform designed for that full picture are the ones that will own their category in every engine that matters.

Ernest Ibrahim
