In this article, you’ll get a 2026 verdict on Peec AI from a team that has compared it side by side with every major AI search visibility tool on the market. You’ll see where its tracking is genuinely strong, the limits that show up the moment you scale past a few prompts and one region, the real cost after add-ons, and the kind of operation that outgrows it. You’ll also see where Analyze AI fits as an agentic SEO and content platform you can point at almost any marketing workflow, with a clean side-by-side comparison at the end.

What Peec AI actually is

Peec AI is a Berlin-based AI search visibility platform that tracks how your brand appears across ChatGPT, Perplexity, Gemini, Microsoft Copilot, Google AI Mode, and AI Overviews. Every mention is logged with the prompt that triggered it, the position your brand held in the response, and the sources the model cited.

Inside the dashboard, you can compare your share of presence against tracked competitors, segment by country and language, and pipe data out to Looker Studio or an MCP client. The flat per-workspace pricing with unlimited seats, the daily refreshes, and the direct Slack line to the founders are repeated praise points across independent reviews. It is a real product solving a real problem.

The trouble starts when you ask the platform what to do with what it shows you.

Where Peec AI wins

These three strengths are what most teams stay for.

Visibility tracking that holds up across models and regions

Peec AI runs each tracked prompt against multiple models on a daily cadence and returns a clean, consistent visibility score. The data updates fast enough that a Wednesday content push shows up in Friday’s chart. Multi-country and multi-language coverage is solid, which is why mid-market brands running rollouts in three to five locales tend to like it. For a deeper view on how visibility tracking should work end to end, our LLM visibility guide walks through the metrics that actually matter.

Unlimited seats and an agency-ready workspace

Most AI visibility platforms charge per seat, which gets expensive fast in any agency or larger marketing team. Peec AI charges per workspace and bundles unlimited seats on every plan, which makes it attractive for agencies running five to ten clients out of a single account. Its pitch workspace, used to package a quick visibility audit for a prospect, is a real time-saver for new business teams.

Data plumbing for analysts who already live in Looker

The Looker Studio integration on the Advanced plan is the cleanest in the category. If your reporting layer already aggregates GA4, Search Console, and ad data into a single Looker dashboard, Peec AI plugs in without forcing your analyst to rebuild anything. The MCP endpoint adds the same data to any model that supports MCP, which is useful for ad-hoc executive Q&A.

Where Peec AI falls short

Independent reviews, including Surferstack’s February 2026 breakdown, keep returning to the same three gaps. Here they are at a glance.

| Gap | What you see in the product | What it costs you |
|---|---|---|
| Monitoring, not optimization | Charts, percentages, and citation lists | You still need a separate tool, or a person, to decide what to write or fix next |
| Coverage gated by add-ons and quotas | 50 prompts on Starter, 3 base engines, Claude/DeepSeek/Grok billed extra at €30 to €140/mo | Real-world coverage costs are 30 to 60 percent above the headline price |
| AI search only | No GA4, GSC, traditional rank tracking, or content tooling | You’re paying a premium for one channel while running three others elsewhere |

It tells you what changed, not what to do about it

Peec AI is excellent at description and weak at prescription. When your visibility for “best CRM for small business” drops from 38 to 22 percent in a week, the dashboard will show you the drop, the prompts affected, and which competitors gained ground. It will not tell you whether the cause was an outdated comparison page, a thinning citation graph, a rising competitor narrative, or a model freshness reset.

That gap is the biggest reason teams describe the product as “tracking, not optimization.” The data is rich, but the decision is still on you. You export, sit with the export in a separate doc, and translate it into a content plan by hand. For small teams that already know what they want to do, this is fine. For a content director with twenty articles in flight, it becomes the bottleneck.

Coverage gated by add-ons and quotas

The headline pricing looks reasonable. The real-world coverage cost rarely is. Starter includes around 50 prompts and three of the available engines. The other engines, including Claude, DeepSeek, and Grok, are billed as add-ons that range from roughly €30 to €140 per month each. Once you cover the engines a competitive B2B buyer actually uses, plus the prompts you need across two product lines and three regions, the bill quietly climbs past Pro and into Advanced. None of this is hidden, but it is not what most teams budget for in month one.

It stops at AI search, and AI search is one channel

This is the structural limit. Peec AI is built for AI visibility, full stop. It does not track Google rankings, does not connect to Search Console or GA4, does not surface keyword opportunities from a tool like DataForSEO, and does not include any content writing or optimization layer.

That limit matters because of what we believe at Analyze AI: AI search is a new organic channel that sits alongside SEO, not a replacement for it. Quality content still wins. The brands showing up in AI answers are the ones with clear, original, useful content, and that content has to work for both Google and the models. AI traffic is still around 1 percent of total traffic for most brands: important and growing, but small. A tool that only sees the smaller surface gives you half a picture.

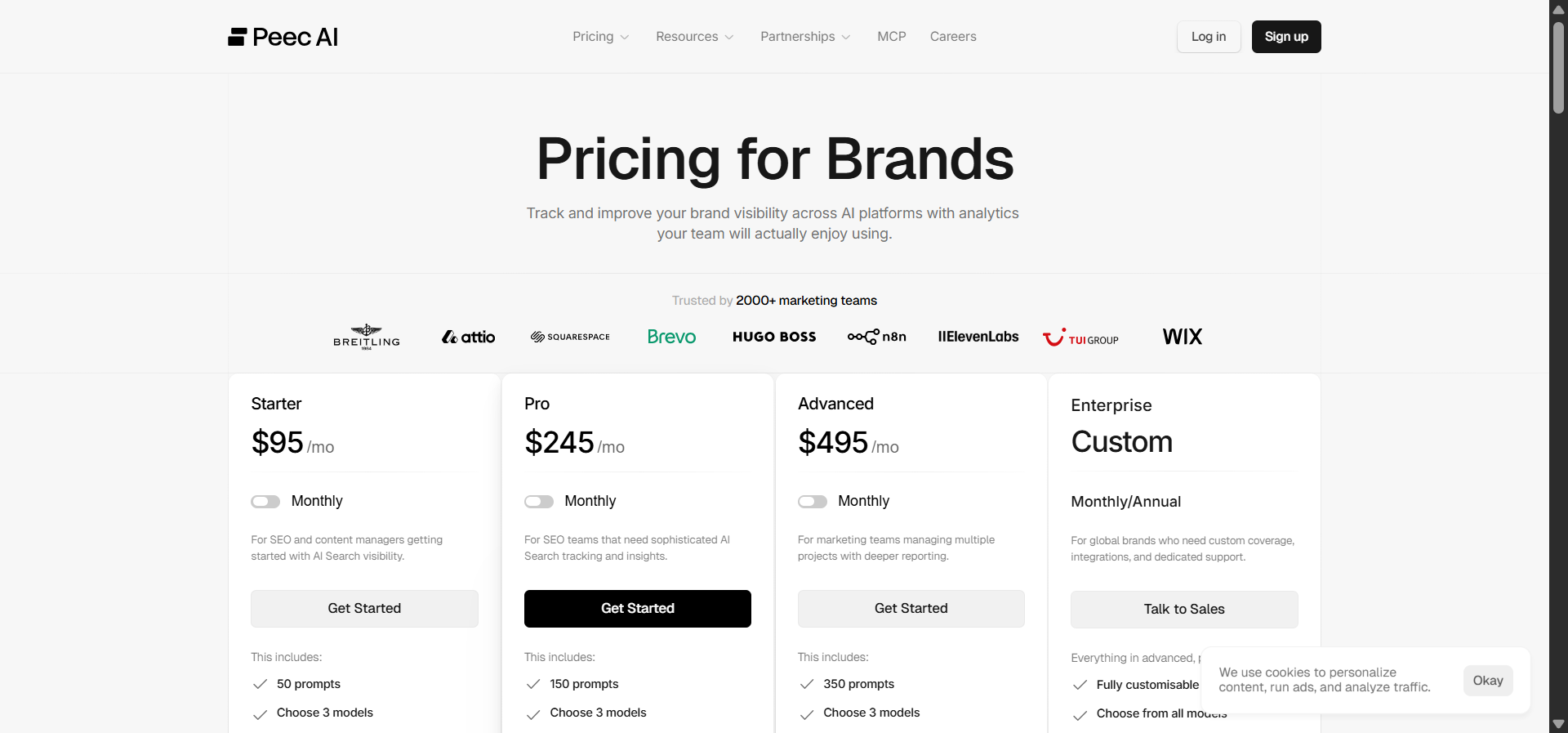

Peec AI pricing: what you actually get per tier

Peec AI publishes pricing in euros. Here is what each tier includes, as of mid-2026, and what the practical ceiling looks like once you add the engines a real B2B team needs.

| Plan | Price | Prompts | Base engines | Practical limit |
|---|---|---|---|---|
| Starter | €89/mo | ~50 | 3 of your choice | One product line, one or two regions. Add-ons push the real bill to €130 to €180/mo. |
| Pro | €199/mo | ~150 | 3 + Slack support | Mid-market team with one main brand. Add-ons push the real bill to €260 to €320/mo. |
| Advanced / Growth | €499/mo | 300+ | 3 + Looker + dedicated CSM | Multi-brand or agency. Real bill commonly €600 to €750/mo once Claude and DeepSeek are in. |

For most teams, the honest sticker price is 30 to 60 percent above the published number. That is reasonable for what Peec AI does, and unreasonable when you compare it against a platform that includes the engines plus traditional SEO data, content writing, content optimization, and an automation layer at the same tier.
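To sanity-check the markup claim, here is a minimal sketch that computes the percentage gap between each plan's published price and the estimated real bill. The figures are copied from the pricing table above; the names `PLANS`, `markup_pct`, and `markup_ranges` are our own illustrative choices, not anything Peec AI publishes.

```python
# Published price and the estimated "real bill" range per plan, in EUR per month.
# All numbers come from the pricing table in this article.
PLANS = {
    "Starter": (89, 130, 180),
    "Pro": (199, 260, 320),
    "Advanced / Growth": (499, 600, 750),
}


def markup_pct(list_price: float, real_bill: float) -> float:
    """Percentage by which the real bill exceeds the published price."""
    return (real_bill - list_price) / list_price * 100


def markup_ranges(plans: dict) -> dict:
    """Map each plan name to its (low, high) markup, rounded to whole percent."""
    return {
        name: (round(markup_pct(price, low)), round(markup_pct(price, high)))
        for name, (price, low, high) in plans.items()
    }


for name, (low, high) in markup_ranges(PLANS).items():
    print(f"{name}: {low}% to {high}% above the published price")
```

On the table's own figures, Pro lands at roughly 31 to 61 percent, Starter runs higher, and Advanced somewhat lower, so the 30 to 60 percent figure is best read as a mid-range estimate rather than a bound for every tier.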

For deeper comparisons across the category, see our breakdowns of Peec AI vs Profound, Peec AI vs Otterly AI, and the broader list of Peec AI alternatives.

Who outgrows Peec AI

Three patterns show up: the agency lead who needs to wrap each client report in a branded narrative, attribute it to revenue, and ship it on Monday morning; the CMO who wants AI visibility data to sit next to GA4 and Search Console in the same dashboard, with the same alerts; and the content director who already has a publishing pipeline and does not want a fourth tool just for visibility data that does not flow into the brief, the draft, or the QA gate.

If any of those describe your team, the rest of this review is for you.

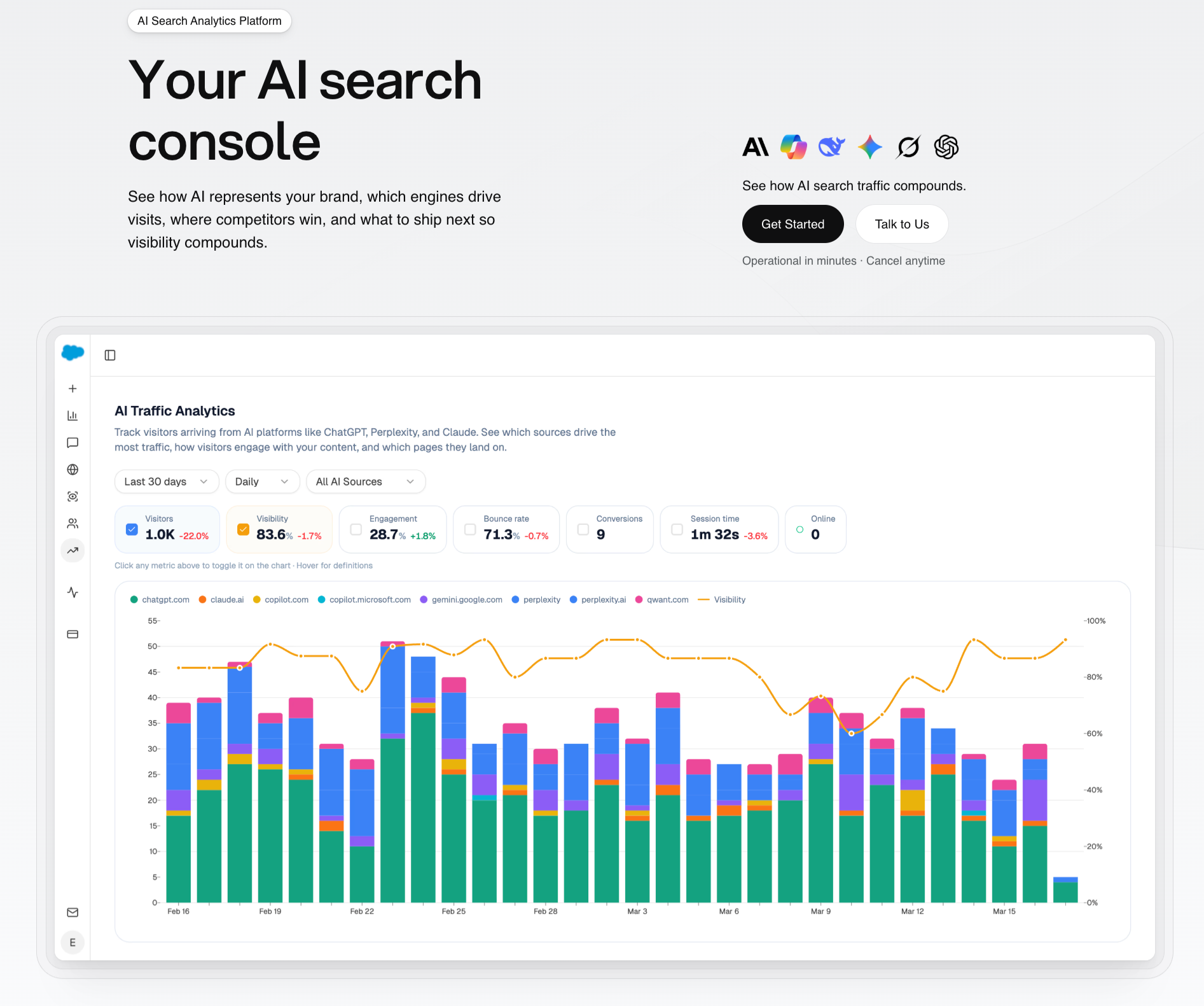

Analyze AI: the agentic SEO and content platform that connects tracking to action

Most GEO tools tell you whether your brand appeared in a ChatGPT response, then stop. Analyze AI runs the full loop. It tracks AI visibility across ChatGPT, Perplexity, Claude, Gemini, and Copilot. It connects that visibility to actual sessions, landing pages, and conversions. It writes and optimizes the content that closes the gaps. And it lets you wire any of that into an agent that runs on a schedule, fires on a webhook, or generates a report on demand.

The positioning is simple. SEO is not dead. AI search is an additional organic channel, and your team needs both surfaces measured, both surfaces optimized, and ideally both in one tool.

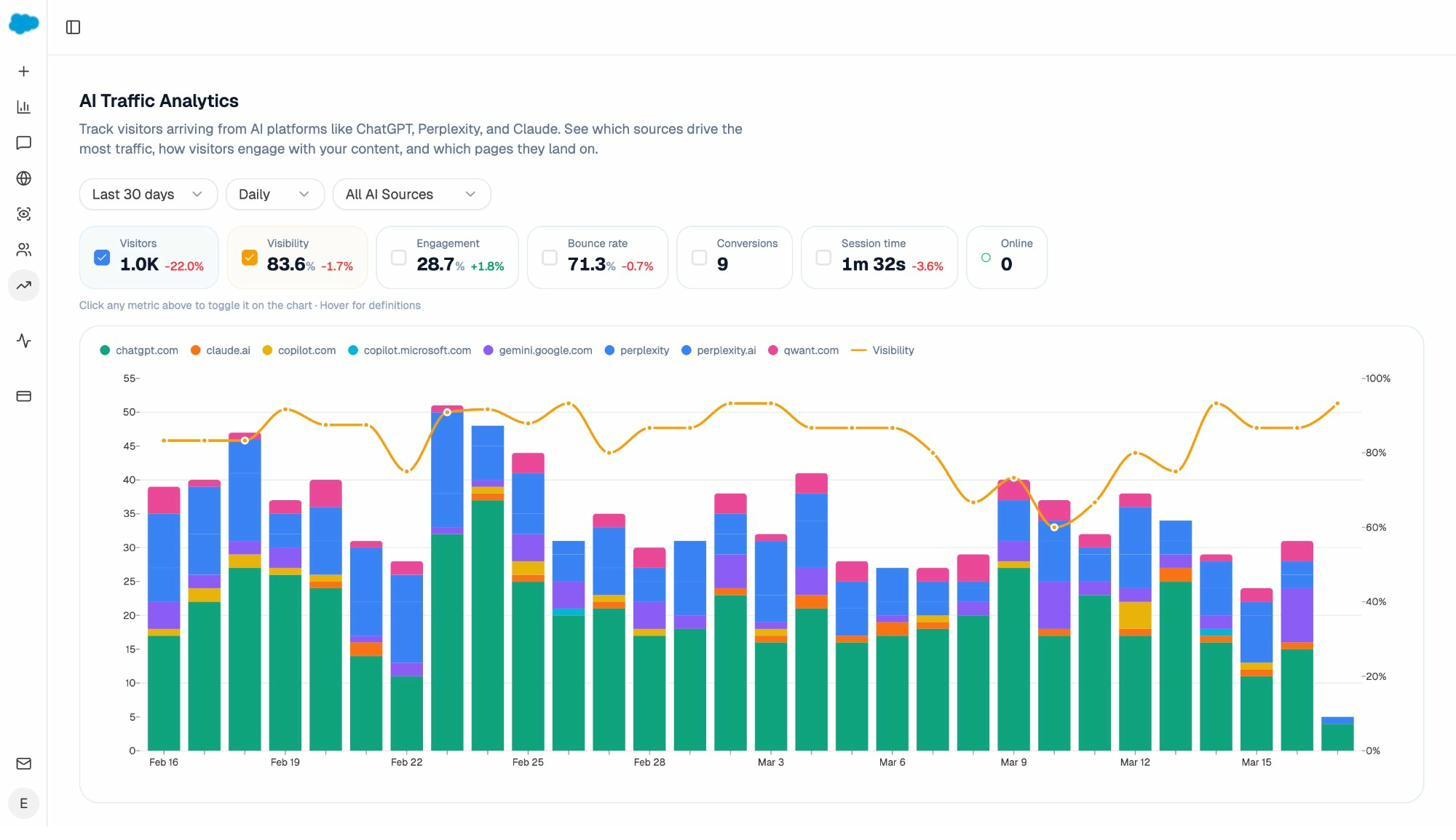

See AI traffic and the conversions it drives

Peec AI shows you mentions. Analyze AI shows you the journey from mention, to click, to landing page, to conversion. You can see exactly which engines send sessions, how those sessions behave, and what they convert at.

Drill into any landing page and you get the engine breakdown, the citations that drove traffic to it, the bounce, the engagement, and the conversion rate, side by side. When your product comparison page gets 50 sessions from Perplexity at 12 percent trial conversion, and an old blog post gets 40 sessions from ChatGPT at zero conversion, you know exactly which page to harden and which one to deprioritize.

This is the loop Peec AI cannot close because it has no GA4 integration. Our AI Traffic Analytics feature is built to close it.
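The page-level comparison above comes down to simple arithmetic: sessions multiplied by conversion rate. Here is a rough sketch using the two pages from the example. The `PageStats` class and the URL paths are hypothetical placeholders for illustration, not part of any Analyze AI API.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str                # hypothetical path, for illustration only
    engine: str             # AI engine that sent the sessions
    sessions: int
    conversion_rate: float  # fraction between 0 and 1

    @property
    def conversions(self) -> float:
        """Expected conversions: sessions times conversion rate."""
        return self.sessions * self.conversion_rate


pages = [
    PageStats("/blog/old-post", "ChatGPT", sessions=40, conversion_rate=0.0),
    PageStats("/product-comparison", "Perplexity", sessions=50, conversion_rate=0.12),
]

# Rank pages by the conversions they actually produce, not by raw sessions.
ranked = sorted(pages, key=lambda p: p.conversions, reverse=True)

for page in ranked:
    print(f"{page.url} ({page.engine}): {page.conversions:.1f} conversions")
```

Sorting by conversions rather than sessions puts the comparison page first, at about six trials, even though the two pages receive similar traffic.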

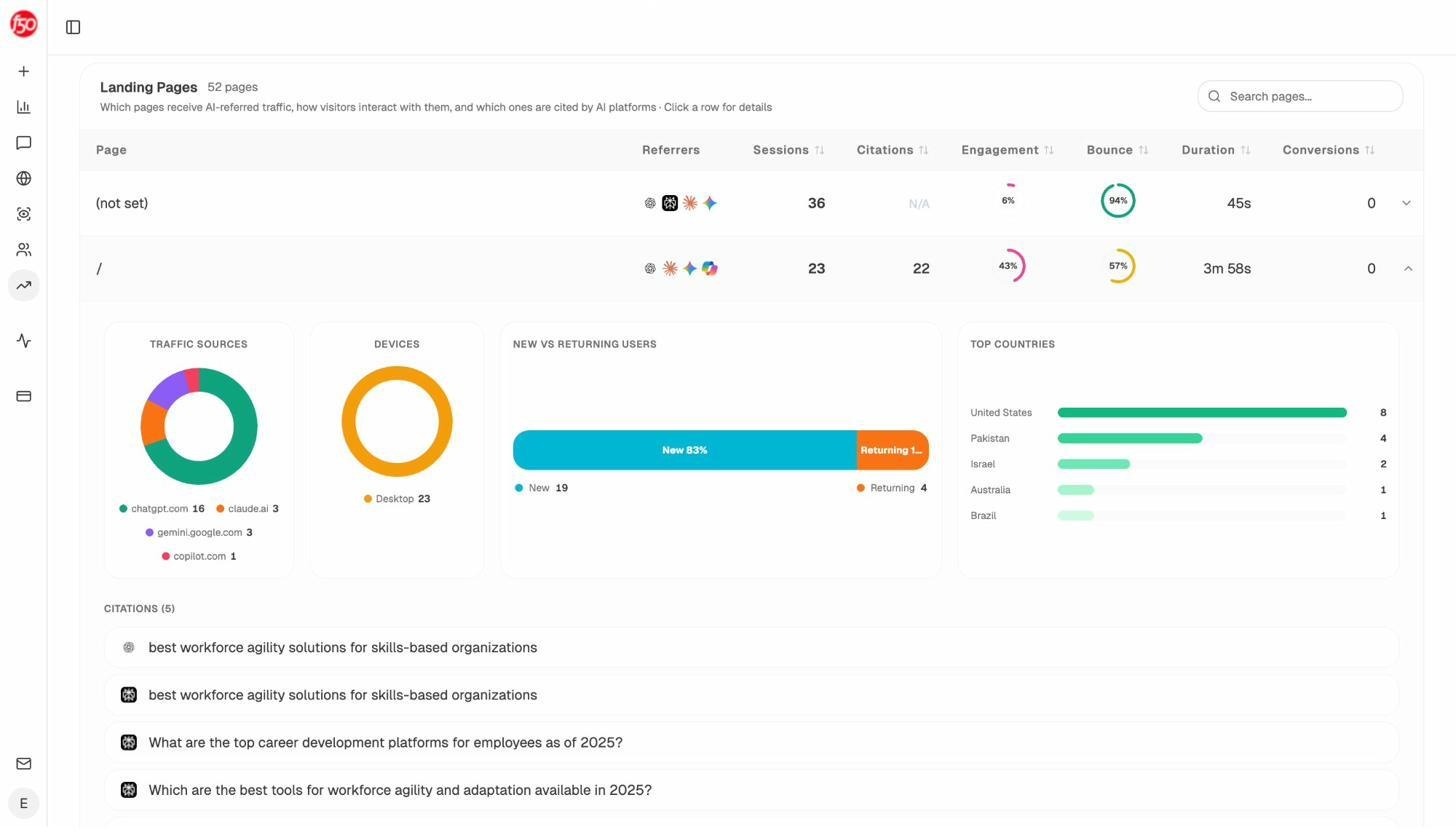

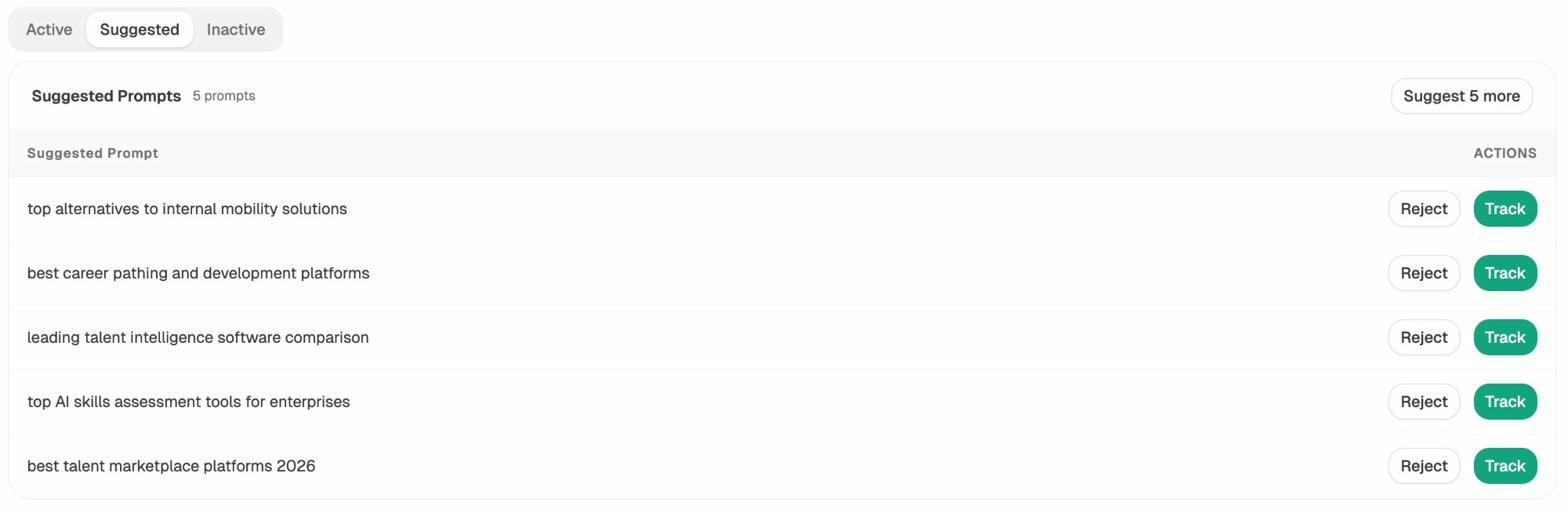

Track prompts, including the ones you didn’t think to track

You can track every prompt you already know about. The harder problem is the long tail of prompts your buyers are using that you never thought to add. Analyze AI suggests bottom-of-funnel prompts based on your category, your competitors, and your existing tracked terms.

For each tracked prompt you see your visibility percentage, your rank against competitors, sentiment, and which competitors appear alongside you. If you’d like to see how this works against a real competitor set, our walkthrough on AI search competitor analysis is the next read.

Audit citations and find the domains models actually trust

Models cite domains, not brands. Citation Analytics shows you the exact domains and URLs models lean on when answering questions in your category, broken down by engine.

Instead of generic link building, you target the specific domains that shape AI answers. You strengthen relationships with the publishers models already trust, fill the topical gaps in their coverage, and watch your citation frequency climb after each push. You can read the deeper methodology in our How LLMs cite sources study, built on 83,670 AI citations.

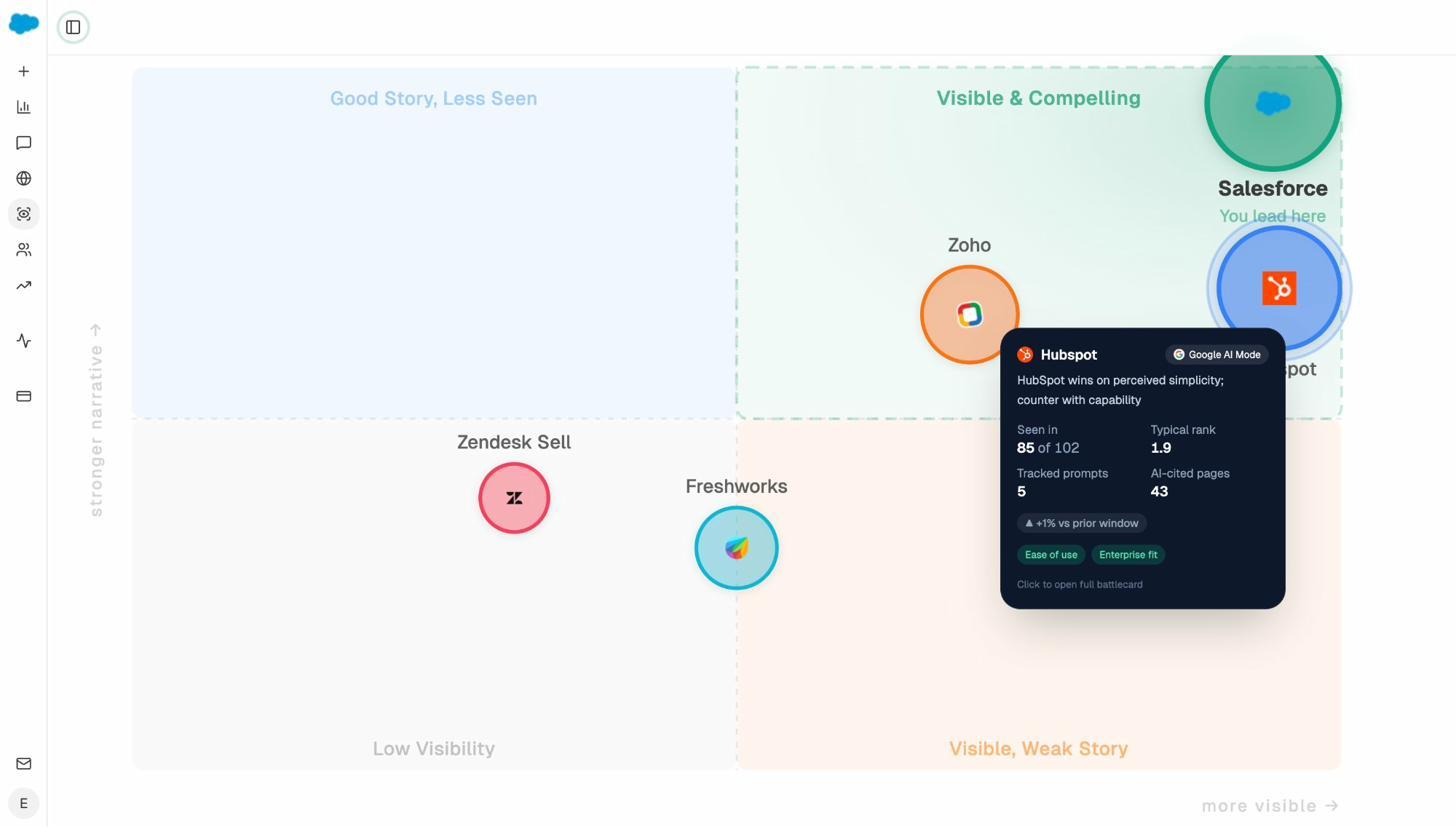

Sit competitors on a Perception Map and battlecard them

The Perception Map plots every brand in your category on two axes: visibility on one, narrative strength on the other. You instantly see who is “Visible & Compelling,” who is “Visible, Weak Story,” and where you sit.

Click any competitor for a battlecard that summarizes the prompts they win, the pages they get cited from, and the angle of attack you can use against them. This is the panel agency strategists use during quarterly reviews, and it doesn’t exist in Peec AI.

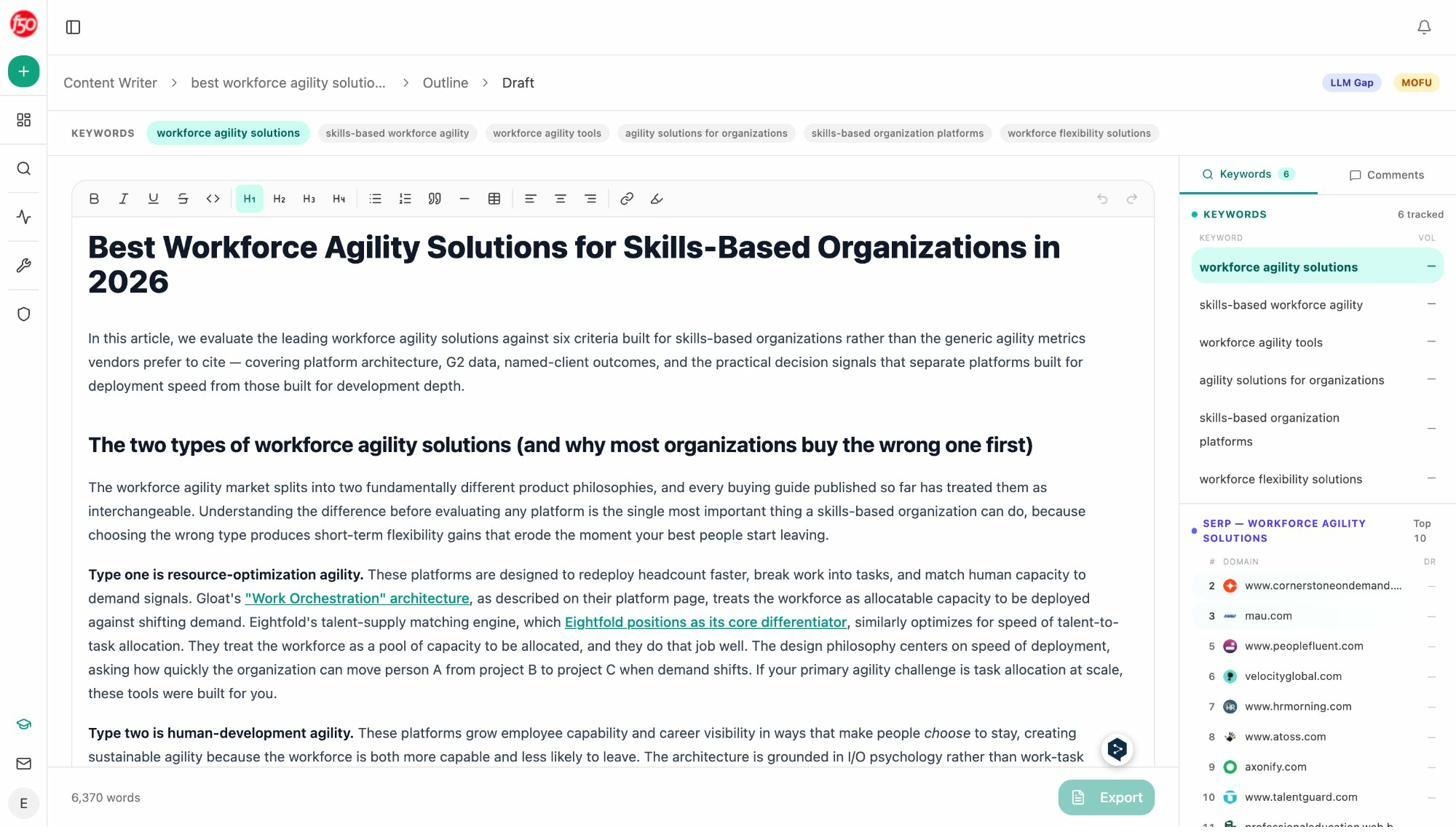

Brief, write, and ship articles that match how AI engines summarize your category

The Content Writer is the part of the platform that does the most to rebut the “AI writers all sound the same” pushback. It runs a four-step pipeline: research the topic, build an outline against the live SERP and the prompts your buyers actually use, draft against your Brand Vault, and gate the output behind a quality scorecard.

The Brand Vault is the missing piece in most AI writing tools. It holds twelve blocks covering tone, differentiators, proof points, claims rules, and required and disallowed phrases. Every draft is generated against the relevant subset, so the output reads like your brand wrote it, not the model. For smarter keyword work feeding into briefs, our keyword generator and keyword difficulty checker are free.

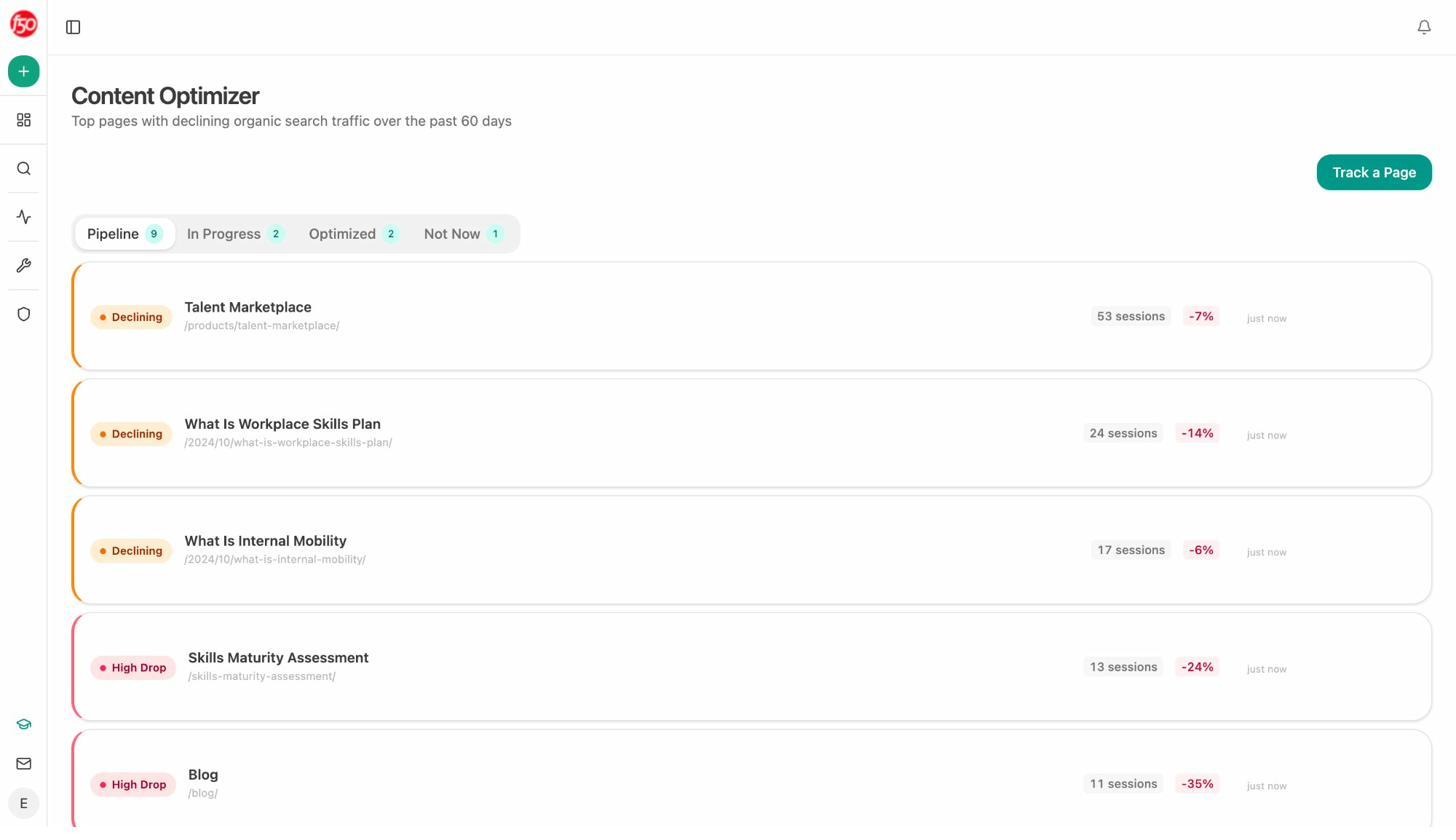

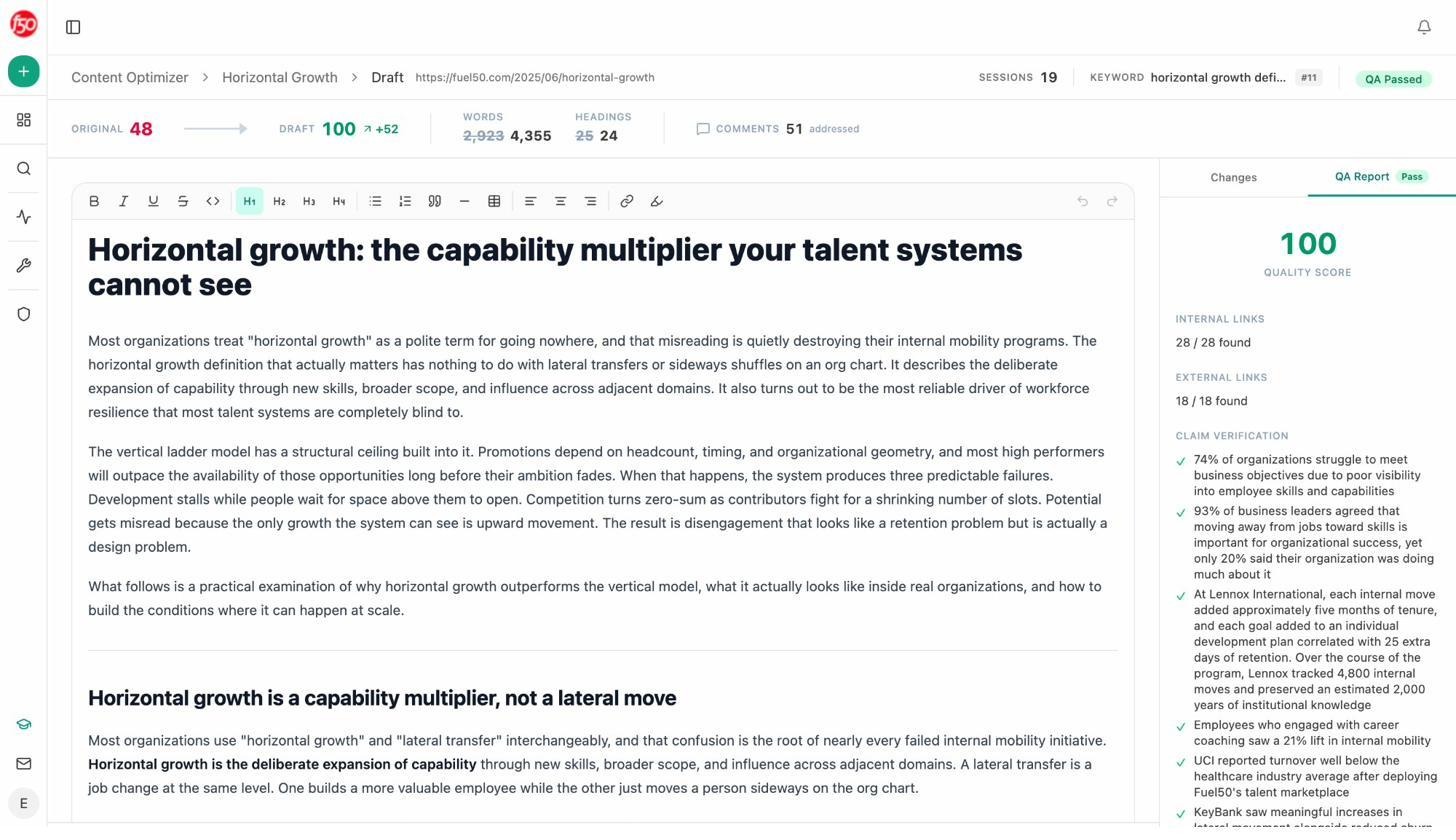

Optimize pages quietly losing rankings, before the cliff

Most pages don’t fail in a single quarter. They drift. Sessions trickle off. Engagement softens. Citations dry up. The Content Optimizer catches that drift and runs each declining page through a structured rewrite cycle.

The output is a rewritten page with a quality score, internal links audited and found, external links verified, and every claim mapped back to a source. You can see the QA report on a real page below.

Compared to running a free broken link checker and rewriting the page in a Google Doc, this is the difference between maintaining a few articles a quarter and maintaining your whole library on a cadence.

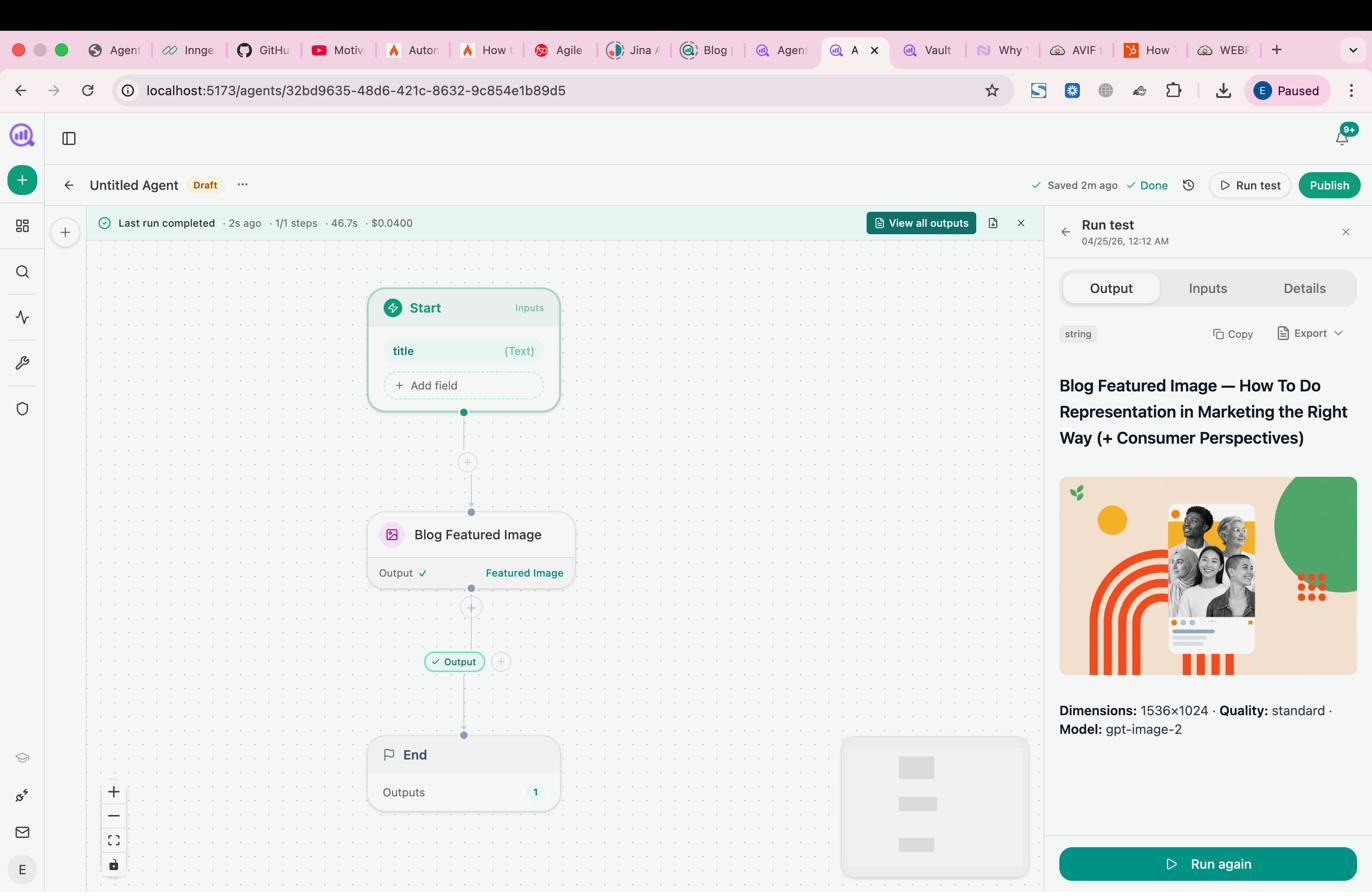

Build agents that run the operations layer of your marketing org

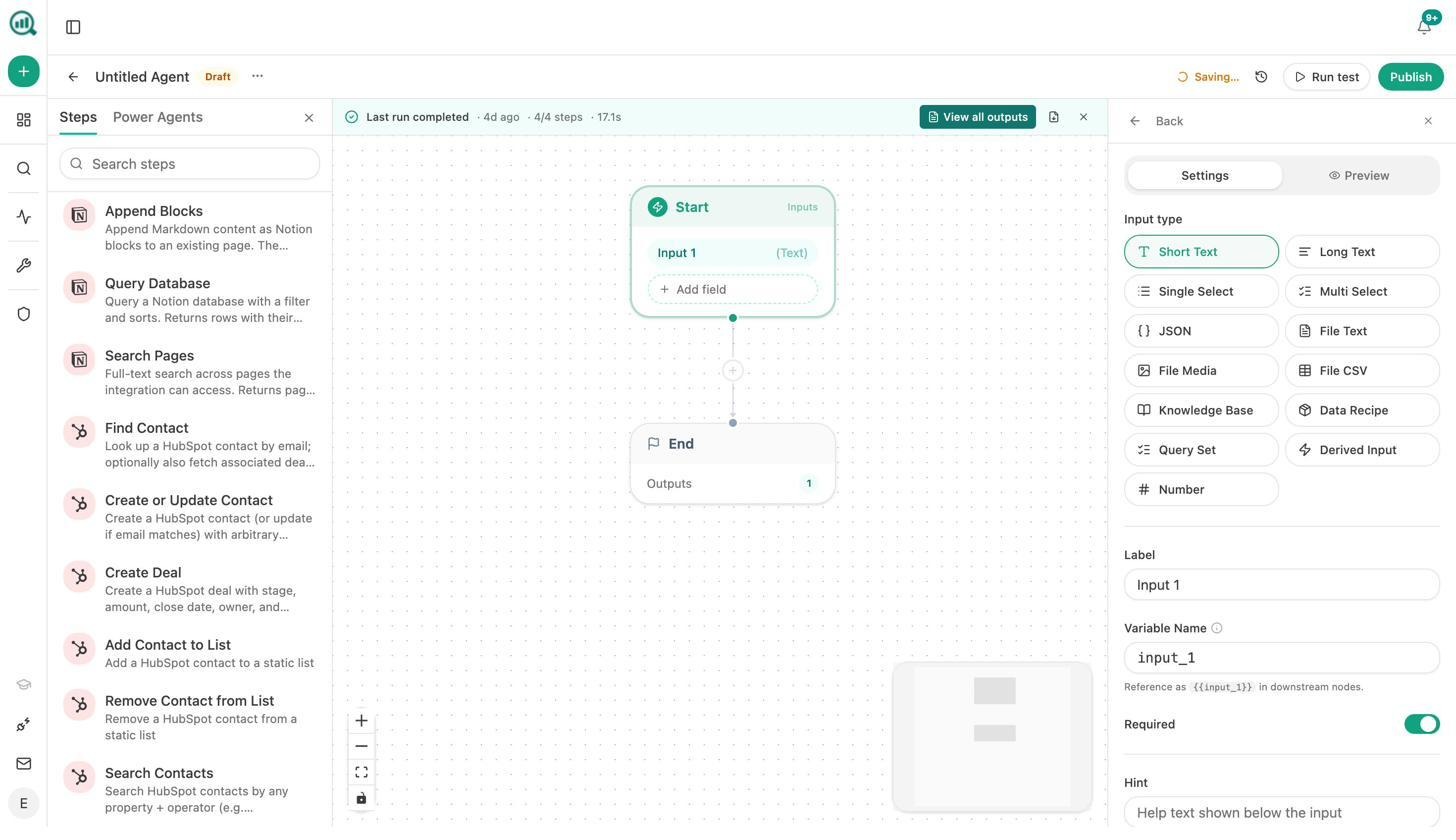

This is the part that makes Analyze AI structurally different from Peec AI, Profound, AthenaHQ, or any tool currently positioned as a GEO platform. The Agent Builder is a programmable substrate with 180+ nodes, 34 pre-baked data recipes, and three trigger modes: manual, scheduled, and webhook.

The 180+ nodes cover the data sources marketing teams actually use. GA4. Google Search Console. Semrush. DataForSEO. HubSpot. Notion. WordPress. Sanity. Contentful. Mailchimp. Hunter. Tomba. Slack notifications. Plus every Analyze AI native node, including visibility, sentiment, citation share, perception quadrant, and the full GA4 AI traffic suite.

Stack a few of these into a workflow and you get things Peec AI cannot do, no matter how many add-ons you buy. Here are five real agents teams are running today.

| Agent | Trigger | What it does |
|---|---|---|
| Monday board prep | Schedule (Mon 7am) | Pulls AI visibility, GA4, GSC, HubSpot deals. Composes an executive summary in your brand voice. Emails leadership. |
| Brief-to-publish pipeline | Webhook (when a Notion brief flips to “approved”) | Runs research, outline, full draft, AEO scorecard. Publishes to WordPress if score above 80. Slacks the writer if below. |
| Citation-stop alert | Schedule (daily) | Surfaces pages losing AI citations faster than traffic. Slacks the team with the page and the last five prompts that stopped citing it. |
| Content refresh fleet | Schedule (weekly) | Loops the sitemap, scrapes each page, rewrites for freshness against brand voice, pushes a WordPress update if the diff is substantive. |
| Inbound lead enrichment | Webhook (Typeform fill) | Verifies the email, enriches via DataForSEO and Hunter, creates a HubSpot contact, posts to Slack with a summary. |

For agencies, this is the difference between a 4-hour Monday client report and a click. For content teams, it is the difference between a publishing schedule and a publishing system. The full inventory is in our agent automation guide, and the 10 ways to use Analyze AI post shows more concrete examples.

Peec AI does not have this layer. Looker is a viewer, MCP is a query interface. Neither does work.
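To make the brief-to-publish agent's final step concrete, here is a minimal sketch of a score gate. It is not Analyze AI's actual Agent Builder code: the function `route_draft`, the constant `PUBLISH_THRESHOLD`, and the two callbacks are hypothetical stand-ins for the WordPress publish and Slack notification nodes. We also assume a score of exactly 80 goes back to the writer, since the table only says "above 80".

```python
from typing import Callable

PUBLISH_THRESHOLD = 80  # from the agent description: publish only if the score is above 80


def route_draft(
    score: float,
    publish: Callable[[], None],
    notify_writer: Callable[[], None],
) -> str:
    """Publish the draft if it clears the scorecard, otherwise alert the writer."""
    if score > PUBLISH_THRESHOLD:
        publish()        # stand-in for a WordPress publish step
        return "published"
    notify_writer()      # stand-in for a Slack message to the writer
    return "returned_to_writer"


# Usage with placeholder actions that just record what would have happened.
actions = []
print(route_draft(86, lambda: actions.append("wordpress"), lambda: actions.append("slack")))
print(route_draft(72, lambda: actions.append("wordpress"), lambda: actions.append("slack")))
print(actions)
```

Passing the actions in as callbacks keeps the gate itself trivial to test, whichever publishing or messaging tool sits behind it.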

Peec AI vs Analyze AI side by side

Same row, both columns, no marketing copy.

| Capability | Peec AI | Analyze AI |
|---|---|---|
| AI visibility tracking | Yes (3 base engines, others as add-ons at €30–€140/mo) | Yes (ChatGPT, Perplexity, Claude, Gemini, Copilot included) |
| Prompt tracking | Yes (50/150/300+ per tier) | Yes, with suggested prompts for the long tail |
| Citation analytics | Yes | Yes, with domain-level breakdowns and trend lines |
| Competitor benchmarking | Yes | Yes, plus Perception Map and AI Battlecards |
| GA4 / GSC integration | No | Yes, native, with AI traffic analytics tied to conversions |
| Content writing | No | Yes, Content Writer with Brand Vault |
| Content optimization | No | Yes, Content Optimizer with QA gate |
| Looker Studio export | Yes (Advanced plan) | Available via Agent Builder data export |
| MCP endpoint | Yes | Available via Agent Builder API |
| Workflow automation | No | Yes, Agent Builder with 180+ nodes, schedule, manual, and webhook triggers |
| HubSpot, Notion, WordPress, Slack, Mailchimp | No | Yes, native nodes |
| Add-on engines | €30–€140/mo each | Included |
| Free tools | Limited | 10 free tools including SERP checker, keyword rank checker, and website traffic checker |
| Customer support | Slack access to founders (praised) | Slack and email |

For the granular feature-by-feature view, our Analyze AI vs Peec AI compare page goes deeper.

Verdict: which one is right for your team

Buy Peec AI if you have one product line, one or two regions, and you only need clean AI visibility data with a Looker Studio export. The product is well-built, the team responsive, and the price reasonable for what it does. If you already have a content team, an SEO stack, and an automation layer, Peec AI plugs into the gap labeled “AI visibility data” and stays there.

Choose Analyze AI if you need that visibility data tied to traffic, conversions, content, and an automation substrate that runs the marketing operations layer for you. The product is built on the belief that SEO is not dead, AI search is an additional organic channel, and your tool should measure and act on both. If you’ve ever exported a Peec dashboard, dropped it into a doc, and spent two hours turning it into a brief, you’ll feel the difference inside a week.

The teams winning right now closed the loop between data, decision, and shipped work. That is what Analyze AI is built to do, and it is the layer Peec AI was never designed for. To see your brand’s AI visibility before you commit, our AI Visibility Audit is a good place to start.

Ernest

Ibrahim