In this article, you’ll get a buyer’s-side look at AthenaHQ AI. Not the marketing site version. The version you see after 60 days of real use, after the first overage email, and after leadership asks why visibility went up and pipeline didn’t.

You’ll see what AthenaHQ does well, where it stops, what the credit model actually costs at team and agency scale, and what most buyers end up bolting on. Monitoring an AI answer is one job. Getting somebody to fix the page, write the brief, and run the Monday report is a different one.

If you’re a CMO defending a GEO line item, an agency lead protecting a retainer, or a content director sitting on a dashboard that flags problems your team has no time to act on, this article is for you.

What AthenaHQ AI actually is

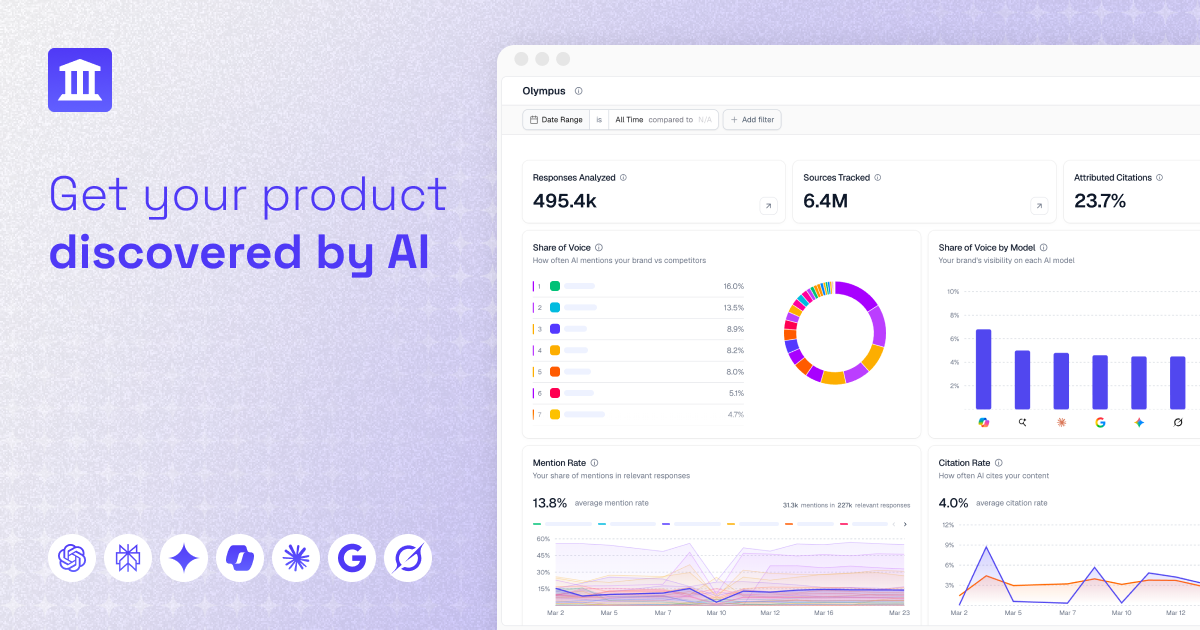

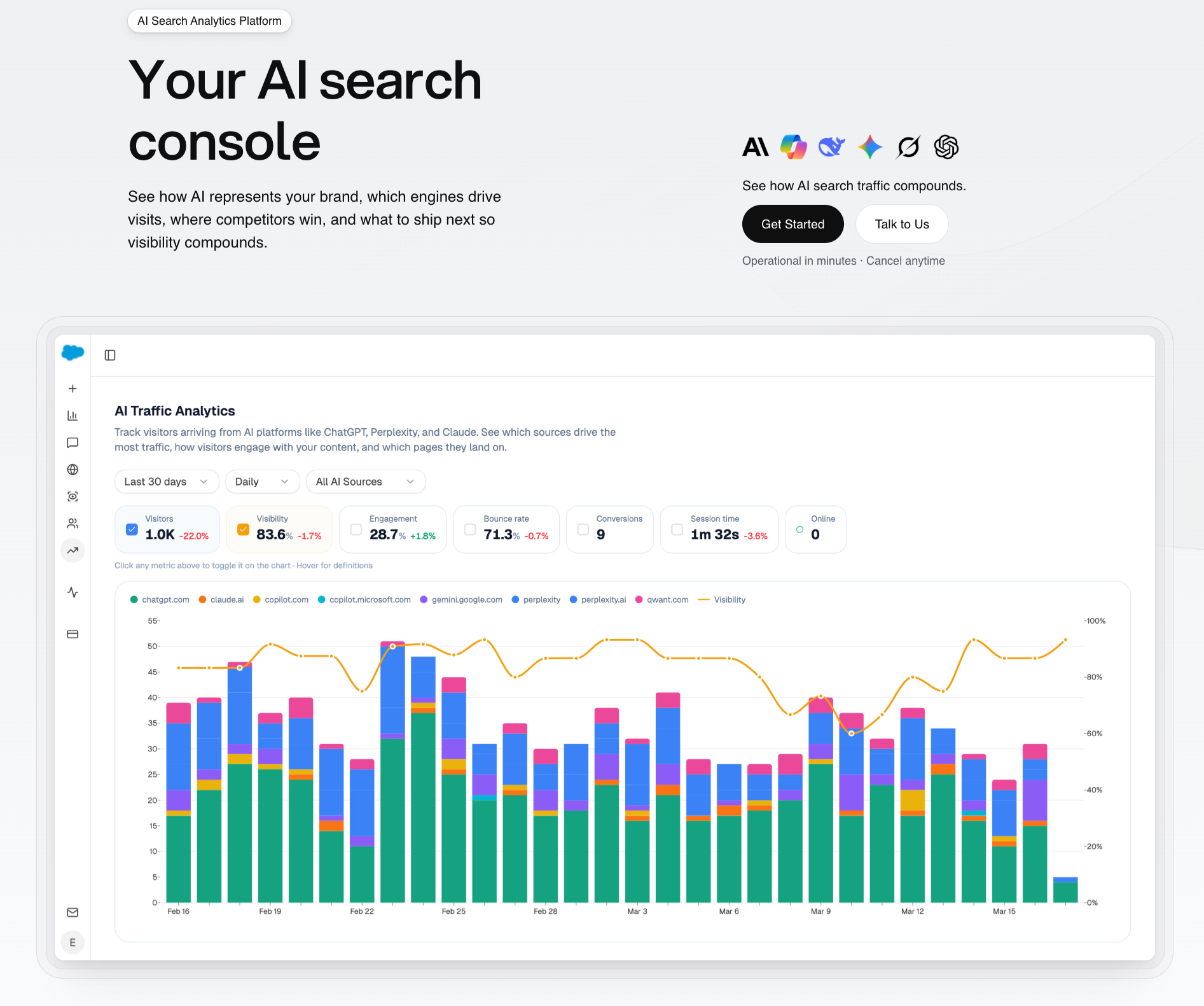

AthenaHQ AI is a Generative Engine Optimization platform. It tracks how your brand shows up across ChatGPT, Perplexity, Gemini, Claude, Copilot, and Google AI Mode, captures the full AI response for each tracked prompt, maps the citations behind those answers, and turns the data into action items.

Founded by ex-Google Search and DeepMind engineers, AthenaHQ raised a $2.2M YC seed and now sits in the premium tier of the LLM tracking tools market alongside Profound and Peec AI. The product runs three loops: a monitoring loop that watches AI responses across engines, a diagnostic loop that maps gaps and citations, and an Action Center that recommends fixes and re-tests them. Pricing is credit-based, which matters more than it looks. We’ll get to that.

Three things AthenaHQ AI does well

1. Multi-engine visibility tracking with prompt-level context

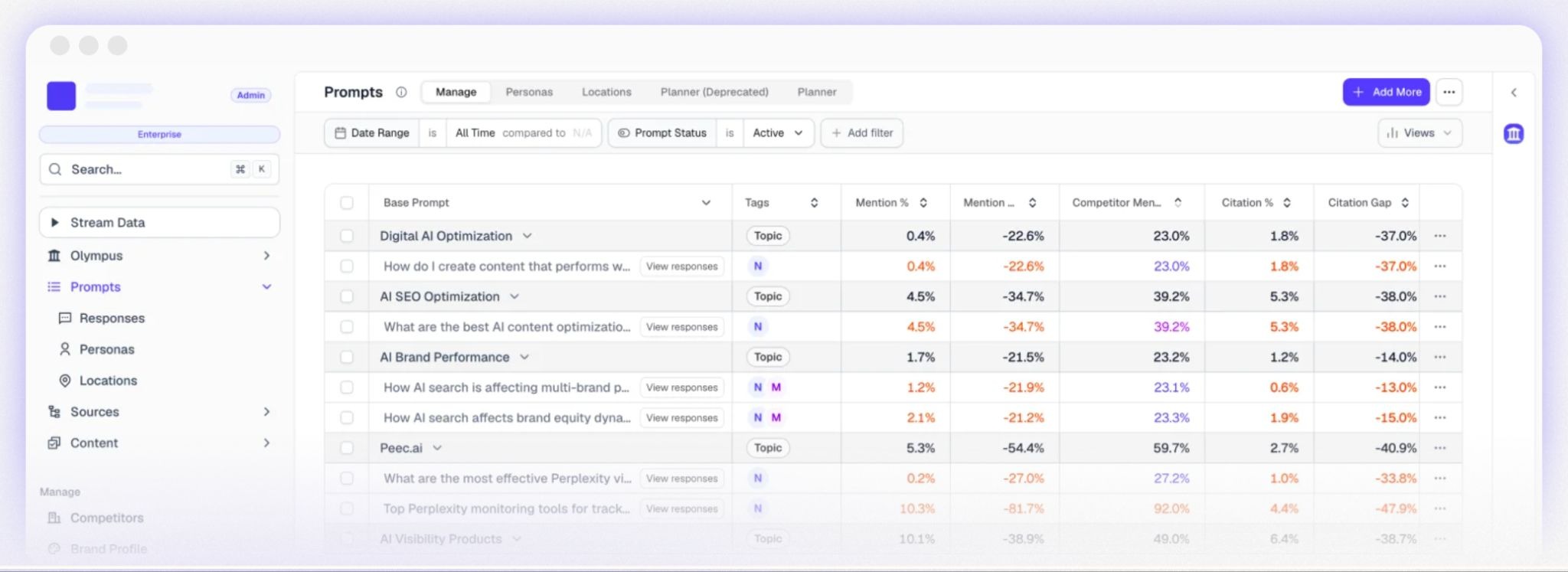

AthenaHQ doesn’t just tell you that ChatGPT mentioned your brand. It logs the prompt that triggered the mention, the full answer, and the position you were cited in, then stitches those snapshots into share-of-voice trend lines you can slice by engine, region, and topic.

For teams that previously checked AI visibility by pasting prompts into five chat windows on a Friday afternoon, this is genuine relief. The data comes back consistent and timestamped, usable in a share of voice report you can actually present.

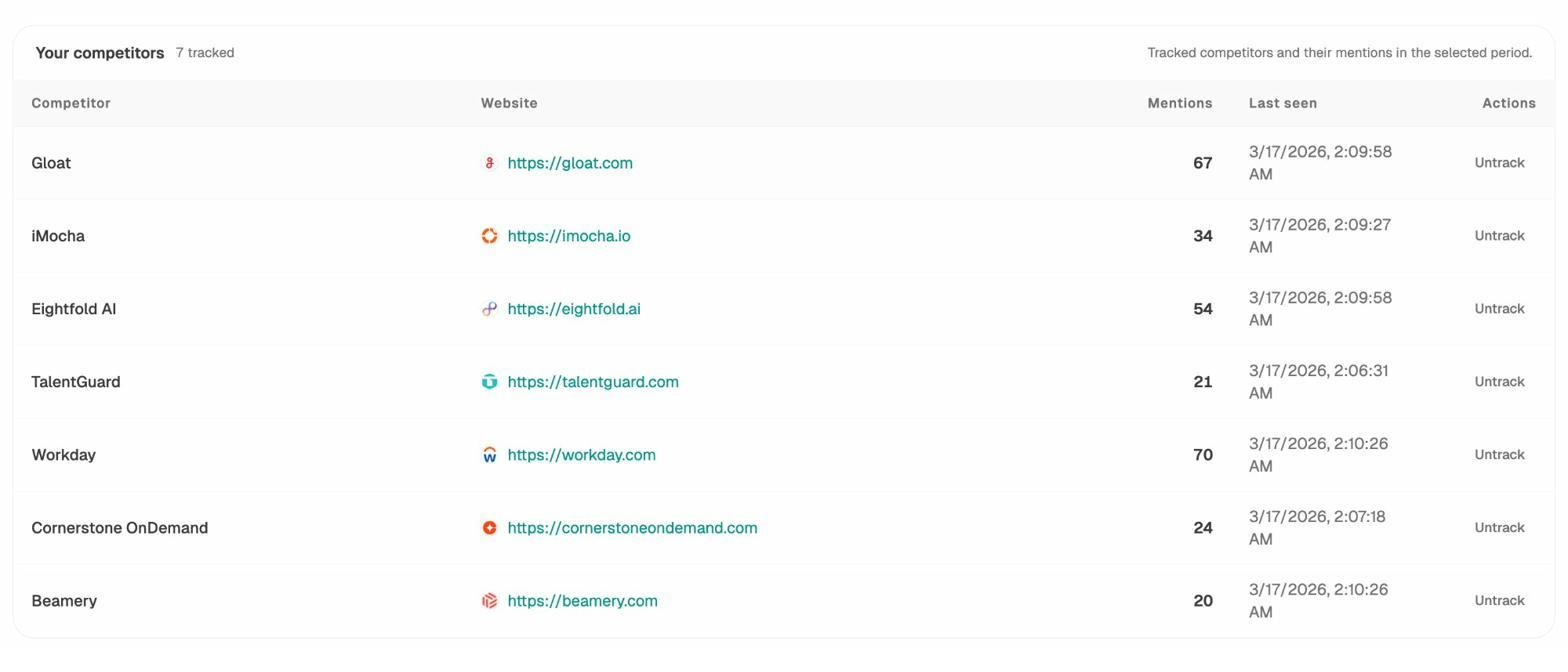

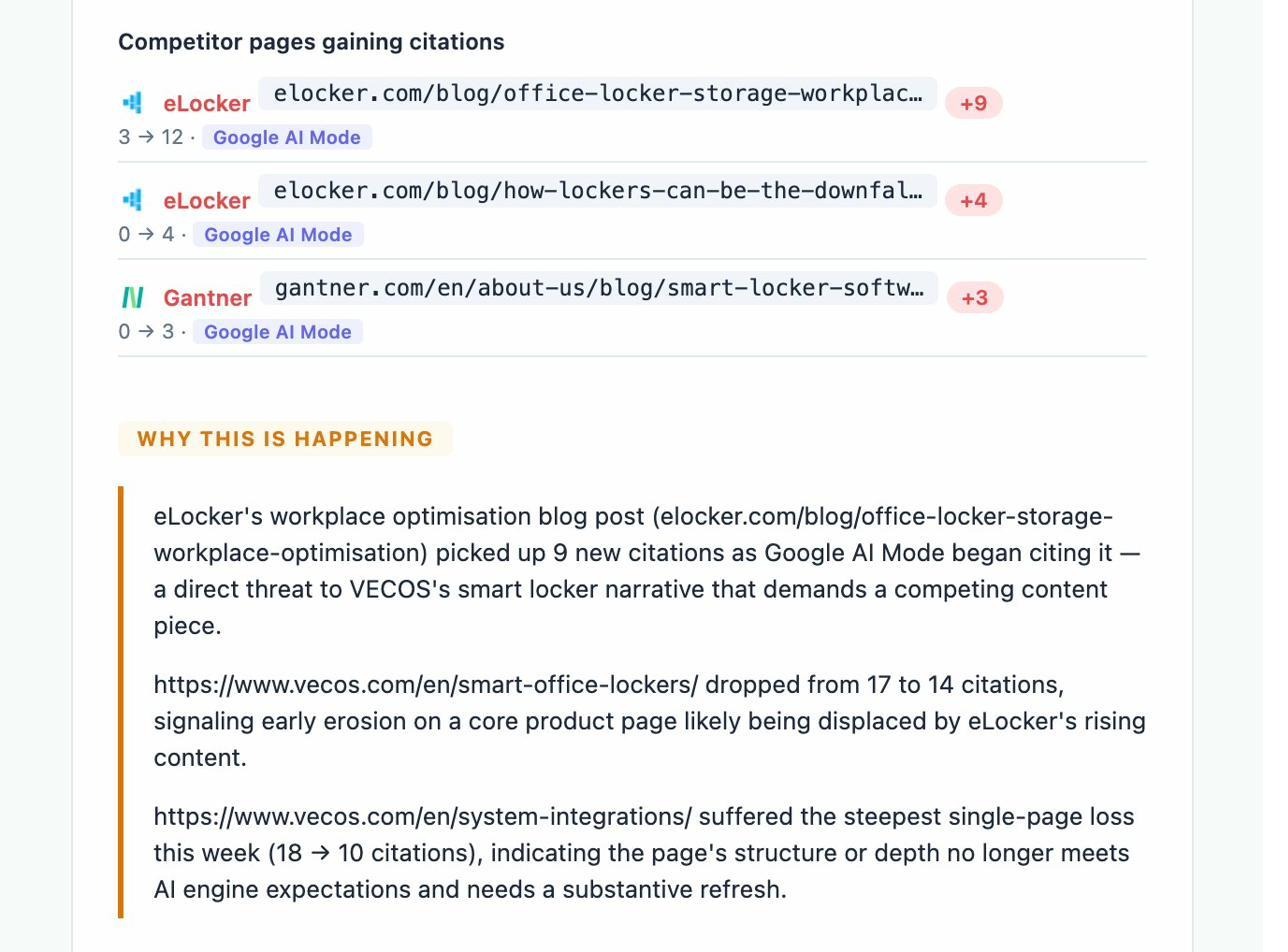

The competitive layer adds a second dimension. When a rival climbs on a high-intent prompt, AthenaHQ flags it and surfaces the sources behind the shift. The earlier you catch a competitor reframing the category, the cheaper your response is.

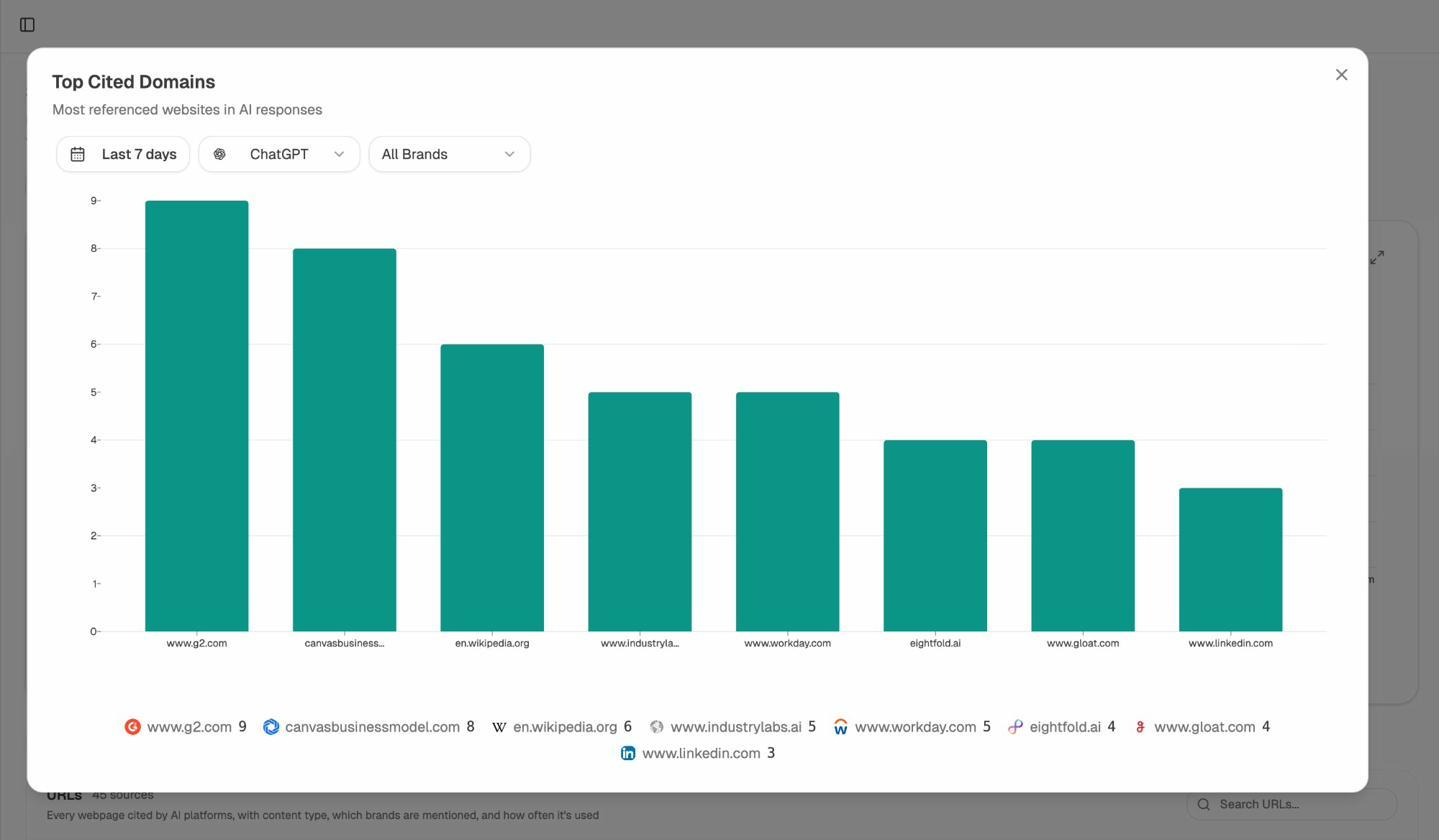

2. Citation source mapping

Ever wondered why a competitor with lower domain authority keeps showing up in ChatGPT answers? Citation source mapping is the answer. AthenaHQ identifies the URLs and domains AI models repeatedly pull from when generating answers in your category, how often each one is cited, and which prompts trigger those citations.

This turns “earn more backlinks” advice into a targeted list. Instead of pursuing 200 random publications, you focus on the 15 sources that actually shape the answers your buyers see. The work this surfaces, of course, still needs a person to do it. We’ll come back to that.
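To make the idea concrete, here is a minimal sketch of how a citation map could be reduced to a short target list. It is not AthenaHQ's implementation. The sample prompts, the URLs, and the `top_cited_domains` helper are all hypothetical, and the rule of counting a domain once per prompt is an assumption chosen so that one long answer can't dominate the ranking.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical sample: each tracked prompt maps to the URLs cited in its AI answer.
citations_by_prompt = {
    "best crm for startups": [
        "https://www.example-reviews.com/crm-roundup",
        "https://blog.example-vendor.com/crm-guide",
    ],
    "crm pricing comparison": [
        "https://www.example-reviews.com/crm-pricing",
        "https://forum.example.org/t/crm-costs",
    ],
    "crm for small teams": [
        "https://www.example-reviews.com/small-team-crm",
    ],
}


def top_cited_domains(citations, limit=15):
    """Rank domains by how many tracked prompts cite them at least once."""
    counts = Counter()
    for urls in citations.values():
        # Count each domain once per prompt so a single answer can't dominate.
        domains = {urlparse(u).netloc.removeprefix("www.") for u in urls}
        counts.update(domains)
    return counts.most_common(limit)


ranked_domains = top_cited_domains(citations_by_prompt)
print(ranked_domains[0])  # the domain cited by the most prompts
```

In this toy data one review site is cited by all three prompts, so it tops the list. That is the kind of source you would pitch first.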

3. The Action Center with prompt volume estimation

AthenaHQ’s Query Volume Estimation Model (QVEM) weights tracked prompts by how often they’re likely asked. Pair that with citation gaps and you get a ranked list of moves rather than a flat audit.

Each recommended action lands in the Action Center with the supporting evidence attached. After you act, AthenaHQ reruns the prompt set and shows whether the change moved the needle.
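AthenaHQ doesn't publish how QVEM or the Action Center ranking works, so treat the following as an illustration of the general idea only. It assumes a simple score, estimated volume multiplied by the share of answers where you are missing. The `PromptStat` fields, the sample numbers, and the scoring formula are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PromptStat:
    prompt: str
    est_monthly_volume: int  # hypothetical volume estimate for the prompt
    answers_checked: int     # AI responses captured for the prompt
    answers_citing_us: int   # responses that cited our brand


def priority(stat):
    """Assumed score: estimated volume x share of answers where we are missing."""
    gap = 1 - stat.answers_citing_us / stat.answers_checked
    return stat.est_monthly_volume * gap


stats = [
    PromptStat("best geo platform", 4000, 20, 15),
    PromptStat("geo tool pricing", 1500, 20, 2),
    PromptStat("what is geo", 9000, 20, 19),
]

# Highest score first: a ranked list of moves rather than a flat audit.
ranked_prompts = sorted(stats, key=priority, reverse=True)
for stat in ranked_prompts:
    print(f"{stat.prompt}: {priority(stat):.0f}")
```

The highest-volume prompt lands last here because the brand already appears in nearly every answer. Weighting by volume alone would have sent the team to the wrong page.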

For mid-market and enterprise teams that need to defend GEO investment in a quarterly review, the evidence trail matters. It converts “we’re working on AI visibility” into “we shifted from position 7 to position 3, and here’s the page that did it.”

Three things that frustrate users

1. The credit model burns faster than teams expect

One credit equals one AI response. Track three prompts across five engines, daily, and you’re at 450 credits a month. Add a competitor brand, a region, weekly ad-hoc checks. The numbers move fast.

The Self-Serve plan is roughly $295 a month on annual billing, with ~3,500 credits included. Sounds reasonable until an analyst has a curious afternoon. Several G2 reviewers describe burning through a month’s allocation in the first week because they enabled too many prompts across all engines at once.

Add-ons exist (about $100 for ~1,250 extra credits), which keeps the platform flexible but breaks the most basic finance question, “what does this cost per month.” For an agency, that question multiplies by every client.
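The credit arithmetic is easy to sanity-check yourself. The sketch below uses the approximate figures quoted above (~3,500 included credits, ~$100 per ~1,250-credit add-on pack). Treating the included credits as a monthly allowance is an assumption, as are the function names and the 30-day month.

```python
import math


def monthly_credits(prompts, engines, runs_per_day=1, days=30):
    """One credit per AI response: prompts x engines x runs per day x days."""
    return prompts * engines * runs_per_day * days


def addon_cost(credits_needed, included=3500, pack_size=1250, pack_price=100):
    """Cost of the add-on packs needed to cover usage beyond the included credits."""
    overage = max(0, credits_needed - included)
    packs = math.ceil(overage / pack_size)
    return packs * pack_price


base = monthly_credits(prompts=3, engines=5)       # the three-prompt example above
expanded = monthly_credits(prompts=30, engines=5)  # the same setup with 30 prompts

print(base, addon_cost(base))
print(expanded, addon_cost(expanded))
```

Because packs are bought whole, the cost moves in steps rather than in proportion to usage, which is part of why the monthly number is hard to forecast.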

2. Time-to-impact and the attribution gap

AthenaHQ tells you when AI engines mention you. It does not, by default, tell you whether those mentions translated into traffic, sessions, or revenue. The integrations help (GA4, GSC, Shopify), but the conversion story still has to be assembled by your team.

This becomes the recurring boardroom problem. Visibility goes up. Sentiment improves. Pipeline stays flat. Without a tight read on which prompts drove which sessions to which converting page, the platform’s value gets relitigated every quarter. Teams either build their own attribution layer in BI (expensive in engineering time) or switch to a platform that ties AI traffic to landing-page conversions by default. The second option is the reason most teams eventually evaluate alternatives.

3. Tooling churn and onboarding drift

AthenaHQ ships fast. Founder-led products usually do. The flip side is that small UI changes, new filter behaviors, and renamed reports show up frequently enough to make internal documentation go stale within weeks.

For a single team this is annoying. For an agency running across ten clients with junior analysts hopping between accounts, it becomes a weekly retraining problem. Screenshots in a playbook stop matching the live product, the “ten-minute weekly check” turns into a thirty-minute “where did that button go” exercise, and trust in the readout slowly erodes.

None of these issues are dealbreakers in isolation. Together, they explain why the AthenaHQ vs alternatives conversation is now a normal Q4 budget discussion. The AthenaHQ alternatives and Profound vs Peec vs AthenaHQ breakdowns are worth a read alongside this one.

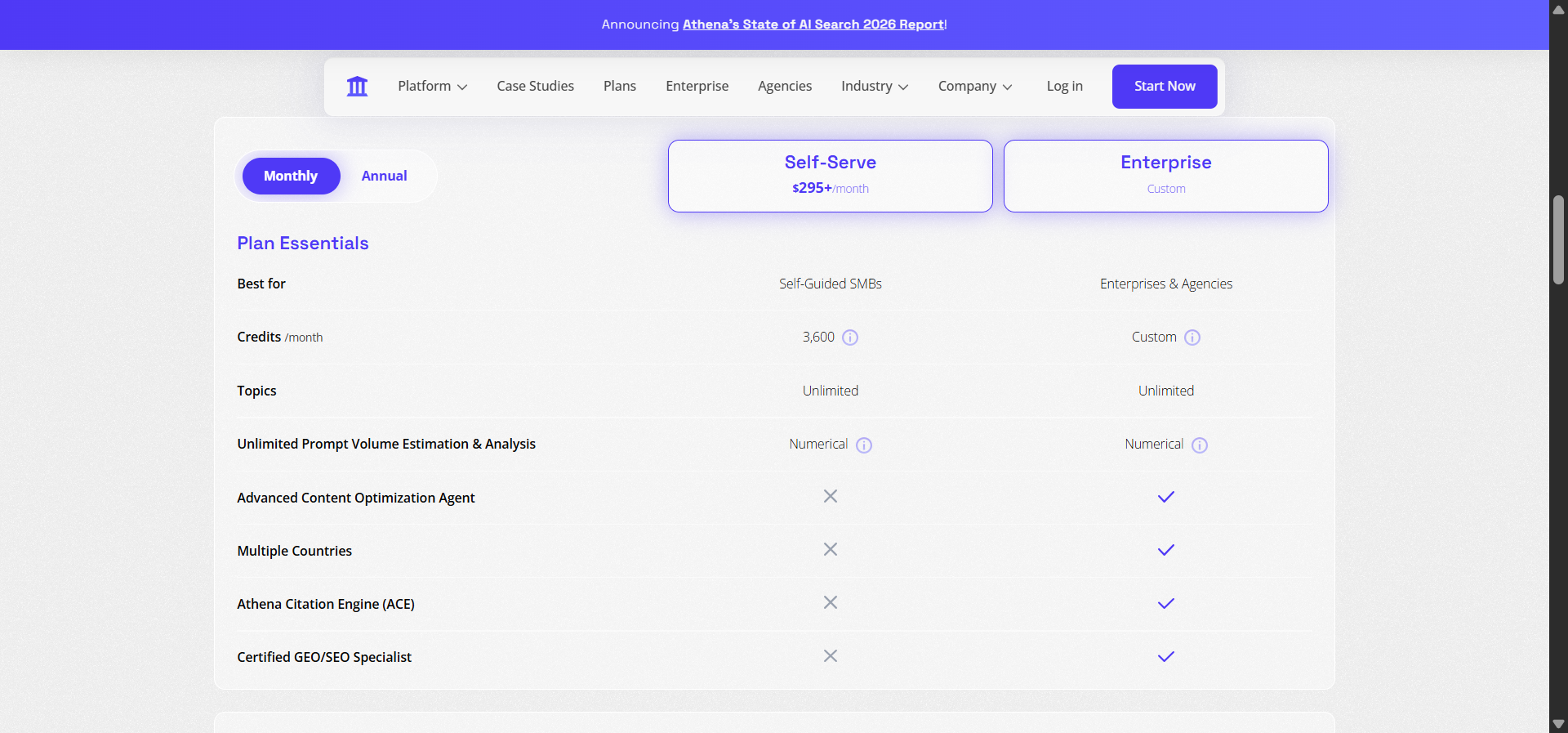

AthenaHQ pricing in plain numbers

Strip the marketing language out, and AthenaHQ’s pricing in 2026 looks like this.

| Plan | Price (billed annually) | Credits | Best fit |
|---|---|---|---|
| Self-Serve / Lite | ~$295/mo | ~3,500/year | Solo SEO leads or single-brand teams |
| Growth | ~$545/mo | Higher cap | Mid-market teams tracking multiple competitors and engines |
| Enterprise | $2,000+/mo | Custom | Agencies and Fortune 500 teams needing multi-region and SSO |
| Add-ons | ~$100 | +1,250 credits | Anyone who underestimated their volume in month one |

One credit equals one AI response. Run a prompt across ChatGPT, Perplexity, and Gemini and you’ve spent three credits. Run it daily for a month and you’re at ~90. Track 30 prompts the same way and you’re at ~2,700 before any ad-hoc work. The model rewards restraint, and most teams that buy a GEO platform are buying it precisely because they don’t want to ration their curiosity.
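You can also run the math in reverse and ask how many prompts a given credit budget supports. This is a rough sketch: `max_daily_prompts` and the ad-hoc reserve are hypothetical, and the budget is assumed to cover one 30-day period of daily tracking.

```python
def max_daily_prompts(credit_budget, engines, days=30, adhoc_reserve=0):
    """Largest number of prompts that can run once a day on every engine.

    adhoc_reserve is the number of credits held back for one-off checks.
    """
    usable = credit_budget - adhoc_reserve
    return usable // (engines * days)


# Three engines, nothing held back for ad-hoc work.
print(max_daily_prompts(3500, engines=3))

# Five engines, with 700 credits reserved for ad-hoc checks.
print(max_daily_prompts(3500, engines=5, adhoc_reserve=700))
```

If your real prompt list is closer to 300 than 30, this is where the gap shows up, before the first invoice rather than after it.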

Before you commit to a credit plan, run your own website traffic check, SERP check, and website authority check on the prompts and pages you actually care about. The free tools won’t replace AthenaHQ. They will tell you whether your real prompt list is 30 or 300.

What sits outside the AthenaHQ dashboard

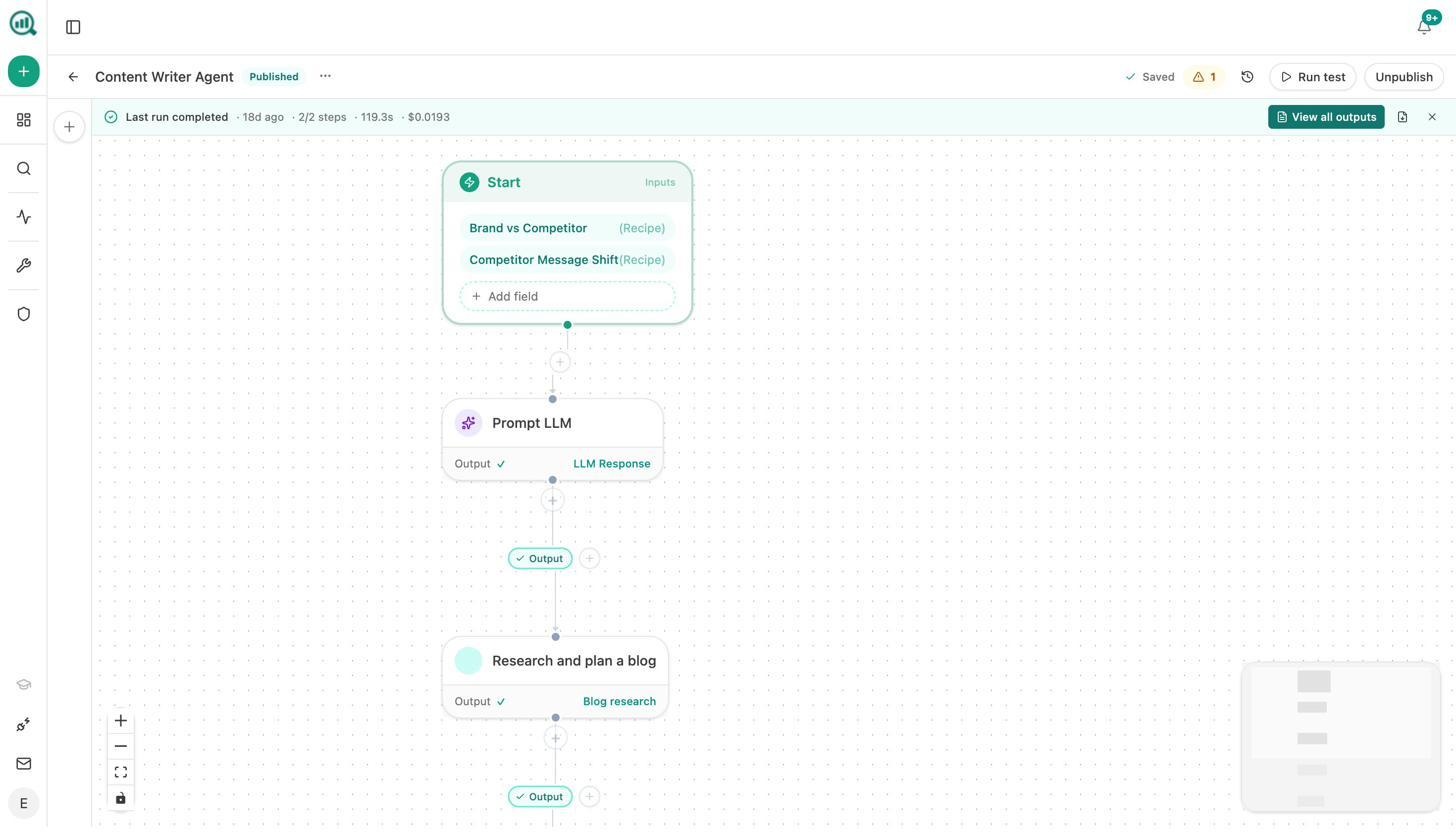

Here’s the pattern almost every AthenaHQ buyer reports somewhere in month two or three.

The dashboard tells you a competitor’s page just earned 9 new citations on Google AI Mode. Useful. The dashboard tells you your own page on the same topic dropped from 17 citations to 14. Also useful. The dashboard does not write the brief, rewrite your page, draft the journalist outreach, or assemble the Monday board pack. Those jobs go back to your team, the same team that bought the platform to save time.

For teams that only need monitoring, that trade-off is fine. For teams that bought a GEO tool to compress the gap between insight and action, that gap is the whole problem. It’s the gap that drove us to build Analyze AI the way we did.

Analyze AI, the agentic SEO and content platform that closes the loop

We don’t think SEO is dead. We don’t think AI search replaced it. AI search is another organic channel that compounds with the SEO work you’ve been doing for years, and buyers still choose brands the way they always have, on the strength of clear, useful, original content.

What changed is the surface. So we built Analyze AI as the agentic SEO and content platform, with a visibility, traffic, and revenue layer underneath, and a programmable agent layer on top that lets you run almost any SEO, content, brand, PR, or growth workflow you’d otherwise glue together with five tools.

Three things to know. You see actual conversions, not just mentions. You get a Content Writer and a Content Optimizer that turn ideas into published, brand-voice-true posts without leaving the platform. And the Agent Builder lets you orchestrate the rest of your stack (GSC, GA4, Semrush, DataForSEO, HubSpot, Notion, WordPress, Mailchimp, and more) into reliable, scheduled, or event-triggered automations. Below is how each layer earns its place.

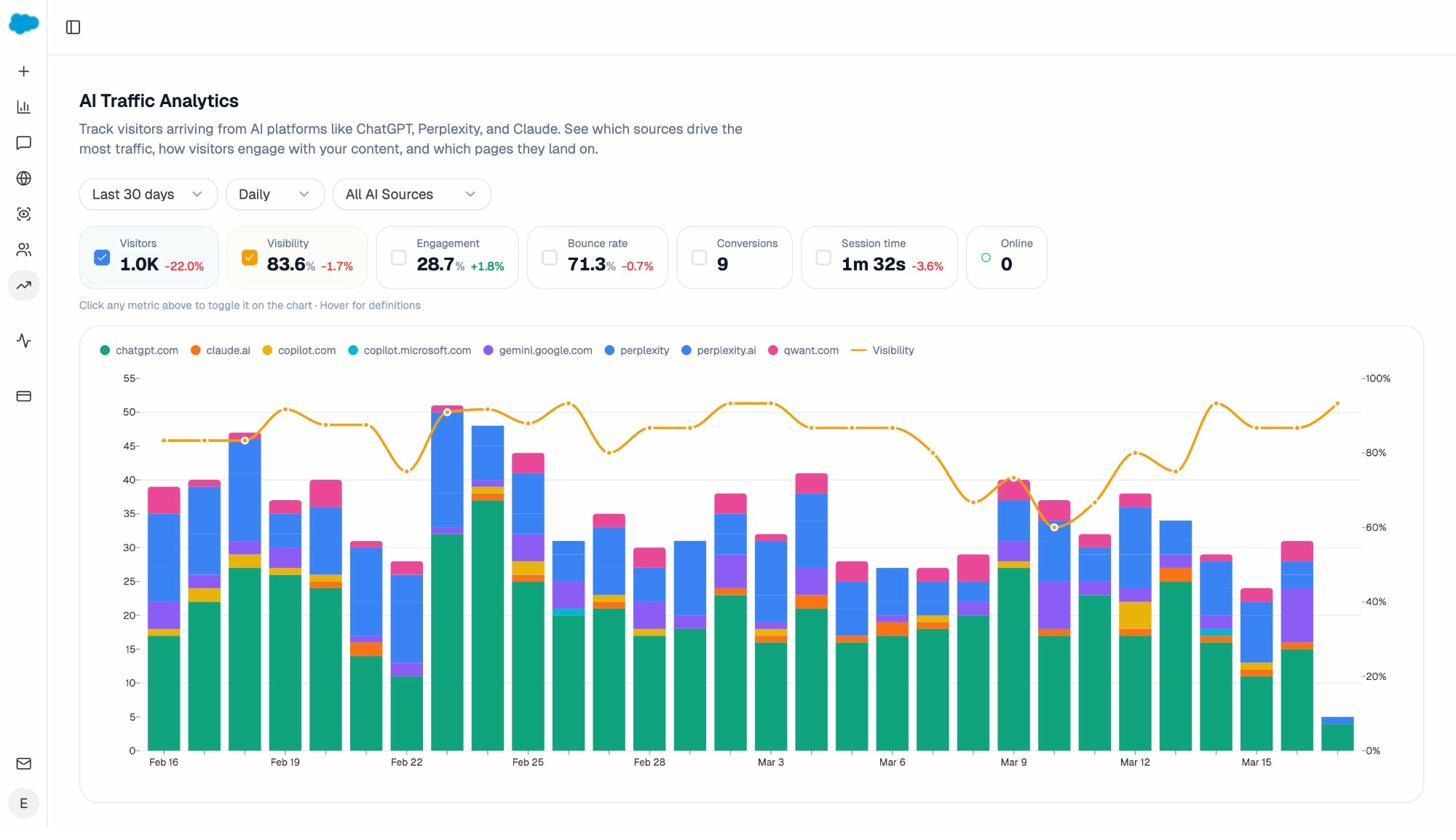

See what AI search drives, not just where you’re mentioned

The AI Traffic Analytics view attributes every session arriving from an answer engine to its origin: ChatGPT, Perplexity, Claude, Copilot, Gemini, and the long tail. You see daily volume, visibility trend, engagement, bounce, session time, and conversions in one view.

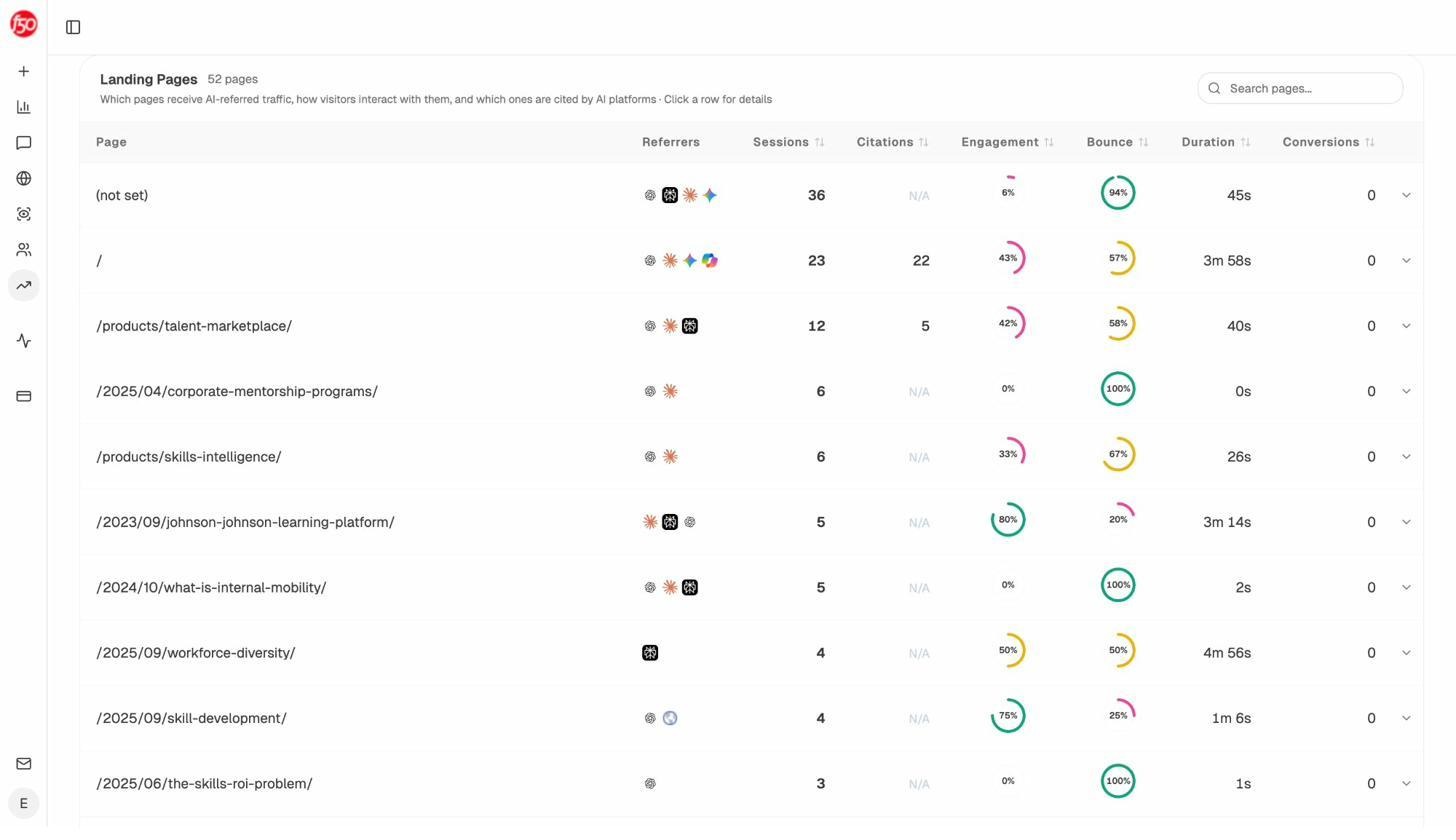

Drop into the Landing Pages report and you see, page by page, which AI engines sent the traffic and whether it converted. This is the layer that turns AI visibility into a defensible business case.

If your product comparison page is converting Perplexity sessions at 12% and your old explainer is bouncing 94% of ChatGPT visits, you stop debating “is GEO worth it” and start moving budget toward the work that pays back.
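For a sense of what page-level attribution involves, here is a minimal sketch of the aggregation. It is not how Analyze AI computes it. The referrer hostnames, the session record shape, and both helper functions are assumptions, so check the hostnames against your own analytics data before relying on them.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Assumed referrer hostnames per engine; verify against your own analytics data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def engine_for(referrer):
    """Map a referrer URL to an AI engine name, or None if it isn't AI traffic."""
    host = urlparse(referrer).netloc.lower().removeprefix("www.")
    return AI_REFERRERS.get(host)


def rates_by_page_and_engine(sessions):
    """Return {(page, engine): (sessions, conversion_rate, bounce_rate)}."""
    totals = defaultdict(lambda: [0, 0, 0])  # sessions, conversions, bounces
    for s in sessions:
        engine = engine_for(s["referrer"])
        if engine is None:
            continue  # skip non-AI traffic
        row = totals[(s["page"], engine)]
        row[0] += 1
        row[1] += s["converted"]
        row[2] += s["bounced"]
    return {key: (n, c / n, b / n) for key, (n, c, b) in totals.items()}


# Hypothetical session log.
sessions = [
    {"referrer": "https://www.perplexity.ai/search?q=x", "page": "/compare", "converted": True, "bounced": False},
    {"referrer": "https://www.perplexity.ai/", "page": "/compare", "converted": False, "bounced": False},
    {"referrer": "https://www.perplexity.ai/", "page": "/compare", "converted": False, "bounced": True},
    {"referrer": "https://www.perplexity.ai/", "page": "/compare", "converted": False, "bounced": False},
    {"referrer": "https://chatgpt.com/", "page": "/explainer", "converted": False, "bounced": True},
    {"referrer": "https://chatgpt.com/", "page": "/explainer", "converted": False, "bounced": True},
    {"referrer": "https://www.google.com/", "page": "/compare", "converted": True, "bounced": False},
]

report = rates_by_page_and_engine(sessions)
for (page, engine), (n, conv, bounce) in report.items():
    print(f"{page} via {engine}: {n} sessions, {conv:.0%} converted, {bounce:.0%} bounced")
```

The hard part in practice is the input, not the aggregation: many AI-originated visits arrive without a referrer, so any table like this undercounts.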

Track the prompts buyers use, and where competitors win on them

You get visibility, sentiment, and rank for every tracked prompt across major LLMs, scoped by competitor. You see who appears beside you, where they overtake you, and which prompts you’ve never been mentioned in. Don’t know which prompts to track? Use the prompt suggestion feature, which surfaces realistic, bottom-of-funnel queries based on your category and competitors, so you don’t have to brainstorm them yourself.

For more on this loop, the AI search competitor analysis guide walks through it end to end.

Audit the sources AI models actually trust

Citation Analytics shows the domains and URLs that AI engines repeatedly pull from when answering questions in your category, plus how often each model uses each source. This turns generic backlink work into focused outreach to the 10 to 20 sources that move the needle in AI answers, not the 200 that don’t.

The brand mentions guide and the audit brand mentions playbook both lean on this view to build a real outreach list.

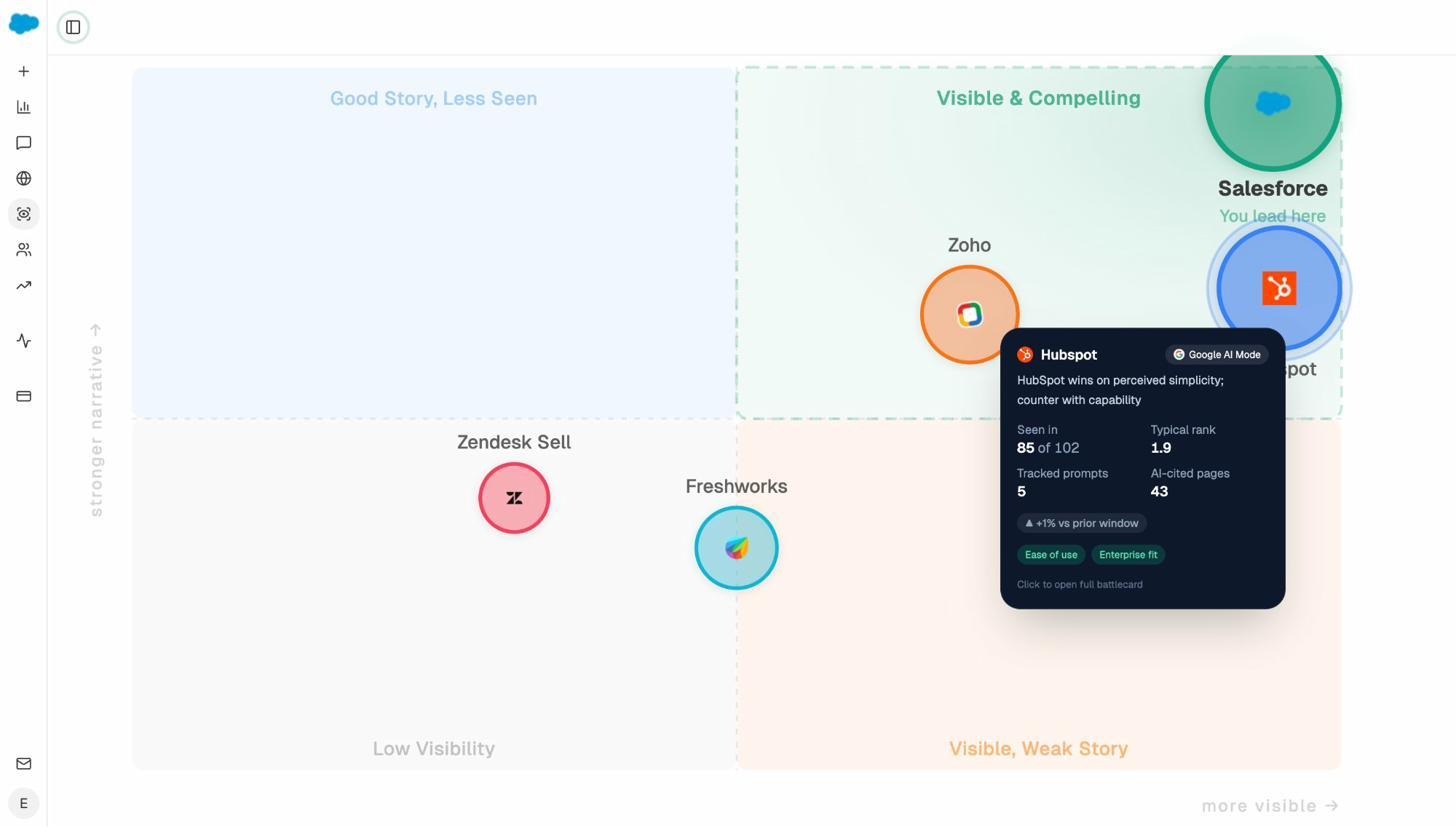

Read your category as a quadrant, not a leaderboard

The Perception Map plots every brand in your category on a 2D quadrant. Visibility on one axis, narrative strength on the other. You see at a glance whether you’re in “Visible and Compelling,” “Good Story, Less Seen,” “Visible, Weak Story,” or “Low Visibility,” and what that tells you about the next move.

AI Battlecards round it out with per-competitor strengths, weaknesses, and recommended counter-narratives, ready to drop into a sales deck.
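The four Perception Map quadrants reduce to two yes/no questions. The sketch below assumes both axes are normalized to a 0 to 1 scale with a midpoint cutoff of 0.5. The scale, the cutoffs, and the `quadrant` function are illustrative choices, not the platform's actual scoring.

```python
def quadrant(visibility, narrative, vis_cut=0.5, story_cut=0.5):
    """Place a brand in one of the four quadrants from its two axis scores."""
    visible = visibility >= vis_cut
    compelling = narrative >= story_cut
    if visible and compelling:
        return "Visible and Compelling"
    if compelling:
        return "Good Story, Less Seen"
    if visible:
        return "Visible, Weak Story"
    return "Low Visibility"


# Hypothetical brands with (visibility, narrative strength) scores.
brands = {"Acme": (0.8, 0.7), "Birch": (0.3, 0.9), "Cobalt": (0.75, 0.2), "Dune": (0.1, 0.1)}
placements = {name: quadrant(*scores) for name, scores in brands.items()}
print(placements)
```

Each quadrant implies a different next move: a brand in "Good Story, Less Seen" needs distribution, while one in "Visible, Weak Story" needs better content.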

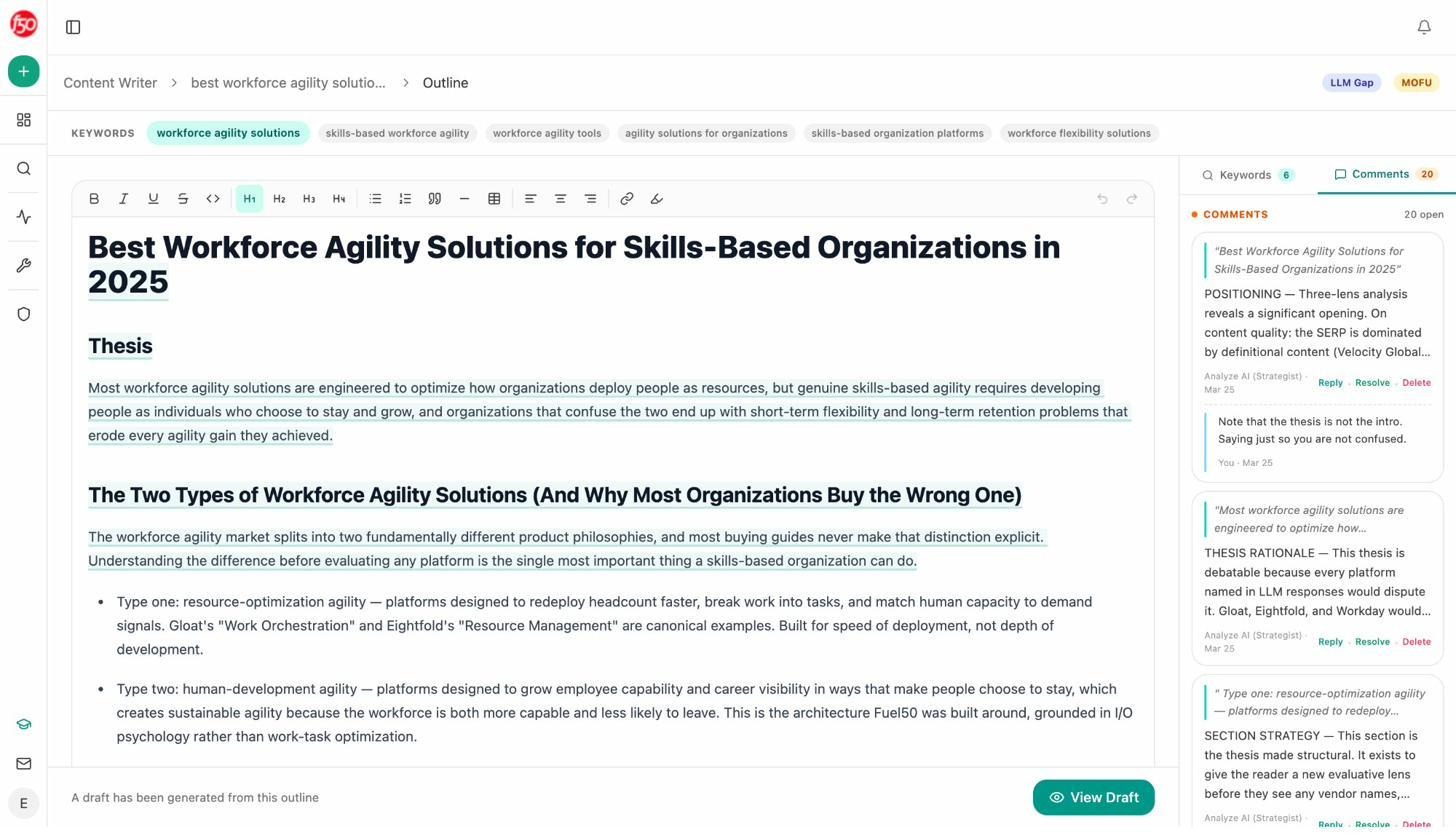

Write articles with research, outlines, and drafts in one pipeline

The Content Writer is not a one-shot prompt box. It’s a research → outline → draft pipeline with a strategist layer that comments on your thesis, sections, and draft as it goes. By the time a piece is ready to publish, it’s been reviewed for argument, structure, and brand voice, with target keywords and LLM gaps tracked on the same page. If you’ve used content briefs as a discipline, this is what one looks like when it isn’t a Google Doc.

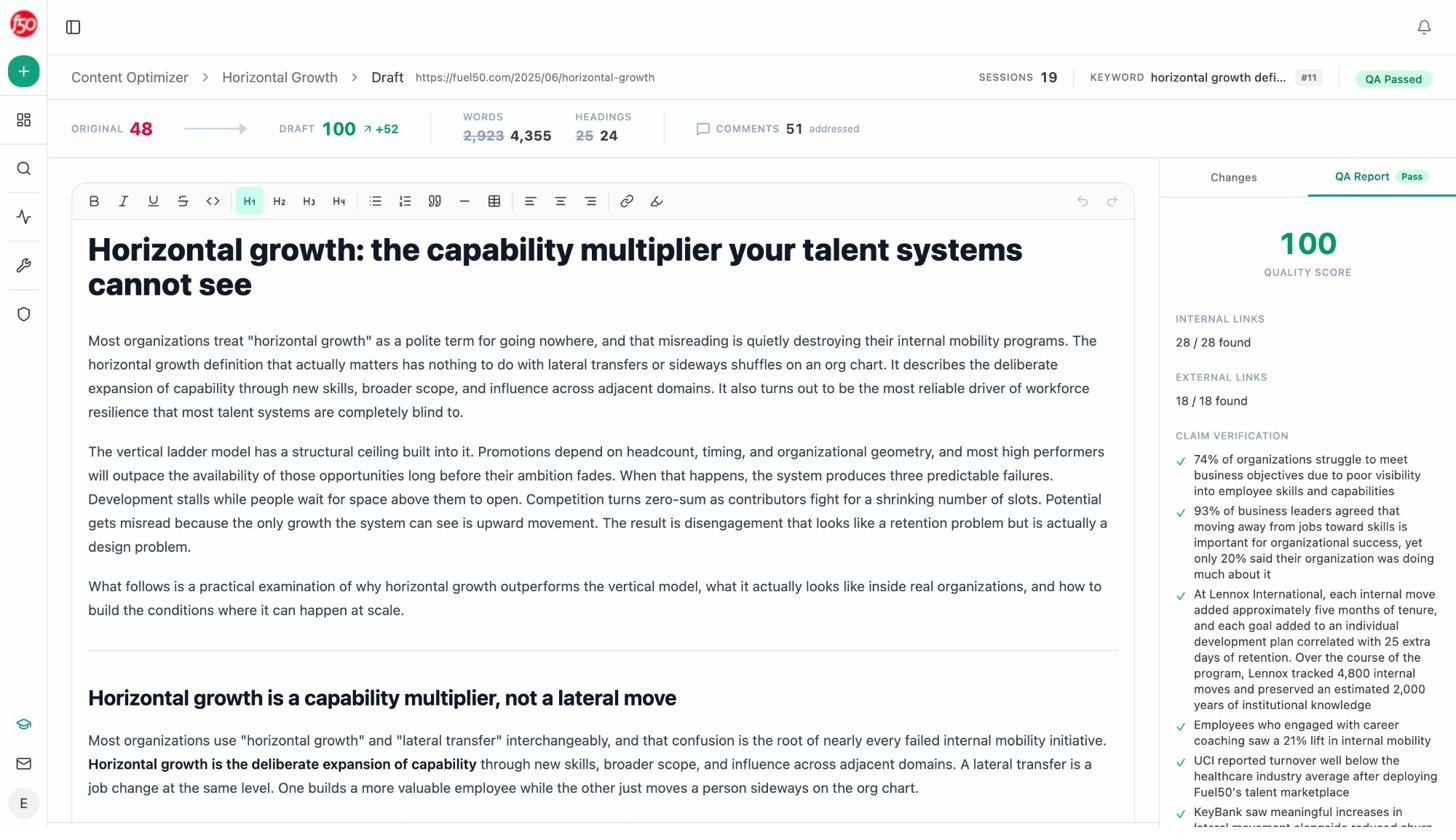

Optimize existing pages with a real QA report, not just a score

Point the Content Optimizer at any URL. It pulls the live page, scores it, drafts a rewritten version, and ships a QA Report that verifies every claim, checks every internal and external link, and flags whatever needs human judgment. This is the layer that turns “AthenaHQ flagged this page” into “the page is rewritten, the claims are sourced, the links resolve, and we’re ready to ship.” Pair it with the republishing content playbook and you have a refresh engine, not a refresh suggestion.

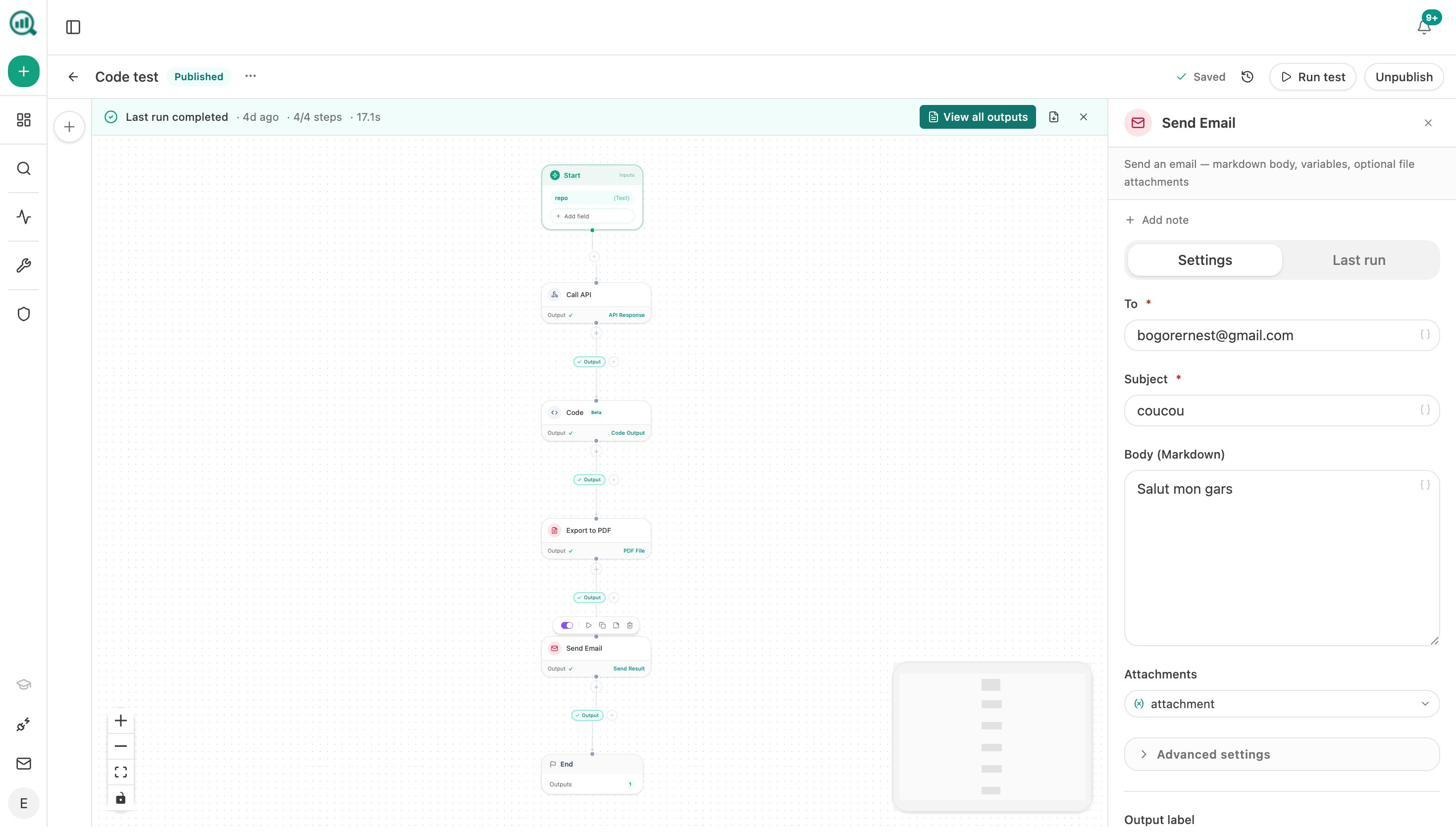

Build agents to run the rest of your operation

This is the part most teams underestimate. The Analyze AI Agent Builder is a programmable substrate with 180+ nodes covering AI, web research, SEO (Semrush, DataForSEO), GSC, GA4, HubSpot, Notion, WordPress, Mailchimp, Sanity, Contentful, Hunter, Tomba, plus logic, control flow, exports, and code. Pair any of those with 34 pre-baked data recipes (share-of-voice, competitor gaps, declining pages, citation magnets, prompt cluster briefs) and you can automate almost any SEO, content, brand, PR, or growth workflow your team currently runs by hand.

Three triggers turn agents into always-on operators. Run on demand, schedule on a cron, or fire from a webhook (HubSpot deal closes, Notion brief gets approved, media-monitoring tool flags negative coverage), and the agent runs in seconds. A few real examples teams have built.

| Use case | What the agent does |
|---|---|
| Monday board prep (CMO) | Schedule (Mon 7am): pulls share-of-voice + AI traffic + new HubSpot deals + GSC top pages, drafts an exec summary in brand voice, exports DOCX, emails leadership |
| Daily visibility regression alert | Schedule: runs the visibility-losers recipe over the last 24h, drafts a counter-content brief, posts to Slack |
| Brief-to-publish pipeline | Webhook (Notion brief approved): runs research → outline → full draft → AEO scorecard → if score > 80, publish to WordPress with featured image. If not, ping the writer |
| Crisis response on autopilot (PR) | Webhook (negative coverage flagged): pulls the article, finds the journalist via Tomba, drafts three response options in brand voice, slacks the crisis team |
| Per-client agency reporting | Schedule (looped over client list): assembles each client’s report in parallel, exports DOCX, emails the account team |

The point isn’t the templates. Anything you can describe as inputs, data pulls, decisions, and outputs is a candidate for an agent. The platform also keeps a memory of past runs, so an agent can read its own history and decide “if last week’s report showed a drop, this week dig deeper.” For more, see our content automation deep dive.
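The decision steps in these agents are ordinary branching logic. The sketch below shows two of them in plain Python: the score gate from the brief-to-publish pipeline and the "read last week's run" check. It is not Agent Builder syntax. The function names, return labels, and the shape of the run history are hypothetical.

```python
def route_draft(aeo_score, threshold=80):
    """Publish only when the score clears the threshold; otherwise hand back to a human."""
    return "publish_to_wordpress" if aeo_score > threshold else "ping_writer"


def next_report_depth(run_history):
    """Use the most recent run's outcome to decide how deep this week's report goes."""
    if run_history and run_history[-1]["visibility_change"] < 0:
        return "deep_dive"
    return "standard"


# Hypothetical memory of past runs, oldest first.
history = [
    {"week": "2026-W01", "visibility_change": 0.02},
    {"week": "2026-W02", "visibility_change": -0.03},
]

print(route_draft(86))             # clears the gate
print(route_draft(80))             # exactly 80 does not, since the rule is "score > 80"
print(next_report_depth(history))  # last week dropped, so dig deeper
```

Writing the gate as a strict comparison mirrors the "if score > 80" rule in the table. Whether a borderline draft should ship is a judgment call worth making explicit in any workflow you build.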

Get the weekly digest that actually says something

Weekly Email Digests summarize what changed and why, in plain English. Competitor pages that gained citations. Your pages that lost them. The likely explanation. Your team starts Monday with a brief, not a blank dashboard.

Side by side, where Analyze AI lands vs AthenaHQ

| Capability | AthenaHQ AI | Analyze AI |
|---|---|---|
| AI visibility tracking across major LLMs | Yes | Yes |
| Prompt-level visibility, sentiment, position | Yes | Yes |
| Citation source mapping | Yes | Yes |
| Competitor benchmarking | Yes | Yes (Competitor Intelligence) |
| AI traffic attribution to pages and conversions | Limited (via Shopify/GA4) | Native, page-level, with revenue ties |
| Perception quadrant view | No | Yes |
| AI Battlecards per competitor | No | Yes |
| Content Writer (research → outline → draft) | Limited (recommendation engine) | Full pipeline |
| Content Optimizer with claim and link QA | Limited | Yes |
| Agent Builder (180+ nodes, schedule/webhook/manual) | No | Yes |
| Pre-built data recipes | No | 34 |
| Pricing model | Credit-based | Predictable tiers |

For the deeper feature-by-feature view, see Analyze AI vs AthenaHQ.

The honest verdict

Keep AthenaHQ if your single job is monitoring how AI engines portray your brand, your team can absorb the credit volatility, and you have a separate execution stack already running well.

Move to Analyze AI if you want monitoring, conversion attribution, content production, and the agentic layer that runs the operations work in the background. You stop paying for two tools and you stop paying your team to do the gluing.

Both tools work better than guessing. The real question isn’t which AI visibility tool to buy. It’s whether the tool you buy moves your team from watching the dashboard to shipping the work. That’s the line we built Analyze AI to cross.

To see what your AI visibility looks like before you commit to anything, run the free AI website audit on your domain. It takes a minute and gives you the baseline you’d otherwise pay for.

Ernest

Ibrahim