
Every GEO tool sells the same promise. See your brand inside ChatGPT, Perplexity, Claude, and the rest, then do something about it. Scrunch sells the “see” half well. The “do something” half is where most teams quietly hit a wall.

This review draws on Scrunch’s pricing, G2 reviews, and direct comparison against other AI search visibility tools. Does $300 to $1,000 a month turn into pipeline, or just into a sharper dashboard?


What Scrunch AI is, in plain terms

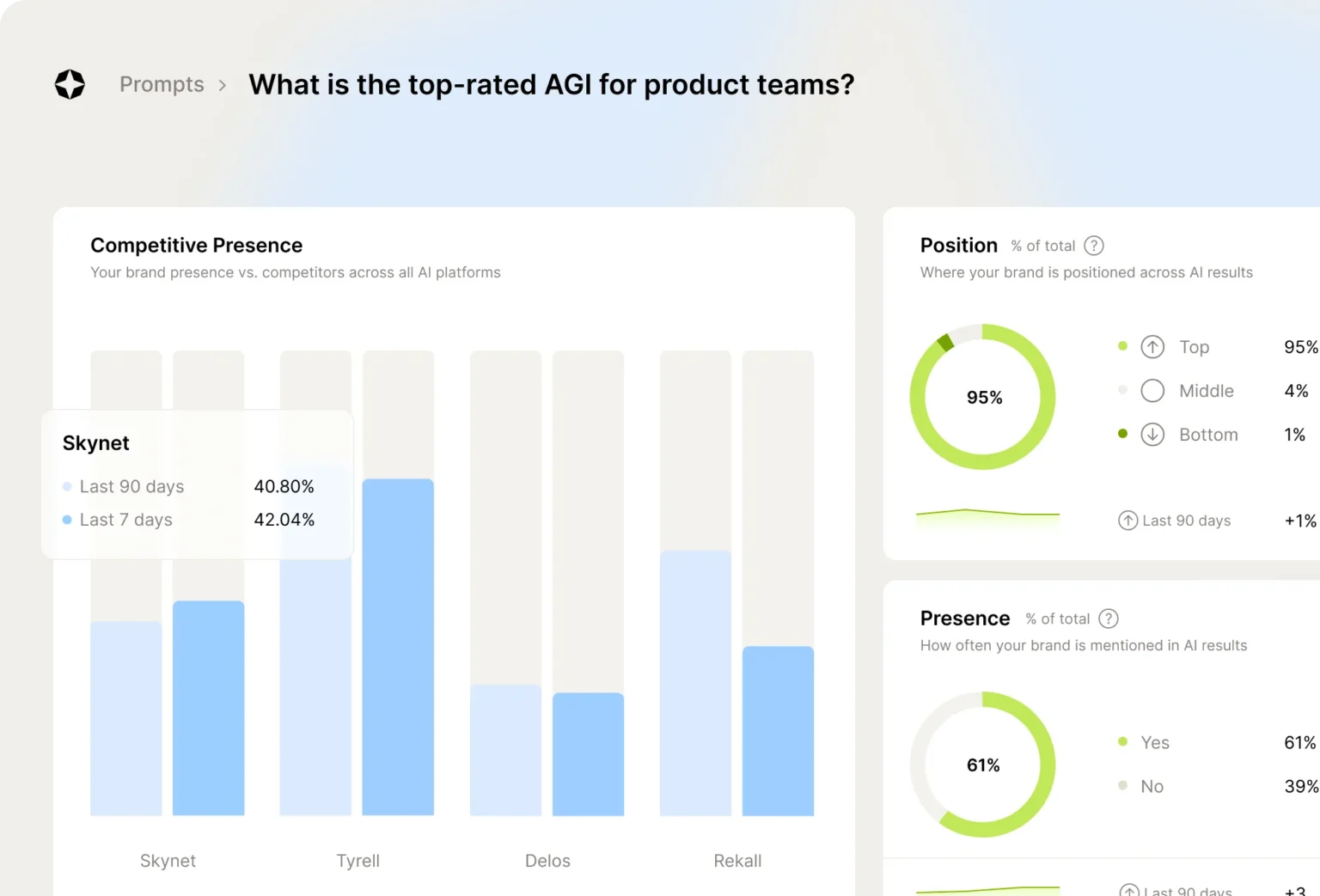

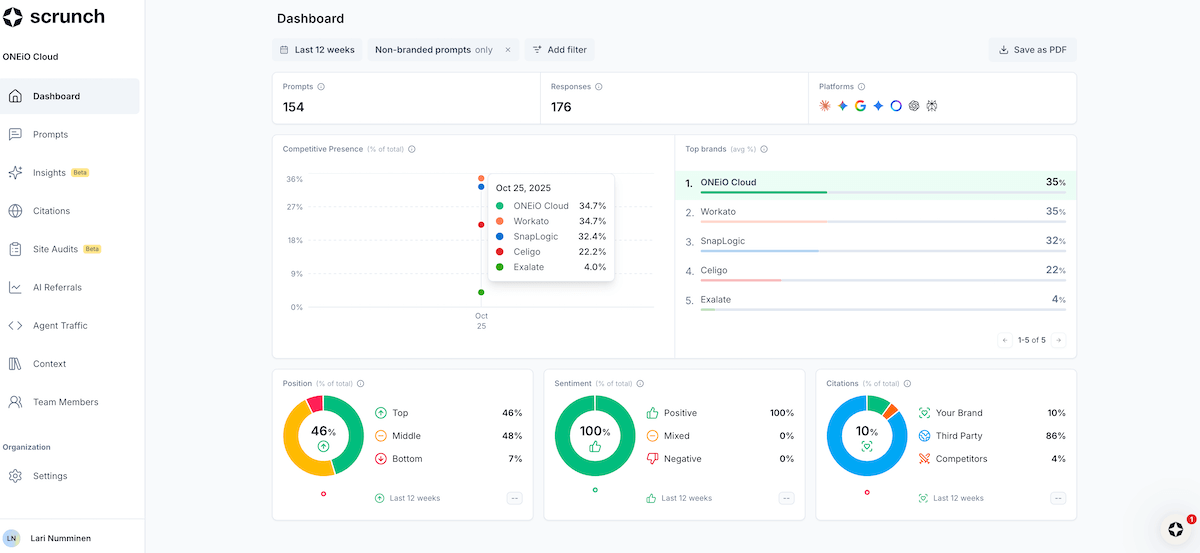

Scrunch AI is a generative engine optimization platform that monitors how your brand appears across major LLMs. It tracks ChatGPT, Gemini, Perplexity, Claude, Copilot, Meta AI, Google AI Mode, and Google AI Overviews, scoring whether your brand gets mentioned, how it ranks against competitors, and which sources the models cite when answering questions in your category.

Founded in 2023, Scrunch has raised a $4M seed plus a $15M Series A from Decibel, Mayfield, and Homebrew. SOC 2 compliance, white-glove onboarding, and a GA4 integration round out what it positions as an enterprise-grade product.

The thesis is simple. As buyers get more answers from AI assistants and fewer from blue links, brands need an analytics layer for the AI half of search. Scrunch builds that layer. It does not build the layer that fixes the gaps it surfaces. That distinction shapes the rest of this review.

Three things Scrunch AI does well

For the price, Scrunch is genuinely strong in three areas. None of these are gimmicks. They show up in how the dashboard is built and in how customers describe it on G2.

Cross-LLM monitoring and prompt families

Scrunch samples answers from eight engines on a recurring schedule and groups related questions into prompt families. Instead of tracking one keyword at a time, you see clusters of intent. Every prompt about “best CRM for small business” rolls into one family with one trend line.

That structure absorbs the natural rephrasing buyers use, so you stop chasing identical-meaning questions across dozens of rows. It also gives you a single visibility number per topic instead of fragmented data nobody can act on in a meeting.
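Here is a minimal Python sketch of what "prompt families" can mean in practice. It greedily clusters prompts by word overlap (Jaccard similarity), then computes one visibility score per family: the share of sampled answers that mention the brand. This is an illustrative assumption, not Scrunch's documented method. The stopword list, the 0.5 threshold, the sample answers, and the brand name "Acme" are all hypothetical.

```python
import re

# Hypothetical stopword list: filler words that don't change a prompt's intent.
STOPWORDS = {"a", "an", "the", "for", "what", "which", "is", "are", "to", "of", "in", "my", "i", "do", "you"}

def tokens(prompt: str) -> set[str]:
    """Lowercase the prompt, split on non-alphanumerics, and drop stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", prompt.lower()) if w not in STOPWORDS}

def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two token sets, from 0 (disjoint) to 1 (identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def group_into_families(prompts: list[str], threshold: float = 0.5) -> list[list[str]]:
    """Greedy clustering: each prompt joins the first family whose seed it resembles enough."""
    families: list[tuple[set[str], list[str]]] = []
    for prompt in prompts:
        toks = tokens(prompt)
        for seed, members in families:
            if jaccard(toks, seed) >= threshold:
                members.append(prompt)
                break
        else:
            families.append((toks, [prompt]))
    return [members for _, members in families]

def family_visibility(answers: dict[str, list[str]], members: list[str], brand: str) -> float:
    """Share of sampled answers, across all prompts in a family, that mention the brand."""
    sampled = [text for prompt in members for text in answers.get(prompt, [])]
    if not sampled:
        return 0.0
    return sum(brand.lower() in text.lower() for text in sampled) / len(sampled)

prompts = [
    "Best CRM for small business?",
    "What is the best CRM for a small business",
    "best small business CRM",
    "How do I migrate email to Gmail?",
]
families = group_into_families(prompts)
print(len(families))  # 2

# Hypothetical sampled answers for two of the prompts in the first family.
answers = {
    prompts[0]: ["Try Acme CRM or HubSpot.", "Salesforce is the usual pick."],
    prompts[1]: ["Acme CRM is a solid choice."],
}
print(round(family_visibility(answers, families[0], "Acme"), 2))  # 0.67
```

Word overlap keeps the sketch dependency-free. A production system would more likely cluster on text embeddings, because paraphrases can share very few literal words.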

The platform layers on metadata most monitoring tools skip. You see which competitor is mentioned alongside you, which URLs the model cites as evidence, and which attributes of your brand consistently make it into answers. Volatility scores flag families where answers swing week to week, which usually points to weak authority signals around that topic. Our LLM visibility guide walks through how this data should connect back to your existing channels.
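A volatility score can be as simple as the average week-over-week change in a family's mention rate. The sketch below assumes weekly mention rates between 0 and 1 and uses a hypothetical 0.15 flagging threshold. Scrunch's own scoring formula is not described in this review.

```python
from statistics import mean

def volatility(weekly_rates: list[float]) -> float:
    """Mean absolute week-over-week change in a prompt family's mention rate (0 to 1)."""
    if len(weekly_rates) < 2:
        return 0.0
    deltas = [abs(curr - prev) for prev, curr in zip(weekly_rates, weekly_rates[1:])]
    return mean(deltas)

def flag_volatile(families: dict[str, list[float]], threshold: float = 0.15) -> list[str]:
    """Return family names whose volatility meets the (hypothetical) threshold, most volatile first."""
    scored = {name: volatility(rates) for name, rates in families.items()}
    flagged = [name for name, score in scored.items() if score >= threshold]
    return sorted(flagged, key=lambda name: scored[name], reverse=True)

weekly = {
    "best crm for small business": [0.40, 0.42, 0.41, 0.40],  # steady
    "crm pricing comparison": [0.10, 0.50, 0.15, 0.55],  # swings week to week
}
print(flag_volatile(weekly))  # ['crm pricing comparison']
```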

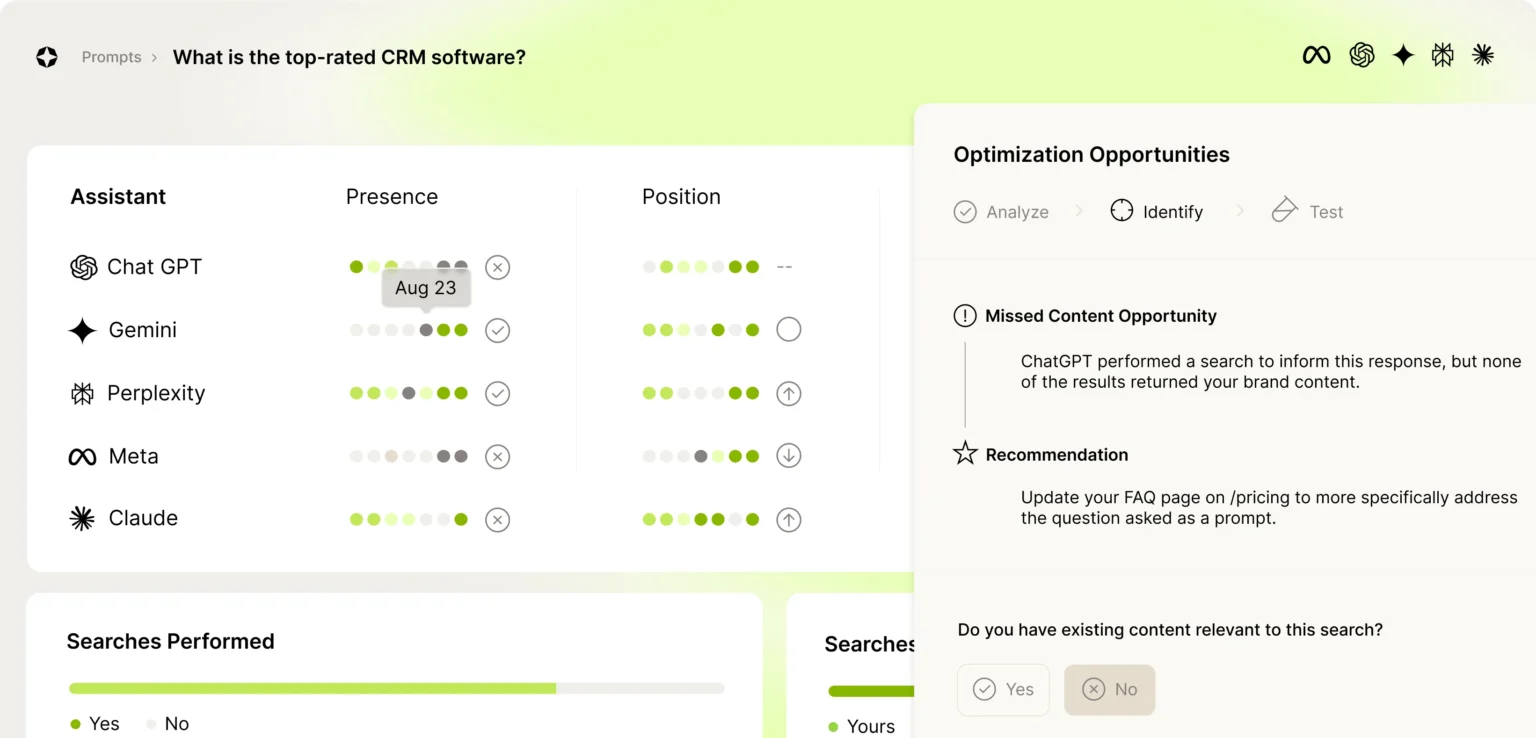

Diagnostic insight on why answers go wrong

Where the monitoring layer shows what AI systems say, Scrunch’s diagnostic engine tries to explain why. It cross-references model outputs against your verified content and surfaces contradictions, outdated stats, and vague phrasing that pushes models to guess.

The analysis extends to the technical layer. Crawlability, robots directives, and rendering behavior get checked so you know whether the issue is your content or whether AI crawlers cannot reach the right pages. If you’ve never run that audit yourself, the free AI website audit tool gives you the same baseline.
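You can run the robots-directive part of that check yourself with Python's standard-library `urllib.robotparser`, which evaluates a robots.txt file against specific crawler user agents. The sketch makes a few assumptions. The user-agent tokens below (GPTBot, OAI-SearchBot, ClaudeBot, PerplexityBot) are published by their vendors but can change, so confirm the current names before relying on them. The sample robots.txt is hypothetical. The check covers robots directives only, not rendering or crawlability in general.

```python
from urllib.robotparser import RobotFileParser

# AI crawler user-agent tokens; check each vendor's documentation for current names.
AI_CRAWLERS = ("GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot")

def crawler_access(robots_txt: str, url: str, agents: tuple[str, ...] = AI_CRAWLERS) -> dict[str, bool]:
    """Report, per crawler, whether robots.txt allows it to fetch the given URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}

# Hypothetical robots.txt: blocks GPTBot everywhere and every crawler from /admin/.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

print(crawler_access(SAMPLE_ROBOTS, "https://example.com/pricing"))
```

With this file, GPTBot is blocked from `/pricing` and the other three crawlers fall through to the `*` rules, which only block `/admin/`.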

Recommendations come paired with the underlying evidence (the snippet, the URL, the model response), so editors act on data rather than inference. Issues get grouped by theme, which keeps teams from fixing isolated symptoms while the root cause stays in place.

Agent Experience Platform (AXP)

Scrunch’s largest roadmap commitment is the Agent Experience Platform. AXP gives you a structured, machine-readable surface that AI crawlers consume alongside your normal site, organizing brand knowledge into entities, attributes, and verified claims.

The promise is real. When models see a precise representation of your products, pricing, and positioning, hallucinations drop and citations get more accurate. AXP integrates with sitemaps and schema, so it complements the structured data you already publish for traditional search.
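For reference, the structured data most sites already publish for traditional search is schema.org markup embedded as JSON-LD. The Python sketch below builds a minimal `Product` object with a nested `Brand` and `Offer`. The product name, description, and price are hypothetical. AXP's own machine-readable format is not documented in this review, so this illustrates the schema layer AXP is said to complement, not AXP itself.

```python
import json

def product_jsonld(name: str, brand: str, description: str, price: float, currency: str = "USD") -> str:
    """Build schema.org Product markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "brand": {"@type": "Brand", "name": brand},
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",  # schema.org accepts price as text or a number
            "priceCurrency": currency,  # ISO 4217 currency code
        },
    }
    return json.dumps(data, indent=2)

# Embed the output in a <script type="application/ld+json"> tag on the product page.
print(product_jsonld("Acme CRM", "Acme", "CRM for small sales teams.", 49))
```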

The catch, which the gaps section will return to, is that AXP launched as a pilot in mid-2025 and remains in limited availability as of early 2026. Treat it as a roadmap commitment, not a feature you can deploy on day one.

Three gaps that cost you pipeline

Three issues come up across G2 reviews and direct testing. None sink the product. All three should change how you scope what Scrunch can do for your team.

The data feels smarter than it is

Scrunch does not collect prompts from real LLM sessions. It infers what buyers ask by modeling intent from keywords and search behavior, then runs those modeled prompts against the engines on a schedule.

That gap shows up in the analytics. The platform shows what Scrunch thinks represents your brand’s performance, not what actually shows up when buyers ask. When the model merges unrelated questions or overfits a narrow pattern, you end up tracking a trend that never existed in real prompt traffic.

The problem compounds because LLMs change fast. The way ChatGPT answered a question last month is rarely the way it answers the same question today. A platform built on inferred prompts has to keep relearning what real questions look like, and the lag before those changes show up sits inside the data you base decisions on.

AXP creates new SEO surface area to manage

The Agent Experience Platform is the most futuristic idea in Scrunch’s product. It is also the one that makes traditional SEO leads most uneasy.

AXP creates a second, AI-only version of your site. Search engines have historically penalized exactly this pattern, called cloaking, where crawlers and human visitors are served different content. The platform is engineered to avoid that classification, but the line is thinner than it looks. If Google’s crawlers misinterpret intent and flag the parallel layer, the cleanup is painful.

The maintenance overhead is the second issue. Two content systems mean two places to test, two places to version, and two places where drift quietly happens. When the AI-facing layer drifts from your main site, the version of your brand that AI models learn from goes stale without anyone noticing. For most teams, AXP becomes a project that needs ongoing engineering attention to stay safe.
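Catching that drift does not require anything exotic. If both surfaces expose the same facts as key/value pairs, a scheduled diff will flag mismatches. The sketch assumes you can already extract those facts from each layer (the extraction step is left out). The keys and values shown are hypothetical.

```python
from typing import Optional

def _normalize(value: Optional[str]) -> Optional[str]:
    """Collapse whitespace and case so formatting differences don't count as drift."""
    return " ".join(value.split()).lower() if value is not None else None

def find_drift(site: dict[str, str], ai_layer: dict[str, str]) -> dict[str, tuple[Optional[str], Optional[str]]]:
    """Return every fact whose value differs, or exists on only one side, between the two surfaces."""
    drift = {}
    for key in sorted(site.keys() | ai_layer.keys()):
        main_value, ai_value = site.get(key), ai_layer.get(key)
        if _normalize(main_value) != _normalize(ai_value):
            drift[key] = (main_value, ai_value)
    return drift

# Hypothetical facts extracted from the main site and from the AI-facing layer.
site_facts = {"starter_price": "$49/mo", "free_trial": "7 days"}
ai_facts = {"starter_price": "$39/mo", "free_trial": "7  Days", "legacy_plan": "Basic"}
print(find_drift(site_facts, ai_facts))
```

The normalization step keeps cosmetic differences (extra spaces, capitalization) out of the report. A changed price or a plan that only one surface mentions still gets flagged.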

Insight without an execution layer

This is the gap that hurts pipeline most.

Scrunch is excellent at telling you which prompts misrepresent your brand, which citations are missing, and where models hallucinate. After that, you are on your own. There is no built-in way to apply schema fixes, rewrite flagged sections, regenerate underperforming pages, or push approved updates back to your CMS.

For teams without tight content-engineering alignment, every insight becomes another ticket in a backlog. By the time the fixes ship, AI answers have already moved on. Customers describe this as the insight bottleneck. The intelligence is sharp, but the workflow to act on it lives outside the tool.

Scrunch AI pricing in 2026

Scrunch’s pricing scales prompt volume, persona depth, and audit capacity. The Starter plan still gives access to every core feature. What changes across tiers is scale, not capability.

| Plan | Price | Custom Prompts | Industry Prompts | Personas | Page Audits | User Licenses |
|---|---|---|---|---|---|---|
| Starter | $300/mo | 350 | 1,000 | 3 | 5 | 2 |
| Growth | $500/mo | 700 | 2,500 | 5 | 10 | 3 |
| Pro | $1,000/mo | 1,200 | 6,000 | 7 | 20 | 5 |
| Enterprise | Custom | Custom | Custom | Custom | Custom | Custom |

A 7-day trial is available on the entry plan. Additional seats run $25 per month each, up to five extras. Enterprise unlocks SSO (SAML, OIDC), a data API, regional deployment, and dedicated support.

The math gets interesting at the Growth tier. At $500 a month for 8 engines and 700 custom prompts, Scrunch works out to $62.50 per engine per month. That density beats most direct competitors and is one reason Scrunch wins on pure tracking-volume comparisons.
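The per-unit math is easy to reproduce from the pricing table. The sketch below makes two assumptions: all three listed tiers cover the same 8 engines (the review states the engine count only for Growth), and the five-extra-seat cap applies per subscription. On those assumptions, Growth is also the cheapest tier per custom prompt, at about $0.71 versus about $0.86 on Starter and $0.83 on Pro.

```python
ENGINES = 8  # assumption: every tier covers the same 8 engines
EXTRA_SEAT_PRICE = 25  # USD per extra seat per month
MAX_EXTRA_SEATS = 5  # assumption: cap applies per subscription

# Monthly price, custom prompts, and included user licenses from the pricing table.
TIERS = {
    "Starter": {"price": 300, "custom_prompts": 350, "seats": 2},
    "Growth": {"price": 500, "custom_prompts": 700, "seats": 3},
    "Pro": {"price": 1000, "custom_prompts": 1200, "seats": 5},
}

def unit_costs(tier: str) -> dict[str, float]:
    """Monthly cost per engine and per custom prompt for a tier."""
    t = TIERS[tier]
    return {
        "per_engine": t["price"] / ENGINES,
        "per_custom_prompt": t["price"] / t["custom_prompts"],
    }

def monthly_cost(tier: str, seats_needed: int) -> int:
    """Base price plus extra seats; raises if the team exceeds the extra-seat cap."""
    t = TIERS[tier]
    extra = max(0, seats_needed - t["seats"])
    if extra > MAX_EXTRA_SEATS:
        raise ValueError(f"{tier} supports at most {t['seats'] + MAX_EXTRA_SEATS} seats")
    return t["price"] + extra * EXTRA_SEAT_PRICE

for name in TIERS:
    costs = unit_costs(name)
    print(f"{name}: ${costs['per_engine']:.2f}/engine, ${costs['per_custom_prompt']:.2f}/custom prompt")
print(monthly_cost("Growth", 5))  # 550: $500 base + 2 extra seats at $25
```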

The price-to-function trade-off is harder. At these tiers, Scrunch competes for budget against full marketing analytics suites, most of which include some flavor of optimization, content tooling, or workflow automation that Scrunch deliberately leaves to other vendors. The real question is not whether Scrunch is overpriced. It is whether the rest of your stack can absorb the gaps Scrunch leaves open.

When Scrunch is the right buy, and when it isn’t

Scrunch fits a specific buyer well. It struggles to justify the price for everyone else.

Scrunch is the right buy when:

- Your security and compliance posture (SOC 2, regional deployment, SSO) rules out lighter tools.
- You already have a content-engineering function that can act on insights quickly.
- AXP fits your actual roadmap and you have the engineering capacity to maintain a parallel AI-facing surface.
- You need pure breadth of monitoring across many engines and many prompt families, and you are willing to pay a premium for that breadth.

Scrunch is the wrong buy when:

- You need to tie AI visibility to actual traffic and conversions, not just sentiment and rank.
- The workflow of “see issue, fix issue, ship fix” needs to live in one tool rather than across five.
- You want content writing, content optimization, and brand-voice automation in the same subscription.
- You need to trigger workflows from real events without building that plumbing yourself.

The last bullet is where most mid-market teams quietly lose. An insight that does not reach the page that needs fixing within the same week is mostly decoration.

Analyze AI, the agentic SEO and content platform that closes the loop

Most teams discover Analyze AI looking for a Scrunch alternative. They stay because the platform does the rest of the work too. Analyze AI tracks visibility across ChatGPT, Perplexity, Claude, Copilot, Gemini, and Google AI Mode, then connects what it sees to traffic, conversions, and the actions that close the loop.

The thesis is in our manifesto. SEO is not dead. AI search is a new organic channel layered on top of the old one. The teams that win in AI answers are the ones already doing strong content, strong technical SEO, and strong measurement. Analyze AI is built to compound what already works while adding the AI search layer on top.

Tracking parity, where most teams need it

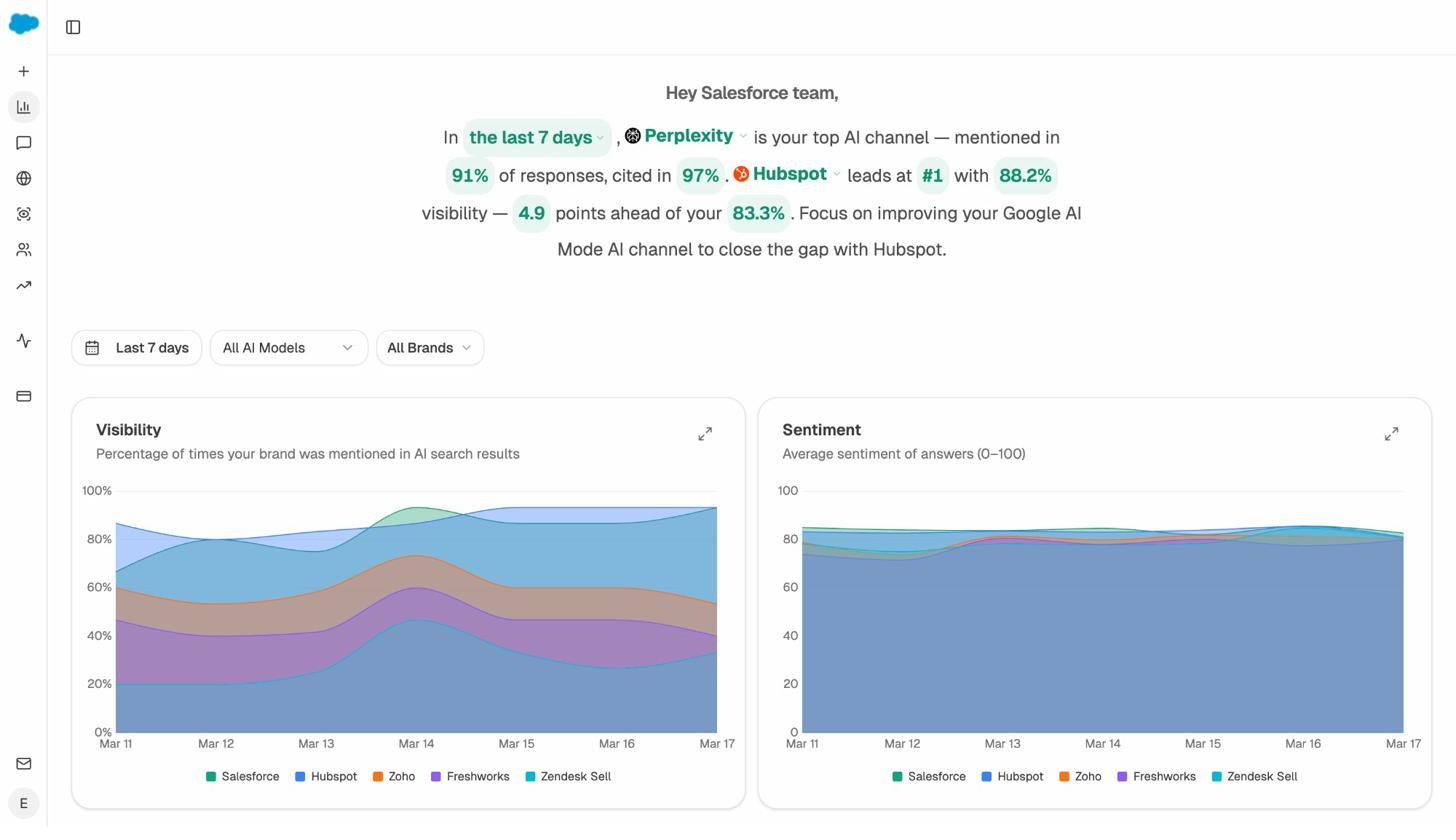

On pure visibility tracking, Analyze AI matches Scrunch on the features that matter day to day. You see AI visibility tracking by engine, prompt-level performance with sentiment and position, citation analytics, competitor benchmarks, and a perception map that positions your brand against tracked competitors on visibility and narrative strength.

Where Analyze AI pulls ahead is where Scrunch leaves teams to fend for themselves.

AI traffic that ties to revenue, not just to mentions

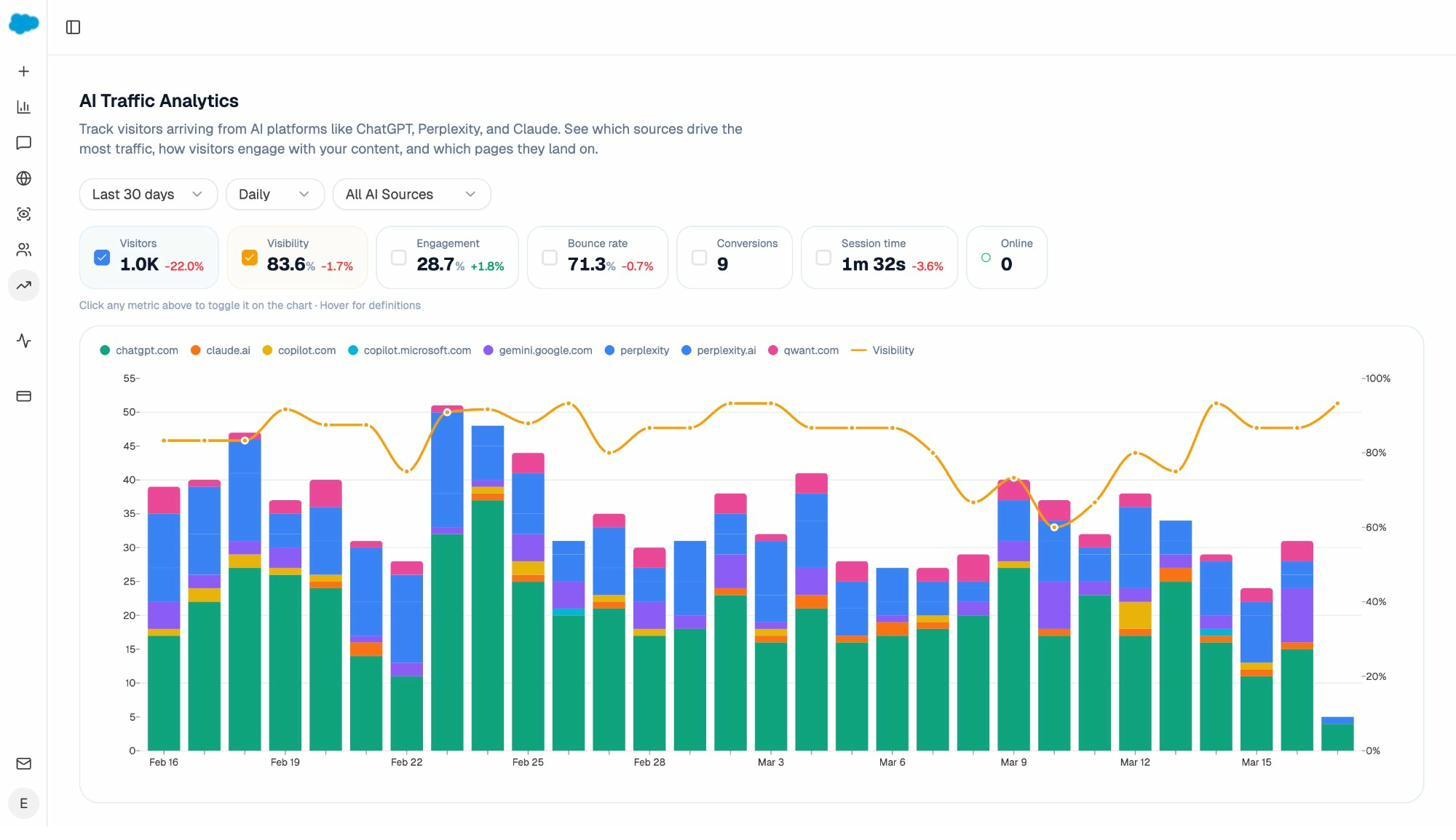

The single largest difference between Scrunch and Analyze AI is what happens after a mention. AI Traffic Analytics attributes every session from answer engines to its specific source (ChatGPT, Perplexity, Claude, Copilot, Gemini). You see session volume by engine, trends over time, conversion rates, and which pages those visits land on.
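Attributing a session to an answer engine usually comes down to the referrer. The sketch below maps referrer hostnames to engines. The hostname list is an assumption based on each engine's public web domain, so check it against what your analytics actually records; some sessions from AI apps may arrive with no referrer at all. This illustrates the general technique, not how Analyze AI implements attribution.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames per engine; verify against your own analytics data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
}

def classify_referrer(referrer: str) -> Optional[str]:
    """Return the AI engine for a referrer URL, or None if it is not a known AI source."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[len("www."):]
    return AI_REFERRERS.get(host)

def sessions_by_engine(referrers: list[str]) -> Counter:
    """Count sessions per AI engine, ignoring non-AI and empty referrers."""
    engines = (classify_referrer(r) for r in referrers)
    return Counter(e for e in engines if e is not None)

sample = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+crm",
    "https://www.google.com/",
    "",
    "https://chat.openai.com/c/abc",
]
print(sessions_by_engine(sample))
```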

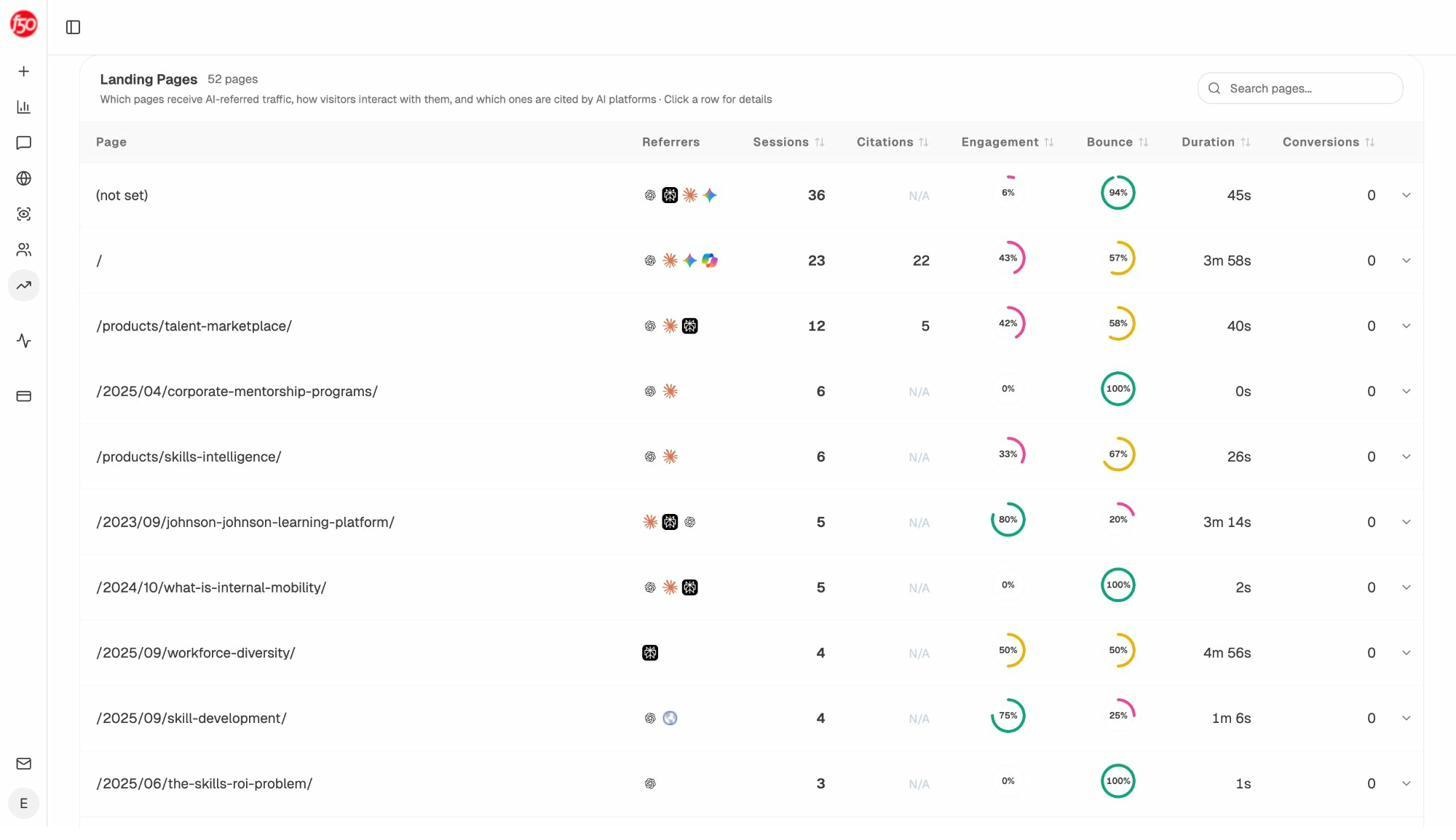

The Landing Pages report is where the data gets actionable. Each row shows the page, which models referred traffic to it, citations received, engagement rate, bounce rate, and conversions. When one product comparison page gets 12 sessions from Perplexity and converts at 8%, while a blog post pulls 36 sessions from ChatGPT with zero conversions, you know exactly where to invest and where to stop.
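The comparison in that example comes down to per-page conversion rate by engine. Here is a minimal Python sketch, assuming one row per page and engine with session and conversion counts. The page paths are hypothetical. The figures reuse the illustrative numbers above and assume that "8%" means one conversion from 12 sessions (about 8.3%).

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class LandingRow:
    page: str
    engine: str
    sessions: int
    conversions: int

    @property
    def conversion_rate(self) -> float:
        return self.conversions / self.sessions if self.sessions else 0.0

def page_conversion_rates(rows: list[LandingRow]) -> dict[str, float]:
    """Aggregate sessions and conversions across engines, then compute each page's rate."""
    totals = defaultdict(lambda: [0, 0])  # page -> [sessions, conversions]
    for row in rows:
        totals[row.page][0] += row.sessions
        totals[row.page][1] += row.conversions
    return {page: (conv / sess if sess else 0.0) for page, (sess, conv) in totals.items()}

rows = [
    LandingRow("/compare/product-comparison", "Perplexity", sessions=12, conversions=1),
    LandingRow("/blog/post", "ChatGPT", sessions=36, conversions=0),
]
for row in sorted(rows, key=lambda r: r.conversion_rate, reverse=True):
    print(f"{row.page} via {row.engine}: {row.sessions} sessions, {row.conversion_rate:.1%} conversion")
```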

Which AI traffic actually closes deals is the question Scrunch’s dashboard, limited to reading GA4 data, struggles to answer.

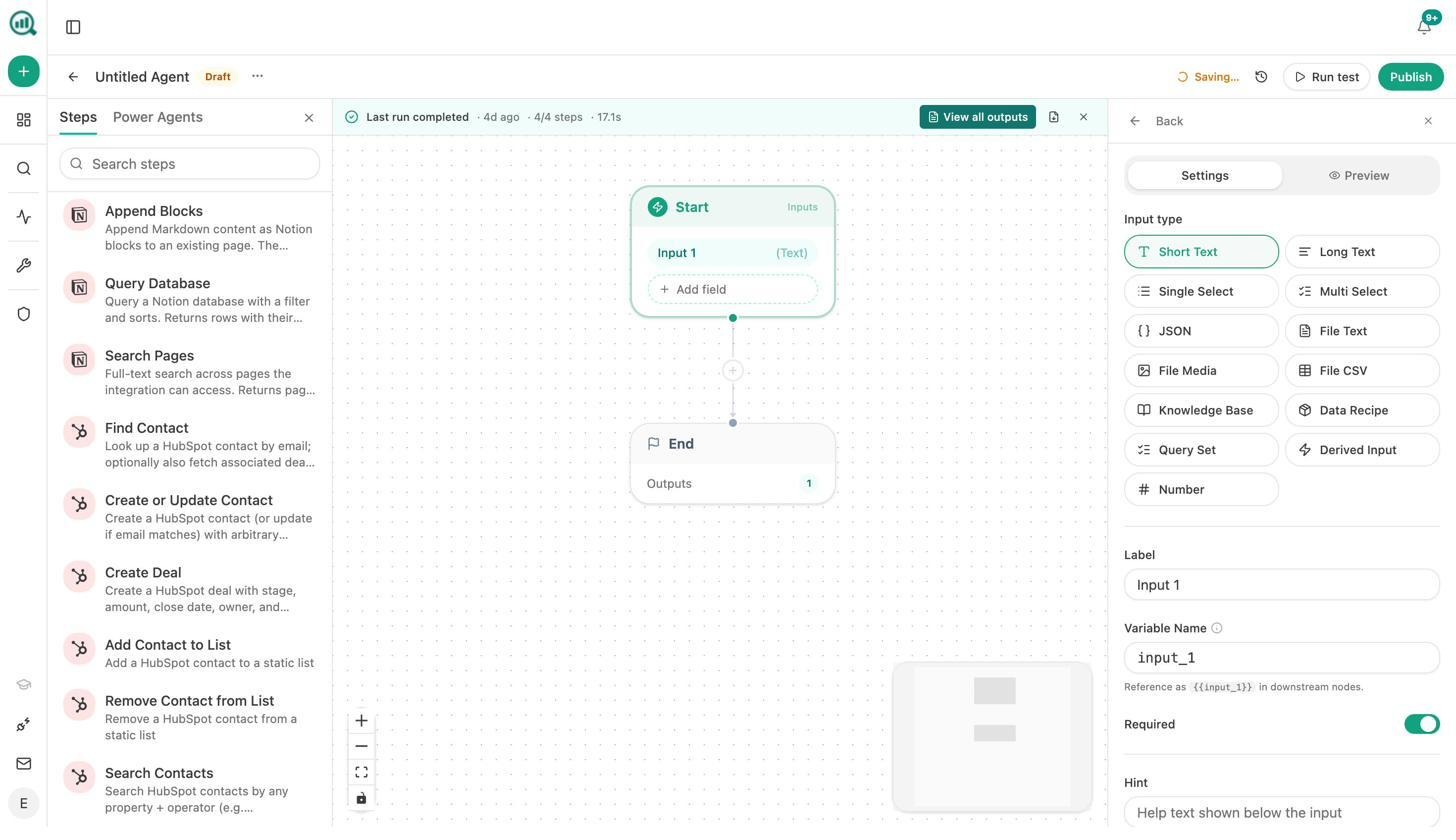

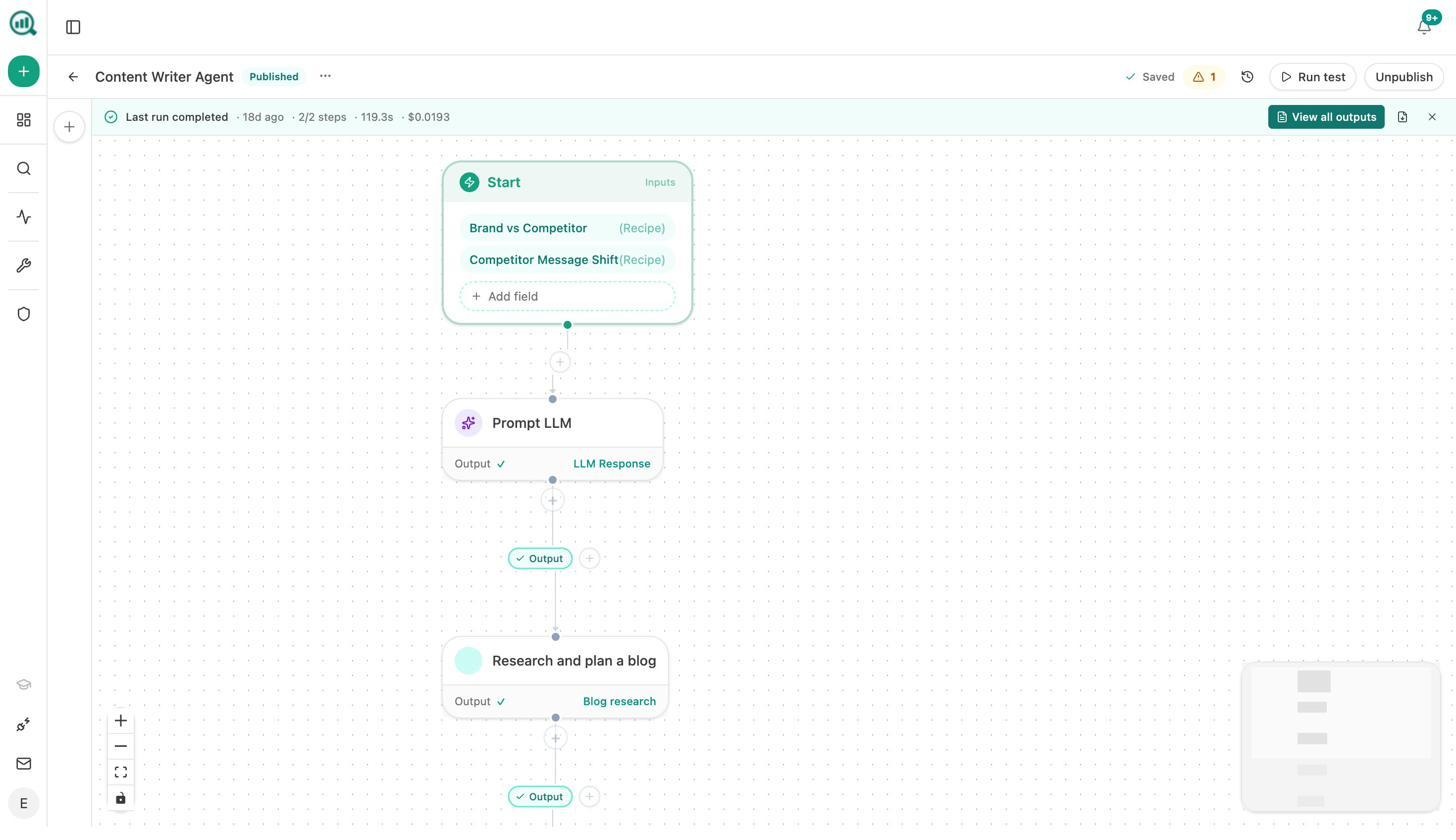

The Agent Builder is the part Scrunch does not have

Most reviews call the Agent Builder an automation layer. That description undersells it. Underneath Analyze AI is a programmable surface with 180+ nodes, 34 pre-built data recipes, 13 input primitives, and three trigger modes. It includes nodes for GA4, Google Search Console, Semrush, DataForSEO, HubSpot, Notion, Sanity, Contentful, WordPress, Mailchimp, Hunter, Tomba, and the AI visibility data Analyze AI already collects.

What that means is that the agents do the work that lives between insight and action. A few examples teams have built:

- Monday board prep. A scheduled agent pulls AI visibility, GA4, GSC, HubSpot deals, and competitor movement into a one-page brand-voiced executive summary at 7am every Monday. The four-hour analyst chase nobody had time for stops existing.
- Weekly content refresh. A scheduled agent finds pages losing AI citations, scrapes the page, rewrites the section that is decaying, and pushes the update to WordPress. Pages that were quietly losing rankings stop quietly losing rankings.
- Lead enrichment on form fill. A webhook-triggered agent fires when an inbound form is submitted, runs domain research, finds contacts, drops the enriched record in HubSpot, and notifies the AE in Slack. The lead is enriched before the rep sees it.
- Crisis response on press hits. A webhook-triggered agent listens for negative coverage, identifies the journalist, drafts three response options, and posts them to Slack. The response is drafted before the CEO finds out.
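The first step of the weekly content refresh example, finding pages that are losing AI citations, is a simple week-over-week comparison. Here is a minimal Python sketch. The 25% drop threshold and the five-citation minimum baseline are hypothetical choices meant to avoid flagging noise. Scraping, rewriting, and publishing to WordPress are deliberately left out, and this is not Analyze AI's implementation.

```python
def pages_losing_citations(
    last_week: dict[str, int],
    this_week: dict[str, int],
    min_drop: float = 0.25,
    min_baseline: int = 5,
) -> list[str]:
    """Return pages whose citation count fell by at least `min_drop`, biggest relative drop first.

    Pages with fewer than `min_baseline` citations last week are skipped as too noisy.
    """
    drops = {}
    for page, before in last_week.items():
        if before < min_baseline:
            continue
        after = this_week.get(page, 0)  # a page missing this week counts as zero citations
        relative_drop = (before - after) / before
        if relative_drop >= min_drop:
            drops[page] = relative_drop
    return sorted(drops, key=drops.get, reverse=True)

# Hypothetical citation counts per page for two consecutive weeks.
last = {"/pricing": 20, "/blog/crm-guide": 10, "/docs/setup": 3, "/compare/alt": 8}
this = {"/pricing": 18, "/blog/crm-guide": 4, "/docs/setup": 0}
print(pages_losing_citations(last, this))  # ['/compare/alt', '/blog/crm-guide']
```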

Scrunch’s Agent Experience Platform is a static surface for AI crawlers to read. Analyze AI’s Agent Builder is a programmable substrate that does work on real triggers. Different scopes, different outcomes.

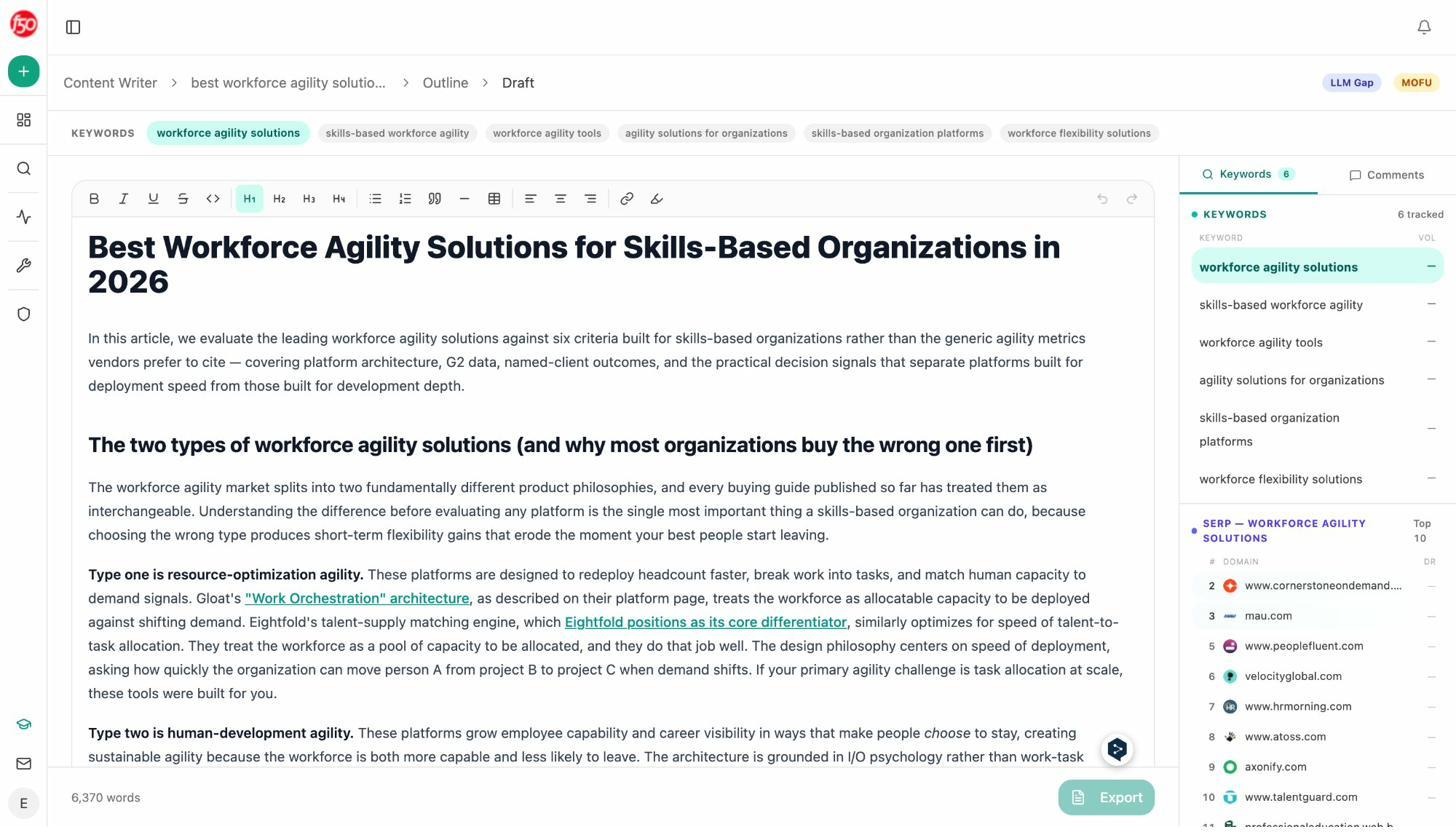

A Content Writer that turns research into draft

Scrunch surfaces topic gaps. It does not write the content that fills them. The Content Writer does, through a structured pipeline rather than a one-shot generator.

The flow runs in four stages. You start with a content idea, a competitor URL, or a tracked LLM gap. The system fetches SERP and AI competitors, generates a research package with cited sources, and turns that into an outline. You review the outline, edit anywhere it misses the mark, then generate a full draft that pulls in your brand voice, internal links, and target keywords automatically.

The reason the output is stronger than typical AI writing is the multi-step structure. Each stage compounds context for the next. By the time the draft generates, the model is working with researched facts, your tone-of-voice rules, your competitor contrast, and the keywords you actually want to rank for.

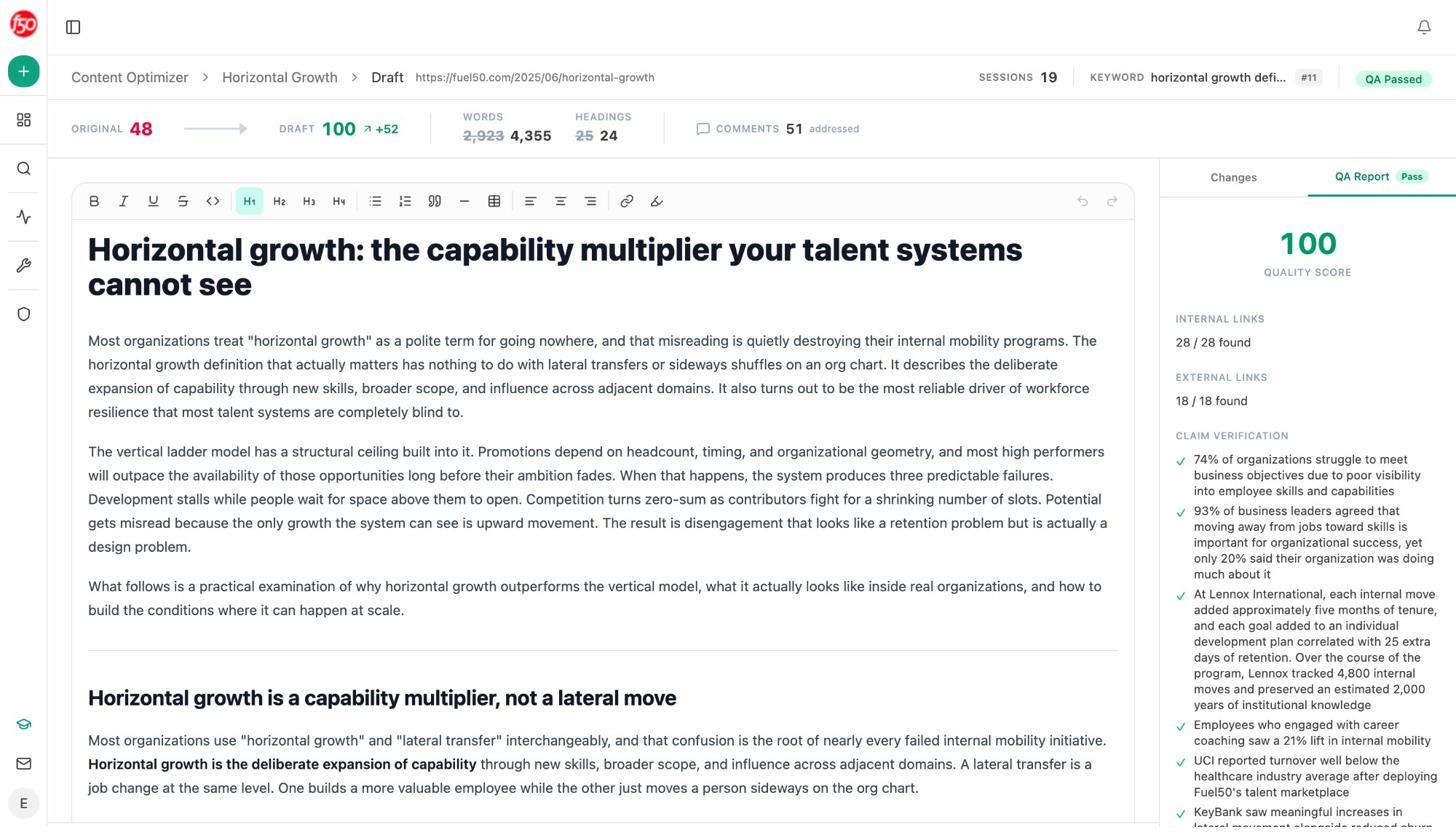

A Content Optimizer that fixes what is already shipping

Most of your traffic loss in 2026 comes from existing pages losing position quietly, not from new pages failing to rank. The Content Optimizer is built for that pattern.

The optimizer ingests your URL and pulls the live page. It scores argument and flow, clarity and polish, and information density across the article. It then surfaces specific ideas based on gaps it detects against the SERP and AI search competitors, with editorial comments at the paragraph and sentence level. You review what to apply, accept the relevant changes, and export the rewritten draft.

That feedback loop is the part Scrunch does not build. With Scrunch, the flagged gap goes into a backlog. With Analyze AI, the gap and the rewrite arrive in the same view.

Pricing that does not require a learning curve

Scrunch tiers on prompt counts, engine counts, persona counts, and audit counts in parallel. G2 reviewers repeatedly mention a learning curve before they work out how their credits actually deplete. Analyze AI keeps the structure flatter. Plans are organized around team size and AI visibility scope, not around how the credits are sliced. You can see the full breakdown on our pricing page.

After all that comparison, the summary is short. Most things Scrunch does, Analyze AI also does. The things Scrunch does not do (close the loop with execution, tie visibility to revenue, run real workflows on real triggers) are exactly the layers most teams quietly need.

Scrunch AI vs Analyze AI at a glance

| Capability | Scrunch AI | Analyze AI |
|---|---|---|
| AI visibility tracking | Yes, 8 engines | Yes, 6 engines |
| Prompt-level analytics | Yes (inferred prompts) | Yes (tracked, suggested, ad hoc) |
| Citation and source analytics | Yes | Yes |
| Competitor benchmarking | Yes | Yes |
| Sentiment and perception map | Yes | Yes |
| AI traffic to conversions | Limited (GA4 read) | Yes, native landing-page attribution |
| Content Writer | No | Yes, multi-step research → outline → draft |
| Content Optimizer | No | Yes, scored audit + paragraph-level rewrite |
| Programmable Agent Builder | AXP only (static surface for crawlers) | Yes, 180+ nodes across GA4, GSC, Semrush, DataForSEO, HubSpot, CMS |
| Workflow triggers | None | Manual, scheduled, webhook |
| Pricing model | Tiered by prompts, engines, personas, audits | Tiered by team size and scope |
| Free trial | 7 days on entry plan | Yes |

The honest answer

If your buying question is whether Scrunch is good at what it does, the answer is yes. The monitoring is solid, the diagnostics are real, and the founding team has the credibility to keep the roadmap moving.

If your question is whether the dashboard pays for itself, the answer depends on what is downstream. A team with engineers, writers, and ops capacity to act on insights quickly will get good value at $300 to $1,000 a month. A team without that bench will spend most of the subscription watching a dashboard light up and a backlog grow.

The platforms that turn AI visibility into pipeline are the ones that close the loop. Analyze AI is built that way, with tracking that matches the category, an Agent Builder that does the work the tracker surfaces, and a Writer and Optimizer that turn insight into shipping content. Start a free trial and run the same prompts you would run in Scrunch. The data will tell you the rest.

Ernest

Ibrahim
