
In this article, you’ll get an honest breakdown of seoClarity’s ArcAI module. You’ll learn what it does well, where it falls short, how much it actually costs, and why most teams paying enterprise prices still can’t connect AI visibility data to revenue. You’ll also see how Analyze AI fills the gaps that seoClarity leaves open, especially for teams that need to act on data, not just stare at dashboards.


What seoClarity ArcAI Does Well

Before talking about price or fit, it helps to understand the three things ArcAI does that shape how the platform feels in daily use. Each feature builds on the previous one, moving from tracking to analysis to optimization.

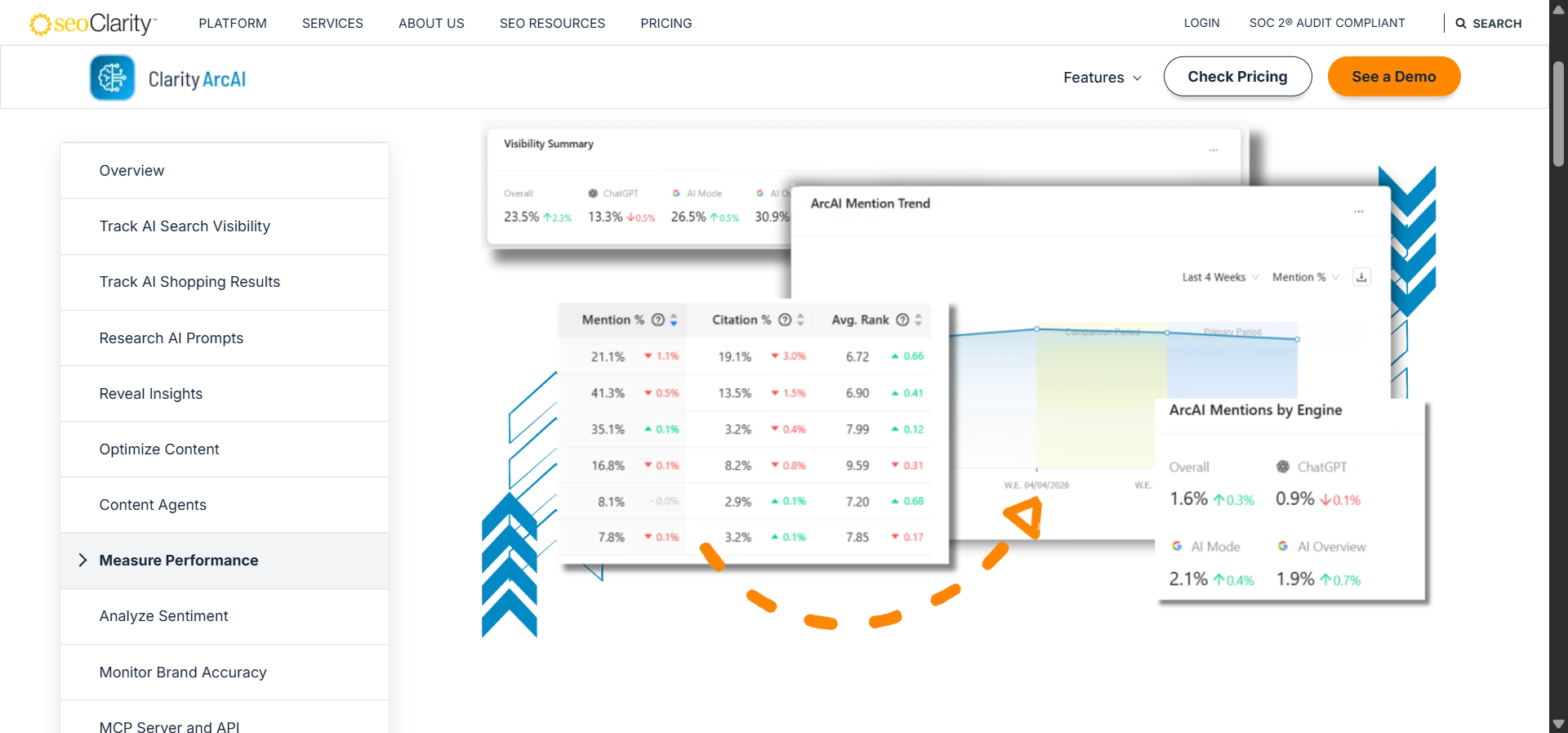

AI Mode Tracking

ArcAI continuously monitors when and how your content appears inside Google’s AI Mode, ChatGPT, Gemini, and Perplexity. Instead of relying on manual spot checks, the tool records which queries trigger AI responses, which pages get cited, and which competitors receive credit when you do not.

This matters because the data is stored over time. You start to see patterns. Certain page types or content formats win citations consistently, while others drop off without explanation. Each data point ties back to a specific URL, so you can pinpoint exactly where the gap is. For enterprise brands managing thousands of pages, that level of specificity turns tracking from a reporting exercise into a diagnostic tool.
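To illustrate why stored history matters, here is a minimal sketch that turns repeated citation checks into a per-URL citation rate. The record shape (`CitationCheck` with `date`, `query`, `engine`, `page`, `cited`) is a hypothetical stand-in rather than seoClarity's actual data model, and the sample rows are made up.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class CitationCheck:
    """One recorded check of one query on one engine (hypothetical schema)."""
    date: str     # ISO date of the check
    query: str
    engine: str
    page: str     # the URL you want cited for this query
    cited: bool   # whether the engine actually cited that URL


def citation_rate_by_page(checks):
    """Return {page: share of recorded checks in which the page was cited}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for c in checks:
        totals[c.page] += 1
        hits[c.page] += int(c.cited)
    return {page: hits[page] / totals[page] for page in totals}


# Made-up history: the guide is cited one day and dropped the next.
checks = [
    CitationCheck("2024-05-01", "best crm for startups", "ChatGPT", "/crm-guide", True),
    CitationCheck("2024-05-02", "best crm for startups", "ChatGPT", "/crm-guide", False),
    CitationCheck("2024-05-01", "crm pricing", "Perplexity", "/pricing", True),
    CitationCheck("2024-05-02", "crm pricing", "Perplexity", "/pricing", True),
]
rates = citation_rate_by_page(checks)
# Weakest pages first, since those are the ones worth diagnosing.
for page, rate in sorted(rates.items(), key=lambda kv: kv[1]):
    print(f"{page}: {rate:.0%}")
```

Because every check keeps its URL, a falling rate points at a specific page rather than at the site as a whole.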

ArcAI Insights

The insights layer takes the tracking data and turns it into a prioritized task list. It analyzes each recorded prompt, weighs its potential business impact, and surfaces which missed citations are worth fixing first.

Instead of presenting a wall of metrics, ArcAI Insights organizes findings into plain-language recommendations. Each one identifies the page to adjust, the entities or examples missing from the current draft, and the competitors whose coverage earned them visibility. As your team implements changes, the system recalculates priorities automatically, so outdated tasks don’t linger.
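seoClarity doesn't document how ArcAI weighs business impact, so the following is only a sketch of the general idea under invented assumptions: each missed citation gets a score from a hypothetical demand estimate, a hypothetical value per visit, and the number of competitors cited instead. Marking an opportunity as cited and re-running the function drops it from the list, which mirrors the "recalculates priorities" behavior described above.

```python
from dataclasses import dataclass


@dataclass
class Opportunity:
    prompt: str
    page: str
    cited: bool               # already cited? then there is nothing to fix
    est_monthly_volume: int   # hypothetical demand estimate for the prompt
    value_per_visit: float    # hypothetical business value of one visit
    competitors_cited: int    # rivals that earned the citation instead


def prioritize(opps):
    """Return missed citations ordered by a simple impact score, highest first."""
    missed = [o for o in opps if not o.cited]

    def score(o):
        # Demand x value, nudged upward when more competitors hold the citation.
        return o.est_monthly_volume * o.value_per_visit * (1 + 0.1 * o.competitors_cited)

    return sorted(missed, key=score, reverse=True)


opps = [
    Opportunity("best crm for startups", "/crm-guide", False, 1200, 4.0, 3),
    Opportunity("crm pricing", "/pricing", True, 900, 6.0, 2),
    Opportunity("crm vs spreadsheet", "/blog/crm-vs-sheets", False, 400, 2.5, 1),
]
for o in prioritize(opps):
    print(o.prompt, "->", o.page)
```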

Prompt and Content Optimization

ArcAI’s optimization engine closes the loop. It takes the prioritized opportunities from Insights and helps you rebuild pages so AI models can recognize and cite them accurately.

The prompt research module shows how different engines interpret intent, revealing subtle phrasing shifts that determine whether your page earns a mention or disappears. That intelligence feeds into the Content Optimizer, which diagnoses structural problems like missing context, poor entity linking, or formatting that confuses parsers. You can test edits against target prompts and see in real time whether your changes align with what AI models favor.

Where seoClarity ArcAI Falls Short

ArcAI’s power comes with real tradeoffs. These are the three problems teams run into most often.

Steep Learning Curve

The first login drops you into a dense interface with dozens of filters, modules, and charts competing for attention. New users try to connect prompts, citations, and pages, but the relationships between those elements don’t reveal themselves without practice. Similar reports often live in different places, which adds clicks and confusion when speed matters.

Training helps, but the platform expects a shared mental model across SEO leads, analysts, and writers. That model takes weeks to build. Until it forms, team meetings drift toward explaining what a dashboard widget means rather than deciding what to do next.

Feature Overkill for Most Teams

ArcAI ships with modules for visibility tracking, crawl auditing, content scoring, and sentiment review. That breadth serves complex brands with many teams and many questions. But small groups with narrow goals (checking Google AI Overviews weekly or watching a short list of prompts in ChatGPT) don’t need the full spread. Every extra screen introduces friction without adding daily value.

Over time, contributors avoid opening the tool for quick checks and fall back to screenshots or chat messages. The team stops building muscle memory around the shared system, and process drifts back to habits that don’t scale.

Data Volatility Without Revenue Context

ArcAI measures answers from engines that update models, change layouts, and shift results without notice. Yesterday’s citation can vanish today even when your page didn’t change. The platform records those shifts but can’t always tell you whether the drop came from a model refresh, a regional rule, or a subtle wording change in the query.

The bigger problem is that ArcAI tracks visibility in isolation. You see that citations dropped, but you don’t see whether those citations were driving sessions, conversions, or revenue. Without that revenue connection, teams can overreact and chase noise rather than signal.

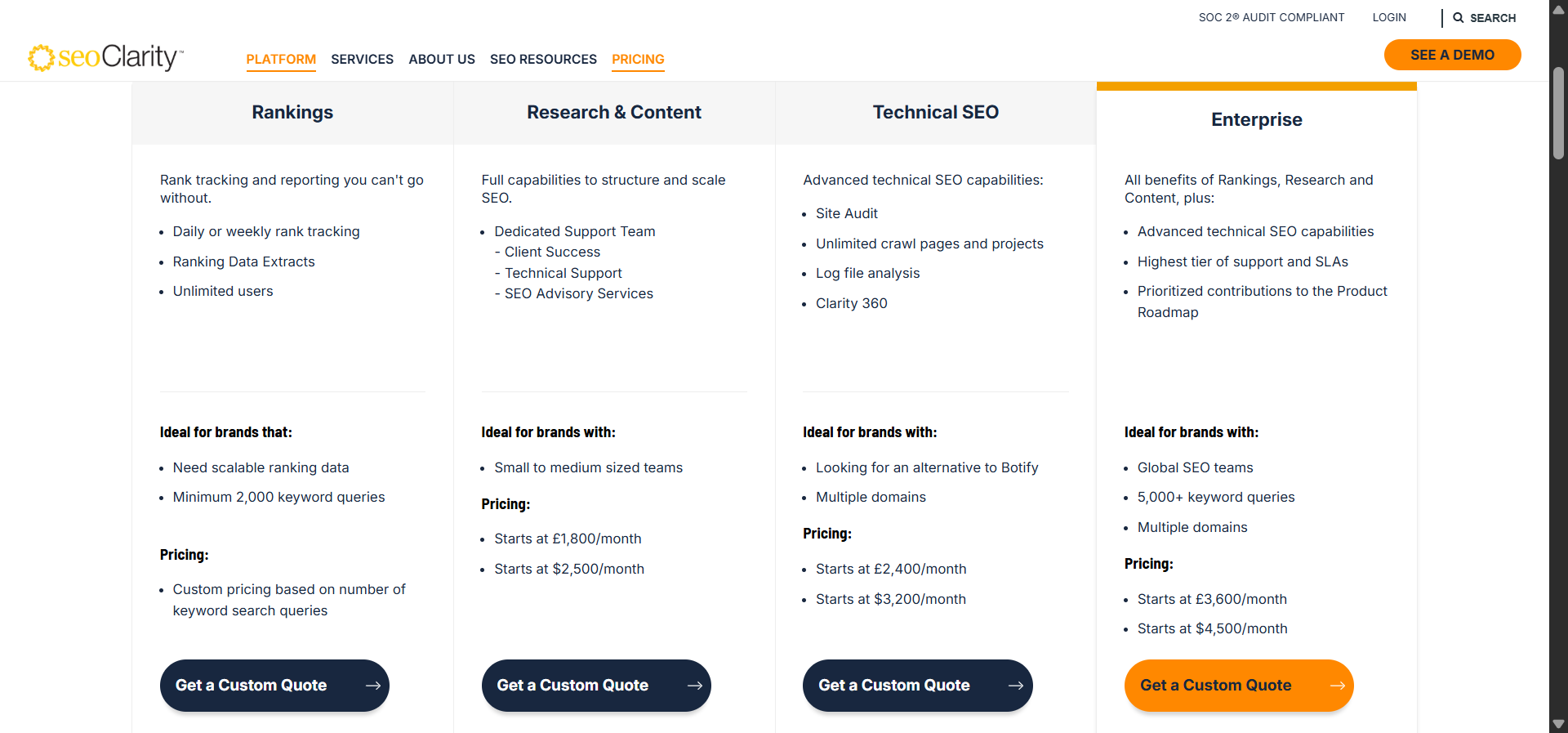

seoClarity ArcAI Pricing

seoClarity has never published transparent pricing for its ArcAI add-on. Here’s what you can expect based on publicly available data from review platforms and independent sources.

| Package | Starting Price | What’s Included |
|---|---|---|
| Research & Content | $2,500/month | SEO research, content tools, dedicated support |
| Technical SEO | $3,200/month | Site audits, unlimited crawls, log file analysis |
| Enterprise | $4,500/month | All modules, 5,000+ keywords, multi-domain support |
| ArcAI Add-on | Custom quote | AI visibility tracking, prompt analysis, content optimization for AI |

ArcAI is not sold as a standalone product. It is bundled into custom enterprise quotes priced by how many prompts, engines, and brands you want tracked. Additional costs come from SLAs, consulting time, and data refresh frequency.

On the positive side, enterprises can negotiate multi-year discounts and shape the platform to their exact needs. But there’s no simple way to budget for ArcAI without a sales call. If you’re a mid-sized team or agency, you must buy into the full seoClarity ecosystem to access the AI visibility features.

For teams that want AI visibility tracking without a five-figure annual commitment, that’s a problem.

Analyze AI: The Alternative That Connects AI Visibility to Revenue

Most AI visibility tools tell you whether your brand appeared in an AI response. Then they stop. You get a visibility score, maybe a sentiment score, but no connection to what happened next. Did anyone click? Did they convert? Was the effort worth it?

Analyze AI is built differently. It is the agentic platform for SEO, AEO, content, and GTM operations that connects AI visibility to actual business outcomes. It tracks which answer engines send sessions to your site, which pages those visitors land on, what actions they take, and how much revenue they influence. Then it gives you the tools to act on every insight, from content creation to automated workflows.

Here’s how that works in practice.

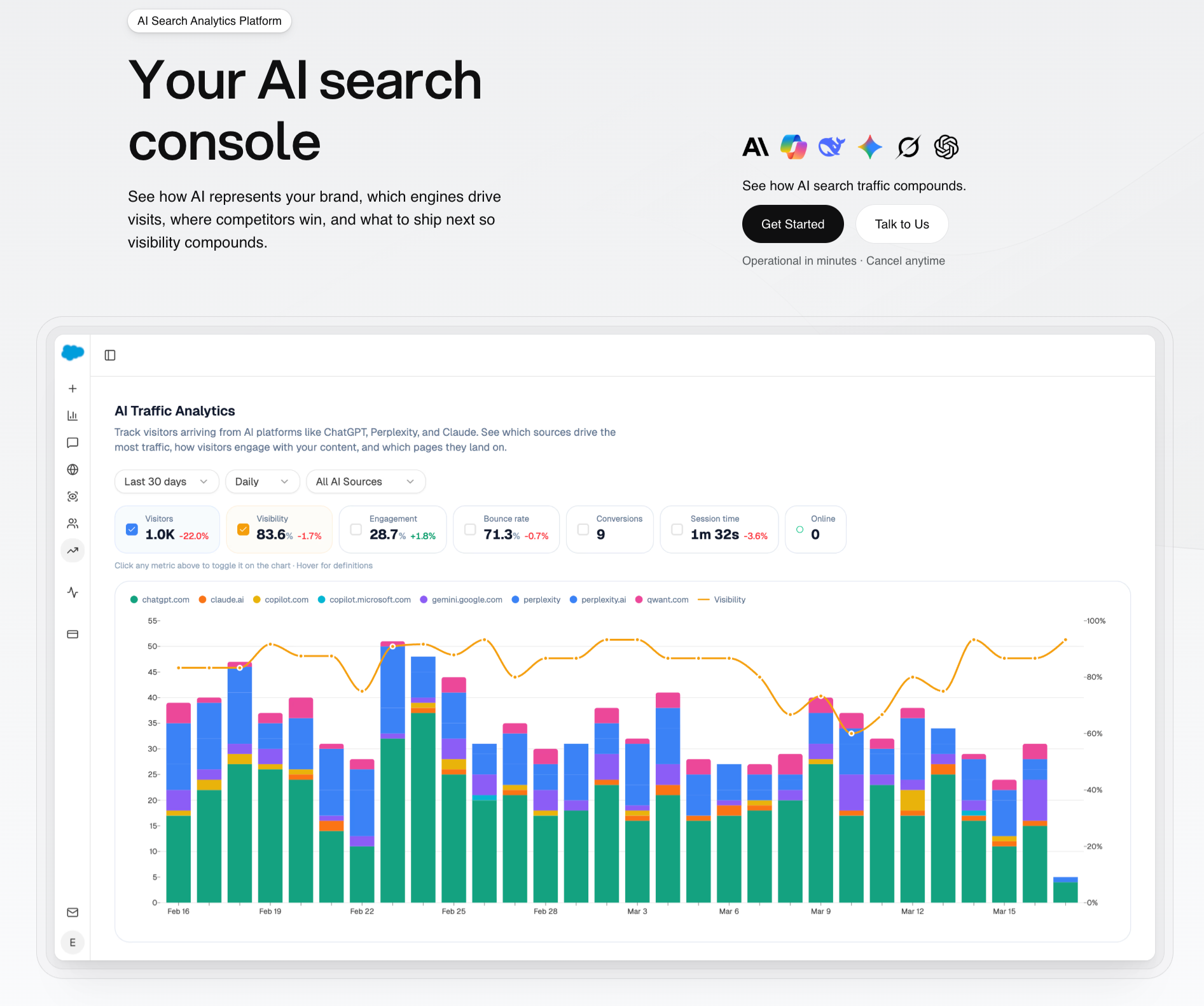

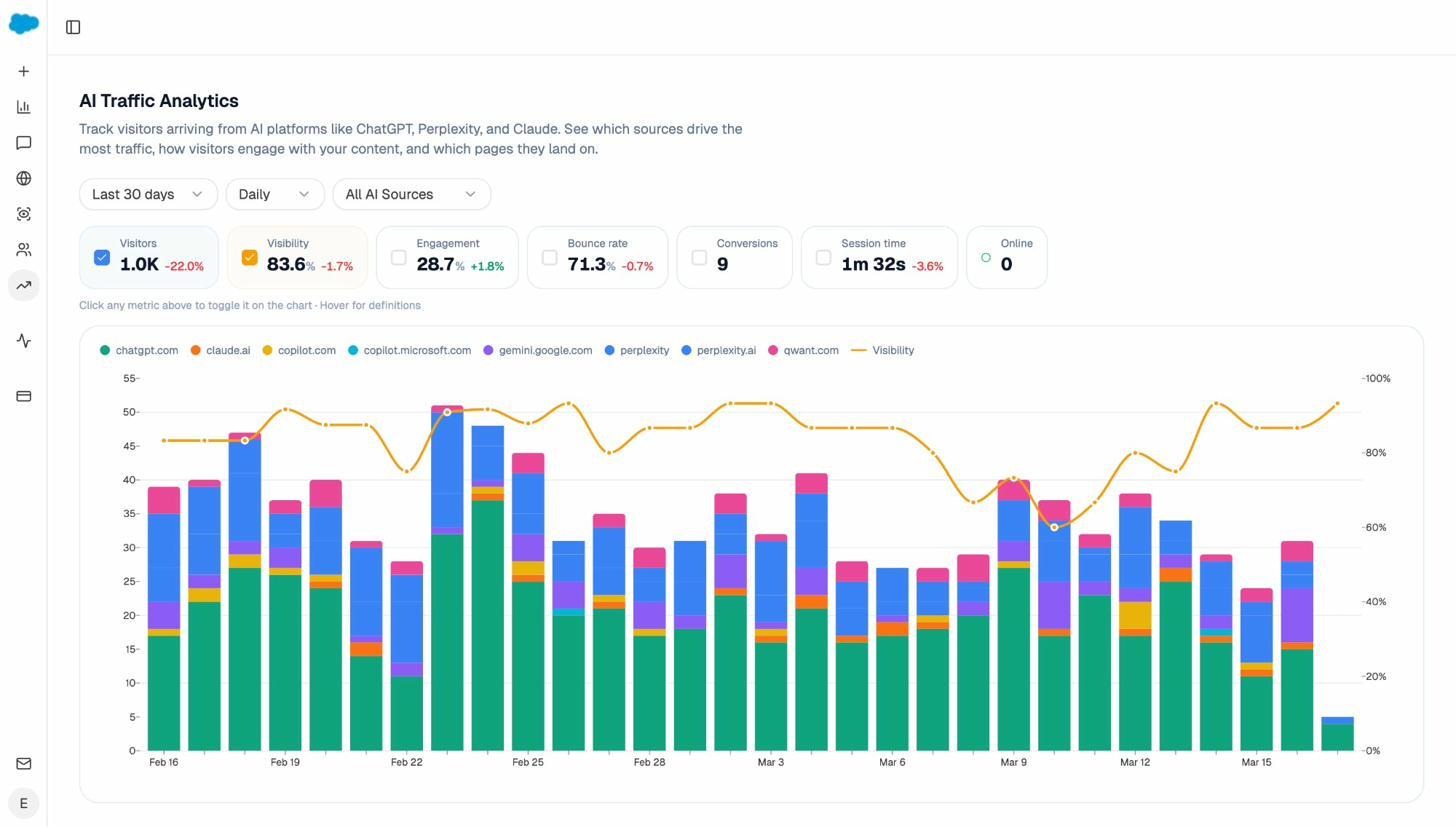

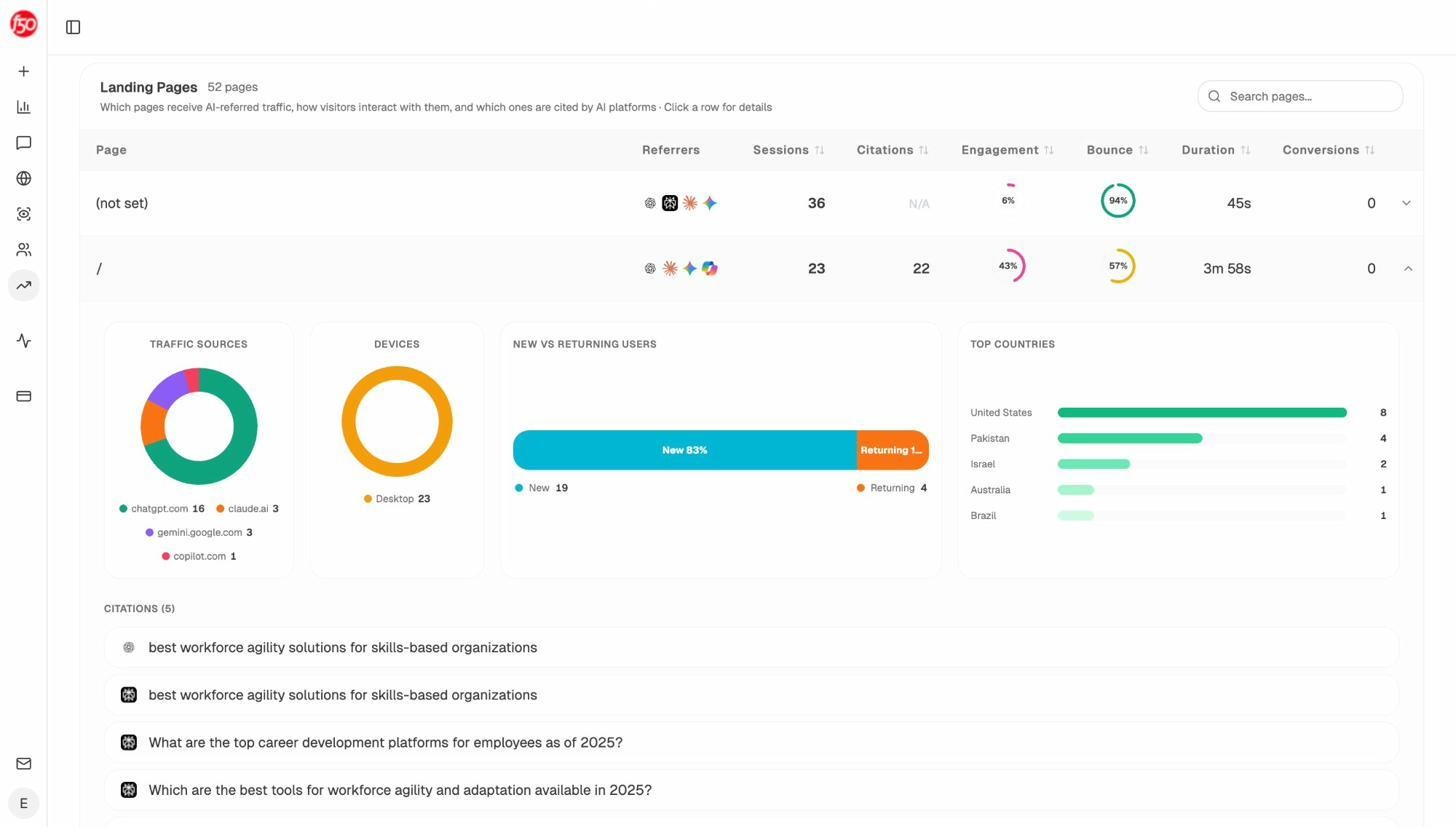

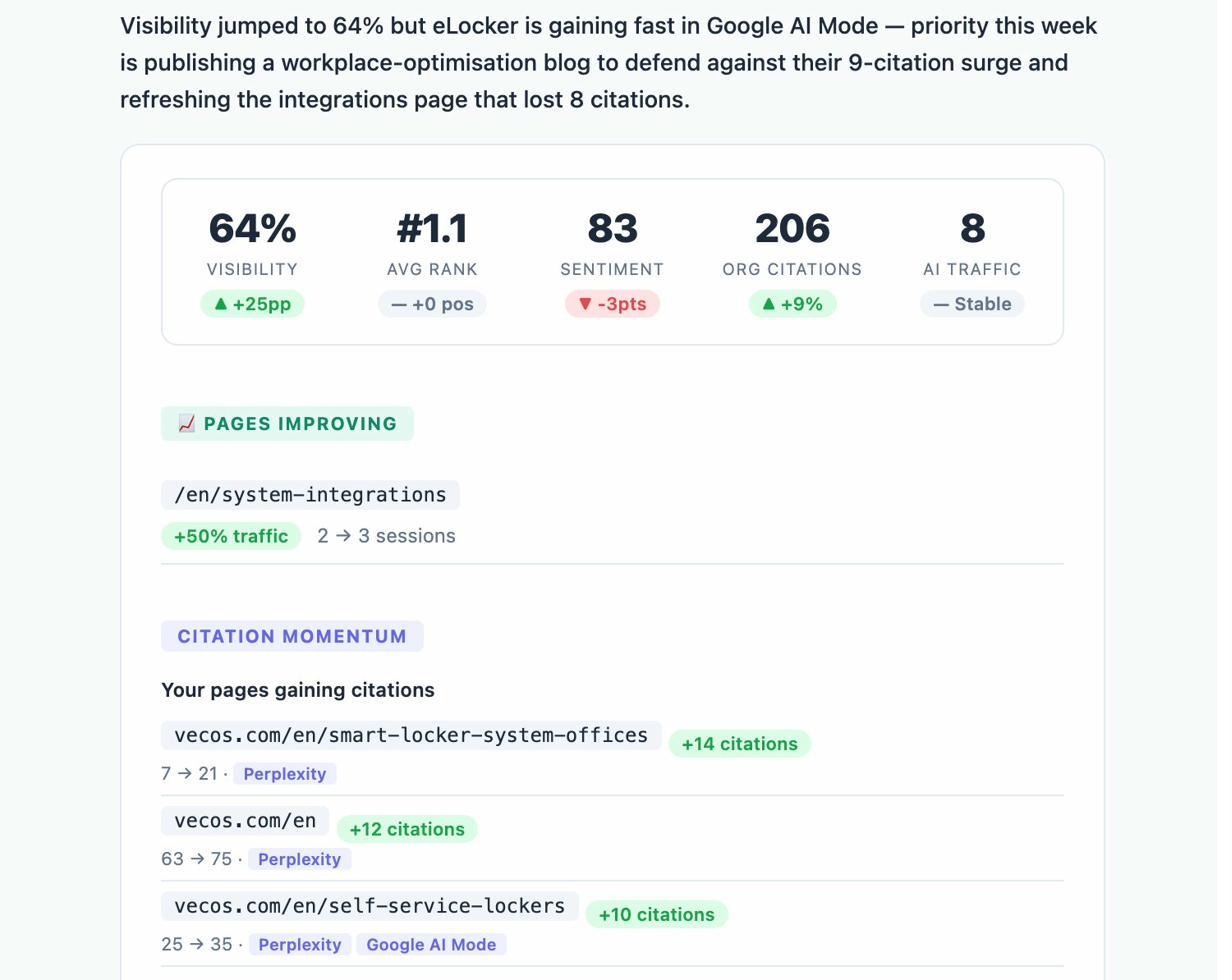

See Real AI Traffic, Not Just Mentions

Analyze AI’s AI Traffic Analytics attributes every session from answer engines to its specific source. You see visitor counts from ChatGPT, Claude, Copilot, Gemini, and Perplexity side by side. You see engagement rates, bounce rates, session time, and conversion counts for each source. That breakdown lets you know exactly which AI engines deserve more optimization effort and which ones send traffic that doesn’t convert.
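Analyze AI doesn't publish how its attribution works, but a common starting point for this kind of report is classifying each session by its referrer hostname. The sketch below does that with the standard library. The hostname map is an assumption and is certainly incomplete; some AI engines send no referrer at all, so real attribution needs more signals than this.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames per engine; real traffic may use other hosts
# or arrive with no referrer, so treat this map as a starting point only.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer):
    """Return the AI engine for a referrer URL, or None if it isn't an AI source."""
    host = urlparse(referrer).hostname or ""
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)


def sessions_by_engine(sessions):
    """Count (sessions, conversions) per AI engine from (referrer, converted) pairs."""
    visits, conversions = Counter(), Counter()
    for referrer, converted in sessions:
        engine = classify_referrer(referrer)
        if engine is None:
            continue  # organic, direct, social, etc.
        visits[engine] += 1
        conversions[engine] += int(converted)
    return {e: (visits[e], conversions[e]) for e in visits}


sample = [
    ("https://chatgpt.com/", True),
    ("https://chatgpt.com/", False),
    ("https://www.perplexity.ai/search", True),
    ("https://www.google.com/", False),
]
print(sessions_by_engine(sample))
```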

This is the gap that seoClarity ArcAI doesn’t close. ArcAI tells you that you were mentioned. Analyze AI tells you, for example, that ChatGPT sent 248 sessions with a 28.7% engagement rate and 9 conversions. That’s the difference between a visibility report and a revenue report.

The Landing Pages report goes one level deeper. You see which specific URLs receive AI referral traffic, which engine sent each session, and how those visits compare to your organic search traffic. When your product comparison page gets 50 sessions from Perplexity and converts at 12%, while a blog post gets 40 sessions from ChatGPT with zero conversions, you know exactly where to double down.
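The comparison in that example comes down to grouping sessions by landing URL and engine, then dividing conversions by sessions. A minimal sketch, assuming one `(landing_url, engine, converted)` row per session; the URLs `/compare` and `/blog/post` are hypothetical stand-ins for the pages described above.

```python
from collections import defaultdict


def conversion_rates(rows):
    """Return {(landing_url, engine): (sessions, conversion_rate)}."""
    sessions = defaultdict(int)
    conversions = defaultdict(int)
    for url, engine, converted in rows:
        key = (url, engine)
        sessions[key] += 1
        conversions[key] += int(converted)
    return {k: (sessions[k], conversions[k] / sessions[k]) for k in sessions}


# Rebuild the example: 50 Perplexity sessions with 6 conversions (12%) on a
# comparison page, and 40 ChatGPT sessions with none on a blog post.
rows = (
    [("/compare", "Perplexity", i < 6) for i in range(50)]
    + [("/blog/post", "ChatGPT", False) for _ in range(40)]
)
for (url, engine), (n, rate) in conversion_rates(rows).items():
    print(f"{url:<12} {engine:<11} {n:>3} sessions  {rate:.0%} conversion")
```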

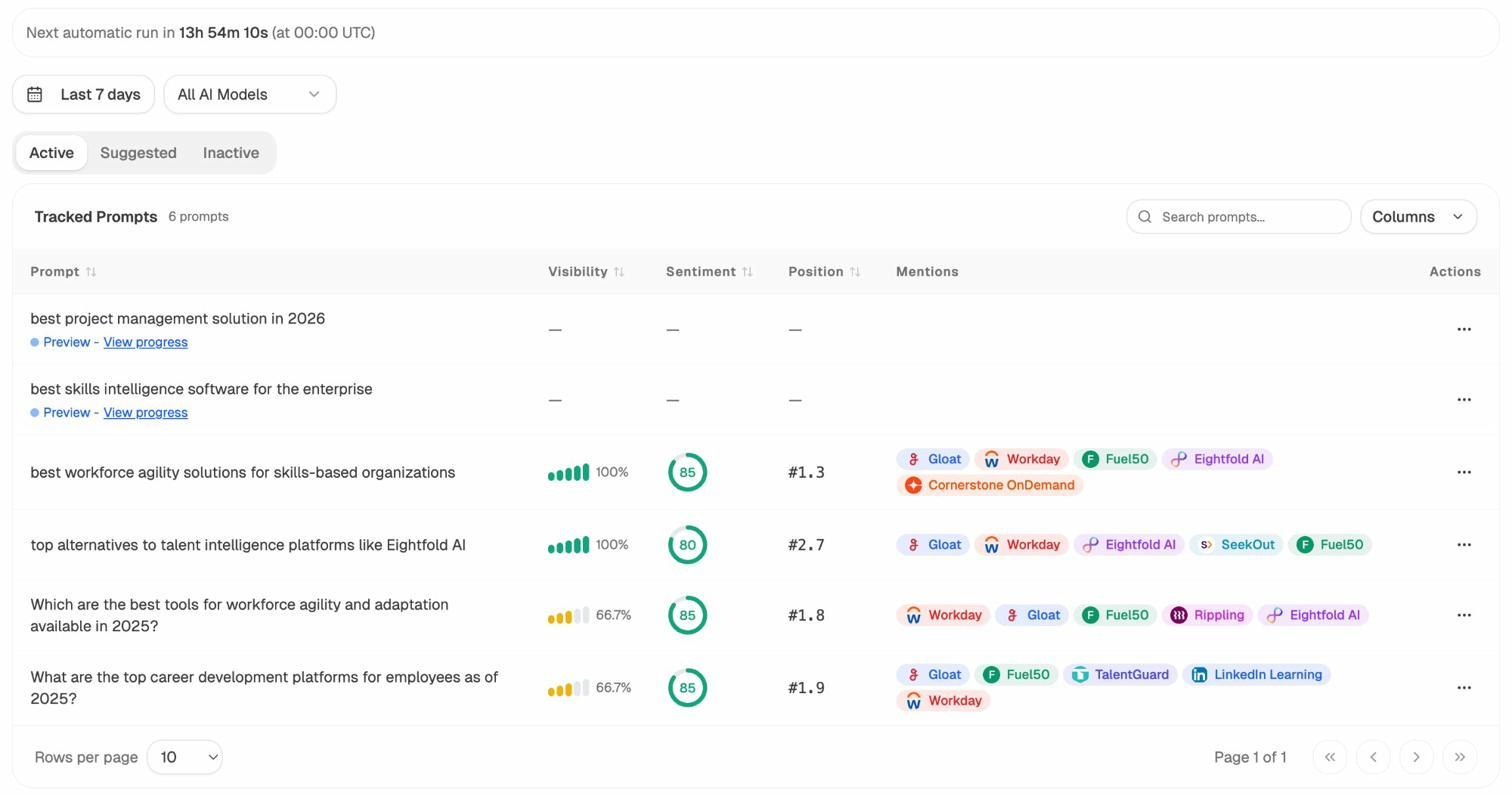

Track the Exact Prompts Buyers Use

Prompt Tracking monitors specific prompts across all major LLMs. For each prompt, you see your brand’s visibility percentage, sentiment score, position relative to competitors, and which other brands appear alongside you.
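As an illustration of what "visibility percentage" and "position" can mean for a single prompt, the sketch below assumes each engine response has been reduced to an ordered list of the brands it mentions. Visibility is then the share of responses that mention your brand, and position is its average 1-based rank when it does appear. These definitions are assumptions; Analyze AI may compute its metrics differently, and the brand names are made up.

```python
def prompt_visibility(answers, brand):
    """Return (visibility_share, average_position or None) for one prompt.

    `answers` holds one list per engine response, with brands in mention order.
    """
    positions = [
        brands.index(brand) + 1  # 1-based rank within that response
        for brands in answers
        if brand in brands
    ]
    share = len(positions) / len(answers) if answers else 0.0
    avg_pos = sum(positions) / len(positions) if positions else None
    return share, avg_pos


answers = [
    ["Acme", "Globex"],
    ["Globex", "Initech", "Acme"],
    ["Initech"],
    ["Acme"],
]
share, avg_pos = prompt_visibility(answers, "Acme")
print(f"Acme: visible in {share:.0%} of answers, average position {avg_pos:.2f}")
```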

Not sure which prompts to track? Analyze AI has a Prompt Discovery feature that suggests the actual bottom-of-funnel prompts you should monitor. These aren’t generic topic suggestions. They are the exact queries buyers type into ChatGPT and Perplexity when they are evaluating solutions in your category.

You can also run ad hoc prompt searches to test any query across multiple AI engines instantly without adding it to your tracked list.

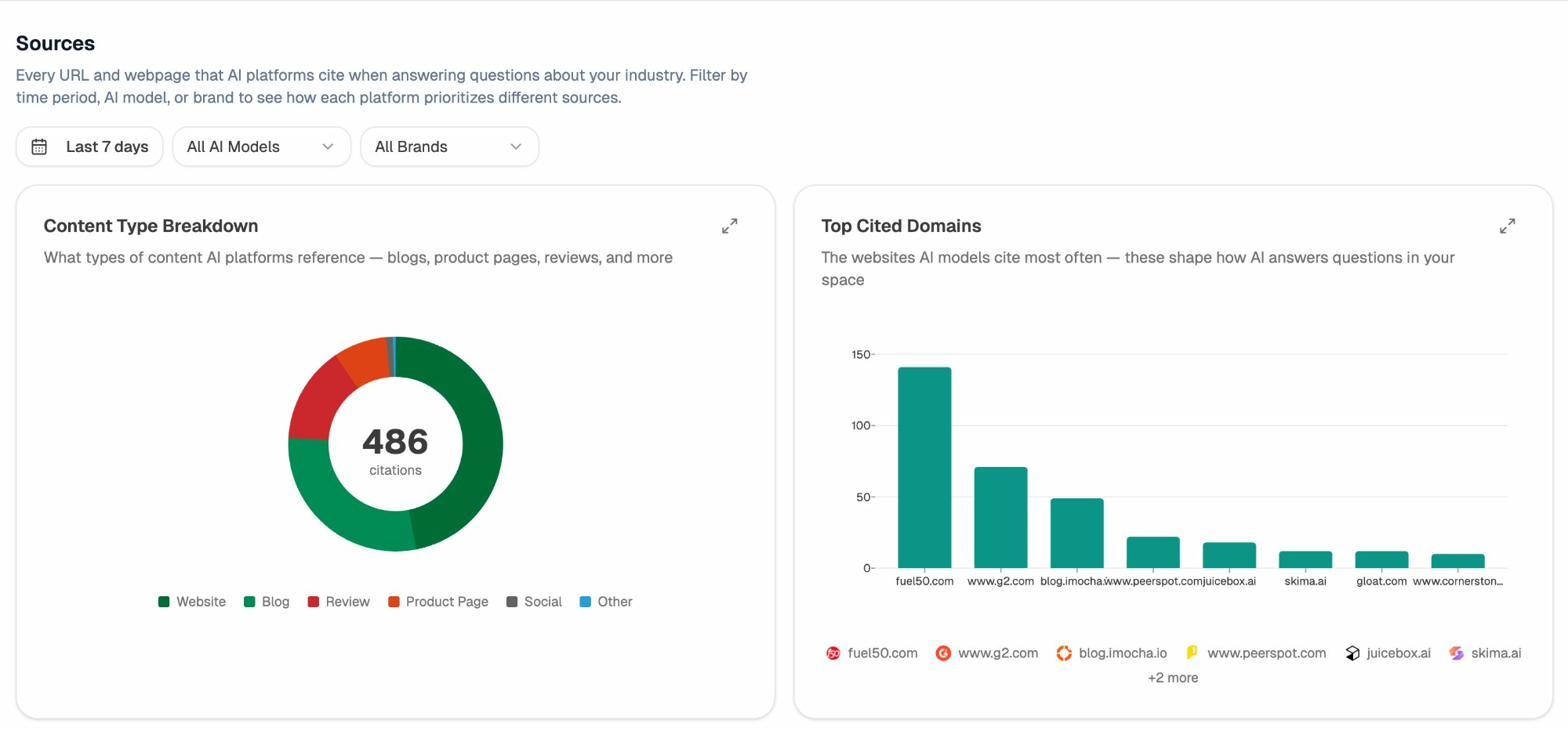

Know Which Sources AI Models Trust

Analyze AI’s Citation Analytics reveals exactly which domains and URLs models cite when answering questions in your space. You see the content type breakdown (blogs, product pages, reviews), the top cited domains, and how citation patterns shift over time.
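The two views described here, top cited domains and a content-type breakdown, can be approximated from a plain list of cited URLs. In this sketch the path-based `content_type` rules are a crude, hypothetical heuristic. A real tool would likely classify pages more carefully. The sample URLs use placeholder domains.

```python
from collections import Counter
from urllib.parse import urlparse


def content_type(url):
    """Rough, hypothetical path-based guess at a cited page's type."""
    path = urlparse(url).path.lower()
    if "/blog" in path:
        return "blog"
    if "review" in path:
        return "review"
    if "/product" in path or "/pricing" in path:
        return "product page"
    return "other"


def citation_breakdown(cited_urls, top_n=3):
    """Return (top cited domains, content-type counts) for a list of cited URLs."""
    domains = Counter(urlparse(u).hostname for u in cited_urls)
    types = Counter(content_type(u) for u in cited_urls)
    return domains.most_common(top_n), types


cited = [
    "https://reviews.example.com/products/acme/reviews",
    "https://reviews.example.com/products/globex/reviews",
    "https://blog.example.org/blog/best-crm",
    "https://acme.example.net/pricing",
]
top_domains, types = citation_breakdown(cited)
print(top_domains)
print(types)
```

Re-running the same breakdown after each outreach push is one simple way to check whether citation frequency actually moved.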

This changes how you think about link building and digital PR. Instead of generic outreach, you target the specific sources that shape AI answers in your category. You strengthen relationships with domains that models already trust. You create content that fills the gaps in their coverage. And you track whether your citation frequency increases after each initiative.

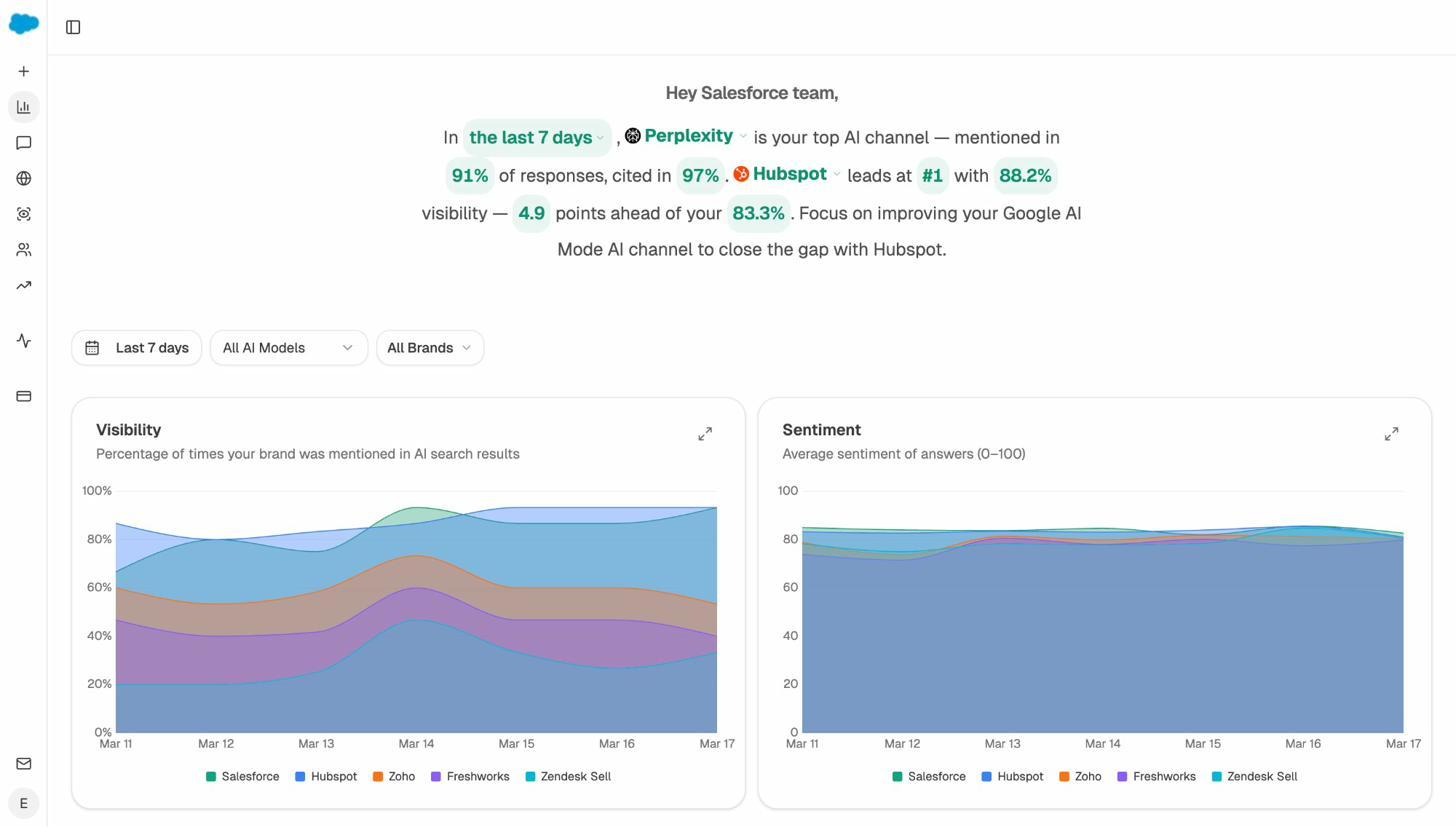

See How Your Brand Compares to Competitors Across Every Engine

The Overview dashboard gives you a complete competitive picture. You see your visibility percentage, sentiment score, and position relative to competitors across all tracked AI models. The platform generates a natural-language summary that tells you which engine is your strongest channel, who leads in your category, and where to focus to close the gap.

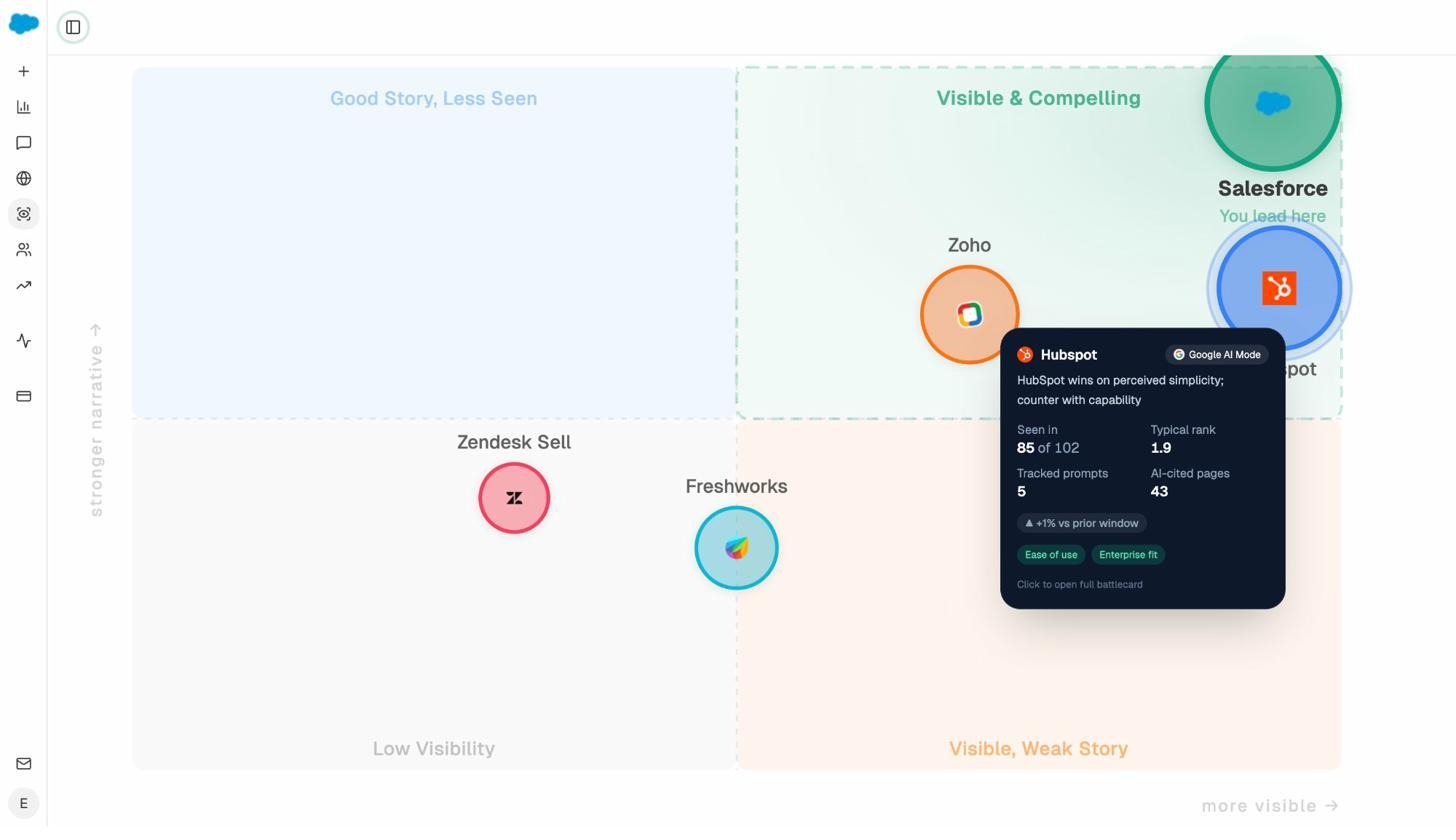

The Perception Map plots every tracked brand on a quadrant of visibility versus narrative strength. You see at a glance who is “Visible & Compelling,” who has a “Good Story, Less Seen,” and who is “Visible, Weak Story.” Each brand card shows mentions, typical rank, tracked prompts, and AI-cited pages. Click any competitor to open their full AI Battlecard.
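The quadrant logic behind a map like this can be sketched in a few lines. The sketch assumes visibility and narrative strength are both normalized to 0..1 and splits them at an arbitrary 0.5. Neither the scales nor the cut-off come from Analyze AI. The post names only three quadrants, so the fourth label is a placeholder.

```python
def perception_quadrant(visibility, narrative, threshold=0.5):
    """Place a brand on a visibility-vs-narrative grid (scores assumed in 0..1)."""
    seen = visibility >= threshold
    strong = narrative >= threshold
    if seen and strong:
        return "Visible & Compelling"
    if strong:
        return "Good Story, Less Seen"
    if seen:
        return "Visible, Weak Story"
    return "Less Seen, Weak Story"  # placeholder label: not named in the post


brands = {"Acme": (0.8, 0.7), "Globex": (0.3, 0.9), "Initech": (0.7, 0.2)}
for name, (vis, nar) in brands.items():
    print(f"{name}: {perception_quadrant(vis, nar)}")
```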

This is the kind of competitive intelligence that helps CMOs and brand marketers defend positioning in board meetings. It’s not a list of keywords. It’s a visual map of how AI engines perceive your entire category.

Optimize Content for Both SEO and AI Search

Analyze AI doesn’t just track and monitor. It helps you improve.

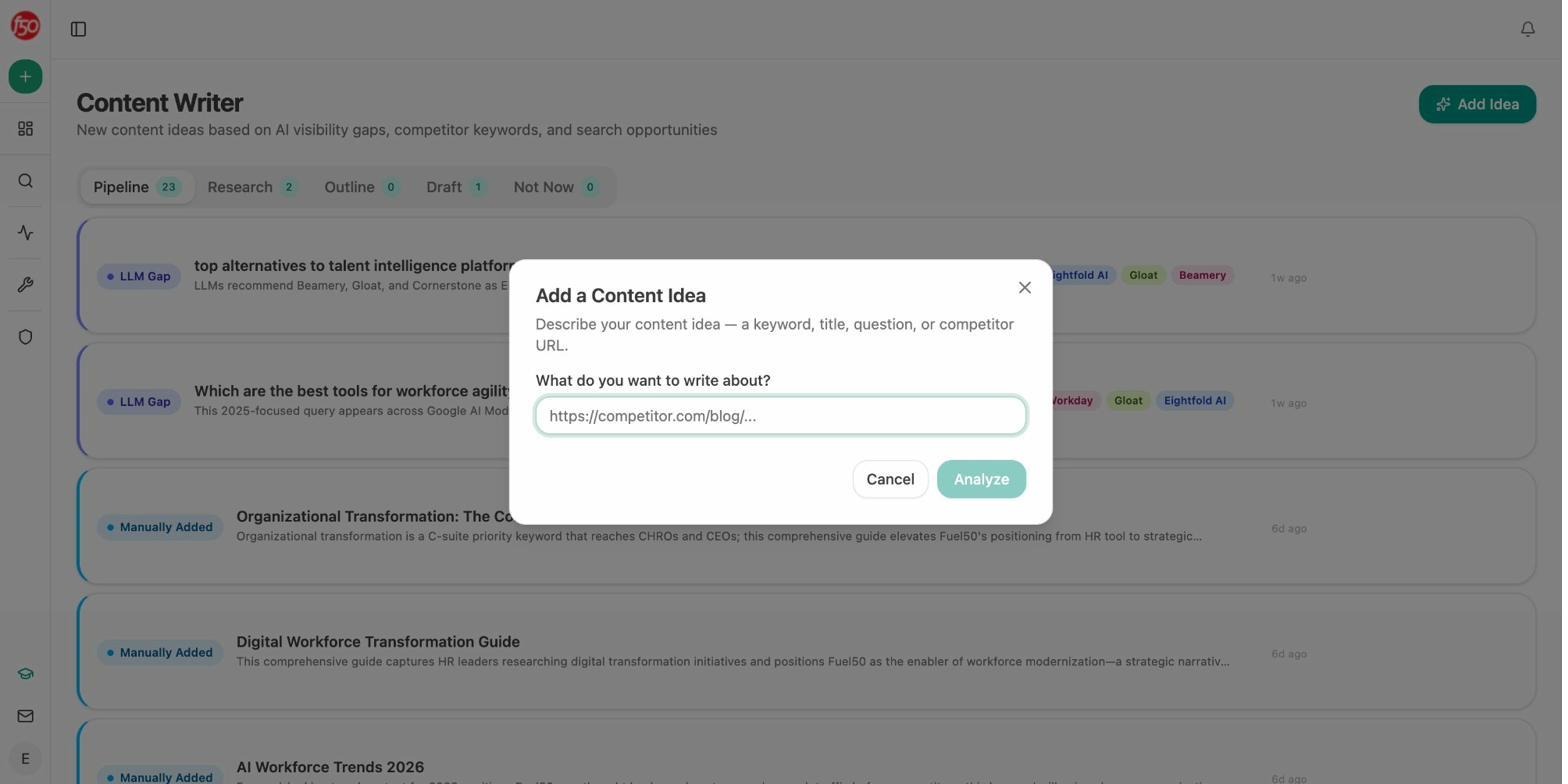

The AI Content Writer moves ideas from concept to published draft through a structured pipeline. You start with a keyword, a competitor URL, or an AI visibility gap. The writer researches the topic, builds an outline, and generates a full draft with your brand voice applied through the Knowledge Base. Every draft is scored for AI engine optimization readiness before it publishes.

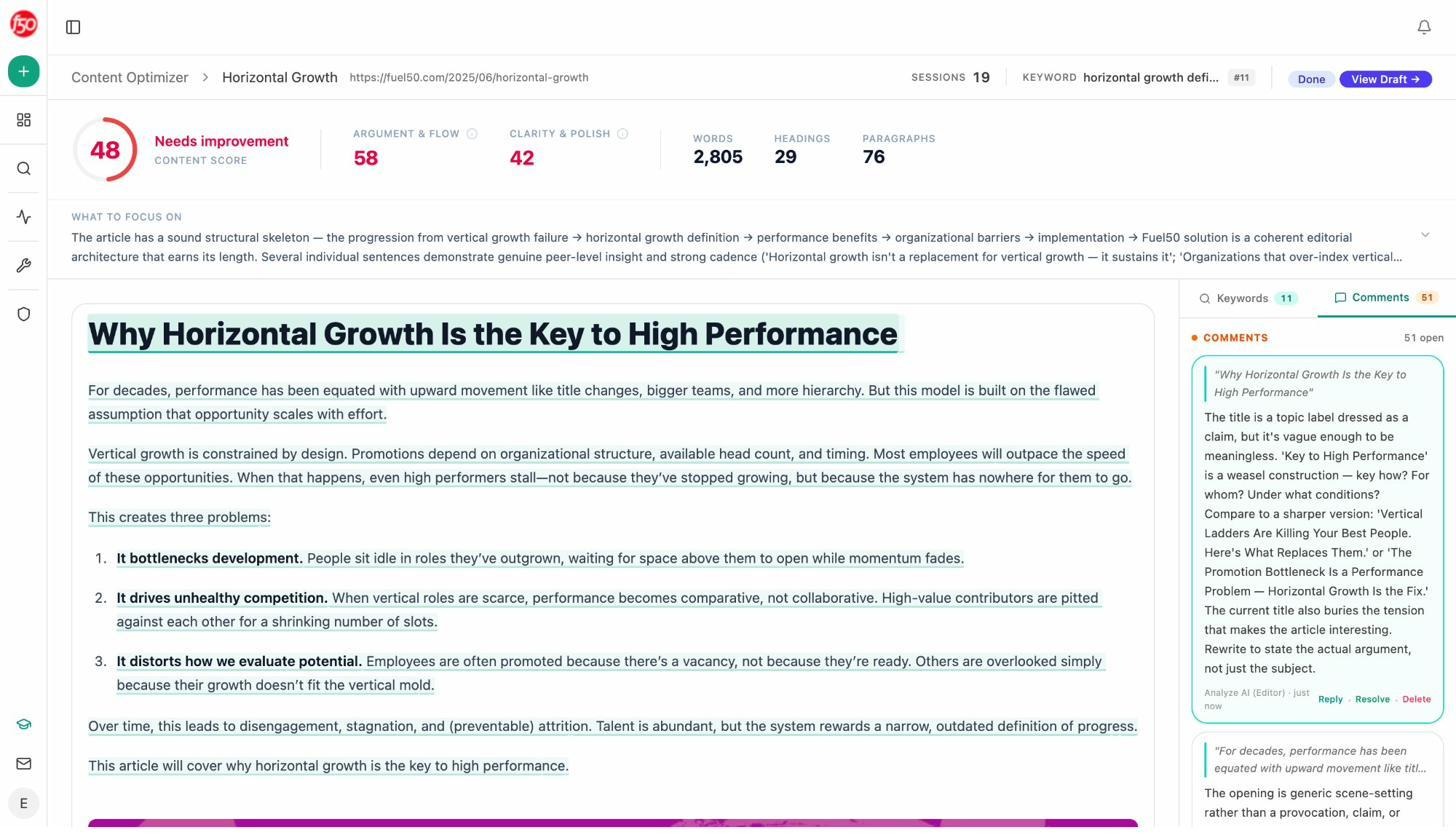

The AI Content Optimizer works on your existing pages. It pulls in your content, identifies structural weaknesses, and provides specific optimization ideas based on gaps. The editor adds inline comments with actionable feedback, then generates an optimized version you can review and publish.

These tools produce better outputs because they use a multi-step research and review process rather than generating content in a single pass. The writer runs topic research, competitive analysis, and outline validation before drafting. The optimizer audits against a real AEO scorecard, not just keyword density. The difference shows in the quality of the final output.

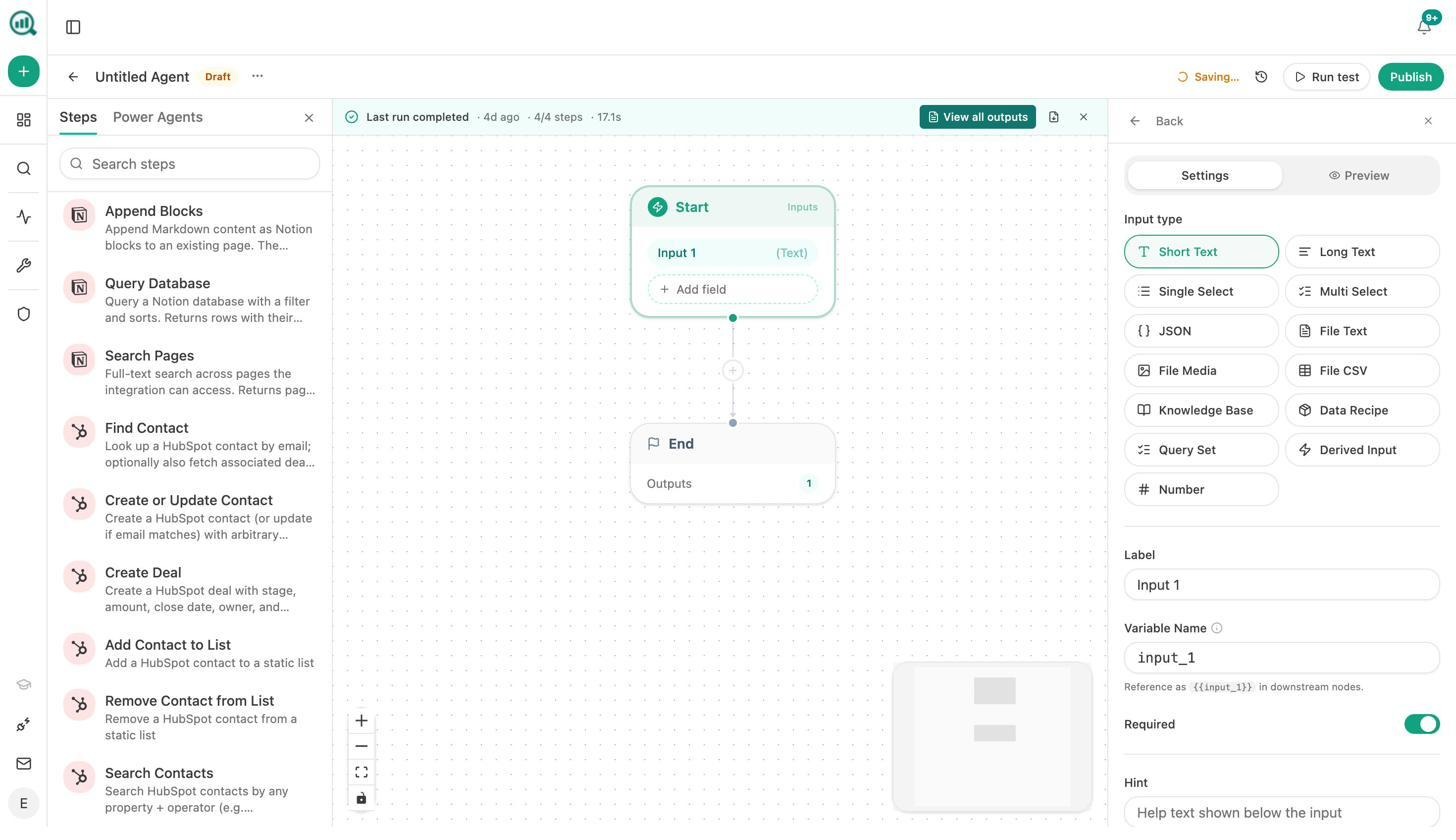

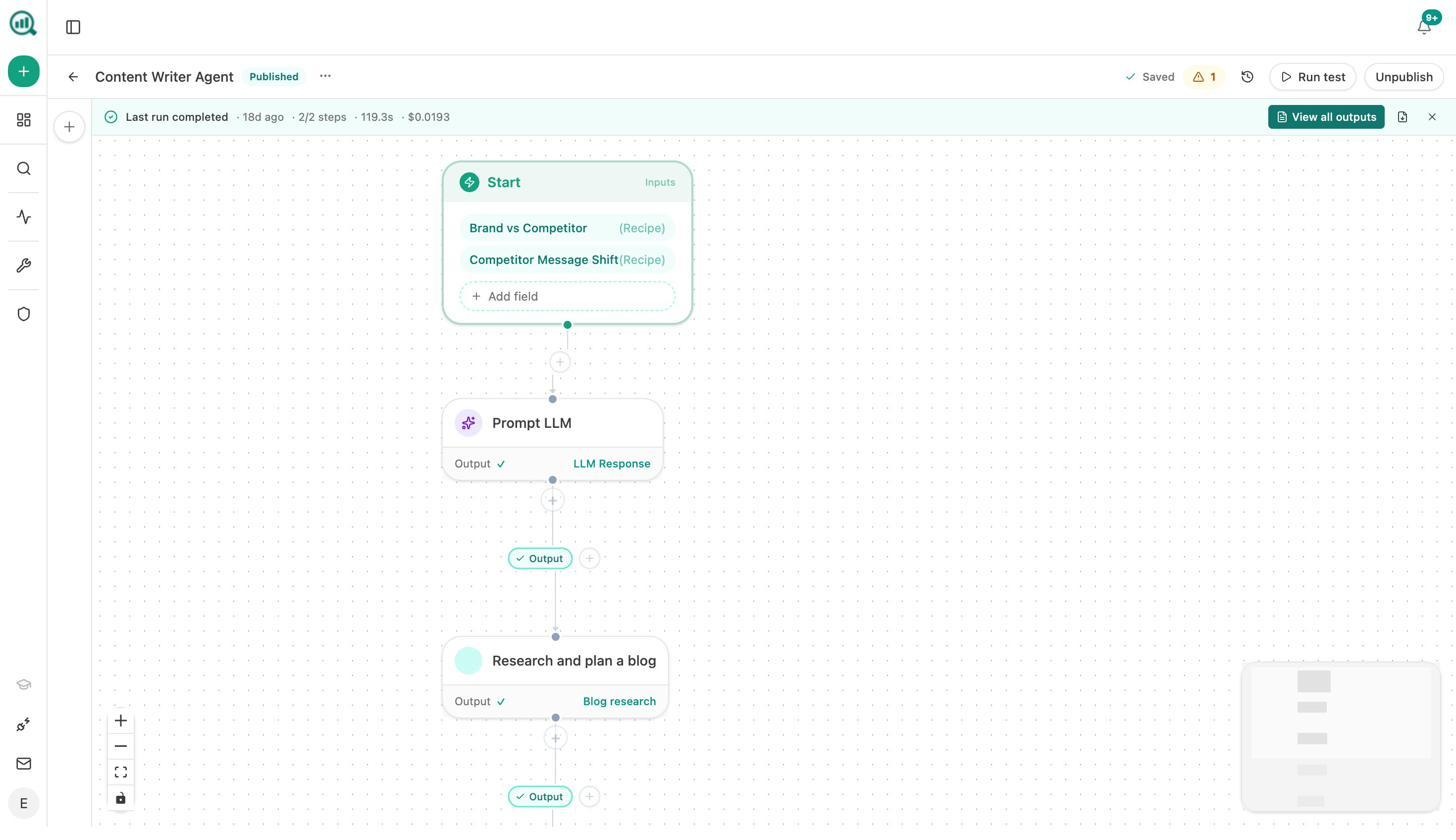

Build Any Workflow With the Agent Builder

This is where Analyze AI separates itself from every other platform in the category, including seoClarity.

The Agent Builder gives you 180+ nodes, 34 pre-built data recipes, 13 input primitives, and 3 trigger modes (manual, scheduled, webhook). It integrates directly with GA4, Google Search Console, DataForSEO, Semrush, HubSpot, Notion, WordPress, Slack, and every major LLM.

This is not an automation layer you bolt on top of a dashboard. It’s a programmable substrate with the same surface area as Zapier, Retool, Make, and n8n combined, but pre-wired to your SEO, content, and AI visibility data.

Here’s what teams actually build with it.

A CMO sets up a Monday Board Prep agent that runs at 7am every week. It pulls the executive summary, share-of-voice data, GA4 traffic, AI visibility trends, and new HubSpot deals. It generates a brand-voice executive summary, exports it to DOCX, and emails leadership. That replaces a 4-hour analyst chase that nobody has time for.

An agency builds a monthly client retainer report agent. It loops over the client list, assembles each report in parallel, and emails each project manager. Reporting day stops existing.

A content team creates a brief-to-publish pipeline. When a brief moves to “approved” in Notion, the agent generates research, an outline, a full draft with brand voice, and scores it against the AEO Content Scorecard. If the score passes, it publishes to WordPress. If it doesn’t, it messages the writer in Slack with the gaps.
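The last step of that pipeline is a simple gate: publish if the scorecard passes, otherwise send the gaps back. The sketch below shows only that branch. `publish` and `notify_writer` are hypothetical callables standing in for the WordPress and Slack steps (no real client APIs are used), and the pass mark of 80 is an assumption because the post doesn't give one.

```python
from typing import Callable, List

PASS_SCORE = 80  # hypothetical pass mark; the post doesn't state one


def route_draft(
    draft: str,
    score: int,
    gaps: List[str],
    publish: Callable[[str], None],
    notify_writer: Callable[[str], None],
) -> str:
    """Publish a scored draft, or send its gaps back to the writer."""
    if score >= PASS_SCORE:
        publish(draft)
        return "published"
    notify_writer(f"Draft scored {score} (needs {PASS_SCORE}). Gaps: " + "; ".join(gaps))
    return "returned"


# Plain lists stand in for the CMS and the chat channel.
published, messages = [], []
route_draft("Draft A", 86, [], published.append, messages.append)
route_draft("Draft B", 62, ["no pricing table", "missing FAQ"], published.append, messages.append)
print(published)
print(messages)
```

Passing the side effects in as functions keeps the decision logic testable without touching a live CMS or chat workspace.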

A PR team sets up a crisis early-warning agent that runs every 15 minutes. It monitors brand mentions and news sentiment. If sentiment drops below a threshold and reach is high, it alerts Slack and drafts a response immediately.
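The alert condition described here is two thresholds combined with AND. A minimal sketch, assuming mentions are already aggregated per 15-minute window into an average sentiment on a -1 to 1 scale and an estimated reach. The `MentionWindow` shape and both threshold values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MentionWindow:
    """Aggregated brand mentions for one 15-minute window (hypothetical shape)."""
    avg_sentiment: float  # assumed scale: -1 (negative) to 1 (positive)
    reach: int            # estimated audience of the mentions


SENTIMENT_FLOOR = -0.3  # hypothetical thresholds; tune for your brand
REACH_MIN = 50_000


def should_alert(window: MentionWindow) -> bool:
    """Alert only when sentiment is low AND the coverage is widely seen."""
    return window.avg_sentiment < SENTIMENT_FLOOR and window.reach >= REACH_MIN


print(should_alert(MentionWindow(-0.6, 120_000)))  # negative and loud
print(should_alert(MentionWindow(-0.6, 2_000)))    # negative but quiet
print(should_alert(MentionWindow(0.2, 500_000)))   # loud but positive
```

Requiring both conditions is what keeps a check that runs every 15 minutes from paging the team over a single unhappy post with no audience.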

The agent runs on schedule, on webhook, or on demand. A scheduled agent is a virtual team member that does the same job every Monday at 7am, never forgets, and costs cents per run. A webhook agent fires when reality changes (a HubSpot deal closes, a form is filled, a CMS post publishes) and the lag from event to action collapses from days to seconds.
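To make the scheduled trigger concrete, here is a sketch of how a weekly "Monday at 7am" schedule, like the Board Prep agent's, resolves to its next firing time. It assumes naive local time and ignores time zones and daylight-saving shifts. It illustrates the scheduling idea, not Analyze AI's scheduler.

```python
from datetime import datetime, timedelta


def next_monday_7am(now: datetime) -> datetime:
    """Return the next Monday at 07:00 strictly after `now` (naive local time)."""
    days_ahead = (0 - now.weekday()) % 7  # Monday is weekday 0
    candidate = (now + timedelta(days=days_ahead)).replace(
        hour=7, minute=0, second=0, microsecond=0
    )
    if candidate <= now:  # already past 07:00 this Monday
        candidate += timedelta(days=7)
    return candidate


print(next_monday_7am(datetime(2024, 5, 8, 15, 30)))  # a Wednesday afternoon
```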

If you came for AI search visibility, that’s just one of the things you can build. The agent builder is the actual product.

Stay Informed Without Logging In

Weekly Email Digests land in your inbox with a summary of what changed: visibility shifts, sentiment changes, new competitor mentions, and citation updates. Your team stays current without needing to open the platform every day.

seoClarity ArcAI vs. Analyze AI: Side-by-Side

| Feature | seoClarity ArcAI | Analyze AI |
|---|---|---|
| AI Visibility Tracking | Yes (Google AI Mode, ChatGPT, Gemini, Perplexity) | Yes (ChatGPT, Perplexity, Claude, Copilot, Gemini, Google AI Mode, Meta AI, DeepSeek) |
| AI Traffic Attribution | No | Yes (sessions, engagement, conversions by engine) |
| Landing Page Reports | No | Yes (which pages convert AI traffic) |
| Prompt Tracking | Yes | Yes (with suggested prompts and ad hoc searches) |
| Citation Analytics | Partial | Yes (content type breakdown, top cited domains, per-source tracking) |
| Sentiment Monitoring | Yes | Yes (with Perception Map and AI Battlecards) |
| Content Writer | Basic optimization guidance | Full pipeline (research, outline, draft, AEO scoring) |
| Content Optimizer | Yes | Yes (inline editorial comments, optimization ideas from gap analysis) |
| Agent Builder / Automation | ClarityAutomate (limited) | 180+ nodes, 34 data recipes, GA4/GSC/HubSpot/Notion/WordPress/Semrush integrations |
| Weekly Digests | Available | Yes |
| Pricing Transparency | Custom quotes only (starts at $2,500/month for the base platform) | |

The Bottom Line

seoClarity ArcAI is a capable enterprise platform for teams that need large-scale traditional SEO alongside AI visibility tracking. If you’re managing 5,000+ keywords across multiple domains with a Fortune 500 budget, it can work.

But if you need to connect AI visibility to revenue, produce and optimize content at scale, and automate your entire marketing operations layer, seoClarity doesn’t give you the tools to do it. You get dashboards. You don’t get execution.

Analyze AI gives you both. The tracking tells you where you stand. The Content Writer and Content Optimizer help you improve what’s not working. And the Agent Builder lets you build any workflow your team needs, from automated reporting to content pipelines to crisis alerts, without waiting for a vendor to build the feature.

SEO is not dead. AI search is another organic channel. The teams that win are the ones that add AI search to their existing playbook, not the ones that panic and replace everything. Analyze AI is built for those teams.

Ernest Ibrahim