In this article, you’ll learn what Ahrefs Brand Radar tracks, what its 2026 pricing adds up to once you stack it on top of an Ahrefs base plan, the three places it works well, and the three accuracy and coverage gaps that keep showing up in independent tests. You’ll also see how the same job can be done end-to-end inside Analyze AI, the agentic SEO and content platform that pulls AI visibility, GA4 attribution, citations, content production, and workflow automation into one workspace.

What Ahrefs Brand Radar actually does

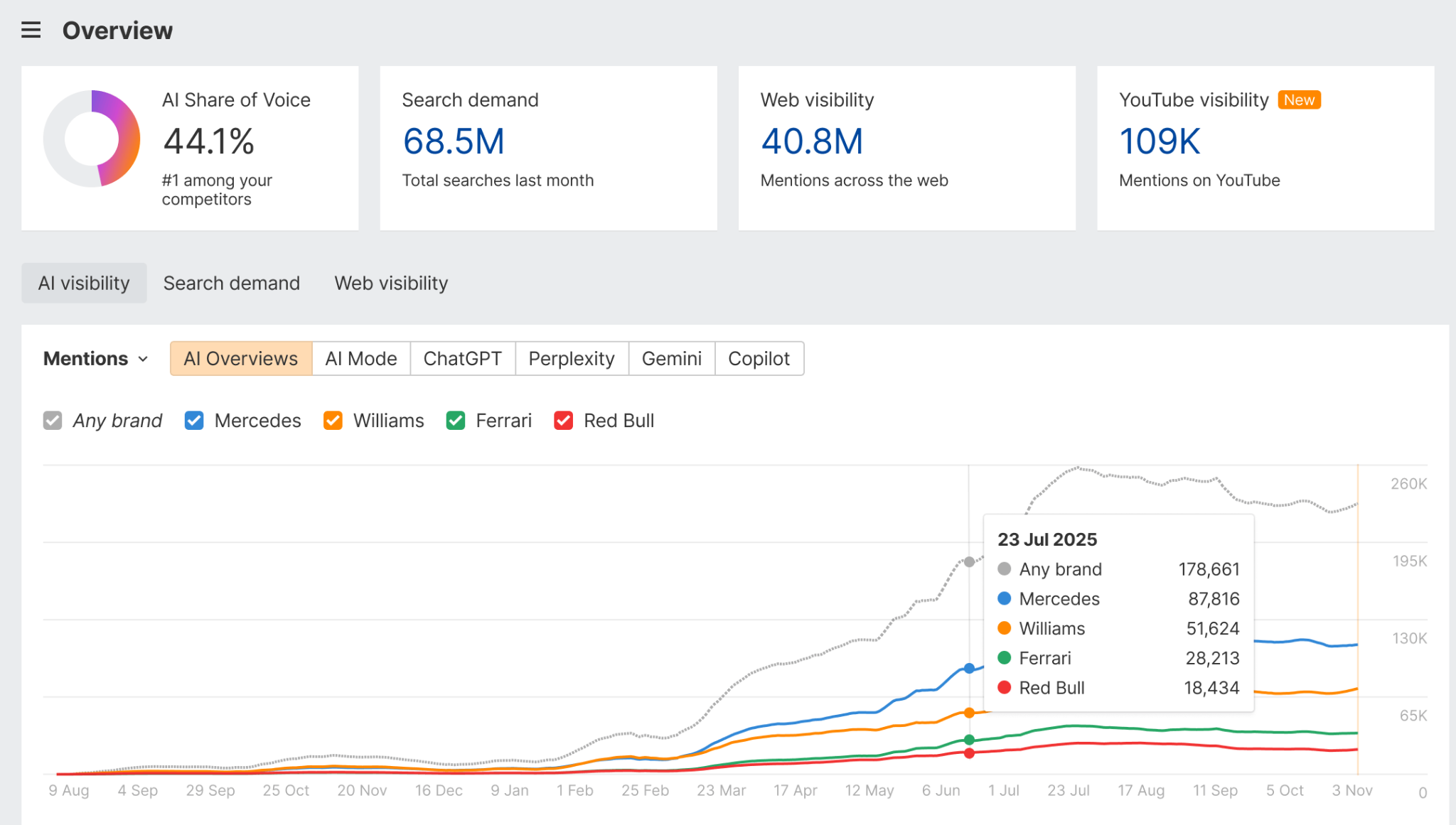

Ahrefs Brand Radar is an add-on inside the Ahrefs ecosystem that monitors how often a brand is mentioned across AI answers and across the open web. It pulls from a database of more than 199 million search-backed prompts and tracks six engines: Google AI Overviews, Google AI Mode, ChatGPT, Perplexity, Gemini, and Microsoft Copilot.

Inside the dashboard, you can search any brand or topic, view share of voice over time, see which domains are cited most often in AI answers, and cross-reference AI mentions with branded search demand and backlink data from Ahrefs’ core dataset. There is also a beta layer for YouTube, TikTok, and Reddit visibility, sold separately.

The pitch is consolidation. One workspace for AI mentions, web mentions, and branded demand, sitting alongside the Site Explorer and Keywords Explorer most SEO teams already use. The pitch holds up in narrow ways. It also breaks in three places that matter for anyone tying AI visibility to revenue.

Ahrefs Brand Radar pricing in 2026

The pricing is the most contentious thing about this product. Most marketing teams hit the cost wall before they hit the feature wall.

Brand Radar is an add-on. It requires an active Ahrefs base subscription, which starts at $129/month for the Lite plan. The Brand Radar AI Indexes are sold per platform at $199/month each, or bundled across all six AI platforms for $699/month. The YouTube, TikTok, and Reddit module is another $199/month after the beta period ends.

Stacked together, full coverage looks like this:

| What you’re paying for | Cost per month |
|---|---|
| Ahrefs base plan (Lite or higher) | $129–$449 |
| Brand Radar AI Indexes (6 engines bundled) | $699 |
| Video & social visibility (YouTube, TikTok, Reddit) | $199 |
| Total for full coverage | $1,027–$1,347 |

Independent reviews from EWR Digital and Ekamoira put the all-in cost between $828 and $1,148 per month for typical configurations, which matches the base plan plus the six-engine bundle without the video and social module. That is roughly 2.5x the average price of a dedicated AI visibility tool.
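To make the stacking explicit, here is a minimal sketch of the arithmetic using only the prices quoted in this section. The function name `monthly_total` and the rule that a buyer takes the bundle whenever it is cheaper than per-platform pricing are illustrative assumptions, not Ahrefs billing logic.

```python
# Monthly prices quoted in this article (USD).
BASE_PLAN_RANGE = (129, 449)   # Ahrefs base plan range quoted above
AI_INDEX_SINGLE = 199          # one AI platform
AI_INDEX_BUNDLE = 699          # all six AI platforms bundled
VIDEO_SOCIAL_MODULE = 199      # YouTube, TikTok, Reddit (after beta)


def monthly_total(base: int, ai_platforms: int = 6, video_social: bool = False) -> int:
    """Return the stacked monthly cost for one configuration."""
    # Assumption: the bundle is chosen whenever it beats per-platform pricing.
    ai_cost = min(ai_platforms * AI_INDEX_SINGLE, AI_INDEX_BUNDLE)
    return base + ai_cost + (VIDEO_SOCIAL_MODULE if video_social else 0)


low, high = BASE_PLAN_RANGE
six_engines = (monthly_total(low), monthly_total(high))
full_coverage = (
    monthly_total(low, video_social=True),
    monthly_total(high, video_social=True),
)
print(six_engines)    # (828, 1148)
print(full_coverage)  # (1027, 1347)
```

The first pair reproduces the range the independent reviews cite, and the second reproduces the table total once the video and social module is added.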

There is also a check ceiling. Brand Radar includes 2,500 prompt checks per month by default, where one check equals one prompt × one LLM × one location. Track 10 prompts across the six engines in three locations daily and you blow through the cap in under two weeks.
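The cap is easy to sanity-check before you commit to a tracking plan. The sketch below applies the definition above (one check equals one prompt × one LLM × one location) to the example configuration. The function names are illustrative, and it assumes one run per prompt per day at a constant rate.

```python
MONTHLY_CHECK_CAP = 2_500  # default allowance quoted above


def checks_per_day(prompts: int, engines: int, locations: int, runs_per_day: int = 1) -> int:
    """One check = one prompt x one LLM x one location."""
    return prompts * engines * locations * runs_per_day


def days_until_cap(daily_checks: int, cap: int = MONTHLY_CHECK_CAP) -> float:
    """Days of tracking the cap covers at a constant daily rate."""
    if daily_checks <= 0:
        raise ValueError("daily_checks must be positive")
    return cap / daily_checks


daily = checks_per_day(prompts=10, engines=6, locations=3)
print(daily)                            # 180
print(round(days_until_cap(daily), 1))  # 13.9
```

At 180 checks a day the default allowance lasts just under 14 days, which is where the "under two weeks" figure comes from.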

The 3 things Ahrefs Brand Radar does well

1. AI Overviews tracking is the most accurate of the six engines

If your AI search strategy is mostly about staying visible inside Google AI Overviews and AI Mode, this is the part of Brand Radar that delivers. It pulls from real Google search-backed prompts rather than synthetic prompt libraries, which means the queries it monitors reflect what people actually type into Google rather than scenarios a vendor invented.

In side-by-side tests by Rankability and Dageno, AI Overviews counts came in directionally accurate against manual benchmarks. The numbers weren’t perfect, but they were usable for trend monitoring. If 90% of the AI traffic you care about lives inside Google’s surfaces, you are getting reasonable signal here.

2. Search demand and unlinked mention tracking are genuinely solid

Branded search volume tracking and the unlinked mention crawler are the strongest parts of the product because they sit on top of Ahrefs’ mature web data. The system catches both linked and unlinked references across news, blogs, and forums, then deduplicates and normalizes domains so you see real reach instead of inflated counts. If your PR or comms team needs a quick read on whether a media moment is creating actual brand demand, the data here will hold up.

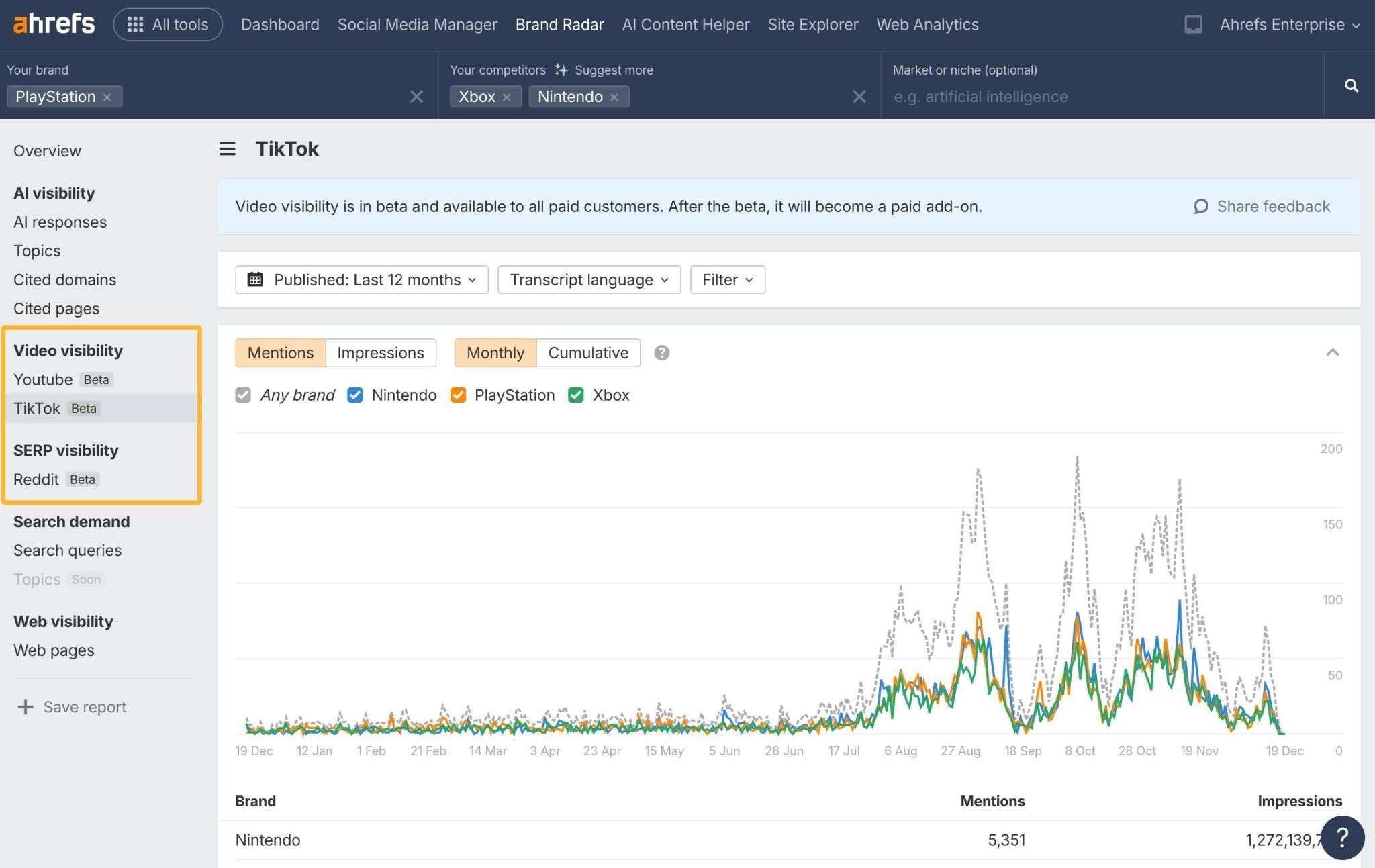

3. The YouTube, TikTok, and Reddit tracking is unique

This is the one feature you genuinely cannot find in dedicated AI visibility tools. Brand Radar scans YouTube transcripts, TikTok captions, and Reddit threads for brand mentions, and surfaces when Reddit threads start appearing inside Google search results.

The argument is that conversations on these platforms eventually become training data and retrieval sources for LLMs, so watching them today gives you a leading indicator of where AI visibility moves tomorrow. Execution is still beta, the YouTube data only goes back to December 2023, and the module costs an additional $199/month after the beta period. But the idea is real and nobody else is doing it at this scale.

The 3 things Ahrefs Brand Radar gets wrong

The product has three documented weak spots that show up consistently across independent reviews. None of them are dealbreakers in isolation. Together, they make the price hard to defend for most teams.

1. The ChatGPT and Perplexity counts are not reliable

This is the single most damaging finding from the Dageno test. For one brand, Brand Radar reported 3 ChatGPT mentions globally. The actual count, verified by manual reproduction, was 123. That is a 97.5% discrepancy.
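For reference, the gap can be expressed as the share of verified mentions the tool missed. This is a small sketch with a hypothetical helper name; it assumes the manually verified count is the ground truth.

```python
def undercount_pct(reported: int, verified: int) -> float:
    """Share of verified mentions that were not reported, as a percentage."""
    if verified <= 0:
        raise ValueError("verified count must be positive")
    return (verified - reported) / verified * 100


print(round(undercount_pct(reported=3, verified=123), 1))  # 97.6
```

The result is about 97.6%, in line with the roughly 97.5% figure quoted above.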

The root cause is methodology. Brand Radar uses a static prompt library and timed snapshots rather than capturing live, prompt-level executions. Because LLMs regenerate answers constantly, the same prompt can produce different outputs every few hours. A drop in the chart can mean the brand actually lost visibility, or it can mean the model just answered differently that morning.

Without metadata on prompt, timestamp, and model version, you cannot tell which is which. For ChatGPT and Perplexity specifically, that ambiguity makes the trend lines hard to act on.
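One way to picture the missing metadata is a log where every execution carries its prompt, timestamp, and model version. The sketch below is an illustration only: the `PromptRun` fields, the model labels, and the sample prompt are hypothetical and do not describe how Brand Radar or any other tool stores data.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class PromptRun:
    prompt: str
    engine: str
    model_version: str     # hypothetical label, e.g. "model-a"
    ran_at: datetime       # kept so individual runs can be audited later
    brand_mentioned: bool


def mention_rate_by_version(runs: list[PromptRun]) -> dict[tuple[str, str, str], float]:
    """Mention rate per (prompt, engine, model_version) across repeated runs."""
    hits: dict[tuple[str, str, str], int] = defaultdict(int)
    totals: dict[tuple[str, str, str], int] = defaultdict(int)
    for run in runs:
        key = (run.prompt, run.engine, run.model_version)
        totals[key] += 1
        hits[key] += run.brand_mentioned  # True counts as 1
    return {key: hits[key] / totals[key] for key in totals}


# Hypothetical sample: four runs on one model version, two on the next.
t0 = datetime(2026, 1, 5, 9, 0)
prompt = "best crm for startups"
runs = [
    PromptRun(prompt, "ChatGPT", "model-a", t0, True),
    PromptRun(prompt, "ChatGPT", "model-a", t0 + timedelta(hours=6), False),
    PromptRun(prompt, "ChatGPT", "model-a", t0 + timedelta(hours=12), True),
    PromptRun(prompt, "ChatGPT", "model-a", t0 + timedelta(hours=18), True),
    PromptRun(prompt, "ChatGPT", "model-b", t0 + timedelta(days=1), False),
    PromptRun(prompt, "ChatGPT", "model-b", t0 + timedelta(days=1, hours=6), False),
]
rates = mention_rate_by_version(runs)
print(rates[(prompt, "ChatGPT", "model-a")])  # 0.75
print(rates[(prompt, "ChatGPT", "model-b")])  # 0.0
```

With repeated runs grouped this way, a single missed answer inside one model version reads as ordinary variance, while a rate that falls to zero after a version change points to a real shift worth investigating.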

2. There is no Claude, Grok, or Meta AI tracking

The six engines Brand Radar covers leave out three that are gaining share fast: Anthropic’s Claude, xAI’s Grok, and Meta AI.

For a B2B brand whose buyers spend their day in tools like Notion, Cursor, and Slack, that omission matters. Claude in particular has become a default research assistant for product, engineering, and content teams. If you can’t see how you appear there, you are flying blind on a meaningful slice of your buyer journey. Read more on tracking Claude visibility and Meta AI visibility.

3. The data is not tied to traffic or revenue

This is the gap that hurts the most for marketing leaders who have to defend AI search investment in a board meeting.

Brand Radar tells you that you appeared in an AI answer. It does not tell you whether anyone clicked, what page they landed on, what they did once they got there, or how that visit influenced revenue. You can cross-reference Brand Radar mentions with Ahrefs backlink data, but the chain stops at “this URL was cited.” There is no GA4 attribution, no landing page conversion data, no assisted-revenue model.

A brand mention in Perplexity that drives 200 qualified sessions a month is treated identically to a citation in Copilot that drives zero. That is a vanity metric problem, and it is the reason most CMOs cannot justify the bill past month two.

When Ahrefs Brand Radar is worth it (and when it isn’t)

Brand Radar is worth it when all of these things are true. You are already on a paid Ahrefs plan. You mainly care about Google AI Overviews and AI Mode. You have an in-house data team to interpret the trend lines and reconcile the accuracy gaps. And you have either a $1,000-plus monthly budget for visibility tracking or you can negotiate enterprise pricing.

It stops being worth it when any of these are true. Your AI search strategy depends on Claude, Grok, or Meta AI. You need prompt-level accuracy you can act on without manual verification. You need to tie AI visibility to GA4 sessions and conversions. You want a content production pipeline alongside the tracking. Or you are an SMB or in-house team where $828–$1,148/month buys two senior content roles.

Analyze AI: the agentic SEO and content platform built around the gaps

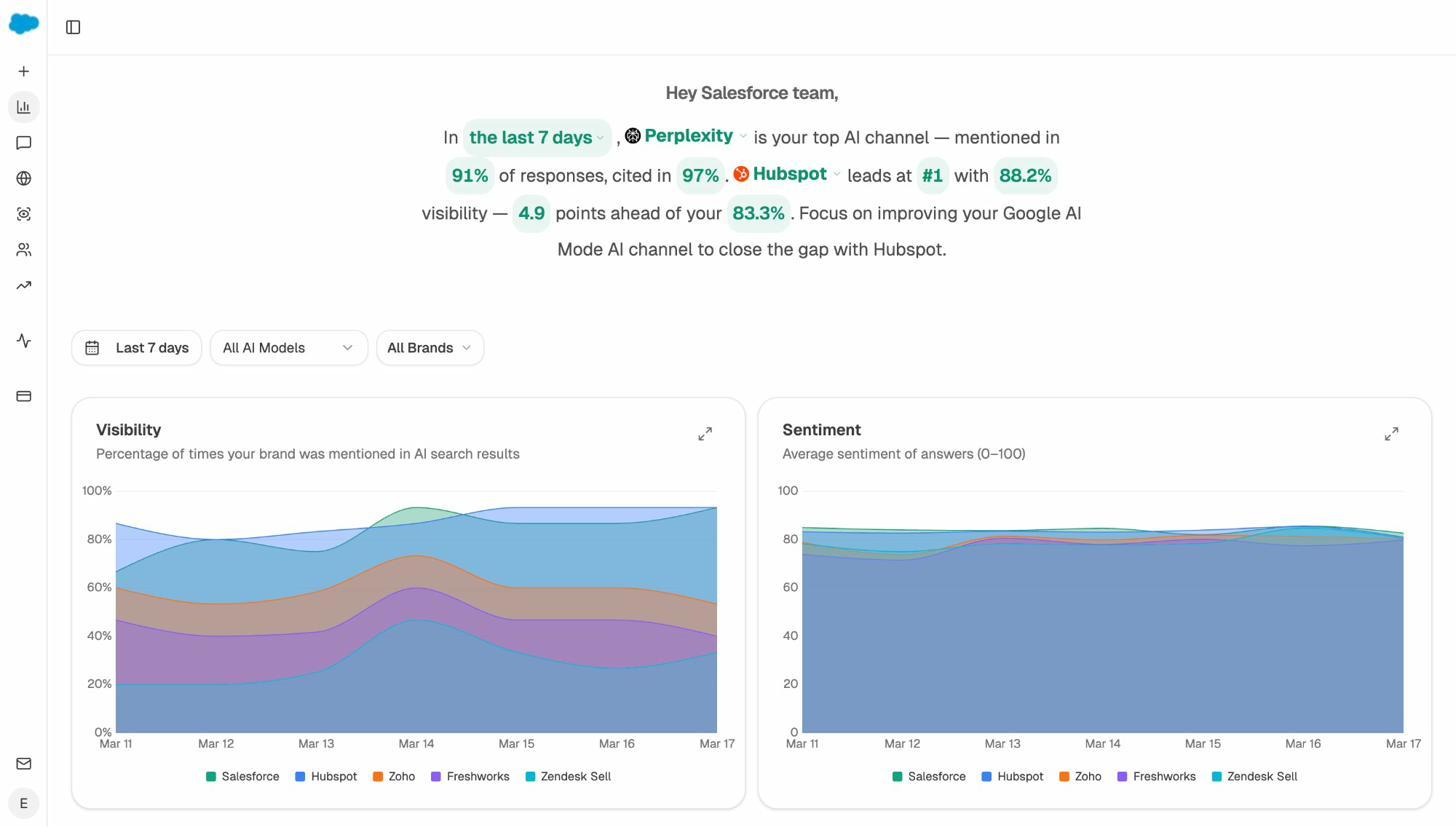

Most AI visibility tools draw a chart and stop. You see a number, maybe a sentiment score, and you are on your own to figure out what to do with it.

Analyze AI takes the opposite approach. We treat AI search as an additional organic channel alongside traditional SEO, not a replacement for it. SEO is not dead. The playbook is evolving, and the brands that win are the ones that compound quality content across both Google and the answer engines.

Inside the platform, that belief turns into four connected layers: Discover, Monitor, Improve, and Govern. Here is what each one does and where it pulls ahead of Brand Radar.

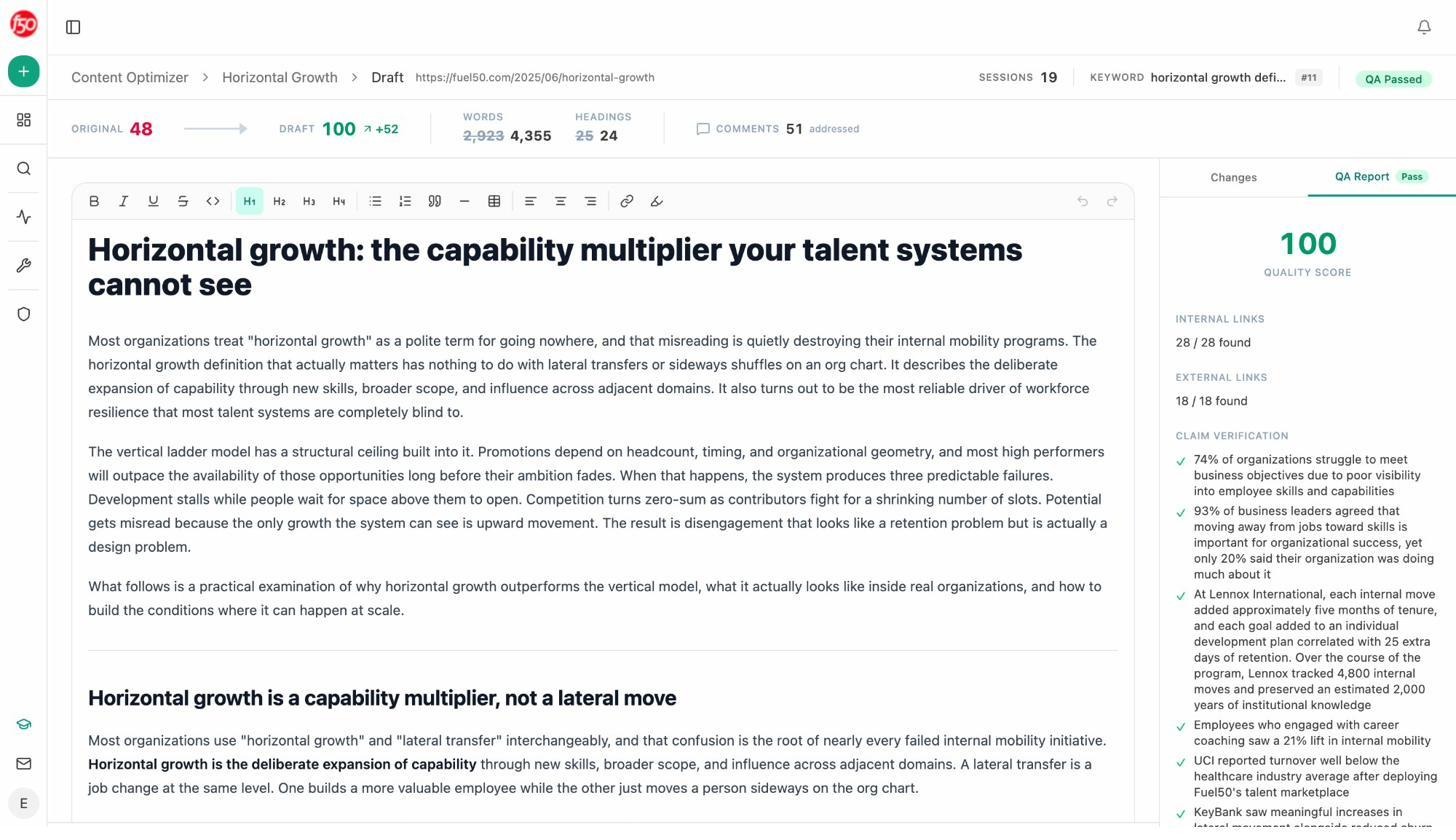

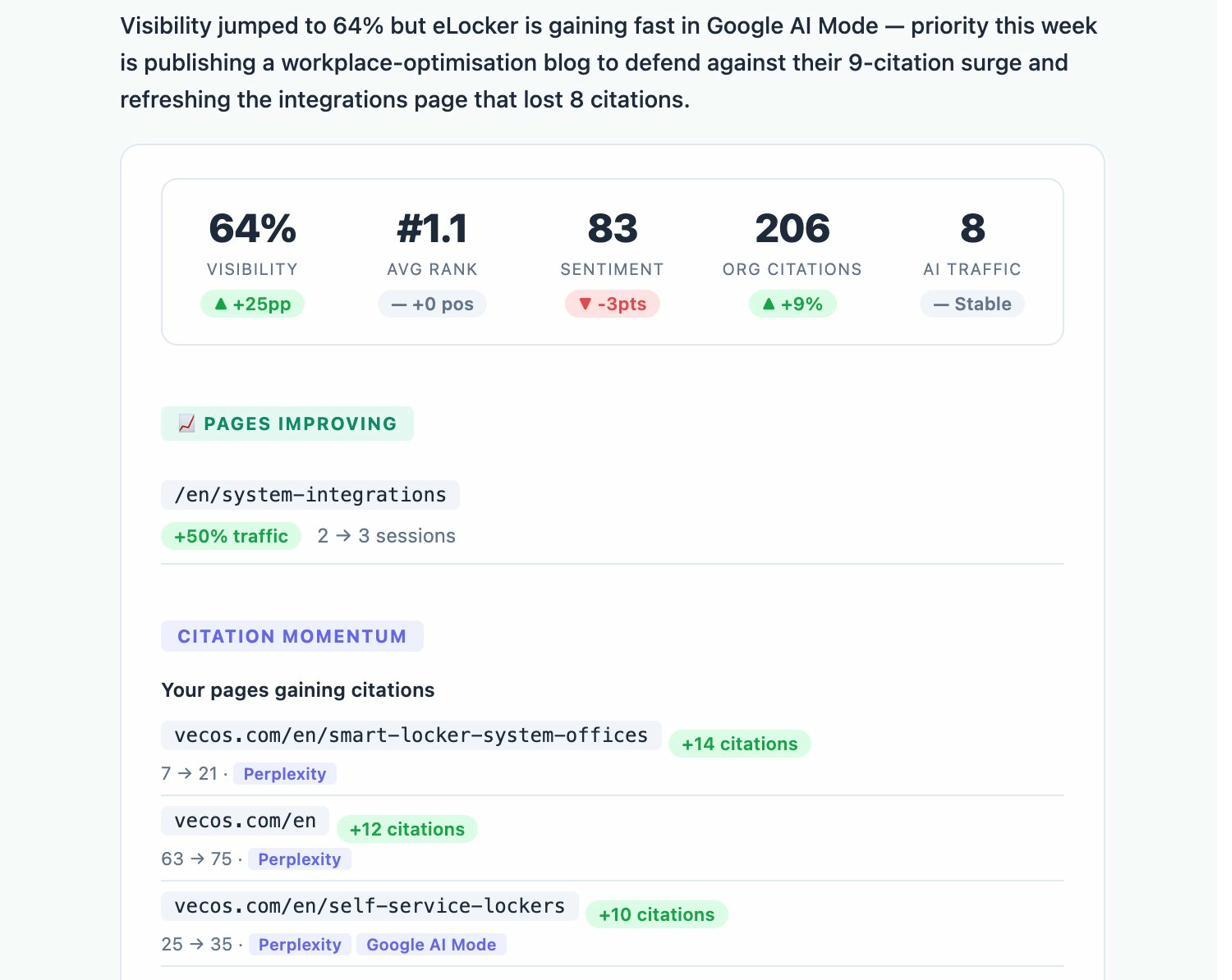

Track AI traffic, conversions, and revenue, not just mentions

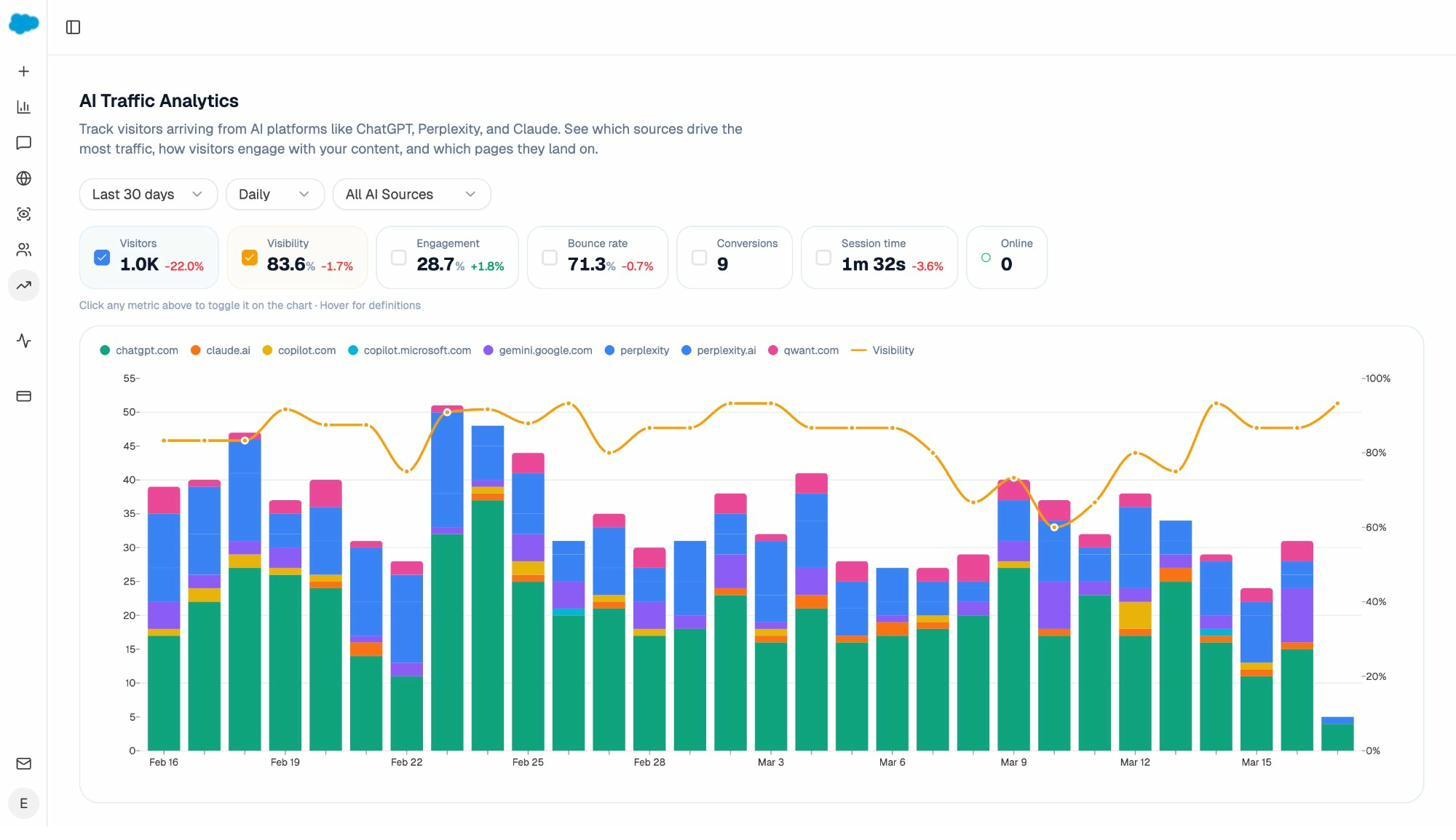

Connect GA4 once and the platform attributes every session from an answer engine to its source. You see ChatGPT, Perplexity, Claude, Copilot, Gemini, and DeepSeek broken out individually, with daily session counts, engagement, bounce rate, conversions, and time on site for each. The chart above is from a real account: 1,000 visitors over 30 days, 9 conversions, 1m 32s average session time, 83.6% visibility, broken out by engine.
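Conceptually, engine-level attribution starts with mapping a session's referrer to an answer engine. The sketch below shows that idea in isolation. The hostname table is an assumption to verify against your own referral data, and the code is not a description of how Analyze AI or GA4 performs the mapping.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames; check them against your own GA4 referral report.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
    "chat.deepseek.com": "DeepSeek",
}


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the answer engine for a referrer URL, or None if it is not one."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    for known_host, engine in AI_REFERRER_HOSTS.items():
        # Match the host itself or any subdomain of it.
        if host == known_host or host.endswith("." + known_host):
            return engine
    return None


print(classify_referrer("https://www.perplexity.ai/search?q=crm"))  # Perplexity
print(classify_referrer("https://www.google.com/"))                 # None
```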

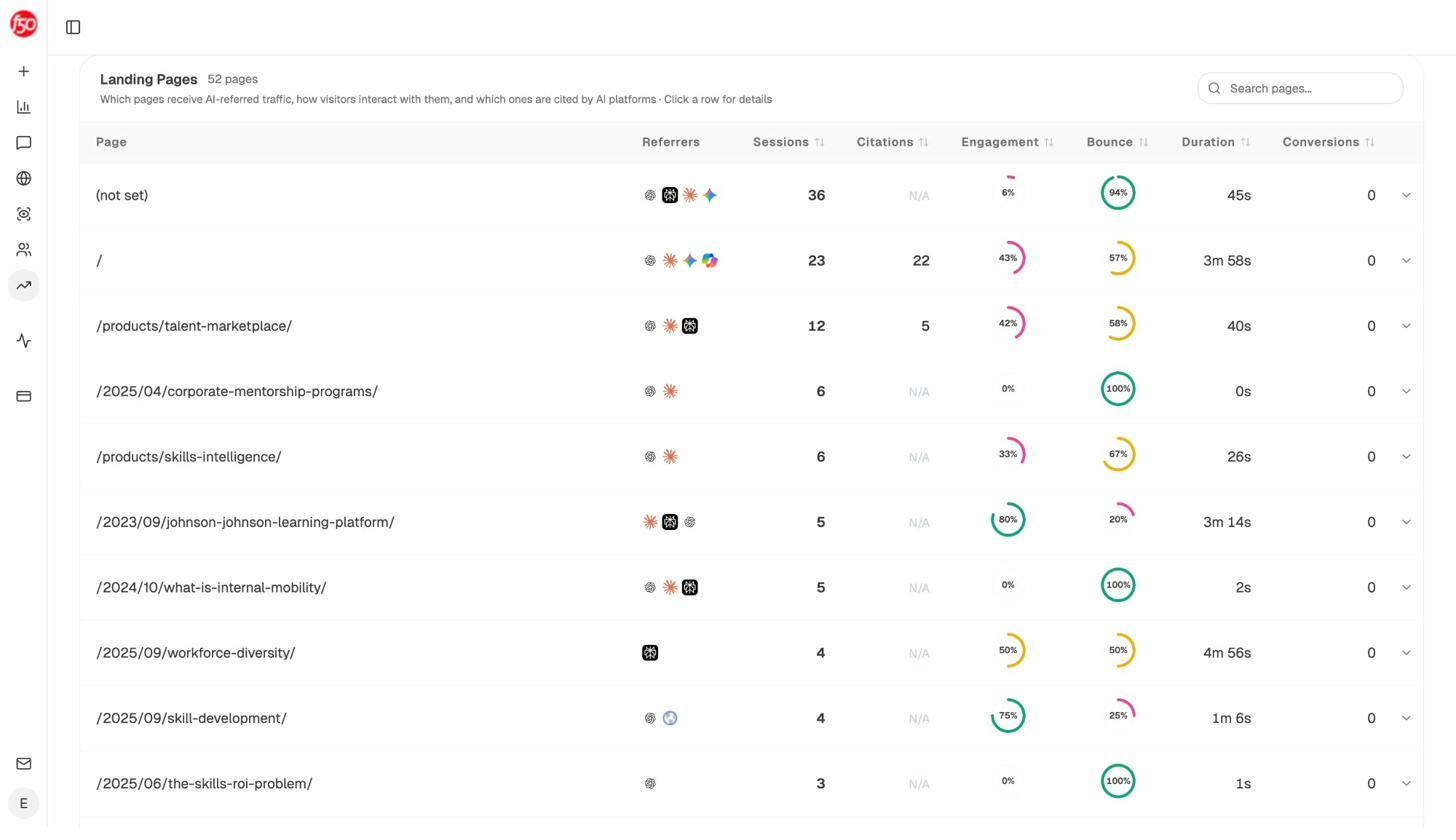

The Landing Pages report goes one level deeper. You see exactly which pages receive AI referrals, which engine sent each session, and what conversion event the visit triggered. When a product comparison page gets 50 sessions from Perplexity and converts 12% to trials while an old blog post gets 40 sessions from ChatGPT with zero conversions, you stop optimizing for visibility and start optimizing for revenue. Mentions without attribution are the AI search equivalent of impressions without clicks.
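The comparison in that example comes down to grouping sessions by landing page and engine, then dividing conversions by sessions. Here is a minimal sketch of the calculation. The `Session` fields and page paths are hypothetical, and the sample data simply mirrors the numbers in the paragraph above.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    landing_page: str
    engine: str
    converted: bool


def conversion_by_page_and_engine(sessions):
    """Return {(landing_page, engine): (session_count, conversion_rate)}."""
    counts = defaultdict(lambda: [0, 0])  # [sessions, conversions]
    for s in sessions:
        bucket = counts[(s.landing_page, s.engine)]
        bucket[0] += 1
        bucket[1] += s.converted  # True counts as 1
    return {key: (n, conv / n) for key, (n, conv) in counts.items()}


# 50 Perplexity sessions with 6 trials (12%), 40 ChatGPT sessions with none.
sessions = (
    [Session("/compare", "Perplexity", i < 6) for i in range(50)]
    + [Session("/old-post", "ChatGPT", False) for _ in range(40)]
)
report = conversion_by_page_and_engine(sessions)
print(report[("/compare", "Perplexity")])  # (50, 0.12)
print(report[("/old-post", "ChatGPT")])    # (40, 0.0)
```

Sorting this report by conversion rate rather than session count is what shifts the priority from the page with the most AI visits to the page that earns the most trials.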

Track real prompts across all major engines, including the ones Brand Radar misses

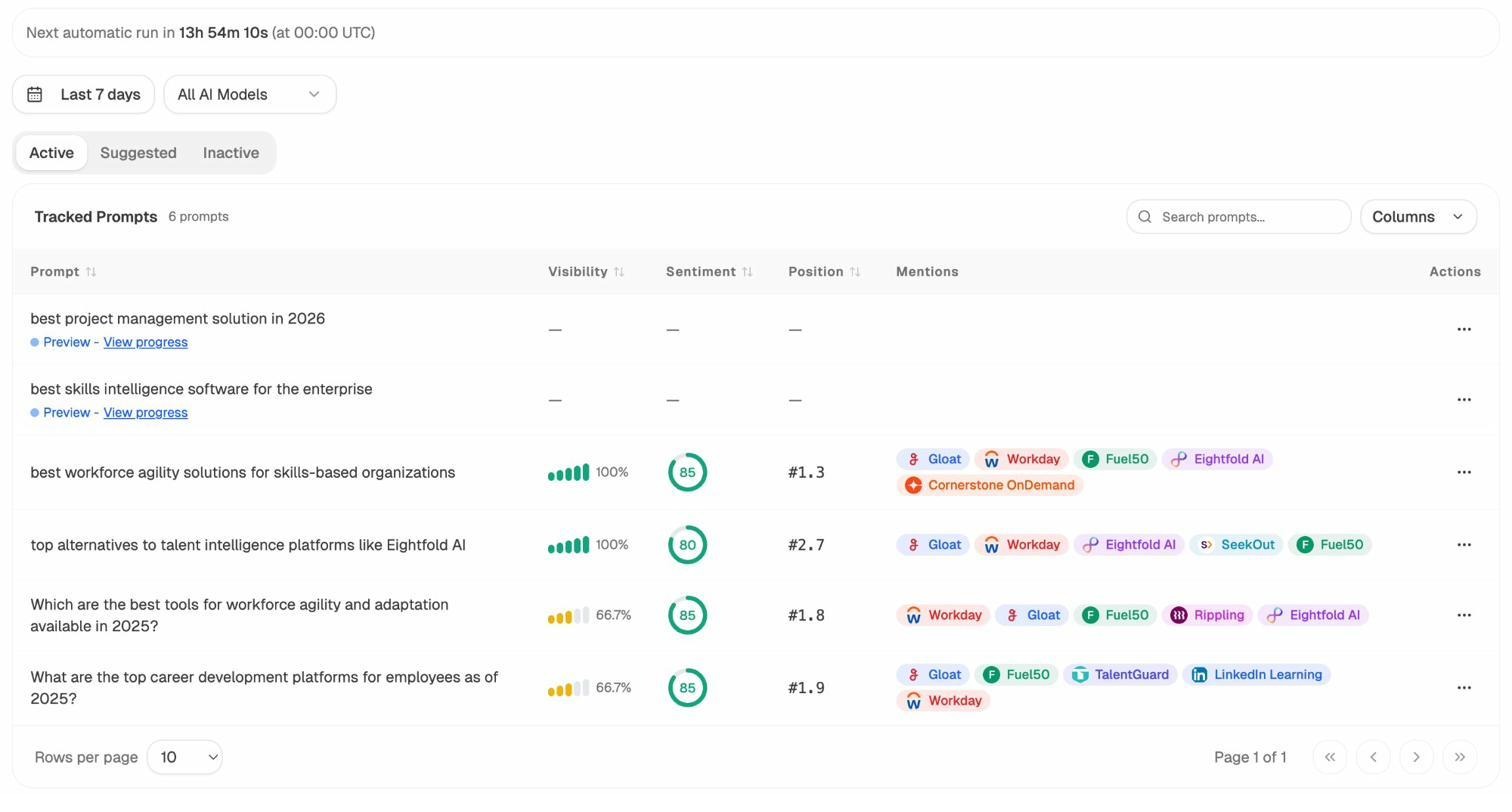

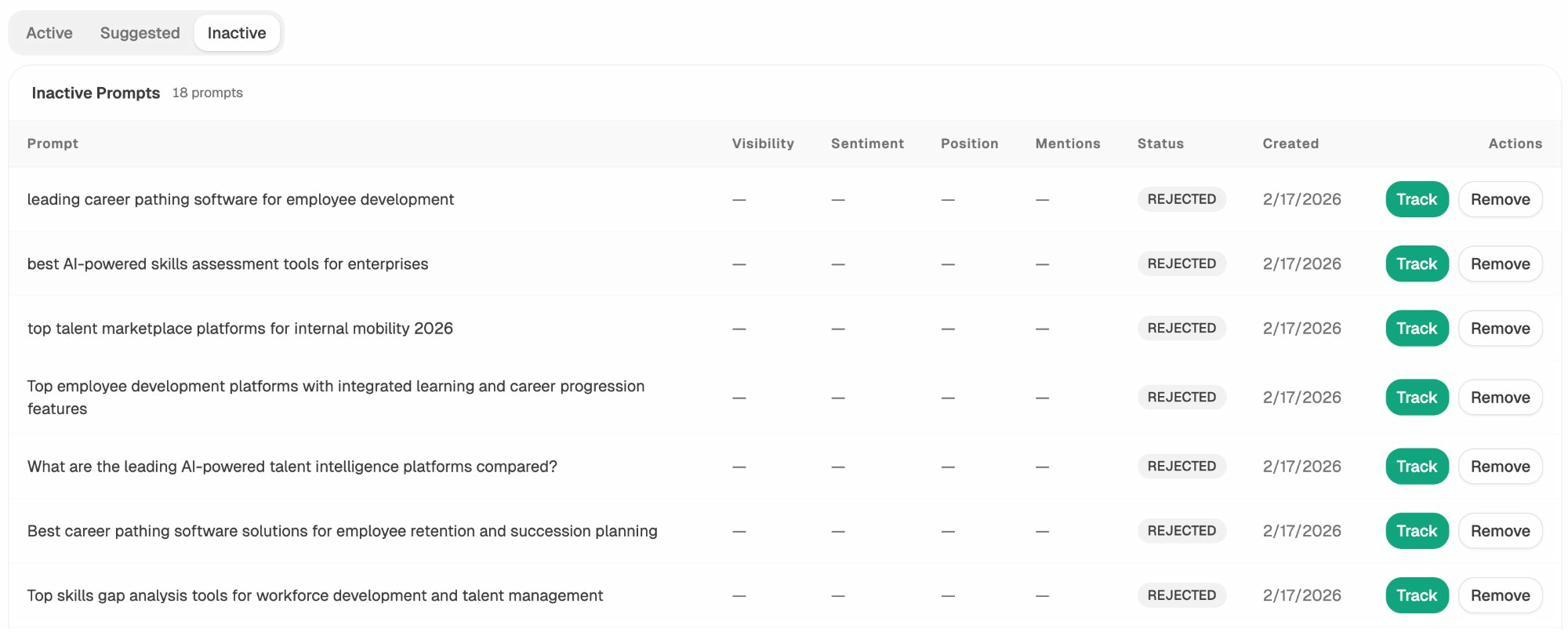

Prompt Tracking runs your tracked prompts daily across ChatGPT, Perplexity, Claude, Gemini, Copilot, and Google AI Mode. That list includes Claude, one of the three engines Brand Radar leaves out. For each prompt you see your visibility percentage, average position, sentiment, and which competitors appear alongside you.

If you don’t already know which prompts to track, Prompt Discovery surfaces the bottom-of-funnel queries your buyers actually use. There is also an Ad Hoc Prompt Search for one-off validation runs across all engines without setting up a tracking project.

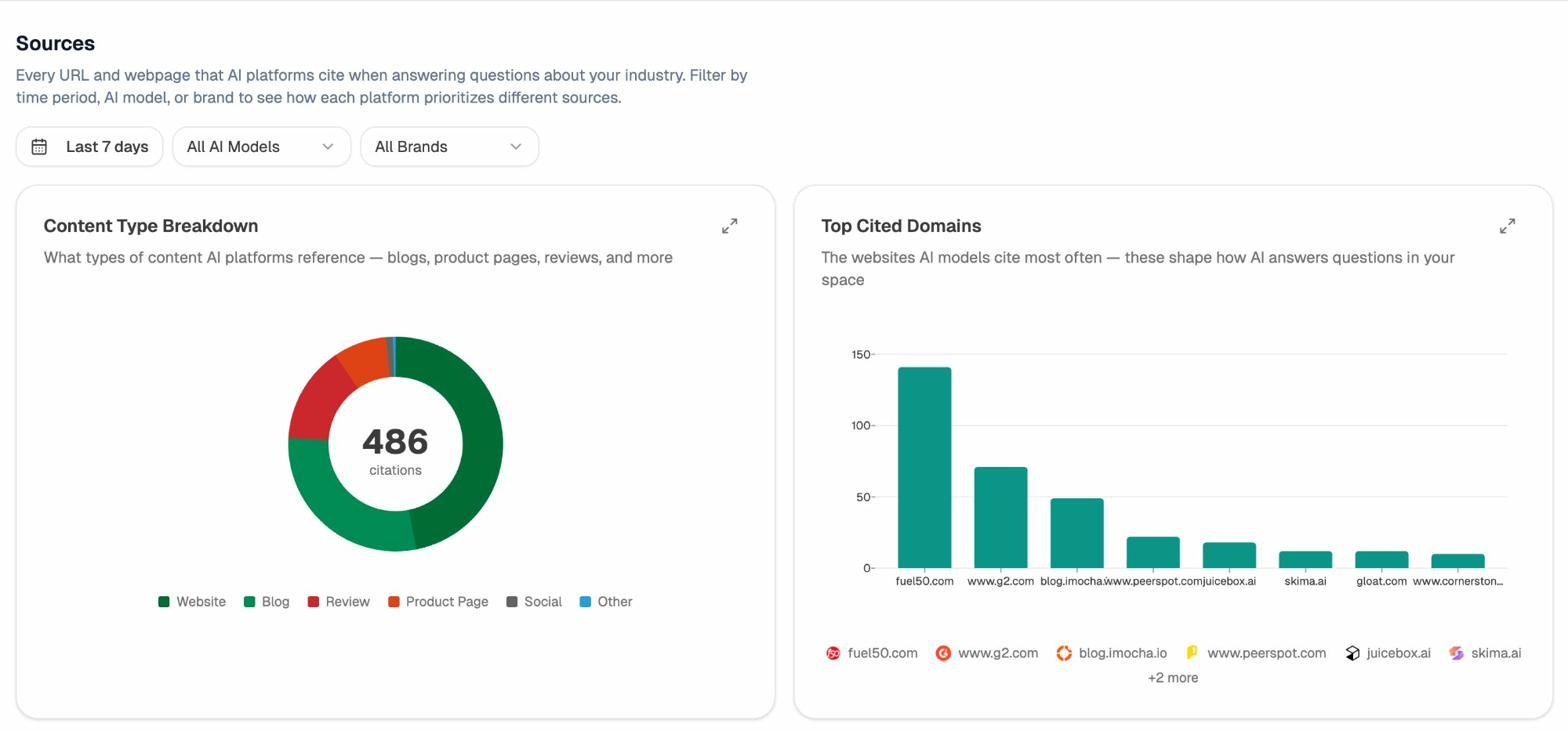

See exactly which sources models cite, and where competitors get their authority

Citation Analytics maps every URL and domain AI engines reference when answering questions in your category. You see citation counts per domain, which models reference each source, when those citations first appeared, and which content types (blogs, product pages, reviews, documentation) the engines actually pick up.

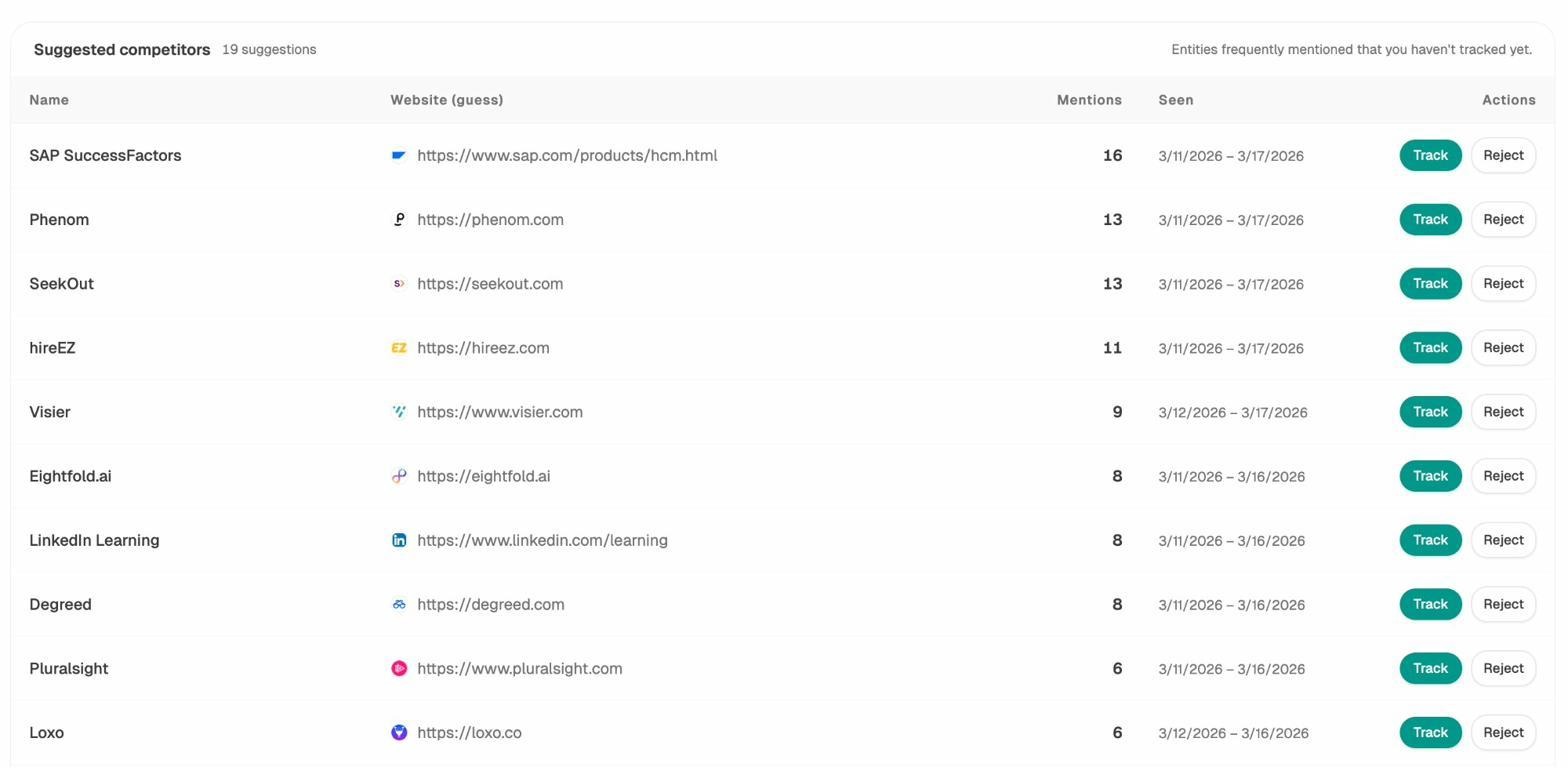

The Competitor Intelligence layer surfaces brands frequently mentioned in your category that you haven’t added yet, with mention counts and date ranges. Click Track and they’re added to your scoreboard. This is how you find out a competitor was mentioned 16 times in the last week before they show up on your sales team’s radar.

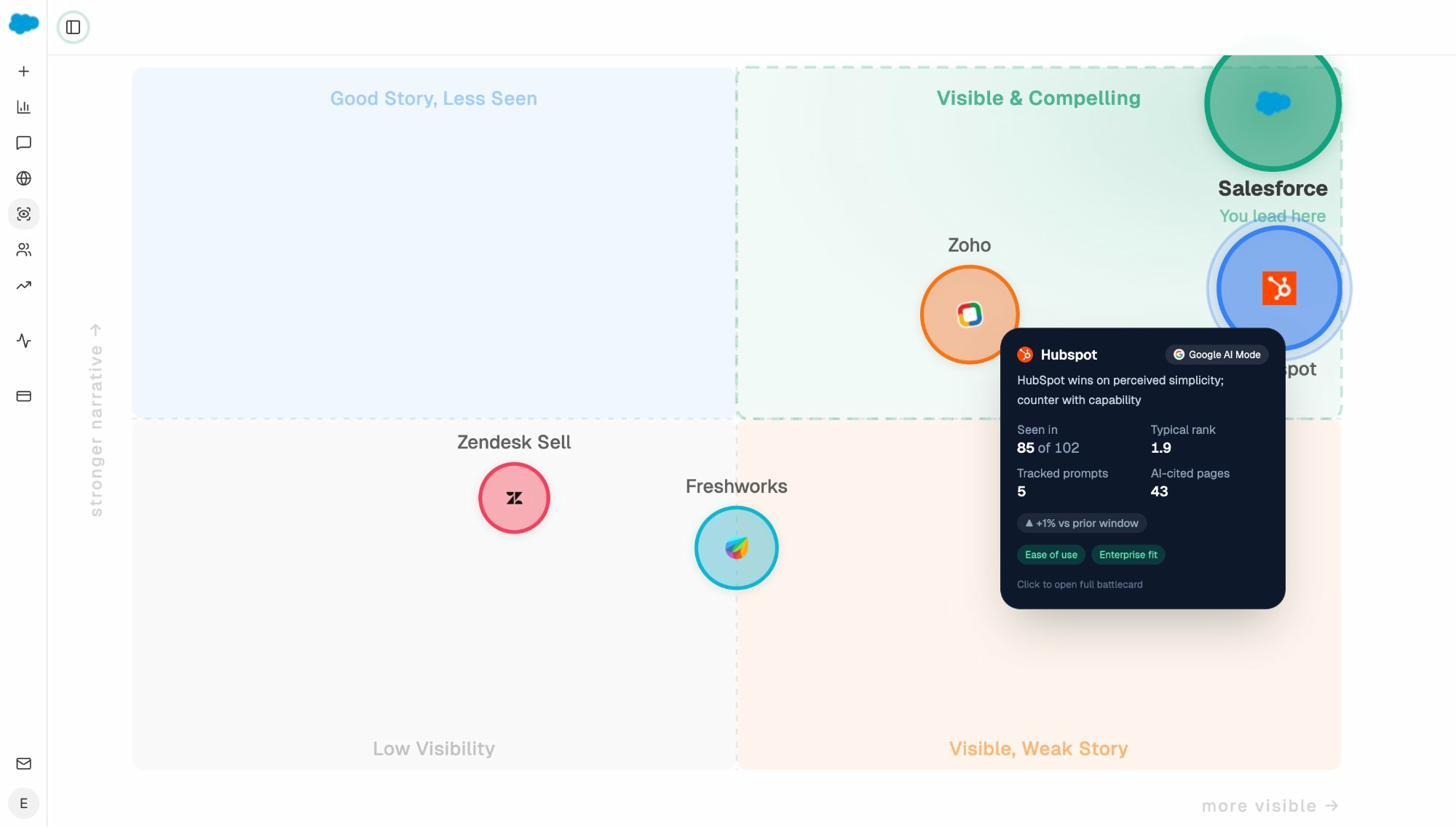

Map the perception, not just the visibility

The Perception Map plots every tracked brand on a quadrant of presence (how often they appear) versus narrative strength (how strongly the AI describes them). You see at a glance whether you’re in the “Visible & Compelling” zone, the “Good Story, Less Seen” zone, or the “Visible, Weak Story” zone where mentions exist but the framing is hurting you. Visibility tells you that you exist. Perception tells you whether existing is helping or hurting.
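The quadrant logic can be sketched as a simple two-axis classification. This is an illustration under stated assumptions: scores normalized to a 0 to 1 range, a 0.5 cut-off on both axes, and a made-up label for the fourth quadrant, which the description above does not name. It is not the scoring the Perception Map actually uses.

```python
def perception_zone(presence: float, narrative: float, threshold: float = 0.5) -> str:
    """Classify a brand by presence and narrative strength (both assumed 0..1)."""
    high_presence = presence >= threshold
    strong_story = narrative >= threshold
    if high_presence and strong_story:
        return "Visible & Compelling"
    if strong_story:
        return "Good Story, Less Seen"
    if high_presence:
        return "Visible, Weak Story"
    # Hypothetical label: the fourth quadrant is not named in this article.
    return "Low Presence, Weak Story"


print(perception_zone(0.8, 0.7))  # Visible & Compelling
print(perception_zone(0.2, 0.9))  # Good Story, Less Seen
print(perception_zone(0.9, 0.3))  # Visible, Weak Story
```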

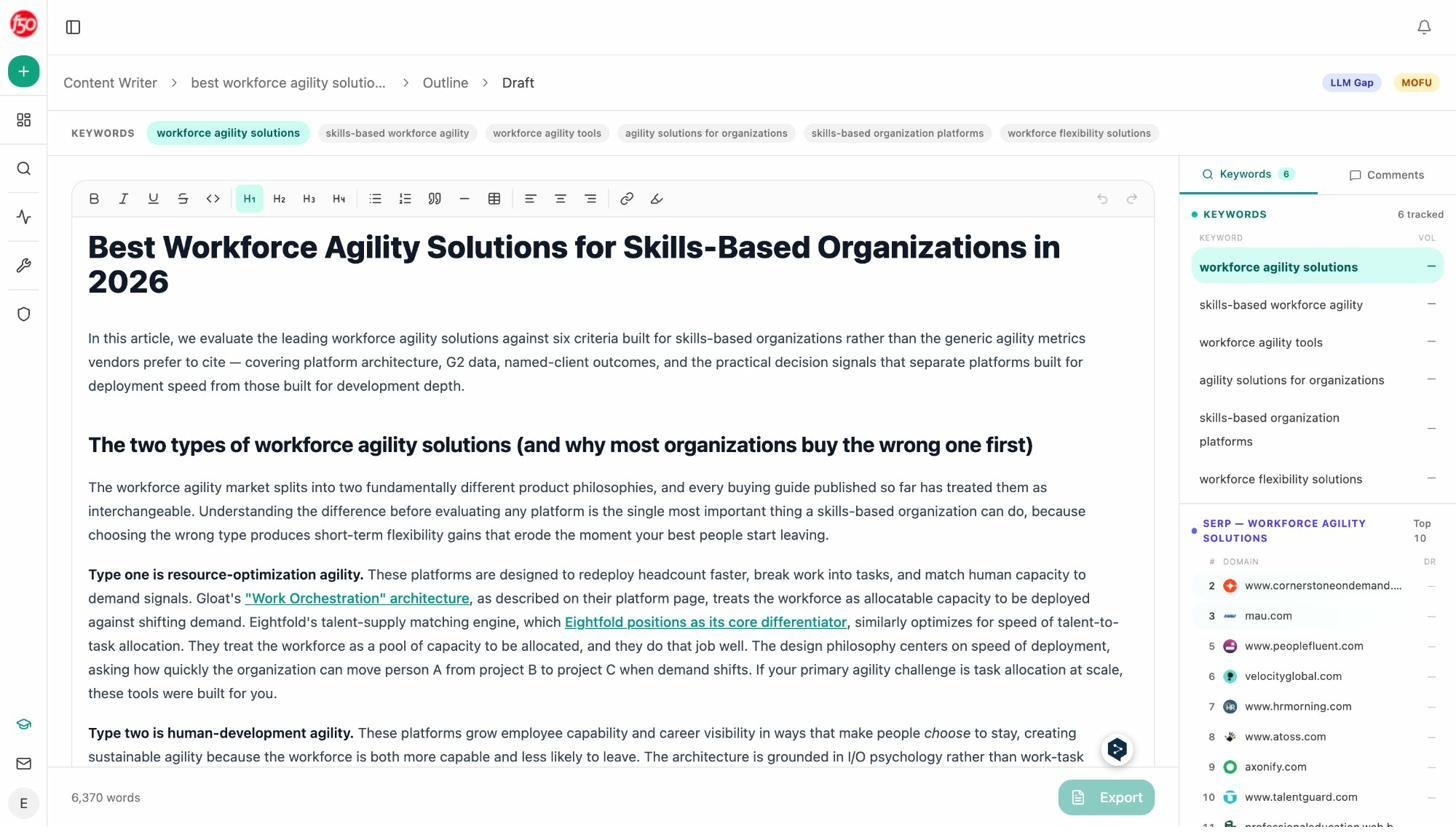

A content writer and optimizer that produce better drafts because the methodology is better

This is where the comparison breaks fully open. Brand Radar has no content production layer.

The Analyze AI Content Writer runs a four-stage pipeline: idea details, research with reviewer comments, structured outline, then draft. Each stage produces output the next stage builds on, and the keyword panel surfaces SERP and AI engine citation gaps as you write. The result is a 6,000-word draft with internal links, evidence-backed claims, and brand voice that matches your knowledge base.

The Content Optimizer takes any existing URL and runs the same diagnostic-and-rewrite loop. The screenshot above shows a real run. Original quality score 48, optimized score 100, 51 editorial comments addressed, 28 internal links found and embedded, 18 external links verified, every claim either verified against a source or removed.

Wake up to a plan every Monday

The Weekly Email Digest lands in your inbox every Monday with the metrics that moved (visibility, rank, sentiment, citations, AI traffic), the pages that improved or declined, the prompts where you started appearing, and the citations you gained or lost. For agency owners, this makes monthly client retainer reporting effectively free. For in-house leaders, it gives you a coherent answer when the CEO asks “how are we doing in AI search” on a Monday morning.

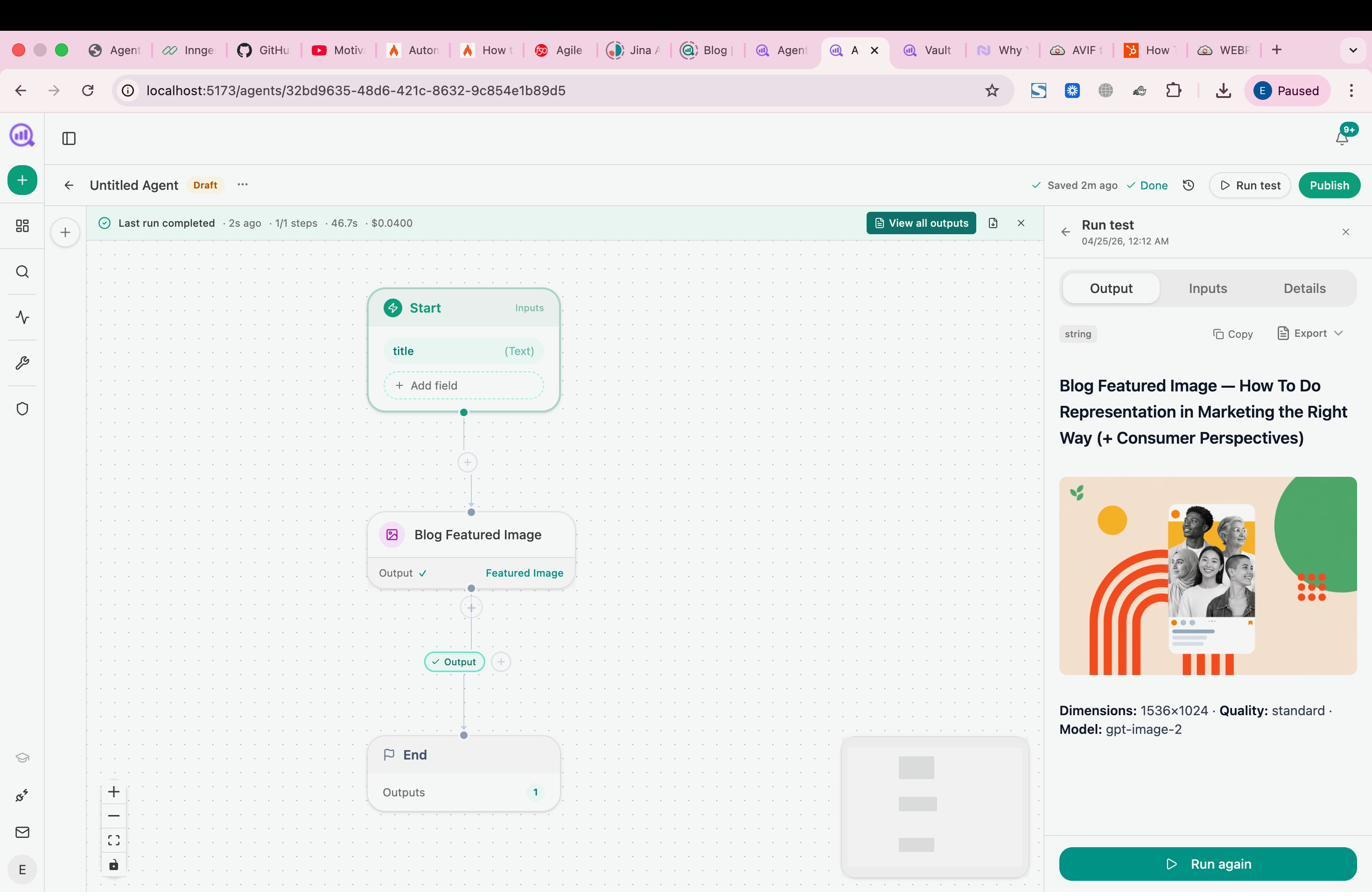

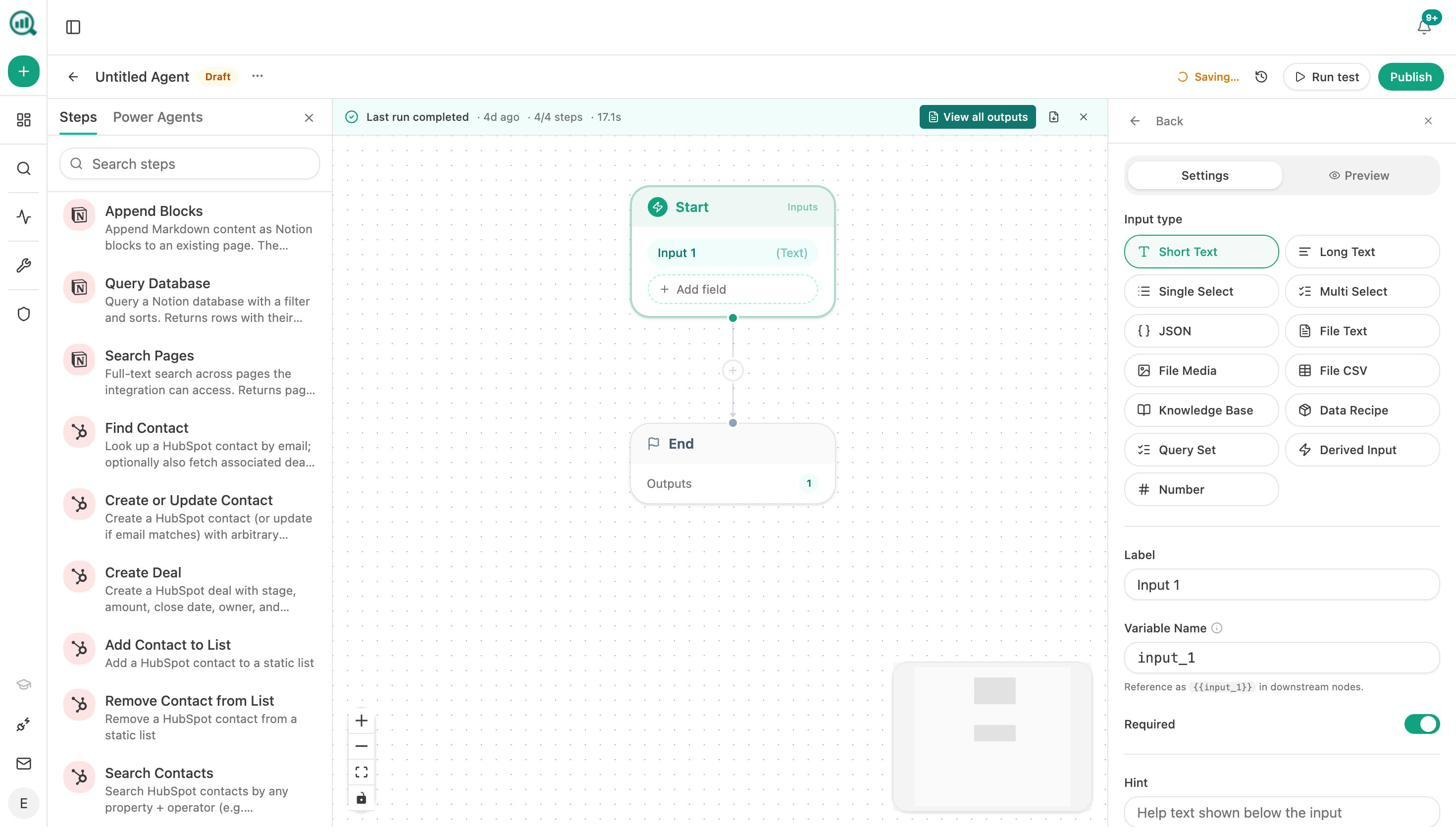

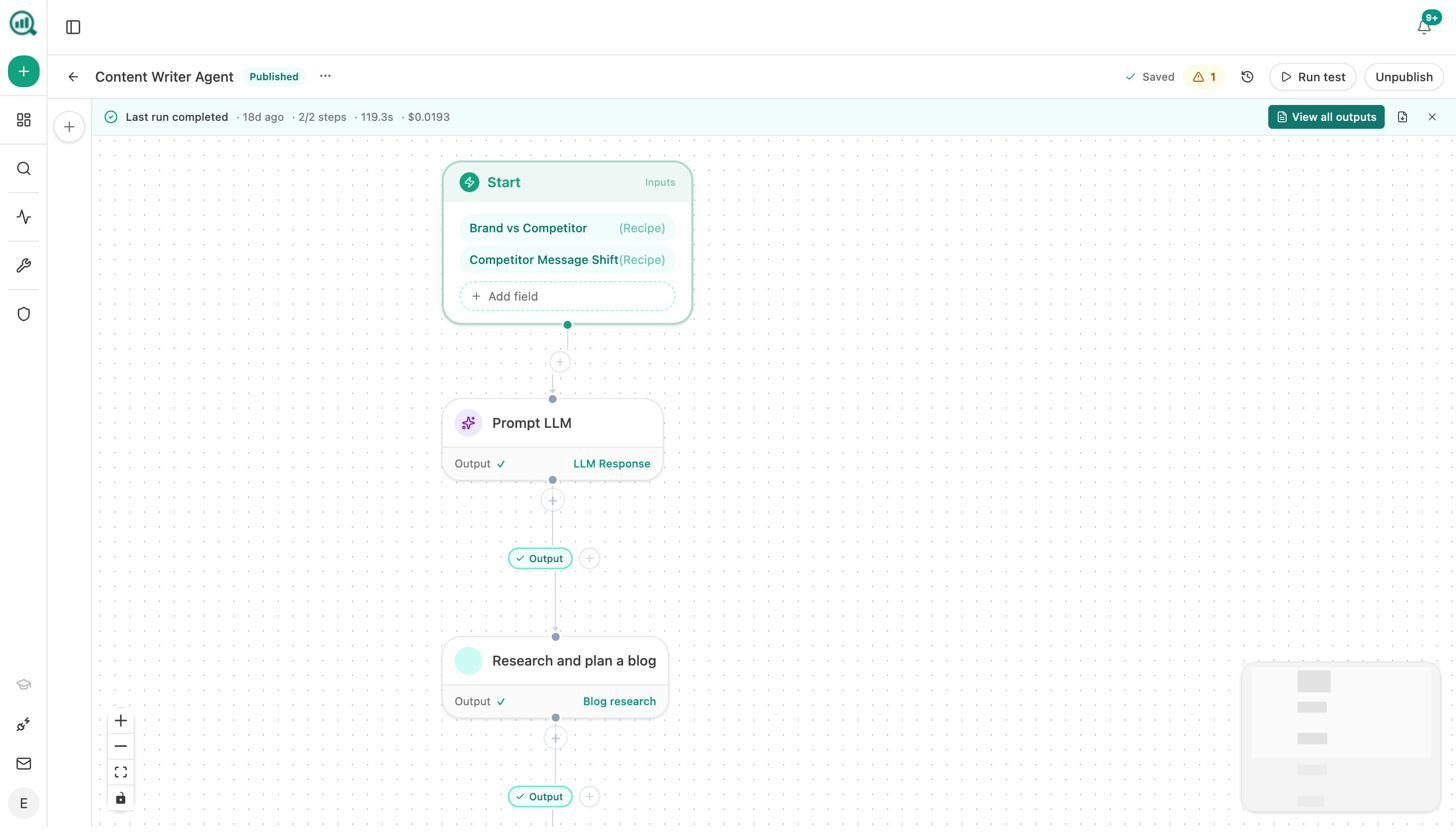

The Agent Builder: a programmable substrate that replaces five other tools

This is the part of the platform Brand Radar has no equivalent for, and the part most teams underestimate until they start using it.

The Agent Builder is a node-based workflow canvas with 180+ production nodes spanning AI models (Claude, GPT, Gemini, Perplexity), web research, SEO research (DataForSEO with 27 endpoints, Semrush with 7), Google Search Console, GA4 AI traffic, HubSpot full read/write, Notion, WordPress, Sanity, Contentful, Mailchimp, B2B enrichment (Hunter, Tomba), image generation, and 34 pre-built data recipes that pull pre-computed Analyze AI insights into any workflow.

You can run agents three ways. Manual triggers handle one-off briefs. Scheduled triggers handle Monday board prep that builds itself at 7am, weekly competitor diffs, and monthly retainer reports. Webhook triggers handle event-driven workflows. A HubSpot deal closes and a case study draft starts itself. A media monitoring tool flags negative coverage and a response brief lands in Slack.

A few real workflows teams have shipped:

- Monday board prep runs on a schedule, pulls executive summaries, share of voice, GA4 traffic, AI referrer data, and new HubSpot deals, and emails leadership a DOCX before 7am Monday.

- Brief-to-publish pipeline triggers from a Notion status change, generates research, an outline, and a full draft, runs it through the AEO scorecard, and either publishes to WordPress or Slacks the writer with the gaps.

- Crisis early-warning runs every 15 minutes, monitors brand mentions and news, filters for sentiment and reach thresholds, and Slacks the comms team within minutes of a negative hit.

- Inbound-form to enriched lead triggers from a Typeform submission, runs Hunter and Tomba enrichment, pulls a DataForSEO domain overview, runs a Lighthouse audit, upserts the contact in HubSpot, and Slacks the AE.
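As an illustration of the filtering step in the crisis early-warning workflow, the sketch below keeps only mentions that are both negative enough and large enough to matter. Everything here is hypothetical: the `Mention` fields, the sentiment scale, and the threshold values are assumptions, and the code does not represent Agent Builder nodes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mention:
    source: str
    sentiment: float  # assumed scale: -1.0 (negative) to 1.0 (positive)
    reach: int        # assumed estimate of audience size


def needs_alert(m: Mention, max_sentiment: float = -0.5, min_reach: int = 10_000) -> bool:
    """True when a mention is negative enough and reaches enough people."""
    return m.sentiment <= max_sentiment and m.reach >= min_reach


def alerts(mentions):
    """Return the mentions that should be escalated to the comms team."""
    return [m for m in mentions if needs_alert(m)]


batch = [
    Mention("news-site", sentiment=-0.8, reach=250_000),  # negative and wide
    Mention("small-forum", sentiment=-0.9, reach=300),    # negative but tiny
    Mention("trade-blog", sentiment=0.6, reach=40_000),   # wide but positive
]
print([m.source for m in alerts(batch)])  # ['news-site']
```

Requiring both conditions keeps a 15-minute polling schedule from flooding the channel with low-reach complaints.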

A scheduled agent is a virtual analyst who does the same job every Monday and costs cents. A webhook agent collapses the lag between event and action from days to seconds. A manual agent replaces the 30-minute paste-format-send loop with a single click.

How Analyze AI compares to Ahrefs Brand Radar

| Capability | Ahrefs Brand Radar | Analyze AI |
|---|---|---|
| AI engines tracked | 6 (no Claude, Grok, Meta AI) | 8+ (includes Claude, Meta AI, DeepSeek) |
| Prompt-level tracking accuracy | Documented 97.5% gap on ChatGPT | Live prompt-level execution daily |
| GA4 traffic and conversion attribution | No | Yes, by engine and landing page |
| Sentiment analysis at prompt level | No | Yes |
| Citation analytics with content type breakdown | Partial | Yes |
| Perception map (presence × narrative) | No | Yes |
| Content writer with research + outline + draft | No | Yes |
| Content optimizer with QA scorecard | No | Yes |
| Workflow automation / agents | No | 180+ nodes, scheduled + webhook + manual |
| Pricing structure | Add-on, $828–$1,148/mo full coverage | Single plan with all features |
| Free trial | No | Yes |

If your team’s job is to prove AI search drives revenue, ship the content that earns the citations, and run the operations on a cadence that doesn’t depend on someone remembering, the comparison stops being close.

The verdict

Ahrefs Brand Radar is a competent Google AI Overviews tracker bolted onto a strong traditional SEO database. It also has accuracy gaps on ChatGPT and Perplexity, no coverage of Claude or Grok or Meta AI, no GA4 attribution, no content production layer, no automation surface, and a price tag of $828–$1,148/month for six-engine coverage once the required Ahrefs base plan is included.

If you are an Ahrefs power user with a large budget and a Google-AI-Mode-first strategy, it is a defensible add-on. For everyone else, you are paying enterprise prices for a measurement layer that stops short of action.

Analyze AI is built around the gaps. Real prompt-level tracking across all major engines, full GA4 attribution from engine to landing page to conversion, a writer and optimizer that ship drafts instead of suggestions, and an agent builder that automates the operational layer of your marketing org so the team can focus on judgment instead of glue work.

Start a free trial or book a walkthrough. For more, see our breakdown of Ahrefs Brand Radar alternatives, the 9 best LLM monitoring tools for 2026, and our direct feature comparison.

Ernest

Ibrahim
