SE Ranking’s AI Visibility Tracker does one thing well. It shows you whether your brand appears in Google AI Overviews and AI Mode for your tracked keywords. But if you need to track ChatGPT, Perplexity, Claude, and Copilot today, tie visibility to actual sessions and conversions, or automate the content work that moves the metric, you will run into limits fast.

The add-on pricing stacks up quickly too. SE Ranking’s base SEO suite starts at $52/month billed annually, but once you add meaningful AI prompt coverage, you are looking at $150 to $240+ per month. Smaller tiers cap you at around 200 prompts. Track multiple competitors across multiple engines and you hit that ceiling within weeks.

In this article, you’ll learn which SE Ranking AI Visibility Tracker alternatives actually connect AI visibility to revenue, which ones stop at mention counting, and how to pick the right tool based on your team size, budget, and what you need beyond tracking.

Quick Comparison

|

Tool |

Best for |

Engines today |

Ties to revenue? |

Content/action layer |

Price range |

|---|---|---|---|---|---|

|

Analyze AI |

Teams that need visibility, content, and ops in one stack |

ChatGPT, Perplexity, Claude, Copilot, Gemini |

Yes (GA4 attribution) |

Writer, Optimizer, 180+ node Agent Builder |

Mid-tier |

|

Peec AI |

Multi-engine visibility with clean dashboards |

ChatGPT, Perplexity, Gemini, Claude, AI Overviews |

No |

None |

~$99/mo |

|

Rankability |

Agencies that need white-label AI reporting |

ChatGPT, Perplexity, Claude, Gemini |

No |

Via Rankability SEO suite |

~$79/mo+ |

|

LLMrefs |

Quick citation checks without setup |

ChatGPT, Perplexity, Claude, Gemini |

No |

None |

Low |

|

Otterly AI |

Brand sentiment and tone analysis |

ChatGPT, Perplexity, Gemini, AI Overviews |

No |

GEO Audit (diagnostic) |

Mid-tier |

|

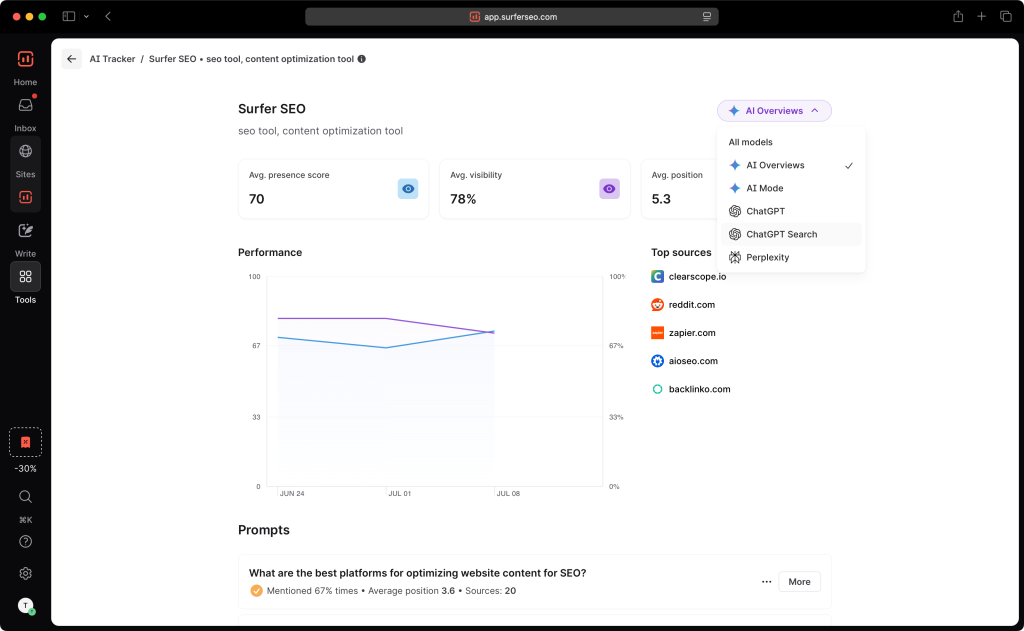

Surfer AI Tracker |

Teams already using Surfer SEO |

ChatGPT, Google AI Mode, Perplexity (expanding) |

No |

None (optimize via Surfer) |

Add-on by prompt count |

|

Profound |

Enterprise compliance and audit depth |

ChatGPT, Perplexity, Gemini, Copilot, AI Overviews |

No |

Emerging “Actions” |

~$499/mo+ |

The biggest difference between these tools is not engine coverage. Most now track four or five engines. The real split is what happens after you see the data. Most tools show you a visibility score and stop. A few connect that score to traffic, content decisions, and actual pipeline.

1. Analyze AI: Best Overall SE Ranking Alternative for Teams That Need More Than Tracking

Most AI visibility tools show you that your brand appeared in a ChatGPT response. Then they leave you to figure out what to do about it.

You get a visibility percentage, maybe a sentiment score, but no connection to what happened after the mention. Did anyone click? Did they convert? Was it worth the content investment?

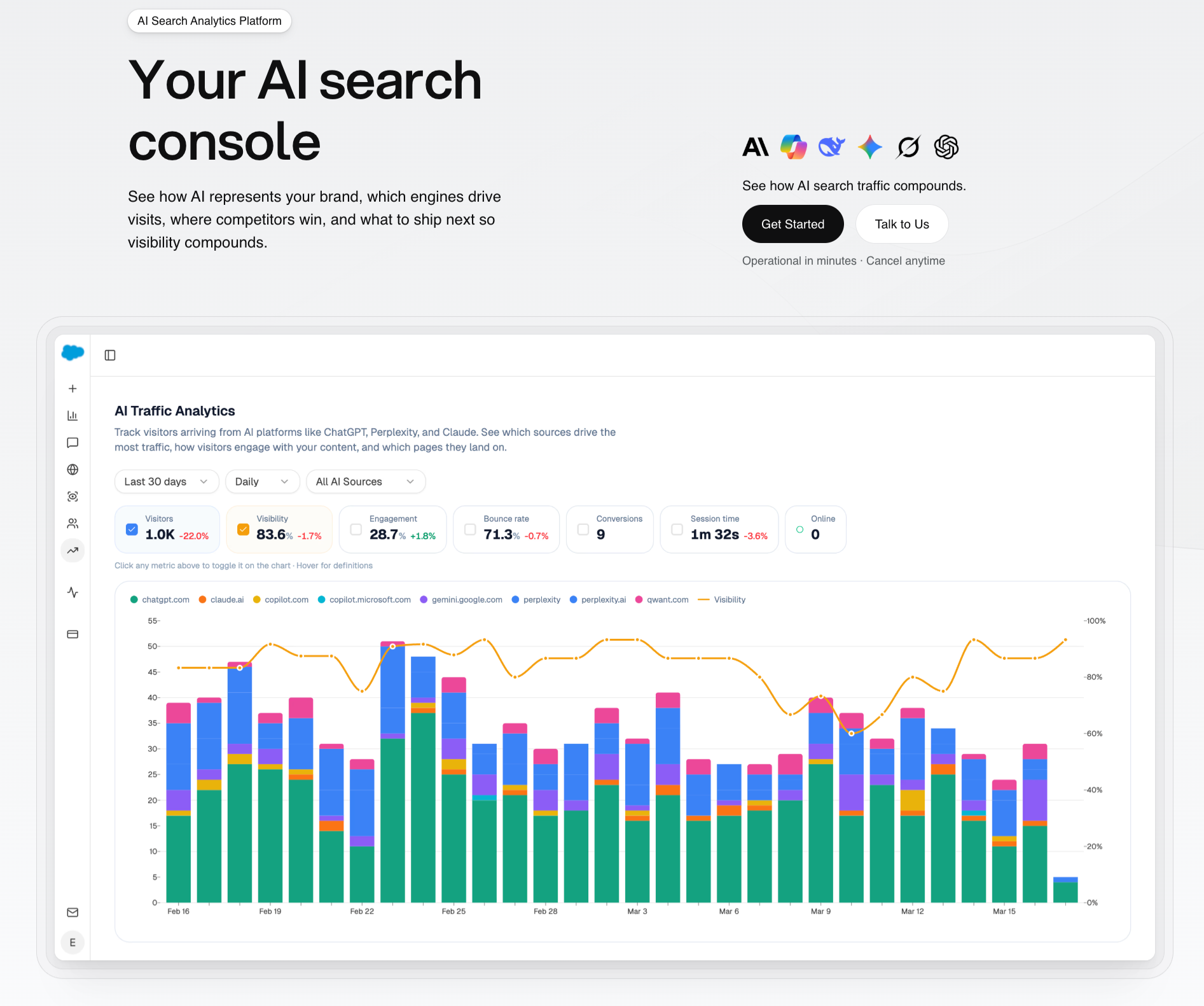

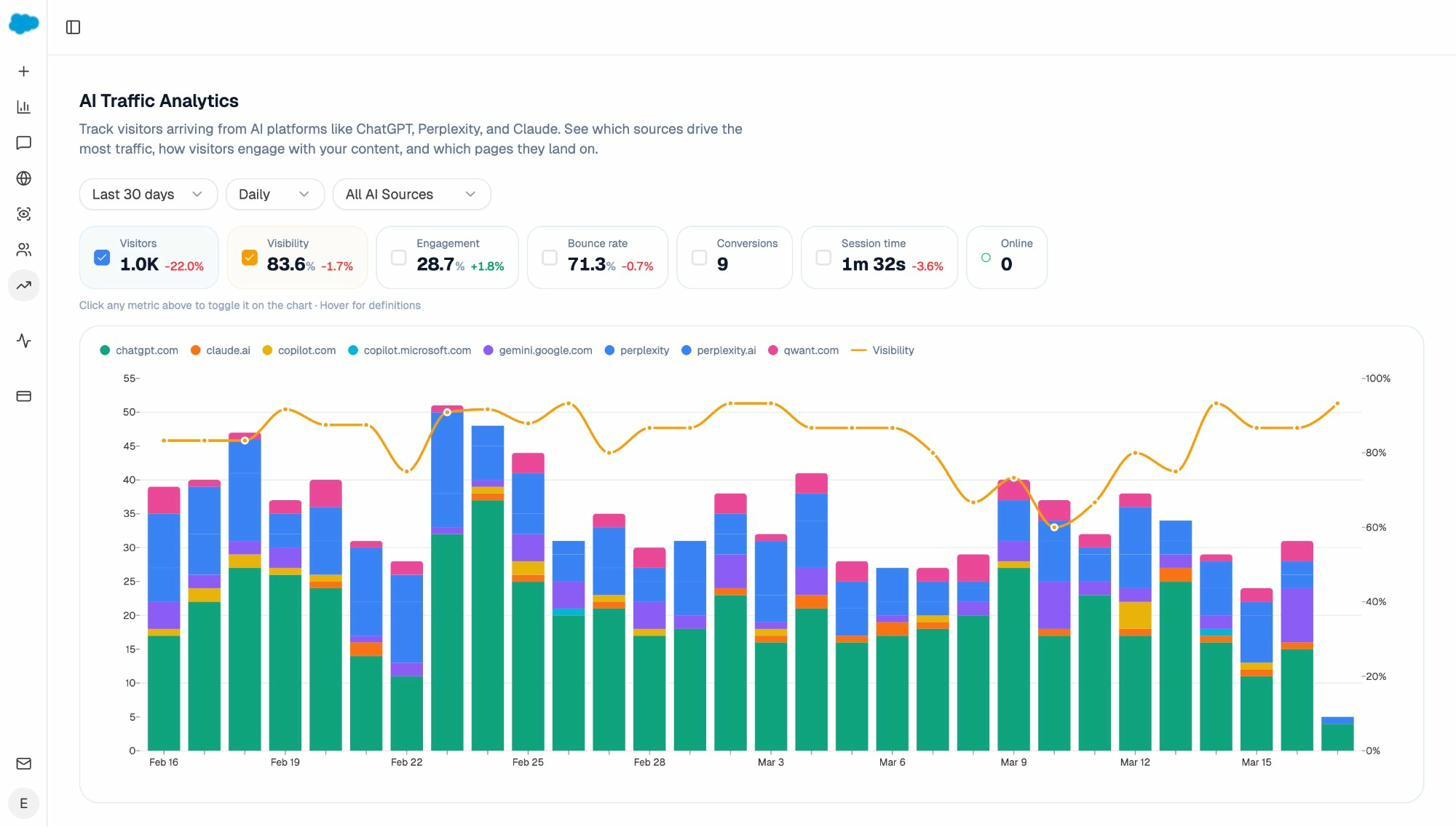

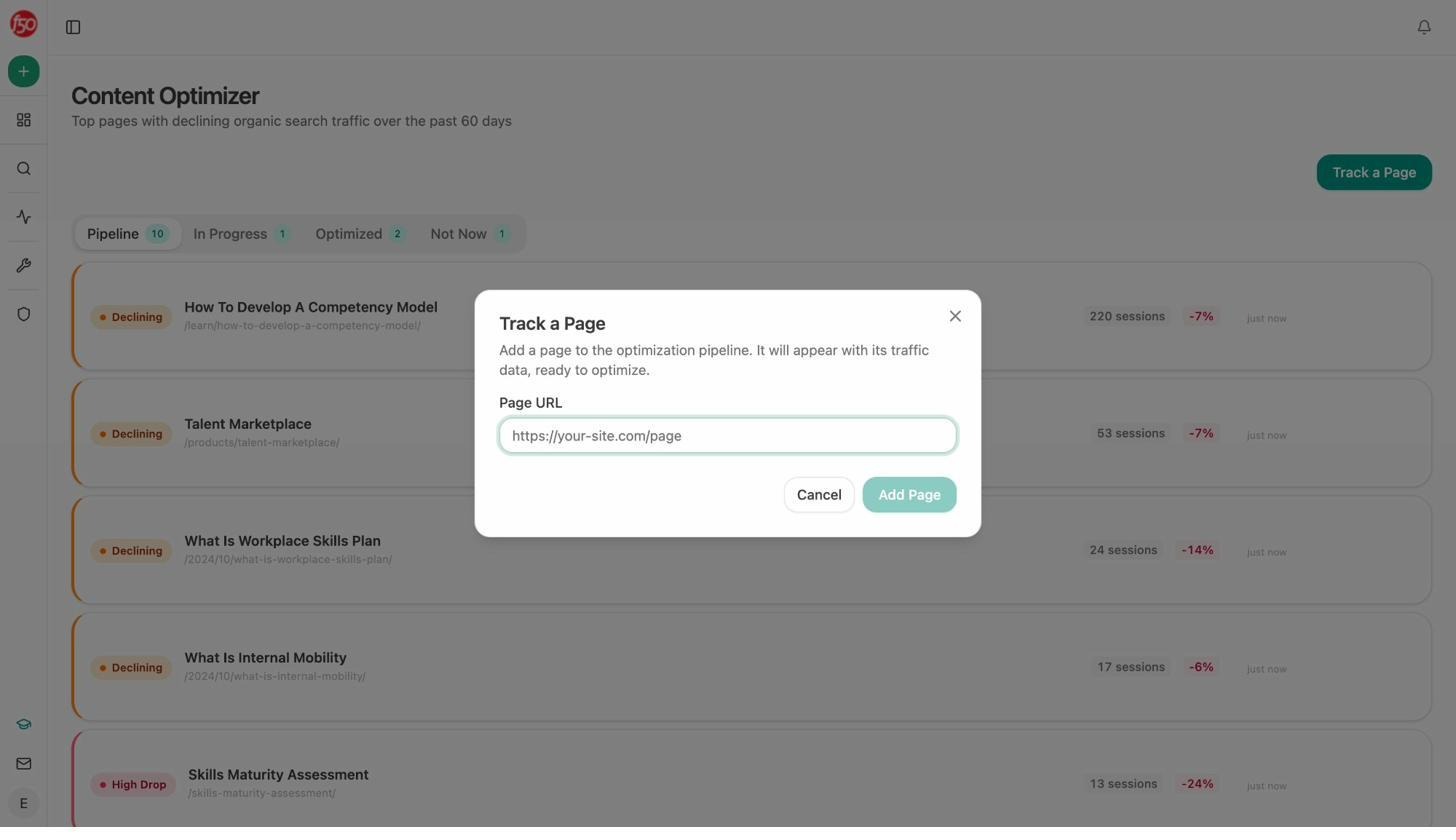

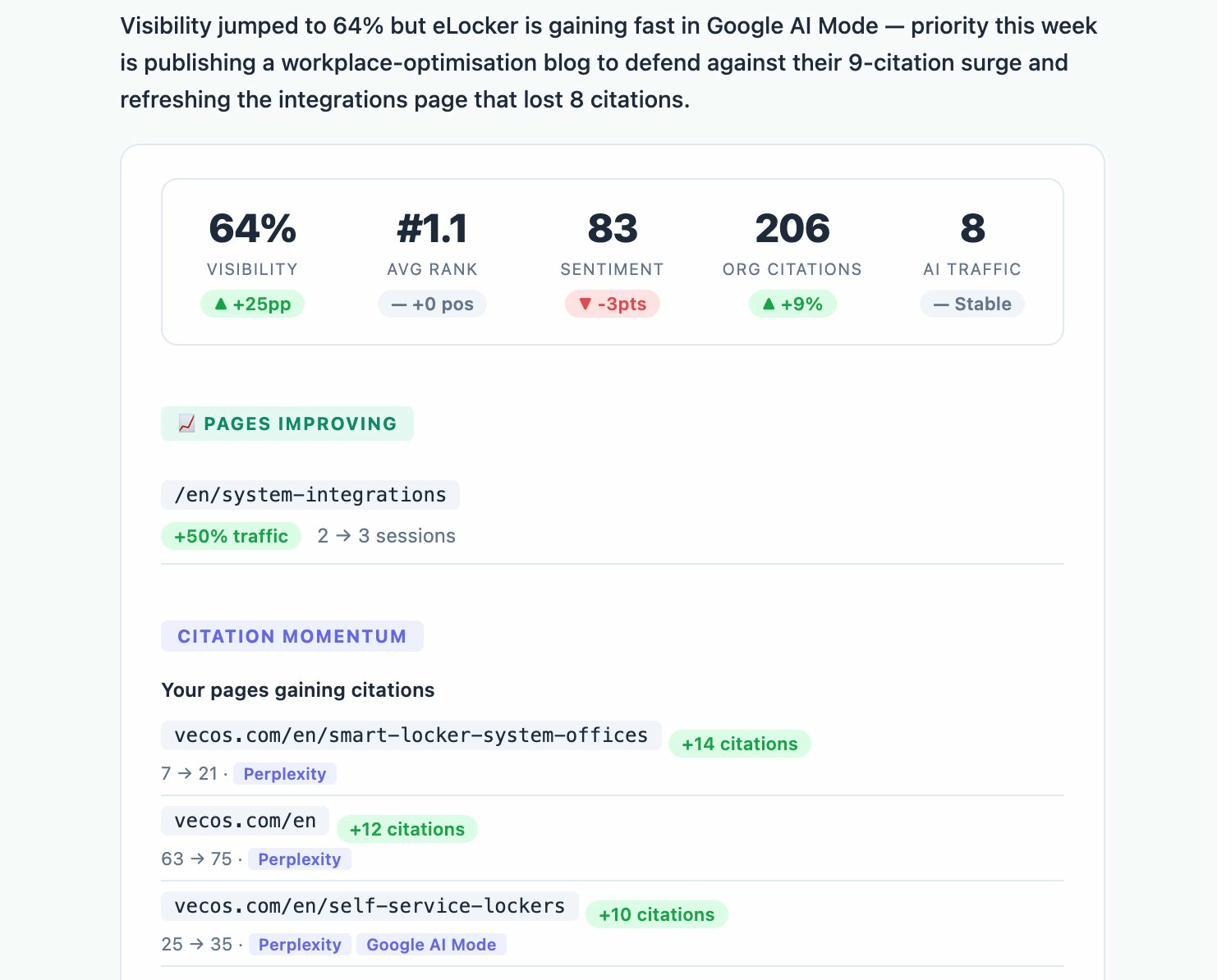

Analyze AI does not stop at tracking. It is the agentic platform for SEO, AEO, content, and GTM ops that connects AI visibility data to GA4 sessions, landing pages, and conversions. And it gives you the tools to act on what you find.

See which AI engines send traffic and which pages convert it

Analyze AI attributes every session from answer engines to its specific source. You see session volume by engine, trends over time, and what percentage of your total traffic comes from AI referrals.

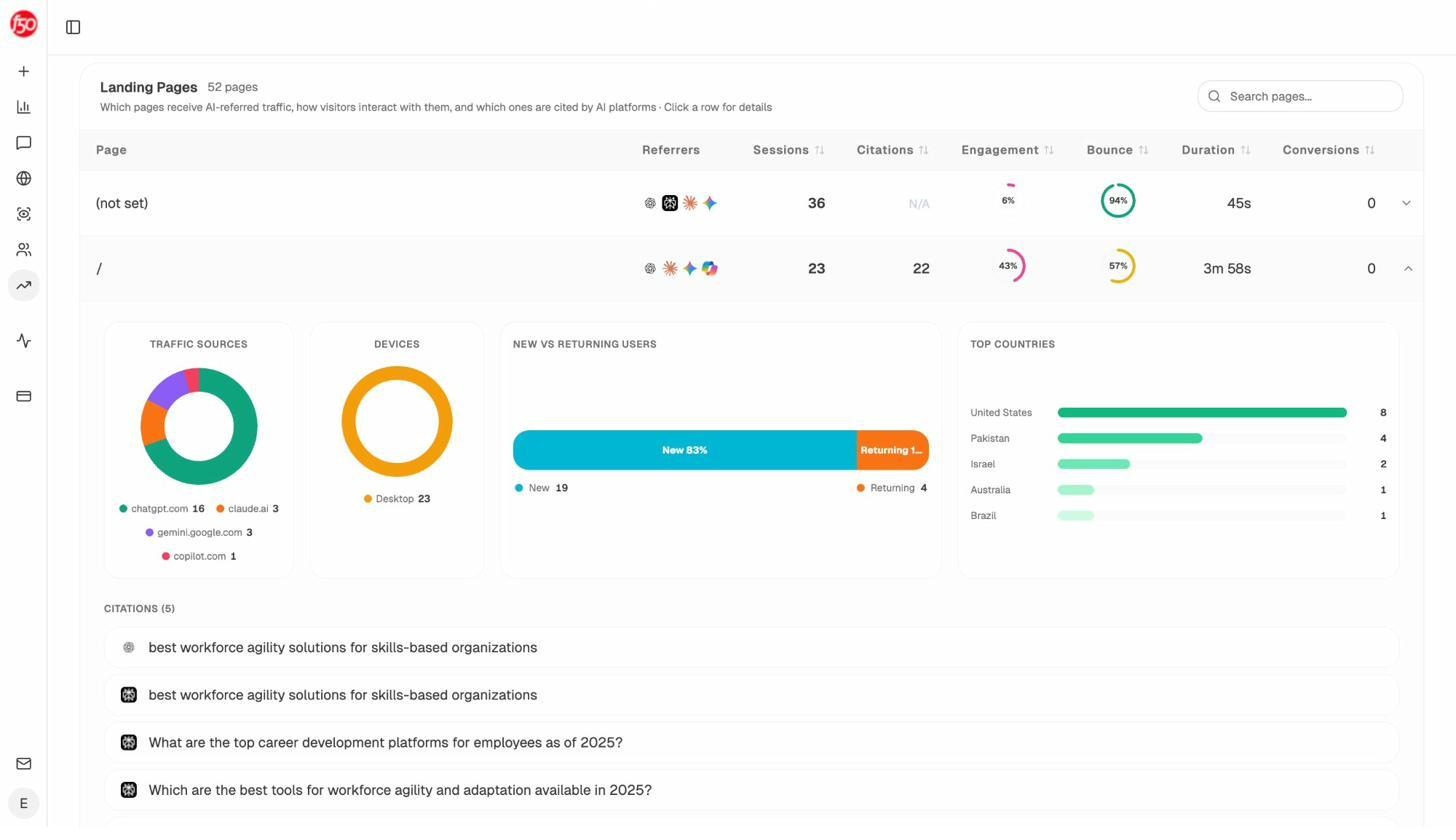

When ChatGPT sends 248 sessions and Perplexity sends 142, you know exactly where to invest. The AI Traffic Analytics dashboard also shows which landing pages receive that traffic, what conversion events those visits trigger, and which pages deserve more optimization.
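To make the idea of per-engine attribution concrete, here is a minimal sketch of how sessions could be grouped by AI referrer. It is not Analyze AI's implementation. The referrer hostnames, the `sessions` record shape, and the function names are all assumptions for illustration, so check them against your own analytics export.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames per answer engine; verify against your own data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
}


def engine_for(referrer_url):
    """Return the engine name for a referrer URL, or None if it is not a known AI source."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)


def sessions_by_engine(sessions):
    """Count sessions per engine and compute the AI share of all sessions.

    `sessions` is an iterable of dicts with a "referrer" key (hypothetical shape).
    """
    counts = Counter()
    total = 0
    for session in sessions:
        total += 1
        engine = engine_for(session.get("referrer") or "")
        if engine:
            counts[engine] += 1
    ai_share = sum(counts.values()) / total if total else 0.0
    return counts, ai_share
```

Joining these counts with landing page and conversion events from the same export would give the page-level view described above.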

This is the gap most trackers leave open. They tell you “your brand was mentioned.” Analyze AI tells you which mentions drove revenue and which ones drove nothing.

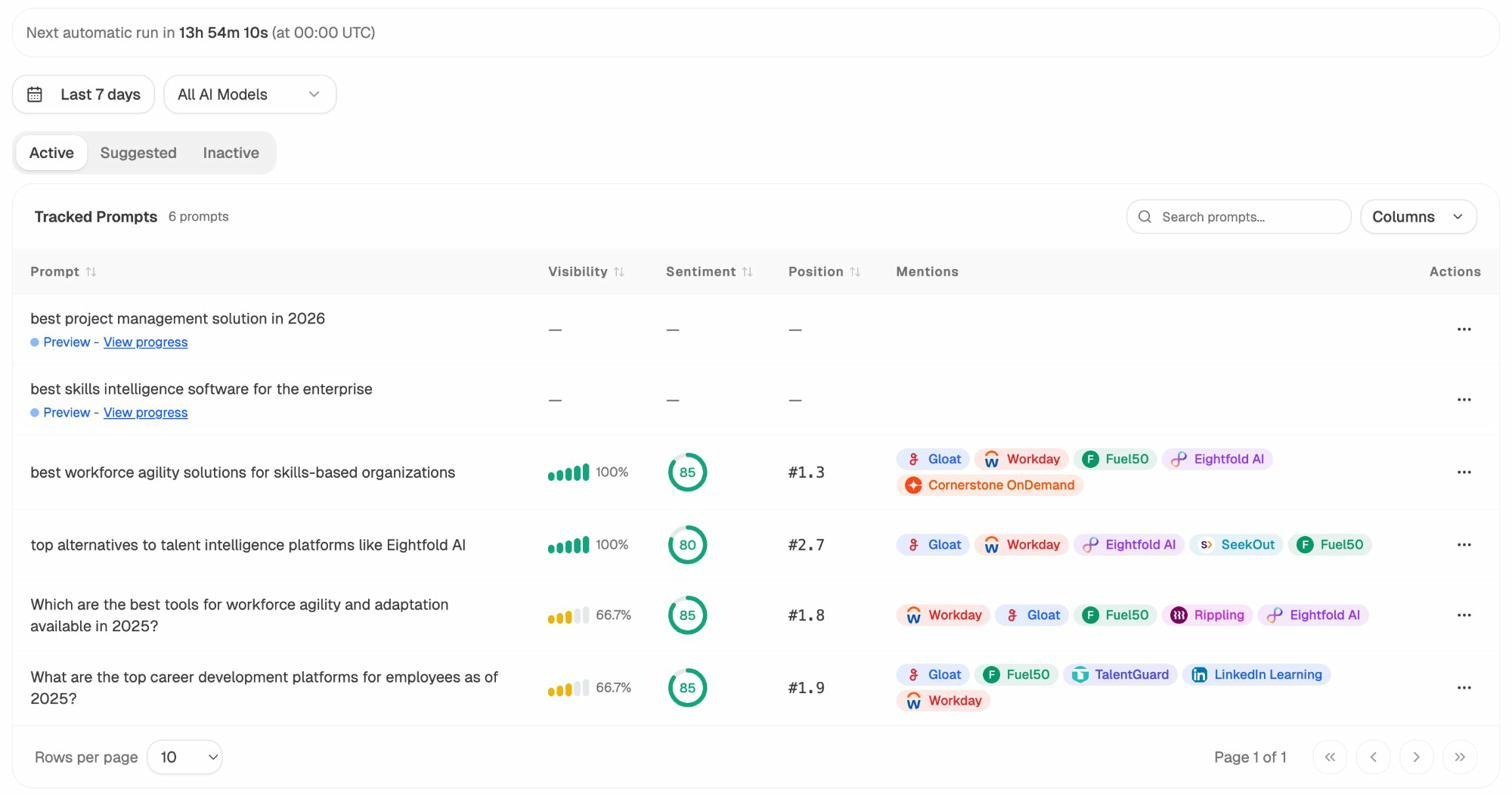

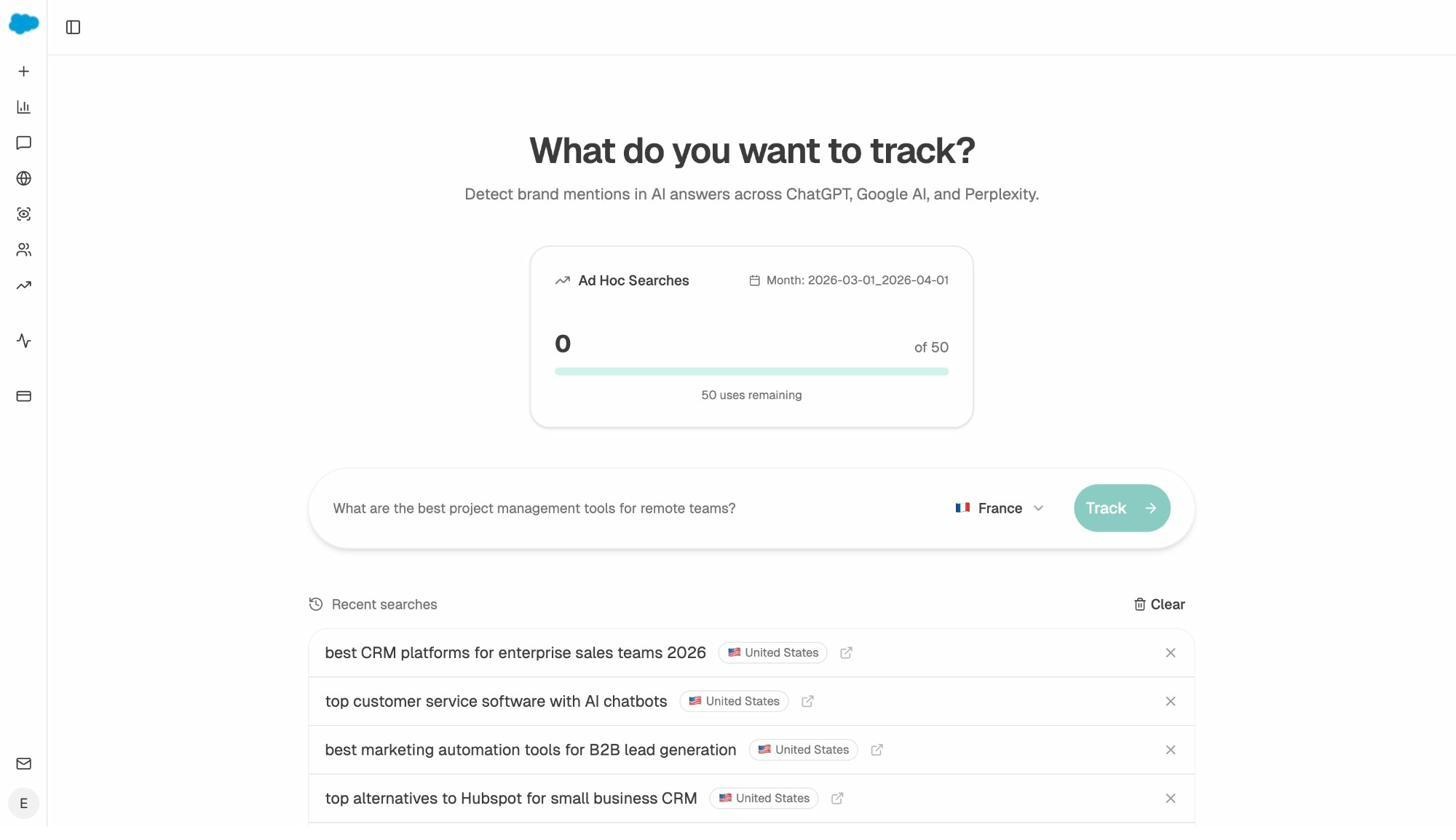

Track prompts, sentiment, and competitive position across every engine

You can monitor specific prompts across ChatGPT, Perplexity, Claude, Copilot, and Gemini. For each prompt, you see your brand’s visibility percentage, position relative to competitors, and sentiment score.

You can also see which competitors appear alongside you, how your position changes over time, and whether sentiment is improving or declining. The Competitor Intelligence dashboard makes competitive gaps obvious so you can prioritize where to focus.
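For readers who want to sanity-check numbers like these by hand, the sketch below shows one plausible way to compute a visibility percentage and an average position from sampled answers. The definitions and the input shape are assumptions, since the exact formulas each tool uses are not published here.

```python
from statistics import mean


def visibility_stats(answers, brand):
    """Summarize how often and how early `brand` appears across sampled answers.

    `answers` is a list of lists. Each inner list holds the brands named in one
    AI answer, in order of appearance (hypothetical input shape).
    """
    # 1-based position of the brand in every answer that mentions it.
    positions = [answer.index(brand) + 1 for answer in answers if brand in answer]
    visibility_pct = 100 * len(positions) / len(answers) if answers else 0.0
    avg_position = mean(positions) if positions else None
    return visibility_pct, avg_position
```

Running the same function for each competitor on the same set of answers gives a simple side-by-side comparison.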

Not sure which prompts to track? Analyze AI’s prompt suggestion feature surfaces the actual bottom-of-funnel prompts your buyers are using across engines.

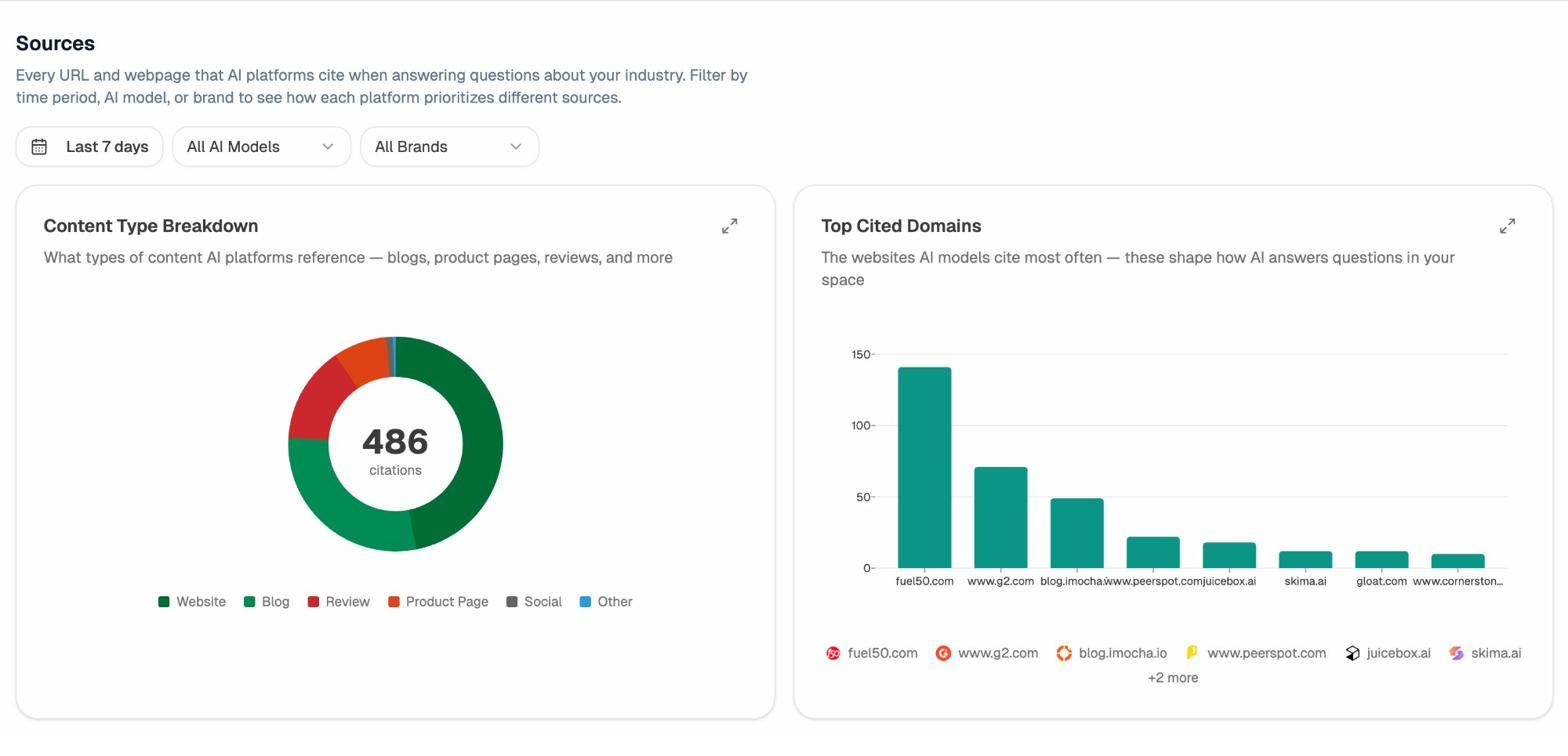

Audit which sources models trust

Citation Analytics reveals exactly which domains and URLs models cite when answering questions in your category. You see usage count per source, which models reference each domain, and when those citations first appeared.
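The three fields mentioned above (usage count, citing models, and first appearance) can be derived from raw citation records with a simple aggregation. The following is an illustrative sketch only; the record keys `url`, `model`, and `date` are hypothetical and do not reflect any tool's export format.

```python
from urllib.parse import urlparse


def summarize_citations(citations):
    """Aggregate citation records by domain.

    Each record is a dict with "url", "model", and "date" keys, where "date" is
    an ISO 8601 string such as "2025-01-15" (hypothetical record shape).
    """
    summary = {}
    for record in citations:
        domain = (urlparse(record["url"]).hostname or "").lower()
        if domain.startswith("www."):
            domain = domain[4:]
        entry = summary.setdefault(
            domain,
            {"count": 0, "models": set(), "first_seen": record["date"]},
        )
        entry["count"] += 1
        entry["models"].add(record["model"])
        # ISO 8601 date strings sort chronologically, so min() finds the earliest.
        entry["first_seen"] = min(entry["first_seen"], record["date"])
    return summary
```

Sorting the result by `count` surfaces the domains that appear most often in answers for your category.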

Instead of guessing where to build authority, you target the specific sources that shape AI answers in your space. You strengthen relationships with domains that models already trust and create content that fills gaps in their coverage.

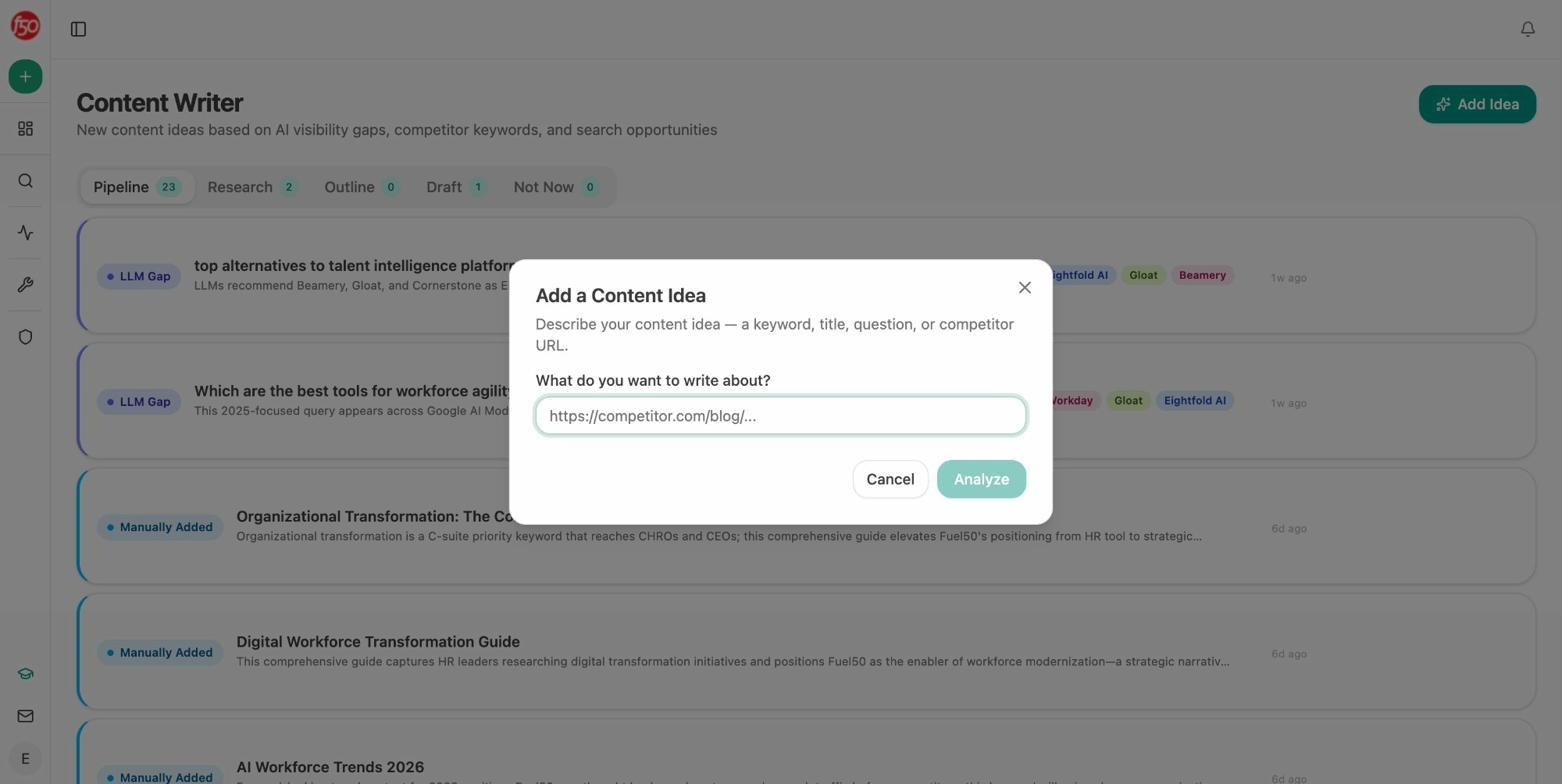

Write and optimize content that gets cited

This is where Analyze AI separates from every other tool on this list. The platform includes an AI Content Writer that takes you from idea to research to outline to draft with AI visibility gaps, competitor analysis, and editorial comments built into every step.

The AI Content Optimizer lets you paste any existing URL and get a content score, argument gaps, and line-by-line suggestions to make it visible to both search engines and AI models.

No other SE Ranking alternative on this list ships a complete content creation and optimization pipeline inside the same platform.

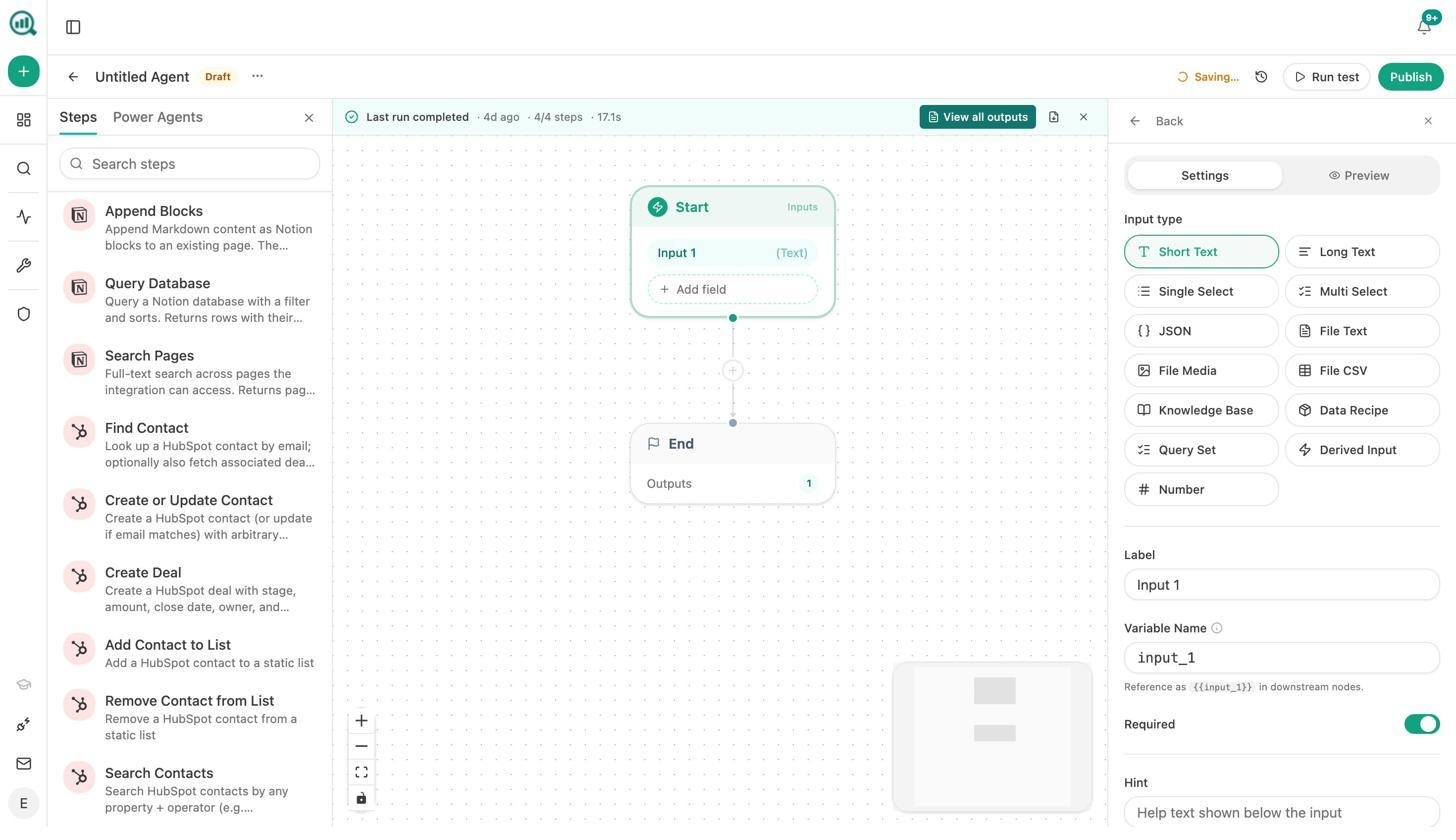

Automate everything with the Agent Builder

The Agent Builder is the feature that changes the math entirely. It comes with 180+ nodes, 34 pre-built data recipes, 13 input primitives, and three trigger modes (manual, scheduled, webhook). It connects directly to GA4, Google Search Console, DataForSEO, Semrush, HubSpot, Notion, WordPress, Slack, and every major LLM.

Here is what that means in practice. A CMO can set up a scheduled agent that pulls AI visibility data, GA4 traffic, competitive gaps, and new HubSpot deals every Monday at 7am, assembles an executive summary in brand voice, exports it to DOCX, and emails it to leadership. No analyst involved.

An agency can build a single agent that generates client briefing packs for every account on a loop. A content team can build a brief-to-publish pipeline where no piece goes live without passing an AEO quality gate. A PR team can get crisis early warnings every 15 minutes by combining brand mention monitoring with sentiment thresholds.

This is not just an automation layer. It is a programmable substrate with the same surface area as Zapier, Retool, and Make combined, but pre-wired to the SEO, content, and AI search data you are already paying for. You can build anything from a daily visibility regression alert to a full closed-won-to-case-study pipeline.
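As a rough illustration of the logic behind a sentiment-threshold alert like the crisis early warning described above, consider the sketch below. It is not an Agent Builder node; the function name, the score range, and the default thresholds are assumptions chosen for the example.

```python
def should_alert(mentions, min_mentions=5, sentiment_floor=-0.3):
    """Return True when recent mentions are numerous and negative enough.

    `mentions` is a list of sentiment scores in [-1, 1] collected during the
    latest polling window. The thresholds are illustrative defaults, not
    product settings.
    """
    if len(mentions) < min_mentions:
        # Too few mentions to call it a trend; avoid alerting on noise.
        return False
    average = sum(mentions) / len(mentions)
    return average <= sentiment_floor
```

Requiring a minimum mention count alongside the sentiment floor keeps a single harsh mention from paging the whole team.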

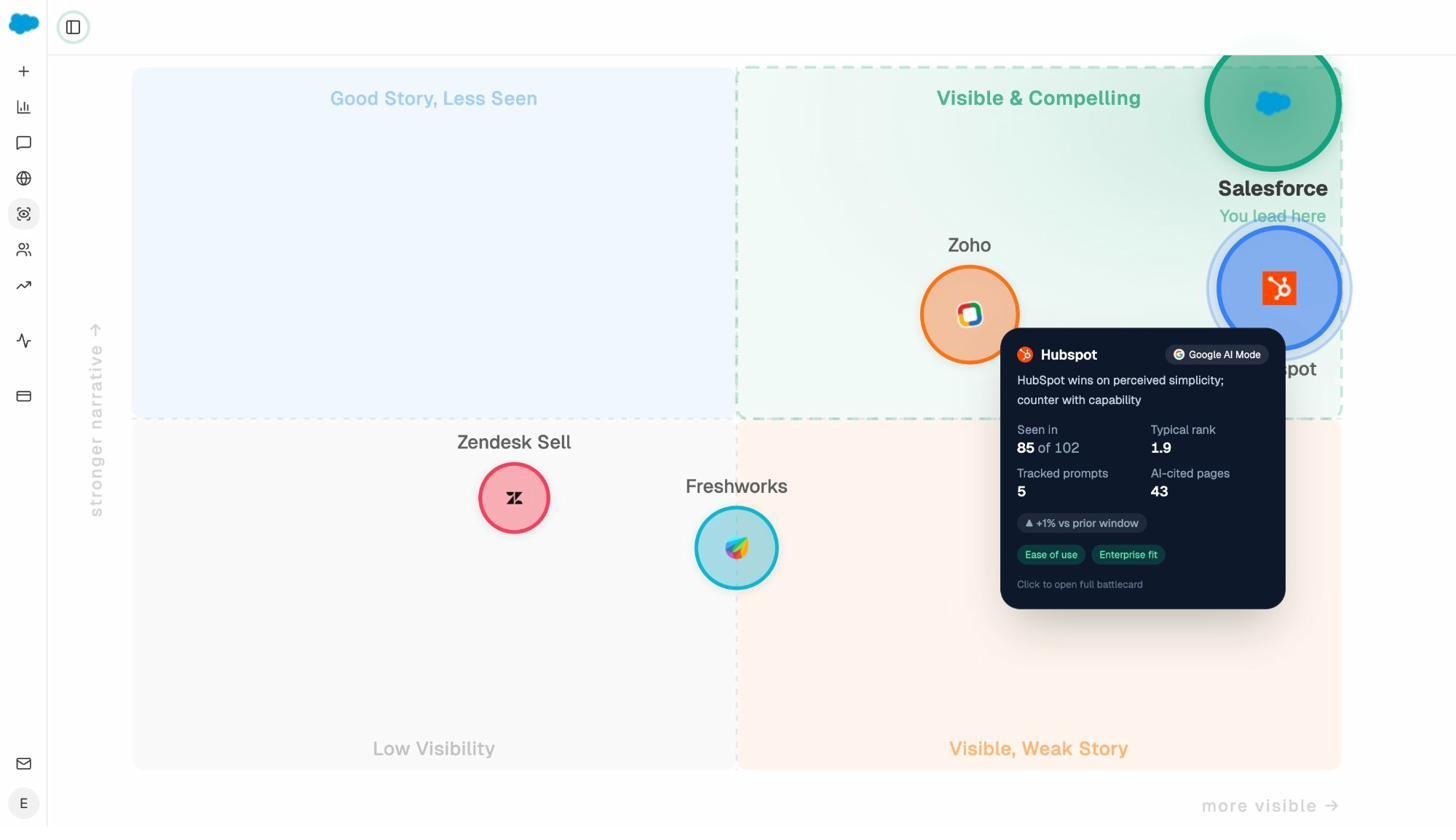

Govern your brand narrative

The Perception Map positions your brand on a quadrant against competitors based on visibility and narrative strength. AI Sentiment Monitoring flags negative narratives before they spread. AI Battlecards give you the competitive positioning data your sales team needs.

Weekly Email Digests deliver prioritized actions, citation changes, and competitor shifts to your inbox every Monday without logging in.

Why pick Analyze AI over SE Ranking: SE Ranking gives you keyword-level AI Overview tracking inside a traditional SEO suite. Analyze AI gives you multi-engine tracking, GA4 revenue attribution, a content writer, a content optimizer, and a full agentic platform that can automate your entire marketing ops stack. If you need more than a tracking add-on, start here.

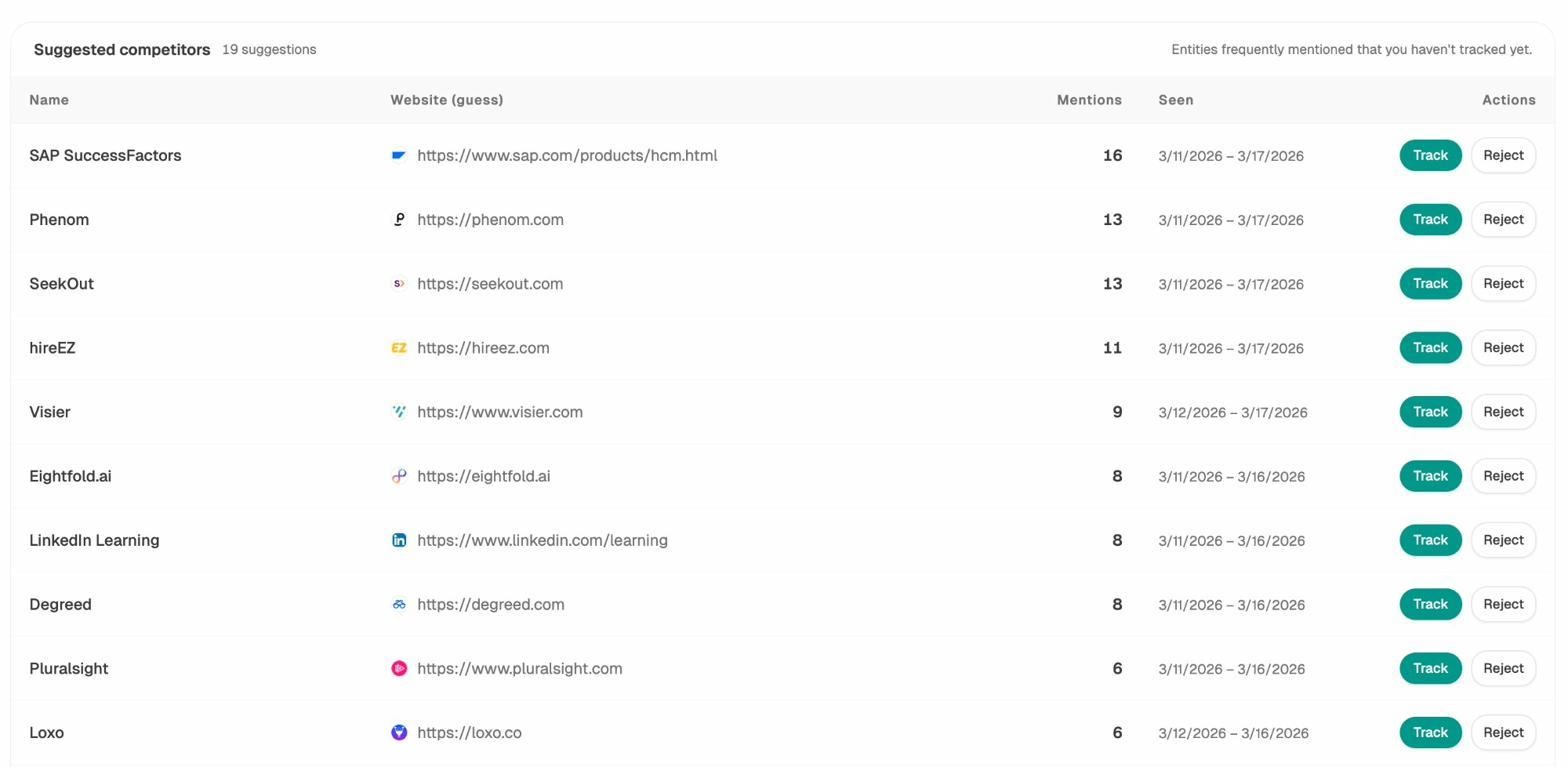

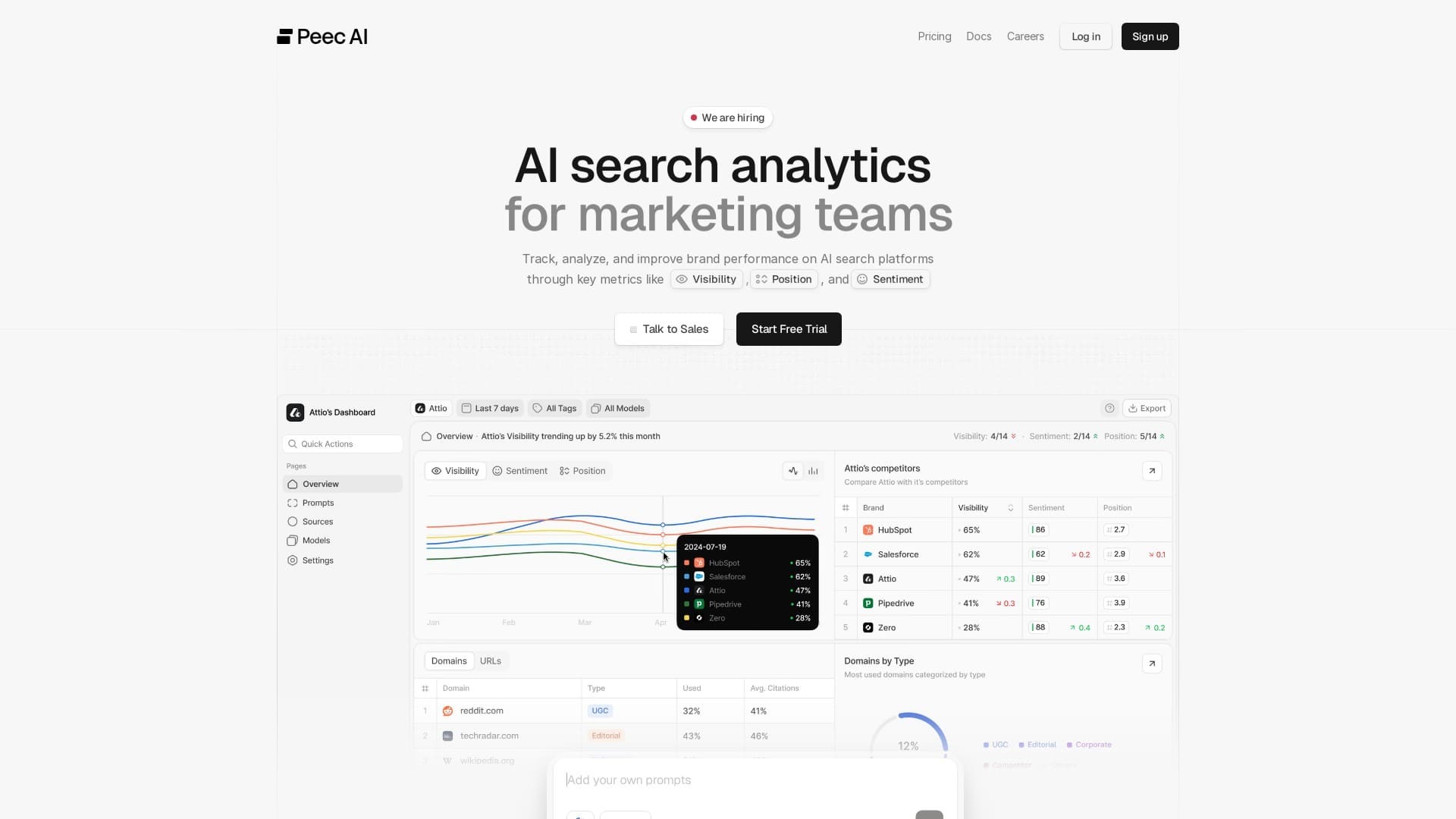

2. Peec AI: Best for Clean Multi-Engine Dashboards

Peec AI starts from the answer, not the keyword list. The platform groups prompts, answers, mentions, and citations in one place across ChatGPT, Perplexity, Gemini, Claude, and AI Overviews.

You can trace a mention to the exact page that fueled it, which removes guesswork during content triage. The citation-to-page mapping is clean and makes it easy to connect visibility gains to specific content updates.

Exports include CSV, API access, and Looker Studio integration. For agencies and in-house teams that need a monitoring-focused tool with solid multi-engine coverage, Peec delivers.

The trade-offs: Peec runs on a schedule, so results may lag during fast news cycles. There is no traffic attribution, no content creation tools, and no ROI layer. Lower tiers cap prompts and engines. You will still need separate tools for content and optimization work.

Pick Peec over SE Ranking if you need cross-engine visibility with prompt-level answer snapshots and source mapping. Stay with SE Ranking if you want one platform for classic SEO and lighter AI tracking.

Pricing: Peec starts at ~$99/month with plans scaling by prompt volume and engine coverage.

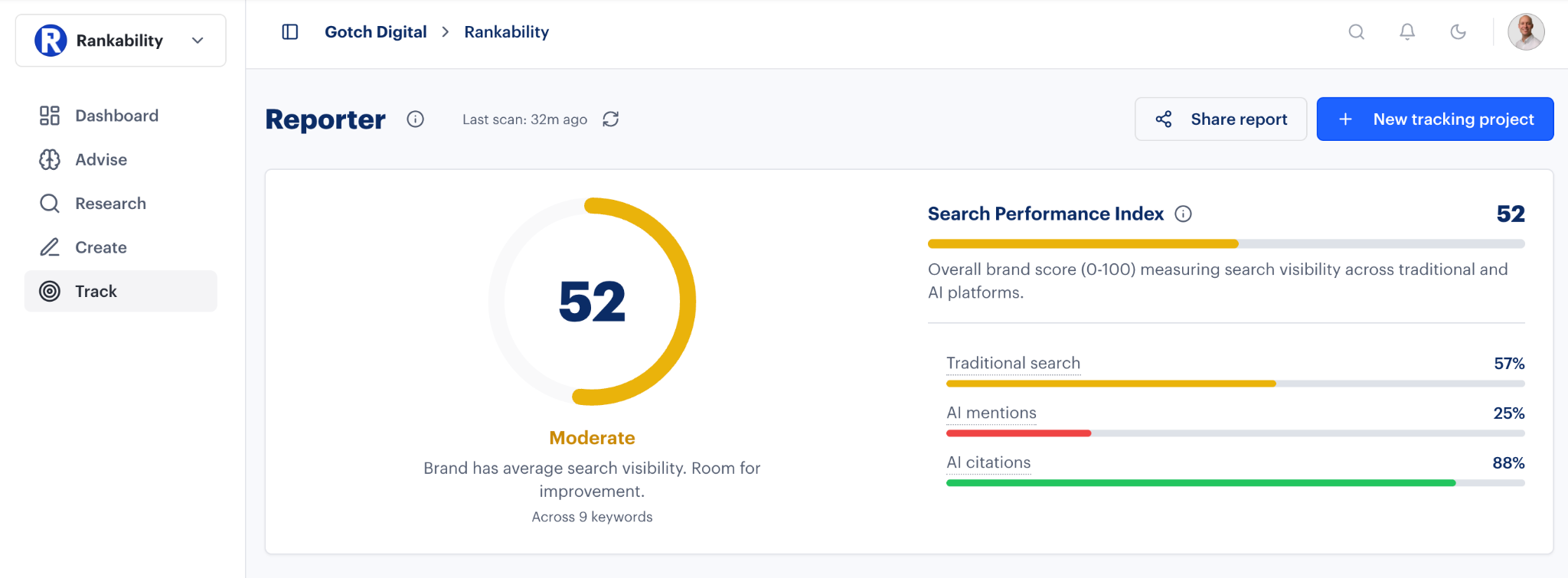

3. Rankability’s AI Analyzer: Best for Agency Client Reporting

Rankability’s AI Analyzer takes the familiar SEO reporting format and extends it to AI visibility. Built-in dashboards present visibility trends, citation counts, and share-of-voice comparisons that mirror standard SEO reports.

The integration with Rankability’s broader SEO and content suite is the real advantage. Once you spot a visibility gap, you can connect that insight to content tasks inside the same platform. Multi-brand workspace support means agencies can manage separate client accounts with consistent formatting across reports.

The trade-offs: The AI Analyzer module is newer, so depth of engine coverage and advanced analysis are still maturing. Prompt refresh cadence is not always clear. Accessing full AI visibility features often requires upgrading to higher-tier plans, and regional coverage varies.

Pick Rankability over SE Ranking if you are an agency that needs white-label, cross-engine client reports. Stay with SE Ranking if you only need AI Overview tracking inside a single SEO workspace.

Pricing: Starts at ~$79/month for the Solo plan. Full AI Analyzer access requires Growth or Agency tiers.

4. LLMrefs: Best Lightweight Option for Quick Citation Checks

LLMrefs strips AI visibility tracking down to its simplest form. Enter your domain, and the system begins capturing mentions and citations across ChatGPT, Perplexity, Claude, and Gemini automatically.

The proprietary LLMrefs Score turns raw citation data into a single index that makes benchmarking easy. Weekly trend charts and competitor comparisons show exactly how your content is used in AI-generated results. Setup takes minutes.

The trade-offs: LLMrefs is tracking only. It will not tell you why your brand did or did not get cited, and it offers no content recommendations, audit tools, or optimization guidance. Updates are weekly by default (daily for Pro users). There is no diagnostic layer that explains why models chose certain sources.

Pick LLMrefs over SE Ranking if you need a simple, fast way to confirm where your content appears in AI answers without complexity. Stay with SE Ranking if you want integrated SEO tools alongside AI visibility.

Pricing: Low entry cost. Pro plans unlock daily refresh and deeper competitor data.

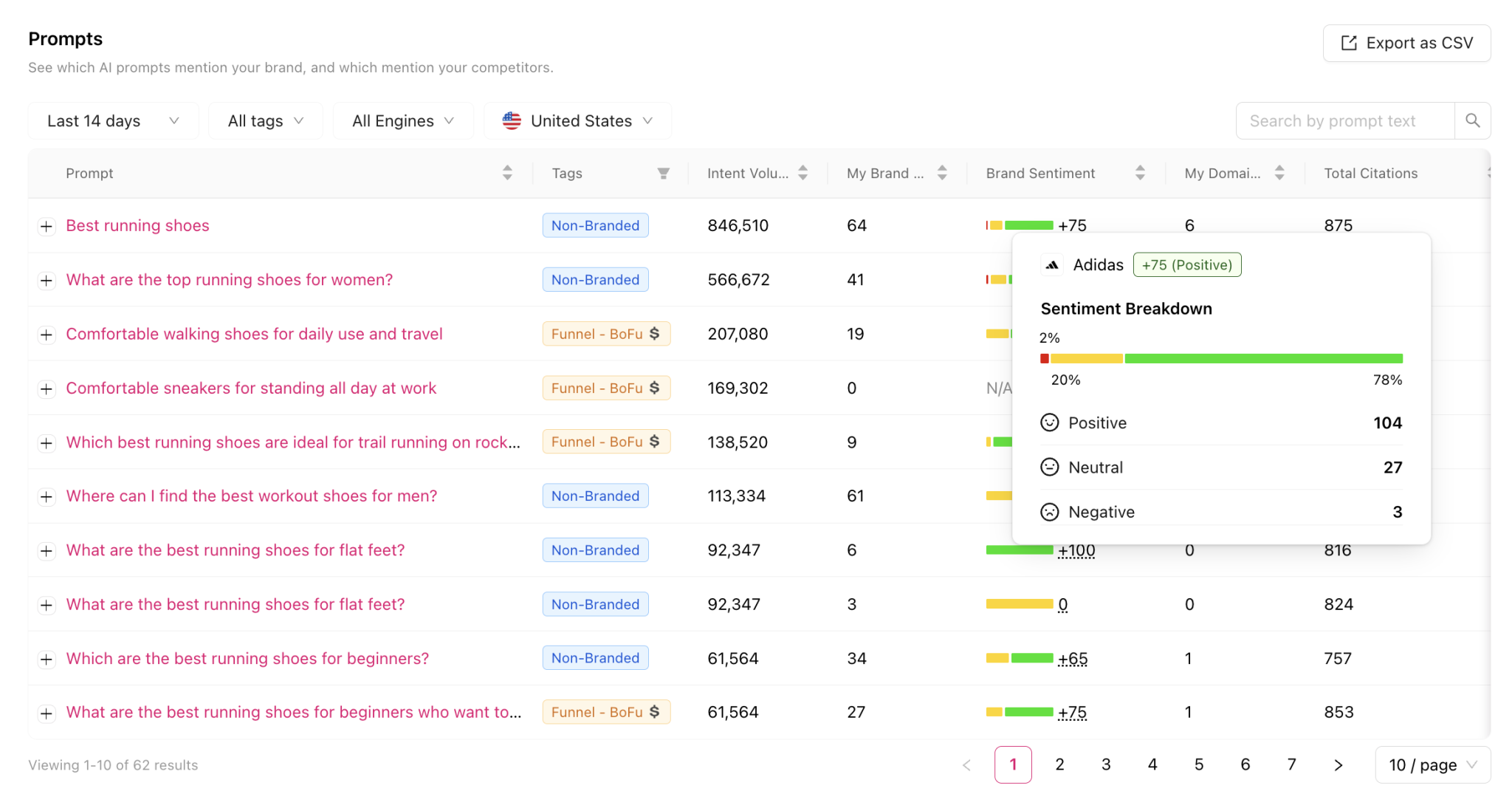

5. Otterly AI: Best for Brand Sentiment in AI Answers

Otterly AI goes beyond tracking whether your brand appears and analyzes how it is represented. It identifies the sentiment and framing of each mention, showing whether your brand is portrayed positively, neutrally, or negatively.

The GEO Audit examines 25+ on-page and technical factors that influence AI visibility. Combined with competitor tracking, Otterly’s benchmarking tools show how sentiment and visibility evolve over time. You can compare brand tone and citation frequency against competitors across specific prompts and regions.

The trade-offs: Otterly’s dataset is smaller than those of larger trackers. Coverage for niche LLMs and regional variants is still developing. The tool prioritizes analysis over optimization: the GEO Audit surfaces contributing factors, but it does not prescribe step-by-step fixes. Update cadence is not always clear.

Pick Otterly over SE Ranking if you care about how AI frames your brand, not just whether you appear. Stay with SE Ranking if you need general visibility tracking without sentiment analysis.

Pricing: Mid-tier. Plans scale by prompt volume and engine coverage.

6. Surfer AI Tracker: Best for Existing Surfer SEO Users

Surfer AI Tracker plugs directly into the Surfer SEO platform as an add-on module. Teams can activate it inside their current Surfer environment and start tracking mentions within minutes.

The tracker shows prompt-level detail for each tracked query, including the exact wording, the model used, and the snippet or source cited. Historical trend charts visualize how visibility changes over time. Daily refresh is available on higher plans.

The trade-offs: Surfer AI Tracker is a visibility add-on, not a deep analytics platform. Some engines are still marked “coming soon.” Pricing is per prompt count, which constrains enterprise use. There is no sentiment analysis, no diagnostic tools, and no guidance on how to improve citation probability. You still rely on Surfer’s traditional on-page features to bridge that gap.

Pick Surfer AI Tracker over SE Ranking if you already live in Surfer and want integrated AI tracking without a new platform. Stay with SE Ranking if you prefer a broader SEO suite.

Pricing: Add-on priced by prompt volume on top of a Surfer SEO subscription.

7. Profound: Best for Enterprise Compliance and Scale

Profound was built for large organizations that need AI visibility data at depth. It covers multiple engines and conversation flows, giving enterprise teams a single command center for understanding brand presence across generative search environments.

![Profound’s enterprise dashboard with share-of-voice and answer archives](https://www.datocms-assets.com/164164/1779522050-blobid18.png)

What separates Profound from lighter tools is its enterprise architecture. Role-based access controls, audit logs, and historical archives of AI answers with screenshots and metadata make it valuable for compliance-heavy teams. Agent Analytics reveals how AI crawlers access your site, connecting technical SEO signals with visibility outcomes.

The trade-offs: Pricing starts at ~$499/month and scales steeply. Onboarding takes longer due to dashboard complexity. The new “Profound Actions” optimization module is still early-stage. Dashboards can feel dense without disciplined role-based filtering.

Pick Profound over SE Ranking if you are an enterprise team that treats AI visibility as an auditable, compliance-grade channel. Stay with SE Ranking if you need something leaner and more affordable.

Pricing: Enterprise-grade. Starts at ~$499/month. See full comparison.

How to Choose the Right Alternative

The right tool depends on what you need beyond visibility counting.

If you need a complete platform, Analyze AI is the only option here that combines AI visibility tracking, GA4 revenue attribution, a content writer, a content optimizer, and a programmable Agent Builder with 180+ nodes. It replaces two to five separate tools.

If you need monitoring only, Peec AI and LLMrefs give you clean dashboards and citation data without the complexity of a full platform.

If you are an agency, Rankability’s white-label reporting and multi-brand workspaces save time across client accounts.

If brand narrative matters, Otterly AI’s sentiment and tone analysis gives your PR and comms team the context they need.

If you are already in Surfer, the AI Tracker add-on is the lowest-friction path to AI visibility data.

If you are enterprise, Profound delivers the compliance, archival, and scale infrastructure large teams require.

Whatever you pick, the important thing is to stop relying on AI visibility trackers that show you a number and leave you to figure out what to do next. The tools that connect visibility to traffic, content, and revenue are the ones that will actually compound.

Check your brand’s current AI visibility for free and see where you stand before committing to any tool.

Ernest
