In this article, you’ll get a detailed breakdown of what LLMrefs does well, where it falls short, how its pricing works, and how it compares to a platform that goes beyond tracking to connect AI visibility with traffic, content, and revenue. If you’re evaluating AI search rank tracking tools for your team, this review will help you decide whether LLMrefs deserves a spot in your stack or whether you need something with more depth.

What LLMrefs Actually Does

LLMrefs is a focused AI visibility tracker that monitors how often your brand gets mentioned or cited in AI-generated answers. It runs controlled prompts across major AI engines like ChatGPT, Perplexity, Gemini, Claude, and Grok, then records which websites appear in those responses.

For each keyword you track, LLMrefs captures the AI-generated answer, extracts the cited domains and URLs, and threads those data points into a historical timeline. You can filter results by AI model, country, and language.
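To make the extraction step concrete, here is a minimal sketch of pulling cited domains out of a single AI-generated answer. It is not LLMrefs' actual implementation, which isn't public; the function name `cited_domains`, the URL pattern, and the sample answer are all assumptions made for illustration.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Hypothetical pattern: grab http(s) URLs and stop at whitespace or closing brackets/quotes.
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")

def cited_domains(answer_text: str) -> Counter:
    """Count how often each domain is linked in one AI-generated answer."""
    domains = Counter()
    for url in URL_PATTERN.findall(answer_text):
        # Strip sentence punctuation that may trail a URL at the end of a sentence.
        host = urlparse(url.rstrip(".,;")).hostname or ""
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains[host] += 1
    return domains

# Made-up answer text, standing in for a captured AI response.
sample_answer = (
    "Popular options include Example Tool (https://www.example.com/pricing) "
    "and Another Tool [https://another.example.org/review]. "
    "See also https://example.com/blog."
)
counts = cited_domains(sample_answer)
print(counts.most_common())  # [('example.com', 2), ('another.example.org', 1)]
```

Storing one such count per keyword, engine, and day is roughly what a historical citation timeline needs.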

The platform compresses its findings into a proprietary metric called the LLMrefs Score (LS), which gives you a single number representing your brand’s overall AI visibility.

At $79/month for the Pro plan, LLMrefs positions itself as one of the most affordable options in the GEO tools market. It tracks up to 50 keywords, updates daily, and includes unlimited team members.

That’s the pitch. Now let’s look at what works, what doesn’t, and whether the price justifies the output.

Three Things LLMrefs Gets Right

1. Broad AI Engine Coverage

LLMrefs tracks citations across 11+ AI platforms, including ChatGPT, Perplexity, Claude, Gemini, Grok, and several others. That’s broader than most tools in this category. If your goal is to see whether your brand shows up across the full spectrum of AI engines, LLMrefs covers more ground than competitors that only track two or three models.

The platform uses the same testing protocol across all engines, which means you can make apples-to-apples comparisons between how ChatGPT cites you versus how Perplexity does. You can filter by model to isolate which engine drives your visibility and which ones ignore you entirely.

2. Simple Pricing, No Seat Limits

At $79/month for the Pro plan with unlimited team members, LLMrefs removes a common friction point. You can share dashboards across your marketing, SEO, and leadership teams without worrying about per-seat costs eating into your budget.

The pricing structure is transparent. The free tier gives you one keyword with monthly reports. Pro gives you 50 keywords with daily updates, CSV exports, and priority support. Enterprise is custom. No hidden modules or surprise upsells.

For small teams or solo marketers testing AI visibility for the first time, $79/month is a reasonable entry point.

3. Auto-Generated Prompts

LLMrefs automatically generates relevant prompts for your tracked keywords, saving you from having to manually guess which queries to monitor. This is one of its more distinctive features, and it helps teams that don’t have deep experience with generative engine optimization get started faster.

Instead of spending hours brainstorming which prompts your buyers might type into ChatGPT or Perplexity, LLMrefs suggests them for you based on your keyword inputs.

Three Places Where LLMrefs Falls Short

1. No Connection Between Visibility and Traffic

This is the fundamental gap. LLMrefs tells you that your brand was mentioned in an AI answer. It does not tell you whether anyone clicked through, which pages they landed on, or whether those visits turned into conversions.

A brand mention in a ChatGPT response and a citation in Perplexity are two very different things. The citation comes with a clickable link to your site. The mention often doesn’t. LLMrefs treats both the same way, which means you could see a high visibility score while getting zero actual traffic from AI engines.

For content teams and CMOs who need to justify budget, visibility without traffic data is a slide deck metric, not a business outcome.

2. Keyword-Centric Model in a Prompt-Driven World

LLMrefs organizes everything around keywords, which follows the traditional SEO model. But AI search doesn’t work like Google search. Users type full questions, multi-sentence prompts, and conversational queries that don’t map neatly to keyword buckets.

The 50-keyword cap on the Pro plan also becomes limiting fast. If you manage multiple brands, cover multiple product lines, or operate in several markets, you’ll burn through your keyword budget quickly. And since each keyword tracked across multiple models consumes credits, the math can get expensive at scale.

3. The LLMrefs Score Is a Black Box

The LS metric condenses your cross-model performance into a single number. That sounds helpful for executive reporting, but without knowing how the score weighs each engine, position, or citation type, the number becomes hard to act on.

A score increase could mean stronger citations in one model, or it could mean weaker competition in another. When you present this number to stakeholders who aren’t deep in the AI search weeds, they may mistake movement for progress. Teams need to drill into the underlying data to understand what the score actually reflects, which partly defeats the purpose of having a summary metric.
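A small worked example shows why a single composite number is hard to act on. LLMrefs does not publish how the LS is calculated, so the weighted average below, the engine weights, and the per-engine values are all hypothetical. The sketch only demonstrates that two very different changes can produce the same headline score.

```python
def composite_score(per_engine: dict, weights: dict) -> float:
    """Weighted average of per-engine visibility values (hypothetical formula)."""
    total_weight = sum(weights[engine] for engine in per_engine)
    weighted_sum = sum(per_engine[engine] * weights[engine] for engine in per_engine)
    return weighted_sum / total_weight

# Assumed weights; the real weighting, if any, is not documented.
weights = {"chatgpt": 5, "perplexity": 3, "gemini": 2}

baseline   = {"chatgpt": 40, "perplexity": 40, "gemini": 40}
scenario_a = {"chatgpt": 50, "perplexity": 40, "gemini": 40}  # +10 on the heaviest engine
scenario_b = {"chatgpt": 40, "perplexity": 40, "gemini": 65}  # +25 on the lightest engine

print(composite_score(baseline, weights))    # 40.0
print(composite_score(scenario_a, weights))  # 45.0
print(composite_score(scenario_b, weights))  # 45.0
```

Both scenarios report the same five-point gain, yet they call for different follow-up work, which is why the per-engine breakdown still matters.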

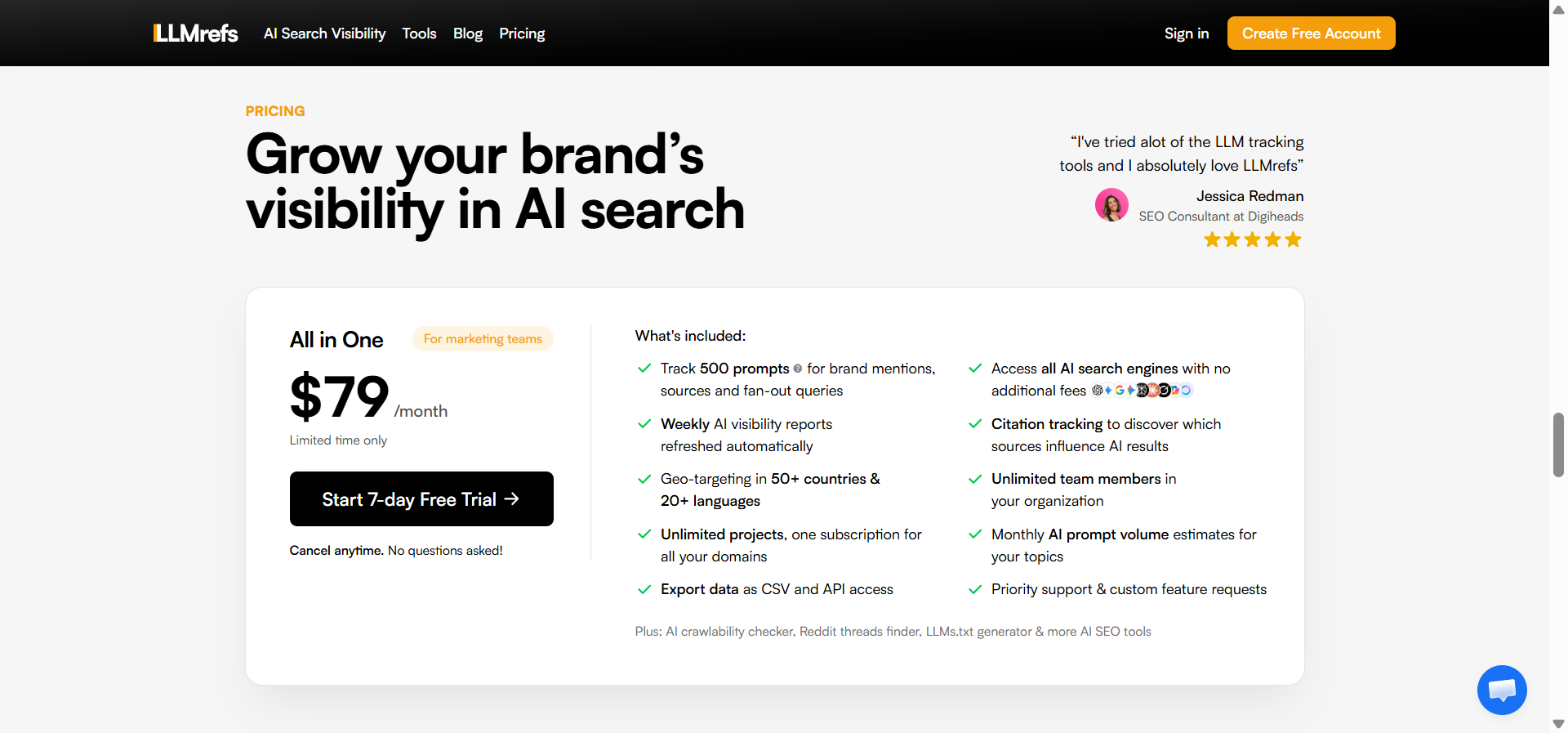

LLMrefs Pricing: The Full Picture

| Plan | Price | Keywords | Refresh Rate | Key Limits |
|---|---|---|---|---|
| Free | $0/month | 1 | Monthly | Limited models, basic reporting |
| Pro | $79/month | 50 | Daily | All AI engines, CSV export, unlimited seats |
| Enterprise | Custom | Unlimited | Custom | SLA, dedicated support |

At $79/month, LLMrefs is one of the lowest-cost options in the AI visibility space. For context, tools like AthenaHQ charge around $295/month, and most enterprise platforms start in the mid-hundreds of dollars per month.

The value question isn’t whether $79/month is cheap. It is. The question is whether a tracker that stops at “you were mentioned” gives you enough to make decisions. If your team’s workflow is just monitoring mentions across AI engines, LLMrefs delivers. If you need to connect visibility to traffic, optimize the content that gets cited, or automate your response to competitive shifts, you’ll outgrow it quickly.

The free tier tracks a single keyword with monthly reports, which means the trial phase is too narrow to evaluate the product properly. Most teams upgrade within days.

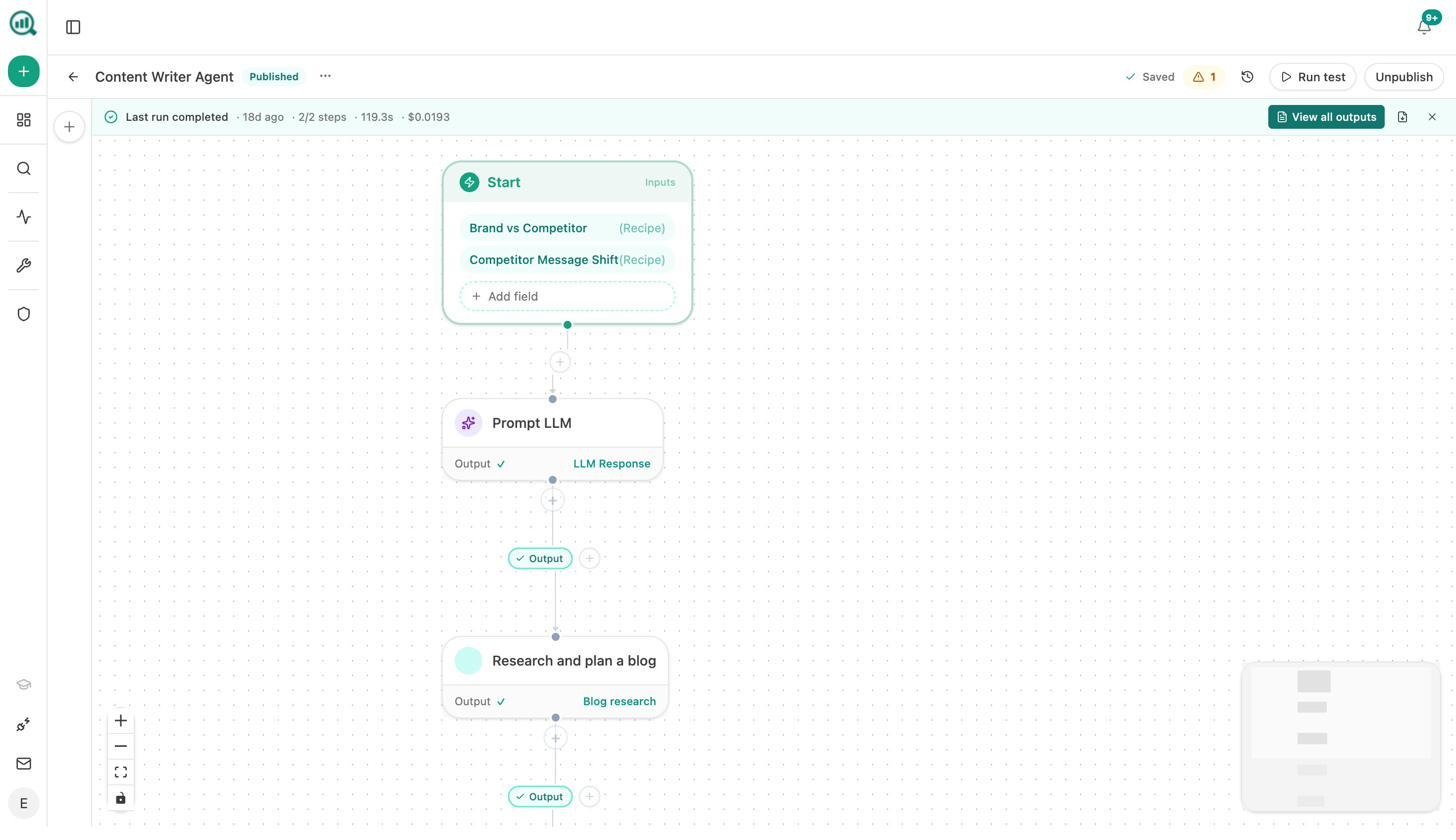

Why Teams Switch to Analyze AI

Most AI visibility tools tell you whether your brand appeared in a ChatGPT response. Then they stop.

Analyze AI is not another tracking layer. It is the agentic platform for SEO, AEO, content, and GTM operations that connects AI visibility to actual traffic, conversions, and revenue. And beyond monitoring, it gives you the tools to write better content, optimize existing pages, automate workflows, and take action on every insight.

Here is how it works in practice.

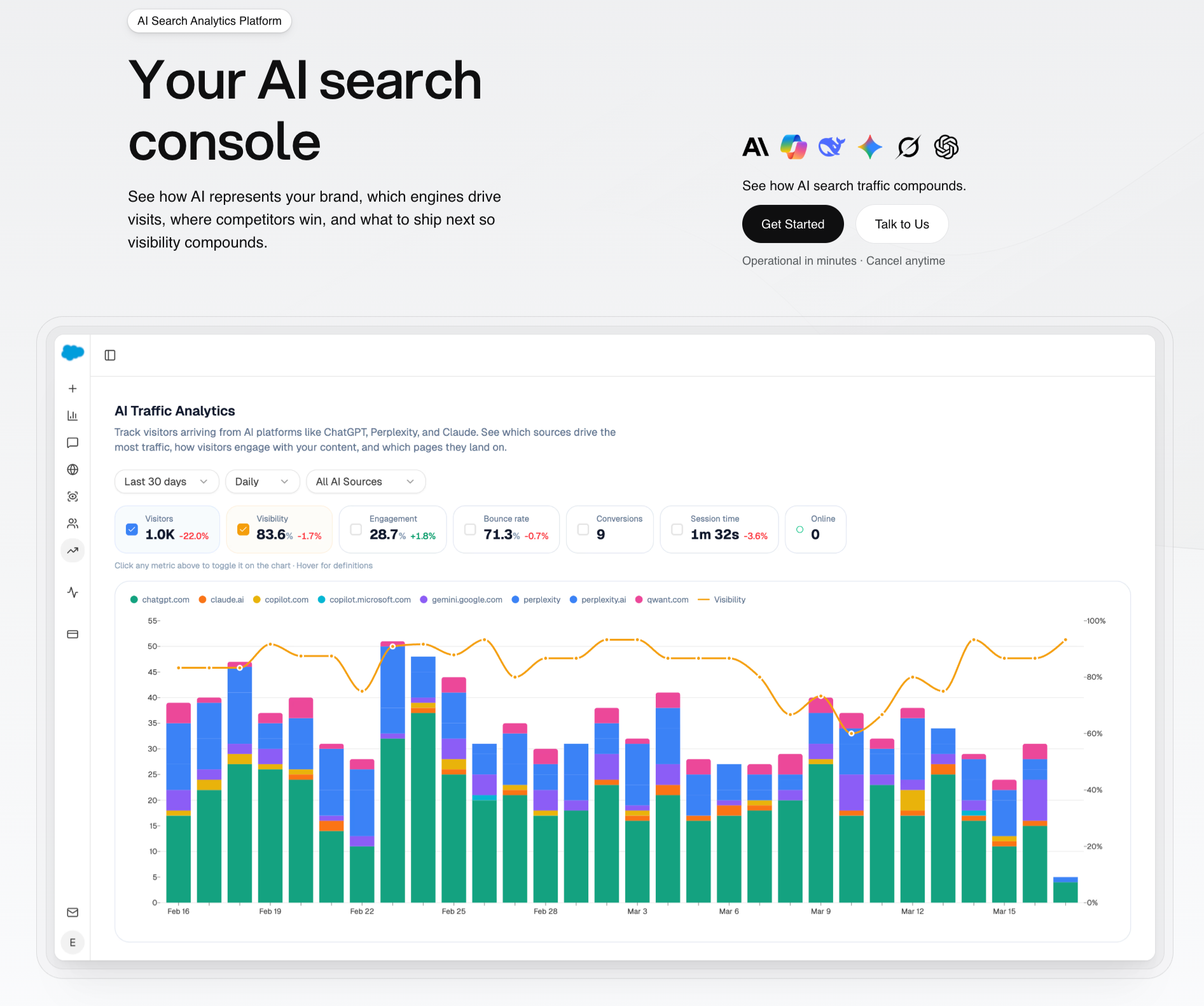

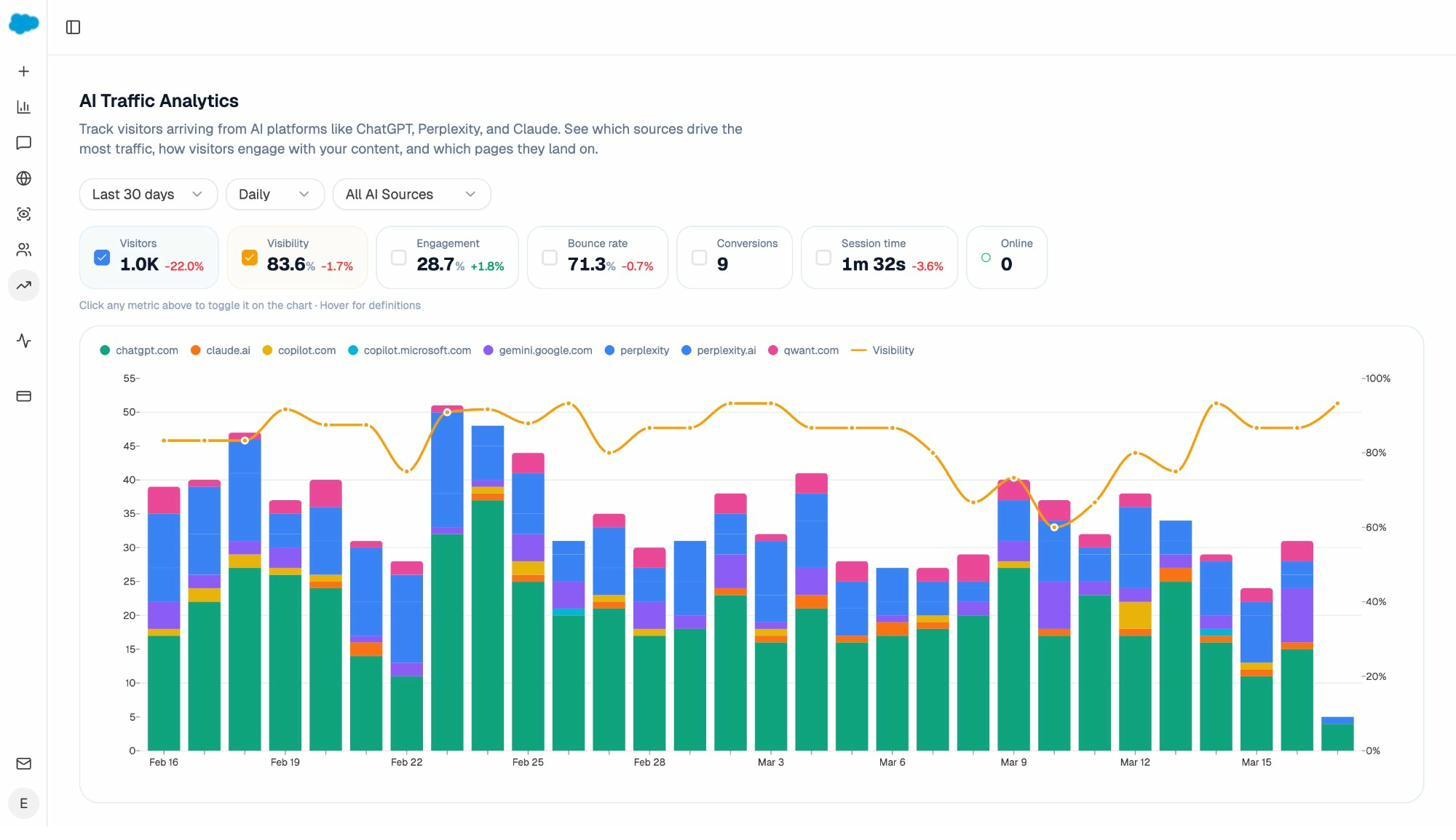

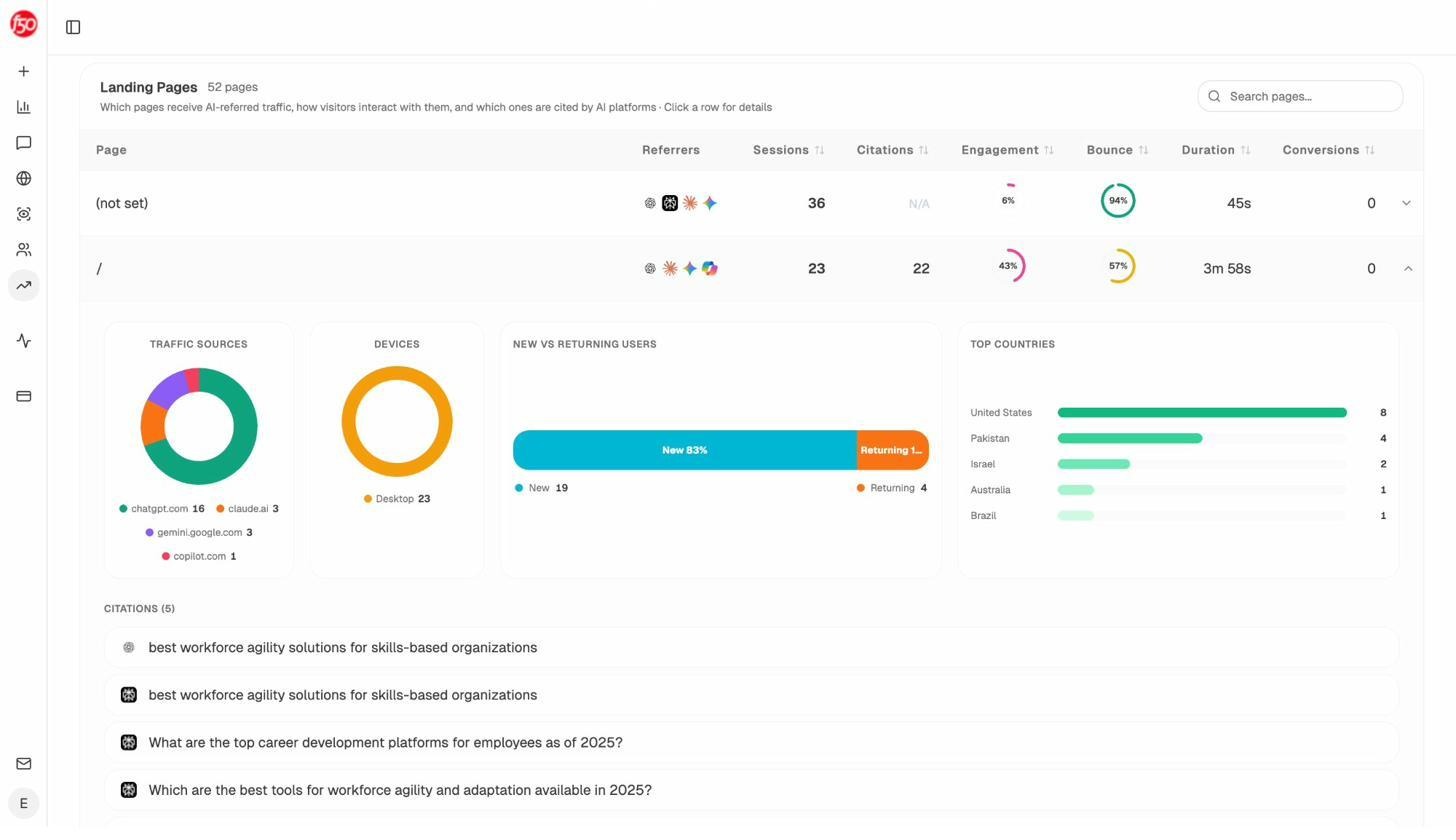

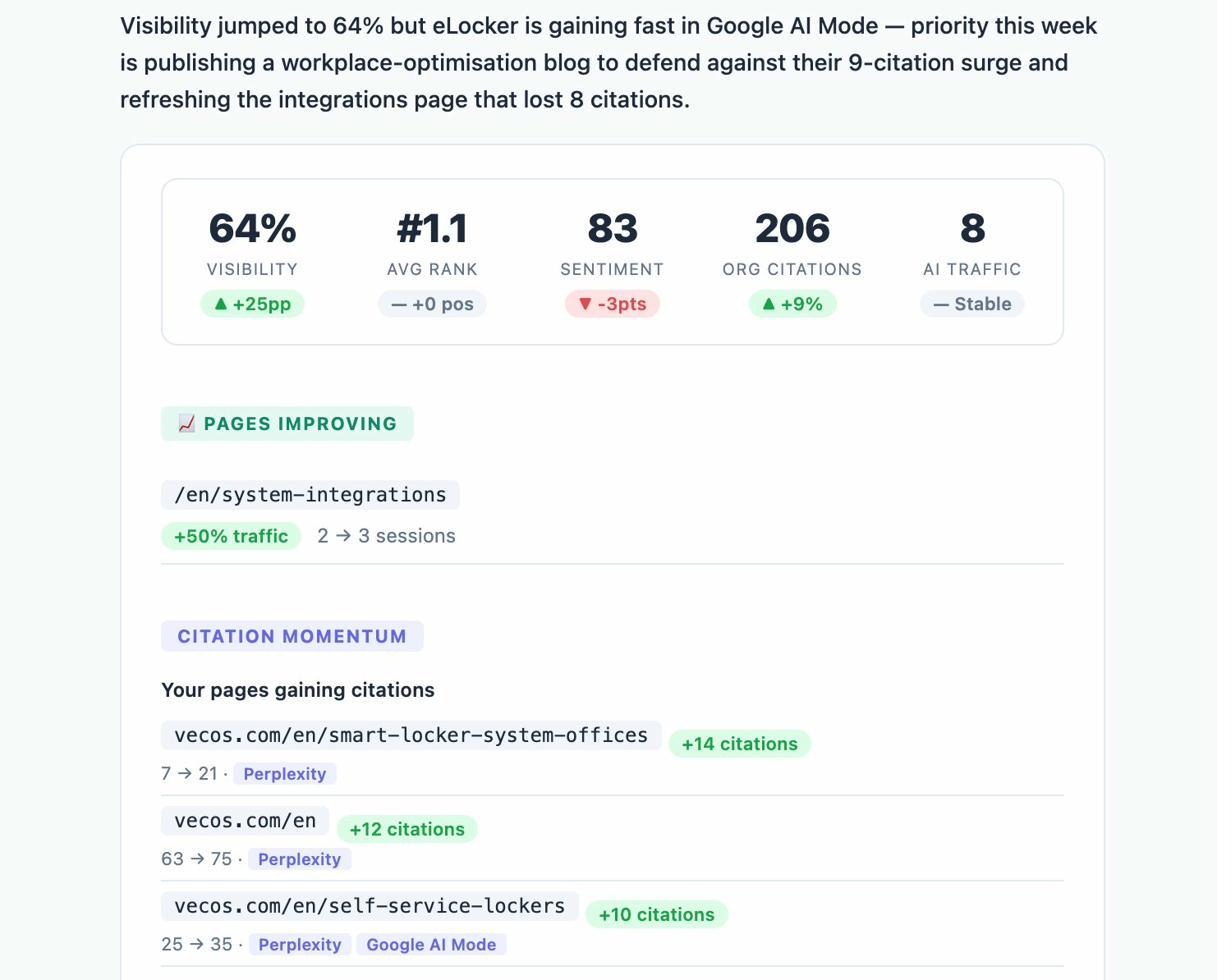

See AI Traffic by Engine, Landing Page, and Conversion

Analyze AI connects directly to your analytics through GA4 to attribute every session from AI engines to its specific source. You see exactly how many visitors came from ChatGPT versus Perplexity versus Claude, which pages they landed on, and what they did next.

The Landing Pages report shows which pages receive AI referrals, which engine sent each session, and what conversion events those visits triggered. When your product comparison page gets 50 sessions from Perplexity and converts at 12%, while an old blog post gets 40 sessions from ChatGPT with zero conversions, you know exactly what to double down on.
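The attribution logic behind a report like this can be sketched in a few lines. This is not Analyze AI's implementation or the GA4 API. It assumes you already have session rows with a referrer URL, a landing page, and a conversion flag, and the referrer-to-engine mapping is an illustrative guess that you would need to verify against your own analytics data.

```python
from collections import defaultdict
from typing import Optional
from urllib.parse import urlparse

# Assumed mapping from referrer hostname to AI engine; extend or correct as needed.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
}

def engine_for(referrer_url: str) -> Optional[str]:
    """Return the AI engine for a referrer URL, or None for non-AI traffic."""
    host = urlparse(referrer_url).hostname or ""
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)

def summarize(sessions: list) -> dict:
    """Aggregate sessions and conversions per (landing page, engine)."""
    totals = defaultdict(lambda: {"sessions": 0, "conversions": 0})
    for session in sessions:
        engine = engine_for(session["referrer"])
        if engine is None:
            continue  # skip traffic that did not come from a known AI engine
        row = totals[(session["landing_page"], engine)]
        row["sessions"] += 1
        row["conversions"] += int(session["converted"])
    return {
        key: {**row, "rate": row["conversions"] / row["sessions"]}
        for key, row in totals.items()
    }

# Synthetic rows mirroring the example above: 50 Perplexity sessions at 12%, 40 ChatGPT at 0%.
sessions = (
    [{"referrer": "https://www.perplexity.ai/", "landing_page": "/compare", "converted": True}] * 6
    + [{"referrer": "https://www.perplexity.ai/", "landing_page": "/compare", "converted": False}] * 44
    + [{"referrer": "https://chatgpt.com/", "landing_page": "/blog/old-post", "converted": False}] * 40
    + [{"referrer": "https://www.google.com/", "landing_page": "/compare", "converted": True}] * 10
)
report = summarize(sessions)
for (page, engine), row in sorted(report.items()):
    print(page, engine, row["sessions"], f"{row['rate']:.0%}")
```

Referrer data is often incomplete for AI traffic, so numbers produced this way are better read as a lower bound than as an exact count.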

LLMrefs cannot do this. It tracks mentions. Analyze AI tracks the full journey from AI answer to landing page to revenue.

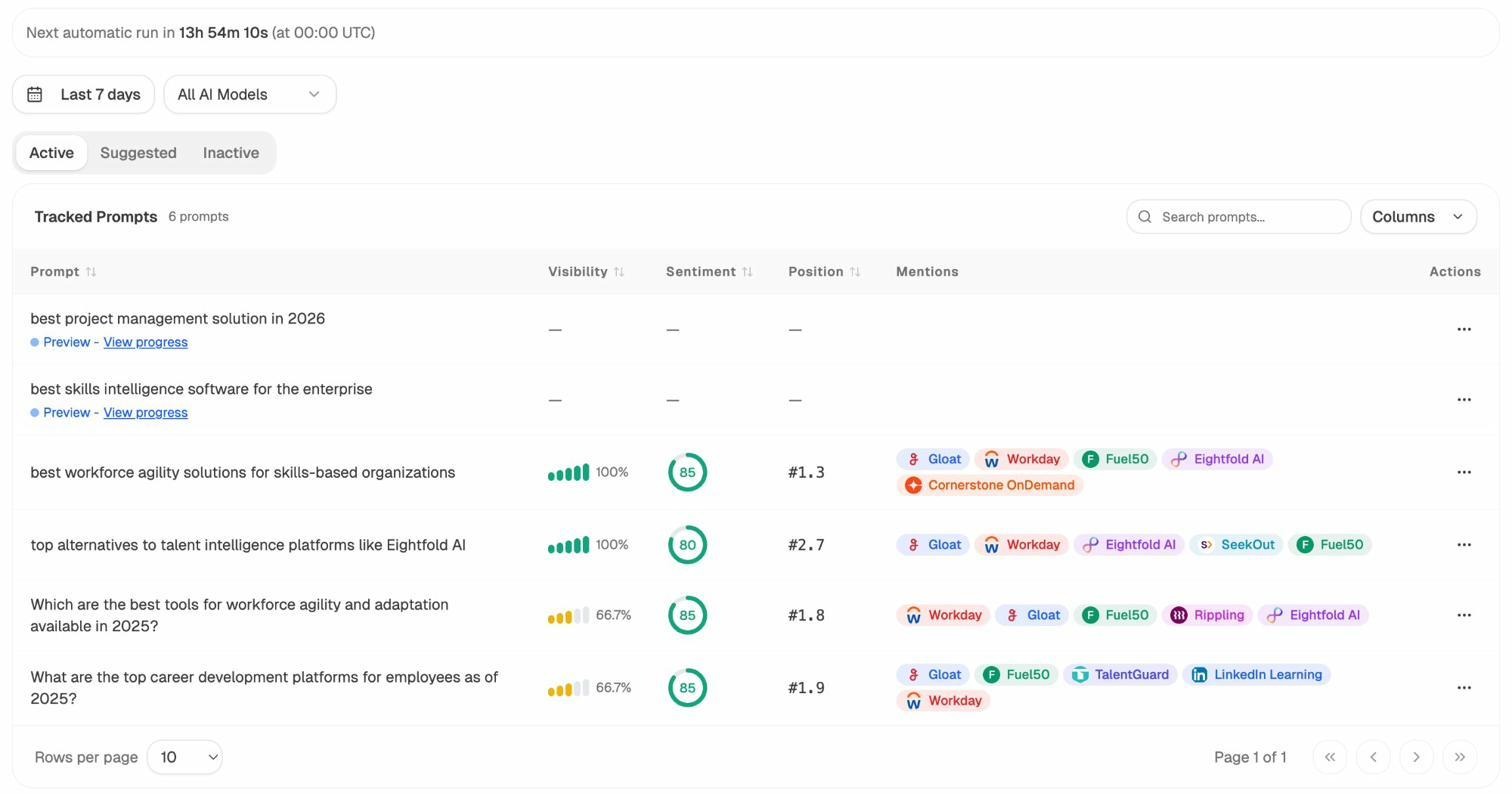

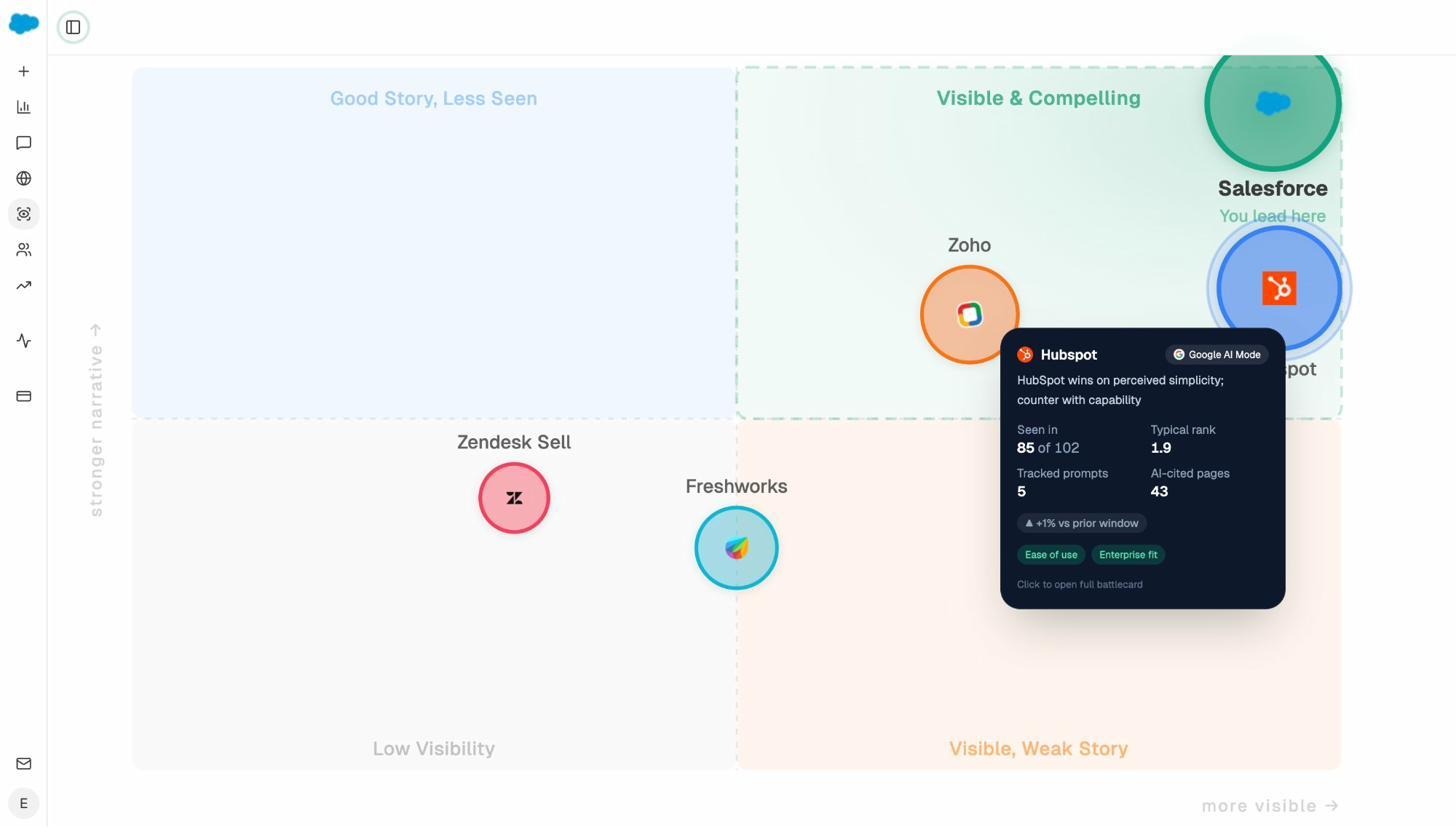

Track Prompts With Visibility, Sentiment, and Competitive Position

Analyze AI monitors specific prompts across all major AI engines. For each prompt, you see your visibility percentage, position relative to competitors, sentiment score, and exactly which brands appear alongside you.

You also see how your position changes over time and whether sentiment is improving or declining. This is prompt-level tracking, not keyword-level tracking. The difference matters because AI users don’t search in keywords. They ask full questions.
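As a rough illustration of what prompt-level metrics can mean, the sketch below computes a visibility percentage and a mention rank from a set of responses to one prompt. The definitions are assumptions, not Analyze AI's documented formulas, and the brand names and responses are invented.

```python
from typing import Optional

def visibility_pct(responses: list, brand: str) -> float:
    """Share of responses (in percent) that mention the brand at least once."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return 100.0 * hits / len(responses)

def mention_rank(response: str, brand: str, competitors: list) -> Optional[int]:
    """1-based rank of the brand by order of first mention, or None if absent.

    Uses naive substring matching, so it will miscount brands whose names
    appear inside other words.
    """
    text = response.lower()
    first_seen = {name: text.find(name.lower()) for name in [brand, *competitors]}
    if first_seen[brand] < 0:
        return None
    ordered = sorted((pos, name) for name, pos in first_seen.items() if pos >= 0)
    return [name for _, name in ordered].index(brand) + 1

# Invented responses to a single tracked prompt, e.g. collected from different engines.
responses = [
    "For AI visibility tracking, Acme and Globex are common picks.",
    "Globex leads here, with Acme as a cheaper alternative.",
    "Initech is the usual recommendation.",
    "Acme is a solid choice for small teams.",
]
visibility = visibility_pct(responses, "Acme")
ranks = [mention_rank(r, "Acme", ["Globex", "Initech"]) for r in responses]
print(visibility)  # 75.0
print(ranks)       # [1, 2, None, 1]
```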

Don’t know which prompts to track? Analyze AI includes a prompt suggestion feature that surfaces the actual bottom-of-funnel queries your buyers are asking across AI engines.

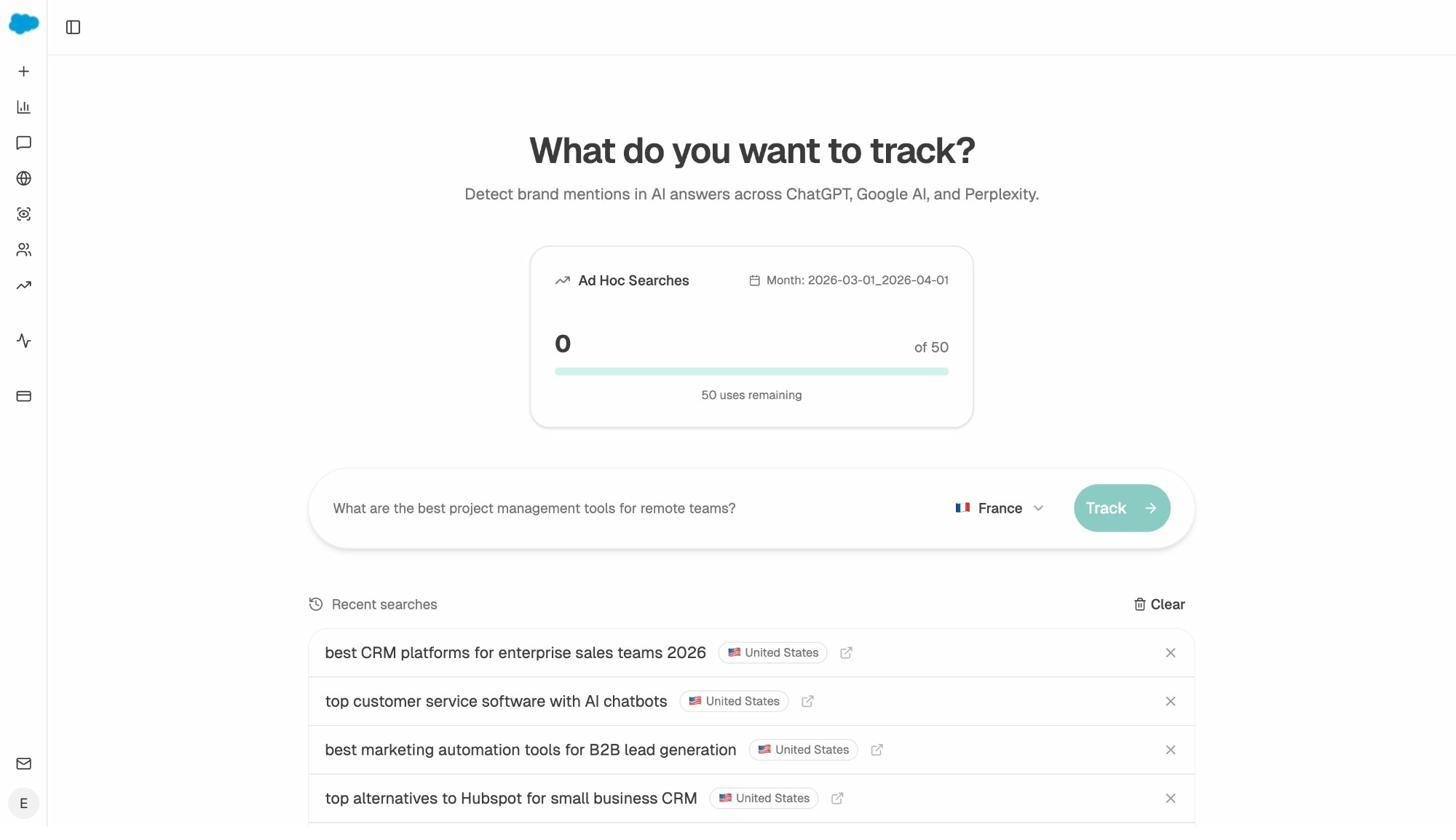

You can also run ad hoc prompt searches to test any query across all engines in real time, without adding it to your tracked set first.

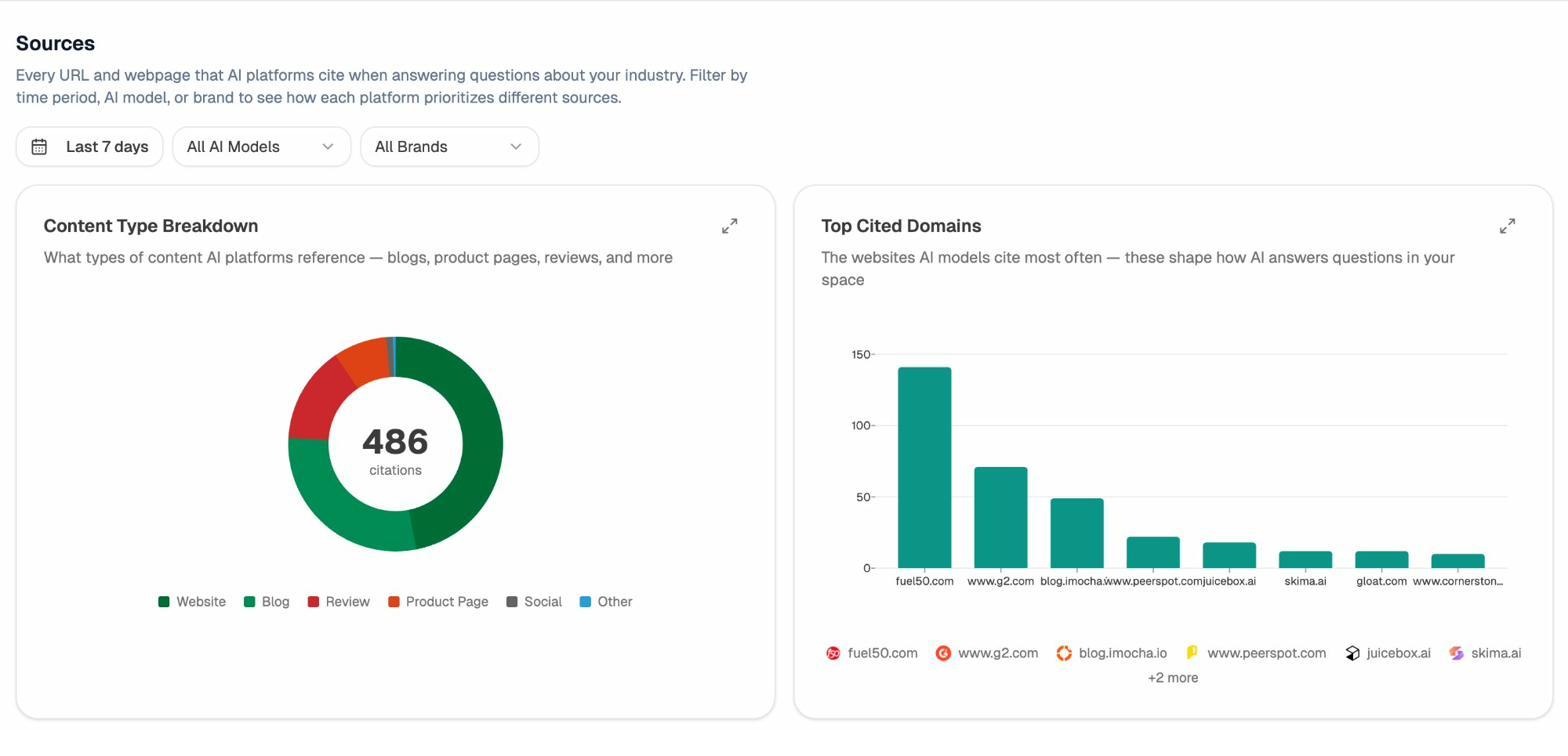

Audit Which Sources AI Models Trust

Analyze AI reveals which domains and URLs each AI model cites when answering questions in your category. You see citation counts per source, which models reference each domain, and when those citations first appeared.

This matters because it shows you where to invest. Instead of generic link building, you target the specific sources that shape AI answers. You can build relationships with domains that models already trust, create content that fills gaps in their coverage, and track whether your citation frequency increases after each initiative.

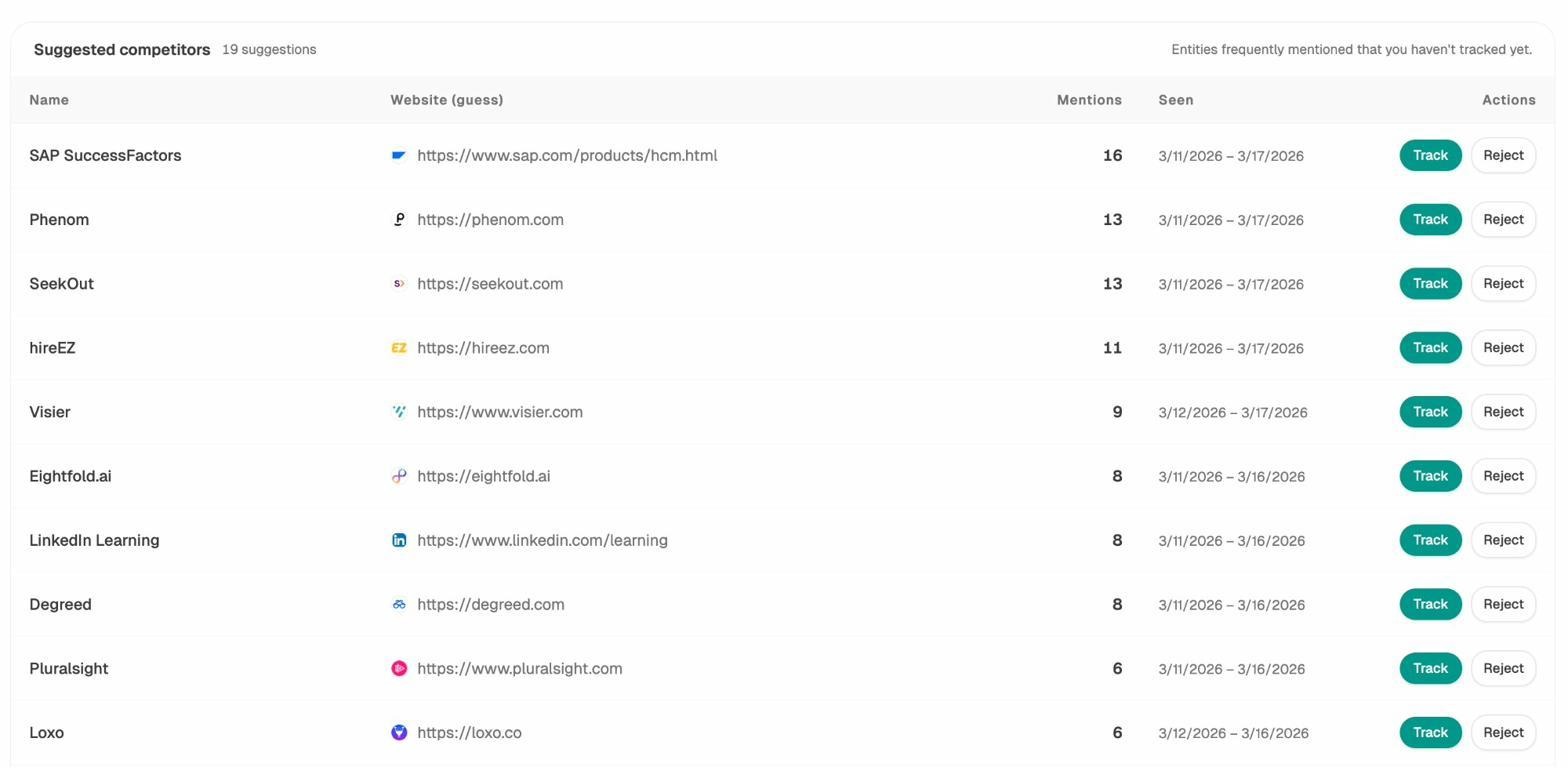

Discover Competitors You Didn’t Know You Had

Analyze AI surfaces competitors you may not be tracking yet. It scans AI responses in your category and identifies entities that appear frequently alongside your brand or instead of it. You can then add them to your tracking set with a single click.

This is different from manually adding competitors you already know about. AI engines often cite brands that wouldn’t appear on your traditional competitor list, like industry publications, niche review sites, or adjacent tools that are showing up in the same buyer prompts.

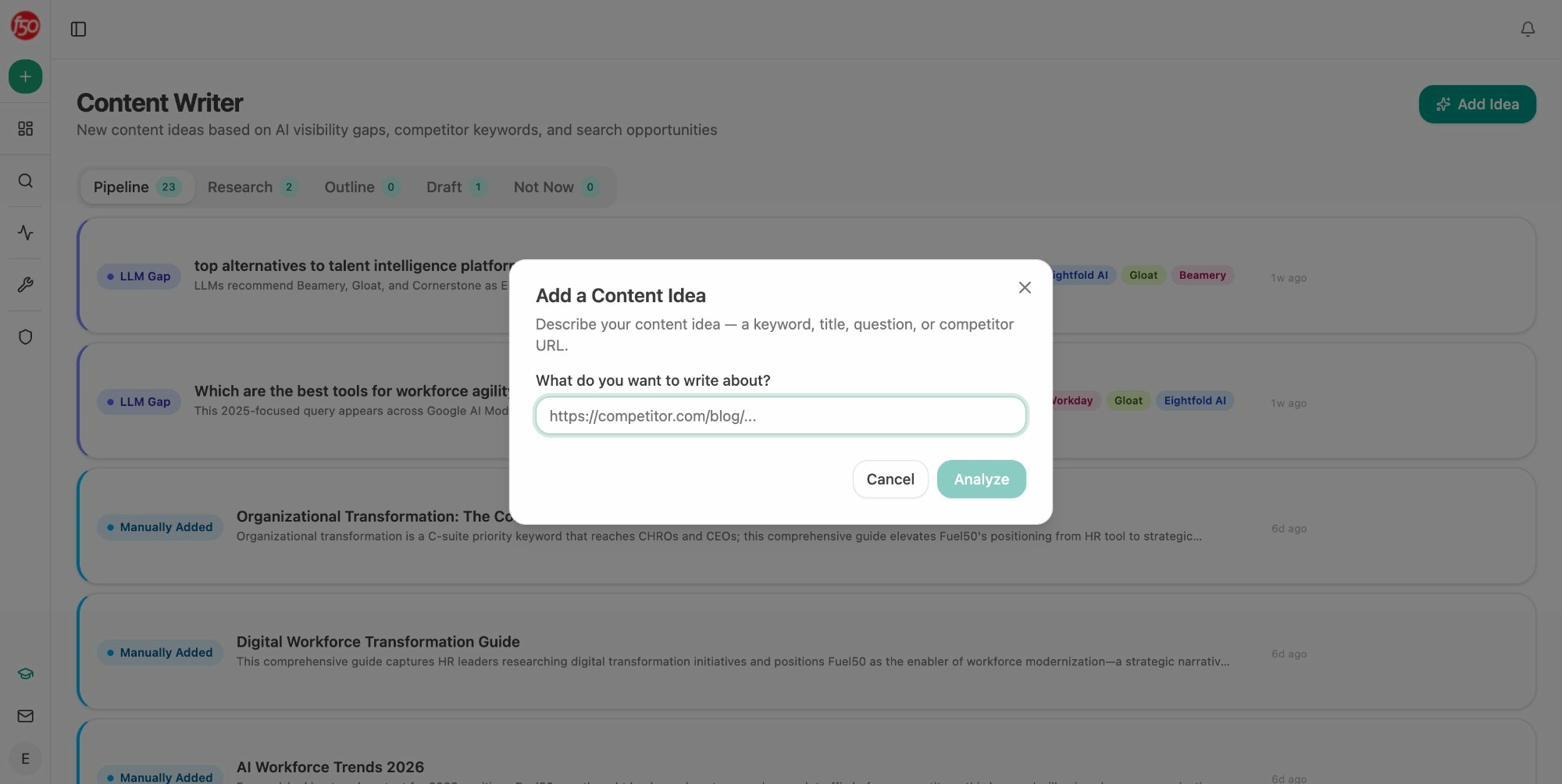

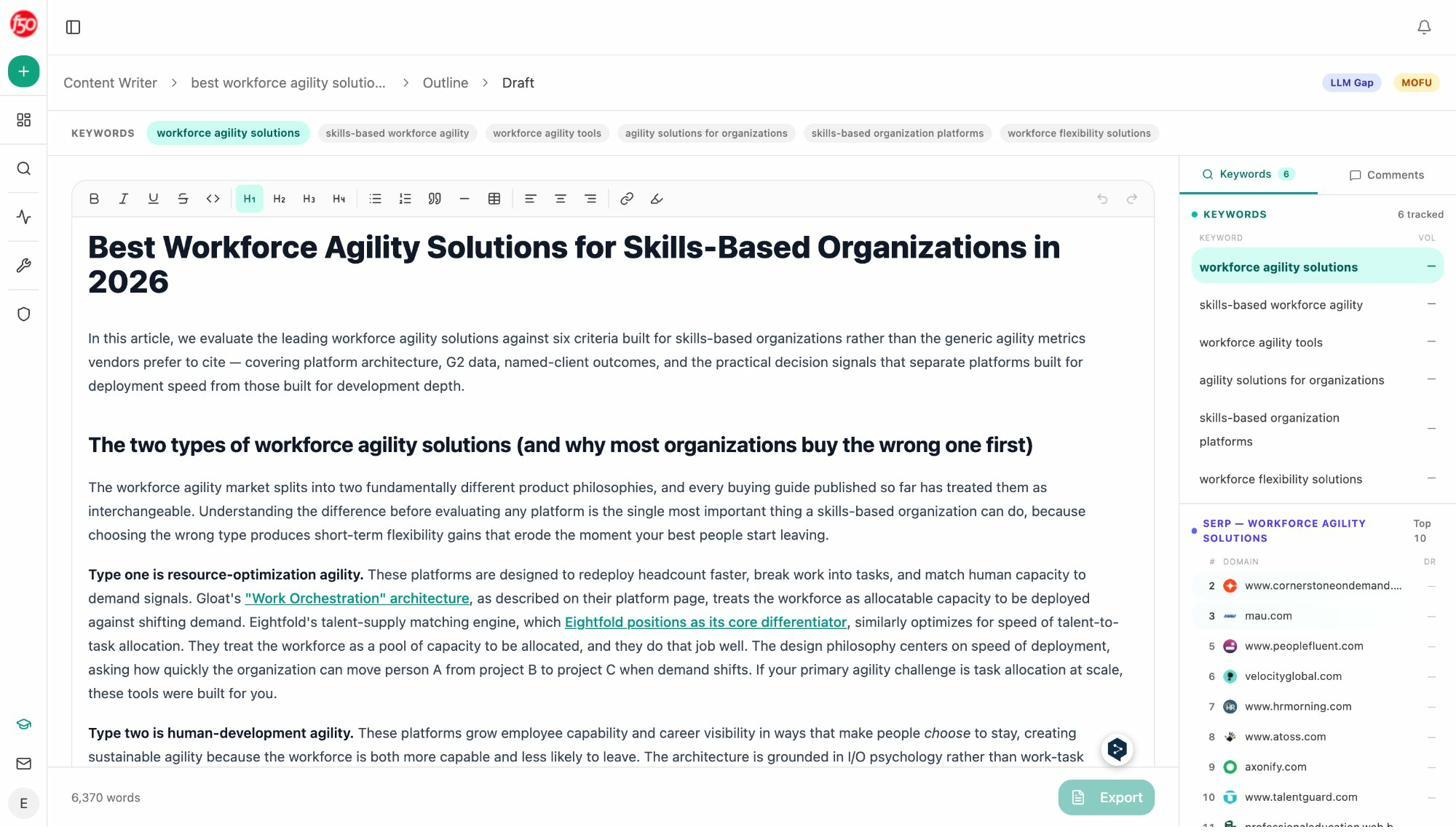

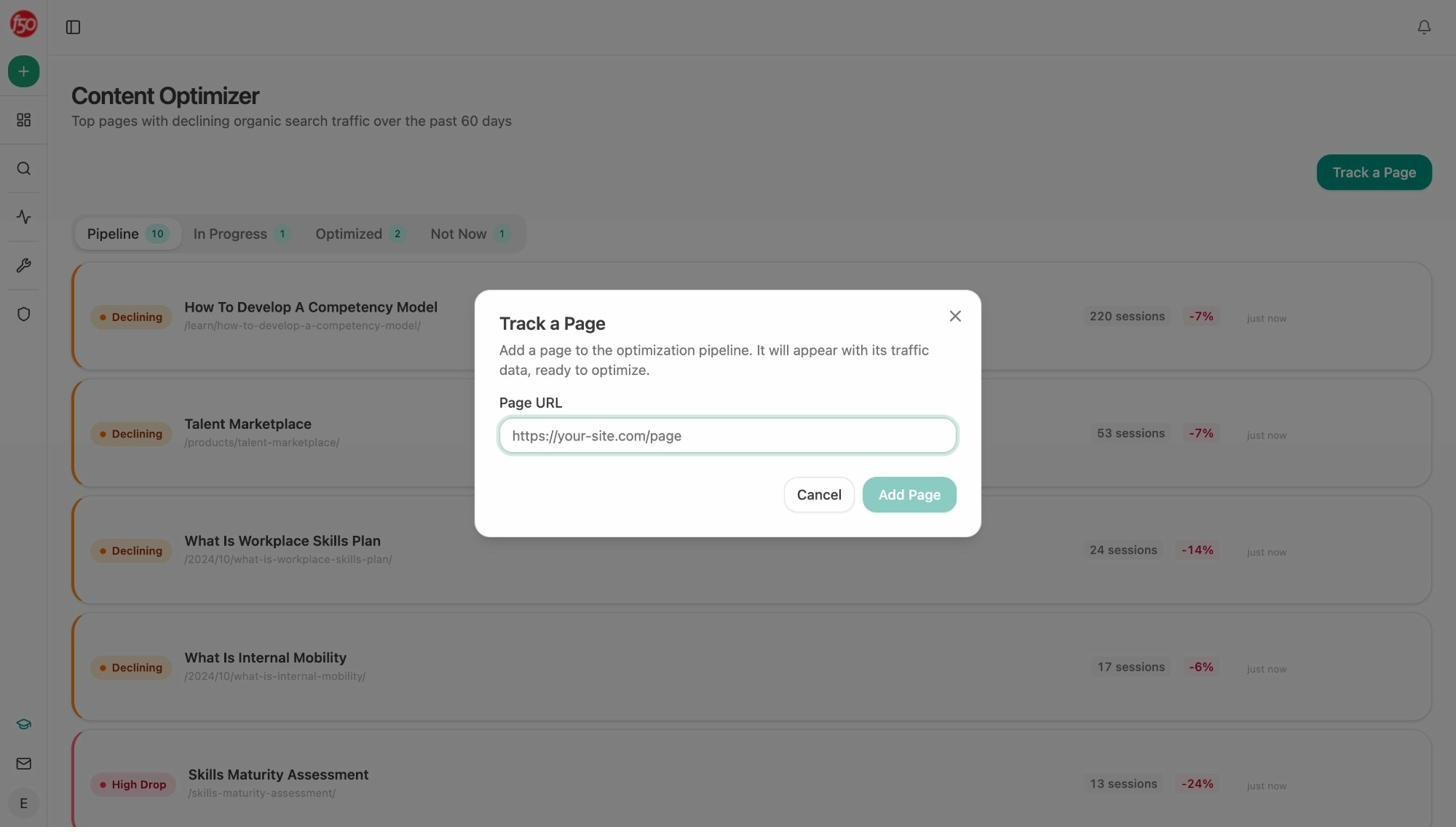

Write and Optimize Content That AI Engines Actually Cite

This is where the gap between LLMrefs and Analyze AI is widest. LLMrefs is a read-only dashboard. Analyze AI includes a full AI Content Writer and AI Content Optimizer that help you create and improve the content that drives AI visibility.

The Content Writer doesn’t just generate drafts. It builds a full pipeline of content ideas based on AI visibility gaps, competitor keywords, and search opportunities. Each idea goes through research, outline, and draft stages, with your Knowledge Base brand voice injected at every step.

The Content Optimizer works differently. You feed it an existing URL, and it scores the page on argument structure, clarity, and AEO readiness, then provides specific edits and comments to improve it.

These outputs are better than generic AI writing tools because the system pulls in your competitive landscape, your brand voice, and the actual prompts where you need to show up. The optimizer doesn’t give you vague suggestions. It tells you exactly what to change and why.

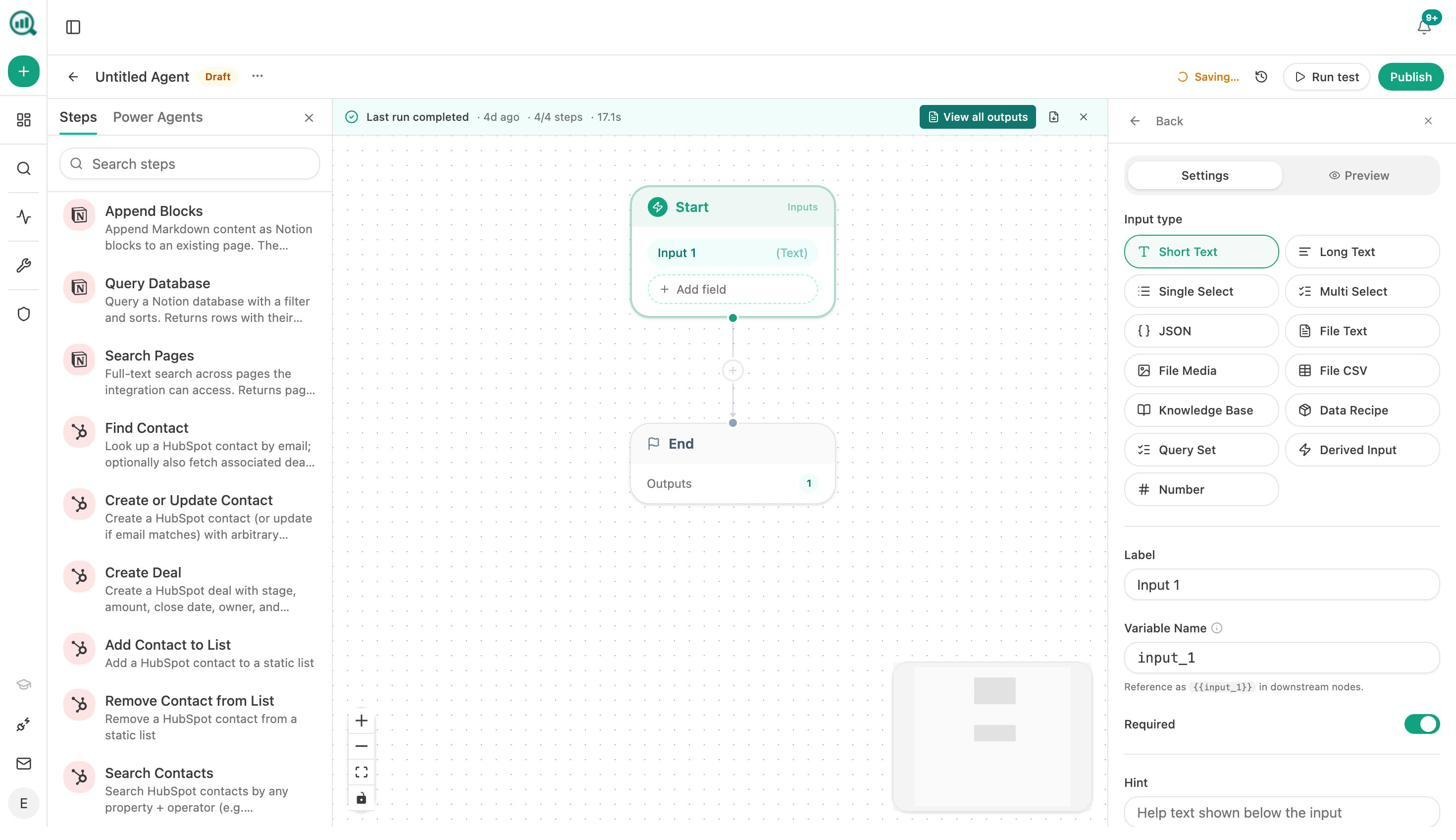

Automate Everything With the Agent Builder

The Agent Builder is where Analyze AI separates itself from every other tool in this category. It is a programmable operations layer with 180+ nodes, 34 pre-built data recipes, 13 input types, and three trigger modes (manual, scheduled, webhook).

This is not a simple automation add-on. The Agent Builder connects directly to GA4, Google Search Console, Semrush, DataForSEO, HubSpot, Notion, WordPress, Slack, Mailchimp, and all major LLMs. You can build workflows that would otherwise require stitching together Zapier, Make, Retool, and a custom data pipeline.

Here is what teams actually build with it.

Content teams set up brief-to-publish pipelines that research, outline, draft, score for AEO quality, and publish to WordPress automatically. If the score is below threshold, the system routes the piece back to the writer with the gaps flagged.

Agencies run one workflow per client that generates weekly intelligence reports pulling from AI visibility data, GSC, GA4, and competitor benchmarks, then emails each account team automatically. Reporting day stops existing.

PR teams build crisis early-warning agents that scan brand mentions every 15 minutes, filter by sentiment and reach, and Slack the comms team before the CEO even hears about it.

Sales teams wire up inbound form submissions to trigger automatic lead enrichment using Hunter, Tomba, and DataForSEO, then push the enriched profile into HubSpot with full research notes attached. The AE gets a fully qualified lead before they even open the notification.

A scheduled agent runs like a virtual employee that never forgets, never takes vacation, and costs cents per run. A webhook agent is event-driven. A deal closes in HubSpot, and the case study draft starts writing itself. A press hit lands, and the response brief is already in Slack.

LLMrefs has no equivalent to this. No automation layer. No integrations. No way to take action on the data it surfaces.
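The score-gated step in the content pipeline described earlier in this section is simple to express in code. The sketch below is not the Agent Builder's actual node logic. The `Draft` class, the score names, and the 0.7 threshold are hypothetical, chosen only to show the routing rule: publish when every score clears the threshold, otherwise send the draft back with the gaps listed.

```python
from dataclasses import dataclass

@dataclass
class Draft:
    title: str
    scores: dict  # hypothetical quality scores between 0 and 1, keyed by dimension

def route(draft: Draft, threshold: float = 0.7) -> tuple:
    """Return ("publish", []) or ("return_to_writer", [dimensions below threshold])."""
    gaps = sorted(name for name, score in draft.scores.items() if score < threshold)
    if gaps:
        return ("return_to_writer", gaps)
    return ("publish", [])

ready = Draft("Pricing comparison", {"structure": 0.9, "clarity": 0.8, "aeo": 0.75})
needs_work = Draft("Launch recap", {"structure": 0.9, "clarity": 0.5, "aeo": 0.6})

print(route(ready))       # ('publish', [])
print(route(needs_work))  # ('return_to_writer', ['aeo', 'clarity'])
```

In a real workflow the two outcomes would trigger different downstream steps, such as a CMS publish call or a message to the writer.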

Monitor Brand Perception Across AI Engines

Analyze AI includes a Perception Map that visualizes how AI engines position your brand on key attributes relative to competitors. This is useful for brand and comms teams who need to understand not just whether they’re mentioned, but how they’re described.

The platform also sends Weekly Email Digests that summarize your AI visibility changes, top-performing engines, competitive shifts, and recommended actions. Your leadership team stays informed without logging into a dashboard.

How Analyze AI Compares to LLMrefs

| Feature | LLMrefs ($79/month) | Analyze AI |
|---|---|---|
| AI engine coverage | 11+ engines | ChatGPT, Perplexity, Claude, Gemini, Copilot, Google AI Mode |
| Prompt tracking | Keyword-based, 50 keyword cap (Pro) | Prompt-level, with suggestions and ad hoc search |
| AI traffic analytics | No | Yes, with GA4 integration, landing pages, conversions |
| Citation analytics | Basic domain tracking | Full source audit with content type breakdown |
| Sentiment monitoring | No | Yes, per prompt and per engine |
| Content writer | No | Full pipeline from idea to published draft |
| Content optimizer | No | AEO scoring with editorial comments |
| Agent Builder | No | 180+ nodes, 34 data recipes, GA4/GSC/HubSpot/Semrush integrations |
| Competitor intelligence | Manual competitor tracking | Auto-suggested competitors with mention frequency |
| Perception mapping | No | Visual brand positioning across AI engines |
| Weekly digests | Weekly trend reports | Actionable email summaries for leadership |
| Free tools | No | Keyword Generator, SERP Checker, Keyword Difficulty Checker, Website Traffic Checker, and more |

The Bottom Line

LLMrefs is a solid entry point for teams that just want to monitor whether their brand appears in AI-generated answers. At $79/month with unlimited seats, it’s affordable and easy to set up. The broad engine coverage (11+ AI platforms) is a genuine strength.

But tracking mentions is only the first step. If you need to know whether those mentions drive traffic, which pages convert AI visitors, what content to create next, or how to automate your response to competitive shifts, LLMrefs doesn’t have answers for you.

Analyze AI connects the full chain. It tracks visibility, attributes traffic, scores content, writes and optimizes pages, automates workflows across your entire GTM stack, and gives you a clear picture of how AI engines perceive your brand. It is not a monitoring tool. It is the operating system for teams that treat AI search as another organic channel alongside traditional SEO, not a replacement for it.

If your budget allows only a basic tracker, LLMrefs works. If you need a platform that moves you from “we’re being mentioned” to “here’s what we’re doing about it,” start with Analyze AI.

Ernest

Ibrahim
