
In this article, you’ll learn what the Flesch Reading Ease score actually measures, what two extensive data studies on rankings and readability found, and whether AI search engines treat readability any differently than Google does. You’ll also get a clear answer on when to pay attention to your score, when to ignore it, and what to do instead if you want pages that earn organic traffic from both Google and AI engines like ChatGPT, Perplexity, and Gemini.


What is Flesch Reading Ease?

Flesch Reading Ease (FRE) is a formula that scores how easy a piece of text is to read. The scale runs from 0 to 100. Higher numbers mean easier reading.

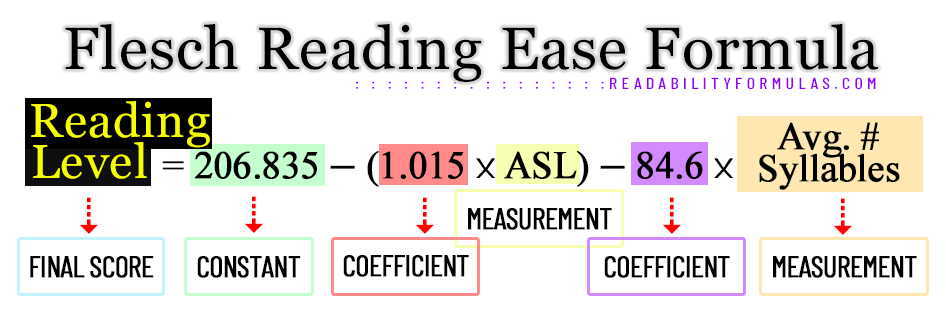

Rudolf Flesch developed the formula in the 1940s to help writers and editors check whether their work was understandable to the average adult. It uses two inputs, average sentence length and average syllables per word. That is all.

Here is the formula.

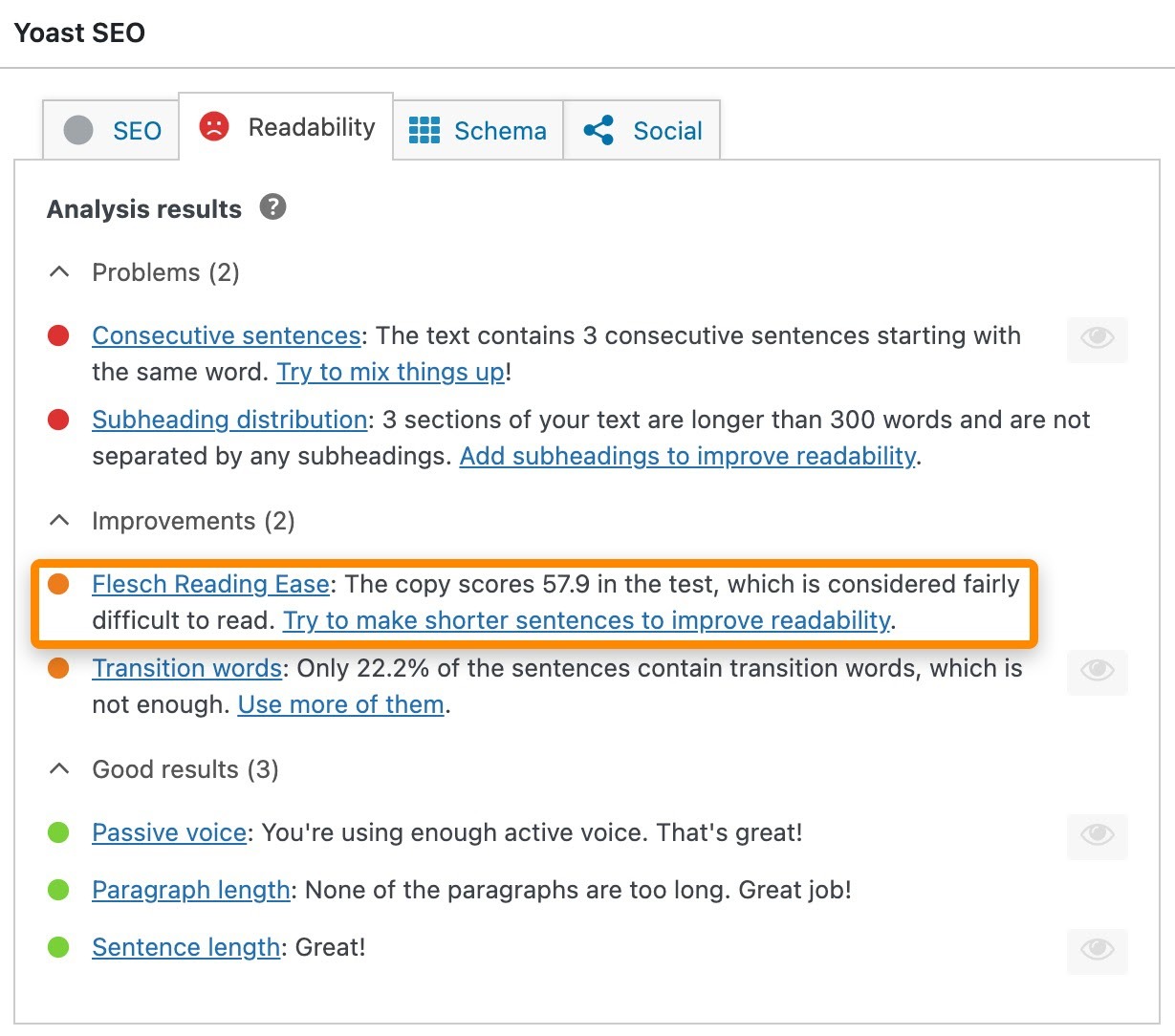

You will probably never need to calculate this by hand. Tools like Yoast, Hemingway, Grammarly, and Microsoft Word do it for you. The point worth remembering is that only two variables shape the score. Longer sentences and longer words push the number down. Shorter sentences and shorter words push it up.
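For readers who want to see the arithmetic, here is a minimal Python sketch of the standard formula (206.835, minus 1.015 times average words per sentence, minus 84.6 times average syllables per word). The function names and the vowel-group syllable counter are assumptions made for illustration. Real tools use their own tokenizers and syllable rules, so their scores will differ somewhat from this one.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate: count groups of consecutive vowels.

    This is a heuristic, not a dictionary lookup, so it will miscount some words.
    """
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    # Treat a trailing "e" as silent ("make" -> 1) but keep "-le" endings ("table" -> 2).
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text: str) -> float:
    """Apply the Flesch Reading Ease formula to a block of plain text."""
    # Naive sentence split on ., !, ? (abbreviations like "e.g." will inflate the count).
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z'’]+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")

    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word
```

The raw formula is not clamped, so very simple text can score above 100 and very dense text can score below 0.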

Here is how to read the result.

| Score | Reading level | Description |
|---|---|---|
| 90–100 | 5th grade | Very easy. An average 11-year-old can read it. |
| 80–90 | 6th grade | Easy. Conversational English. |
| 70–80 | 7th grade | Fairly easy. |
| 60–70 | 8th–9th grade | Plain English. |
| 50–60 | 10th–12th grade | Fairly difficult. |
| 30–50 | College | Difficult. |
| 10–30 | College graduate | Very difficult. |
| 0–10 | Professional | Extremely difficult. |
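The bands above can also be written as a small lookup, which is handy when scoring many pages at once. This is a sketch: the names `FRE_BANDS` and `reading_level` are hypothetical. Because the published ranges share their boundaries (80 appears in both 70–80 and 80–90), the code assumes a boundary value belongs to the easier band.

```python
# (lower bound, reading level, description), ordered from easiest to hardest.
FRE_BANDS = [
    (90, "5th grade", "Very easy"),
    (80, "6th grade", "Easy"),
    (70, "7th grade", "Fairly easy"),
    (60, "8th–9th grade", "Plain English"),
    (50, "10th–12th grade", "Fairly difficult"),
    (30, "College", "Difficult"),
    (10, "College graduate", "Very difficult"),
    (0, "Professional", "Extremely difficult"),
]


def reading_level(score: float) -> tuple[str, str]:
    """Map an FRE score to the (reading level, description) band in the table."""
    # Clamp first, since the raw formula can fall outside 0-100.
    clamped = max(0.0, min(100.0, score))
    for lower, level, description in FRE_BANDS:
        if clamped >= lower:
            return level, description
    # Not reached in practice: the last band starts at 0.
    return FRE_BANDS[-1][1], FRE_BANDS[-1][2]
```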

That sets up the question every SEO has. Does this score actually help or hurt your rankings?

Does Flesch Reading Ease affect Google rankings?

The short answer is no.

In 2018, John Mueller from Google said he was not aware of any algorithms that use basic readability scores. Since FRE looks only at sentence length and word length, it would be a poor signal for an algorithm trying to judge content quality.

Two large data studies confirm this.

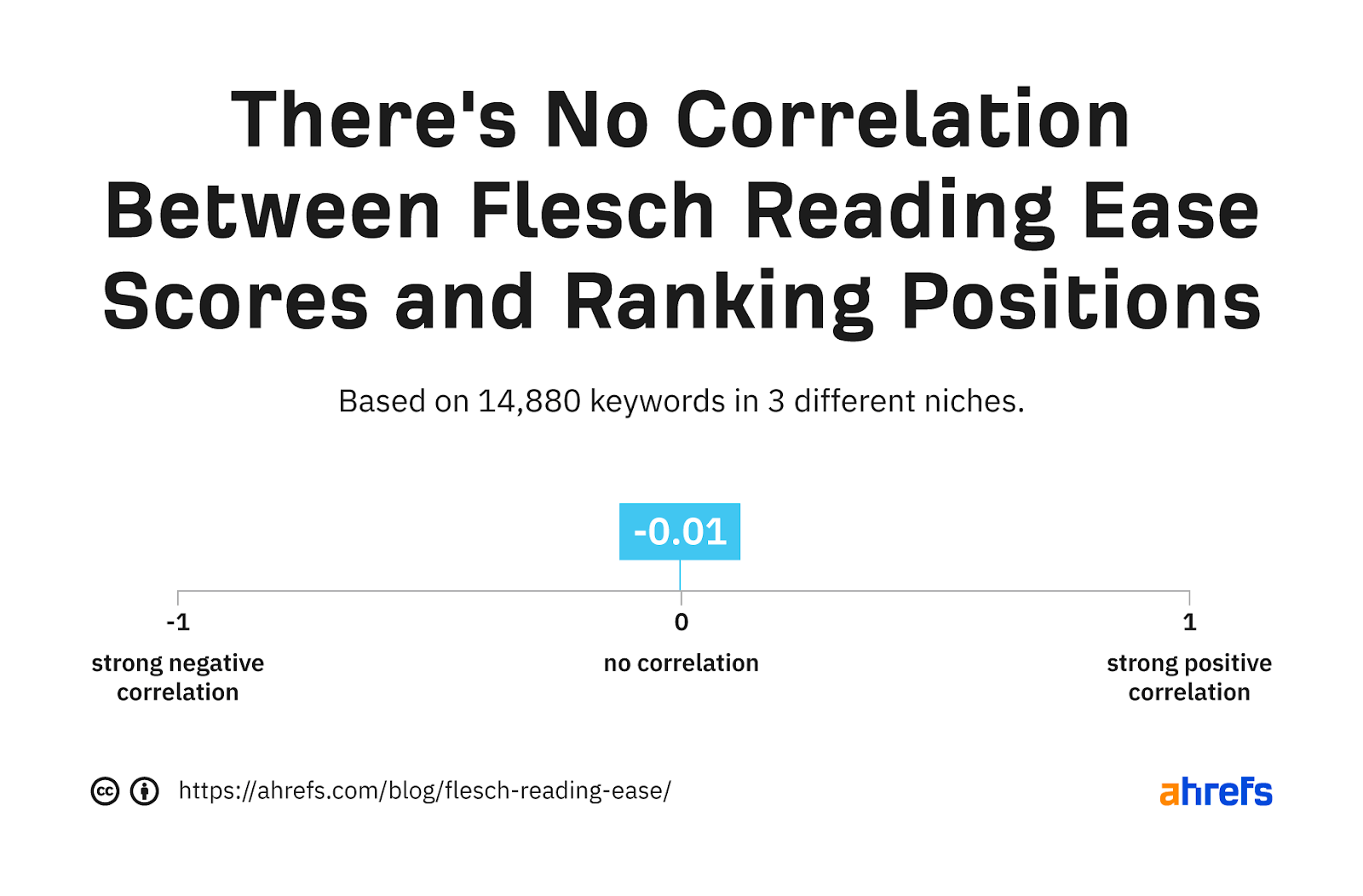

Study 1: Ahrefs (15,000 keywords). Ahrefs analyzed 15,000 keywords across food, marketing, and engineering topics. They found virtually zero correlation between Flesch Reading Ease scores and ranking position.

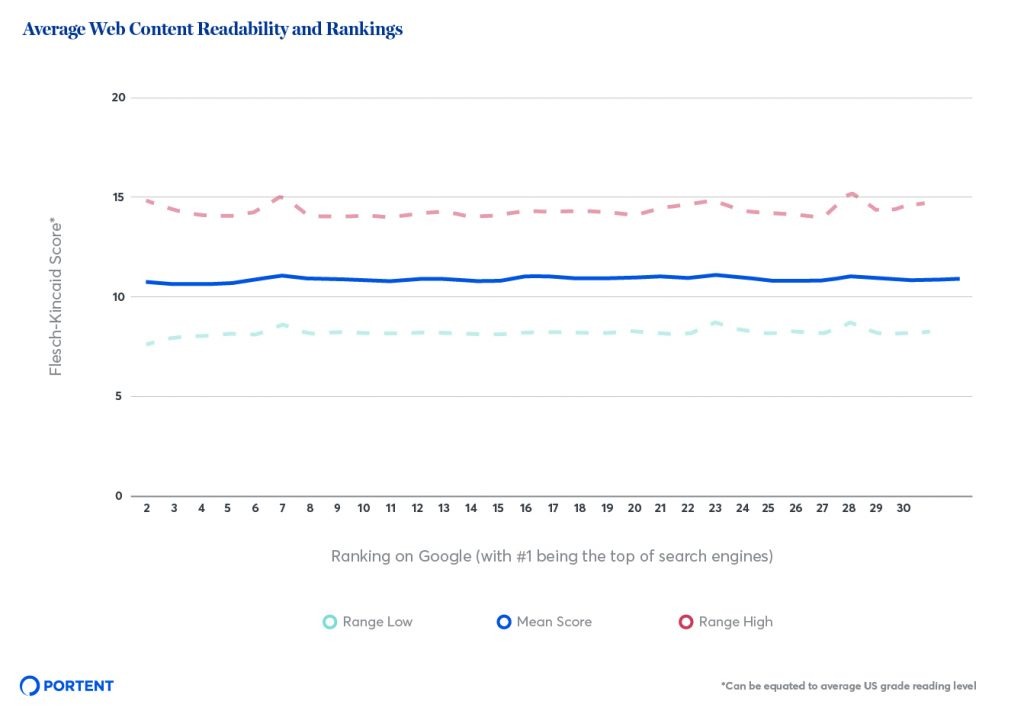

Study 2: Portent (5.8 million pages). Portent took the question further. They crawled 5,813,565 pages across 30,000 search queries and scored every page using both FRE and Flesch-Kincaid Grade Level. Two findings stand out.

First, the average FRE score for pages ranking in the top 30 was between 51.8 and 53.1. That maps to a 10th to 12th grade reading level.

Second, ranking position made no difference. Pages at position 1 had roughly the same average score as pages at position 30, and pages at position 30 had roughly the same score as pages at position 100.

Both studies arrive at the same conclusion. FRE is not a Google ranking factor.

That said, readability still matters for outcomes that influence rankings indirectly. If a page is hard to read, fewer people finish it. Fewer people link to it. Fewer people share it. Backlinks remain a key Google ranking factor, and content nobody finishes earns very few of them.

Readability influences ranking signals indirectly, by shaping whether people finish, link to, and share a page. It is not an input to the ranking algorithm. That distinction is the entire reason this article exists.

Does Flesch Reading Ease affect AI search visibility?

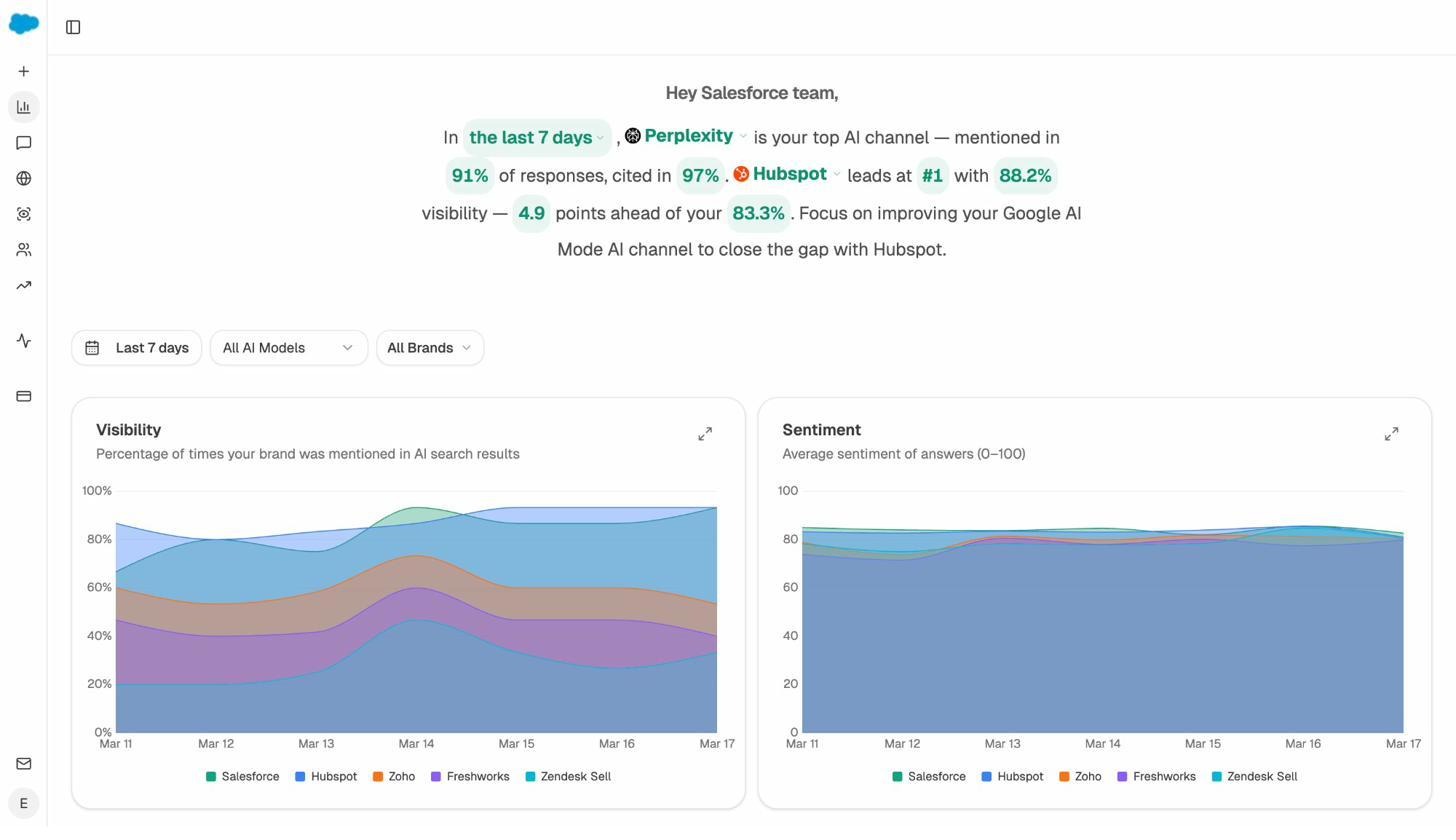

This is the question every SEO is asking now, and one Ahrefs’ original article never answered. Search is no longer a single channel. People find brands through ChatGPT, Perplexity, Gemini, and Copilot, and the rules for showing up in those answers differ from Google’s.

The short answer is the same. FRE is not a direct factor for AI engines either. But the indirect link is stronger than it is on Google, and worth understanding.

AI engines do not rank pages the way Google does. They use retrieval-augmented generation (RAG), which means they pull passages from a search index and use a language model to summarize and cite them. The unit of analysis is the passage, not the page.

That has three consequences for readability.

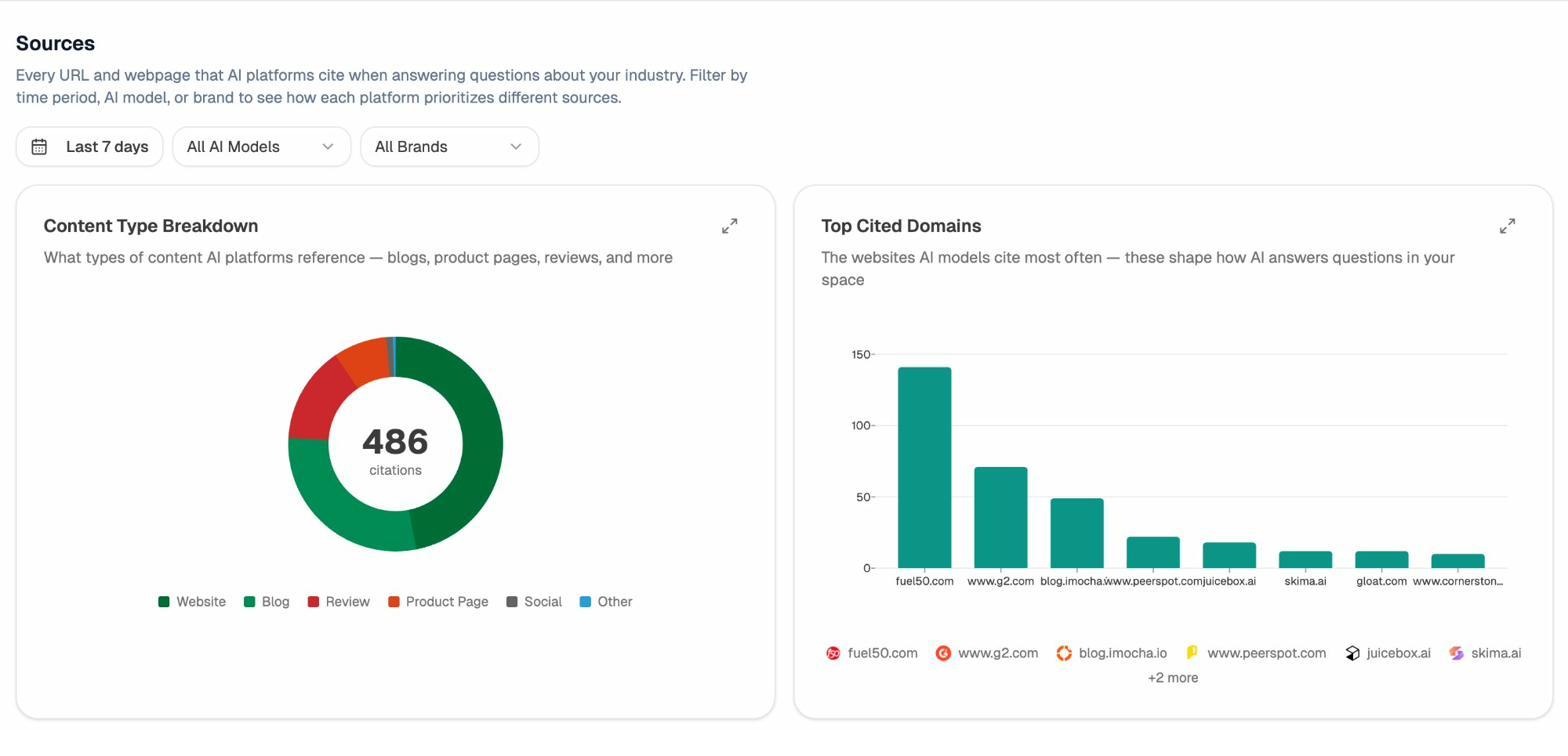

1. Sentences that are hard to lift do not get cited. A passage with a 35-word sentence and three subordinate clauses is harder for a model to extract cleanly. Shorter, declarative sentences are quoted more often. Perplexity averages 21.87 citations per response according to a 118,000-answer analysis from Whitehat SEO, which means it is constantly looking for clean, quotable passages.

2. Citation patterns reward clarity, not simplicity. ChatGPT pulls 47.9% of its top citations from Wikipedia, which writes at roughly a 10th to 11th grade level on most topics. That is the same range Portent found in Google’s top 30. Clarity wins. Dumbing down does not.

3. Each engine has its own bias. Only 11% of cited domains overlap across ChatGPT, Perplexity, Google AI Mode, and Claude. Optimizing your prose for one engine does not guarantee citations on the others. The right approach is to write content any engine can extract, which is the same approach that already works on Google.
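One practical way to act on the first point is to flag sentences that are probably too long to quote cleanly. The sketch below is a rough check, not a model of how any AI engine extracts passages. The 30-word default threshold and the function name are assumptions you should tune for your own content.

```python
import re


def long_sentences(text: str, max_words: int = 30) -> list[tuple[int, str]]:
    """Return (word_count, sentence) for every sentence longer than max_words."""
    # Split after sentence-ending punctuation followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        word_count = len(sentence.split())
        if word_count > max_words:
            flagged.append((word_count, sentence))
    return flagged
```

Each flagged sentence is a candidate for splitting into two or three declarative statements.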

This is also why we say at Analyze AI that SEO is not dead. Search engine visibility and AI visibility are now two organic channels that share most of their underlying signals, namely clarity, authority, and structure. The brands that win are not the ones chasing FRE scores. They are the ones writing clearly enough that both Google and AI engines can use the work. We cover the broader strategy in our guide to the 4 pillars of an effective SEO strategy for AI search.

If you want to know which AI engines cite you, which prompts trigger citations, and which competitors win the prompts you do not, the AI Visibility Tracking feature in Analyze AI surfaces that data per engine.

Why average FRE scores vary so much by topic

If you check the FRE scores of the top 10 Google results for “pancake recipe” and the top 10 results for “kubernetes service mesh,” you will find very different averages. That is not because food bloggers are better writers than engineering bloggers. It is because the two topics demand different vocabulary.

Ahrefs found this pattern in their study.

| Topic | Avg FRE score (top 10) | Reading level |
|---|---|---|
| Food | 69.7 | Fairly easy |
| Marketing | 60.2 | Plain English |
| Engineering | 49.6 | Difficult |

A pancake recipe does not need polysyllables. A piece on Kubernetes does. Forcing the same target score across both would either bloat the recipe or strip the engineering article of the precision its readers need.

The takeaway is simple. There is no universal FRE score to chase. Look at what is already ranking for your keyword. That is the realistic floor and ceiling for your topic. Our SERP checker shows you the exact pages currently ranking, which you can then run through any FRE tool to set your benchmark.
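Once you have FRE scores for the pages already ranking, the comparison itself is simple arithmetic. This sketch assumes you have collected those scores yourself. The function names and the three labels it returns are hypothetical.

```python
from statistics import mean


def benchmark_range(competitor_scores: list[float]) -> dict[str, float]:
    """Summarize the FRE scores of the pages currently ranking for a keyword."""
    if not competitor_scores:
        raise ValueError("need at least one competitor score")
    return {
        "floor": min(competitor_scores),
        "ceiling": max(competitor_scores),
        "average": mean(competitor_scores),
    }


def compare_to_benchmark(draft_score: float, competitor_scores: list[float]) -> str:
    """Say whether a draft sits below, within, or above the ranking pages' range."""
    bench = benchmark_range(competitor_scores)
    if draft_score < bench["floor"]:
        return "below range"
    if draft_score > bench["ceiling"]:
        return "above range"
    return "within range"
```

A draft that lands "below range" is the case worth investigating. One that lands "within range" needs no score-driven changes.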

What matters more than your Flesch Reading Ease score

If FRE is not a ranking factor and AI engines do not check it directly, where should the attention go?

Three things move the needle far more than syllable counts.

1. Argument-level clarity

Does each section state a claim, support it, and move on? Or does it meander? AI engines and human readers both reward content that answers the question without warm-up. Lead each paragraph with the conclusion, then add the evidence. The Animalz BLUF principle (bottom line up front) applies as much to a Perplexity citation as to a human skim.

2. Structural signals

Headings, lists, tables, and short paragraphs help engines parse what your content is about. They also help readers scan. A page with one wall of text scores poorly on every reader-facing metric, regardless of FRE.

3. Source coverage and entity completeness

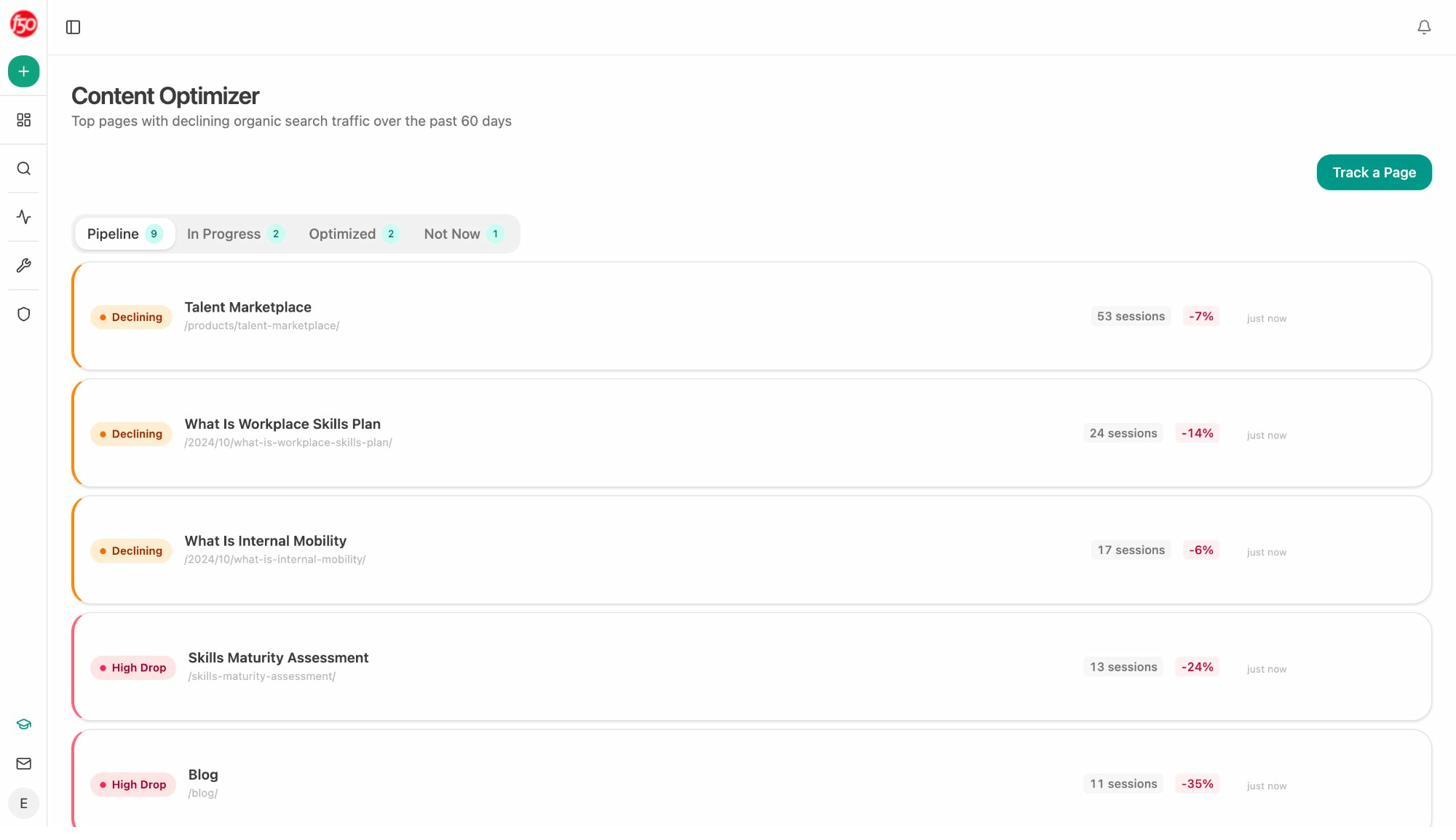

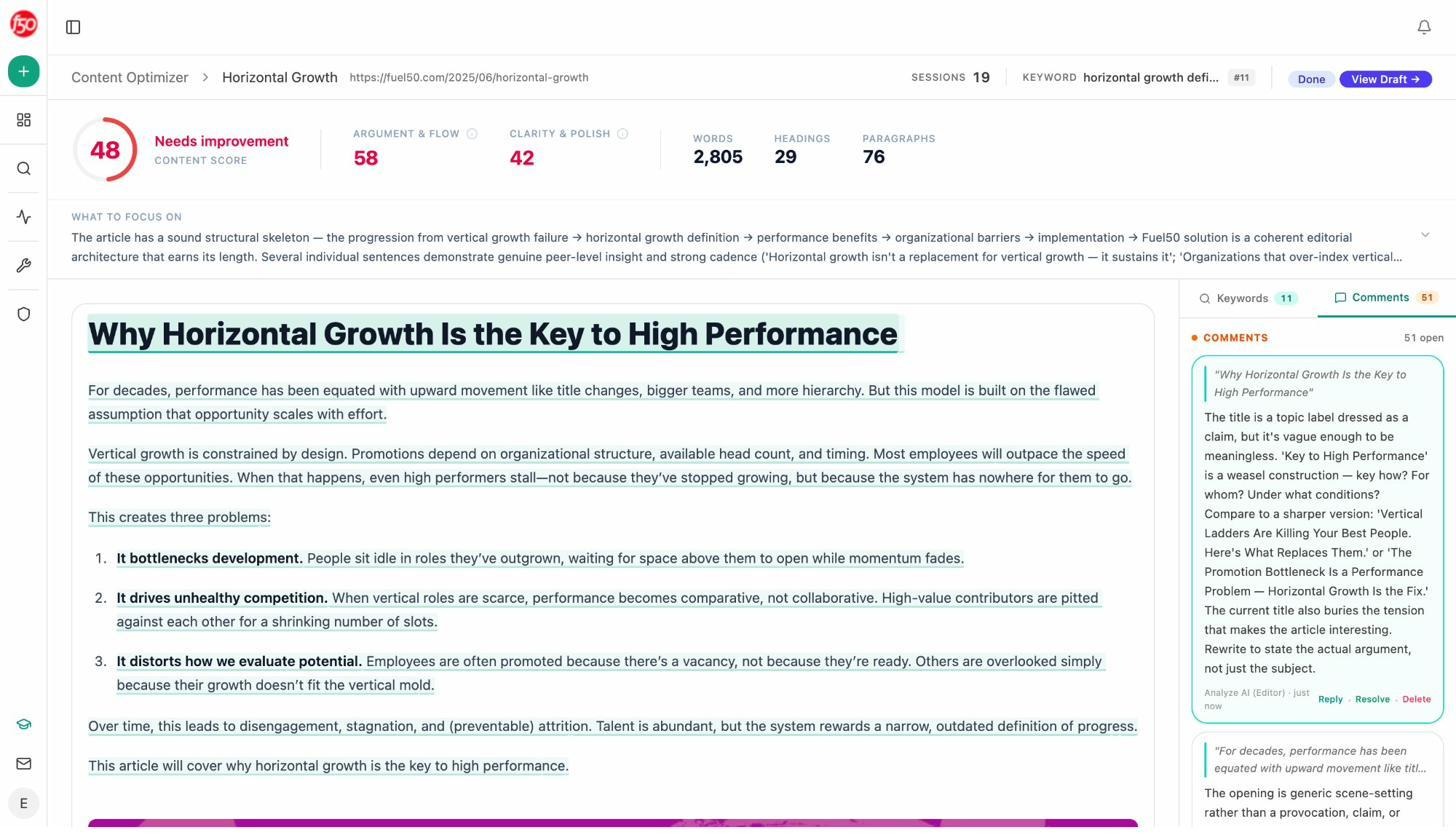

AI engines cite content that names the relevant entities, includes the right comparisons, and links to the same authoritative sources their other citations link to. The Analyze AI Content Optimizer checks each draft against the citations and entities competing pages use, and surfaces what is missing.

Once a page is published, the Sources view inside Citation Analytics shows which domains AI engines actually cite for your topics. If your pages keep losing to the same five competitors, you can see which sources those competitors cite that you do not, and earn placements on those sources.

These are signals AI engines use directly. FRE is not one of them.

When you should care about readability

Readability still matters in cases where the score is a proxy for the reader’s experience. Three cases stand out.

1. High-stress informational queries. If someone is searching “how to fix a leaky faucet,” they are not sitting back to enjoy your prose. They want the steps. A page with a high FRE (70+) reduces friction. A page with a low FRE creates it.

2. Mainstream consumer audiences. If you are writing for a general audience, your readers represent the full range of US literacy levels. According to the National Center for Education Statistics, about half of US adults read below the 9th grade level. That is a realistic ceiling for consumer-facing content.

3. Mobile-first content. On a 5-inch screen, long sentences look longer. Three-line paragraphs become walls. Higher FRE scores correlate with better mobile readability simply because shorter constructions render better on small viewports.

Outside those cases, the vocabulary you need is the vocabulary you need. A finance article cannot avoid “amortization.” A medical guide cannot replace “bilateral” with “both sides” without losing precision. Forcing a higher FRE in those contexts trades clarity for a number.

Six writing habits that improve readability without chasing a score

If your FRE score is well below the average for your topic, the fix is not to run a thesaurus over the page. It is to clean up the writing. These six habits move the score in the right direction as a side effect of better writing.

1. Lead each paragraph with the answer

Sequential writing mirrors how you think. Reverse it. Put the conclusion first. Add the evidence after. Readers and AI engines both pull what they need from the top of each paragraph, so giving them the answer first improves both citations and time on page.

2. Cut sentences that do not advance the argument

Read each sentence and ask what work it does. If it just restates the previous one, cut it. If it is a hedge, cut it. Tight articles that respect the reader’s time outperform longer articles that do not, both in dwell time and in AI extraction.

3. Replace abstract nouns with verbs

“Implementation of the optimization” becomes “we optimized.” Sentences built on verbs are shorter, score higher on FRE, and read better. Passive voice bloats sentences in the same way: Microsoft Word’s grammar check flags it, and Hemingway highlights it in green. Use both.

4. Read the draft aloud

Anything you stumble over is a sentence the reader will stumble over. Anywhere you run out of breath is a sentence that needs to be split. This catches issues no tool will flag.

5. Use no more than one technical term per paragraph

Specialist words slow readers down. They are not banned, but they need room. If a paragraph contains “cohort,” “retention curve,” and “decay coefficient,” the reader is doing extra work. Spread them across paragraphs, or define the one that needs defining.
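This habit can be checked mechanically if you keep a list of your own specialist terms. The sketch below assumes paragraphs are separated by blank lines and that you supply the glossary yourself. The function names and the `max_terms` default are hypothetical.

```python
import re


def technical_terms_per_paragraph(text: str, glossary: set[str]) -> list[list[str]]:
    """For each paragraph, list the glossary terms it contains (case-insensitive)."""
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    results = []
    for paragraph in paragraphs:
        lowered = paragraph.lower()
        found = sorted(
            term
            for term in glossary
            # Word boundaries stop "cohort" from matching inside a longer word.
            if re.search(r"\b" + re.escape(term.lower()) + r"\b", lowered)
        )
        results.append(found)
    return results


def crowded_paragraphs(text: str, glossary: set[str], max_terms: int = 1) -> list[int]:
    """Return the 0-based indexes of paragraphs that use more than max_terms terms."""
    per_paragraph = technical_terms_per_paragraph(text, glossary)
    return [i for i, terms in enumerate(per_paragraph) if len(terms) > max_terms]
```

Treat a flagged paragraph as a prompt to spread the terms out or define one of them, not as an error.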

6. Use transitions that earn their place

Transition words guide the reader through the argument when they connect real ideas. They become filler when they do not. Our 200+ transition words guide covers the ones that pull weight and the ones to drop.

For more on the writing process end-to-end, see our step-by-step guide to writing an article.

Tools to check your Flesch Reading Ease score

Several free tools calculate FRE on demand. They differ in scope and feedback.

| Tool | What it does | Best for |
|---|---|---|
| Microsoft Word | Built-in readability check via the spelling and grammar tool | Writers who already draft in Word |
| Hemingway | Highlights long sentences, passive voice, and adverbs as you write | Drafting and tightening prose |
| Yoast | WordPress plugin with readability and SEO checks | WordPress publishers |
| Grammarly | Browser app with grammar, tone, and readability scoring | Editing across platforms |
| Analyze AI Content Optimizer | Combines clarity checks with AI search citation gaps and entity coverage | Writing content that ranks in both Google and AI engines |

For a wider list of writing software, see our roundup of the 14 best writing tools and the 7 content editing tools recommended by our editors.

If you want to see what Analyze AI’s optimizer flags on a real draft, the screenshot below shows the editor view, with comments left in line by the AI strategist for the writer.

The point of any of these tools is not the score. It is the diagnostic. A low score tells you where readers will struggle, and that is the part worth acting on.

Notes about the data

The 15,000-keyword figure comes from the Ahrefs study published in 2021. They sampled food, marketing, and engineering domains, exported keywords from their Site Explorer where each domain ranked in the top 10 in the US, and excluded brand keywords. Some pages (19% in engineering, 9% in marketing, 10% in food) were excluded because the FRE could not be reliably calculated.

The 5,813,565-page figure comes from the Portent study, also published in 2021. Portent crawled the top 100 organic results for 30,000 desktop queries, ignored anchor text and HTML-heavy pages, and ran the rest through a Flesch-Kincaid Grade Level API. They reran the same data through the FRE formula for comparison.

The AI citation overlap and per-engine citation counts come from a 118,000-answer analysis run across ChatGPT, Perplexity, Google AI Mode, and Claude, summarized by Whitehat SEO. The ChatGPT-Wikipedia citation share is from a separate analysis of 680 million citations published by Profound.

Final word

Flesch Reading Ease is a useful diagnostic. It is not a Google ranking signal, and AI engines do not check it either. The score is a proxy for clarity, and clarity is what actually moves rankings, citations, and conversions.

Write for the reader who searched the keyword. Match the vocabulary they expect. Cut what does not advance the argument. The score will land where it lands.

If you want to see whether the work is paying off across both Google and AI engines, Analyze AI tracks both, in one place. The data tells you which pages earn AI citations, which prompts you win, and which ones a competitor took before you got there.

Ernest

Ibrahim