Rankscale does one thing well. It gives you wide engine coverage at a low entry price. For €20/month, you get prompt tracking across 17+ AI engines using a credit-based model. That’s a strong starting point for teams piloting AI visibility tracking for the first time.

But the credit model gets expensive fast when you scale. And tracking alone does not tell you what to fix, which pages to rewrite, or whether that visibility is generating pipeline. If you are asking those questions, you need a different class of tool.

In this article, you’ll get a hands-on breakdown of seven Rankscale AI alternatives, based on what happened when we ran thousands of prompts through each platform. You’ll see which tools just count mentions, which ones connect visibility to revenue, and which ones let you actually fix the problems they find. You’ll also learn where Analyze AI fits as the agentic platform for SEO, AEO, content, and GTM ops, and why it handles more than any pure-play GEO tracker.


Quick comparison

|

Tool |

Best for |

Connects to revenue? |

Content tools? |

Agent/automation layer? |

Starting price |

|---|---|---|---|---|---|

|

Full-funnel AI + SEO ops |

Yes (GA4 traffic attribution) |

Writer, Optimizer, Knowledge Base |

Yes (180+ nodes, 34 data recipes) |

||

|

Peec AI |

Prompt-level answer snapshots |

No |

No |

No |

Mid-high |

|

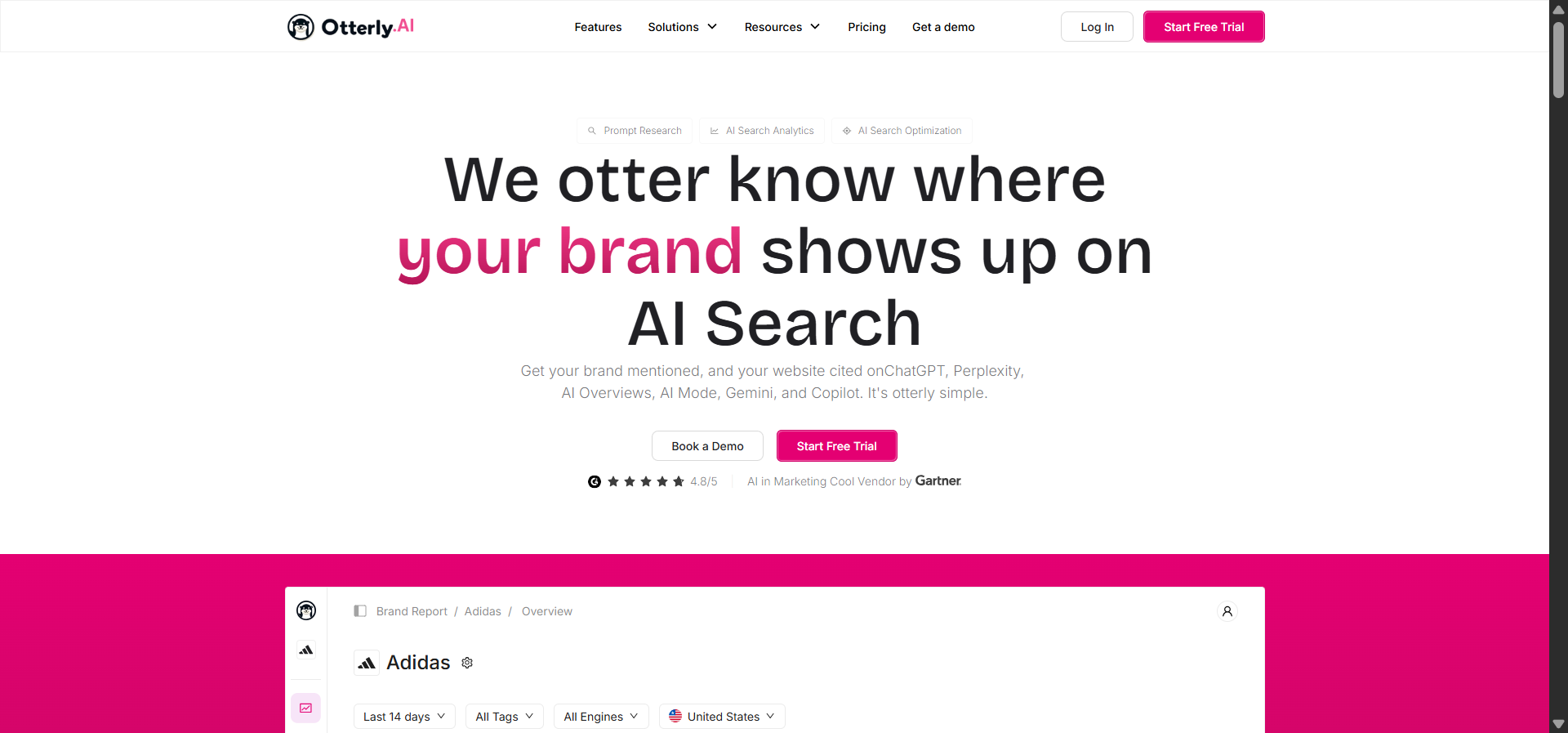

Otterly.ai |

GEO audits + citation tracking |

No |

On-page diagnostics only |

No |

~$29/mo |

|

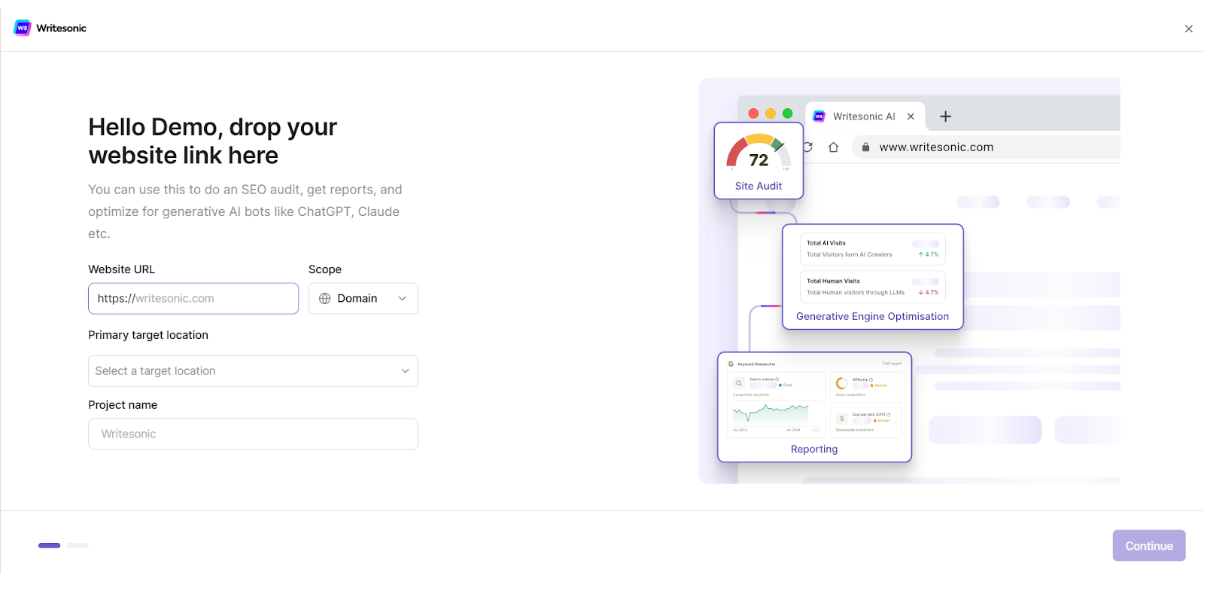

Writesonic GEO |

Tracking + content editing |

Limited |

Built-in editor |

No |

Bundled with Writesonic |

|

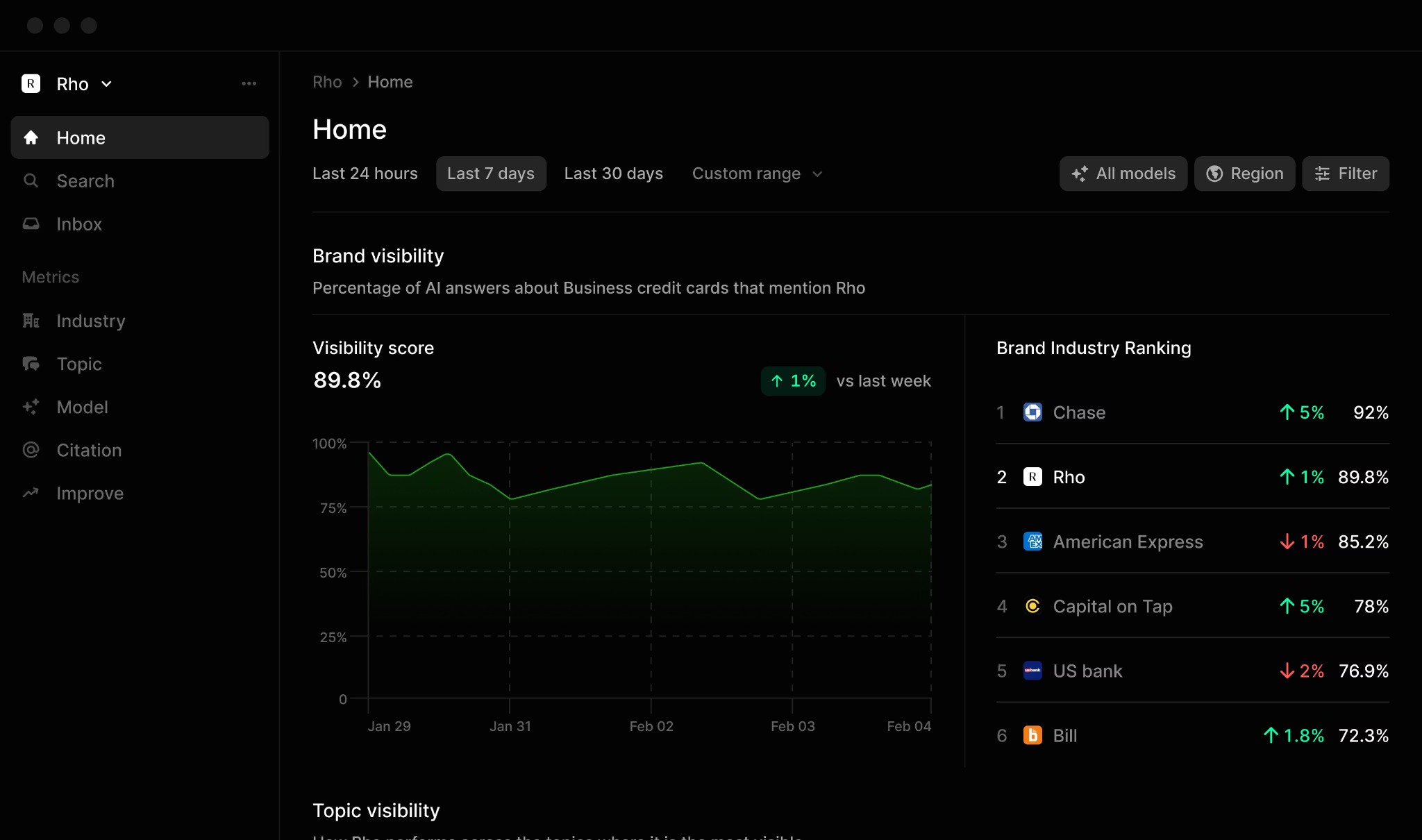

Profound |

Enterprise brand intelligence |

No |

No |

No |

~$499/mo |

|

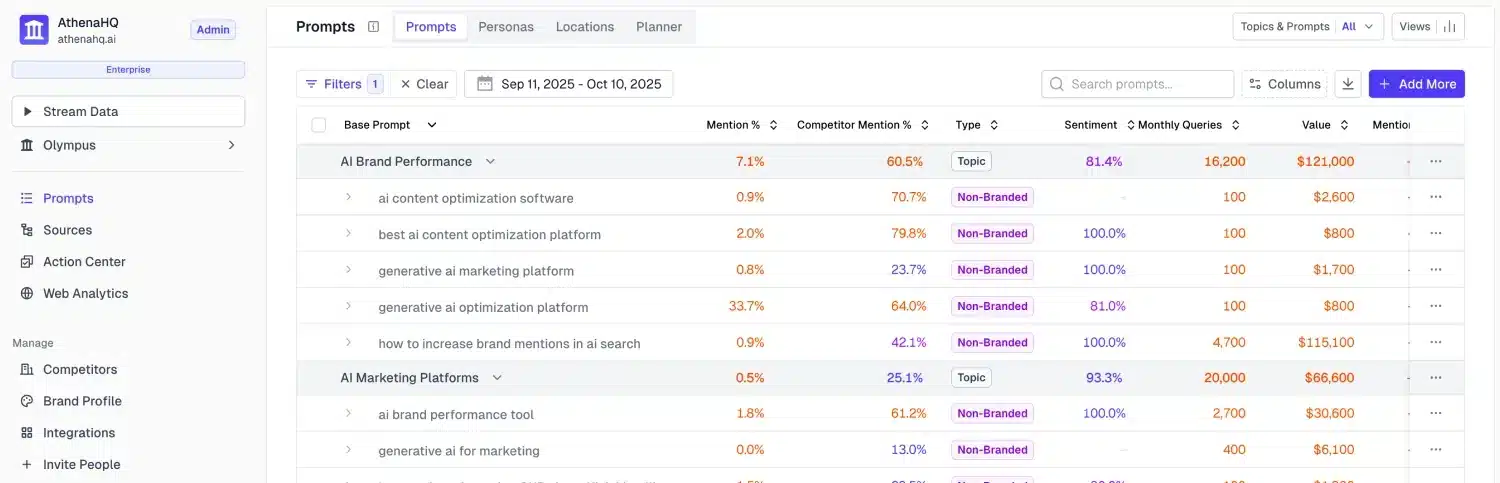

AthenaHQ |

Custom dashboards for agencies |

No |

No |

No |

High (enterprise) |

|

Rankshift |

Lightweight daily tracking |

No |

No |

No |

Moderate |

|

LLMrefs |

Citation-level reference logs |

No |

No |

No |

Freemium |

Analyze AI: the agentic platform for SEO, AEO, content, and GTM ops

Most tools on this list answer one question: “Does our brand show up in AI answers?” That is useful. But it stops at the dashboard.

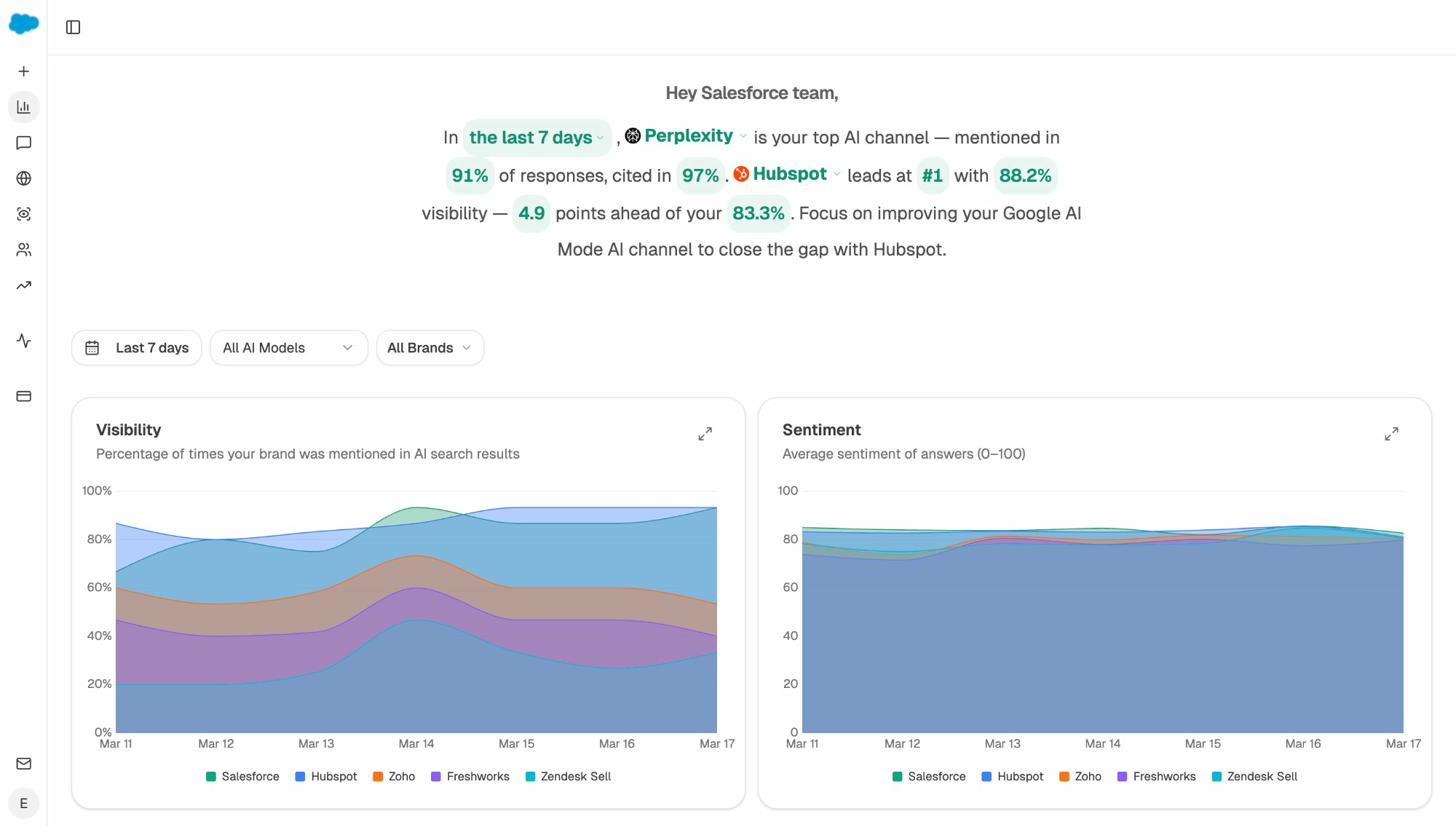

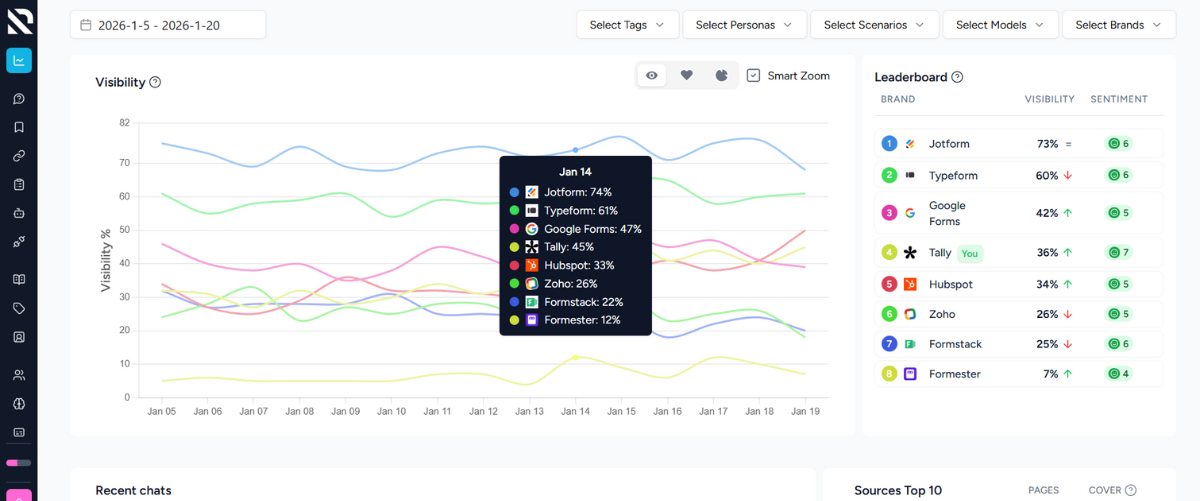

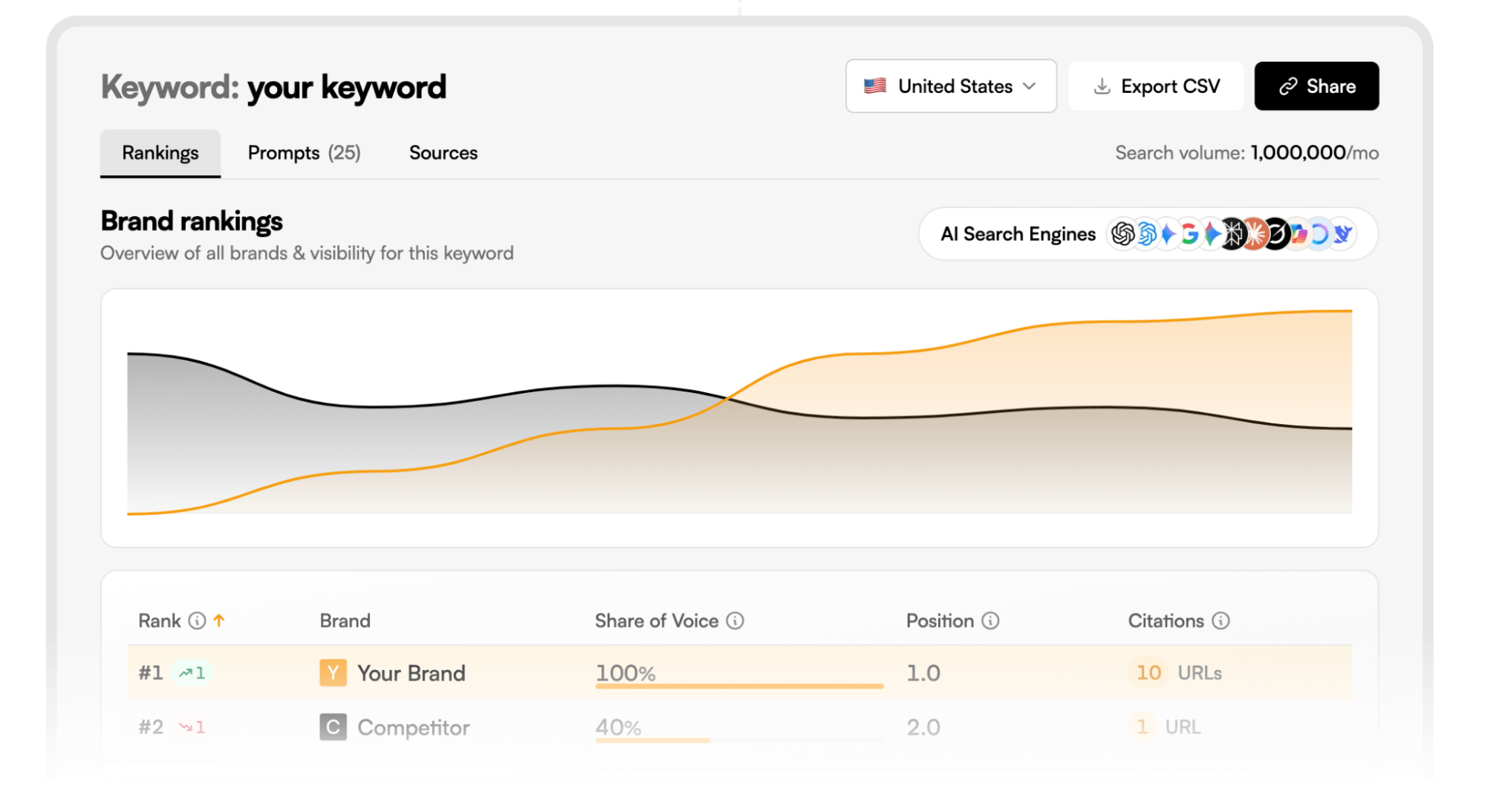

Analyze AI starts where those dashboards end. It tracks your presence across ChatGPT, Perplexity, Gemini, Claude, Copilot, and Google AI Mode. It benchmarks you against competitors under identical prompt conditions. Then it does something none of the other tools on this list do. It connects that data to traffic attribution, shows you which pages AI engines actually send visitors to, and tells you what to fix.

The platform is organized around four pillars: Discover, Monitor, Improve, and Govern.

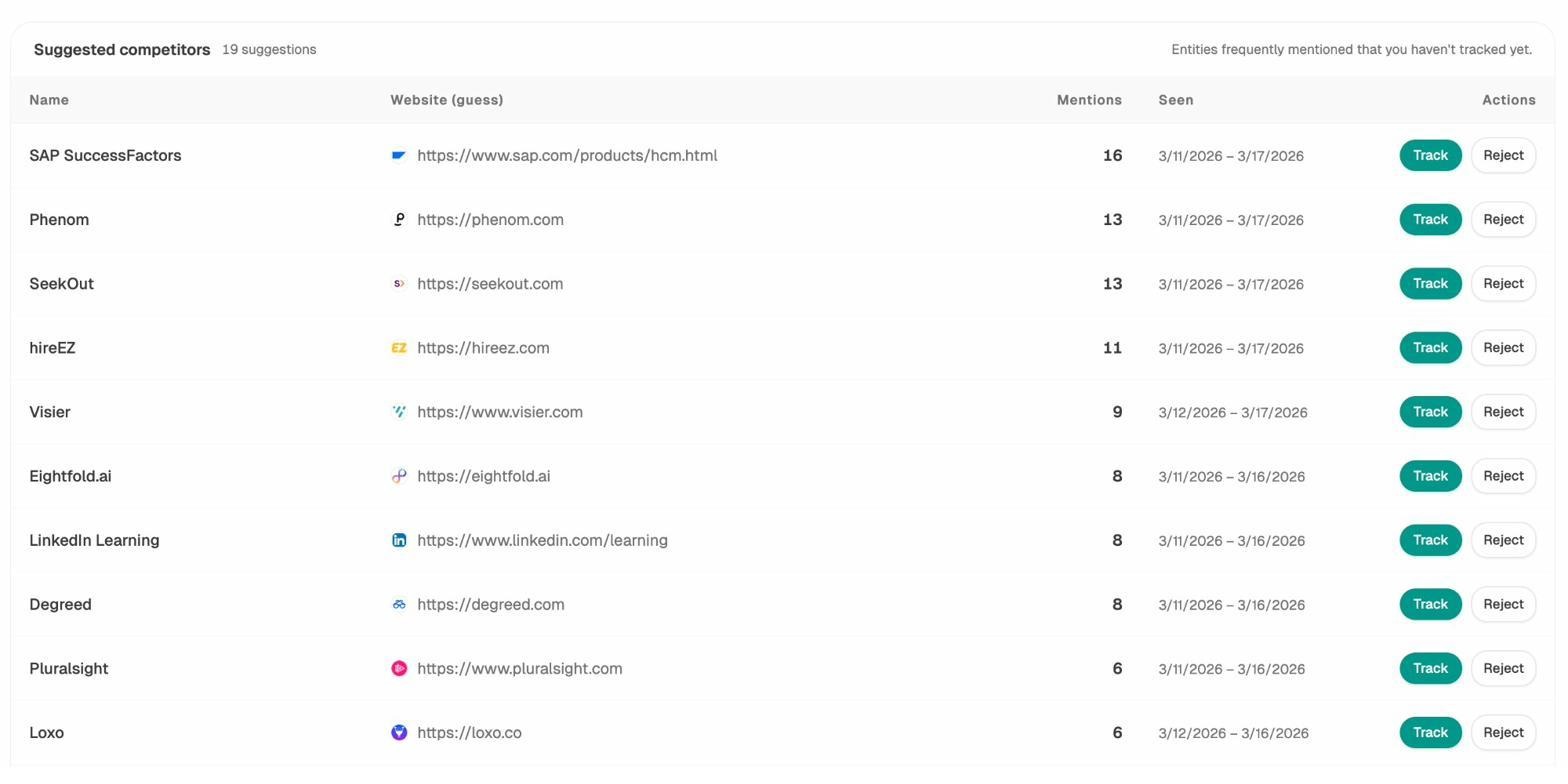

Discover surfaces the prompts your buyers are actually asking AI engines, then maps how each model responds. You see which competitors get cited and which claims they use to anchor credibility. This is not a keyword list. These are the exact questions shaping purchase decisions inside ChatGPT and Perplexity right now. The Prompt Discovery and Competitor Intelligence features work together to show you where you are being out-positioned in moments that influence buying.

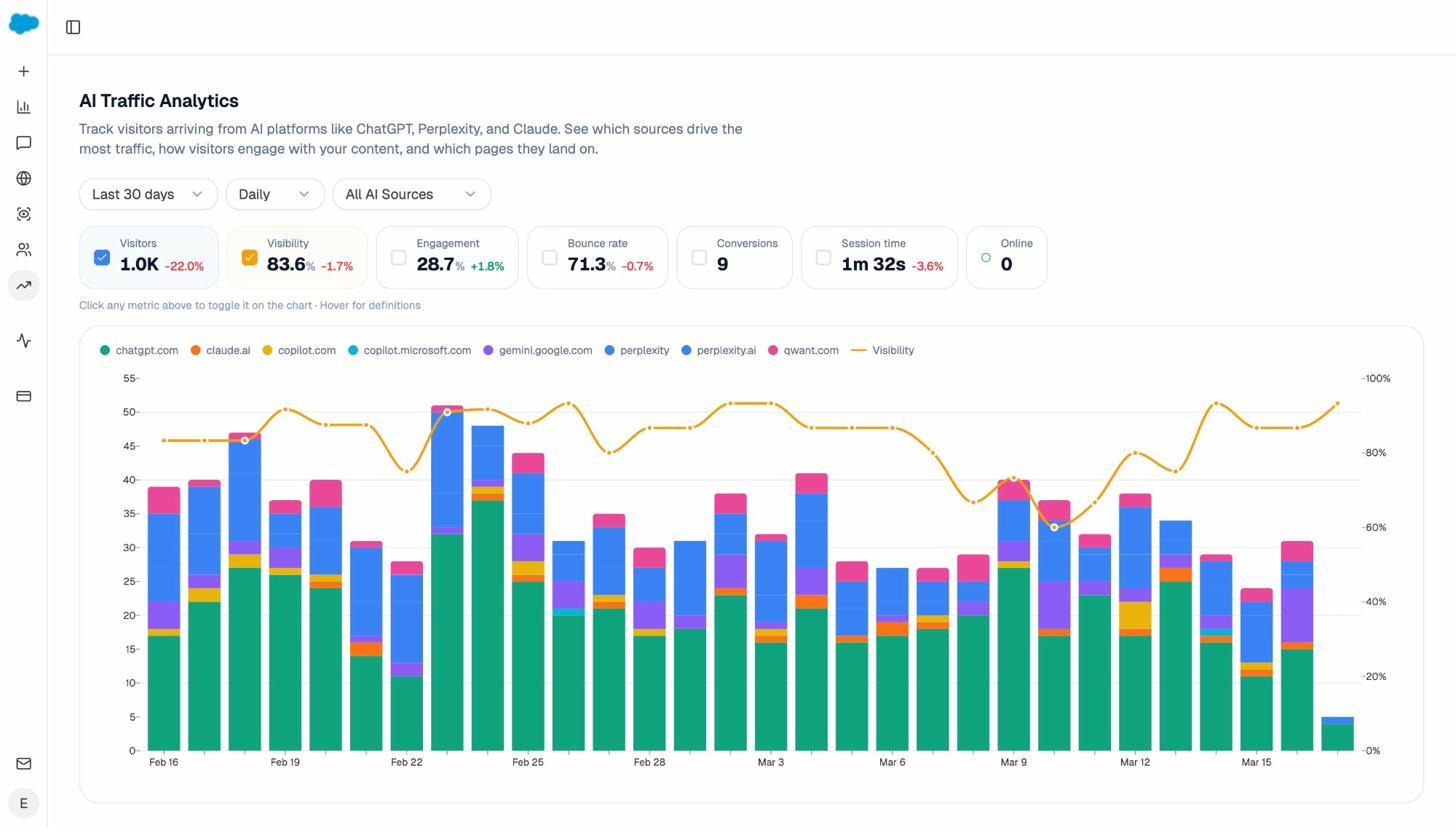

Monitor tracks those prompts daily. You see position trends, mention rates, and sentiment shifts across engines. More importantly, the AI Traffic Analytics module shows which AI engine is sending traffic, which landing page receives it, and whether those visitors convert. You can say “Claude sends us 200 sessions/month to our comparison page, and 8% request a demo.” That is channel-level attribution, not a visibility score.
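To make that kind of statement concrete, here is a minimal Python sketch of the arithmetic behind it. The session rows, the field names, and the `summarize_ai_traffic` helper are all hypothetical; this is not Analyze AI's API or export format. It only shows how sessions and conversions might roll up per engine and landing page.

```python
from collections import defaultdict


def summarize_ai_traffic(sessions):
    """Group sessions by (engine, landing_page) and compute a conversion rate.

    `sessions` is a list of dicts with hypothetical keys:
    "engine", "landing_page", and "converted" (bool).
    """
    totals = defaultdict(lambda: {"sessions": 0, "conversions": 0})
    for s in sessions:
        key = (s["engine"], s["landing_page"])
        totals[key]["sessions"] += 1
        if s["converted"]:
            totals[key]["conversions"] += 1
    # Add the rate once all sessions for a key have been counted.
    return {
        key: {**counts, "conversion_rate": counts["conversions"] / counts["sessions"]}
        for key, counts in totals.items()
    }


# Made-up month of data mirroring the example in the text:
# 200 sessions from Claude to a comparison page, 16 of which request a demo.
sessions = [
    {"engine": "Claude", "landing_page": "/comparison", "converted": i < 16}
    for i in range(200)
]
report = summarize_ai_traffic(sessions)
```

With these made-up rows, the Claude entry works out to 16 demo requests from 200 sessions, which is the 8% figure quoted above.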

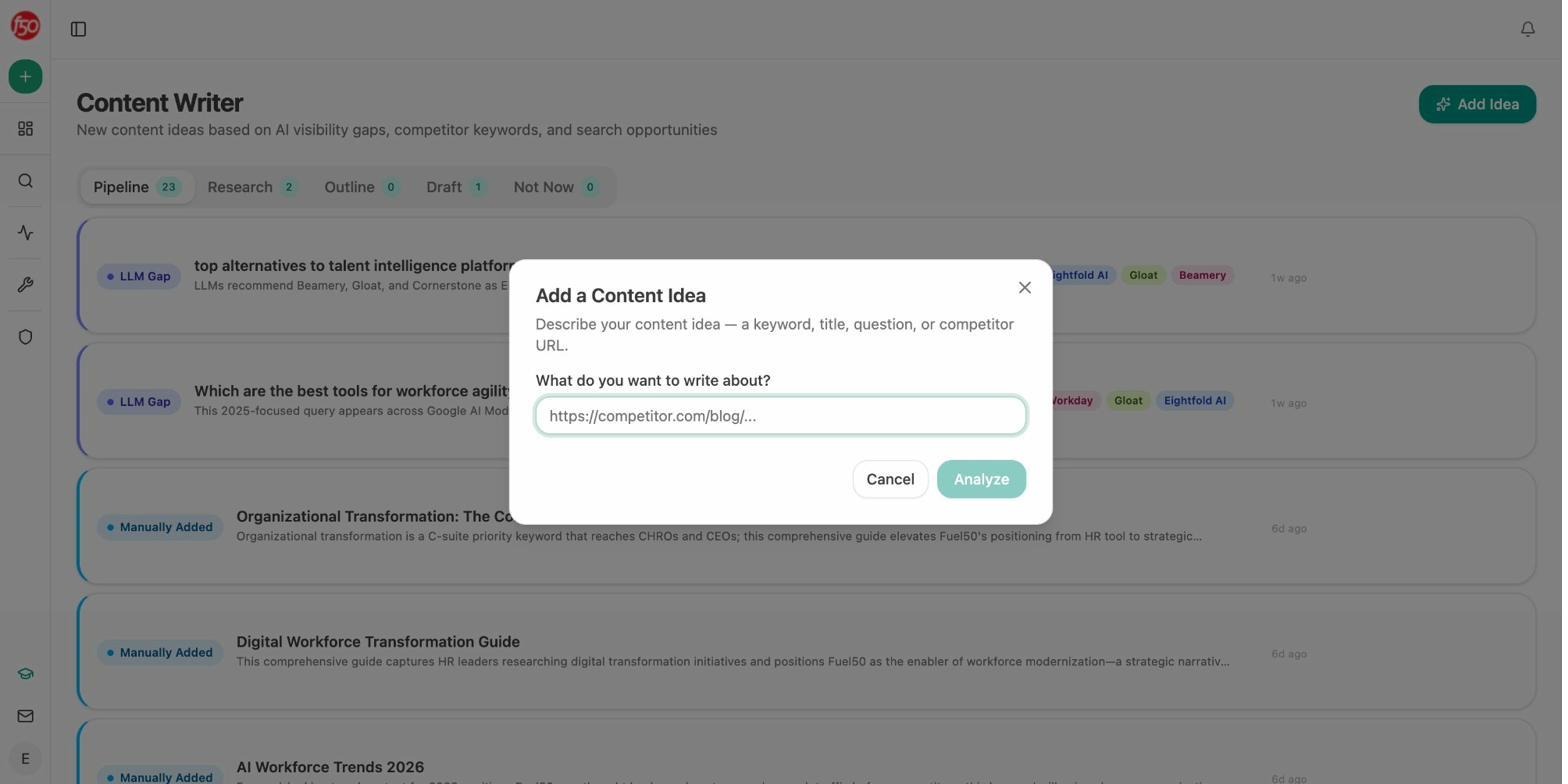

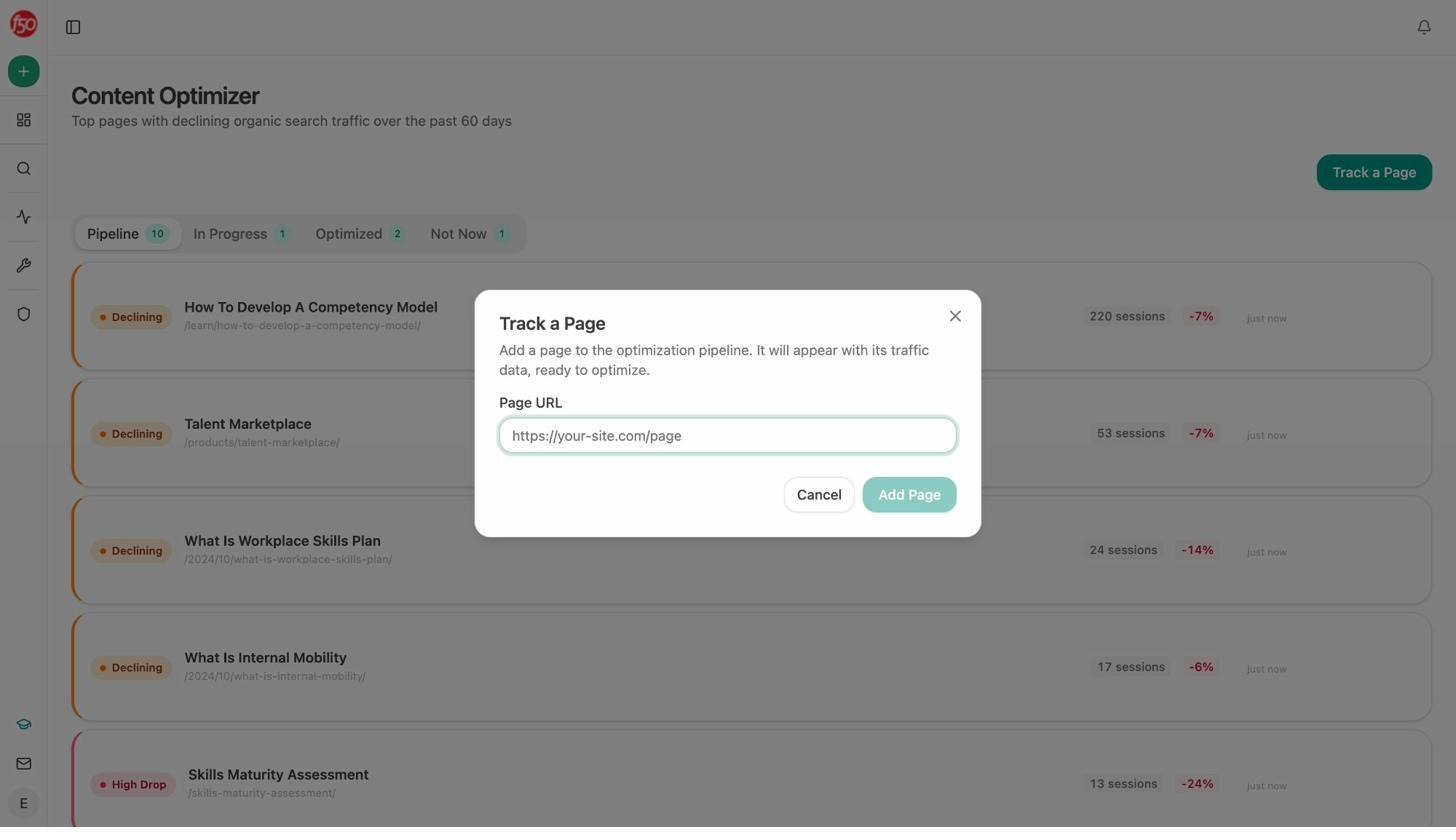

Improve is where the platform moves from analytics to execution. The AI Content Writer generates research, outlines, and full drafts with your brand voice injected from a Knowledge Base. The AI Content Optimizer audits existing pages, scores them for AI Engine Optimization readiness, and rewrites them with tracked changes so you see exactly what changed and why.

Both tools produce better outputs than most competitors because they go through a multi-step pipeline. Research comes first, then outline, draft, QA scoring, and brand-voice enforcement. They are not “paste a keyword and get a blog post” tools.

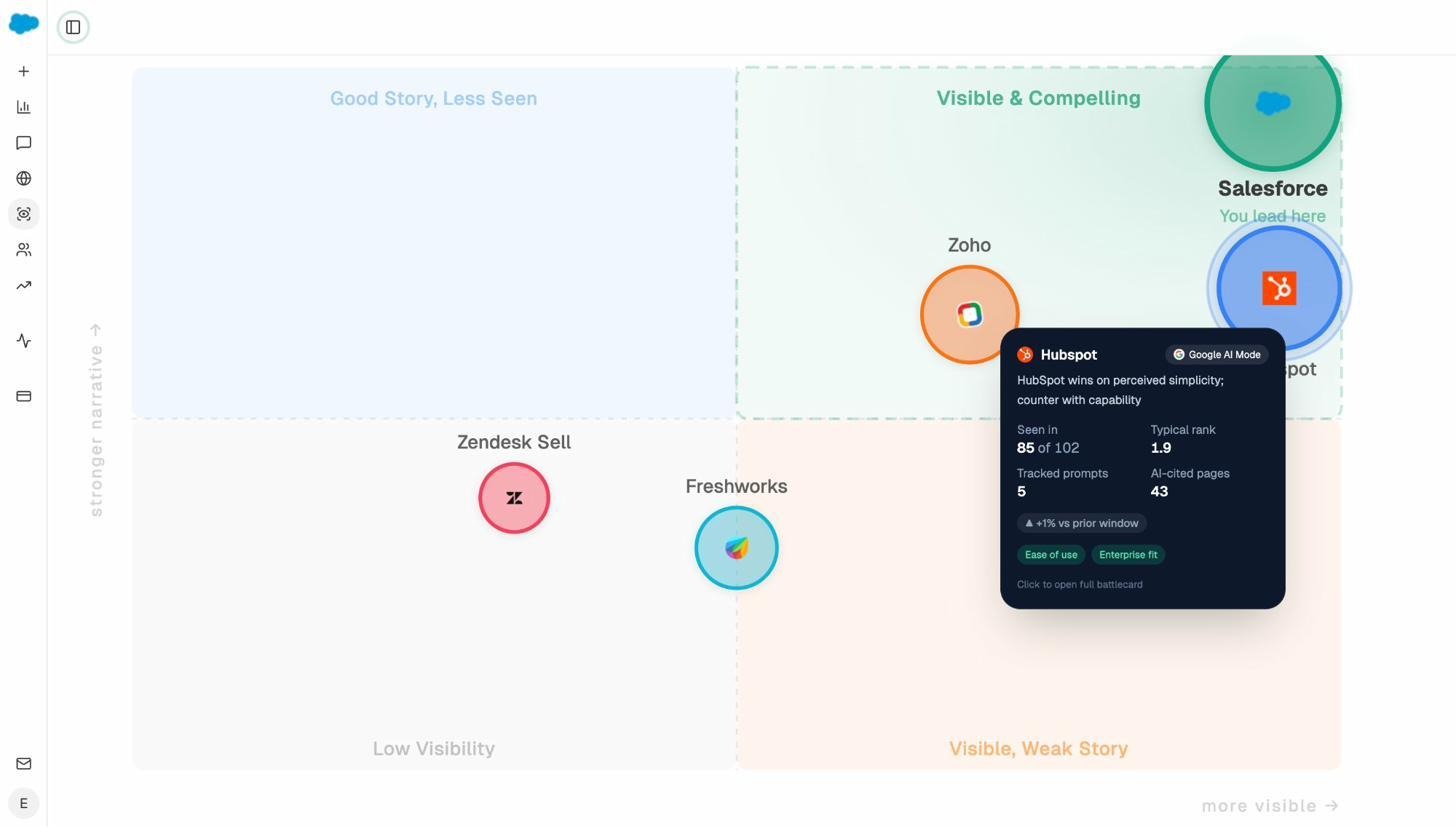

Govern protects your narrative before it shapes pipeline. The Perception Map positions all tracked brands on a 2D quadrant of presence versus narrative strength. AI Sentiment Monitoring flags when models describe you with off-message positioning or outdated claims. AI Battlecards show which competitors are gaining momentum and which external sources the models treat as authoritative on your category. And Weekly Email Digests keep your team updated without requiring anyone to log in.

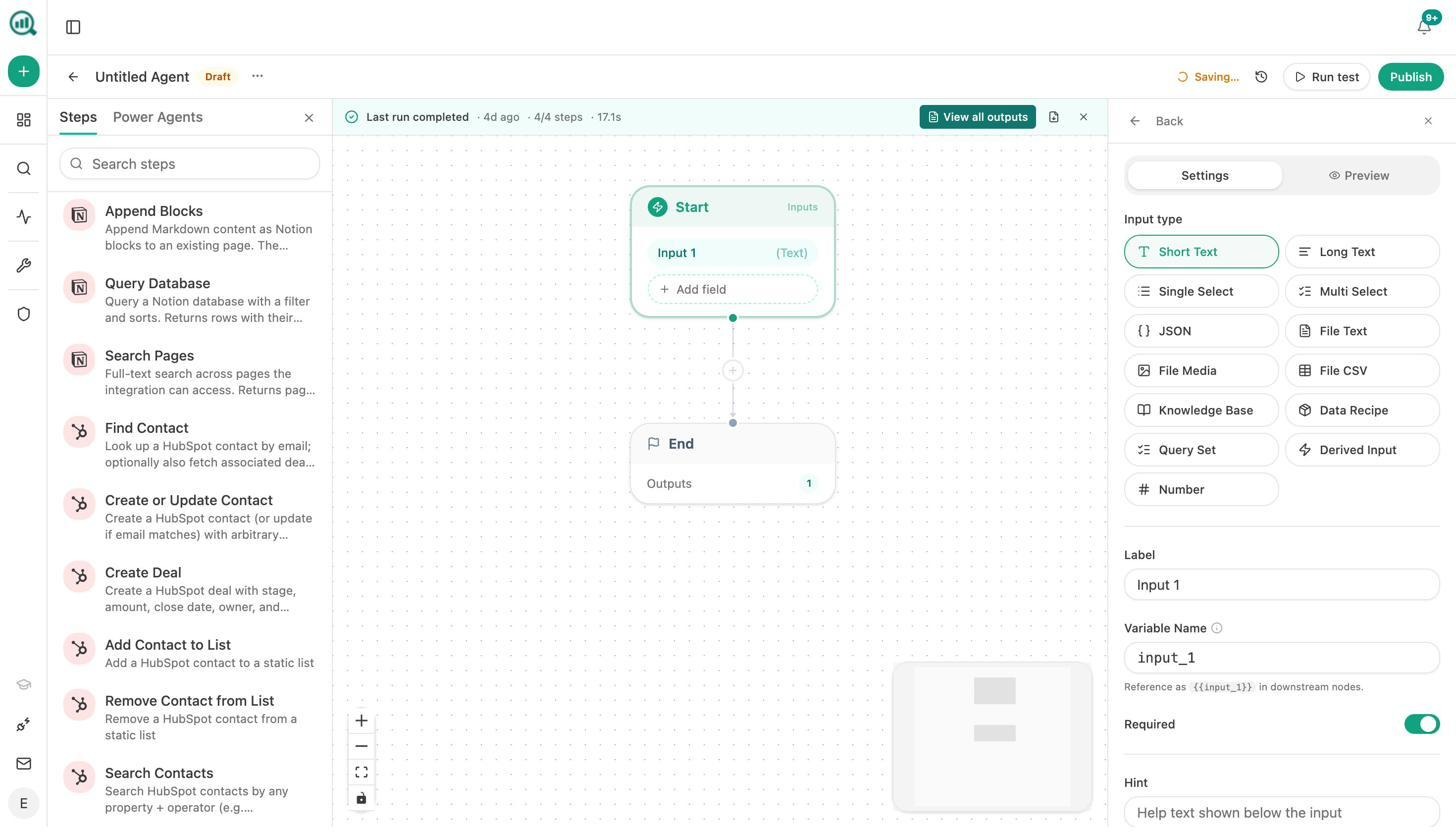

Why the Agent Builder changes the comparison entirely

This is where Analyze AI stops being a visibility tracker and becomes infrastructure for your marketing operations.

The Agent Builder gives you 180+ nodes, 34 pre-built data recipes, 13 input primitives, and three trigger modes (manual, scheduled, webhook). It integrates directly with GA4, Google Search Console, DataForSEO, Semrush, HubSpot, Notion, WordPress, Slack, Mailchimp, and every major LLM.

What does that mean in practice? Here are real workflows teams build:

For CMOs: A scheduled agent runs every Monday at 7am. It pulls your share-of-voice data, GA4 traffic, AI visibility changes, and new HubSpot deals. It assembles an executive summary in your brand voice and emails it to leadership. Your Monday board prep is done before you open Slack.

For content teams: A webhook fires when a brief moves to “approved” in Notion. The agent generates research, builds an outline, writes a full draft with brand voice injected, runs an AEO quality score, and publishes to WordPress if it passes. If it does not pass, it sends the gaps to the writer in Slack.

For agencies: One agent, looped over a client list, builds a branded report for each account every Monday morning. It pulls GSC top pages, AI visibility deltas, new backlinks, and competitor movement. It exports a DOCX and emails it to each account team. Reporting day stops existing.

For PR teams: A scheduled agent checks brand mentions and news every 15 minutes. If sentiment drops below a threshold, it drafts three response options and sends them to Slack. You hear about a crisis before your CEO does.
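The content-team workflow in this list hinges on a simple pass/fail gate: publish when the quality score clears a bar, otherwise route the gaps back to the writer. The sketch below illustrates that branching logic in Python. The `route_draft` function, the 0-100 score scale, and the threshold of 80 are assumptions for illustration, not how the Agent Builder is configured.

```python
def route_draft(aeo_score, gaps, pass_threshold=80):
    """Decide what happens to a draft after quality scoring.

    aeo_score: hypothetical 0-100 quality score for the draft.
    gaps: list of issues found during scoring.
    pass_threshold: assumed cut-off; a real workflow would set its own.
    """
    if aeo_score >= pass_threshold:
        # Draft passes: hand it to the publishing step.
        return {"action": "publish", "target": "wordpress"}
    # Draft fails: send the list of gaps back to the writer instead.
    return {"action": "notify_writer", "target": "slack", "gaps": list(gaps)}


passed = route_draft(91, [])
failed = route_draft(62, ["no direct answer in intro", "missing FAQ schema"])
```

The PR workflow follows the same shape with the comparison reversed: an alert fires when a sentiment score drops below a threshold rather than when a quality score rises above one.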

None of the other tools on this list have an automation layer. They track. Analyze AI tracks, analyzes, creates, optimizes, distributes, and reports, on a schedule, without human intervention.

How Analyze AI handles the SEO side

SEO is not dead. AI search is an additional organic channel alongside traditional SEO, not a replacement. Analyze AI reflects that belief. The Agent Builder includes 27 DataForSEO nodes and 7 Semrush nodes for keyword research, SERP analysis, backlink audits, domain overviews, and on-page SEO analysis. The free tools suite includes a Keyword Generator, Keyword Difficulty Checker, SERP Checker, Keyword Rank Checker, Website Authority Checker, and a Broken Link Checker.

You do not need a separate SEO tool. You need one platform that covers both channels and connects them.

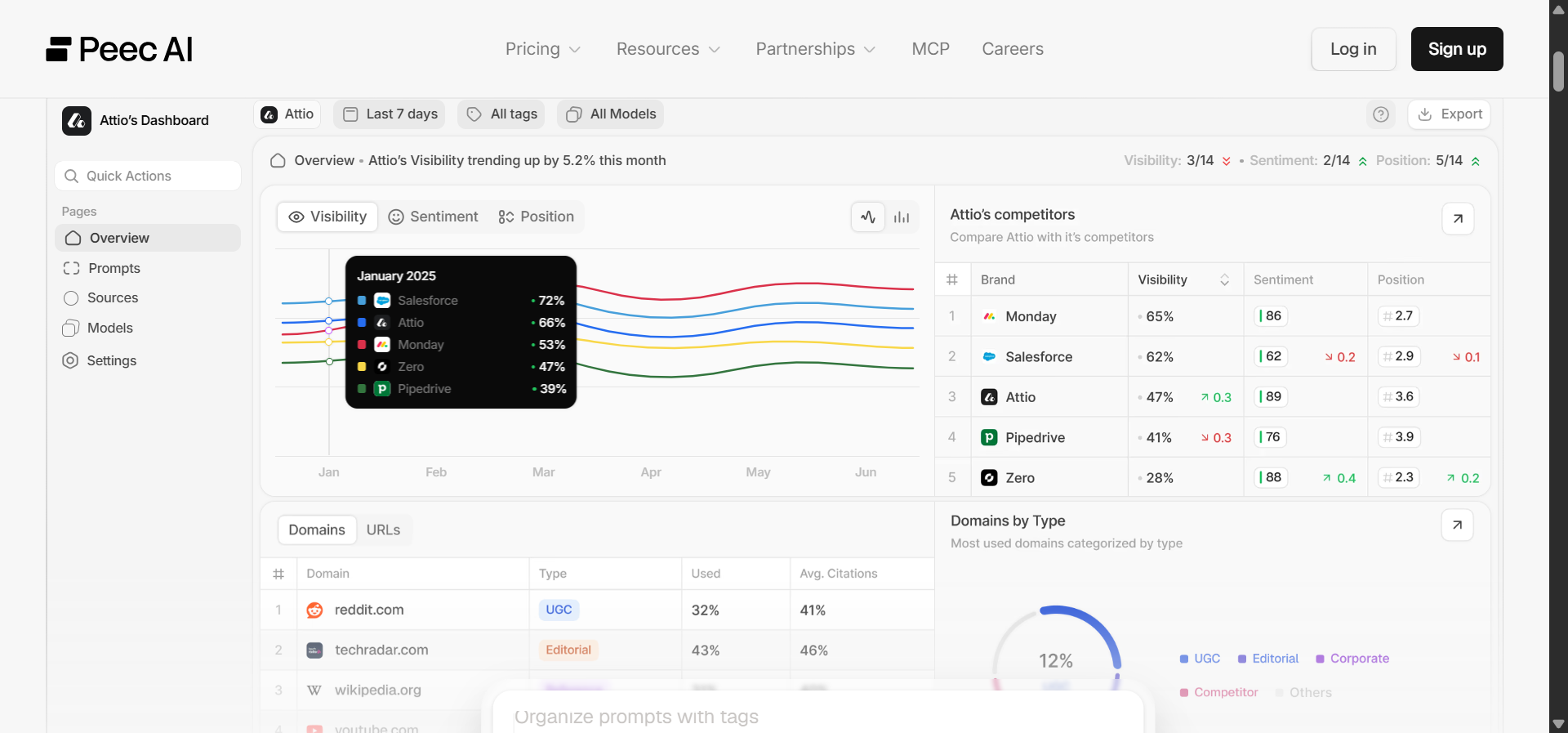

Peec AI: best for prompt-level answer snapshots

Peec AI runs controlled prompts across ChatGPT, Perplexity, Gemini, Claude, and AI Overviews, then stores the full answer including your mention position and the cited source. Every mention links to one prompt and one page, which makes attribution clean. The Looker Studio connector and API access let you pull data into existing reporting stacks.

The prompt-level granularity is strong. You can drill into any visibility drop, see the exact prompt that caused it, and pull the underlying citation within seconds. Competitor benchmarking uses share-of-voice, position, and trend views, all under identical test conditions. For agencies building client reports, the export stack (CSV, API, Looker Studio) makes data portability straightforward.
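Share-of-voice is easier to reason about with a worked example. The Python sketch below uses one common definition: a brand's mentions divided by all tracked-brand mentions across a set of answers. This is an assumed formula, not necessarily the one Peec AI uses, and the brand names and answers are invented.

```python
from collections import Counter


def share_of_voice(answers, brands):
    """Compute each brand's share of all tracked-brand mentions.

    answers: list of lists, each holding the brand names found in one AI answer.
    brands: the tracked brands to include in the calculation.
    """
    counts = Counter()
    for mentioned in answers:
        # Count a brand at most once per answer.
        for brand in set(mentioned):
            if brand in brands:
                counts[brand] += 1
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}


# Four invented answers to the same prompt set.
answers = [
    ["BrandA", "BrandB"],
    ["BrandA"],
    ["BrandC"],
    ["BrandA", "BrandC"],
]
sov = share_of_voice(answers, ["BrandA", "BrandB", "BrandC"])
```

Here BrandA appears in three of the six counted mentions, so it holds half of the share of voice. Running every brand through the same prompt set is what makes that comparison fair.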

Where Peec falls short is on the action side. It does not tell you what to fix. There is no content writer, no optimizer, no agent layer, and no traffic attribution. You need a separate analytics stack to connect visibility to revenue. Setup requires careful planning of prompt sets, regions, and competitor lists. Smaller brands may see sparse data during early weeks because many prompts will not trigger mentions yet.

Best for: SEO and content teams that need defensible AI visibility proof for stakeholder reports and can handle the interpretation themselves.

Otterly.ai: best for GEO audits tied to visibility data

Otterly.ai blends AI mention monitoring with on-page GEO diagnostics. It scans ChatGPT, Perplexity, Gemini, Copilot, and AI Overviews, then ties each detection to the full prompt, response text, and cited URLs. The built-in GEO audit analyzes page structure, schema, and content quality to surface issues that might prevent your site from getting referenced.

The share-of-voice dashboards show competitive movement clearly. But Otterly’s historical depth is limited compared to dedicated tracking platforms, and prompt libraries need manual refreshing. The tool measures and diagnoses, but it does not prescribe or execute. You still need to interpret audit findings and design content changes yourself.

Best for: Teams that want AI visibility monitoring with built-in page-level diagnostics at a lower price point.

Writesonic GEO: best for teams already inside Writesonic

Writesonic GEO is not a standalone product. It is embedded in the Writesonic content suite. The GEO dashboard tracks visibility across ChatGPT, Gemini, Claude, Perplexity, and AI Overviews. The AI Traffic Analytics module captures AI crawler visits. The Action Center lets you see visibility issues, open the page in Writesonic’s editor, fix it, and check whether the next scan shows improvement.

That closed-loop workflow is appealing. The trade-off is granularity. Writesonic GEO is less precise than dedicated GEO tools like Peec or Otterly for prompt-level analysis. The dense data panels can feel overwhelming when layered on top of Writesonic’s writing features. Setup requires connecting domains, adding verification tags, and scheduling scans.

Best for: Marketing teams already using Writesonic for content creation who want visibility tracking without adding another tool.

Profound: best for enterprise brand intelligence

Profound is built for large brands that need a panoramic view of AI visibility across languages, regions, and product lines. It tracks mentions, sentiment, and citations from ChatGPT, Perplexity, Gemini, Claude, Copilot, and AI Overviews. The Prompt Volume reports show which questions are trending across engines, which helps PR and content teams align messaging.

The dashboards are polished and built for executive reporting. Multi-language and multi-region support is strong. But Profound starts at roughly $499/month, and most advanced features sit in higher tiers. There are few SEO integrations, no page-level optimization guidance, and no prescriptive recommendations. It tells you where visibility dropped but not how to recover it.

Best for: Enterprise brand and comms teams focused on global AI visibility trends, not tactical SEO execution.

AthenaHQ: best for agency dashboards and BI integrations

AthenaHQ focuses on data visualization and custom reporting for generative engine optimization. Dashboards are fully configurable by audience, team, region, or campaign. Agencies can white-label them for client delivery. The QVEM API estimates prompt query volumes and connects to Tableau and Power BI.

The reporting engine is strong, but AthenaHQ does not include content tools. No writer, no optimizer, no on-page audits. Pricing is positioned at the higher end of the GEO market. It is a measurement and presentation layer, not an execution platform.

Best for: Agencies managing multiple clients who need branded, embeddable GEO dashboards without building them from scratch.

Rankshift: best for lightweight daily tracking

Rankshift strips AI visibility tracking down to what is essential. It tracks mentions, citations, and prompts across ChatGPT, Perplexity, Gemini, Claude, and AI Overviews in a clean, minimal interface. You control which prompts and engines to monitor and how often to run scans.

The tool is reliable and fast but intentionally limited. No content analysis, no benchmarking depth, no content optimization. It will not capture every long-tail prompt variation or emerging phrasing. Rankshift works best as a daily monitoring complement paired with a tool that handles the strategy and execution.

Best for: Teams that want clear, repeatable visibility data without feature complexity.

LLMrefs: best for citation-level tracking

LLMrefs monitors when AI models cite your content, showing the exact text where the citation appears. The proprietary LLMrefs Score aggregates citation frequency across ChatGPT, Gemini, Claude, and Perplexity into a single benchmark. The freemium model lets small teams start immediately.

The focus is narrow by design. No sentiment analysis, no SEO integration, no content tools. Free and lower-tier plans cap data frequency and exports. LLMrefs answers one question well: “Is AI citing us, and how often compared to competitors?” If you need more than that, you will need additional tools.

Best for: Publishers and research-heavy sites that care about citation authority in AI answers.

How to pick the right Rankscale alternative

Your choice depends on what problem you are solving, and honestly, on what you plan to do with the data once you have it.

If you need visibility data for reports, Peec AI, Otterly.ai, or Rankshift will give you clean dashboards at different price points. All three track the major engines. The differences come down to granularity (Peec leads here), diagnostics (Otterly adds GEO audits), and simplicity (Rankshift keeps it minimal).

If you need branded client reporting, AthenaHQ’s white-label dashboards and BI integrations are purpose-built for agencies managing multiple accounts.

If you need enterprise brand intelligence across languages and regions, Profound covers the broadest scope but demands the biggest budget.

If you need a closed-loop system that tracks visibility, attributes traffic to revenue, creates and optimizes content, builds automated workflows from 180+ nodes, and runs on a schedule without human intervention, Analyze AI is the only platform on this list that does all of it.

The difference is not about tracking more engines. Every tool on this list tracks the major ones. The difference is what happens after the tracking. Most tools give you a dashboard. Analyze AI gives you an operating system for the entire organic channel, SEO and AI search together.

Start a free trial or book a demo to see how it works for your brand.

Ernest

Ibrahim
