
Scrunch AI does monitoring well. The G2 reviews say so, and we believe them. The product struggles when teams need to act on the data.

Three pain points show up over and over.

The Core plan locks Claude, Gemini, Meta, and Grok behind Enterprise pricing, so a $250-a-month tracker watches only four engines. The prompt-credit system splits 125 prompts across every engine you turn on, so with all four engines enabled, those 125 credits cover only about 31 unique questions. And the Insights and Site Audit features are still in beta, with a real writing layer essentially absent. You see a problem. You still need a second tool to fix it.
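The credit arithmetic is easy to check. The sketch below assumes each prompt consumes one credit per enabled engine, which is how the split is described here; the function name is illustrative and not part of any Scrunch API.

```python
def unique_prompts(prompt_credits: int, engines: int) -> int:
    """Whole prompts that can be tracked on every enabled engine.

    Assumes one credit is spent per (prompt, engine) pair.
    """
    if engines < 1:
        raise ValueError("engines must be at least 1")
    return prompt_credits // engines


# Show how the same 125 credits shrink as more engines are enabled.
for engine_count in (1, 2, 4):
    print(engine_count, "engines:", unique_prompts(125, engine_count), "unique prompts")
```

With four engines enabled, 125 credits cover 31 whole prompts, which matches the "around 31" figure.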

Each alternative below is reviewed against four criteria you should care about:

- Engine coverage at the entry price, not at the Enterprise tier
- What happens after a visibility drop, since alerts without action are anxiety
- Pricing transparency, since credit-based pricing tends to hide real cost
- Whether content production lives inside the tool or forces a stack hop

If you want to verify pricing claims against the source, here is Scrunch AI’s own pricing page.

In this article, you’ll find eight Scrunch AI alternatives ranked by what they actually do once they show you a visibility drop. Some only watch. Some help you write the fix and ship it. A few do both, plus run the work as scheduled agents that check on the brand every Monday morning so you don’t have to.


TL;DR comparison

|

Tool |

Best for |

Engine coverage at entry |

Acts on visibility data? |

Approx. starting price |

|---|---|---|---|---|

|

Analyze AI |

Agentic SEO and content teams that want tracking, writer, optimizer, and workflow agents in one stack |

All major engines (ChatGPT, Perplexity, Claude, Gemini, Copilot, AI Overviews) |

Yes. Writer + Optimizer + Agent Builder with 180+ nodes |

Mid-tier, transparent per-brand pricing |

|

Peec AI |

Multi-engine monitoring with agency-clean exports |

ChatGPT, Perplexity, AI Overviews (others are paid add-ons) |

Partial. Actions feature in beta |

~$89/mo |

|

Rankability AI Analyzer |

Teams already inside the Rankability SEO suite |

Most major engines |

Light. Weekly cadence |

~$149/mo (suite) |

|

LLMrefs |

Keyword-first benchmarking and source visibility |

Most major engines |

No. Reporting only |

~$79/mo |

|

Profound |

Fortune 500 procurement and shopping visibility |

ChatGPT at Starter, full coverage at Enterprise |

Light. 3 articles/month on lower tiers |

$99-$499+/mo |

|

Otterly AI |

Solo marketers needing fast monitoring plus a GEO audit |

ChatGPT, Perplexity, AI Overviews |

No writing layer |

~$29/mo |

|

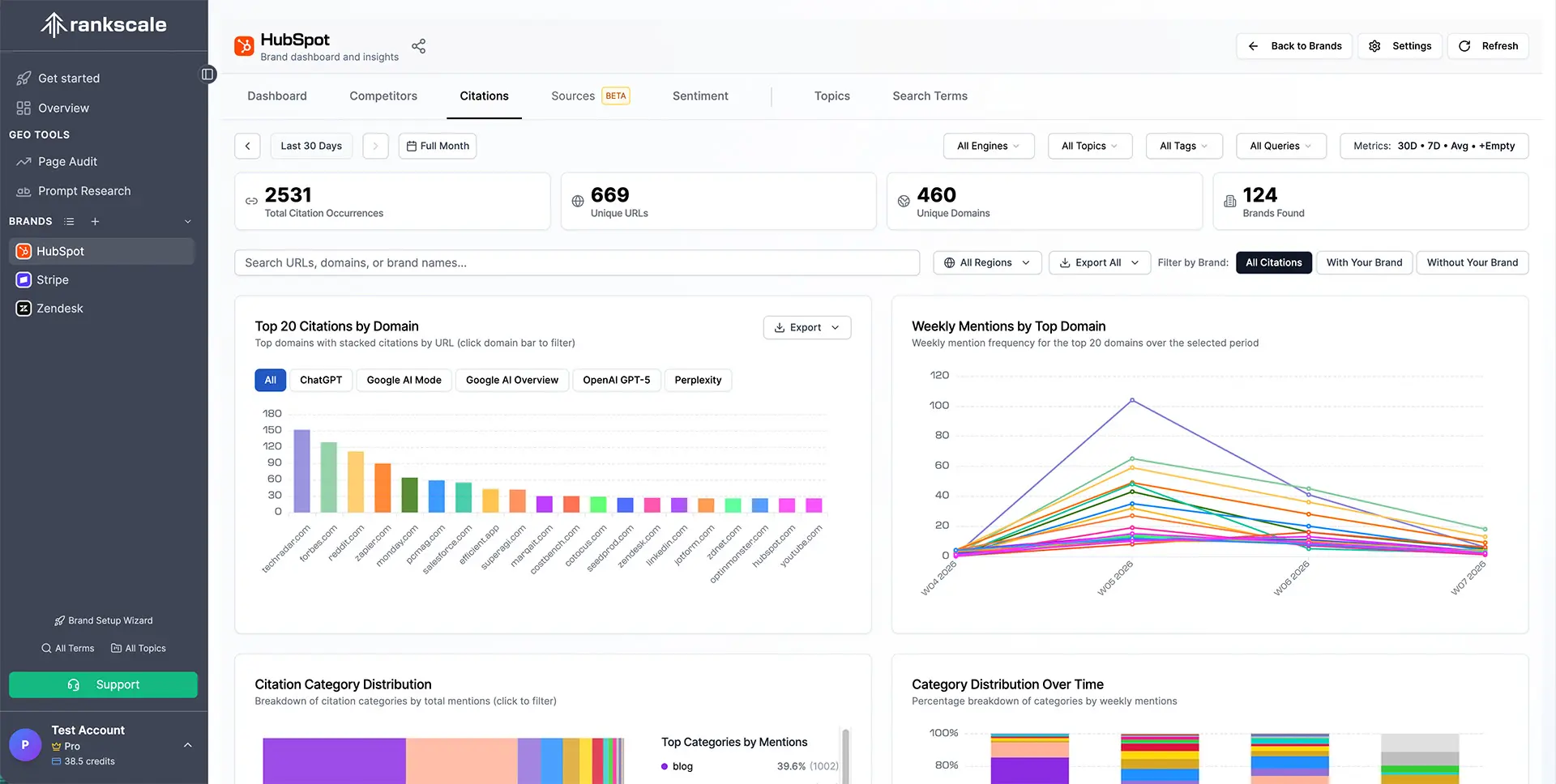

Rankscale AI |

Freelancers tracking daily on a tight budget |

ChatGPT, Perplexity mostly |

No. Monitoring only |

~$20/mo |

|

AthenaHQ |

Multi-region growth-stage brands |

All major engines |

Action Center recommendations |

~$295/mo |

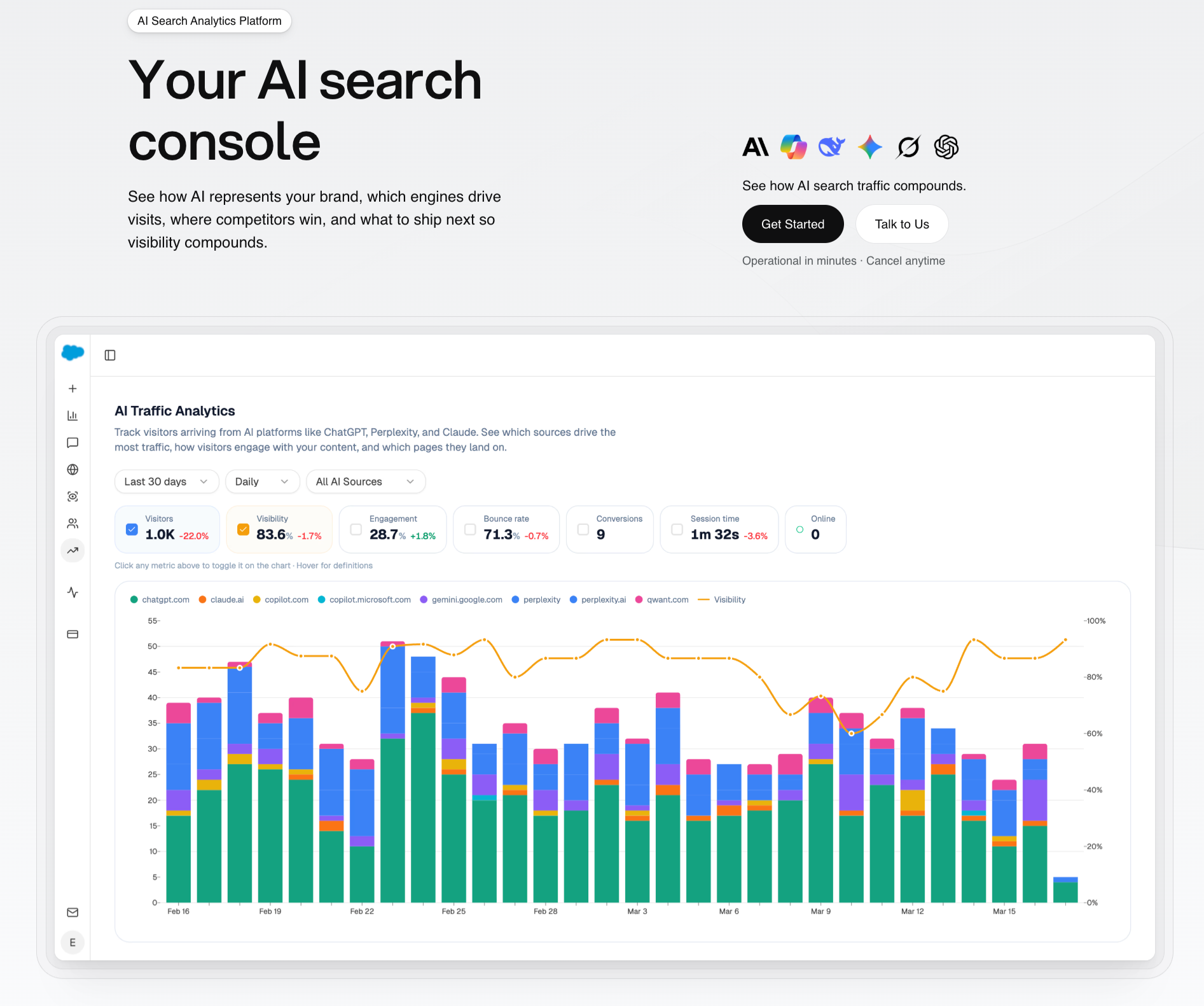

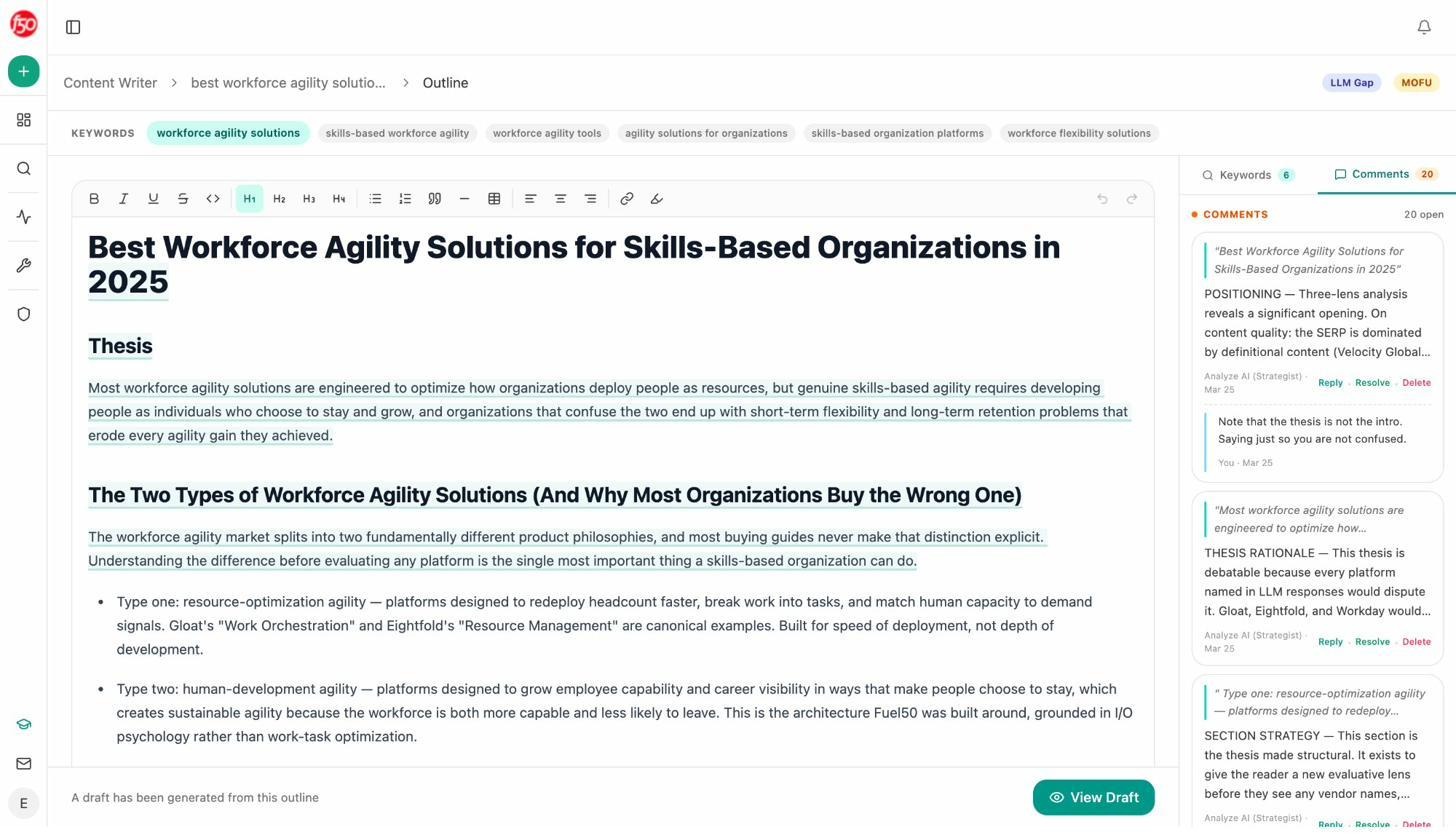

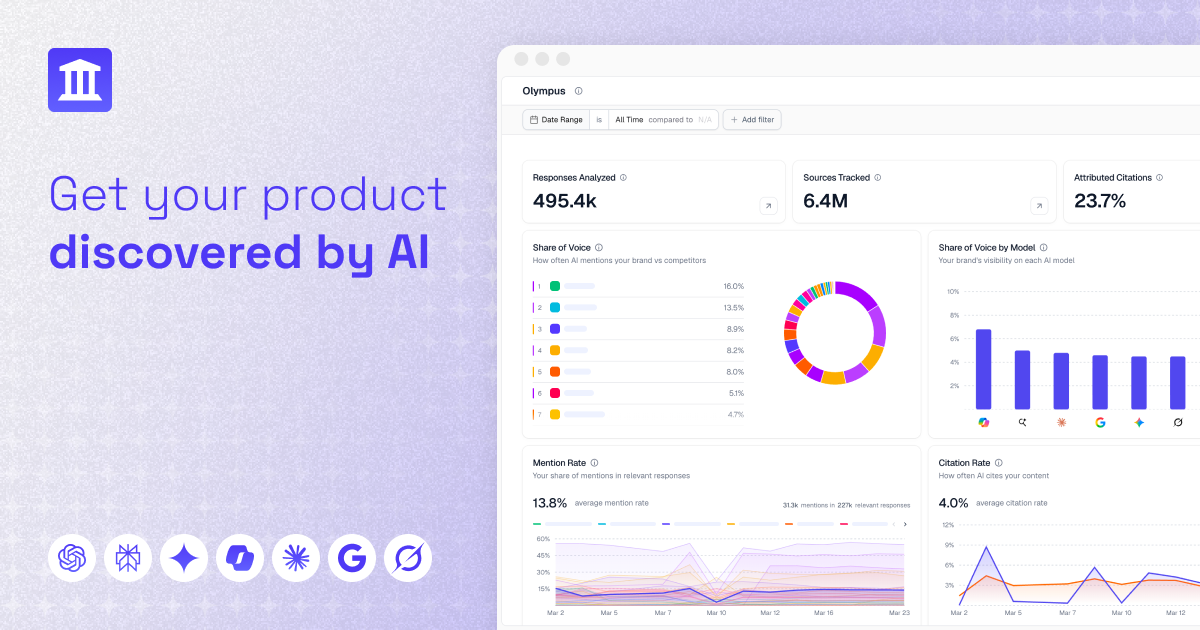

1. Analyze AI: best for teams that want tracking, content, and agents in one platform

Most tools on this list show you that ChatGPT is citing a competitor instead of you. They hand you the data and stop. You go off and brief a writer, manage the optimization, push a content update, schedule the re-check.

Analyze AI does the showing. It also does the writing, the optimizing, and the work that runs while you sleep.

That last part is what Scrunch does not have, and it is why we will spend most of this section on it. Tracking is table stakes in 2026. Closing the loop is not.

What Analyze AI tracks (the table stakes)

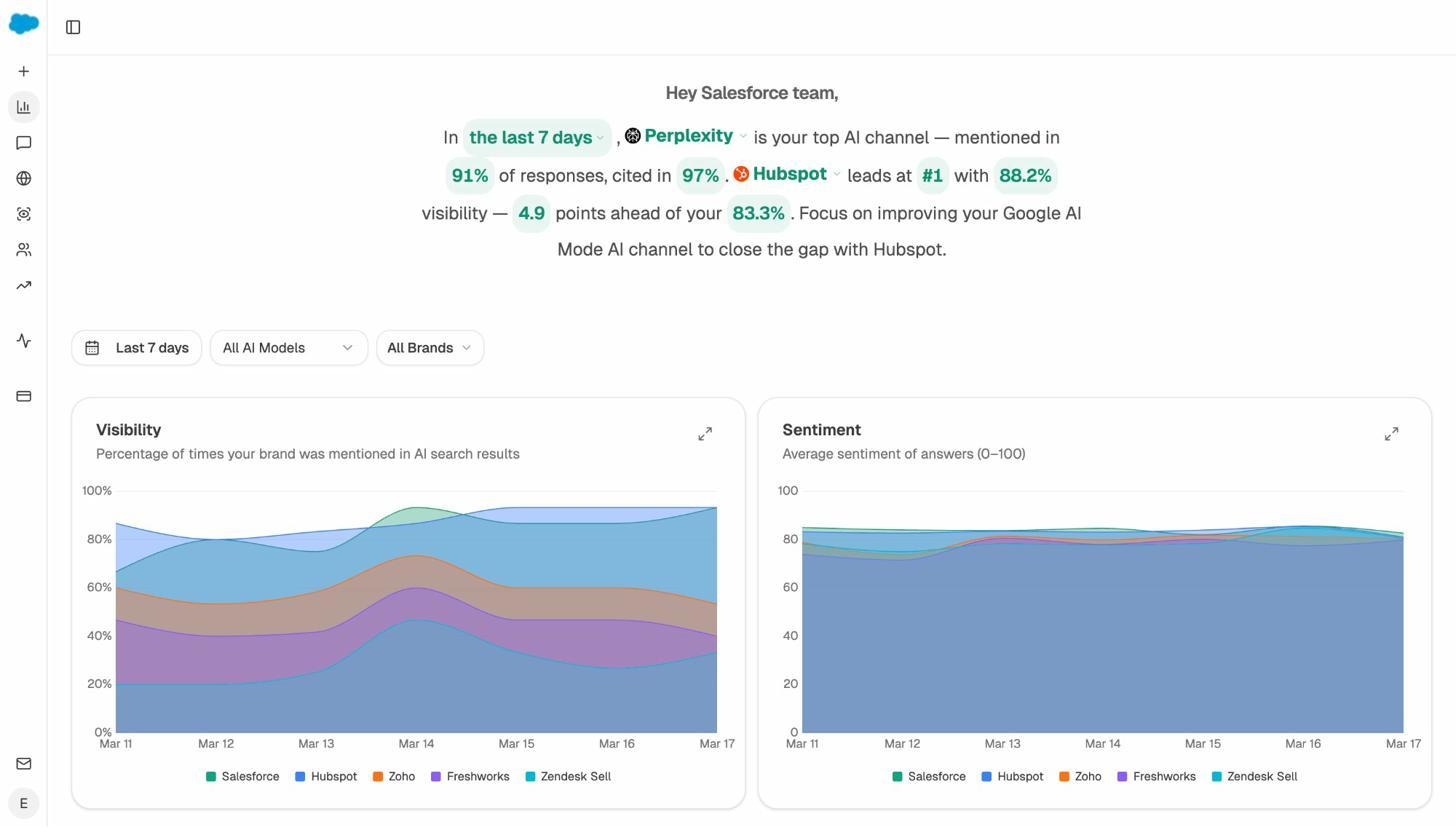

You get prompt-level visibility across ChatGPT, Perplexity, Claude, Gemini, Copilot, and Google AI Overviews on every plan, not just at the Enterprise tier. The platform pulls how often each prompt mentions your brand, the position you hold inside the answer, the sentiment of the mention, and which sources the model cited to compose the response.

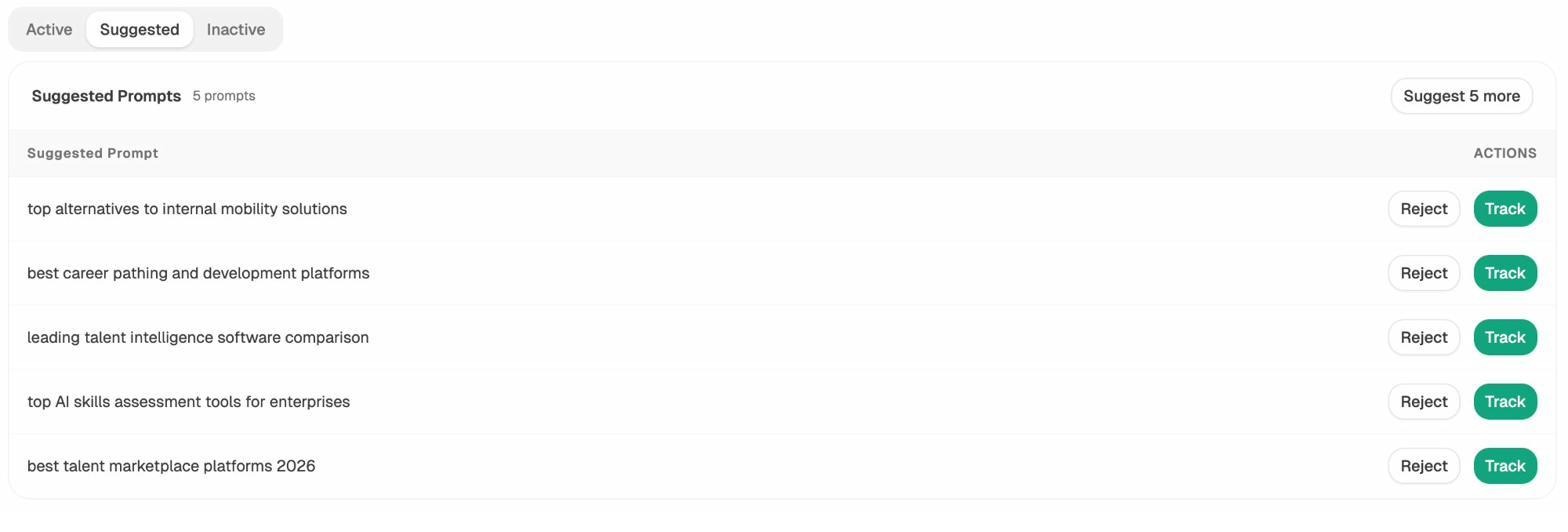

If you do not know which prompts to track, the Prompt Discovery feature suggests bottom-of-funnel prompts a buyer in your category would actually ask. You can also run an ad hoc prompt search to test a single query across every engine without committing it to monitoring.

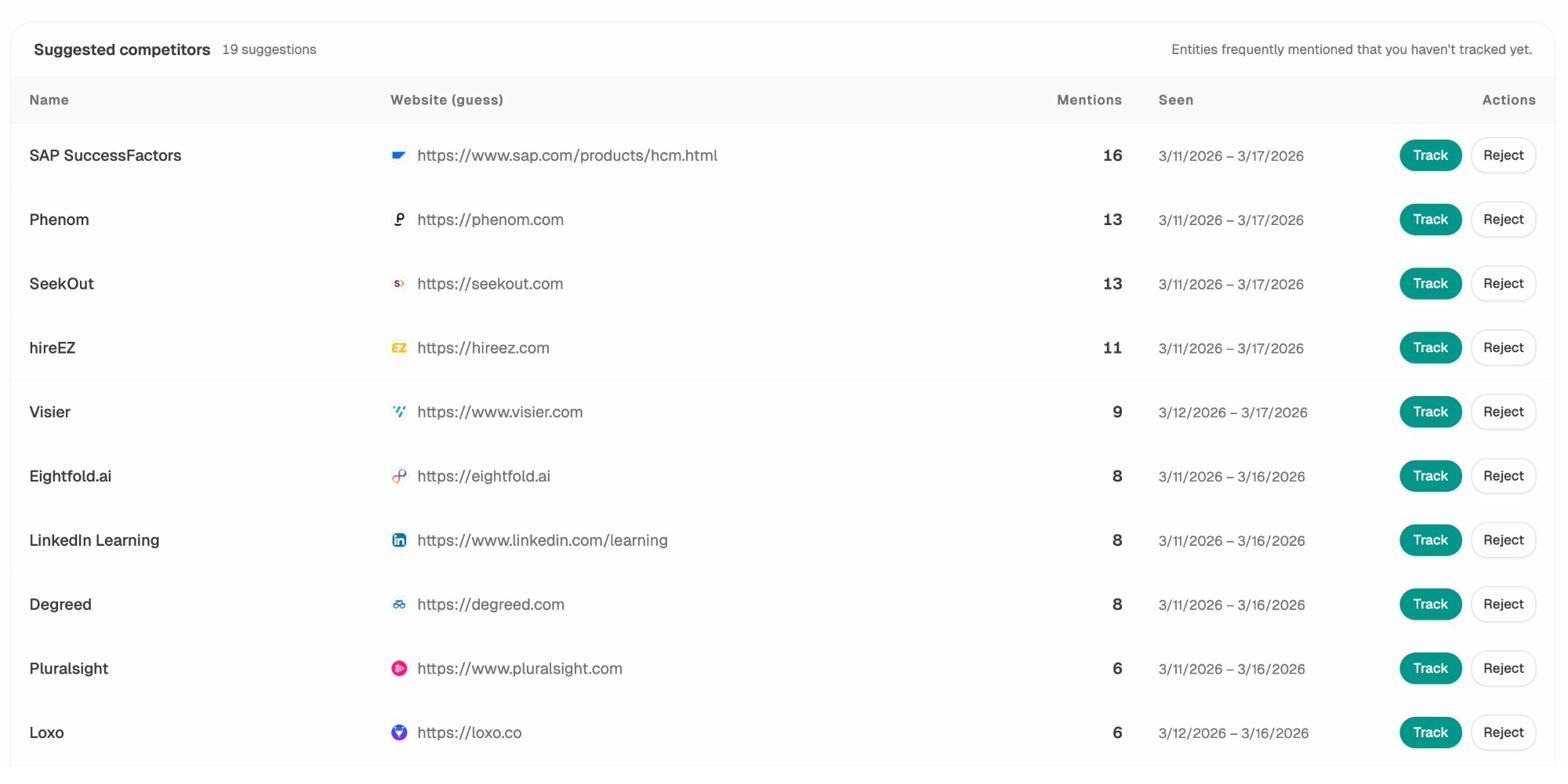

The Competitors dashboard stacks your brand against rivals on share of voice, citation share, and sentiment, then surfaces the exact prompts where they win and you do not. That last view is where most teams find their first month of work.

Citation visibility is a sub-feature most monitoring tools treat as an afterthought. Analyze AI shows you the domains and URLs that models pull from when they answer questions in your category, how many times each source was used, and which models reference it. That signal tells you where to invest your link and PR work. You are no longer building authority blind. You can read more about how this works in our AI search competitor analysis guide.
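To make the citation view concrete, here is a minimal sketch of the aggregation it implies: counting how often each domain is cited and which models cite it. The record shape, the function name, and the sample URLs are hypothetical; this does not describe Analyze AI's actual export format or internals.

```python
from collections import defaultdict
from urllib.parse import urlparse


def citation_summary(records):
    """Group (model, cited_url) pairs by domain.

    Returns a list of (domain, times_cited, models) tuples, most-cited first.
    """
    counts = defaultdict(int)
    models = defaultdict(set)
    for model, url in records:
        domain = urlparse(url).netloc.lower()
        if domain.startswith("www."):
            domain = domain[4:]  # treat www.example.com and example.com as one source
        counts[domain] += 1
        models[domain].add(model)
    # Sort by citation count (descending), then by domain name for stable output.
    ranked = sorted(counts, key=lambda d: (-counts[d], d))
    return [(d, counts[d], sorted(models[d])) for d in ranked]


# Hypothetical rows: which model cited which URL.
records = [
    ("ChatGPT", "https://www.example.com/guide"),
    ("Perplexity", "https://example.com/pricing"),
    ("ChatGPT", "https://reviews.example.org/top-tools"),
]
for row in citation_summary(records):
    print(row)
```

The domains at the top of a list like this are the ones models already lean on in your category, which is where link and PR effort is most likely to pay off.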

What Analyze AI does that Scrunch does not

This is where the tools genuinely diverge.

The Content Writer turns research into a publishable draft inside the platform. You add a topic. The writer pulls the SERP and the AI search landscape, surfaces the sources, builds the outline, and produces a full draft against your brand voice. You do not move the brief to a Google Doc and start over. You ship from where you researched.

The Content Optimizer scores existing pages against AI search readiness and rewrites them. Drop a URL. The optimizer fetches the live content, marks the gaps your competitors are filling that you are not, and produces an optimized version. Both the gaps and the rewrite are AEO-aware, meaning they target the structure, claim density, and proof patterns AI engines reward.

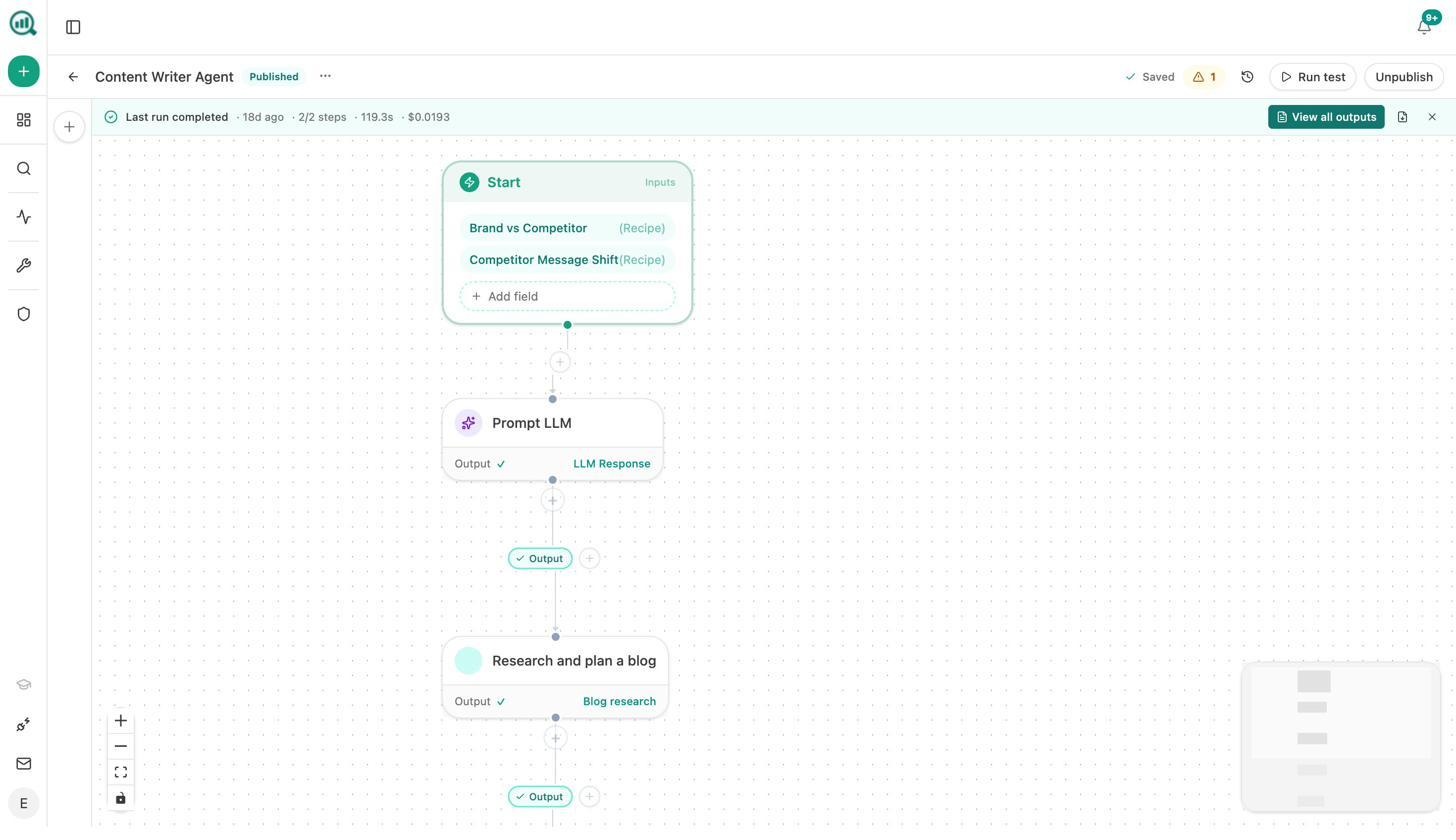

The Agent Builder runs everything as automation. This is the layer most other tools on this list do not have, and it is the part of Analyze AI most people underestimate.

The Agent Builder gives you 180+ nodes covering GA4, Google Search Console, DataForSEO, Semrush, HubSpot, WordPress, Notion, Sanity, Contentful, Mailchimp, Tomba, Hunter, Slack, Gmail, the full AI visibility data layer, image generation, and the entire content production pipeline. You wire them into workflows that run on a schedule, on a webhook, or on demand.

It also ships with 34 pre-built data recipes so you do not wire up four nodes to get the input you need. One recipe pulls visibility losers from the last seven days. Another returns the prompts where competitors outrank you. Another returns the pages losing citations faster than they are losing traffic. Each one is a single drop-in.

A few real builds teams ship with it:

- A Monday morning briefing that pulls share of voice, competitor message shifts, the week’s GSC top pages, and AI traffic deltas, runs an LLM over them, exports a DOCX, and emails it to leadership before you finish your coffee.
- A content refresh fleet that loops weekly over stale pages, rewrites each one for freshness and AEO, and pushes the diff to WordPress if it passes the score gate.
- A crisis response agent that fires on a webhook when negative press lands, drafts three response options, and alerts the comms team in Slack within seconds.
- A citation-stop alert that fires daily on pages losing citations and queues a re-optimization task in Notion before the page falls off the AI search radar entirely.

This is what we mean by agentic SEO and content. Tracking is one of the things you can do with Analyze AI. The platform is the operations layer for your whole search and content function. If you would rather see the broader argument first, our manifesto lays out why we built it this way.
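As a rough illustration of the content refresh build described above, the control flow is a loop with a score gate in front of the publish step. Everything in this sketch is a stand-in: `Page`, `refresh_pages`, and the toy `rewrite`, `score`, and `publish` callables are hypothetical and are not Agent Builder nodes or its API.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Page:
    url: str
    body: str


def refresh_pages(
    pages: Iterable[Page],
    rewrite: Callable[[str], str],
    score: Callable[[str], float],
    min_score: float,
    publish: Callable[[str, str], None],
) -> List[str]:
    """Rewrite each stale page and publish only drafts that clear the score gate.

    Returns the URLs that were published.
    """
    published = []
    for page in pages:
        draft = rewrite(page.body)
        if score(draft) >= min_score:  # the gate: weak rewrites never ship
            publish(page.url, draft)
            published.append(page.url)
    return published


# Toy stand-ins so the sketch runs end to end.
shipped = {}
stale = [Page("/a", "short page"), Page("/b", "a much longer stale page body")]
done = refresh_pages(
    stale,
    rewrite=lambda body: body + " refreshed",
    score=lambda text: len(text.split()),  # word count as a placeholder score
    min_score=5,
    publish=lambda url, text: shipped.update({url: text}),
)
print(done)
```

Keeping the gate as a plain comparison makes it obvious which rewrites were held back and why, which matters once the loop runs unattended on a schedule.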

Analyze AI vs Scrunch AI

| Capability | Analyze AI | Scrunch AI |
|---|---|---|
| Engine coverage at entry tier | ChatGPT, Perplexity, Claude, Gemini, Copilot, AI Overviews | ChatGPT, Perplexity, Copilot, AI Overviews (Claude, Gemini, Meta require Enterprise) |
| Content writer | Full research-to-draft pipeline, brand voice aware | Not included |
| Content optimizer | Live page fetch, AEO scoring, full rewrite | Limited optimization, in beta |
| Agent and automation layer | 180+ nodes, scheduled and webhook agents, 34 data recipes | None |
| Sentiment, perception map, battlecards | Yes | Sentiment yes, perception limited |
| GA4 AI traffic attribution | Yes | Yes |
| Pricing model | Per brand, transparent | Per brand, credits deplete across engines |

You can run a free AI visibility audit on your domain before you sign up to see your starting position.

2. Peec AI: best for clean multi-engine monitoring

Peec AI does one thing and does it well. It tracks prompt-level visibility across ChatGPT, Perplexity, and AI Overviews, captures the full answer snapshot, links the citation back to the source URL, and stacks share of voice against competitors.

The interface is clean. The exports are clean. The CSV, API, and Looker Studio connector make it agency-friendly. Unlimited seats on every plan is a real edge if your team is bigger than three people.

The trade-off is structural. The Starter plan at around $89 a month covers only three engines, and adding Claude, Gemini, AI Mode, or any model beyond the base three costs an extra $30 to $140 per month per model. By the time you have full coverage across six engines, you are well past $240 a month before you account for prompt volume.

There is no writer. There is no optimizer. Peec recently shipped an Actions feature in beta that surfaces optimization recommendations, but you still need a separate tool to draft and publish the fix.

| Capability | Peec AI | Scrunch AI |
|---|---|---|
| Engines on entry plan | 3 included, paid add-ons for the rest | 4 fixed |
| Prompts | 25 (Starter), 100 (Pro) | 125 (across engines) |
| Content production | None | None |
| Agent automation | None | None |
| Unlimited seats | Yes | No |

Good fit if: You want clean multi-engine reporting and you already have a content workflow elsewhere.

Skip if: You want one tool that monitors and produces.

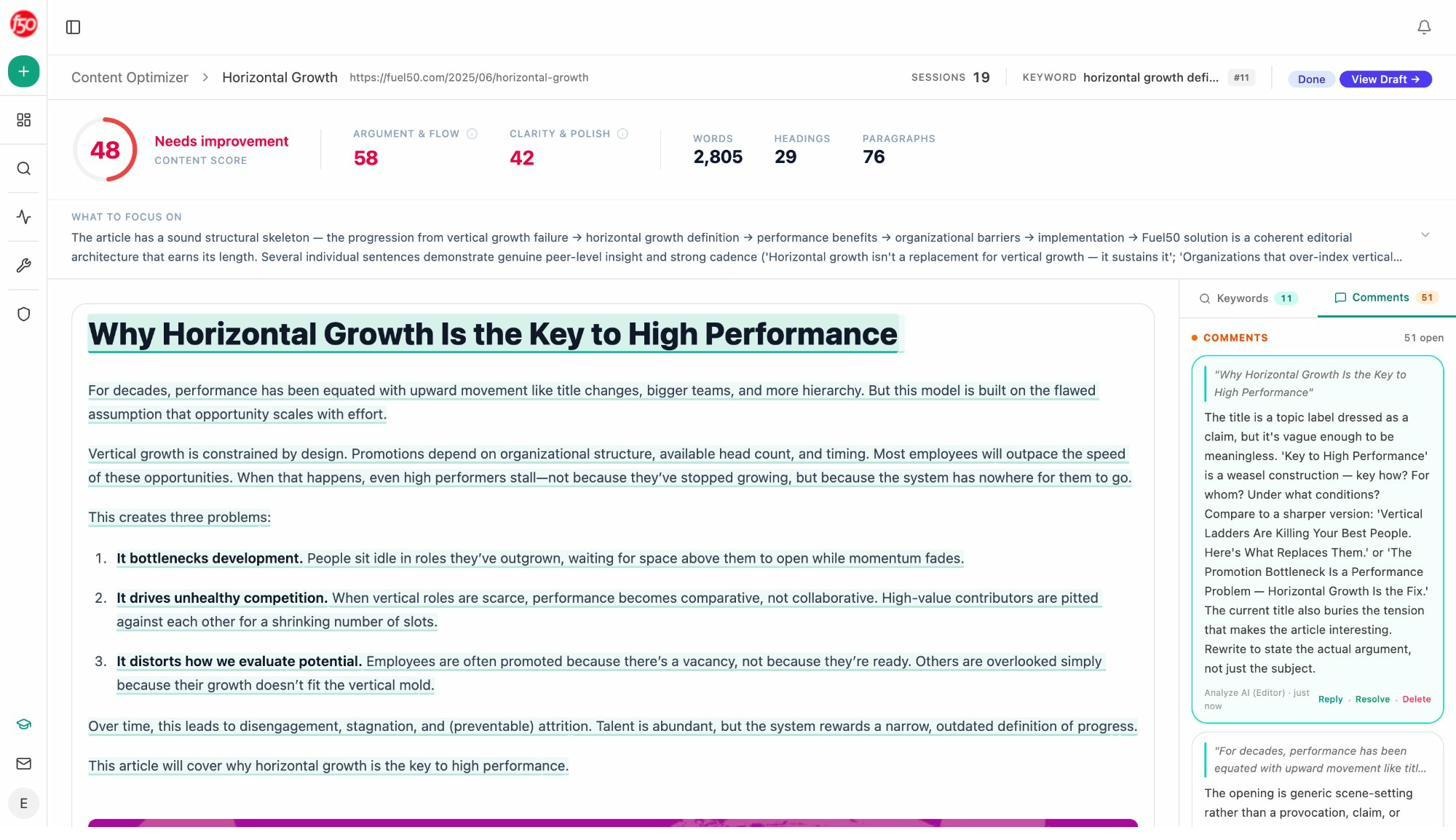

3. Rankability AI Analyzer: best if you already live in Rankability

Rankability bolted an AI Analyzer onto its existing SEO suite. If your team already pays for Rankability for keyword research, content auditing, and reporting, the AI Analyzer extends that surface into AI search without forcing a new login.

You can test branded and commercial prompts across ChatGPT, Gemini, Claude, Perplexity, and AI Overviews, see citation heatmaps, compare your pages to competitor pages on a content diff basis, and export weekly insights.

The trade-off is cadence and density. Updates are weekly, so fast-moving topics will miss days of movement. New users who do not already know the Rankability interface face the same learning curve a full SEO suite carries.

| Capability | Rankability AI Analyzer | Scrunch AI |
|---|---|---|
| Engine coverage | ChatGPT, Gemini, Claude, Perplexity, AI Overviews | Core plan limited to 4 engines |
| Update cadence | Weekly | Daily |
| SEO integration | Inside Rankability suite | Standalone |
| Content production | Editor and brief tools inside the suite | None |
| Best fit | Existing Rankability users | Standalone visibility teams |

Good fit if: You already pay for Rankability and want one more module rather than another vendor.

Skip if: You need real-time movement, or you are not committed to a full SEO suite.

4. LLMrefs: best for citation benchmarking on a budget

LLMrefs takes a different angle. Instead of asking you to author prompts, it generates prompt fan-outs from a list of keywords and aggregates the results into a single score it calls the LLMrefs Score. The score combines citation frequency, model coverage, and position strength.

That keyword-first approach makes onboarding fast for SEO teams that think in keywords before prompts. The freemium tier lets you test the product. Paid plans start around $79 a month.

Two caveats. The refresh cycle is weekly, which fits long-horizon benchmarking but misses fast-moving topics. The aggregated score is convenient until it hides a weak engine inside an otherwise healthy number. You will want to drill into the engine-level view before drawing conclusions.
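A small worked example shows how an aggregate can mask one weak engine. The per-engine numbers below are made up, and the unweighted mean is only a stand-in: LLMrefs' actual scoring formula is not described here.

```python
from statistics import mean


def aggregate(scores):
    """Unweighted mean of per-engine scores (a placeholder for any blended score)."""
    return mean(scores.values())


def weak_engines(scores, floor):
    """Engines whose individual score falls below the floor."""
    return sorted(engine for engine, value in scores.items() if value < floor)


# Hypothetical per-engine visibility scores on a 0-100 scale.
scores = {"ChatGPT": 82, "Perplexity": 78, "Gemini": 80, "Claude": 20}

print("aggregate:", aggregate(scores))
print("below 40:", weak_engines(scores, floor=40))
```

Here the blended number is 65, which looks acceptable, while one engine sits at 20. Checking each engine against a floor catches what the average smooths over.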

There is no writer, no optimizer, no automation layer. LLMrefs is reporting.

| Capability | LLMrefs | Scrunch AI |
|---|---|---|
| Methodology | Keyword fan-out | Prompt-level |
| Cadence | Weekly | Daily |
| Aggregated score | LLMrefs Score | Visibility score |
| Content production | None | None |
| Pricing | Freemium, paid from ~$79/mo | ~$250/mo Core |

Good fit if: You want quarterly visibility benchmarking and you already have an execution team.

Skip if: You need to act on the data inside the same tool.

5. Profound: best for Fortune 500 procurement

Profound is the enterprise pick. With SOC 2 Type II, SSO, SAML, and a dedicated account team, the procurement checklist is fully covered. Coverage is broad once you reach Enterprise, with prompt-level monitoring, Conversation Explorer, Prompt Volumes for demand forecasting, and Agent Analytics that maps how AI crawlers like GPTBot and PerplexityBot access your site.

Pricing is sales-led above the $99 Starter, with the Growth plan published at $399 and Enterprise at $499 and up. The Starter tier looks affordable until you read the fine print, which limits you to ChatGPT-only monitoring and 50 prompts. Real multi-engine coverage requires the Growth or Enterprise tiers.

Profound recently added a writer that produces three articles per month on lower tiers. That is helpful but light compared to a standalone content suite. Trakkr’s review notes the platform is best treated as an enterprise procurement decision rather than a self-serve buy.

| Capability | Profound | Scrunch AI |
|---|---|---|
| Engines at Starter | ChatGPT only | 4 engines |
| Enterprise coverage | 8+ engines including Meta, Grok, DeepSeek | 8 engines at Growth |
| Content production | 3 articles/month at lower tiers | None |
| Agent crawler analytics | Yes | Yes |
| Pricing | $99 Starter, $399 Growth, $499+ Enterprise | $250 Core, $500 Growth |

Good fit if: You are Fortune 500 with a procurement team and an enterprise budget.

Skip if: You want self-serve onboarding or you need to act on the data with a real writer.

6. Otterly AI: best for solo marketers and small agencies

Otterly AI is the entry-level pick that punches above its weight. The Lite plan starts at $29 a month and covers ChatGPT, Perplexity, and Google AI Overviews with up to 15 tracked prompts. The interface is clean, the GEO audit tool checks 25+ on-page factors automatically, and the keyword and prompt research module helps you find what to track without leaving the platform.

CSV and Excel exports plug into client reporting templates without custom work. Reviewers on G2 consistently call Otterly fast and accurate. There is no real-time alerting, no traffic attribution, and no content writing layer.

For freelancers and small agencies who need to start tracking AI visibility without an enterprise budget, Otterly is a sensible first buy.

| Capability | Otterly AI | Scrunch AI |
|---|---|---|
| Engine coverage | ChatGPT, Perplexity, AI Overviews | 4 engines at Core |
| Built-in GEO audit | Yes (25+ factors) | Limited |
| Content production | None | None |
| Best price tier | $29/mo Lite | $250/mo Core |
| Best fit | Solo marketers, small agencies | Mid-market and up |

Good fit if: You are testing AI visibility tracking on a small budget.

Skip if: You need automation, a writer, or deep competitor benchmarking.

7. Rankscale AI: best for daily checks on the cheapest plan

Rankscale AI is the budget option. For around $20 a month you get daily or hourly scans across ChatGPT and Perplexity, competitor tracking, sentiment scoring, and a lightweight dashboard that loads fast and requires almost no training.

The coverage is narrow. Gemini and Claude are partial. There is no API. No agent layer. No writer. If your only question is whether your brand surfaces in ChatGPT and Perplexity day to day, Rankscale answers it for less than the cost of a Slack seat.

For freelance SEOs running visibility experiments across a few client domains, this is the easiest entry point on the list.

| Capability | Rankscale AI | Scrunch AI |
|---|---|---|
| Engine coverage | ChatGPT, Perplexity (others partial) | 4 engines at Core |
| Cadence | Daily or hourly | Daily |
| API access | None | Available |
| Content production | None | None |
| Pricing | ~$20/mo | ~$250/mo |

Good fit if: You want the cheapest daily tracker on the market.

Skip if: You need broader engine coverage or any optimization workflow.

8. AthenaHQ: best for multi-region growth-stage brands

AthenaHQ was founded by ex-Google Search and DeepMind engineers, and the founding-team DNA shows up in the product. The platform tracks all major engines (ChatGPT, Perplexity, Gemini, Claude, AI Overviews) on the entry tier, supports regional and language segmentation, and includes a proprietary query volume estimation model (QVEM) that approximates demand inside AI search where classic keyword volumes do not reflect actual buyer behavior.

The Action Center surfaces visibility gaps and recommends next steps, which is a real improvement over pure monitoring tools.

Pricing starts around $295 a month for the Self-Serve plan with enterprise tiers above that. Credit-based metering means broad prompt sets can burn budget quickly. Plan accordingly.

| Capability | AthenaHQ | Scrunch AI |
|---|---|---|
| Engine coverage | All major engines at entry | 4 at Core |
| Regional segmentation | Yes | Limited |
| QVEM demand modeling | Yes | No |
| Content production | None | None |
| Pricing | ~$295/mo Self-Serve | ~$250/mo Core |

Good fit if: You are a multi-region brand and you want analytics depth beyond tracking.

Skip if: You want a writer and optimizer inside the same tool.

How to actually pick

If you skipped to the bottom looking for a decision rule, here is the short version.

Pick Analyze AI if you want tracking, content production, and agents in one platform, and if you would rather pay for the work that compounds than for a dashboard that watches.

Pick Peec AI or Otterly AI if you only need monitoring and you already have a writer and an optimization workflow elsewhere.

Pick Rankscale AI or LLMrefs if budget is the binding constraint and you can live with limited engine coverage or weekly cadence.

Pick Profound or AthenaHQ if you have an enterprise procurement process and you want a platform with predictive features or regional segmentation.

Pick Rankability AI Analyzer if you already pay for the broader Rankability suite and you want one more module instead of one more vendor.

One last thing worth saying out loud. SEO is not dead. AI search is not replacing it. The right way to think about every tool on this list is as a way to manage an additional channel that compounds with the SEO work you are already doing, not a replacement for it. The platforms that close the loop, the ones that take the visibility data and turn it into shipped content and recurring automation, are the ones that compound. The platforms that stop at the dashboard force you to compound elsewhere on your own time.

That is the criterion we would apply if we were buying for our own team. It is also the criterion we built Analyze AI against.

If you want to see your starting position before you decide, run a free AI visibility check on your domain, browse the feature list, or book a quick demo.

Ernest

Ibrahim
