Two sites can show identical traffic, rank for the same 600 keywords, and share half their backlinks. Three months later, one doubles and the other plateaus. The difference lives in what you compare and how you read the comparison.

In this article, you’ll learn the seven website comparisons that predict who wins in search, the shortcut that misleads most teams in each, and the tools and steps to run each one today.

For each method below, you get steps, tools, the trap that derails most teams, and a parallel for AI engines like ChatGPT, Perplexity, Google AI Mode, Claude, and Copilot. In 2026, the website that wins is the one that shows up inside the answer.

TL;DR

| Comparison | What it answers | Best tools | Trap to avoid |
|---|---|---|---|
| Organic traffic | Who captures demand and whether growth is durable | Semrush, Ahrefs, Similarweb, GSC, GA4 | A one-month snapshot is not a verdict |
| Paid visibility | Who pays to intercept high-intent searches | Google Ads Auction Insights, SpyFu, iSpionage | Presence is not profitability |
| Keyword ownership | Which queries each site owns versus shares | Semrush, Ahrefs, SE Ranking | Chasing every shared term, not high-intent ones |
| Backlink profiles | Where each site’s trust actually comes from | Ahrefs, Majestic, Semrush | Counting links instead of weighing them |
| Site structure | Whether organization helps content compound | Screaming Frog, Sitebulb, AI Site Audit | Treating structure as a one-time fix |
| Content performance | Which pages move outcomes | GA4, Google Search Console, Hotjar | Measuring volume instead of impact |
| AI search visibility | Who AI engines cite, mention, and recommend | Analyze AI, Profound, Peec AI | Assuming SEO rankings carry over to AI |

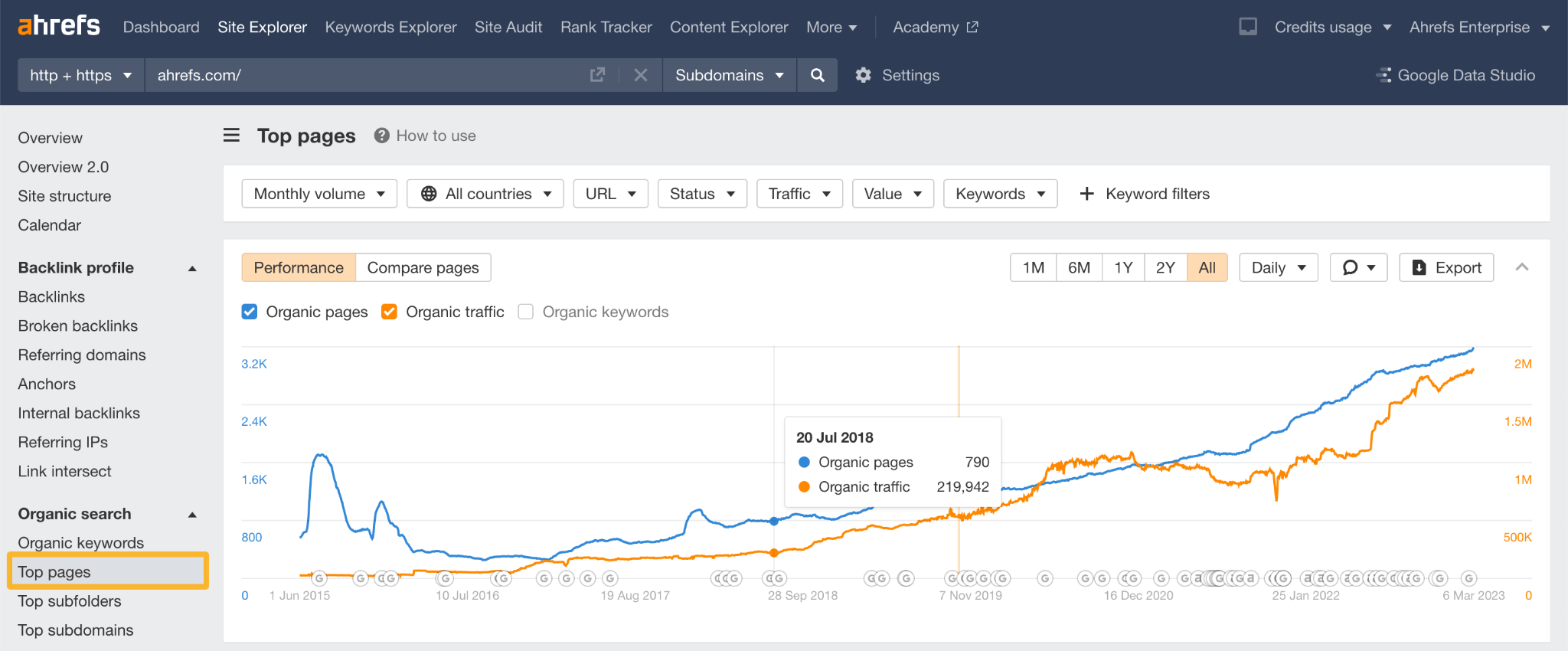

1. Compare organic traffic by curve, not by number

A site doing 80,000 visits a month looks better than one doing 50,000 until you see that the first is sliding 8 percent on the back of one declining page while the second is compounding across 200 pages at 4 percent a week.

Compare three things. The 12-to-24-month curve. Page concentration (five pages driving 60 percent of traffic is fragile). The top-page list (a viral one-off is borrowed growth, a category cluster is owned).

How to do it

- Plug competitor domains into Semrush, Ahrefs, or Similarweb.
- Pull the 18-month organic chart and top 100 pages report for each.
- Calculate page concentration. If the top 5 hold more than 50 percent, it is a concentration risk.
- Validate your own numbers against Google Search Console. Third-party tools are directional. GSC is ground truth, but only for sites you have verified.
- Flag pages that grew 200 percent or more in the last 90 days. Reverse-engineer those.
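The concentration and growth checks in the steps above come down to simple arithmetic. Here is a minimal Python sketch, assuming you have exported per-page visit estimates into plain dictionaries. The function names and the sample numbers are illustrative, not part of any tool's API.

```python
from typing import Dict, List


def page_concentration(visits_by_page: Dict[str, float], top_n: int = 5) -> float:
    """Return the share (0.0 to 1.0) of total visits held by the top_n pages."""
    total = sum(visits_by_page.values())
    if total <= 0:
        return 0.0
    top = sorted(visits_by_page.values(), reverse=True)[:top_n]
    return sum(top) / total


def fast_growers(previous: Dict[str, float], current: Dict[str, float],
                 min_growth: float = 2.0) -> List[str]:
    """Return pages whose visits grew by min_growth or more (2.0 = 200 percent).

    Pages with no earlier traffic are skipped because growth is undefined.
    """
    flagged = []
    for url, now in current.items():
        before = previous.get(url, 0)
        if before > 0 and (now - before) / before >= min_growth:
            flagged.append(url)
    return flagged


# Hypothetical export: estimated monthly organic visits per page.
pages = {"/guide": 400, "/pricing": 200, "/blog/a": 100, "/blog/b": 100,
         "/blog/c": 100, "/blog/d": 50, "/blog/e": 50}
share = page_concentration(pages)
if share > 0.5:
    print(f"Concentration risk: top 5 pages hold {share:.0%} of visits")
```

Run `fast_growers` with a snapshot from 90 days ago and one from today to get the list of pages worth reverse-engineering.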

For deeper walkthroughs, read Check Competitor Website Traffic: 17 Smart Ways or run the free Website Traffic Checker.

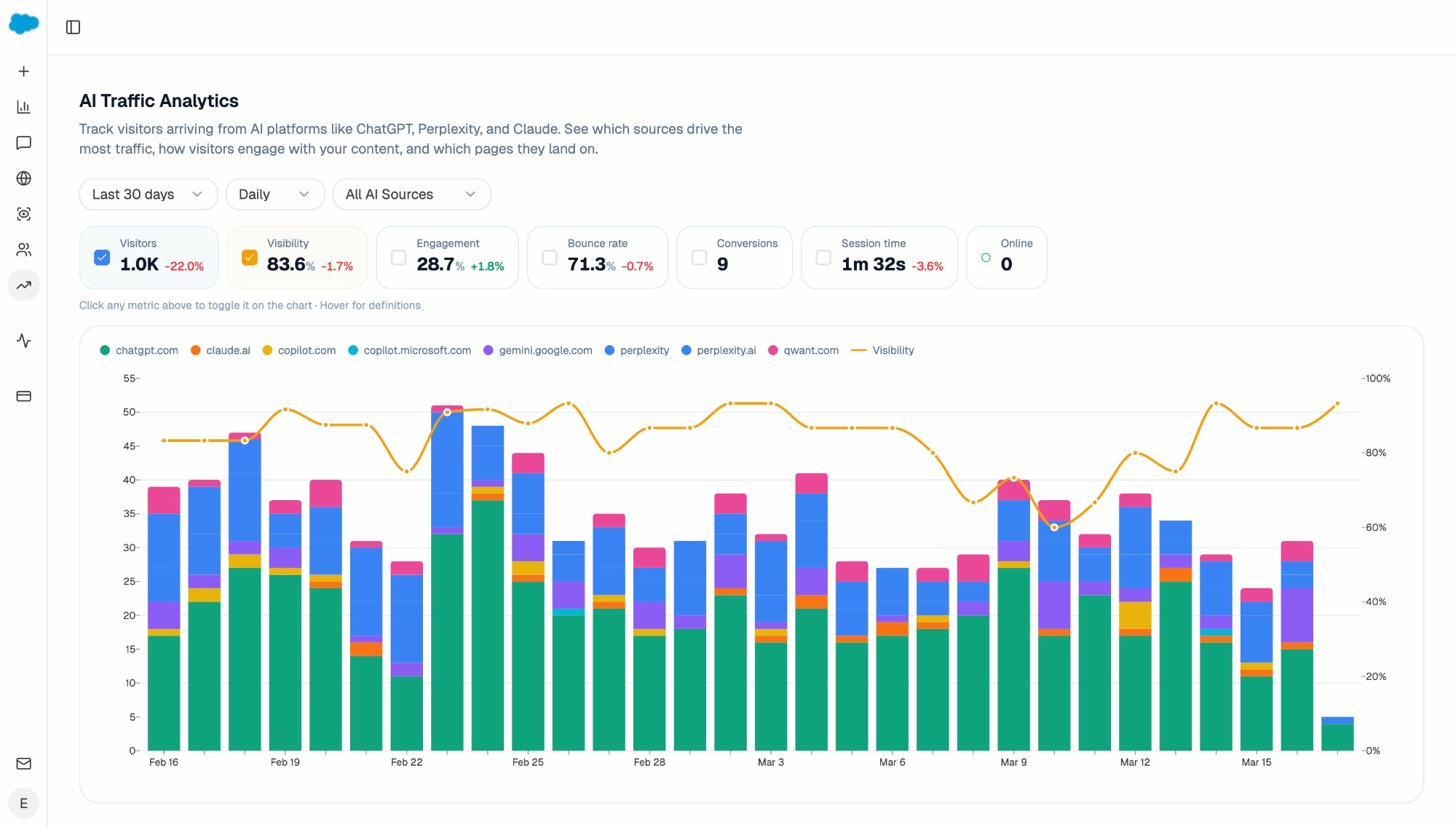

The parallel for AI search

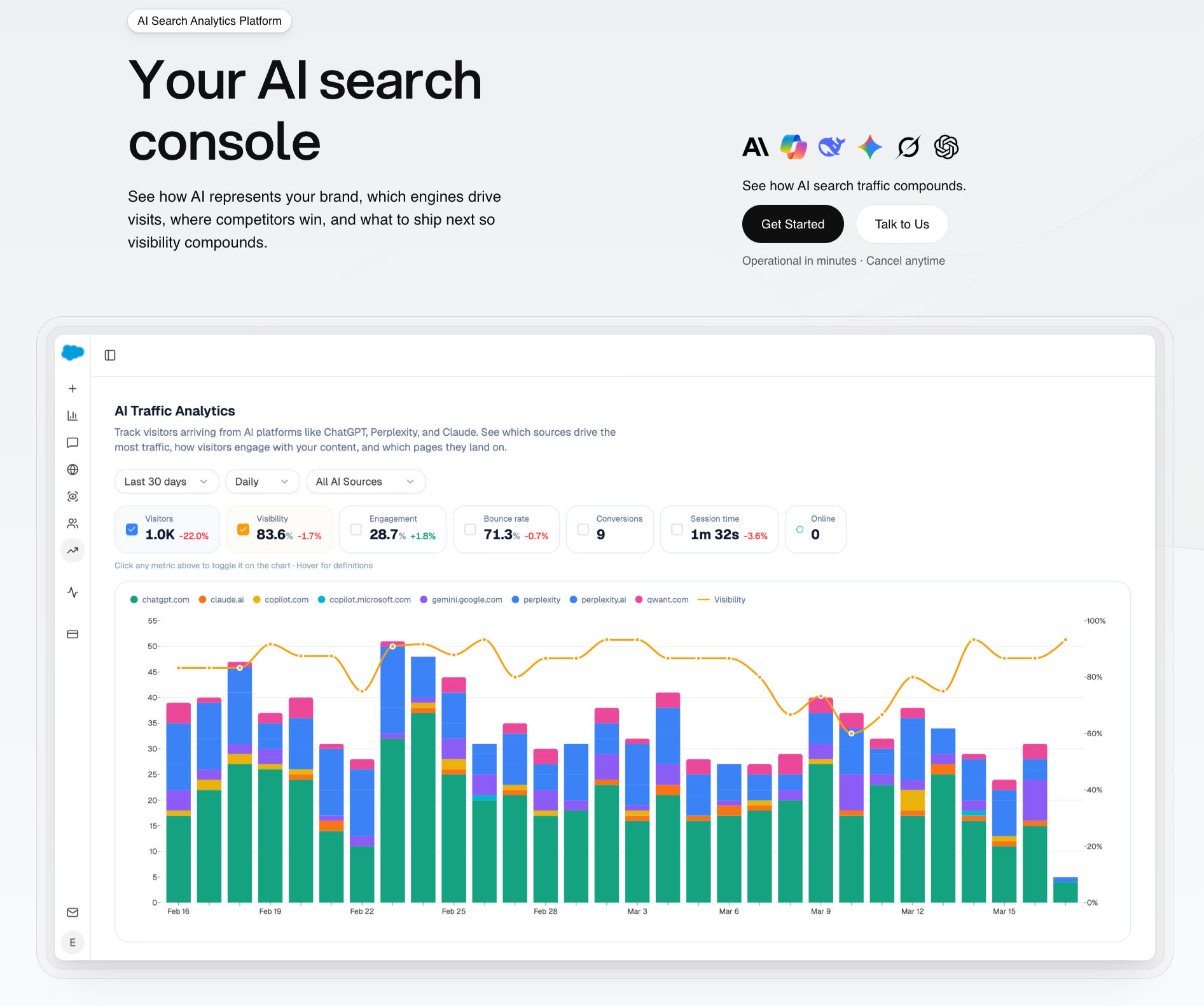

A site can lose 15 percent of Google clicks and gain 30 percent in AI referral traffic from ChatGPT, Perplexity, and Claude. Break GA4 sessions out by referrer hostname (chatgpt.com, perplexity.ai, claude.ai, gemini.google.com, copilot.microsoft.com) to see your own. To compare against competitors, AI Traffic Analytics gives you visitors, engagement, bounce, conversions, and session duration per engine.
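If you export referrer hostnames and session counts from GA4, the grouping can be sketched in a few lines of Python. The hostname list comes from the paragraph above. The `(hostname, sessions)` row shape and the function names are assumptions for illustration, so adapt them to whatever your export looks like.

```python
from collections import Counter
from typing import Dict, Iterable, Optional, Tuple

# Referrer hostnames named in the article, mapped to a display label.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def ai_engine(hostname: str) -> Optional[str]:
    """Map a referrer hostname to an AI engine label, or None if it is not one."""
    host = hostname.lower().strip()
    if host.startswith("www."):
        host = host[4:]
    for domain, engine in AI_REFERRERS.items():
        if host == domain or host.endswith("." + domain):
            return engine
    return None


def sessions_by_engine(rows: Iterable[Tuple[str, int]]) -> Dict[str, int]:
    """Sum sessions per AI engine from (referrer_hostname, sessions) rows."""
    totals: Counter = Counter()
    for hostname, sessions in rows:
        engine = ai_engine(hostname)
        if engine is not None:
            totals[engine] += sessions
    return dict(totals)


# Hypothetical rows from a GA4 export.
rows = [("chatgpt.com", 120), ("google.com", 5400), ("www.perplexity.ai", 45)]
print(sessions_by_engine(rows))
```

Non-AI referrers such as `google.com` are dropped, so the result only shows the AI slice of your traffic.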

Trap. Comparing one month to another. Use rolling 90-day averages.
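A rolling 90-day average is easy to compute from a daily series. The sketch below assumes a plain list of daily visit counts in date order. The function name is illustrative.

```python
from typing import List, Optional


def rolling_mean(daily: List[float], window: int = 90) -> List[Optional[float]]:
    """Trailing average over `window` days.

    Positions that do not yet have a full window return None, so a short
    history is never mistaken for a trend.
    """
    averages: List[Optional[float]] = []
    running = 0.0
    for i, value in enumerate(daily):
        running += value
        if i >= window:
            # Drop the day that just fell out of the window.
            running -= daily[i - window]
        averages.append(running / window if i >= window - 1 else None)
    return averages


# Small window so the behaviour is easy to see.
print(rolling_mean([1, 2, 3, 4], window=2))  # [None, 1.5, 2.5, 3.5]
```

Compare the latest value with the value from 90 days earlier for each site, rather than comparing two calendar months.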

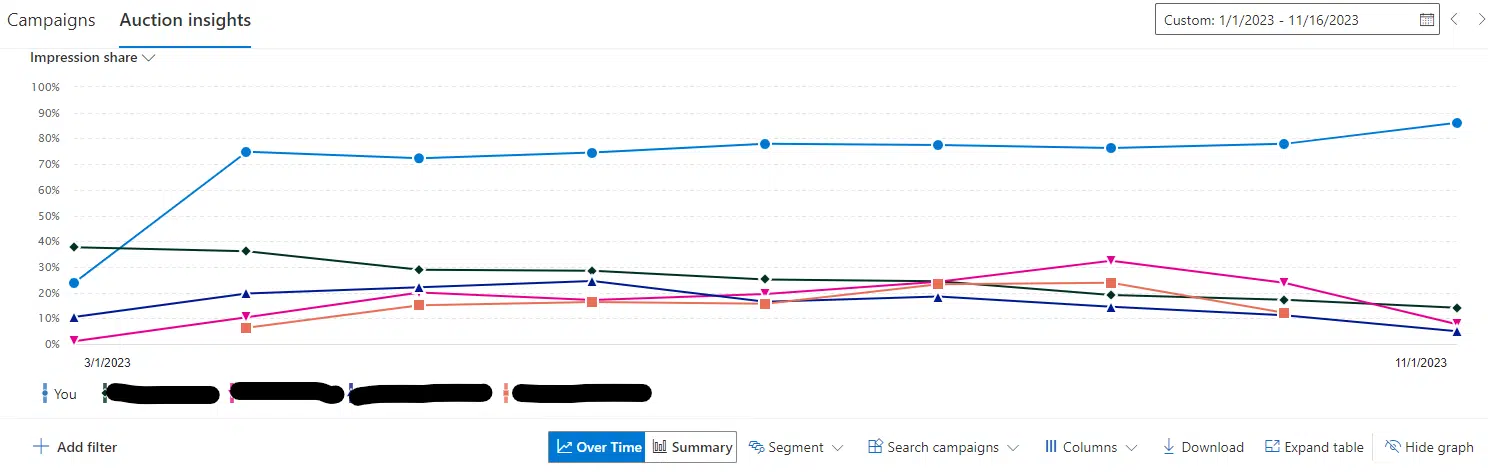

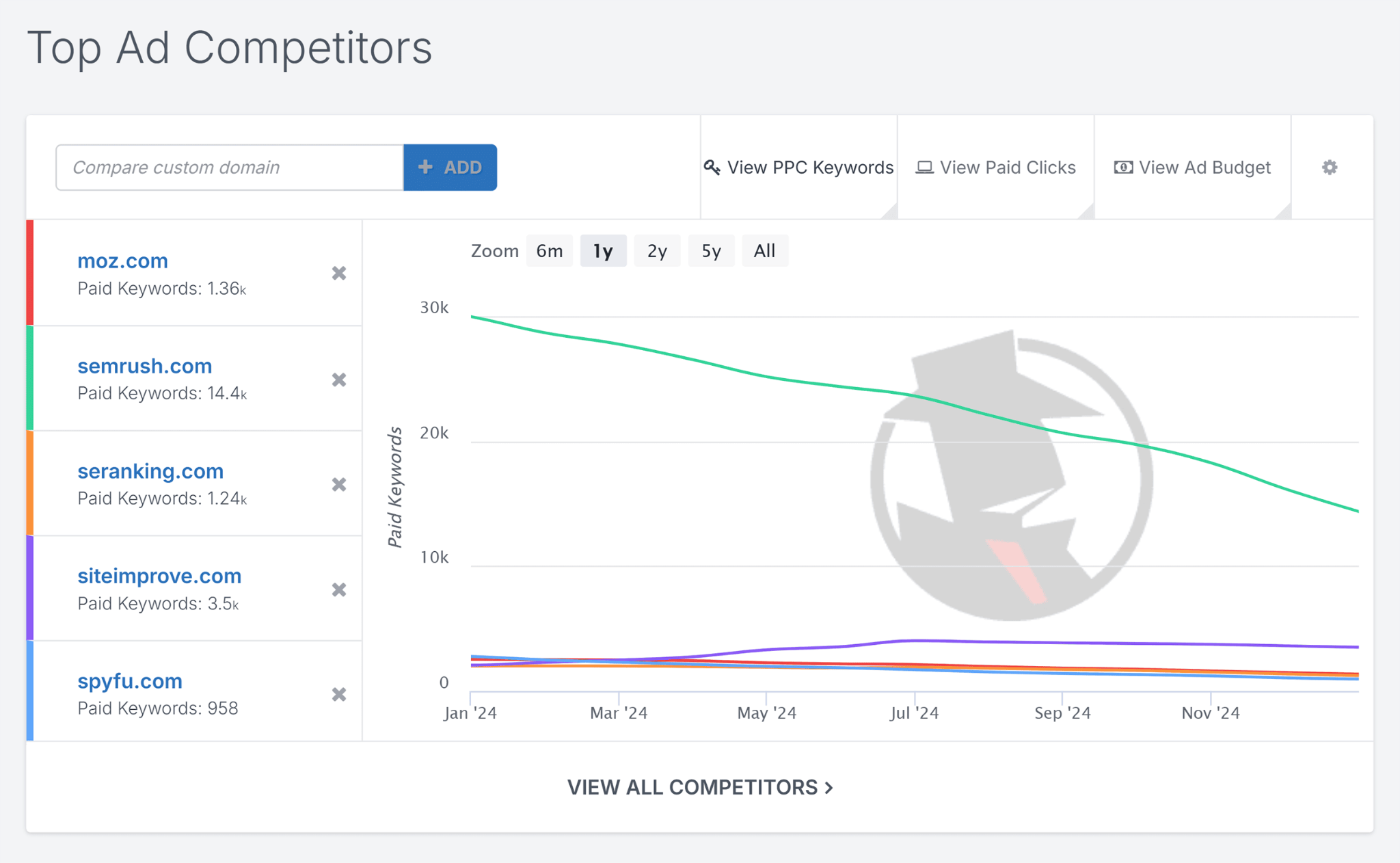

2. Compare paid visibility to see where money actually buys results

Paid data tells you which keywords competitors believe convert. A term running in a competitor’s account for six straight months is either making them money or defending pipeline.

How to do it

- Open Google Ads Auction Insights for any campaign you already run. You get ground-truth data for searches you are in.
- Use SpyFu or iSpionage for keywords you do not yet bid on. Pull 12 months of ad history per competitor.
- Sort by months of consecutive presence. The longer a term stays, the more likely it converts.
- Open the top three landing pages they use. Note headline, offer, social proof, and CTA structure.
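Sorting by consecutive presence can be sketched as follows, assuming you have turned the ad history into one list of booleans per keyword (oldest month first, `True` when an ad ran that month). That data shape and the function names are assumptions, not an export format from SpyFu or iSpionage.

```python
from typing import Dict, List, Tuple


def longest_streak(months_active: List[bool]) -> int:
    """Length of the longest run of consecutive months with an ad running."""
    best = current = 0
    for active in months_active:
        current = current + 1 if active else 0
        best = max(best, current)
    return best


def rank_by_persistence(history: Dict[str, List[bool]]) -> List[Tuple[str, int]]:
    """Return (keyword, longest streak) pairs, longest streak first."""
    streaks = {kw: longest_streak(months) for kw, months in history.items()}
    return sorted(streaks.items(), key=lambda item: item[1], reverse=True)


# Hypothetical 6-month history for two keywords.
history = {
    "crm software": [True, True, True, True, True, True],
    "free crm": [True, False, True, True, False, False],
}
for keyword, streak in rank_by_persistence(history):
    print(keyword, streak)
```

A term with a six-month streak is a stronger signal than one that appears six times with gaps, which is why the streak is measured instead of the total count.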

Read PPC Spying: 8 Ways to Analyze Competitors’ Ads for the template.

The parallel for AI search

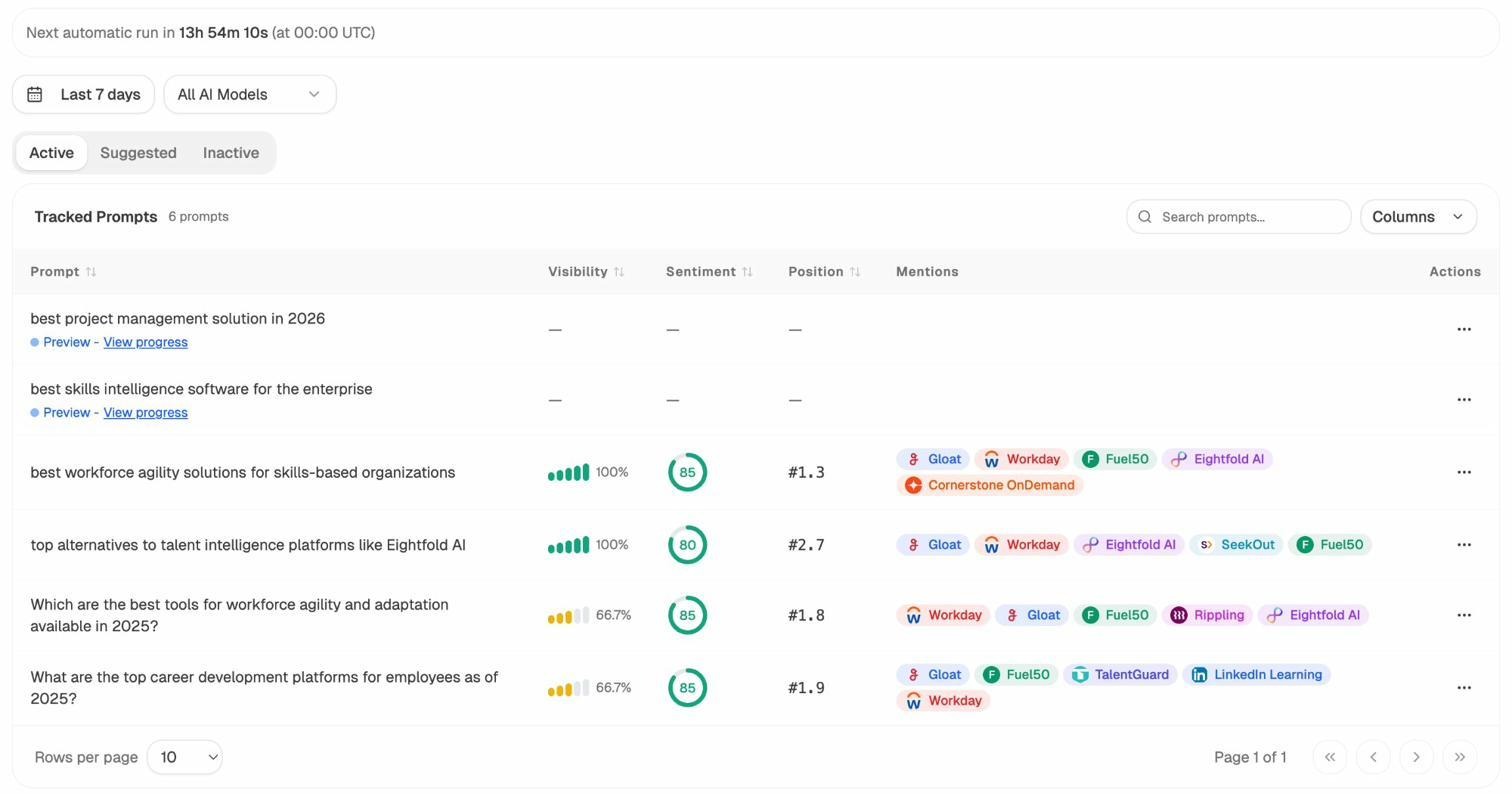

AI engines do not run ads yet, but the question still applies. Which buyer prompts do competitors win week after week on the highest-intent queries? Prompt Tracking shows which prompts each competitor wins, their visibility percentage, position, and sentiment inside the answer.

Trap. Assuming a competitor’s bid means you should match it. Treat paid data as signal.

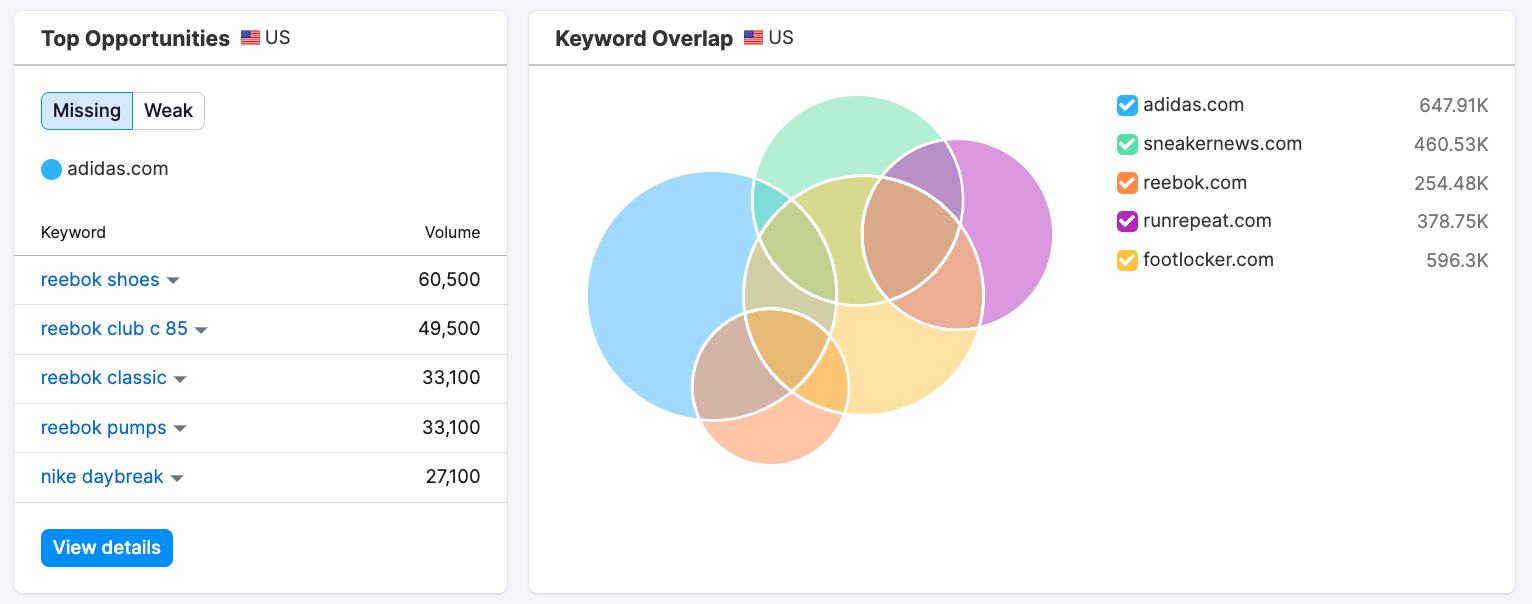

3. Compare keyword ownership, not keyword overlap

Stop comparing overlap. Compare ownership. Ownership means top-5 positions on terms that drive outcomes. Two sites can share 800 keywords, but if one owns the 80 with commercial intent and the other owns the 720 informational ones, they are tenants in different buildings.

How to do it

-

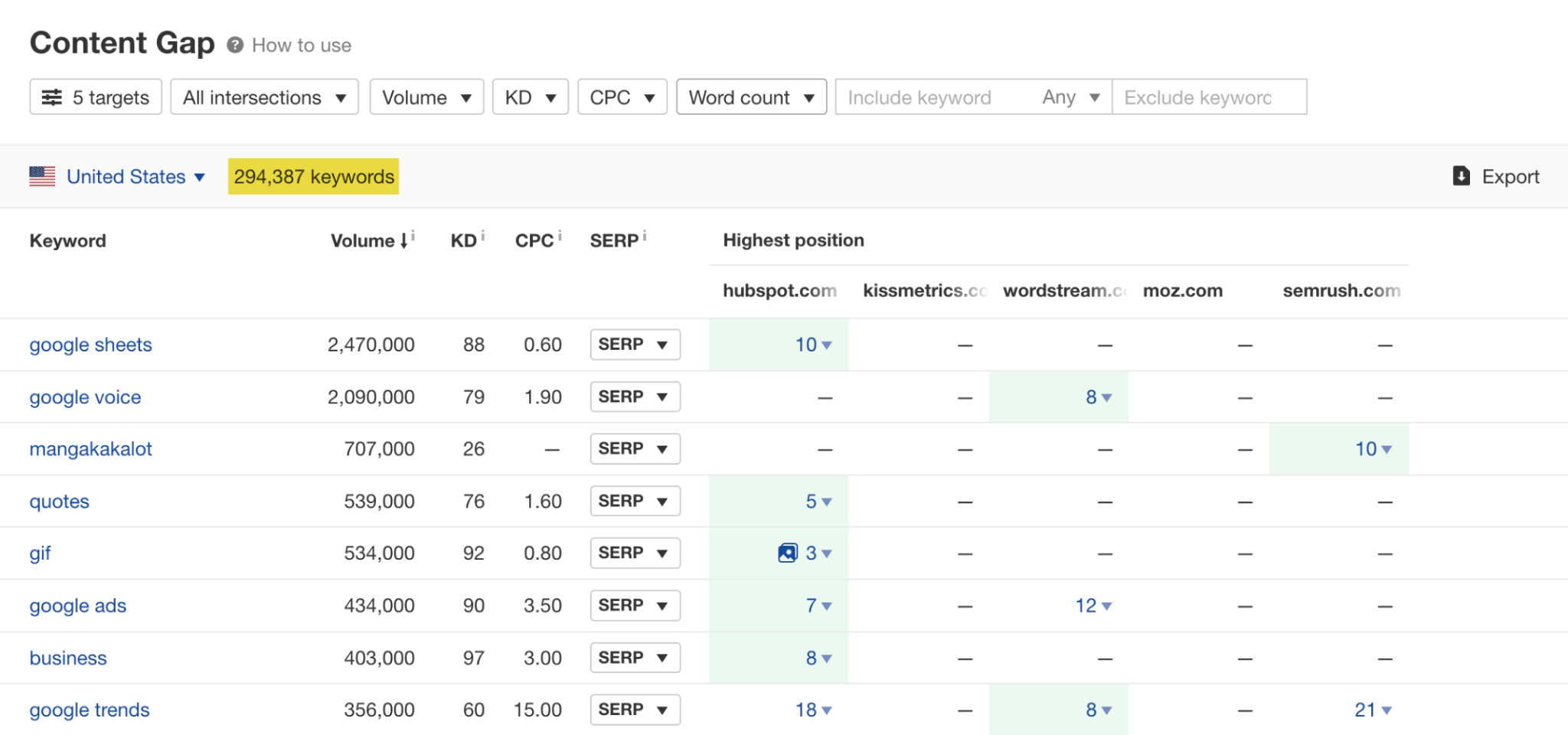

Run a keyword gap report in Ahrefs, Semrush, or SE Ranking.

-

Filter hard. Keep keywords where a competitor ranks 1 to 5. Drop anything under 50 monthly searches unless clearly buyer-intent.

-

Tag every remaining keyword by intent. Navigational, informational, commercial investigation, transactional. The last two move pipeline.

-

Build a plan ranked by intent, not volume. A 320-search “best X for Y” keyword out-converts a 6,000-search “what is X” keyword.
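The filter-and-rank steps above can be sketched in Python once the gap report is exported and tagged. The `Keyword` fields, the function names, and the exact priority order between the four intents are assumptions made for illustration. The article only says the last two intents move pipeline.

```python
from dataclasses import dataclass
from typing import List

# Assumed ordering: lower number = planned first.
INTENT_PRIORITY = {
    "transactional": 0,
    "commercial investigation": 1,
    "informational": 2,
    "navigational": 3,
}
BUYER_INTENTS = {"transactional", "commercial investigation"}


@dataclass
class Keyword:
    term: str
    competitor_rank: int
    monthly_searches: int
    intent: str


def shortlist(keywords: List[Keyword], max_rank: int = 5,
              min_searches: int = 50) -> List[Keyword]:
    """Keep terms a competitor ranks 1..max_rank for, drop low-volume terms
    unless they are buyer-intent, then order by intent before volume."""
    kept = [
        kw for kw in keywords
        if 1 <= kw.competitor_rank <= max_rank
        and (kw.monthly_searches >= min_searches or kw.intent in BUYER_INTENTS)
    ]
    return sorted(
        kept,
        key=lambda kw: (INTENT_PRIORITY.get(kw.intent, 99), -kw.monthly_searches),
    )


# Hypothetical rows from a keyword gap export.
report = [
    Keyword("what is crm", 2, 6000, "informational"),
    Keyword("best crm for startups", 3, 320, "commercial investigation"),
    Keyword("crm pricing", 8, 900, "transactional"),
]
for kw in shortlist(report):
    print(kw.term, kw.intent, kw.monthly_searches)
```

Here the 320-search commercial term lands above the 6,000-search informational one, and the rank-8 term is dropped.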

For depth, read How to Do a Competitive Analysis (With Template) and 9 Keyword Research Tools to Try, or run the free Keyword Generator and Keyword Difficulty Checker.

The parallel for AI search

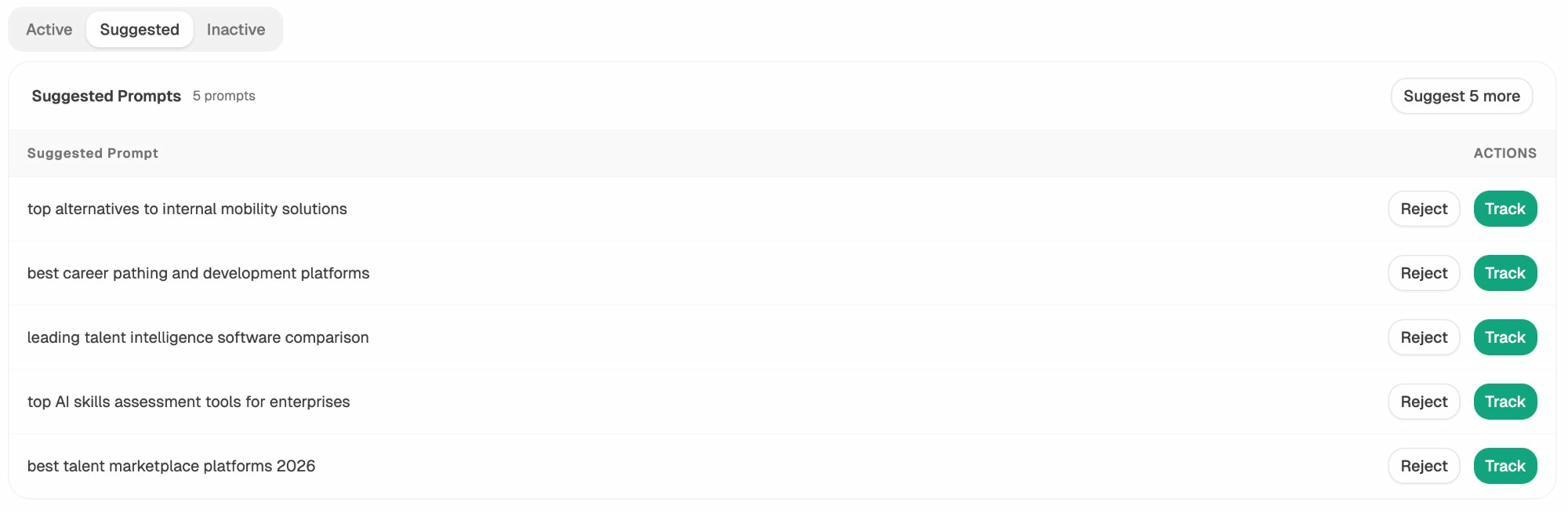

Keywords are to Google what prompts are to AI engines. The buyer searching “best CRM for small business” on Google types “what is the best CRM for a 12-person SaaS company in 2026” into ChatGPT. Same intent. Different format.

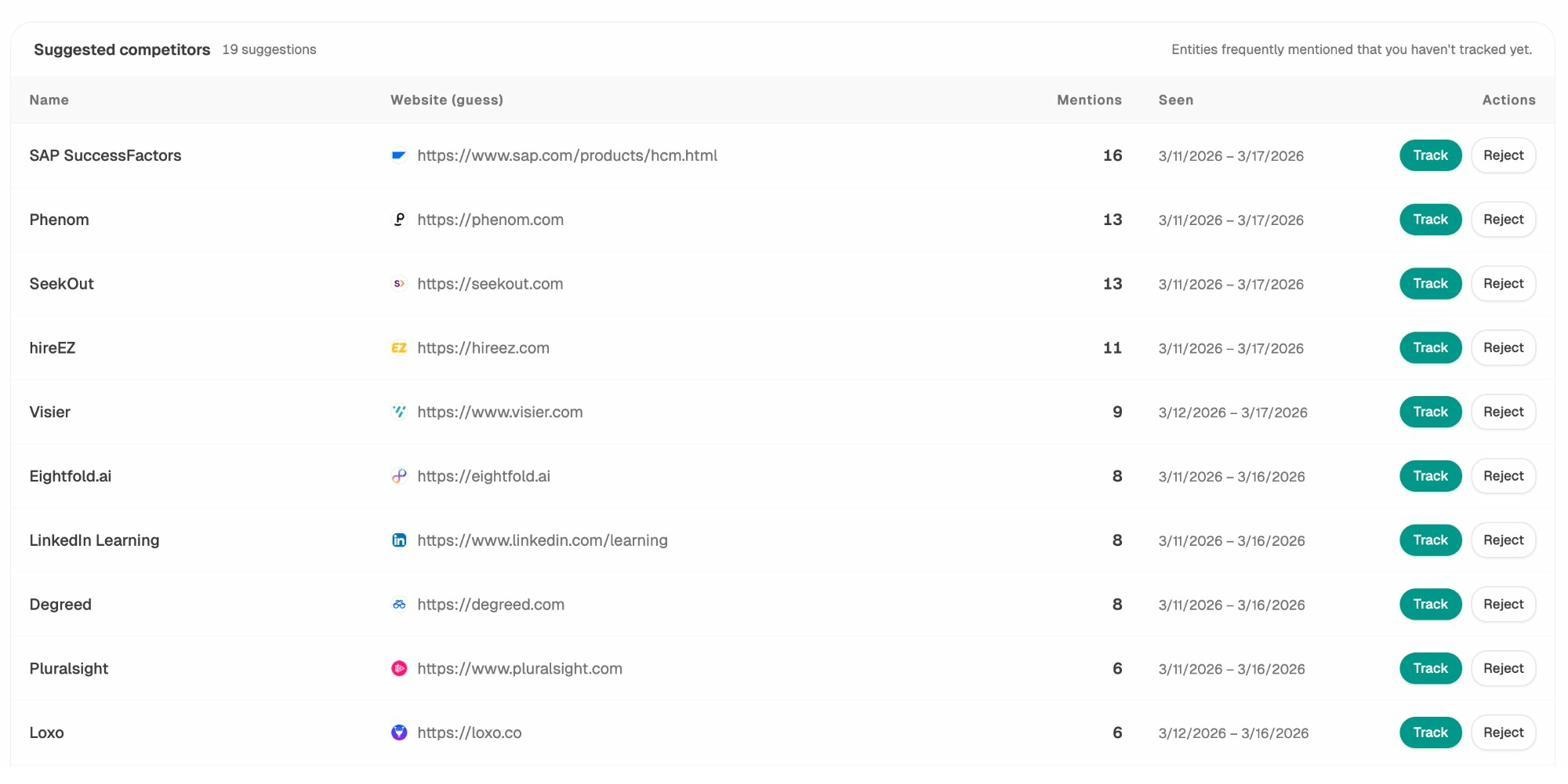

A prompt gap analysis now matters as much as a keyword gap analysis. Suggested Prompts surfaces the bottom-funnel prompts your buyers actually use.

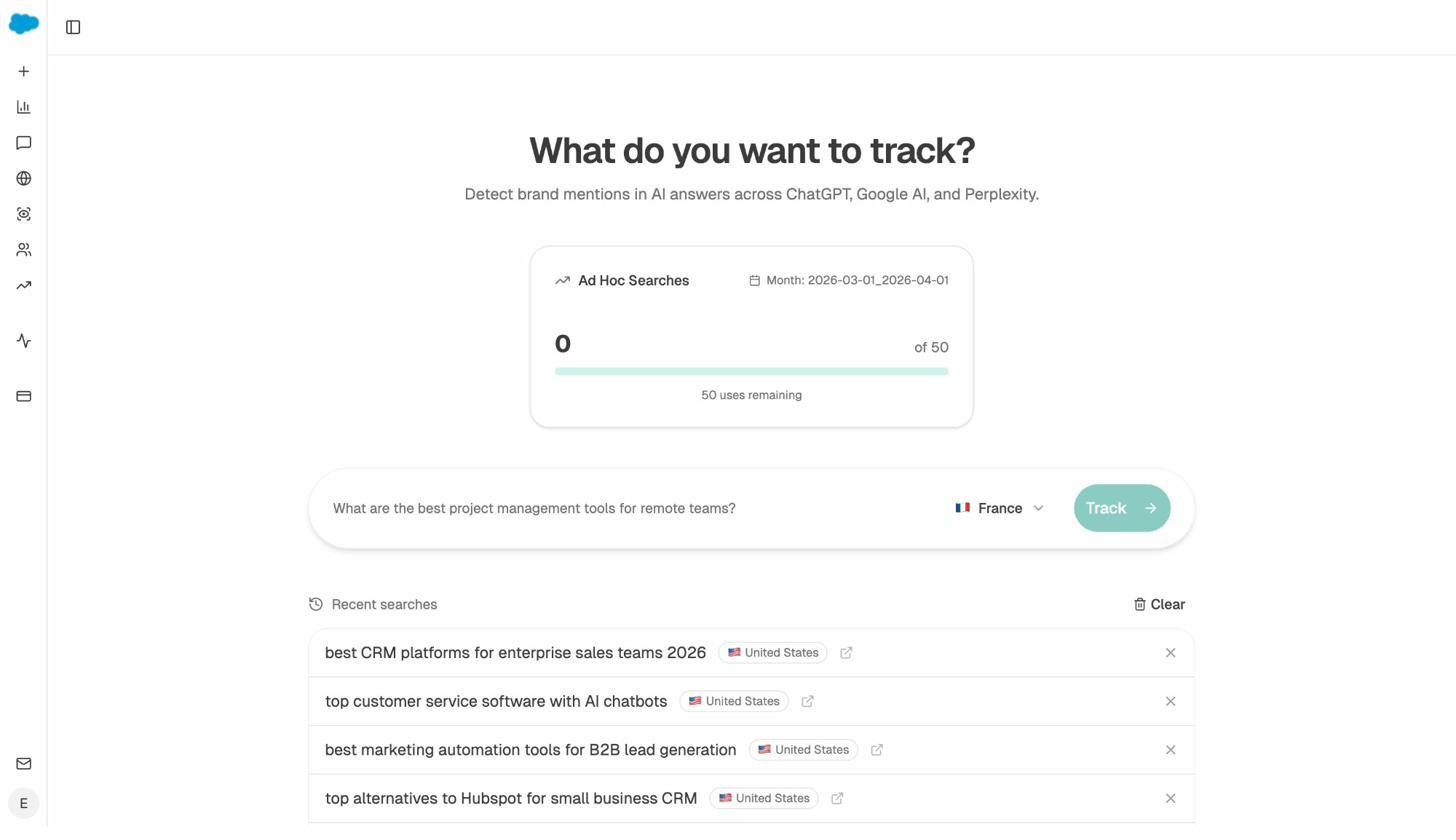

To spot-check a single prompt before tracking it, the Ad Hoc Prompt Search runs it across every major engine in one click.

Trap. Treating “shared keywords” as “competitive threat.” Filter to top-5 ownership on commercial intent and the real competitor list usually shrinks from 20 sites to 3.

4. Compare backlink profiles by weight, not by count

A profile with 50,000 directory submissions and a profile with 800 editorial links from publications buyers actually read are not the same profile. The first is decorative. The second compounds.

Three questions matter. Where does authority concentrate? Is growth editorial or one-time spikes? How diverse is the link neighborhood?

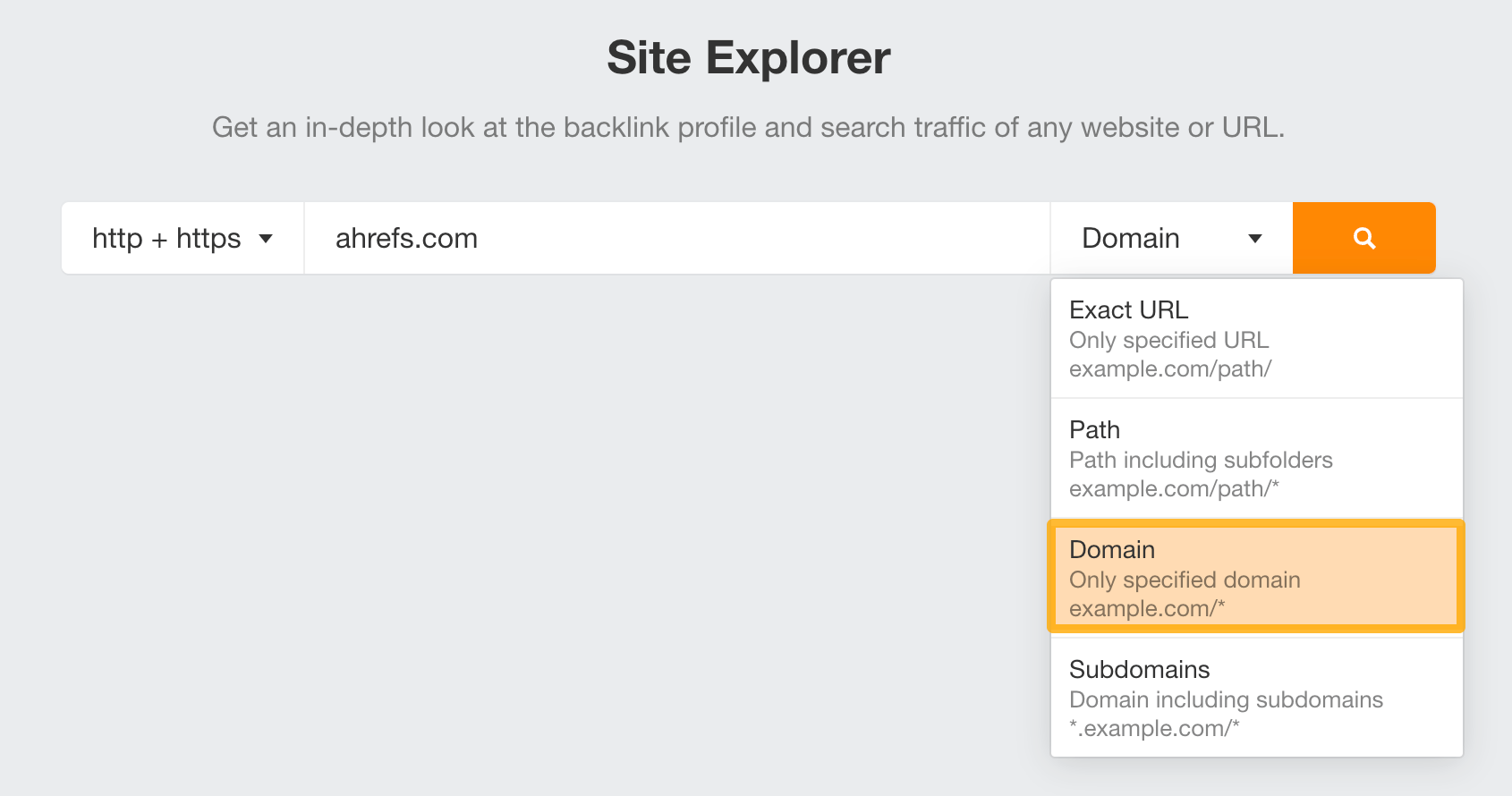

How to do it

- Pull each competitor’s backlink profile in Ahrefs, Majestic, or Semrush.
- Sort by authority and read the top 50 referring domains manually.
- Identify each competitor’s “link magnets,” the pages attracting the most authority. Reverse-engineer why they earn links (data study, original framework, free tool, definitive guide).
- Build your own version with information gain. A fresher dataset, a sharper angle, an interactive component.

Read How to Find Your Competitors’ Backlinks and How to Do a Backlink Gap Analysis. For a quick authority read, use the free Website Authority Checker.

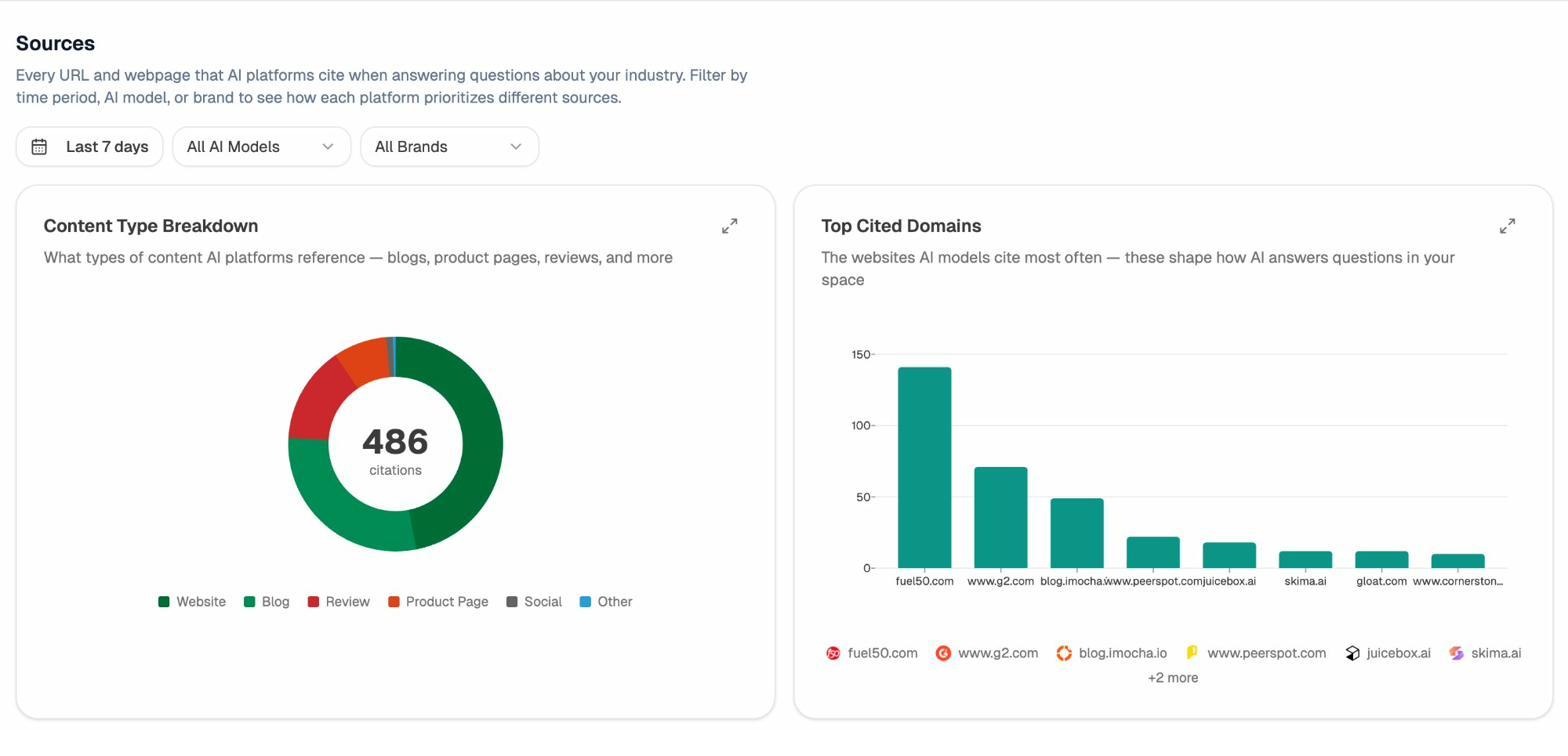

The parallel for AI search

AI engines do not count backlinks, but they have a citation equivalent. They pull from a recurring set of trusted domains, and those are not always the high-DR (Domain Rating) sites that win on Google. G2, Reddit, YouTube, niche review sites, and customer case study pages often carry more weight inside an AI answer than a generic SEO blog.

Citation Analytics shows every URL AI engines cite for questions in your category, broken down by engine, frequency, and which brands ride along.

When 28 percent of your category’s citations route through three review sites and a Reddit thread, that tells you exactly where to invest in PR.
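To see how concentrated your category's citations are, you can tally cited URLs by domain. This is a minimal sketch that assumes you have a flat list of cited URLs (including the scheme) collected from AI answers. The function name and sample URLs are hypothetical.

```python
from collections import Counter
from typing import List, Tuple
from urllib.parse import urlparse


def citation_share(cited_urls: List[str]) -> List[Tuple[str, float]]:
    """Return (domain, share of all citations) pairs, most cited first."""
    domains: Counter = Counter()
    for url in cited_urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:  # skip entries that are not full URLs
            domains[host] += 1
    total = sum(domains.values())
    if total == 0:
        return []
    return [(domain, count / total) for domain, count in domains.most_common()]


# Hypothetical citations gathered from a set of category prompts.
urls = [
    "https://www.g2.com/categories/crm",
    "https://g2.com/products/example/reviews",
    "https://www.reddit.com/r/sales/comments/abc",
    "https://example.com/blog/best-crm",
]
for domain, share in citation_share(urls):
    print(f"{domain}: {share:.0%}")
```

The domains at the top of this list are the ones where review presence or PR effort is most likely to change what AI answers cite.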

Trap. Chasing DR averages. A DR-40 site with 12 citations on the top review site in your category will out-convert a DR-60 site with zero category presence.

5. Compare site structure (the hidden multiplier most teams ignore)

Two competitors can publish the same articles and win the same backlinks. One pulls ahead by 30 percent. The variable is usually structure. How internal links route authority, how categories cluster topics, and whether a crawler or LLM can understand the site in one pass.

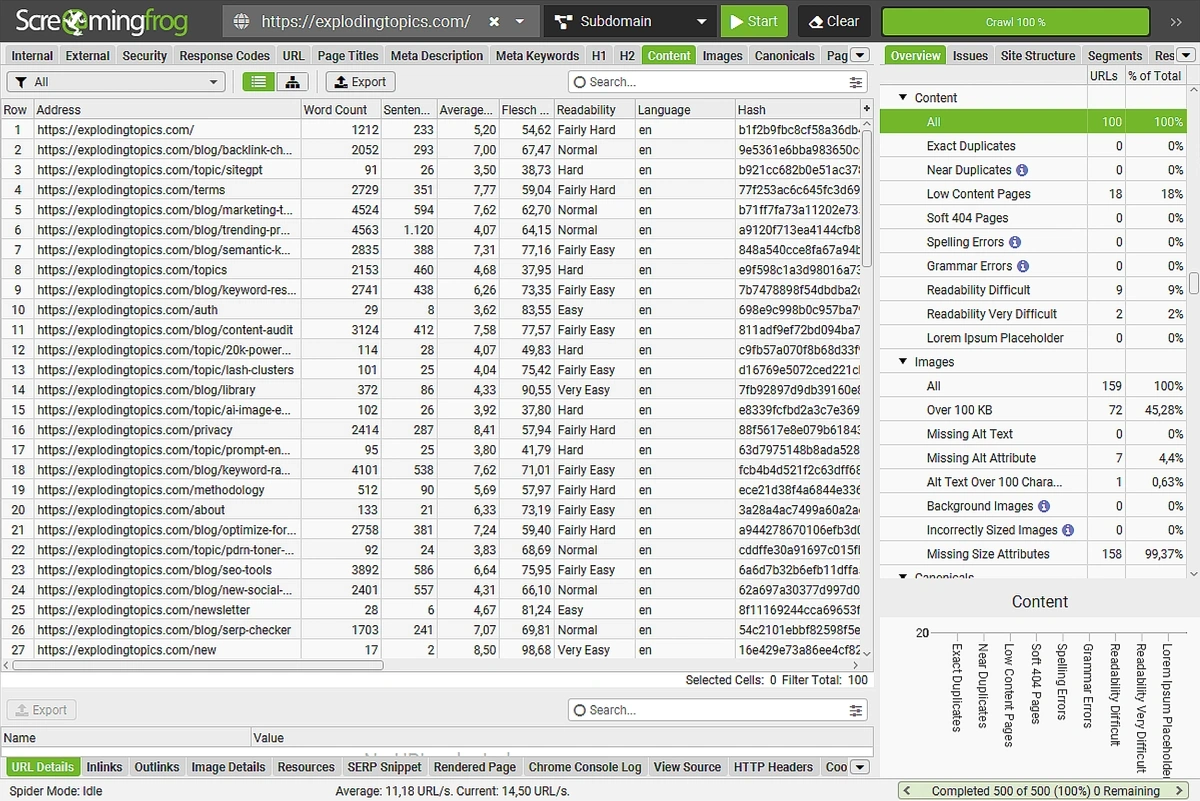

How to do it

- Crawl the competitor in Screaming Frog or Sitebulb at depth 5.
- Sort by internal link count. The top 20 most-linked pages reveal what they want to rank.
- Find pages with zero internal links. 200 orphans signal structural neglect.
- Run the same scan on your site. Clean dead links with the Broken Link Checker, then run the AI Website Audit Tool to catch issues that hurt AI parsing.
- Map your hub-and-spoke against theirs. Where they cluster around a category page you do not, that is your next pillar.
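The most-linked and orphan checks above can be reproduced from any crawl export. The sketch below assumes two inputs: a list of every known URL (for example from the sitemap) and a list of `(source, target)` internal link pairs. Those shapes and the function names are assumptions, not the export format of Screaming Frog or Sitebulb.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def inlink_counts(pages: Iterable[str],
                  links: Iterable[Tuple[str, str]]) -> Counter:
    """Count internal links pointing at each known page (self-links ignored)."""
    counts: Counter = Counter({page: 0 for page in pages})
    for source, target in links:
        if target in counts and source != target:
            counts[target] += 1
    return counts


def orphans(pages: Iterable[str], links: Iterable[Tuple[str, str]]) -> List[str]:
    """Pages that no other page links to."""
    counts = inlink_counts(pages, links)
    return sorted(page for page, n in counts.items() if n == 0)


def most_linked(pages: Iterable[str], links: Iterable[Tuple[str, str]],
                top_n: int = 20) -> List[Tuple[str, int]]:
    """The top_n pages by inbound internal links."""
    return inlink_counts(pages, links).most_common(top_n)


# Hypothetical four-page site.
pages = ["/", "/pricing", "/blog/a", "/blog/b"]
links = [("/", "/pricing"), ("/blog/a", "/pricing"), ("/pricing", "/"), ("/", "/blog/a")]
print(most_linked(pages, links, top_n=1))  # [('/pricing', 2)]
print(orphans(pages, links))               # ['/blog/b']
```

Run it on a competitor crawl and on your own, then compare the two most-linked lists side by side.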

The parallel for AI search

AI engines parse pages differently than Google. They reward clear claims, dense supporting evidence, and clean structural cues. FAQ schema, table-of-contents anchors, heading hierarchy, and short summary paragraphs all help. Structural rigor that helps a crawler also helps an LLM cite you accurately. Read the SEO and AI Visibility Checklist for the breakdown.

Trap. Treating structure as a one-time project. Re-audit every six months.

6. Compare content performance, not content volume

Teams that publish 12 posts a month rarely beat teams that publish 4. High-output teams measure “did we publish.” Low-output teams measure “did this piece move pipeline.” Three metrics matter. Engagement, conversion contribution, citation magnetism.

How to do it

- Pull each competitor’s top 50 organic pages from Ahrefs or Semrush.
- Cross-reference against indexed page count. 200 pages with 50 top performers runs at 25 percent efficiency. 2,000 pages with 50 top performers runs at 2.5 percent.
- Read 5 of their winners. Identify the pattern (format, depth, originality nugget, internal link structure).
- On your own site, flag pages down 30 percent or more in the last 90 days. Those are your refresh candidates.
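The efficiency ratio and the refresh flag in the steps above are both one-line calculations. A minimal sketch, assuming you have the page counts and two per-page traffic snapshots as dictionaries. The function names are illustrative.

```python
from typing import Dict, List


def content_efficiency(top_performers: int, indexed_pages: int) -> float:
    """Share of indexed pages that are top performers (0.0 to 1.0)."""
    if indexed_pages <= 0:
        raise ValueError("indexed_pages must be positive")
    return top_performers / indexed_pages


def refresh_candidates(previous: Dict[str, float], current: Dict[str, float],
                       min_drop: float = 0.30) -> List[str]:
    """Pages whose traffic fell by min_drop (0.30 = 30 percent) or more.

    A page missing from `current` is treated as having dropped to zero.
    """
    flagged = []
    for url, before in previous.items():
        if before <= 0:
            continue
        after = current.get(url, 0)
        if (before - after) / before >= min_drop:
            flagged.append(url)
    return flagged


print(f"{content_efficiency(50, 200):.1%}")   # 25.0%
print(f"{content_efficiency(50, 2000):.1%}")  # 2.5%
```

Pass a snapshot from 90 days ago as `previous` and today's numbers as `current` to get your refresh list.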

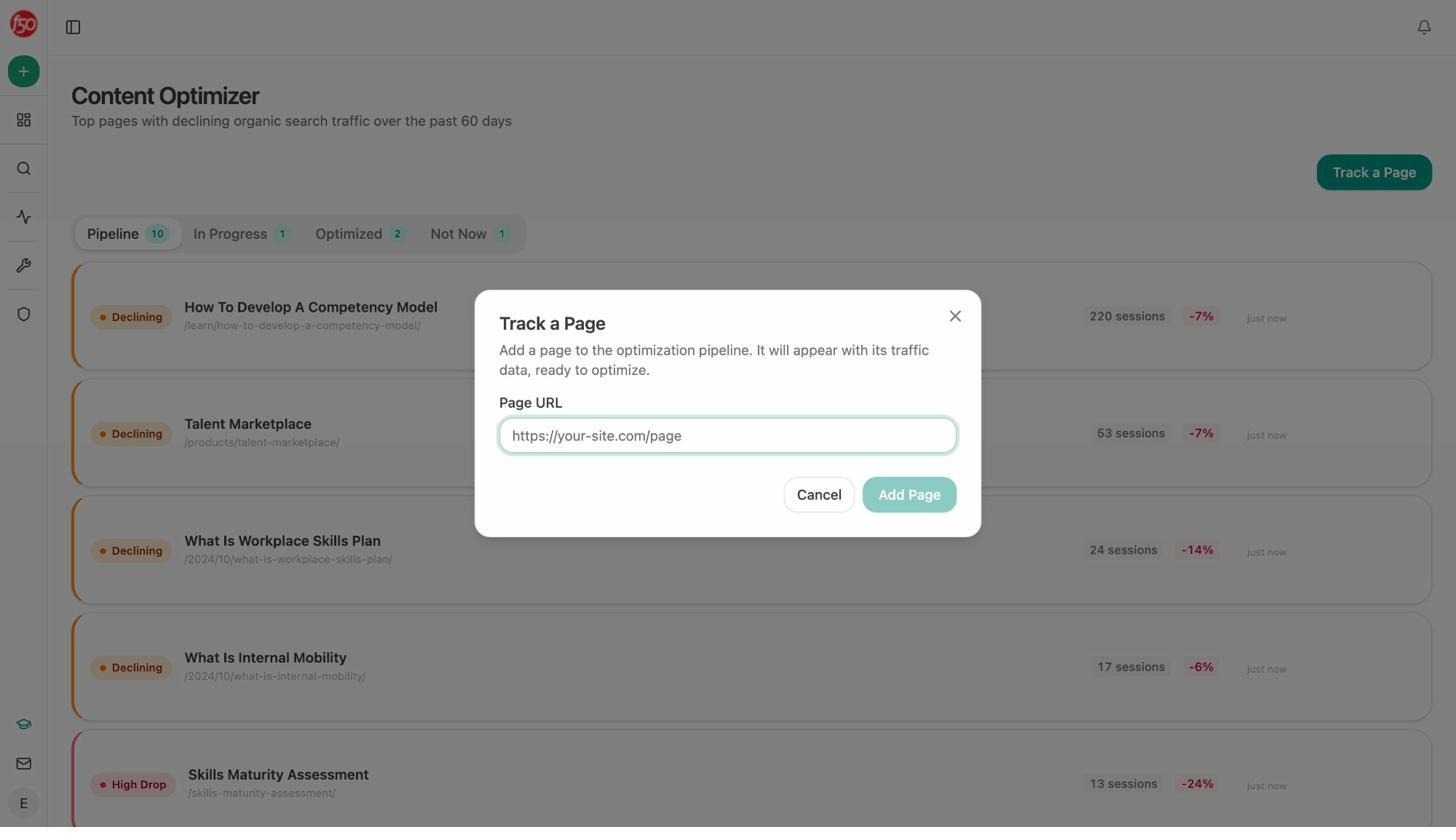

Run the full process with How to Do a Content Audit. Drop a declining page into Analyze AI’s Content Optimizer. It pulls the live page, surfaces gaps, and produces a rewrite that is intent-aligned and structurally clean.

The parallel for AI search

Which of your pages do models actually cite when buyers ask category questions? That list is not the same as your top 50 by Google traffic. Some of your best AI-cited pages have modest Google traffic because they do something LLMs reward (clear claims, specific data, original framing).

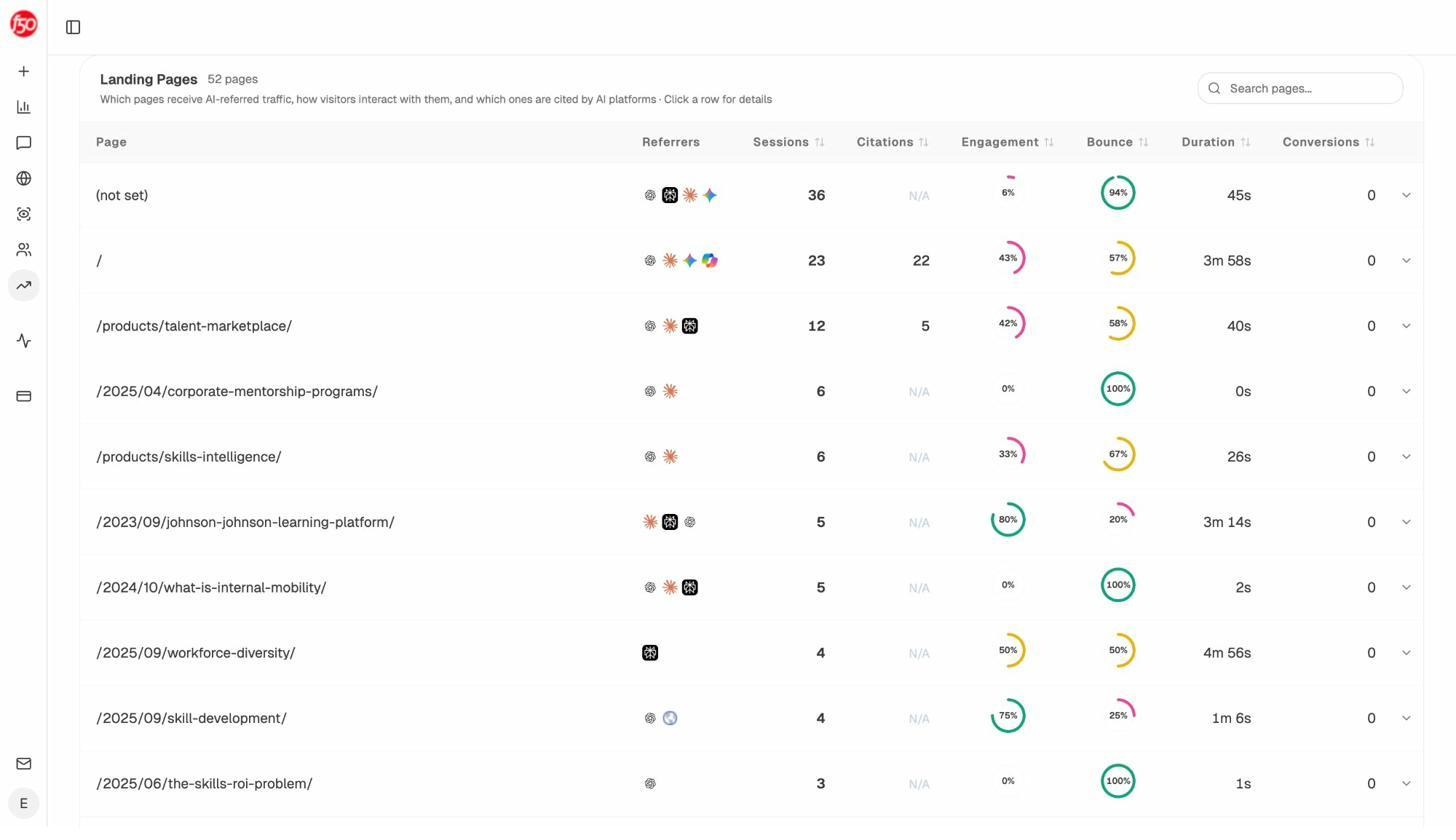

Analyze AI’s Landing Pages report shows which of your pages receive AI referral traffic, from which engines, with what citation count, and what conversions they drive.

A page with 36 sessions from 4 engines and a 94 percent bounce rate has a hook problem. A page with 23 sessions, 22 citations, and a 57 percent bounce has a conversion problem worth solving, because discovery is already working.

Trap. Comparing competitors by indexed page count. Volume is the laziest signal in content. Measure per page.

7. Compare visibility in AI answers

The first six methods compare websites where the click is the unit of analysis. This one compares them where the answer is. When a buyer asks ChatGPT for the “best customer support software for SaaS under 100 employees,” they get three to five named brands and two to four cited sources. That answer shapes pipeline whether anyone clicks.

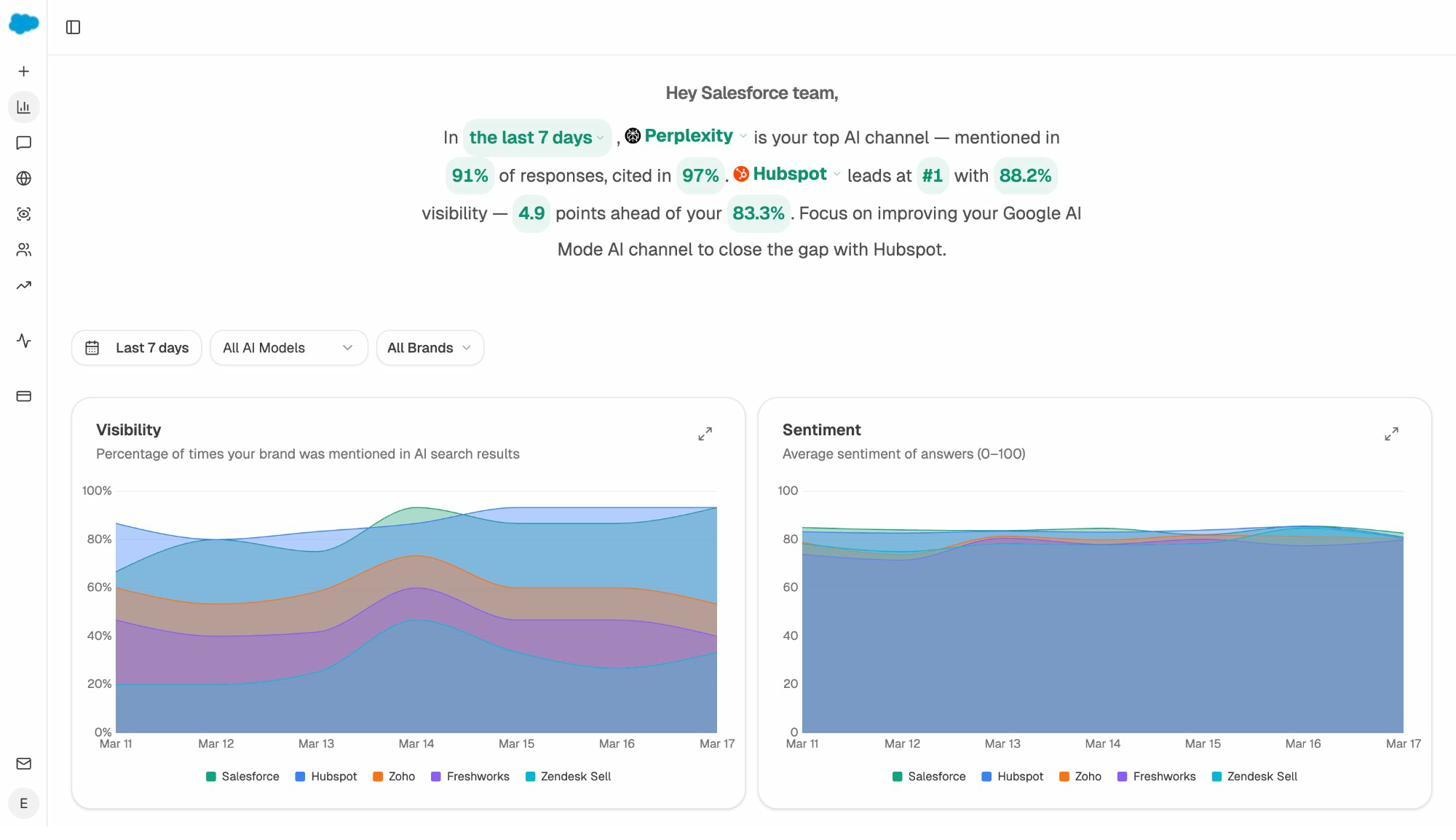

Compare four things across ChatGPT, Perplexity, Google AI Mode, Claude, Copilot, and Gemini. Visibility, sentiment, citations, share of voice.

How to do it

- Build a list of 20 to 50 buyer prompts. Start with prompts your sales team already hears.
- Run each across the major AI engines. Note which brands appear, in which order, with what framing.
- Repeat weekly. AI answers shift faster than Google rankings.
- Track citations. When a model mentions your brand, where did the supporting URL come from? That citation reveals your real authority signal.
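If you log each answer by hand, two of the four metrics can be computed from a simple record per prompt and engine. The definitions below are assumptions for illustration, since the article does not define them: visibility is the fraction of answers that name a brand, and share of voice is a brand's mentions divided by all brand mentions. The record shape and function names are also hypothetical.

```python
from collections import Counter
from typing import Dict, List


def visibility(answers: List[dict], brand: str) -> float:
    """Fraction of recorded answers that mention the brand at least once."""
    if not answers:
        return 0.0
    hits = sum(1 for answer in answers if brand in answer["brands"])
    return hits / len(answers)


def share_of_voice(answers: List[dict]) -> Dict[str, float]:
    """Each brand's mentions as a share of all brand mentions.

    A brand counts once per answer, however often it is repeated.
    """
    mentions: Counter = Counter()
    for answer in answers:
        mentions.update(set(answer["brands"]))
    total = sum(mentions.values())
    if total == 0:
        return {}
    return {brand: count / total for brand, count in mentions.items()}


# Hypothetical log: one record per prompt per engine, brands in answer order.
answers = [
    {"prompt": "best support software for SaaS", "engine": "ChatGPT", "brands": ["Acme", "Beta"]},
    {"prompt": "best support software for SaaS", "engine": "Perplexity", "brands": ["Beta"]},
    {"prompt": "support tools under 100 employees", "engine": "ChatGPT", "brands": []},
]
print(visibility(answers, "Acme"))
print(share_of_voice(answers))
```

Recompute both numbers from each weekly run so you track the trend instead of a single snapshot.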

For the full playbook, read How to Compare Your AI Visibility Against Your Competitors and Outrank Competitors in AI Search.

Trap. Tracking 5 prompts. AI visibility is a long-tail problem. You need 30 to 50 to see patterns.

How Analyze AI runs all seven comparisons in one workspace

Most tools above do one job well. Semrush handles keyword and traffic intelligence. Ahrefs handles backlinks. Screaming Frog handles crawls. Profound, Peec AI, and Otterly AI handle narrow AI visibility tracking. Each needs its own subscription, dashboard, and export-to-spreadsheet ritual.

Analyze AI is the agentic platform for SEO, AEO, content, and GTM ops that runs every comparison above in one workspace and ties the answer to revenue.

Four layers. Discover finds prompts, competitors, and opportunities. Monitor tracks visibility, citations, sentiment, and AI traffic. Improve produces the content that closes the gap. Govern keeps the narrative on track.

The narrative summary tells you what changed yesterday. The charts compare visibility and sentiment against every competitor across every engine.

Catch competitors before they take share

Competitor Intelligence surfaces every brand that shows up alongside you in AI answers and flags new ones you did not know to track. When a brand starts appearing in your prompts, Analyze AI shows a Track button. Click it and they join your daily comparison.

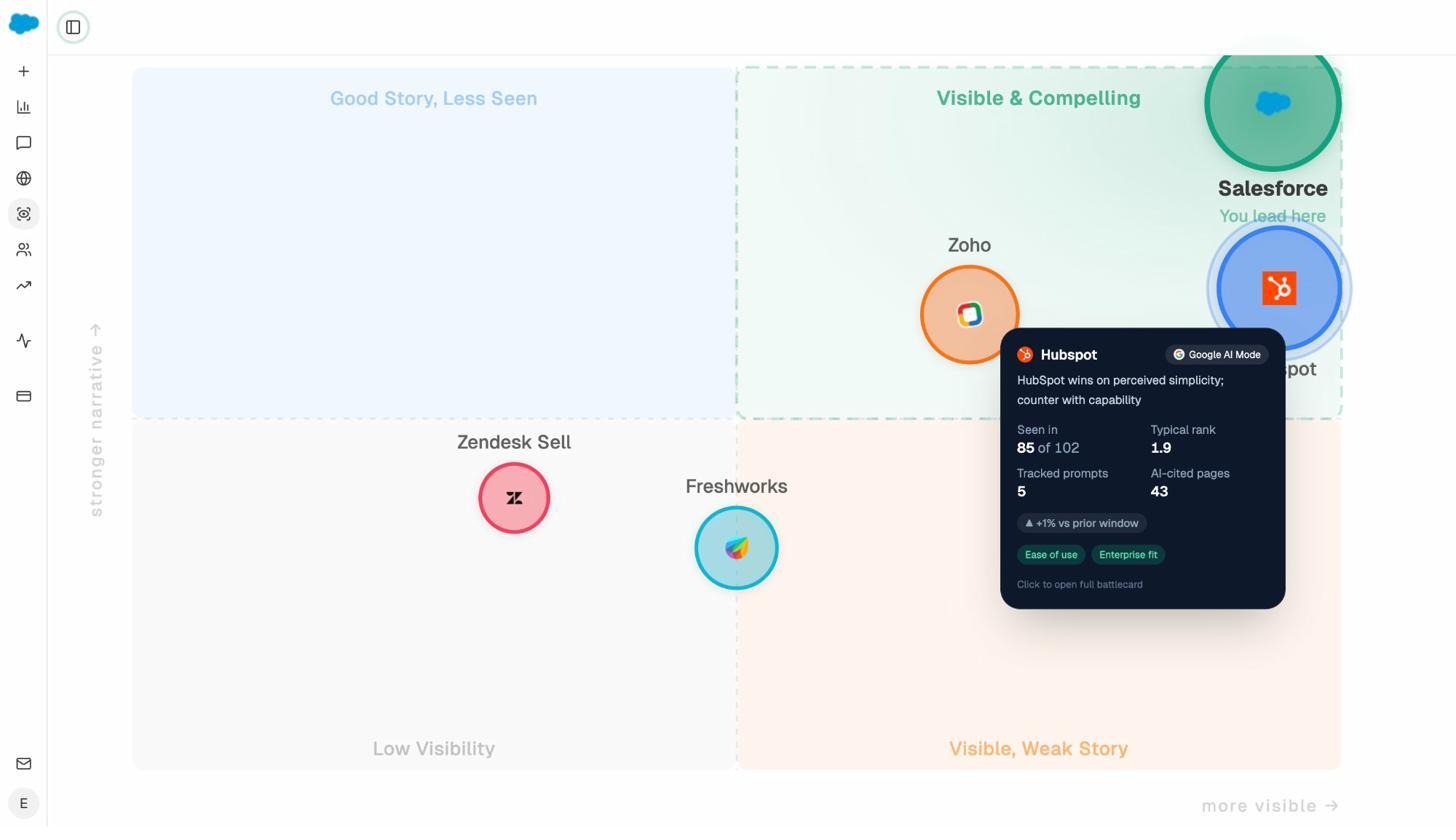

Map perception, not just visibility

The Perception Map plots every tracked brand on a quadrant of presence and narrative strength. You see whether you have a visibility problem, a narrative problem, both, or neither. “Visible, Weak Story” is a counter-narrative opening. “Visible and Compelling” is a competitor you dislodge with proof. Pair this with AI Battlecards and your sales team has the talking points.

Produce content AI engines actually cite

The AI Content Writer and Content Optimizer run a four-step process (research, outline, draft, QA) using your Knowledge Base for brand voice, tracked prompts for intent, and the AEO scorecard for structural fitness. Output is a draft that scores 80+, with verified claims, working internal links, and structure LLMs can parse.

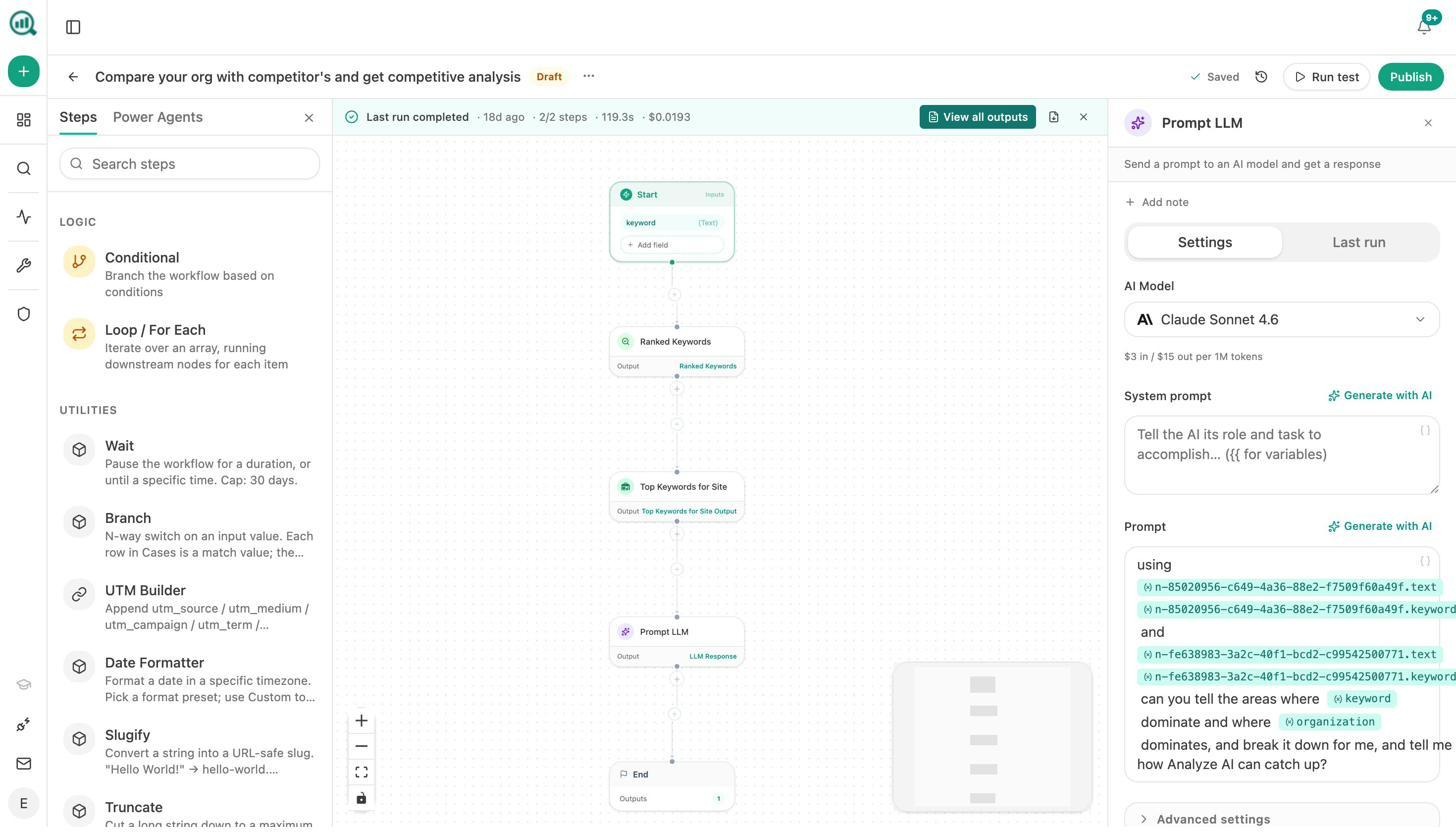

The Agent Builder is the real product

Underneath Analyze AI sits an Agent Builder with 180+ nodes, 34 pre-built data recipes, 13 input primitives, and three trigger modes (manual, schedule, webhook). It includes direct nodes for GA4, Google Search Console, DataForSEO, Semrush, HubSpot, Notion, WordPress, Slack, and every major LLM.

Every comparison in this article can run as a scheduled or webhook agent.

- A Monday morning competitor briefing that pulls share of voice, visibility losers, and new citation sources, then drops the synthesis into Slack before stand-up.
- A citation-decay alert that pings you daily when a page loses citations faster than traffic, with a refresh brief attached.
- An inbound lead enrichment agent that fires on form-fill, runs an AI visibility audit on the prospect, and posts the brief to the AE’s Slack.
- A content refresh fleet that loops every declining page weekly, rewrites underperformers through the AEO scorecard, and publishes only the ones that pass.

Competitor analysis becomes a Monday-at-7-a.m. background process.

Pricing math

| Tool | Entry plan | Best-value plan | What you get |
|---|---|---|---|
| Semrush | $139.95/mo Pro | $249.95/mo Guru | SEO and keyword intelligence. AI visibility is an add-on |
| Ahrefs | $29/mo Starter (limited) | $129 to $249/mo Lite or Standard | Best-in-class backlinks. Brand Radar separate for AI |
| Profound, Peec AI, Otterly AI | Custom or higher tiers | Custom | AI visibility tracking only. No SEO, no content, no agents |
| Analyze AI | Operational in minutes | One predictable plan | All seven comparisons plus the Agent Builder that runs them on a schedule |

No fear-based upsells. No vanity metrics. Every comparison ties to qualified demand, attributable revenue, and defensible share of voice. That is the Analyze AI manifesto.

What to do this week

Start with the two comparisons most likely to expose a gap you can act on.

- Today. Pick three competitors. Pull their organic traffic curve and top 50 pages from Semrush, Ahrefs, or the free Website Traffic Checker. Note the concentration risk.
- This week. Build a list of 20 buyer prompts. Run them across ChatGPT, Perplexity, and Google AI Mode using Ad Hoc Prompt Search. Note who appears and who does not.
- This month. Track those prompts in Analyze AI. Add the three competitors. Connect GA4 and Google Search Console. By week four, you have a daily comparison view that ties visibility to traffic, traffic to conversions, and surfaces the next move automatically.

SEO is not dead. It is one of two organic channels every brand needs to win. Teams that compound in 2026 compare websites against both with the same rigor.

Ernest

Ibrahim