
In this article, you’ll learn what Google PageRank actually is and why it still matters in 2026, how the original formula worked (and why it was technically wrong), the full history of PageRank from the Stanford dorm room to SpamBrain, how the algorithm has evolved over nearly three decades, whether you can still check your PageRank today, and exactly how to improve it. You’ll also learn why the same authority principles behind PageRank now shape how AI search engines like ChatGPT, Perplexity, and Gemini decide which sources to cite—and what that means for your visibility strategy.


What Is Google PageRank?

PageRank (PR) is a Google algorithm that evaluates the importance of web pages based on the number and quality of links pointing to them. It was created by Google co-founders Sergey Brin and Larry Page in 1997 at Stanford University. The name is a play on both Larry Page’s surname and the concept of ranking web pages.

The core idea is simple: links act as votes. When one page links to another, it casts a vote of confidence. Pages that receive more votes from high-quality sources are considered more important and tend to rank higher in search results.

Think of it like academic citations. A research paper cited by hundreds of other credible studies carries more weight than one cited by none. PageRank applied this logic to the web. A page linked to by authoritative sites like the New York Times or a major university carries more weight than one linked to by a brand-new blog with no audience.

By combining link analysis with content relevance, Google’s results outperformed every competitor in the late 1990s. Links became the currency of the web. They still are—but the landscape has gotten a lot more complex.

Google Still Uses PageRank

There’s a persistent myth in SEO that PageRank is dead. It isn’t.

Google has confirmed repeatedly that PageRank remains part of its ranking algorithm. In a 2017 tweet, Google’s Gary Illyes stated that after 18 years, Google was still using PageRank alongside hundreds of other signals. In 2018, at the SMX conference, Illyes further explained that E-A-T (Expertise, Authoritativeness, Trustworthiness; Google added the extra “E” for Experience in 2022) is largely based on links and mentions on authoritative sites.

Google’s own documentation on How Google Fights Disinformation makes the connection explicit. It states that PageRank uses links on the web to understand authoritativeness, and that this is one of the best-known signals Google uses to identify trustworthiness.

![[Screenshot: Google’s “How Google Fights Disinformation” document highlighting the PageRank reference]](https://www.datocms-assets.com/164164/1776980797-blobid1.png)

PageRank also plays a confirmed role in two other critical areas of how Google handles your site:

Crawl budget. Pages with higher PageRank get crawled more frequently. This makes sense—Google wants to spend its resources on pages the web considers important. If your most critical pages have weak link profiles, Google may crawl them less often, which delays indexing of updates.

Canonicalization. When Google encounters duplicate or near-duplicate pages, it uses PageRank as one of the signals to determine which version to index and show in search results. The version with the stronger link profile is more likely to be chosen as the canonical.

And here’s the most telling evidence: Google once experimented internally with removing links from its algorithm entirely. The result, according to a Google Search Central video, was that search quality became significantly worse. Backlinks, despite the noise and spam, remain a major quality signal.

The Same Principle Now Powers AI Search

Here’s what most people miss: the authority logic behind PageRank doesn’t stop at Google’s traditional search results. AI search engines—ChatGPT, Perplexity, Claude, Gemini, Microsoft Copilot—use a related principle when deciding which sources to cite in their answers.

When you ask ChatGPT a question, it doesn’t just pull from its training data. Increasingly, AI engines retrieve live information from the web, evaluate source credibility, and cite the pages they consider most authoritative. The pages that get cited most often tend to be the same ones with strong link profiles, high domain authority, and deep topical coverage.

In other words, the “votes” that PageRank counts haven’t gone away. They’ve expanded to a new channel. If your pages have earned authority through links, structured content, and trustworthy sourcing, they’re more likely to be cited by AI engines too.

This is why building genuine authority—through the same mechanisms that improve PageRank—now has a compound effect across both traditional search and AI search.

The PageRank Formula Explained

The original PageRank formula, published in Brin and Page’s 1998 Stanford paper, looks like this:

PR(A) = (1-d) + d (PR(T1)/C(T1) + … + PR(Tn)/C(Tn))

Where:

- PR(A) = PageRank of page A
- T1…Tn = pages that link to page A
- C(Tn) = the number of outbound links on page Tn
- d = damping factor (set at 0.85 in the original paper)

In plain language, assuming a damping factor of 0.85:

PageRank of a page = 0.15 + 0.85 × (a share of the PageRank of each page linking to it, split across that page’s outbound links)

Each linking page divides its PageRank equally among all its outbound links. So a page with a PageRank of 1.0 and 10 outbound links passes 0.1 to each linked page. A page with the same PageRank but only 2 outbound links passes 0.5 to each.

This is why a link from a high-authority page with few outbound links is far more valuable than a link from a low-authority page with hundreds of outbound links.
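To make the arithmetic concrete, here is a minimal sketch that applies the published formula once to a single page. The function name `pagerank_of` and the two linking pages are made up for illustration; the numbers reuse the 10-link and 2-link example above.

```python
def pagerank_of(inbound, d=0.85):
    """Apply PR(A) = (1 - d) + d * sum(PR(T) / C(T)) once.

    `inbound` is a list of (pagerank, outbound_link_count) pairs,
    one pair per page T that links to page A.
    """
    return (1 - d) + d * sum(pr / out_links for pr, out_links in inbound)


# Two hypothetical linking pages, both with a PageRank of 1.0:
# one has 10 outbound links (a 0.1 share), the other has 2 (a 0.5 share).
score = pagerank_of([(1.0, 10), (1.0, 2)])
print(round(score, 2))  # 0.15 + 0.85 * (0.1 + 0.5) = 0.66
```

Note that the damping factor scales what each linking page passes on, so the page with 2 outbound links contributes 0.85 × 0.5 rather than the full 0.5.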

Why the Original Formula Was Wrong

Here’s a fact most people don’t know: the formula published in the original paper was mathematically incorrect.

PageRank was described as a probability distribution—the likelihood of a random web surfer landing on any given page. For this to work, the sum of all PageRank values across every page on the web must equal 1 (representing 100% probability).

But in the published formula, each page gets a minimum PageRank of 0.15 (which is 1 - d). Summed across billions of pages, those minimums alone push the total far past 1, which breaks the probability model.

The corrected formula divides that minimum value by the total number of pages:

PageRank of a page = (0.15 / total pages on the web) + 0.85 × (share of PageRank from linking pages)

This ensures the total probability adds up to 1.
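Written in the notation of the published formula, the corrected version looks roughly like the first line below. The symbol N (total number of pages) is introduced here for illustration; the article only describes it in words. The second line is a quick consistency check: if the scores summed to 1 before an update, they still sum to 1 afterwards, assuming every page has at least one outbound link.

```latex
PR(A) = \frac{1-d}{N} + d\left(\frac{PR(T_1)}{C(T_1)} + \dots + \frac{PR(T_n)}{C(T_n)}\right)

\sum_{A} PR(A) = N \cdot \frac{1-d}{N} + d \sum_{T} PR(T) = (1-d) + d \cdot 1 = 1
```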

How the Calculation Actually Works

The formula is iterative. Google doesn’t calculate PageRank once—it runs the calculation repeatedly until the values stabilize. Here’s a simplified walkthrough:

Step 1: Assign initial scores. Imagine five pages on the web with no links between them. Each starts with a PageRank of 1/5 = 0.2.

![[Screenshot: Diagram showing five pages with equal initial PageRank of 0.2 each]](https://www.datocms-assets.com/164164/1776980806-blobid2.png)

Step 2: Add links and recalculate. Now add some links between pages. Pages receiving more links from higher-scored pages get a higher PageRank. The scores shift.

![[Screenshot: Diagram showing the same five pages after one iteration of PageRank, with scores redistributed based on link structure]](https://www.datocms-assets.com/164164/1776980808-blobid3.jpg)

Step 3: Repeat. Run the calculation again. Even though the links haven’t changed, the base PageRank values are different now, so the resulting scores shift again. Each iteration brings the values closer to their final, stable state.

![[Screenshot: Diagram showing five pages after two iterations, with scores further refined]](https://www.datocms-assets.com/164164/1776980816-blobid4.jpg)
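The three steps above can be sketched in a few lines of Python. This is an illustration of the iterative idea using the corrected (normalized) formula, not Google's implementation. The five-page graph, the function name, and the stopping tolerance are all assumptions, and the sketch assumes every page has at least one outbound link.

```python
def pagerank(links, d=0.85, tol=1e-6, max_iter=200):
    """Repeat the normalized PageRank update until scores stop changing.

    `links` maps each page to the list of pages it links to.
    Assumes every page has at least one outbound link.
    """
    pages = list(links)
    n = len(pages)
    scores = {p: 1 / n for p in pages}         # Step 1: equal starting scores
    for _ in range(max_iter):                  # Step 3: repeat
        new = {p: (1 - d) / n for p in pages}  # baseline share for every page
        for src, targets in links.items():     # Step 2: pass value along links
            share = d * scores[src] / len(targets)
            for dst in targets:
                new[dst] += share
        delta = sum(abs(new[p] - scores[p]) for p in pages)
        scores = new
        if delta < tol:                        # values have stabilized
            break
    return scores


# Hypothetical five-page web: nothing links to D or E.
graph = {
    "A": ["B", "C"],
    "B": ["C"],
    "C": ["A"],
    "D": ["C"],
    "E": ["A", "C"],
}
ranks = pagerank(graph)
for page, score in sorted(ranks.items(), key=lambda kv: -kv[1]):
    print(page, round(score, 3))
```

In this toy graph, page C ends up on top because four of the five pages link to it, while D and E stay at the baseline because nothing links to them.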

The Damping Factor

The damping factor (d = 0.85) simulates the behavior of a random web surfer. The idea: there’s an 85% chance that a user will click a link on the current page, and a 15% chance they’ll get bored and jump to a completely random page.

This has a practical implication for SEO. If a high-authority page links directly to your page, you receive a large share of value. But if the link is buried four clicks deep—through intermediary pages—the value passed to your page decreases significantly with each hop.

This is one reason why pages linked from a site’s homepage or main navigation tend to accumulate more PageRank than pages buried deep in the site architecture.
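A rough way to see the per-hop decay is to multiply by the damping factor once for every link in the chain. The sketch below makes a strong simplifying assumption: each intermediary page has exactly one outbound link, so nothing is lost to splitting. Real pages split their value further, so the actual decay is steeper. The function name is illustrative.

```python
def value_after_hops(start_value, hops, d=0.85):
    """Value left after passing through `hops` links in a simple chain,
    assuming each page in the chain has exactly one outbound link."""
    return start_value * d ** hops


# Compare a direct link (1 hop) with links buried up to 4 hops deep.
for hops in range(1, 5):
    print(hops, value_after_hops(1.0, hops))
```

Even in this best case, a link four hops away delivers only about half of the starting value.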

A Brief History of PageRank

PageRank has been through multiple eras since its creation. Understanding this timeline helps you grasp why link building looks the way it does today.

1997–2000: The Birth of PageRank

The first PageRank patent, titled “Method for node ranking in a linked database,” was filed on January 9, 1998. It expired in 2018 and was not renewed.

Google first made PageRank visible to the public on March 15, 2000, when the Google Directory launched. This was a version of the Open Directory Project, but sorted by PageRank. The directory was eventually shut down on July 25, 2011.

Then, on December 11, 2000, Google launched PageRank in the Google Toolbar. This was the version that would dominate SEO conversations for over a decade. It displayed a simple green bar showing a page’s PageRank on a scale of 0 to 10.

![[Screenshot: The old Google Toolbar showing a PageRank score of 8/10 with the green bar indicator]](https://www.datocms-assets.com/164164/1776980818-blobid5.png)

2005–2009: The Nofollow Era

As SEOs quickly figured out that links meant rankings, spam followed. Blog comment sections filled with junk links. Trackbacks were abused. Forum signatures became link farms.

On January 18, 2005, Google partnered with other major search engines to introduce the rel="nofollow" attribute. The idea was straightforward: add nofollow to links you don’t want to vouch for (like blog comments), and Google would stop counting them as votes.

Almost all modern CMS platforms now automatically apply nofollow to user-generated comment links.

But SEOs found a way to abuse nofollow too. A technique called “PageRank sculpting” emerged, where site owners would add nofollow to certain internal links to funnel more PageRank to their most important pages. In 2009, Google’s Matt Cutts confirmed this no longer worked: a page’s PageRank is still divided across all of its links, including nofollowed ones, but only the shares assigned to followed links are passed on. The shares assigned to nofollowed links are simply lost rather than redirected to the remaining links.

In 2019, Google added two more specific link attributes: rel="ugc" for user-generated content and rel="sponsored" for paid or affiliate links. These gave site owners more granular ways to label their links.

2005–2009: PageRank in Search Console

PageRank briefly appeared in Google Sitemaps (now Google Search Console) from November 17, 2005 to October 15, 2009. It was displayed in broad categories—high, medium, low, or N/A—rather than specific numbers.

2012–2016: The Penguin Era

When the original Penguin algorithm launched on April 24, 2012, it devastated websites that had relied on manipulative link building. Entire businesses saw their traffic collapse overnight.

Google gave affected site owners a lifeline later that year by introducing the disavow tool on October 16, 2012. This allowed webmasters to tell Google to ignore specific backlinks.

The real game-changer came with Penguin 4.0 on September 23, 2016. Instead of penalizing websites with spammy links, it simply devalued those links. This meant most sites no longer needed to actively disavow unless they had extreme spam problems.

2013–2016: The End of Public PageRank

The PageRank score shown in the Google Toolbar was last updated on December 6, 2013. The toolbar feature was officially removed on March 7, 2016.

This created a vacuum. SEOs could no longer see the number that had driven so many decisions. Third-party metrics like Domain Rating, Domain Authority, and URL Rating stepped in to fill the gap.

2021–Present: AI-Powered Link Spam Detection

Google launched its first Link Spam Update on July 26, 2021. By December 14, 2022, Google announced it was using SpamBrain—an AI-based system—to detect and neutralize unnatural links.

SpamBrain doesn’t just look at individual links. It analyzes patterns across the web to identify link networks, paid link schemes, and other manipulative tactics. This made traditional link spam significantly riskier and less effective.

Link Spam: What Google Considers Manipulation

Over the years, Google has compiled a detailed list of link schemes it considers manipulative. Understanding this list helps you avoid tactics that could get your links devalued—or worse.

Google’s documented link schemes include:

| Link Scheme | Example |
|---|---|
| Buying or selling links | Paying for a link on someone’s blog |
| Excessive link exchanges | “I’ll link to you if you link to me” at scale |
| Automated link creation | Using software to generate links across directories |
| Contractual links | Requiring links as part of a partnership agreement |
| Unattributed text ads | Paid ads that pass PageRank without nofollow or sponsored |
| Optimized guest post links | Guest articles with keyword-rich anchor text inserted for SEO |
| Low-quality directory links | Submitting to hundreds of irrelevant directories |
| Widget link schemes | Embedding links in widgets distributed across other sites |
| Footer/template links | Hard-coding links into WordPress themes or site templates |
| Forum spam | Dropping optimized links in forum posts or signatures |

The common thread: any link that exists primarily to manipulate rankings, rather than to genuinely help users navigate the web, is a link scheme.

How PageRank Has Changed

The PageRank running inside Google today is not the same algorithm described in the original paper. According to a former Google employee, the original was replaced in 2006 with a less resource-intensive algorithm that produces similar results but computes significantly faster.

The replacement algorithm eliminated the need for the iterative convergence process described earlier. As the web grew from millions to hundreds of billions of pages, this efficiency gain became essential.

Beyond the computational changes, several fundamental shifts have reshaped how PageRank works.

From Random Surfer to Reasonable Surfer

The original PageRank formula used a “random surfer” model, where every link on a page was equally likely to be clicked. A link in the footer had the same weight as a link in the first paragraph of the main content.

Google appears to have shifted to a “reasonable surfer” model, based on patents and statements from Google engineers. In this model, some links are more likely to be clicked than others, so they carry more weight.

Links that are more likely to pass significant value include those that are prominent and visible in the main content area, use descriptive anchor text relevant to the target page, appear early on the page, and are contextually relevant to the surrounding content.

Links that likely pass less value include those hidden in footers or sidebars, using generic anchor text like “click here,” buried in long lists of links, and placed in areas users rarely interact with.
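The difference between the two models can be sketched as a weighted split instead of an equal one. Google has not published how it weights links, so the weights below are invented purely for illustration, as are the function name and URLs.

```python
def split_pagerank(page_score, link_weights, d=0.85):
    """Split the passable share of a page's score across its links
    in proportion to each link's (hypothetical) click-likelihood weight."""
    total = sum(link_weights.values())
    return {url: d * page_score * w / total for url, w in link_weights.items()}


# Made-up weights: a prominent in-content link vs. sidebar and footer links.
weights = {"/in-content-guide": 5.0, "/sidebar-promo": 2.0, "/footer-terms": 1.0}

reasonable = split_pagerank(1.0, weights)
random_surfer = split_pagerank(1.0, {url: 1.0 for url in weights})

for url in weights:
    print(url, round(random_surfer[url], 3), round(reasonable[url], 3))
```

The total passed on is the same under both models. What changes is how it is divided: the in-content link receives more than an equal share and the footer link receives less.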

Links That Are Ignored

Several systems now prevent certain links from passing PageRank:

Nofollow, UGC, and Sponsored attributes. Links with these attributes generally don’t pass PageRank. Google treats the attributes as hints rather than strict directives, so it may still use such links for discovery.

Penguin and Link Spam Updates. Links identified as manipulative by Google’s algorithms are devalued automatically.

The Disavow Tool. Site owners can manually tell Google to ignore specific links pointing to their site.

Robots.txt blocking. If a page is blocked by robots.txt, Google can’t crawl it, which means it can’t see or count any links on that page.
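For the first item in the list above, a quick way to audit a page is to separate its links by `rel` value. The sketch below uses Python's standard `html.parser`; the class name, the sample HTML, and the two bucket names are assumptions for illustration, and it says nothing about how Google actually processes a given link.

```python
from html.parser import HTMLParser

# rel values that tell search engines not to treat a link as an endorsement
NON_PASSING = {"nofollow", "ugc", "sponsored"}


class LinkClassifier(HTMLParser):
    """Collect <a href> links into 'followed' and 'hinted' buckets."""

    def __init__(self):
        super().__init__()
        self.followed = []
        self.hinted = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href")
        if not href:
            return
        # rel can hold several space-separated tokens, e.g. "ugc nofollow"
        rel_tokens = set((attrs.get("rel") or "").lower().split())
        if rel_tokens & NON_PASSING:
            self.hinted.append(href)
        else:
            self.followed.append(href)


sample_html = """
<p><a href="/guide">Our guide</a>
<a href="https://example.com/ad" rel="sponsored">Partner</a>
<a href="https://example.org/profile" rel="ugc nofollow">Commenter</a></p>
"""
parser = LinkClassifier()
parser.feed(sample_html)
print("followed:", parser.followed)
print("hinted:", parser.hinted)
```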

How Links Are Consolidated

Google’s canonicalization system determines which version of a page should be indexed when duplicates exist. Signals like PageRank from all duplicate versions are consolidated to the canonical version.

Canonical link elements, introduced on February 12, 2009, let you specify your preferred version. And redirects—which originally caused some PageRank loss—now pass full value. Google’s Gary Illyes confirmed in 2016 that 30x redirects no longer lose PageRank.

What’s Still Unknown

There’s an open question about how Google treats links on pages marked with a noindex tag.

Google’s John Mueller has said that pages with noindex will eventually be treated as noindex, nofollow—meaning links on those pages will eventually stop passing value.

But Gary Illyes has stated that Googlebot will continue to discover and follow links on a noindex page as long as the page itself still has links pointing to it.

These aren’t necessarily contradictory, but the practical implication is unclear. If a page has valuable outbound links, it could take a very long time—possibly never—for Google to stop counting them, even with a noindex tag.

Can You Still Check Your PageRank?

No. There is no public way to see Google’s internal PageRank score. The toolbar is gone, Search Console no longer shows it, and Google has not provided any replacement.

But several third-party metrics serve as useful proxies.

URL Rating (UR)

URL Rating, a metric from Ahrefs, measures the strength of a specific page’s backlink profile on a scale of 0 to 100. It shares several principles with the PageRank formula: it accounts for both internal and external links, considers the quality of linking pages, and distributes value across outbound links.

![[Screenshot: Ahrefs Site Explorer showing a URL Rating score for a specific page]](https://www.datocms-assets.com/164164/1776980823-blobid6.png)

UR is one of the few third-party metrics that includes internal links in its calculation. Most other authority metrics (like Domain Rating or Domain Authority) only consider links from external sites. This makes UR particularly useful for understanding how your internal linking structure contributes to page-level authority.

However, UR isn’t a perfect PageRank replica. It ignores some link types, doesn’t count nofollow links, and can’t account for links that site owners have disavowed or that Google has chosen to ignore.

Domain Rating (DR) and Domain Authority (DA)

Domain Rating (Ahrefs) and Domain Authority (Moz) measure the overall strength of a website’s backlink profile, rather than individual pages. They’re useful for comparing sites at a high level but shouldn’t be confused with page-level authority.

Think of it this way: DR/DA tells you how strong the brand’s overall link profile is. UR tells you how strong a specific page is. For PageRank-style analysis, UR is the closer proxy.

Page Rating in Site Audit

Ahrefs’ Site Audit tool includes a Page Rating (PR) metric that functions as an internal PageRank calculation. It shows which pages on your site are the strongest based purely on internal link structure—useful for identifying pages where your internal linking is underperforming.

Checking Your Authority in AI Search

Traditional PageRank metrics tell you about Google. But what about your authority in AI search?

AI engines like ChatGPT, Perplexity, and Gemini don’t publish a “PageRank” equivalent. Instead, their authority signals are visible through a different lens: citations. When an AI engine answers a question and cites your page as a source, that’s a direct signal that the model considers your content authoritative for that topic.

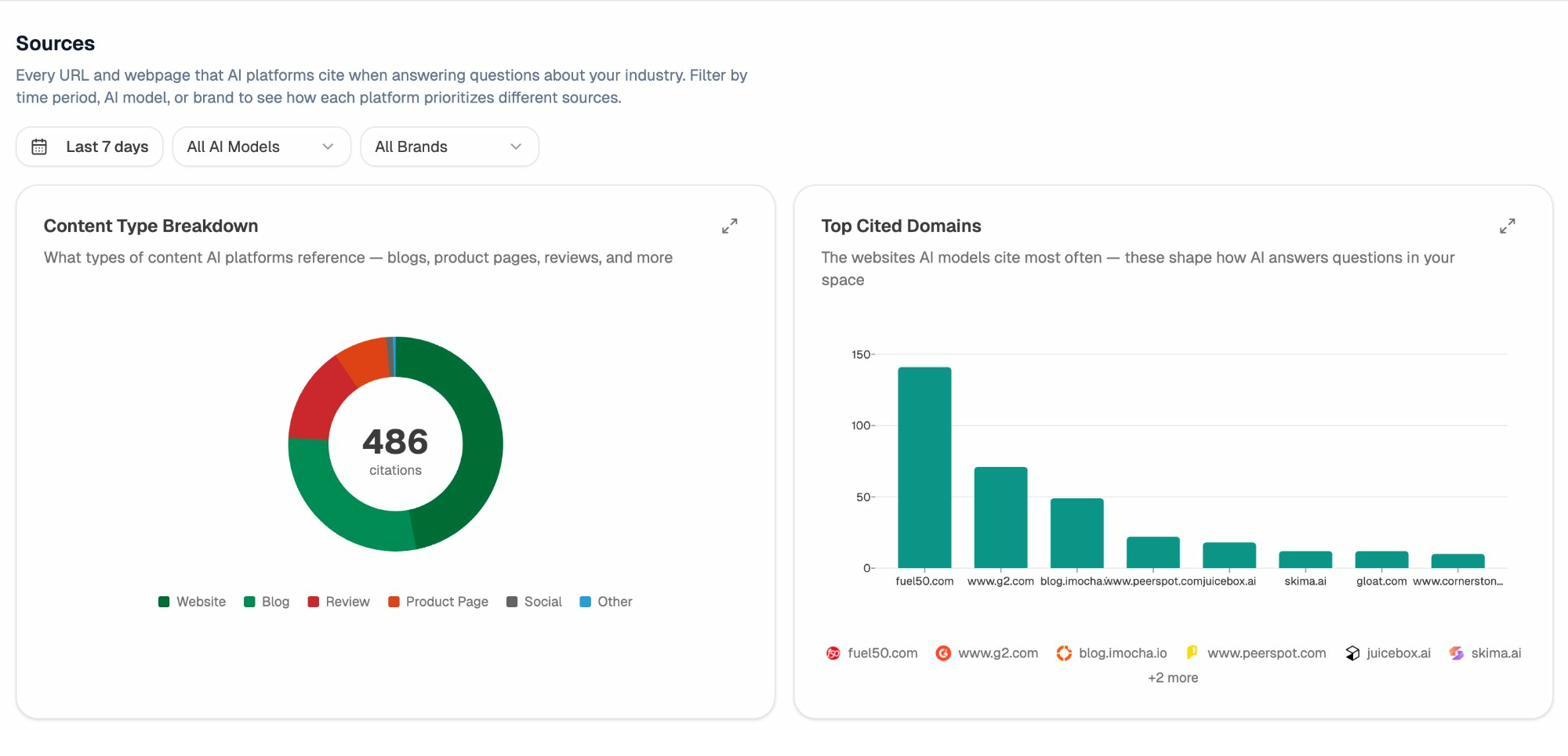

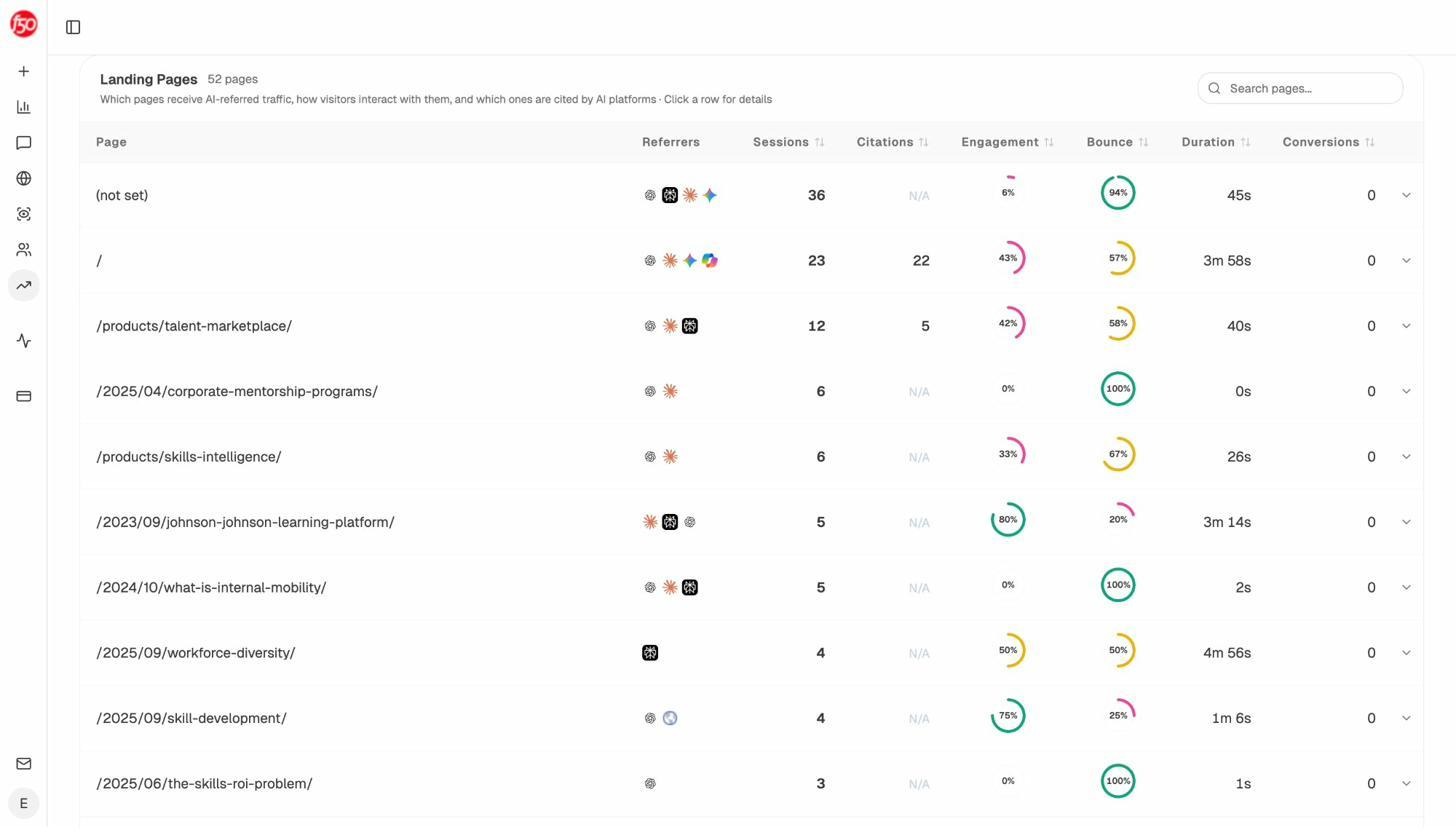

Analyze AI lets you track exactly which of your pages AI engines are citing, how often, and across which AI models. The Sources dashboard shows every URL and domain that AI platforms reference when answering questions in your industry—essentially, a citation-based authority map for AI search.

Analyze AI Sources dashboard showing Content Type Breakdown and Top Cited Domains for AI search citations

You can filter by AI model (ChatGPT, Perplexity, Claude, Gemini, and more) and by time period to spot trends. If a competitor’s page is gaining citations while yours is losing them, that’s the AI search equivalent of a competitor earning more backlinks.

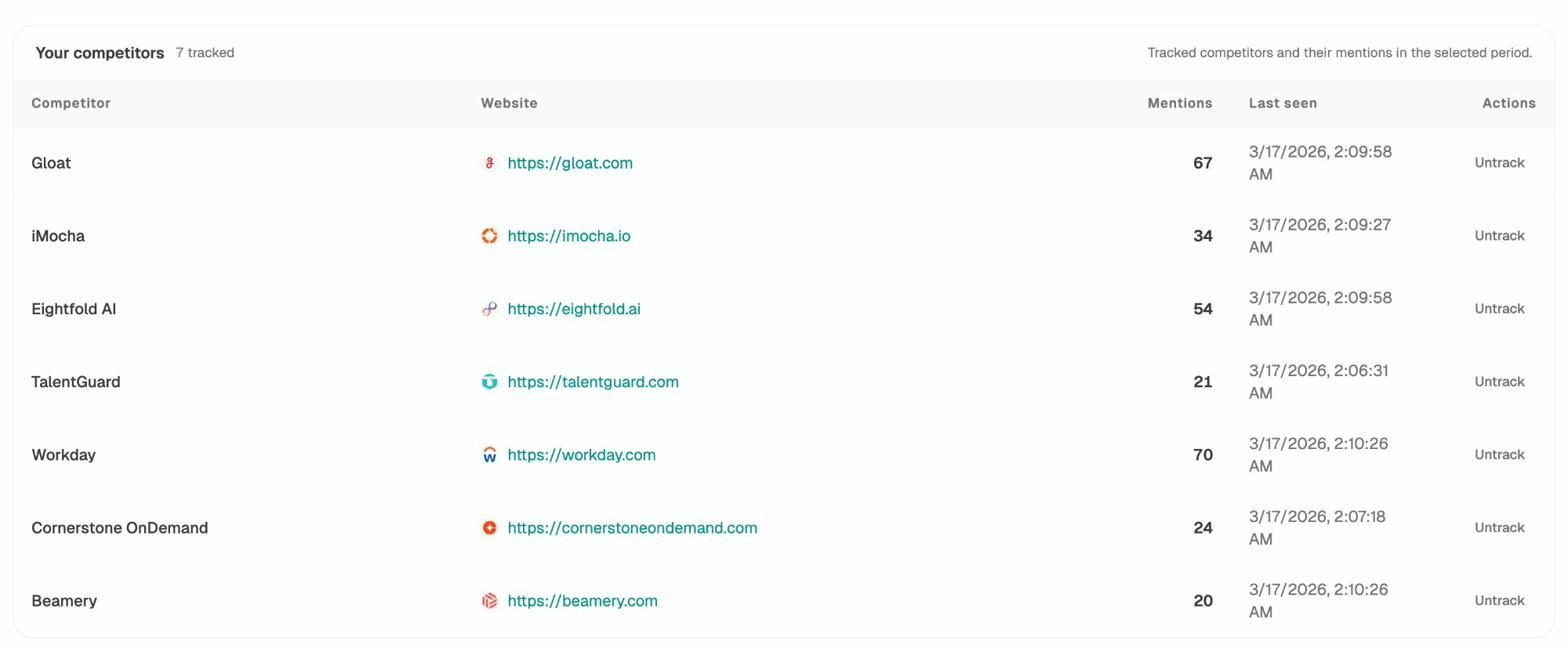

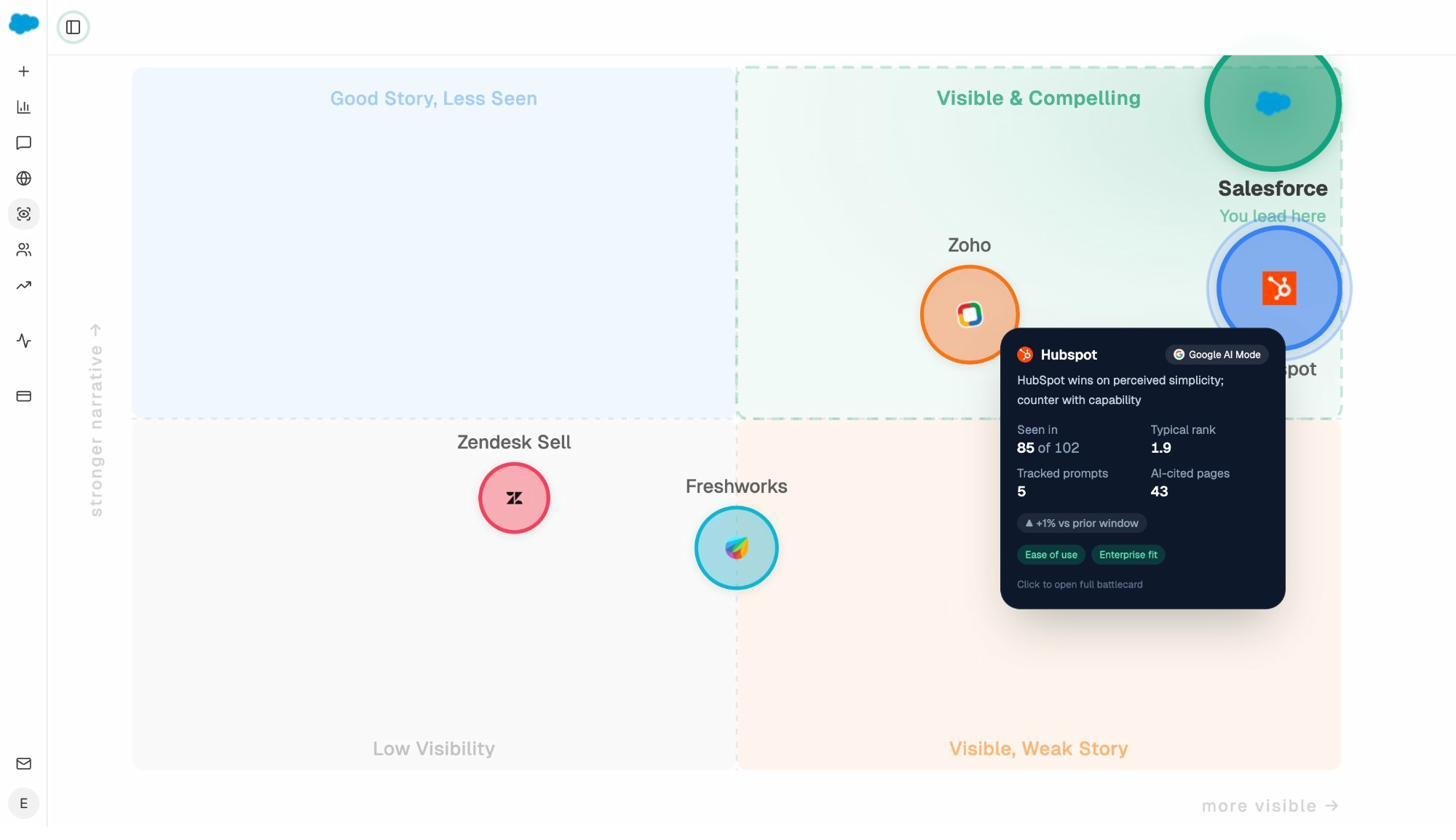

The Competitors view in Analyze AI makes this competitive analysis explicit. It shows which brands are being mentioned alongside yours in AI responses, how many mentions each competitor has received, and whether new competitors are emerging that you haven’t tracked before.

Analyze AI Competitors dashboard showing tracked competitors with mention counts and tracking dates

This is a new form of competitive intelligence. In traditional SEO, you’d check who’s earning backlinks. In AI search, you check who’s earning citations. The principle is the same—authority flows to the most trusted sources—but the channel is different.

How to Improve Your PageRank

Since PageRank is built on links, improving it means building a stronger, more authoritative link profile. Here’s how to do it, step by step.

Reclaim Lost Links by Redirecting Broken Pages

Websites change over time. Pages get deleted, URLs get restructured, and old content gets archived. When this happens, any backlinks pointing to those now-broken pages are wasted. The links still exist, but they point to 404 errors, so no PageRank passes through.

Redirecting broken pages to relevant current pages is often the easiest win in link building, because those links already point to you. You’re not earning new links—you’re reclaiming value you’ve already built.

Here’s how to find and fix these opportunities:

Step 1. Open a backlink analysis tool and enter your domain.

Step 2. Navigate to the “Best by links” or equivalent report that shows pages sorted by the number of referring domains.

Step 3. Filter for pages returning a 404 (Not Found) HTTP status code.

![[Screenshot: A backlink tool’s “Best by links” report filtered to show only 404 pages, sorted by referring domains]](https://www.datocms-assets.com/164164/1776980844-blobid10.png)

Step 4. Sort by referring domains. The pages at the top of this list have the most backlinks pointing to dead URLs—these are your highest-priority redirects.

Step 5. For each broken page, identify the most relevant current page on your site and set up a 301 redirect. If the old content covered “beginner’s guide to email marketing” and you have a current page on the same topic, redirect there. If no close match exists, redirect to the most relevant parent category or pillar page.

For large sites with hundreds of broken pages, automated matching tools can help. These tools compare archived versions of your old content (from the Wayback Machine) with current pages on your site and suggest the closest match.
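Steps 4 and 5 above can be sketched as a small script that turns an export of broken pages into a prioritized redirect list. The data, the column layout, and the `/blog` fallback are hypothetical; a real export from a backlink tool will look different, and the matching of old pages to new ones still needs human judgment.

```python
# (dead URL, referring domains, closest live page or None) - hypothetical data
broken_pages = [
    ("/old-email-guide", 42, "/email-marketing-guide"),
    ("/2019-pricing", 7, None),
    ("/blog/seo-tips-v1", 18, "/blog/seo-tips"),
]


def build_redirects(pages, fallback="/blog"):
    """Return (old_url, new_url) pairs, highest referring-domain count first.

    Pages with no close match fall back to a parent or pillar page.
    """
    ordered = sorted(pages, key=lambda row: row[1], reverse=True)
    return [(old, target or fallback) for old, _, target in ordered]


for old, new in build_redirects(broken_pages):
    print(f"301 {old} -> {new}")
```

The output is a plain list rather than server configuration, since the redirect syntax depends on your web server or CMS.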

You can use Analyze AI’s Broken Link Checker to quickly identify broken pages on your site that may need redirects.

Strengthen Your Internal Linking

Backlinks from external sites are partially outside your control. People link to whatever page they choose, with whatever anchor text they prefer. But internal links—links between pages on your own site—are entirely within your control.

Internal links serve two purposes for PageRank. First, they distribute PageRank from your strongest pages (usually your homepage and pages with the most backlinks) to deeper pages that need a boost. Second, they help Google understand your site’s structure and which pages are most important.

Here’s how to approach internal linking strategically:

Identify your strongest pages. Use a backlink tool to find pages on your site with the highest URL Rating or most referring domains. These are your PageRank sources—the pages that have authority to pass along.

Identify pages that need a boost. Look at important pages that are ranking on page 2 or 3 of Google. These pages have good content but may lack enough authority to break into the top 10. They’re ideal candidates for more internal links.

Add contextual internal links. Link from your strong pages to your target pages using descriptive, relevant anchor text. The link should make sense in context—don’t force it.

![[Screenshot: A site audit tool showing internal link opportunities, with a suggestion to add a link from one article to another based on keyword mentions]](https://www.datocms-assets.com/164164/1776980849-blobid11.png)

Audit your existing internal links regularly. Pages get published and forgotten. A post you wrote two years ago might reference a topic you’ve since covered in depth, but without a link to the newer, more comprehensive page. Find these opportunities and add links.
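As a starting point for that audit, you can count how many internal links each page receives and flag the pages with very few. The sketch below assumes you already have a crawl of your site as a mapping from each page to the pages it links to; the site structure, function names, and threshold are all illustrative.

```python
from collections import Counter

# Hypothetical crawl output: page -> internal pages it links to
internal_links = {
    "/": ["/pricing", "/blog", "/features"],
    "/blog": ["/blog/pagerank-guide", "/features"],
    "/blog/pagerank-guide": ["/features"],
    "/features": ["/pricing"],
    "/pricing": [],
    "/blog/internal-linking": [],
}


def inbound_counts(links):
    """Count distinct pages linking to each page (self-links ignored)."""
    counts = Counter({page: 0 for page in links})
    for source, targets in links.items():
        for target in set(targets):
            if target != source:
                counts[target] += 1
    return counts


def underlinked(links, max_inbound=1):
    """Pages (other than the homepage) with at most `max_inbound` inbound links."""
    counts = inbound_counts(links)
    return sorted(p for p, n in counts.items() if n <= max_inbound and p != "/")


print(underlinked(internal_links))
```

A raw inbound count treats every linking page as equal, so it is cruder than a PageRank-style score, but it is usually enough to surface orphaned or forgotten pages.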

For a deeper dive on internal linking strategy, see our guide on internal linking for SEO.

Earn More External Links

External links—backlinks from other websites—are the core input to PageRank. The more high-quality backlinks you earn, the more PageRank your pages accumulate.

But earning links today requires a fundamentally different approach than it did in 2005. The era of mass directory submissions, blog comment spam, and link exchanges is over. Google’s algorithms are too sophisticated for those tactics to work, and the risk of having your links devalued (or worse) is high.

Modern link earning focuses on creating content so genuinely useful, original, or insightful that other sites want to reference it. Here are approaches that consistently work:

Original research and data. Content that presents new data, original surveys, or unique analysis earns links naturally. Other writers cite your findings because they can’t replicate them. If you’ve conducted a study, compiled a dataset, or analyzed trends in your industry, publish it.

Comprehensive guides. Long-form, definitive guides on important topics earn links over time as other writers reference them when covering related subjects. These are “link magnets” that compound—the more people cite them, the more others discover and cite them too.

Free tools. Building a genuinely useful free tool is one of the most effective link-earning strategies. Tools like keyword generators, SERP checkers, or website authority checkers attract links because they provide ongoing utility that a single blog post can’t.

Unlinked brand mentions. Sometimes other sites mention your brand or product without linking to you. These are low-hanging fruit—reach out and ask for a link. Most site owners are happy to add one because they already trust you enough to mention you.

For a full breakdown of link building tools and strategies, see our dedicated guide.

How to Strengthen Your Authority in AI Search

The same authority you build through links for PageRank now compounds in AI search. But AI engines have their own signals and patterns worth understanding.

AI engines decide which sources to cite based on several factors: the source’s domain authority, the depth and specificity of the content, how well it matches the user’s prompt, and whether other authoritative sources reference it. In other words, the pages that earn the most backlinks in traditional SEO also tend to earn the most citations in AI search.

But there are specific steps you can take to strengthen your presence in AI answers.

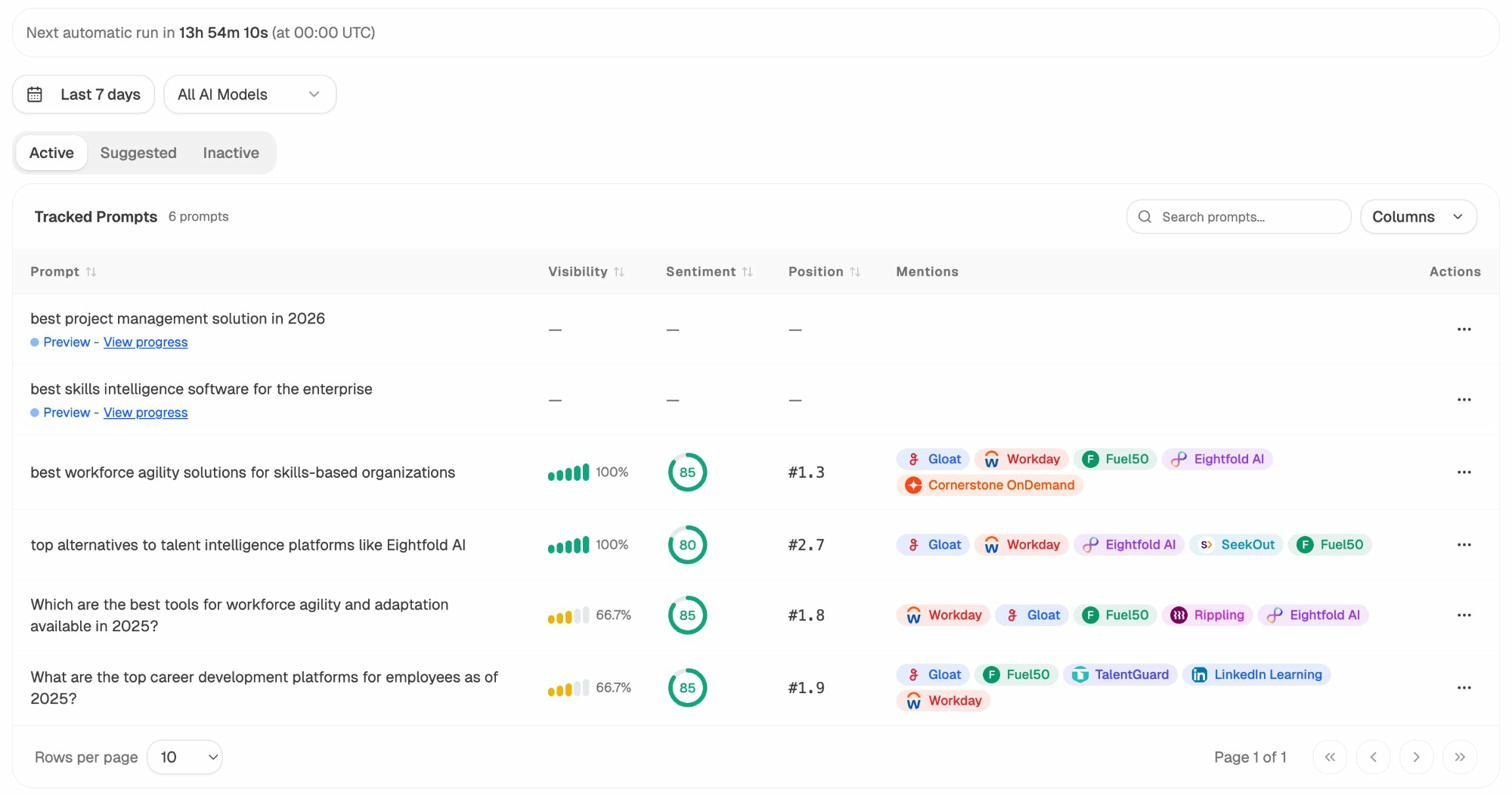

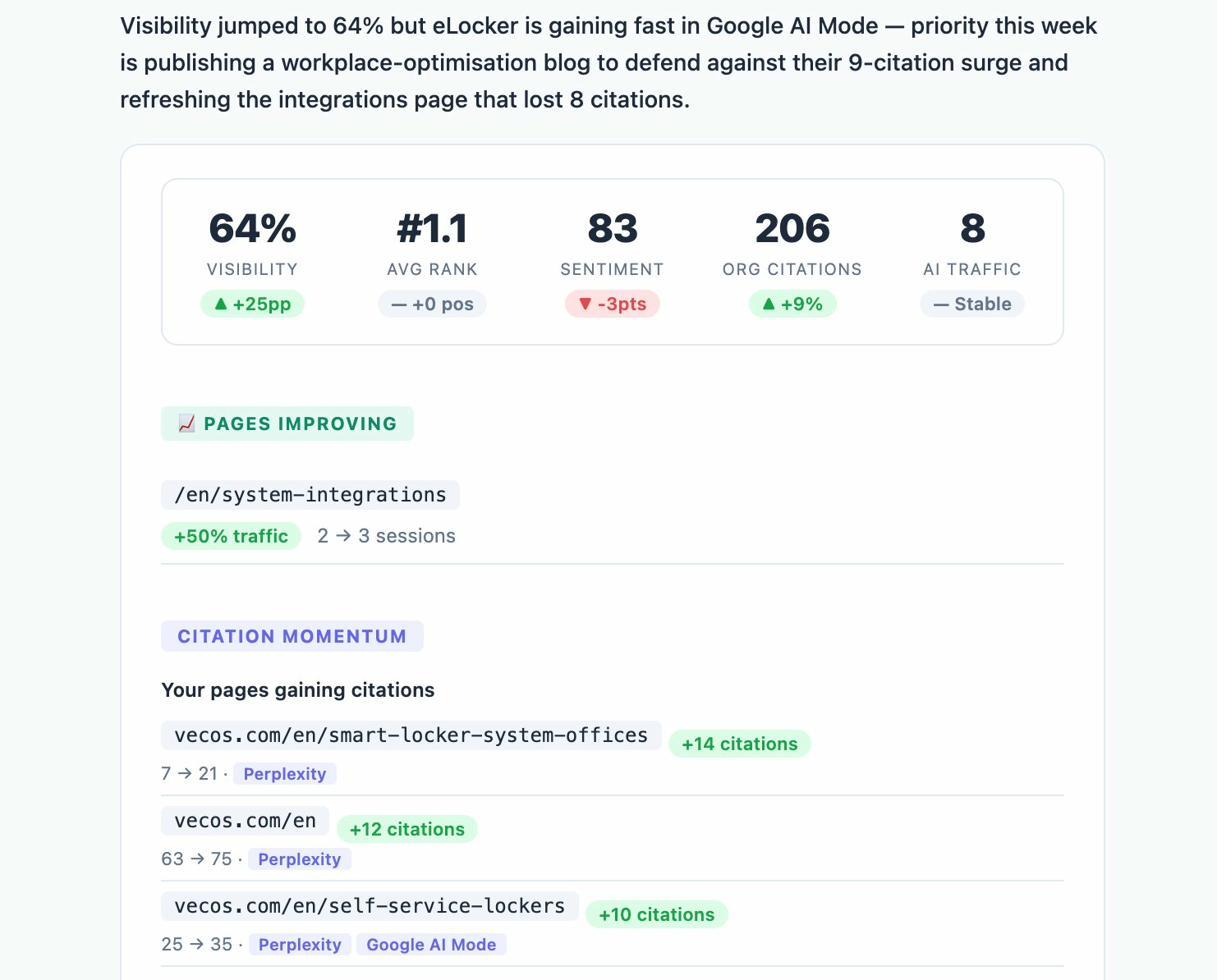

Track the prompts where you appear—and where you don’t. In Analyze AI’s Prompts dashboard, you can see every tracked prompt, your visibility percentage, sentiment score, position, and which competitors appear alongside you.

If you have 100% visibility for a prompt, your brand is being mentioned in every AI engine’s response. If you have 0%, you’re invisible for that query. The gap between where you appear and where you don’t reveals exactly where to focus your content efforts.

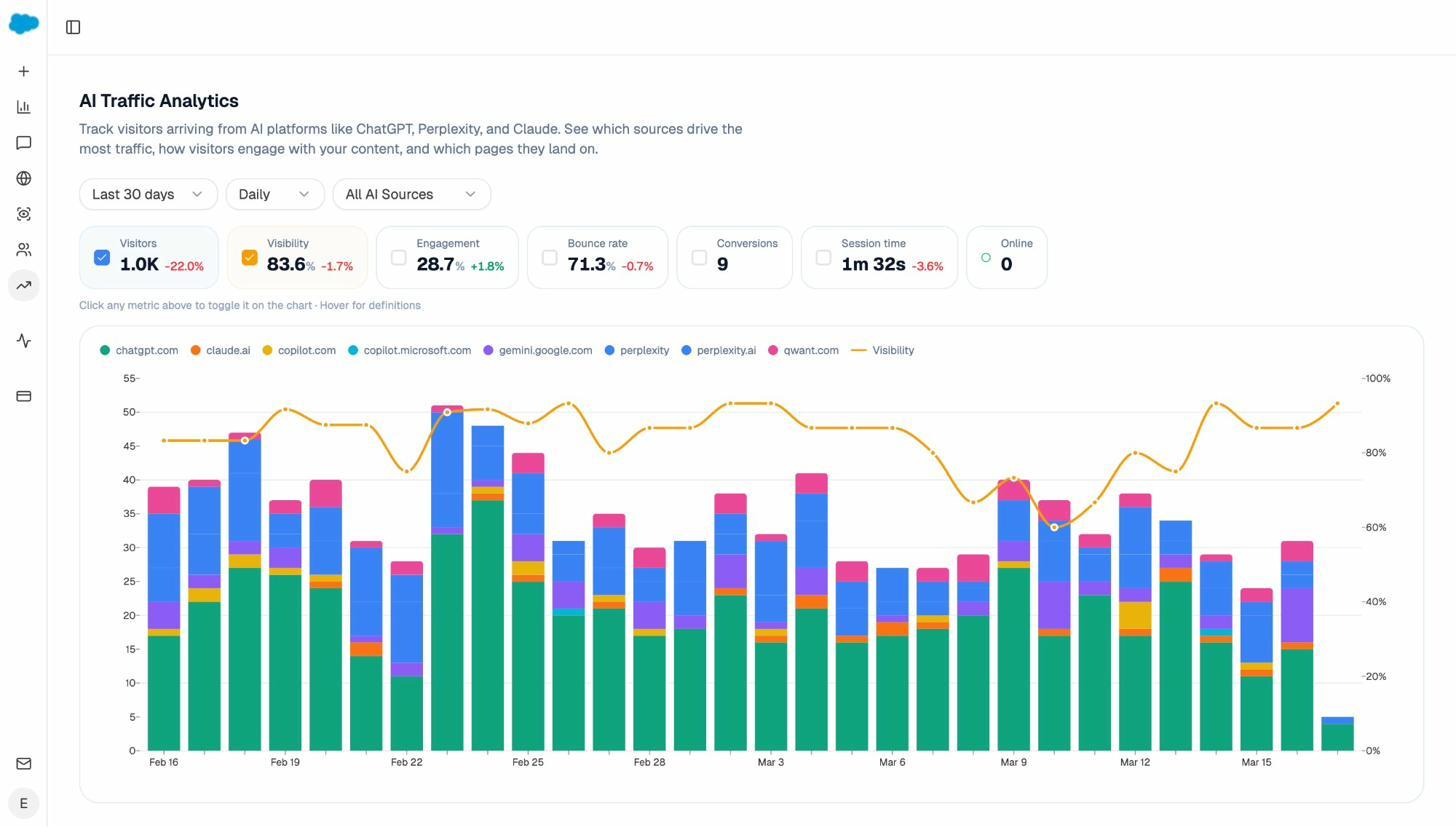

Analyze which of your pages earn AI traffic. Analyze AI’s AI Traffic Analytics connects to your GA4 data and shows which pages receive visitors referred from AI platforms—broken down by engine (ChatGPT, Perplexity, Gemini, Copilot, and more).

The Landing Pages report takes this further. It shows exactly which pages AI engines are sending traffic to, along with sessions, citations, engagement, bounce rate, and conversions for each page.

This data reveals patterns. You might discover that your long-form comparison guides earn significantly more AI citations than your short-form content. Or that product pages with detailed specs get cited more than generic marketing pages. Once you see the pattern, you can double down on what works.

Monitor competitor citation gains. Just as you’d track competitors earning backlinks in traditional SEO, you should track competitors gaining citations in AI search. Analyze AI’s Weekly Emails provide an automated summary that flags when competitors gain citations, when your own pages lose them, and where the biggest opportunities lie.

Use the Perception Map to see your competitive position. The Perception Map plots your brand against competitors on two axes: visibility (how often AI engines mention you) and narrative strength (how positively they describe you). This gives you a strategic view of where you stand and which competitors to prioritize.

The goal isn’t to choose between PageRank optimization and AI search optimization. The strategies that improve one tend to improve the other. Build genuine authority through great content and earned links, and you’ll see compound returns across both channels.

PageRank Myths Worth Debunking

Several persistent misconceptions about PageRank lead to wasted effort or misguided strategy. Here are the most common.

“PageRank is dead”

No. Google has confirmed it’s still part of the algorithm. What’s dead is the public toolbar score, not the underlying system. The algorithm has evolved, but the principle—links as votes of authority—remains foundational.

“All links are equal”

They never were, and the gap has only widened. A link from a high-authority, topically relevant page carries far more weight than a link from a low-quality, irrelevant one. The reasonable surfer model further weights links based on their likelihood of being clicked.

“Nofollow links are worthless”

Not entirely. While nofollow links don’t pass PageRank directly, Google has said it treats nofollow as a “hint” rather than a directive. Google may choose to count a nofollow link in some cases. More importantly, nofollow links still drive referral traffic, brand awareness, and can lead to followed links from people who discover your content through the original nofollow link.

“More links always means higher PageRank”

Quantity without quality is a losing strategy. A single link from a highly authoritative, relevant page can be worth more than hundreds of links from low-quality sites. Google’s SpamBrain algorithm specifically targets unnatural link patterns, so mass link building can actually hurt your site.

“Internal links don’t affect PageRank”

This is one of the most damaging myths. Internal links absolutely distribute PageRank within your site. Google has confirmed this repeatedly. Many SEOs focus exclusively on external link building while ignoring the internal linking structure that distributes that authority across their site. A strong backlink profile with poor internal linking is like filling a bucket with holes—the authority leaks instead of flowing where you need it.

Practical PageRank Audit Checklist

Here’s a step-by-step checklist you can run through right now to assess and improve your PageRank situation.

1. Find and redirect broken pages with backlinks. Use a broken link checker and a backlink tool to identify 404 pages on your site that still have external links pointing to them. Redirect each to the most relevant current page.

2. Audit your internal linking. Run a site audit to find pages with high authority but few internal links pointing elsewhere, and important pages that receive few internal links. Bridge the gap with contextual links.

3. Check your backlink profile quality. Review your referring domains. Are they relevant to your industry? Are they from authoritative sites? If you see a large number of links from spammy or irrelevant domains, consider whether they’re causing issues.

4. Analyze your anchor text distribution. Your backlink anchor text should look natural—mostly branded terms, URLs, and generic phrases, with some keyword-rich anchors mixed in. An unnatural concentration of exact-match keyword anchors is a red flag.

5. Monitor your keyword rankings and domain authority. Track whether your pages are maintaining or improving their positions. Use a website authority checker to monitor your domain’s authority over time.

6. Audit your AI search citations. Use Analyze AI to check which of your pages are being cited by AI engines, which competitors are gaining citations, and where your biggest gaps are. This is the new frontier of authority measurement.

7. Review your content for citability. Ask yourself: does your content contain original data, unique insights, or comprehensive coverage that other sites (and AI engines) would want to reference? If not, that’s where your content strategy should focus.
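For item 4 in the checklist, a simple first pass is to measure what fraction of your backlink anchors exactly match a target keyword. The anchors, the brand name, and the keyword below are invented. Google publishes no threshold for what counts as "unnatural", so treat the result as a prompt for a closer look, not a verdict.

```python
from collections import Counter


def anchor_share(anchors, target_keywords):
    """Fraction of backlink anchors that exactly match a target keyword."""
    normalized = [a.strip().lower() for a in anchors]
    counts = Counter(normalized)
    exact = sum(counts[k.strip().lower()] for k in target_keywords)
    return exact / len(normalized) if normalized else 0.0


# Hypothetical anchor texts exported from a backlink tool
anchors = [
    "Acme", "acme.com", "click here", "best crm software",
    "Acme CRM", "best crm software", "this article", "https://acme.com",
]
share = anchor_share(anchors, ["best crm software"])
print(f"{share:.0%} of anchors are exact-match")
```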

Final Thoughts

PageRank is nearly three decades old, and it’s still running inside Google. The algorithm has changed—the math is faster, the anti-spam systems are more sophisticated, and the public score is gone—but the core principle hasn’t: links from trusted sources signal authority, and authority drives visibility.

What has changed is where that authority matters. In 2000, PageRank only affected your position in Google’s ten blue links. In 2026, the same authority signals now influence whether AI engines cite your content in their answers. The pages with strong link profiles, deep topical coverage, and genuine expertise are the ones that show up in both channels.

This isn’t about choosing between SEO and AI search. It’s about recognizing that the fundamentals compound. Build real authority through great content, earn legitimate links, structure your site well, and the returns multiply across every channel—traditional search, AI search, and whatever comes next.

The brands that win aren’t chasing algorithm updates. They’re building the kind of authority that algorithms—whether PageRank or AI citation systems—are designed to reward.

Ernest

Ibrahim

![[Screenshot: Ahrefs Site Audit showing Page Rating scores for pages on a website]](https://www.datocms-assets.com/164164/1776980825-blobid7.png)