JavaScript powers most of the modern web. More than 80% of major ecommerce sites use JavaScript frameworks to build pages, load content, and create interactive experiences. If your site uses React, Angular, Vue, Next.js, Nuxt, or any similar framework, some or all of your content depends on JavaScript to appear on the page.

For traditional search engines like Google, this has been manageable. Google’s rendering service can execute JavaScript and index the content it produces. It’s not perfect—there are delays, quirks, and resource limits—but it works for most sites.

For AI search engines, the story is different. Most AI search engines and LLM crawlers cannot render JavaScript. They fetch the raw HTML, and if your content only appears after JavaScript runs, those crawlers see nothing. Your pages might rank on Google but be completely invisible to ChatGPT, Perplexity, Claude, and Gemini when they generate answers.

This creates a dual optimization challenge that didn’t exist two years ago. You need your JavaScript site to work for Google’s renderer and for AI crawlers that skip rendering entirely. This guide covers both.

In this article, you’ll learn how Google and AI search engines handle JavaScript, the most common issues that break your visibility, and the exact steps to fix them. You’ll also see why JavaScript SEO matters more now than ever: most AI crawlers, including those behind ChatGPT, Perplexity, and Claude, do not render JavaScript, which adds a layer of optimization most SEOs are ignoring.

What Is JavaScript SEO?

JavaScript SEO is the subset of technical SEO that deals with making JavaScript-heavy websites crawlable, renderable, and indexable by search engines. The goal is to make sure content built or modified by JavaScript gets found, indexed, and ranked.

JavaScript itself isn’t bad for SEO. It’s just different from what most SEOs are used to working with. You’re still going to look at HTML code most of the time. All standard on-page SEO best practices still apply: unique titles, descriptive meta descriptions, clean URL structures, proper heading hierarchy, internal linking.

Where things get complicated is when JavaScript is responsible for building the page content, inserting elements, modifying metadata, or handling navigation. If JavaScript constructs your page instead of just adding interactivity to an already-complete HTML page, you have JavaScript SEO work to do.

The common JavaScript frameworks each have their own SEO considerations:

| Framework | Common SEO Module | Default Rendering | Key Concern |
|---|---|---|---|
| React | React Helmet, Next Head | Client-side (CSR) | Content invisible without rendering |
| Vue | vue-meta | Client-side (CSR) | Hash-mode routing by default |
| Angular | Angular Universal | Client-side (CSR) | Heavy bundle sizes |
| Next.js | Built-in Head | Server-side (SSR) or Static (SSG) | Misconfigured fallback pages |
| Nuxt | Built-in useSeoMeta | Server-side (SSR) or Static (SSG) | Duplicate content from dynamic routes |
| Gatsby | React Helmet | Static (SSG) | Build-time data query issues |
| Svelte/SvelteKit | Built-in svelte:head | Server-side (SSR) | Relatively new, fewer edge cases |

Most of these frameworks have SEO modules (often called packages or plugins) that handle metadata, canonical tags, and other standard SEO elements. Search for your framework name plus “SEO module” or “head management” to find the right one. React Helmet, vue-meta, and built-in head components in Next.js and Nuxt are the most widely used.

JavaScript SEO Issues and Best Practices

These are the issues you’re most likely to encounter on JavaScript-powered sites, along with exactly how to fix each one.

Have Unique Title Tags and Meta Descriptions

JavaScript frameworks are template-driven. A single template often generates hundreds or thousands of pages. If no one configured dynamic title tags and meta descriptions per page, every page ends up with the same metadata—or worse, the framework’s default placeholder text.

This is one of the most common issues on JavaScript sites, and it’s one of the easiest to fix.

How to check: Open your site in Chrome, right-click, and choose “Inspect.” Look at the <title> and <meta name="description"> tags in the <head>. Navigate to several different pages and see if the values change. If they don’t, you have a problem.

![[Screenshot: Chrome DevTools showing the head section with title and meta description tags on a JavaScript site]](https://www.datocms-assets.com/164164/1774992350-blobid1.png)

You can also run a crawl with a tool that supports JavaScript rendering. Look for duplicate title tags and duplicate meta descriptions across your pages.

![[Screenshot: Site audit tool showing duplicate title tag report across multiple pages]](https://www.datocms-assets.com/164164/1774992356-blobid2.png)
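If you already have the rendered HTML for a set of pages, the duplicate check itself is simple to script. The sketch below is one rough way to do it, not a replacement for a crawler: the `pages` object and the function names are hypothetical, and the regex is a shortcut rather than a real HTML parser.

```javascript
// Hypothetical input: URL -> rendered HTML string, e.g. exported from a rendering crawler.
const pages = {
  '/products/a': '<html><head><title>Your Store</title></head></html>',
  '/products/b': '<html><head><title>Your Store</title></head></html>',
  '/about': '<html><head><title>About Us | Your Store</title></head></html>',
};

// Pull the first <title> out of an HTML string (simplified; not a full parser).
function extractTitle(html) {
  const match = html.match(/<title[^>]*>([\s\S]*?)<\/title>/i);
  return match ? match[1].trim() : '';
}

// Return [title, urls] pairs for every title shared by more than one URL.
function findDuplicateTitles(pagesByUrl) {
  const urlsByTitle = new Map();
  for (const [url, html] of Object.entries(pagesByUrl)) {
    const title = extractTitle(html);
    if (!urlsByTitle.has(title)) urlsByTitle.set(title, []);
    urlsByTitle.get(title).push(url);
  }
  return [...urlsByTitle].filter(([, urls]) => urls.length > 1);
}

const duplicates = findDuplicateTitles(pages);
console.log(duplicates);
```

The same grouping works for meta descriptions if you swap the extraction function.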

How to fix it: Use your framework’s SEO module to set unique metadata per page. In React, this means using React Helmet or Next.js’s built-in <Head> component. In Vue, use vue-meta or Nuxt’s useSeoMeta. Every page template should pull unique data (page title, description) from your CMS or data source.

Here’s what proper implementation looks like in Next.js:

    import Head from 'next/head'
    export default function ProductPage({ product }) {
      return (
        <>
          <Head>
            <title>{product.name} | Your Store</title>
            <meta name="description" content={product.shortDescription} />
          </Head>
          {/* Page content */}
        </>
      )
    }

One nuance: JavaScript can also overwrite default metadata values. Google will process the overwritten version. But users may see a brief flash in the browser tab as the title changes. If you notice this, it means your initial HTML has one title and JavaScript replaces it with another. The cleaner approach is to set the correct title server-side so it’s already in the raw HTML.

Google rewrites titles on its own about a third of the time anyway. But getting your titles right in the source gives you the best chance of controlling what appears in search results.

Canonical Tag Issues

Canonical tags tell search engines which version of a page is the “main” one. JavaScript can complicate this in two ways.

First, if you insert a canonical tag with JavaScript and there’s already one in the raw HTML, Google now sees two canonical tags. When that happens, Google has to decide which one to trust—or it may ignore both and use other signals to determine the canonical URL.

Second, the standard SEO advice of “every page should have a self-referencing canonical” can backfire on JavaScript sites. A developer takes that requirement literally and makes both example.com/page and example.com/page/ self-canonical. Now you have two different URLs, each claiming to be the canonical version. The correct fix is to pick one format, make it self-canonical, and redirect the other version to it.

How to fix it: Set canonical tags server-side whenever possible, not through JavaScript injection. If you must use JavaScript, make sure no canonical tag exists in the raw HTML first. Pick one URL format (with or without trailing slash) and enforce it with redirects.
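To spot the “two canonical tags” situation, you can compare what the raw HTML declares with what the rendered HTML declares. Here is a minimal sketch, assuming you have both versions as strings; `findCanonicals` is a hypothetical helper and the regex matching is a simplification.

```javascript
// Collect every canonical URL declared in an HTML string (simplified regex, not a full parser).
function findCanonicals(html) {
  const hrefs = [];
  const linkTags = html.match(/<link\b[^>]*>/gi) || [];
  for (const tag of linkTags) {
    if (!/\brel\s*=\s*["']?canonical["']?/i.test(tag)) continue;
    const href = tag.match(/\bhref\s*=\s*["']([^"']*)["']/i);
    if (href) hrefs.push(href[1]);
  }
  return hrefs;
}

// Example: the raw HTML has one canonical, and JavaScript injected a second, different one.
const renderedHtml =
  '<head><link rel="canonical" href="https://example.com/page">' +
  '<link rel="canonical" href="https://example.com/page/"></head>';

const canonicals = findCanonicals(renderedHtml);
const conflicting = new Set(canonicals).size > 1;
console.log(canonicals, conflicting ? 'conflicting canonicals' : 'ok');
```

Running it against both the raw and the rendered HTML of the same page shows whether JavaScript is adding a tag that was already there.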

Google Uses the Most Restrictive Directives

This is a critical concept that trips up many SEOs working with JavaScript sites. When Google sees conflicting directives between the raw HTML and the rendered HTML, it always follows the most restrictive one.

If your raw HTML says index and your JavaScript changes it to noindex, the page will be treated as noindex. If your raw HTML says noindex and JavaScript overwrites it with index, the page is still noindex, because Google saw the restrictive directive in the raw HTML and skips rendering for that page.

This last point is especially important. A page with a noindex directive in the raw HTML will never be sent to Google’s renderer. So any JavaScript that tries to remove or override that directive will never run in Google’s system.

The same logic applies to nofollow. Google will take whichever directive is more restrictive, regardless of where it appears.

How to check: View the raw HTML source (Ctrl+U in Chrome) and search for “noindex” or “nofollow.” Then inspect the rendered page and check again. If the values differ between raw and rendered HTML, the more restrictive one wins.
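The “most restrictive wins” rule can be expressed in a few lines. This sketch assumes you have the raw and rendered HTML as strings and only looks at `<meta name="robots">` tags (not bot-specific tags or the X-Robots-Tag header); the function names are hypothetical.

```javascript
// Read the directives from <meta name="robots"> tags (simplified regex, not a full parser).
function robotsDirectives(html) {
  const directives = new Set();
  const metaTags = html.match(/<meta\b[^>]*>/gi) || [];
  for (const tag of metaTags) {
    if (!/\bname\s*=\s*["']robots["']/i.test(tag)) continue;
    const content = tag.match(/\bcontent\s*=\s*["']([^"']*)["']/i);
    if (!content) continue;
    for (const part of content[1].split(',')) {
      directives.add(part.trim().toLowerCase());
    }
  }
  return directives;
}

// A restrictive directive in either copy of the page wins.
// "none" is shorthand for "noindex, nofollow".
function effectiveDirectives(rawHtml, renderedHtml) {
  const raw = robotsDirectives(rawHtml);
  const rendered = robotsDirectives(renderedHtml);
  const has = (name) =>
    raw.has(name) || rendered.has(name) || raw.has('none') || rendered.has('none');
  return { noindex: has('noindex'), nofollow: has('nofollow') };
}

const result = effectiveDirectives(
  '<meta name="robots" content="noindex">',
  '<meta name="robots" content="index, follow">'
);
console.log(result);
```

In this example the rendered HTML says index, but the result is still noindex because the raw HTML carried the restrictive value.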

Set Alt Attributes on Images

Missing alt attributes are an accessibility issue that can also become a legal issue. Many JavaScript developers leave alt attributes blank or skip them entirely when rendering images dynamically.

Alt text counts as text on the page for web search. Its direct ranking impact is often overstated, but it helps significantly with image search rankings. More importantly, it’s required for accessibility compliance. Companies get sued over ADA compliance issues regularly.

How to fix it: Audit your images for missing alt attributes. Run a crawl with JavaScript rendering enabled and check the images report. For decorative images (icons, spacers, design elements), use an empty alt="" attribute—don’t omit it entirely. For content images, write descriptive alt text that explains what the image shows.

![[Screenshot: Site audit tool showing missing alt text report on image elements]](https://www.datocms-assets.com/164164/1774992360-blobid3.jpg)

Allow Crawling of JavaScript Files

If your robots.txt file blocks access to JavaScript or CSS files, Google can’t download the resources it needs to render your pages. This means it can’t see any content that depends on those files.

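How to check: Look at your robots.txt file and make sure no Disallow rule covers your .js or .css files. If one does, a common fix is to add explicit Allow rules for those file types. Paths in robots.txt must start with a slash, and a wildcard covers the rest of the path:

    User-agent: Googlebot
    Allow: /*.js
    Allow: /*.css

Keep in mind that Googlebot follows only the most specific group that matches it. If you add a Googlebot group, copy over any Disallow rules you still need from the general (*) group.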

Also check the robots.txt files on any subdomains or external domains your site makes API calls to. If your JavaScript fetches data from api.yoursite.com and that subdomain’s robots.txt blocks crawlers, Google won’t be able to see the data your page depends on.

Quick test: In Chrome DevTools, go to the “Network” tab. Right-click on a JavaScript file and select “Block request URL.” Reload the page. If the content changes or disappears, that file is essential for rendering, and blocking it in robots.txt would hide that content from Google.

![[Screenshot: Chrome DevTools Network tab showing how to block a JavaScript file request for testing]](https://www.datocms-assets.com/164164/1774992362-blobid4.png)

Check If Google Sees Your Content

Many JavaScript sites load content dynamically—meaning it doesn’t appear in the HTML until a user takes an action like clicking a tab, expanding an accordion, or scrolling down. Google doesn’t click or scroll. If content requires user interaction to load, Google won’t see it.

Three ways to check:

1. Search for a content snippet in Google. Copy a unique phrase from your page, put it in quotation marks, and search for it. If Google returns your page, it saw that content. If it doesn’t, the content may not be rendering for Googlebot.

2. Use the Inspect Element method. Right-click on your page, choose “Inspect,” and search for text within the “Elements” tab. But be careful: if you’ve already clicked on an accordion or tab, the content may have loaded into the DOM from your click. Reload the page in an incognito window and check again without clicking anything.

![[Screenshot: Chrome DevTools Elements tab with search function open, searching for specific text content]](https://www.datocms-assets.com/164164/1774992372-blobid5.jpg)

3. Use Google Search Console’s URL Inspection tool. This is your source of truth. Inspect a URL, view the rendered HTML, and search for your content within it. If it’s there, Google can see it. If it’s not, you have a rendering problem.

![[Screenshot: Google Search Console URL Inspection tool showing rendered HTML output]](https://www.datocms-assets.com/164164/1774992372-blobid6.jpg)

Pay special attention to content behind accordions, tabs, dropdown menus, and “load more” buttons. These are the most common places where important content gets hidden from search engines.

Duplicate Content Issues

JavaScript frameworks can generate multiple URLs for the same content. This happens because of capitalization differences, trailing slashes, route parameters, ID-based URLs, and query strings. All of these might exist simultaneously:

    example.com/Product-Page
    example.com/product-page
    example.com/product-page/
    example.com/products/123
    example.com/products?id=123

Each URL serves the same content, but search engines treat them as separate pages. This dilutes your ranking signals and wastes crawl budget.

Another common issue specific to JavaScript: app shell models. With an app shell, the initial HTML response contains very little content—just the framework code needed to bootstrap the application. Every page on the site returns the same shell HTML. Until JavaScript runs and populates the content, all pages look identical to a crawler that doesn’t render JavaScript.

If Google’s system detects these identical HTML responses before rendering, it may treat them as duplicates and skip rendering some of them. This means your content never gets indexed.

How to fix it: Pick one canonical URL format for each page. Set self-referencing canonical tags. Redirect all other URL variations to the canonical version. And if possible, use server-side rendering so that each page has unique HTML content in the initial response—not just after JavaScript runs.
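The “pick one format and redirect the rest” rule can live in a small function that your server or edge middleware calls on every request. This is a sketch under stated assumptions: it treats lowercase paths without a trailing slash as the canonical format, and the function names are hypothetical. Mapping ID-based URLs to their slug versions needs a data lookup and is left out.

```javascript
// Map a URL variant to one canonical form.
// Assumptions: lowercase paths and no trailing slash. Adjust to the format your site uses.
function canonicalUrl(input) {
  const url = new URL(input);
  let path = url.pathname.toLowerCase();
  if (path.length > 1 && path.endsWith('/')) path = path.slice(0, -1);
  return `${url.origin}${path}${url.search}`;
}

// Return the URL to 301-redirect to, or null when the request is already canonical.
function redirectTarget(requestUrl) {
  const canonical = canonicalUrl(requestUrl);
  return canonical === requestUrl ? null : canonical;
}

console.log(redirectTarget('https://example.com/Product-Page/'));
console.log(redirectTarget('https://example.com/product-page'));
```

The same `canonicalUrl` output can feed the canonical tag, so the tag and the redirect never disagree.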

Don’t Use Fragments (#) in URLs

The hash symbol (#) has a defined function in browsers: it links to a specific section of the current page. Servers ignore everything after a #. So example.com/#about and example.com/ are the same URL as far as the server is concerned.

JavaScript developers sometimes use # for client-side routing, which means different “pages” in your application have URLs like example.com/#/about and example.com/#/contact. These all resolve to the same server response. Google can’t differentiate them reliably.

This is especially common with Vue Router 3 (which defaults to hash mode) and older versions of Angular.

How to fix in Vue: Switch your Vue Router from hash mode to history mode:

    // Vue Router 3 (Vue 2)
    import Vue from 'vue'
    import VueRouter from 'vue-router'
    Vue.use(VueRouter)
    const router = new VueRouter({
      mode: 'history',
      routes: [/* your routes */]
    })

History mode gives you clean URLs like example.com/about instead of example.com/#/about. In Vue Router 4 (Vue 3), the equivalent is passing history: createWebHistory() to createRouter(). Either way, you’ll also need to configure your server to serve your index.html for all routes so that direct URL access works correctly.

Create a Sitemap

Sitemaps help search engines discover all the pages on your site, which is especially important for JavaScript sites where internal links might not be in the raw HTML.

Most JavaScript frameworks have sitemap generation modules. Search for your framework name plus “sitemap” to find one. Next.js has next-sitemap. Nuxt has @nuxtjs/sitemap. Gatsby has gatsby-plugin-sitemap.

If your site generates pages dynamically from a CMS or database, make sure your sitemap module pulls from the same data source so it includes every page.
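If no module fits your setup, generating the XML yourself is not much code. The sketch below assumes your CMS returns records shaped like `{ path, updatedAt }`; that shape and the function names are hypothetical.

```javascript
// Escape the characters that cannot appear raw inside XML text.
function escapeXml(value) {
  return value
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&apos;');
}

// Build a sitemap from records shaped like { path, updatedAt } (hypothetical CMS fields).
function buildSitemap(baseUrl, records) {
  const entries = records.map((record) => {
    const loc = escapeXml(new URL(record.path, baseUrl).href);
    return `  <url><loc>${loc}</loc><lastmod>${record.updatedAt}</lastmod></url>`;
  });
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    ...entries,
    '</urlset>',
  ].join('\n');
}

const sitemap = buildSitemap('https://example.com', [
  { path: '/products/blue-widget', updatedAt: '2024-01-15' },
  { path: '/search?a=1&b=2', updatedAt: '2024-01-16' },
]);
console.log(sitemap);
```

Because the records come from the same source that generates your pages, the sitemap stays in step with the site.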

Submit your sitemap to Google Search Console. If you’re also optimizing for AI search, submit it to Bing Webmaster Tools as well, since Bing’s index feeds into Microsoft Copilot’s search results.

Status Codes and Soft 404s

Client-side rendered apps run in the browser, so they can’t set the HTTP status code of a response the server has already sent. When someone visits a nonexistent URL on such a site, the server returns a 200 OK status (because the app shell loaded successfully), even though the page doesn’t exist.

Google treats this as a “soft 404”—a page that says it doesn’t exist but returns a success status code. Soft 404s waste crawl budget and can confuse Google’s indexing.

Two ways to handle this:

1. JavaScript redirect to a proper 404 page. When your app detects an invalid route, redirect the user to a page that actually returns a 404 status code from the server.

2. Add a noindex tag. If you can’t set the correct status code, add a <meta name="robots" content="noindex"> tag to error pages along with a clear “Page Not Found” message. Google will treat this as a soft 404 and exclude the page from its index.

The cleaner solution is server-side rendering, which lets you return proper HTTP status codes for every request.
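As one example of the server-side approach: in the Next.js pages router, returning `{ notFound: true }` from `getServerSideProps` makes the server answer with a real 404. The sketch below assumes a product page looked up by slug; the in-memory `PRODUCTS` map and the `resolveProductPage` helper are hypothetical stand-ins for your own data source.

```javascript
// Hypothetical in-memory catalog standing in for a CMS or database lookup.
const PRODUCTS = new Map([
  ['blue-widget', { name: 'Blue Widget', shortDescription: 'A widget, in blue.' }],
]);

// Decide what the page should return for a given slug.
function resolveProductPage(slug) {
  const product = PRODUCTS.get(slug);
  // { notFound: true } tells Next.js to respond with a 404 status and its 404 page.
  if (!product) return { notFound: true };
  return { props: { product } };
}

// In a real pages-router file this function would be exported from the page module.
async function getServerSideProps({ params }) {
  return resolveProductPage(params.slug);
}

console.log(resolveProductPage('does-not-exist'));
```

The important part is that the decision happens on the server, before any HTML or status code is sent.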

JavaScript Redirects

SEOs are familiar with 301 and 302 redirects, which happen server-side. JavaScript redirects happen client-side and are less ideal but still work.

Google will see and process JavaScript redirects during its rendering step. They’re treated as permanent redirects and pass all ranking signals including PageRank. But they’re slower for Google to discover because the page has to be rendered first, whereas server-side redirects are detected immediately during crawling.

You can find JavaScript redirects in code by searching for window.location.href or window.location.replace. In Next.js, check next.config.js for the redirects function. In other frameworks, check the router configuration.

Best practice: Use server-side redirects (301/302) whenever possible. Use JavaScript redirects only when server-side options aren’t available.
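To audit an existing codebase for client-side redirects, a simple pattern search goes a long way. This is a rough sketch, assuming you read each source file into a string; the pattern list and `findJsRedirects` are hypothetical and will miss redirects done through a router API.

```javascript
// Patterns that commonly indicate a client-side redirect.
const REDIRECT_PATTERNS = [
  /\b(?:window\.)?location\.href\s*=(?!=)/, // assignment, not a == or === comparison
  /\b(?:window\.)?location\.(?:replace|assign)\s*\(/,
];

// Return the 1-based line numbers in a source string that look like JavaScript redirects.
function findJsRedirects(source) {
  const hits = [];
  source.split('\n').forEach((line, index) => {
    if (REDIRECT_PATTERNS.some((pattern) => pattern.test(line))) hits.push(index + 1);
  });
  return hits;
}

const sample = [
  "if (window.location.href === '/old') {",
  "  window.location.href = '/new';",
  '}',
  "window.location.replace('/moved');",
].join('\n');

console.log(findJsRedirects(sample));
```

Each hit is a candidate for replacing with a server-side 301 or 302.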

Internationalization Issues

If your site serves content in multiple languages or regions, JavaScript can cause several internationalization problems.

Hreflang tags tell search engines which version of a page to show to users in different regions. Most JavaScript frameworks have modules for this—look for i18n, intl, or the same head management modules you use for meta tags (like Helmet or vue-meta).

A more dangerous issue is IP-based or geo-based redirects. If your site redirects users based on their location, it might also redirect Googlebot. Google crawls primarily from the United States, so if your site redirects U.S. visitors to an English version, Googlebot may never see your French or German versions.

How to fix it: Exclude known bots from geo-redirect logic. Or better, don’t use server-side geo-redirects at all. Instead, serve a language selector that lets users (and bots) access all versions.
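Whichever head-management module you use, the output should be the same set of hreflang tags on every language version of a page, ideally present in the server-rendered HTML. A minimal sketch of generating that set, with a hypothetical `hreflangTags` helper and example URLs:

```javascript
// Build hreflang <link> tags for every language version of a page.
// `versions` maps hreflang codes to absolute URLs; `defaultUrl` is the x-default fallback.
function hreflangTags(versions, defaultUrl) {
  const tags = Object.entries(versions).map(
    ([lang, url]) => `<link rel="alternate" hreflang="${lang}" href="${url}" />`
  );
  tags.push(`<link rel="alternate" hreflang="x-default" href="${defaultUrl}" />`);
  return tags;
}

const tags = hreflangTags(
  {
    en: 'https://example.com/en/pricing',
    fr: 'https://example.com/fr/pricing',
    de: 'https://example.com/de/pricing',
  },
  'https://example.com/pricing'
);
console.log(tags.join('\n'));
```

Every version lists all versions, including itself, so the references are reciprocal.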

Use Structured Data

JavaScript can generate or inject structured data using JSON-LD, and this generally works fine. Google processes JSON-LD structured data whether it’s in the raw HTML or injected by JavaScript.

But test your implementation. Dynamically generated structured data can have formatting errors, missing required fields, or incorrect values that static implementations wouldn’t have.

How to check: Use Google’s Rich Results Test tool to see if your structured data is valid. Check several different page types, not just one. Template-driven sites often have structured data errors that affect entire sections of the site.

![[Screenshot: Google’s Rich Results Test tool showing structured data validation results]](https://www.datocms-assets.com/164164/1774992380-blobid7.jpg)
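Since template-driven errors repeat across every page, it can help to validate the data before it is ever serialized. The sketch below builds a schema.org Product object and flags empty fields. The product record shape and the list of fields treated as required are assumptions for illustration; check Google’s documentation for the rich result type you are targeting.

```javascript
// Build a schema.org Product object from a hypothetical product record.
function productJsonLd(product) {
  return {
    '@context': 'https://schema.org',
    '@type': 'Product',
    name: product.name,
    description: product.shortDescription,
    offers: {
      '@type': 'Offer',
      price: product.price,
      priceCurrency: product.currency,
    },
  };
}

// List fields that are empty. The "required" list here is an assumption, not Google's official list.
function missingFields(jsonLd) {
  const required = ['name', 'offers.price', 'offers.priceCurrency'];
  return required.filter((path) => {
    const value = path.split('.').reduce((obj, key) => (obj == null ? obj : obj[key]), jsonLd);
    return value === undefined || value === null || value === '';
  });
}

// Serialize for embedding; escaping "<" keeps a stray "</script>" in the data from closing the tag early.
function toScriptTag(jsonLd) {
  const json = JSON.stringify(jsonLd).replace(/</g, '\\u003c');
  return `<script type="application/ld+json">${json}</script>`;
}

const incomplete = productJsonLd({ name: 'Blue Widget', shortDescription: 'A widget.', currency: 'USD' });
console.log(missingFields(incomplete));
```

A check like this can run at build time or in tests, before the Rich Results Test ever sees the page.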

Use Standard Format Links

Internal and external links must use the web-standard format: an <a> tag with an href attribute. This is the only link format that search engines reliably follow and pass ranking signals through.

These work:

    <a href="/page">Standard link</a>
    <a href="/page" onclick="trackClick()">Link with tracking</a>

These don’t work for SEO:

    <a onclick="goTo('/page')">No href attribute</a>
    <span onclick="goTo('/page')">Wrong HTML element</span>
    <a href="javascript:void(0)">No real URL</a>
    <a href="#">No destination</a>

Google’s parsers are forgiving and may still crawl some non-standard link formats. But you can’t count on those links passing PageRank or being treated as proper internal links. Fix them.

Internal links added by JavaScript won’t be discovered until Google renders the page. For most sites, this delay is negligible—rendering typically happens within seconds. But for news sites or time-sensitive content where every second matters, having links in the raw HTML is better.
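The link rules in this section can be turned into a quick audit. The sketch below classifies `<a>` tags in an HTML string; `auditLinks` is a hypothetical helper and uses regex matching rather than a real parser. It only inspects `<a>` elements, so clickable `<span>` or `<div>` elements need a separate check.

```javascript
// Classify <a> tags in an HTML string as crawlable or not (simplified regex, not a full parser).
function auditLinks(html) {
  const crawlable = [];
  const notCrawlable = [];
  const anchors = html.match(/<a\b[^>]*>/gi) || [];
  for (const tag of anchors) {
    const href = tag.match(/\bhref\s*=\s*["']([^"']*)["']/i);
    const value = href ? href[1].trim() : '';
    // A usable href is non-empty, not a bare fragment, and not a javascript: pseudo-URL.
    const usable = value !== '' && !value.startsWith('#') && !/^javascript:/i.test(value);
    if (usable) crawlable.push(tag);
    else notCrawlable.push(tag);
  }
  return { crawlable, notCrawlable };
}

const audit = auditLinks(`
  <a href="/page">Standard link</a>
  <a href="/page" onclick="trackClick()">Link with tracking</a>
  <a onclick="goTo('/page')">No href attribute</a>
  <a href="javascript:void(0)">No real URL</a>
  <a href="#">No destination</a>
`);
console.log(audit.notCrawlable);
```

Run it against the rendered HTML to see what Google sees after rendering, and against the raw HTML to see what non-rendering crawlers get.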

Use File Versioning for Cache Issues

Google aggressively caches all resources: HTML, JavaScript files, CSS files. It doesn’t respect your cache headers. It fetches new copies when it decides to, not when you tell it to.

This caching can cause “impossible states” where Google renders a page using outdated JavaScript or CSS files combined with current HTML. The result is a page that never actually existed in this form—parts are current, parts are old.

How to fix it: Use file versioning (also called fingerprinting). Instead of app.js, name your files app.abc123.js where the hash changes with each build. When you deploy significant changes, the new filename forces Google to download the fresh version.

Most modern build tools (Webpack, Vite, Rollup) do this automatically. Check that your build process includes content hashing in filenames.
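To make the idea concrete, here is a small sketch of what fingerprinting does. It uses a simple FNV-1a hash purely for illustration; your bundler uses its own hashing and you should let it do this for you. The function names are hypothetical.

```javascript
// 32-bit FNV-1a hash, used here only for illustration; real bundlers use their own hashing.
function fnv1a(text) {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return (hash >>> 0).toString(16).padStart(8, '0');
}

// Turn "app.js" into "app.<hash>.js" based on the file's contents.
function fingerprint(filename, contents) {
  const dot = filename.lastIndexOf('.');
  return `${filename.slice(0, dot)}.${fnv1a(contents)}${filename.slice(dot)}`;
}

const before = fingerprint('app.js', 'console.log(1)');
const after = fingerprint('app.js', 'console.log(2)');
console.log(before, after);
```

Unchanged contents keep the same filename, so caches stay valid; any change to the contents produces a new filename that has never been cached.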

Check What Googlebot Actually Sees

Your browser and Googlebot may see different things. Sites that use dynamic rendering, prerendering, or user-agent detection can serve different content to different crawlers.

How to check: Use Google Search Console’s URL Inspection tool for the authoritative view of what Googlebot sees. You can also change your browser’s user-agent string using Chrome DevTools (Network conditions > User agent) to emulate Googlebot and see if the page content changes.

![[Screenshot: Chrome DevTools Network conditions panel showing user-agent override options]](https://www.datocms-assets.com/164164/1774992381-blobid8.png)

Lazy Loading

Modern lazy loading uses the browser’s native loading="lazy" attribute on images, which is safe for SEO. Google handles this correctly.

Older JavaScript-driven lazy loading implementations may cause problems. If content (not just images) is lazy-loaded—meaning it only appears when a user scrolls to it—Google may not see that content. Google does resize its viewport to a very long page (around 12,000 pixels on mobile), which triggers some scroll-based loading. But don’t rely on this.

Best practice: Only lazy-load images below the fold. Never lazy-load text content, links, or other SEO-critical elements.

Infinite Scroll

Infinite scroll loads more content as the user scrolls down. Google can sometimes trigger this when it resizes its viewport, which can cause unexpected results—like two articles getting indexed as one.

How to fix it: Provide a paginated fallback alongside infinite scroll. Each “page” of content should have its own URL that Google can crawl independently, reachable through standard <a href> links. You can still add <link rel="next"> and <link rel="prev"> tags, but Google has said it no longer uses them as an indexing signal, so don’t rely on them alone.

If you can’t add pagination, consider blocking the JavaScript file that handles infinite scroll from Googlebot so the functionality doesn’t trigger during rendering.

Performance Issues

JavaScript can be heavy. Poorly optimized JavaScript bundles increase page load times, which affects Core Web Vitals and user experience.

Modern frameworks help with this through code splitting (loading only the JavaScript needed for the current page) and tree shaking (removing unused code from bundles). But these optimizations only work if your build process is configured correctly.

Key performance checks:

Check your Largest Contentful Paint (LCP). If JavaScript is responsible for rendering your main content, LCP will be delayed until the JavaScript downloads, parses, and executes. Aim for LCP under 2.5 seconds.

Check your Total Blocking Time (TBT). Heavy JavaScript execution blocks the main thread and prevents user interaction. Long tasks (over 50ms) should be broken up or deferred.

Check your bundle size. Use Chrome DevTools’ “Coverage” tab to see how much of your JavaScript is actually used on each page. If more than 40% is unused, you’re loading too much.

![[Screenshot: Chrome DevTools Coverage tab showing JavaScript usage analysis with unused code highlighted]](https://www.datocms-assets.com/164164/1774992388-blobid9.png)

For more detail, see our guide on SEO content strategy and how technical performance fits into the bigger picture.

JavaScript Sites Use More Crawl Budget

Every XHR request (API call) that JavaScript makes during rendering eats into your crawl budget. Unlike CSS and JavaScript files that get cached, XHR requests are made live during rendering. A page that makes 20 API calls to assemble its content uses significantly more of Google’s rendering resources than a page with everything in the HTML.

This matters most for large sites (over 10,000 pages) where crawl budget is a real constraint. For smaller sites, crawl budget is rarely a limiting factor.

How to reduce impact: Minimize API calls during page rendering. If your page makes separate requests for header data, main content, sidebar content, and footer data, consider consolidating these into fewer requests or moving the data into the initial HTML response.

HTTP Connections Only

Googlebot supports HTTP requests but does not support WebSockets or WebRTC connections. If your page uses WebSockets to stream content (like real-time updates or chat interfaces), provide an HTTP fallback for the initial content load.

Content that depends entirely on WebSocket connections will be invisible to Google.

Why JavaScript SEO Matters Even More for AI Search

Here is where most JavaScript SEO guides stop. But there’s a critical second layer that most sites completely ignore: AI search engines.

AI crawlers from ChatGPT (via Bing and its own OAI-SearchBot), Perplexity (PerplexityBot), Claude, and other LLM-powered search tools process web content fundamentally differently from Google. The key difference is simple and severe: most AI crawlers do not render JavaScript.

When an AI crawler visits your page, it fetches the raw HTML response and works with that. If your content only appears after JavaScript executes, the AI crawler sees an empty shell—your app framework code, maybe a loading spinner placeholder, but none of your actual content.

This means a site that ranks perfectly on Google can be completely invisible in AI-generated answers. And with AI search traffic growing across platforms, that invisibility represents a real and compounding loss.

How to Check If AI Crawlers Can See Your Content

The simplest test: disable JavaScript in your browser (Chrome DevTools > Settings > check “Disable JavaScript”) and reload your page. What you see is approximately what most AI crawlers see.

If your page is blank or shows only a loading indicator with JavaScript disabled, your content is invisible to AI search. This is the single biggest issue for JavaScript-heavy sites trying to gain visibility in AI-generated answers.

A more thorough check: Use the curl command to see the raw HTML response:

    curl -s https://yoursite.com/page | head -100

If the output contains your actual page content (headings, paragraphs, product details), your raw HTML is fine. If it contains only framework boilerplate and script tags, AI crawlers can’t see your content.
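You can automate the same judgment across many URLs. The sketch below strips scripts, styles, and tags from a raw HTML string and measures how much readable text is left. The function names are hypothetical, and the 200-character threshold is an arbitrary assumption you should tune for your own pages.

```javascript
// Estimate how much human-readable text a raw HTML response contains (simplified; not a full parser).
function visibleText(html) {
  return html
    .replace(/<(script|style)\b[\s\S]*?<\/\1>/gi, ' ')
    .replace(/<[^>]+>/g, ' ')
    .replace(/\s+/g, ' ')
    .trim();
}

// Flag responses that look like an empty app shell. The threshold is an arbitrary assumption.
function looksLikeAppShell(html, minChars = 200) {
  return visibleText(html).length < minChars;
}

const shell =
  '<html><head><title>App</title></head><body><div id="root"></div>' +
  '<script src="/app.js"></script></body></html>';
const serverRendered =
  '<html><head><title>Blue Widget</title></head><body><h1>Blue Widget</h1><p>' +
  'Blue Widget is a widget. '.repeat(10) +
  '</p></body></html>';

console.log(looksLikeAppShell(shell), looksLikeAppShell(serverRendered));
```

In Node 18 or later you can feed it real responses with the built-in `fetch`, reading the body as text before passing it in.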

The Fix: Server-Side Rendering or Static Generation

If you’re using a client-side rendered (CSR) JavaScript framework and you want visibility in AI search, you need to move to server-side rendering (SSR) or static site generation (SSG). This isn’t optional—it’s a requirement for AI search visibility.

With SSR or SSG, the server generates complete HTML for each page before sending it to the browser. The HTML contains all your content, headings, links, and metadata. JavaScript then “hydrates” the page to add interactivity. Both Google and AI crawlers get the full content from the initial HTML response.

| Rendering Method | Google Can See Content? | AI Crawlers Can See Content? | Performance | Best For |
|---|---|---|---|---|
| Client-Side Rendering (CSR) | Yes (after rendering) | No | Fast initial load, slow to content | Internal apps, dashboards |
| Server-Side Rendering (SSR) | Yes (immediately) | Yes | Slower initial load, fast to content | Dynamic pages, personalized content |
| Static Site Generation (SSG) | Yes (immediately) | Yes | Fastest | Blogs, docs, marketing pages |
| Incremental Static Regeneration (ISR) | Yes (immediately) | Yes | Fast with fresh data | Product pages, frequently updated content |

If you’re running React, migrate to Next.js. If you’re running Vue, migrate to Nuxt. Both frameworks support SSR and SSG out of the box and handle the hydration process automatically.
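To see what “complete HTML in the initial response” means in practice, here is a deliberately bare sketch of server-side rendering with a template string. This is not how Next.js or Nuxt work internally; it only illustrates the output a crawler should receive. The `product` record and the `/client.js` bundle path are hypothetical.

```javascript
// Escape text before placing it in HTML.
function escapeHtml(value) {
  return String(value)
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;');
}

// Produce the complete HTML for a product page on the server.
// The content is in the markup itself; the script only adds interactivity afterwards.
function renderProductPage(product) {
  return `<!doctype html>
<html lang="en">
<head>
  <title>${escapeHtml(product.name)} | Your Store</title>
  <meta name="description" content="${escapeHtml(product.shortDescription)}">
</head>
<body>
  <main id="app">
    <h1>${escapeHtml(product.name)}</h1>
    <p>${escapeHtml(product.shortDescription)}</p>
  </main>
  <script src="/client.js" defer></script>
</body>
</html>`;
}

const html = renderProductPage({
  name: 'Blue & Green Widget',
  shortDescription: 'A "two-tone" widget.',
});
console.log(html);
```

A crawler that never runs `/client.js` still gets the title, description, heading, and body text, which is the property that matters for AI search.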

Make Your Site Accessible with llms.txt

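A practical step for JavaScript sites: create an llms.txt file. This is a proposed convention rather than an established standard: a plain-text (Markdown) file at your domain root that points AI crawlers to your most important content in a format they can process. Not every crawler reads it yet, so treat it as a supplement to server-rendered HTML, not a substitute for it.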

Think of it as a simplified sitemap specifically for LLM crawlers. It lists your key pages with brief descriptions, helping AI models understand your site’s structure without having to render JavaScript.

You can generate one using a free llms.txt generator tool or create it manually.

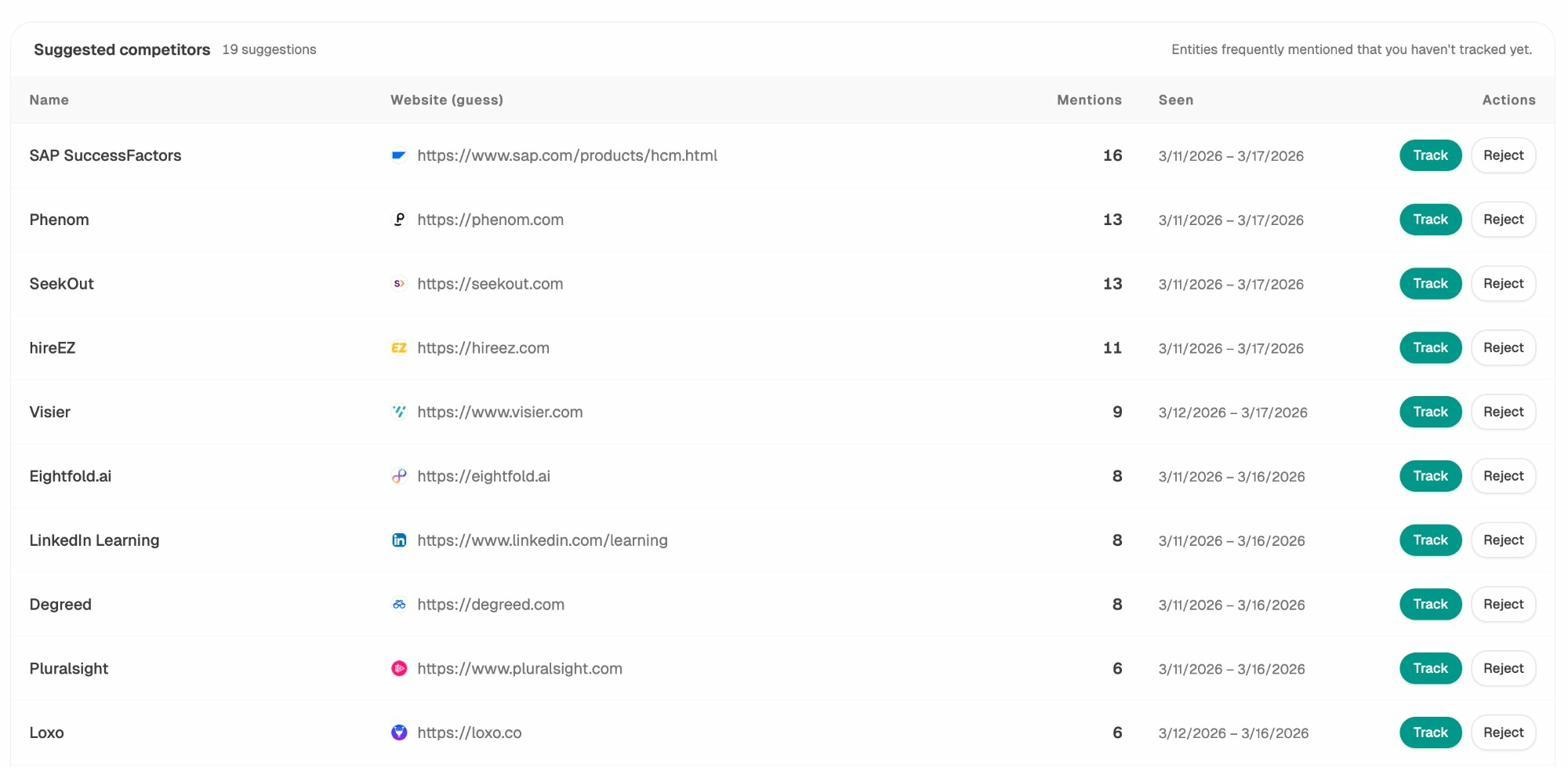
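If you prefer to generate the file yourself, the layout in the llms.txt proposal is simple: a title, a short summary, and sections of links with descriptions. Here is a minimal sketch, assuming a hypothetical `site` object that you would fill from your CMS:

```javascript
// Build llms.txt content: H1 title, blockquote summary, then H2 sections of described links.
function buildLlmsTxt(site) {
  const lines = [`# ${site.name}`, '', `> ${site.summary}`, ''];
  for (const section of site.sections) {
    lines.push(`## ${section.title}`, '');
    for (const page of section.pages) {
      lines.push(`- [${page.title}](${page.url}): ${page.description}`);
    }
    lines.push('');
  }
  return lines.join('\n').trimEnd() + '\n';
}

const llmsTxt = buildLlmsTxt({
  name: 'Your Store',
  summary: 'Handmade widgets, with guides on choosing and maintaining them.',
  sections: [
    {
      title: 'Products',
      pages: [
        {
          title: 'Blue Widget',
          url: 'https://example.com/products/blue-widget',
          description: 'Specs and pricing for the Blue Widget.',
        },
      ],
    },
  ],
});
console.log(llmsTxt);
```

Serve the result as a static file at /llms.txt so it never depends on JavaScript to load.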

Track Your AI Search Visibility

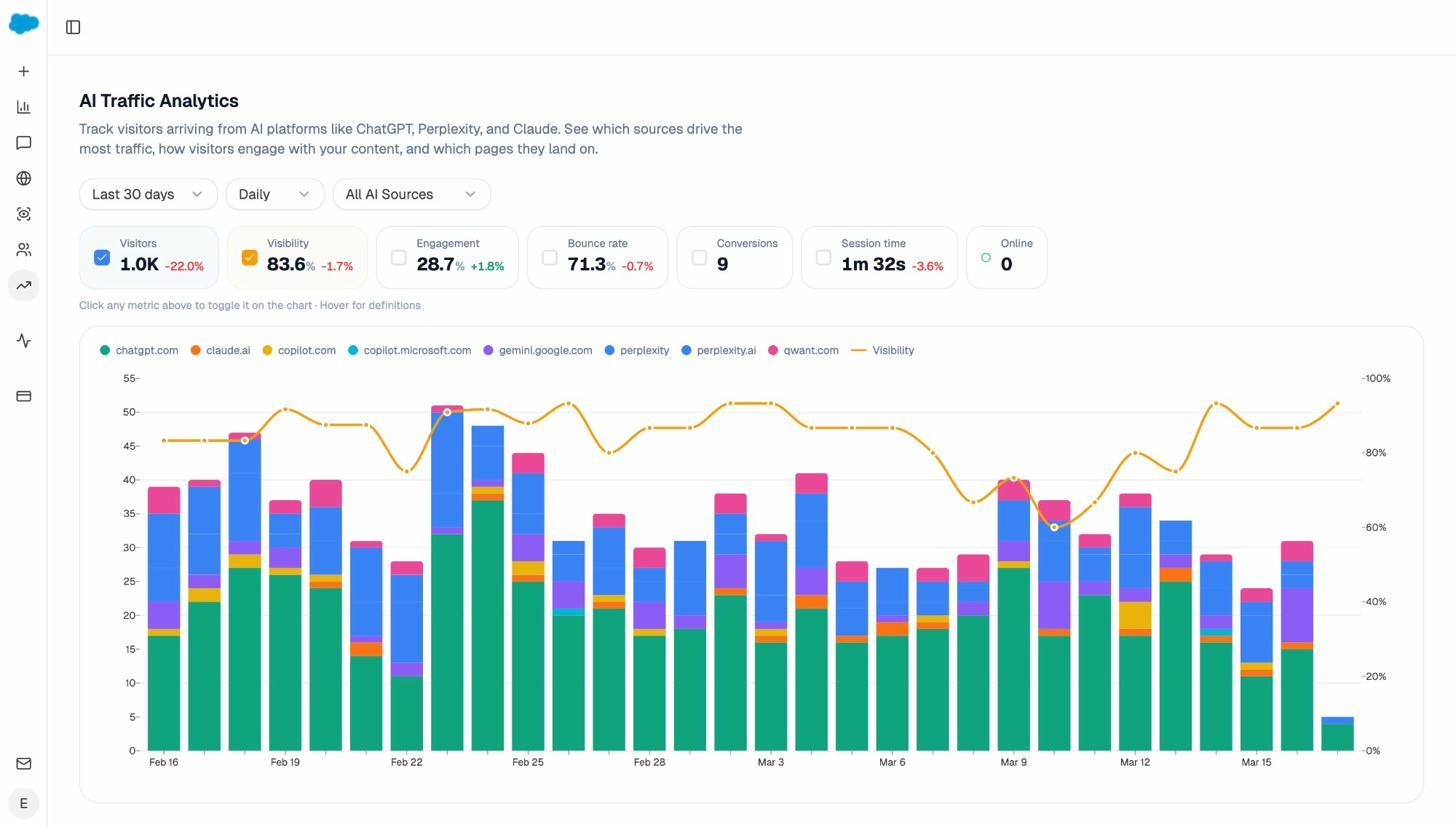

Once you’ve fixed your JavaScript rendering for AI crawlers, you need to track whether those fixes are working. This is where AI search analytics tools become essential.

With a platform like Analyze AI, you can see exactly which AI engines are sending traffic to your site, which pages they’re landing on, and how that traffic trends over time.

The AI Traffic Analytics dashboard breaks down your traffic by AI source—ChatGPT, Claude, Copilot, Perplexity, Gemini, and others. For a JavaScript-heavy site, you’d expect to see very low AI traffic before implementing SSR, and a measurable increase after. This gives you concrete proof that your technical changes are working.

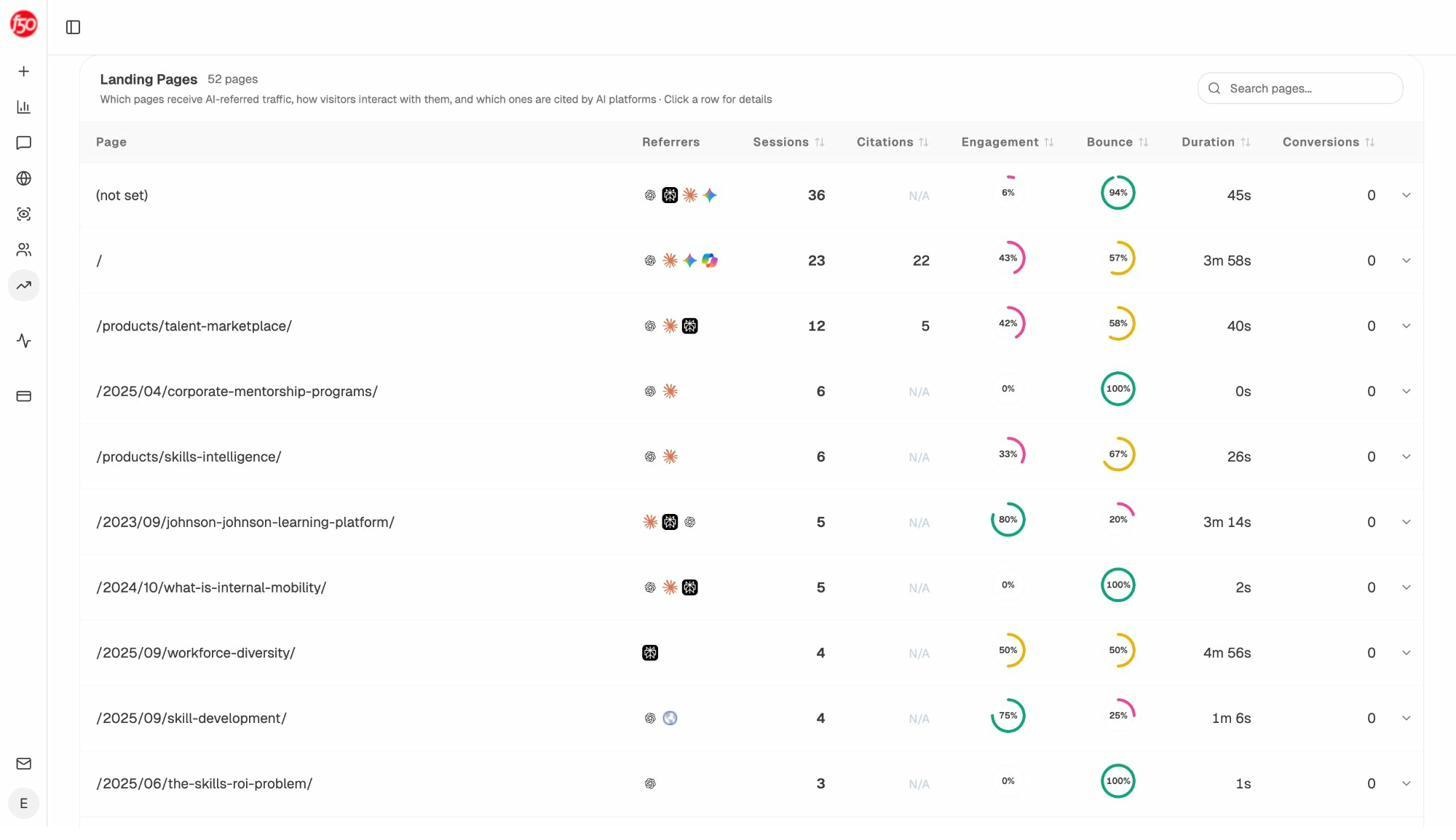

You can also drill down to see exactly which landing pages are receiving AI-referred traffic and how visitors behave after arriving.

This data tells you which of your pages AI engines are actually citing and sending users to. If your highest-value pages aren’t showing up here, they may still have JavaScript rendering issues that prevent AI crawlers from accessing the content.

Monitor What AI Engines Say About You

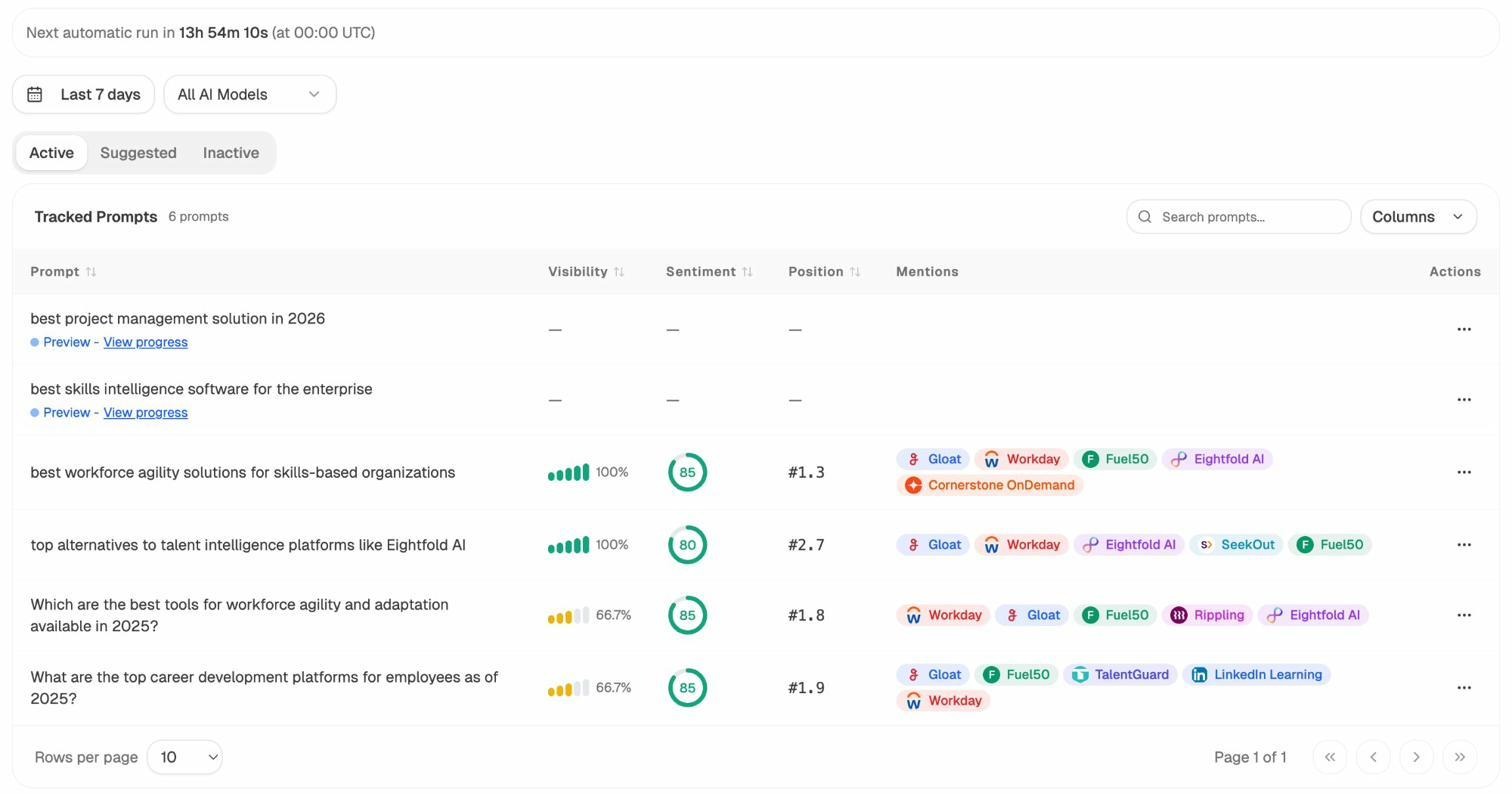

Beyond traffic, you can track the actual prompts where your brand appears (or doesn’t appear) across AI models. This is a different dimension than traditional SEO, where you track keyword rankings. In AI search, you’re tracking prompt visibility—whether your brand shows up when someone asks a question relevant to your business.

Each prompt shows your visibility percentage, sentiment score, average position, and which competitors appear alongside you. If a JavaScript rendering issue is preventing AI crawlers from accessing your content, you’ll see low or zero visibility scores for prompts where you should be appearing.

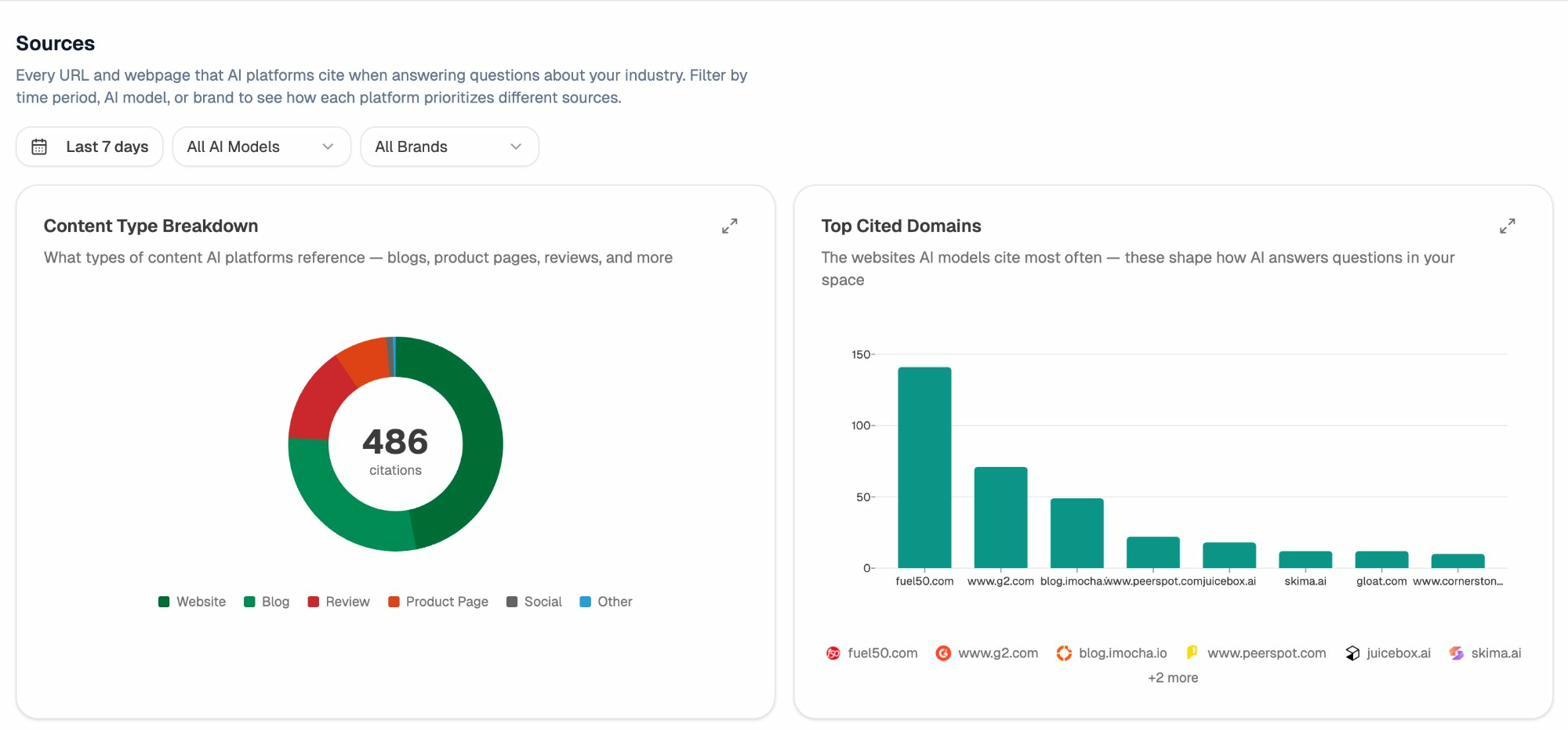

Identify Citation Sources AI Models Rely On

AI engines cite specific sources when generating answers. If your JavaScript site isn’t rendering its content in raw HTML, your pages won’t be cited—even if they have the best content on the topic.

The Sources dashboard in Analyze AI shows which domains get cited most often in your industry. If competitors with server-rendered content appear here and you don’t, the rendering gap is likely the cause. This gives you a clear target: fix the rendering, then monitor whether your citations increase.

Watch Where Competitors Win

The Competitors section shows which brands appear alongside yours across AI-generated answers. If a competitor with a less JavaScript-dependent site consistently outperforms you in AI answers, their content accessibility advantage may be the reason.

You can track competitors by prompt cluster and see exactly where they appear and you don’t. This helps prioritize which pages to fix first—start with the content areas where competitor visibility is highest and yours is lowest.

JavaScript SEO Testing Tools and Troubleshooting

Google Search Console URL Inspection Tool

This is your source of truth for understanding what Google sees on your JavaScript pages. When you inspect a URL, you get the rendered HTML from Google’s system, a screenshot of the rendered page, resource loading details, and any error messages.

![[Screenshot: Google Search Console URL Inspection tool showing the rendered HTML and screenshot for a JavaScript-heavy page]](https://www.datocms-assets.com/164164/1774992413-blobid15.jpg)

You can run a live test as well as view the last indexed version. The live test uses fresh resources (not cached), so its behavior is slightly different from Google’s actual rendering process. But it’s the closest approximation you have.

Pro tip: If you don’t have access to a site’s Search Console, you can still run a live test on it. Add a redirect from your own site (where you do have Search Console access) to the target URL. Inspect your redirect URL, and the live test will follow the redirect and show you the target page’s rendered result.

Rich Results Test

Google’s Rich Results Test lets you check a rendered page as Googlebot would see it—for both mobile and desktop. It’s useful for checking structured data validation and seeing the rendered HTML simultaneously.

Chrome DevTools

Chrome DevTools is your primary browser-based debugging tool. The key features for JavaScript SEO work:

Elements tab: Shows the live DOM after JavaScript has run. Use Ctrl+F (Cmd+F on macOS) to search for specific text and verify it’s in the DOM.

Network tab: Shows all resource requests. Filter by “JS” to see JavaScript files. Use “Block request URL” to test what happens when specific resources are blocked.

Coverage tab: Shows how much of each JavaScript file is actually used on the current page.

Performance tab: Shows the full page load timeline including JavaScript parsing, compilation, and execution.

Application tab: Shows service workers and cached resources.

View Source vs. Inspect

When you right-click in a browser window, “View Page Source” shows the raw HTML before JavaScript runs. “Inspect” shows the DOM after JavaScript has executed and made changes.

For JavaScript SEO work, you’ll primarily use Inspect. But sometimes you need View Source to check for issues that originate in the raw HTML—like a noindex tag that JavaScript tries to override, or a canonical tag that gets duplicated.

When to use View Source: Checking for noindex or nofollow in raw HTML, verifying canonical tags in the initial response, and confirming that critical content exists before JavaScript runs.

When to use Inspect: Checking the final state of the page after JavaScript, verifying that dynamic content loads correctly, and debugging DOM modifications.
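If you want to make the View Source vs. Inspect comparison repeatable, you can save both versions as strings and diff the SEO-relevant tags. The sketch below is a minimal illustration using only Python’s standard library. The names `SeoSignals`, `extract`, and `diff_signals` are made up for this example, and the two HTML snippets are invented sample pages. It assumes you obtained the rendered HTML yourself, for example by copying the document’s outer HTML from the Elements tab.

```python
from html.parser import HTMLParser


class SeoSignals(HTMLParser):
    """Collects title, robots meta, canonical URLs, and link targets."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.robots = []
        self.canonicals = []
        self.links = set()
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        a = {k: (v or "") for k, v in attrs}
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and a.get("name", "").lower() == "robots":
            self.robots.append(a.get("content", "").lower())
        elif tag == "link" and "canonical" in a.get("rel", "").lower().split():
            self.canonicals.append(a.get("href", ""))
        elif tag == "a" and a.get("href"):
            self.links.add(a["href"])

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def extract(html):
    """Parse one HTML string into a dict of SEO signals."""
    parser = SeoSignals()
    parser.feed(html)
    parser.close()
    return {
        "title": parser.title.strip(),
        "robots": parser.robots,
        "canonicals": parser.canonicals,
        "links": parser.links,
    }


def diff_signals(raw_html, rendered_html):
    """Return {signal: (raw_value, rendered_value)} for signals that differ."""
    raw, rendered = extract(raw_html), extract(rendered_html)
    return {key: (raw[key], rendered[key]) for key in raw if raw[key] != rendered[key]}


# Invented sample pages: an app shell and its rendered result.
raw_html = (
    '<html><head><title>Loading...</title>'
    '<meta name="robots" content="noindex"></head>'
    '<body><div id="root"></div></body></html>'
)
rendered_html = (
    '<html><head><title>Blue Widgets</title>'
    '<meta name="robots" content="index,follow">'
    '<link rel="canonical" href="https://example.com/widgets"></head>'
    '<body><a href="/widgets/blue">Blue</a></body></html>'
)

changes = diff_signals(raw_html, rendered_html)
for signal, (before, after) in changes.items():
    print(f"{signal}: raw={before!r} rendered={after!r}")
```

Every key that shows up in `changes` is a signal that only exists, or only has its final value, after JavaScript runs.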

Don’t Browse with JavaScript Disabled (for Google SEO)

Some guides recommend browsing with JavaScript turned off to see what Google sees. This is wrong for Google SEO. Google renders JavaScript, so the JavaScript-off view is not what Google sees.

However—and this is the important nuance—browsing with JavaScript disabled is exactly what you should do to check AI crawler visibility. Since most AI crawlers don’t render JavaScript, the JavaScript-off view approximates what they see. Use it for AI search optimization, not for Google optimization.
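You can approximate the same check in a script by looking for your key phrases in the raw HTML text, ignoring anything inside `<script>` and `<style>` tags. The following is a rough sketch under that assumption, using only Python’s standard library. The helper names `raw_text` and `missing_phrases`, the sample pages, and the phrases are all invented for illustration, and real AI crawlers may extract text differently.

```python
from html.parser import HTMLParser


class RawTextCollector(HTMLParser):
    """Collects text a non-rendering crawler could read from raw HTML."""

    SKIPPED_TAGS = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def raw_text(html):
    """Return the text outside script/style/template, whitespace-normalized."""
    parser = RawTextCollector()
    parser.feed(html)
    parser.close()
    return " ".join(" ".join(parser.parts).split())


def missing_phrases(html, phrases):
    """List the phrases that do not appear in the raw HTML text."""
    text = raw_text(html).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]


# Invented sample pages: a client-side app shell and a server-rendered page.
app_shell = (
    '<html><body><div id="root"></div>'
    '<script>render("Blue widgets from $9")</script></body></html>'
)
ssr_page = "<html><body><h1>Blue widgets</h1><p>Prices from $9</p></body></html>"
phrases = ["Blue widgets", "from $9"]

print("app shell is missing:", missing_phrases(app_shell, phrases))
print("SSR page is missing:", missing_phrases(ssr_page, phrases))
```

To run it on a real page, pass in the response body from any HTTP client. A phrase reported as missing is content that only exists after JavaScript runs.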

Don’t Rely on Google Cache

Google retired its public cache in 2024, so this mostly matters when you follow older guides that still recommend it. The cache showed the raw HTML snapshot of your page, and your browser then fired the JavaScript referenced in that HTML. Resources often failed to load because of Cross-Origin Resource Sharing (CORS) policies: the cache was hosted on webcache.googleusercontent.com, and a site’s CORS policy could block resource requests from that domain.

The result was a broken-looking page that didn’t represent what Google actually saw when it rendered the page. Cache was never a reliable debugging tool for JavaScript SEO; use the URL Inspection tool instead.

How Google Processes Pages with JavaScript

Understanding Google’s rendering pipeline helps you diagnose issues and prioritize fixes. Here’s how the process works step by step.

Step 1: Crawling

Google’s crawler sends a GET request to your server and receives the raw HTML response, along with HTTP headers (status code, cache headers, etc.). This initial response is what Google processes first.

The crawler runs primarily with a mobile user-agent since Google is on mobile-first indexing. It mostly crawls from Mountain View, California, but also does some crawling from other locations for locale-adaptive pages.

Google has documented a 15 MB size limit on HTML files, applied to the uncompressed data. Content past that point is cut off and not considered for indexing.
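A quick way to see how close a page is to that limit is to measure the decoded response body. This is a small sketch, not a definitive check: it reads “15 MB” as 15 × 1024 × 1024 bytes, which is an assumption, and `html_size_report` is a hypothetical helper name.

```python
# Assumption: "15 MB" is interpreted as 15 MiB; the exact byte count may differ.
HTML_LIMIT_BYTES = 15 * 1024 * 1024


def html_size_report(body: bytes, limit: int = HTML_LIMIT_BYTES) -> dict:
    """Summarize an uncompressed HTML body against a size limit."""
    size = len(body)
    return {
        "bytes": size,
        "share_of_limit": size / limit,
        "over_limit": size > limit,
    }


# Invented sample body; in practice, pass the decompressed response bytes.
sample = b"<html><body>" + b"x" * 1000 + b"</body></html>"
report = html_size_report(sample)
print(f"{report['bytes']} bytes, {report['share_of_limit']:.4%} of the limit")
```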

Step 2: Processing

After crawling, Google does several things with the raw HTML:

Extracts links. Google pulls out all links to other pages and resources (CSS, JavaScript files). These get added to the crawl queue for future crawling.

Caches everything. HTML pages, JavaScript files, CSS files—all get aggressively cached. Google ignores your cache headers and fetches new copies on its own schedule.

Checks for duplicate content. The raw HTML gets compared against other pages. If multiple pages have nearly identical raw HTML (common with app shell models), some may be deprioritized or skipped for rendering.

Applies restrictive directives. If Google finds a noindex tag in the raw HTML, the page stops here. It won’t be sent to the renderer.
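Because a noindex in the raw HTML stops the page before rendering, it is worth checking the initial response on its own. The sketch below looks for a robots meta tag and an `X-Robots-Tag` header using only Python’s standard library. The function names are invented for this example, the sample markup is made up, and it deliberately ignores user-agent-prefixed header values such as `googlebot: noindex`.

```python
from html.parser import HTMLParser


def split_directives(value):
    """Split a comma-separated robots value into lowercase tokens."""
    return {token.strip().lower() for token in value.split(",") if token.strip()}


class RobotsMeta(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        a = {k: (v or "") for k, v in attrs}
        if a.get("name", "").lower() in {"robots", "googlebot"}:
            self.directives |= split_directives(a.get("content", ""))


def robots_directives(raw_html, headers=None):
    """Gather robots directives from raw HTML and response headers."""
    parser = RobotsMeta()
    parser.feed(raw_html)
    parser.close()
    found = set(parser.directives)
    for name, value in (headers or {}).items():
        # Limitation: values with a user-agent prefix are not parsed here.
        if name.lower() == "x-robots-tag":
            found |= split_directives(value)
    return found


def has_noindex(raw_html, headers=None):
    """True if the initial response carries noindex (or its alias, none)."""
    found = robots_directives(raw_html, headers)
    return "noindex" in found or "none" in found


# Invented sample responses.
blocked = '<head><meta name="robots" content="noindex, follow"></head>'
allowed = '<head><meta name="robots" content="index, follow"></head>'

print(has_noindex(blocked))                               # meta tag blocks indexing
print(has_noindex(allowed))                               # nothing restrictive found
print(has_noindex(allowed, {"X-Robots-Tag": "noindex"}))  # header blocks indexing
```

If this returns true for a page you want indexed, removing the directive with JavaScript later will not help, since the page never reaches the renderer.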

Step 3: Render Queue

Pages that pass the processing stage go into the render queue. Google has found that the median time between crawling and rendering is about five seconds, with the 90th percentile being minutes. So the delay between crawling and rendering is not a concern for most sites.

Not all pages get rendered. Pages with noindex tags skip rendering. Pages that Google determines are low-quality based on other signals may also be skipped.

Step 4: Rendering

Google’s renderer uses a headless Chrome browser running the latest version (it’s “evergreen”). It takes the cached HTML, JavaScript, and CSS files and renders the page to see the final DOM.

Important details about the renderer:

Cached resources. The renderer uses cached versions of resources, not live versions. The exception is XHR (API) requests, which are made live. This means if you update a JavaScript file but Google hasn’t recrawled it yet, the renderer uses the old version.

No five-second timeout. The common myth that Google only waits five seconds is false. The renderer doesn’t make live resource requests (except XHR), so there’s nothing to “wait” for. It runs JavaScript against cached files, monitors the browser’s event loop, and waits until all actions complete. It has safeguards for infinite loops but no fixed timeout.

No clicking or scrolling. The renderer doesn’t interact with the page. But it does resize the viewport to see content that would normally be below the fold. On mobile, it extends from 411×731 pixels to 411×12,140 pixels. On desktop, from 1,024×768 to 1,024×9,307.

No pixel painting. Google doesn’t actually render the visual pixels. It processes the DOM structure, layout, and text content without generating the final visual output. This saves significant resources.

Step 5: Indexing

After rendering, the final DOM is processed for indexing. Text content, links, metadata, and other SEO-relevant elements from the rendered page are combined with what was already extracted from the raw HTML. The indexed version becomes what Google uses for ranking and displaying in search results.

JavaScript Rendering Options Compared

Choosing the right rendering strategy determines whether your content is accessible to Google, AI crawlers, and users. Here’s how the main options compare.

Client-Side Rendering (CSR)

The browser does all the rendering. The server sends a minimal HTML shell, and JavaScript builds the entire page in the user’s browser.

Pros: Simple to deploy, great for interactive apps. Cons: Content invisible to AI crawlers. Google can render it but relies on its rendering queue. Worst option for SEO and AI search.

Server-Side Rendering (SSR)

The server renders the HTML for each request. The browser receives a complete page, then JavaScript hydrates it for interactivity.

Pros: Full content visible to all crawlers (Google and AI). Dynamic content support. Cons: Higher server load. Slightly slower Time to First Byte (TTFB) since the server does rendering work per request.

Static Site Generation (SSG)

Pages are pre-rendered at build time. The server delivers pre-built HTML files.

Pros: Fastest option. Full content visible to all crawlers. Low server load. Cons: Not suitable for highly dynamic or personalized content. Build times increase with page count.

Incremental Static Regeneration (ISR)

A hybrid of SSG and SSR. Pages are pre-built but can be regenerated in the background when data changes.

Pros: Fast delivery with fresh content. Full content visible to all crawlers. Cons: Slight complexity in configuration. May serve stale content briefly during regeneration.

Dynamic Rendering

Different content is served to different user-agents. Crawlers get a pre-rendered version; users get the client-side rendered version.

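Pros: Can fix crawler visibility without changing the user-facing architecture. Cons: Serving different HTML by user-agent resembles cloaking, although Google does not treat it as cloaking when crawlers and users get equivalent content. Adds complexity. Google now describes it as a workaround rather than a long-term solution and recommends server-side rendering, static rendering, or hydration instead. Doesn’t solve for AI crawlers unless you specifically target their user-agents.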
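To show what “targeting their user-agents” involves, here is a minimal sketch of the user-agent check a dynamic rendering setup would need. It is an illustration of the moving parts, not a recommendation to adopt dynamic rendering. The token list is an assumption based on names the vendors have published and is certainly incomplete, so verify it against each vendor’s current documentation. The function names and the sample user-agent strings are invented.

```python
# Assumption: illustrative, incomplete list of AI crawler user-agent tokens.
AI_CRAWLER_TOKENS = (
    "GPTBot",
    "OAI-SearchBot",
    "ChatGPT-User",
    "ClaudeBot",
    "PerplexityBot",
)


def is_ai_crawler(user_agent: str) -> bool:
    """True if the user-agent string contains a known AI crawler token."""
    ua = user_agent.lower()
    return any(token.lower() in ua for token in AI_CRAWLER_TOKENS)


def choose_variant(user_agent: str) -> str:
    """Pick which version of the page a dynamic rendering setup would serve."""
    if is_ai_crawler(user_agent) or "googlebot" in user_agent.lower():
        return "prerendered"
    return "client-side"


# Invented sample user-agent strings.
samples = [
    "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36",
]
for ua in samples:
    print(choose_variant(ua), "<-", ua)
```

The maintenance burden is visible even in this small example: every new crawler means another token to track, which is one reason SSR or SSG is the simpler long-term choice.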

Bottom line: If you’re building a new JavaScript site or migrating an existing one, use SSR or SSG. Both Next.js and Nuxt make this straightforward. Your content will be visible to Google, AI crawlers, and users from the initial HTML response.

Auditing a JavaScript Site: A Step-by-Step Process

Here’s the workflow for systematically auditing a JavaScript site for both traditional SEO and AI search issues.

Step 1: Identify the tech stack. Use a browser extension like Wappalyzer or BuiltWith to identify the JavaScript framework, rendering method, and CMS. This tells you what kinds of issues to look for.

![[Screenshot: Wappalyzer browser extension showing detected technologies on a website including React and Next.js]](https://www.datocms-assets.com/164164/1774992414-blobid16.png)

Step 2: Check the raw HTML. View the page source of several important pages. Look for your main content, title tags, meta descriptions, canonical tags, and internal links in the raw HTML. If they’re missing, you have a rendering dependency issue.

Step 3: Compare raw vs. rendered. Use Chrome DevTools to compare View Source and Inspect. Note any differences in content, metadata, links, or directives. Every difference represents a dependency on JavaScript rendering.

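Step 4: Test with JavaScript disabled. This tells you what AI crawlers see. Disable JavaScript in Chrome (open DevTools, press Ctrl+Shift+P or Cmd+Shift+P, and run “Disable JavaScript”, or block it under Settings > Privacy and security > Site settings > JavaScript) and browse your key pages. If critical content disappears, prioritize SSR or SSG migration.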

Step 5: Run a crawl with JavaScript rendering. Use a crawler that supports JavaScript rendering to crawl your entire site. Look for duplicate titles, missing metadata, broken internal links, missing alt text, and structured data errors.

Step 6: Check Google Search Console. Use the URL Inspection tool on your most important pages. Verify the rendered HTML contains your content. Check for any flagged issues.

Step 7: Audit AI search visibility. Use Analyze AI to check whether your pages appear in AI-generated answers. If your Google rankings are strong but AI visibility is low, a JavaScript rendering dependency is a likely cause.

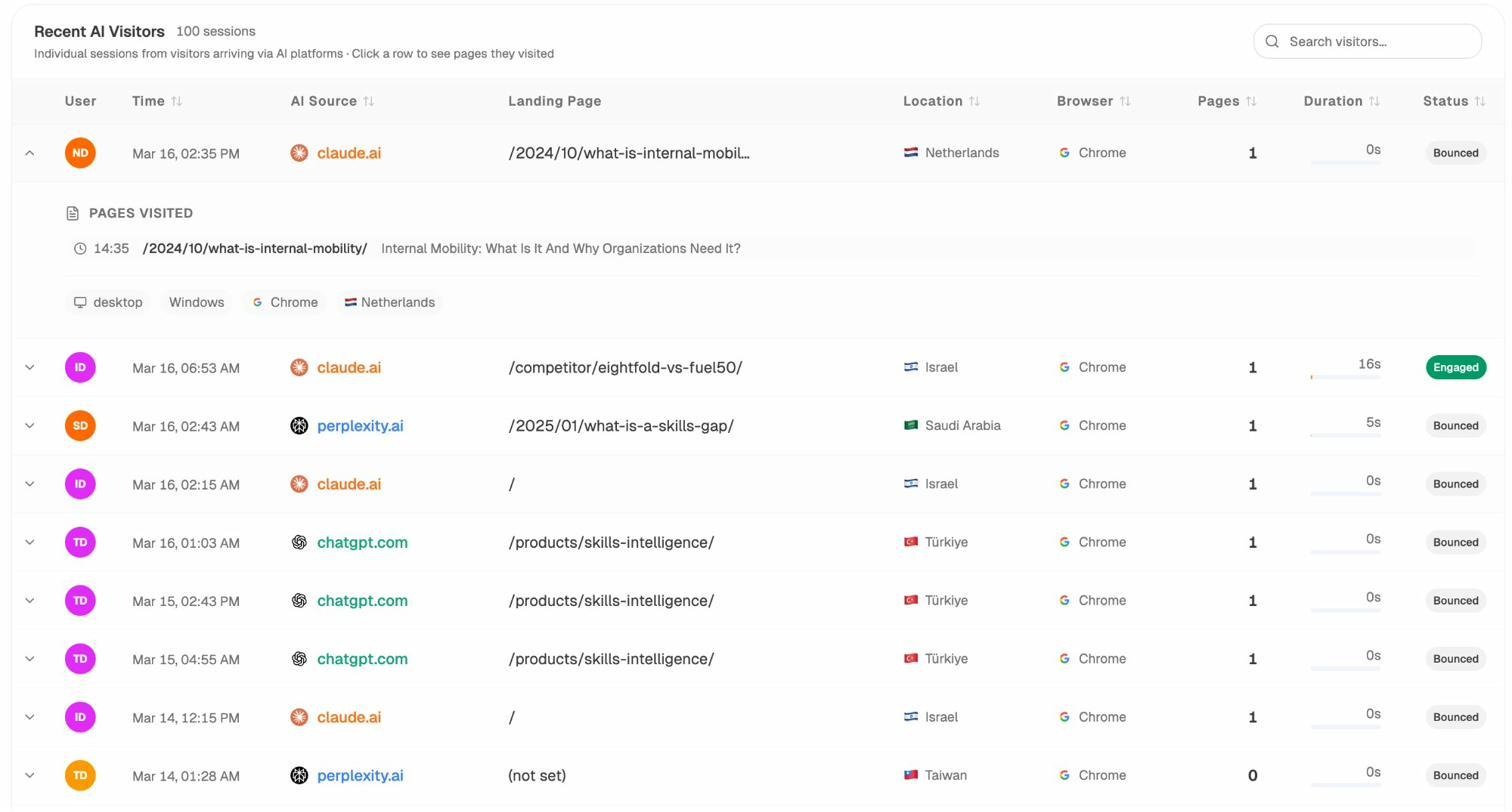

The Recent AI Visitors view shows individual sessions from AI platforms. You can see which AI source referred each visitor, which page they landed on, and whether they engaged or bounced. If your JavaScript site is properly optimized for AI crawlers, you should see sessions from multiple AI platforms.

Step 8: Fix and monitor. Implement fixes starting with the highest-impact issues (usually rendering method and critical metadata). Resubmit pages to Google Search Console and monitor both Google rankings and AI search visibility to confirm improvements.

Final Thoughts

JavaScript SEO has always been about making sure search engines can see your content despite the added complexity of client-side rendering. The rise of AI search adds urgency to this work because AI crawlers are less forgiving than Google: most of them don’t render JavaScript at all.

The good news is that the fix for both problems is the same: serve complete HTML from the server. SSR and SSG solve for Google and AI crawlers simultaneously. If you’re running a client-side rendered site, migrating to server-side rendering is the single highest-impact change you can make for search visibility across all channels.

Start with the audit process above. Check what Google sees. Check what AI crawlers see. Fix the gaps. Then track your results across both traditional and AI search to make sure the fixes are working.

JavaScript is not the enemy. Bad JavaScript implementation is. Get the rendering right, and the rest is standard SEO.

Useful resources:

- JavaScript SEO Basics – Google’s official documentation
- Web Rendering Service – How Google’s renderer works
- Rendering on the Web – Google’s guide to rendering strategies
- Analyze AI Free Tools – Check your site’s AI search visibility
- Broken Link Checker – Find broken links on your JavaScript site
- Website Traffic Checker – See traffic estimates for any site
- Keyword Generator – Find keyword opportunities for your content
- SERP Checker – Check who ranks for your target keywords
- Website Authority Checker – Check domain authority scores

Ernest

Ibrahim