
In this article, you’ll learn what LLM visibility actually is, why it has become a measurable growth lever in 2026, and how to move the needle on getting your brand mentioned and cited inside ChatGPT, Perplexity, Google AI Overviews, Google AI Mode, Claude, and Copilot. You’ll see the page types LLMs prefer, the writing habits that make your content quotable, the silent mistakes that keep you out of AI answers, and how to track all of it week over week.


What is LLM visibility?

LLM visibility is how often your brand shows up inside the answers and citations generated by large language models. That includes ChatGPT, Perplexity, Claude, Copilot, Google’s AI Overviews, and Google AI Mode.

When someone asks ChatGPT for “the best CRM for a 20-person sales team” and your product is named in the response, that is an LLM mention. When Perplexity links to your blog post as a source, that is an LLM citation. Mentions and citations are the two units that make up LLM visibility.

It is not the same as ranking on Google. Rankings tell you where you sit on a list of blue links. LLM visibility tells you whether you appear inside the generated answer, regardless of which page would have ranked first.

Some people call this generative engine optimization (GEO) or answer engine optimization (AEO). The labels overlap. The job is the same. Get your brand named, sourced, and recommended inside AI-generated responses for the queries that matter to your business. We have a longer breakdown in our guide on what generative engine optimization is if you want to go deeper on terminology.

![Screenshot of a ChatGPT or Perplexity response that names four to five brands when asked for “best [tool category]” recommendations](https://www.datocms-assets.com/164164/1778114097-blobid1.png)

Why LLM visibility matters now

Buyer behavior moved fast. According to SparkToro, more than 20% of Americans now use AI tools at least 10 times a month, and nearly 40% use them at least once a month. AI assistants are no longer a side experiment. They are a daily research surface for a real share of your audience.

The conversion economics are worth paying attention to. Several B2B teams have reported that visitors arriving from AI sources convert at multiples of what traditional search visitors do. They land on your site already pre-qualified, having read a comparison or recommendation in the AI response itself. Ahrefs has shared that AI search visitors sign up at 23 times the rate of organic search visitors.

There is a second reason to care. AI assistants compress an entire research journey into one answer. A buyer who would have read three blog posts, two G2 listings, and a Reddit thread now reads a single paragraph. If your brand is not in that paragraph, you do not get a second chance with the same buyer. People also remember the names they see inside an answer and look you up later, which means LLM visibility creates pipeline even when no one clicks the citation.

How LLM visibility differs from SEO visibility (and why both still matter)

Traditional search visibility is positional. You measure it through traffic, keyword rankings, and SERP position. The higher you rank, the more clicks you get.

LLM visibility is presence-based. You measure it by the percentage of AI answers that include your brand for a given prompt, the position you appear in when listed, and whether you are cited as a source. There is often no click. The click has been replaced by a recommendation.

Here is the practical difference between the two channels.

| | Traditional SEO | LLM visibility |
|---|---|---|
| Surface | Google, Bing | ChatGPT, Perplexity, Claude, AI Overviews, AI Mode |
| Unit of measurement | Position 1–10 | Mention, citation, share of voice |
| What the user sees | Ten blue links | One synthesized answer |
| Outcome | Click to your site | Brand named, sometimes a click |
| Optimization input | Keywords, links, on-page | Brand authority, entity coverage, content depth, mentions across the web |

Now here is the part most “AI search” articles get wrong. They tell you SEO is dead. It isn’t.

When Ahrefs analyzed pages cited inside AI Overviews, they found a positive correlation between ranking high in Google and being cited in the AI answer. Grow and Convert ran a similar study and found their clients were mentioned 67% of the time in ChatGPT and 77% of the time in Perplexity for keywords where they ranked on page one of Google.

The signal is clear. LLMs lean heavily on existing search indexes through retrieval-augmented generation (RAG). If you are already winning on Google, you have a head start in AI answers. If you are not, you have two channels to fix at the same time.

This is why we treat AI search as another organic channel that sits alongside SEO, not a replacement for it. SEO is the foundation. LLM visibility is the next layer built on top. Our guide to the 4 pillars of an effective SEO strategy for AI search walks through how to think about both together, and our GEO vs SEO breakdown covers the differences and overlaps in more detail.

How LLMs decide who to mention and cite

Two mechanisms shape every AI answer.

Training data. Models learn about your brand from text scraped during training. Wikipedia entries, Reddit threads, GitHub repos, podcast transcripts, news articles, product reviews, and forum posts all feed the model’s baseline understanding of who you are and what you do. New training runs are infrequent, and each model’s knowledge stops at a fixed cutoff date (September 2024 for GPT-5, January 2025 for Gemini 2.5 Pro), so this is a slow-moving lever.

Retrieval at query time. When a user asks a question that needs current information, the assistant searches a live index, pulls the top results, and writes its answer from that retrieved content. ChatGPT and Copilot use Bing. Gemini and AI Mode use Google. Perplexity uses its own index. This is RAG, and it is the fast-moving lever you can influence week to week.
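To make the retrieval step concrete, here is a deliberately simplified sketch of retrieve-then-generate. It is not how ChatGPT, Perplexity, or AI Mode work internally (real systems query a search engine and use learned ranking), and every function name here is hypothetical. What it does show is the core constraint: the model only sees the pages the retriever returns, so a page that never makes the top results can never be quoted.

```python
import re

def tokenize(text: str) -> list[str]:
    """Lowercase word tokens; a crude stand-in for a real search analyzer."""
    return re.findall(r"[a-z0-9]+", text.lower())

def score(query: str, document: str) -> int:
    """Toy relevance: how many distinct query terms occur in the document."""
    doc_terms = set(tokenize(document))
    return sum(term in doc_terms for term in set(tokenize(query)))

def retrieve(query: str, index: dict[str, str], k: int = 2) -> list[str]:
    """Return up to k URLs with a non-zero score, best first."""
    ranked = sorted(index, key=lambda url: score(query, index[url]), reverse=True)
    return [url for url in ranked[:k] if score(query, index[url]) > 0]

def build_prompt(query: str, index: dict[str, str], k: int = 2) -> str:
    """Assemble the only context the model gets to answer from, with numbered sources."""
    sources = retrieve(query, index, k)
    context = "\n".join(
        f"[{i}] {url}: {index[url]}" for i, url in enumerate(sources, start=1)
    )
    return f"Answer using only these sources and cite them:\n{context}\n\nQuestion: {query}"
```

With an index of three pages and `k=2`, the lowest-scoring page is simply absent from the prompt, which is the retrieval-side version of "if your brand is not in that paragraph, you do not get a second chance."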

Every tactic below maps to one of these two mechanisms. The training-data lever is about long-term entity building. The retrieval lever is about making sure the right pages on your site are findable, indexable, and quotable.

How to optimize for LLM visibility

1. Build brand mentions across the web

This is the highest-leverage move on the list. The more often your brand is mentioned in relevant context across the public web, the more confidently a model can recommend you.

Ahrefs studied 75,000 brands and found that the strongest correlation with appearing in AI Overviews was the count of brand web mentions. Not links. Mentions. Your brand name appearing in a sentence about your category, on pages that LLMs trust.

Where to focus first.

- Reddit and Quora. User-generated content is overweighted by every major LLM. Participate honestly, answer questions in your category, and let your team be visible.
- G2, Capterra, TrustRadius. These get cited constantly when LLMs are asked for “best of” lists. Get your existing customers to leave detailed reviews.
- YouTube. Transcripts are searchable, and LLMs cite YouTube descriptions and timestamps frequently.
- Industry publications and podcasts. Guest posts, interviews, and contributed articles plant your name on domains the models already trust.

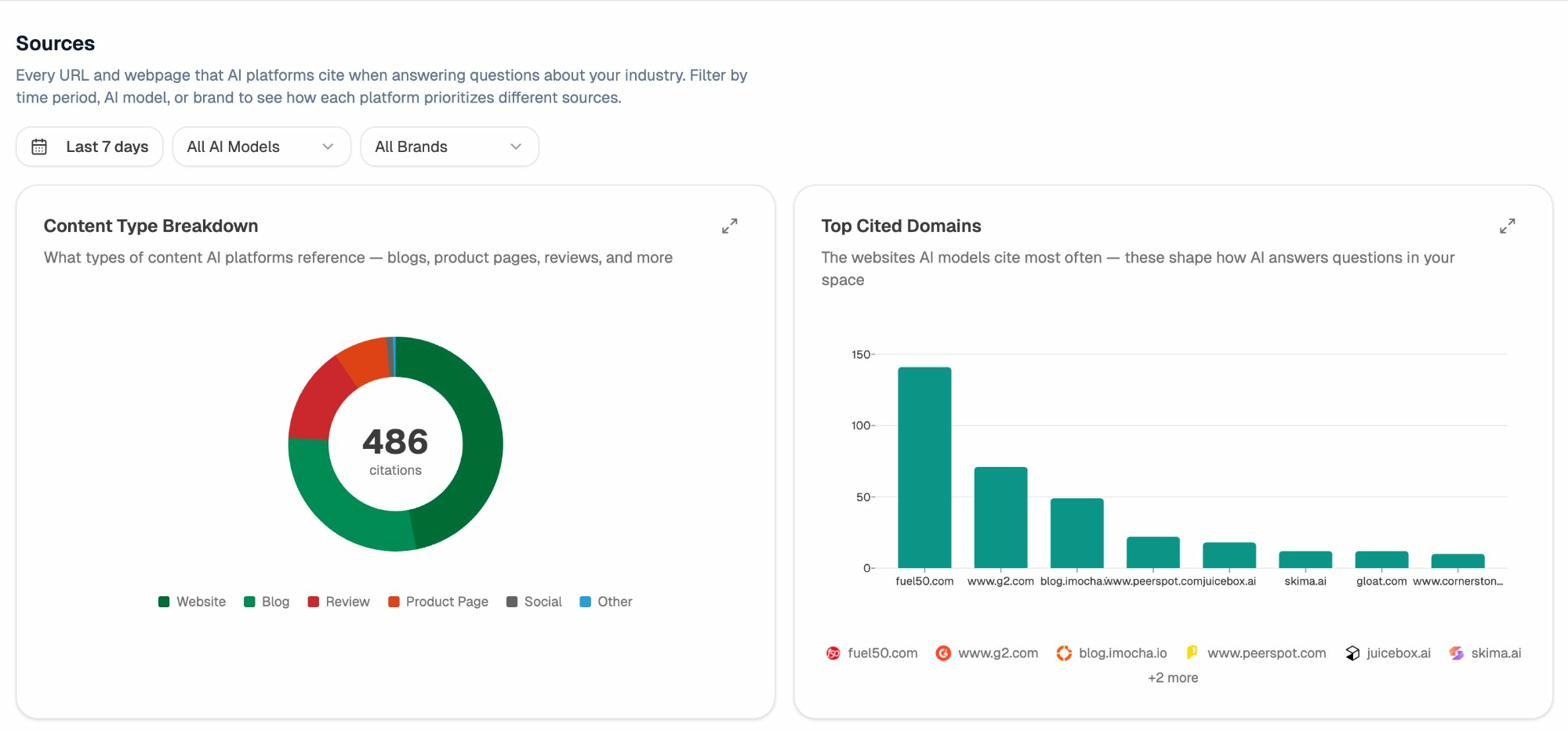

To find which domains you should be present on for your topics, look at the Sources report inside Analyze AI. It shows every URL and domain that AI platforms cite when answering questions about your industry, ranked by frequency.

The domains at the top of “Top Cited Domains” are your outreach target list. They are the sites most likely to influence which brands get named in AI answers in your category. For more on building external mentions, see our guide on off-page SEO strategies that work with AI tracking.
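If you collect cited URLs yourself (for example, by saving the sources from answers you run by hand), the "ranked by frequency" idea behind a top-cited-domains list is easy to reproduce. A minimal sketch, assuming you already have a plain list of citation URLs; `top_cited_domains` is a hypothetical helper, not how Analyze AI builds its report.

```python
from collections import Counter
from urllib.parse import urlparse

def top_cited_domains(citation_urls: list[str], n: int = 10) -> list[tuple[str, int]]:
    """Count citations per domain and return the n most frequent, highest first."""
    domains = []
    for url in citation_urls:
        host = urlparse(url).hostname or ""  # hostname is already lowercased
        # Treat www.example.com and example.com as the same domain.
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.append(host)
    # Ties keep the order in which domains were first seen.
    return Counter(domains).most_common(n)
```

Collapsing `www.` is a deliberate simplification; if you care about separate subdomains (for example `old.reddit.com`), drop that step.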

2. Plug your entity gaps

LLMs build a model of your brand by reading the words that appear near your brand name. These are called co-mentions. If you sell project management software but you are never mentioned alongside terms like “Gantt chart,” “sprint planning,” or “team capacity,” LLMs will not connect you to those subtopics.
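To check which category terms actually sit near your brand in a body of text you control (saved AI answers, forum threads, reviews), you can count co-occurrences inside a fixed word window. A rough sketch, assuming a 10-word window and a hand-picked term list; both are illustrative choices rather than a standard, and the function names are hypothetical.

```python
import re
from collections import Counter

def words_of(text: str) -> list[str]:
    """Lowercase word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def find_phrase(words: list[str], phrase: list[str]) -> list[int]:
    """Start positions where a (possibly multi-word) phrase occurs."""
    n = len(phrase)
    return [i for i in range(len(words) - n + 1) if words[i:i + n] == phrase]

def co_mentions(texts: list[str], brand: str, terms: list[str], window: int = 10) -> Counter:
    """Count the texts in which each term starts within `window` words of the brand."""
    counts = Counter()
    brand_words = words_of(brand)
    for text in texts:
        words = words_of(text)
        brand_positions = find_phrase(words, brand_words)
        for term in terms:
            term_positions = find_phrase(words, words_of(term))
            if any(abs(b - t) <= window for b in brand_positions for t in term_positions):
                counts[term] += 1
    return counts
```

Terms that come back with a count of zero are candidate entity gaps: topics your category is discussed with, but never next to your name.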

The fastest way to find these gaps is to look at where your competitors appear in AI answers and you do not.
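If you keep your own log of answers per prompt, that comparison reduces to a filter: prompts where a competitor is named and you are not. A minimal sketch, assuming a hypothetical dict mapping each prompt to its answer text; whole-word, case-insensitive name matching stands in for real entity detection and will miss misspellings or product nicknames.

```python
import re

def brands_named(answer: str, brands: list[str]) -> set[str]:
    """Brands whose names appear as whole words in the answer (case-insensitive)."""
    return {b for b in brands if re.search(rf"\b{re.escape(b)}\b", answer, re.IGNORECASE)}

def mention_gaps(answers: dict[str, str], brand: str, competitors: list[str]) -> dict[str, list[str]]:
    """Map each prompt where you are absent to the competitors that were named."""
    gaps = {}
    for prompt, answer in answers.items():
        named = brands_named(answer, [brand, *competitors])
        if brand in named:
            continue
        rivals = [c for c in competitors if c in named]
        if rivals:
            gaps[prompt] = rivals
    return gaps
```

Prompts with no brands at all are left out on purpose; they are not competitive gaps, just unclaimed territory.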

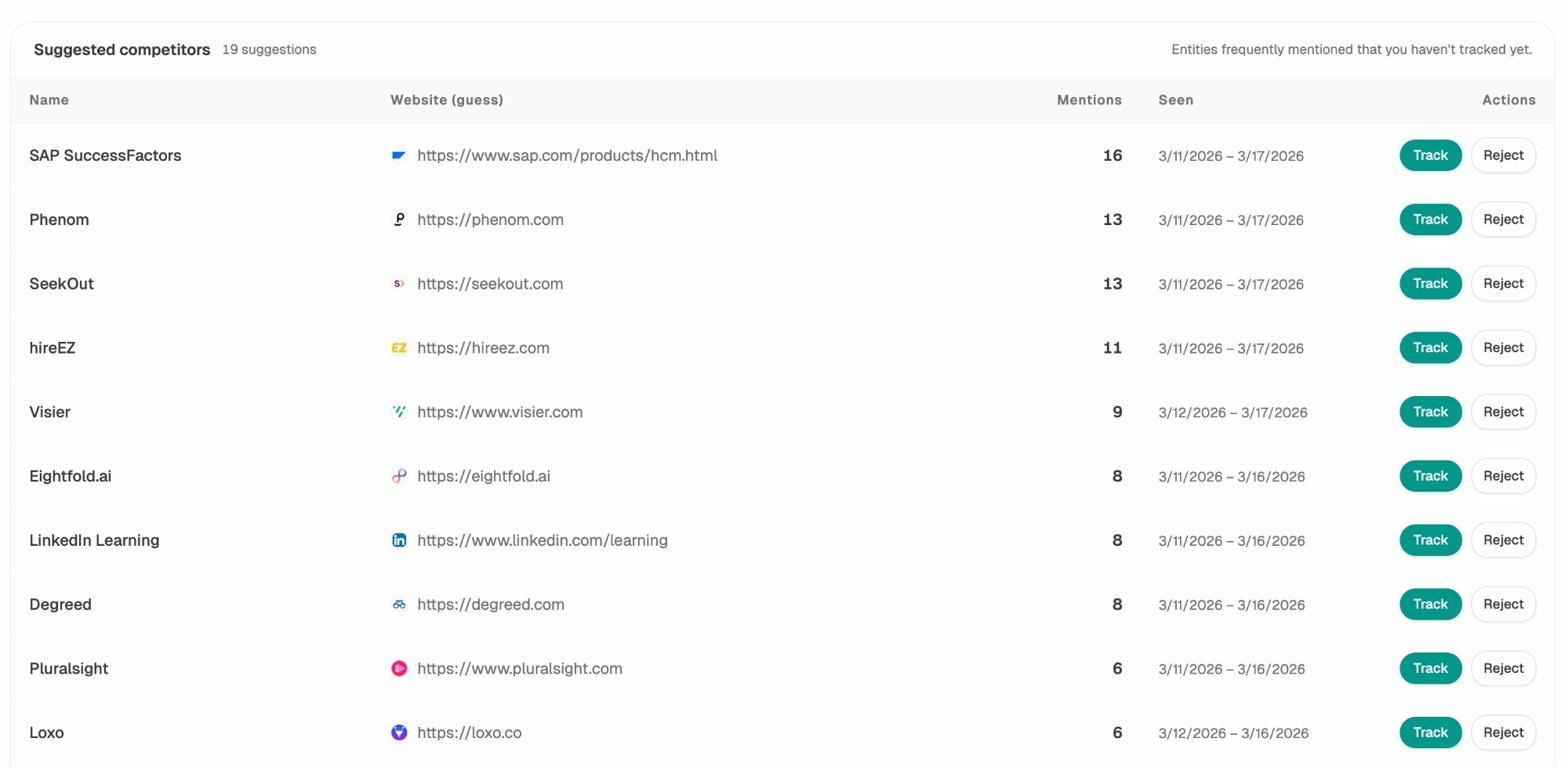

Inside Analyze AI’s Competitors view, the “Suggested competitors” panel surfaces brands that frequently appear in your tracked prompts but that you have not added to your tracking list yet. These are the entities the models are pairing with your category.

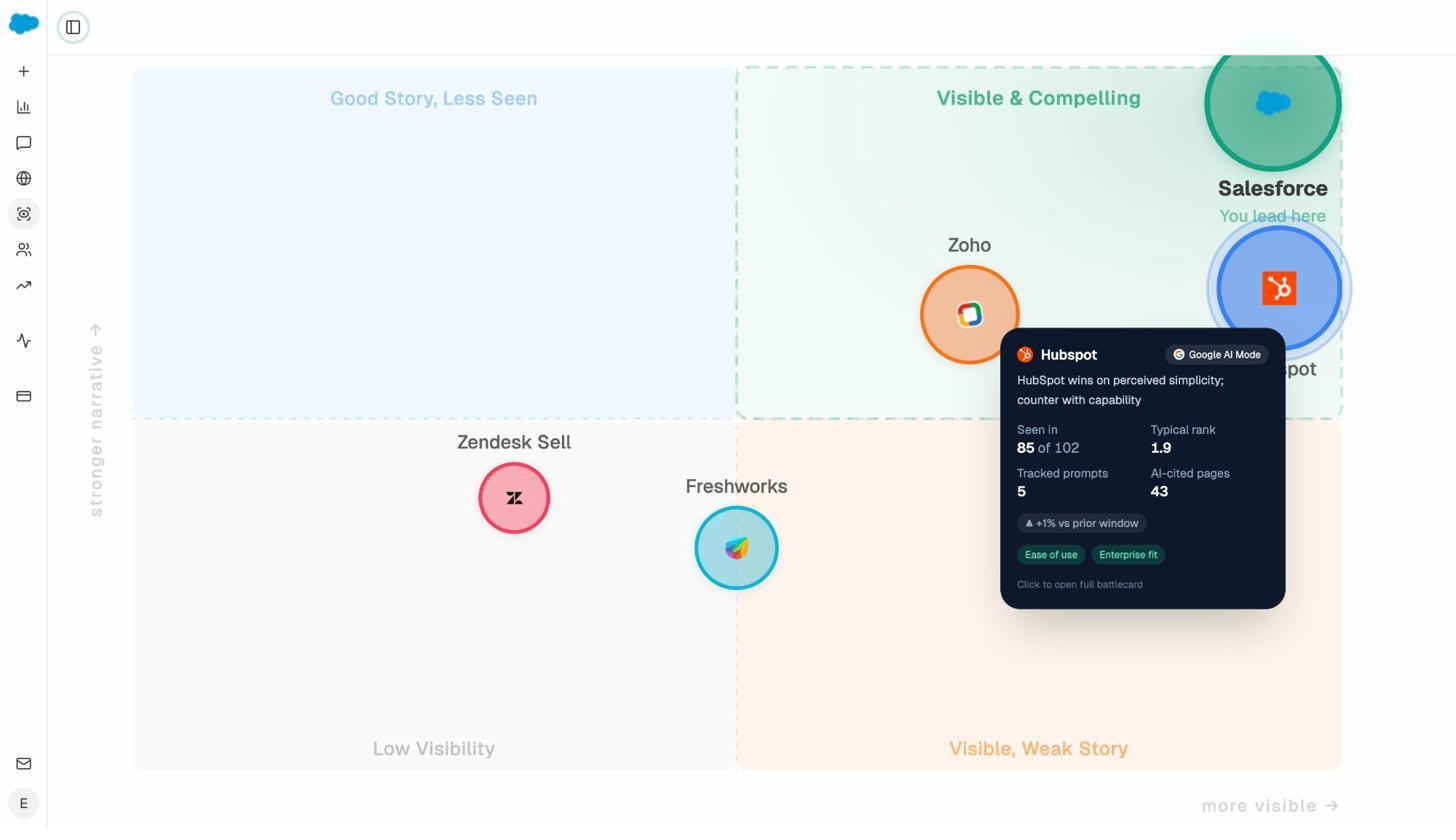

From there, the Perception Map plots each brand on two axes, narrative strength and visibility. Brands in the “Visible & Compelling” quadrant are your hardest competition. Brands in the “Good Story, Less Seen” quadrant are vulnerable. The quadrants you are missing from are the gaps to fill.

Once you have identified which topics or angles you are missing, the playbook is straightforward. Publish on-site content for each gap, and earn off-site mentions on those same topics from cited domains. Our walkthrough of SEO competitor analysis with AI search rivals has a fuller process.

3. Optimize the page types LLMs prefer

Not every page on your site has the same shot at being cited. When Ahrefs analyzed AI traffic across 35,000 websites, the most-cited URL patterns were predictable. Blog posts and guides. Comparison pages (“best”, “vs”, “alternatives”). Core pages (product, pricing, about, contact). Original research. PDFs. Video.
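To see how your own page inventory maps onto these patterns, you can bucket your URLs by path. A rough sketch, assuming your slugs follow common conventions; the regex rules are hypothetical heuristics (not the rules Ahrefs used), they do not try to detect video, and anything unmatched falls into "other".

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Ordered rules: the first pattern that matches the URL path wins.
PAGE_TYPE_RULES = [
    ("pdf", re.compile(r"\.pdf$")),
    ("comparison", re.compile(r"(^|[/-])(best|vs|versus|alternatives?)([/-]|$)")),
    ("core", re.compile(r"^/(products?|pricing|about|contact)(/|$)")),
    ("research", re.compile(r"/(research|report|reports|study|survey)([/-]|$)")),
    ("blog_or_guide", re.compile(r"/(blog|guide|guides|learn)(/|$)")),
]

def classify(url: str) -> str:
    """Assign a URL to the first matching page type, else 'other'."""
    path = urlparse(url).path.lower() or "/"
    for page_type, pattern in PAGE_TYPE_RULES:
        if pattern.search(path):
            return page_type
    return "other"

def inventory(urls: list[str]) -> Counter:
    """Count how many URLs fall into each page type."""
    return Counter(classify(url) for url in urls)
```

Feeding it the URLs from your sitemap gives a quick view of which citation-friendly formats you have few or none of.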

If you are starting from a thin page inventory, prioritize these formats.

- Comparison content for your category and your closest competitors. AI answers love multi-option lists.
- Original research with proprietary data. Models prefer to cite primary sources.
- Definitive guides for the high-intent keywords you already care about. Going deep wins both Google ranking and LLM citation.
- Use-case and integration pages. When someone asks a model “best X for Y,” your dedicated page for “Y” is what gets retrieved.

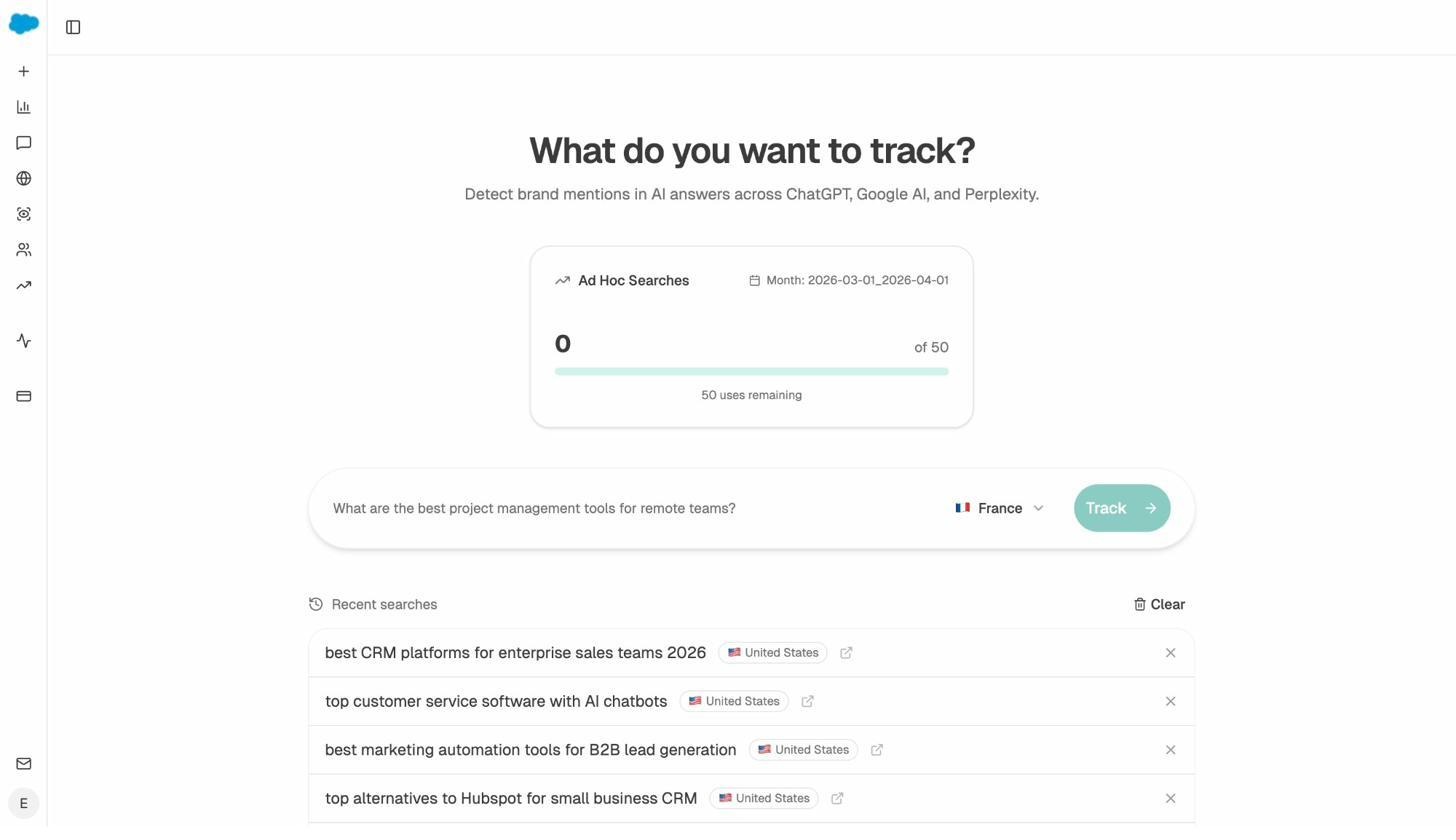

To find the comparison and “best of” prompts you should be targeting before you write, run them through Analyze AI’s Ad Hoc Prompt Searches. Type the prompt, choose a country, and see in real time which brands get named and which sources get cited.

If a prompt returns competitor pages but not yours, you have just identified a content gap worth filling. For more on the SEO side of this same workflow, our free keyword generator tool and SERP checker help you stress-test the ideas before publishing.

4. Write content the way LLMs read it

LLMs are not reading your post for pleasure. They are scanning for confident, retrievable claims they can quote without ambiguity. A few habits make your content more quotable.

- Lead with the answer (bottom line up front). Put the main claim in the first sentence of the section, not the last. Models often pull the opening sentence as the answer snippet.
- Write declarative sentences. “X is the leading approach for Y” beats “X might be considered one of many approaches that some practitioners use for Y.” Confidence is retrievable. Hedging gets skipped.
- Keep sentence structure simple: subject, verb, object. Save the rhetorical wind-ups for thought leadership posts that you do not need cited.
- Pack each section with related entities. If your topic is keyword research, mention search volume, intent, difficulty, SERP analysis, clustering, and the tools used for each. Entity-rich passages get retrieved more often.
- Restate the topic in long pieces. In a 4,000-word guide, remind the reader (and the model) what the document is about every few sections so retrieval can find the relevant chunk.
- Add original research and proprietary data. A quotable statistic from your own dataset is a magnet for citations.
- Keep content fresh. Ahrefs analyzed 17 million citations and found that LLMs prefer to cite content newer than what typically ranks on Google. Refresh the pages you most want cited every few months.
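The "restate the topic" advice matters because retrieval systems typically split long pages into chunks and score each chunk on its own. A toy sketch, assuming fixed-size word chunks and simple term-overlap scoring (real systems vary and usually rely on embeddings, which soften but do not remove the effect); the function names are hypothetical. It shows a later section that never names its topic scoring lower than the same section with the topic restated.

```python
import re

def chunk_words(text: str, size: int = 50) -> list[str]:
    """Split text into consecutive chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]

def overlap(query: str, chunk: str) -> int:
    """Number of distinct query terms that also appear in the chunk."""
    terms = set(re.findall(r"[a-z0-9]+", query.lower()))
    chunk_terms = set(re.findall(r"[a-z0-9]+", chunk.lower()))
    return len(terms & chunk_terms)

def best_chunk(text: str, query: str, size: int = 50) -> tuple[int, str]:
    """Return (score, chunk) for the highest-scoring chunk; ties go to the earlier chunk."""
    return max(
        ((overlap(query, c), c) for c in chunk_words(text, size)),
        key=lambda pair: pair[0],
    )
```

With five-word chunks, "Then check difficulty and volume." matches one term of "keyword research difficulty", while "Keyword research difficulty comes next." matches all three.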

For a deeper breakdown of how to structure content for both Google and AI, see our 2026 SEO content strategy.
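To decide which pages are due for a refresh, one option is to read the `<lastmod>` dates from your XML sitemap and flag anything older than your refresh cycle. A sketch, assuming your sitemap follows the sitemaps.org protocol and your CMS keeps `lastmod` accurate; the 90-day default is an illustrative stand-in for "every few months", and `stale_urls` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET
from datetime import date, timedelta

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def stale_urls(sitemap_xml: str, today: date, max_age_days: int = 90) -> list[str]:
    """URLs whose <lastmod> is older than max_age_days; a missing lastmod counts as stale."""
    root = ET.fromstring(sitemap_xml)
    cutoff = today - timedelta(days=max_age_days)
    stale = []
    for url in root.findall("sm:url", NS):
        loc = url.findtext("sm:loc", default="", namespaces=NS).strip()
        lastmod = url.findtext("sm:lastmod", default="", namespaces=NS).strip()
        # lastmod may be a bare date or a full W3C datetime; keep only the date part.
        modified = date.fromisoformat(lastmod[:10]) if lastmod else None
        if modified is None or modified < cutoff:
            stale.append(loc)
    return stale
```

Cross-reference the result with the pages you most want cited; there is little point refreshing a stale page no buyer prompt ever retrieves.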

5. Monitor and fix hallucinated URLs

LLMs hallucinate URLs. They send users to pages that do not exist on your site, often because the model expects you to have a page at that URL based on what it knows about you.

Ahrefs found that AI assistants send users to 404 pages 2.87 times more often than Google does. That is lost traffic and lost trust.

The fix is mechanical. Find the hallucinated URLs, then 301-redirect them to the closest existing page on your site. Here is how to do it with Analyze AI.

-

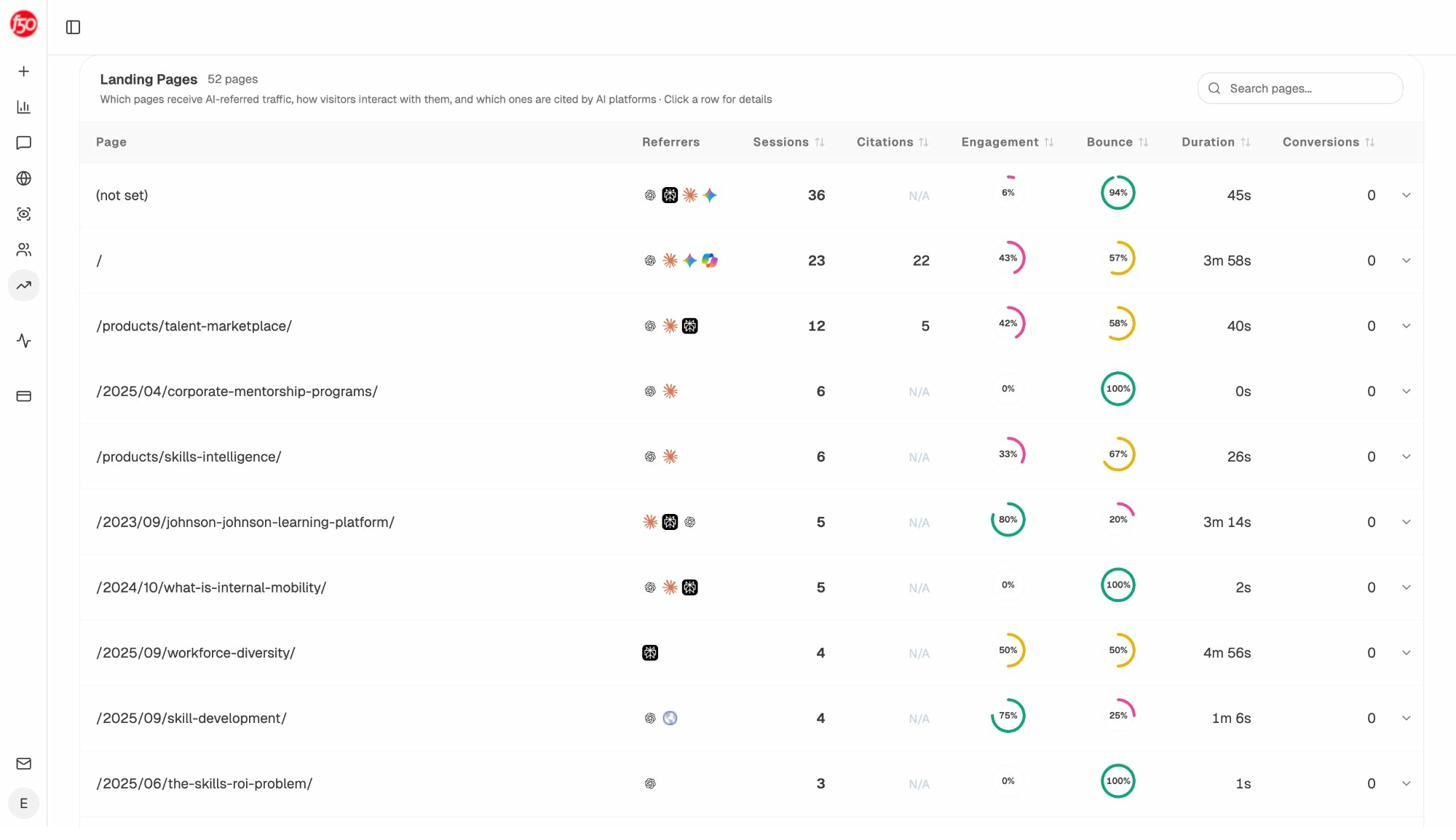

Open AI Traffic Analytics and review the Landing Pages report. Sort by sessions, then look for URLs with very short session times and 100% bounce rates.

-

Cross-check each suspect URL in your browser. If it returns a 404, add it to your redirect list.

-

In your CMS or hosting layer, set up a 301 redirect from each hallucinated URL to the most relevant live page.
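If you export the Landing Pages data, these steps can be scripted. A minimal sketch, assuming a hypothetical export with `path`, `avg_seconds`, and `bounce_rate` (as a 0 to 1 fraction) fields, a 5-second threshold you would tune, and nginx as your server. It uses `difflib` string similarity to suggest the closest live path, which is only a starting point: review every suggestion before deploying.

```python
import difflib
import urllib.error
import urllib.request

def suspect_paths(rows: list[dict], max_seconds: float = 5.0) -> list[str]:
    """Landing pages with very short sessions and a 100% bounce rate."""
    return [
        row["path"]
        for row in rows
        if row["avg_seconds"] <= max_seconds and row["bounce_rate"] >= 1.0
    ]

def is_404(url: str, timeout: float = 10.0) -> bool:
    """True if a HEAD request ends in HTTP 404. Network errors (URLError) propagate."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout):
            return False
    except urllib.error.HTTPError as err:
        return err.code == 404

def suggest_redirects(missing: list[str], live_paths: list[str], fallback: str = "/") -> dict[str, str]:
    """Map each missing path to the most similar live path, or to a fallback."""
    redirects = {}
    for path in missing:
        match = difflib.get_close_matches(path, live_paths, n=1, cutoff=0.6)
        redirects[path] = match[0] if match else fallback
    return redirects

def nginx_rules(redirects: dict[str, str]) -> str:
    """Render one exact-match nginx location per redirect, each returning a 301."""
    return "\n".join(
        f"location = {old} {{ return 301 {new}; }}" for old, new in redirects.items()
    )
```

A typical run filters the export with `suspect_paths`, confirms each candidate with `is_404` against your live domain, then passes the confirmed paths and your sitemap paths to `suggest_redirects`. Sending unmatched URLs to the homepage is a blunt default; a category or hub page is usually a better fallback.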

Run our free broken link checker at the same time to catch internal 404s that hurt both Google rankings and AI retrieval.

6. Strengthen your presence in novel training data sources

LLMs are trained on sources most SEOs have ignored for years. GitHub READMEs. Wikipedia. arXiv and PubMed papers. Patents. Books. Public datasets.

You cannot game these. You can make sure that wherever your brand legitimately appears, the information is current, accurate, and consistently named.

A few moves worth your time. If your product is open source or has a public SDK, write a thorough GitHub README and pin it. If your brand has a Wikipedia entry, keep it factual and well-sourced (and if it doesn’t and you meet notability requirements, this is worth pursuing through an experienced third party). If you publish research, syndicate it to arXiv, SSRN, or your industry’s preprint server. If you have a podcast or speak at conferences, get transcripts onto a public, indexable page. Each of these creates a stable reference the next training run can find.

7. Don’t hide behind JavaScript

Most AI crawlers do not render JavaScript yet. If your most important content (pricing, product descriptions, comparison tables, or your homepage hero) loads through client-side JavaScript, AI models cannot see it.

The check is simple. View the source of the page, not the rendered DOM. If your important content is not in the raw HTML, AI crawlers cannot read it either.

This will change as crawlers ramp up rendering, but for now, ship your high-priority content as server-rendered HTML.
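The view-source check can be scripted so you can run it across many pages at once. A minimal sketch using only Python's standard library: it fetches the HTML the server returns (no JavaScript runs), drops `<script>` and `<style>` contents, and reports which of your key phrases are missing. The phrases, user-agent string, and function names are placeholders, and some sites block non-browser clients, so a failed fetch is not proof of a rendering problem.

```python
import urllib.request
from html.parser import HTMLParser

class TextExtractor(HTMLParser):
    """Collect text from raw HTML, skipping <script> and <style> contents."""

    def __init__(self) -> None:
        super().__init__()
        self.parts: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)

def missing_phrases(raw_html: str, phrases: list[str]) -> list[str]:
    """Phrases that do not appear in the server-rendered text (case-insensitive)."""
    parser = TextExtractor()
    parser.feed(raw_html)
    parser.close()
    text = " ".join("".join(parser.parts).split()).lower()  # collapse whitespace
    return [p for p in phrases if p.lower() not in text]

def fetch_raw_html(url: str) -> str:
    """Download the page exactly as served, before any client-side rendering."""
    request = urllib.request.Request(url, headers={"User-Agent": "raw-html-check/0.1"})
    with urllib.request.urlopen(request, timeout=15) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

Usage would be `missing_phrases(fetch_raw_html("https://example.com/pricing"), ["Pro plan", "per seat"])`; any phrase returned is content an AI crawler that does not execute JavaScript would not see.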

8. Skip the AI spam playbook

It is now technically cheap to publish a hundred AI-generated blog posts a week. Don’t.

Three reasons. Google has been filtering low-effort content for two decades and is getting more aggressive about it, not less. AI platforms watch the same signals and will catch up quickly, especially as RAG becomes their primary retrieval method. And even when spam works for a quarter, the goal is not ranking; it is revenue. Spammy AI content has never built a brand worth recommending.

Spend your effort on a smaller number of pages with original research, real opinions, and proprietary data. Those are the pages that get cited in AI answers and that compound year after year.

How to track your LLM visibility

You cannot optimize what you cannot see. Tracking LLM visibility comes down to four questions. Which prompts in your category are worth tracking? How often are you mentioned, and at what position? Which of your pages are being cited? How much real traffic is AI sending to your site?

Here is how to answer each one.

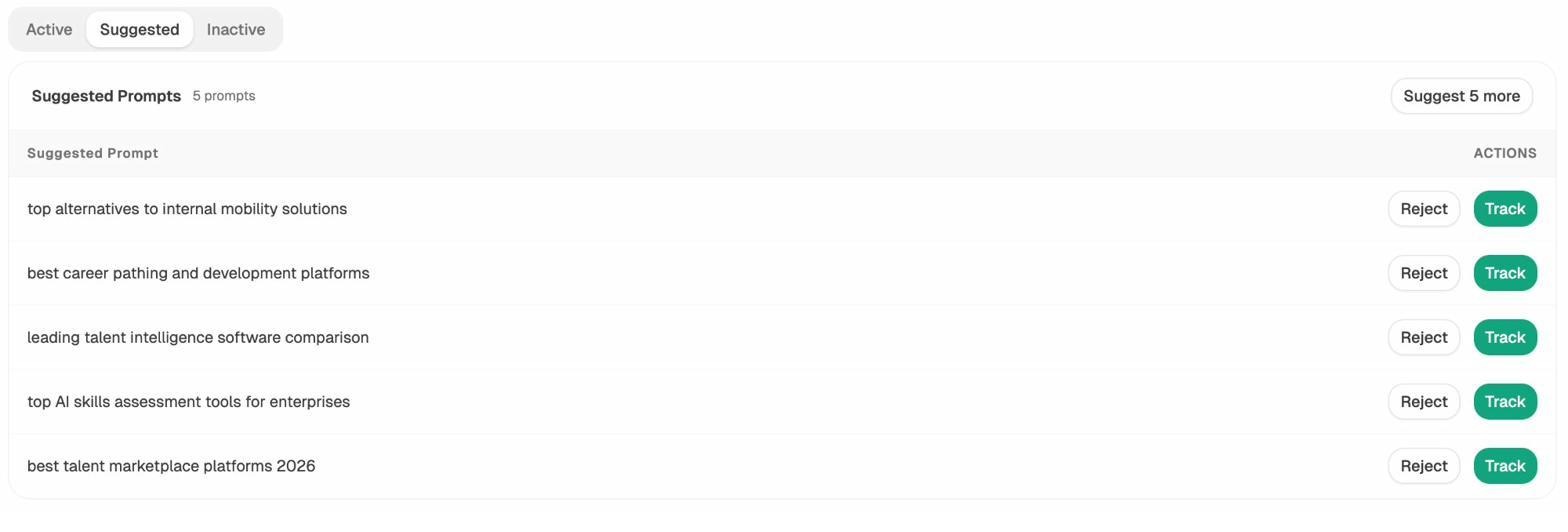

Find the right prompts to track. Start with the prompts your buyers actually ask. “Best [your category] for [segment].” “Alternatives to [biggest competitor].” “[Your category] for [use case].” Inside Analyze AI, the Suggested Prompts panel surfaces high-value prompts in your category that you have not added yet, based on what real buyers are asking AI assistants.

Add the ones that match your buyer’s intent and let them run.

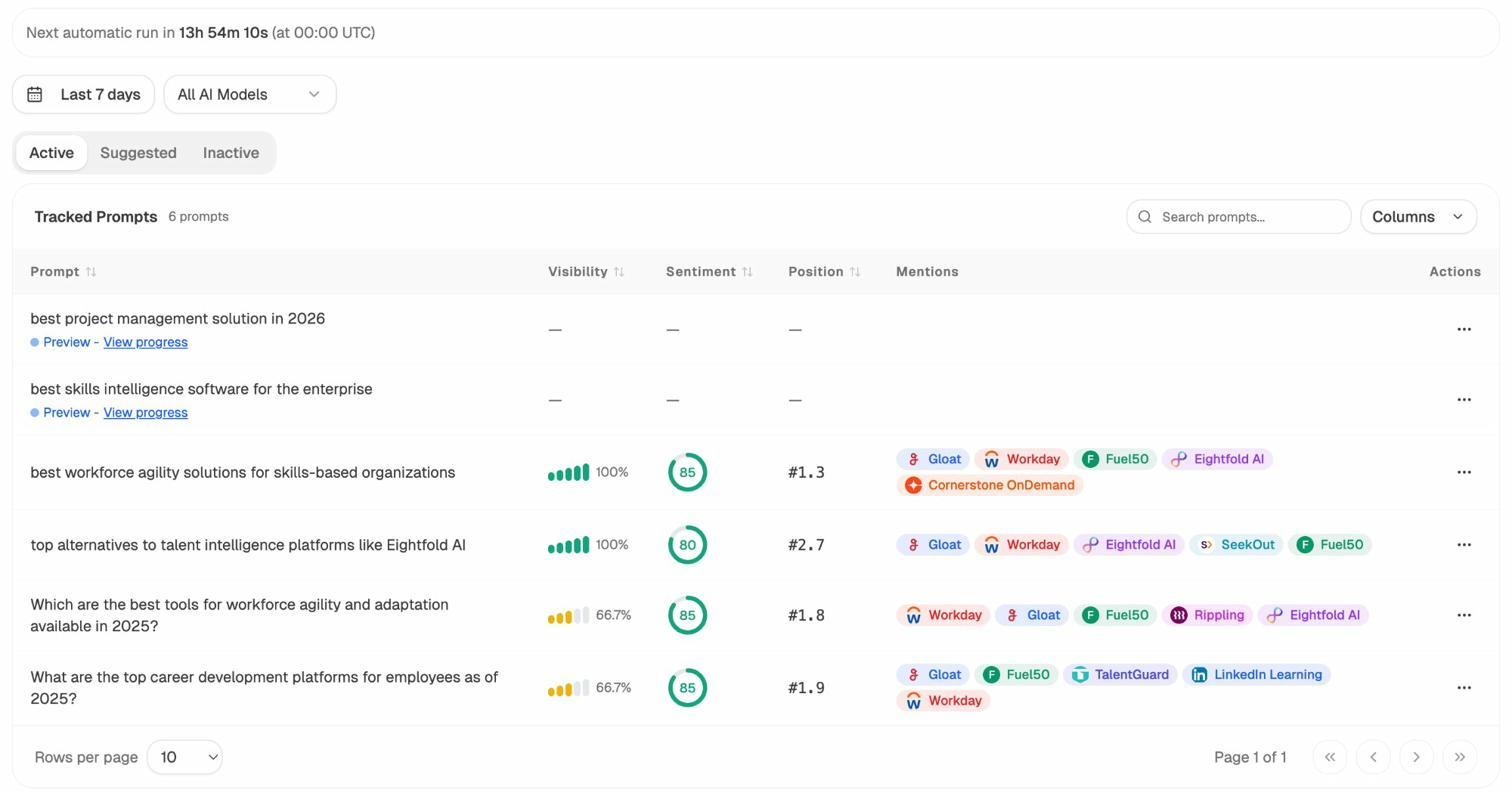

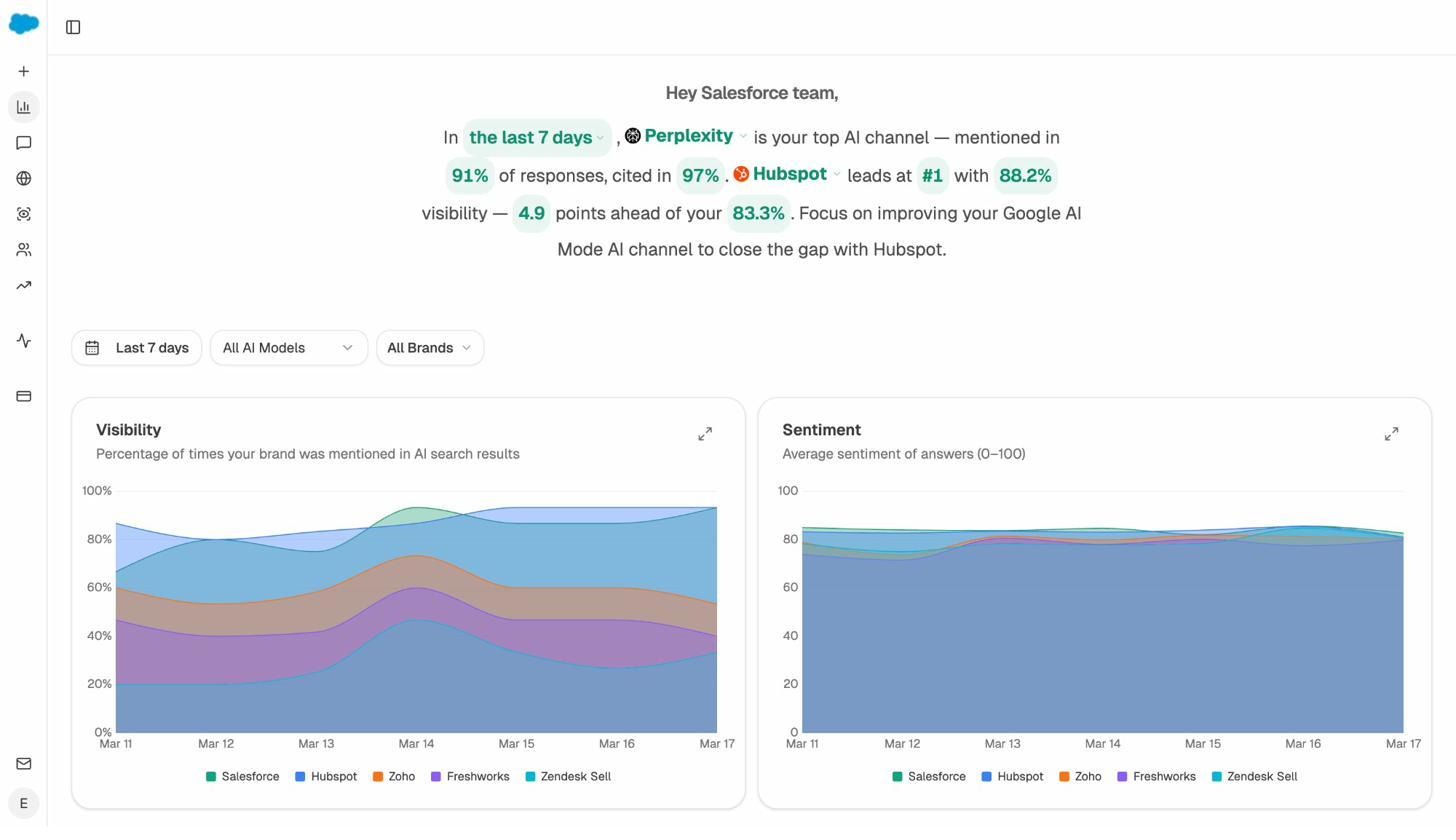

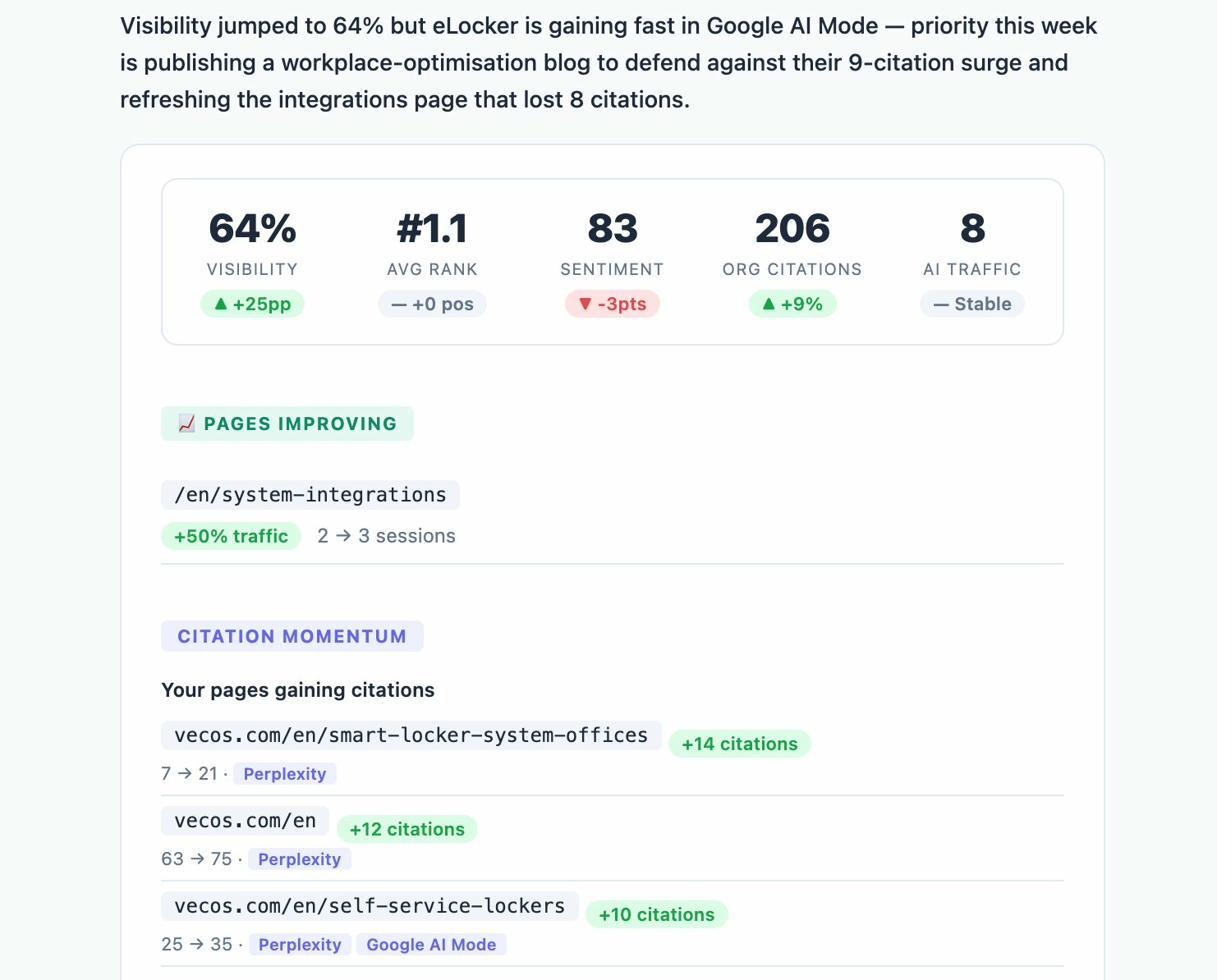

Measure mentions, position, and sentiment per prompt. Once prompts are tracked, the dashboard shows visibility (the percentage of runs that mention you), average position when listed, sentiment, and which competitor brands appear alongside you in the same answer.
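These metrics are simple ratios once you have the raw runs. A sketch of how they could be computed, assuming each run is recorded as the ordered list of brands the answer named; the `Run` record shape is hypothetical, sentiment is left out, and a tool like Analyze AI may define visibility and position differently.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

@dataclass
class Run:
    """One execution of a tracked prompt (hypothetical record shape)."""
    prompt: str
    brands: list[str]  # brands in the order the answer listed them

def visibility(runs: list[Run], brand: str) -> float:
    """Share of runs that mention the brand at all, from 0.0 to 1.0."""
    if not runs:
        return 0.0
    return sum(brand in run.brands for run in runs) / len(runs)

def average_position(runs: list[Run], brand: str) -> Optional[float]:
    """Mean 1-based position across the runs that mention the brand."""
    positions = [run.brands.index(brand) + 1 for run in runs if brand in run.brands]
    return sum(positions) / len(positions) if positions else None

def co_listed(runs: list[Run], brand: str) -> Counter:
    """How often each other brand appears in the same answer as yours."""
    together = Counter()
    for run in runs:
        if brand in run.brands:
            together.update(b for b in run.brands if b != brand)
    return together
```

Average position is only computed over runs where you appear, so read it together with visibility: position 1 in 10% of runs is weaker than position 3 in 90%.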

Watch the trend, not the snapshot. AI answers are non-deterministic. The same prompt run twice can return different brands. The signal that matters is the trend over weeks. The Overview dashboard shows visibility, sentiment, and share of voice over time, with a written summary that highlights where you are winning and where you are slipping.
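Because single runs are noisy, it helps to aggregate by week before comparing. A sketch, assuming each run is logged as a date plus a yes/no mention flag; weeks are bucketed by ISO year and week number, and the three-week trailing average is an arbitrary smoothing choice, not a standard. Both function names are hypothetical.

```python
from collections import defaultdict
from datetime import date

def weekly_visibility(runs: list[tuple[date, bool]]) -> dict[tuple[int, int], float]:
    """Mention rate per ISO (year, week), in chronological order."""
    buckets = defaultdict(list)
    for day, mentioned in runs:
        iso = day.isocalendar()
        buckets[(iso[0], iso[1])].append(mentioned)
    return {week: sum(flags) / len(flags) for week, flags in sorted(buckets.items())}

def trailing_average(values: list[float], window: int = 3) -> list[float]:
    """Average each value with up to window - 1 preceding values."""
    out = []
    for i in range(len(values)):
        recent = values[max(0, i - window + 1): i + 1]
        out.append(sum(recent) / len(recent))
    return out
```

Smoothing `list(weekly_visibility(runs).values())` with `trailing_average` makes a real slide stand out from week-to-week randomness.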

Track which of your pages get cited and where AI traffic actually lands. The AI Traffic Analytics view connects citations (which of your URLs are being retrieved) to traffic (visitors arriving from chatgpt.com, perplexity.ai, claude.ai, gemini.google.com, copilot.com), with engagement, bounce rate, and conversions for each session.

This is the report that tells you which pages are pulling weight in AI search. Once you find your winners, study the format and double down on what works.
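If you want to cross-check AI referral traffic against your own server logs or analytics export, the core step is mapping each referrer hostname to a platform. A minimal sketch using the domains named above; treat the list as a starting point, since platforms add domains over time and some AI visits arrive with no referrer at all. The helper names are hypothetical.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.com": "Copilot",
}

def ai_platform(referrer: str) -> Optional[str]:
    """Return the AI platform for a referrer URL, or None if it is not one."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, platform in AI_REFERRERS.items():
        # Match the domain itself or any subdomain, e.g. www.perplexity.ai.
        if host == domain or host.endswith("." + domain):
            return platform
    return None

def sessions_by_platform(referrers: list[str]) -> Counter:
    """Tally sessions per AI platform, grouping everything else as 'non-AI'."""
    return Counter(ai_platform(r) or "non-AI" for r in referrers)
```

Matching on the exact domain or a dot-prefixed suffix avoids false positives such as a lookalike domain that merely ends in the same letters.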

Get a weekly summary delivered. If you would rather not log in every Monday, the Weekly Email Digest pushes the same summary to your inbox, including pages gaining citations, prompts where you slipped, and the recommended next move for the week.

If you want to compare different tools in this category before committing, our roundup of the best LLM monitoring tools for brand visibility covers the trade-offs.

What about llms.txt?

Skip it for now.

LLMs already use the existing crawler infrastructure (robots.txt, sitemaps, structured data) to discover and understand your content. There is no published evidence that adding an llms.txt file improves retrieval, citations, or AI traffic. No major AI provider has committed to parsing it.

If you want to read more on this, our piece on the 7 best LLM.txt generator tools walks through what the file is, what it claims to do, and why we still recommend not prioritizing it.
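Since robots.txt is the file AI crawlers already read, a more useful check than adding llms.txt is confirming you are not blocking them by accident. A sketch using Python's built-in `urllib.robotparser`; the user-agent tokens (GPTBot, OAI-SearchBot, PerplexityBot, ClaudeBot) are ones the vendors have published, but names change, so verify them against each vendor's current documentation. Python's parser applies the first matching rule in file order, so results can differ from Google's longest-match behavior in edge cases.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ("GPTBot", "OAI-SearchBot", "PerplexityBot", "ClaudeBot")

def crawler_access(robots_txt: str, url: str, agents: tuple[str, ...] = AI_CRAWLERS) -> dict[str, bool]:
    """Report whether each crawler may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # can_fetch() refuses everything until a load time is recorded; set it explicitly.
    parser.modified()
    return {agent: parser.can_fetch(agent, url) for agent in agents}
```

Paste in your live robots.txt and a few high-priority URLs; any `False` you did not intend is a crawler you are shutting out of retrieval.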

Final thoughts

LLM visibility is the next chapter of organic growth, not a replacement for what came before. The brands that win in AI search are the same brands that have always won online. They publish original work, earn real mentions, hold a point of view on their category, and measure what is working.

If you are already doing the SEO work, the lift to add LLM visibility is smaller than the panic-marketing crowd would have you believe. Start by tracking the prompts your buyers actually use. Find the gaps where competitors get named and you do not. Strengthen your presence on the domains LLMs already trust. Make sure your best content is server-rendered, fresh, and quotable.

Then keep doing it for a year.

Search is changing. The job is the same. Build a brand worth recommending.

Ernest

Ibrahim