
In this article, you’ll learn which Geneo AI alternatives actually connect AI visibility to business outcomes, which ones stop at dashboards and mention counts, and how to pick the right tool for your team’s size, budget, and stage of growth. You’ll also see where each tool fits in a modern organic strategy that treats AI search as an additional channel alongside traditional SEO, not a replacement for it.


Quick comparison

| Tool | Best for | Engines covered | Pricing (starting) | Optimization depth |
|---|---|---|---|---|
| Analyze AI | Full-funnel teams tying AI visibility to revenue | ChatGPT, Perplexity, Claude, Copilot, Gemini | | Full cycle: Discover, Monitor, Improve, Govern + Agent Builder (180+ nodes) |
| Peec AI | Agencies needing cross-engine evidence | ChatGPT, Perplexity, Gemini, AI Overviews | ~€89/mo (Starter) | Monitoring-first, light on prescriptive guidance |
| Rankability AI Analyzer | SEO + content teams wanting unified GEO/SEO | ChatGPT, Gemini, Claude, Perplexity, AI Overviews | Varies by tier | Built-in audits and fix steps |
| AthenaHQ | Data-driven teams wanting diagnostic depth | ChatGPT, Perplexity, Gemini, Claude, Copilot, AI Overviews | $295/mo (Self-Serve) | Metadata and content recommendations |
| SE Ranking | SEO teams expanding into AI | ChatGPT, AI Overviews, AI Mode | Add-on to SE Ranking subscription | Descriptive (mentions and trends) |
| LLMrefs | Small teams piloting AI visibility | ChatGPT, Gemini, Perplexity | Budget-friendly | Minimal signals |
| Otterly.ai | Brand and PR teams tracking sentiment | ChatGPT, AI Overviews, Perplexity, Gemini, Copilot | $29/mo (Lite, 10 prompts) | 25+ factor GEO audit |

1. Analyze AI: the alternative that connects AI visibility to revenue

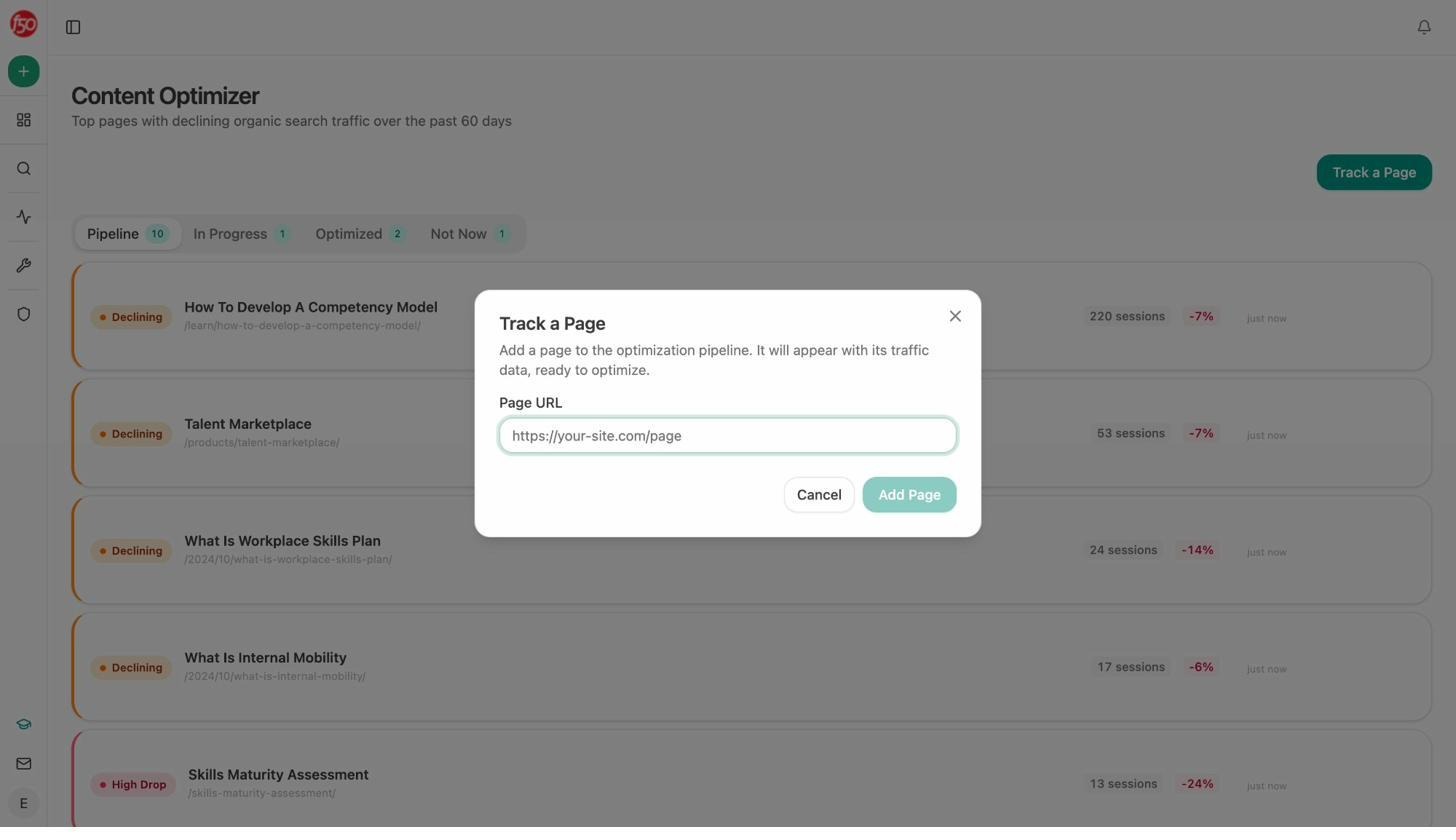

Most GEO tools treat AI visibility like a vanity metric. They tell you that your brand was mentioned in a Perplexity response. They do not tell you whether that mention sent traffic, whether that traffic converted, or whether it was worth optimizing for.

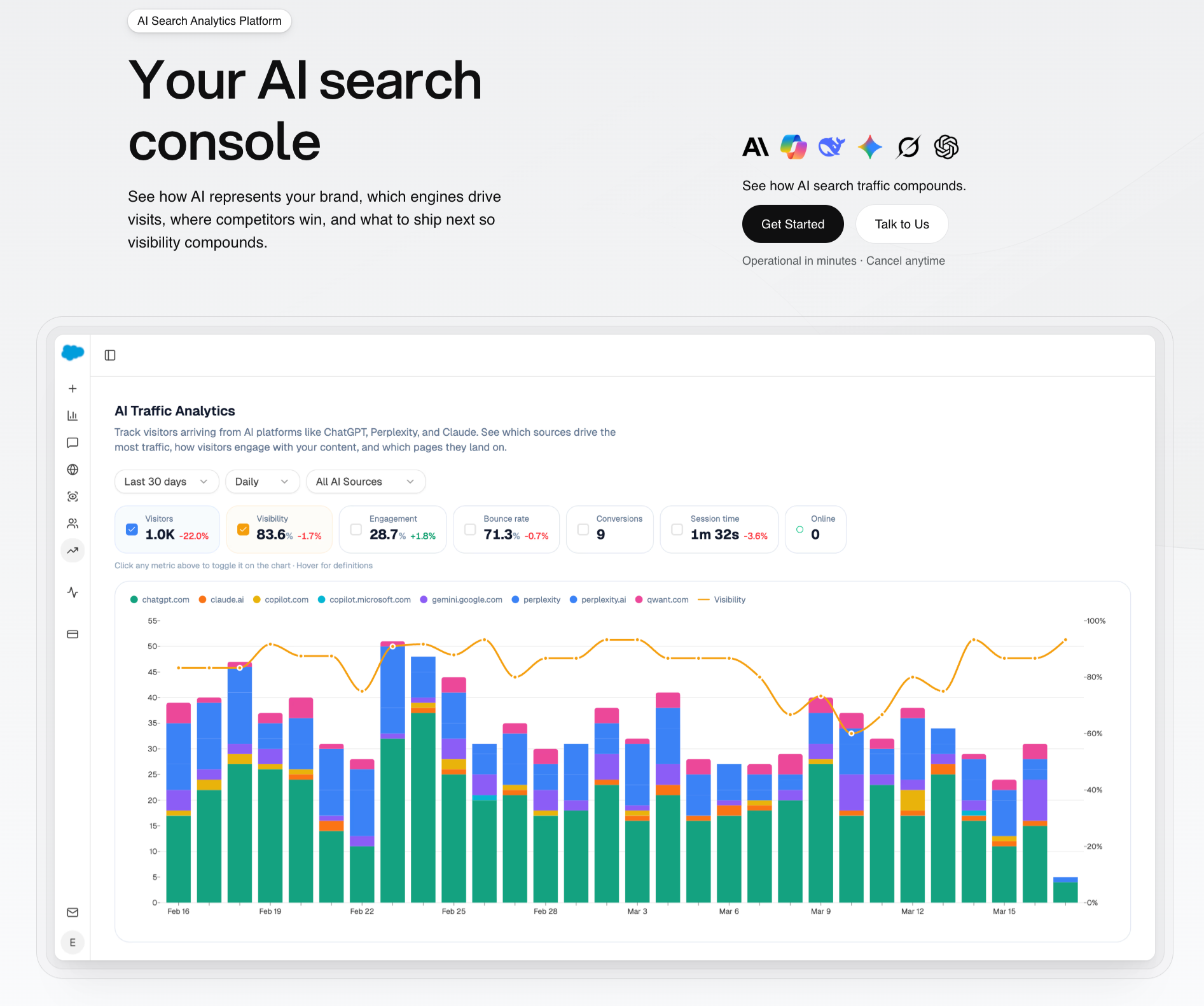

Analyze AI closes that loop. It is an agentic platform for SEO, AEO, content, and GTM operations that tracks the entire journey from AI answer to landing page to conversion. You see which engines send sessions, which pages those visitors land on, and how much revenue they influence.

Track real AI traffic by engine, not just mentions

The AI Traffic Analytics dashboard shows every session from ChatGPT, Perplexity, Claude, Copilot, and Gemini attributed to its source. You see volume by engine, trends over time, and what percentage of your total traffic comes from AI referrers.

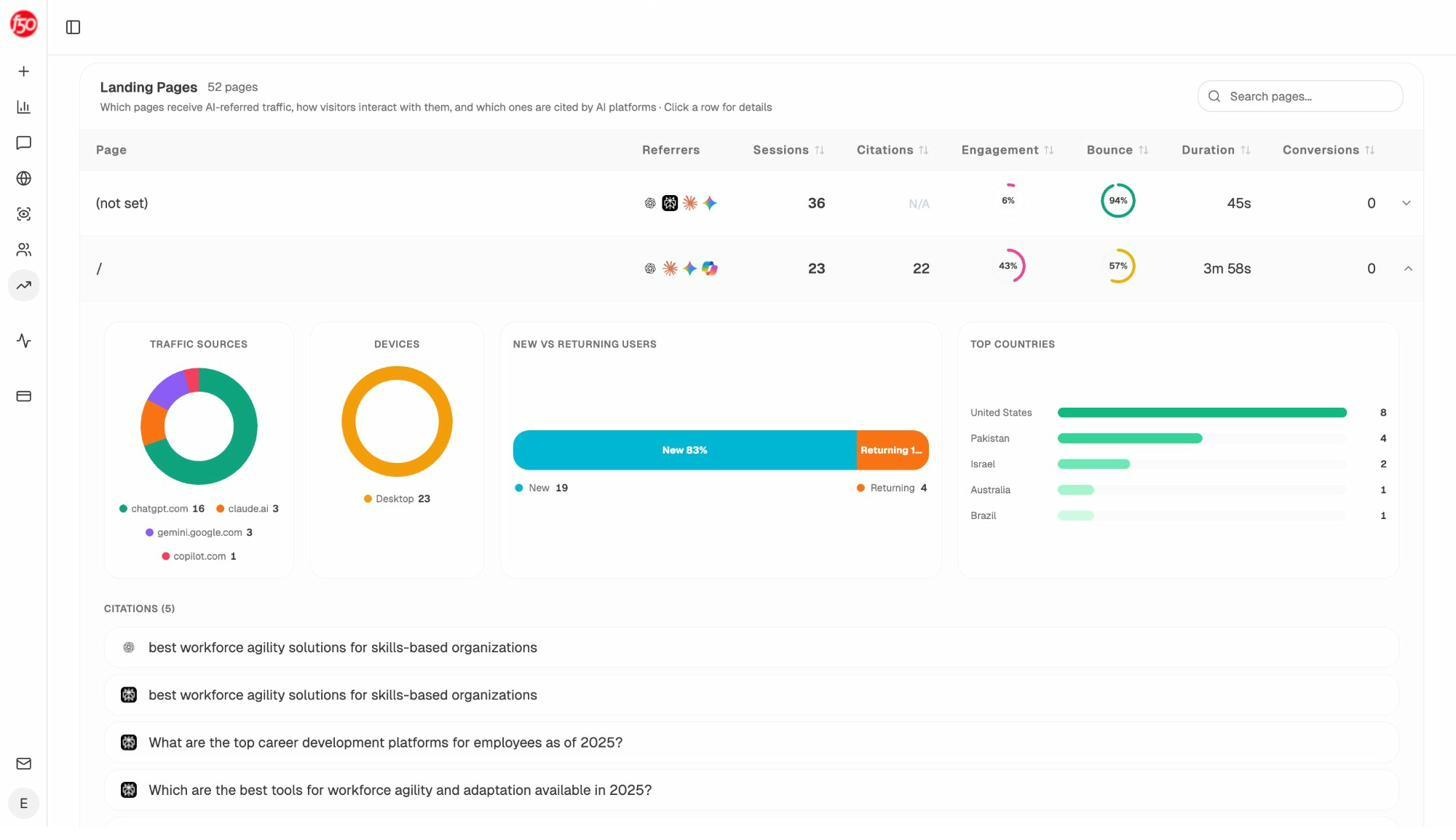
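To make "attributed to its source" concrete, here is a minimal sketch of how AI referral sessions can be bucketed by engine from their referrer URL and turned into an AI share of total traffic. The referrer-host mapping is an assumption for illustration, not Analyze AI's actual attribution logic; real referrer values vary by browser, app, and privacy settings, and some AI traffic arrives with no referrer at all.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Hypothetical mapping from referrer host to AI engine. Real referrer values
# vary by browser, app, and privacy settings, so treat this list as an assumption.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer: str) -> Optional[str]:
    """Return the AI engine behind a referrer URL, or None for non-AI traffic."""
    host = urlparse(referrer).netloc.lower()
    return AI_REFERRERS.get(host)


def ai_traffic_summary(referrers: list[str]) -> tuple[Counter, float]:
    """Count sessions per AI engine and compute the AI share of all sessions."""
    by_engine: Counter = Counter()
    for ref in referrers:
        engine = classify_referrer(ref)
        if engine is not None:
            by_engine[engine] += 1
    share = sum(by_engine.values()) / len(referrers) if referrers else 0.0
    return by_engine, share


# Example: one referrer per session (empty string = direct traffic).
counts, share = ai_traffic_summary([
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+crm",
    "https://www.google.com/",
    "",
])
print(counts, share)
```

Here two of the four sessions come from AI engines, so the AI share is 50%. Sessions with no referrer are counted as non-AI, which is why referrer-only attribution tends to undercount AI traffic.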

The Landing Pages report goes deeper. You see which pages receive AI referrals, which engine sent each session, and what conversion events those visits trigger. When your product comparison page converts 12% of its Perplexity visitors while an old blog post gets zero conversions from ChatGPT traffic, you know exactly where to focus.

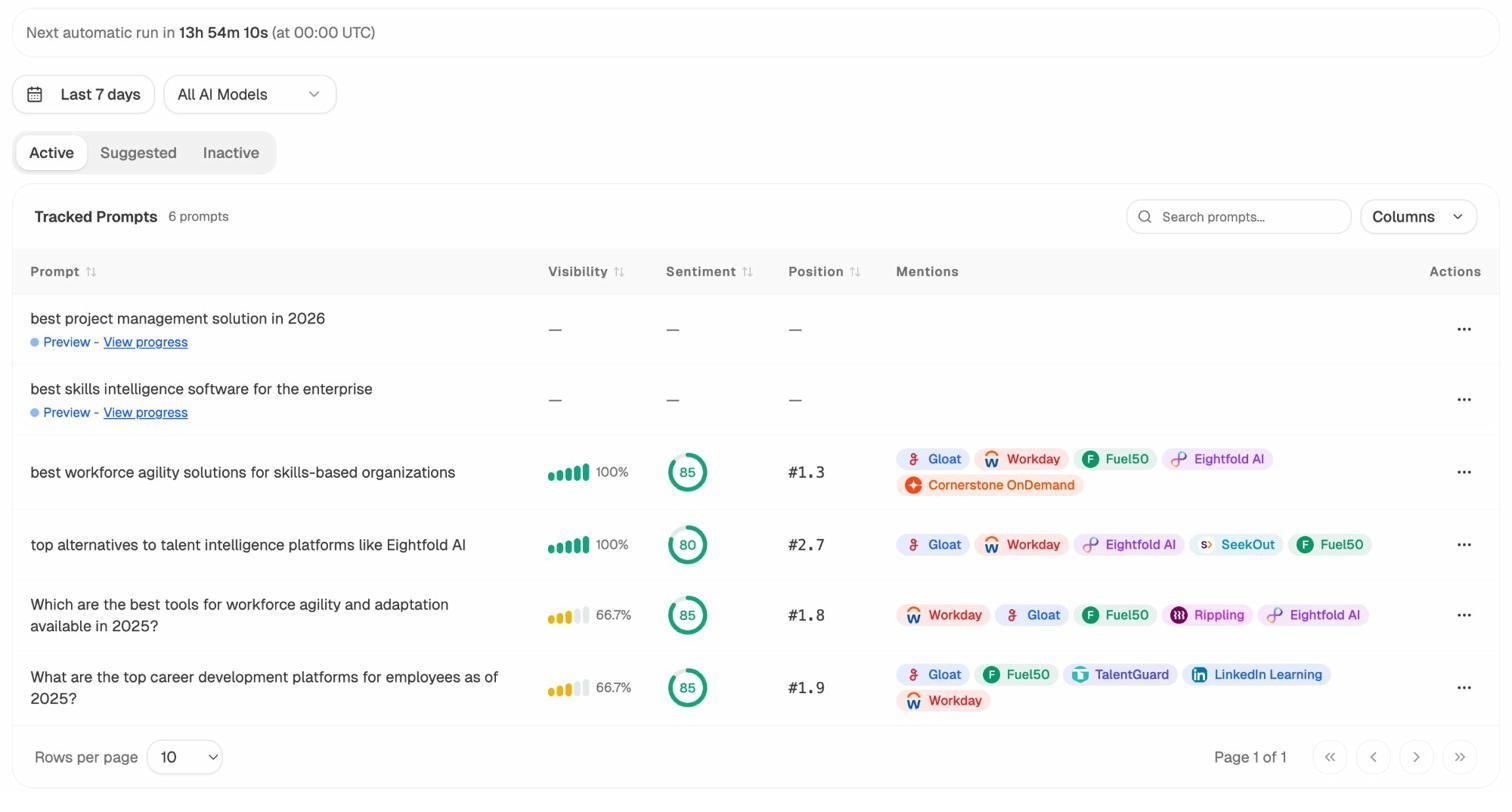
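The page-by-engine comparison above boils down to a conversion rate per (landing page, engine) pair. Here is a minimal sketch, assuming a hypothetical session export where each visit carries its landing page, referring engine, and a converted flag; it does not reflect how Analyze AI stores or computes this data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Session:
    """One AI-referred visit (hypothetical export shape)."""
    landing_page: str
    engine: str      # AI engine that referred the visit, e.g. "Perplexity"
    converted: bool  # True if a tracked conversion event fired


def conversion_rates(sessions: list[Session]) -> dict[tuple[str, str], float]:
    """Conversion rate for each (landing page, engine) pair."""
    totals: dict[tuple[str, str], int] = defaultdict(int)
    conversions: dict[tuple[str, str], int] = defaultdict(int)
    for s in sessions:
        key = (s.landing_page, s.engine)
        totals[key] += 1
        conversions[key] += int(s.converted)
    return {key: conversions[key] / totals[key] for key in totals}


rates = conversion_rates([
    Session("/compare/acme-vs-globex", "Perplexity", True),
    Session("/compare/acme-vs-globex", "Perplexity", False),
    Session("/blog/old-post", "ChatGPT", False),
])
# Highest-converting page/engine pairs first
for (page, engine), rate in sorted(rates.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{page} via {engine}: {rate:.0%}")
```

Keying on the pair rather than the page alone is the point: the same page can convert well from one engine and poorly from another.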

Track the prompts buyers use and see where you win or lose

Prompt Tracking shows your brand’s visibility percentage, position relative to competitors, and sentiment score for every tracked prompt across all major LLMs.

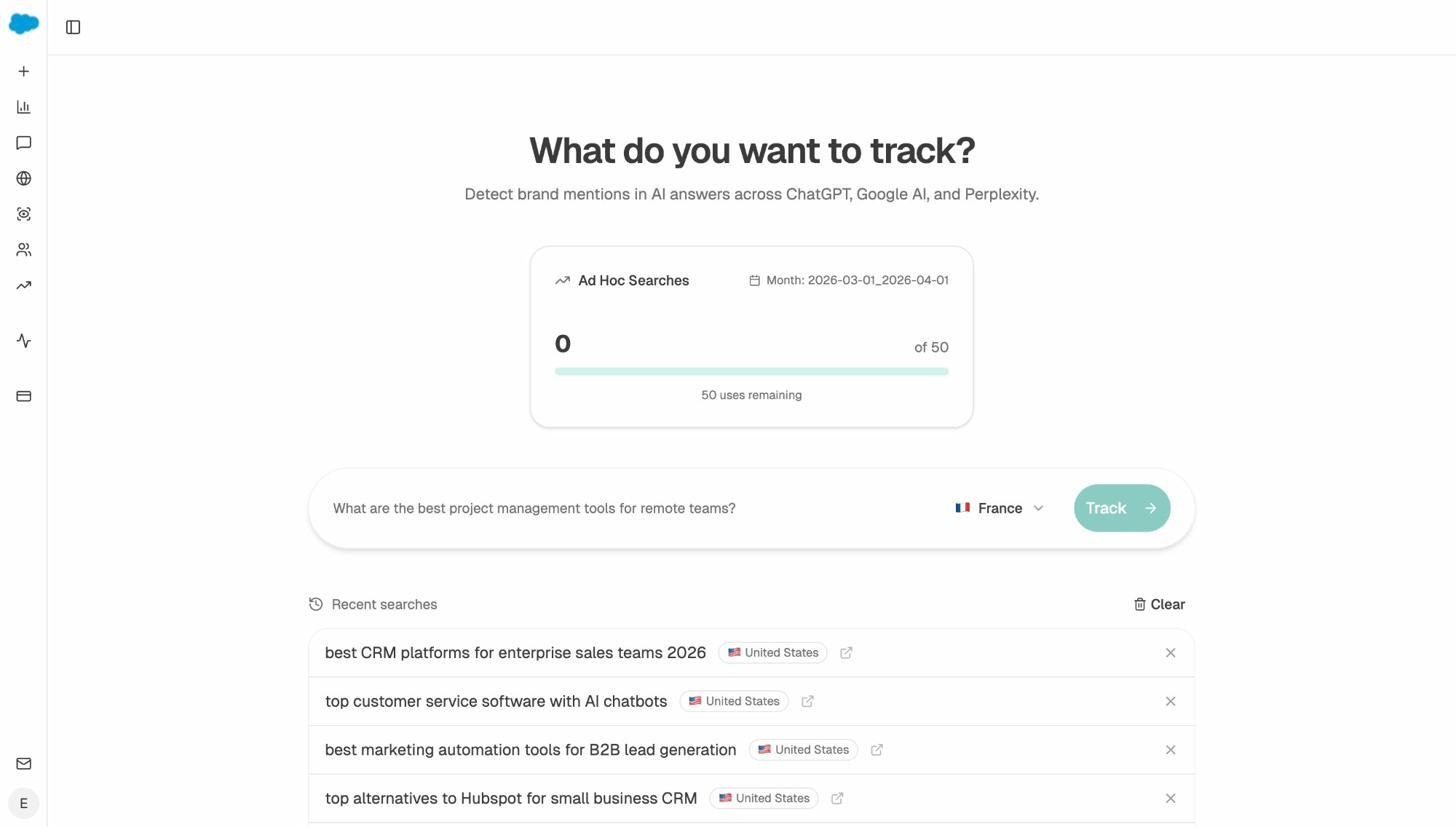

Not sure which prompts to track? The Prompt Discovery feature suggests bottom-of-funnel prompts based on your category. You can also run Ad Hoc Prompt Searches to check any prompt instantly across engines.

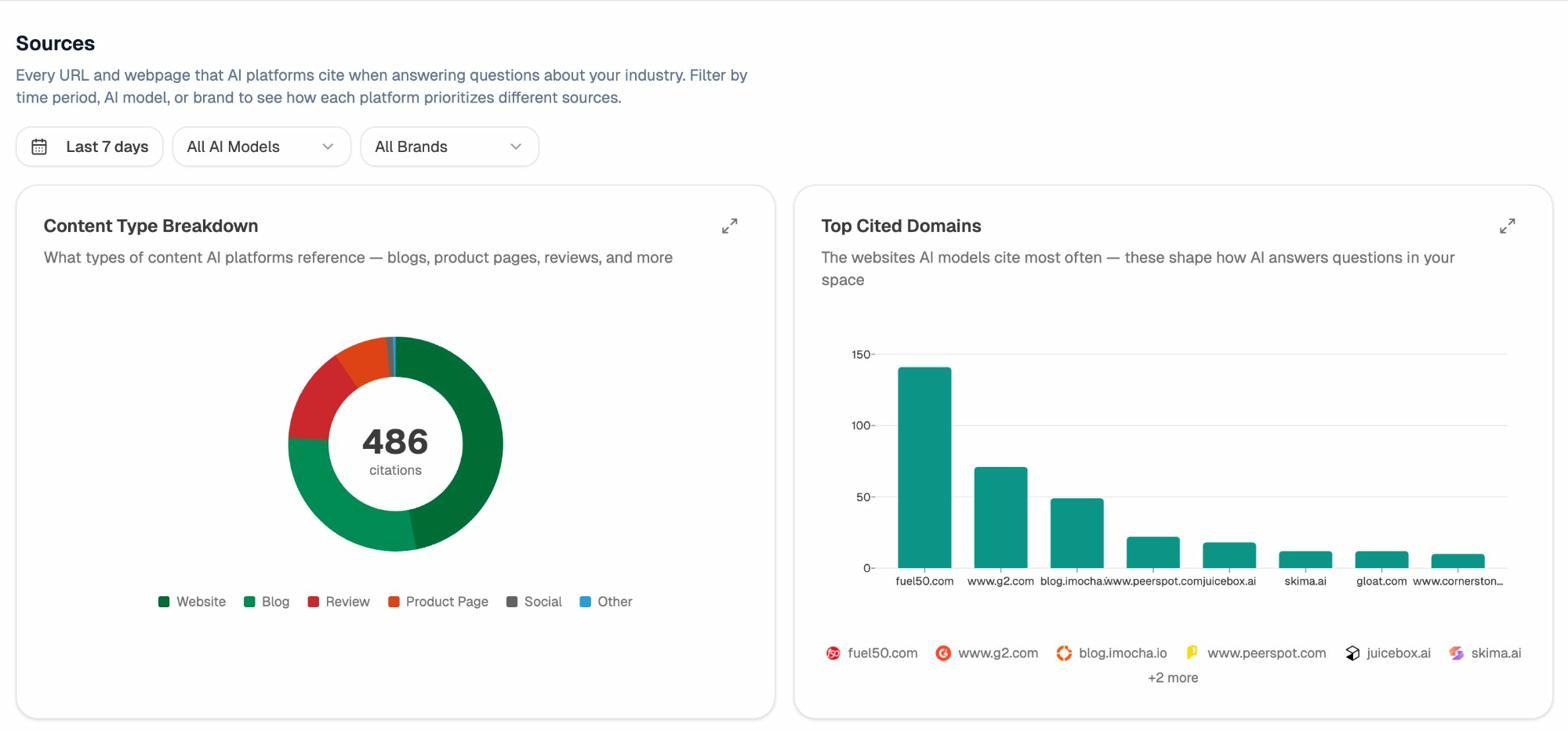
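The post does not define how visibility percentage or position are calculated, so here is one plausible reading as a minimal sketch: visibility is the share of answer runs for a prompt that mention the brand, and position is the brand's average 1-based rank among runs where it appears. These definitions and the brand names are assumptions for illustration, and sentiment scoring is left out.

```python
from statistics import mean
from typing import Optional


def prompt_visibility(runs: list[list[str]], brand: str) -> tuple[float, Optional[float]]:
    """Visibility share and average position of `brand` for one tracked prompt.

    Each run is the ordered list of brands that one AI answer mentioned,
    e.g. one run per engine per day. Position is 1-based.
    """
    positions = [run.index(brand) + 1 for run in runs if brand in run]
    visibility = len(positions) / len(runs) if runs else 0.0
    avg_position = mean(positions) if positions else None
    return visibility, avg_position


# Hypothetical brands; one answer per engine for the same prompt.
runs = [
    ["Acme", "Globex"],             # e.g. ChatGPT
    ["Globex", "Initech", "Acme"],  # e.g. Perplexity
    ["Initech"],                    # e.g. Claude
    ["Globex"],                     # e.g. Gemini
]
print(prompt_visibility(runs, "Acme"))
```

Acme appears in two of four answers (50% visibility) at positions 1 and 3, for an average position of 2. Returning None rather than 0 for a brand that never appears keeps "absent" distinct from "ranked first".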

Audit the sources AI models trust

Citation Analytics reveals which domains and URLs models cite when answering questions in your category. Instead of generic link building, you target the specific sources that shape AI answers. You see usage count per source, which models reference each domain, and when citations first appeared.

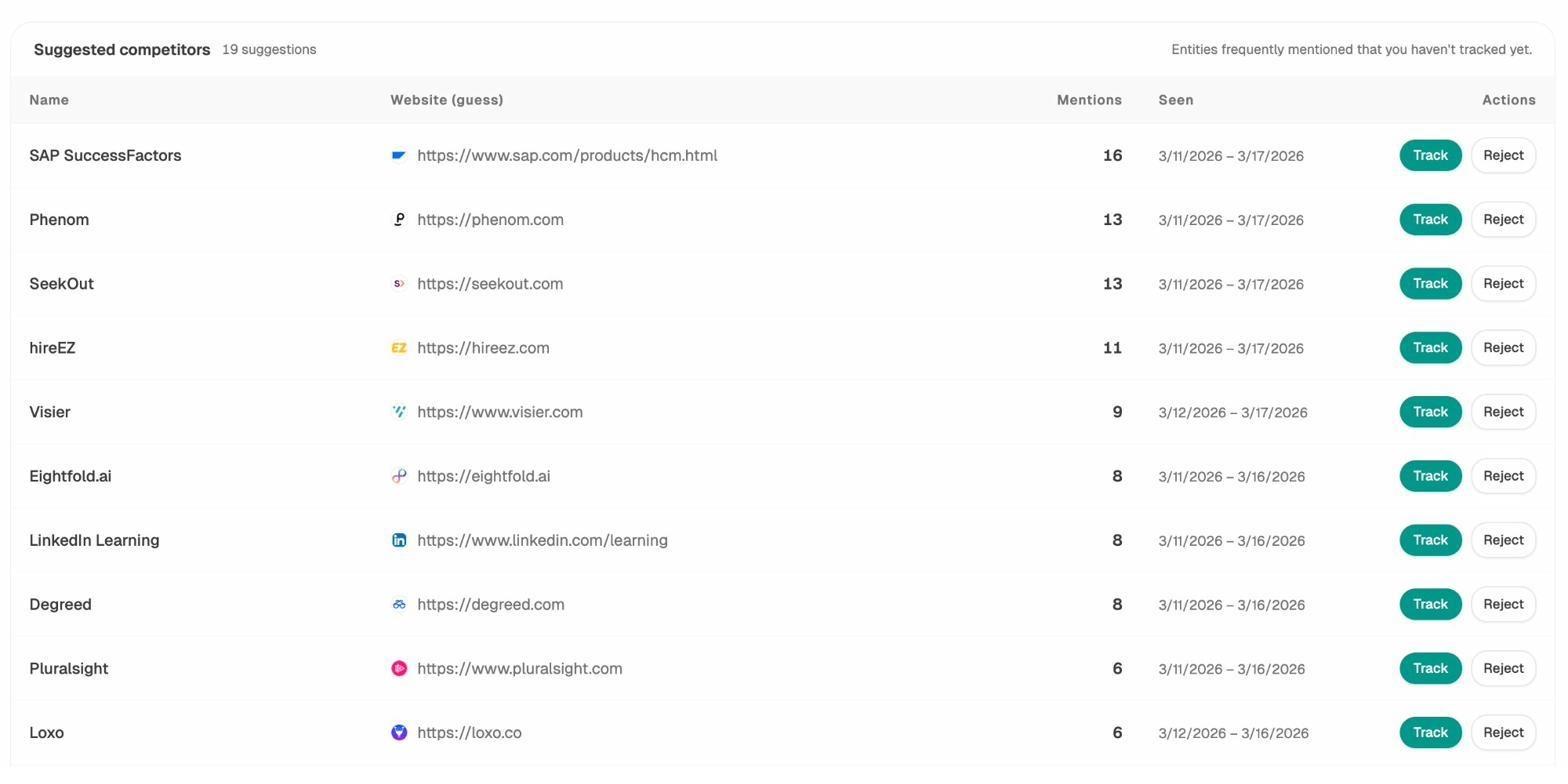
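The three citation views described above (usage count, which models cite a source, and first appearance) can all be derived from a flat log of citations. Here is a minimal sketch, assuming each record is a hypothetical (cited URL, model, answer date) tuple; the domains are placeholders and this is not Analyze AI's internal schema.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional
from urllib.parse import urlparse


@dataclass
class SourceStats:
    usage_count: int = 0
    models: set[str] = field(default_factory=set)
    first_seen: Optional[date] = None


def aggregate_citations(citations: list[tuple[str, str, date]]) -> dict[str, SourceStats]:
    """Group (cited_url, model, answer_date) records by cited domain."""
    stats: dict[str, SourceStats] = {}
    for url, model, seen in citations:
        domain = urlparse(url).netloc.lower()
        s = stats.setdefault(domain, SourceStats())
        s.usage_count += 1
        s.models.add(model)
        # Keep the earliest date on which this domain was cited
        if s.first_seen is None or seen < s.first_seen:
            s.first_seen = seen
    return stats


stats = aggregate_citations([
    ("https://reviews.example.com/crm", "ChatGPT", date(2025, 1, 5)),
    ("https://reviews.example.com/crm-pricing", "Perplexity", date(2024, 12, 1)),
    ("https://blog.example.org/guide", "ChatGPT", date(2025, 2, 1)),
])
# Most-cited domains first
for domain, s in sorted(stats.items(), key=lambda kv: kv[1].usage_count, reverse=True):
    print(domain, s.usage_count, sorted(s.models), s.first_seen)
```

Grouping by domain rather than full URL matches the "target the specific sources" framing: outreach usually happens at the publication level, even when models cite different pages on it.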

Find competitor gaps and act on them

The Competitor Intelligence module surfaces brands that appear alongside you in AI answers, even ones you did not know were competing for the same prompts.

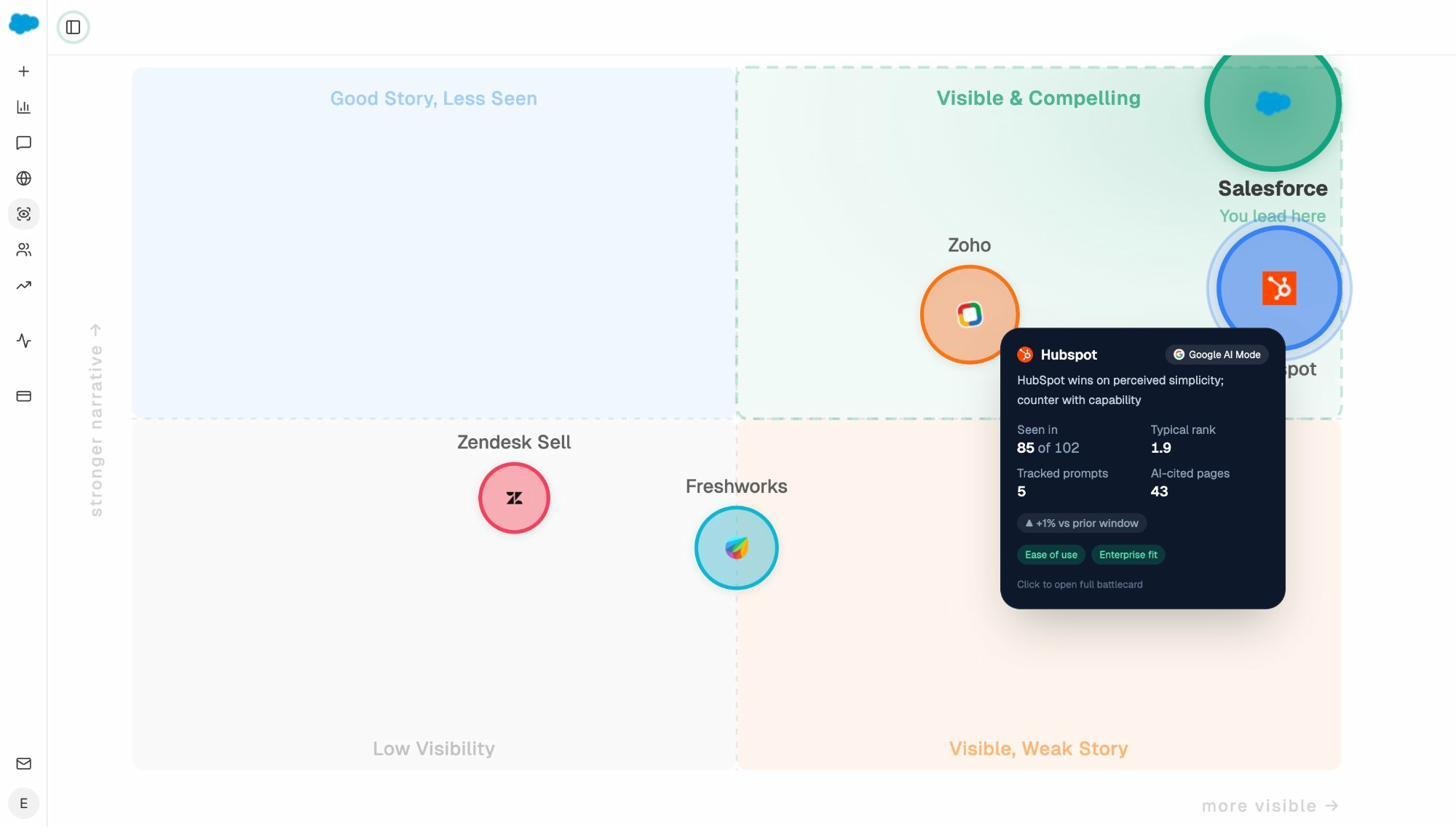

The Perception Map shows how AI models position your brand versus competitors across dimensions like trust, innovation, and value. You stop guessing how LLMs perceive you and start managing the narrative.

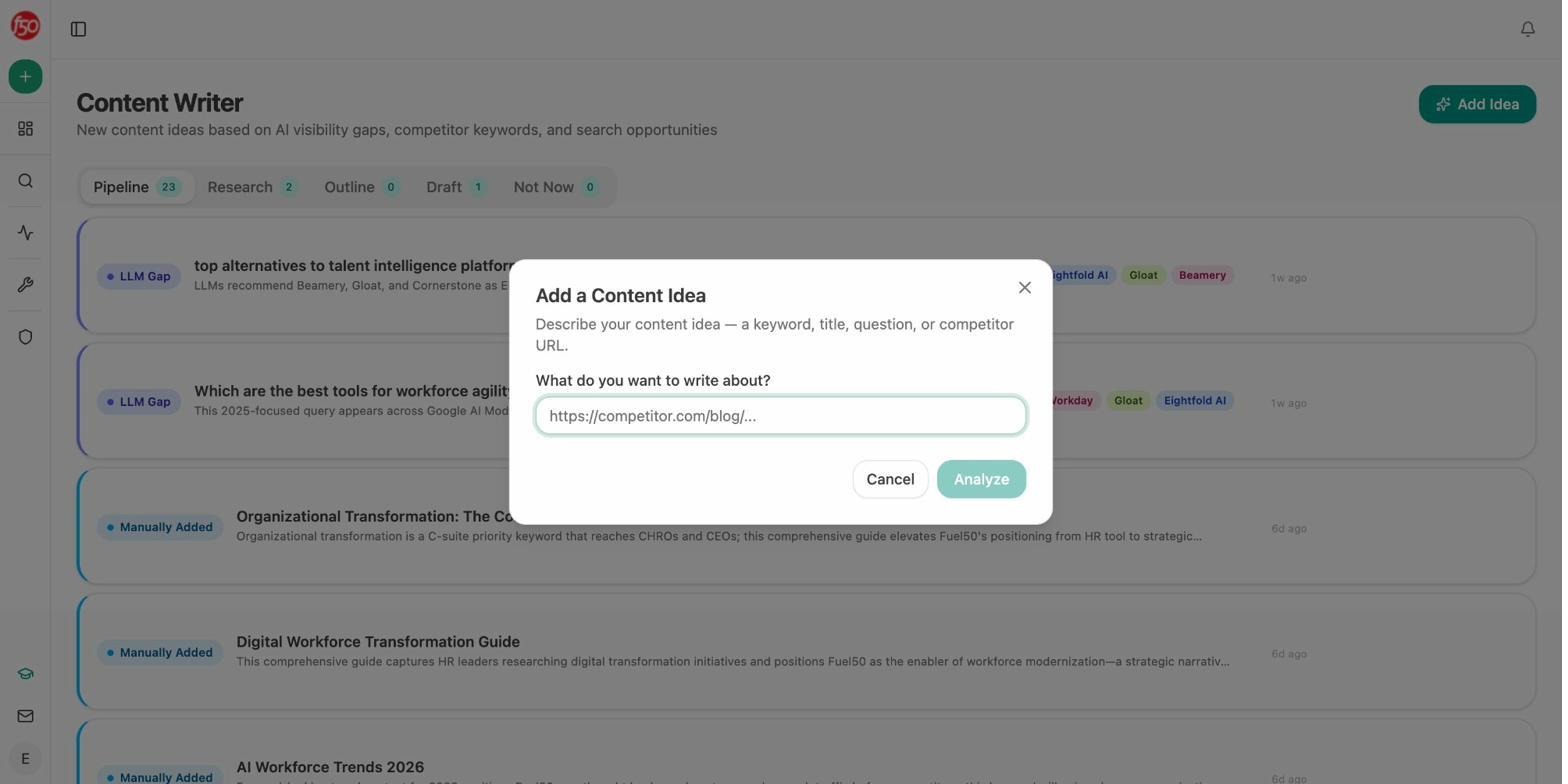

Write and optimize content that performs in both search and AI

This is where Analyze AI separates itself from every other tool on this list. Most GEO platforms stop at monitoring. Analyze AI includes a full content engine.

The AI Content Writer generates ideas based on AI visibility gaps, competitor keywords, and search opportunities. It moves ideas through a pipeline (research, outline, draft) with your brand’s voice and proof points injected at every stage.

The AI Content Optimizer fetches your existing content, scores it on argument flow, clarity, and structure, then provides line-by-line editorial comments and optimization suggestions based on identified gaps.

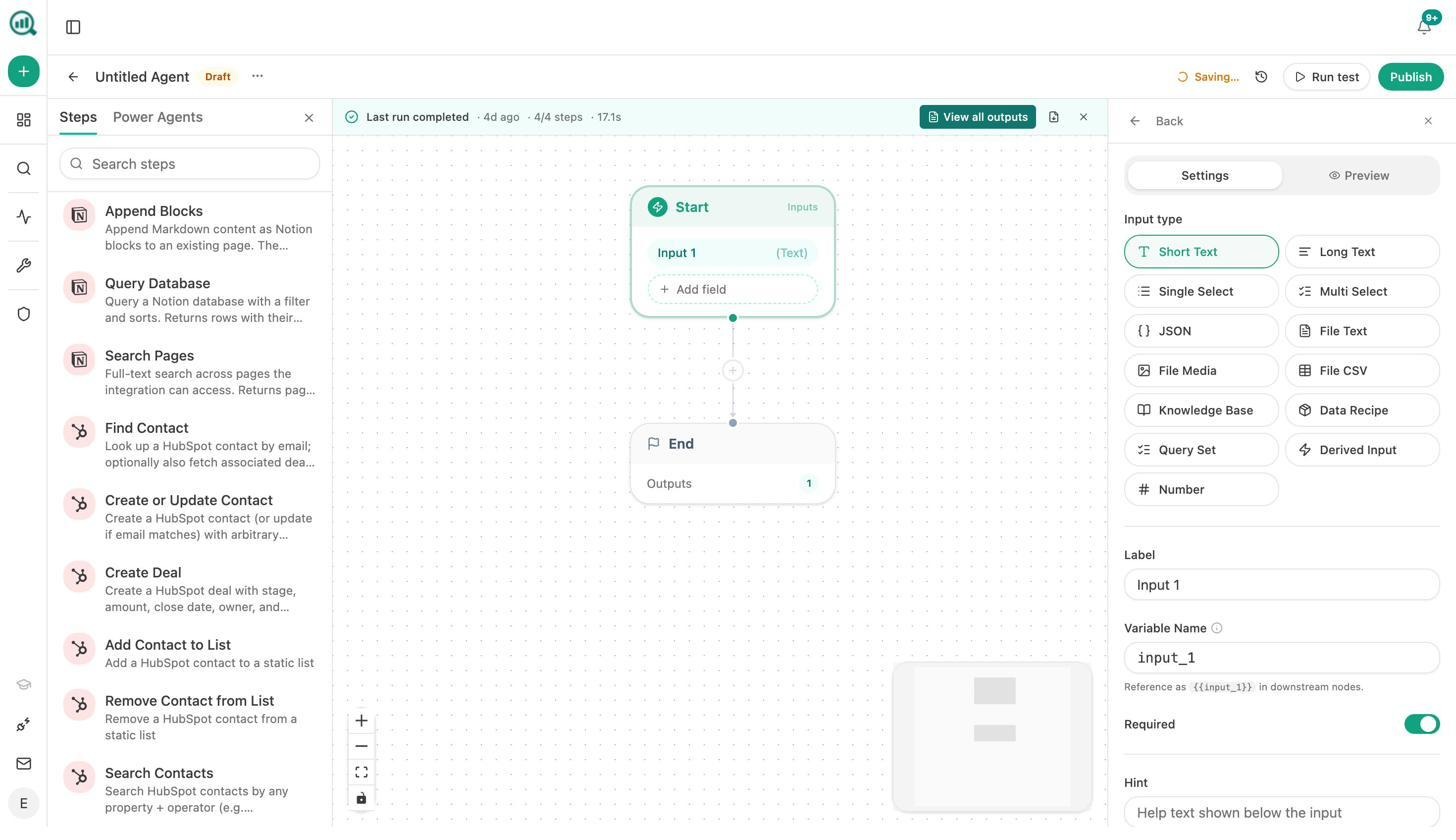

Automate your entire marketing operations with Agent Builder

Here is the real differentiator. The Agent Builder gives you 180+ nodes, 34 pre-built data recipes, 13 input primitives, and three trigger modes (manual, scheduled, webhook). It connects directly to GA4, Google Search Console, DataForSEO, Semrush, HubSpot, Notion, WordPress, Slack, and every major LLM.

This is not just an automation layer. It is a programmable substrate for running your entire marketing operation. A few examples of what teams build with it:

For agencies: A Monday morning client briefing agent runs automatically at 7am. It pulls AI visibility deltas, GSC top pages, new backlinks, and competitor movement for each client, generates a branded report, and emails it to the account team. Reporting day stops existing.

For content teams: A brief-to-publish pipeline fires when a Notion card moves to “approved.” It generates research, outline, and full draft with your brand vault injected, runs an AEO content scorecard, and publishes to WordPress only if the score passes your quality gate.

For PR and comms: A crisis early-warning agent runs every 15 minutes. It scans brand mentions and news, filters by sentiment and reach, and alerts your team in Slack with a drafted response before your CEO even finds out.

For sales: An inbound-form-to-enriched-lead agent fires on every form submission. It verifies the email, researches the company, pulls a domain overview and a Lighthouse audit, enriches the contact in HubSpot, and messages the account executive (AE) in Slack. The lead is fully enriched before anyone touches it.
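The core of the crisis early-warning agent is a filter step: keep mentions that are both strongly negative and widely seen, then surface the biggest first. Here is a minimal sketch of that step alone, assuming sentiment is scored from -1.0 to 1.0 and reach is an estimated audience size; both scales and both thresholds are hypothetical, and the 15-minute schedule, Slack delivery, and drafted response are left to the surrounding workflow.

```python
from dataclasses import dataclass


@dataclass
class Mention:
    source: str
    sentiment: float  # assumed scale: -1.0 (very negative) to 1.0 (very positive)
    reach: int        # assumed: estimated audience size of the source


def crisis_alerts(
    mentions: list[Mention],
    max_sentiment: float = -0.5,  # hypothetical threshold
    min_reach: int = 10_000,      # hypothetical threshold
) -> list[Mention]:
    """Keep strongly negative, high-reach mentions, largest reach first."""
    flagged = [m for m in mentions if m.sentiment <= max_sentiment and m.reach >= min_reach]
    return sorted(flagged, key=lambda m: m.reach, reverse=True)


alerts = crisis_alerts([
    Mention("news-site", -0.8, 250_000),
    Mention("forum-thread", -0.9, 1_200),   # negative but too small to alert on
    Mention("industry-blog", 0.4, 80_000),  # positive, ignored
    Mention("podcast-recap", -0.6, 40_000),
])
print([m.source for m in alerts])
```

Requiring both conditions is what keeps a 15-minute cadence from flooding the channel: a single angry forum post or a large but neutral article does not page anyone.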

The difference between Analyze AI and every other tool on this list is that other tools show you data. Analyze AI shows you data, helps you create better content, and then automates the operational workflows around both. You get weekly email digests, AI Battlecards, Sentiment Monitoring, and an Engine Breakdown view across all providers.

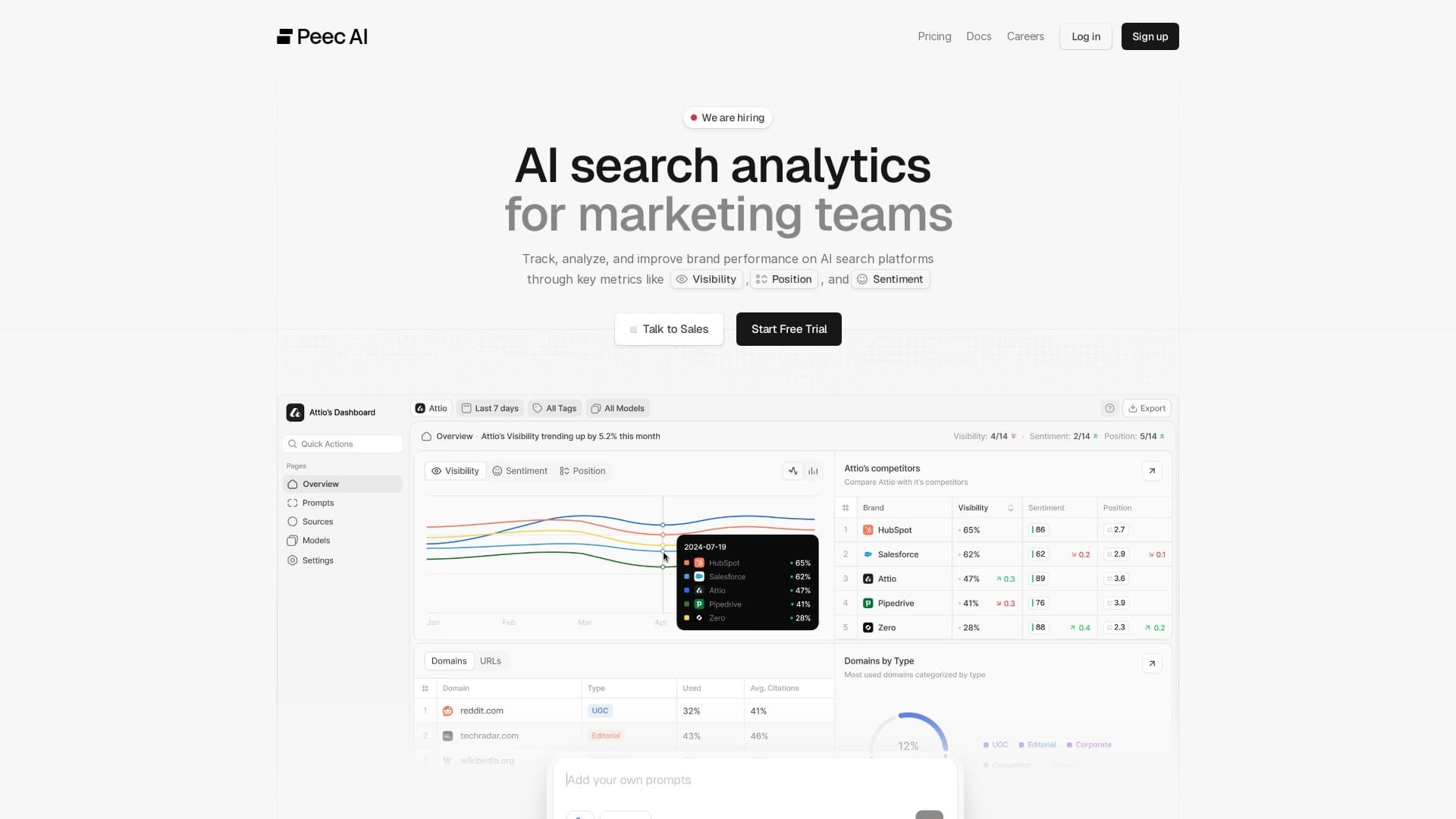

2. Peec AI: best for multi-engine evidence and agency reporting

Peec AI focuses on storing the full prompt, the generated answer, the mentions, and the citations across ChatGPT, Perplexity, Gemini, and Google AI Overviews. That evidence trail makes content triage faster because you can trace any visibility win or loss back to the source pages that influenced it.

Where it works well: Agencies love the export options (CSV, API, Looker Studio) because each data point carries the evidence needed for client-ready reporting. The share-of-voice views across engines are strong.

Where it falls short: Peec is monitoring-first. It tells you where you stand, but guidance on how to improve a weak prompt set is limited. Pricing starts at ~€89/month for Starter (25 prompts, 3 countries) and scales to €499/month for Enterprise. Daily refreshes work for most brands, but highly volatile queries can shift between runs.

Versus Geneo: Peec offers broader multi-engine coverage and stronger evidence packaging. Geneo is simpler but shallower. Neither gives you the content creation, optimization, or automation capabilities that Analyze AI includes.
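Storing each answer as a full evidence record is what makes the share-of-voice view traceable. Here is a minimal sketch, assuming a hypothetical record with the four fields the Peec description mentions (prompt, answer, mentions, citations) plus the engine; it is not Peec's export format. Share of voice here means each brand's fraction of all brand mentions per engine, which is one common definition.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass, field


@dataclass
class AnswerRecord:
    engine: str
    prompt: str
    answer: str
    mentions: list[str]  # brands named in the answer
    citations: list[str] = field(default_factory=list)  # URLs the answer cited


def share_of_voice(records: list[AnswerRecord]) -> dict[str, dict[str, float]]:
    """Per engine, each brand's share of all brand mentions in stored answers."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for r in records:
        counts[r.engine].update(r.mentions)
    return {
        engine: {brand: n / sum(c.values()) for brand, n in c.items()}
        for engine, c in counts.items()
    }


# Hypothetical brands, prompts, and URLs.
records = [
    AnswerRecord("ChatGPT", "best crm for startups", "(answer text)", ["Acme", "Globex"]),
    AnswerRecord("ChatGPT", "acme alternatives", "(answer text)", ["Acme"]),
    AnswerRecord("Perplexity", "best crm for startups", "(answer text)", ["Globex"],
                 ["https://reviews.example.com/crm"]),
]
print(share_of_voice(records))
```

Because every number is computed from records that keep the prompt, answer, and citations, any share-of-voice shift in a client report can be traced back to the specific answers and cited pages behind it.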

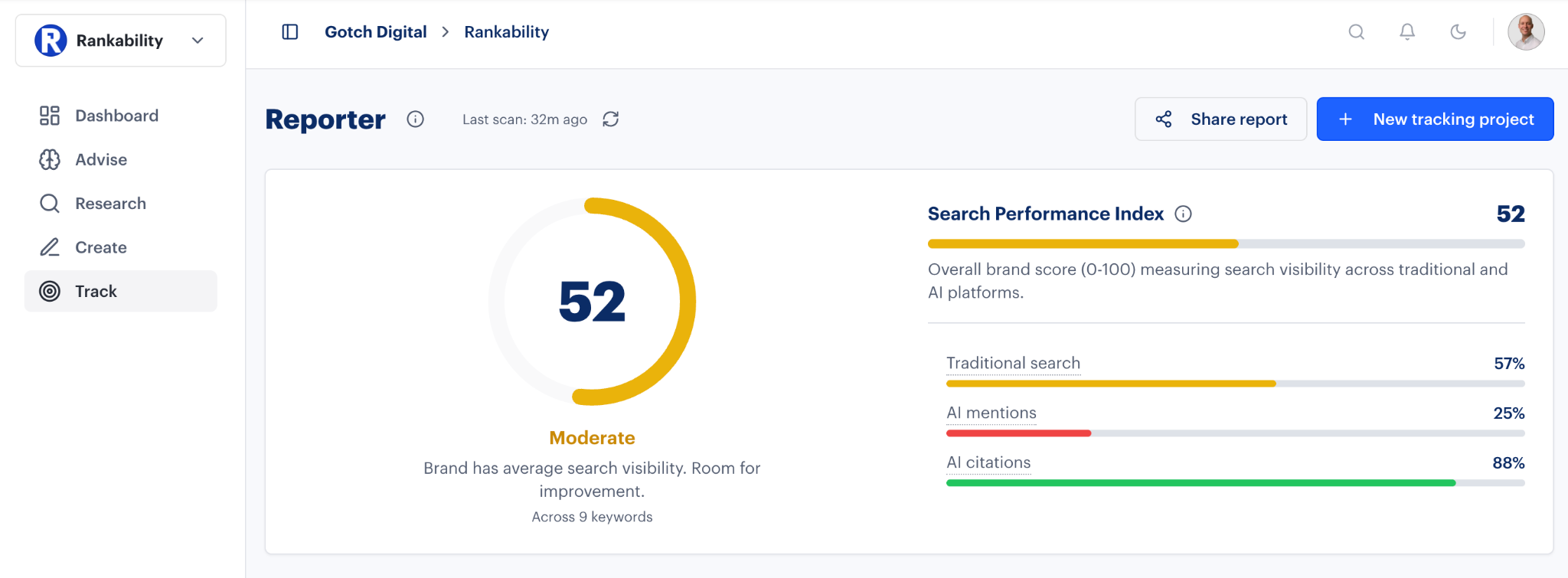

3. Rankability AI Analyzer: best for unified GEO + SEO workflows

Rankability combines AI visibility tracking with traditional SEO content metrics in one workspace. When the platform detects weak citations in ChatGPT or Gemini, it highlights on-page fixes, metadata gaps, and authority signals that could strengthen future mentions.

Where it works well: Content and search teams get a feedback loop between measurement and improvement. The system links AI visibility back to keyword relevance, content structure, and domain authority.

Where it falls short: Many advanced capabilities (full engine coverage, automated audits, trend alerts) are reserved for upper-tier plans. The platform is still maturing its AI attribution accuracy, and some users report that its LLM reasoning feels less precise than that of dedicated tools. Scaling up prompts or clients increases costs quickly.

Versus Geneo: Rankability extends the workflow into optimization, which Geneo does not emphasize. But its full power only unlocks at higher investment levels.

4. AthenaHQ: best for data-driven teams wanting diagnostic depth

AthenaHQ processes millions of AI responses and correlates them to hundreds of thousands of cited domains. It maps every brand mention back to the exact cited domains, showing which websites shape AI perception the most.

Where it works well: Analysts and data-driven marketers get structured diagnostics, cross-engine consistency views, and longitudinal trend tracking. The tool highlights prompts that should include your brand but do not, creating a focused action list for content strategists.

Where it falls short: Pricing starts at $295/month (Self-Serve) with a $95 first-month offer. Setup requires careful configuration of prompts, competitor domains, and content mapping logic. Without a dedicated analyst, the flexibility can become noise rather than clarity.

Versus Geneo: AthenaHQ trades simplicity for precision. It is the strongest diagnostic tool on this list, but its price and complexity make it a poor fit for small teams.

5. SE Ranking AI Visibility Tracker: best for SEO teams expanding into AI

SE Ranking’s AI Visibility Tracker adds AI answer tracking on top of the existing SEO suite. You see mentions, hyperlink presence, and competitor references in AI responses using the same filters and dashboards you already know.

Where it works well: SEO-first teams adopt AI monitoring without learning a new toolset. The integrated dashboards make client reporting easy because there is no need to merge exports from different systems.

Where it falls short: Engine coverage is limited to ChatGPT, AI Overviews, and AI Mode. The module is more descriptive than diagnostic. It shows when visibility occurs but offers limited insight into why results change. The AI Search add-on operates on “checks per month,” which can push costs up as you scale.

Versus Geneo: SE Ranking is a bridge, not a destination. It works best as a quick add-on for teams already paying for their SEO suite.

6. LLMrefs: best for small teams or early testing

LLMrefs uses keyword-based visibility instead of prompt-level monitoring. You enter your target terms and the tool checks how those terms appear inside AI answers across ChatGPT, Gemini, and Perplexity. The unified LLMrefs Score (LS) gives you a single health indicator.

Where it works well: Setup takes minutes. No prompt libraries, no API configurations. The weekly visibility reports with trend graphs and competitor comparisons are clean and clear.

Where it falls short: Engine coverage is narrower, limited to ChatGPT, Gemini, and Perplexity. The default weekly refresh cadence means short-term fluctuations go unnoticed. Because it abstracts away from prompt-level tracking, it tells you that you were mentioned but not always why, or how to fix gaps.

Versus Geneo: LLMrefs is simpler and cheaper, making it a solid on-ramp for teams testing whether AI visibility matters before committing to a larger stack.

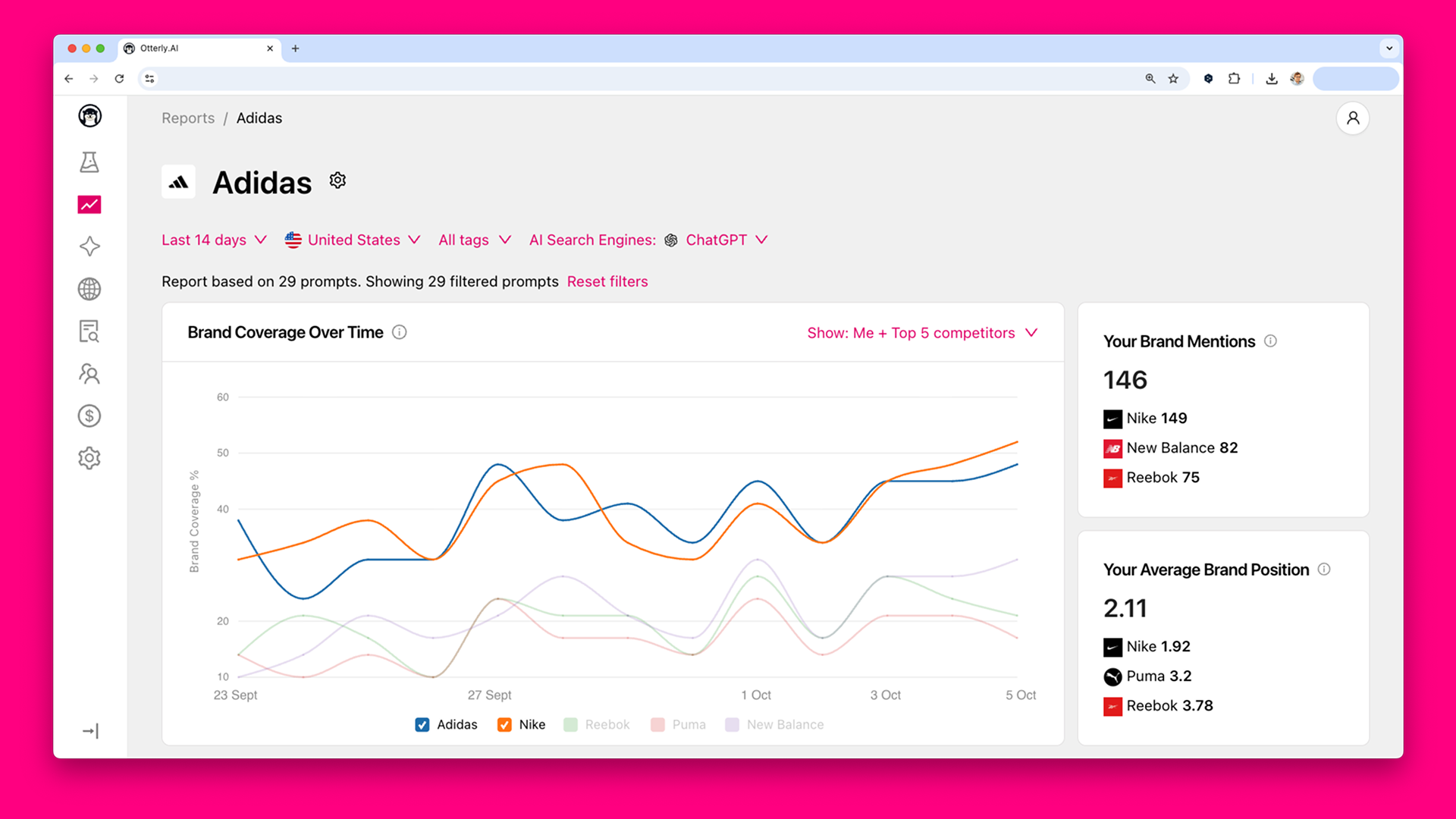

7. Otterly.ai: best for brand tone and sentiment tracking

Otterly.ai tracks not just whether AI models mention you, but how they describe you. The Brand Visibility Index measures tone and positioning across ChatGPT, Perplexity, Gemini, AI Overviews, AI Mode, and Copilot.

Where it works well: The built-in GEO audit analyzes 25+ on-page and structural factors to show why your content is or is not being cited. The Semrush integration connects familiar SEO workflows with AI visibility tracking.

Where it falls short: Otterly is still early in its lifecycle. Public case studies are scarce. The Lite plan ($29/month) includes only 10 prompts, which most teams will outgrow quickly. Advanced optimization workflows like automated content improvement are still developing.

Versus Geneo: Otterly focuses more on brand representation and sentiment than raw visibility counts. It is a stronger fit for PR and comms teams than for pure SEO operators.

How to choose the right Geneo alternative

The right tool depends on what you need next.

If you need to prove that AI visibility drives revenue, not just mentions, Analyze AI is the clear choice. It is the only platform on this list that connects AI visibility to traffic, conversions, and pipeline. And it is the only one with a full content creation engine and a programmable Agent Builder that can automate your entire marketing ops layer.

If you need cross-engine evidence for client reporting, Peec AI packs the strongest export and evidence trail for agencies.

If you are an SEO team taking your first step into AI monitoring, SE Ranking’s add-on lets you start without a new tool or login.

If you want to test whether AI visibility matters before investing, LLMrefs gives you a clean, affordable baseline.

Whatever you choose, remember that SEO is not dead. AI search is an additional organic channel. The brands that win are the ones that compound what works across both channels, not the ones that panic and pivot.

If you want to see how your brand shows up across AI engines right now, try Analyze AI’s free AI Visibility Checker or explore pricing to get started.

Ernest Ibrahim