
Accessibility often looks “fine” right up until a user tries to act — and your site quietly stops them.

- A checkout button passes design reviews but doesn’t exist for screen-reader users.
- A visually clean page traps keyboard-only visitors because focus breaks mid-flow.
- A small update removes ARIA roles and makes sections unreadable without anyone noticing.

None of this appears in analytics as an “accessibility error.” It shows up as drop-offs, abandoned flows, and frustrated users who never explain what went wrong.

We wrote this article to close that gap. It breaks down what accessibility actually means, how ADA and WCAG fit together, and how to check your site step by step using manual reviews, the right tools, real user testing, and ongoing monitoring — not one-off scans.


What website accessibility actually means (beyond checklists and code fixes)

Accessibility isn’t about passing automated scans or adding a few ARIA tags. At its core, it’s about inclusive use — making sure every person, regardless of ability, can understand, navigate, and act on your site without unnecessary friction. WCAG is the framework we measure against, but real accessibility is the lived experience of your users. It’s how your content behaves when someone relies on a screen reader, navigates only with a keyboard, uses voice control, or processes information differently.

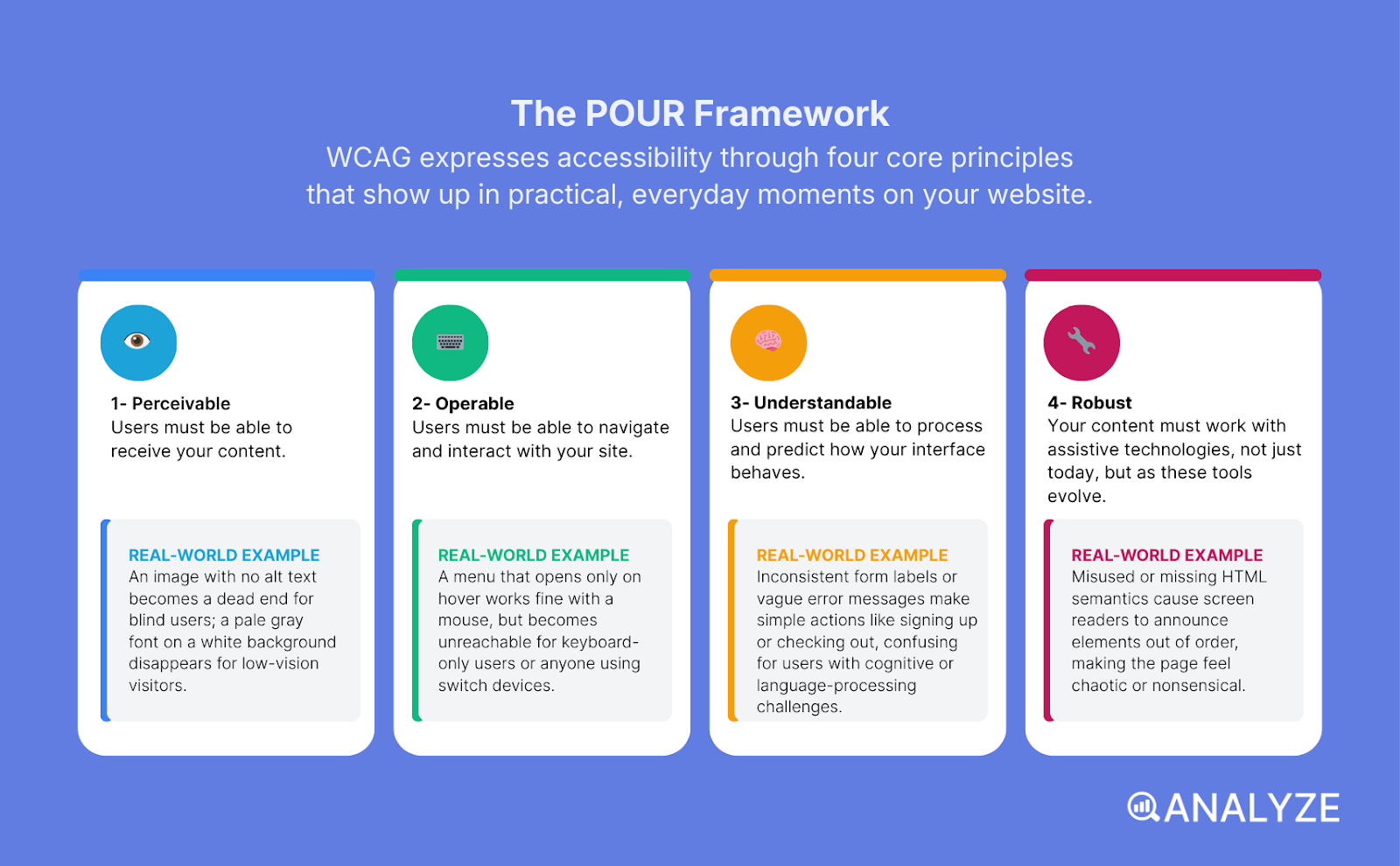

WCAG expresses this through four principles — POUR — and each one shows up in very practical, everyday moments:

- Perceivable: Users must be able to receive your content.

  Example: An image with no alt text becomes a dead end for blind users; a pale gray font on a white background disappears for low-vision visitors.

- Operable: Users must be able to navigate and interact with your site.

  Example: A menu that opens only on hover works fine with a mouse, but becomes unreachable for keyboard-only users or anyone using switch devices.

- Understandable: Users must be able to process and predict how your interface behaves.

  Example: Inconsistent form labels or vague error messages make simple actions, like signing up or checking out, confusing for users with cognitive or language-processing challenges.

- Robust: Your content must work with assistive technologies, not just today but as these tools evolve.

  Example: Misused or missing HTML semantics cause screen readers to announce elements out of order, making the page feel chaotic or nonsensical.

When you see accessibility through this lens, it stops being a technical task and becomes a user-experience commitment. It’s not about checking boxes — it’s about ensuring every person can access the same information, take the same actions, and experience your site with the same confidence as everyone else.

The different types of accessibility testing and why each one matters

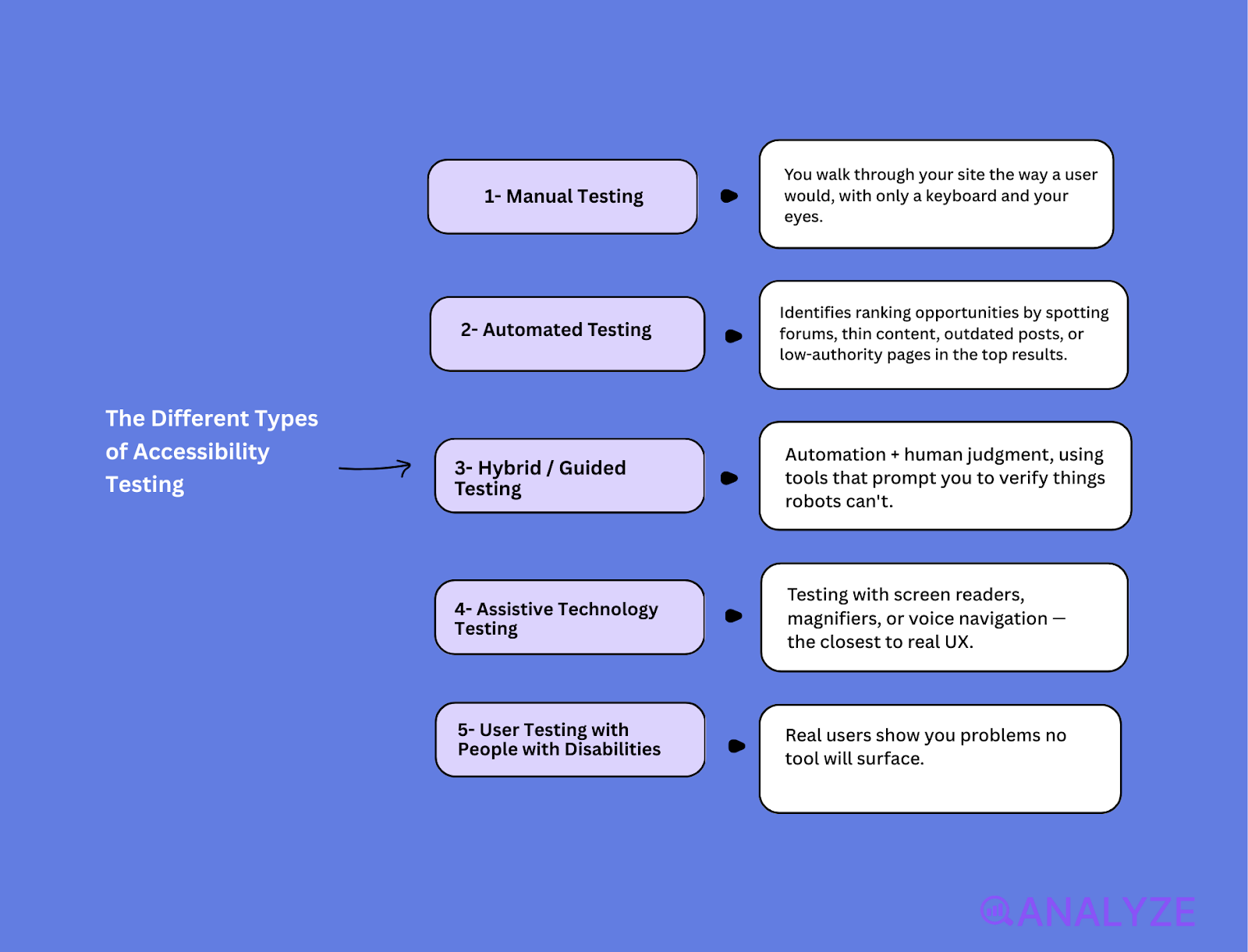

No single test catches every accessibility issue. Each method reveals a different kind of barrier, which is why relying only on automated scans leaves huge gaps in user experience.

Manual testing

You walk through your site the way a user would — with only a keyboard and your eyes.

What it catches: keyboard traps, broken focus order, missing labels, inconsistent interactions.

Example: A modal that looks perfect visually but lets keyboard focus slip behind it.

Automated testing

Fast scans that flag obvious WCAG violations at scale.

What it catches: missing alt text, contrast issues, incorrect ARIA roles, duplicate IDs.

Example: A sitewide audit revealing hundreds of unlabeled images in minutes.

Hybrid / guided testing

Automation + human judgment, using tools that prompt you to verify things robots can’t.

What it catches: unclear labels, alt text that’s technically present but meaningless.

Example: A scanner can’t tell if “image123.jpg” is a useful description — a human can.
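To make the hybrid idea concrete, here is a minimal Python sketch of the automated half: it flags alt text that is present but probably meaningless, so a person can review it. The patterns and the name `needs_human_review` are illustrative assumptions, not rules from WCAG or from any particular tool.

```python
import re

# Assumed heuristics: patterns that usually signal a placeholder, not a description.
FILENAME_RE = re.compile(r"\.(png|jpe?g|gif|svg|webp)$", re.IGNORECASE)
GENERIC_WORDS = {"image", "photo", "picture", "graphic", "icon", "img"}


def needs_human_review(alt: str) -> bool:
    """Return True when alt text exists but is probably not a real description."""
    text = alt.strip()
    if not text:
        # Empty alt is valid for decorative images, so it is not flagged here.
        return False
    if FILENAME_RE.search(text):
        return True  # looks like a file name, e.g. "image123.jpg"
    if text.lower() in GENERIC_WORDS:
        return True  # a single generic word says nothing about the content
    if re.fullmatch(r"[A-Za-z_\-]*\d+", text):
        return True  # camera-style identifiers such as "IMG_0042"
    return False
```

The function only queues candidates. Deciding whether a description is actually useful stays with a human reviewer.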

Assistive technology testing

Testing with screen readers, magnifiers, or voice navigation — the closest to real UX.

What it catches: reading order issues, missing announcements, misinterpreted roles.

Example: Automated tools miss logical reading order mistakes that break screen-reader flow.

User testing with people with disabilities

Real users show you problems no tool will surface.

What it catches: cognitive load, unclear pathways, friction that isn’t “technically” an error.

Example: A blind user explaining that product cards read in the wrong sequence despite passing WCAG.

Summary table: What each testing type reveals

| Testing type | What it catches best | What automated tools miss |
| --- | --- | --- |
| Manual testing | Keyboard traps, focus issues, interaction problems | Behavior that looks fine in code but breaks usability |
| Automated testing | Scalable WCAG violations, missing attributes | Meaning, intent, reading order, real UX |
| Hybrid testing | Context errors (labels, alt text quality) | Whether descriptions or roles make sense to humans |
| Assistive tech testing | Screen-reader flow, ARIA interpretation | True user experience across assistive tools |
| User testing | Cognitive friction, confusion, unexpected barriers | Lived-experience issues tools can’t model |

Why accessibility must be a priority for every organization in 2026

Accessibility used to be framed as a compliance task. In 2026, it’s a business advantage — and ignoring it directly impacts revenue, visibility, and reputation. The shift isn’t theoretical; it’s happening because the way people use the web, and the way regulators enforce standards, has changed.

Legally, the pressure is unmistakable. The ADA continues to drive lawsuits in the U.S., and the European Accessibility Act (EAA) expands requirements across the EU. Even small teams now face expectations once reserved for enterprise brands. Regulators assume digital access is a basic right, and courts increasingly treat inaccessible sites the same way they treat physical barriers.

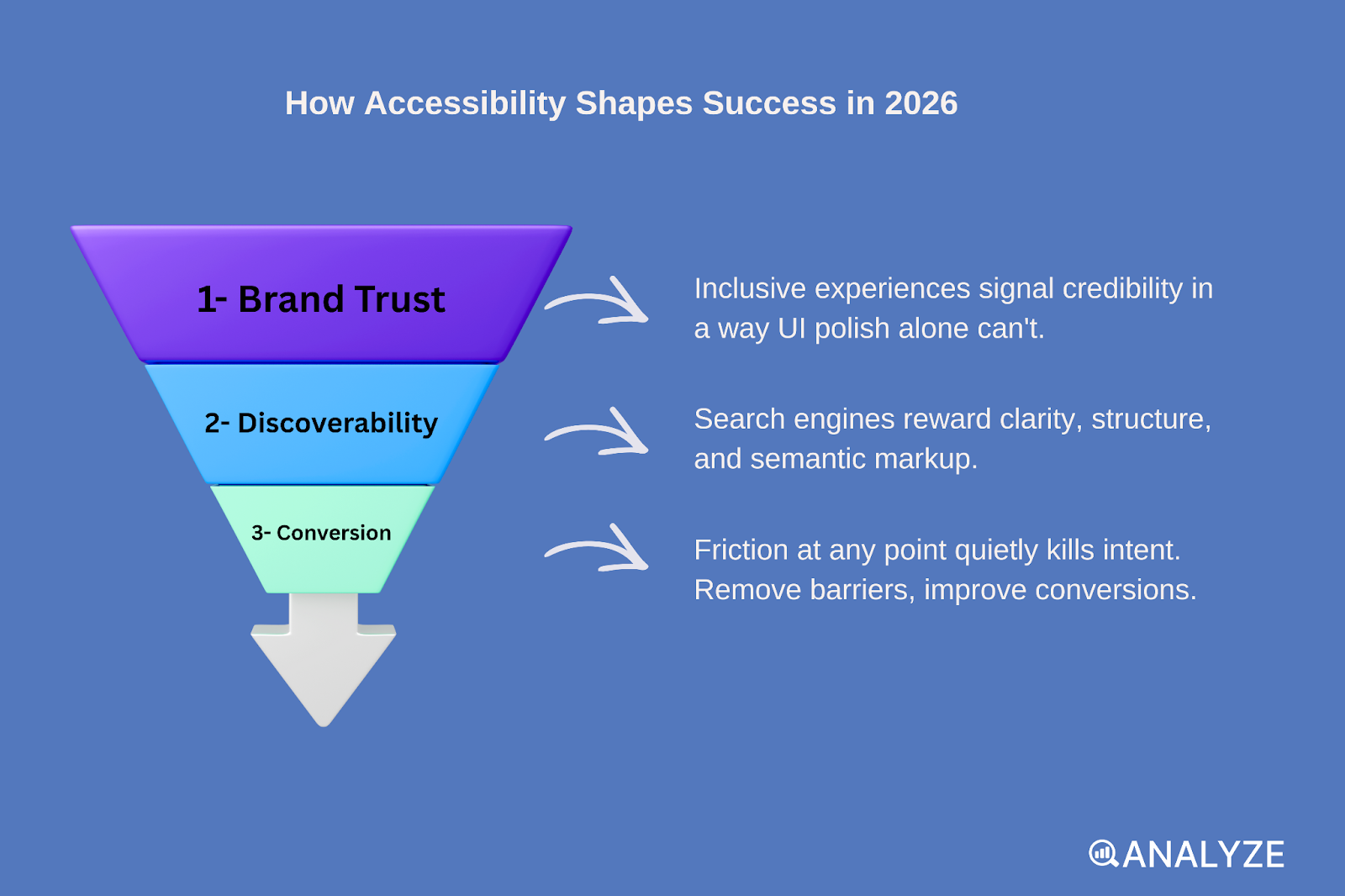

But the bigger shift is user expectation. People now move between devices, screen sizes, and assistive tools without thinking about it. If a website breaks on one of those paths, users simply leave. Accessibility has become part of usability:

- It shapes conversion, because friction at any point in the flow quietly kills intent.
- It shapes discoverability, because search engines reward clarity, structure, and semantic markup.
- It shapes brand trust, because inclusive experiences signal credibility in a way UI polish alone can’t.

In 2026, accessibility isn’t just about avoiding penalties — it’s about building websites people can actually use, search engines can properly understand, and brands can stand behind confidently.

How to check website accessibility step by step (our practical workflow)

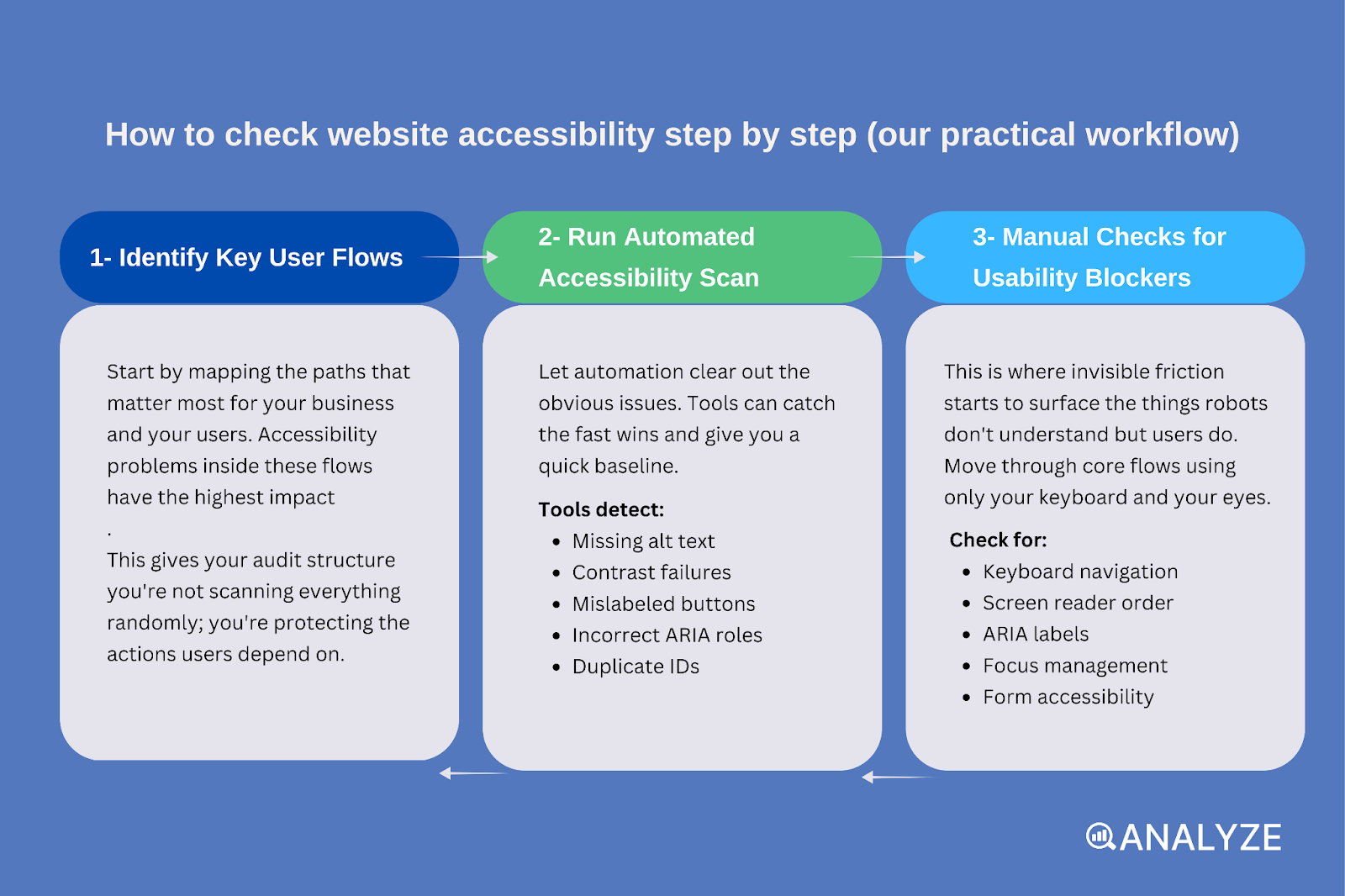

A proper accessibility check isn’t a scavenger hunt for errors — it’s a sequence. Each step builds on the one before it, moving from broad scans to lived experience. When teams follow this order, they uncover the issues that actually affect users, not just the ones tools know how to detect.

Identify key user flows

Start by mapping the paths that matter most for your business and your users. Accessibility problems inside these flows have the highest impact.

Think: sign-ups, checkouts, contact forms, booking flows, dashboards, onboarding screens.

This gives your audit structure — you’re not scanning everything randomly; you’re protecting the actions users depend on.

Run an automated accessibility scan

Next, let automation clear out the obvious issues. Tools like Lighthouse, WAVE, axe, or Accessibility Insights can catch the fast wins: missing alt text, contrast failures, mislabeled buttons, incorrect ARIA roles, duplicate IDs.

You get a quick baseline and eliminate the “low-hanging blockers” before diving deeper.

But remember: automation catches patterns, not experience.
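To show the kind of pattern matching an automated scan performs, here is a minimal sketch using only Python’s standard-library `html.parser`. It checks two of the items listed above: images with no `alt` attribute and duplicate `id` values. The `BasicScanner` class and `scan` function are illustrative names. Real tools such as axe or WAVE run many more rules against the rendered page.

```python
from collections import Counter
from html.parser import HTMLParser


class BasicScanner(HTMLParser):
    """Collects images with no alt attribute and counts every id value."""

    def __init__(self):
        super().__init__()
        self.images_missing_alt = []
        self.id_counts = Counter()

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        # alt="" is allowed (decorative image); only a missing attribute is flagged.
        if tag == "img" and "alt" not in attr_map:
            self.images_missing_alt.append(attr_map.get("src") or "(no src)")
        if attr_map.get("id"):
            self.id_counts[attr_map["id"]] += 1


def scan(html: str) -> dict:
    scanner = BasicScanner()
    scanner.feed(html)
    scanner.close()
    duplicates = sorted(i for i, n in scanner.id_counts.items() if n > 1)
    return {
        "images_missing_alt": scanner.images_missing_alt,
        "duplicate_ids": duplicates,
    }
```

Note what the sketch cannot do: it has no way to tell whether an existing `alt` value is helpful, which is exactly the gap the manual steps cover.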

Perform manual checks for usability blockers

This is where invisible friction starts to surface — the things robots don’t understand but users do. Move through core flows using only your keyboard and your eyes.

Check for:

- Keyboard navigation: Can you reach every interactive element with Tab and Shift+Tab, and activate it with Enter or Space?
- Screen reader order: Does the page read in a logical sequence, or does it jump around?
- ARIA labels: Do icons, buttons, and inputs actually announce what they do?
- Focus management: Does focus move predictably through modals, drop-downs, and form steps?
- Form accessibility: Are labels clear? Do error messages announce themselves? Can users recover from mistakes?

If something feels off or forces you to “think around” a flaw, it’s an accessibility issue.
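Part of the form check can be pre-screened before you sit down with the keyboard. The sketch below, again using only the standard library, lists form controls that have no programmatic name: no `<label for>`, no wrapping `<label>`, and no `aria-label` or `aria-labelledby`. The names `LabelChecker` and `unlabeled_controls` are illustrative, and the check is a rough assumption-laden filter: it does not verify that an `aria-labelledby` target exists or that a label’s wording is clear.

```python
from html.parser import HTMLParser


class LabelChecker(HTMLParser):
    LABELLABLE = {"input", "select", "textarea"}
    SKIP_TYPES = {"hidden", "submit", "button", "reset", "image"}

    def __init__(self):
        super().__init__()
        self.label_targets = set()  # ids referenced by <label for="...">
        self.label_depth = 0        # greater than 0 while inside a <label>
        self.controls = []          # (description, id, already_named)

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "label":
            self.label_depth += 1
            if a.get("for"):
                self.label_targets.add(a["for"])
        elif tag in self.LABELLABLE:
            if (a.get("type") or "text").lower() in self.SKIP_TYPES:
                return
            named = bool(a.get("aria-label") or a.get("aria-labelledby"))
            named = named or self.label_depth > 0
            description = a.get("name") or a.get("id") or tag
            self.controls.append((description, a.get("id"), named))

    def handle_endtag(self, tag):
        if tag == "label" and self.label_depth:
            self.label_depth -= 1


def unlabeled_controls(html: str) -> list:
    checker = LabelChecker()
    checker.feed(html)
    checker.close()
    # Resolved after parsing, so a <label for> that follows its input still counts.
    return [
        desc
        for desc, control_id, named in checker.controls
        if not named and control_id not in checker.label_targets
    ]
```

A field that relies only on `placeholder` text shows up in the result, which matches what a screen-reader user would experience: an input with nothing announced.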

Test with assistive technologies

Automated scans and manual checks show you symptoms — assistive technology shows you the experience.

Run core flows using:

- JAWS (Windows screen reader)
- NVDA (free Windows screen reader)
- VoiceOver (macOS and iOS)
- Zoom/magnification tools
- Speech navigation tools

Within minutes, you’ll uncover issues that never show up in reports: wrong announcements, cut-off content, mislabeled controls, inconsistent reading order.

Validate with real users where possible

Nothing replaces lived experience. A blind user may navigate with shortcuts you never considered. A neurodivergent user may struggle with instructions that seem “clear” to your team. A mobility-impaired user may reveal timing issues or unreachable controls.

This step transforms your audit from “technically correct” to actually usable.

Prioritize fixes and create an accessibility improvement loop

Accessibility isn’t a one-time project — it’s maintenance.

Once you’ve identified issues:

- Sort them by user impact, not by how easy they are to fix.
- Assign ownership across design, engineering, and content teams.
- Re-test continuously, especially whenever you ship new features or redesign components.

Recurring scans + periodic manual checks + occasional assistive tech/user testing create an ongoing loop that keeps accessibility from slipping as your site evolves.
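Sorting by user impact can be as simple as a sort key. The sketch below is one possible way to encode it; the `Issue` fields and the 1-5 scales are assumptions for illustration, not a standard scoring model.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    summary: str
    flow: str            # the user flow where the issue occurs
    blocks_task: bool    # True if some users cannot finish the flow at all
    users_affected: int  # rough estimate, 1 (few) to 5 (most)
    effort: int          # rough estimate, 1 (easy) to 5 (hard)


def prioritize(issues):
    """Blockers first, then wider reach; effort is only a tie-breaker."""
    return sorted(
        issues,
        key=lambda i: (not i.blocks_task, -i.users_affected, i.effort),
    )


backlog = [
    Issue("Footer link contrast is low", "all pages", False, 3, 1),
    Issue("Checkout button has no accessible name", "checkout", True, 4, 2),
    Issue("Modal traps keyboard focus", "sign-up", True, 4, 4),
]
ordered = [issue.summary for issue in prioritize(backlog)]
```

The easy contrast fix lands last even though it is the cheapest, because the two blockers stop people from completing a core flow.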

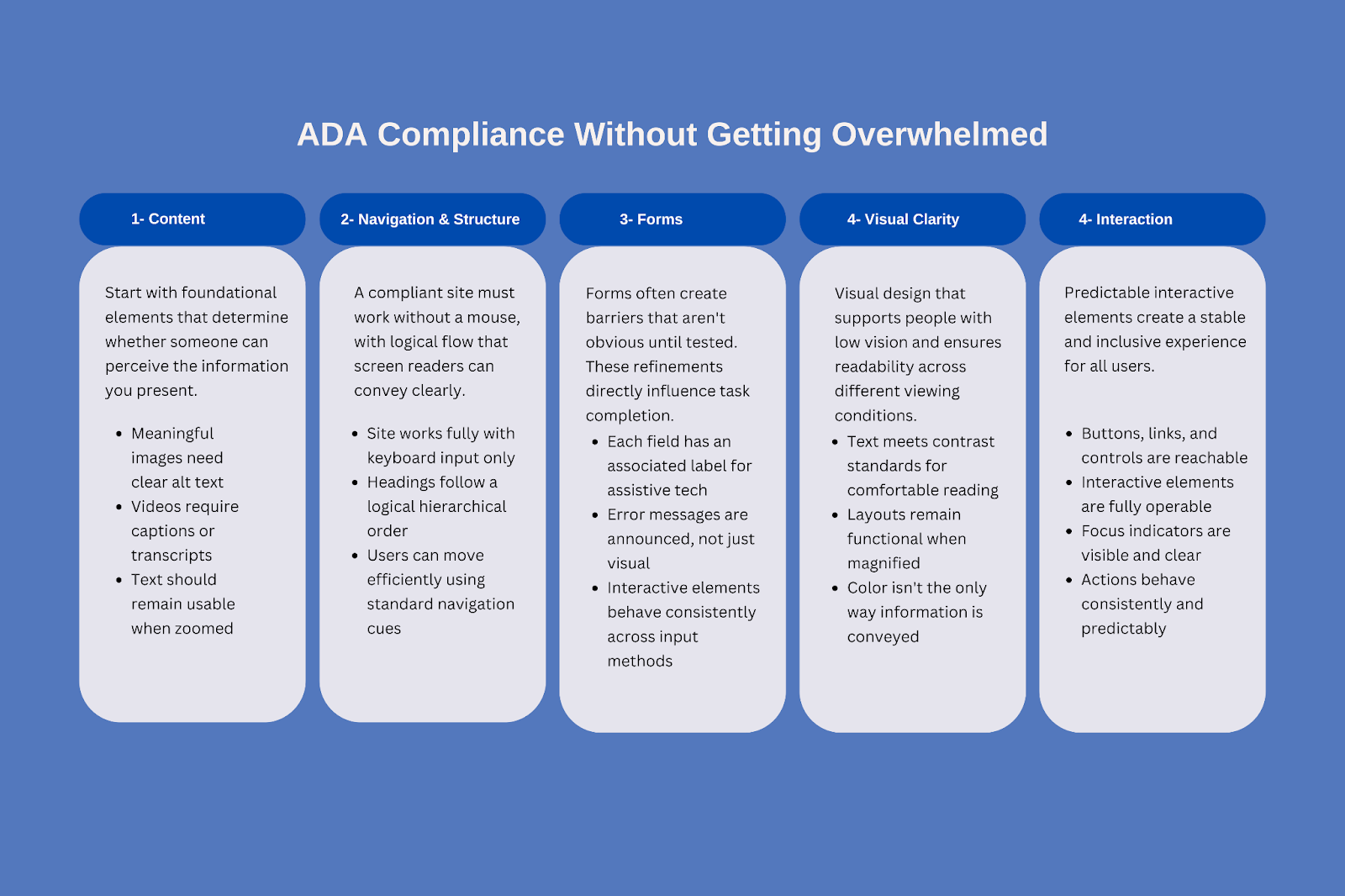

How to check ADA compliance without getting overwhelmed

ADA compliance becomes far less intimidating when you strip it down to its core purpose: making sure people with disabilities can use your website without unnecessary barriers. The statute itself doesn’t spell out technical rules for websites, which is why teams often feel lost. In practice, regulators and courts commonly reference the WCAG 2.1 and 2.2 guidelines, specifically Level AA, as the practical benchmark. In other words, WCAG explains how to make a site accessible, and meeting it is the most widely accepted way to show that you meet the ADA’s expectations.

The connection is simple: WCAG provides the technical framework for accessible design, and ADA is the legal expectation that websites meet that framework. Because WCAG evolves and builds on itself, aiming for 2.1 or 2.2 AA gives teams the clearest path to meeting modern accessibility requirements without trying to interpret legal text.

A practical way to approach ADA compliance is to focus on the areas that have the biggest impact on real users. Start with content: meaningful images need clear alt text, videos require captions or transcripts, and text should remain usable when zoomed. These are foundational elements that determine whether someone can perceive the information you present.

Navigation and structure come next. A compliant site must work without a mouse, relying fully on keyboard input; headings should follow a logical order so screen readers can convey the page clearly; and users should be able to move efficiently through content using standard navigation cues.
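Heading order is one of the structure checks that is easy to script. The following sketch collects heading levels with the standard-library parser and reports every place where a level is skipped, such as an `h2` followed directly by an `h4`. It assumes the page should start at `h1`, and the function name `skipped_heading_levels` is illustrative. Skipped levels are a widely used warning sign for poor structure, not an automatic WCAG failure.

```python
import re
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if re.fullmatch(r"h[1-6]", tag):
            self.levels.append(int(tag[1]))


def skipped_heading_levels(html: str) -> list:
    """Return (previous_level, level) pairs where the outline jumps ahead."""
    collector = HeadingCollector()
    collector.feed(html)
    collector.close()
    problems = []
    previous = 0  # assumption: the first heading on the page should be h1
    for level in collector.levels:
        if level > previous + 1:
            problems.append((previous, level))
        previous = level
    return problems
```

Moving back up the outline (for example from `h4` to `h2`) is fine and is not reported.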

Forms often create barriers that aren’t obvious until they’re tested. Each field needs an associated label that assistive technologies can read, error messages must be announced rather than simply displayed visually, and interactive elements should behave consistently across input methods. These refinements directly influence a user’s ability to complete essential tasks like sign-ups or checkouts.

Visual clarity matters just as much. Text must meet contrast standards so people with low vision can read comfortably, and layouts should remain functional when magnified. Combined with predictable interactive elements — buttons, links, and controls that are reachable and operable — these improvements create a more stable and inclusive experience.
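The contrast standard mentioned above is a concrete calculation. WCAG defines a contrast ratio from the relative luminance of the two colors, and Level AA asks for at least 4.5:1 for normal text and 3:1 for large text. Here is a minimal sketch of that formula; the function names are illustrative, and it only handles six-digit hex colors.

```python
def _linear(value: int) -> float:
    """Convert one 8-bit sRGB channel to its linear value (WCAG 2.x formula)."""
    c = value / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(foreground: str, background: str) -> float:
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    minimum = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= minimum
```

For example, black on white gives the maximum ratio of 21:1, while a pale gray such as `#aaaaaa` on white comes out near 2.3:1 and fails for body text.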

This approach works because it aligns cleanly with WCAG while remaining understandable to non-technical teams. Instead of diving into dozens of individual success criteria, you’re focusing on the categories that genuinely affect accessibility: content, navigation, forms, clarity, and interaction. When teams prioritize these, they not only move closer to ADA compliance but also deliver a site that feels more usable, more consistent, and more trustworthy for everyone.

Accessibility tools that genuinely help (what each one is best at and where each one falls short)

Accessibility tools are excellent at surfacing problems — but none of them understand intent. They can tell you something is broken, not whether it blocks a real user from completing a task. That’s why the best teams treat tools as accelerators, not decision-makers.

Below is how the most commonly used tools actually perform in real workflows.

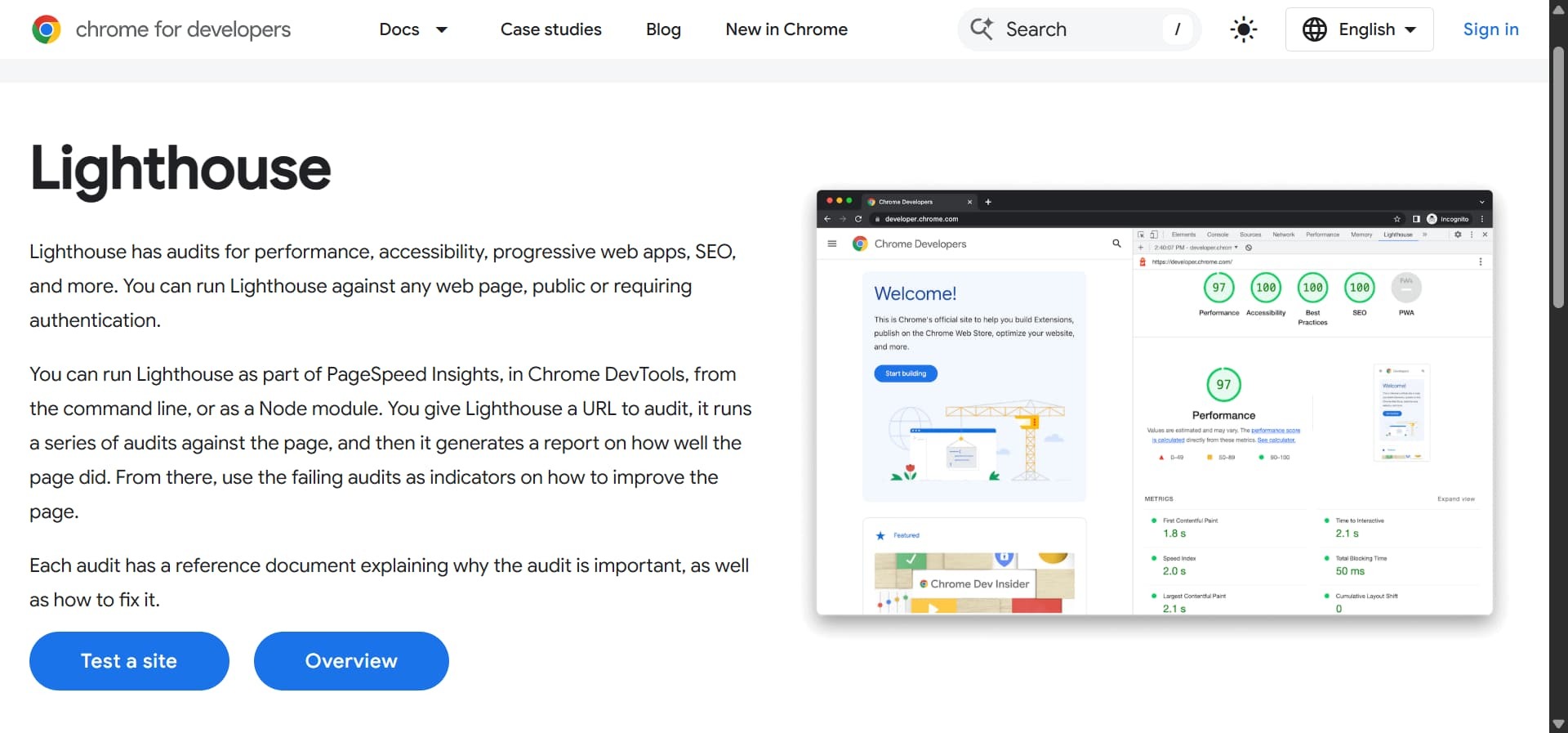

Lighthouse

Lighthouse is often the first accessibility tool teams encounter — mainly because it’s already built into Chrome and Edge. With a few clicks, it generates an accessibility score and flags common issues alongside performance and SEO checks. For developers and QA teams, it’s a fast way to spot obvious technical problems early.

Its strength is speed and convenience. You don’t need setup, accounts, or training to get value, and the recommendations link directly to documentation that explains what’s wrong and how to fix it.

The limitation is depth. Lighthouse only covers a subset of accessibility issues and focuses heavily on what can be detected automatically. It won’t tell you if your page feels usable, if the reading order makes sense, or if a keyboard user gets stuck halfway through a flow.

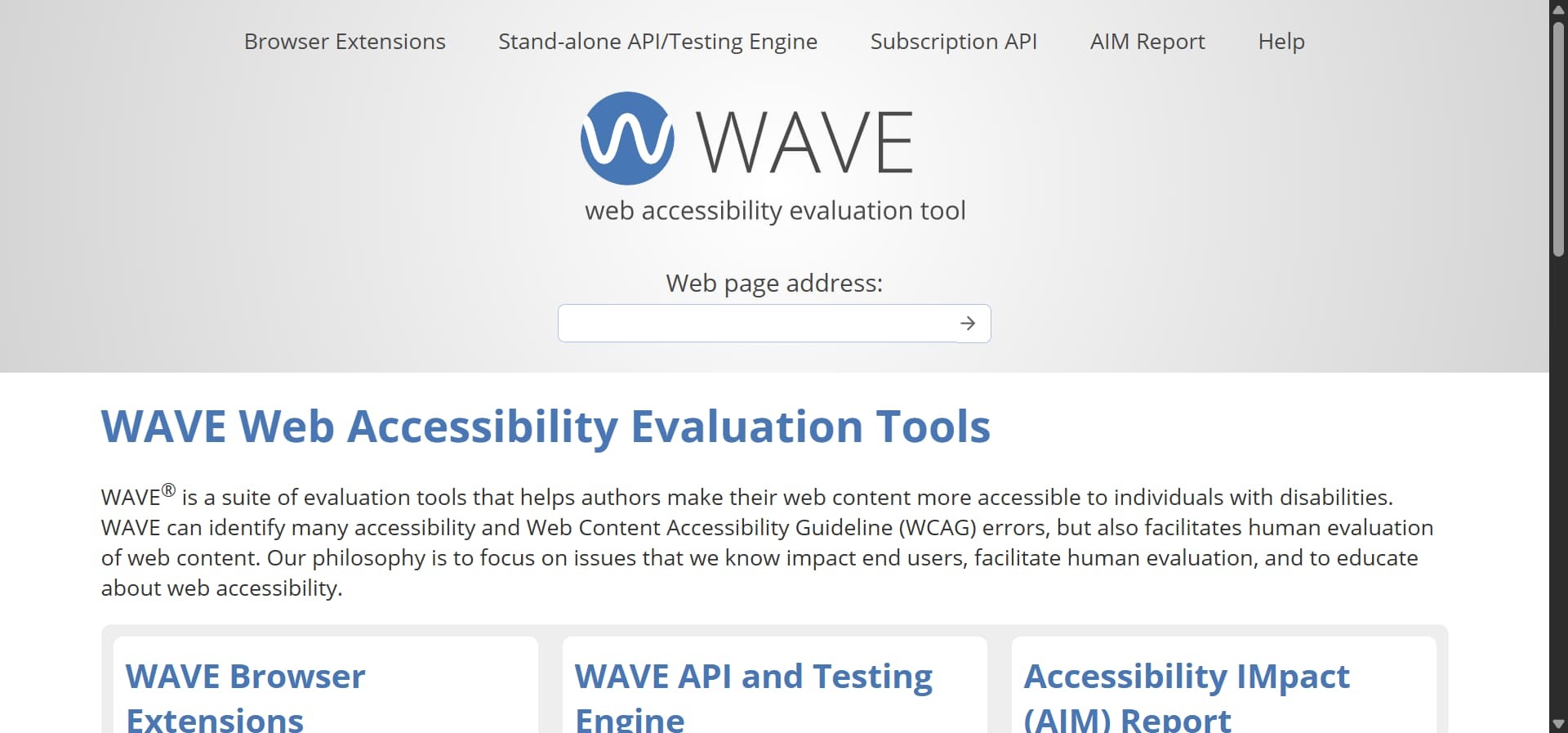

WAVE by WebAIM

WAVE approaches accessibility visually. Instead of a report, it overlays icons and warnings directly onto the page, making issues tangible and easier to understand — especially for non-technical teams. You see exactly where contrast breaks, alt text is missing, or structure is unclear.

This makes WAVE particularly useful for learning and collaboration. Designers, content teams, and stakeholders can point to problems without interpreting logs or code.

But WAVE is intentionally manual. It doesn’t scan entire sites or track progress over time. Each page must be checked individually, and someone still has to decide which issues matter most and why. It explains where problems exist — not how users experience them.

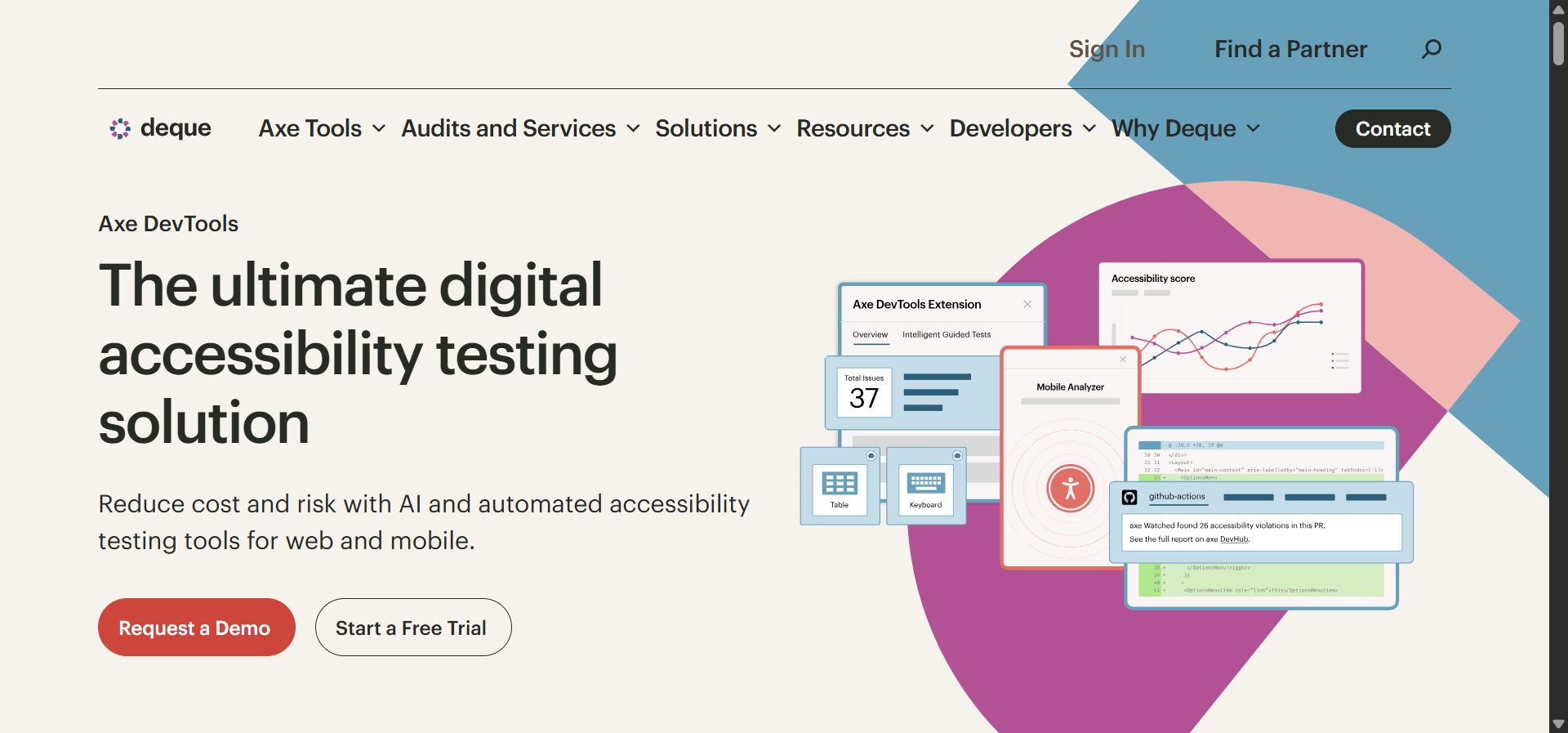

Axe DevTools

Axe DevTools is built for teams that want accessibility embedded into development workflows. It integrates with browser dev tools and CI/CD pipelines, helping teams catch regressions before code reaches production.

Its real value is prevention. When accessibility checks run alongside unit tests, issues stop being last-minute surprises and become part of standard quality control.
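One way to picture this prevention step is a small gate that reads a scan report and fails the build when serious problems appear. The sketch below assumes a report shaped like an axe-core results object, with a `violations` list whose entries carry an `id` and an `impact` of `minor`, `moderate`, `serious`, or `critical`. How the report is produced and saved is left out, and the function names are illustrative.

```python
import json

SEVERITY = {"minor": 1, "moderate": 2, "serious": 3, "critical": 4}


def blocking_violations(results: dict, threshold: str = "serious") -> list:
    """Return rule ids whose impact is at or above the threshold."""
    minimum = SEVERITY[threshold]
    return [
        violation["id"]
        for violation in results.get("violations", [])
        if SEVERITY.get(violation.get("impact"), 0) >= minimum
    ]


def exit_code(results: dict, threshold: str = "serious") -> int:
    """0 lets the pipeline continue; 1 fails the build."""
    return 1 if blocking_violations(results, threshold) else 0


# Assumed sample report, trimmed to the two fields this sketch reads.
sample = json.loads("""
{"violations": [
  {"id": "color-contrast", "impact": "serious"},
  {"id": "region", "impact": "moderate"},
  {"id": "image-alt", "impact": "critical"}
]}
""")
```

With the sample report, the gate blocks on `color-contrast` and `image-alt` and lets the moderate finding through, so the team can tighten the threshold gradually instead of failing every build on day one.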

The trade-off is accessibility maturity. Axe assumes technical comfort and familiarity with accessibility concepts. For non-technical teams, setup and interpretation can be intimidating. And like all automated tools, it still needs manual validation to confirm real usability.

Accessibility Insights

Accessibility Insights sits between automation and human review. It combines automated scans with guided workflows that walk testers through checks like keyboard navigation, contrast evaluation, and structure validation.

This guided approach lowers the barrier for teams that want to do more than scan but don’t yet have deep accessibility expertise. It explains why an issue matters — not just that it exists.

Its limitation is scale. Accessibility Insights works best at the page level and isn’t designed for large, enterprise-wide monitoring. Teams still need experience to interpret findings correctly and turn them into consistent fixes.

Multi-page scanning and monitoring platforms (WebAbility, WebYes, Pope Tech)

These platforms solve a different problem: visibility at scale. Instead of checking one page at a time, they scan entire sites, track recurring issues, and surface trends over time through dashboards and reports.

They’re especially useful for large sites where accessibility regressions happen quietly as content changes. Continuous monitoring helps teams stay aware of what’s drifting out of compliance.

What they don’t do is replace judgment. Large scans reveal patterns, not experience. They can tell you where issues cluster — but not which ones break critical user flows or confuse real users. Interpreting results still requires trained reviewers.

AI-powered accessibility widgets

Accessibility widgets promise fast results: install a script, add UI controls, and give users options like contrast toggles, font resizing, or simplified layouts. For some users, these features provide immediate relief.

Their appeal is speed and ease. No audits. No redesigns. Instant visual adjustments.

But widgets don’t guarantee WCAG or ADA compliance. They sit on top of existing problems instead of fixing them. Structural issues — broken navigation, incorrect semantics, poor reading order — remain untouched. Widgets improve comfort for some users, but they don’t ensure equal access.

The reality most teams discover

Accessibility tools are great at detecting symptoms — missing labels, contrast failures, invalid roles. What they can’t detect is intent: whether a label helps someone complete a task, whether navigation feels predictable, or whether content makes sense when read aloud.

That’s why the strongest accessibility programs combine tools with manual checks, assistive technology testing, and real user feedback. Automation accelerates discovery. Human insight determines impact.

How accessibility scan and monitoring platforms strengthen long-term compliance

Accessibility rarely breaks in one big moment — it erodes quietly as teams ship new pages, update components, and publish content at scale. That’s why one-time audits aren’t enough. Monitoring platforms add continuity, catching regressions the moment they appear and showing how accessibility health changes over time. Scanners surface individual issues, dashboards reveal patterns across pages, and alerting systems flag new problems before they reach users. For larger teams especially, trend monitoring turns accessibility from a reactive cleanup task into a controlled, ongoing process — one that stays aligned with real-world use as the site evolves.
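At its simplest, catching regressions means comparing the latest scan with the previous one. The sketch below treats each finding as a `(page, rule)` pair and reports what is new and what has been resolved. The data shape and the name `compare_scans` are assumptions for illustration and do not describe how any specific monitoring platform works.

```python
def compare_scans(previous, current) -> dict:
    """Each scan is an iterable of (page, rule) pairs from one crawl."""
    before, after = set(previous), set(current)
    return {
        "new": sorted(after - before),       # regressions to alert on
        "resolved": sorted(before - after),  # fixes that held
        "open_count": len(after),            # data point for a trend line
    }


last_week = [("/checkout", "label"), ("/pricing", "color-contrast")]
this_week = [("/checkout", "label"), ("/blog/launch", "image-alt")]
report = compare_scans(last_week, this_week)
```

Alerting on the `new` list rather than on the total keeps the signal focused on what changed since the last release.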

AI accessibility widgets: what they solve and what they can’t

AI accessibility widgets are often marketed as quick fixes, but their real value is more limited — and more specific. These tools enhance usability by giving users control over how they view content: adjusting contrast, resizing text, switching to dyslexia-friendly fonts, or enabling simplified layouts. For some users, these options make an immediate difference and reduce friction on the page.

Where confusion starts is compliance. Widgets don’t change the underlying structure of your website. They don’t fix broken navigation, incorrect semantics, missing labels, or poor reading order. Those issues remain in the code, even if the interface looks more adjustable on the surface.

That’s why accessibility overlays aren’t the same as accessible websites. Widgets can improve comfort and flexibility, but they don’t guarantee WCAG or ADA compliance. Used responsibly, they support users. Used as substitutes for real accessibility work, they create a false sense of coverage — and leave the hardest problems unsolved.

Address your web accessibility today with a balanced, realistic approach

The teams that make real progress with accessibility don’t rely on a single tool or a one-time audit. They combine automation for speed, manual reviews for judgment, assistive technology testing for accuracy, and monitoring for continuity. Each piece covers a blind spot the others can’t, and together they form a workflow that scales as the site evolves.

Accessibility becomes manageable when you treat it as an ongoing system, not a checklist to complete once. Start by auditing your most important user flows, fix the highest-impact blockers, and put lightweight monitoring in place to catch regressions as content changes. You don’t need perfection on day one — you need momentum and consistency.

The table below shows how each part of a balanced approach contributes to long-term accessibility:

| Accessibility approach | What it’s best at | What it can’t do alone |
| --- | --- | --- |
| Automated scans | Finding obvious, repeatable issues at scale | Understand user intent or real usability |
| Manual audits | Catching interaction and flow problems | Scale across large sites on their own |
| Assistive tech & user testing | Revealing real-world barriers | Run continuously without structure |
| Monitoring & reporting | Preventing regressions over time | Fix issues without human review |

When accessibility is treated as a workflow — not a compliance checkbox — it stops feeling overwhelming. It becomes a repeatable process that improves usability, reduces risk, and builds trust with every update you ship.

Accessibility ensures people can use your website. But in 2026, another question matters just as much: can AI engines understand, surface, and trust your content? As search behavior shifts toward LLMs and answer engines, visibility is no longer just about rankings — it’s about citations, prompt coverage, and how your brand appears inside AI-generated answers.

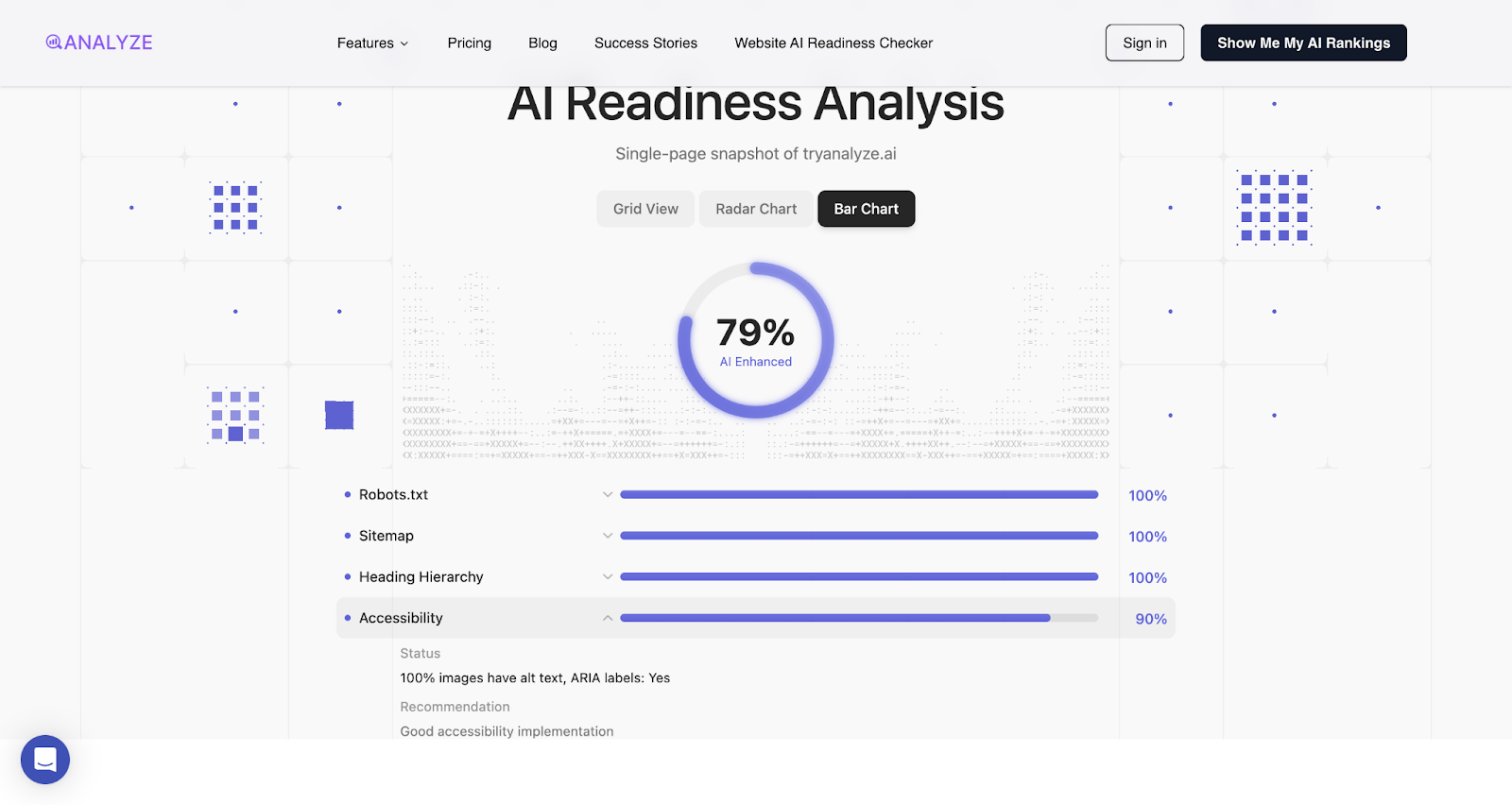

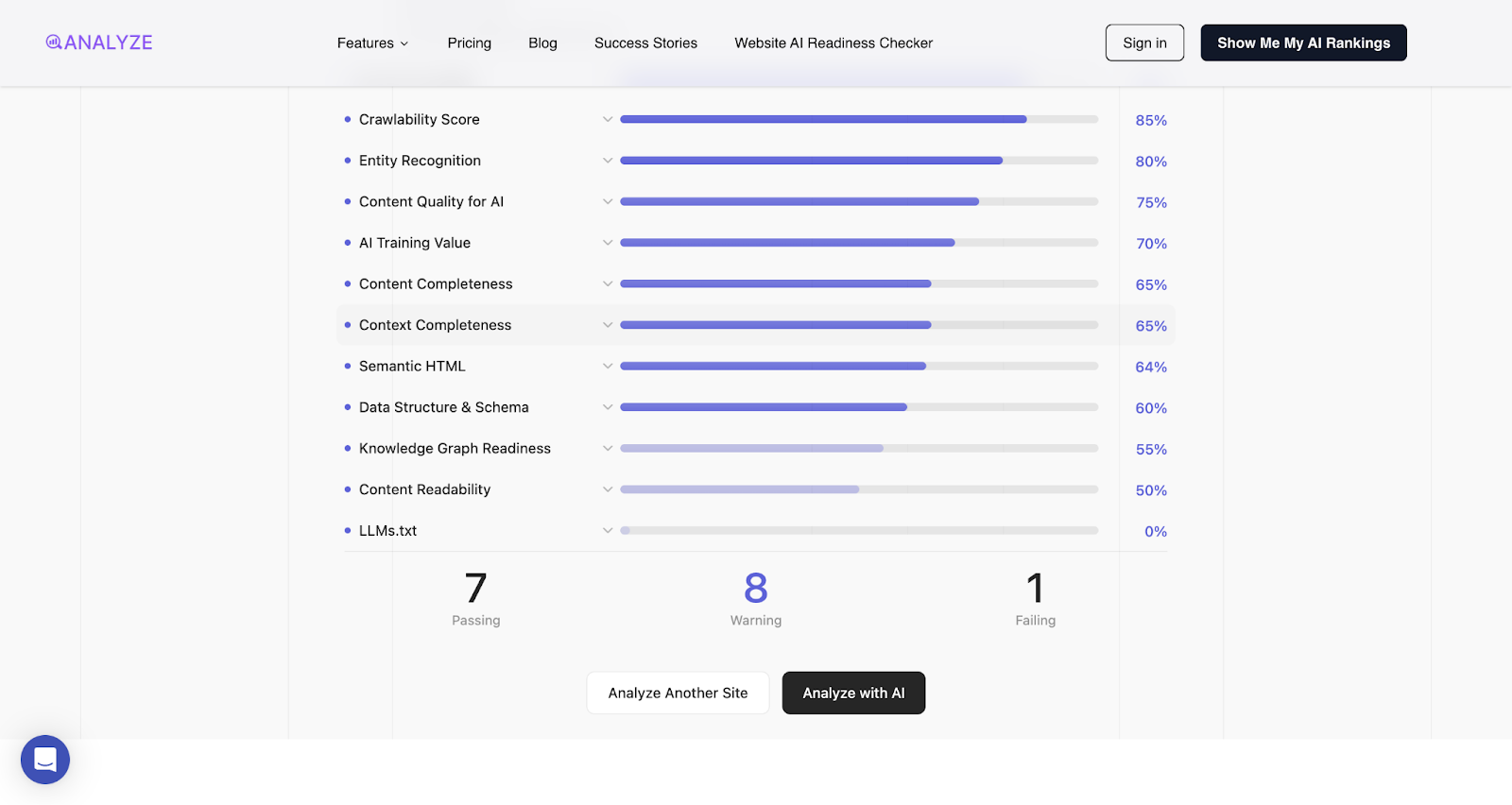

Analyze AI: Comprehensive website auditing for AI search, including accessibility assessment

Key Analyze AI features

-

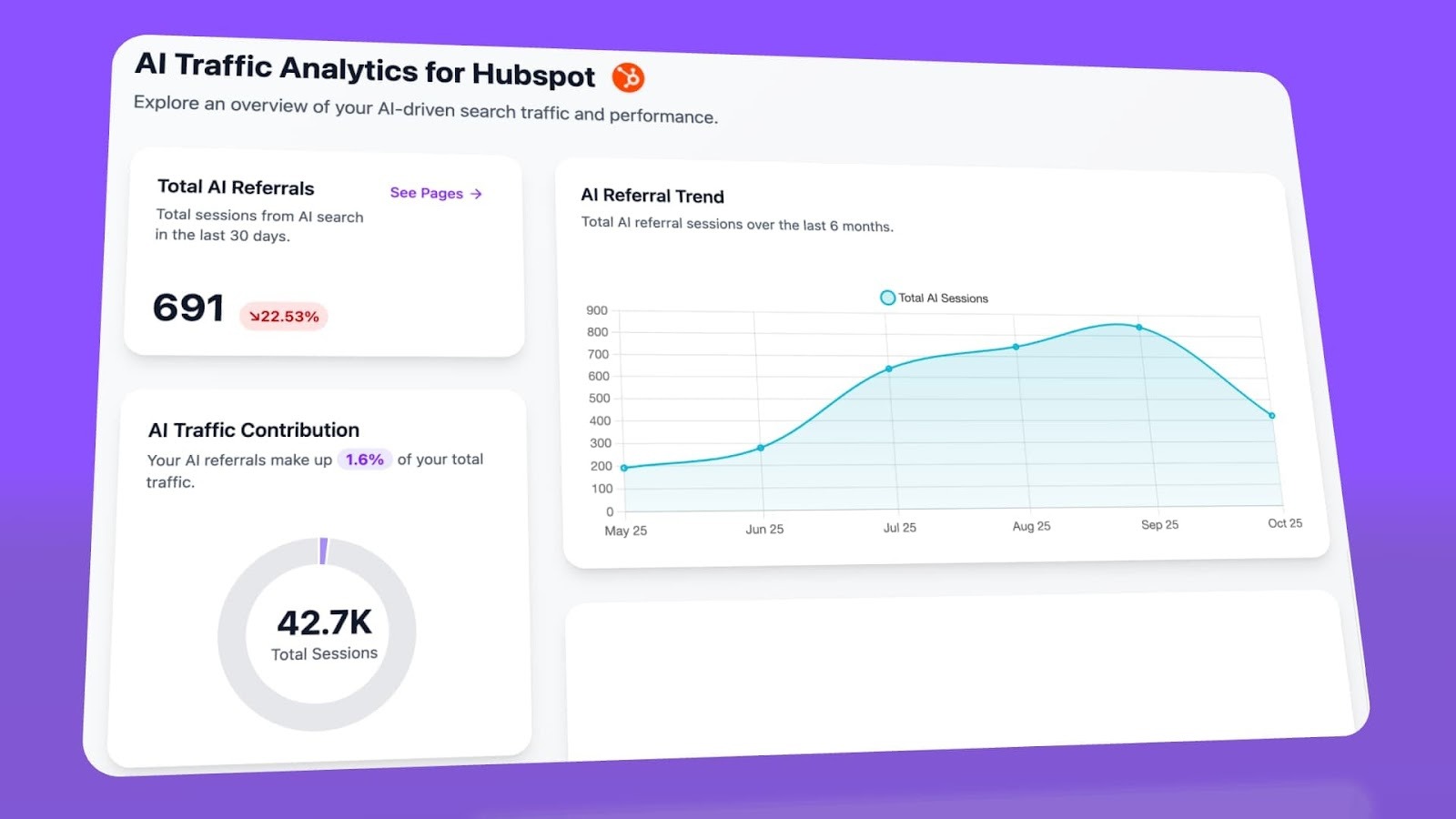

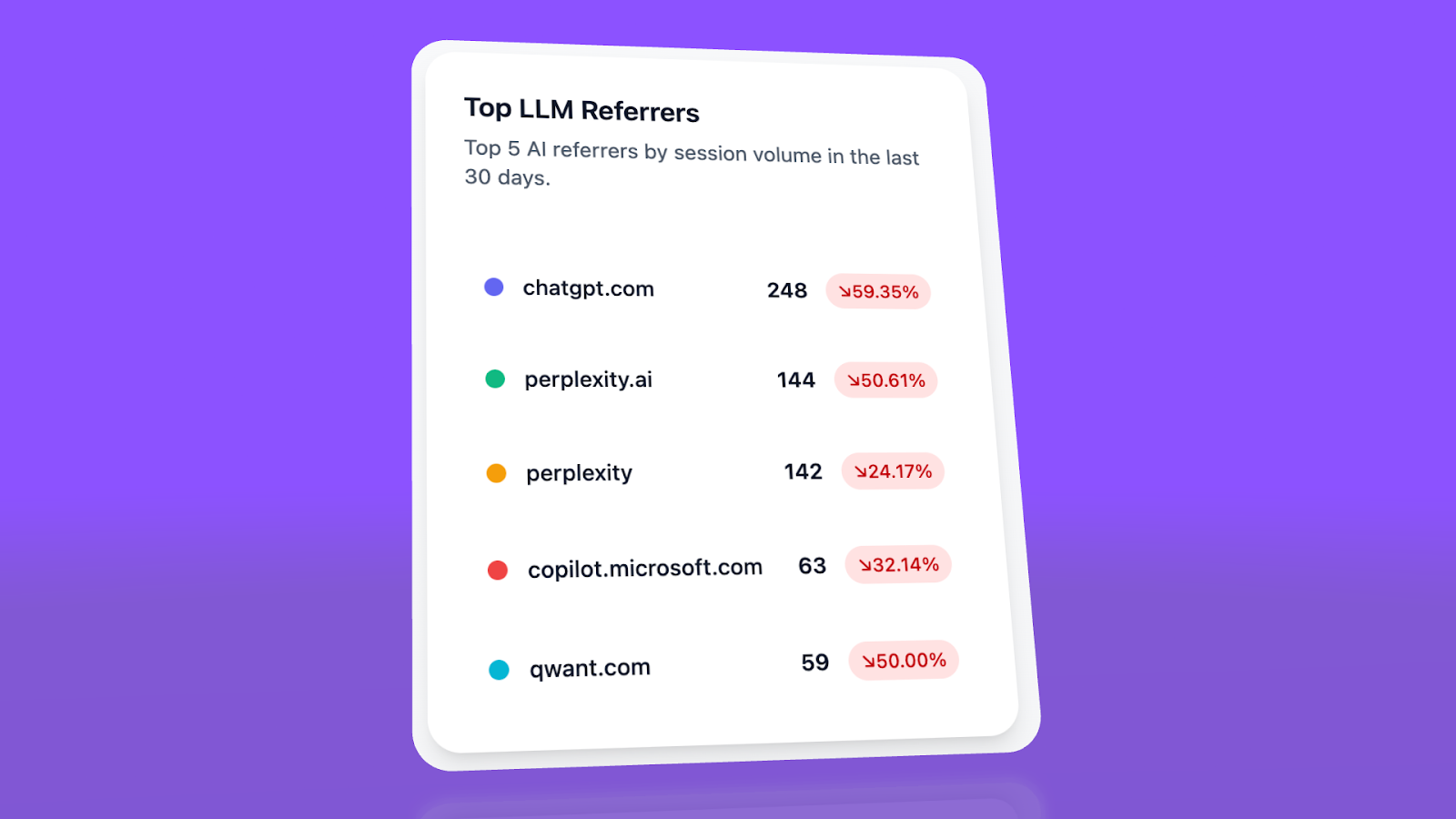

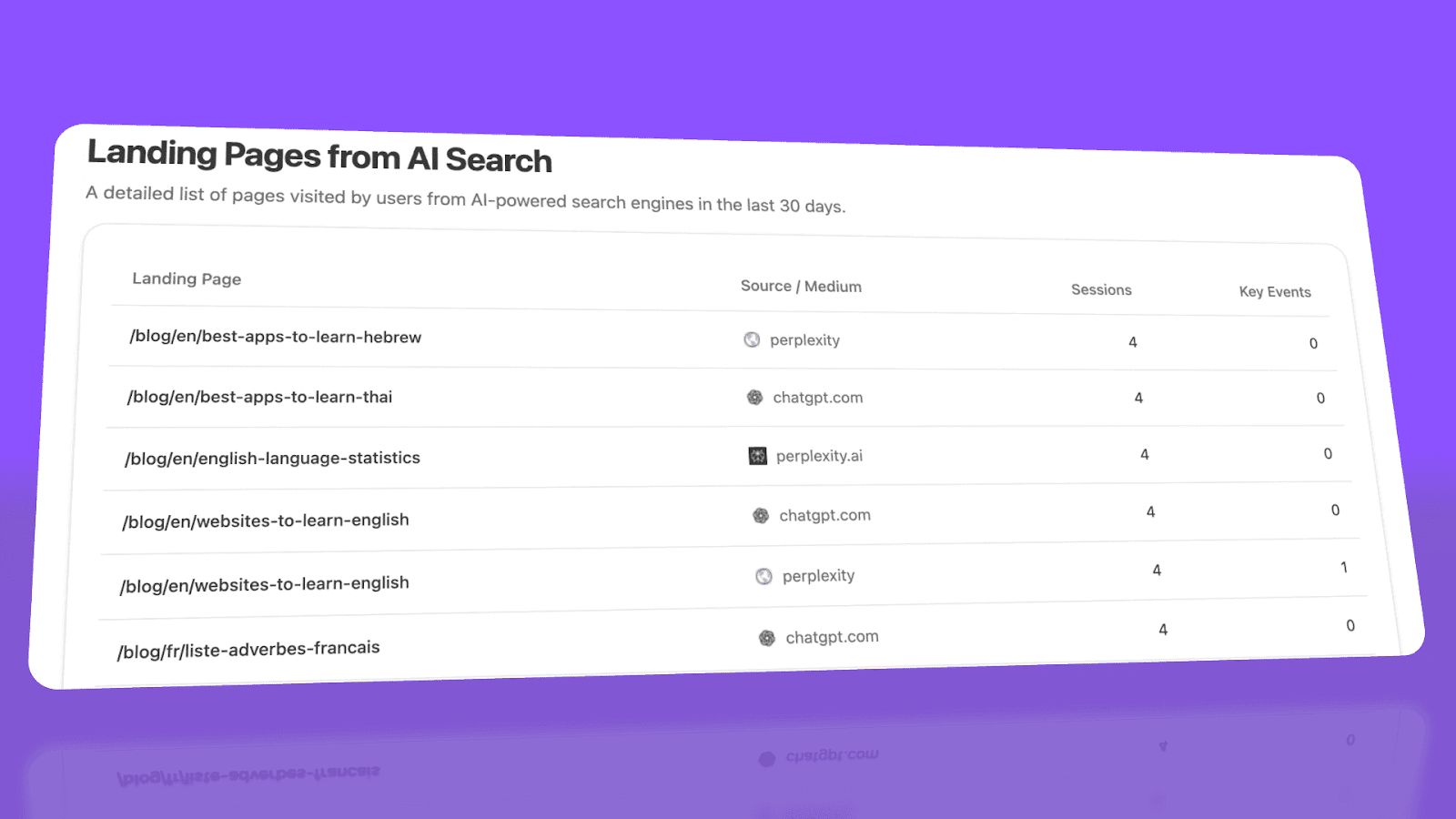

See actual AI referral traffic by engine and track trends that reveal where visibility grows and where it stalls.

-

See the pages that receive that traffic with the originating model, the landing path, and the conversions those visits drive.

-

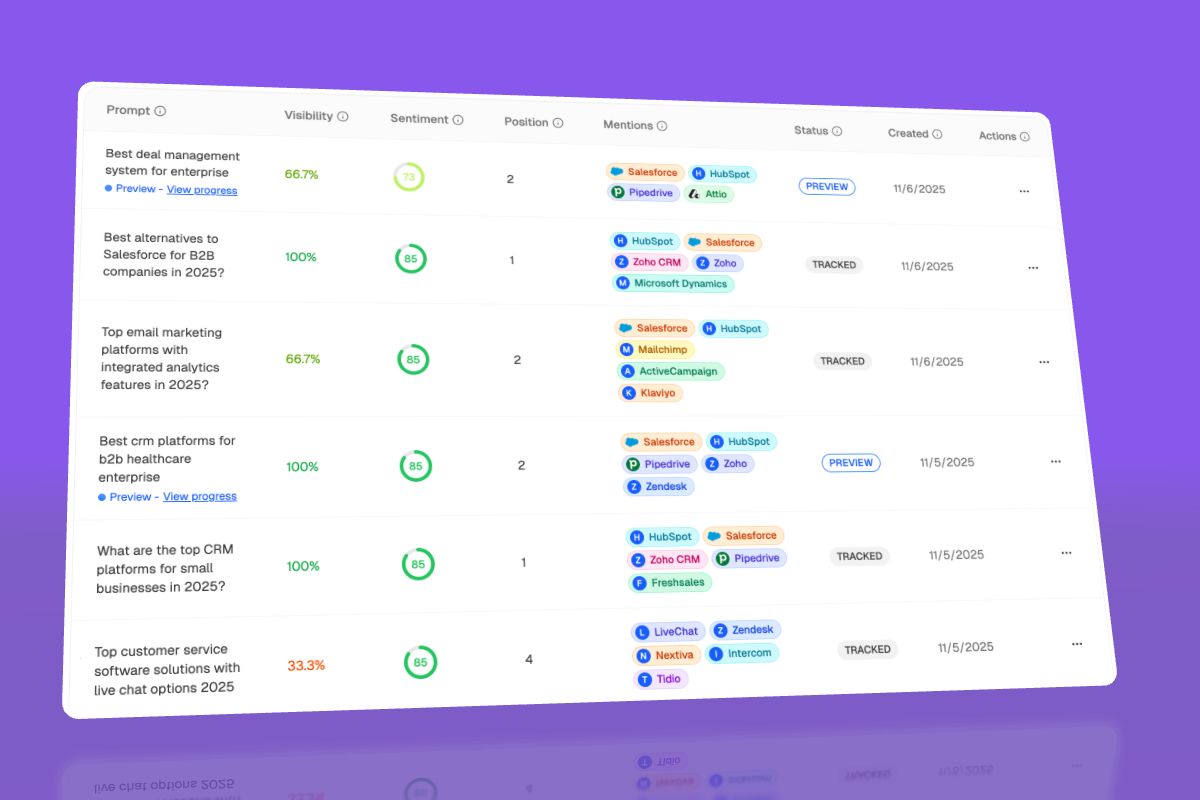

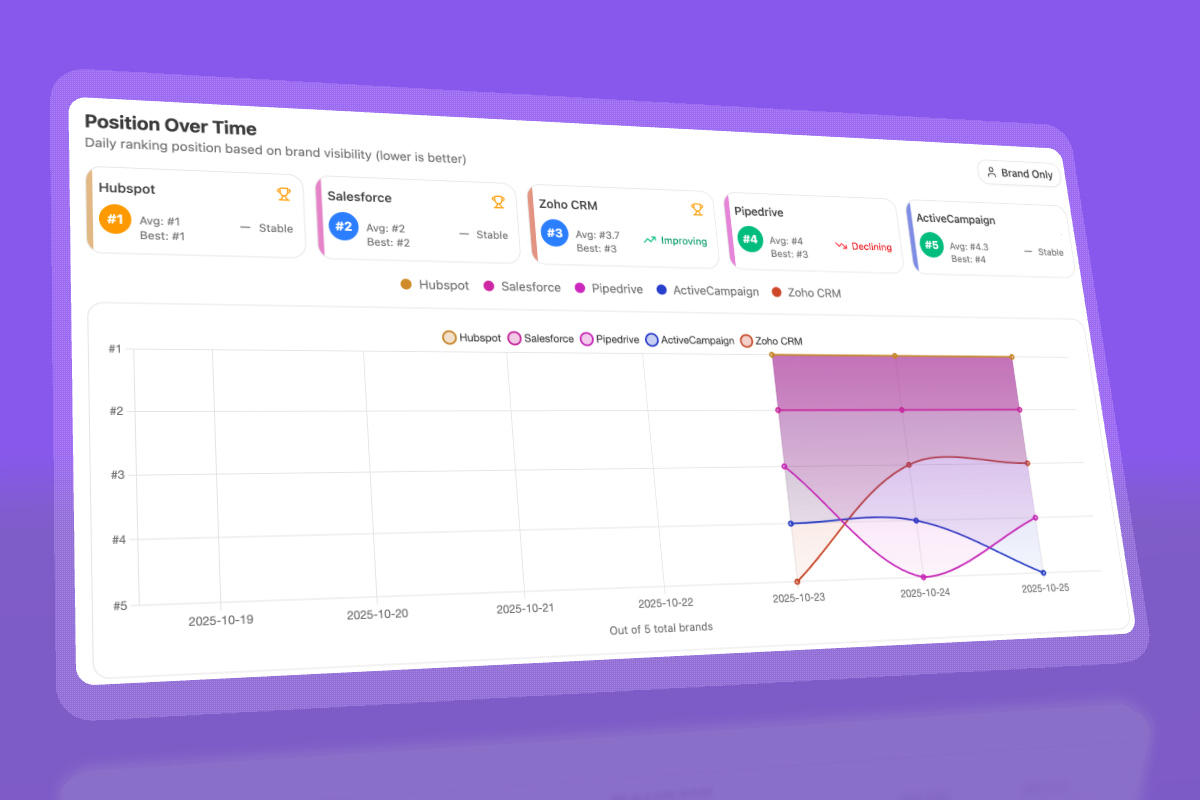

Track prompt-level visibility and sentiment across major LLMs to understand how models talk about your brand and competitors.

-

Audit model citations and sources to identify which domains shape answers and where your own coverage must improve.

-

Surface opportunities and competitive gaps that prioritize actions by potential impact, not vanity metrics.

Here is how Analyze AI works in more detail:

See actual traffic from AI engines, not just mentions

Analyze AI attributes every session from answer engines to its specific source—Perplexity, Claude, ChatGPT, Copilot, or Gemini. You see session volume by engine, trends over six months, and what percentage of your total traffic comes from AI referrers. When ChatGPT sends 248 sessions but Perplexity sends 142, you know exactly where to focus optimization work.
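To illustrate the general idea of attributing sessions to an answer engine, here is a minimal sketch that classifies referrer URLs by hostname. It is not a description of how Analyze AI works internally. The hostname-to-engine mapping is an assumption that would need to be checked against your own analytics data.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames; verify them against your own traffic logs.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
}


def engine_for(referrer: str):
    """Return the engine name for a referrer URL, or None if it is not listed."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, engine in AI_REFERRERS.items():
        if host == domain or host.endswith("." + domain):
            return engine
    return None


def ai_share(referrers: list):
    """Return (sessions per engine, fraction of all sessions from AI referrers)."""
    counts = Counter(e for e in map(engine_for, referrers) if e)
    total = len(referrers)
    share = sum(counts.values()) / total if total else 0.0
    return dict(counts), share
```

Sessions with an empty or unlisted referrer count toward the total but not toward any engine, so the share stays conservative.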

Know which pages convert AI traffic and optimize where revenue moves

Most tools stop at "your brand was mentioned." Analyze AI shows you the complete journey from AI answer to landing page to conversion, so you optimize pages that drive revenue instead of chasing visibility that goes nowhere.

The platform shows which landing pages receive AI referrals, which engine sent each session, and what conversion events those visits trigger.

For instance, when your product comparison page gets 50 sessions from Perplexity and converts 12% to trials, while an old blog post gets 40 sessions from ChatGPT with zero conversions, you know exactly what to strengthen and what to deprioritize.

Track the exact prompts buyers use and see where you're winning or losing

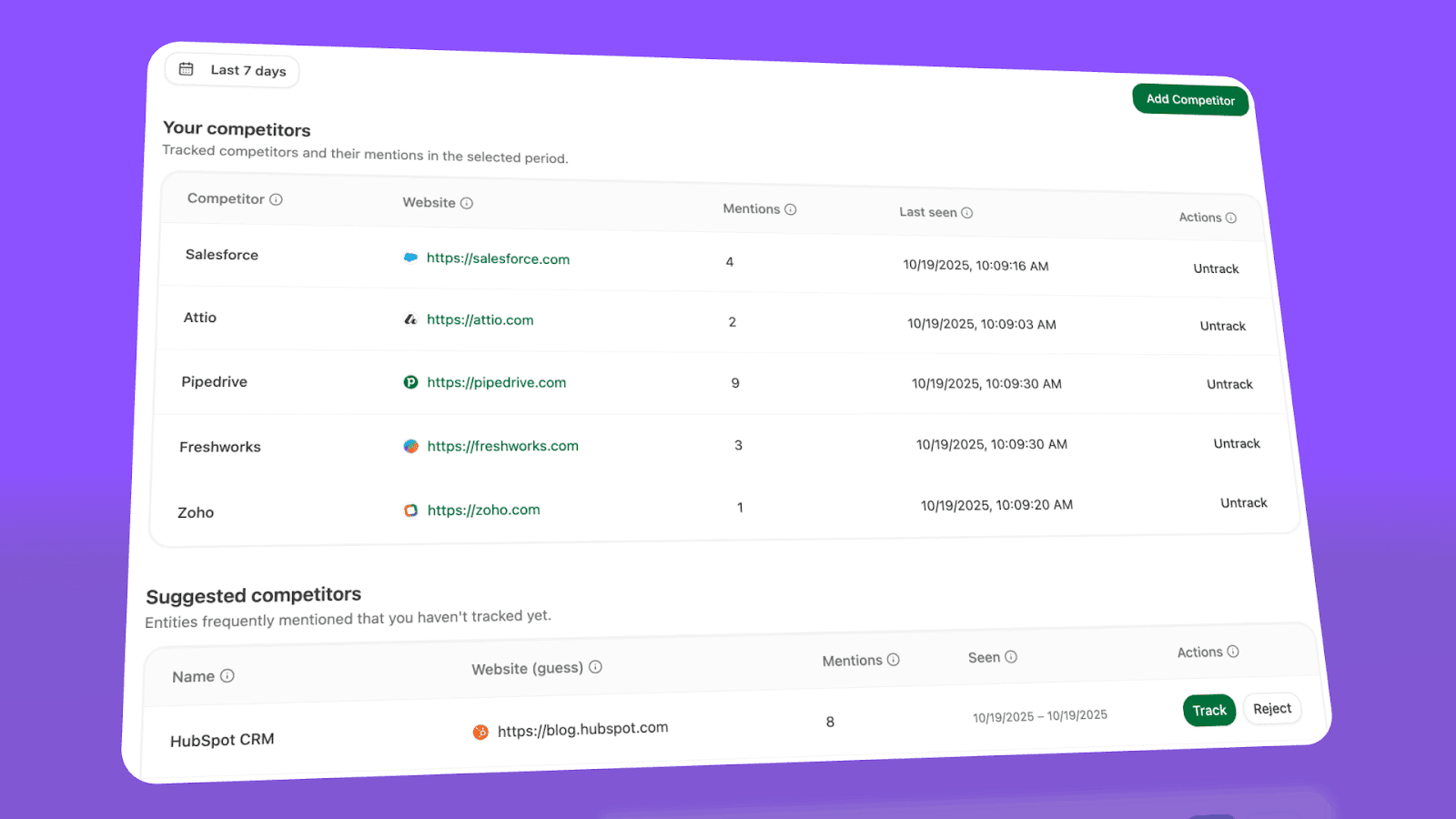

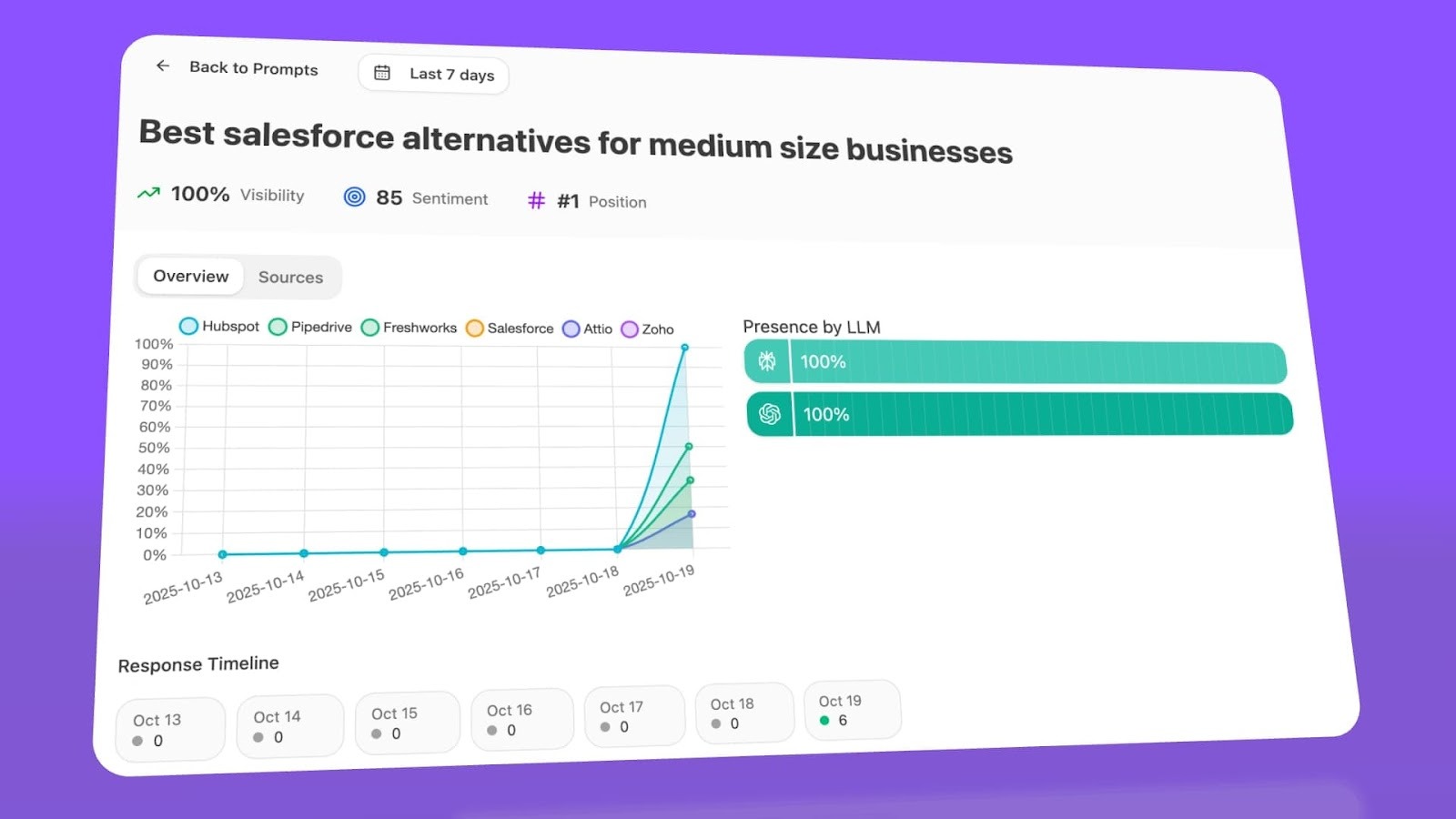

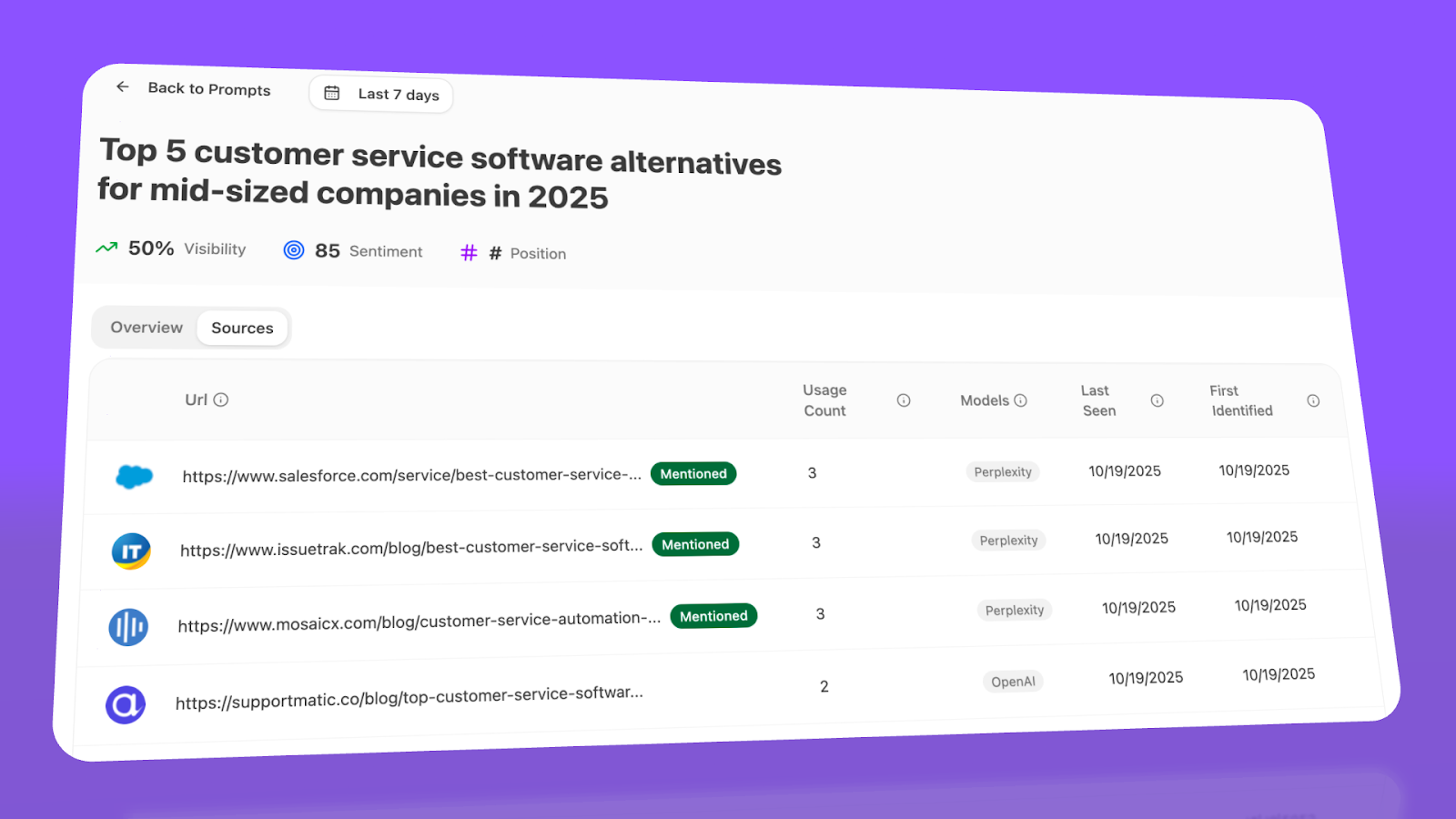

Analyze AI monitors specific prompts across all major LLMs—"best Salesforce alternatives for medium businesses," "top customer service software for mid-sized companies in 2026," "marketing automation tools for e-commerce sites."

For each prompt, you see your brand's visibility percentage, position relative to competitors, and sentiment score.

You can also see which competitors appear alongside you, how your position changes daily, and whether sentiment is improving or declining.

Don’t know which prompts to track? No worries. Analyze AI’s prompt suggestion feature recommends the bottom-of-the-funnel prompts you should keep an eye on.

Audit which sources models trust and build authority where it matters

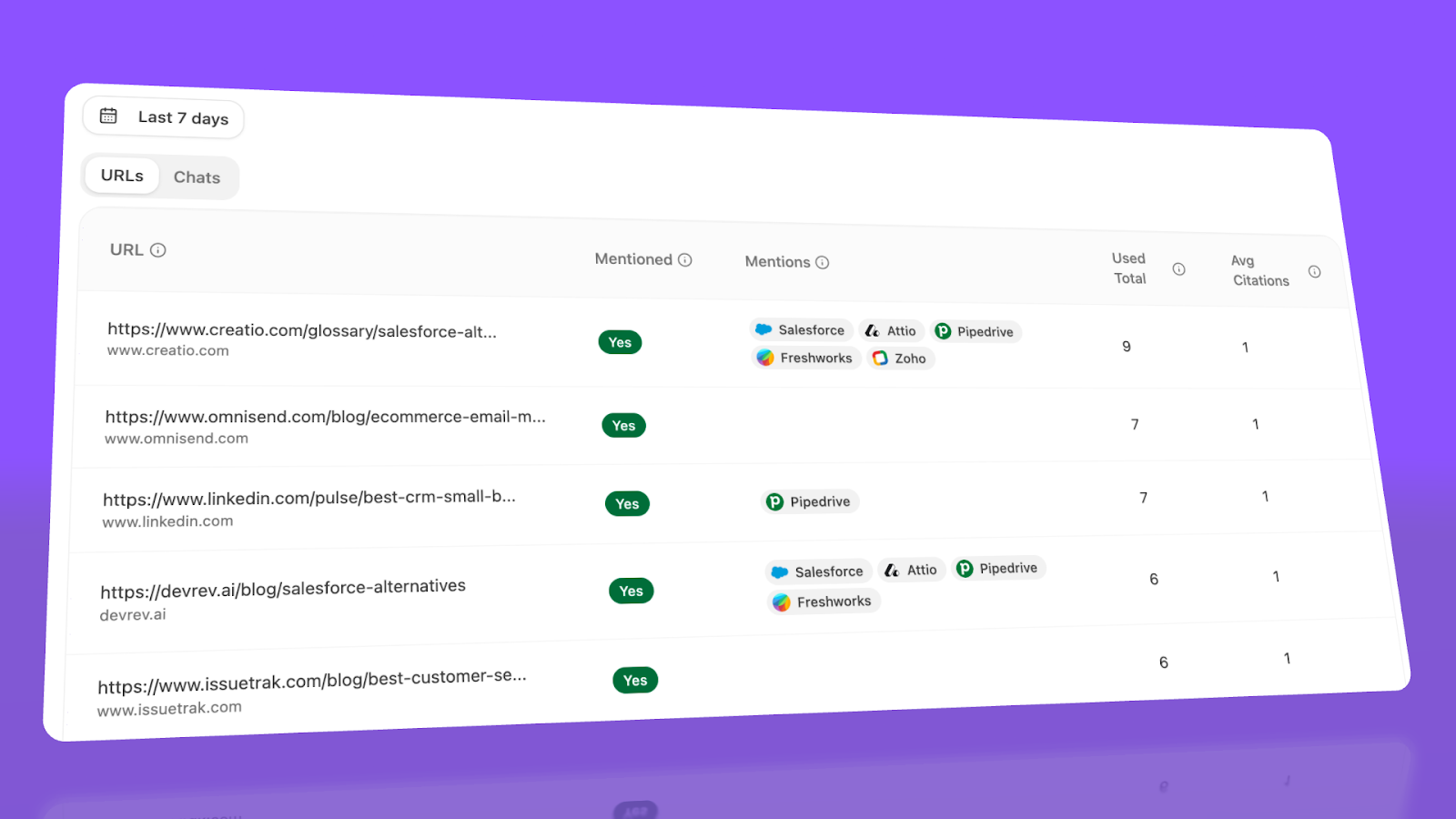

Analyze AI reveals exactly which domains and URLs models cite when answering questions in your category.

You can see, for instance, that Creatio gets mentioned because Salesforce.com's comparison pages rank consistently, or that IssueTrack appears because three specific review sites cite them repeatedly.

Analyze AI shows usage count per source, which models reference each domain, and when those citations first appeared.

Citation visibility matters because it shows you where to invest. Instead of generic link building, you target the specific sources that shape AI answers in your category. You strengthen relationships with domains that models already trust, create content that fills gaps in their coverage, and track whether your citation frequency increases after each initiative.

Prioritize opportunities and close competitive gaps

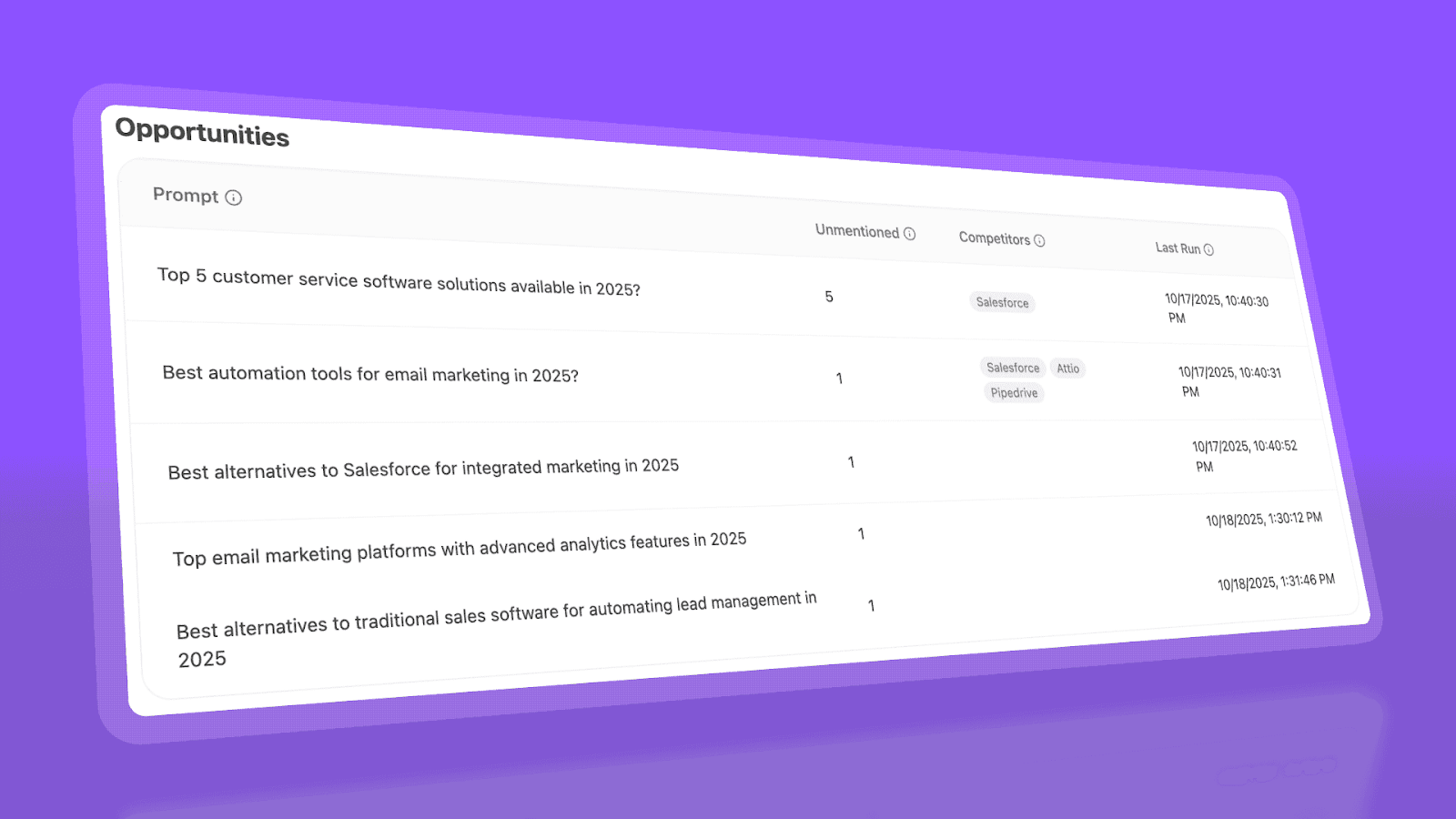

Analyze AI surfaces opportunities based on omissions, weak coverage, rising prompts, and unfavorable sentiment, then pairs each with recommended actions that reflect likely impact and required effort.

For instance, you can run a weekly triage that selects a small set of moves—reinforce a page that nearly wins an important prompt, publish a focused explainer to address a negative narrative, or execute a targeted citation plan for a stubborn head term.

Ernest

Ibrahim