In this article, you’ll learn exactly how to get Google to index your website—step by step, from the first check in Google Search Console to advanced troubleshooting for pages that refuse to show up. You’ll also learn why indexing alone isn’t enough anymore, and how to make sure your content surfaces in AI search engines like ChatGPT, Perplexity, and Gemini—where a growing share of your audience is already looking for answers.

What Does It Mean for Google to “Index” Your Website?

Google’s index is a massive library of hundreds of billions of web pages. When someone types a query into Google, they’re not searching the live internet—they’re searching this index. If your pages aren’t in it, they can’t appear in search results. Period.

Think of it like a bookstore. Google’s crawlers (called Googlebot) visit websites, read their pages, and decide which ones deserve a spot on the shelf. Pages that make the cut get “indexed”—stored in Google’s database and eligible to appear in search results. Pages that don’t make the cut sit in a warehouse no one visits.

Here’s the important nuance most guides skip: Google does not index every page it discovers. It crawls billions of URLs but only indexes the ones it considers valuable enough to show searchers. Even if Googlebot finds your page, it may skip indexing if the content is thin, duplicated, unhelpful, or on a site with limited authority.

So getting indexed isn’t just a technical checkbox. It requires two things working together: making your pages easy to find, and making them worth finding.

How to Check If Your Website Is Indexed

Before you try to fix anything, you need to know where you stand. There are three ways to check your indexing status, and each gives you a different level of detail.

Method 1: The site: Search Operator

Go to Google and type site:yourdomain.com. The number of results below the search bar gives you a rough count of how many pages Google has indexed.

![[Screenshot: Google search results page showing site:yourdomain.com query with result count highlighted]](https://www.datocms-assets.com/164164/1774968443-blobid1.png)

This isn’t perfectly accurate—Google rounds the number and sometimes includes variations—but it’s a quick gut check. If you have 500 pages on your site and Google shows 47 results, something is clearly wrong.

Method 2: Google Search Console’s URL Inspection Tool

For precise, page-level data, use the URL Inspection tool in Google Search Console (GSC). Paste any URL from your site into the inspection bar at the top of GSC.

If the page is indexed, you’ll see a green checkmark and the message “URL is on Google.”

![[Screenshot: Google Search Console URL Inspection tool showing “URL is on Google” with green checkmark]](https://www.datocms-assets.com/164164/1774968451-blobid2.png)

If the page isn’t indexed, you’ll see “URL is not on Google” along with a reason—like “Discovered – currently not indexed” or “Crawled – currently not indexed.”

![[Screenshot: Google Search Console URL Inspection tool showing “URL is not on Google” with specific reason]](https://www.datocms-assets.com/164164/1774968452-blobid3.png)

This distinction matters because each status points to a different root cause. “Discovered – currently not indexed” means Google knows the URL exists but hasn’t bothered crawling it. “Crawled – currently not indexed” means Google crawled the page and decided it wasn’t worth indexing.

Method 3: The Pages Report in GSC

For a site-wide view, open the Pages report (formerly the Coverage report) in Google Search Console. This shows you exactly how many pages are indexed, how many aren’t, and the specific reasons for exclusion.

![[Screenshot: Google Search Console Pages report showing indexed vs. not indexed breakdown with reason categories]](https://www.datocms-assets.com/164164/1774968458-blobid4.png)

Sort by the “Why pages aren’t indexed” section and work through the list from top to bottom. This gives you a prioritized roadmap for fixing indexing issues.

How to Get Google to Index Your Website: 5 Steps

If your content is genuinely valuable to searchers, getting indexed is usually straightforward. You need to make sure Google can find your pages, understand their importance, and trust your site enough to include them. Here’s how to do each of those things.

Step 1: Set Up Google Search Console and Request Indexing

Google Search Console is your direct line of communication with Google about your website. If you haven’t set it up yet, do that first.

Here’s how to set up GSC:

- Go to Google Search Console and sign in with your Google account.
- Click “Add property” and choose the Domain property type (this covers all subdomains and protocols automatically).
- Verify ownership by adding a DNS TXT record at your domain provider. GSC walks you through this step by step.
- Wait for verification to complete. It usually takes a few minutes but can take up to 72 hours.

![[Screenshot: Google Search Console “Add property” dialog showing Domain vs URL prefix options]](https://www.datocms-assets.com/164164/1774968458-blobid5.png)

Once verified, navigate to the URL Inspection tool, paste your homepage URL, and click “Request Indexing.”

![[Screenshot: Google Search Console URL Inspection tool with “Request Indexing” button highlighted]](https://www.datocms-assets.com/164164/1774968464-blobid6.png)

This tells Google to prioritize crawling that URL. Since your homepage is the root of your site structure, Googlebot will follow internal links from there and discover the rest of your pages.

A few things to know about requesting indexing:

- There’s a daily limit of roughly 10–15 URL submissions. Don’t try to submit hundreds of pages one by one.
- Requesting indexing doesn’t guarantee indexing. Google still evaluates whether the page is worth adding.
- For new websites, it can take anywhere from a few days to several weeks. For established sites with existing authority, new pages can be indexed within hours.

Step 2: Create and Submit an XML Sitemap

A sitemap is an XML file that lists all the pages on your site you want Google to know about. It’s like handing Google a table of contents for your website.
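To make that concrete, here’s a minimal sketch of what a sitemap contains, built with Python’s standard library. The URLs are placeholders and `build_sitemap` is a hypothetical helper name; a real sitemap would normally come from your CMS or build step rather than a script like this.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(urls):
    """Return a minimal sitemap XML string listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

# Placeholder URLs for illustration only.
sitemap_xml = build_sitemap([
    "https://yourdomain.com/",
    "https://yourdomain.com/blog/",
])
print(sitemap_xml)
```

Each `<url>` entry needs only a `<loc>` element; optional fields such as `<lastmod>` are left out to keep the sketch short.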

Most website platforms generate sitemaps automatically:

| Platform | Default Sitemap Location |
|---|---|
| WordPress (with Yoast/Rank Math) | yourdomain.com/sitemap_index.xml |
| Shopify | yourdomain.com/sitemap.xml |
| Squarespace | yourdomain.com/sitemap.xml |
| Wix | yourdomain.com/sitemap.xml |
| Next.js / Custom | Check robots.txt or set up manually |

To find your sitemap, try navigating to yourdomain.com/sitemap.xml or yourdomain.com/sitemap_index.xml. If neither works, check your robots.txt file at yourdomain.com/robots.txt—most platforms list the sitemap URL there.

![[Screenshot: A robots.txt file in a browser showing the Sitemap: directive with URL]](https://www.datocms-assets.com/164164/1774968464-blobid7.png)
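If you’d rather check programmatically, Python’s built-in `urllib.robotparser` can pull the `Sitemap:` lines out of a robots.txt file. This sketch parses a sample file inline. The file contents are an assumed example, and in practice you’d download the real file from yourdomain.com/robots.txt first. It assumes Python 3.8 or newer, where `site_maps()` is available.

```python
from urllib.robotparser import RobotFileParser

def sitemaps_from_robots(robots_txt):
    """Return the sitemap URLs declared in a robots.txt body."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when no Sitemap: line is present.
    return parser.site_maps() or []

# Assumed example contents, not taken from a real site.
example = """User-agent: *
Disallow: /admin/
Sitemap: https://yourdomain.com/sitemap_index.xml
"""

found = sitemaps_from_robots(example)
print(found)
```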

If you’re using WordPress, install Yoast SEO or Rank Math and use their sitemap generator instead of the default WordPress sitemap. The default one tends to include unnecessary URLs like author archives and tag pages that can dilute your sitemap.

To submit your sitemap to Google:

- Open Google Search Console.
- Click “Sitemaps” in the left navigation.
- Enter your sitemap URL in the “Add a new sitemap” field.
- Click “Submit.”

![[Screenshot: Google Search Console Sitemaps section showing the “Add a new sitemap” input field and Submit button]](https://www.datocms-assets.com/164164/1774968470-blobid8.png)

After submission, GSC will show you the status. “Success” means Google received and read the file. Check back in a few days to see how many URLs from the sitemap Google has actually indexed.
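To compare what you submitted against what GSC reports as indexed, it helps to know exactly which URLs the sitemap lists. Here’s a rough sketch, assuming a standard sitemap that uses the sitemaps.org namespace. The sample XML and the `sitemap_urls` name are illustrative.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def sitemap_urls(xml_text):
    """Return every <loc> value found in a sitemap or sitemap index."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall(".//sm:loc", NS) if loc.text]

# Illustrative sitemap body; a real one would be downloaded from your site.
sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://yourdomain.com/</loc></url>
  <url><loc>https://yourdomain.com/pricing</loc></url>
</urlset>"""

urls = sitemap_urls(sample)
print(len(urls), "URLs submitted")
```

If the number of submitted URLs is far above the indexed count in GSC, the Pages report is the next place to look.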

Pro tip: You can also submit your sitemap to Bing Webmaster Tools using the same process. This helps with Bing indexing and, increasingly, with AI search visibility—since Microsoft’s Copilot relies on Bing’s index.

Step 3: Structure Your Website for Crawlability

Google follows links to discover pages. If a page on your site has no internal links pointing to it, Google may never find it—and even if it does, the lack of links signals that the page isn’t important.

These linkless pages are called orphan pages, and they’re one of the most common reasons for indexing problems.

The fix is to structure your site so every important page is reachable through internal links. The most common and effective approach is a pyramid structure:

- Level 1 (top): Homepage
- Level 2: Main category or hub pages (linked from the homepage navigation)
- Level 3: Individual pages and blog posts (linked from category pages)
- Level 4: Deep subpages (linked from level 3 pages)

![[Screenshot: Diagram illustrating a pyramid site structure with homepage at top, category pages in the middle, and individual posts/pages at the bottom, with arrows showing internal link flow]](https://www.datocms-assets.com/164164/1774968470-blobid9.png)

The goal is simple: every page should be reachable within 3–4 clicks from the homepage, and every page should receive at least one internal link from another page on your site.
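Click depth is easy to measure once you have a map of which pages link to which. The sketch below runs a breadth-first search from the homepage over a small, made-up link graph. The `site` dictionary is an assumption standing in for the output of a real crawl.

```python
from collections import deque

def click_depths(links, start):
    """Return the minimum number of clicks from start to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Hypothetical link graph: page -> pages it links to.
site = {
    "/": ["/blog/", "/products/"],
    "/blog/": ["/blog/post-1"],
    "/products/": [],
    "/blog/post-1": ["/blog/post-1/appendix"],
}

depths = click_depths(site, "/")
too_deep = [page for page, depth in depths.items() if depth > 4]
print(depths, too_deep)
```

Pages that never show up in `depths` aren’t reachable from the homepage at all, which is a stronger warning sign than being a few clicks too deep.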

How to find orphan pages on your site:

- Run a site crawl using a tool like Screaming Frog or Sitebulb. Google Search Console’s Pages report can supplement this with URLs Google already knows about, but it doesn’t crawl your site on demand.
- Compare the pages found during the crawl against the pages in your sitemap.
- Any URL that’s in your sitemap but wasn’t found during the crawl is likely an orphan page.

Once you’ve found orphan pages, either add internal links to them from relevant existing pages or remove them from your sitemap if they’re not important.
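The comparison itself is a set difference. Here’s a minimal sketch, assuming you’ve already exported the sitemap URLs and the crawled URLs as two lists. The sample paths and the `find_orphans` name are made up for illustration.

```python
def find_orphans(in_sitemap, found_by_crawl):
    """Return URLs listed in the sitemap that the crawl never reached."""
    return sorted(set(in_sitemap) - set(found_by_crawl))

# Hypothetical exports from your sitemap and your crawler.
in_sitemap = ["/", "/pricing", "/old-landing-page"]
found_by_crawl = ["/", "/pricing", "/blog/"]

orphans = find_orphans(in_sitemap, found_by_crawl)
print(orphans)
```

Before comparing real exports, make sure both lists use the same URL format (protocol, trailing slashes), or matching pages will look like orphans.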

Step 4: Build Backlinks to Establish Authority

Backlinks—links from other websites to yours—are one of the strongest signals Google uses to decide whether your content deserves indexing and ranking.

A brand-new website with zero backlinks is essentially unknown to Google. It has no authority, no votes of confidence from the rest of the web. That makes Google less likely to crawl it frequently and less likely to index all its pages.

Here’s a practical starting point for building your first backlinks:

1. Get listed in relevant directories. Every industry has directories. If you’re a SaaS company, submit to G2, Capterra, and Product Hunt. If you’re a local business, claim your Google Business Profile and list on Yelp, Bing Places, and industry-specific directories.

2. Find competitor backlinks you can replicate. Use a backlink analysis tool to see who links to your competitors. Look for patterns—directories, guest posts, resource pages, listicle mentions—and pursue the same opportunities.

3. Create genuinely linkable content. Original research, comprehensive guides, and free tools naturally attract backlinks over time. This is a long game, but it compounds.

4. Check for broken links on relevant sites. Find pages in your niche that link to dead URLs, then reach out and suggest your content as a replacement. This is one of the highest-conversion link building tactics because you’re solving a problem for the site owner.

Step 5: Don’t Block Google from Crawling or Indexing

This sounds obvious, but it’s surprisingly common—especially on websites that went through a redesign, a staging-to-production migration, or a CMS change. Developers sometimes leave behind crawl blocks that prevent Google from accessing pages.

Here are the three things to check:

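robots.txt rules: Visit yourdomain.com/robots.txt and make sure you’re not blocking important pages or directories. A line like Disallow: / under User-agent: * blocks your entire site. Google has retired its standalone robots.txt Tester, so use the robots.txt report in Search Console to see the file Google fetched, and the URL Inspection tool to check whether a specific URL is blocked.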

![[Screenshot: Google’s robots.txt tester showing a URL test result—either “Allowed” or “Blocked”]](https://www.datocms-assets.com/164164/1774968476-blobid10.png)
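For a quick local check, Python’s standard `urllib.robotparser` can evaluate a URL against a set of robots.txt rules. Treat this as a first pass only: Python’s parser may not match Googlebot’s handling of wildcards or rule precedence exactly. The two rule sets below are assumed examples.

```python
from urllib.robotparser import RobotFileParser

def is_blocked(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given rules disallow user_agent from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)

# A leftover from staging: blocks everything.
staging_leftover = "User-agent: *\nDisallow: /\n"
# A more typical rule set: blocks only the admin area.
selective = "User-agent: *\nDisallow: /admin/\n"

print(is_blocked(staging_leftover, "https://yourdomain.com/blog/"))
print(is_blocked(selective, "https://yourdomain.com/blog/"))
print(is_blocked(selective, "https://yourdomain.com/admin/settings"))
```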

Noindex meta tags: A <meta name="robots" content="noindex"> tag in a page’s HTML tells Google not to index that page. This is intentional for pages like thank-you pages or admin dashboards, but sometimes it’s left on pages accidentally after a migration.
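If you want to scan pages for a stray noindex tag after a migration, a small parser is enough. This sketch uses Python’s built-in `html.parser` and checks an HTML string you supply; fetching the page is left out. It also treats `none` as blocking, since that value is shorthand for `noindex, nofollow`.

```python
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for part in attr.get("content", "").split(","):
                self.directives.add(part.strip().lower())

def has_noindex(html):
    """Return True if the page's robots meta tags ask not to be indexed."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives or "none" in parser.directives

page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(page))
```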

X-Robots-Tag HTTP headers: This works the same as the noindex meta tag but is set at the server level. It’s harder to spot because you can’t see it in the page source. Check it using the URL Inspection tool in GSC—look for the “Indexing allowed?” line.

![[Screenshot: URL Inspection tool in GSC showing the “Indexing allowed?” status with “Yes” or “No” indicator]](https://www.datocms-assets.com/164164/1774968476-blobid11.png)
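Outside of GSC, you can inspect the response headers yourself. The sketch below checks a dictionary of headers for a blocking `X-Robots-Tag` value. It is a simplification: it assumes the headers have already been fetched, and it does not handle values scoped to a specific crawler (such as `googlebot: noindex`).

```python
def header_blocks_indexing(headers):
    """Return True if an X-Robots-Tag header contains noindex or none."""
    for name, value in headers.items():
        if name.lower() != "x-robots-tag":
            continue
        directives = [part.strip().lower() for part in value.split(",")]
        if "noindex" in directives or "none" in directives:
            return True
    return False

# Hypothetical response headers for illustration.
blocked = {"Content-Type": "text/html", "X-Robots-Tag": "noindex, nofollow"}
fine = {"Content-Type": "text/html"}

print(header_blocks_indexing(blocked), header_blocks_indexing(fine))
```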

Still Not Indexed? Troubleshoot Deeper Issues

If you’ve done everything above and certain pages still aren’t indexed, there’s likely a more specific technical or quality problem. Here’s a systematic approach to diagnosing it.

Check for Rogue Canonical Tags

A canonical tag tells Google which version of a page is the “primary” one. When it works correctly, it prevents duplicate content issues. When it’s misconfigured, it can tell Google to ignore the page you actually want indexed.

For example, if Page A has a canonical tag pointing to Page B, Google will try to index Page B instead of Page A—even if Page A is the one you want showing up in search results.

How to check:

- Open the URL Inspection tool in GSC.
- Enter the URL of the page that’s not getting indexed.
- Look for the message “Alternative page with proper canonical tag” or “Duplicate without user-selected canonical.”

![[Screenshot: URL Inspection tool showing “Alternative page with proper canonical tag” warning]](https://www.datocms-assets.com/164164/1774968482-blobid12.png)

If you see this on a page you want indexed, either remove the canonical tag or change it to a self-referencing canonical (pointing to itself).

Common canonical mistakes:

- A plugin or CMS automatically adds canonical tags pointing to the wrong URL.
- HTTP and HTTPS versions of a page have conflicting canonicals.
- Paginated pages all canonicalize to page 1 when they shouldn’t.
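To audit canonicals in bulk, you can extract the tag from each page and compare it with the page’s own URL. Here’s a minimal sketch using Python’s standard library. It works on an HTML string you supply, ignores trailing-slash differences, and uses hypothetical names (`CanonicalParser`, `canonical_status`).

```python
from html.parser import HTMLParser

class CanonicalParser(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rels = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rels and self.canonical is None:
            self.canonical = attr.get("href")

def canonical_status(page_url, html):
    """Classify a page's canonical as missing, self-referencing, or pointing elsewhere."""
    parser = CanonicalParser()
    parser.feed(html)
    if parser.canonical is None:
        return "missing"
    if parser.canonical.rstrip("/") == page_url.rstrip("/"):
        return "self-referencing"
    return "points elsewhere: " + parser.canonical

html = '<head><link rel="canonical" href="https://yourdomain.com/page-b"></head>'
print(canonical_status("https://yourdomain.com/page-a", html))
```

A “points elsewhere” result on a page you want indexed is the Page A and Page B situation described above.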

Check for Duplicate Content

Google generally picks one version of duplicate or near-duplicate pages to index and ignores the rest. If you have multiple pages with very similar content, Google might choose to index a different version than the one you prefer—or skip all of them.

Common sources of duplicate content include:

- WWW vs. non-WWW versions of your site (should redirect one to the other).
- HTTP vs. HTTPS versions (should redirect HTTP to HTTPS).
- URL parameters creating multiple versions of the same page (e.g., ?sort=price, ?ref=partner).
- Printer-friendly or AMP versions without proper canonicalization.
- Product pages accessible through multiple category paths.

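How to fix it: Set up 301 redirects to consolidate duplicate URLs and add proper canonical tags. For parameter-based duplicates, point each variant’s canonical tag at the clean URL and link internally only to that version. Google retired the URL Parameters tool in Search Console in 2022, so it is no longer an option.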
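To see which URLs collapse into the same page, you can normalize each one to a preferred form and group the results. This sketch assumes the non-WWW HTTPS version is your preferred one and that the parameters in `IGNORED_PARAMS` never change page content. Both are assumptions you’d adjust for your own site.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that don't change what the page shows.
IGNORED_PARAMS = {"sort", "ref", "utm_source", "utm_medium", "utm_campaign"}

def preferred_url(url):
    """Normalize to HTTPS, non-WWW, with ignorable parameters removed."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    query = [(key, value)
             for key, value in parse_qsl(parts.query, keep_blank_values=True)
             if key not in IGNORED_PARAMS]
    return urlunsplit(("https", host, parts.path or "/", urlencode(query), ""))

def group_duplicates(urls):
    """Return preferred URL -> variants, for groups with more than one variant."""
    groups = defaultdict(list)
    for url in urls:
        groups[preferred_url(url)].append(url)
    return {key: variants for key, variants in groups.items() if len(variants) > 1}

variants = [
    "http://www.yourdomain.com/shoes?sort=price",
    "https://yourdomain.com/shoes?ref=partner",
    "https://yourdomain.com/hats",
]
duplicates = group_duplicates(variants)
print(duplicates)
```

Each key in the result is the URL the variants should redirect or canonicalize to.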

Check for Nofollow Internal Links

If the only internal links pointing to a page use the rel="nofollow" attribute, Google may not follow them—which means it may never discover or properly value that page.

This is rare but happens when:

- Your CMS adds nofollow to certain internal links by default.
- A developer added nofollow to links in sidebars, footers, or navigation menus.
- User-generated content (comments, forums) uses nofollow on all links, including internal ones.

How to check: Run a site crawl using Screaming Frog or a similar tool and filter for pages that only receive nofollowed internal links. Then remove the nofollow attribute from those links.
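If you don’t have a crawler handy, the same check can be sketched for a single page with Python’s standard library. The code below lists internal links that carry `rel="nofollow"`. It treats a link as internal only when the hostname matches the page’s hostname exactly, which is a simplifying assumption.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit

class LinkCollector(HTMLParser):
    """Collects (absolute_url, is_nofollow) for every <a href> on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        href = attr.get("href")
        if not href:
            return
        rel = (attr.get("rel") or "").lower().split()
        self.links.append((urljoin(self.base_url, href), "nofollow" in rel))

def nofollowed_internal_links(page_url, html):
    """Return internal link targets on the page that are marked nofollow."""
    collector = LinkCollector(page_url)
    collector.feed(html)
    host = urlsplit(page_url).netloc
    return [url for url, nofollow in collector.links
            if nofollow and urlsplit(url).netloc == host]

html = (
    '<a href="/pricing" rel="nofollow">Pricing</a>'
    '<a href="/blog/">Blog</a>'
    '<a href="https://other.com/" rel="nofollow">Partner</a>'
)
flagged = nofollowed_internal_links("https://yourdomain.com/about", html)
print(flagged)
```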

Check That Your Content Provides Real Value

If there are no technical blockers and Google still won’t index your pages, the problem might be the content itself.

Google’s John Mueller has said directly that Google doesn’t index every page it discovers. Pages need to be worth indexing. If your content is thin (under 300 words with no unique information), duplicates what’s already ranking elsewhere, or doesn’t match any search intent, Google may decide it’s not worth including.

Ask yourself:

- Does this page answer a question someone would actually search for?
- Does it offer something that existing top-ranking pages don’t?
- Would you, as a searcher, find this page useful if you landed on it?

If the answer to any of these is no, consider improving the content before worrying about indexing. Add original data, practical examples, step-by-step instructions, or expert insights. In short, make it the kind of page that earns its place in the index.

Find and Fix Internal Linking Opportunities

Even if a page isn’t orphaned, it might have too few internal links to signal its importance to Google. The more relevant internal links a page has, the more likely Google is to crawl and index it.

Here’s how to find internal linking opportunities:

- Pick a page you want indexed (or indexed more prominently).
- Search your own site using Google: site:yourdomain.com "target keyword".
- Open each result and look for natural places to add a link to your target page.
- Add the links using descriptive anchor text.

For example, if you want to improve indexing for your page about “keyword research,” search your site for mentions of “keyword research” or related phrases. Each mention is a potential place to add an internal link.

After adding internal links, go back to the URL Inspection tool and click “Request Indexing” to prompt Google to recrawl the page with its new link signals.
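If you have your page content exported (from a crawl or your CMS), the same search can be run locally. This is a rough sketch: the `pages` structure, with a `text` field and a set of outgoing `links` per page, is a hypothetical format, and the match is a plain substring test.

```python
def link_opportunities(pages, keyword, target_url):
    """Return pages that mention keyword but don't yet link to target_url."""
    keyword = keyword.lower()
    return [
        url for url, page in pages.items()
        if url != target_url
        and keyword in page["text"].lower()
        and target_url not in page["links"]
    ]

# Hypothetical export: page URL -> text content and outgoing internal links.
pages = {
    "/blog/seo-basics": {"text": "Start with keyword research.", "links": {"/blog/"}},
    "/blog/content-plan": {"text": "Keyword research comes first.", "links": {"/keyword-research"}},
    "/about": {"text": "We are a small team.", "links": set()},
    "/keyword-research": {"text": "Keyword research guide.", "links": set()},
}

todo = link_opportunities(pages, "keyword research", "/keyword-research")
print(todo)
```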

Check for Crawl Budget Issues

Crawl budget refers to how many pages Google is willing to crawl on your site within a given timeframe. For most small-to-medium websites (under a few thousand pages), this isn’t an issue. Google can crawl your entire site without breaking a sweat.

But if your site has tens of thousands of pages—especially if many of them are low-quality, duplicated, or generated by faceted navigation and URL parameters—crawl budget can become a bottleneck. Google spends its crawl budget on junk pages and never gets around to your important ones.

Signs of crawl budget problems:

- Your site has significantly more URLs than Google has indexed.
- New pages take weeks to get indexed, even with proper internal links.
- The Crawl Stats report in GSC shows a high number of crawl requests, while the Pages report shows a low number of indexed pages.

How to fix it:

- Remove or noindex low-quality pages that don’t serve searchers.
- Fix redirect chains (multiple redirects in sequence waste crawl budget).
- Block crawling of faceted navigation URLs in robots.txt.
- Use our content audit guide to identify and prune pages that aren’t earning traffic or links.
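Redirect chains are easy to spot once you have a map of your redirects. The sketch below follows a URL through a dictionary of source-to-destination redirects and returns every hop. The `redirects` map is a made-up example; in practice it would come from a crawl export or your server config.

```python
def redirect_chain(redirects, url, max_hops=10):
    """Follow url through the redirect map and return every URL visited."""
    chain = [url]
    while url in redirects and len(chain) <= max_hops:
        url = redirects[url]
        if url in chain:  # redirect loop: record it and stop
            chain.append(url)
            break
        chain.append(url)
    return chain

# Hypothetical redirect map: source -> destination.
redirects = {"/old": "/older", "/older": "/new"}

chain = redirect_chain(redirects, "/old")
hops = len(chain) - 1
print(chain, hops)
```

Any chain with more than one hop is a candidate for pointing the first URL straight at the final destination.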

Check for Manual Actions or Security Issues

In rare cases, Google may penalize your site for violating its guidelines or flag it for security issues like malware. Both can prevent pages from being indexed.

To check:

- Open Google Search Console.
- Click “Manual Actions” in the left sidebar.
- Click “Security Issues” below it.

If either report shows problems, you’ll see specific details about what’s wrong and how to fix it. For manual actions, you’ll need to fix the issue and submit a reconsideration request. For security issues, clean up the malware or hacked content and request a review.

![[Screenshot: Google Search Console Manual Actions report showing “No issues detected” message]](https://www.datocms-assets.com/164164/1774968482-blobid13.png)

Beyond Google: Get Your Content Visible in AI Search

Here’s what most indexing guides miss entirely: getting indexed by Google is only half the picture in 2026.

A growing share of your potential audience now gets their answers from AI search engines—ChatGPT, Perplexity, Google’s AI Mode, Claude, and Microsoft Copilot. These tools don’t just link to your content. They read it, synthesize it, and cite it in their answers. If your pages aren’t being picked up by these AI models, you’re invisible to a growing segment of searchers.

This doesn’t mean SEO is dead. It means SEO is evolving. AI search engines still rely heavily on the same fundamentals—quality content, strong authority, proper structure, and clear topical relevance. The brands that win in AI search are the ones that already do traditional SEO well and layer on a few additional practices.

How AI Search Engines Find and Cite Your Content

Unlike Google, most AI search engines don’t maintain a traditional index that you submit pages to. Instead, they work in a few different ways:

Web-grounded models (like Perplexity and ChatGPT with browsing) search the live web when answering questions, then cite the sources they pull from. For these, your Google SEO directly impacts your AI visibility—if you rank well for a topic, these models are more likely to find and cite your page.

Training data for large language models (LLMs) includes massive crawls of the web. If your content is well-structured, authoritative, and widely linked, it’s more likely to be included in training data—which means the model “knows” about your brand and can mention it in responses.

Bing’s index powers Microsoft Copilot and influences other AI tools. Submitting your sitemap to Bing Webmaster Tools helps ensure AI tools built on Bing’s infrastructure can find your content.

Practical Steps to Improve AI Search Visibility

Here are specific actions you can take alongside your traditional SEO work:

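1. Create an llms.txt file. This is a proposed convention, loosely analogous to robots.txt, for telling LLMs what your site is about and which pages are most important. Unlike robots.txt, it is not an access-control file, and AI providers are not obliged to read it. You can generate one in minutes using Analyze AI’s free LLM.txt generator.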

2. Structure content for extraction. AI models pull from well-organized content. Use clear headings, concise definitions, bullet points for lists, and structured data (schema markup). Pages that are easy for humans to scan are also easy for AI to extract from.

3. Build topical authority. AI models favor sources that demonstrate deep expertise on a topic. Instead of writing one thin page about a subject, create a cluster of interlinked pages that cover the topic from multiple angles. This signals to both Google and AI models that your site is a credible source.

4. Earn citations from authoritative sources. When reputable websites link to or mention your content, AI models are more likely to trust and cite you. This is the same principle as traditional link building, but the payoff extends to AI search.

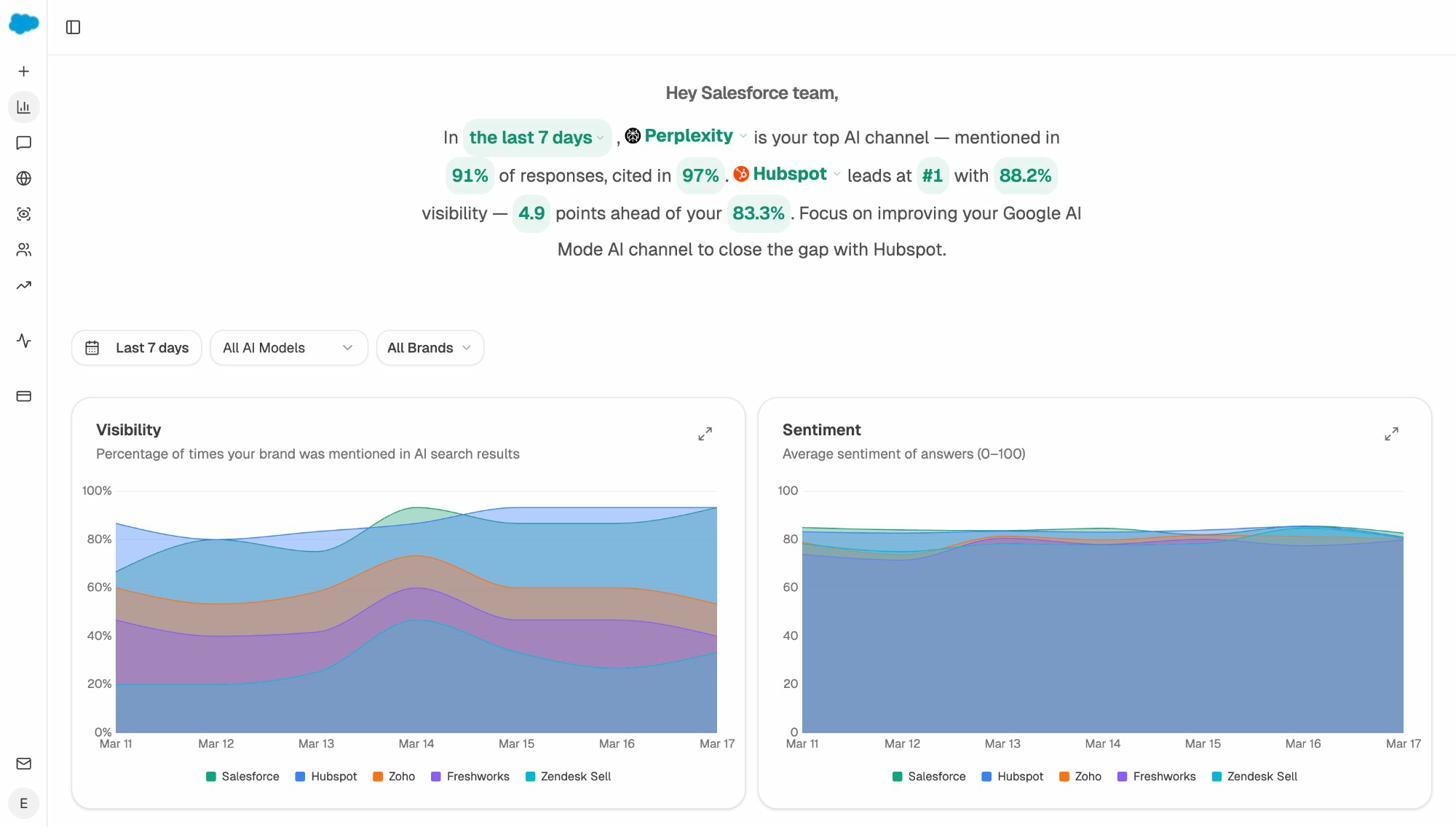

5. Monitor your AI search presence. You can’t improve what you can’t measure. Tools like Analyze AI let you track where your brand appears in AI-generated answers across ChatGPT, Perplexity, Claude, Gemini, Copilot, and more.

With Analyze AI, you can see your visibility percentage across AI models, track sentiment over time, and compare your presence against competitors—all in one dashboard.
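For the llms.txt file mentioned in the first step above, here’s a minimal sketch of what one might look like, following the layout in the public llms.txt proposal: a title, a short summary in a blockquote, then sections of annotated links. The site name, summary, and links are placeholders, and `build_llms_txt` is a hypothetical helper.

```python
def build_llms_txt(site_name, summary, sections):
    """Build an llms.txt body: H1 title, blockquote summary, H2 link sections."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"

# Placeholder content for illustration only.
llms_txt = build_llms_txt(
    "Example Co",
    "Guides on SEO and AI search visibility.",
    {"Guides": [("Indexing guide", "https://yourdomain.com/indexing",
                 "How to get pages indexed")]},
)
print(llms_txt)
```

The resulting text would be served at yourdomain.com/llms.txt.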

Track Which AI Engines Drive Real Traffic to Your Site

One of the biggest gaps in most SEO strategies is the inability to measure AI search as a traffic channel. Traditional analytics tools lump AI referral traffic into “direct” or “other” buckets, making it invisible.

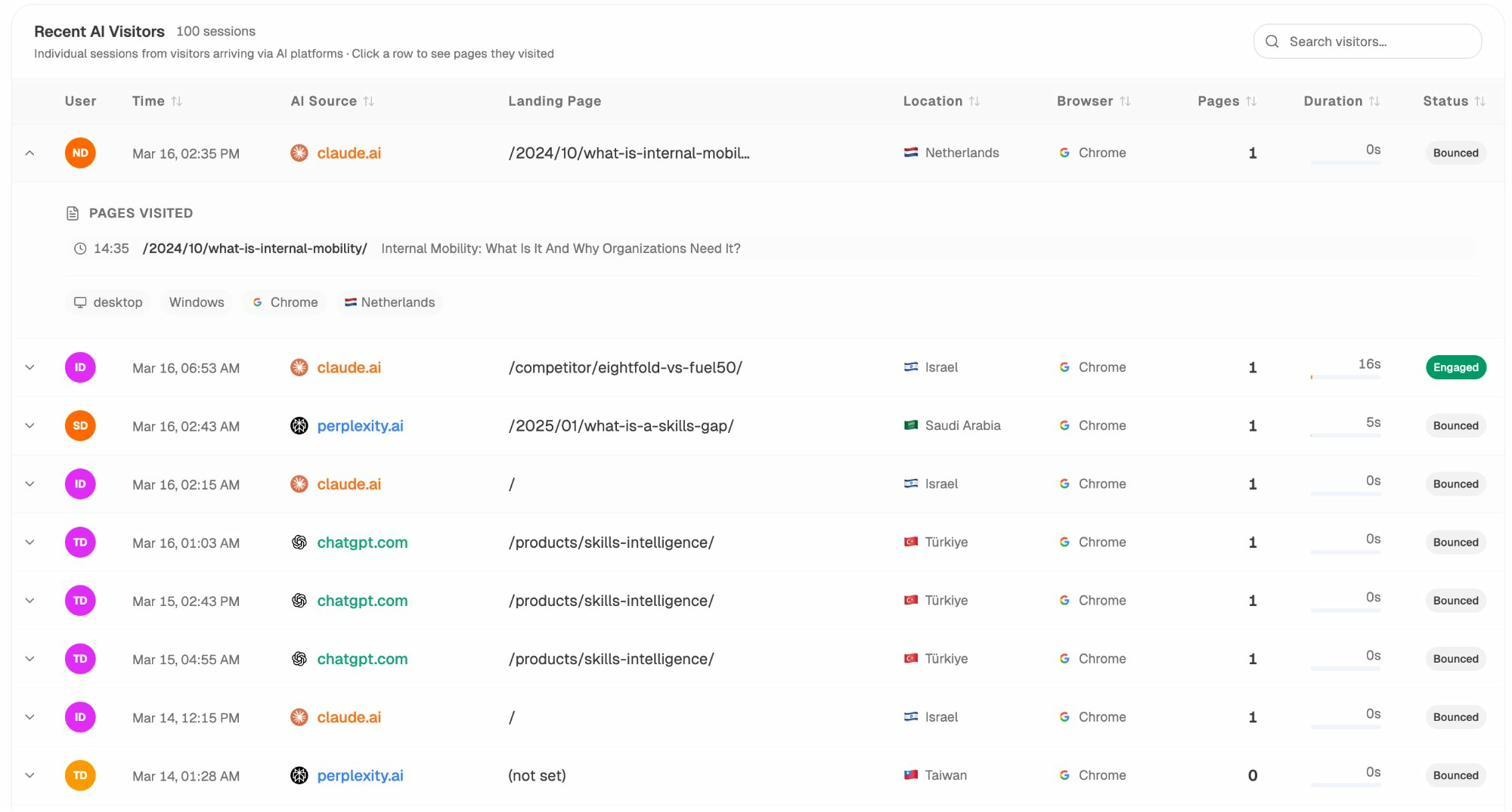

Analyze AI solves this by connecting to your GA4 data and breaking down AI referral traffic by source engine, landing page, engagement, and conversions.

This lets you answer critical questions: Is ChatGPT sending more traffic than Perplexity? Which landing pages do AI visitors land on? Are those visitors engaging or bouncing? By understanding which pages attract AI traffic, you can double down on the content formats and topics that work.
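If you want a rough version of this breakdown from raw referrer data, the core step is mapping referrer hostnames to AI engines. The sketch below is not how Analyze AI works internally. The hostname list is an assumption that will need updating as these products change domains, and it only covers visits that actually send a referrer.

```python
from collections import Counter
from urllib.parse import urlsplit

# Assumed hostname-to-engine mapping; extend it for your own data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}

def ai_source(referrer):
    """Return the AI engine name for a referrer URL, or None if it isn't one."""
    host = (urlsplit(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)

# Hypothetical referrer values from an analytics export.
referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=indexing",
    "https://www.google.com/",
    "https://chatgpt.com/c/abc",
]
counts = Counter(source for source in map(ai_source, referrers) if source)
print(counts)
```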

You can even drill down to individual AI visitor sessions to see exactly which pages they visited, how long they stayed, and whether they converted.

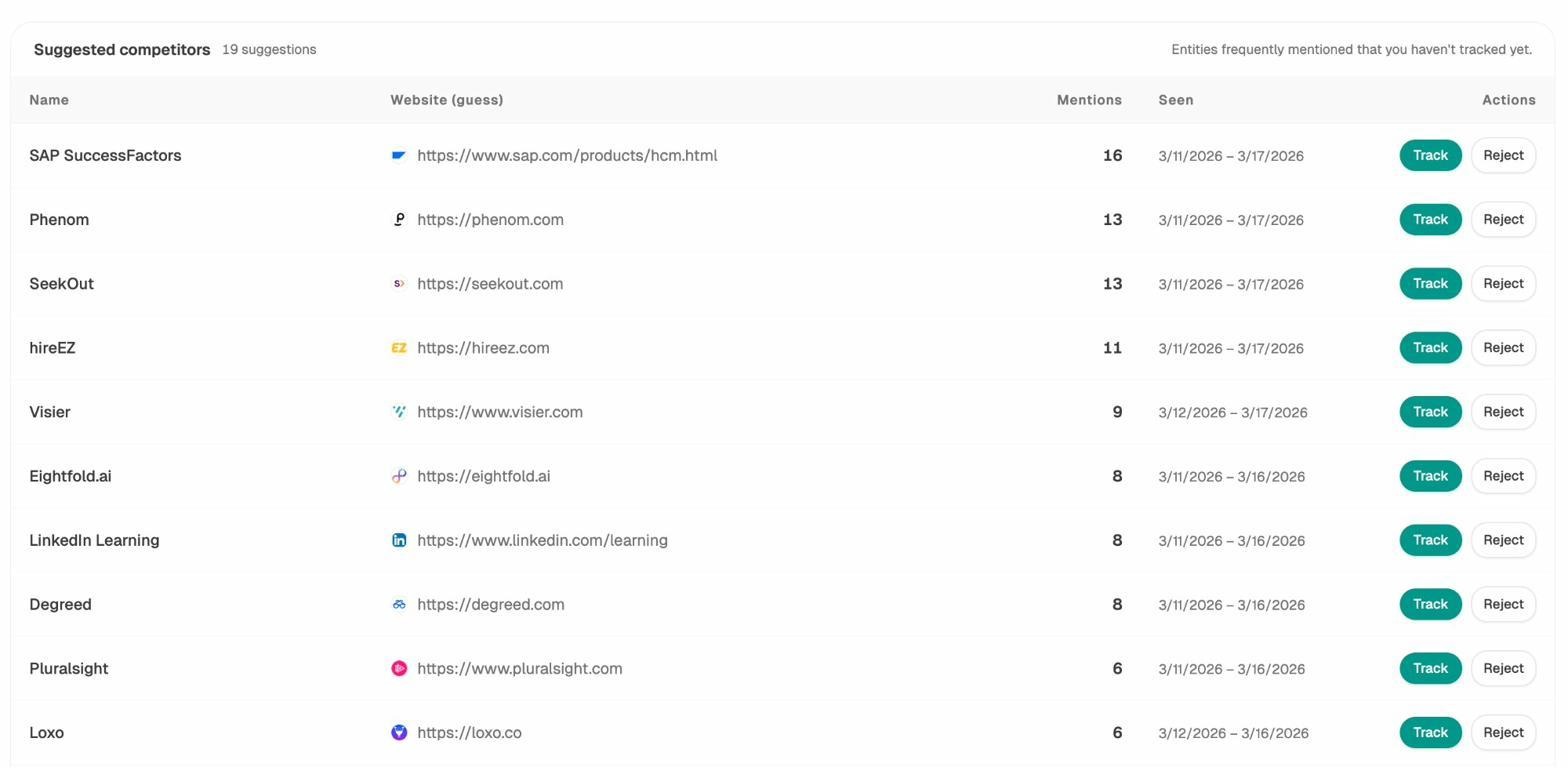

Find Where Competitors Win in AI Search (And Close the Gap)

One of the most powerful features for improving both your Google and AI visibility is competitive analysis. With Analyze AI’s Competitors view, you can see which brands appear alongside yours in AI answers—and where competitors get mentioned while you don’t.

This reveals specific gaps: maybe a competitor gets cited for a topic you haven’t covered yet, or they’re mentioned in a prompt cluster where you’re completely absent. Each gap is an opportunity to create or improve content.

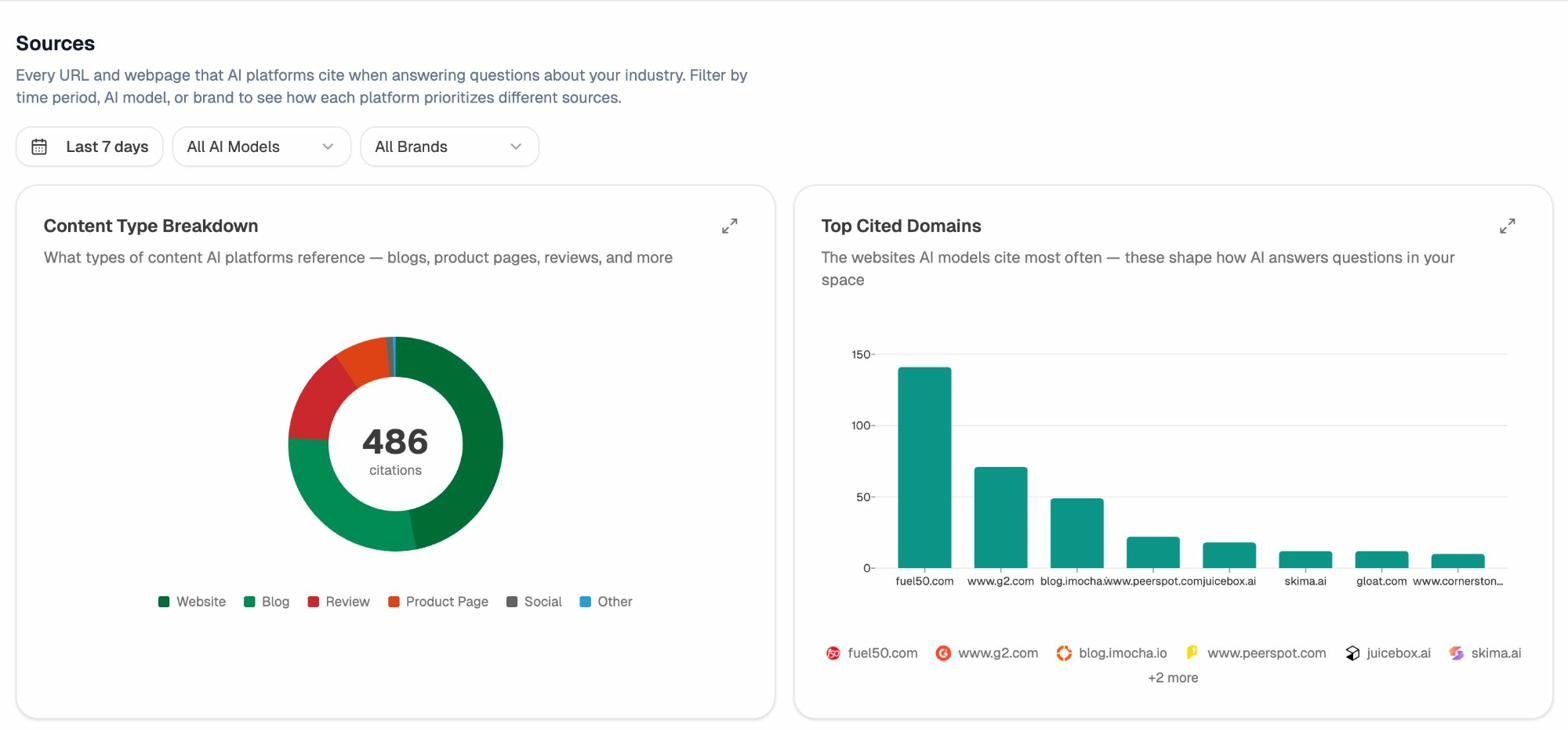

Understand the Sources AI Models Trust

AI engines don’t pull answers from thin air. They cite specific sources—and understanding which sources get cited most in your industry helps you prioritize where to earn mentions and backlinks.

Analyze AI’s Sources dashboard shows you exactly which domains and content types (blogs, product pages, reviews, news) AI models reference when answering questions about your space.

If you notice that AI models cite G2 reviews and industry blogs most frequently in your category, that tells you where to focus your off-page SEO and digital PR efforts. Getting mentioned on highly-cited sources has a compounding effect: it improves your Google rankings and your AI search visibility simultaneously.

Indexing Timeline: What to Expect

How long indexing takes depends on several factors. Here’s a realistic breakdown:

| Scenario | Typical Timeline | What Affects Speed |
|---|---|---|
| Brand-new website, first pages | Days to weeks | Domain authority, backlinks, content quality |
| New page on established site | Hours to days | Internal links, sitemap inclusion, crawl frequency |
| Updated page (content refresh) | Hours to days | Change significance, crawl frequency |
| Page after fixing technical issue | Days to weeks | Severity of the issue, recrawl request |
| Large site with crawl budget issues | Weeks to months | Number of pages, site architecture, server speed |

Don’t panic if a new site takes several weeks to get fully indexed. Focus on building backlinks, creating high-quality content, and ensuring your technical foundations are solid. Indexing speed improves naturally as your site gains authority.

Quick Reference Checklist

Use this checklist to make sure you’ve covered all the bases:

Getting indexed (foundational steps):

- [ ] Set up Google Search Console and verify your domain
- [ ] Request indexing for your homepage and key pages
- [ ] Create and submit an XML sitemap
- [ ] Submit your sitemap to Bing Webmaster Tools too
- [ ] Structure your site so every page is reachable within 3–4 clicks
- [ ] Build initial backlinks from directories and competitor analysis
- [ ] Confirm robots.txt isn’t blocking important pages
- [ ] Confirm no accidental noindex tags exist

Troubleshooting (if pages aren’t getting indexed):

- [ ] Check for rogue canonical tags in GSC URL Inspection
- [ ] Check for duplicate content (WWW vs non-WWW, HTTP vs HTTPS)
- [ ] Check for nofollow internal links
- [ ] Evaluate content quality: is the page genuinely useful?
- [ ] Add more internal links to underperforming pages
- [ ] Review crawl budget if your site has thousands of pages
- [ ] Check for manual actions or security issues in GSC

AI search visibility (extending your reach):

- [ ] Create an llms.txt file for your site
- [ ] Structure content with clear headings and schema markup
- [ ] Build topical authority through content clusters
- [ ] Track your AI search visibility with Analyze AI
- [ ] Monitor which AI engines send traffic to your site
- [ ] Identify competitor gaps in AI search and create content to fill them

Final Thoughts: Indexing Is the Starting Line, Not the Finish

Getting your website indexed by Google is essential, but it’s just the beginning. Indexing means you’re eligible to appear in search results—it doesn’t mean you’ll rank for anything.

That’s where SEO strategy comes in: finding what your audience searches for, creating content that serves those queries better than anything else, optimizing your pages, building authority through backlinks, and keeping your site technically healthy.

And in 2026, strategy needs to extend beyond Google. AI search engines are not replacing traditional search—they’re adding a new layer to it. The brands that treat AI search as a complementary organic channel (not a replacement) will have a significant edge.

The fundamentals haven’t changed. Quality content, strong authority, and technical clarity still govern visibility. What’s changed is where that quality needs to be legible—not just to crawlers, but to the AI models reading, synthesizing, and citing your work.

Start with the basics. Get indexed. Then keep building.

Ernest

Ibrahim