In this article, you’ll learn which SEO and AI bots get blocked the most across ~140 million websites, why certain crawlers face higher block rates than others, which industries are most aggressive about blocking, how blocking decisions affect your data quality in SEO tools, and what the rise of AI bot blocking means for your brand’s visibility in AI search engines like ChatGPT, Perplexity, and Gemini.

Key takeaways

Here’s the short version of what the data shows:

- MJ12bot (Majestic) is the most blocked SEO bot, with 6.49% of websites blocking it. SemrushBot and AhrefsBot follow closely at 6.34% and 6.31%.
- GPTBot (OpenAI) is the most blocked AI bot, with 5.89% of websites blocking it. ClaudeBot (Anthropic) grew fastest, with a 32.67% increase in block rates over the past year.
- Bots that crawl more get blocked more. There’s a statistically significant positive correlation (0.512 Pearson coefficient) between crawl volume and block rate.
- Arts & entertainment sites block AI bots the most (45%), followed by law & government (42%). Autos & vehicles leads for SEO bot blocks (39%).
- Blocking AI bots doesn’t reliably prevent citations. A 2026 study of 4 million AI citations found that 70.6% of sites blocking ChatGPT’s retrieval bot still appeared in its citations.
- The crawl-to-refer ratio for AI bots is wildly lopsided. Anthropic’s ClaudeBot crawls roughly 20,583 pages for every single referral it sends back to publishers. OpenAI’s ratio sits around 1,255:1.

What are SEO and AI bots?

Before diving into block rates, a quick primer on what these bots actually do.

SEO bots are web crawlers operated by SEO tool companies like Ahrefs, Semrush, Moz, and Majestic. They crawl your website to build their link indexes, map site structures, and power the data you see when you run a backlink check or site audit. When you use a tool like Ahrefs to analyze a competitor’s backlink profile, the data comes from AhrefsBot’s crawling activity.

AI bots are a newer category. They crawl websites to collect data for training large language models (like GPT-4 or Claude) and to power real-time retrieval for AI search products. Some companies, like OpenAI, operate separate bots for each purpose. GPTBot handles training data collection, while OAI-SearchBot crawls for live ChatGPT search results.

This distinction matters. Blocking an SEO bot affects your data quality inside that tool. Blocking an AI bot affects whether your content appears in AI-generated answers, which is increasingly how people find products, services, and information.

How often are SEO bots blocked?

According to an analysis of ~140 million websites, between 5.47% and 6.49% of all sites block the major SEO bots. The analysis accounted for all robots.txt blocking methods, including explicit disallow directives, general blocks (where all bots are blocked), and cases where a directive allowed a specific bot after a blanket block.

Two caveats worth noting. The data doesn’t capture firewall or IP-based blocks, which some sites use instead of or in addition to robots.txt. It also reflects only the sites visible to the crawling tool’s index, meaning sites that block a tool completely may not appear in that tool’s data at all.
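The three cases described above (an explicit disallow, a generic block, and a named allow after a blanket block) can be told apart with a small parser. The following is a minimal sketch, not the method the analysis actually used. The function names `parse_groups`, `blocks_everything`, and `classify` are hypothetical, and the sketch only treats a full-site `Disallow: /` as a block, ignoring partial paths and wildcards.

```python
def parse_groups(robots_txt: str) -> dict:
    """Map each lowercased user-agent token to its (directive, path) rules."""
    groups = {}
    current_agents = []
    seen_rule = False
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            if seen_rule:  # a rule closed the previous group
                current_agents = []
                seen_rule = False
            current_agents.append(value.lower())
            groups.setdefault(value.lower(), [])
        elif field in ("allow", "disallow"):
            seen_rule = True
            for agent in current_agents:
                groups[agent].append((field, value))
    return groups


def blocks_everything(rules) -> bool:
    """True when a group has 'Disallow: /' and no 'Allow: /' override."""
    disallow_all = any(d == "disallow" and p == "/" for d, p in rules)
    allow_all = any(d == "allow" and p == "/" for d, p in rules)
    return disallow_all and not allow_all


def classify(robots_txt: str, bot: str) -> str:
    """Return 'explicit', 'generic', or 'allowed' for one bot name."""
    groups = parse_groups(robots_txt)
    bot = bot.lower()
    if bot in groups:  # a named group takes precedence over '*'
        return "explicit" if blocks_everything(groups[bot]) else "allowed"
    if "*" in groups and blocks_everything(groups["*"]):
        return "generic"
    return "allowed"
```

With a file that blocks everything under `User-agent: *`, names MJ12bot with `Disallow: /`, and names AhrefsBot with `Allow: /`, this sketch labels MJ12bot as `explicit`, AhrefsBot as `allowed`, and every unnamed bot as `generic`.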

Here are the most blocked SEO bots across ~140 million websites:

| Bot Name | Block Rate (%) | Total Sites Blocked | Bot Operator |
|---|---|---|---|
| MJ12bot | 6.49% | 9,081,205 | Majestic |
| SemrushBot | 6.34% | 8,868,486 | Semrush |
| AhrefsBot | 6.31% | 8,831,316 | Ahrefs |
| dotbot | 6.13% | 8,569,766 | Moz |
| BLEXBot | 5.99% | 8,374,216 | SEO PowerSuite |
| serpstatbot | 5.63% | 7,878,935 | Serpstat |
| DataForSeoBot | 5.63% | 7,872,939 | DataForSEO |
| SemrushBot-CT | 5.62% | 7,855,400 | Semrush |
| Barkrowler | 5.58% | 7,804,425 | Babbar |
| SemrushBot-BA | 5.57% | 7,796,785 | Semrush |

A few things stand out in this data.

Majestic’s MJ12bot leads the list despite Majestic being a smaller company than Semrush or Ahrefs. There are a few likely reasons. MJ12bot is a distributed crawler, meaning it crawls from many different IP addresses rather than a known set. That makes it harder for site owners to verify and easier for them to distrust. Majestic has also been crawling the web for longer than most competitors, giving site owners more time to notice and block it. And with a smaller user base than Semrush or Ahrefs, there’s less business incentive for website owners to keep the bot unblocked.

Semrush operates multiple bots. Beyond the main SemrushBot, there’s SemrushBot-CT (content analysis), SemrushBot-BA (backlink audit), SemrushBot-SWA (site-wide analysis), and SemrushBot-SI (site indexing). Each appears separately in robots.txt files, which means Semrush’s total crawling footprint is larger than any single bot entry suggests.

The gap between the top bot (6.49%) and the lowest block rate in the analysis (5.47%, just below the ten bots listed above) is relatively narrow. That’s because a large portion of these blocks come from generic “block all bots” directives rather than targeted decisions. Many website owners use CMS templates or security plugins that include blanket bot blocks, catching every crawler in the same net.

The subdomain picture

The numbers above reflect the main robots.txt file for each website. But every subdomain can have its own robots.txt with different rules. When accounting for all ~461 million robots.txt files across subdomains, the rankings shift:

- SemrushBot: 5.76%
- dotbot (Moz): 5.34%
- MJ12bot (Majestic): 4.96%
- BLEXBot: 4.88%
- AhrefsBot: 4.67%

Semrush jumps to the top spot when subdomains are included. This likely reflects the fact that Semrush’s multiple bot variants appear more frequently across subdomain-specific robots.txt files.

How often are SEO bots specifically targeted?

The overall block rates include generic blocks. But some website owners go out of their way to block specific bots by name. These explicit blocks tell a different story about which crawlers site owners find most problematic.

Here are the SEO bots most often explicitly targeted:

| Bot Name | Explicit Block Rate (%) | Sites Explicitly Blocking | Bot Operator |
|---|---|---|---|
| MJ12bot | 1.43% | 2,000,372 | Majestic |
| dotbot | 1.00% | 1,402,305 | Moz |
| AhrefsBot | 0.97% | 1,350,771 | Ahrefs |
| SemrushBot | 0.92% | 1,285,857 | Semrush |
| BLEXBot | 0.62% | 861,184 | SEO PowerSuite |
| serpstatbot | 0.25% | 354,683 | Serpstat |
| DataForSeoBot | 0.20% | 284,694 | DataForSEO |
| Barkrowler | 0.20% | 276,332 | Babbar |

When you strip away the generic blocks, MJ12bot leads by a wide margin. Over 2 million websites specifically name Majestic’s bot in their disallow directives. That’s roughly 43% more than the next most-targeted bot (Moz’s dotbot) and more than 55% more than SemrushBot.

The gap between overall blocks and explicit blocks is revealing. SemrushBot has an overall block rate of 6.34% but an explicit block rate of just 0.92%. That means the vast majority of SemrushBot blocks come from generic directives rather than deliberate decisions by site owners. For MJ12bot, the explicit-to-overall ratio is much higher, suggesting that site owners actively seek it out and block it.
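To make the explicit-to-overall comparison concrete, here is a short sketch that recomputes it from the site counts in the two tables above. The helper names `explicit_share` and `percent_more` are illustrative only and are not part of any tool.

```python
# Site counts copied from the overall and explicit block tables in this article.
OVERALL = {
    "MJ12bot": 9_081_205,
    "dotbot": 8_569_766,
    "AhrefsBot": 8_831_316,
    "SemrushBot": 8_868_486,
}
EXPLICIT = {
    "MJ12bot": 2_000_372,
    "dotbot": 1_402_305,
    "AhrefsBot": 1_350_771,
    "SemrushBot": 1_285_857,
}


def explicit_share(bot: str) -> float:
    """Fraction of a bot's total blocks that name the bot explicitly."""
    return EXPLICIT[bot] / OVERALL[bot]


def percent_more(bot: str, other: str) -> float:
    """How many percent more sites explicitly block `bot` than `other`."""
    return (EXPLICIT[bot] / EXPLICIT[other] - 1) * 100


for name in OVERALL:
    print(f"{name}: {explicit_share(name):.1%} of its blocks are explicit")
```

On these counts, about 22% of MJ12bot’s blocks are explicit, compared with about 14.5% for SemrushBot, which is the pattern described above.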

Which industries block SEO bots the most?

Block rates vary significantly by industry. Among the top 1 million sites (DR > 45), the industries most likely to block SEO bots are:

- Autos & Vehicles: 39%
- Books & Literature: 27%
- Real Estate: 17%

The auto industry’s lead likely reflects the heavy use of inventory management systems and dealer networks, which generate massive numbers of pages that site owners don’t want indexed in third-party tools. Dealer sites often use CMS platforms that ship with aggressive bot-blocking configurations by default.

Books and literature sites rank second, likely due to copyright concerns. Publishers and literary platforms are protective of their content and less interested in the SEO data that crawlers collect.

Which SEO bots crawl the fastest?

Crawl speed matters because faster crawlers consume more server resources, which gives site owners more reason to block them.

According to Cloudflare Radar, which reflects roughly 20% of all internet traffic, AhrefsBot is the fastest SEO crawler by a significant margin. It makes roughly 4.6 times more requests than Moz’s dotbot and about 6.7 times more than SemrushBot.

Despite that crawl volume, AhrefsBot’s block rate (6.31%) is actually lower than MJ12bot’s (6.49%). This suggests that crawl speed alone doesn’t determine block rates. Factors like crawler identification, IP transparency, and the perceived value of the tool play a role too.

The AI bot blocking wave

SEO bot blocking has been a slow, steady phenomenon. AI bot blocking is something different. It’s growing fast, it’s driven by fundamentally different concerns, and it has implications that extend well beyond data quality in a third-party tool.

The core issue is what’s called the “social contract” between bots and website owners. Search engine bots (like Googlebot) have historically crawled websites in exchange for sending referral traffic back through search results. Website owners tolerate the resource cost because they get visitors in return.

AI bots don’t hold up their end of this bargain. When Ahrefs analyzed the traffic makeup of ~35,000 websites, they found that AI engines send just 0.1% of total referral traffic. That’s far behind traditional search (43.8%), direct traffic (42.3%), and even social media (13.1%).

The crawl-to-refer ratio makes the imbalance concrete. According to data from Cloudflare’s network analysis, OpenAI’s bots crawl roughly 1,255 pages for every single referral they send back to publishers. Anthropic’s ClaudeBot is even more lopsided at roughly 20,583 pages per referral. Meta’s AI bots send zero referrals.

For comparison, Google crawls about 14 pages for every referral it sends. The gulf between search engine crawlers and AI crawlers is enormous.
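The size of that gulf is easier to see with the arithmetic written out. This sketch uses only the crawl-to-refer ratios quoted above. The function names are hypothetical, and the "referrals per million pages" framing is just a rescaling of the same ratios, not an additional data point.

```python
# Pages crawled per referral sent, as quoted in this article.
CRAWL_TO_REFER = {
    "Google": 14,
    "OpenAI": 1_255,
    "Anthropic (ClaudeBot)": 20_583,
}


def times_google(operator: str) -> float:
    """How many times more lopsided an operator's ratio is than Google's."""
    return CRAWL_TO_REFER[operator] / CRAWL_TO_REFER["Google"]


def referrals_per_million_pages(operator: str) -> float:
    """Referrals sent back for every one million pages crawled."""
    return 1_000_000 / CRAWL_TO_REFER[operator]


for operator in CRAWL_TO_REFER:
    print(
        f"{operator}: {times_google(operator):,.0f}x Google's ratio, "
        f"{referrals_per_million_pages(operator):,.0f} referrals per million pages"
    )
```

On these figures, OpenAI’s ratio is roughly 90 times Google’s and ClaudeBot’s is roughly 1,470 times Google’s.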

How often are AI bots blocked?

AI bot blocking is rising quickly. An analysis of the same ~140 million websites shows the following block rates for AI bots:

| Bot Name | Block Rate (%) | Total Sites Blocked | Bot Operator |
|---|---|---|---|
| GPTBot | 5.89% | 8,245,987 | OpenAI |
| CCBot | 5.85% | 8,188,656 | Common Crawl |
| Amazonbot | 5.78% | 8,082,636 | Amazon |
| Bytespider | 5.74% | 8,024,980 | ByteDance |
| ClaudeBot | 5.74% | 8,023,055 | Anthropic |
| Google-Extended | 5.71% | 7,989,344 | Google |
| anthropic-ai | 5.69% | 7,963,740 | Anthropic |
| FacebookBot | 5.67% | 7,931,812 | Meta |
| ChatGPT-User | 5.64% | 7,890,973 | OpenAI |
| PerplexityBot | 5.61% | 7,844,977 | Perplexity |

GPTBot leads, which makes sense. It’s the most active AI bot on the web according to Cloudflare Radar, and OpenAI’s ChatGPT is the most widely known AI product. Site owners who want to block AI training often start with OpenAI’s crawler.

There’s a statistically significant correlation between how much a bot crawls and how often it gets blocked. The Pearson correlation coefficient is 0.512 with a p-value of 0.0149, meaning bots that make more requests tend to get blocked more often. That is a moderate correlation rather than a strong one, but it is consistent with what common sense suggests.
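For readers unfamiliar with the statistic, here is a minimal sketch of how a Pearson coefficient is computed from paired values such as crawl volume and block rate. The per-bot figures behind the 0.512 result are not given in this article, so the function is shown without them. The name `pearson_r` is hypothetical.

```python
from math import sqrt


def pearson_r(xs, ys) -> float:
    """Pearson correlation between two equal-length sequences of numbers."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of the same length (at least 2)")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of products of deviations from the means (unnormalised covariance).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)
```

The result ranges from -1 (perfectly inverse) through 0 (no linear relationship) to 1 (perfectly aligned), so 0.512 sits roughly halfway between no relationship and a perfect one.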

The fastest-growing blocks

ClaudeBot (Anthropic) saw the fastest growth in block rates over the past year, increasing by 32.67%. Here’s how block rate growth looks across the major AI bots:

| Bot Name | Growth (%) | Bot Operator |
|---|---|---|
| ClaudeBot | 32.67% | Anthropic |
| anthropic-ai | 25.14% | Anthropic |
| claude-web | 20.66% | Anthropic |
| Bytespider | 19.57% | ByteDance |
| ChatGPT-User | 15.52% | OpenAI |
| PerplexityBot | 15.37% | Perplexity |
| GPTBot | 13.38% | OpenAI |

Anthropic’s bots occupy the top three spots for block rate growth. This likely reflects a combination of increased awareness (Claude became much more widely used in 2024-2025), the company’s multiple bot identifiers, and the growing concern about AI training data.

Meanwhile, some bots are seeing decreasing block rates. Applebot dropped by 3.32%, Meta-ExternalFetcher by 4.32%, and Kangaroo Bot by 5.89%. These decreases may reflect site owners becoming more selective about which AI bots they block rather than applying blanket rules.

Which industries block AI bots the most?

The industry breakdown for AI bot blocking is different from SEO bot blocking:

- Arts & Entertainment: 45%
- Law & Government: 42%
- News & Media: High (though lower than expected)
- Books & Literature: High (copyright concerns)
- Shopping: Notable (competitive intelligence fears)

Arts and entertainment sites lead, which makes sense given the ongoing debates about AI and creative work. Artists, musicians, and entertainment companies are among the most vocal opponents of AI training on their content.

Law and government sites rank second, likely driven by compliance requirements and sensitivity about having legal or government content repurposed by AI models.

News and media sites block at a lower rate than many expected given how much coverage the AI-and-journalism debate has received. Many news publishers have actually struck deals with AI companies rather than blocking their bots entirely.

Does blocking AI bots actually prevent citations?

This is the question that matters most for marketers. If you block AI bots, will AI engines stop mentioning you?

The short answer, based on the most comprehensive data available, is no.

A 2026 study by BuzzStream analyzed 4 million AI citations across 3,600 prompts covering ChatGPT, Gemini, Google AI Overviews, and Google AI Mode. Among the top 50 news sites that block ChatGPT’s live retrieval bot (ChatGPT-User), 70.6% still appeared in ChatGPT’s citations.

This happens for several reasons. AI models are trained on historical data, not just live crawls. Even if you block a bot today, your content from before the block still lives in the model’s training data. AI engines also pull from third-party sources like Common Crawl, cached versions, and aggregation sites. Your content might be blocked at the front door but available through a side entrance.

There’s also the Perplexity factor. Cloudflare’s team found evidence that Perplexity’s bots can bypass robots.txt in some cases. And as AI browsers become more sophisticated, the line between bot traffic and human traffic is blurring. Perplexity’s Comet browser and similar AI-integrated browsing tools are increasingly difficult to distinguish from regular human visitors in server logs.

The implication for marketers is clear. Blocking AI bots is a blunt instrument. It might reduce your visibility over time, but it won’t make you invisible. And in the meantime, you might be giving up the opportunity to be cited and recommended by the AI engines that your customers are increasingly using to make buying decisions.

The hybrid approach: block training, allow search

The smarter play is emerging. Rather than blocking all AI bots or allowing all of them, some site owners are adopting a hybrid approach. They block the training crawlers while allowing the search and retrieval crawlers.

Here’s what that looks like in robots.txt for OpenAI:

    User-agent: GPTBot
    Disallow: /

    User-agent: OAI-SearchBot
    Allow: /

This configuration blocks GPTBot (which collects data for model training) while allowing OAI-SearchBot (which crawls for ChatGPT’s search results). The result is that your content doesn’t feed the training pipeline, but it can still appear in ChatGPT search results where users might click through to your site.
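One way to sanity-check a configuration like this before deploying it is Python’s standard-library `urllib.robotparser`. The sketch below is a rough check under assumptions: the `is_allowed` helper and the `example.com` URL are illustrative, and the standard-library parser only approximates how each real crawler interprets the file.

```python
from urllib.robotparser import RobotFileParser

# The hybrid rules shown above.
HYBRID_RULES = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str,
               url: str = "https://example.com/") -> bool:
    """Return True if `user_agent` may fetch `url` under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print("GPTBot allowed:", is_allowed(HYBRID_RULES, "GPTBot"))
print("OAI-SearchBot allowed:", is_allowed(HYBRID_RULES, "OAI-SearchBot"))
```

Bots that are not named in the file, such as PerplexityBot here, are treated as allowed because there is no `User-agent: *` group restricting them.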

Not every AI company offers this distinction yet. OpenAI is the clearest about separating training and search bots. Google’s Google-Extended controls training only, while Googlebot handles search indexing. Anthropic, Meta, and others use bots that serve multiple purposes, making selective blocking harder.

The Czech Association for Internet Development (SPIR) published a two-tier robots.txt framework in 2026 that formalizes this distinction between training bots and retrieval bots. It’s designed to align with EU copyright law’s text and data mining exceptions, giving publishers a legally grounded way to opt out of training while remaining visible in AI search results.

How to check what your robots.txt is doing right now

If you’re not sure what your current robots.txt file does, here’s how to check.

Step 1: View your robots.txt file. Open your browser and go to yourdomain.com/robots.txt. If the file exists, anyone can view it at that URL. Look for any User-agent directives that mention SEO or AI bots by name, and check for blanket Disallow: / rules that block all bots.

![[Screenshot: Example of a robots.txt file in a browser showing various bot directives]](https://www.datocms-assets.com/164164/1777128515-blobid1.png)

Step 2: Check for common SEO bot names. Search the file for these user agents: AhrefsBot, SemrushBot, MJ12bot, dotbot, BLEXBot, DataForSeoBot. If any of these are disallowed, it means the corresponding SEO tool won’t have complete data about your site.

Step 3: Check for AI bot names. Search for: GPTBot, OAI-SearchBot, ChatGPT-User, ClaudeBot, anthropic-ai, Google-Extended, PerplexityBot, Bytespider, CCBot, FacebookBot, Meta-ExternalAgent. A disallow directive for a search or retrieval bot can reduce your visibility in that platform’s AI features, while a disallow for a training-only bot such as GPTBot or Google-Extended limits training use instead.

Step 4: Check your CMS and security plugins. Many WordPress security plugins, Cloudflare configurations, and CMS templates include bot-blocking rules by default. If you’re using tools like Wordfence, Sucuri, or similar security plugins, review their bot-blocking settings. You might be blocking crawlers without realizing it.

Step 5: Review server-level blocks. Robots.txt isn’t the only place bots get blocked. Check your .htaccess file (Apache), nginx configuration, or CDN settings for user-agent-based blocking rules. Cloudflare’s WAF rules can also block bots at the network level.
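Steps 1 through 3 can be automated once you have the contents of your robots.txt as text. The sketch below uses the bot names listed in those steps and Python’s standard-library `urllib.robotparser`. The `blocked_bots` helper and the `example.com` URL are hypothetical, and the check covers robots.txt only: it cannot see the plugin, server, or CDN blocks described in steps 4 and 5.

```python
from urllib.robotparser import RobotFileParser

# Bot names taken from steps 2 and 3 above.
SEO_BOTS = ["AhrefsBot", "SemrushBot", "MJ12bot", "dotbot", "BLEXBot",
            "DataForSeoBot"]
AI_BOTS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot",
           "anthropic-ai", "Google-Extended", "PerplexityBot", "Bytespider",
           "CCBot", "FacebookBot", "Meta-ExternalAgent"]


def blocked_bots(robots_txt: str, bots,
                 url: str = "https://example.com/") -> list:
    """Return the bots from `bots` that may not fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in bots if not parser.can_fetch(bot, url)]


if __name__ == "__main__":
    # Paste the contents of yourdomain.com/robots.txt here.
    robots_txt = "User-agent: *\nAllow: /\n"
    print("Blocked SEO bots:", blocked_bots(robots_txt, SEO_BOTS))
    print("Blocked AI bots:", blocked_bots(robots_txt, AI_BOTS))
```

The default URL checks the site root. Pass a specific page URL instead if your rules block only certain paths.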

You can also use Analyze AI’s Broken Link Checker to audit your site’s accessibility, or the Website Authority Checker to see how SEO tools currently view your domain.

What blocking means for your SEO data

When you block an SEO bot, the most obvious consequence is incomplete data inside that tool. Specifically:

Link index gaps. The biggest impact is on backlink data. If AhrefsBot can’t crawl a page, Ahrefs can’t see where that page links. This means the backlink profiles you analyze through that tool may be incomplete. The same applies to Semrush’s backlink database, Majestic’s link index, and Moz’s link data.

Internal link blind spots. SEO tools use their crawlers to map your site’s internal linking structure. Blocking the bot means the tool can’t show you how your pages connect, which makes it harder to diagnose internal linking issues.

Page history gaps. Some tools track changes to your pages over time. Ahrefs, for example, shows page history through their crawler data. Blocking AhrefsBot means you lose this historical record.

Traffic estimates are unaffected. This is worth emphasizing. Traffic estimates, keyword rankings, and top pages data in tools like Ahrefs and Semrush come from clickstream data and SERP analysis, not from crawling your site directly. Blocking their bots won’t change these numbers.

If you’re an SEO professional using multiple tools, the practical impact of blocking any single bot is usually limited. The tools use many data sources, and backlink data is only one of them. But if you rely heavily on a specific tool for link analysis or site audits, blocking its crawler will degrade your data quality inside that tool.

What AI bot blocking means for your brand visibility

This is where things get more consequential. Blocking AI bots doesn’t just affect data inside a third-party tool. It affects whether AI engines recommend your brand, link to your pages, and send you visitors.

AI search is still a small channel. That 0.1% of referral traffic number is real. But the trajectory matters more than the current number. According to HUMAN Security’s 2026 report, AI-driven traffic grew 187% in 2025, and agentic AI traffic specifically grew 7,851% year-over-year.

For brands that are already seeing AI search traffic, the numbers can be meaningful. Ahrefs reported that for their own site, 0.5% of visitors from AI search drove 12.1% of signups. One case study from a career tech platform showed AI referral conversion rates of 5-8%, far above typical blog benchmarks of 1-2%.

The brands that benefit most from AI search visibility are the ones that treat it as a compounding channel rather than a replacement for SEO. This is the position we hold at Analyze AI. AI search is not replacing traditional search. It’s adding another organic channel that rewards the same things SEO has always rewarded: clear, original, useful content.

How to monitor your AI search visibility

If you’re not sure whether your brand is appearing in AI answers, or which AI engines cite you and which ones don’t, you need visibility data.

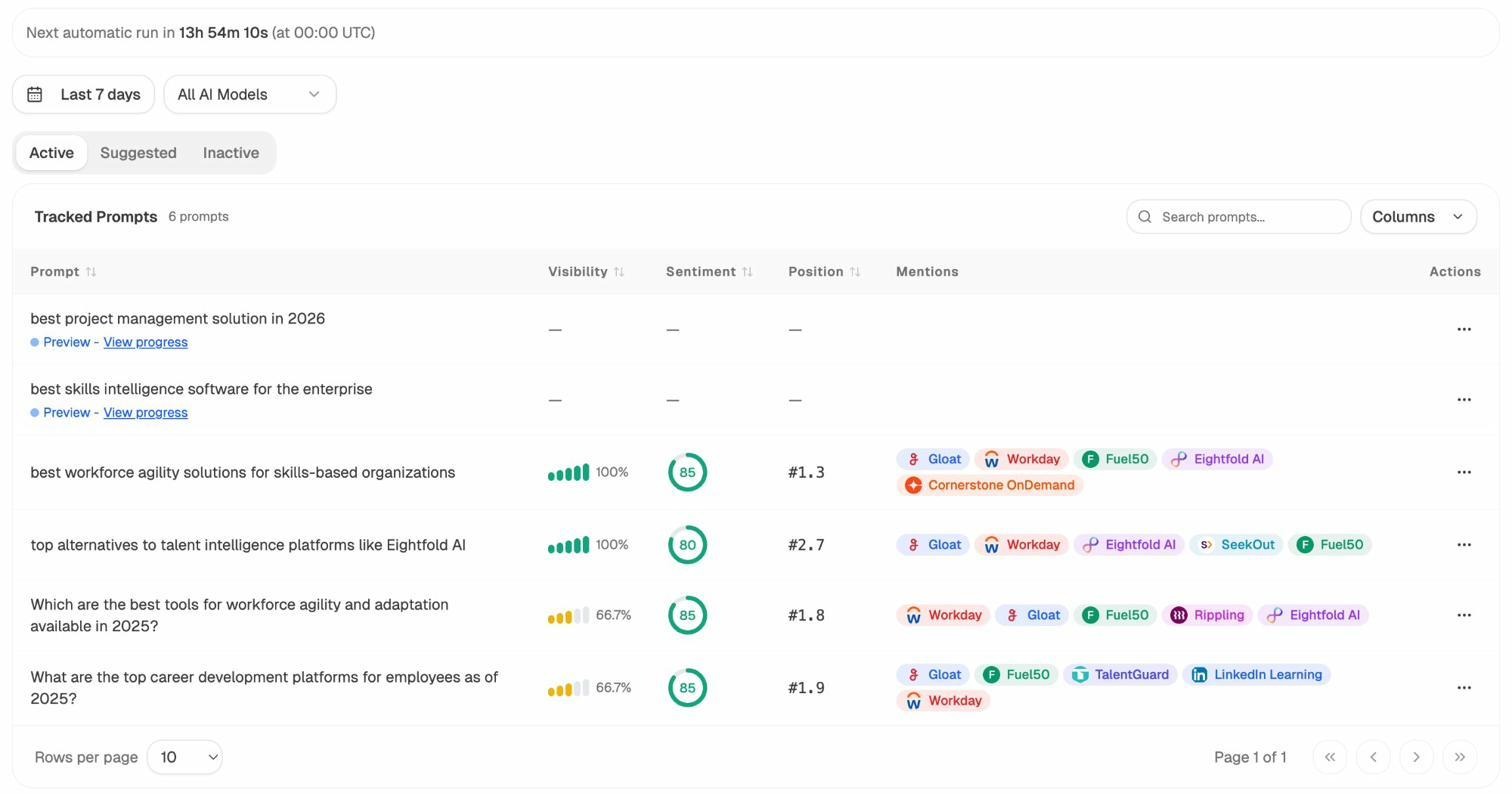

Analyze AI tracks how AI engines like ChatGPT, Perplexity, Gemini, and Copilot represent your brand. You can see which prompts mention you, what position you hold, what sentiment AI engines express about you, and which competitors show up alongside you.

The Prompts dashboard shows exactly where your brand appears and where it doesn’t. Each prompt displays your visibility percentage, sentiment score, position, and the competitors mentioned alongside you. This is the data you need to understand whether your robots.txt decisions are helping or hurting your AI search presence.

Track which AI engines send you traffic

Beyond visibility in AI answers, you need to know which AI engines actually send visitors to your site and which pages they land on.

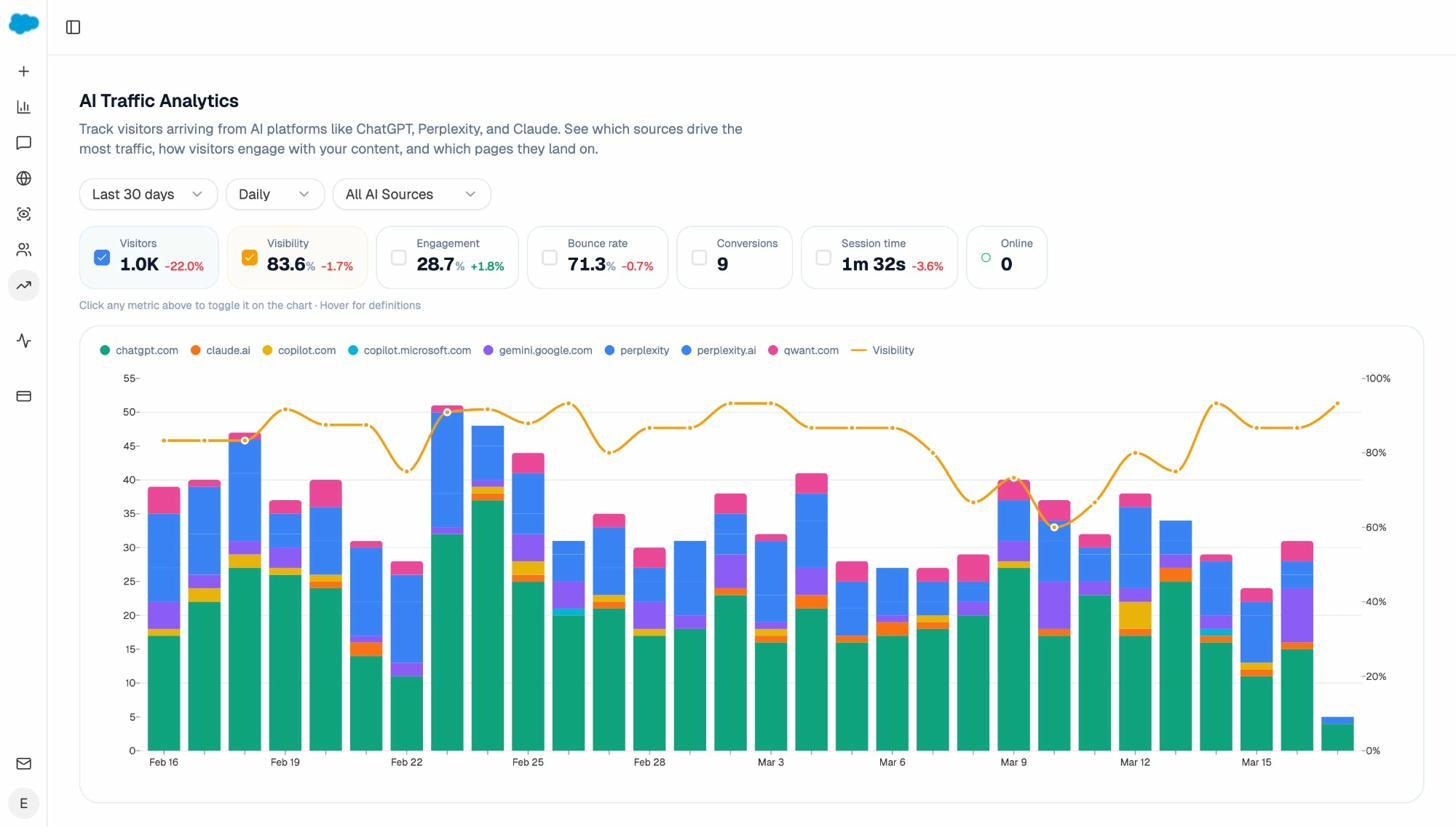

Analyze AI’s AI Traffic Analytics connects to your GA4 data and breaks down referral traffic by AI source. You can see visits from ChatGPT, Claude, Perplexity, Gemini, Copilot, and other AI engines, tracked over time with engagement metrics.

This dashboard answers the question that matters most after a robots.txt decision: is the traffic actually coming? If you’ve allowed AI bots and your AI referral traffic is growing, you know the decision is paying off. If you’ve blocked them and traffic is flat, you know what’s holding you back.
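If you want a rough version of this breakdown from your own referrer data, the general idea can be sketched in a few lines. This is not how Analyze AI or GA4 implement it. The hostname-to-engine mapping below is an assumption about how each engine appears as a referrer, and the names `ai_source` and `count_ai_visits` are hypothetical.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames for each AI engine (verify against your own logs).
AI_REFERRER_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "claude.ai": "Claude",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def ai_source(referrer: str) -> Optional[str]:
    """Return the AI engine for a referrer URL, or None if it is not one."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, engine in AI_REFERRER_DOMAINS.items():
        # Match the domain itself or any subdomain such as www.
        if host == domain or host.endswith("." + domain):
            return engine
    return None


def count_ai_visits(referrers) -> Counter:
    """Count visits per AI engine from an iterable of referrer URLs."""
    return Counter(engine for engine in map(ai_source, referrers) if engine)
```

Comparing these counts before and after a robots.txt change gives a simple read on whether the change moved AI referral traffic.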

See which sources AI engines trust in your space

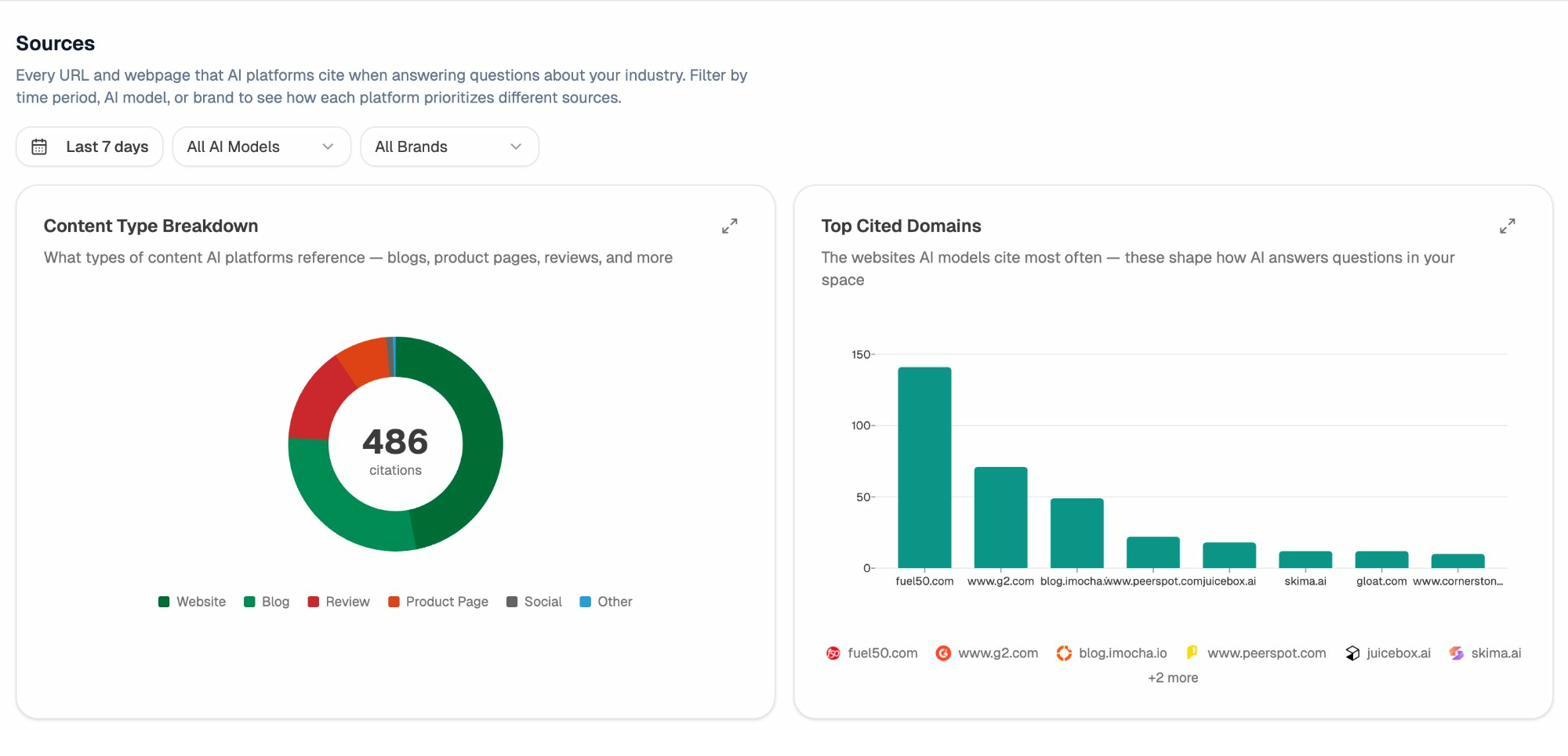

Understanding which domains AI engines cite helps you prioritize where to earn mentions and links. Analyze AI’s Sources dashboard shows every URL and domain that AI platforms cite when answering questions in your industry, broken down by content type and citation frequency.

If a review site or industry publication dominates AI citations in your space, getting featured there becomes a strategic priority. This is the AI search equivalent of link building. Instead of earning backlinks for Google, you’re earning citations from the sources that AI models trust.

What you should do about it

Based on everything the data shows, here’s a practical framework for making robots.txt decisions:

If you’re a content publisher or SaaS company that wants AI visibility:

- Allow AI search and retrieval bots (OAI-SearchBot, PerplexityBot, Google’s standard Googlebot).
- Consider blocking training-only bots if you’re concerned about your content being used for model training without compensation (GPTBot, Google-Extended, Bytespider).
- Monitor your AI referral traffic to measure the impact of your decisions. Use Analyze AI’s AI Traffic Analytics to track visits from each AI engine.
- Track your AI visibility across prompts relevant to your business. If your visibility drops after a blocking decision, you’ll know immediately.

If you’re an e-commerce site:

- Keep SEO bots unblocked for tools you actively use. If your team relies on Ahrefs for competitor analysis, don’t block AhrefsBot.
- Be cautious about blocking AI bots entirely. E-commerce sites absorb 28.1% of all AI crawling, more than double any other industry. That crawling represents potential product visibility in AI-powered shopping recommendations.
- Block training bots for proprietary content like pricing strategies, internal documentation, or inventory data.

If you’re a news publisher or content creator:

- Adopt the hybrid approach. Block training bots, allow retrieval bots.
- Negotiate directly with AI companies if possible. Several major publishers have struck licensing deals with OpenAI and others.
- Know that blocking alone won’t make you invisible. Your content already exists in training data and third-party indexes.

For everyone:

- Audit your robots.txt today. Many sites have outdated or inherited blocking rules that no longer match their strategy.
- Check your CMS and security plugins. Default settings might be blocking bots you want to allow.
- Review your SEO keyword rankings and AI visibility together. Your organic strategy should account for both traditional search and AI search as complementary channels.
- Use Analyze AI’s Ad Hoc Prompt Searches to test specific prompts across multiple AI engines and see whether your brand appears before committing to a full tracking campaign.

How the data was collected

The data in this article draws from multiple sources. The primary dataset comes from an analysis of robots.txt files across ~140 million websites, which examined both overall block rates (including generic blocks) and explicit block rates (where specific bots were named in disallow directives). Additional data on crawl volumes comes from Cloudflare Radar, which reflects roughly 20% of all internet traffic. Crawl-to-refer ratios are based on Cloudflare’s network-level analysis of HTML page requests versus referral traffic. The citation persistence data comes from a BuzzStream study of 4 million AI citations across 3,600 prompts.

All data has inherent limitations. Robots.txt analysis can’t capture firewall or IP-based blocks. Crawl volume data from Cloudflare represents a large but not universal slice of the internet. And AI citation behavior changes as models are retrained and updated. Treat these numbers as directional indicators rather than exact measurements.

Final thoughts

The bot-blocking landscape is shifting fast. SEO bot blocks have been relatively stable for years, with the same crawlers occupying the same positions on the most-blocked list. AI bot blocking is a different story. Block rates are climbing, new bots appear every few months, and the rules of engagement are still being written.

The most important thing to understand is that blocking is a spectrum, not a binary choice. You don’t have to block everything or allow everything. The hybrid approach, where you block training crawlers while allowing search crawlers, gives you the best of both worlds. Your content stays out of training pipelines, but it remains visible in AI search results where your customers are asking questions.

If you’re not monitoring how AI engines represent your brand, you’re making these decisions in the dark. Tools like Analyze AI give you the data to make informed choices about which bots to allow, which to block, and how those decisions affect your visibility across every AI engine that matters.

The brands that win in this environment are the ones that treat AI search as an additional organic channel, not a threat. They create content worth citing, optimize what’s being ignored, monitor their competitive position, and compound what works.

SEO is not dead. AI search is not replacing it. The bots are multiplying, the channels are expanding, and the brands that pay attention to both will have the advantage.

Ernest

Ibrahim